- The paper introduces a theoretical framework for neuromorphic computing that quantifies its time, space, and energy scaling properties versus conventional architectures.

- The paper highlights the asynchronous, massively parallel processing and low power consumption of neuromorphic systems, which make them well suited to sparse, iterative tasks.

- The paper outlines potential gains for dynamic computations and advocates future research on specialized algorithms and hardware optimizations.

Neuromorphic Computing: A Theoretical Framework for Time, Space, and Energy Scaling

This essay summarizes a theoretical framework for neuromorphic computing (NMC) that characterizes its time, space, and energy scaling relative to conventional computing architectures. We examine how these properties position NMC as a complement to von Neumann architectures such as CPUs and GPUs, particularly for general-purpose, programmable computing.

Introduction to Neuromorphic Computing

NMC is increasingly considered an alternative to conventional architectures, primarily because of its potential for low power consumption. Unlike von Neumann systems, which separate processing and memory, NMC co-locates these functions, which gives it distinct scaling properties, particularly for energy. The core of NMC's value proposition is its ability to handle sparse and dynamically structured algorithms, such as iterative optimization and large-scale sampling, efficiently.

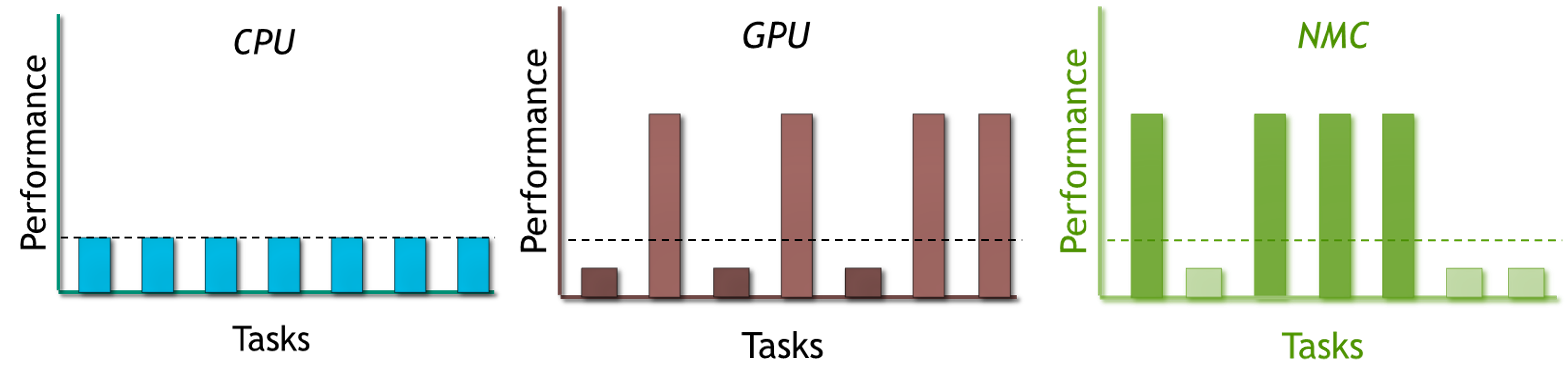

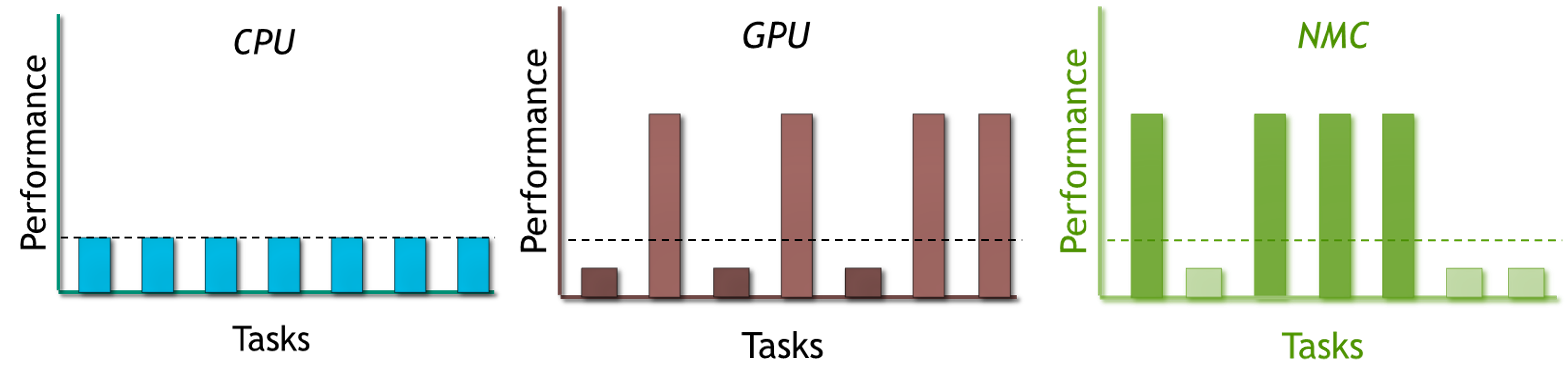

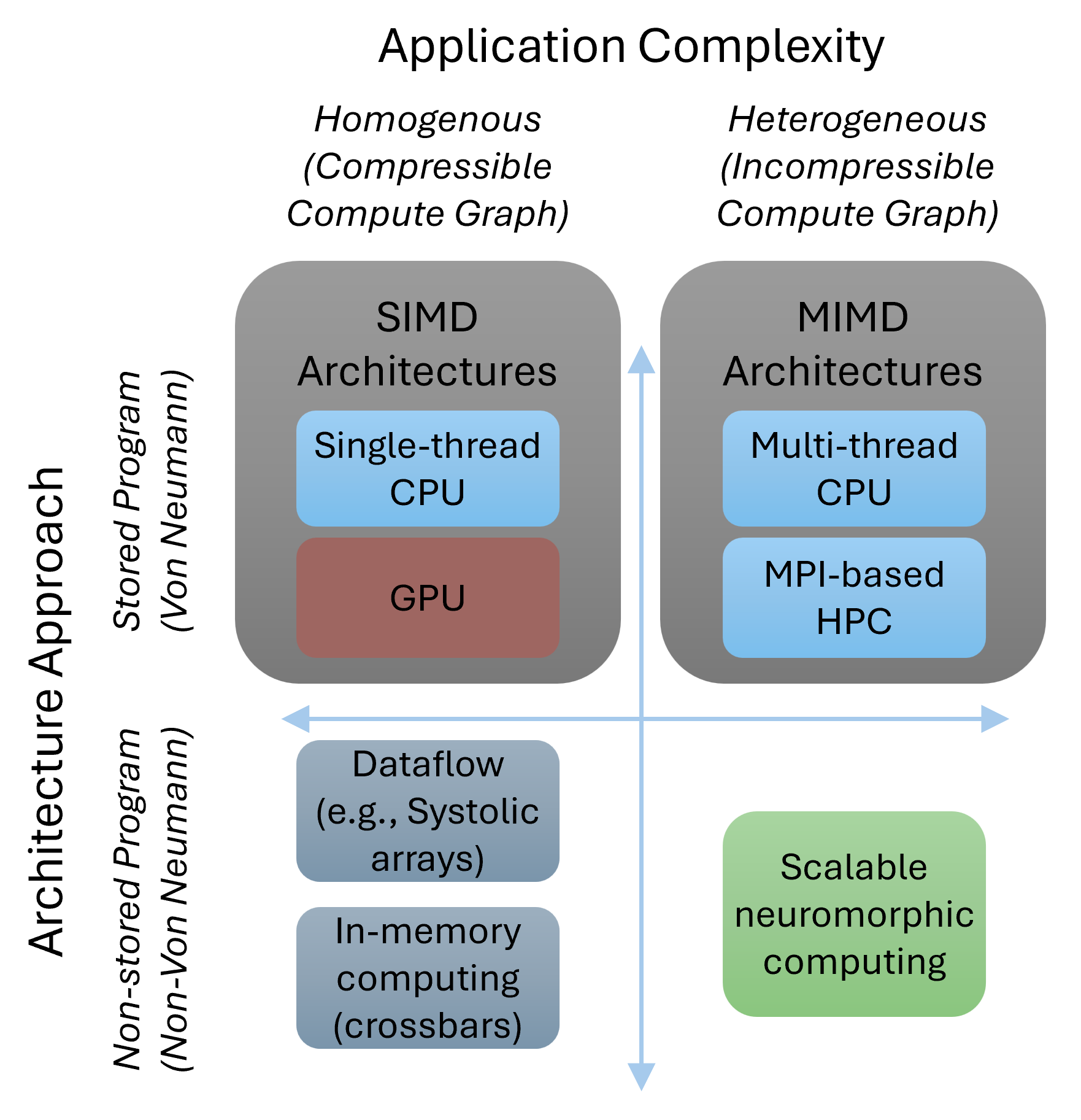

Figure 1: NMC hardware is GPU-like in generality, but shows advantageous capabilities in a different set of tasks. This paper explores the classes of computation for which NMC shows preferential advantages.

Theoretical Framework

Definition and Architecture

A neuromorphic system is built from neurons and synapses, whose operations differ fundamentally from those of von Neumann architectures. Neurons compute by applying non-linear transfer functions to their inputs, while synapses carry information between neurons. The architecture is inherently asynchronous and parallel: a neuron does work only when events arrive, so NMC executes tasks in an event-driven way rather than in lockstep with a global clock.
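To make the event-driven style concrete, here is a minimal sketch assuming a very simple neuron model: non-leaky threshold neurons that reset to zero after firing, connected by synapses with a weight and an integer delay. The function `simulate` and its argument layout are hypothetical illustrations, not the paper's model or any neuromorphic toolkit's API. The key property is that a neuron is touched only when a spike is delivered to it.

```python
import heapq
from collections import defaultdict


def simulate(synapses, thresholds, inputs, t_max=100.0):
    """Event-driven simulation of simple (non-leaky) threshold neurons.

    synapses:   dict neuron -> list of (target, weight, delay)
    thresholds: dict neuron -> firing threshold
    inputs:     list of (time, neuron) external spikes
    Returns the (time, neuron) spikes in the order they occur.
    """
    potential = defaultdict(float)  # accumulated input per neuron
    # Each pending event is (delivery_time, target_neuron, weight).
    # External inputs carry infinite weight so they always trigger a spike.
    events = [(t, n, float("inf")) for t, n in inputs]
    heapq.heapify(events)
    spikes = []
    while events:
        t, n, w = heapq.heappop(events)
        if t > t_max:
            break
        potential[n] += w
        if potential[n] >= thresholds[n]:
            potential[n] = 0.0  # reset after firing
            spikes.append((t, n))
            # Only the firing neuron's outgoing synapses do any work.
            for target, weight, delay in synapses.get(n, []):
                heapq.heappush(events, (t + delay, target, weight))
    return spikes


synapses = {
    "a": [("b", 1.0, 1), ("c", 0.6, 2)],
    "b": [("c", 0.6, 1)],
}
thresholds = {"a": 1.0, "b": 1.0, "c": 1.0}
print(simulate(synapses, thresholds, [(0, "a")]))
# [(0, 'a'), (1, 'b'), (2, 'c')]
```

Neuron `c` fires only after receiving both of its 0.6-weight inputs. With no input spikes, the loop never runs, so no computation or energy is spent.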

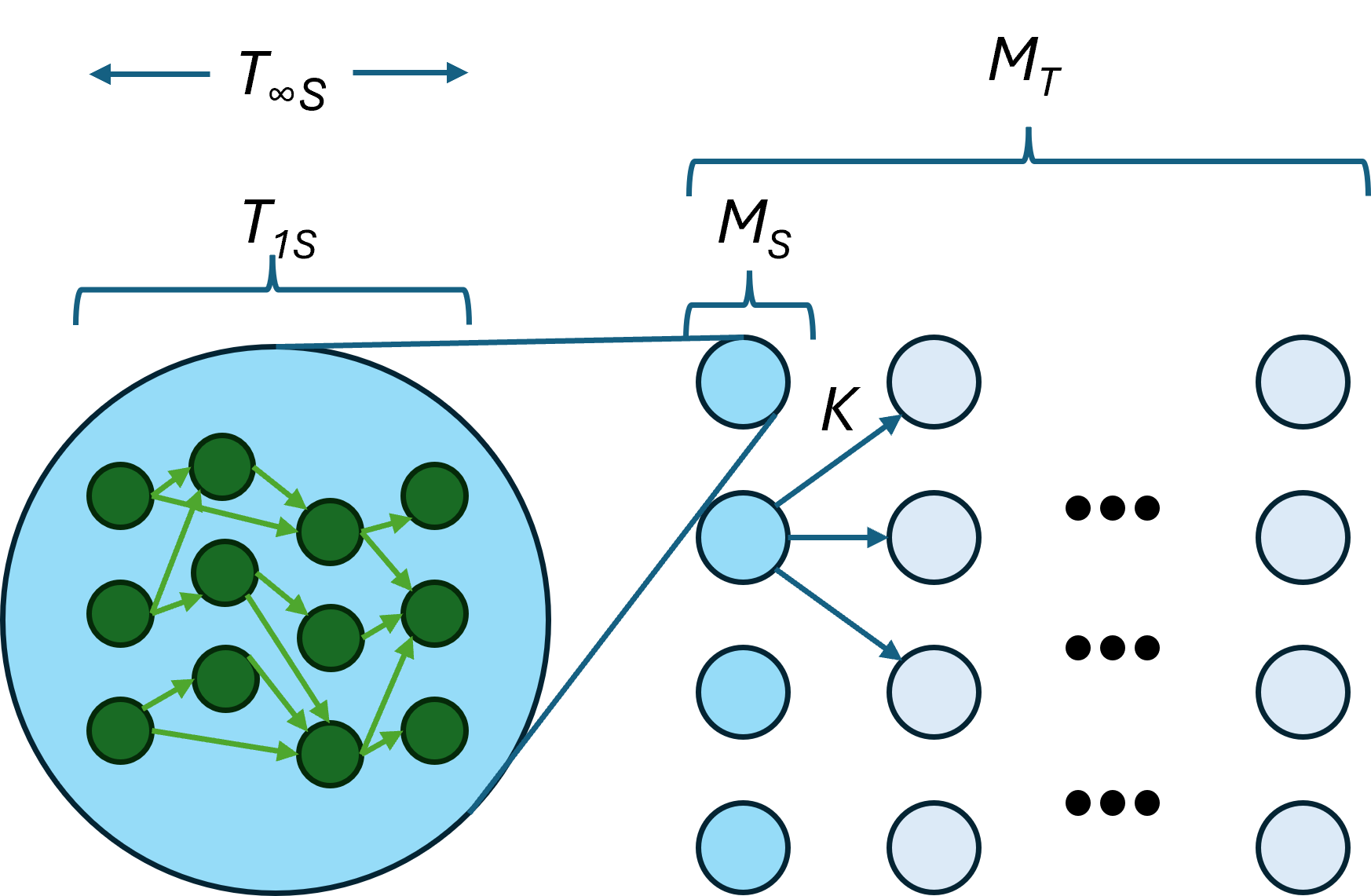

Time and Space Complexity

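The time complexity of NMC is set by the depth of the computational graph, $T_\infty$ (the length of its longest chain of dependent operations). Its space complexity scales with the total number of operations, $T_1$, because the graph is laid out directly in hardware, with operations mapped onto neurons. This contrasts with conventional computing, where space complexity is usually dominated by memory size rather than by the amount of computation.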
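Both quantities can be computed directly from a computational graph. The sketch below uses the standard work-span model and assumes every operation has unit cost. The helper names `work_and_span`, `tree_sum` and `chain_sum` are hypothetical. It compares two ways of summing eight inputs: both have the same work $T_1$, but the tree reduction has a much smaller depth $T_\infty$. Under the framework above, that difference would show up as time on neuromorphic hardware, not as space.

```python
from graphlib import TopologicalSorter  # Python 3.9+


def work_and_span(deps):
    """Return (T1, T_inf) for a DAG of unit-cost operations.

    deps maps each operation to the operations whose results it needs.
    T1 is the number of operations; T_inf is the longest dependency chain.
    """
    depth = {}
    for op in TopologicalSorter(deps).static_order():  # dependencies come first
        depth[op] = 1 + max((depth[d] for d in deps.get(op, ())), default=0)
    return len(depth), max(depth.values(), default=0)


def tree_sum(n):
    """Pairwise (tree) reduction of n inputs; n is assumed to be a power of two."""
    deps = {f"x{i}": [] for i in range(n)}
    level, k = list(deps), 0
    while len(level) > 1:
        nxt = []
        for a, b in zip(level[0::2], level[1::2]):
            deps[f"add{k}"] = [a, b]
            nxt.append(f"add{k}")
            k += 1
        level = nxt
    return deps


def chain_sum(n):
    """Left-to-right (serial) reduction of n inputs."""
    deps = {f"x{i}": [] for i in range(n)}
    prev = "x0"
    for i in range(1, n):
        deps[f"add{i}"] = [prev, f"x{i}"]
        prev = f"add{i}"
    return deps


print(work_and_span(tree_sum(8)))   # (15, 4)
print(work_and_span(chain_sum(8)))  # (15, 8)
```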

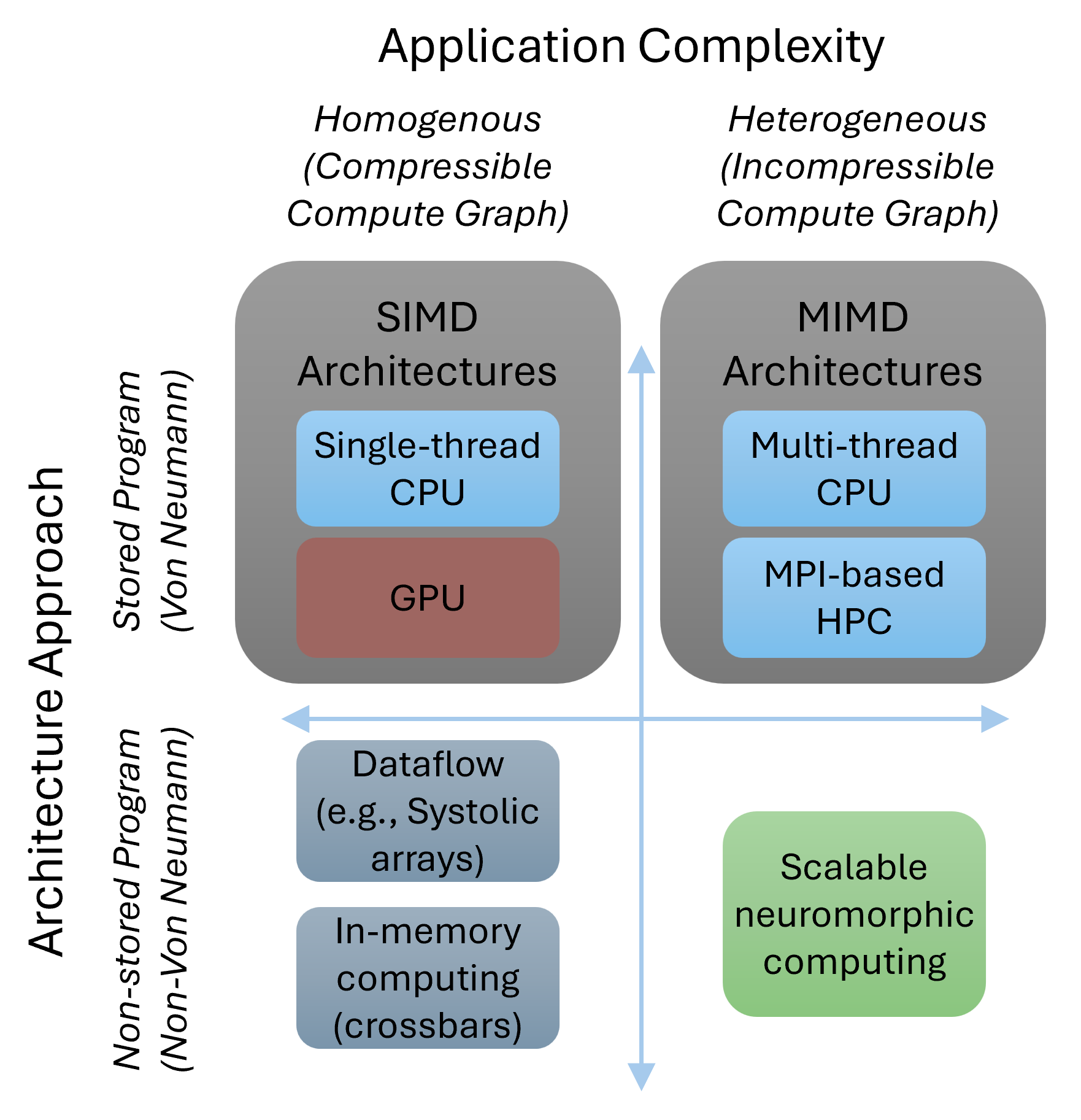

Figure 2: Notional landscape of parallel computing approaches. For applications that are relatively homogeneous in their computational graph (e.g., the structured linear algebra of conventional neural networks), SIMD and dataflow architectures are effective, whereas for heterogeneous applications an MIMD or neuromorphic approach should be more effective.

Energy Scaling

Energy efficiency is a cornerstone of NMC's theoretical advantage. In conventional systems, energy scales with the total computational work. In NMC, it scales with the number of changes in the computational state, because only neurons whose state changes generate events. This makes NMC particularly suitable for applications whose computational graph is sparse, or whose state changes little from one iteration to the next.
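One way to write this contrast for an iterative algorithm is shown below. The notation is ours, not the paper's. It assumes a fixed energy per operation $e_{\text{op}}$ on conventional hardware and a fixed energy per event $e_{\text{ev}}$ on NMC, with $K$ iterations and $\lvert\Delta_k\rvert$ the number of operations triggered by state changes in iteration $k$:

$$
E_{\text{conv}} \approx e_{\text{op}} \sum_{k=1}^{K} T_1 = e_{\text{op}}\, K\, T_1,
\qquad
E_{\text{NMC}} \approx e_{\text{ev}} \sum_{k=1}^{K} \lvert \Delta_k \rvert,
\qquad
0 \le \lvert \Delta_k \rvert \le T_1 .
$$

Under these assumptions, NMC gains most when $\lvert\Delta_k\rvert \ll T_1$ for most iterations. When nearly every operation changes state on every iteration, the two expressions differ only by the per-operation constants.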

Application Examples

Iterative Mesh-Based Algorithms

For algorithms such as finite element simulations, where updates are local and iterative, the framework predicts that NMC performs well. These problems map naturally onto a distributed set of neurons. Energy consumption also falls as the system approaches steady state, because fewer and fewer neurons change state.
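The sketch below illustrates that behaviour on the simplest possible mesh. It uses Jacobi relaxation of the 1D steady-state heat equation as a stand-in for the paper's generic mesh problem, and it assumes a thresholded event rule that we chose for illustration: a node is re-evaluated only if a neighbour changed in the previous round, and it "fires" only if its value moves by more than `tol`. The name `event_driven_jacobi` and its parameters are hypothetical. The number of firing nodes per round serves as a rough proxy for NMC energy.

```python
def event_driven_jacobi(n, left, right, tol, max_rounds=100_000):
    """Jacobi relaxation of the 1D steady-state heat equation on n interior
    nodes, run in an event-driven style.

    A node is recomputed only if a neighbour changed in the previous round, and
    it publishes ("fires") its new value only if that value moved by more than
    tol. Returns the final values and the number of firing nodes in each round.
    """
    values = [0.0] * n      # values currently visible to neighbours
    active = set(range(n))  # every node is evaluated once at the start
    events_per_round = []
    for _ in range(max_rounds):
        if not active:
            break
        padded = [left] + values + [right]  # fixed boundary conditions
        fired = []
        for i in active:
            new = 0.5 * (padded[i] + padded[i + 2])  # average of both neighbours
            if abs(new - values[i]) > tol:
                fired.append((i, new))
        for i, new in fired:  # apply all updates together (Jacobi, not Gauss-Seidel)
            values[i] = new
        events_per_round.append(len(fired))
        # Only neighbours of nodes that fired need to be re-evaluated next round.
        active = {j for i, _ in fired for j in (i - 1, i + 1) if 0 <= j < n}
    return values, events_per_round


values, events = event_driven_jacobi(n=10, left=1.0, right=0.0, tol=1e-6)
print("events in first rounds:", events[:5])
print("event-driven updates:", sum(events), "dense updates:", len(events) * 10)
```

The per-round event count starts small, grows as the boundary disturbance spreads through the mesh, and drops to zero once every node has settled within `tol`. A dense implementation would instead recompute all `n` nodes in every round.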

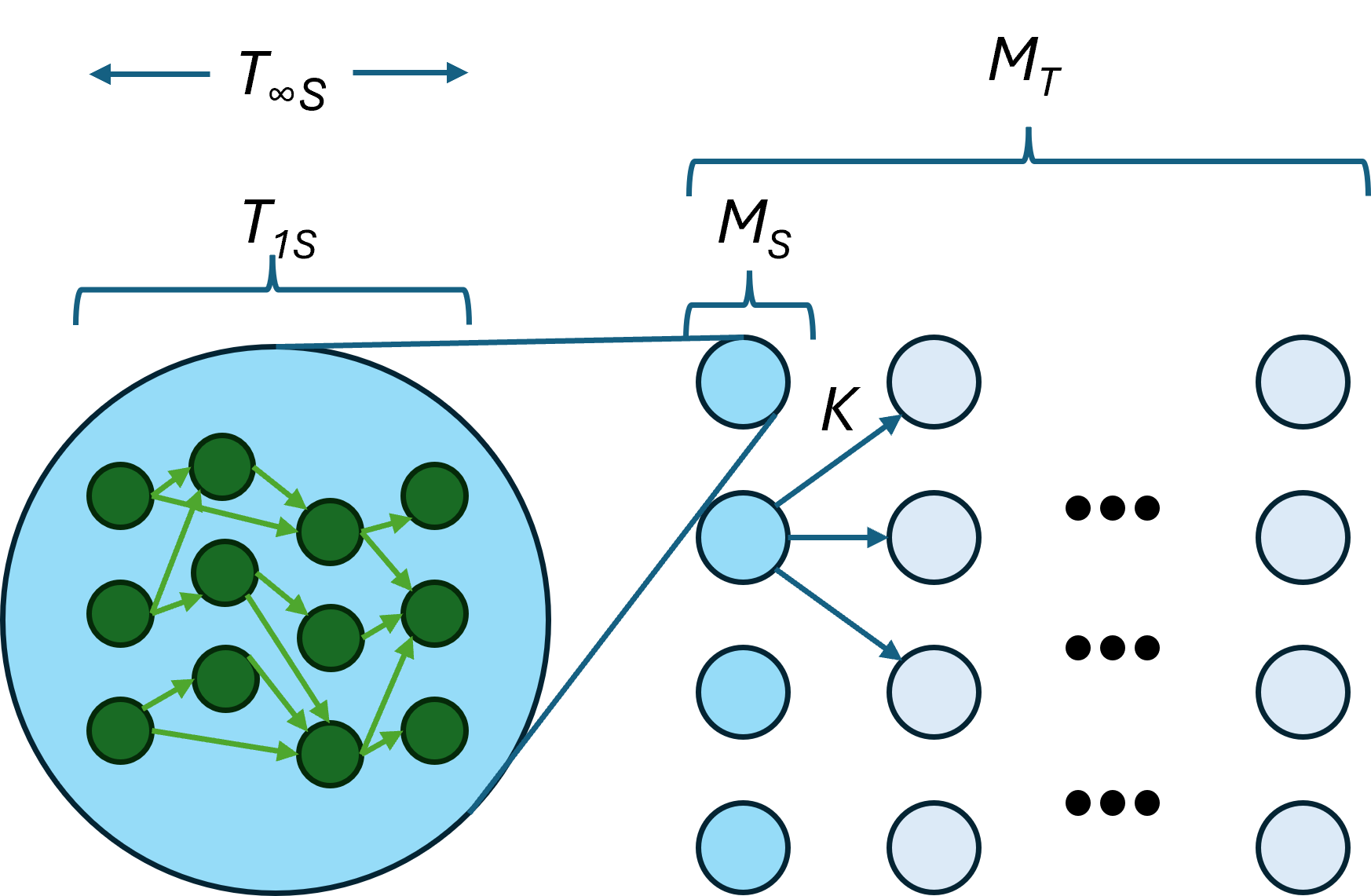

Figure 3: Expanded graph of generic mesh problem.

Dense Linear Algebra-Based ANNs

Conversely, the dense matrix operations at the core of traditional artificial neural networks (ANNs) do not align well with NMC's strengths. Their dense connectivity and lack of temporal compression (nearly every input value changes from one inference to the next) mean that conventional architectures, particularly GPUs, typically perform better.
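A back-of-the-envelope sketch shows why. It assumes a hypothetical cost model in which an event-driven dense layer delivers every changed input to all `n_out` outputs. The functions `synaptic_events` and `dense_macs` are illustrative names. When each new input is unrelated to the previous one, the event count equals the multiply-accumulates of a dense matrix-vector product, so NMC saves no work. Savings appear only in the contrasting case where few inputs change.

```python
def synaptic_events(prev_input, new_input, n_out):
    """Synaptic events an event-driven dense layer processes when its input
    changes from prev_input to new_input (hypothetical cost model: every
    changed input is delivered to all n_out outputs)."""
    changed = sum(a != b for a, b in zip(prev_input, new_input))
    return changed * n_out


def dense_macs(n_in, n_out):
    """Multiply-accumulates for one dense matrix-vector product."""
    return n_in * n_out


n_in, n_out = 1024, 512
frame_a = [i % 7 for i in range(n_in)]
frame_b = [(i + 1) % 7 for i in range(n_in)]  # new input: every value differs
frame_c = list(frame_a)
for i in range(10):                            # slowly varying input: 10 changes
    frame_c[i] += 1

print(synaptic_events(frame_a, frame_b, n_out), dense_macs(n_in, n_out))  # 524288 524288
print(synaptic_events(frame_a, frame_c, n_out))                           # 5120
```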

Implications and Future Directions

NMC's theoretical advantages in time, space, and energy scaling suggest significant potential for applications involving heterogeneous and dynamic computations. Realizing these advantages, however, requires developing specialized NMC algorithms and optimizing hardware implementations to exploit the identified benefits.

Practical neuromorphic solutions should focus on algorithms that inherently benefit from NMC's parallelism and from its energy-efficient, state-change-driven operation. Future NMC systems could also use advanced materials and non-traditional computing elements, such as analog or hybrid systems, to enhance these theoretical benefits further.

Conclusion

Analyzing NMC within a theoretical framework highlights its potential advantages over conventional systems in specific domains. NMC offers promising prospects for improving computational efficiency, especially for dynamic and iterative tasks, but further research is needed to extend its application to broader computational domains. Understanding and optimizing NMC's distinctive scaling properties remains a critical step toward its widespread adoption.