- The paper introduces HYPIR, a framework that initializes image restoration using diffusion model score priors followed by adversarial fine-tuning.

- It demonstrates rapid convergence and numerical stability, maintaining near-complete mode coverage and high perceptual quality.

- Empirical results on synthetic and real-world datasets show that it matches or outperforms traditional MSE-based and GAN-based restoration methods.

Harnessing Diffusion-Yielded Score Priors for Image Restoration: A Technical Analysis

Introduction and Motivation

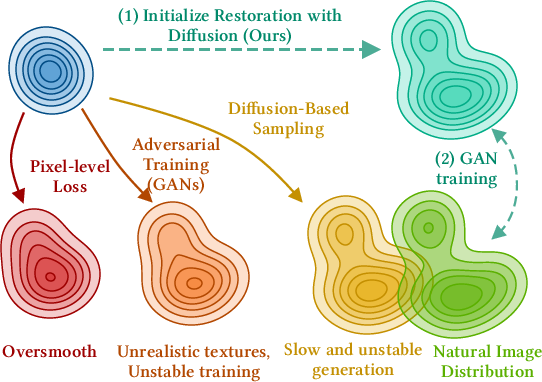

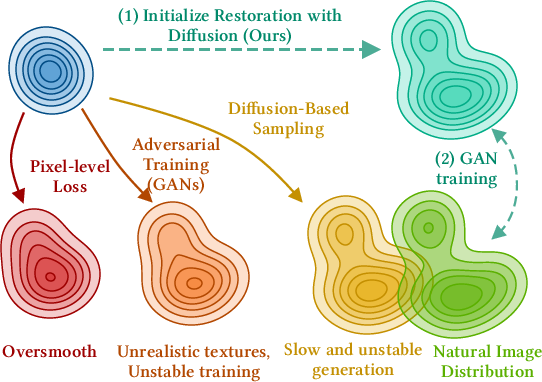

The paper introduces HYPIR, a novel image restoration framework that leverages pretrained diffusion models as strong generative priors, followed by adversarial fine-tuning. The approach is motivated by the persistent trade-offs in existing restoration paradigms: MSE-based models yield over-smoothed outputs, GAN-based models suffer from mode collapse and instability, and diffusion-based models, while producing high-fidelity results, are computationally expensive due to iterative sampling. HYPIR aims to combine the strengths of diffusion and adversarial training, achieving both high perceptual quality and computational efficiency.

Figure 1: Existing pixel-level loss, adversarial training, and diffusion-based image restoration methods struggle with over-smoothness, unrealistic textures, and slow, unstable generation. HYPIR leverages diffusion initialization followed by GAN training, balancing realism and efficiency.

Methodology

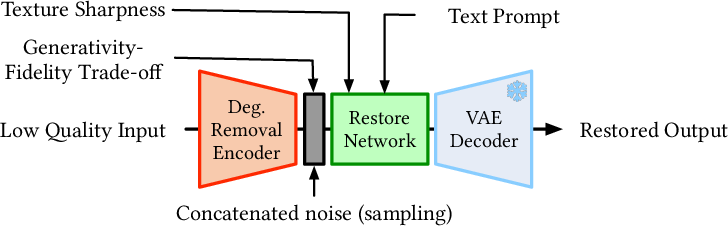

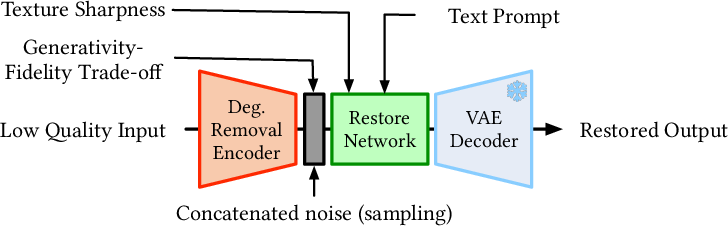

Pipeline Overview

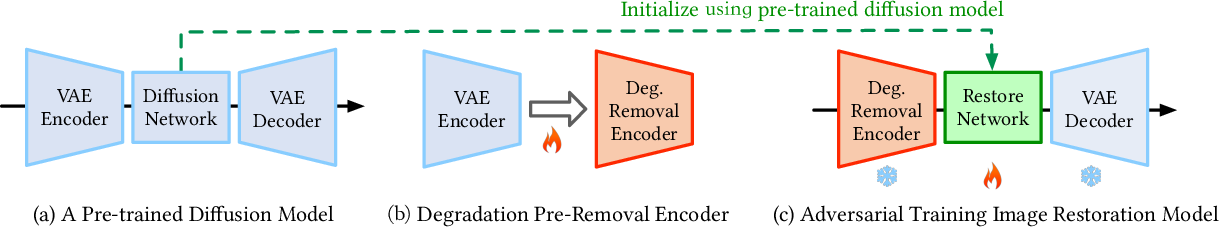

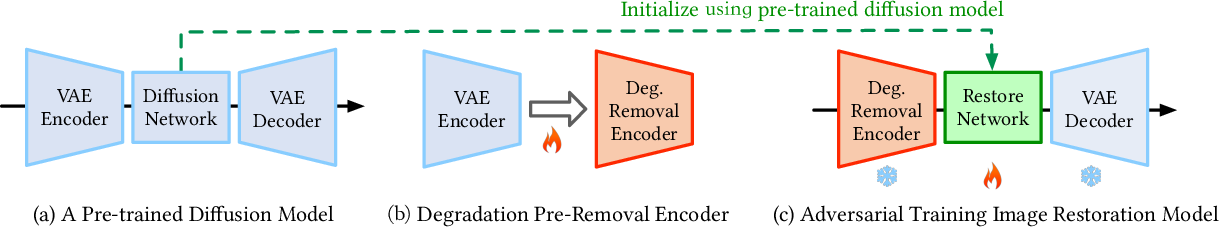

HYPIR's pipeline consists of three main stages:

- Diffusion Model Initialization: A pretrained diffusion model provides the initial weights for the restoration network.

- Encoder Fine-tuning for Degradation Pre-removal: The VAE encoder is fine-tuned to map degraded images into a latent space that is robust to severe degradations.

- Adversarial Fine-tuning: The initialized network is further fine-tuned using adversarial loss, with only the restoration network (typically a U-Net) being updated.

Figure 2: The HYPIR pipeline: (a) pretrained diffusion model, (b) encoder fine-tuning for degradation pre-removal, (c) adversarial fine-tuning with only the restoration network optimized.
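
The stage structure above can be summarized as a map from each stage to the generator-side components that receive gradient updates. This is only an illustrative sketch: the stage and component names are hypothetical labels, not identifiers from the authors' code.

```python
# Generator-side components of the restoration model (hypothetical labels).
COMPONENTS = ("vae_encoder", "unet", "vae_decoder")

TRAINABLE_BY_STAGE = {
    # (a) No training: the pretrained diffusion weights are simply loaded.
    "diffusion_init": set(),
    # (b) Only the VAE encoder is fine-tuned for degradation pre-removal.
    "encoder_finetune": {"vae_encoder"},
    # (c) Only the restoration network (U-Net) is updated. A discriminator
    # is presumably trained alongside it, as in standard GAN practice, but
    # it is not part of the restoration model and is not listed here.
    "adversarial_finetune": {"unet"},
}

def frozen_components(stage):
    """Return the generator-side components that stay fixed in a stage."""
    return sorted(set(COMPONENTS) - TRAINABLE_BY_STAGE[stage])
```

For example, `frozen_components("adversarial_finetune")` lists the encoder and decoder, matching the statement that only the restoration network is optimized in stage (c).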

Theoretical Justification

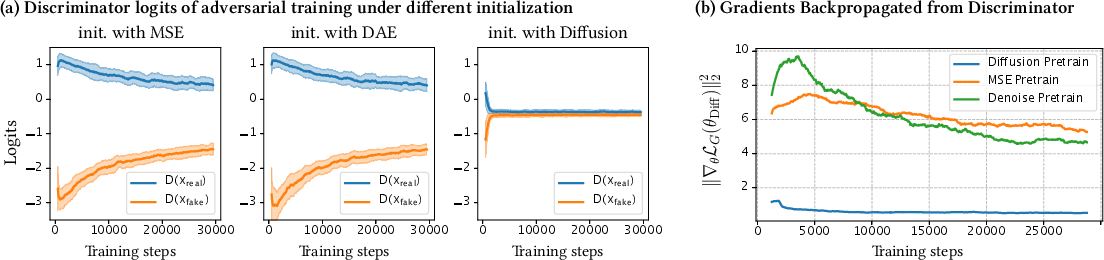

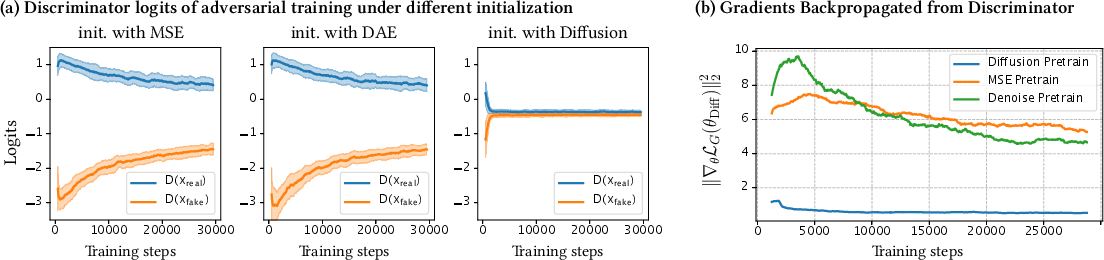

The core insight is that diffusion models are trained to estimate the score function (the gradient of the log-density) of the data distribution. The paper argues that the optimal restoration operator is also a score estimator, which suggests that a pretrained diffusion model is already near-optimal for restoration tasks. Its formal analysis shows that initializing adversarial training from a diffusion model places the generator close to the natural image manifold, resulting in:

- Small initial adversarial gradients (improved numerical stability)

- Near-complete mode coverage (mitigating mode collapse)

- Rapid convergence (logarithmic in the initial distributional gap)
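
One standard way to see the link between score estimation and restoration is Tweedie's formula. The sketch below assumes a noising process of the form $x_t = x_0 + \sigma_t \epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$; this parameterization is chosen here for illustration, and the paper's own analysis may be stated differently.

```latex
\nabla_{x_t} \log p_t(x_t) = \frac{\mathbb{E}[x_0 \mid x_t] - x_t}{\sigma_t^2}
\quad\Longleftrightarrow\quad
\mathbb{E}[x_0 \mid x_t] = x_t + \sigma_t^2 \, \nabla_{x_t} \log p_t(x_t)
```

Under this assumption, the minimum-MSE estimate of the clean image is an affine function of the score, so a network trained to estimate the score already encodes a denoising operator.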

Figure 3: (a) Discriminator logits and (b) generator gradient magnitudes during training. Diffusion-based initialization yields rapid, stable convergence and small gradients, compared to MSE and DAE initializations.

Empirical Evidence

Empirical results confirm the theoretical claims:

- GANs trained from scratch or with MSE/DAE initialization exhibit mode collapse and unstable training.

- Diffusion-initialized GANs converge rapidly, maintain stable gradients, and produce diverse, high-fidelity outputs.

Figure 4: Mode collapse in GANs without diffusion initialization (middle row) vs. improved semantic diversity with HYPIR (bottom row).

Figure 5: Restoration progress without (top) and with (bottom) diffusion initialization. HYPIR yields clearer, stable outputs early in training.

Implementation Details

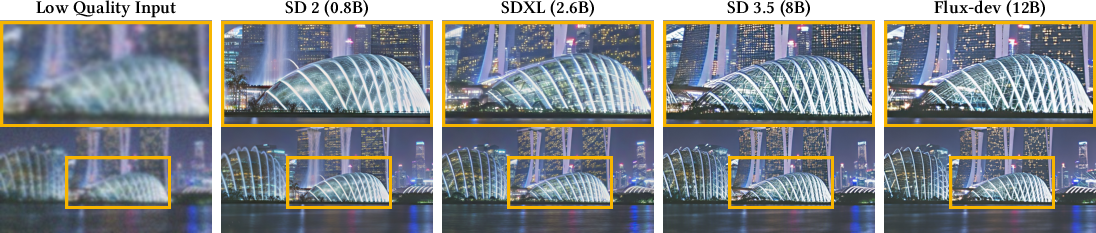

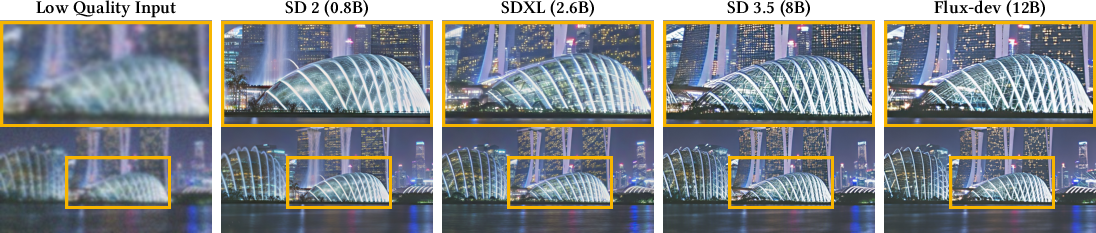

Diffusion Model Selection

HYPIR is agnostic to the choice of diffusion model. Larger and more advanced models (e.g., SD2, SDXL, SD3, Flux) provide better score approximations and, in turn, better restoration quality. Because adversarial fine-tuning uses LoRA, the method scales to models with billions of parameters.

Figure 6: Restoration quality improves with larger, more advanced diffusion models.

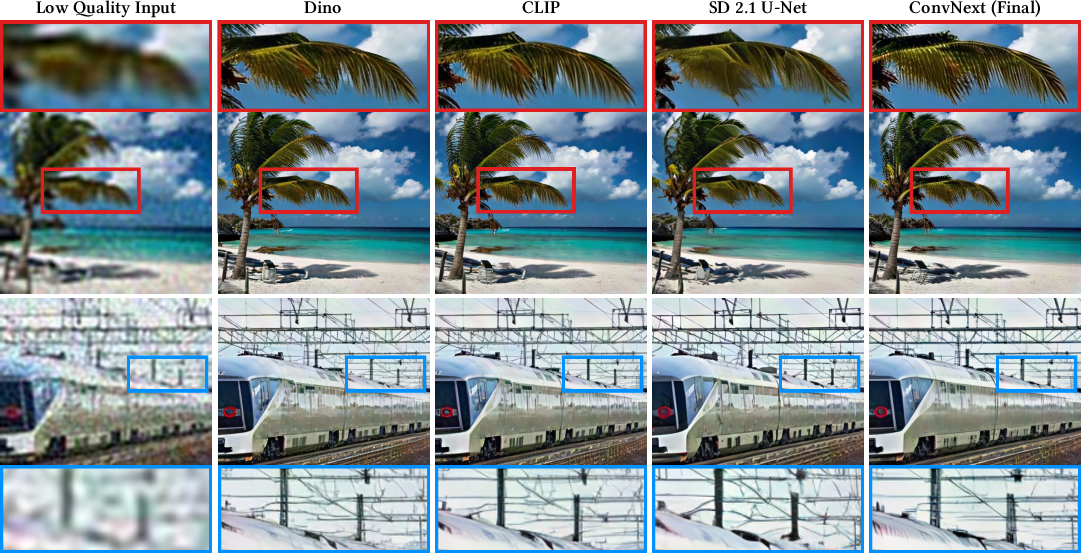

Discriminator Design

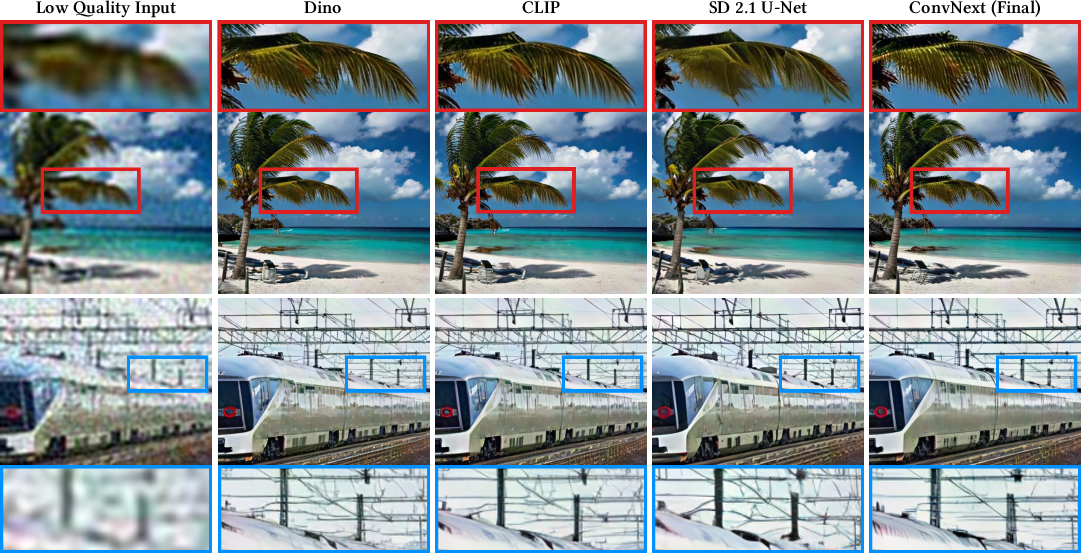

The discriminator is initialized from a pretrained vision backbone (e.g., ConvNeXt, DINO, CLIP, diffusion U-Net). ConvNeXt is preferred for its ability to process high-resolution images without resizing, preserving fine details.

Figure 7: Restoration quality with different discriminator backbones. ConvNeXt yields richer, more precise textures.
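
Because ConvNeXt is fully convolutional, its feature map grows with the input, so a discriminator built on it can score a full-resolution image without downscaling it first. The sketch below computes the final feature-map size for an arbitrary input, assuming the standard ConvNeXt downsampling layout. How HYPIR attaches a discriminator head to these features is not specified here.

```python
def convnext_feature_size(height, width):
    """Spatial size of the final ConvNeXt feature map for an input image.

    Uses the standard ConvNeXt layout: a 4x4 stride-4 stem followed by
    three 2x2 stride-2 downsampling layers (total stride 32), no padding.
    """
    for stride in (4, 2, 2, 2):
        height, width = height // stride, width // stride
    return height, width
```

A 224x224 input yields a 7x7 map, while a 2048x3072 input yields 64x96, so fine spatial detail remains available to the discriminator at high resolution.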

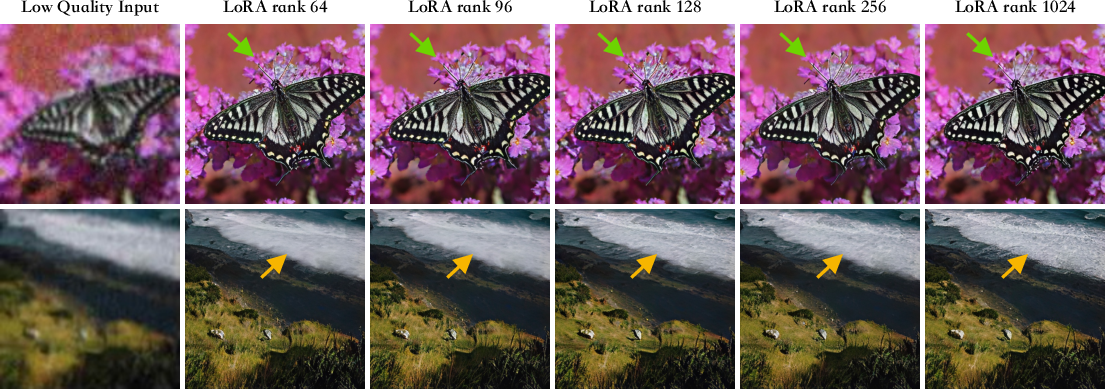

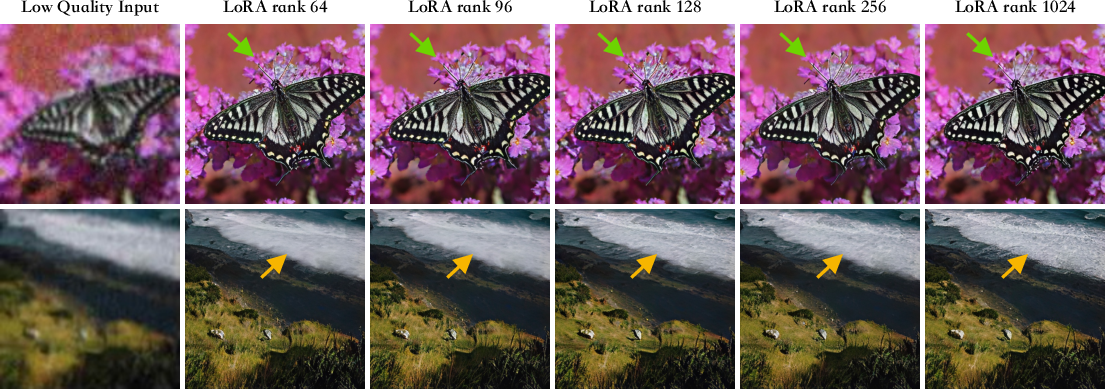

LoRA Fine-tuning

LoRA is used to reduce the number of trainable parameters during adversarial fine-tuning, making the approach practical for large-scale diffusion models. Increasing LoRA rank improves capacity but with diminishing returns beyond a certain point.

Figure 8: Restoration quality as a function of LoRA rank. Higher ranks increase capacity but may not justify the computational cost.
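
To make the parameter savings concrete, the following is a minimal, dependency-free sketch of the standard LoRA formulation, in which a frozen weight `W` is augmented by a trainable low-rank product `B @ A`. The class name, initialization scale, and `alpha` default are illustrative assumptions; the ranks and hyperparameters used by HYPIR are not given here.

```python
import random

def matvec(matrix, vector):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen pretrained weight, shape (d_out, d_in)
        # A gets a small random init and B starts at zero, so the layer
        # initially reproduces the pretrained output exactly.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self):
        """Count entries of A and B; W is frozen and excluded."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])
```

For a square layer of width d, the trainable count is 2·r·d rather than d², so it grows linearly with the rank. At d = 1024 and r = 16 that is 32,768 parameters against 1,048,576 for the full matrix.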

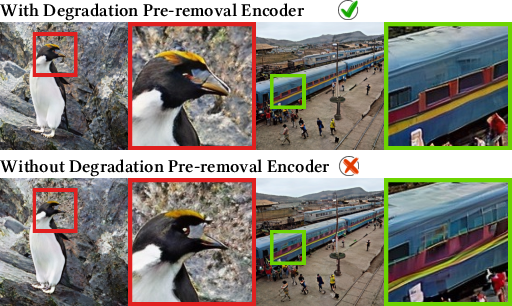

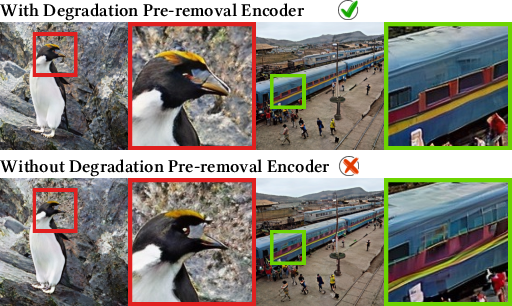

Degradation Pre-removal

Fine-tuning the encoder for degradation pre-removal is critical. Without this step, the encoder may misinterpret degraded content, introducing artifacts.

Figure 9: Encoder-based degradation pre-removal mitigates artifacts and improves restoration quality.

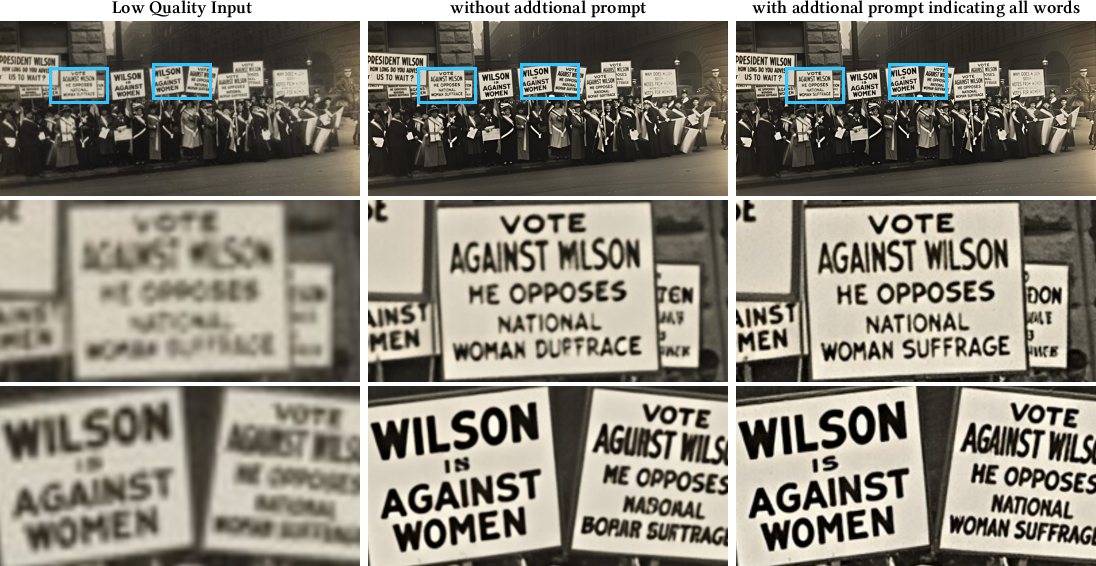

Controllability and User Interaction

HYPIR inherits the controllability of diffusion models, supporting:

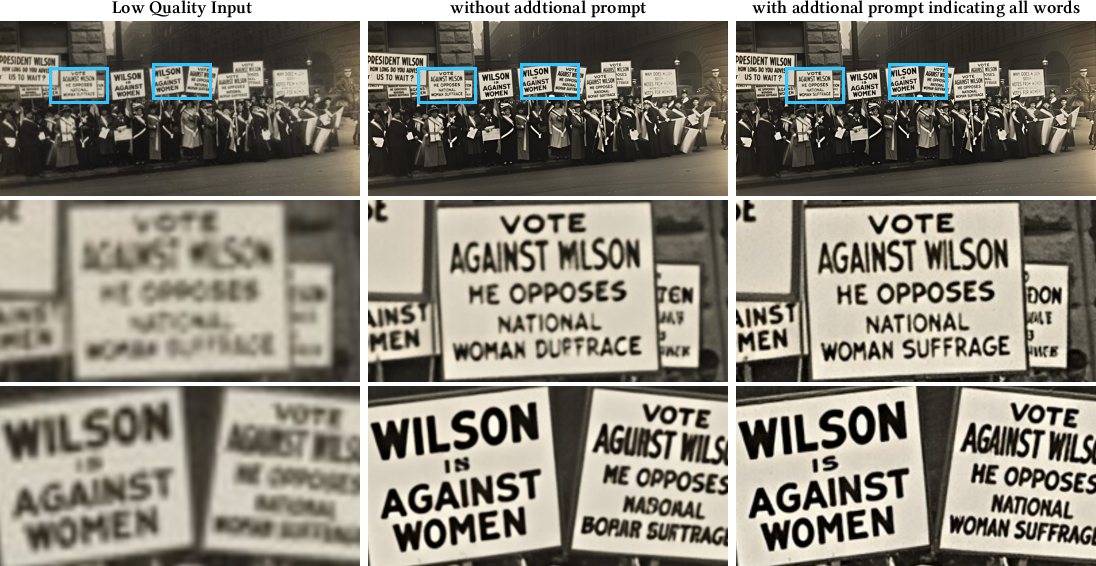

- Text-guided restoration: Textual prompts can guide restoration, especially for ambiguous or textual content.

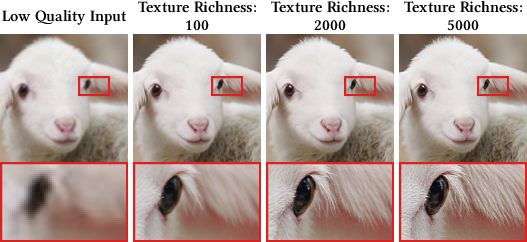

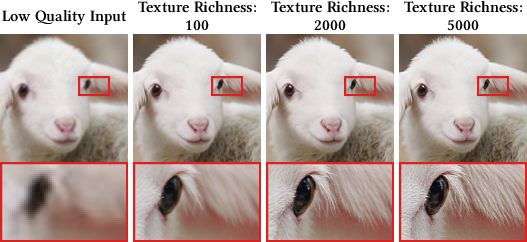

- Texture richness adjustment: Users can control the global texture density via a Laplacian-based metric.

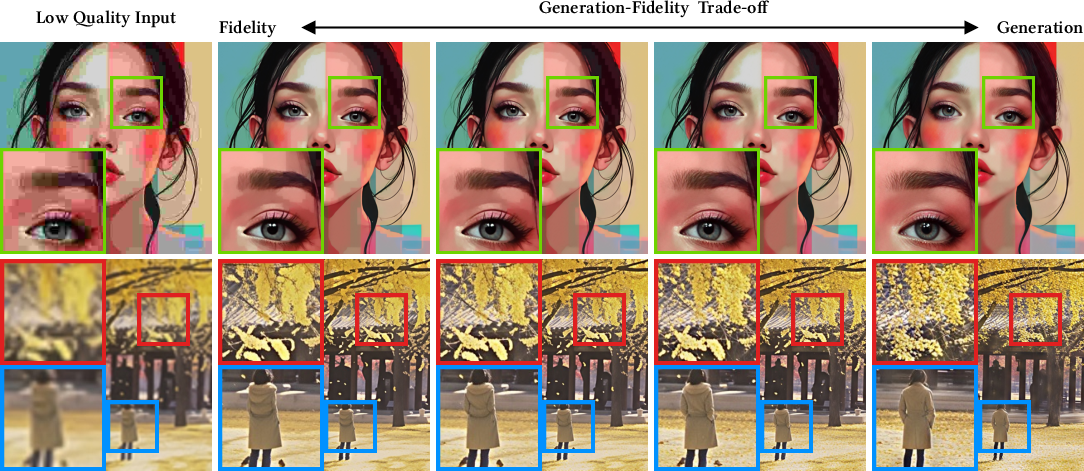

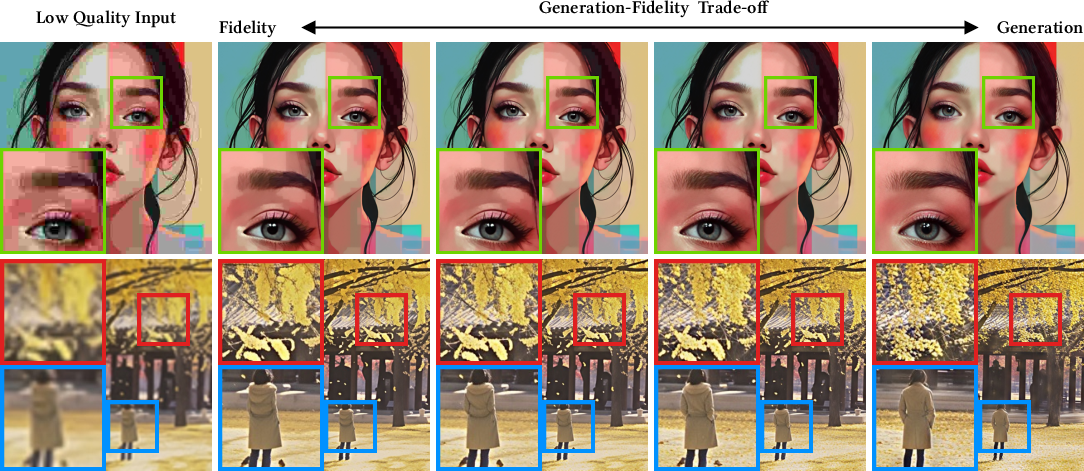

- Generativity-fidelity trade-off: Artificial noise injection allows users to balance strict fidelity against generative enhancement.

- Random sampling: Diverse plausible restorations can be generated by varying the noise input.
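
The texture richness control above is described only as Laplacian-based. One plausible way to measure texture density is the mean absolute response of a discrete Laplacian, sketched below. The exact metric used by HYPIR, and how its value is fed to the model as a control signal, are not specified here, so the function name and definition are assumptions.

```python
def laplacian_texture_score(image):
    """Mean absolute response of the 4-neighbour Laplacian over interior pixels.

    `image` is a 2D list of grayscale intensities. Higher scores indicate
    denser high-frequency texture; a flat image scores 0.
    """
    height, width = len(image), len(image[0])
    total, count = 0.0, 0
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            response = (image[y - 1][x] + image[y + 1][x]
                        + image[y][x - 1] + image[y][x + 1]
                        - 4 * image[y][x])
            total += abs(response)
            count += 1
    return total / count if count else 0.0
```

A constant image scores 0, while a one-pixel checkerboard of 0s and 1s scores 4, the maximum for that intensity range.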

Figure 10: HYPIR supports text prompts, texture richness adjustment, generativity-fidelity trade-off, and random sampling.

Figure 11: Text correction via prompts enables accurate, semantically faithful restoration of degraded text.

Figure 12: Generativity-fidelity trade-off: higher generative ratios synthesize realistic textures in heavily degraded regions.

Figure 13: Texture richness parameter enables intuitive stylistic adjustments.

Figure 14: Random sampling produces diverse restoration results from the same degraded input.
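
The generativity-fidelity trade-off and random sampling both rely on injecting noise into the generator's input. A minimal sketch of that idea follows, assuming additive Gaussian noise applied to a flattened latent. The function name, the `strength` parameter, and the additive form are hypothetical, since the exact injection scheme is not described here.

```python
import random

def inject_noise(latent, strength, seed=None):
    """Add Gaussian noise of standard deviation `strength` to a flat latent.

    strength = 0 returns the latent values unchanged (maximum fidelity);
    larger values give the generator more freedom to synthesize detail.
    Different seeds yield different noise, hence different restorations.
    """
    if strength < 0:
        raise ValueError("strength must be non-negative")
    rng = random.Random(seed)
    return [z + strength * rng.gauss(0.0, 1.0) for z in latent]
```

Fixing the seed makes a result reproducible, and varying it while keeping `strength` constant corresponds to drawing several plausible restorations of the same input.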

Experimental Results

Quantitative and Qualitative Evaluation

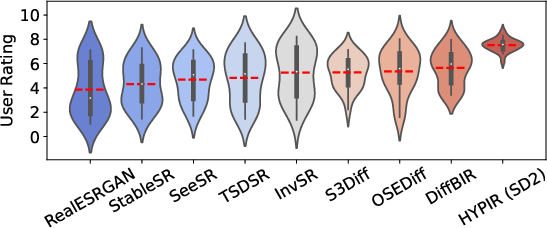

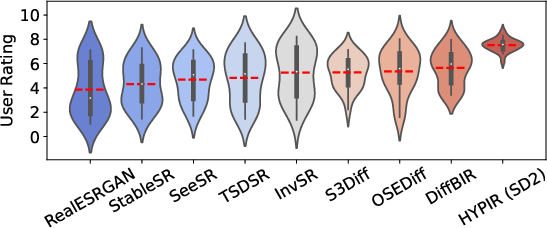

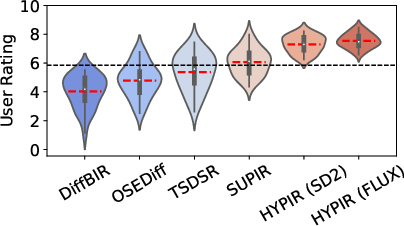

HYPIR is evaluated on synthetic (DIV2K) and real-world (RealPhoto60, RealLR200) datasets. It consistently outperforms or matches state-of-the-art methods in both perceptual user studies and objective IQA metrics. Notably, HYPIR achieves these results with a single forward pass, in contrast to the multi-step inference required by diffusion-based methods.

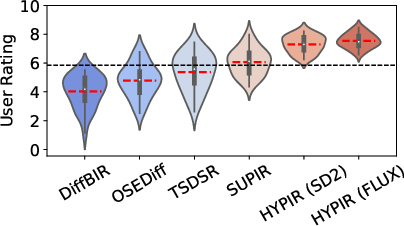

Figure 15: User study results: HYPIR achieves the highest perceptual ratings among lightweight and large-scale models.

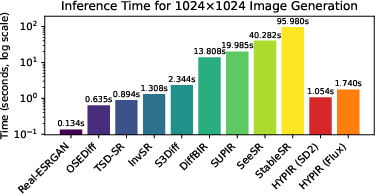

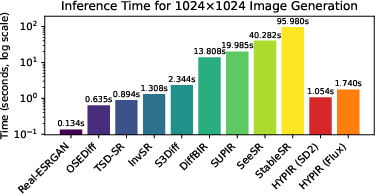

Figure 16: Inference time comparison. HYPIR matches single-step models in speed, even with large models, while delivering superior quality.

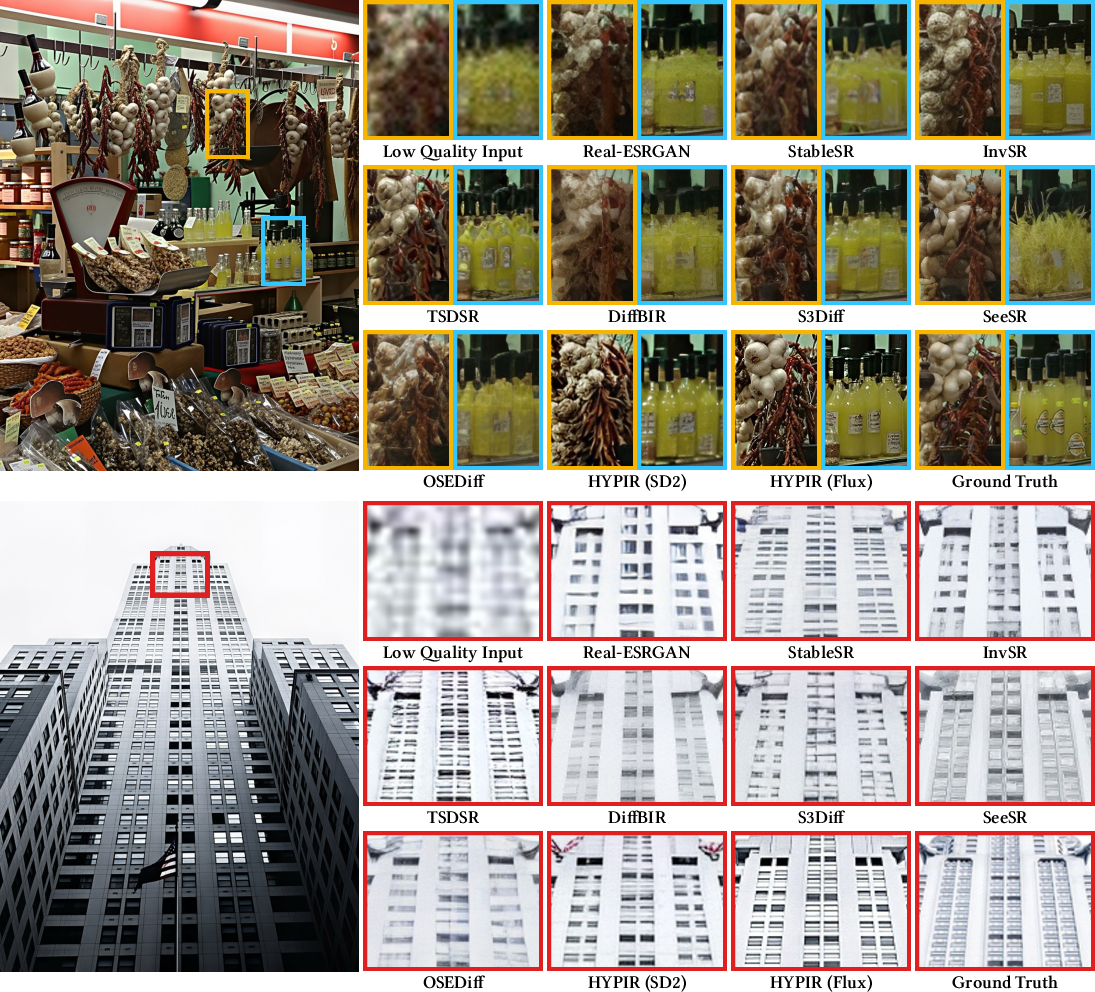

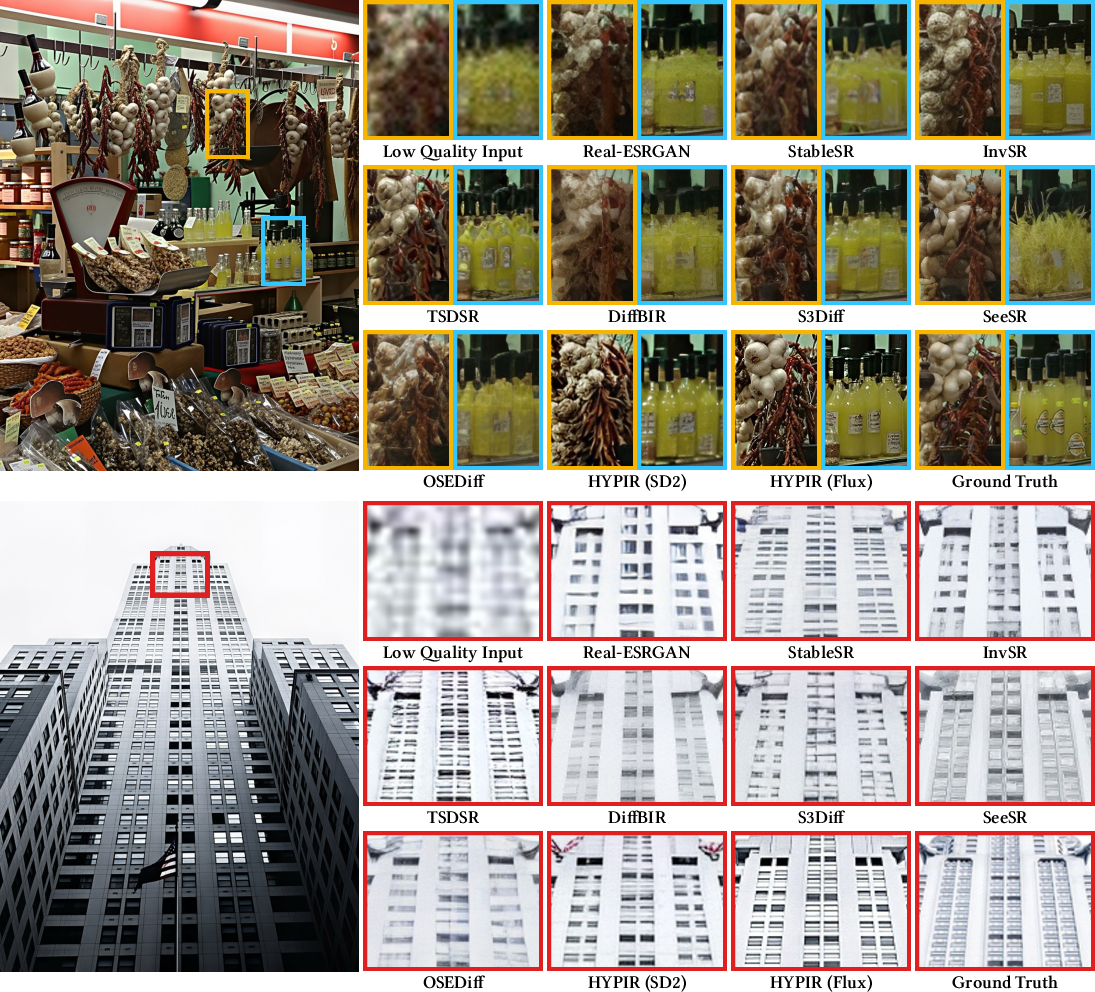

Figure 17: Qualitative comparison on synthetic data. HYPIR restores fine details missed by other methods.

Figure 18: Restoration of real-world images, recovering facial features, text, and architectural details.

Figure 19: Restoration of century-old photographs at 4K/6K resolution, preserving fine details and authentic textures.

Ablation Studies

Ablations confirm the necessity of each component.

Implications and Future Directions

HYPIR demonstrates that diffusion models, when used as initialization for adversarial training, can overcome the limitations of both GANs and diffusion-based restoration. The approach achieves a favorable balance between perceptual quality, fidelity, and computational efficiency. Theoretically, this work bridges the gap between score-based generative modeling and adversarial learning, suggesting new avenues for hybrid generative models.

Practically, HYPIR enables scalable, high-quality restoration for large images and real-world degradations, with user-controllable outputs. The method is extensible to other conditional generation tasks, such as inpainting, deblurring, and domain adaptation.

Future research may explore:

- Further integration of diffusion and adversarial objectives during joint training.

- Application to video restoration and other modalities.

- Improved user interaction mechanisms for fine-grained control.

Conclusion

HYPIR provides a principled and practical solution to image restoration by harnessing diffusion-yielded score priors for initialization and adversarial fine-tuning for refinement. The method achieves rapid convergence, numerical stability, and state-of-the-art restoration quality, while supporting rich user control and efficient inference. This work establishes a new paradigm for leveraging large-scale generative models in low-level vision tasks, with broad implications for both theory and application.