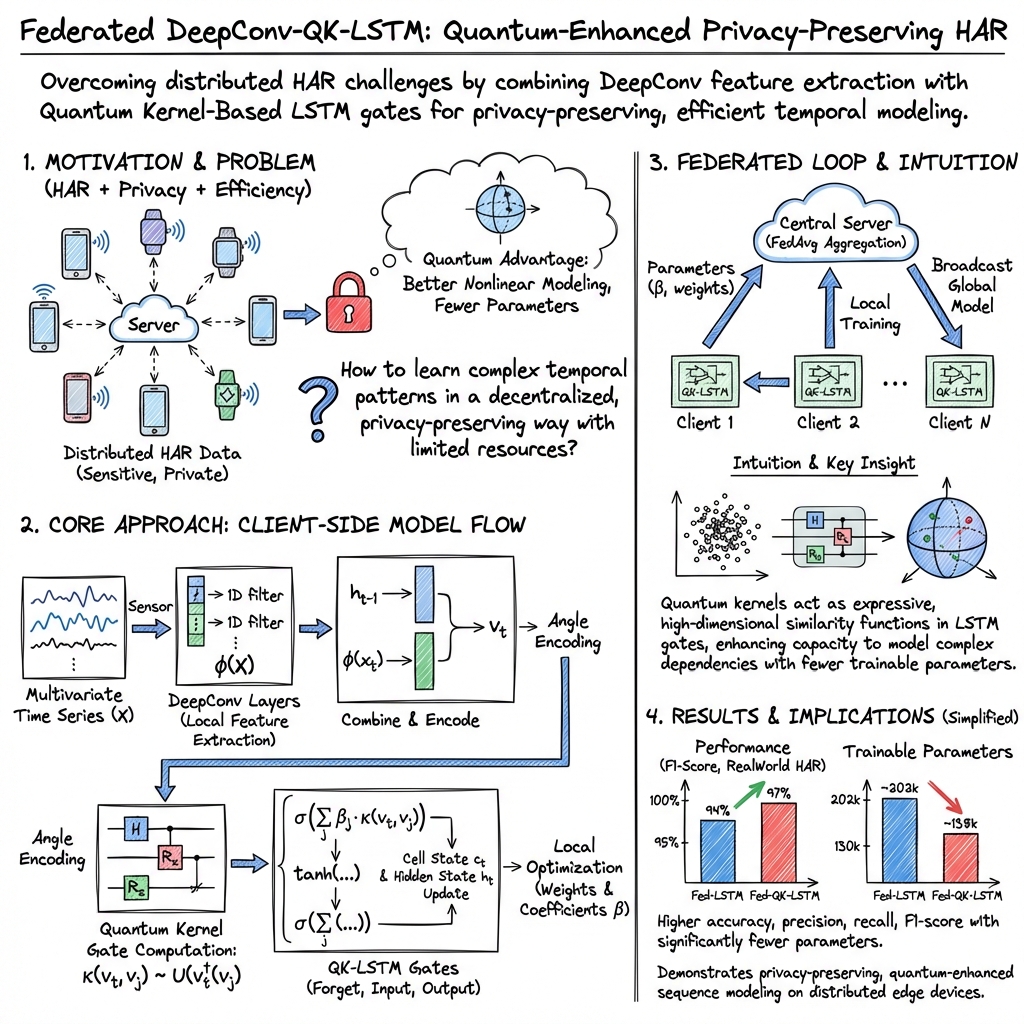

- The paper introduces a novel Fed-QK-LSTM framework that combines quantum kernel methods with LSTM to improve human activity recognition (HAR) while preserving data privacy.

- It uses deep convolutional layers to extract local patterns, quantum kernels to reduce the number of trainable parameters, and federated learning to train across clients without pooling their data.

- Experimental results on the RWHAR dataset show improved accuracy, precision, and recall compared to classical federated LSTM models.

Federated Quantum Kernel-Based Long Short-term Memory for Human Activity Recognition

Introduction

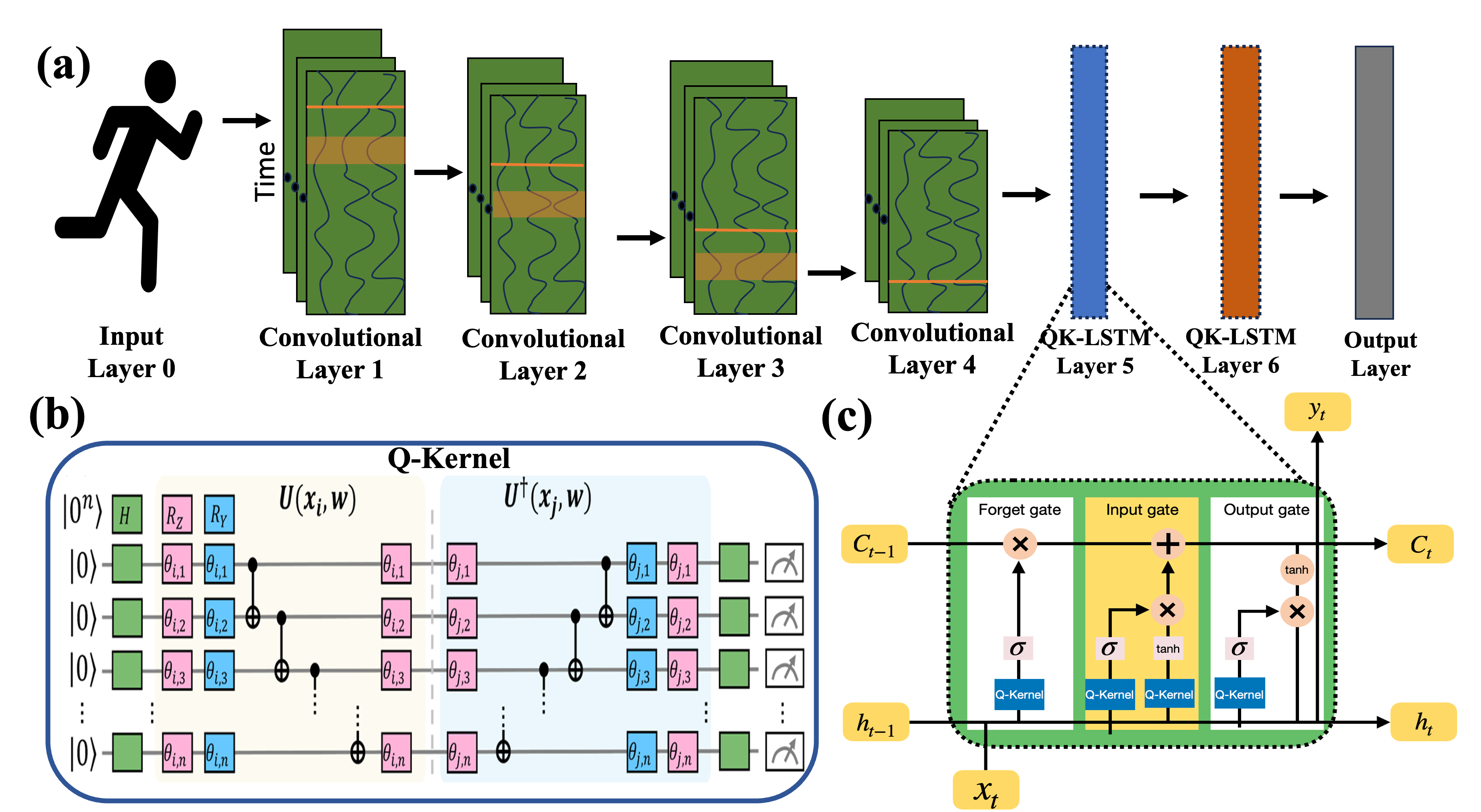

The paper "Federated Quantum Kernel-Based Long Short-term Memory for Human Activity Recognition" (2508.06078) introduces the Fed-QK-LSTM framework, which integrates quantum kernel methods with LSTM in a federated learning setting to improve Human Activity Recognition (HAR). The authors aim to build a robust, privacy-preserving system by applying quantum kernels within distributed learning. The proposed architecture combines classical deep learning with quantum computing to model complex relationships in HAR data efficiently.

Long Short-Term Memory

Classical Long Short-Term Memory

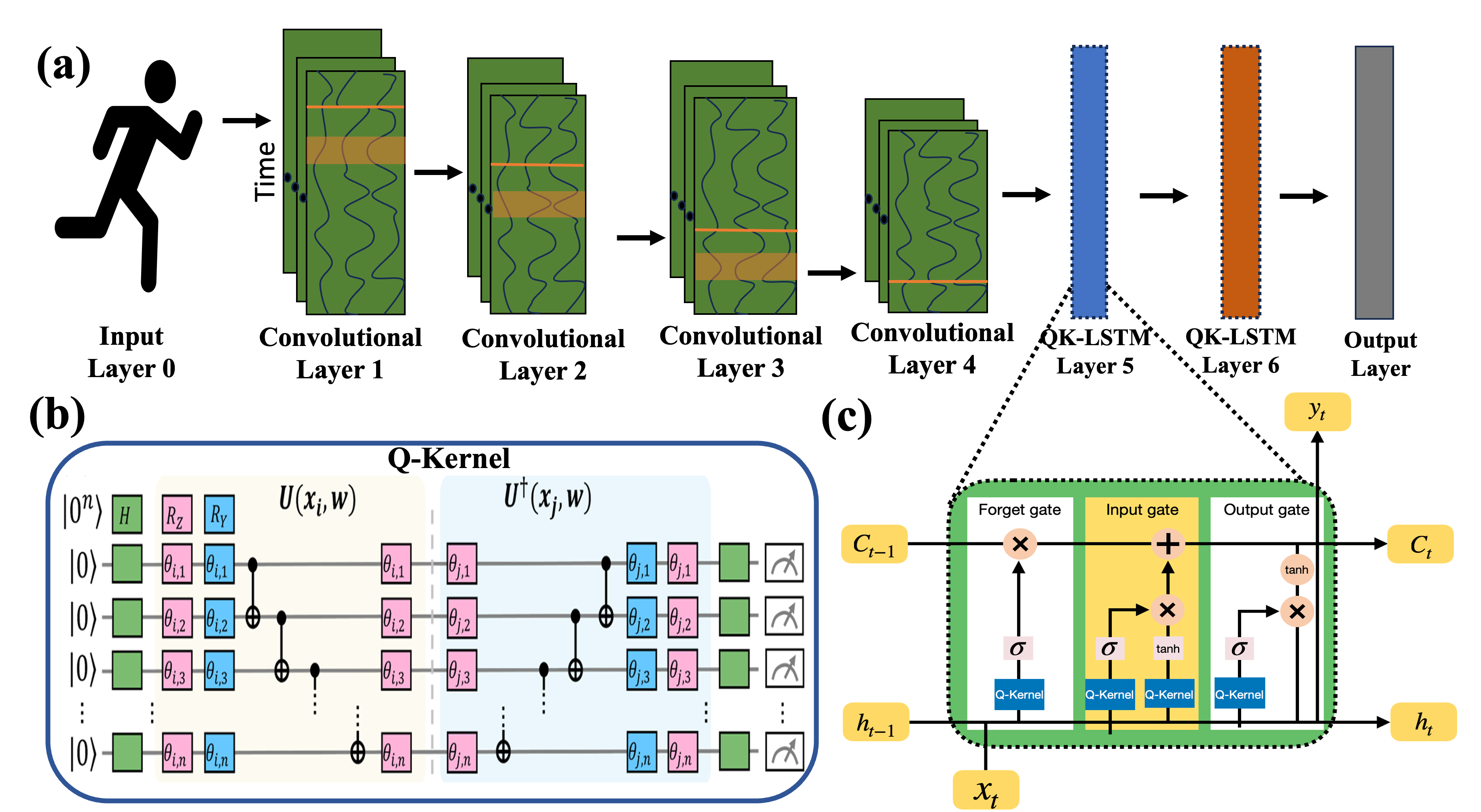

LSTM networks have significantly advanced the modeling of sequential data, offering stability in learning long-term dependencies through gate mechanisms. These networks excel in diverse applications like time-series forecasting and natural language processing. In this study, LSTM networks are applied to HAR tasks, effectively capturing short-term and long-term dependencies within multivariate sensor signals.
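The gate mechanism can be made concrete with one time step of a standard LSTM cell. The following is a minimal sketch in plain Python. The helper names (`affine`, `lstm_step`), the parameter layout, and the toy weights are illustrative assumptions and are not taken from the paper.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, v, b):
    """Return W @ v + b for a matrix W given as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + b_k for row, b_k in zip(W, b)]

def lstm_step(x, h_prev, c_prev, params):
    """One step of a standard LSTM cell.

    params maps each gate name ('f', 'i', 'o', 'g') to a (W, b) pair,
    where W acts on the concatenation v = [h_prev, x].
    """
    v = h_prev + x  # list concatenation of hidden state and input
    pre = {name: affine(W, v, b) for name, (W, b) in params.items()}
    f = [sigmoid(z) for z in pre["f"]]      # forget gate: how much old memory to keep
    i = [sigmoid(z) for z in pre["i"]]      # input gate: how much new content to write
    o = [sigmoid(z) for z in pre["o"]]      # output gate: how much memory to expose
    g = [math.tanh(z) for z in pre["g"]]    # candidate cell content
    c = [f_k * c_k + i_k * g_k for f_k, c_k, i_k, g_k in zip(f, c_prev, i, g)]
    h = [o_k * math.tanh(c_k) for o_k, c_k in zip(o, c)]
    return h, c

# Toy example (hypothetical sizes): 3 input channels, 2 hidden units, all-zero weights.
hidden, inputs = 2, 3
zero = ([[0.0] * (hidden + inputs) for _ in range(hidden)], [0.0] * hidden)
params = {name: zero for name in "fiog"}
h, c = lstm_step([0.1, 0.2, 0.3], [0.0, 0.0], [1.0, -1.0], params)
print(c)  # [0.5, -0.5]
```

With all-zero weights every gate evaluates to 0.5 and the candidate content is 0, so the cell state is simply halved. Trained weights let the forget gate stay close to 1 for information that must persist, which is what allows long-term dependencies to survive many time steps.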

Quantum Kernel-Based Long Short-Term Memory

QK-LSTM combines classical LSTM networks with quantum kernel methods, which measure the similarity between inputs in the high-dimensional feature space induced by a quantum circuit. The QK-LSTM model uses fewer trainable parameters than a classical LSTM while retaining the gated temporal modeling needed for HAR applications.
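To illustrate what a quantum kernel computes, the sketch below assumes a simple feature map: each input value is encoded as an RY rotation angle on its own qubit. For that encoding the fidelity kernel has the closed form k(x, y) = prod_i cos^2((x_i - y_i) / 2), so it can be evaluated without a quantum simulator. The feature map, the function names, and the kernel-weighted gate form sigma(sum_j alpha_j k(v, v_j) + b) are all assumptions made for illustration; this summary does not specify the circuit or the exact gate formulation used in the paper.

```python
import math

def quantum_kernel(x, y):
    """Fidelity kernel |<phi(y)|phi(x)>|^2 for an assumed RY angle encoding."""
    overlap = 1.0
    for a, b in zip(x, y):
        # Single-qubit overlap of RY(a)|0> and RY(b)|0> is cos((a - b) / 2).
        overlap *= math.cos((a - b) / 2.0)
    return overlap ** 2

def kernel_gate(v, references, alphas, bias):
    """Gate activation sigma(sum_j alpha_j * k(v, v_j) + bias) (assumed form)."""
    z = sum(a * quantum_kernel(v, r) for a, r in zip(alphas, references)) + bias
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical reference vectors and coefficients.
references = [[0.0, 0.0], [math.pi, 0.0]]
alphas = [1.0, -1.0]

print(quantum_kernel([0.3, 0.7], [0.3, 0.7]))  # identical inputs give 1.0
print(kernel_gate([0.0, 0.0], references, alphas, bias=0.0))
```

In this sketch the trainable quantities are the coefficients `alphas` and the bias, so their count depends on the number of reference vectors rather than on the input dimension.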

DeepConv-QK-LSTM

Deep convolutional layers precede the QK-LSTM layer. They extract local temporal patterns from the HAR signals and reduce the dimensionality of the input before it reaches the recurrent layer. The QK-LSTM layer then models longer-range temporal structure, so the architecture combines historical context with deep local features.

Figure 1: Illustration of the DeepConv-QK-LSTM model.
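The role of the convolutional front end can be sketched with a single strided 1D convolution over a multichannel sensor window. The example below is written in plain Python; the function name `conv1d`, the filter values, the stride, and the three-channel toy signal are hypothetical and are not the paper's configuration.

```python
def conv1d(series, kernels, stride=1):
    """Valid 1D convolution (cross-correlation) followed by ReLU.

    series:  list of time steps, each a list of channel values
    kernels: list of filters, each shaped [width][channels]
    Returns a list of time steps, each holding one value per filter.
    """
    width = len(kernels[0])
    out = []
    for start in range(0, len(series) - width + 1, stride):
        window = series[start:start + width]
        step = []
        for kernel in kernels:
            s = sum(w * x
                    for k_row, x_row in zip(kernel, window)
                    for w, x in zip(k_row, x_row))
            step.append(max(0.0, s))  # ReLU
        out.append(step)
    return out

# Toy signal: 8 time steps, 3 channels (channel 0 ramps, channel 2 is constant).
series = [[float(t), 0.0, 1.0] for t in range(8)]
kernels = [
    [[-1.0, 0.0, 0.0], [1.0, 0.0, 0.0]],  # slope of channel 0
    [[0.0, 0.0, 0.5], [0.0, 0.0, 0.5]],   # local mean of channel 2
]
features = conv1d(series, kernels, stride=2)
print(len(series), "->", len(features))  # 8 -> 4
```

The stride of 2 halves the sequence length, so a recurrent layer placed after this stage processes fewer time steps, each of which already summarizes a short local window.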

Federated Learning

Classical Federated Learning

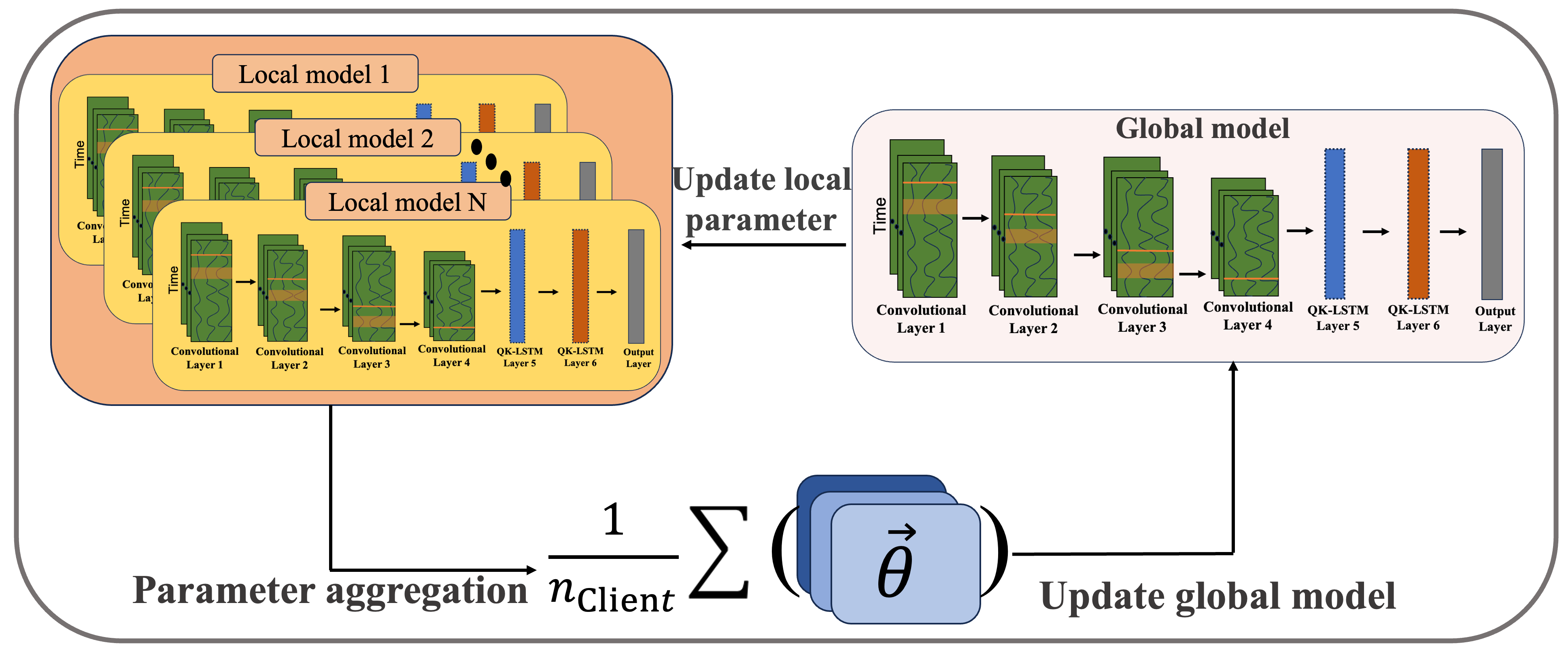

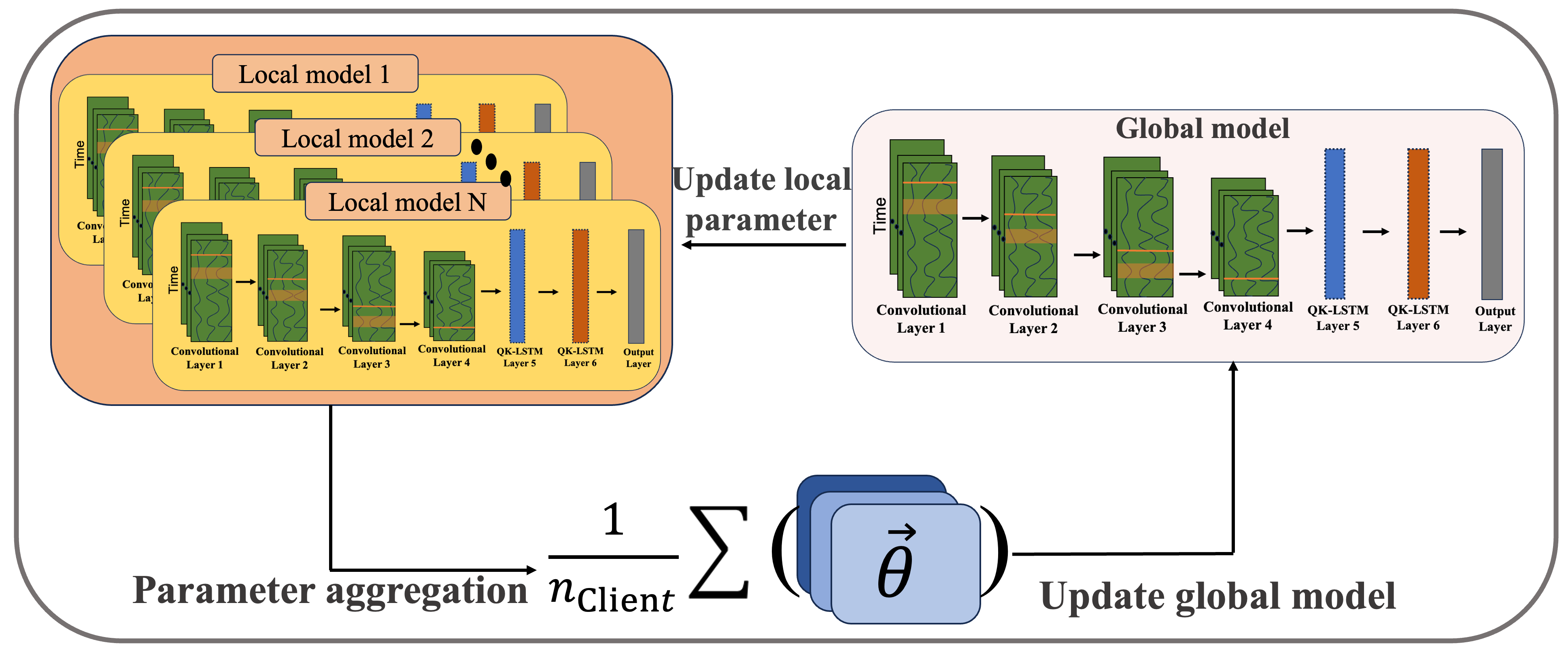

Federated learning is a decentralized approach for training models collaboratively across multiple clients while preserving data privacy. The FedAvg algorithm synchronizes the model by having a server average the clients' local model updates, and it has proven effective in applications with heterogeneous data.
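The aggregation step of FedAvg can be sketched in a few lines. The version below weights each client by its number of local samples, which is the usual formulation of FedAvg; the function name `fed_avg` and the toy numbers are illustrative assumptions.

```python
def fed_avg(client_weights, client_sizes):
    """Average client parameter vectors, weighted by local sample counts."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[k] * n for w, n in zip(client_weights, client_sizes)) / total
        for k in range(dim)
    ]

# Two hypothetical clients holding 1 and 3 samples respectively.
global_weights = fed_avg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)  # [2.5, 3.5]
```

Only the parameter vectors and sample counts reach the server. The raw training data stays on each client.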

Federated QK-LSTM

The Fed-QK-LSTM framework places DeepConv-QK-LSTM models inside a federated learning environment, enabling quantum-enhanced decentralized learning. Each client computes quantum kernel values locally, so model parameters are optimized without raw data leaving the client. This hybrid approach combines the expressiveness of quantum computing with the privacy-preserving nature of federated learning.

Figure 2: Schematic representation of the Fed-QK-LSTM.
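A complete federated round with locally computed kernel values might look like the sketch below. It is a deliberately simplified stand-in: a kernel logistic classifier replaces the full DeepConv-QK-LSTM network, the kernel is the closed-form fidelity of an assumed RY angle encoding, and the reference vectors are assumed to be shared by all clients. All names and data values are hypothetical.

```python
import math

def kernel(x, y):
    """Fidelity kernel for an assumed RY angle encoding (closed form)."""
    prod = 1.0
    for a, b in zip(x, y):
        prod *= math.cos((a - b) / 2.0)
    return prod ** 2

def predict(x, alphas, references):
    z = sum(a * kernel(x, r) for a, r in zip(alphas, references))
    return 1.0 / (1.0 + math.exp(-z))

def local_update(alphas, data, references, lr=0.5, epochs=5):
    """Client-side training: SGD on the logistic loss over kernel features."""
    alphas = list(alphas)  # copy so the global parameters are not mutated
    for _ in range(epochs):
        for x, label in data:
            feats = [kernel(x, r) for r in references]  # computed on the client
            p = predict(x, alphas, references)
            alphas = [a - lr * (p - label) * f for a, f in zip(alphas, feats)]
    return alphas

def federated_round(global_alphas, clients, references):
    """Each client trains locally; the server averages the returned parameters."""
    updates = [local_update(global_alphas, data, references) for data in clients]
    sizes = [len(data) for data in clients]
    total = sum(sizes)
    return [
        sum(u[k] * n for u, n in zip(updates, sizes)) / total
        for k in range(len(global_alphas))
    ]

# Toy setup: class 1 lies near [0, 0], class 0 near [pi, pi].
references = [[0.0, 0.0], [math.pi, math.pi]]
clients = [
    [([0.0, 0.1], 1), ([3.1, 3.0], 0)],
    [([0.2, 0.0], 1), ([3.0, 3.2], 0), ([0.1, 0.1], 1)],
]

alphas = [0.0, 0.0]
for _ in range(3):
    alphas = federated_round(alphas, clients, references)

print(predict([0.1, 0.1], alphas, references) > 0.5)  # True
print(predict([3.0, 3.0], alphas, references) < 0.5)  # True
```

The server only ever sees the coefficient vectors returned by `local_update`. The samples in `clients` are read exclusively inside the client-side function.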

Numerical Results and Discussion

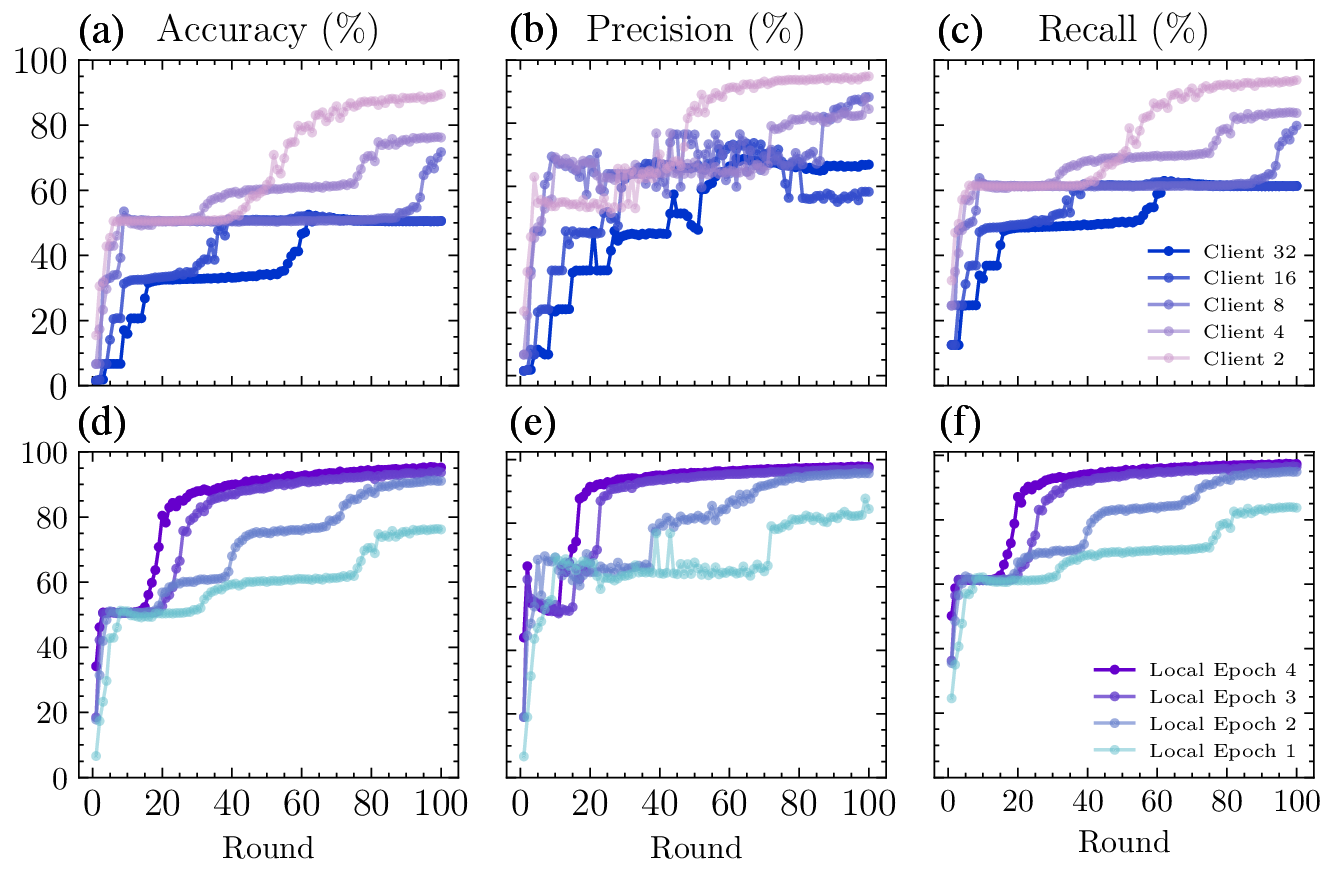

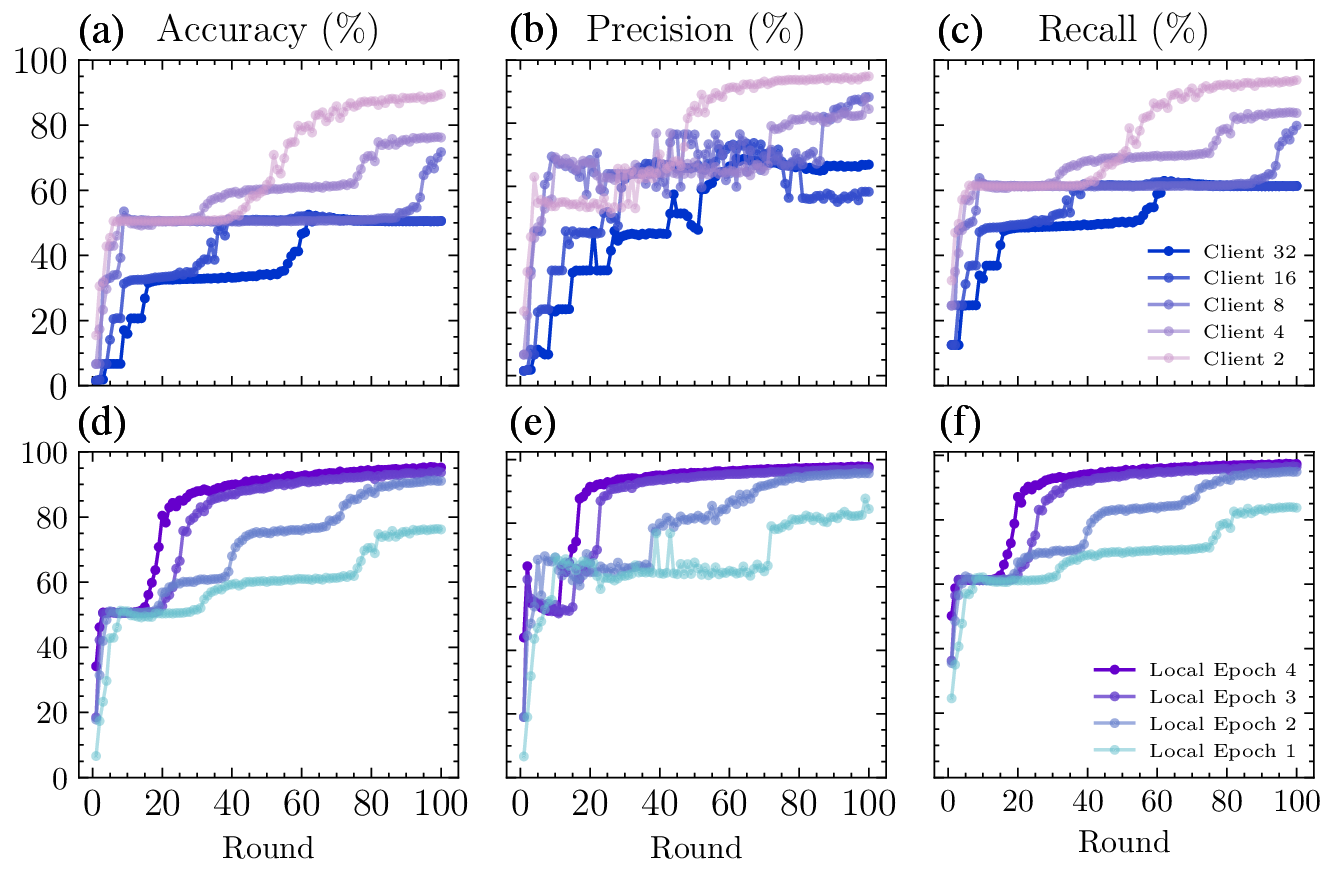

The study evaluates Fed-QK-LSTM on the RWHAR dataset, varying the number of clients and the number of local epochs to assess performance. The results show high accuracy, and increasing the number of clients initially improves learning. Fed-QK-LSTM consistently outperforms classical federated LSTM models in accuracy, precision, and recall, while using fewer trainable parameters.

Figure 3: Experimental results of federated learning with different numbers of clients and different numbers of local epochs per client.

Table 1 compares the performance of Fed-QK-LSTM and Fed-LSTM models, illustrating the quantum model’s superior accuracy, precision, recall, and reduced parameter count.

Conclusion

The Fed-QK-LSTM framework successfully integrates quantum kernel methods into LSTM networks within a federated learning environment, enhancing HAR tasks. This approach mitigates data privacy concerns while improving learning efficiency. The results validate quantum machine learning's applicability in real-world scenarios, paving the way for scalable implementations in privacy-sensitive distributed systems.

The study's findings suggest that quantum-enhanced federated learning can deliver competitive results with fewer parameters, a step toward practical deployment of quantum machine learning.