- The paper introduces DP-SPRT, which embeds differential privacy into the SPRT by injecting Laplace or Gaussian noise to protect sensitive data during sequential testing.

- The novel OutsideInterval mechanism adds coordinated noise to both the test statistic and the decision thresholds, giving tighter privacy guarantees while keeping Type I and Type II error probabilities within their specified limits.

- Experimental evaluations show that DP-SPRT achieves lower error rates and better sample efficiency than existing methods such as PrivSPRT.

DP-SPRT: Differentially Private Sequential Probability Ratio Tests

Introduction

The paper brings differential privacy to sequential hypothesis testing through the Differentially Private Sequential Probability Ratio Test (DP-SPRT). The Sequential Probability Ratio Test (SPRT), originally proposed by Wald, is known for its statistical efficiency: it reaches a decision with a small number of samples. When the data are sensitive, however, a standard SPRT can leak information about the individual data points that drive its decision.
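As background, the classical (non-private) SPRT can be sketched in a few lines. This is an illustrative sketch for a Bernoulli model with Wald's standard approximate thresholds; the function name `sprt_bernoulli` and its parameters are assumptions made for this example and do not come from the paper.

```python
import math


def sprt_bernoulli(samples, p0, p1, alpha, beta):
    """Non-private Wald SPRT for H0: p = p0 versus H1: p = p1.

    Returns (decision, number_of_samples_used).
    """
    upper = math.log((1 - beta) / alpha)  # crossing above -> accept H1
    lower = math.log(beta / (1 - alpha))  # crossing below -> accept H0
    llr = 0.0  # running log-likelihood ratio
    n = 0
    for n, x in enumerate(samples, start=1):
        if x:
            llr += math.log(p1 / p0)
        else:
            llr += math.log((1 - p1) / (1 - p0))
        if llr >= upper:
            return "H1", n
        if llr <= lower:
            return "H0", n
    return "undecided", n  # data ran out before a threshold was crossed


if __name__ == "__main__":
    print(sprt_bernoulli([1, 1, 1, 1, 1], p0=0.3, p1=0.7, alpha=0.05, beta=0.05))
```

The test stops as soon as the running log-likelihood ratio leaves the interval between the two thresholds, which is why both the stopping time and the decision depend on individual observations.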

To address this privacy concern, the authors propose a differential privacy mechanism compatible with SPRT. Differential privacy, a robust framework for safeguarding individual data points during analysis, is well-researched in fixed-sample settings but has seen limited application in the context of sequential analysis until now.

Differential Privacy in Sequential Testing

The paper builds on existing work in differential privacy, notably the AboveThreshold mechanism and Rényi differential privacy, to make sequential hypothesis testing private. It does so by perturbing the SPRT's decision rule with Laplace or Gaussian noise.
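For readers unfamiliar with AboveThreshold, the following is a minimal sketch of its standard textbook form with Laplace noise, not the paper's construction. The names `laplace` and `above_threshold` are hypothetical, and the noise scales (2Δ/ε on the threshold, 4Δ/ε on each query) follow the usual presentation for queries of sensitivity Δ.

```python
import random


def laplace(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale`
    # is Laplace-distributed with location 0 and that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def above_threshold(query_values, threshold, epsilon, sensitivity=1.0, rng=None):
    """Return the index of the first query whose noisy value reaches the
    noisy threshold, or None if no query does."""
    if rng is None:
        rng = random.Random()
    # The threshold is perturbed once, before any query is examined.
    noisy_threshold = threshold + laplace(2 * sensitivity / epsilon, rng)
    for i, q in enumerate(query_values):
        # Each query receives fresh, independent noise.
        if q + laplace(4 * sensitivity / epsilon, rng) >= noisy_threshold:
            return i
    return None


if __name__ == "__main__":
    print(above_threshold([0.0, 1.0, 5.0, 10.0], threshold=4.5, epsilon=1.0,
                          rng=random.Random(0)))
```

The privacy cost is paid once for the whole stream rather than once per query, which is what makes this mechanism a natural starting point for a test that monitors a statistic until it crosses a boundary.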

A novel contribution of the paper is the "OutsideInterval" mechanism, which adds noise to both the test statistic and the decision thresholds in a coordinated manner. Unlike approaches that handle each threshold crossing separately, it yields tighter privacy guarantees.
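The idea of stopping when a noisy statistic leaves a noisy interval can be illustrated as follows. This is only a sketch under stated assumptions: this summary does not give the paper's noise calibration or say how the two thresholds are coupled, so the single shared threshold draw `z`, the parameters `threshold_scale` and `stat_scale`, and the function name `outside_interval_sketch` are all hypothetical.

```python
import random


def laplace(scale, rng):
    # Difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def outside_interval_sketch(stats, lower, upper, threshold_scale, stat_scale, rng=None):
    """Stop the first time a noisy statistic leaves a noisy interval.

    Returns (side, n), where side is "upper", "lower", or None.
    """
    if rng is None:
        rng = random.Random()
    # ASSUMPTION: one noise draw is shared by both thresholds, so the
    # interval [lower - z, upper + z] widens or narrows symmetrically.
    z = laplace(threshold_scale, rng)
    n = 0
    for n, s in enumerate(stats, start=1):
        noisy = s + laplace(stat_scale, rng)  # fresh noise at every step
        if noisy >= upper + z:
            return "upper", n
        if noisy <= lower - z:
            return "lower", n
    return None, n


if __name__ == "__main__":
    print(outside_interval_sketch([0.0, 1.5, 2.5, 4.0], lower=-3.0, upper=3.0,
                                  threshold_scale=0.5, stat_scale=1.0,
                                  rng=random.Random(1)))
```

Treating the two boundaries as one interval, rather than running two independent threshold checks, is the design choice the text credits for the tighter privacy accounting.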

The authors also present theoretical correctness guarantees for DP-SPRT, ensuring that it adheres to specified Type I and Type II error probabilities. This is accompanied by an analysis of sample complexity, revealing how privacy constraints impact the number of observations required by the test.
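For reference, the classical non-private baseline against which such a sample-complexity analysis is usually read is Wald's approximation. The notation below is assumed for this illustration and is not taken from the paper: $a$ and $b$ are the lower and upper thresholds, $N$ is the stopping time, and $P_0$, $P_1$ are the distributions under the two hypotheses. The additional cost imposed by the privacy constraint is not reproduced here.

```latex
\begin{align*}
a &= \log\frac{\beta}{1-\alpha}, \qquad b = \log\frac{1-\beta}{\alpha},\\[4pt]
\mathbb{E}_1[N] &\approx \frac{(1-\beta)\,b + \beta\,a}{\mathrm{KL}(P_1 \,\|\, P_0)},\\[4pt]
\mathbb{E}_0[N] &\approx \frac{-\bigl(\alpha\,b + (1-\alpha)\,a\bigr)}{\mathrm{KL}(P_0 \,\|\, P_1)}.
\end{align*}
```

Both expressions follow from Wald's identity, ignoring the overshoot of the log-likelihood ratio past the threshold at the stopping time.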

Experimental Evaluation

The paper reports extensive experiments comparing DP-SPRT with existing methods such as PrivSPRT, in which DP-SPRT shows better sample efficiency and privacy protection.

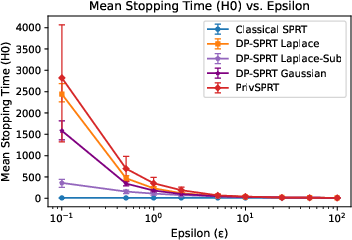

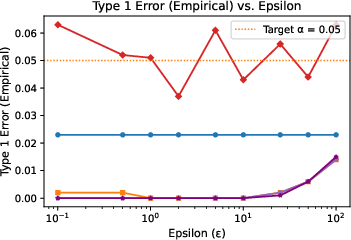

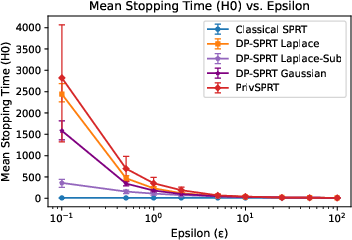

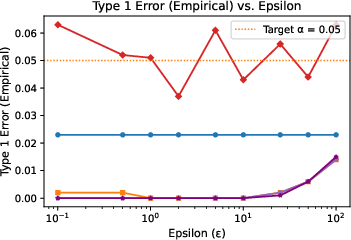

Figure 1: Experimental results for different variants: (a) Average sample size as privacy parameter varies for α=β=0.05; (b) Empirical Type I error probability vs. privacy parameter. Error bars represent 95% percentile intervals over 1000 trials.

These results indicate that DP-SPRT achieves lower error rates and requires fewer samples than the methods it is compared with, making it preferable when sequential testing must preserve privacy.

Implications and Future Directions

DP-SPRT is a significant step toward combining rigorous privacy safeguards with sequential analysis. The practical implications are substantial: it offers a framework for working safely with privacy-sensitive sequential data in medical trials, financial analyses, and other domains.

The theoretical foundations outlined suggest potential for future expansion into more complex hypothesis testing scenarios and the adaptation of privacy mechanisms into broader statistical applications. Further exploration is warranted to extend the approach to composite hypothesis testing and multi-armed bandit problems, which could benefit from the privacy-utility trade-offs explored in this work.

Conclusion

The DP-SPRT framework extends the traditional SPRT with differential privacy guarantees while preserving much of its efficiency. The work contributes to the interface of privacy and statistical decision theory and supports the safe use of sequential analysis on sensitive data.