- The paper demonstrates gradient-based universal adversarial attacks that induce partial suppression in Whisper models, and tunes the attack magnitude and snippet length to make these attacks less perceptible.

- It shows that smaller clamp limits and shorter snippets remain effective on smaller models, using metrics such as BLEU score and WER to quantify how disruptive the attacks are.

- The study highlights low-pass filter defenses as a promising mitigation strategy while noting limited transferability of attacks across different Whisper model sizes.

Adversarial Attacks on Automatic Speech Recognition Models

Introduction

The paper "Whisper Smarter, not Harder: Adversarial Attack on Partial Suppression" investigates the susceptibility of Automatic Speech Recognition (ASR) models, such as Whisper, to adversarial attacks. The authors explore the robustness and imperceptibility of these attacks and suggest improvements by loosening the strict criteria for attack success. The study also examines potential defenses against these attacks, particularly focusing on low-pass filter techniques to mitigate attack impacts.

Complete Suppression Attacks

Overview and Methodology

The study uses gradient-based training to generate universal adversarial snippets that aim for complete suppression of ASR output by pushing Whisper to emit the end-of-transcript (EOS) token prematurely. The robustness of these attacks is tested by varying the snippet's magnitude, length, and position. The paper posits that, for smaller models, the previously recommended parameters can be tuned to make the attack less perceptible without sacrificing effectiveness.
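The training idea can be illustrated with a toy sketch. It is not the paper's implementation: the real attack backpropagates through Whisper itself, whereas this sketch assumes a hypothetical linear surrogate in which the EOS logit for each utterance is a dot product between the snippet and a fixed weight vector, plus a per-utterance offset. It keeps the two ingredients that matter here: one snippet shared by every utterance (universality) and a clamp to [-ε, ε] after every gradient step (the magnitude limit).

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def eos_logit(weights, snippet, bias):
    # Hypothetical surrogate: EOS logit = <weights, snippet> + utterance-specific bias.
    return sum(w * s for w, s in zip(weights, snippet)) + bias


def mean_eos_prob(weights, biases, snippet):
    """Average probability of emitting EOS immediately, over all toy utterances."""
    return sum(sigmoid(eos_logit(weights, snippet, b)) for b in biases) / len(biases)


def train_universal_snippet(weights, biases, eps, steps=200, lr=0.05):
    """Projected gradient ascent on the mean log p(EOS) across utterances.

    A single snippet is shared by every utterance (a universal perturbation),
    and each sample is clamped to [-eps, eps] after every step.
    """
    snippet = [0.0] * len(weights)
    for _ in range(steps):
        grad = [0.0] * len(weights)
        for b in biases:
            # d/ds log sigmoid(z) = (1 - sigmoid(z)) * dz/ds, and dz/ds = weights
            coeff = (1.0 - sigmoid(eos_logit(weights, snippet, b))) / len(biases)
            for j, w in enumerate(weights):
                grad[j] += coeff * w
        # Ascent step, then projection onto the L-infinity ball of radius eps.
        snippet = [max(-eps, min(eps, s + lr * g)) for s, g in zip(snippet, grad)]
    return snippet


rng = random.Random(0)
weights = [rng.gauss(0.0, 1.0) for _ in range(64)]     # toy "model" sensitivities
biases = [rng.uniform(-4.0, -2.0) for _ in range(20)]  # toy utterances
eps = 0.05                                             # hypothetical clamp limit

snippet = train_universal_snippet(weights, biases, eps)
print(f"p(EOS) without snippet: {mean_eos_prob(weights, biases, [0.0] * 64):.3f}")
print(f"p(EOS) with snippet:    {mean_eos_prob(weights, biases, snippet):.3f}")
```

In this toy, the largest achievable EOS logit shift is `eps` times the sum of the absolute weights, so shrinking `eps` directly caps how far the attack can push the model. This gives an intuition for the magnitude/effectiveness trade-off the experiments explore. The paper's variation of snippet position has no analogue in the sketch.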

Experimental Results

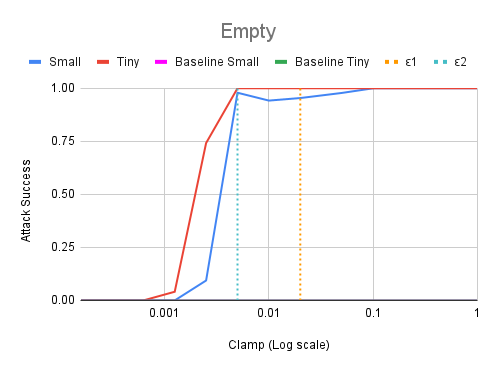

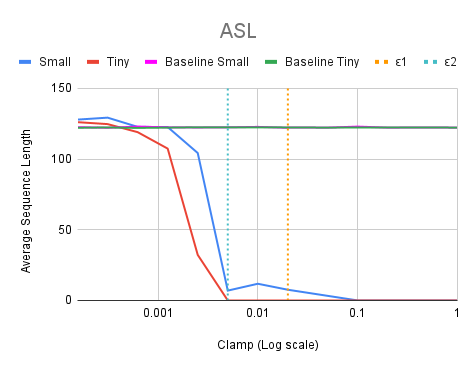

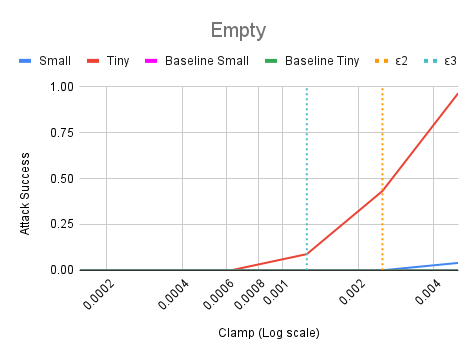

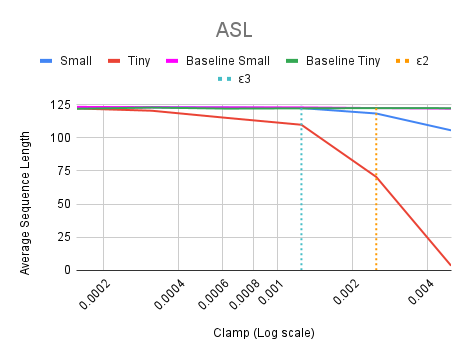

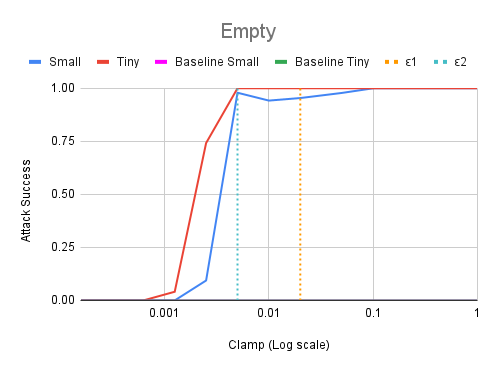

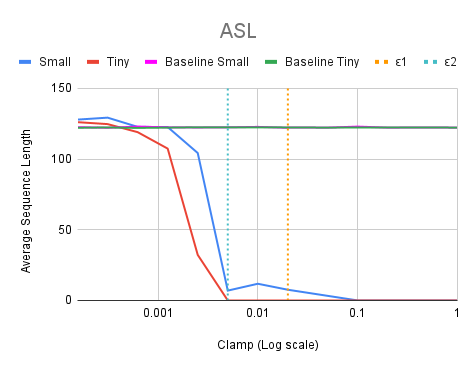

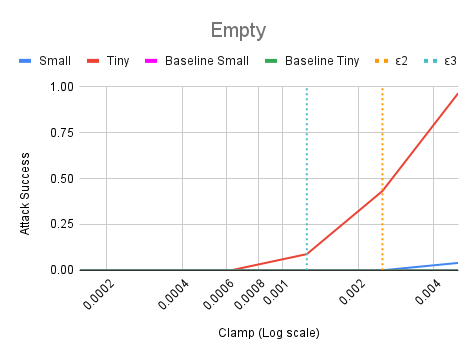

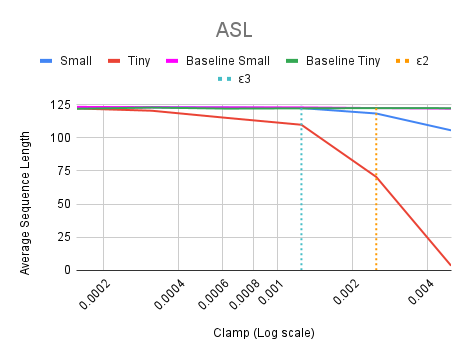

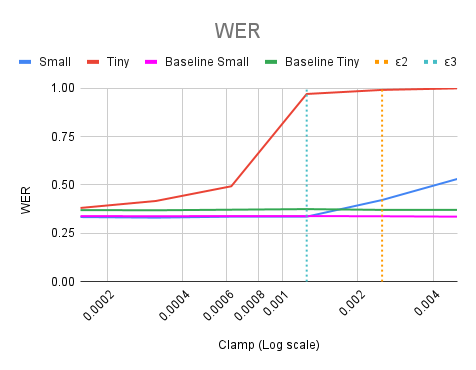

The results indicate that reducing the attack magnitude (clamp limit ε) further than previously recommended still maintains efficacy for smaller models (Figure 1).

Figure 1: The ∅ and ASL graphs over various clamp values on the logarithmic scale, with ε1 and ε2 notated as orange and blue dotted lines respectively.
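The summary does not define its two complete-suppression metrics. The sketch below assumes the reading used in earlier muting-attack work: ∅ is the percentage of utterances whose transcription is empty (EOS emitted first), and ASL is the average output sequence length in tokens. The function name and input format (lists of decoded token IDs) are hypothetical.

```python
def suppression_metrics(token_sequences):
    """Return (empty_pct, asl) for a list of decoded token-ID sequences.

    Assumed definitions (not stated in the summary):
    - empty_pct: percentage of outputs with no tokens before EOS (the "∅" metric)
    - asl: average sequence length in tokens (the "ASL" metric)
    """
    if not token_sequences:
        raise ValueError("need at least one output")
    n = len(token_sequences)
    empty = sum(1 for seq in token_sequences if len(seq) == 0)
    asl = sum(len(seq) for seq in token_sequences) / n
    return 100.0 * empty / n, asl


# Two fully muted outputs, one 3-token output, one 1-token output.
print(suppression_metrics([[], [], [5, 9, 2], [7]]))
```

Under these definitions, a perfect complete-suppression attack drives ∅ to 100% and ASL to 0, which is why the two curves are plotted together.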

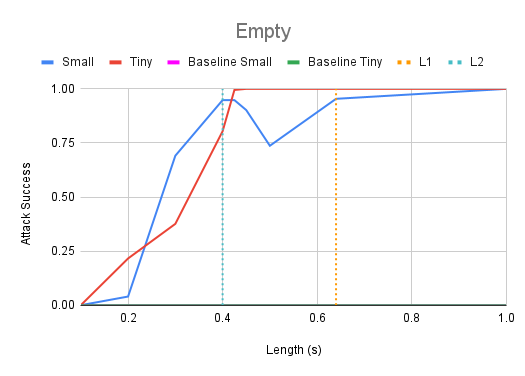

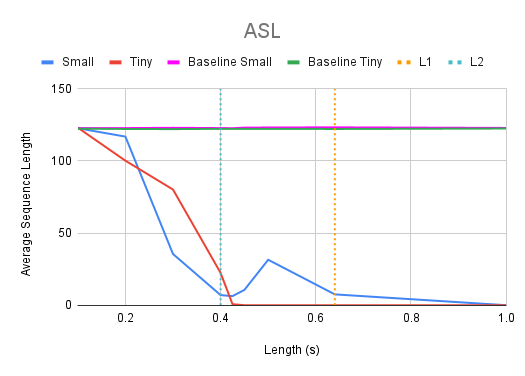

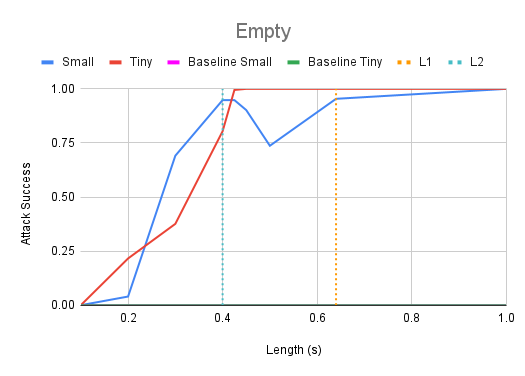

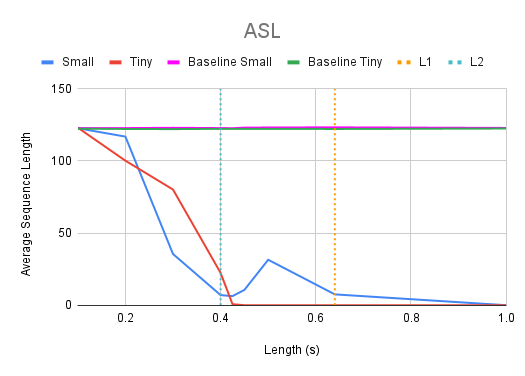

Additionally, the suggested snippet length (L=0.64s) can be reduced to around L=0.4s while preserving attack strength, as demonstrated in Figure 2.

Figure 2: The ∅ and ASL graphs over various lengths, with L1 and L2 notated as orange and blue dotted lines respectively.
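To make the snippet lengths concrete, it helps to convert them into the units Whisper actually processes. Whisper consumes 16 kHz audio and computes log-mel frames with a hop of 160 samples (10 ms). The sketch below converts the lengths discussed here (0.64 s, 0.4 s, and the 0.3 s reported later for partial suppression). The helper name is hypothetical.

```python
SAMPLE_RATE = 16_000  # Whisper consumes 16 kHz audio
HOP_LENGTH = 160      # samples between successive log-mel frames (10 ms)


def snippet_size(length_s):
    """Return (audio samples, log-mel frames) covered by a snippet of length_s seconds."""
    samples = round(length_s * SAMPLE_RATE)
    return samples, samples // HOP_LENGTH


for length in (0.64, 0.4, 0.3):
    samples, frames = snippet_size(length)
    print(f"L = {length} s -> {samples} samples, {frames} mel frames")
```

Going from 0.64 s to 0.4 s removes 3,840 samples, which makes the perturbation 37.5% shorter.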

Partial Suppression Attacks

Introduction to Partial Suppression

An alternative approach to adversarial attacks targets partial suppression, allowing some tokens to be generated rather than shutting the output down completely. This less stringent objective may make attacks more robust and less perceptible, highlighting the trade-off between attack effectiveness and audibility.

Metrics and Results

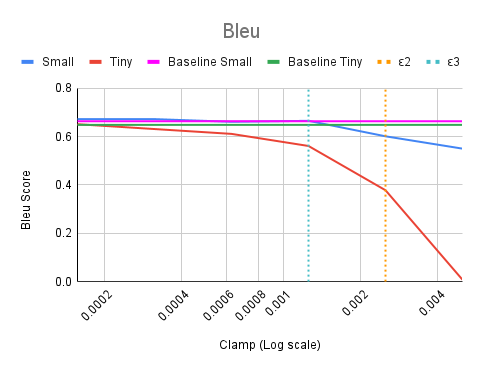

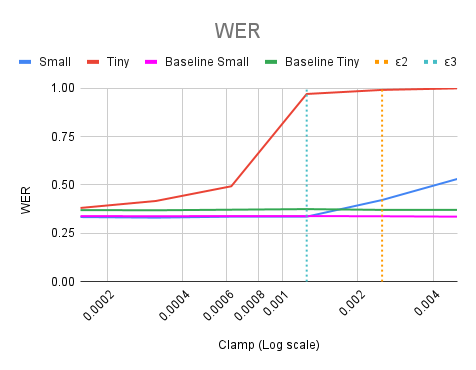

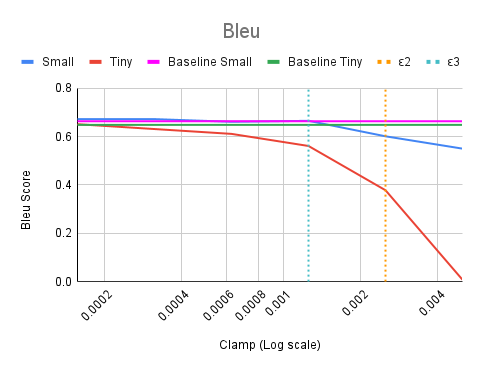

Because partial suppression does not require an empty output, new metrics (an adjusted BLEU score and WER) are adopted to measure disruptiveness instead of relying only on complete-suppression indicators. Figure 3 shows that the attacks remain strongly disruptive even with reduced clamp limits.

Figure 3: The ∅, ASL, BLEU score, and WER graphs over various clamp limits, with ε2 and ε3 notated as orange and blue dotted lines respectively.
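WER is the word-level edit distance (substitutions, deletions, and insertions) between the reference and the hypothesis, divided by the number of reference words. A transcript that is merely cut short accumulates only deletions, so its WER falls between 0 and 1, while a fully suppressed (empty) output scores exactly 1. The sketch below computes standard WER with a dynamic-programming edit distance. The summary does not say how the BLEU score was adjusted, so BLEU is not reproduced here.

```python
def word_error_rate(reference, hypothesis):
    """Standard WER: word-level Levenshtein distance / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the previous ref prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[-1] / len(ref)


ref = "the cat sat on the mat"
print(word_error_rate(ref, ref))        # clean transcript
print(word_error_rate(ref, "the cat"))  # partially suppressed: 4 deletions out of 6 words
print(word_error_rate(ref, ""))         # completely suppressed
```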

Similarly, partial suppression remains effective with a snippet length of L=0.3s, shorter than the L=0.4s that sufficed for complete suppression.

Transferability and Robustness

The paper also examines whether attacks transfer across Whisper model sizes. Both complete and partial suppression attacks transfer poorly between models of different sizes, although partial suppression yields a marginal improvement in disrupting the output.

Defense Mechanisms

Evaluated Defenses

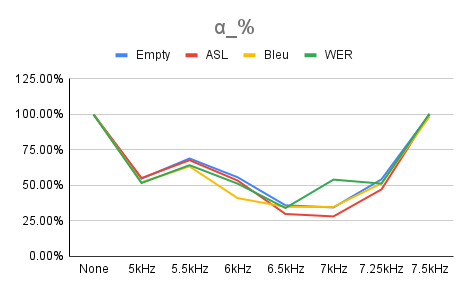

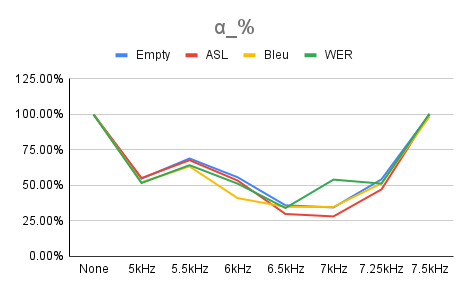

The paper evaluates basic audio defenses, in particular μ-law compression and Butterworth low-pass filters. μ-law compression proves ineffective at mitigating the attacks. By contrast, low-pass filters show promise: certain cut-off frequencies substantially reduce the attack's impact (Figure 4).

Figure 4: α% over a range of cut-off frequencies.
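Both defenses are simple signal transforms applied to the input audio before it reaches the model. The summary does not give the filter order or the μ-law parameters. The sketch below therefore assumes μ = 255 with 8-bit quantization (the usual telephony setting) and a second-order Butterworth low-pass built as a bilinear-transform biquad (the Audio EQ Cookbook low-pass with Q = 1/√2). All function names are hypothetical.

```python
import math


def mu_law_compress(x, mu=255):
    """Map x in [-1, 1] to [-1, 1] with the continuous mu-law curve."""
    return math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)


def mu_law_expand(y, mu=255):
    """Inverse of mu_law_compress."""
    return math.copysign(math.expm1(abs(y) * math.log1p(mu)) / mu, y)


def mu_law_defense(signal, mu=255, bits=8):
    """Compress, quantize to 2**bits levels, then expand each sample."""
    levels = 2 ** bits - 1
    out = []
    for x in signal:
        y = mu_law_compress(max(-1.0, min(1.0, x)), mu)
        q = round((y + 1.0) / 2.0 * levels) / levels * 2.0 - 1.0
        out.append(mu_law_expand(q, mu))
    return out


def butterworth_lowpass(signal, cutoff_hz, fs=16_000):
    """Second-order Butterworth low-pass (bilinear-transform biquad, Q = 1/sqrt(2))."""
    w0 = 2.0 * math.pi * cutoff_hz / fs
    alpha = math.sin(w0) / (2.0 * (1.0 / math.sqrt(2.0)))
    cos_w0 = math.cos(w0)
    a0 = 1.0 + alpha
    # Coefficients normalized by a0 so the recursion below needs no division.
    b0 = b2 = (1.0 - cos_w0) / 2.0 / a0
    b1 = (1.0 - cos_w0) / a0
    a1 = -2.0 * cos_w0 / a0
    a2 = (1.0 - alpha) / a0
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in signal:
        y = b0 * x + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2  # direct form I
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


def rms(signal):
    return math.sqrt(sum(s * s for s in signal) / len(signal))


fs = 16_000
low_tone = [math.sin(2 * math.pi * 200 * n / fs) for n in range(fs)]    # 200 Hz
high_tone = [math.sin(2 * math.pi * 6000 * n / fs) for n in range(fs)]  # 6 kHz
# Measure over the second half to skip the filter's start-up transient.
low_out = butterworth_lowpass(low_tone, 1000)[fs // 2:]
high_out = butterworth_lowpass(high_tone, 1000)[fs // 2:]
print(f"200 Hz gain: {rms(low_out) / rms(low_tone):.3f}")
print(f"6 kHz gain:  {rms(high_out) / rms(high_tone):.4f}")
```

Sweeping `cutoff_hz` is the kind of experiment summarized in Figure 4. For higher filter orders, SciPy's `scipy.signal.butter(N, cutoff, btype="low", fs=16000, output="sos")` combined with `scipy.signal.sosfilt` is the usual route.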

Conclusion

The research provides evidence that adversarial attacks against ASR systems can be made more effective while using smaller input perturbations. It advocates partial suppression as a promising way to strengthen attacks. In addition, conventional audio filtering, specifically low-pass filtering, could form the foundation for future ASR defenses. These findings apply primarily to smaller Whisper models; whether they generalize to larger ASR systems remains a direction for future research.