- The paper introduces the MAESTRO framework that mitigates agentic AI security concerns through a seven-layered architecture.

- It employs structured threat modeling to address unique vulnerabilities like instruction manipulation and memory corruption.

- Experimental validations via DoS and memory poisoning tests confirm the framework's efficacy and highlight areas for future improvement.

Security Considerations for Agentic AI Systems

The research presented in "Securing Agentic AI: Threat Modeling and Risk Analysis for Network Monitoring Agentic AI System" describes the creation and implementation of the MAESTRO framework. This framework is designed to address and mitigate security concerns specific to agentic AI systems that incorporate LLMs for network monitoring tasks. The paper emphasizes the necessity for a multilayered security approach to manage emerging threats that traditional models such as STRIDE and PASTA fail to adequately address.

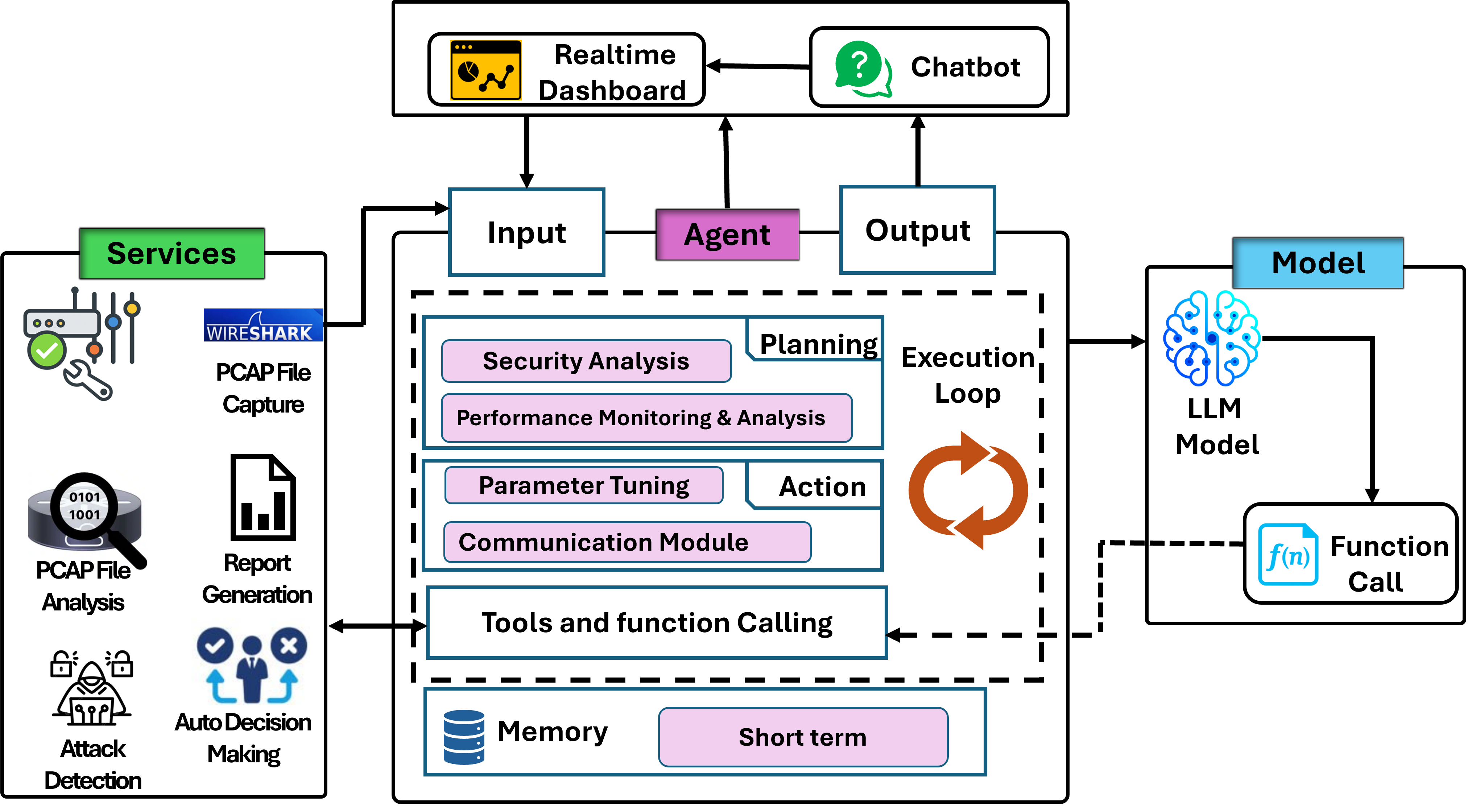

MAESTRO Framework and System Architecture

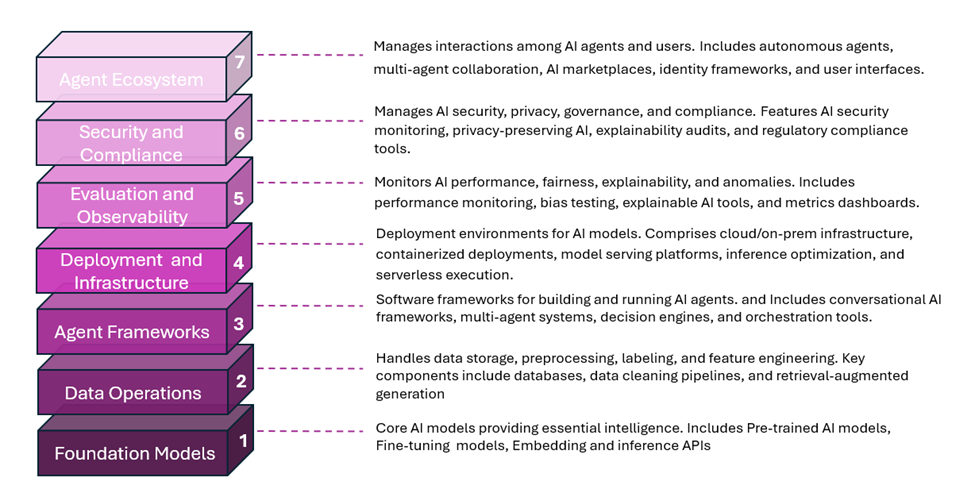

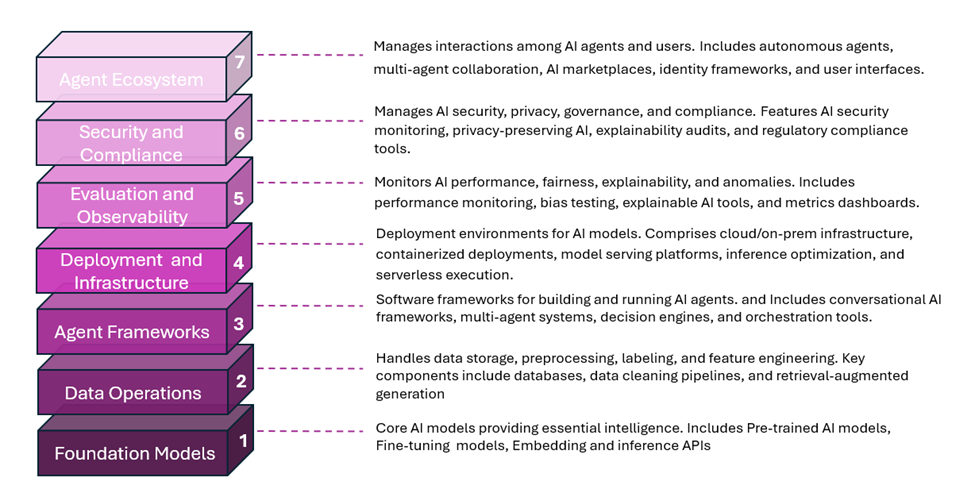

The MAESTRO architecture is structured into seven layers, each addressing a specific aspect of the agentic AI system, from foundational models to the broader agent ecosystem.

Figure 1: Complete seven-layer MAESTRO architecture.

- Foundation Models (Layer 1): This layer involves pre-trained and fine-tuned LLMs responsible for the core reasoning capabilities of the agent.

- Data Operations (Layer 2): Encompasses all the necessary data pipelines for collecting and managing network performance data.

- Agent Frameworks (Layer 3): Responsible for the orchestration and decision-making processes, integrating various subsystems to enable agent actions.

- Deployment and Infrastructure (Layer 4): Covers the containerized environments and endpoint interactions that support the agent's deployment.

- Evaluation and Observability (Layer 5): Monitoring and assessment procedures that ensure the system's ongoing performance and integrity.

- Security and Compliance (Layer 6): Covers the protocols and standards ensuring the system's regulatory compliance.

- Agent Ecosystem (Layer 7): The interaction interface for the agent with other systems and human operators.

Threat Landscape and Considerations

The agent's architecture significantly broadens its attack surface, demanding meticulous threat modeling strategies. The research outlines several potential threats associated with agentic AI systems.

- Instruction Manipulation: Alteration of input prompts to maliciously redirect agent behavior.

- Goal Manipulation: Subtle shifts in the agent's objectives due to ambiguous feedback or injected telemetry data.

- Memory Corruption: Injections or alterations in the memory/context data that inform future agent decisions.

- Resource Exhaustion: Attacks that flood the system with data, thereby degrading its performance.

- Multi-Agent Exploitation: Activities that leverage shared memory to subvert multiple agents.

These threats call for a systematic mapping of vulnerabilities across MAESTRO's layers in order to formulate effective mitigation strategies.
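The mapping of threats to layers can be pictured as a small threat register. The sketch below is only an illustration: the `Threat` class, the helper functions, and especially the layer placements in `THREATS` are assumptions made for the example, not the mapping reported in the paper.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import IntEnum


class MaestroLayer(IntEnum):
    """The seven MAESTRO layers, numbered as in the list above."""
    FOUNDATION_MODELS = 1
    DATA_OPERATIONS = 2
    AGENT_FRAMEWORKS = 3
    DEPLOYMENT_AND_INFRASTRUCTURE = 4
    EVALUATION_AND_OBSERVABILITY = 5
    SECURITY_AND_COMPLIANCE = 6
    AGENT_ECOSYSTEM = 7


@dataclass(frozen=True)
class Threat:
    name: str
    layers: tuple  # MaestroLayer values the threat is assumed to touch


# ASSUMPTION: these layer placements are illustrative, not taken from the paper.
THREATS = [
    Threat("Instruction Manipulation",
           (MaestroLayer.FOUNDATION_MODELS, MaestroLayer.AGENT_FRAMEWORKS)),
    Threat("Memory Corruption",
           (MaestroLayer.DATA_OPERATIONS, MaestroLayer.AGENT_FRAMEWORKS)),
    Threat("Resource Exhaustion",
           (MaestroLayer.DEPLOYMENT_AND_INFRASTRUCTURE,)),
]


def threats_by_layer(threats):
    """Invert the threat list into a layer -> [threat names] view."""
    index = defaultdict(list)
    for threat in threats:
        for layer in threat.layers:
            index[layer].append(threat.name)
    return dict(index)


def cross_layer_threats(threats):
    """Return the names of threats that span more than one layer."""
    return [t.name for t in threats if len(t.layers) > 1]
```

Inverting the register by layer shows which layers accumulate the most threats, while `cross_layer_threats` flags the entries that a single-layer analysis would miss.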

Mitigation Strategies

The deployment of multilayered defenses is pivotal to ensuring robust agent security. Key strategies include:

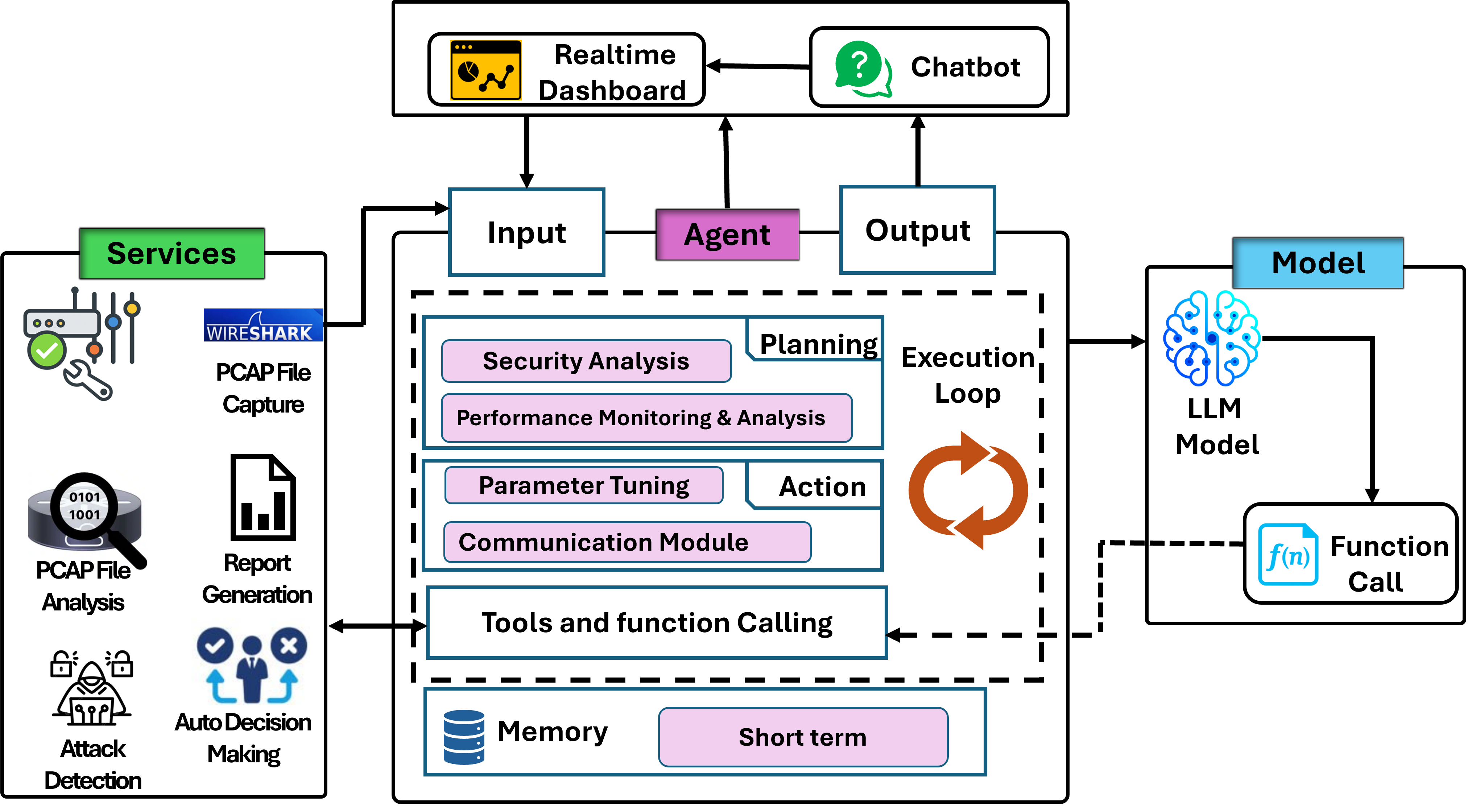

Figure 2: Prevention strategies for securing an autonomous agent, organized by MAESTRO layer.

- Input Validation and Sanitization: Validates and sanitizes inputs before they reach the agent, guarding against malformed or manipulated instructions.

- Memory Isolation and Contextual Integrity: Hardens memory-related operations to prevent data poisoning.

- Secure Tool Access: Uses capability-based access control to limit agent actions to trusted operations.

- Anomaly Detection Mechanisms: Real-time monitoring to swiftly identify and roll back potential system disruptions.
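As one illustration of the secure tool access strategy, the sketch below shows a minimal capability check in front of tool calls. It is not the paper's implementation: `ToolRegistry`, `CapabilityError`, and the two tools (`read_latency`, `restart_service`) are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


class CapabilityError(PermissionError):
    """Raised when an agent calls a tool it holds no capability for."""


@dataclass
class ToolRegistry:
    # Maps tool name -> callable. The tools registered below are hypothetical.
    tools: dict = field(default_factory=dict)

    def register(self, name, func):
        self.tools[name] = func

    def invoke(self, capabilities, name, *args, **kwargs):
        """Run a tool only if its name is in the caller's capability set."""
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        if name not in capabilities:
            raise CapabilityError(f"capability missing for tool: {name}")
        return self.tools[name](*args, **kwargs)


registry = ToolRegistry()
registry.register("read_latency", lambda host: f"latency report for {host}")
registry.register("restart_service", lambda svc: f"restarted {svc}")

# ASSUMPTION: the monitoring agent is granted a read-only capability set.
monitor_caps = frozenset({"read_latency"})

print(registry.invoke(monitor_caps, "read_latency", "router-1"))
try:
    registry.invoke(monitor_caps, "restart_service", "dns")
except CapabilityError as exc:
    print(f"blocked: {exc}")
```

Because the capability set is passed on every call instead of being looked up from the agent's own state, a manipulated prompt cannot widen what the agent is allowed to do; it can only request tools that the operator already granted.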

Experimental Validation and Observations

Two high-impact test cases validate the identified vulnerabilities and the security risk assessment of the agentic AI system:

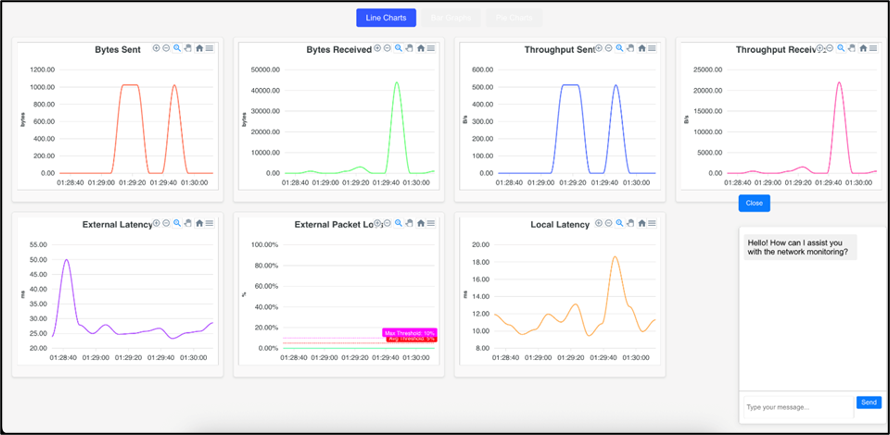

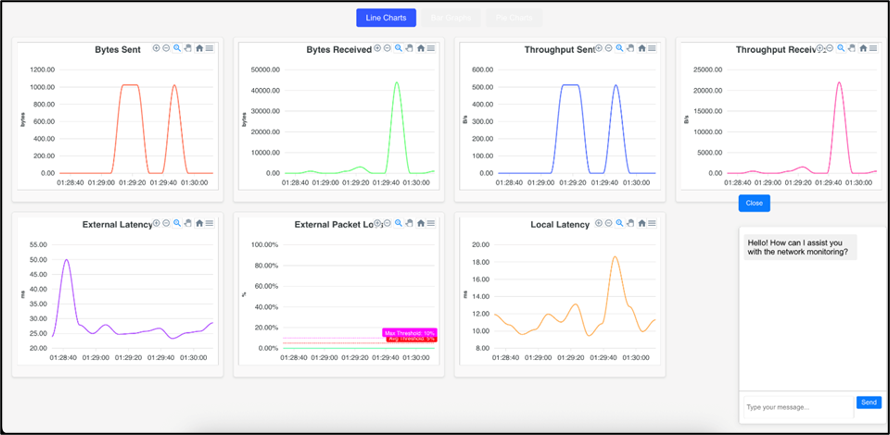

- Resource Exhaustion Test: Uses TCP replay to simulate a Denial-of-Service (DoS) scenario, confirming the system's susceptibility to excessive load.

- Memory Poisoning Test: Demonstrates the impact of corrupted historical data on the agent's decision-making pipeline, highlighting the potential for cascading failures if memory management is compromised.

Figure 3: Dashboard after the DoS attack.
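The memory poisoning scenario suggests one possible countermeasure: tagging each stored history record with a keyed hash so that later tampering can be detected before the record reaches the agent. The sketch below is an assumption-laden illustration, not a control described in the paper; the record fields, the function names, and the hard-coded key are all hypothetical.

```python
import hashlib
import hmac
import json

# ASSUMPTION: a real deployment would load this key from a secrets manager.
SECRET_KEY = b"demo-key-not-for-production"


def _canonical(record):
    """Serialize a record deterministically so the tag is reproducible."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_record(record, key=SECRET_KEY):
    """Wrap a record with an HMAC-SHA256 tag over its canonical JSON form."""
    tag = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return {"record": record, "tag": tag}


def verify_record(entry, key=SECRET_KEY):
    """Return True only if the stored tag matches the record's current content."""
    expected = hmac.new(key, _canonical(entry["record"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, entry["tag"])


def load_trusted_history(entries, key=SECRET_KEY):
    """Keep only records whose tag verifies; tampered entries are dropped."""
    return [e["record"] for e in entries if verify_record(e, key)]


# Hypothetical telemetry history; the second entry is altered after signing.
history = [
    sign_record({"host": "router-1", "latency_ms": 12}),
    sign_record({"host": "router-2", "latency_ms": 15}),
]
history[1]["record"]["latency_ms"] = 9000  # simulated poisoning

trusted = load_trusted_history(history)
print(trusted)
```

This only detects modification after a record has been signed. It does not help if poisoned data is signed in the first place or if the attacker obtains the key, so it would complement input validation rather than replace it.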

Limitations and Future Work

The current system has limitations in scalability and resilience, notably in single-node deployments and in the absence of end-to-end cryptographic controls. Future research directions include:

- Multi-Agent Coordination: Enhancing trust and consensus across distributed agents.

- Adversarial Robustness: Strengthening defense mechanisms against adversarial manipulations at all MAESTRO layers.

- Cross-Layer Security Orchestration: Improving dynamic response strategies and security controls throughout the operational layers.

Conclusion

The MAESTRO framework provides a comprehensive approach to modeling and mitigating threats within agentic AI systems, emphasizing the importance of cross-layer analysis and multilayered defense strategies. Experimental results underscore the necessity for enhanced security measures tailored to the adaptive nature of agentic AI, setting the direction for future advancements in AI security frameworks.