- The paper demonstrates that pitchfork and saddle-node bifurcations govern the creation and erasure of memory attractors in Hopfield networks.

- The paper employs a continuous-time Hopfield model with Hebbian learning on 81 neurons to analyze how attractor basins evolve during training.

- The paper reveals that catastrophic forgetting results from inverse bifurcation events, highlighting the need for precise control of input timing and weight dynamics.

Memorization and Forgetting in a Learning Hopfield Neural Network

The paper examines memory formation and forgetting in Hopfield neural networks (HNNs) through bifurcation analysis. The goal is to explain the mechanisms behind memory retention and catastrophic forgetting in neural networks that learn.

Network Description and Experiment Setup

The study uses a continuous-time Hopfield neural network of 81 neurons, trained under the Hebbian learning rule. Hopfield networks are recurrent neural networks known for their associative memory, which makes them suitable for pattern recognition tasks. Here, the interaction strengths between neurons (the connection weights) are modified by the Hebbian learning process.

Training data are presented to the network periodically as binary vectors that encode patterns. During the learning phase the weights adjust dynamically, and the network stabilizes once learning completes.
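The setup described above can be sketched in a few lines of Python. This is a minimal illustration and not the paper's exact model: the state equation `dx/dt = -x + W tanh(x) + u`, the Hebbian rule `dW/dt = eps * (y yᵀ - W)`, the forward-Euler integrator, and every parameter value (`dt`, `eps`, `steps_per_pattern`) are assumptions chosen for readability.

```python
import numpy as np

def simulate(patterns, n_steps=600, steps_per_pattern=200, dt=0.01, eps=0.5, seed=0):
    """Euler-integrate an assumed continuous-time Hopfield network with Hebbian weights.

    patterns: array of shape (n_patterns, n_neurons) with entries in {-1, +1}.
    Returns the final state x and the final weight matrix W.
    """
    rng = np.random.default_rng(seed)
    n = patterns.shape[1]
    x = 0.01 * rng.standard_normal(n)   # small random initial state
    W = np.zeros((n, n))                # weights start at zero (assumption)
    for step in range(n_steps):
        # Cycle through the patterns so that each one is presented periodically.
        u = patterns[(step // steps_per_pattern) % len(patterns)]
        y = np.tanh(x)                            # neuron outputs
        dx = -x + W @ y + u                       # assumed state dynamics
        dW = eps * (np.outer(y, y) - W)           # assumed Hebbian rule with decay
        x = x + dt * dx
        W = W + dt * dW
        np.fill_diagonal(W, 0.0)                  # no self-connections (assumption)
    return x, W

# Usage: three random binary patterns on 81 neurons, as in the study's network size.
rng = np.random.default_rng(1)
patterns = rng.choice([-1.0, 1.0], size=(3, 81))
x, W = simulate(patterns)
```

Because the Hebbian update is built from an outer product of the output vector with itself, the weight matrix stays symmetric throughout this sketch.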

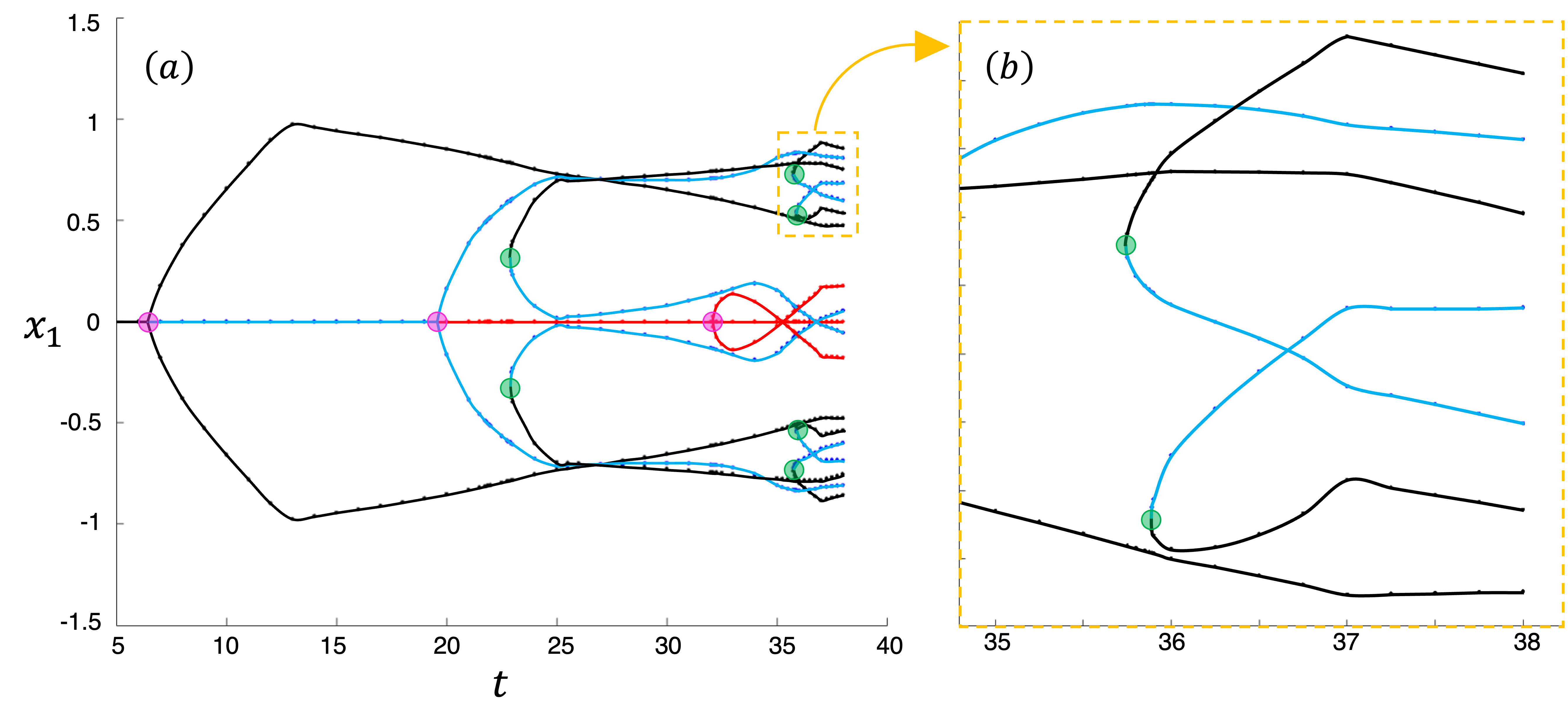

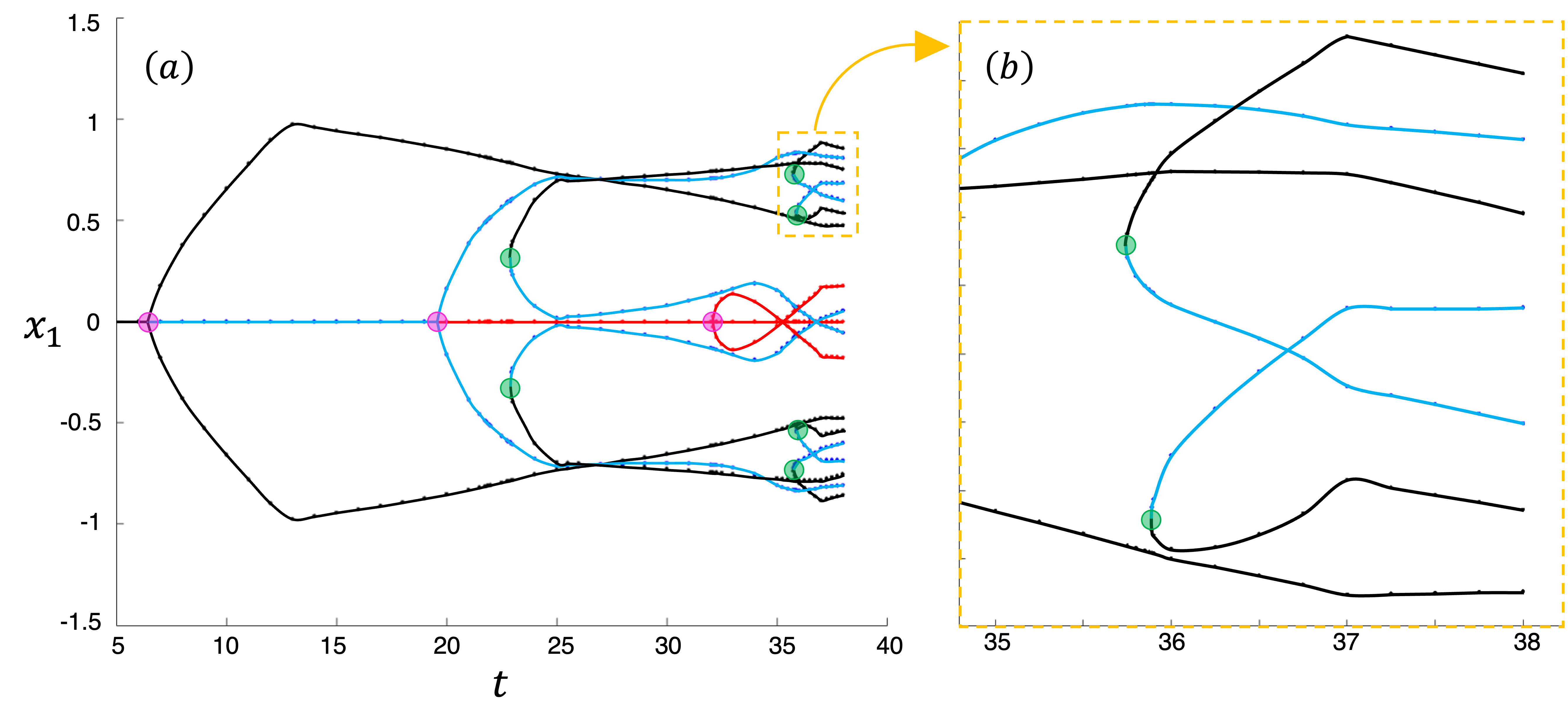

This research identifies pitchfork and saddle-node bifurcations as the key mechanisms of memory formation. A pitchfork bifurcation destabilizes an existing state and creates new attractors around it, while a saddle-node bifurcation creates a new attractor together with a saddle point. Each attractor represents a stable memory that encodes a learned input pattern.
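Both bifurcation types can be seen in a toy model that is much simpler than the 81-neuron network. The sketch below uses a single self-coupled neuron, `dx/dt = -x + w*tanh(x) + b`, which is an illustrative assumption and not taken from the paper. With `b = 0`, raising `w` through 1 produces a pitchfork bifurcation; with `b != 0`, raising `w` creates a new attractor and an unstable point as a pair, which is a saddle-node bifurcation. The helper names `fixed_points` and `count` are hypothetical.

```python
import numpy as np

def fixed_points(w, b, x_max=5.0, n_grid=10000):
    """Locate fixed points of dx/dt = -x + w*tanh(x) + b and classify their stability."""
    x = np.linspace(-x_max, x_max, n_grid)   # even n_grid keeps x = 0 off the grid
    f = -x + w * np.tanh(x) + b
    # A sign change of f between neighbouring grid points brackets a fixed point.
    idx = np.where(np.sign(f[:-1]) != np.sign(f[1:]))[0]
    roots = 0.5 * (x[idx] + x[idx + 1])
    slope = -1.0 + w / np.cosh(roots) ** 2   # df/dx evaluated at each root
    return roots, slope < 0                  # negative slope means stable

def count(w, b):
    """Return (number of stable fixed points, number of unstable fixed points)."""
    _, stable = fixed_points(w, b)
    return int(stable.sum()), int((~stable).sum())

# Pitchfork (b = 0): one stable state becomes two stable states plus an unstable one.
print(count(0.5, 0.0))   # (1, 0)
print(count(2.0, 0.0))   # (2, 1)

# Saddle-node (b = 0.3): a stable/unstable pair appears away from the existing state.
print(count(1.0, 0.3))   # (1, 0)
print(count(2.0, 0.3))   # (2, 1)
```

In one dimension the unstable fixed point plays the role that the saddle plays in the full network.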

Figure 1: One-parameter bifurcation diagram illustrating memory formation in early stages of learning by the NN.

Bifurcations occur at specific points during learning, indicating that memory formation is sequential and dependent on external stimulus duration. This finding suggests that timing in input data presentation is critical for effective memory formation.

Catastrophic Forgetting: Mechanisms Revealed

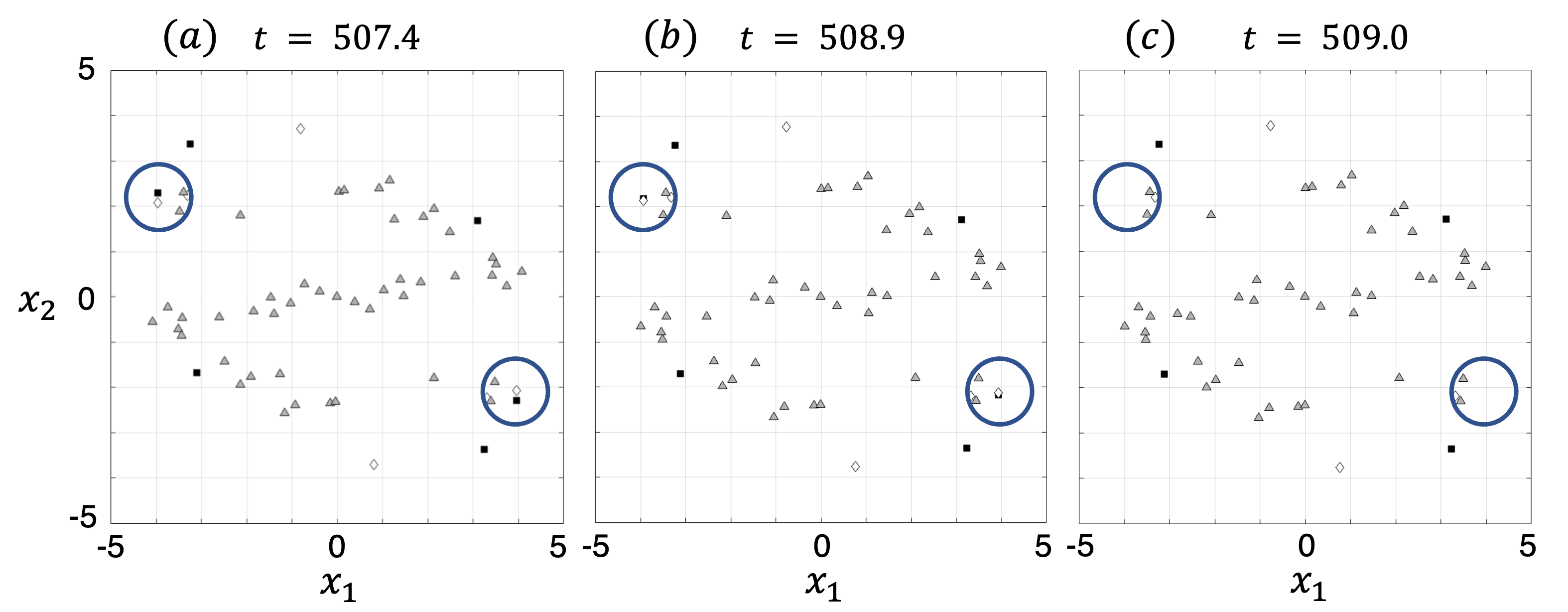

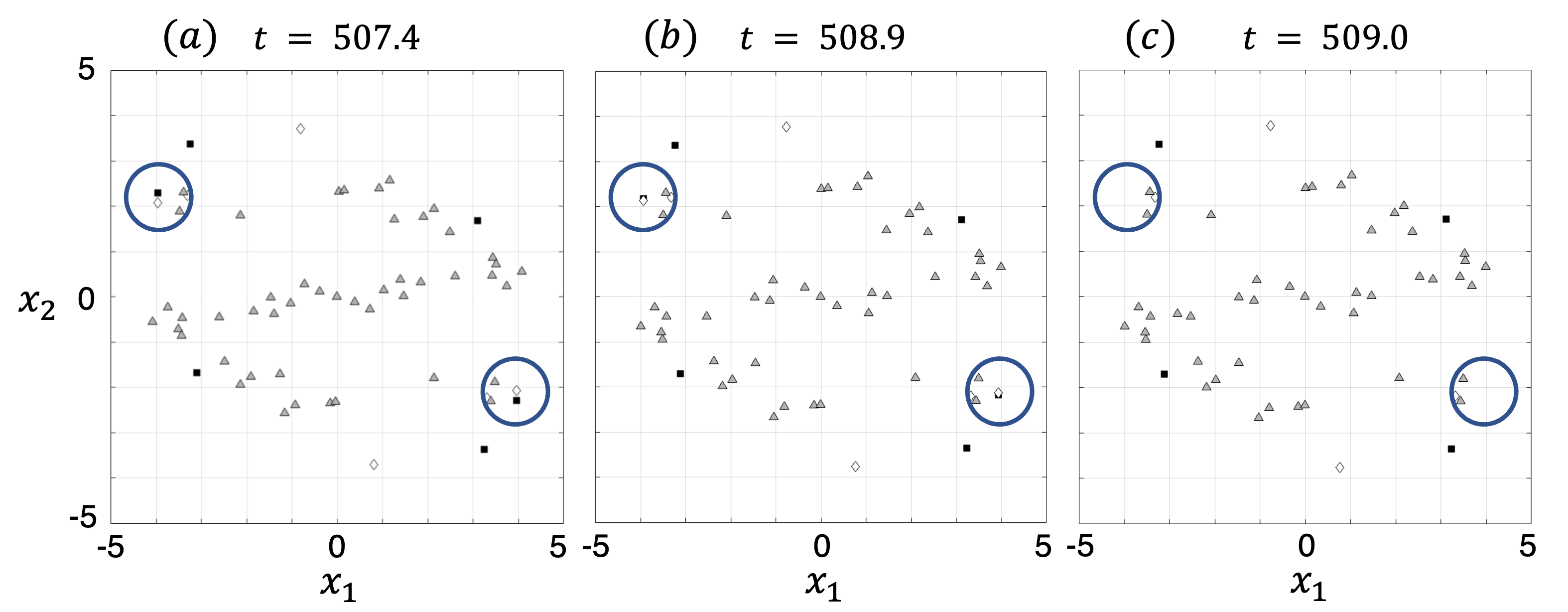

Catastrophic forgetting occurs when the network abruptly loses previously formed memories, and it is driven by inverse bifurcation events. The saddle-node bifurcations that created memories run in reverse when the learning trajectory crosses a bifurcation boundary again: an attractor merges with a saddle point and both disappear, erasing the corresponding memory.
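An inverse saddle-node bifurcation can be illustrated with a one-neuron toy model, `dx/dt = -x + w*tanh(x) + b`, which is an assumption made for illustration and not the paper's network. The state starts on the lower attractor (the "memory") while the input `b` is slowly increased. When `b` crosses the threshold at which that attractor merges with the unstable point, the memory vanishes and the state jumps to the remaining attractor. The function names and parameter values are hypothetical.

```python
import math
import numpy as np

def saddle_node_threshold(w):
    """Input b at which the lower attractor of dx/dt = -x + w*tanh(x) + b vanishes (w > 1)."""
    x_c = np.arccosh(np.sqrt(w))          # |x| where the slope -1 + w / cosh(x)**2 is zero
    return w * np.tanh(x_c) - x_c

def sweep(w=2.0, n_b=101, dt=0.01, settle_steps=5000):
    """Slowly raise b from 0 to 1 and record the state the neuron settles into."""
    b_values = np.linspace(0.0, 1.0, n_b)
    x = -2.0                              # start near the lower attractor
    states = []
    for b in b_values:
        for _ in range(settle_steps):     # let the state relax at this value of b
            x += dt * (-x + w * math.tanh(x) + b)
        states.append(x)
    return b_values, np.array(states)

b_values, states = sweep()
b_c = saddle_node_threshold(2.0)
b_jump = b_values[np.argmax(states > 0)]  # first b at which the lower memory is gone
print(f"predicted threshold {b_c:.3f}, observed jump near b = {b_jump:.2f}")
```

The jump is observed slightly after the predicted threshold because the dynamics slow down sharply near the point where the attractor disappears.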

Figure 2: Illustration of memory-destroying bifurcations, i.e., those underlying catastrophic forgetting.

This behavior underscores the need for careful weight trajectory management during learning to prevent unwanted interactions with bifurcation manifolds.

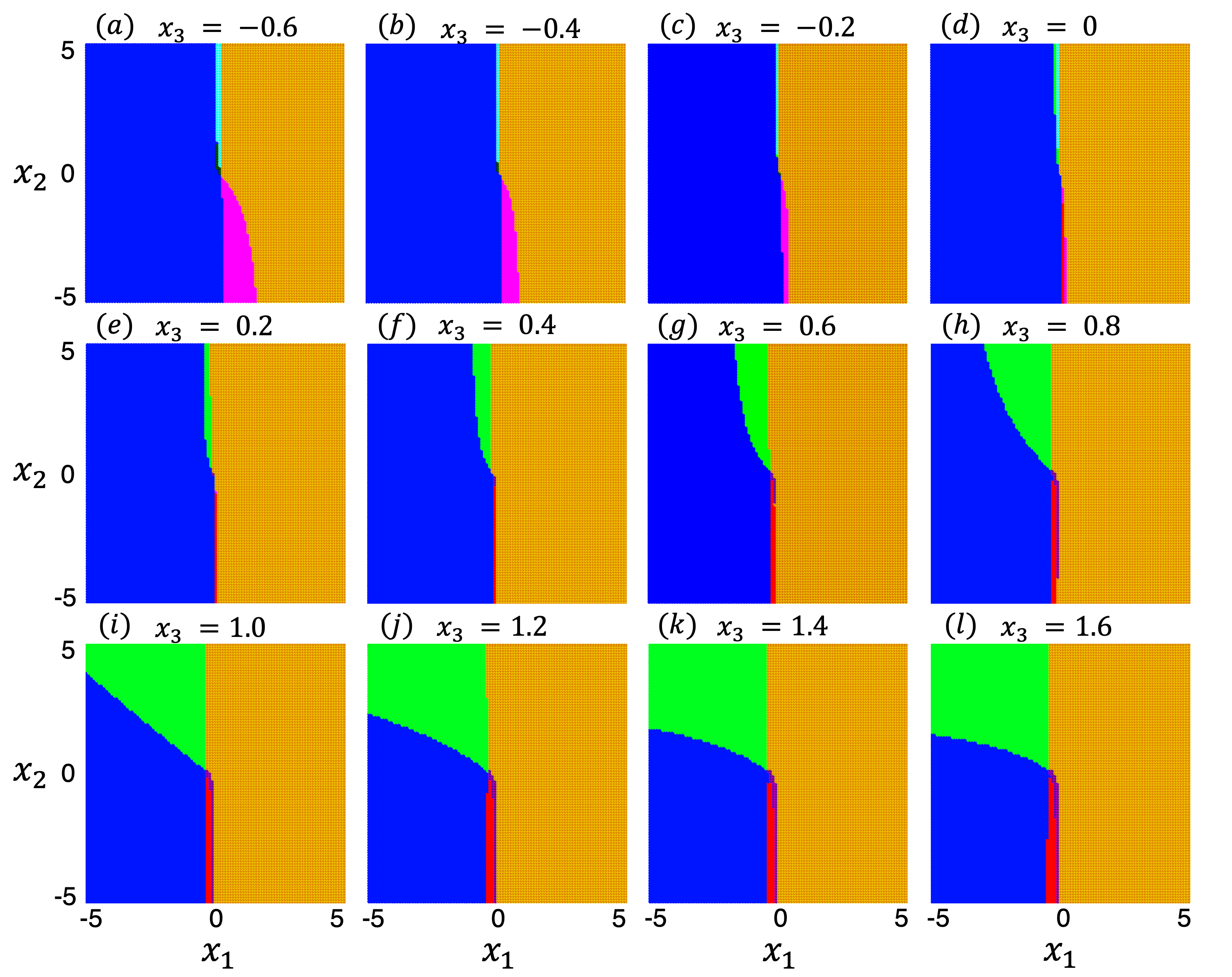

Attractor Basins and Memory Representation

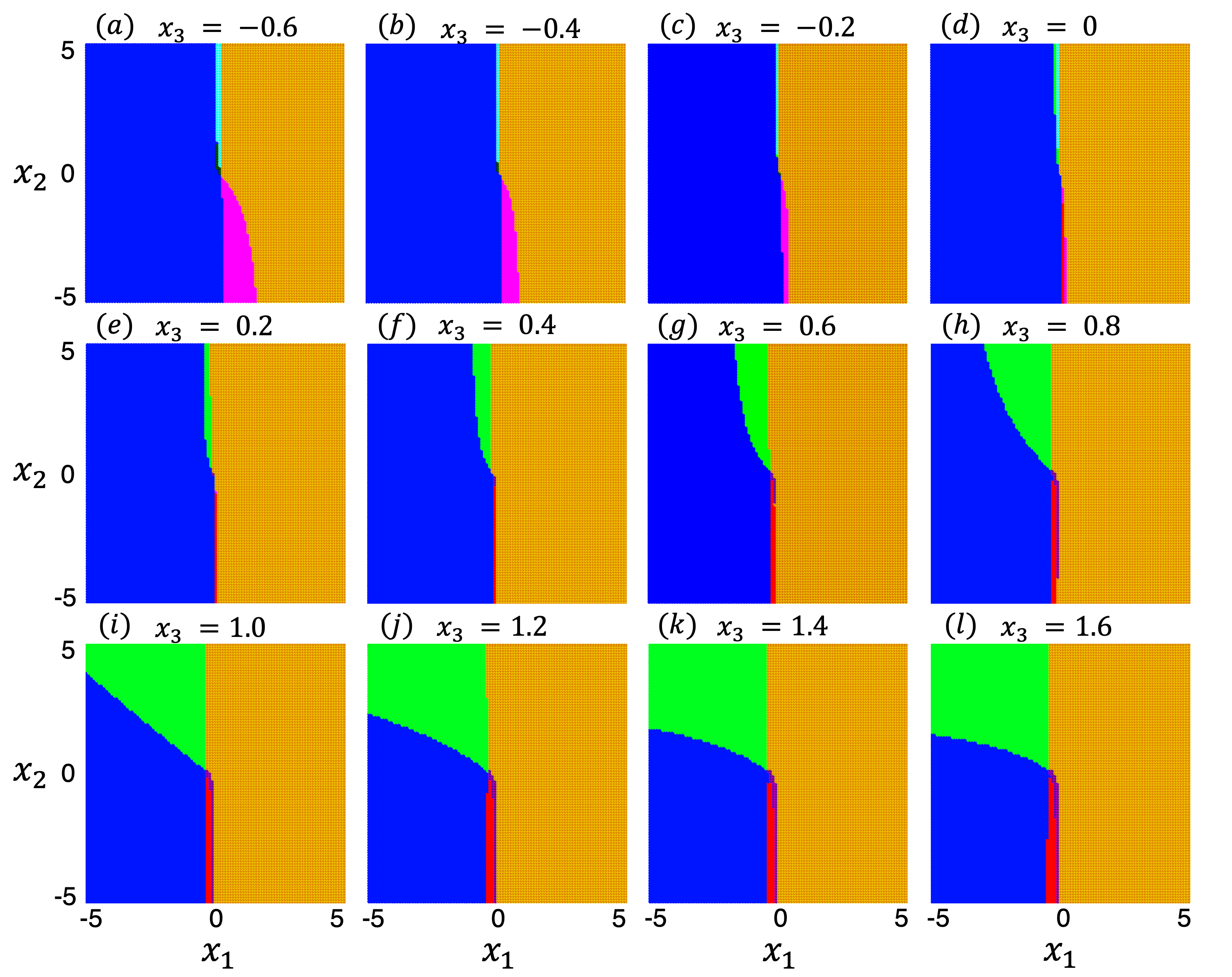

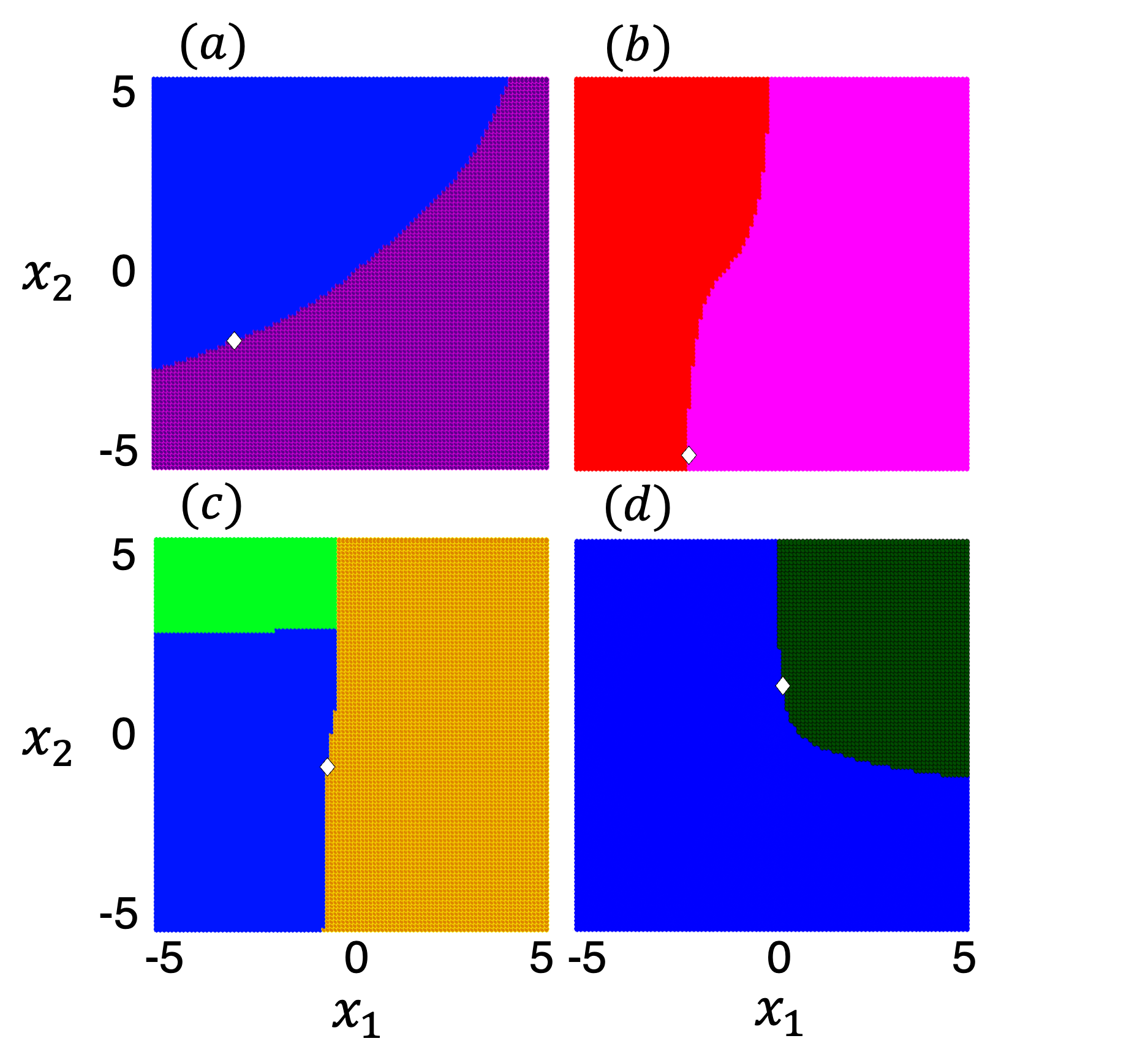

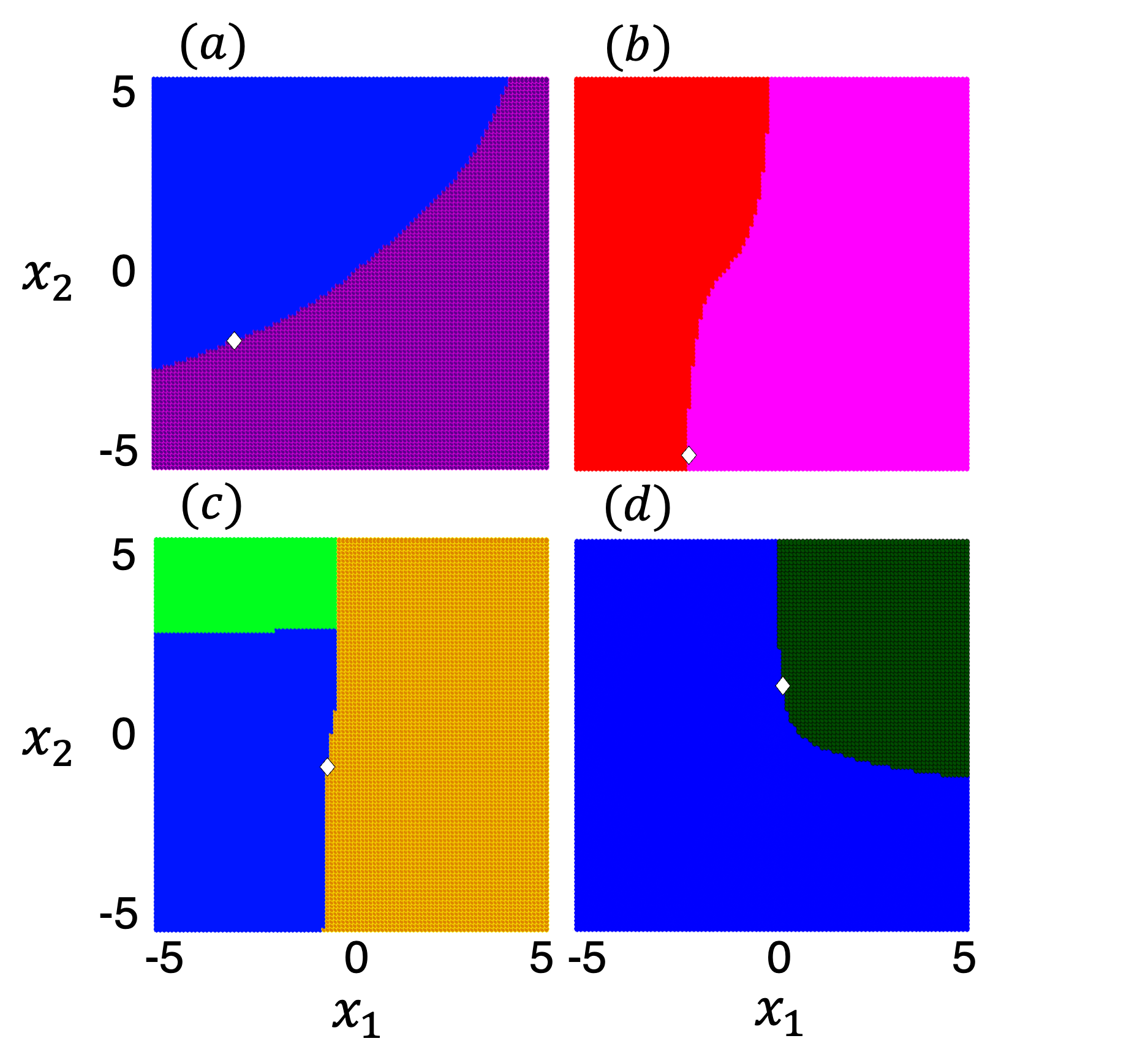

An attractor basin is the set of initial states from which the network converges to a particular memory. Basins are therefore central to understanding how memories are stored and accessed in an HNN. The research demonstrates that the basin boundaries are formed by the stable manifolds of saddle points, so memory access can be read directly from the geometry of the network's phase space.
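The link between basin boundaries and the stable manifold of a saddle can be checked numerically in a two-neuron toy network. The sketch below is an illustration under stated assumptions and not the paper's 81-neuron system: it uses `dx/dt = -x + W tanh(x)` with symmetric coupling `w = 2`, forward-Euler integration, and hypothetical function names. In this toy case the saddle sits at the origin, its stable manifold is the line `x1 + x2 = 0`, and the two basins are the half-planes on either side of that line.

```python
import numpy as np

def basin_labels(w=2.0, n_grid=40, extent=3.0, dt=0.05, n_steps=600):
    """Integrate a grid of initial states and label each by the attractor it reaches."""
    W = np.array([[0.0, w], [w, 0.0]])
    axis = np.linspace(-extent, extent, n_grid)
    x0 = np.array(np.meshgrid(axis, axis)).reshape(2, -1)   # shape (2, n_grid**2)
    x = x0.copy()
    for _ in range(n_steps):
        x = x + dt * (-x + W @ np.tanh(x))
    # +1: upper-right attractor, -1: lower-left attractor, 0: exactly on the boundary.
    labels = np.sign(x.sum(axis=0)).astype(int)
    return x0, x, labels

def attractor_coordinate(w=2.0):
    """Solve x = w*tanh(x) by fixed-point iteration; the attractors sit at +/-(x, x)."""
    x = 2.0
    for _ in range(100):
        x = w * np.tanh(x)
    return x

def saddle_linearization(w=2.0):
    """Eigenvalues and eigenvectors of the Jacobian at the origin, where tanh'(0) = 1."""
    J = -np.eye(2) + np.array([[0.0, w], [w, 0.0]])
    return np.linalg.eigh(J)              # eigenvalues are returned in ascending order

x0, x_final, labels = basin_labels()
evals, evecs = saddle_linearization()
print("saddle eigenvalues:", evals)                   # one negative, one positive
print("stable direction:", evecs[:, 0])               # along the line x1 + x2 = 0
print("basin sizes:", (labels == 1).sum(), (labels == -1).sum())
```

The saddle at the origin has exactly one positive eigenvalue, and its stable eigenvector points along the line that separates the two basins.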

Figure 3: Attractor basins (shaded regions in various colors) developed in the NN.

Figure 4: Evidence that the boundaries of attractor basins are formed by the stable manifolds of the saddle fixed points with one positive eigenvalue.

This finding can aid in the design of networks that more effectively utilize their memory capacities without unnecessary overlap or interference, further enhancing the network's reliability in practical applications.

Conclusion

This examination of bifurcations in HNNs provides insight into memory dynamics in neural systems. It highlights the delicate balance between memory formation and forgetting, and the importance of controlling bifurcation pathways during training. These insights lay groundwork for network designs that reduce memory interference, address catastrophic forgetting, and perhaps improve learning efficiency and stability. Future work could explore similar dynamics in other recurrent neural networks or machine learning architectures.