- The paper introduces a novel sleep-like replay consolidation (SRC) algorithm to mitigate catastrophic forgetting in EP-trained RNNs.

- Experimental results on MNIST and other benchmarks show SRC and awake rehearsal significantly enhance retention and task accuracy.

- The study demonstrates that SRC restores task-specific synaptic patterns, promoting independent class representations and stability.

Toward Lifelong Learning in Equilibrium Propagation: Sleep-like and Awake Rehearsal for Enhanced Stability

Introduction

The paper "Toward Lifelong Learning in Equilibrium Propagation: Sleep-like and Awake Rehearsal for Enhanced Stability" (2508.14081) examines the limitations of recurrent neural networks (RNNs) trained with Equilibrium Propagation (EP) and ways to improve them. Although these networks perform well on a range of tasks, they suffer from catastrophic forgetting in continual learning scenarios. This contrasts with the human brain's ability to retain and integrate information across contexts, an ability supported by memory consolidation during sleep. The study introduces a sleep-like replay consolidation (SRC) algorithm that makes EP-trained RNNs more resilient to forgetting. SRC, especially when paired with rehearsal (awake replay), substantially improves retention and parallels human-like learning behavior.

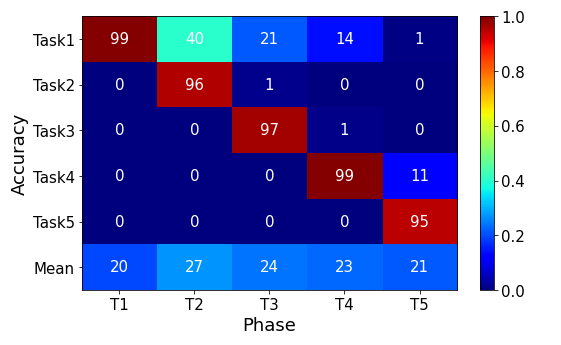

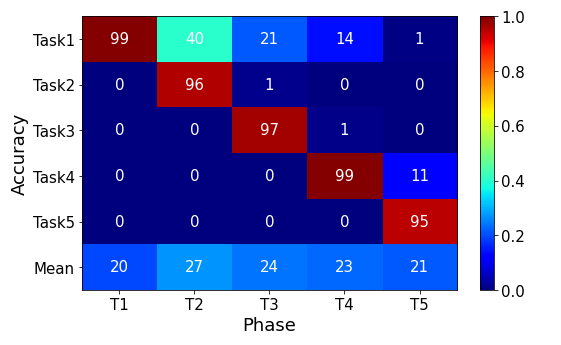

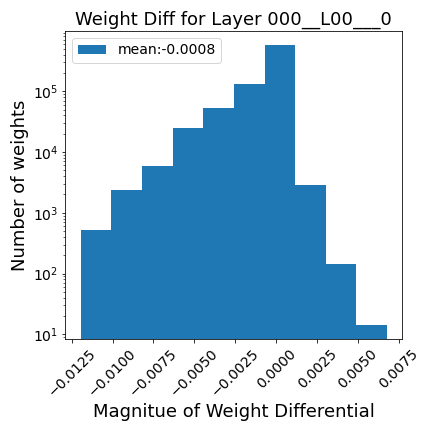

Figure 1: Performance of an RNN trained with EP on class-incremental MNIST, showing catastrophic forgetting as new tasks are learned.

Methods

Equilibrium Propagation (EP)

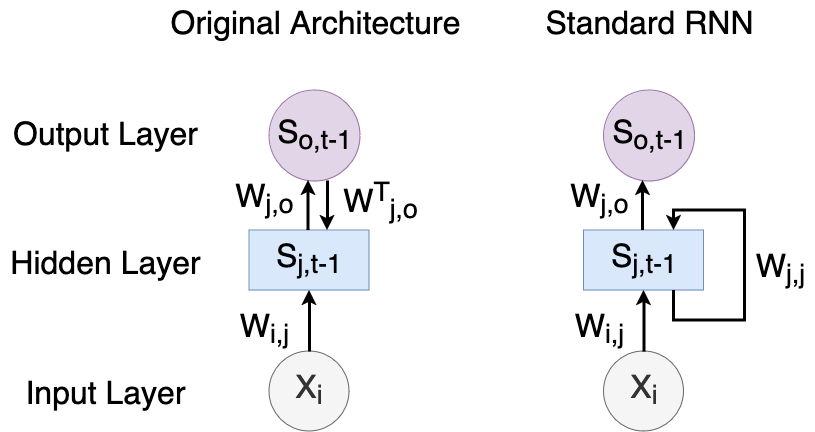

EP provides a biologically plausible method for training energy-based RNNs. It operates in two phases: the free phase, where activations evolve autonomously, and the weakly clamped phase, where a small teaching signal nudges the outputs toward their targets. The difference in neural activations between the two phases yields a contrastive synaptic weight update. Because the dynamics settle to a stable state in each phase, EP is well suited to static inputs.

The energy function E delineates the network’s internal configuration. During training, neuron states minimize this local energy via gradient flow:

$$\frac{ds}{dt} = -\frac{\partial E}{\partial s}.$$
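The two phases and the gradient flow above can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the Hopfield-like energy, the hard-sigmoid activation `rho`, the quadratic output cost, and every name and hyperparameter (`relax`, `ep_weight_update`, `beta`, `dt`, `drive`) are assumptions chosen for illustration.

```python
import numpy as np

def rho(s):
    # Hard-sigmoid activation (an assumed choice, not taken from the paper).
    return np.clip(s, 0.0, 1.0)

def rho_prime(s):
    # Derivative of the hard sigmoid: 1 inside (0, 1), 0 elsewhere.
    return ((s > 0.0) & (s < 1.0)).astype(float)

def energy(s, W, drive):
    # Assumed energy: E = 0.5*||s||^2 - 0.5*rho(s)^T W rho(s) - drive^T rho(s),
    # with W symmetric and `drive` standing in for bias plus clamped input.
    r = rho(s)
    return 0.5 * s @ s - 0.5 * r @ W @ r - drive @ r

def relax(s, W, drive, target=None, beta=0.0, n_out=0, steps=200, dt=0.1):
    # Euler integration of ds/dt = -dE/ds. When beta > 0, the last n_out units
    # are also nudged by the gradient of beta * 0.5 * ||s_out - target||^2.
    s = s.copy()
    out = slice(len(s) - n_out, len(s))
    for _ in range(steps):
        grad = s - rho_prime(s) * (W @ rho(s) + drive)
        if beta > 0.0 and n_out > 0:
            grad[out] += beta * (s[out] - target)
        s -= dt * grad
    return s

def ep_weight_update(s_free, s_nudged, beta, lr=0.1):
    # Contrastive update: difference of co-activations between the two phases.
    r0, r1 = rho(s_free), rho(s_nudged)
    dW = (lr / beta) * (np.outer(r1, r1) - np.outer(r0, r0))
    np.fill_diagonal(dW, 0.0)  # no self-connections
    return dW

rng = np.random.default_rng(0)
n, n_out = 20, 3
A = 0.1 * rng.standard_normal((n, n))
W = 0.5 * (A + A.T)            # symmetric weights
np.fill_diagonal(W, 0.0)
drive = 0.1 * rng.standard_normal(n)
target = np.array([1.0, 0.0, 0.0])

s0 = rng.uniform(0.0, 1.0, n)
s_free = relax(s0, W, drive)                                   # free phase
s_nudged = relax(s_free, W, drive, target=target, beta=0.5, n_out=n_out)  # weakly clamped phase
dW = ep_weight_update(s_free, s_nudged, beta=0.5)
```

The weakly clamped phase starts from the free-phase state, so the resulting update reflects only the small shift induced by the teaching signal.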

Despite EP's promising theoretical foundation and practical application across convolutional networks, its vulnerability to catastrophic forgetting necessitates mechanisms akin to biological sleep for robust continual learning.

Sleep Replay Consolidation (SRC)

SRC models a sleep-like state using Hebbian plasticity rules akin to spike-timing-dependent plasticity (STDP). The approach adds feedback connections to the RNN architecture, which allows temporal patterns to be replayed in the hidden layers. SRC runs the network in a spiking regime and applies iterative replay phases after each task is learned (Algorithm 1), improving representational stability. This mimics the spontaneous pattern replay associated with memory consolidation during biological sleep.
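To make the idea of Hebbian replay concrete, here is a rough sketch of one sleep phase for a single weight matrix. It does not reproduce the paper's Algorithm 1: the feedforward layer, the Bernoulli input spikes drawn from a mean input vector, the fixed firing threshold, and the names and values of `inc` and `dec` are all assumptions made for illustration.

```python
import numpy as np

def src_sleep_phase(W, mean_input, threshold=1.0, inc=0.001, dec=0.0001,
                    steps=100, rng=None):
    # Hypothetical sleep phase: no labels and no task data, only noisy spikes.
    rng = np.random.default_rng() if rng is None else rng
    W = W.copy()
    for _ in range(steps):
        # Presynaptic spikes: each input fires with probability mean_input[i].
        pre = (rng.random(mean_input.shape) < mean_input).astype(float)
        # Postsynaptic spikes: Heaviside threshold on the summed input.
        post = (W @ pre > threshold).astype(float)
        # Hebbian rule: strengthen synapses where pre and post both fire,
        # weaken synapses where post fires without pre.
        W += inc * np.outer(post, pre) - dec * np.outer(post, 1.0 - pre)
    return W

W_before = np.ones((4, 6))
always_on = np.ones(6)  # every input spikes at every step
W_after = src_sleep_phase(W_before, always_on, threshold=0.0,
                          steps=100, rng=np.random.default_rng(0))
```

In this toy setting every pre/post pair is co-active at each step, so each weight grows by `inc` per step. With realistic inputs, only synapses that participate in replayed patterns would be strengthened.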

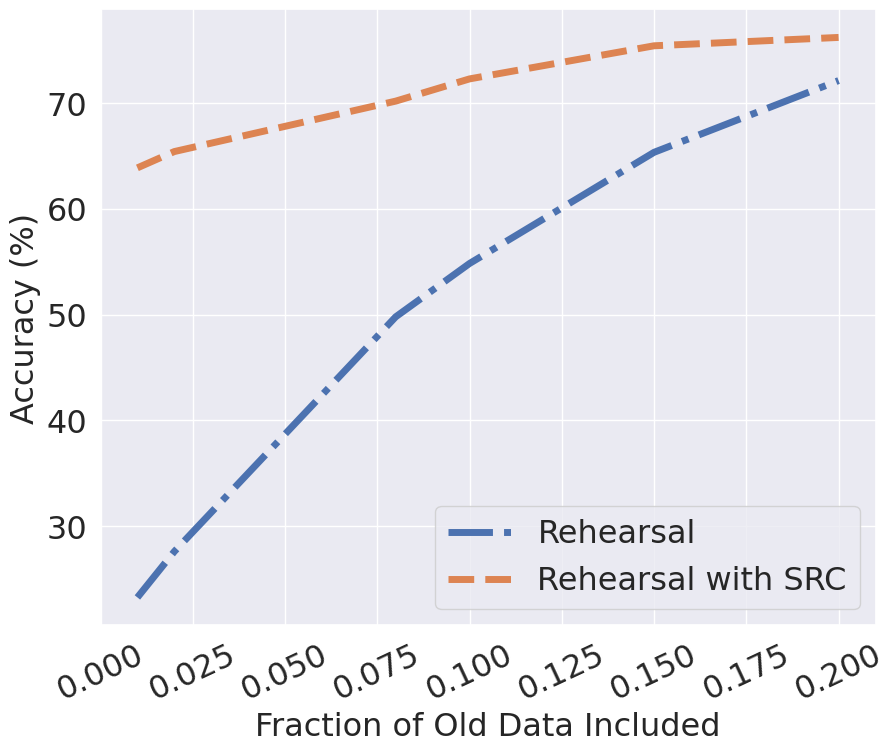

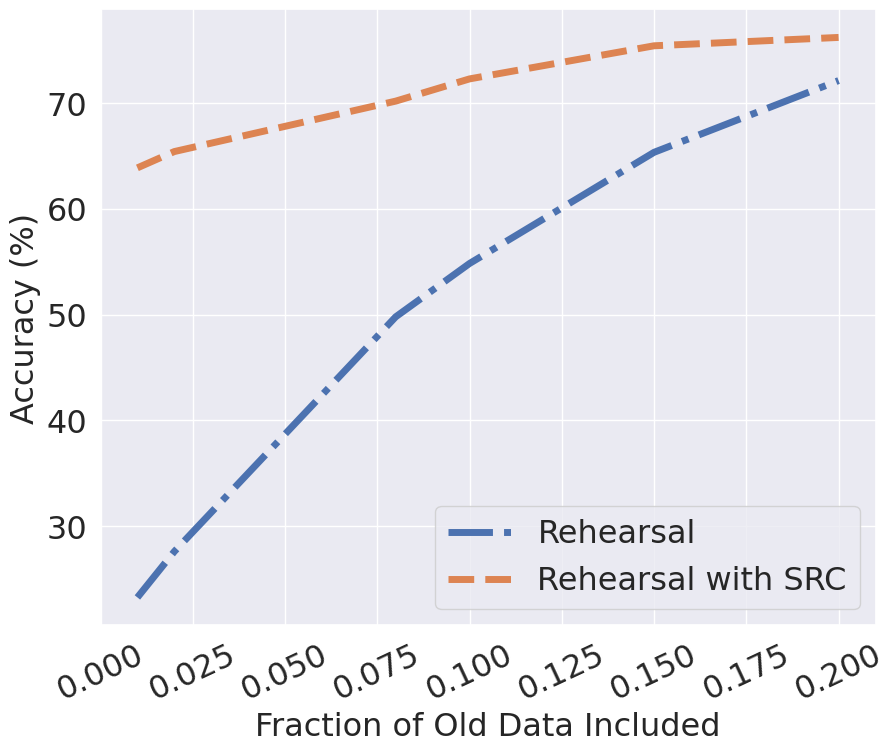

Rehearsal Method

Complementary to SRC, rehearsal reintroduces data from previous tasks while new tasks are learned, improving retention through explicit awake replay. Although more biologically sophisticated rehearsal mechanisms exist, the study uses a minimal version so that its effect can be compared cleanly against SRC. Combining the two yields significant further gains.
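A minimal rehearsal scheme of this kind could look like the sketch below. The class name `RehearsalBuffer`, the per-task budget, and the uniform random sampling are hypothetical; the source does not specify how stored examples are selected or mixed.

```python
import random

class RehearsalBuffer:
    """Stores a few examples per past task and mixes them into new batches."""

    def __init__(self, per_task, seed=0):
        self.per_task = per_task      # assumed fixed budget per task
        self.samples = []
        self.rng = random.Random(seed)

    def add_task(self, examples):
        # Keep a random subset of the finished task's examples.
        k = min(self.per_task, len(examples))
        self.samples.extend(self.rng.sample(examples, k))

    def mix(self, new_batch, n_replay):
        # Append up to n_replay stored examples to the current batch.
        k = min(n_replay, len(self.samples))
        return list(new_batch) + self.rng.sample(self.samples, k)

buffer = RehearsalBuffer(per_task=2)
task0 = [(f"img{i}", 0) for i in range(10)]    # toy (input, label) pairs
buffer.add_task(task0)

task1_batch = [(f"img{i}", 1) for i in range(4)]
mixed = buffer.mix(task1_batch, n_replay=2)    # 4 new examples + 2 replayed
```

Each training step on a new task would then use `mixed` in place of the raw batch, so the network keeps seeing a small sample of earlier classes.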

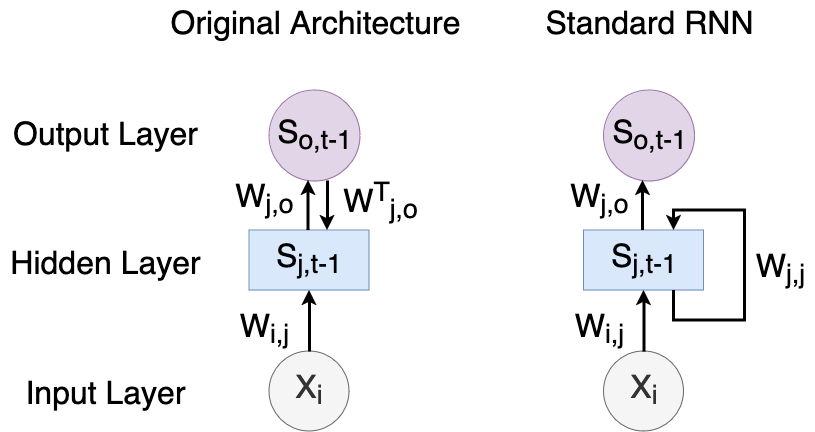

Figure 2: Model architectures comparing MRNN-EP and RNN baselines, highlighting unique feedback structures enabling advanced recurrent dynamics.

Results

Quantitative analysis shows that EP-trained RNNs equipped with SRC consistently outperform baseline models across diverse image classification datasets, including MNIST, FMNIST, KMNIST, CIFAR-10, and ImageNet. Integrating SRC with rehearsal further improves retention and task accuracy:

| Dataset | Method | MRNN-EP Performance (%) | MRNN-BPTT Performance (%) | RNN-BPTT Performance (%) |
| --- | --- | --- | --- | --- |
| MNIST | SRC | 64.68 ± 4.35 | 65.41 ± 4.98 | 49.88 ± 3.28 |

Figure 3: Accuracies of MRNN-EP and rehearsal with SRC, confirming SRC's significance in enhancing performance metrics.

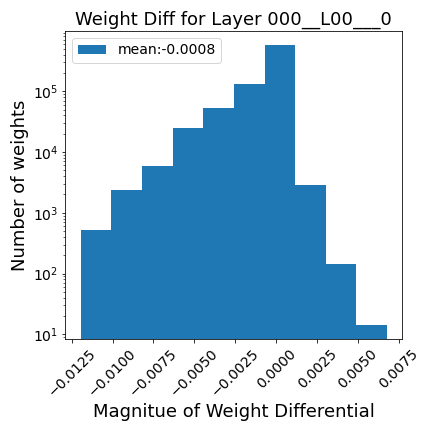

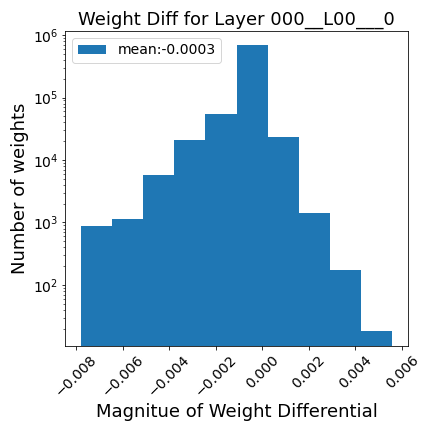

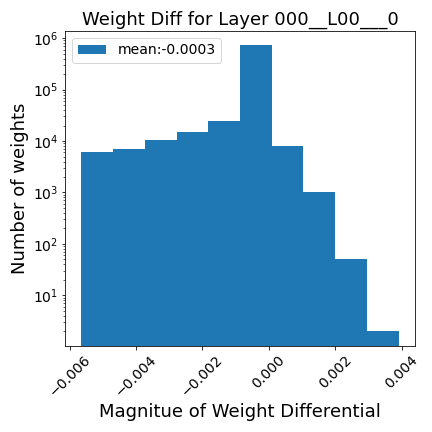

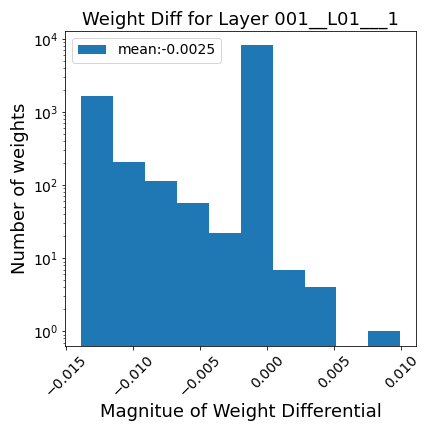

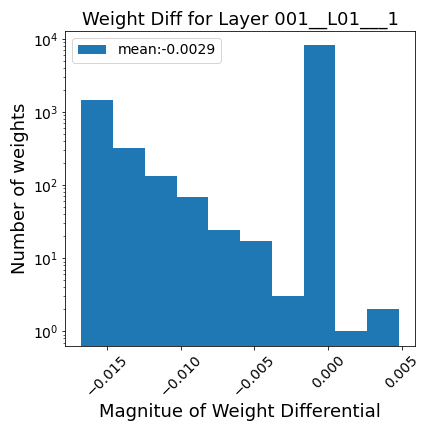

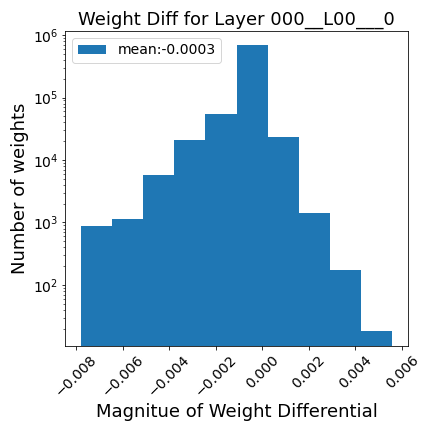

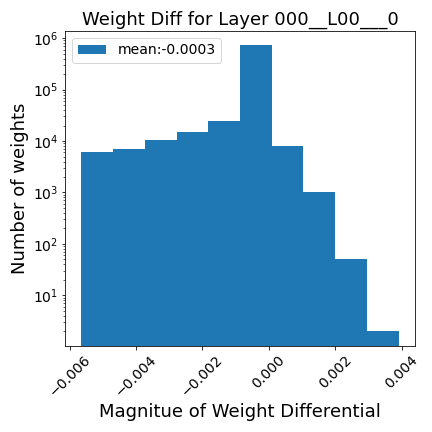

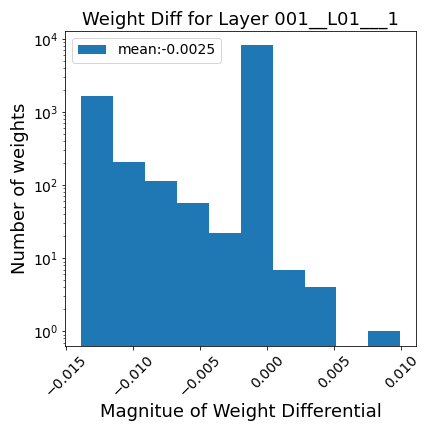

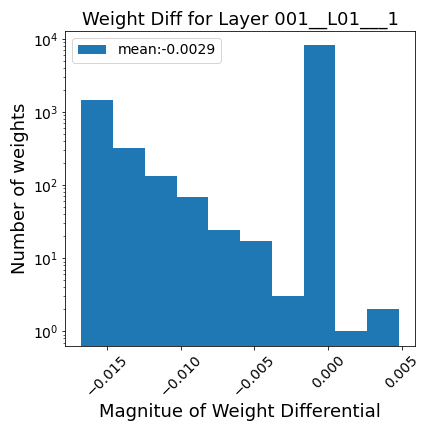

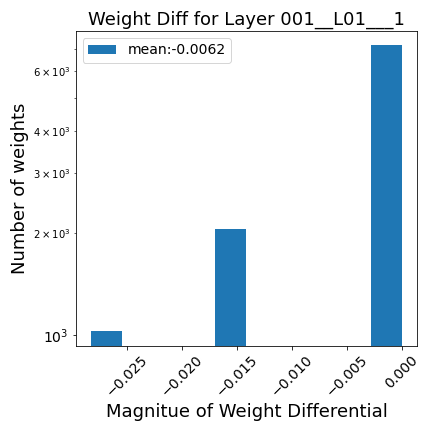

Synaptic Importance

Synaptic analysis using cosine similarity measurements indicates that SRC effectively restores task-specific weight patterns that are typically overwritten during new task learning. SRC enhances neuronal allocation, enabling more independent class representations and reducing task interference.
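One way such a comparison could be computed is sketched below: flatten two weight matrices and take the cosine of the angle between them. The toy weight snapshots and the names `w_task1`, `w_task2`, and `w_sleep` are invented for illustration and are not the paper's data.

```python
import numpy as np

def cosine_similarity(w_a, w_b):
    # Cosine of the angle between two weight arrays, flattened to vectors.
    a, b = np.ravel(w_a), np.ravel(w_b)
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / denom) if denom > 0 else 0.0

# Toy snapshots: weights after task 1, after task 2, and after a sleep phase.
w_task1 = np.array([[1.0, 0.0], [0.0, 1.0]])
w_task2 = np.array([[0.0, 1.0], [1.0, 0.0]])   # task-1 pattern overwritten
w_sleep = np.array([[0.8, 0.2], [0.2, 0.8]])   # partially restored pattern

sim_forgotten = cosine_similarity(w_task1, w_task2)
sim_restored = cosine_similarity(w_task1, w_sleep)
```

A higher similarity to the task-1 snapshot after sleep than before it would indicate that the earlier weight pattern has been at least partly recovered.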

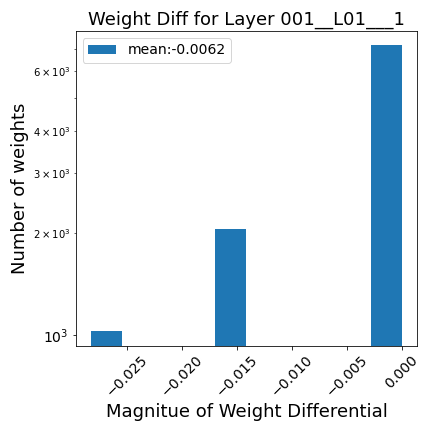

Figure 4: First weights for MRNN-EP post-SRC illustrating broader recovery of synaptic configurations.

Conclusion

The study presents SRC as a significant step toward overcoming the limits of continual learning in EP-trained RNNs. The approach highlights the value of sleep-inspired mechanisms for neural networks, narrowing the gap between artificial systems and human learning. While further refinement is needed to emulate the full breadth of biological capabilities, SRC offers a promising path toward robust, biologically plausible continual learning. Combining EP and SRC in reinforcement learning environments is a natural direction for future work on dynamic, task-driven scenarios.