- The paper reveals that 93% of analyst queries align with cybersecurity competencies, emphasizing LLMs’ role as cognitive aids.

- The paper employs a five-phase thematic analysis of 3,090 queries over 10 months to uncover real-world analyst-LLM interactions.

- The paper advocates for embedding LLM functionalities in SOC dashboards to reduce cognitive load while preserving human decision-making.

Human-AI Collaboration in Security Operations Centres: Empirical Insights

Introduction

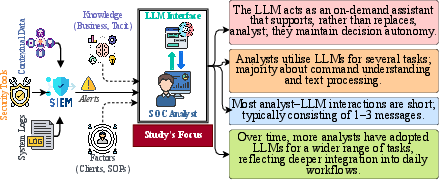

In the study "LLMs in the SOC: An Empirical Study of Human-AI Collaboration in Security Operations Centres," researchers investigate how large language models (LLMs) are used in Security Operations Centres (SOCs). The study analyzes 3,090 queries submitted by 45 analysts over a 10-month period to understand how LLMs function as cognitive aids rather than decision-makers. It finds that 93% of these queries align with established cybersecurity competencies and offers insights into how LLMs fit into SOC workflows.

Figure 1: SOC workflow, focus of study and our insights.

Methodology and Data Collection

The study applied a five-phase thematic analysis to SOC analysts' interactions with LLMs, with phases that include familiarization with the data, generation of codes (short labels assigned to queries), theme identification, and reporting. Analysts submitted queries to GPT-4 during live investigations over 10 months, which exposed their task priorities and engagement patterns. The analysis characterizes LLMs as on-demand aids that augment situational awareness and streamline operational tasks.

Figure 2: The five-phased approach to analyze and understand SOC analysts' interactions with LLMs.

Key Findings

Usage Patterns and Task Engagement

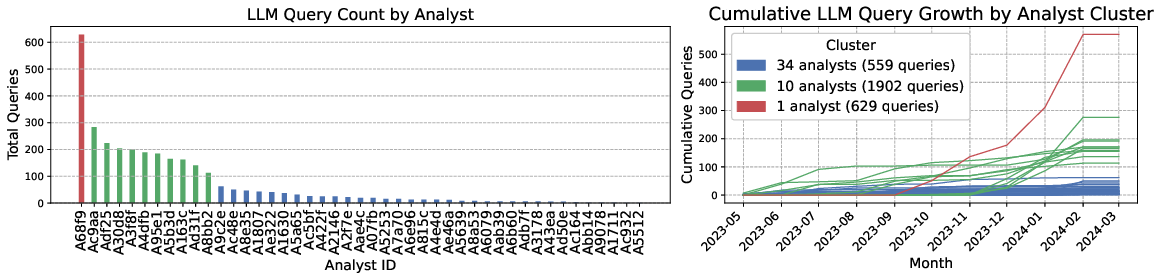

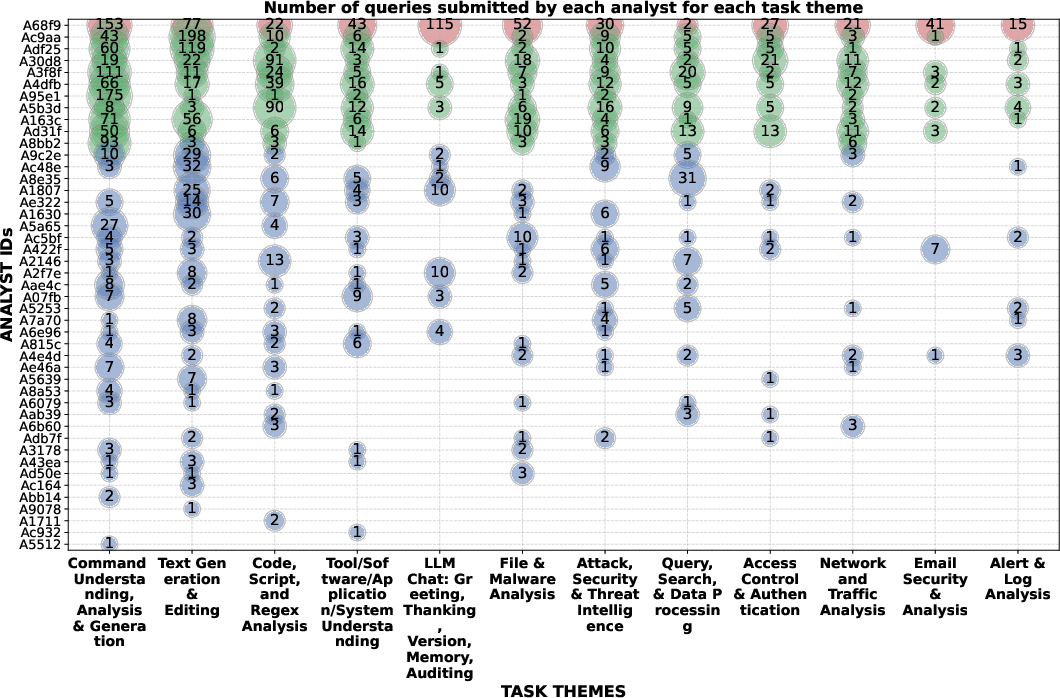

The study highlights that 93% of analyst queries align with established cybersecurity competencies, underscoring the practical relevance of LLMs in SOC-related tasks. Usage trends show a transition from exploratory to routine integration, driven by task types including command interpretation and technical communication enhancement. Analysts predominantly engaged LLMs for functional understanding, text processing, and command analysis, reflecting diverse cognitive needs and the LLM's role as an interpretive and drafting assistant.

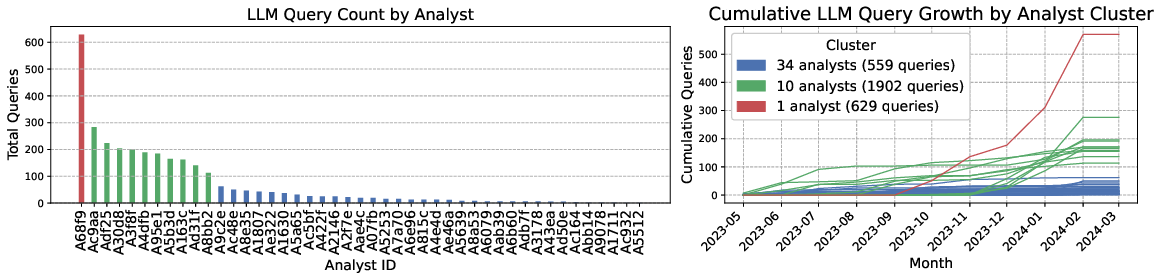

Figure 3: (Left) Query volumes vary across analysts, with heavy concentration among a few users. (Right) Overall, there is growing integration into workflows, but mostly driven by a subset of analysts (March 2024 has only 7 days of data).
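The two views in Figure 3 (per-analyst concentration and volume over time) can be derived from a simple query log. The following is a minimal sketch under stated assumptions: the record layout, the function names `top_k_share` and `monthly_volume`, and the toy records are all hypothetical and are not taken from the study's data or tooling.

```python
from collections import Counter
from datetime import date

# Toy records of (analyst_id, query_date). Illustrative only, not study data.
queries = [
    ("a1", date(2024, 1, 2)),
    ("a1", date(2024, 1, 9)),
    ("a1", date(2024, 2, 1)),
    ("a1", date(2024, 2, 15)),
    ("a2", date(2024, 1, 20)),
    ("a2", date(2024, 2, 3)),
    ("a3", date(2024, 2, 8)),
    ("a4", date(2024, 2, 28)),
]


def top_k_share(records, k):
    """Fraction of all queries submitted by the k most active analysts."""
    per_analyst = Counter(analyst for analyst, _ in records)
    top = sum(count for _, count in per_analyst.most_common(k))
    return top / len(records)


def monthly_volume(records):
    """Query counts keyed by (year, month), in chronological order."""
    counts = Counter((d.year, d.month) for _, d in records)
    return dict(sorted(counts.items()))
```

On the toy log, `top_k_share(queries, 1)` is 0.5 because one analyst submitted four of the eight queries. When reading a monthly series like this, a partial month (such as the 7 days of March 2024 noted in the caption) should be normalized or flagged before comparing it with full months.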

Analyst-Level Analysis

Engagement with LLMs varied considerably across analysts. Some showed intense usage, which indicates deep integration into their workflows, particularly for command and text interpretation. Analysts adapted LLMs to specific needs ranging from code debugging to document refinement, and this flexibility let them handle diverse task demands efficiently without extensive prompt engineering.

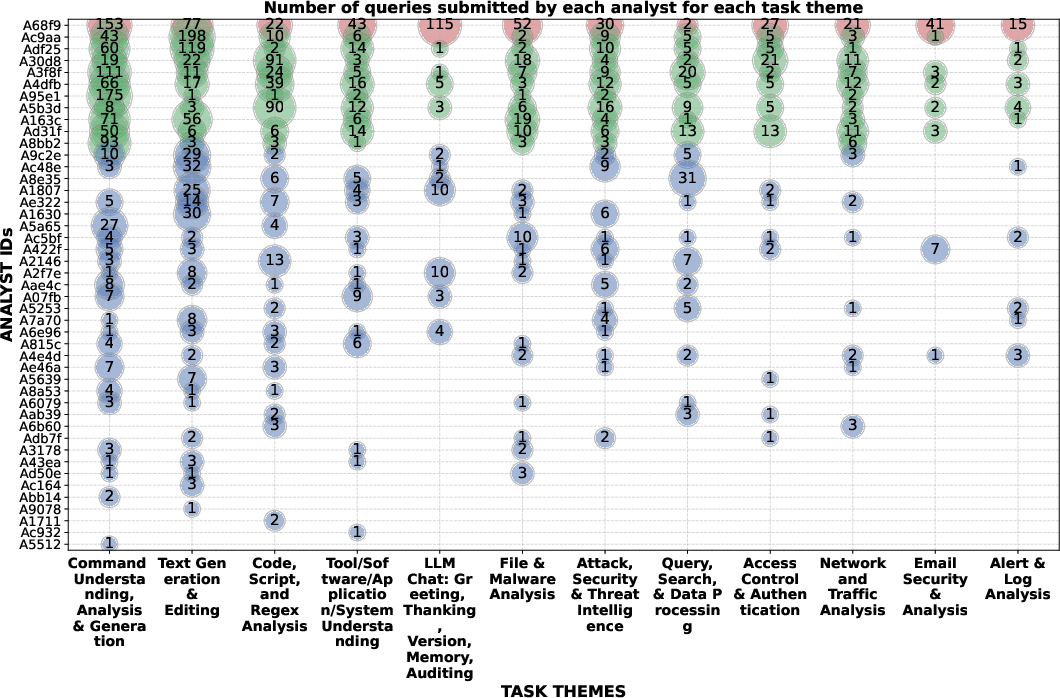

Figure 4: Number of queries per analyst (ordered by activity) and task theme (ordered by frequency). Analyst clusters are color-coded: red for most active, green for moderate, and blue for low-usage analysts.
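A breakdown like the one in Figure 4 amounts to a cross-tabulation of analysts against task themes, plus a grouping of analysts by activity. The sketch below is illustrative only: the theme names come from the findings above, but the toy assignments, the helper names, and the tier cut-offs are assumptions, and the study's actual clustering procedure is not described here.

```python
from collections import Counter, defaultdict

# Toy (analyst_id, theme) pairs. The assignments are invented for illustration.
labelled = [
    ("a1", "command analysis"),
    ("a1", "command analysis"),
    ("a1", "text processing"),
    ("a2", "functional understanding"),
    ("a2", "command analysis"),
    ("a3", "text processing"),
]


def cross_tab(pairs):
    """Nested mapping: analyst -> theme -> query count."""
    table = defaultdict(Counter)
    for analyst, theme in pairs:
        table[analyst][theme] += 1
    return table


def usage_tier(total, high=3, low=1):
    """Bucket an analyst by total queries. The cut-offs are hypothetical."""
    if total >= high:
        return "most active"
    if total > low:
        return "moderate"
    return "low usage"


table = cross_tab(labelled)
tiers = {analyst: usage_tier(sum(themes.values())) for analyst, themes in table.items()}
```

Fixed thresholds are the simplest possible grouping; a real analysis might instead use quantiles or a clustering algorithm over the per-theme counts.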

Implications for SOC Design and AI Collaboration

The study advocates embedding LLM functionality directly in SOC dashboards so that analysts can hand off micro-tasks without leaving their workflow, which reduces cognitive load while preserving analyst decision authority. By surfacing evidence rather than recommendations, LLMs can better match analysts' preference for retaining final judgment, which supports trust and interpretive efficiency. Future SOC systems should account for these preferences to optimize human-AI collaboration.
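One way to picture "evidence rather than recommendations" in a dashboard is a data structure that carries observations about an artifact but leaves the decision field for the analyst. This is a hypothetical sketch, not a design from the paper: `EvidenceCard`, `build_explain_prompt`, and `make_card` are invented names, and the model call itself is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EvidenceCard:
    """What a dashboard panel shows the analyst: facts to weigh, no verdict."""
    artifact: str
    observations: List[str] = field(default_factory=list)
    analyst_decision: Optional[str] = None  # filled in only by the human analyst


def build_explain_prompt(command: str) -> str:
    """Prompt asking a model to describe a command without judging it."""
    return (
        "Explain what each part of the following command does. "
        "Do not state whether it is malicious and do not recommend an action.\n\n"
        f"{command}"
    )


def make_card(command: str, model_output: str) -> EvidenceCard:
    """Turn non-empty lines of model output into observations; decision stays unset."""
    lines = [ln.strip() for ln in model_output.splitlines() if ln.strip()]
    return EvidenceCard(artifact=command, observations=lines)
```

Keeping `analyst_decision` empty by construction makes the division of labor explicit: the model contributes interpretation, and the final judgment is recorded only when the analyst supplies it.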

Conclusion

The study concludes that LLMs act as flexible, on-demand cognitive aids in SOCs, augmenting rather than replacing analyst expertise. By integrating LLMs for interpretive and communicative tasks, SOCs can improve operational efficiency and situational awareness. The findings provide empirical guidance for designing context-aware, human-centered AI assistance and document how analysts actually use LLMs in real security environments.