- The paper demonstrates that aligned AI explanations notably increase misinformation detection accuracy compared to misaligned ones.

- It employs GPT-4 to generate both content-based and socio-contextual explanations across COVID-19 and political domains.

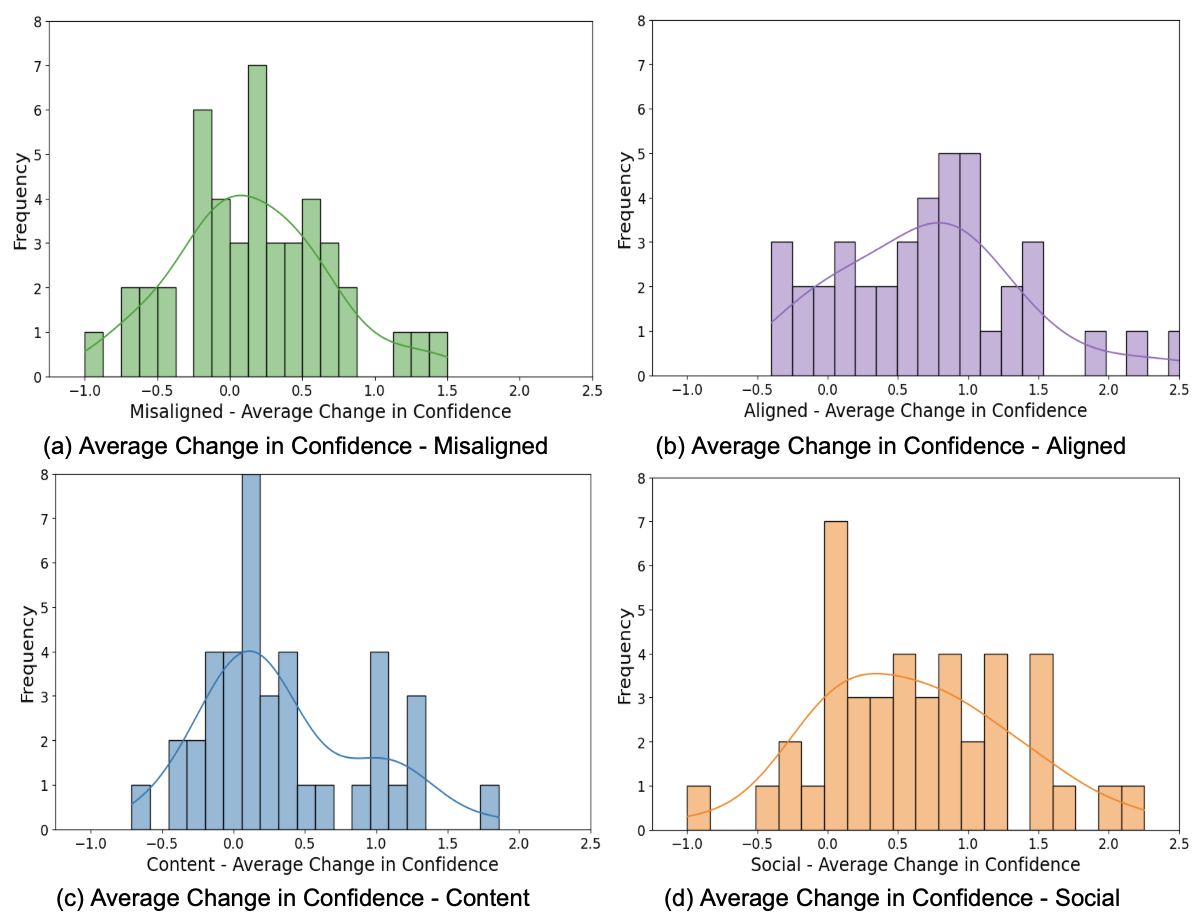

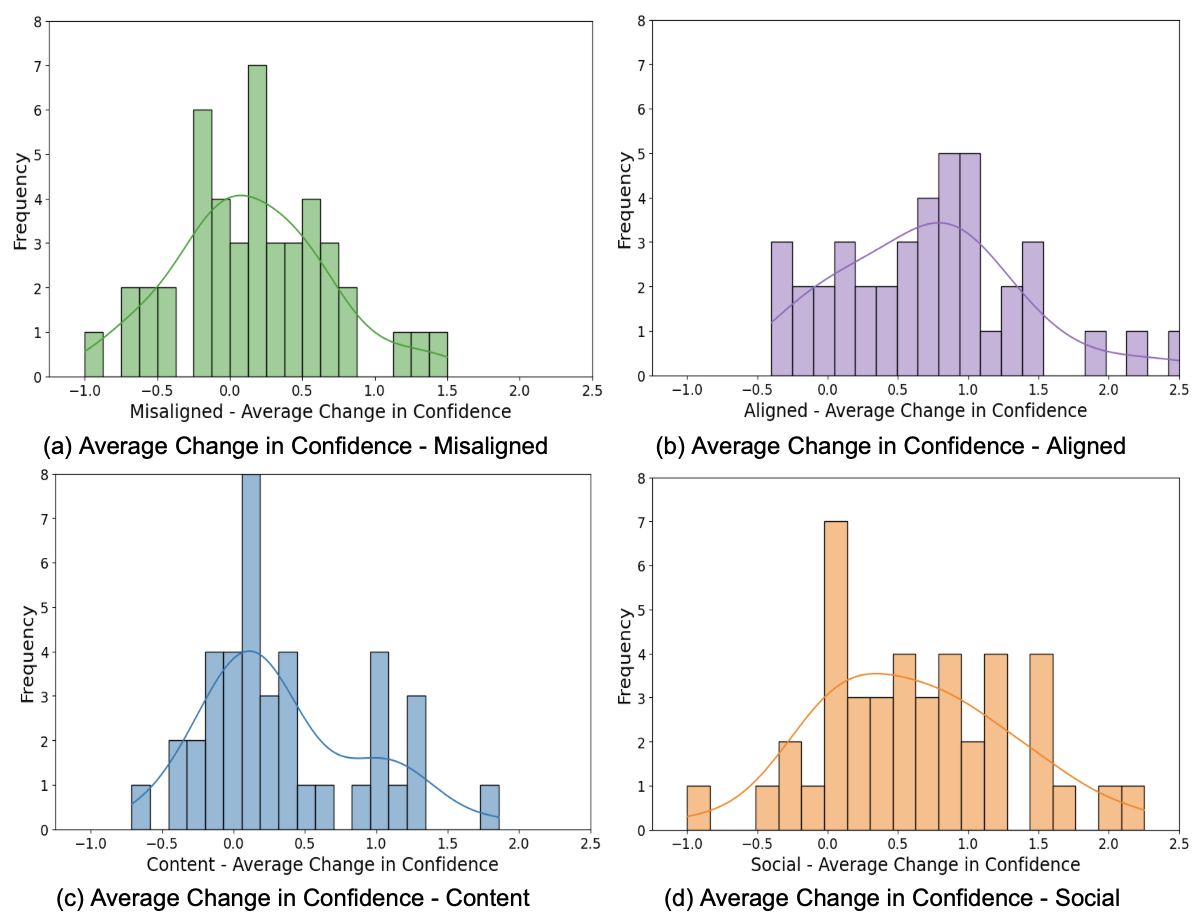

- User responses indicate that while aligned explanations boost confidence, misaligned ones stimulate analytical thinking.

Introduction

This paper examines the design and efficacy of AI explanations for misinformation detection. The study investigates three types of explanation (content, social, and combined) and how well each helps users identify misinformation. Previous AI explainability methods have primarily emphasized content explanations, which focus on linguistic features; this research adds social explanations, which consider the broader social context in which information is disseminated.

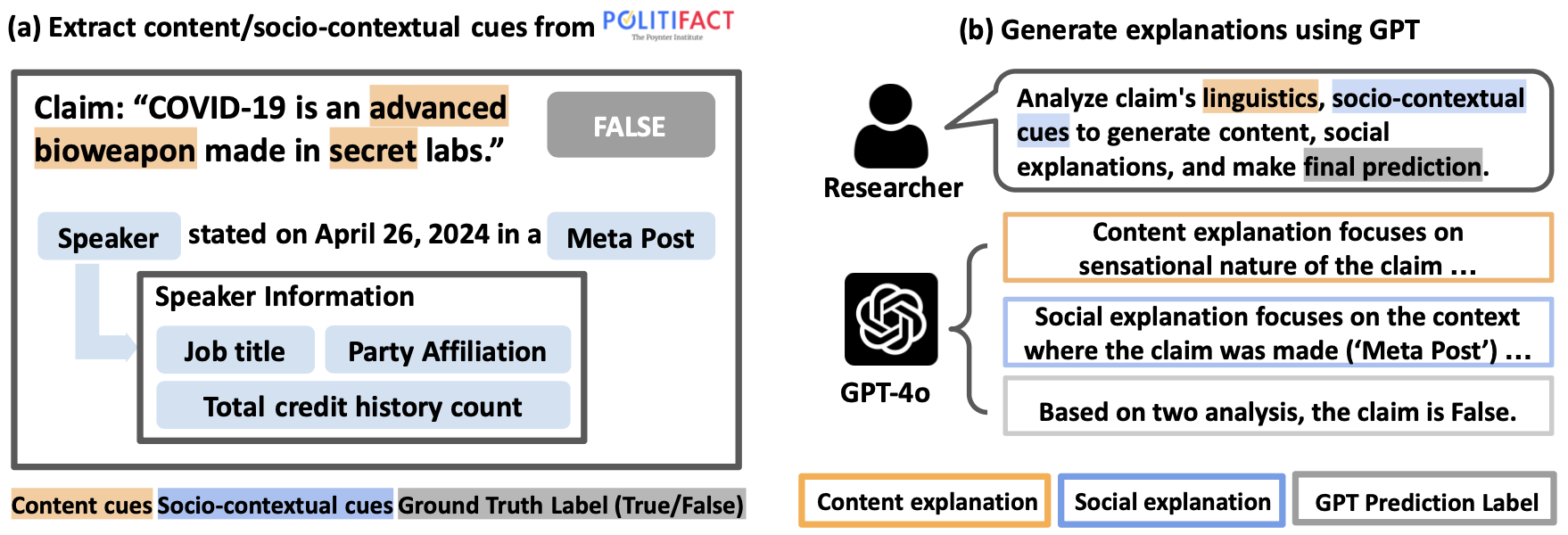

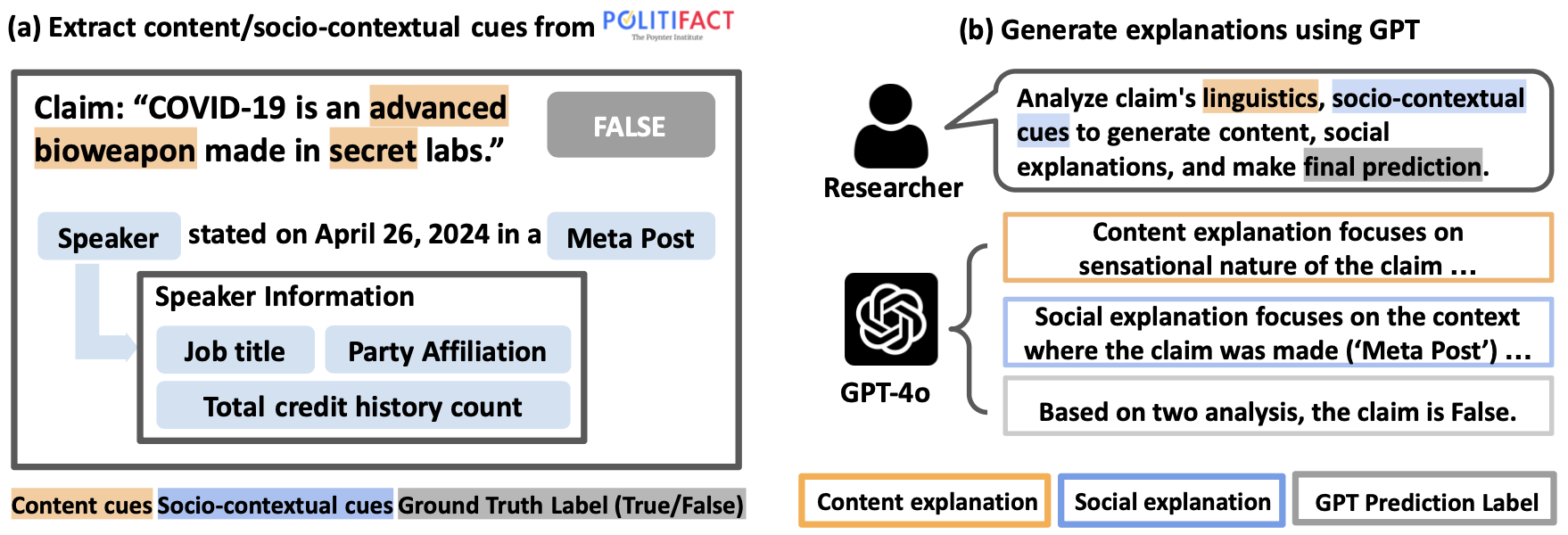

Explanation Generation Process

The explanation generation process combines content and socio-contextual analyses. Content cues, such as the syntactic, semantic, and structural aspects of a claim, are isolated to form content explanations. In parallel, socio-contextual elements, such as speaker attributes and the context in which the information is disseminated, are evaluated to produce social explanations. Both kinds of explanation are generated with GPT-4 and aim to relate linguistic patterns and social contexts to the credibility of claims.
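The split between content cues and socio-contextual cues can be sketched as two separate prompt builders. This is a minimal illustration, not the authors' prompts: the `Claim` schema, the function names, and the prompt wording are all assumptions, and the call to a language model is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    """A claim plus its socio-contextual metadata (hypothetical schema)."""
    text: str
    speaker: str
    context: str


def build_content_prompt(claim: Claim) -> str:
    """Ask only about cues inside the claim text itself."""
    return (
        "Assess the credibility of the claim below using only its "
        "syntactic, semantic, and structural cues.\n"
        f"Claim: {claim.text}"
    )


def build_social_prompt(claim: Claim) -> str:
    """Ask only about who made the claim and where it circulated."""
    return (
        "Assess the credibility of the claim below using only its "
        "socio-contextual cues, such as the speaker and the dissemination context.\n"
        f"Claim: {claim.text}\n"
        f"Speaker: {claim.speaker}\n"
        f"Context: {claim.context}"
    )


def build_prompts(claim: Claim) -> dict[str, str]:
    """Return one prompt per explanation type; sending them to a model is omitted."""
    return {
        "content": build_content_prompt(claim),
        "social": build_social_prompt(claim),
    }
```

Keeping the two prompts separate means the content prompt never sees speaker or context metadata, so each explanation can reach its own verdict independently.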

Figure 1: Overview of the Explanation Generation Process Using GPT.

Experimental Design

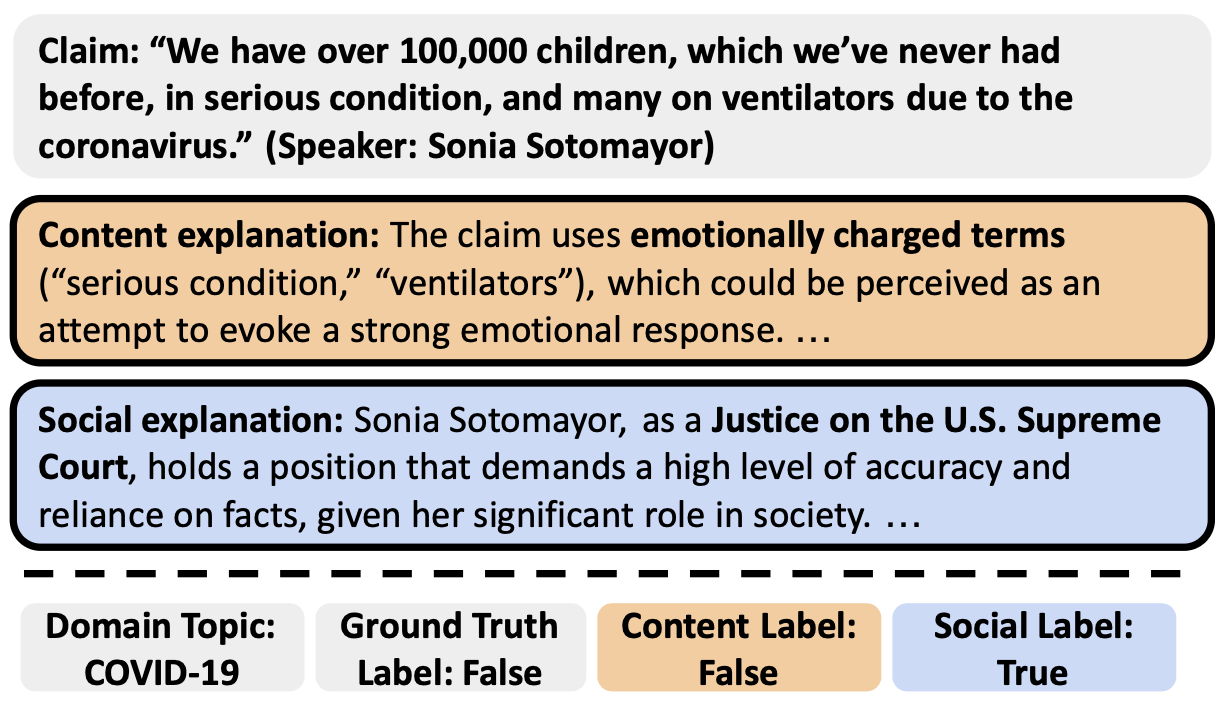

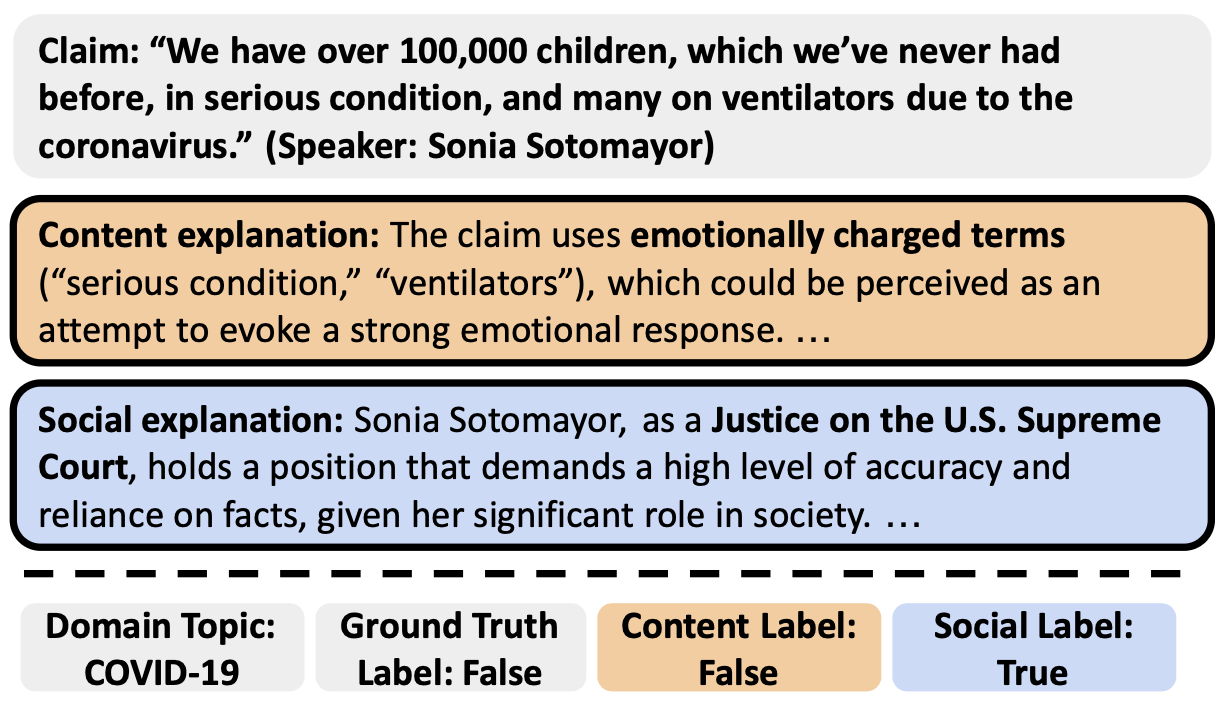

Two online studies were conducted in different misinformation domains, COVID-19 and politics, with participants recruited from Prolific and MTurk. The studies compared five conditions: no explanation, content, social, aligned (the content and social explanations agree), and misaligned (the content and social explanations conflict).

Figure 2: Examples of AI Explanations Shown to Participants. Participants in the content explanation condition were shown explanations in the orange box, while participants in the social explanation condition were shown explanations in the blue box.
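The five conditions can be expressed as a small labeling rule over the verdicts that the shown explanations imply. The sketch below is illustrative only; the function name and the `"true"`/`"false"`/`None` encoding are assumptions, not part of the study materials.

```python
from typing import Optional


def label_condition(content_verdict: Optional[str], social_verdict: Optional[str]) -> str:
    """Map the verdicts of the shown explanations to one of the five conditions.

    A verdict is "true" or "false", or None when that explanation type is not shown
    (hypothetical encoding).
    """
    if content_verdict is None and social_verdict is None:
        return "no explanation"
    if social_verdict is None:
        return "content"
    if content_verdict is None:
        return "social"
    # Both explanations are shown: the condition depends on whether they agree.
    return "aligned" if content_verdict == social_verdict else "misaligned"
```

For example, `label_condition("false", "true")` yields `"misaligned"`, because the two explanations point to opposite verdicts.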

Results and Analysis

Detection Accuracy

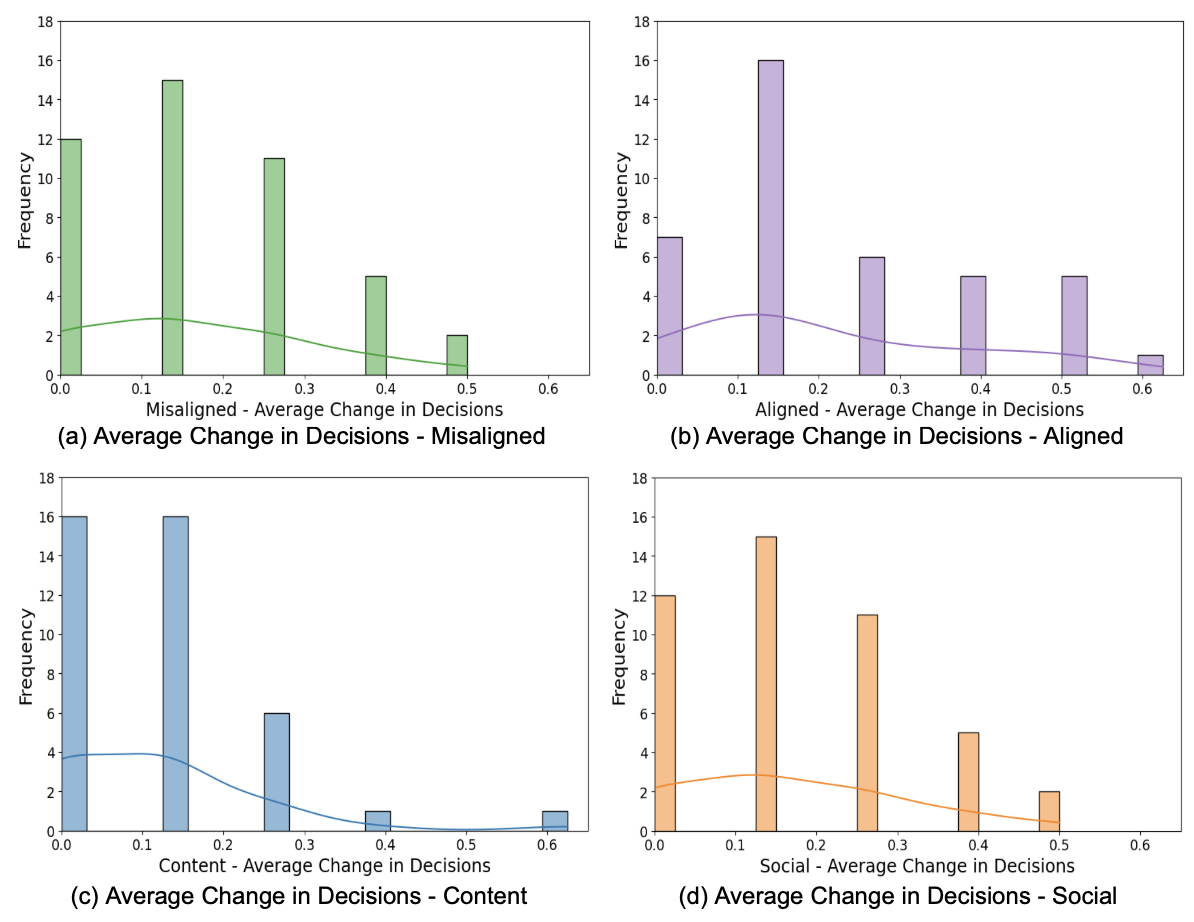

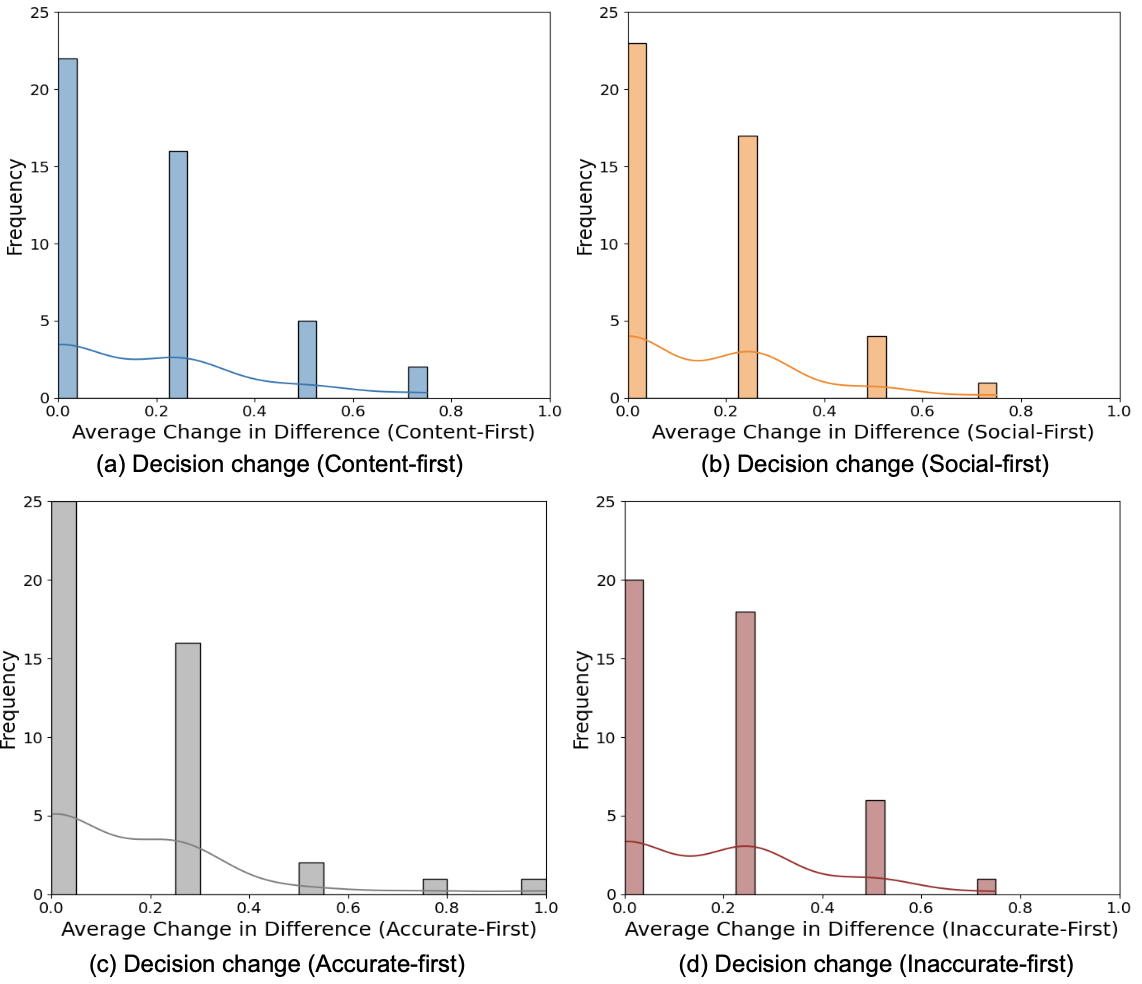

The experiments reveal that aligned explanations significantly enhance misinformation detection accuracy: participants shown aligned explanations were more accurate than those shown misaligned explanations. Presentation order also mattered. In the COVID-19 domain, aligned explanations had a larger effect when the social explanation was presented first. This order effect was not observed in the political domain, suggesting that different processing mechanisms are activated depending on domain-specific factors.

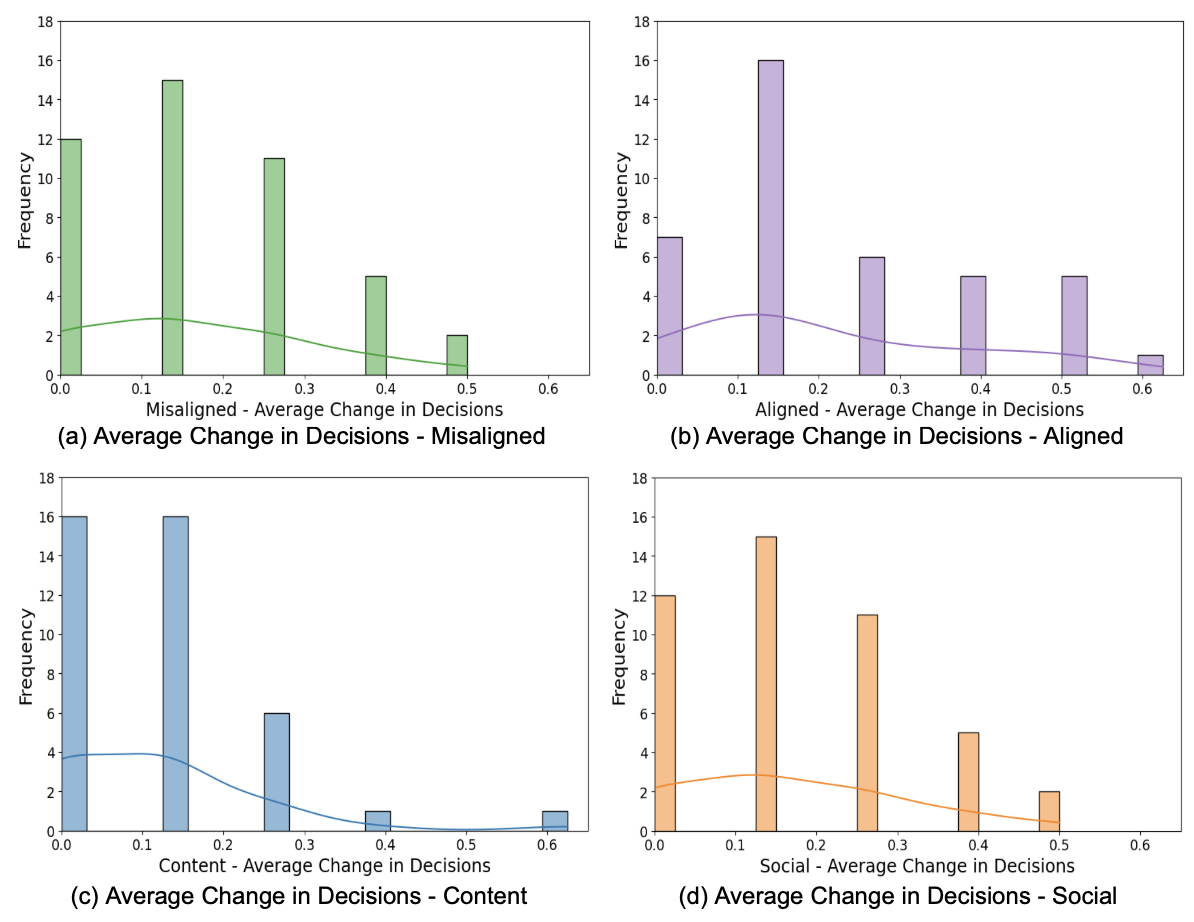

Figure 3: Decision changes across all conditions: Effects of presenting misaligned (3a), aligned (3b), content (3c), and social (3d) explanations.
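Per-condition accuracy and decision changes of this kind can be computed as in the sketch below. It assumes each record holds a binary judgment made before and after the explanation was shown; this summary does not spell out that protocol, so the record layout is an assumption, and the toy records are made up for illustration rather than taken from the paper.

```python
from collections import Counter, defaultdict


def accuracy_by_condition(records):
    """Share of correct post-explanation judgments per condition."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in records:
        total[r["condition"]] += 1
        correct[r["condition"]] += int(r["after"] == r["truth"])
    return {c: correct[c] / total[c] for c in total}


def decision_changes(records):
    """Count how binary judgments moved relative to the ground truth, per condition."""
    changes = defaultdict(Counter)
    for r in records:
        was_right = r["before"] == r["truth"]
        is_right = r["after"] == r["truth"]
        if was_right == is_right:
            kind = "unchanged"
        elif is_right:
            kind = "corrected"
        else:
            kind = "worsened"
        changes[r["condition"]][kind] += 1
    return {c: dict(k) for c, k in changes.items()}


# Made-up toy records, not data from the paper.
toy = [
    {"condition": "aligned", "truth": "false", "before": "true", "after": "false"},
    {"condition": "aligned", "truth": "true", "before": "true", "after": "true"},
    {"condition": "misaligned", "truth": "false", "before": "false", "after": "true"},
    {"condition": "misaligned", "truth": "true", "before": "true", "after": "true"},
]

print(accuracy_by_condition(toy))
print(decision_changes(toy))
```

Separating "corrected" from "worsened" changes matters here because an explanation that changes many decisions is only helpful if the changes move toward the ground truth.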

User Perception

User experiences with explanations varied. Aligned explanations were perceived as more useful and as fostering greater understanding of the AI's decisions. Although misaligned explanations did not improve decision accuracy, they sometimes encouraged critical thinking, as participants actively scrutinized the conflicting information. This contrast underscores the complexity of how users process information when the two explanations conflict.

Figure 4: Confidence changes across all conditions: Effects of presenting misaligned (4a), aligned (4b), content (4c), and social (4d) explanations.

Discussion

The findings point to a trade-off between explanation types. Aligned explanations clearly improve detection outcomes, whereas misaligned explanations prompt cognitive engagement, suggesting a potential role in nurturing analytical thinking even when they do not improve immediate detection accuracy.

The research also underscores the importance of explanation alignment: misaligned explanations have limitations, but they invite users to engage deeply with the content. Different explanation types trigger different cognitive processing, either heuristic or analytical, depending on the misinformation domain.

Conclusion

The study advances understanding of how to design AI explanations by emphasizing the importance of explanation alignment and presentation order. It also opens directions for improving user-centric XAI systems for misinformation detection. Future research should explore more refined ways of balancing explanation alignment, user perception, and domain-specific approaches to misinformation.

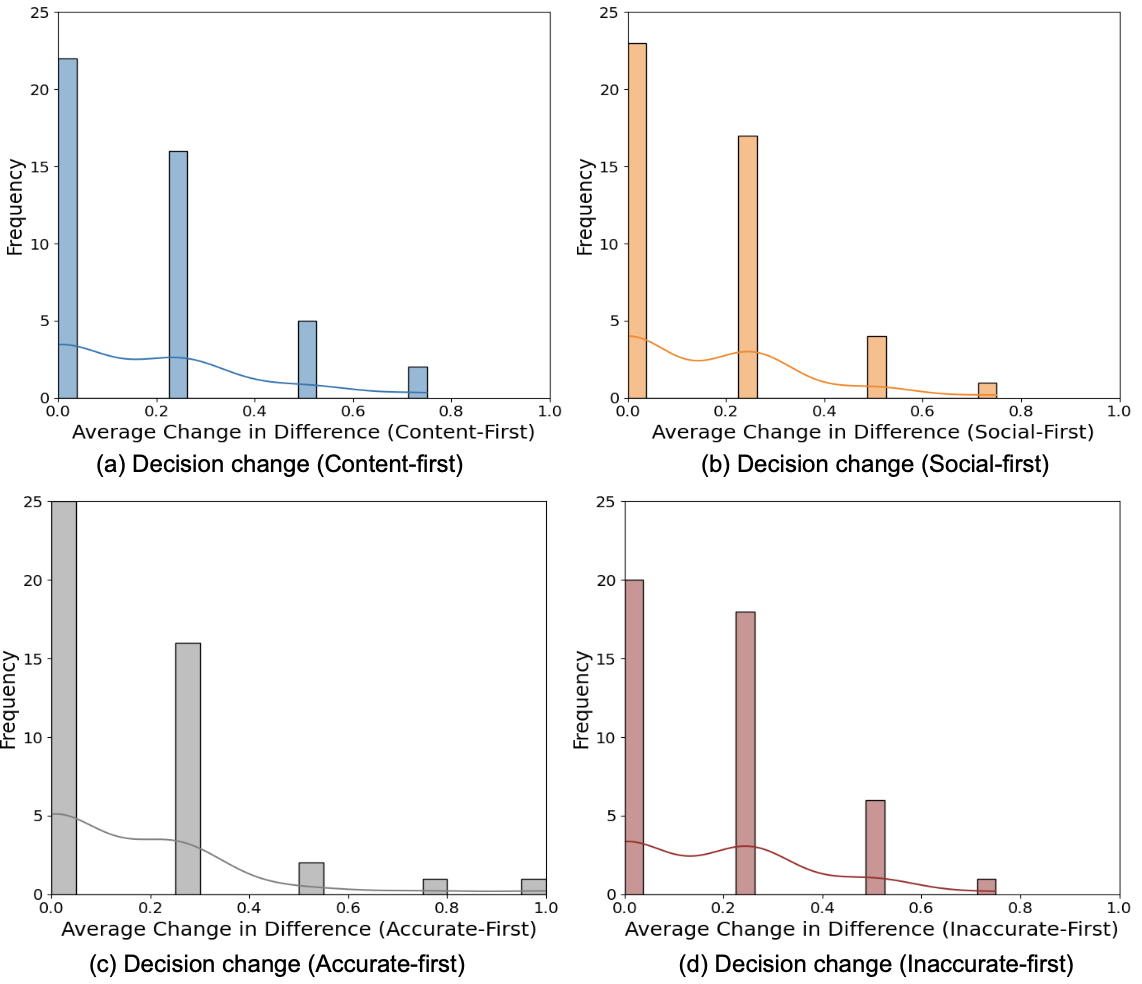

Figure 5: Decision changes under misaligned conditions: Effects of presenting content (5a) and social (5b) explanations first; Effects of presenting accurate (5c) and inaccurate (5d) explanations first.