- The paper presents systematic odometry calibration using gradient-based and stochastic methods to enhance pose accuracy in a wall climbing robot.

- It compares EKF and UKF sensor fusion techniques to improve state estimation, reducing errors from slippage and gravitational effects.

- Experimental results validate improved non-destructive testing performance on vertical surfaces and offer directions for future dynamic modeling.

Odometry Calibration and Pose Estimation for a 4WIS4WID Mobile Wall Climbing Robot

Introduction

This paper addresses the odometry calibration and pose estimation problem for a four-wheel independent steer, four-wheel independent drive (4WIS4WID) mobile wall climbing robot. The operational context is vertical surface navigation, where conventional localization modalities such as LIDAR, GPS, and external cameras are either infeasible or unreliable due to environmental constraints. The research focuses on systematic odometry calibration using both gradient-based and stochastic optimization methods, and on the design of sensor fusion-based pose estimators employing Extended Kalman Filter (EKF) and Unscented Kalman Filter (UKF) architectures. The experimental validation demonstrates the efficacy of the proposed methods in improving pose estimation accuracy, which is critical for autonomous non-destructive testing (NDT) tasks on building façades.

Robot Design and System Architecture

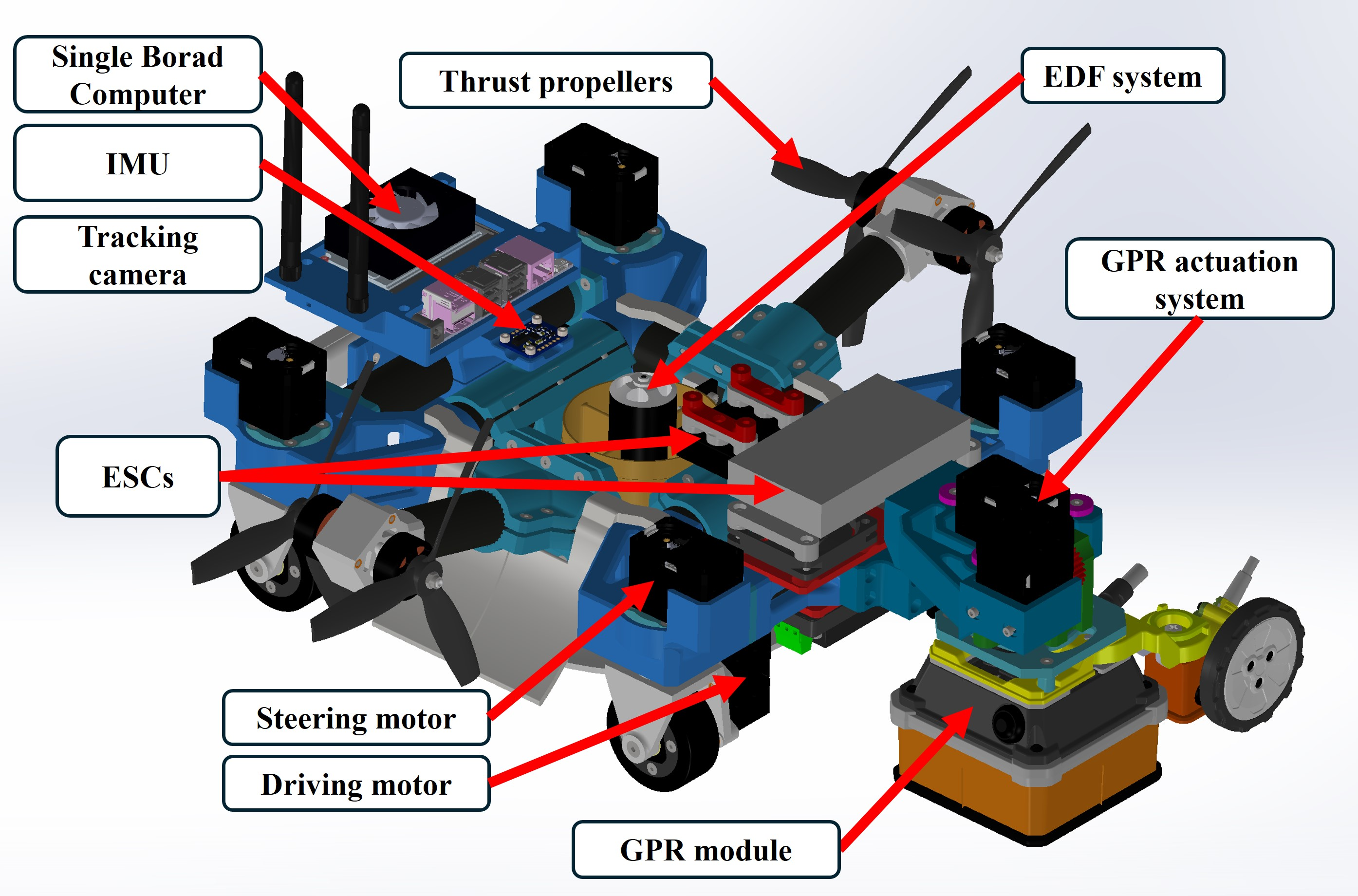

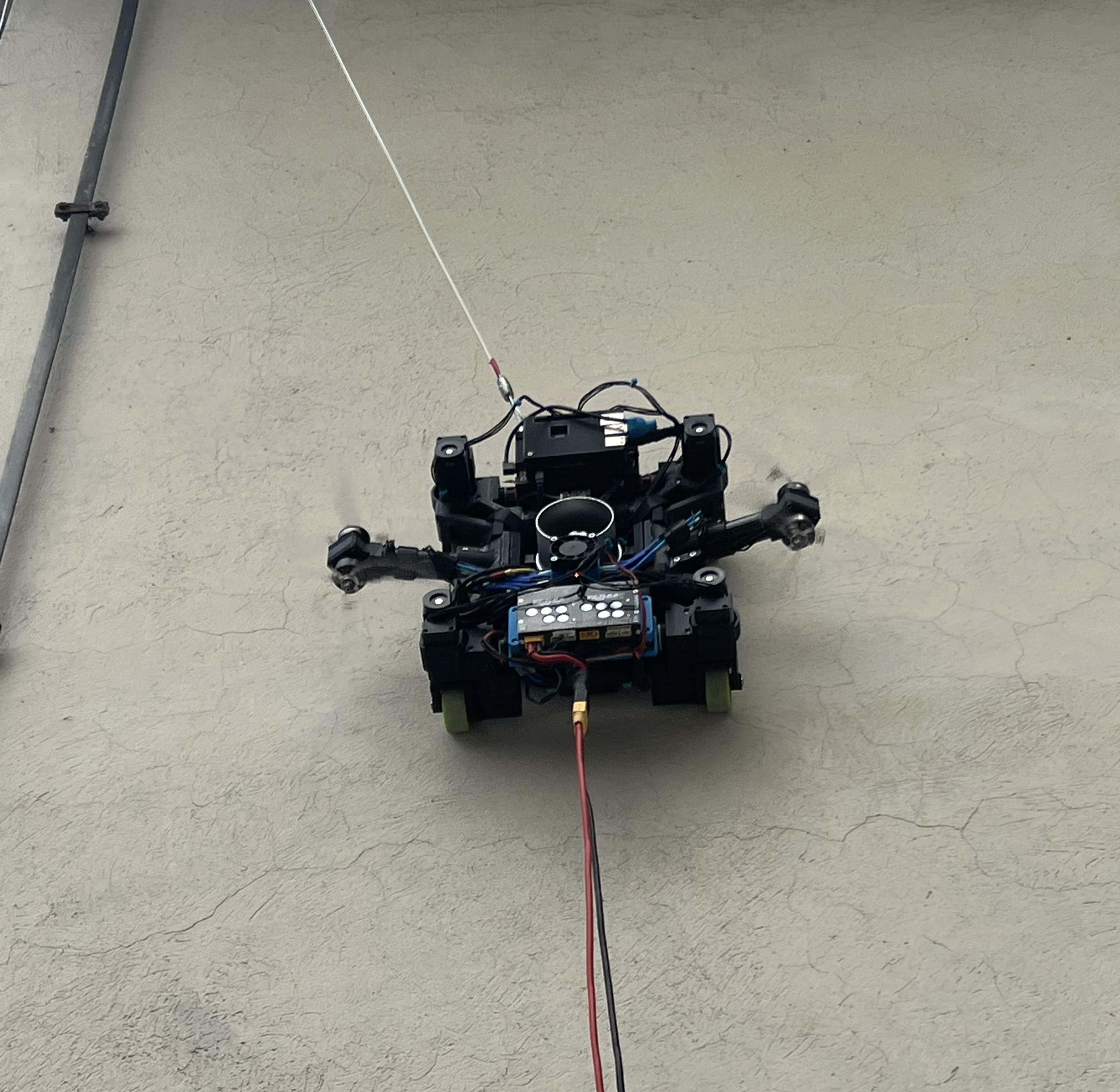

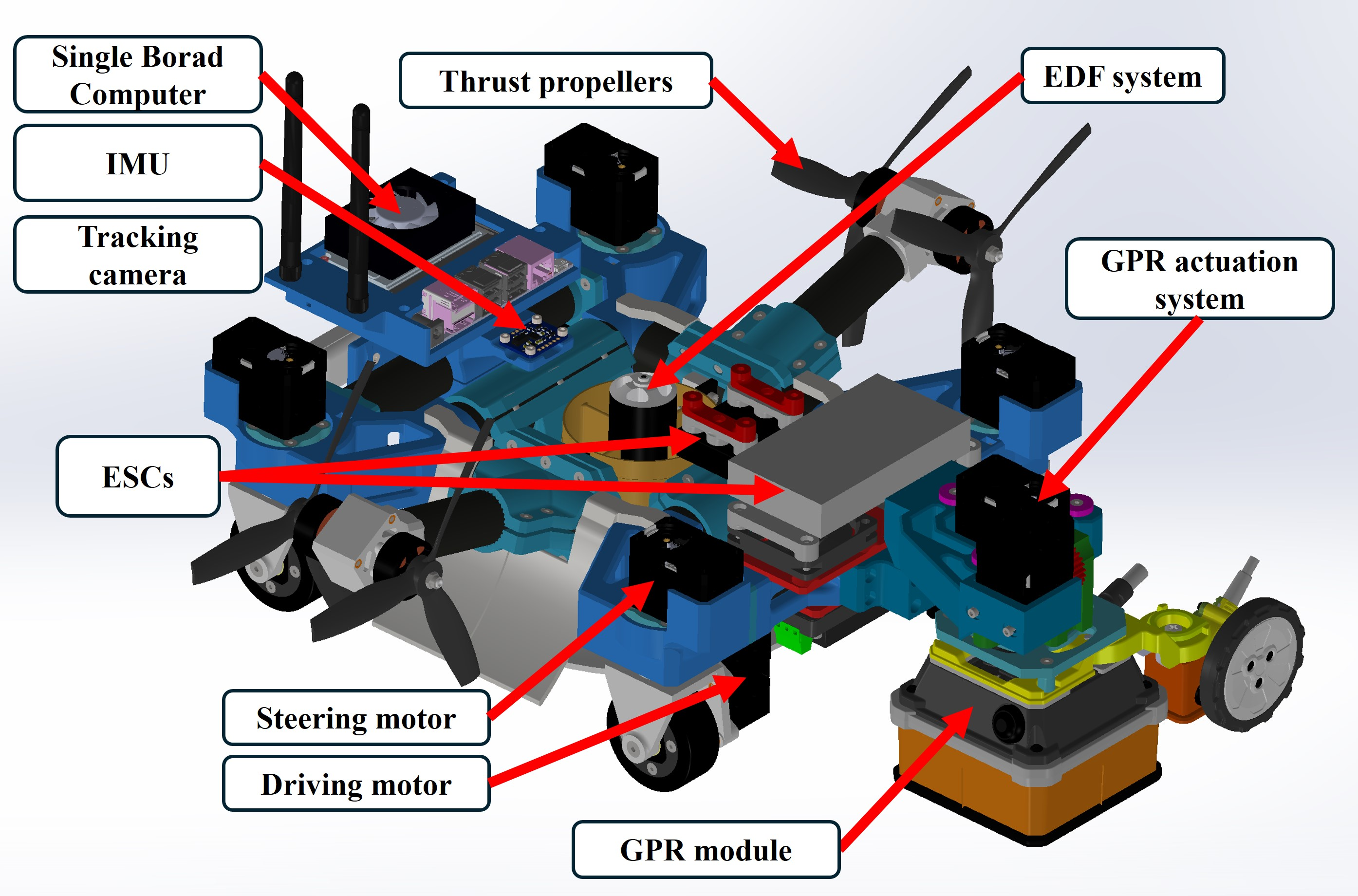

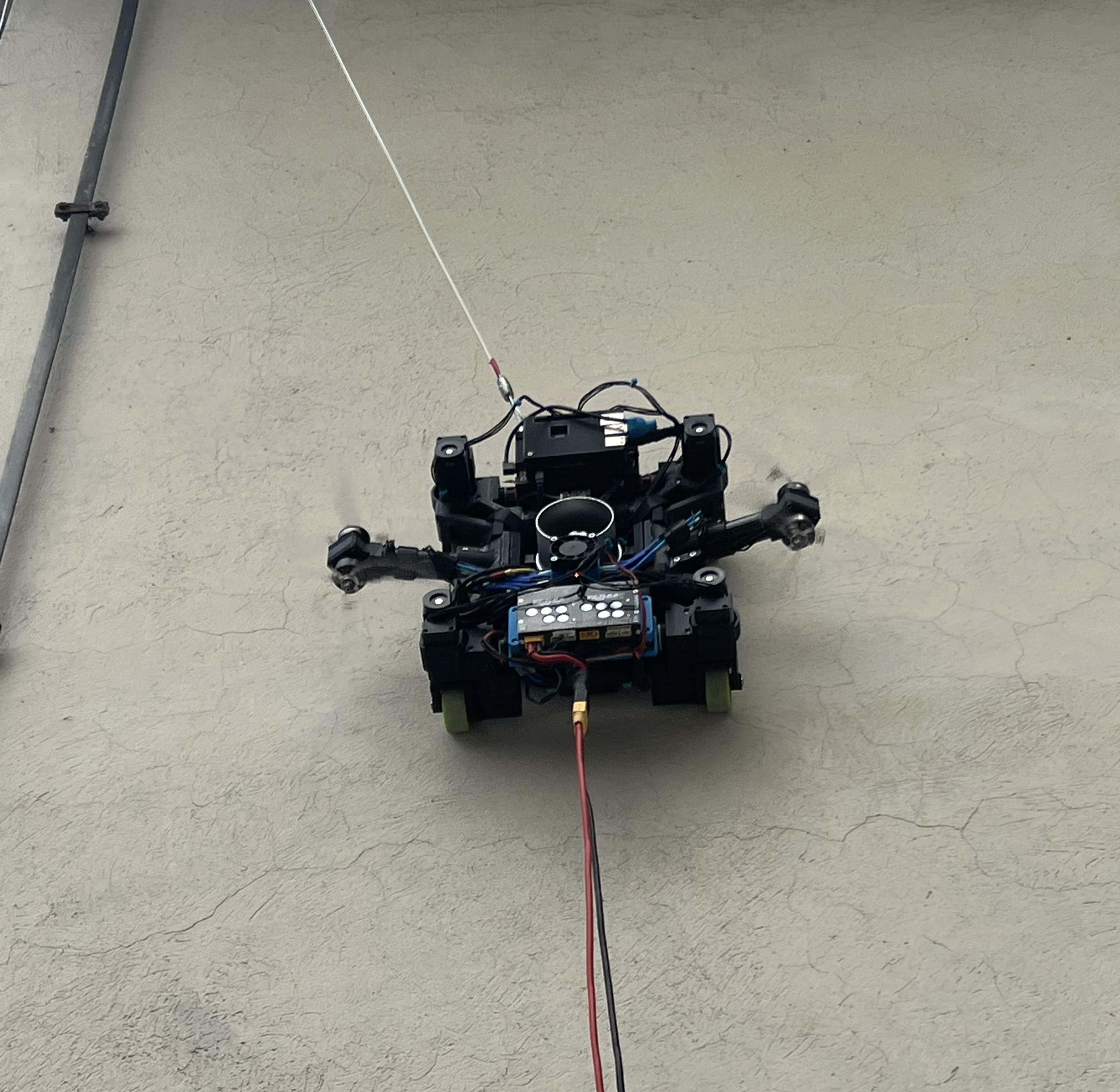

The robot is custom-built for wall climbing and NDT payload delivery, featuring a hybrid adhesion system that combines negative pressure with thrust compensation. The mechanical structure is primarily ASA with carbon fiber reinforcements and supports a payload of 1.5 kg. Actuation and steering are realized via Dynamixel XH430-W210-T smart servos, with feedback from AMS AS5045 magnetic rotary sensors. The sensor suite includes a Bosch BNO055 IMU and an Intel RealSense T265 for visual odometry. The control and estimation stack runs on an NVIDIA Jetson NX under ROS 2, enabling real-time sensor fusion and motor control.

Figure 1: Computer-aided design of the wall climbing robot, highlighting the NDT payload mounting section.

Figure 2: The 4WIS4WID robot operating on a vertical surface, demonstrating adhesion and omnidirectional mobility.

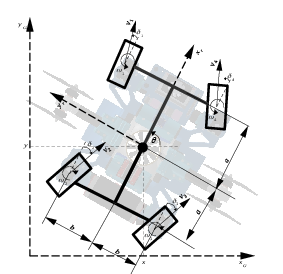

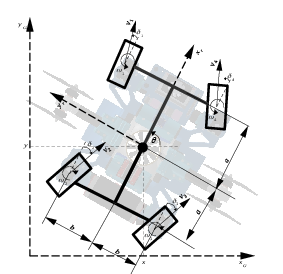

Figure 3: Kinematic structure of the 4WIS4WID robot, showing independent steering and drive for each wheel.

Kinematic Modeling and Odometry Calibration

The kinematic model is derived for the 4WIS4WID configuration and relates wheel velocities and steering angles to the robot's global pose. It has 11 states: the planar position and orientation, the four wheel angular velocities, and the four steering angles. Systematic odometry errors arise from uncertainty in the wheel radii and wheelbase dimensions, which makes calibration necessary.
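
To make the relationship between wheel measurements and body motion concrete, the following is a minimal sketch of planar odometry for a four-wheel steered and driven base. It is not the paper's model: it assumes a rigid body, no slip, and a wheel layout symmetric about the body origin (so the least-squares fit reduces to simple averages). The function names and the geometry values `a`, `b`, and `r` are hypothetical.

```python
import math

def body_twist(wheel_speeds, steer_angles, wheel_xy, radius):
    """Least-squares body twist (vx, vy, wz) from steered, driven wheels.

    Assumes wheel positions are symmetric about the body origin, so the
    normal equations of the rigid-body fit decouple into averages.
    """
    vx = vy = num = den = 0.0
    for w, d, (x, y) in zip(wheel_speeds, steer_angles, wheel_xy):
        vix = radius * w * math.cos(d)   # wheel contact velocity, body x
        viy = radius * w * math.sin(d)   # wheel contact velocity, body y
        vx += vix
        vy += viy
        num += x * viy - y * vix         # moment of the velocity about the origin
        den += x * x + y * y
    n = len(wheel_xy)
    return vx / n, vy / n, num / den

def integrate_pose(pose, twist, dt):
    """One Euler step of the planar pose (x, y, theta) in the global frame."""
    x, y, th = pose
    vx, vy, wz = twist
    x += (vx * math.cos(th) - vy * math.sin(th)) * dt
    y += (vx * math.sin(th) + vy * math.cos(th)) * dt
    return x, y, th + wz * dt

# Hypothetical geometry: wheels at (+-a, +-b) metres, wheel radius r metres.
a, b, r = 0.15, 0.12, 0.03
WHEELS = [(a, b), (a, -b), (-a, b), (-a, -b)]

# Crab motion: every wheel steered to 90 degrees, so the body moves along +y.
twist = body_twist([10.0] * 4, [math.pi / 2] * 4, WHEELS, r)
pose = integrate_pose((0.0, 0.0, 0.0), twist, dt=0.1)
```

In this sketch, errors in `r` scale every translation estimate, while errors in the wheel positions affect only the yaw-rate term, which is one way to see why both parameter groups need calibration.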

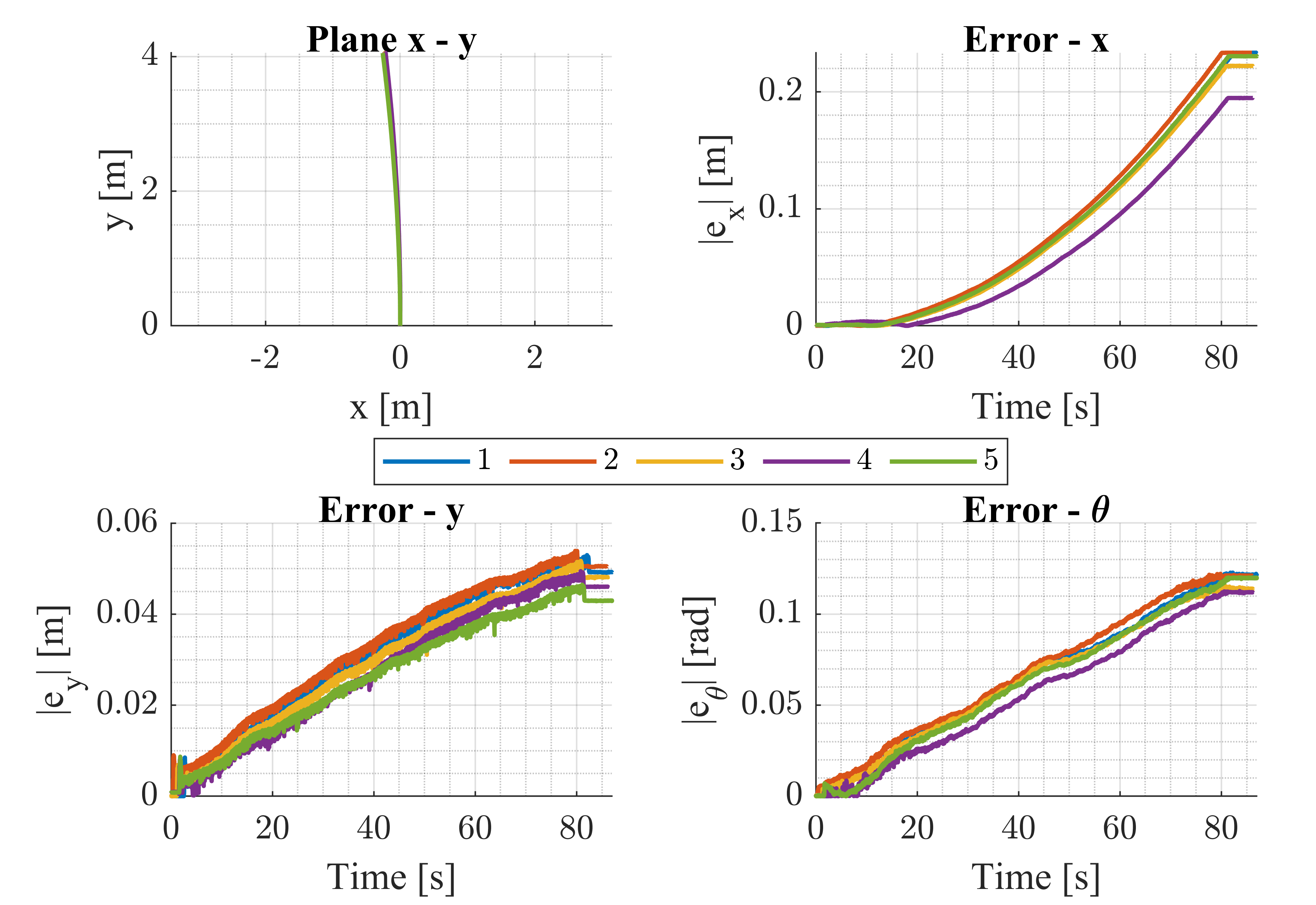

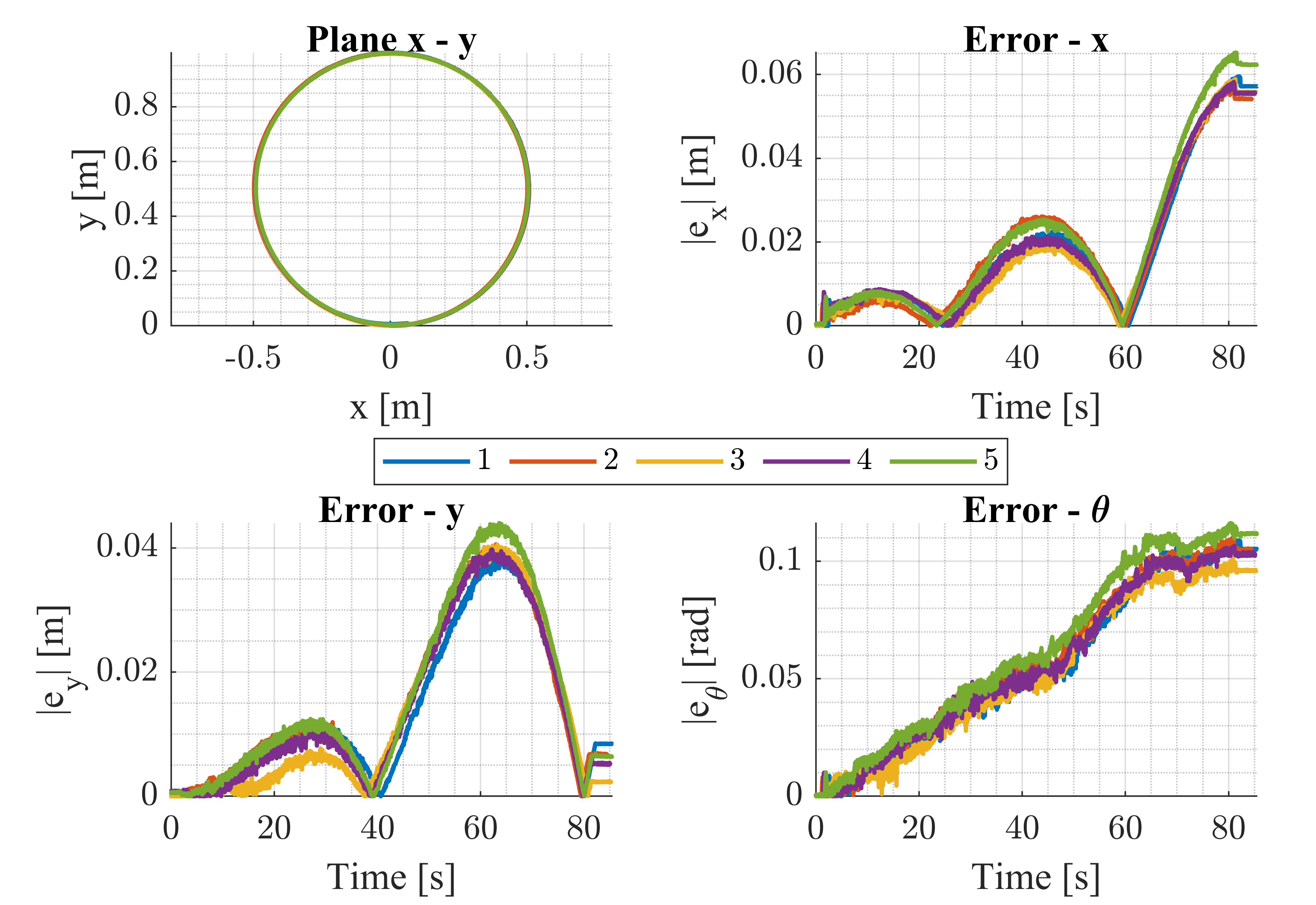

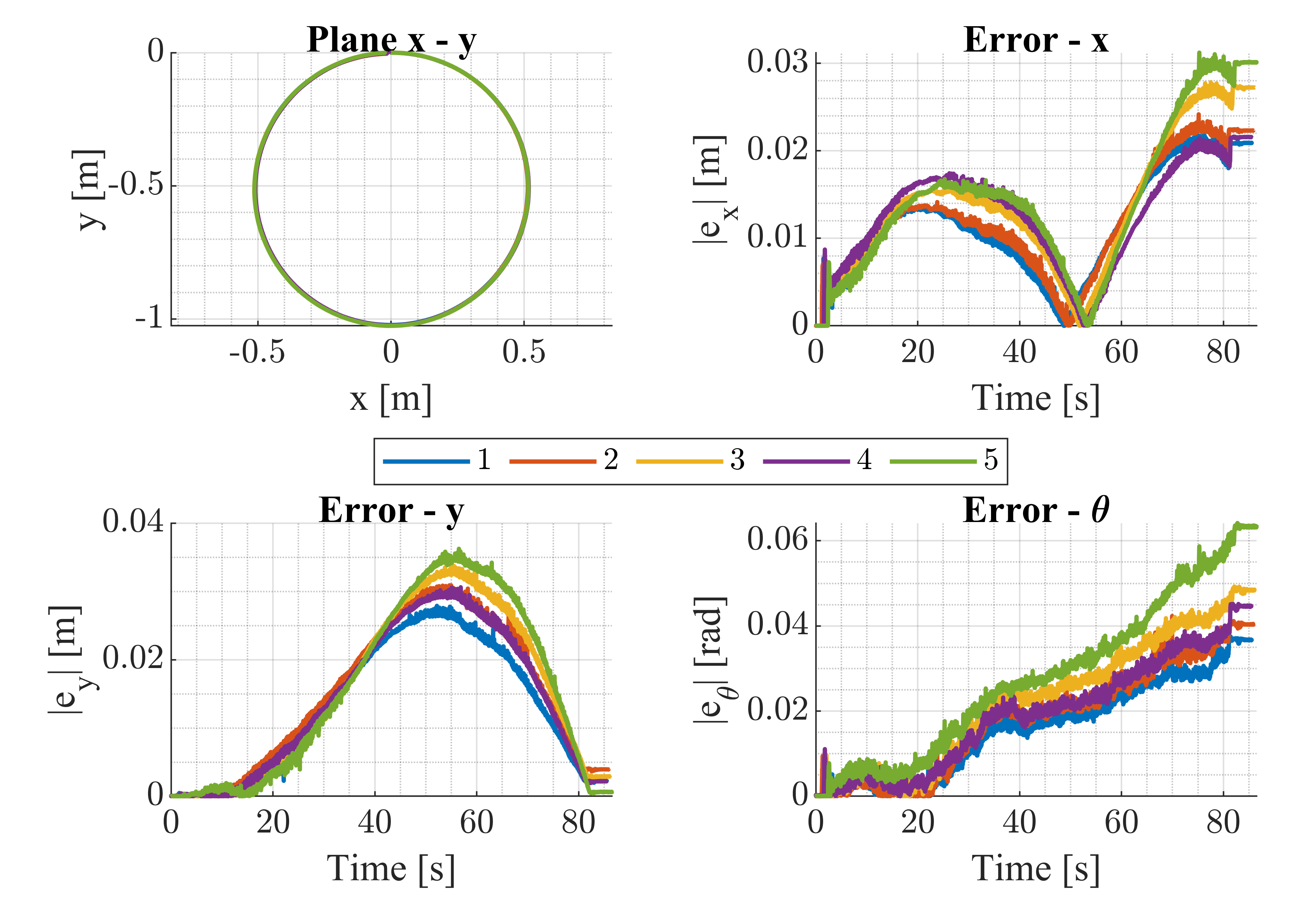

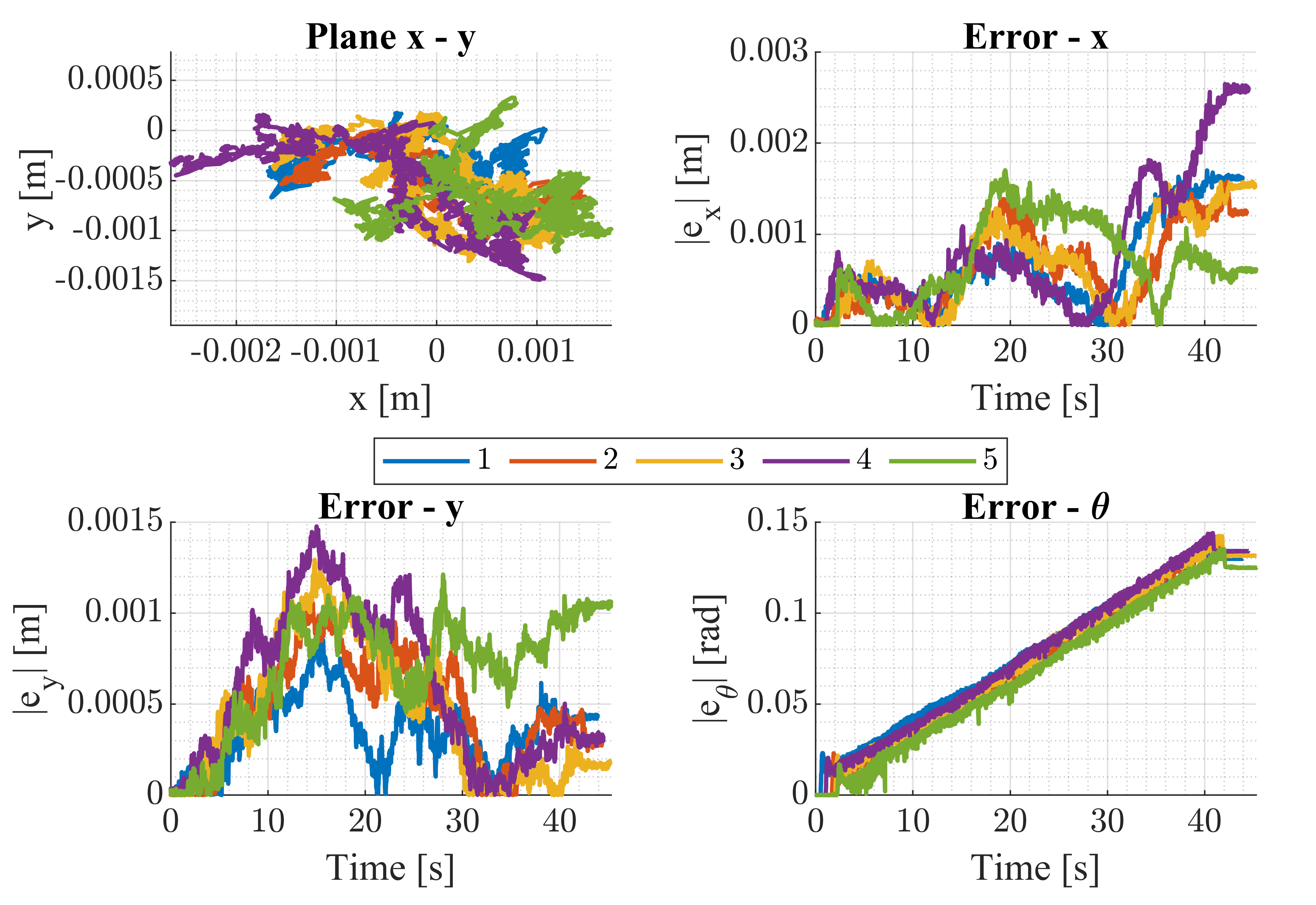

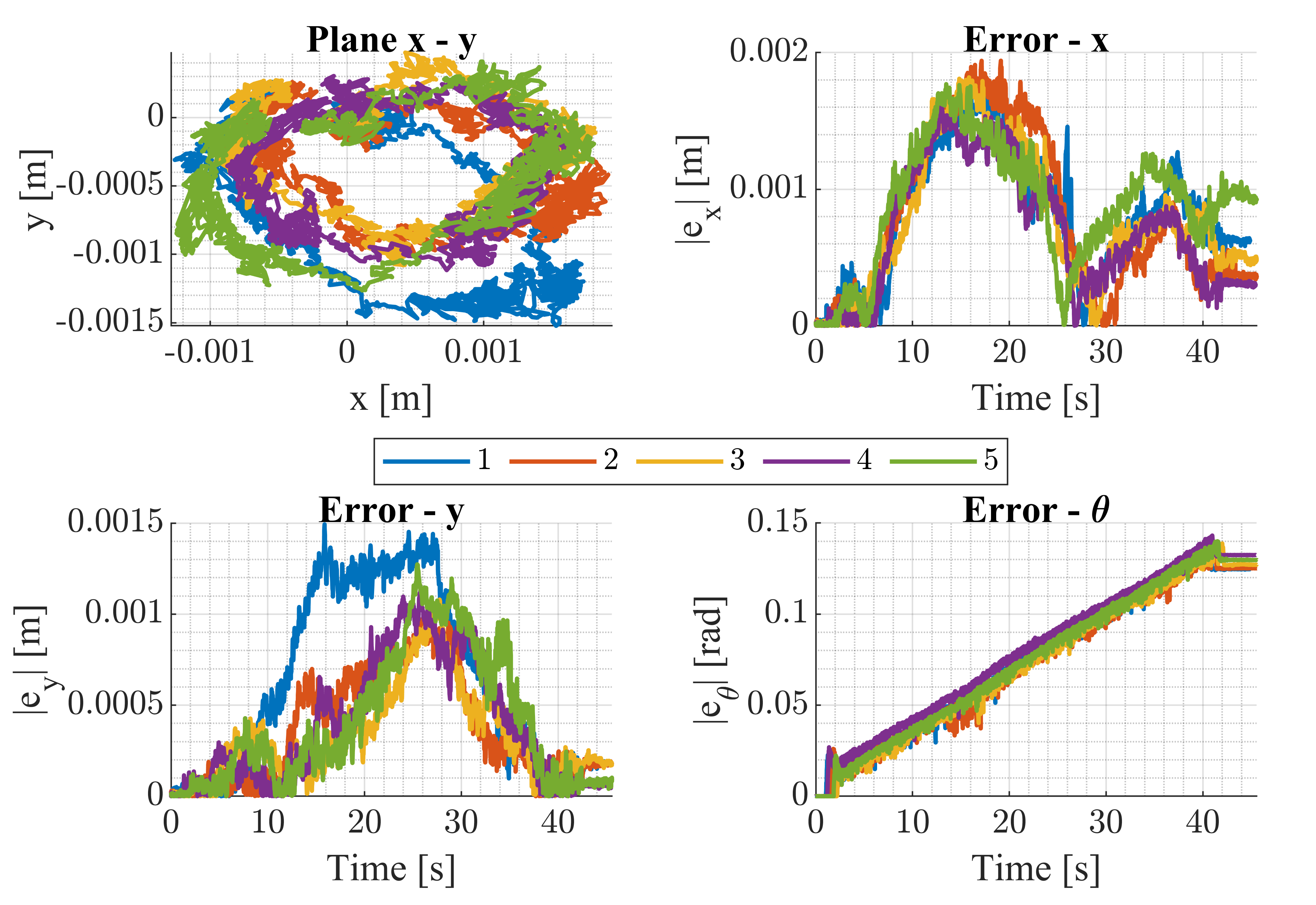

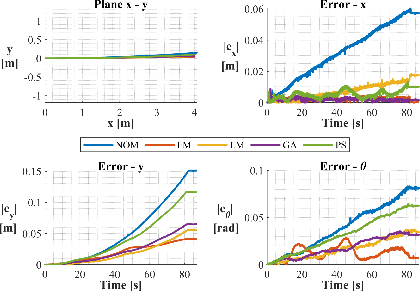

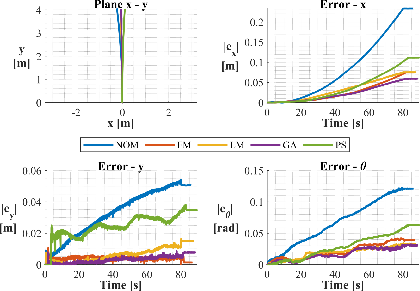

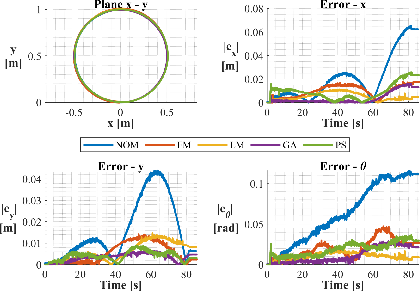

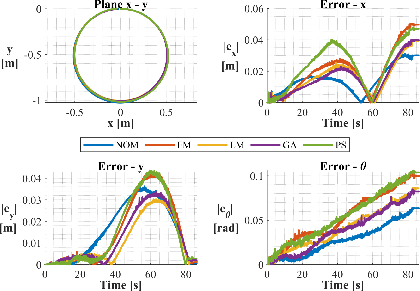

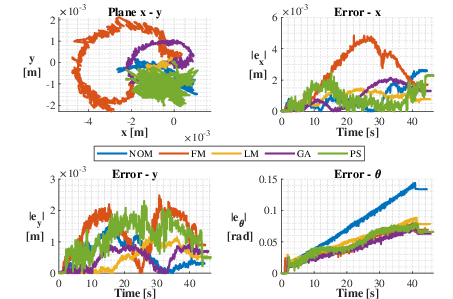

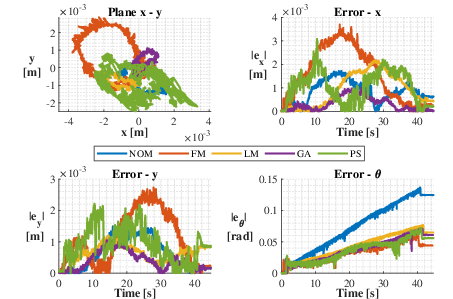

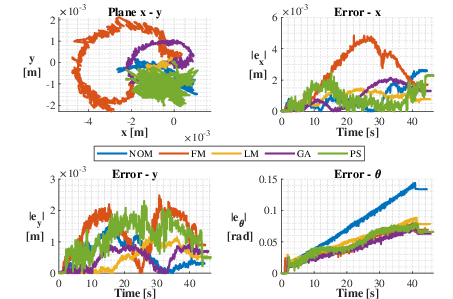

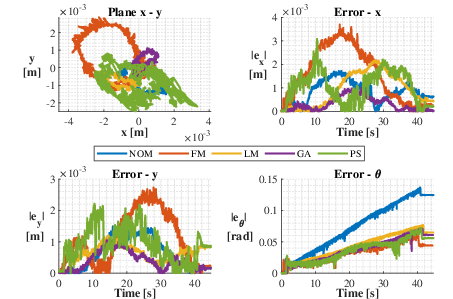

Calibration is formulated as a constrained nonlinear optimization problem that minimizes the squared error between the ground-truth pose (OptiTrack) and the estimated pose across multiple trajectories (linear, circular, and rotational). Four optimization methods are evaluated: interior-point (fmincon), Levenberg-Marquardt, Genetic Algorithm, and Particle Swarm. The parameter bounds are set to ±5% of the nominal CAD-derived values.
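
The structure of such a bounded calibration can be sketched with a small particle swarm optimizer, one of the four method families named above. Everything specific here is an assumption for illustration: the two-parameter model (wheel radius `r` and wheel-to-center distance `R`), the nominal values, and the synthetic "ground truth" that stands in for motion-capture data. The paper's actual cost function and parameter set are richer than this toy.

```python
import random

NOMINAL = (0.030, 0.192)                           # hypothetical CAD values: r, R (m)
BOUNDS = [(0.95 * p, 1.05 * p) for p in NOMINAL]   # +-5 % box around nominal

# Synthetic ground truth generated from slightly different "true" parameters.
TRUE = (0.0306, 0.1895)
STRAIGHT = [(ang, TRUE[0] * ang) for ang in (20.0, 40.0, -30.0)]   # (wheel angle rad, distance m)
SPIN = [(ang, TRUE[0] * ang / TRUE[1]) for ang in (15.0, -25.0)]   # (wheel angle rad, yaw rad)

def cost(params):
    """Sum of squared endpoint errors over straight and in-place-rotation runs."""
    r, R = params
    err = sum((r * ang - dist) ** 2 for ang, dist in STRAIGHT)
    err += sum((r * ang / R - yaw) ** 2 for ang, yaw in SPIN)
    return err

def pso(cost, bounds, n_particles=30, iters=200, w=0.7, c1=1.5, c2=1.5, seed=0):
    """Basic global-best particle swarm with positions clamped to the bounds."""
    rng = random.Random(seed)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * len(bounds) for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]
    best_val = [cost(p) for p in pos]
    g = min(range(n_particles), key=best_val.__getitem__)
    g_pos, g_val = best_pos[g][:], best_val[g]
    for _ in range(iters):
        for i in range(n_particles):
            for k, (lo, hi) in enumerate(bounds):
                vel[i][k] = (w * vel[i][k]
                             + c1 * rng.random() * (best_pos[i][k] - pos[i][k])
                             + c2 * rng.random() * (g_pos[k] - pos[i][k]))
                pos[i][k] = min(hi, max(lo, pos[i][k] + vel[i][k]))
            val = cost(pos[i])
            if val < best_val[i]:
                best_pos[i], best_val[i] = pos[i][:], val
                if val < g_val:
                    g_pos, g_val = pos[i][:], val
    return g_pos, g_val

calibrated, residual = pso(cost, BOUNDS)
```

Straight runs constrain `r` alone, whereas rotations constrain the ratio `r / R`, so the toy needs both trajectory types to identify both parameters. This mirrors the reason for exciting the robot with several motion patterns.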

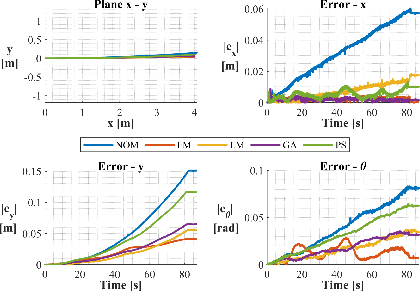

Figure 4: Straight-line motion along the x and y axes used for odometry calibration.

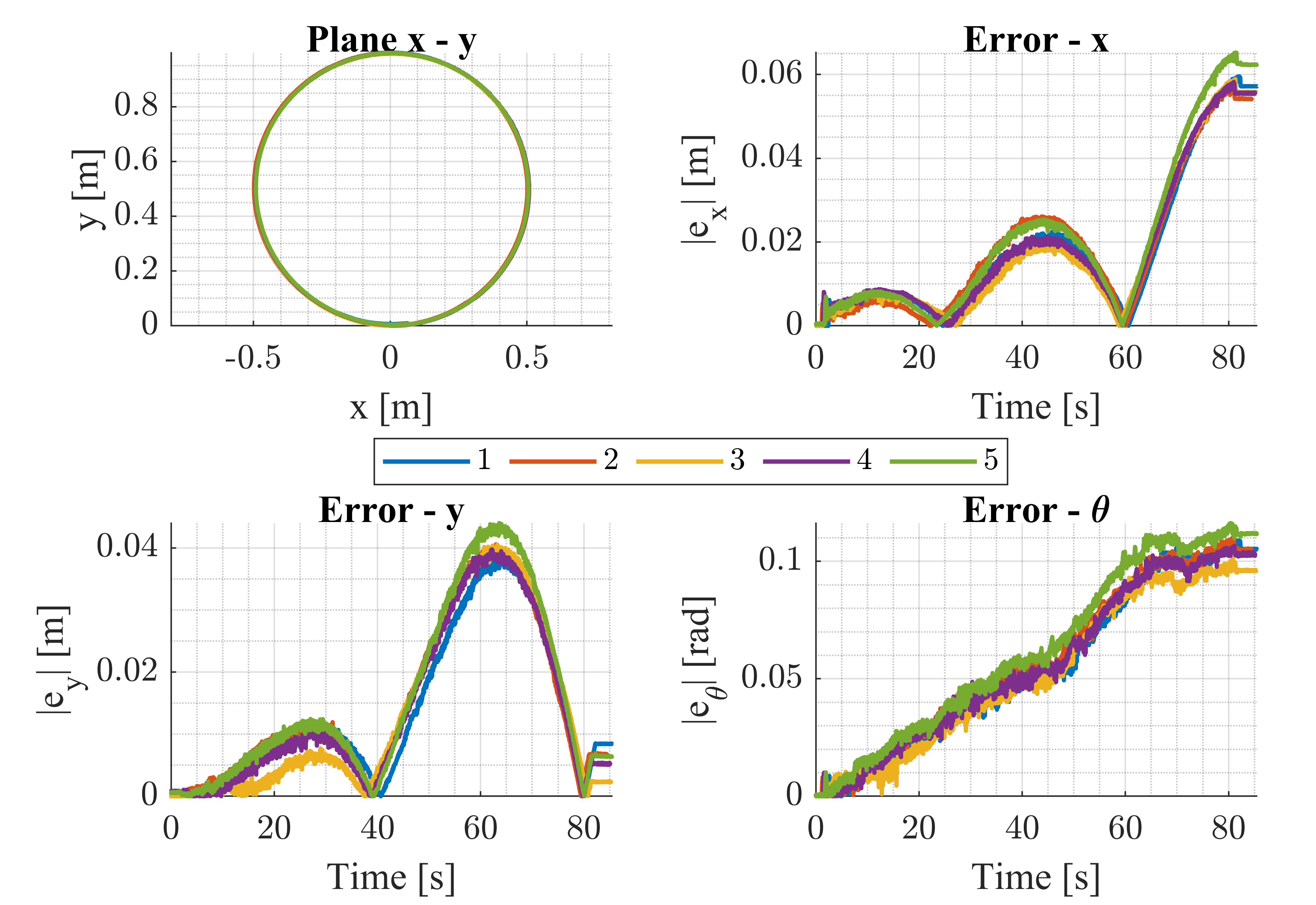

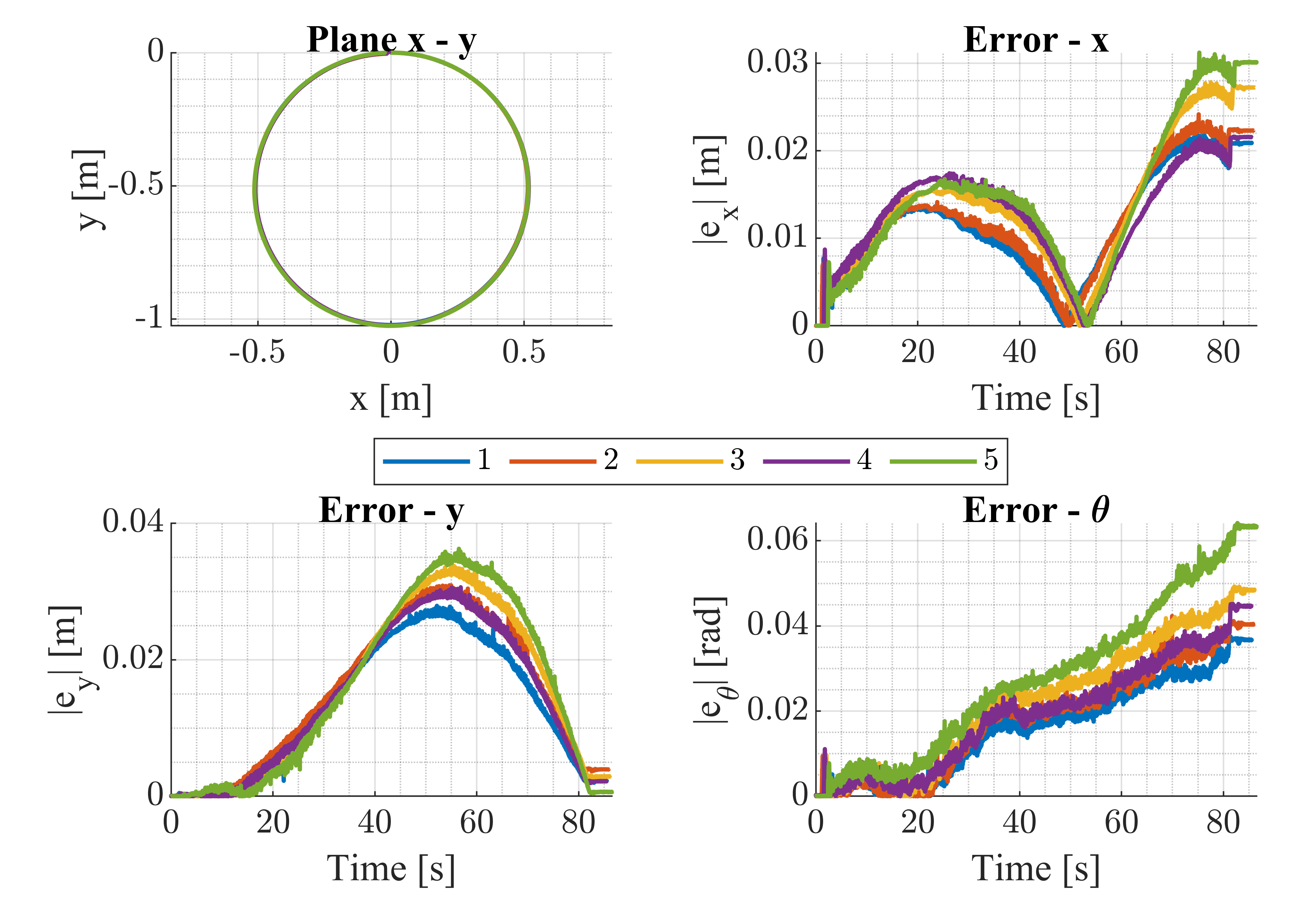

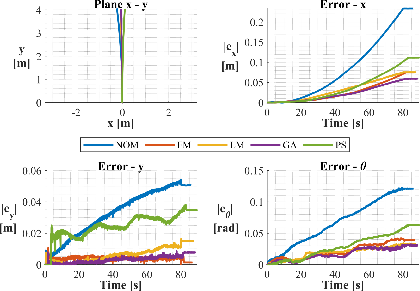

Figure 5: Circular motion in counterclockwise (top) and clockwise (bottom) directions for excitation of kinematic parameters.

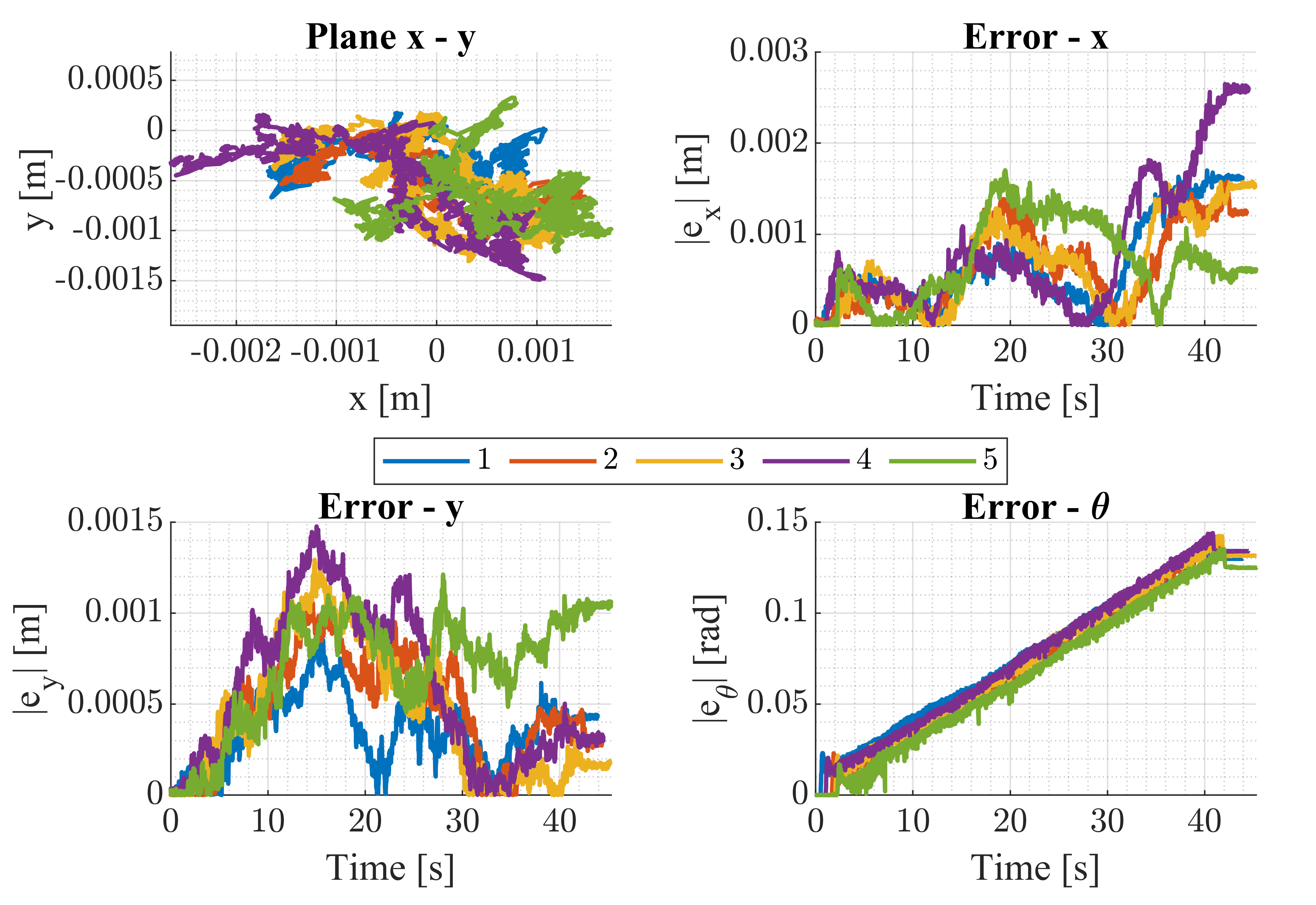

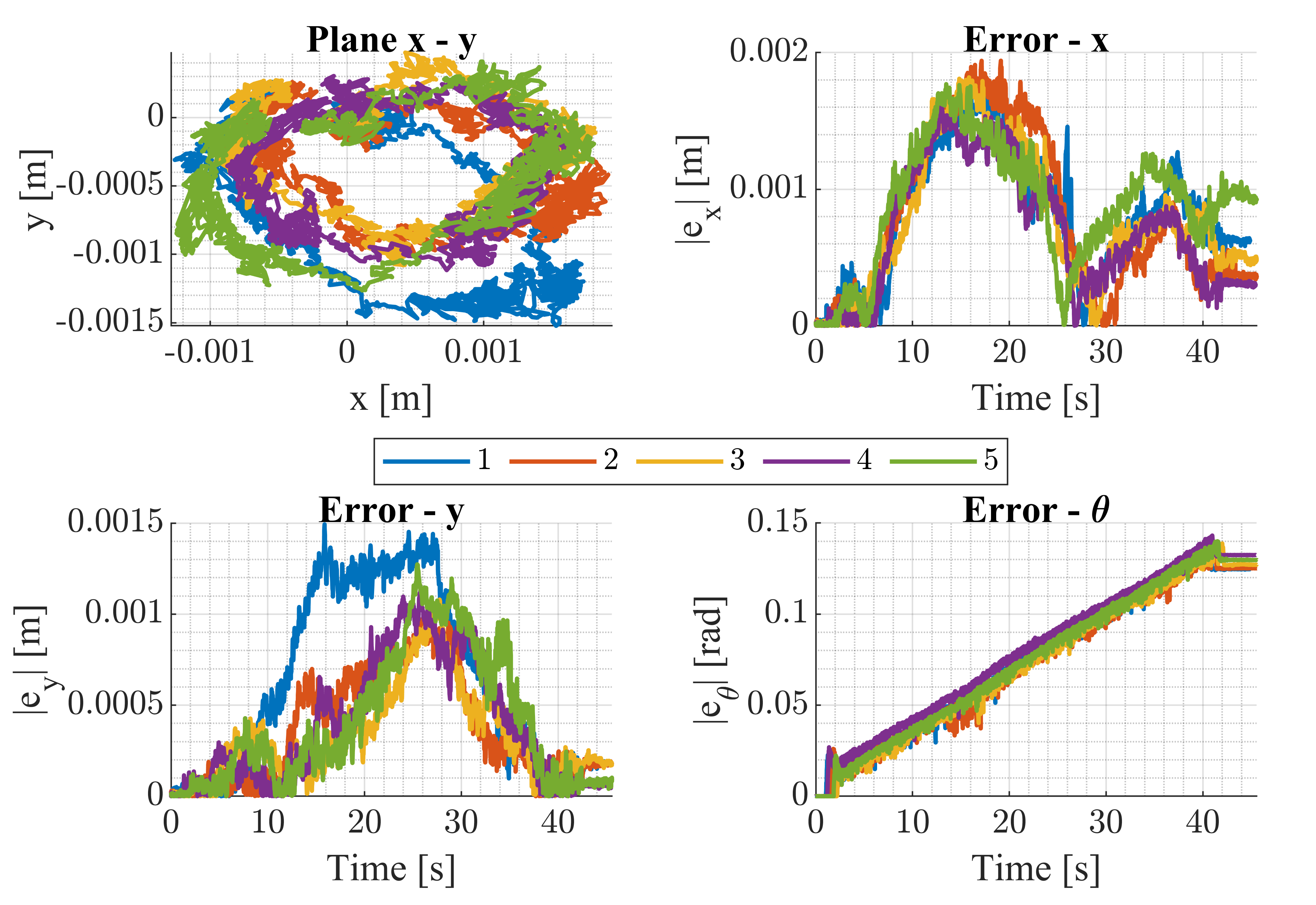

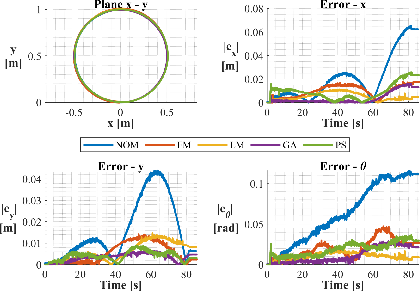

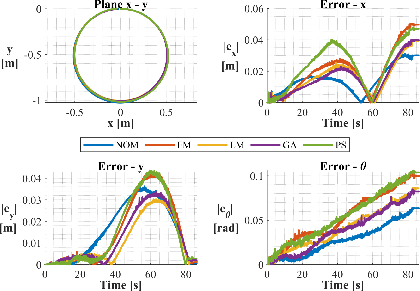

Figure 6: Rotational movement around the robot's z axis in both CCW and CW directions for calibration.

Calibration results indicate significant reduction in pose estimation errors post-optimization, with the exception of certain circular trajectories where overfitting to the aggregate dataset led to suboptimal performance. However, for grid-scan and linear motions typical of NDT tasks, the calibrated model yields robust improvements.

Figure 7: Comparison of calibration methods for straight-line trajectories, showing error reduction after optimization.

Figure 8: Calibration methods comparison for circular trajectories, highlighting method-dependent performance.

Figure 9: Calibration methods comparison for rotational movement, demonstrating improved orientation estimation.

Pose Estimator Design: EKF and UKF Sensor Fusion

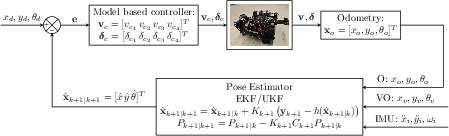

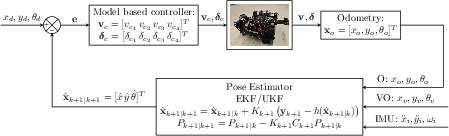

The pose estimation framework fuses odometry, IMU, and visual odometry using EKF and UKF. The nonlinear system model is discretized, with control inputs comprising wheel velocities and steering angles. EKF linearizes the process and measurement models around the current estimate, while UKF propagates sigma points through the nonlinear dynamics, achieving higher-order accuracy in mean and covariance estimation.
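
The difference between the two propagation schemes can be seen in a scalar toy problem. The sketch below pushes a Gaussian through `f(x) = x**2`, once by linearizing at the mean (EKF style) and once with the scaled unscented transform (UKF style). It is an illustration under assumed values, not the paper's estimator: the function names and the test distribution are hypothetical.

```python
import math

def linearized_mean_var(f, dfdx, mu, var):
    """EKF-style propagation: evaluate f at the mean, scale variance by slope squared."""
    return f(mu), dfdx(mu) ** 2 * var

def unscented_mean_var(f, mu, var, alpha=1e-3, beta=2.0, kappa=0.0):
    """Scaled unscented transform for a scalar state (n = 1)."""
    n = 1
    scale = alpha ** 2 * (n + kappa)      # equals n + lambda
    lam = scale - n
    spread = math.sqrt(scale * var)       # sigma-point offset from the mean
    points = [mu, mu + spread, mu - spread]
    wm = [lam / scale, 0.5 / scale, 0.5 / scale]              # mean weights
    wc = [wm[0] + (1.0 - alpha ** 2 + beta), wm[1], wm[2]]    # covariance weights
    ys = [f(p) for p in points]
    mean = sum(w * y for w, y in zip(wm, ys))
    cov = sum(w * (y - mean) ** 2 for w, y in zip(wc, ys))
    return mean, cov

def square(x):
    return x * x

mu, var = 1.0, 0.25
ekf_mean, ekf_var = linearized_mean_var(square, lambda x: 2.0 * x, mu, var)
ukf_mean, ukf_var = unscented_mean_var(square, mu, var)
true_mean = mu ** 2 + var                 # E[x^2] for x ~ N(mu, var)
```

For this quadratic, the linearized mean is `mu**2` and misses the `var` term entirely, while the sigma-point mean reproduces the closed-form value `mu**2 + var`.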

Figure 10: Schematic of the control system and state estimator, integrating sensor fusion for robust pose estimation.

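The EKF is accurate to first order in both the state mean and the covariance, whereas the UKF captures the mean to third order for Gaussian noise and the covariance to second order. The UKF scaling parameters are set to α = 0.001, β = 2.0, and κ = 0; α and κ control the spread of the sigma points around the mean, and β = 2 is the customary choice for Gaussian priors.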
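
As a worked illustration of what these values imply, the standard scaled unscented transform formulas can be evaluated directly. The state dimension n = 11 is an assumption taken from the kinematic model; the estimator's actual state vector may differ.

$$
\begin{aligned}
\lambda &= \alpha^2 (n + \kappa) - n = 10^{-6} \cdot 11 - 11 \approx -10.999989, \\
n + \lambda &= 1.1 \times 10^{-5}, \qquad \sqrt{n + \lambda} \approx 3.3 \times 10^{-3}, \\
W_0^{(m)} &= \frac{\lambda}{n + \lambda} = -999999, \\
W_0^{(c)} &= W_0^{(m)} + (1 - \alpha^2 + \beta) \approx -999996, \\
W_i^{(m)} &= W_i^{(c)} = \frac{1}{2 (n + \lambda)} \approx 45454.5, \quad i = 1, \dots, 2n.
\end{aligned}
$$

With such a small α, the 2n = 22 outer sigma points lie within roughly 0.3% of one standard deviation of the mean along each principal direction, and the large weights of opposite sign sum to one: $W_0^{(m)} + 2n\,W_i^{(m)} = 1$.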

Experimental Validation

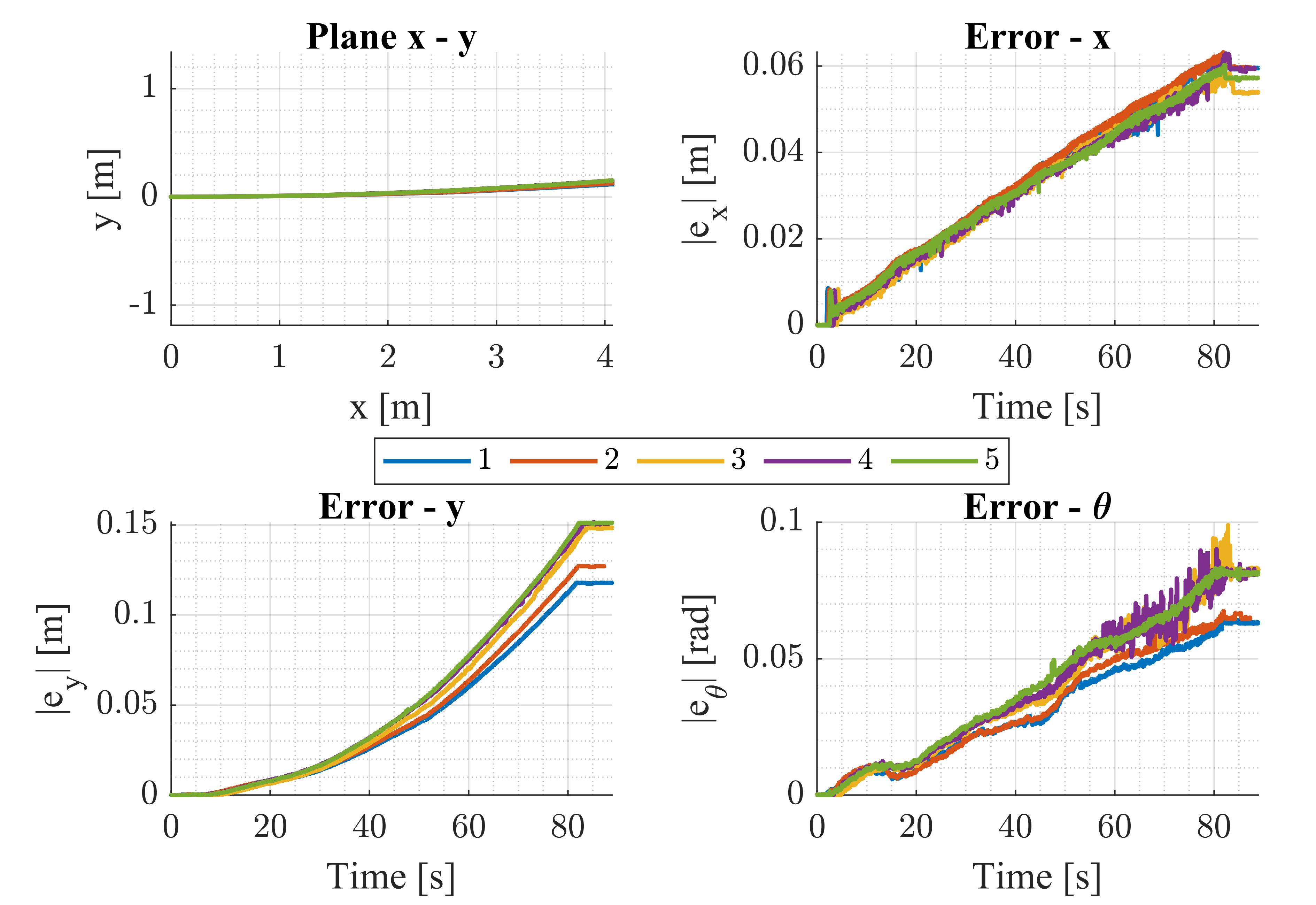

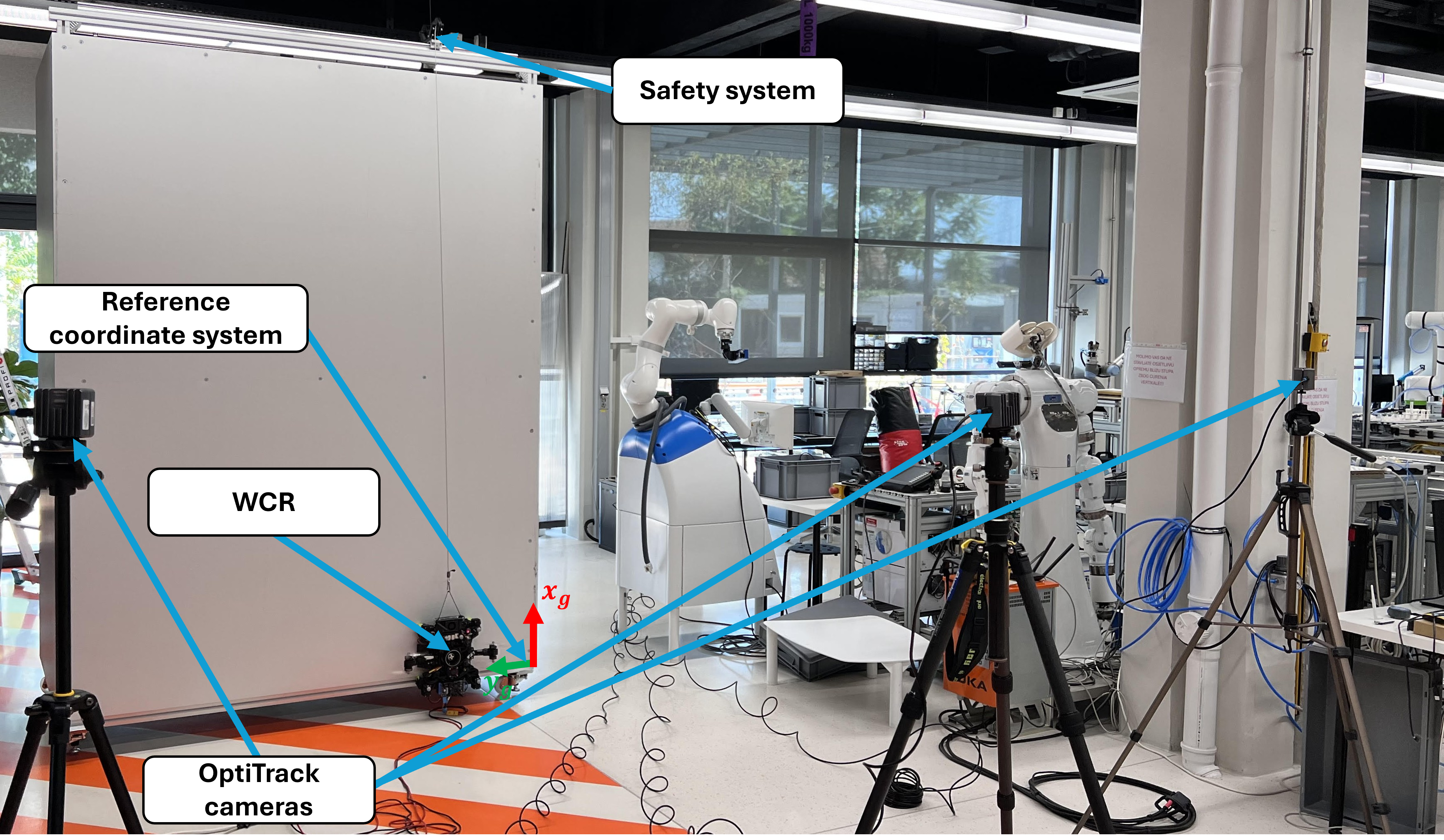

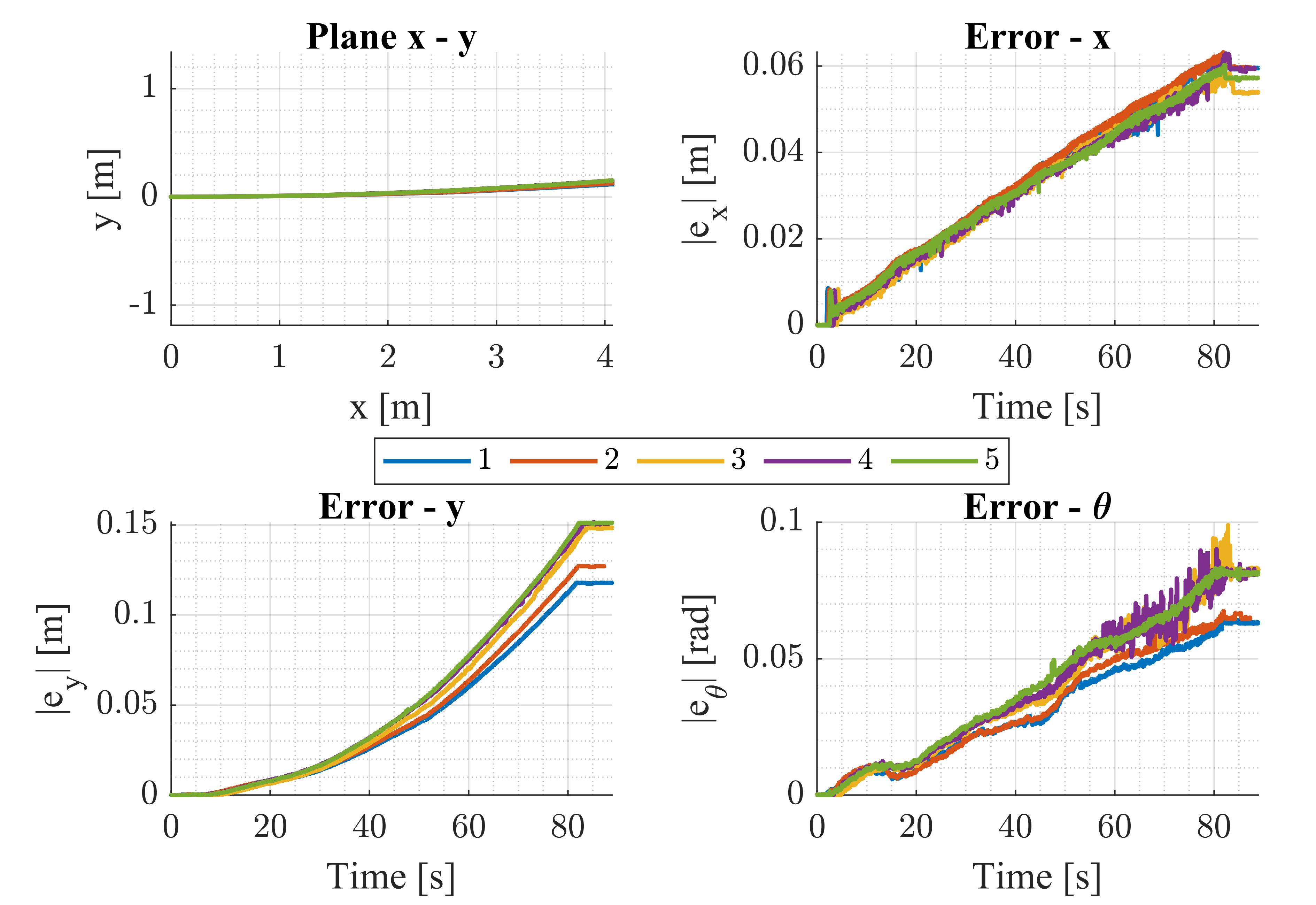

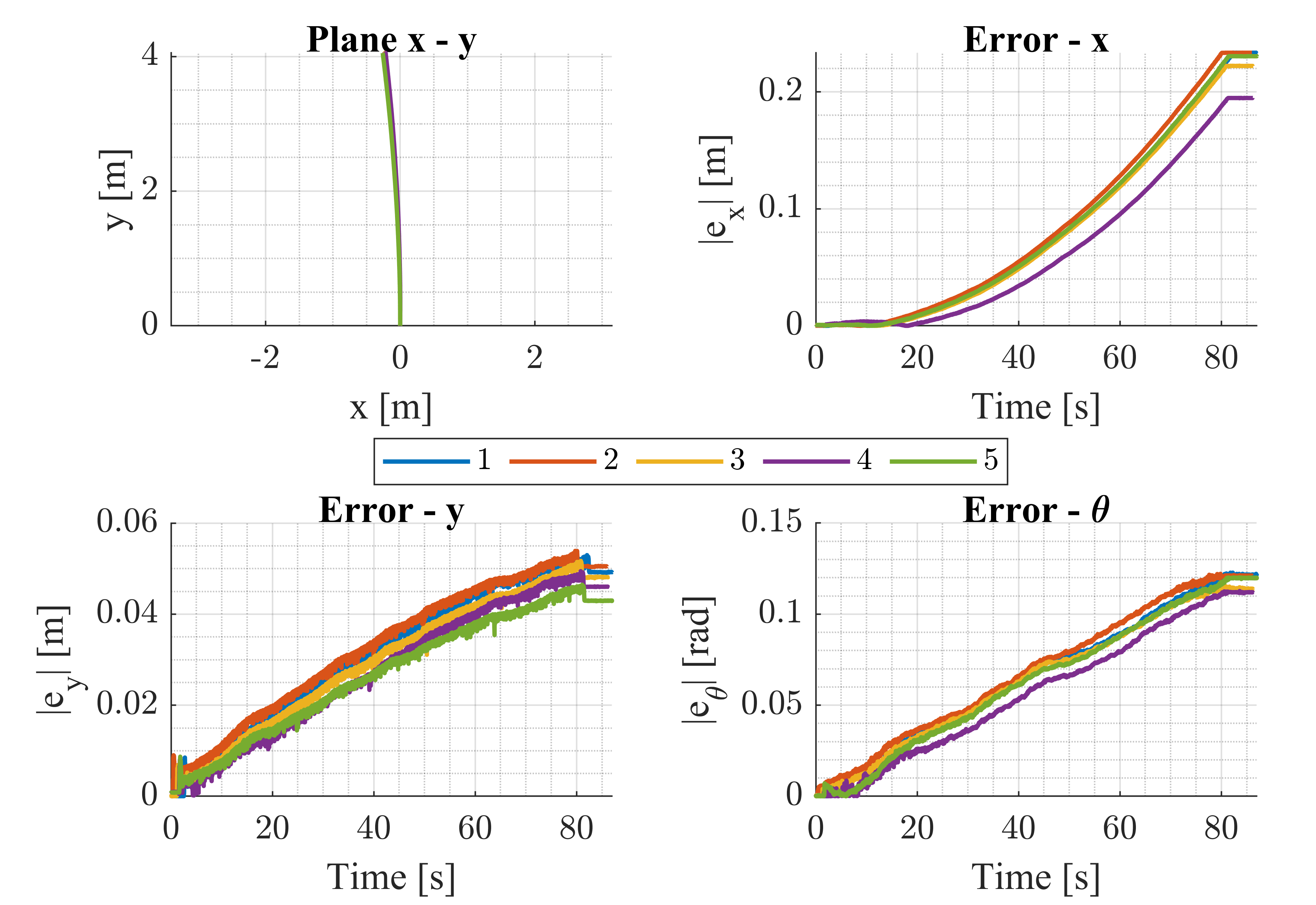

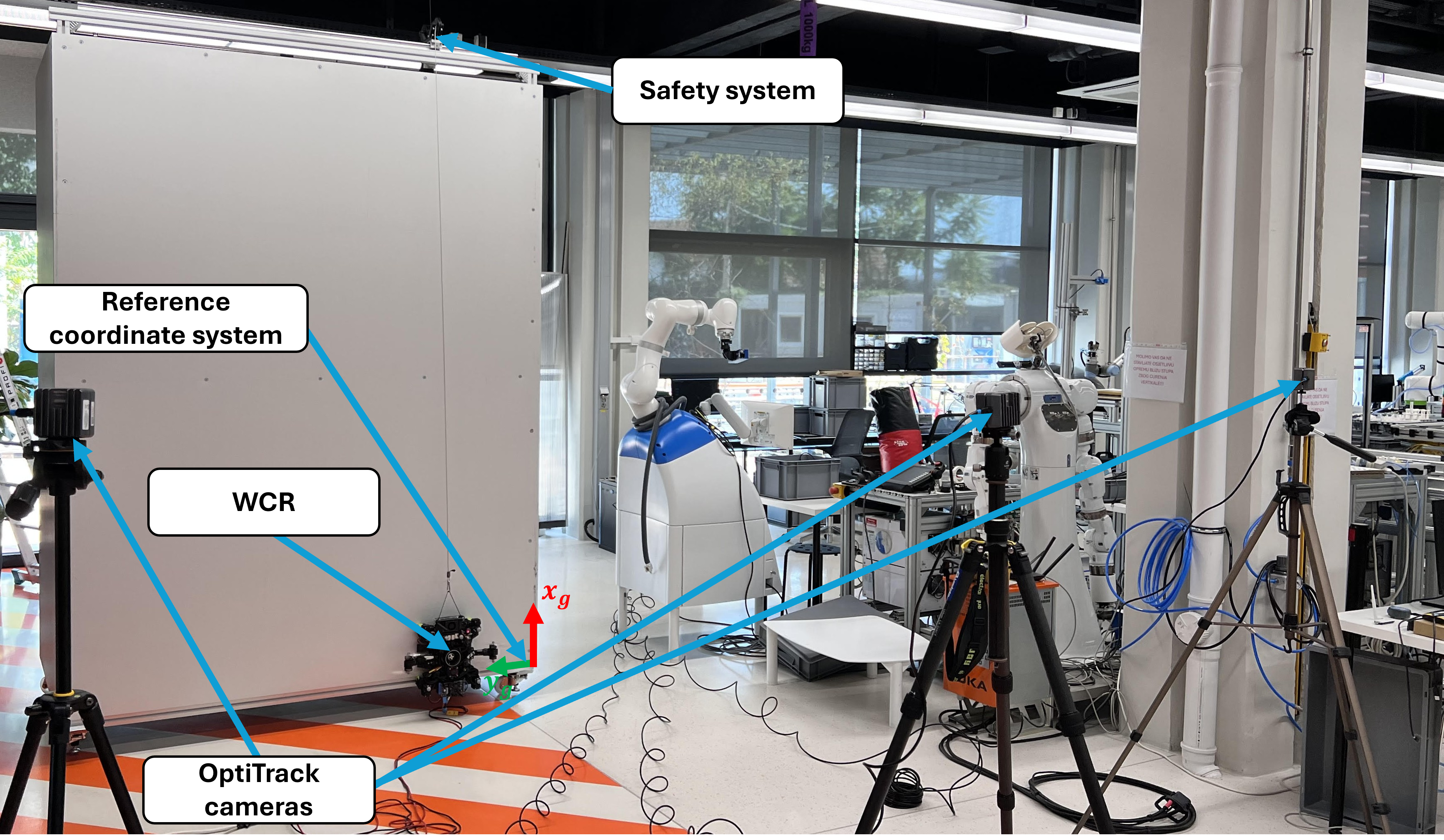

Experiments are conducted on a vertical melamine-faced chipboard wall, with safety systems in place. The robot tracks linear trajectories along the X and Y axes, and pose estimation is evaluated using odometry alone, the EKF, and the UKF. The global coordinate system is defined with the X axis vertical and the Y axis horizontal.

Figure 11: Experimental setup for pose estimator comparison, showing the robot and OptiTrack system.

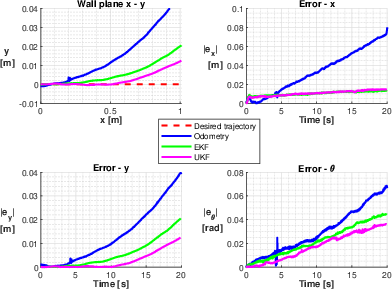

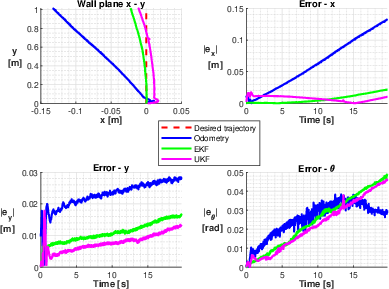

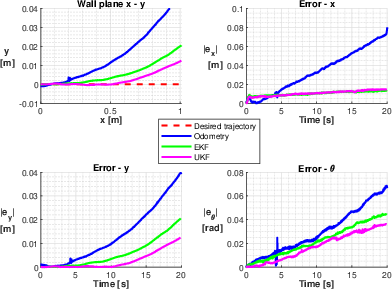

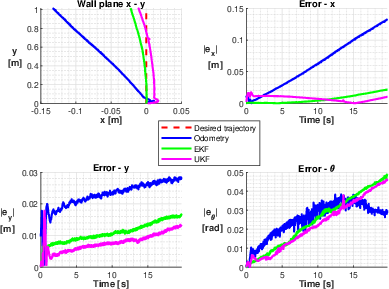

Results demonstrate that both EKF and UKF outperform odometry alone, particularly in orientation estimation and compensation for slippage and gravitational effects. Odometry-only estimation exhibits endpoint errors and unintended drift, while sensor fusion enables accurate trajectory tracking. The IMU is critical for detecting orientation deviations, and visual odometry assists in mitigating slippage.

Figure 12: Experimental results for pose estimators in X axis direction, showing improved endpoint accuracy with EKF/UKF.

Figure 13: Experimental results for pose estimators in Y axis direction, highlighting compensation for gravitational drift.

Implications and Future Directions

The research establishes a systematic approach for odometry calibration and sensor fusion-based pose estimation in 4WIS4WID wall climbing robots. The demonstrated reduction in pose estimation errors is essential for autonomous NDT and maintenance operations on vertical surfaces, where external localization is impractical. The comparative analysis of optimization methods provides guidance for parameter identification in complex kinematic systems.

Future work should address dynamic modeling, including frictional interactions between wheels and surface, and extend sensor fusion to incorporate additional modalities (e.g., force/torque sensors, event cameras). Robustness under varying surface conditions and real-time adaptation of estimator parameters are promising directions. Integration with advanced SLAM frameworks and learning-based estimators may further enhance autonomy and reliability.

Conclusion

This paper presents a comprehensive methodology for odometry calibration and pose estimation in a 4WIS4WID mobile wall climbing robot. The fusion of odometry, IMU, and visual odometry via EKF and UKF significantly improves pose accuracy, enabling reliable operation in challenging vertical environments. The systematic calibration and estimator design are generalizable to other wall climbing platforms, providing a foundation for future research in autonomous inspection and maintenance robotics.