- The paper introduces a continuous-time calibration approach using cubic uniform B-splines to precisely align asynchronous event and inertial data.

- It recognizes circle grid patterns directly from raw events, tracks them with Lagrange polynomial prediction, and uses the tracked patterns to robustly estimate both extrinsic and temporal parameters.

- Experimental results demonstrate high accuracy, repeatability, and robustness, achieving performance comparable to state-of-the-art frame-based methods.

Continuous-Time Spatiotemporal Calibration for Event-Based Visual-Inertial Systems: An Analysis of eKalibr-Inertial

Introduction

The paper introduces eKalibr-Inertial, a continuous-time, event-only spatiotemporal calibration framework for visual-inertial systems equipped with event cameras and IMUs. The method addresses the challenges posed by the asynchronous, high-temporal-resolution data streams of event cameras, which are increasingly used in robotics and perception tasks requiring low latency and high dynamic range. Accurate spatiotemporal calibration, that is, the estimation of the extrinsic (rigid-body) and temporal (clock offset) parameters between sensors, is critical for effective sensor fusion in such systems. eKalibr-Inertial extends prior work on event-based calibration by providing a fully open-source, continuous-time approach that combines direct event processing, robust pattern recognition, and batch optimization.

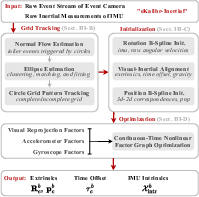

Figure 1: The eKalibr-Inertial pipeline, from event stream input through pattern recognition, initialization, and continuous-time batch optimization.

Methodological Framework

Event-Based Pattern Recognition and Tracking

The front-end of eKalibr-Inertial employs a robust event-based circle grid pattern recognition and tracking pipeline. Unlike methods that first reconstruct intensity images from events, a step that often introduces noise and artifacts, this approach processes the raw event stream directly. It applies normal flow estimation and ellipse fitting on the surface of active events (SAE) to extract both complete and incomplete circle grid patterns. The tracking module, inherited from eKalibr-Stereo, uses Lagrange polynomial prediction to maintain pattern continuity under motion, which is essential for reliable calibration in dynamic scenarios.
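To illustrate the idea behind Lagrange polynomial prediction, the following minimal sketch extrapolates the 2D position of a tracked circle center from a few past observations. The function name `lagrange_predict`, the use of three past samples, and the per-point formulation are assumptions made for illustration; the source does not specify how eKalibr-Stereo implements the tracker.

```python
import numpy as np

def lagrange_predict(times, points, t_query):
    """Predict a 2D point at t_query from past samples (hypothetical helper).

    times:  (n,) distinct timestamps of past observations
    points: (n, 2) observed 2D positions at those timestamps
    Returns the value at t_query of the degree-(n-1) Lagrange polynomial
    passing through all samples.
    """
    times = np.asarray(times, dtype=float)
    points = np.asarray(points, dtype=float)
    pred = np.zeros(points.shape[1])
    for i in range(len(times)):
        # Lagrange basis l_i(t) = prod_{j != i} (t - t_j) / (t_i - t_j)
        others = np.delete(times, i)
        weight = np.prod((t_query - others) / (times[i] - others))
        pred += weight * points[i]
    return pred

# Three past observations of one circle center, extrapolated to t = 3.0
center_next = lagrange_predict([0.0, 1.0, 2.0],
                               [[0.0, 1.0], [1.0, 3.0], [4.0, 5.0]],
                               3.0)
```

In a tracker of this kind, the predicted position would typically serve as the search location for matching circles in the next time window, so that grid points keep their identity even when part of the pattern leaves the field of view.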

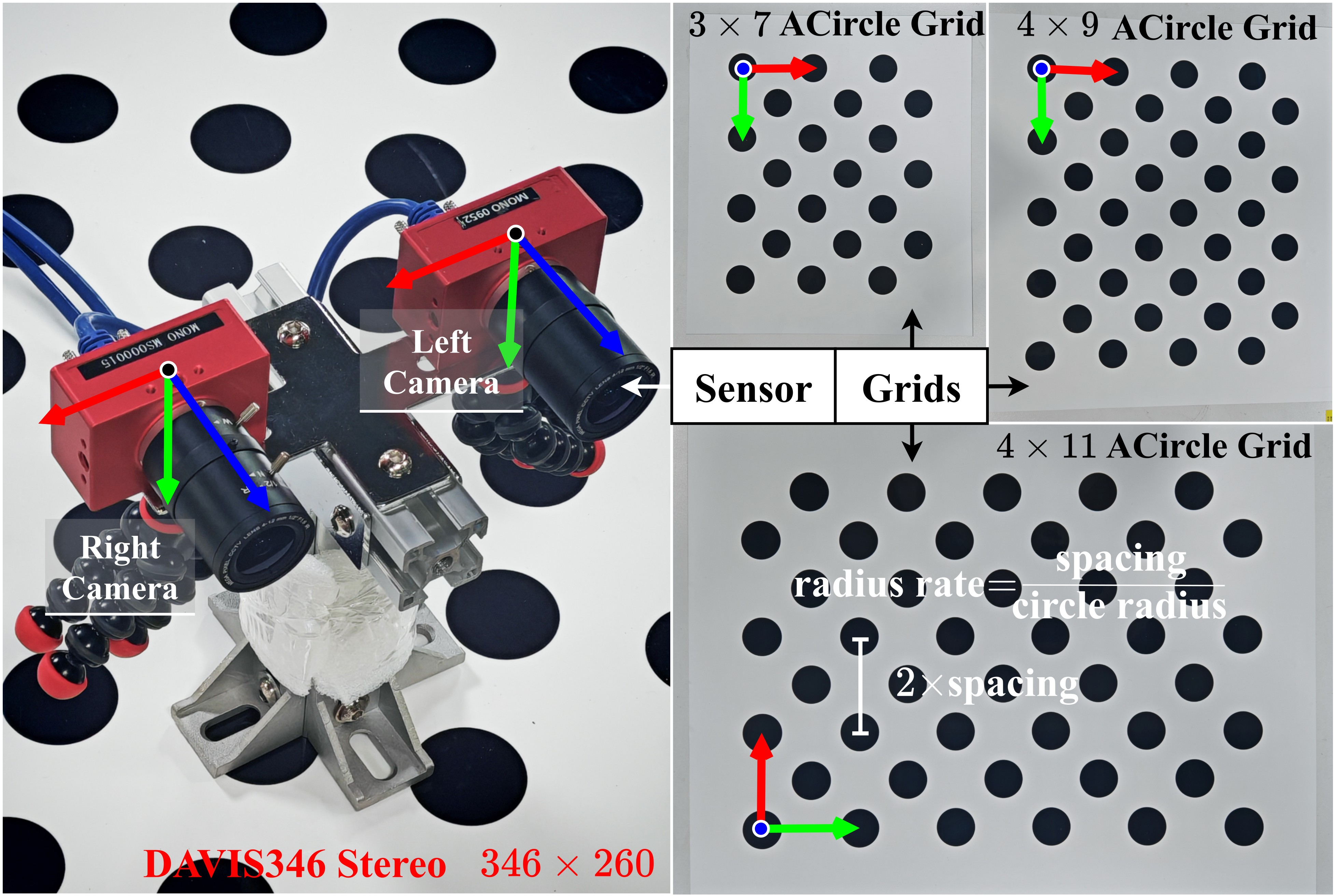

Figure 2: Experimental setup with stereo event camera rig and three asymmetric circle grid patterns used for calibration.

Continuous-Time State Representation

The core of the calibration framework is a continuous-time state representation using cubic uniform B-splines for both position and rotation. This allows for efficient, differentiable modeling of the IMU trajectory and supports state interpolation at arbitrary timestamps, which is crucial for handling the asynchronous nature of event and inertial data. Because each cubic B-spline segment depends on only four neighboring control points, every measurement touches a small part of the state, which keeps the optimization problem sparse and computationally efficient.
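As a minimal sketch of how such a spline can be queried at an arbitrary timestamp, the code below evaluates a uniform cubic B-spline for position using the standard matrix form. The function name `eval_position`, the knot convention (segment `i` starts at `t0 + i * dt` and uses control points `i` to `i + 3`), and the variable names are assumptions for illustration. The rotation spline is not covered here, since it is usually built with a cumulative formulation on SO(3).

```python
import numpy as np

# Standard basis matrix of the uniform cubic B-spline (matrix form).
M = np.array([[ 1.0,  4.0,  1.0, 0.0],
              [-3.0,  0.0,  3.0, 0.0],
              [ 3.0, -6.0,  3.0, 0.0],
              [-1.0,  3.0, -3.0, 1.0]]) / 6.0

def eval_position(ctrl_pts, t0, dt, t):
    """Evaluate a uniform cubic B-spline position at time t (hypothetical helper).

    ctrl_pts: (N, 3) control points, N >= 4
    t0, dt:   start time and knot spacing (assumed convention, see lead-in)
    """
    ctrl_pts = np.asarray(ctrl_pts, dtype=float)
    s = (t - t0) / dt
    # Segment index, clamped so that four control points are always available.
    i = min(max(int(np.floor(s)), 0), len(ctrl_pts) - 4)
    u = s - i                                   # normalized time in the segment
    U = np.array([1.0, u, u * u, u ** 3])       # power basis
    return U @ M @ ctrl_pts[i:i + 4]            # only 4 control points are used

# Control points on a straight line; the spline reproduces linear motion.
ctrl = np.arange(6)[:, None] * np.array([1.0, 2.0, 3.0])
p = eval_position(ctrl, t0=0.0, dt=1.0, t=1.5)
```

The last line of `eval_position` makes the local support visible: a measurement at time `t` depends on four control points only, which is the source of the sparsity mentioned above.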

Three-Stage Initialization

Given the high non-linearity of the batch optimization, a three-stage initialization is performed:

- Rotation B-Spline Initialization: The rotation trajectory is initialized by fitting a B-spline to raw gyroscope measurements via nonlinear least-squares minimization.

- Visual-Inertial Alignment: Perspective-n-Point (PnP) is solved on the extracted grid patterns to estimate camera poses, followed by rotation-only hand-eye alignment to recover the extrinsic rotation and the time offset. If the initial time offset is large, a cross-correlation-based method is used first for coarse alignment.

- Position B-Spline Initialization: The position trajectory is initialized by aligning the estimated camera positions with the IMU trajectory, using the previously estimated spatiotemporal parameters.
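The coarse time alignment mentioned in the second stage can be sketched as follows. The source only states that a cross-correlation-based method is used; this example assumes that two scalar signals (for instance, angular-velocity magnitudes derived from the camera poses and from the gyroscope) have already been resampled onto the same uniform grid with spacing `dt`. The function name `coarse_time_offset` and the choice of signals are hypothetical.

```python
import numpy as np

def coarse_time_offset(sig_a, sig_b, dt):
    """Estimate the delay tau (seconds) such that sig_b(t) ~ sig_a(t - tau).

    sig_a, sig_b: 1D signals sampled on the same uniform grid (assumption)
    dt:           sample spacing in seconds
    The result is quantized to multiples of dt, so it is only a coarse guess.
    """
    a = np.asarray(sig_a, dtype=float) - np.mean(sig_a)
    b = np.asarray(sig_b, dtype=float) - np.mean(sig_b)
    # Full cross-correlation; output index 0 corresponds to lag -(len(a) - 1).
    corr = np.correlate(b, a, mode="full")
    lag = int(np.argmax(corr)) - (len(a) - 1)
    return lag * dt

# Synthetic check: the second signal is the first one delayed by 50 ms.
t = np.arange(200) * 0.01
sig_cam = np.exp(-((t - 0.80) / 0.05) ** 2)
sig_imu = np.exp(-((t - 0.85) / 0.05) ** 2)
tau = coarse_time_offset(sig_cam, sig_imu, dt=0.01)
```

A coarse estimate like this is only accurate to one sample period; in the pipeline described above, the time offset is refined afterwards by the hand-eye alignment and the batch optimization.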

Continuous-Time Batch Optimization

The final calibration is formulated as a nonlinear least-squares problem over a factor graph, incorporating:

- Visual Reprojection Factors: Enforcing consistency between observed 2D grid points and projected 3D pattern points, parameterized by the current state estimate.

- Gyroscope and Accelerometer Factors: Enforcing consistency between IMU measurements and the predicted motion from the B-spline trajectory, including IMU intrinsic parameters and gravity.

The optimization is solved using the Ceres solver, with Huber loss for robustness to outliers.
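To make the visual factor concrete, here is a minimal sketch of a reprojection residual and a Huber cost. It assumes an undistorted pinhole camera and a camera pose given directly as `R_cw`, `t_cw`; in the actual framework the pose would be obtained from the B-spline trajectory, the extrinsics, and the time offset, and the optimization is carried out in Ceres rather than NumPy. All names below are hypothetical.

```python
import numpy as np

def reprojection_residual(R_cw, t_cw, K, point_w, pixel_obs):
    """Projected 3D grid point minus observed 2D circle center, in pixels.

    R_cw, t_cw: rotation (3x3) and translation (3,) mapping world to camera
    K:          3x3 pinhole intrinsic matrix (no distortion, an assumption)
    """
    p_c = R_cw @ point_w + t_cw          # point in the camera frame
    uv = (K @ (p_c / p_c[2]))[:2]        # perspective division, then intrinsics
    return uv - pixel_obs

def huber_cost(residual, delta=1.0):
    """Textbook Huber loss: quadratic for small residuals, linear for large."""
    r = np.linalg.norm(residual)
    return 0.5 * r * r if r <= delta else delta * (r - 0.5 * delta)

K = np.array([[100.0, 0.0, 50.0],
              [0.0, 100.0, 40.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.array([0.0, 0.0, 2.0])
X = np.array([0.2, -0.1, 0.0])           # grid point on the pattern plane

res = reprojection_residual(R, t, K, X, np.array([57.0, 39.0]))
cost = huber_cost(res, delta=1.0)
```

The linear growth of the Huber cost for large residuals limits the influence of mismatched or badly fitted circle centers. Ceres applies its Huber loss to the squared residual norm, so its constants differ from the textbook form used here.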

Experimental Evaluation

The system is validated on a stereo event camera rig (DAVIS346) with three asymmetric circle grid patterns of varying sizes. Monte Carlo experiments are conducted, collecting multiple 30-second sequences per pattern. The calibration results demonstrate:

- High accuracy and repeatability in both extrinsic and temporal parameter estimation, with standard deviations on the order of $0.01^\circ$–$0.1^\circ$ for rotation, $0.02$–$0.2$ cm for translation, and $0.05$–$0.2$ ms for time offset.

- Robustness across different grid patterns and sensor configurations, indicating the method's generalizability.

- Comparable performance to state-of-the-art frame-based calibrators, despite operating solely on event data.

Implications and Future Directions

eKalibr-Inertial provides a practical, open-source solution for spatiotemporal calibration in event-based visual-inertial systems, addressing a critical bottleneck for high-precision sensor fusion in robotics and perception. The continuous-time formulation is particularly well-suited for asynchronous, high-rate data and can be extended to multi-camera, multi-IMU configurations. The direct event-based approach avoids the noise and artifacts of image reconstruction while reaching accuracy comparable to frame-based calibrators.

Potential future developments include:

- Online or incremental calibration to support long-term autonomy and adaptation to sensor drift.

- Integration with learning-based event processing for further robustness in challenging visual conditions.

- Extension to heterogeneous sensor suites (e.g., LiDAR, radar) using the same continuous-time optimization principles.

Conclusion

eKalibr-Inertial advances the state of the art in event-based visual-inertial calibration by combining direct event processing, continuous-time trajectory modeling, and robust batch optimization. The method achieves high-precision, repeatable calibration results in real-world experiments and is positioned as a foundational tool for the deployment of event-based sensor fusion in robotics and related fields. The open-source release further facilitates adoption and benchmarking within the research community.