- The paper introduces MCIGLE, a framework that learns from multimodal graph data incrementally and mitigates catastrophic forgetting without storing exemplars.

- It employs optimal transport for aligning textual and visual features and uses recursive least squares for non-forgetting updates.

- Experimental evaluations demonstrate that MCIGLE outperforms state-of-the-art baselines on multiple benchmarks by effectively managing evolving graph structures.

MCIGLE: Multimodal Exemplar-Free Class-Incremental Graph Learning

Introduction

The paper "MCIGLE: Multimodal Exemplar-Free Class-Incremental Graph Learning" introduces a framework for class-incremental learning (CIL) on multimodal, graph-structured data. It targets three problems: catastrophic forgetting, distribution bias, and memory constraints, all of which become harder to handle when no exemplar memory is available. The method combines multimodal graph learning with recursive update algorithms so that performance is maintained as new classes arrive, without storing historical data.

Multimodal Feature Processing Module

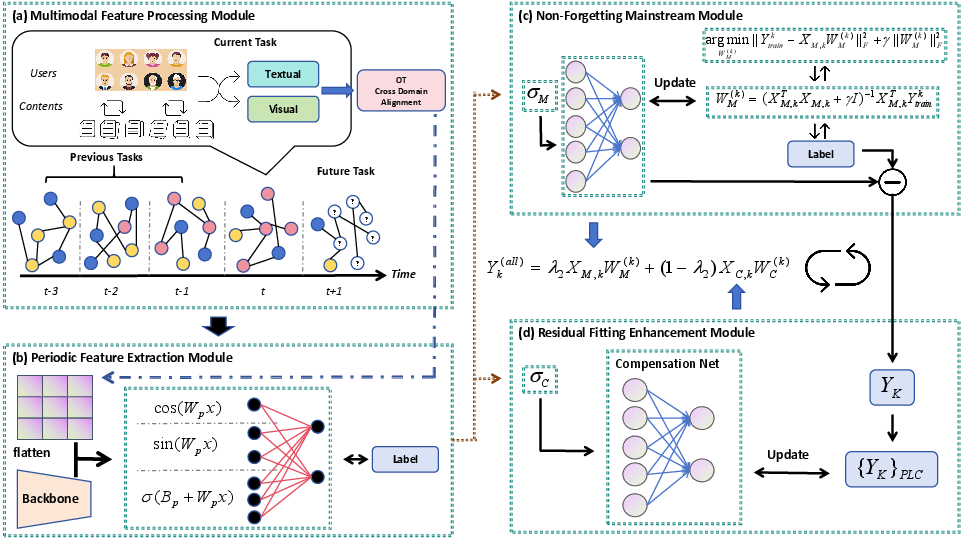

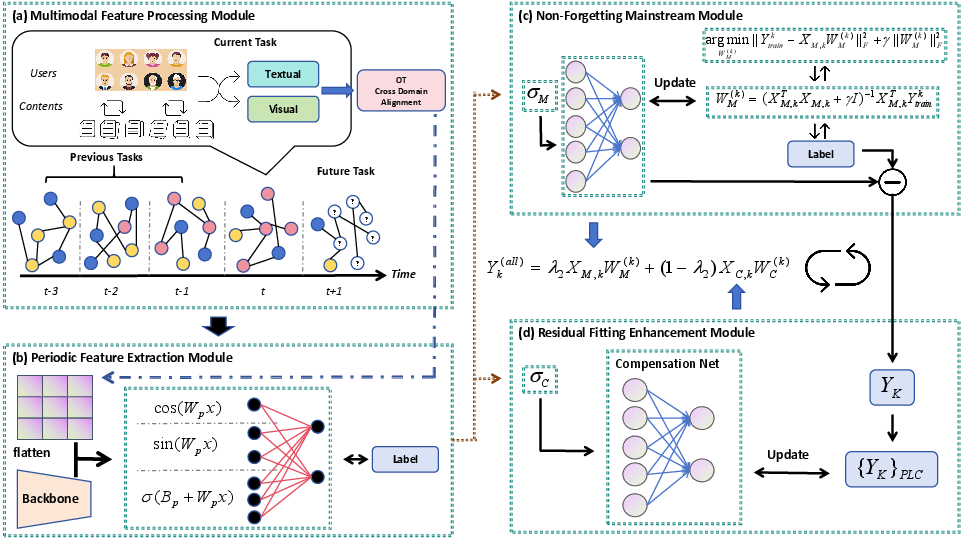

A core component of MCIGLE is the Multimodal Feature Processing Module, which processes and aligns graph-structured data from different modalities such as text and images. The module uses optimal transport to align the visual and textual feature spaces, with the aim of keeping node classification performance consistent as the graph structure evolves. Neighbor aggregation uses a correlation-based weighting scheme, which is intended to improve generalization across tasks during continual learning.

Figure 1: Framework of MCIGLE.
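The optimal transport alignment described in this section can be illustrated with a small sketch. The summary does not give the paper's exact formulation, so everything here is an assumption chosen for illustration: entropy-regularized transport solved with Sinkhorn iterations, a cosine cost, uniform marginals, and the hypothetical helper names `sinkhorn_plan` and `align_text_to_visual`.

```python
import numpy as np

def sinkhorn_plan(cost, reg=0.5, n_iters=300):
    """Entropy-regularized transport plan between two uniform distributions."""
    n, m = cost.shape
    a = np.full(n, 1.0 / n)          # uniform mass on text nodes (assumption)
    b = np.full(m, 1.0 / m)          # uniform mass on visual nodes (assumption)
    K = np.exp(-cost / reg)          # Gibbs kernel
    u = np.ones(n)
    v = np.ones(m)
    for _ in range(n_iters):
        u = a / (K @ v)              # rescale rows toward marginal a
        v = b / (K.T @ u)            # rescale columns toward marginal b
    return u[:, None] * K * v[None, :]

def align_text_to_visual(text_feats, visual_feats, reg=0.5):
    """Map each text feature into the visual space via barycentric projection."""
    t = text_feats / np.linalg.norm(text_feats, axis=1, keepdims=True)
    v = visual_feats / np.linalg.norm(visual_feats, axis=1, keepdims=True)
    cost = 1.0 - t @ v.T             # cosine distance, values in [0, 2]
    plan = sinkhorn_plan(cost, reg)
    weights = plan / plan.sum(axis=1, keepdims=True)
    return plan, weights @ visual_feats

# Toy data: 6 text features and 8 visual features of dimension 16.
rng = np.random.default_rng(0)
text_feats = rng.normal(size=(6, 16))
visual_feats = rng.normal(size=(8, 16))
plan, aligned_text = align_text_to_visual(text_feats, visual_feats)
```

Each row of `plan` says how much of one text feature's mass is matched to each visual feature, and `aligned_text` expresses every text feature as a weighted average of visual features. A larger `reg` gives a smoother plan and faster convergence; a smaller one gives sharper matches.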

Non-Forgetting Mainstream Module

The Non-Forgetting Mainstream Module employs Concatenated Recursive Least Squares (C-RLS) to update model weights analytically without storing historical data. Each new batch of class data is folded into the existing solution recursively, so previously learned knowledge is retained while the weights are updated. According to the paper, this keeps incremental learning performance robust and comparable to joint training.
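To make the recursive idea concrete, here is a minimal sketch of standard recursive least squares for a ridge-regression classifier. It is not the paper's C-RLS, whose details the summary does not give; the class name `RecursiveRidgeClassifier`, the regularization value `gamma`, and the toy data are assumptions. The sketch shows that updating phase by phase, without keeping earlier samples, reproduces the weights obtained by training on all data jointly.

```python
import numpy as np

def one_hot(labels, num_classes):
    out = np.zeros((labels.size, num_classes))
    out[np.arange(labels.size), labels] = 1.0
    return out

class RecursiveRidgeClassifier:
    """Ridge-regression classifier updated by recursive least squares."""

    def __init__(self, feat_dim, gamma=1.0):
        self.R = np.eye(feat_dim) / gamma    # inverse of (X^T X + gamma * I) so far
        self.W = np.zeros((feat_dim, 0))     # one column per class seen so far

    def update(self, X, Y):
        # Y must have one column for every class seen up to this phase.
        new_cols = Y.shape[1] - self.W.shape[1]
        if new_cols > 0:                     # new classes start with zero weights
            pad = np.zeros((self.W.shape[0], new_cols))
            self.W = np.hstack([self.W, pad])
        # Woodbury identity: fold X into the inverse without revisiting old data.
        S = np.eye(X.shape[0]) + X @ self.R @ X.T
        self.R = self.R - self.R @ X.T @ np.linalg.solve(S, X @ self.R)
        # Correct the weights using only the current phase's prediction error.
        self.W = self.W + self.R @ X.T @ (Y - X @ self.W)

rng = np.random.default_rng(0)
gamma = 1.0
X1, y1 = rng.normal(size=(30, 10)), rng.integers(0, 2, size=30)  # classes 0-1
X2, y2 = rng.normal(size=(30, 10)), rng.integers(2, 4, size=30)  # classes 2-3

clf = RecursiveRidgeClassifier(feat_dim=10, gamma=gamma)
clf.update(X1, one_hot(y1, 2))   # phase 1
clf.update(X2, one_hot(y2, 4))   # phase 2; phase-1 data is no longer needed

# Joint ridge solution on all data, for comparison.
X_all = np.vstack([X1, X2])
Y_all = np.vstack([one_hot(y1, 4), one_hot(y2, 4)])
W_joint = np.linalg.solve(X_all.T @ X_all + gamma * np.eye(10), X_all.T @ Y_all)
```

The only state carried between phases is `R` and `W`, whose sizes depend on the feature dimension and the number of classes, not on the number of past samples.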

Periodic Feature Extraction Module

The Periodic Feature Extraction Module integrates Fourier analysis to model temporal patterns with fewer parameters than traditional neural networks. The module transforms node-level embeddings into a global feature matrix, which is then processed through Fourier-based neural layers. The resulting features are used in both the mainstream and compensation stages of MCIGLE, which improves the model's ability to handle complex, evolving datasets.
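The summary does not specify the equations of the Fourier-based layers, so the following is only an illustrative sketch of one common design: a layer that concatenates cosine and sine projections, which share a single weight matrix, with an ordinary nonlinear branch. The function name `fourier_layer`, the `tanh` activation, and all dimensions are assumptions.

```python
import numpy as np

def fourier_layer(x, W_periodic, W_linear, b_linear):
    """Concatenate periodic features (cos, sin) with a standard nonlinear branch."""
    p = x @ W_periodic                       # shared projection for cos and sin
    g = np.tanh(x @ W_linear + b_linear)     # non-periodic branch
    return np.concatenate([np.cos(p), np.sin(p), g], axis=1)

rng = np.random.default_rng(0)
node_embeddings = rng.normal(size=(5, 8))    # 5 nodes stacked into one feature matrix
W_periodic = rng.normal(size=(8, 4))
W_linear = rng.normal(size=(8, 6))
b_linear = np.zeros(6)

features = fourier_layer(node_embeddings, W_periodic, W_linear, b_linear)

# Parameter count of this layer versus a dense layer with the same output width.
shared_params = W_periodic.size + W_linear.size + b_linear.size
dense_params = node_embeddings.shape[1] * features.shape[1] + features.shape[1]
```

Because the cosine and sine outputs reuse `W_periodic`, the layer produces the same output width as a dense layer with fewer weights, which is one way a Fourier-based design can save parameters.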

Residual Fitting Enhancement Module

To complement the linear characteristics of the main module, a Residual Fitting Enhancement Module is introduced to capture nuances missed by the primary linear mapping. This component utilizes nonlinear transformations to address potential underfitting issues, thereby refining prediction accuracy by correcting residual errors.
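The idea of correcting a linear model's residual with a nonlinear component can be sketched as follows. This is an assumption-laden illustration, not the paper's module: the nonlinear part here is a fixed random `tanh` feature map followed by ridge regression, and the helper `ridge_fit`, the synthetic target, and all sizes are hypothetical.

```python
import numpy as np

def ridge_fit(X, Y, gamma):
    """Closed-form ridge regression weights."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + gamma * np.eye(d), X.T @ Y)

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
Y = np.sin(X[:, :1]) + 0.5 * X[:, 1:2] ** 2   # synthetic target with nonlinear structure

# Stage 1: main linear mapping.
W_main = ridge_fit(X, Y, gamma=1e-2)
pred_main = X @ W_main
residual = Y - pred_main                      # what the linear map failed to explain

# Stage 2: nonlinear compensation fitted to the residual only.
P = rng.normal(size=(5, 64))                  # fixed random projection (assumption)
b = rng.normal(size=64)
H = np.tanh(X @ P + b)
W_comp = ridge_fit(H, residual, gamma=1e-2)

pred_full = pred_main + H @ W_comp
mse_main = float(np.mean((Y - pred_main) ** 2))
mse_full = float(np.mean((Y - pred_full) ** 2))
```

On the training data, `mse_full` cannot exceed `mse_main`, because a zero compensation weight already reproduces the linear prediction and the ridge fit can only improve on that objective. Whether the gain carries over to held-out data depends on the regularization and on the data.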

Experimental Validation

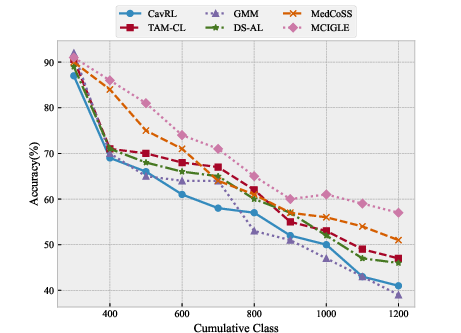

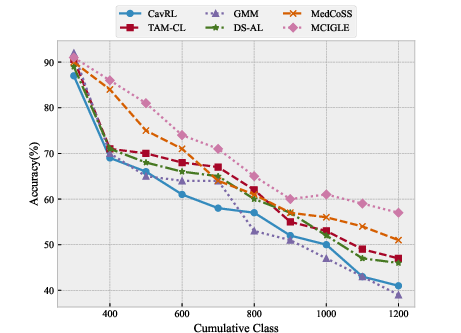

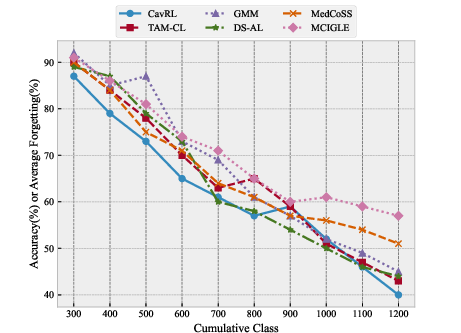

MCIGLE is evaluated against state-of-the-art baseline models on publicly available datasets, including COCO-QA, VoxCeleb, and AudioSet-MI. The framework achieves high accuracy while maintaining low forgetting rates. It outperformed the baselines in most experimental settings, with exceptions such as the Acc metric on AudioSet-MI, where domain-specific models prevailed.
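The summary does not define the accuracy and forgetting metrics, so the sketch below uses definitions that are common in class-incremental learning, as an assumption: average accuracy over all tasks after the final phase, and forgetting as the drop from each task's best earlier accuracy to its final accuracy. The matrix values are made up for illustration and are not results from the paper.

```python
import numpy as np

def average_accuracy(acc):
    """Mean accuracy over all tasks after the final training phase."""
    T = acc.shape[0]
    return float(np.mean(acc[T - 1, :T]))

def average_forgetting(acc):
    """Mean drop from each earlier task's best accuracy to its final accuracy."""
    T = acc.shape[0]
    if T < 2:
        return 0.0
    # Task j is first evaluated after phase j, so only rows j..T-2 count as "earlier".
    drops = [np.max(acc[j:T - 1, j]) - acc[T - 1, j] for j in range(T - 1)]
    return float(np.mean(drops))

# acc[t, j]: accuracy on task j after training phase t (hypothetical values).
acc = np.array([
    [0.90, 0.00, 0.00],
    [0.85, 0.80, 0.00],
    [0.80, 0.75, 0.70],
])

final_acc = average_accuracy(acc)
forgetting = average_forgetting(acc)
```

For this toy matrix the final average accuracy is 0.75 and the average forgetting is 0.075. A method with low forgetting keeps the entries in each column close to their earlier peak as more phases are added.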

Figure 2: AudioSet-MI.

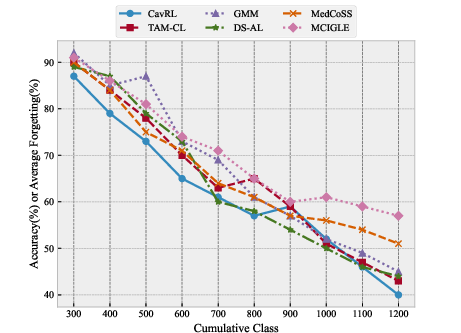

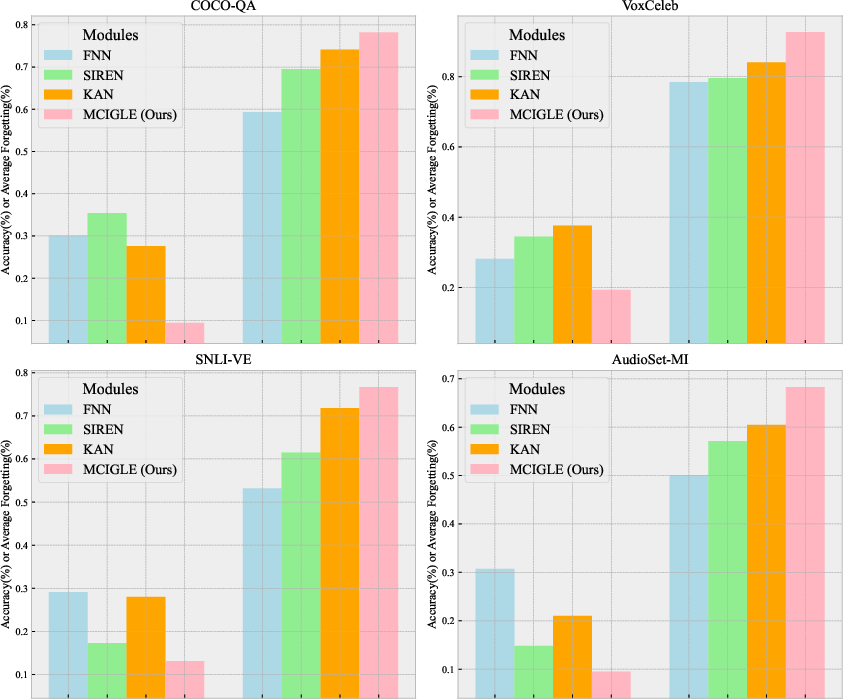

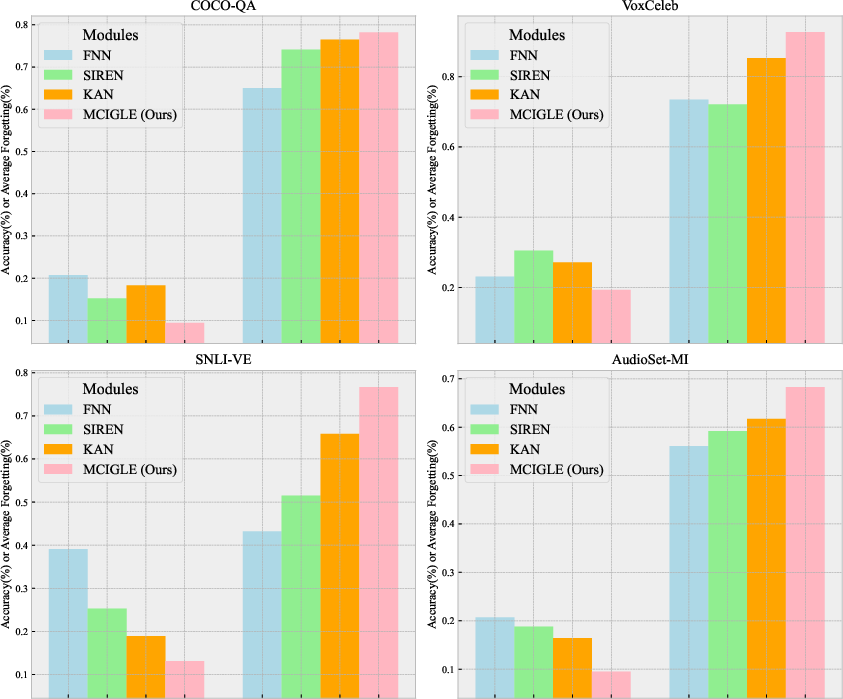

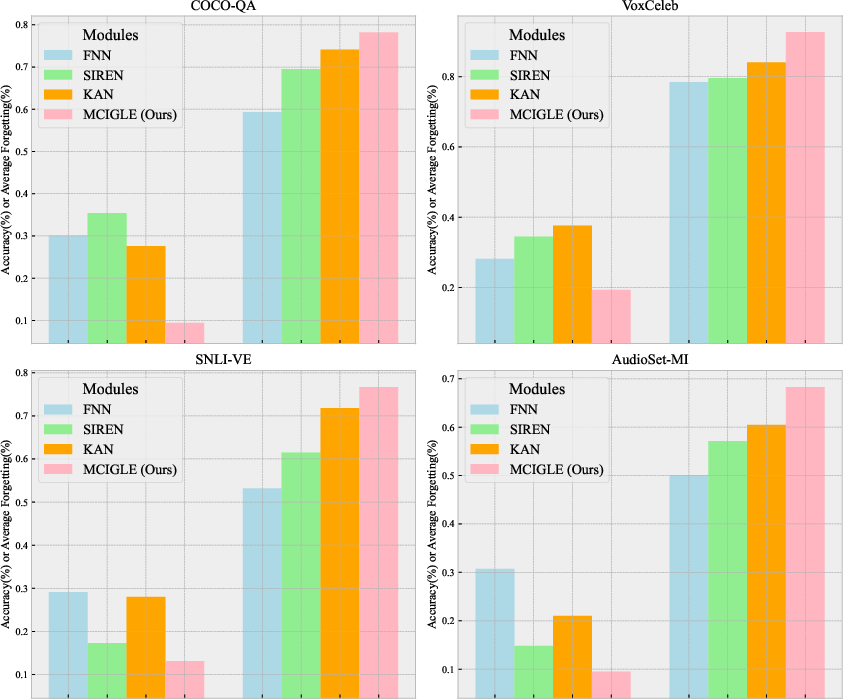

Ablation studies support the contribution of each component. Replacing the Fourier-based network with alternatives such as KAN, FNN, and SIREN, or substituting C-RLS with conventional methods such as knowledge distillation, yielded inferior performance in each case.

Figure 3: Ablation study replacing Fourier network with FNN, SIREN, and KAN.

Conclusion

MCIGLE advances exemplar-free class-incremental learning by providing a framework that learns from multimodal, graph-structured data without storing historical exemplars. It counters catastrophic forgetting through recursive weight updates and improves scalability and adaptability through its multimodal processing. The framework offers a basis for further work on continual learning under exemplar-free conditions, and future developments could extend it to more complex data types and additional refinement techniques.