- The paper introduces a hybrid framework that periodically realigns SLAM-based HMD tracking with MoCap data to ensure consistent spatial co-location in VR.

- It leverages trajectory alignment and SE(3) optimization for extrinsics calibration, achieving ATE RMSE as low as 3.1 cm in single-user evaluations.

- The system supports robust multi-user interaction with ~5.2 cm ATE RMSE in dynamic two-user tests, and it relies on onboard SLAM for real-time tracking, avoiding the ~70 ms latency measured between MoCap and HMD tracking.

Hybrid SLAM-Based HMD Tracking and Motion Capture Synchronization for Co-Located VR

Introduction and Motivation

The paper addresses the persistent challenge of achieving robust, low-latency, and spatially accurate co-location in multi-user VR environments. Commercial HMDs predominantly rely on inside-out visual-inertial SLAM (VI-SLAM) tracking, which offers high frame rates and low latency but tracks each device in its own coordinate frame, with no shared spatial reference across devices. As a result, users can become misaligned relative to one another in both physical and virtual space, undermining collaborative VR experiences. Previous solutions that rely on continuous external tracking (e.g., MoCap) introduce latency and jitter, while one-time calibration approaches cannot correct for drift or tracking breakdowns at runtime. The proposed framework combines the strengths of both: MoCap provides periodic alignment to a shared reference, and SLAM-based HMD tracking provides real-time pose updates, targeting both accuracy and responsiveness.

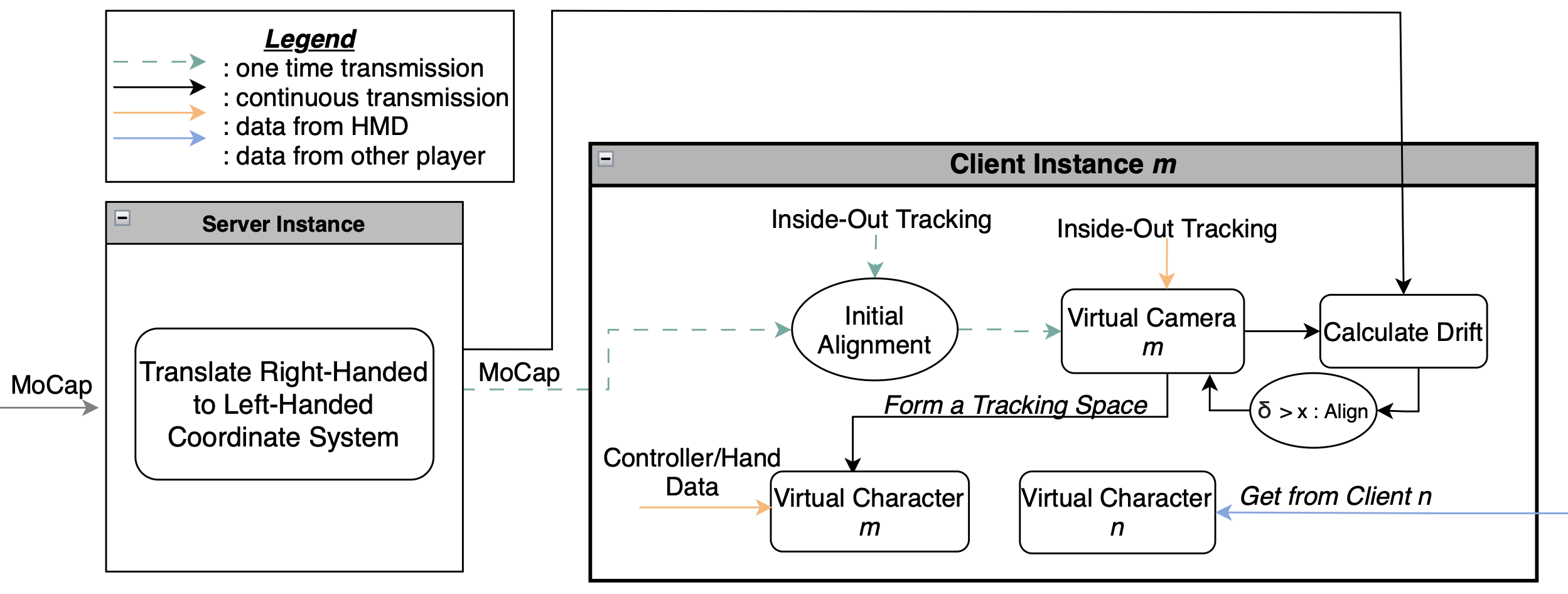

System Architecture and Methodology

The framework is modular and is designed to support arbitrary MoCap systems and HMDs; the prototype is implemented with a Qualisys MoCap system and Meta Quest 2 HMDs. The architecture consists of several key processes:

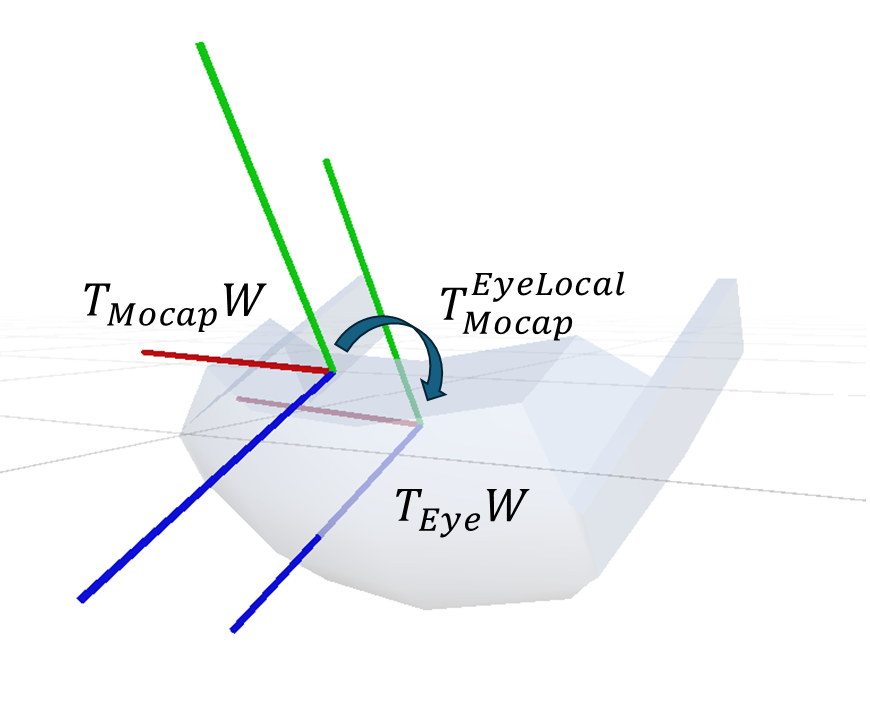

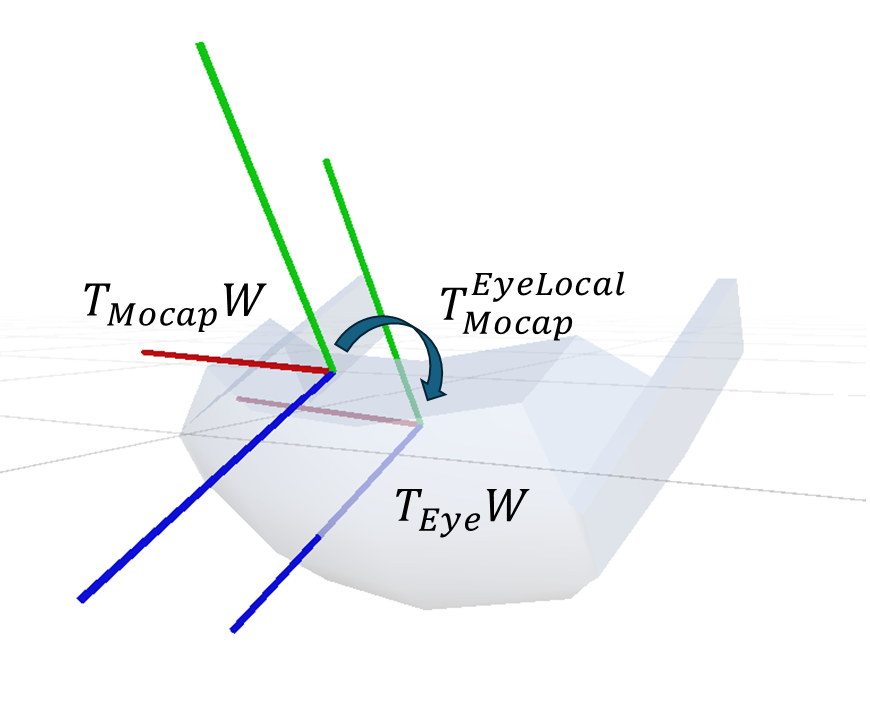

- Extrinsics Calibration: Estimation of the rigid transformation between the MoCap marker frame and the HMD's eye center (virtual camera pivot), crucial for accurate spatial alignment. This is achieved via trajectory alignment and optimization over SE(3), minimizing the discrepancy between MoCap and HMD pose streams.

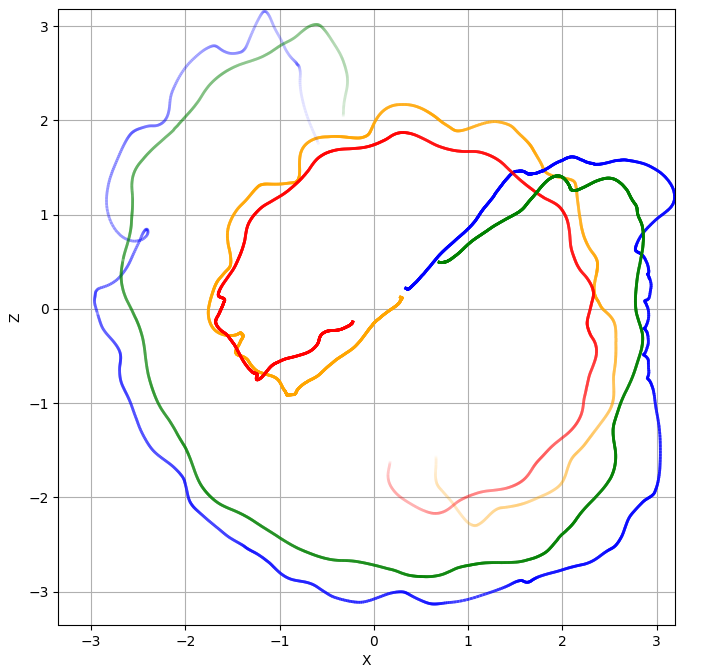

Figure 1: Rigid pose offset T_EyeLocal between the MoCap frame and the HMD eye center.
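The two building blocks of the calibration can be sketched with NumPy. This is not the authors' implementation: it assumes time-synchronized, paired samples and replaces the paper's SE(3) optimization with two closed-form least-squares steps. `kabsch` rigidly aligns two position trajectories, and `mean_offset` averages per-sample offsets X_i = T_marker_i⁻¹ · T_eye_i, projecting the mean rotation back onto SO(3). Both function names are hypothetical. Note that the steps interact in practice: the lever arm between the markers and the eye center biases a pure position alignment, which is one reason a joint refinement over SE(3), as described in the paper, is needed.

```python
import numpy as np


def kabsch(src: np.ndarray, dst: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Least-squares rigid transform (R, t) such that dst[i] ≈ R @ src[i] + t.

    src, dst: (N, 3) arrays of time-synchronized positions (assumed paired).
    """
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    # Cross-covariance of the centred point sets.
    H = (src - mu_s).T @ (dst - mu_d)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = mu_d - R @ mu_s
    return R, t


def mean_offset(T_marker: np.ndarray, T_eye: np.ndarray) -> np.ndarray:
    """Average rigid offset X with T_eye[i] ≈ T_marker[i] @ X.

    T_marker, T_eye: (N, 4, 4) homogeneous poses expressed in the SAME world
    frame (an assumption; in practice this requires a prior alignment).
    """
    X_i = np.linalg.inv(T_marker) @ T_eye  # per-sample offsets, shape (N, 4, 4)
    # Chordal mean of the rotations: project the element-wise mean onto SO(3).
    U, _, Vt = np.linalg.svd(X_i[:, :3, :3].mean(axis=0))
    R = U @ np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))]) @ Vt
    X = np.eye(4)
    X[:3, :3] = R
    X[:3, 3] = X_i[:, :3, 3].mean(axis=0)  # least-squares translation: plain mean
    return X
```

With noise-free inputs both functions recover the true transform exactly; with real data, the averaging in `mean_offset` suppresses per-sample jitter from either tracking stream.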

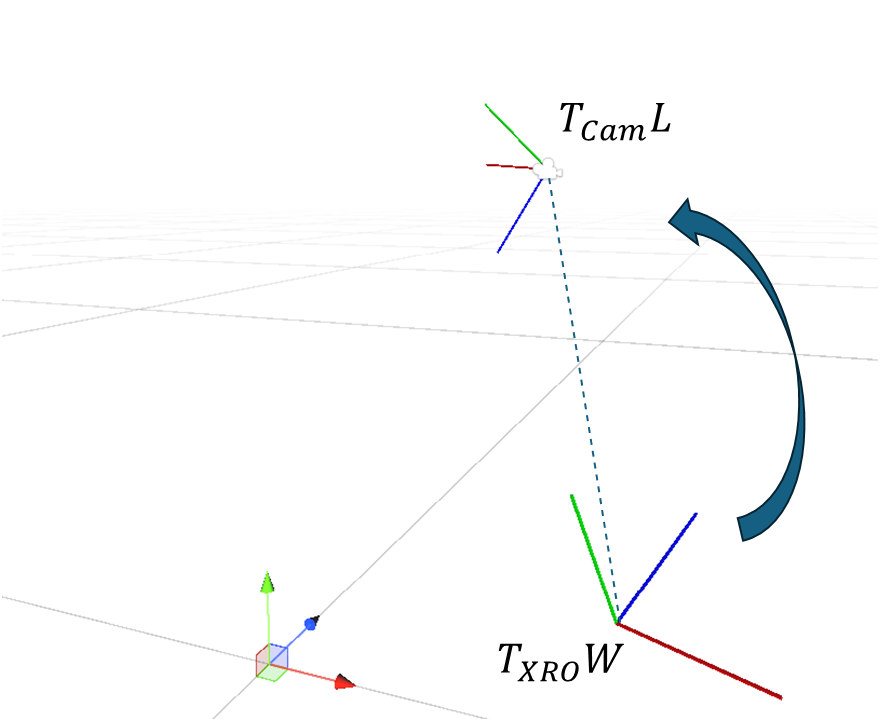

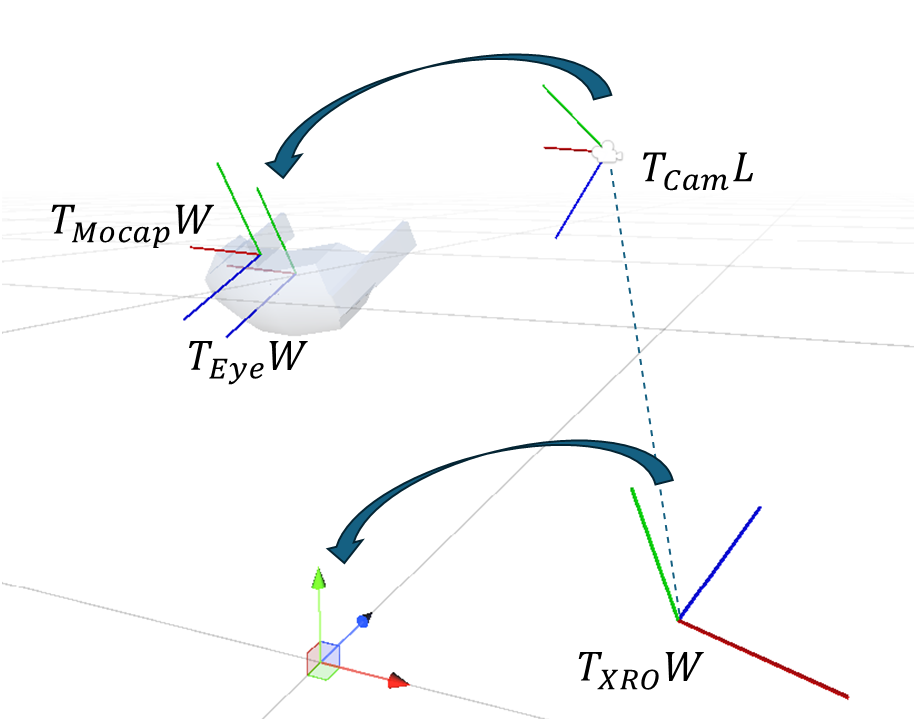

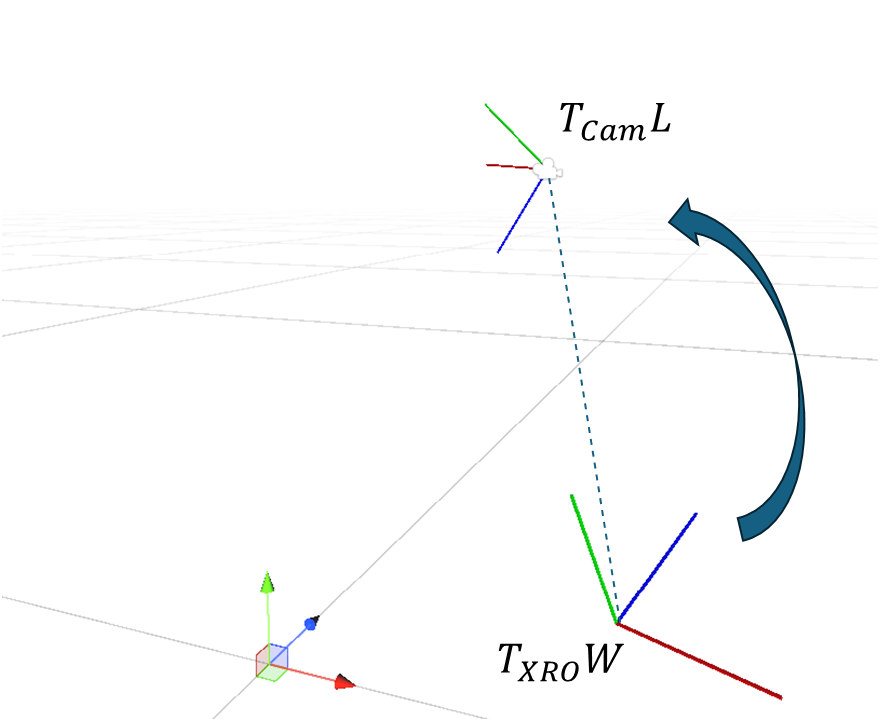

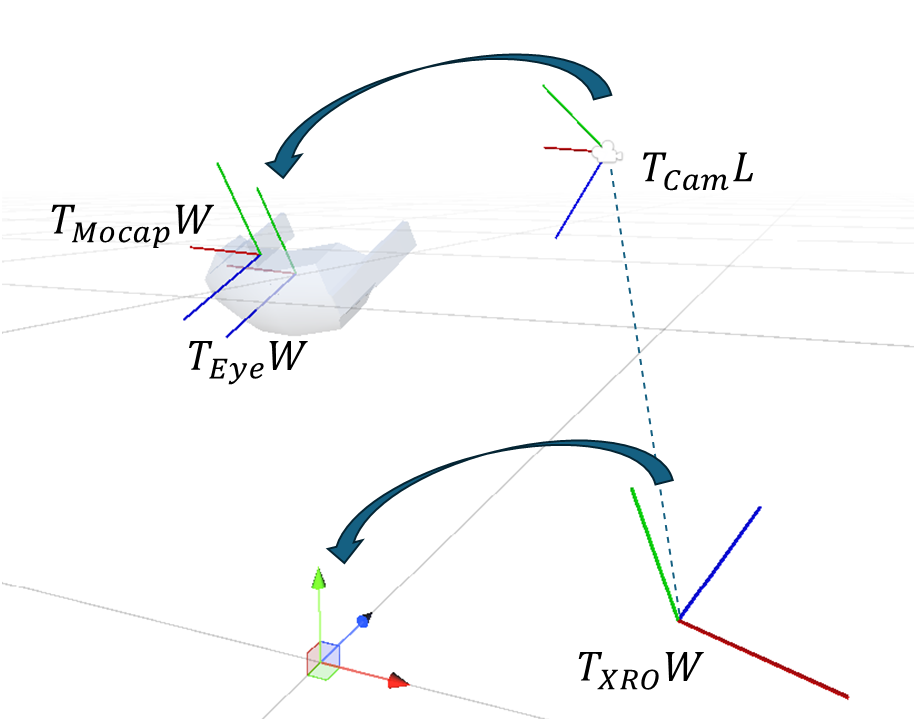

- Virtual Camera Alignment: The XR Origin transform in Unity is computed so that the virtual camera coincides with the calibrated eye center pose. The alignment is restricted to position and yaw (rotation about the vertical axis) to avoid tilting the tracking space; this keeps the floor level and avoids biasing subsequent user movement.

Figure 2: The virtual camera pose T_Cam relative to the XR Origin.

Figure 3: Co-location alignment: determining T_XRO for correct virtual camera placement.
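The position-and-yaw alignment can be sketched as follows, assuming Unity's conventions (left-handed, +Y up, +Z forward) and that the calibrated eye pose is already expressed in Unity coordinates. The function names are hypothetical, and the Python only mirrors Unity's math; in Unity the resulting position and yaw would be written to the XR Origin transform. The origin's yaw is the difference between the target heading and the camera's local heading, and its position is whatever places the rotated camera offset on the target.

```python
import math

import numpy as np


def yaw_deg(forward: np.ndarray) -> float:
    """Heading of a forward vector about +Y (Unity: +Z forward, +X right)."""
    return math.degrees(math.atan2(forward[0], forward[2]))


def rotate_y(v: np.ndarray, yaw: float) -> np.ndarray:
    """Rotate v about +Y by yaw degrees, like Unity's Quaternion.Euler(0, yaw, 0) * v."""
    a = math.radians(yaw)
    c, s = math.cos(a), math.sin(a)
    return np.array([c * v[0] + s * v[2], v[1], -s * v[0] + c * v[2]])


def solve_xr_origin(eye_pos, eye_fwd, cam_local_pos, cam_local_fwd):
    """Return (position, yaw) for the XR Origin so the camera lands on the eye pose.

    Only yaw is corrected, so the origin stays level; pitch and roll of the
    view continue to come from the HMD's own tracking.
    """
    yaw = yaw_deg(eye_fwd) - yaw_deg(cam_local_fwd)
    yaw = (yaw + 180.0) % 360.0 - 180.0  # wrap into [-180, 180)
    pos = np.asarray(eye_pos, float) - rotate_y(np.asarray(cam_local_pos, float), yaw)
    return pos, yaw
```

Because the heading is taken from the horizontal components of the forward vectors, a pitched camera still yields the correct yaw (the sketch degenerates only when looking straight up or down).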

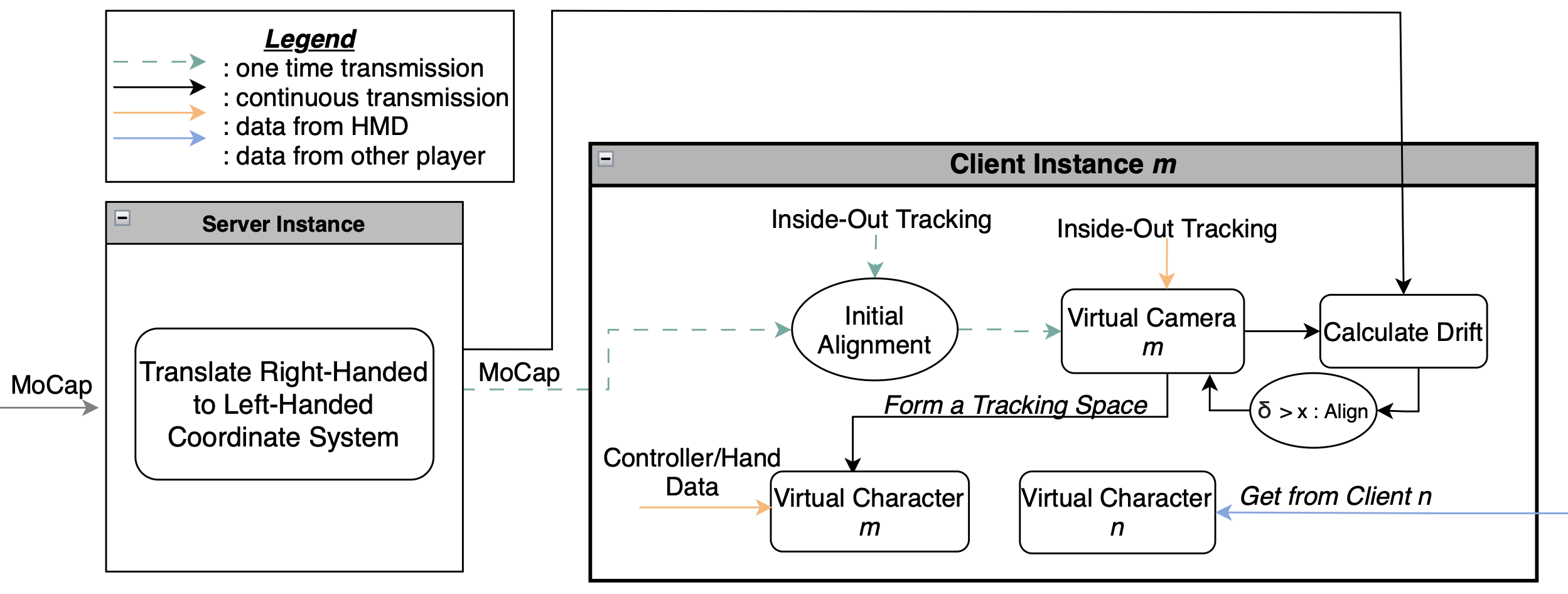

- Initialization and Data Flow: MoCap data is streamed to Unity via TCP/IP, converted to the engine's coordinate system, and distributed to user instances. The Colibri framework is used for inter-client pose sharing, supporting scalable multi-user scenarios.

Figure 4: Schema of the proposed framework with one exemplary user-instance.
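The coordinate conversion depends on the MoCap system's axis settings. Assuming it reports right-handed, Z-up positions in millimetres (a common MoCap default; verify against your own configuration), swapping Y and Z and scaling to metres maps positions into Unity's left-handed, Y-up convention. Orientations need the matching change: because swapping two axes is a reflection, the quaternion's vector part is permuted and negated while the angle is unchanged. The function names and the millimetre assumption are hypothetical; quaternions here are in (w, x, y, z) order, whereas Unity's `Quaternion` constructor takes (x, y, z, w).

```python
import numpy as np

MM_TO_M = 0.001  # assumption: MoCap positions arrive in millimetres


def mocap_to_unity_position(p_mm) -> np.ndarray:
    """Right-handed Z-up (x, y, z) in mm -> Unity left-handed Y-up (x, z, y) in m."""
    x, y, z = p_mm
    return np.array([x, z, y], dtype=float) * MM_TO_M


def mocap_to_unity_quaternion(q_wxyz) -> np.ndarray:
    """Re-express a rotation after swapping the Y and Z axes.

    The swap has determinant -1, so the rotation axis is swapped AND negated,
    while the rotation angle (carried by w) is unchanged. Returns (w, x, y, z).
    """
    w, x, y, z = q_wxyz
    return np.array([w, -x, -z, -y], dtype=float)
```

For example, a rotation about the MoCap Z (up) axis becomes a rotation about Unity's Y axis with the opposite sign, which is exactly the handedness flip.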

- Tracking Main Loop: After initial alignment, HMD SLAM tracking is used for real-time updates, with periodic monitoring for drift. Pose data (head and hands) is transmitted between clients for consistent inter-user representation.

- Dynamic Alignment Correction: When drift exceeds a configurable threshold, realignment is triggered using MoCap data. This hybrid approach avoids the latency and jitter of continuous external tracking while maintaining spatial consistency.
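The drift check in the main loop can be sketched as a comparison between the SLAM-tracked eye pose and the MoCap-derived eye pose, both expressed in the shared frame. The class name and the 2 cm / 2° defaults are hypothetical placeholders for the paper's configurable threshold, and checking position and yaw separately is an assumption of this sketch.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class DriftMonitor:
    """Hypothetical drift check between SLAM-tracked and MoCap-derived eye poses.

    Thresholds are illustrative placeholders, not values from the paper.
    """

    max_pos_err_m: float = 0.02
    max_yaw_err_deg: float = 2.0

    def needs_realignment(self, slam_pos, slam_yaw_deg, mocap_pos, mocap_yaw_deg) -> bool:
        # Euclidean position error in metres.
        pos_err = float(np.linalg.norm(np.subtract(slam_pos, mocap_pos)))
        # Wrap the yaw difference into [-180, 180) before taking its magnitude.
        yaw_err = abs((slam_yaw_deg - mocap_yaw_deg + 180.0) % 360.0 - 180.0)
        return pos_err > self.max_pos_err_m or yaw_err > self.max_yaw_err_deg
```

In use, `needs_realignment` would be called on each MoCap update; when it returns `True`, the XR Origin would be recomputed from the latest MoCap-derived eye pose, and between realignments the HMD's own SLAM tracking drives the view.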

Experimental Evaluation

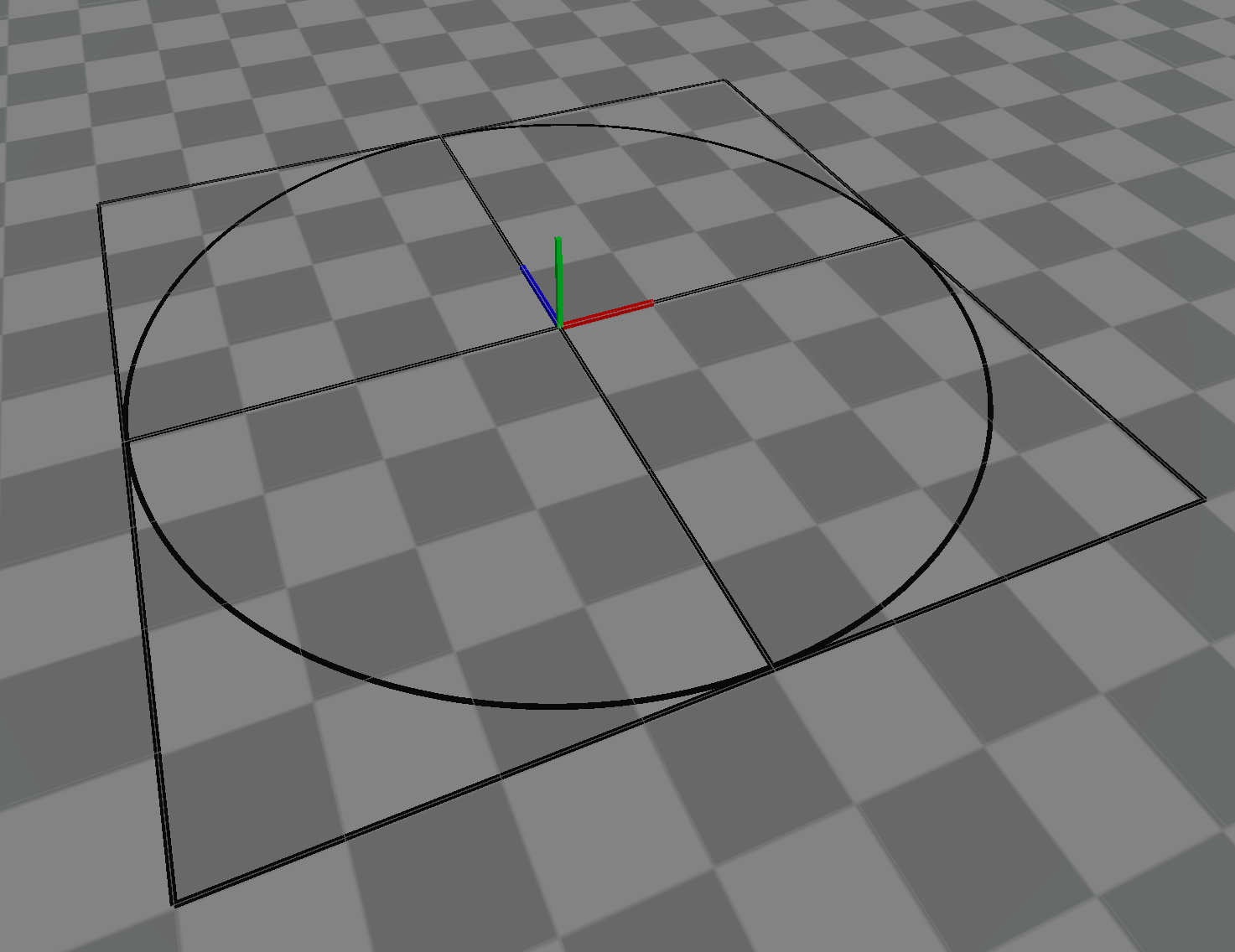

The system was evaluated in a 7 m × 7 m room equipped with 38 Qualisys Arqus A12 cameras. Both single-user and multi-user scenarios were tested, focusing on absolute trajectory error (ATE RMSE) and interaction accuracy.

Figure 5: Environment used for testing.

Single-User Evaluation

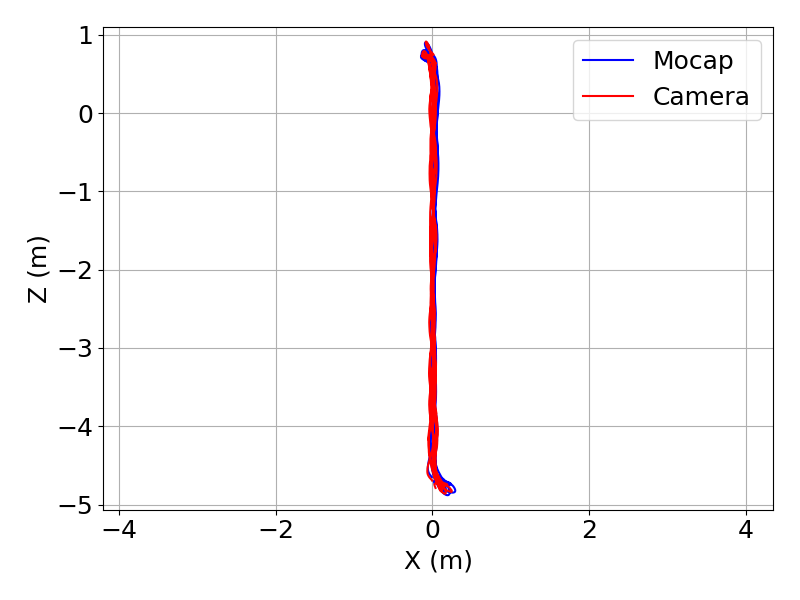

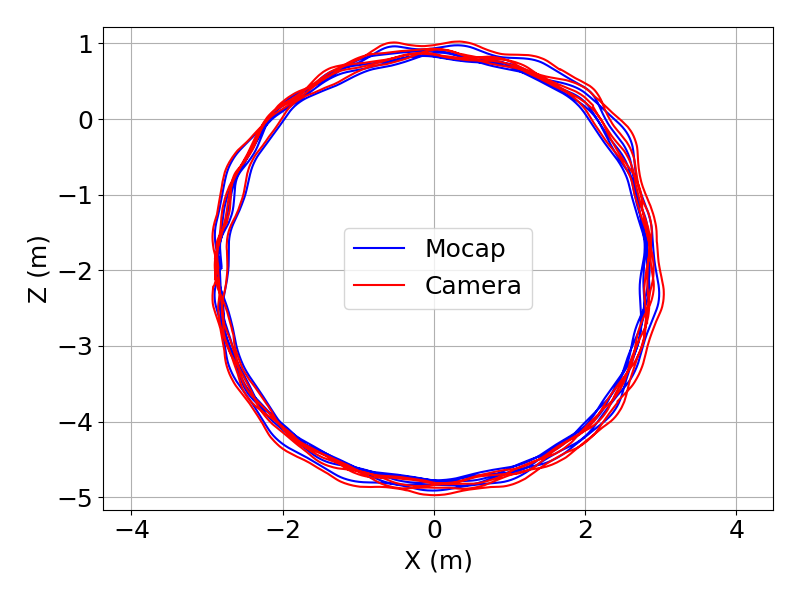

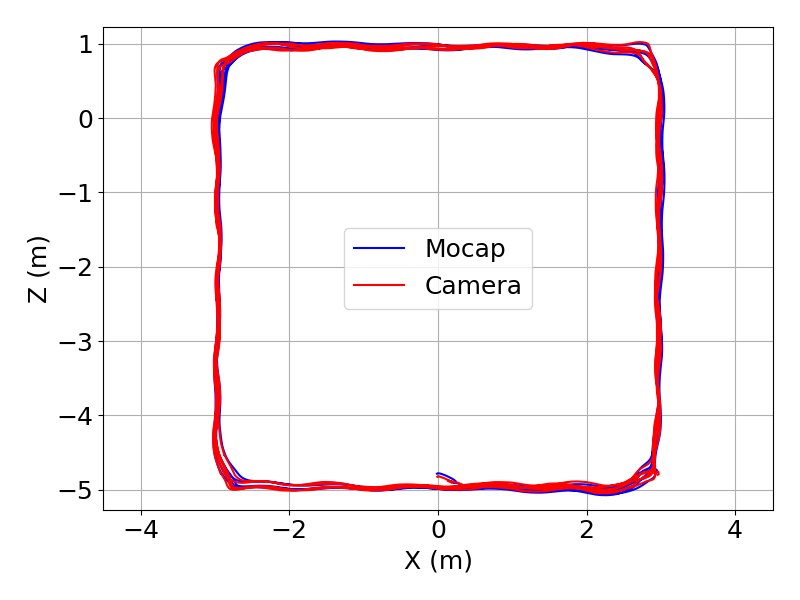

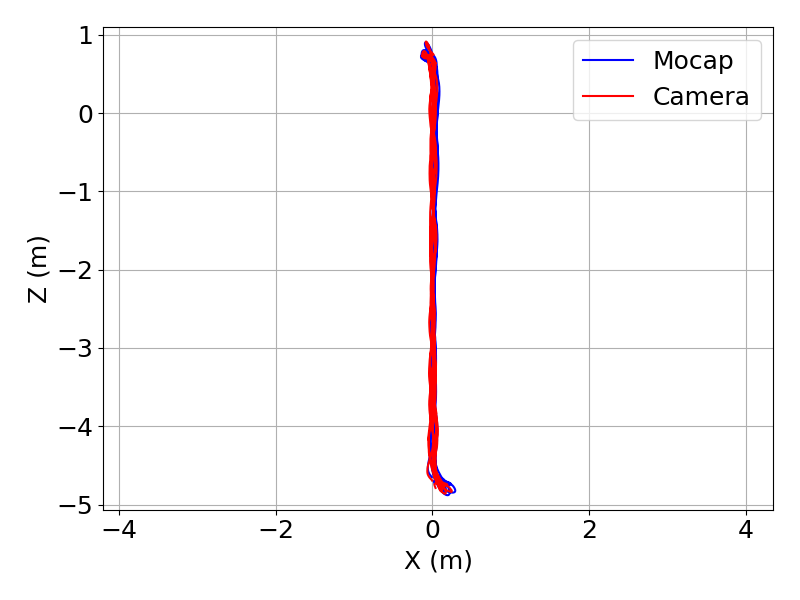

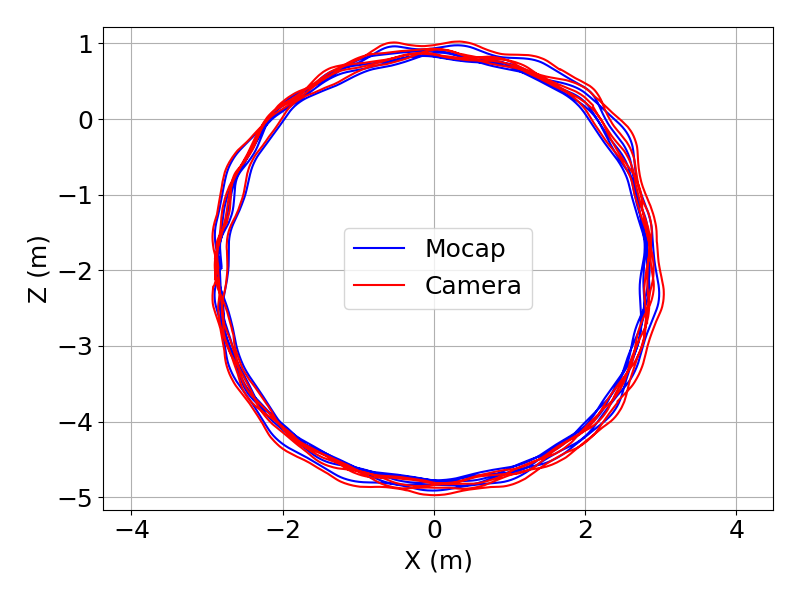

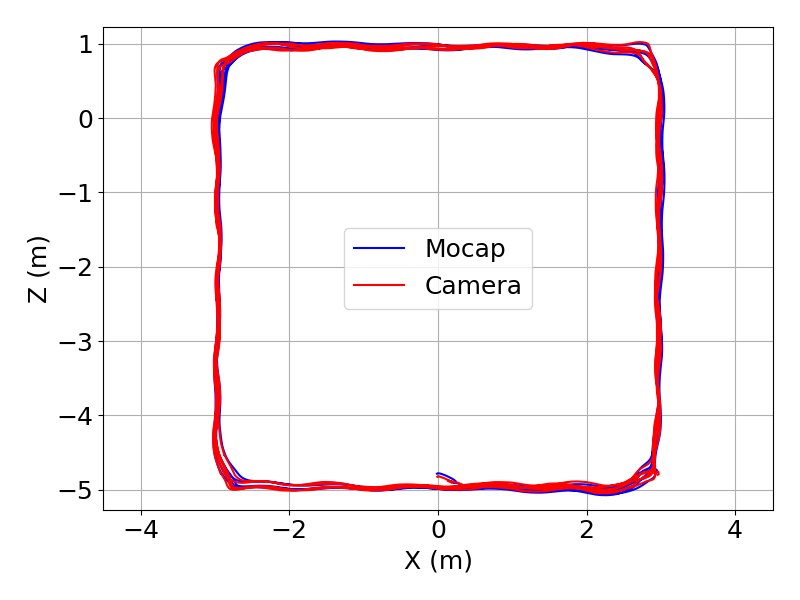

Three motion patterns were used: straight line, circle, and patrol. Users followed virtual circuits, and ATE was measured over 10 repetitions per pattern without realignment, allowing observation of drift accumulation.

Figure 6: Motion circuit, used for guiding users.

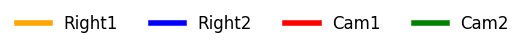

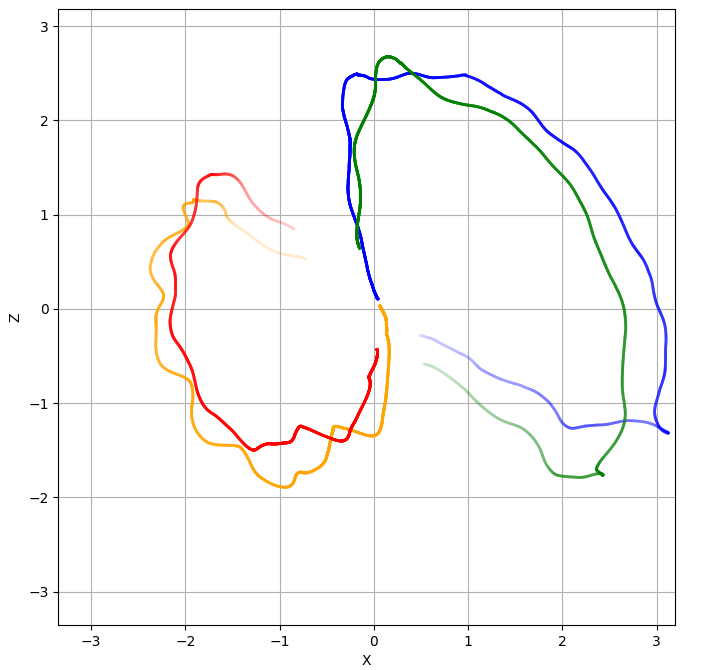

Figure 7: Top-down views of single-user motion patterns. Left: line motion. Middle: circle. Right: patrol.

ATE RMSE ranged from 3.1 cm (patrol) to 4.9 cm (circle). These differences are consistent with the complexity of each motion and with known SLAM limitations during rapid viewpoint changes.
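ATE RMSE is the root mean square of the position differences between time-associated estimated and ground-truth samples: sqrt((1/N) Σ‖p̂_i − p_i‖²). The sketch below assumes both trajectories are already in the shared MoCap frame, so no extra alignment is applied (in line with the no-realignment protocol), and pairs each estimate with the nearest ground-truth timestamp within `max_dt`. The function name and the 10 ms default are hypothetical.

```python
import numpy as np


def ate_rmse(t_est, p_est, t_gt, p_gt, max_dt=0.01) -> float:
    """ATE RMSE (same units as the positions) without any trajectory alignment.

    t_gt must be sorted ascending; estimates with no ground-truth sample
    within max_dt seconds are ignored.
    """
    t_est, t_gt = np.asarray(t_est, float), np.asarray(t_gt, float)
    p_est, p_gt = np.asarray(p_est, float), np.asarray(p_gt, float)
    # Candidate neighbours: the ground-truth samples just before and after.
    idx = np.clip(np.searchsorted(t_gt, t_est), 1, len(t_gt) - 1)
    left_closer = (t_est - t_gt[idx - 1]) < (t_gt[idx] - t_est)
    idx = idx - left_closer.astype(int)
    # Drop pairs whose timestamps are too far apart.
    keep = np.abs(t_gt[idx] - t_est) <= max_dt
    err = p_est[keep] - p_gt[idx[keep]]
    return float(np.sqrt(np.mean(np.sum(err**2, axis=1))))
```

Nearest-timestamp association matters because the MoCap and HMD streams generally run at different rates.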

Multi-User Evaluation

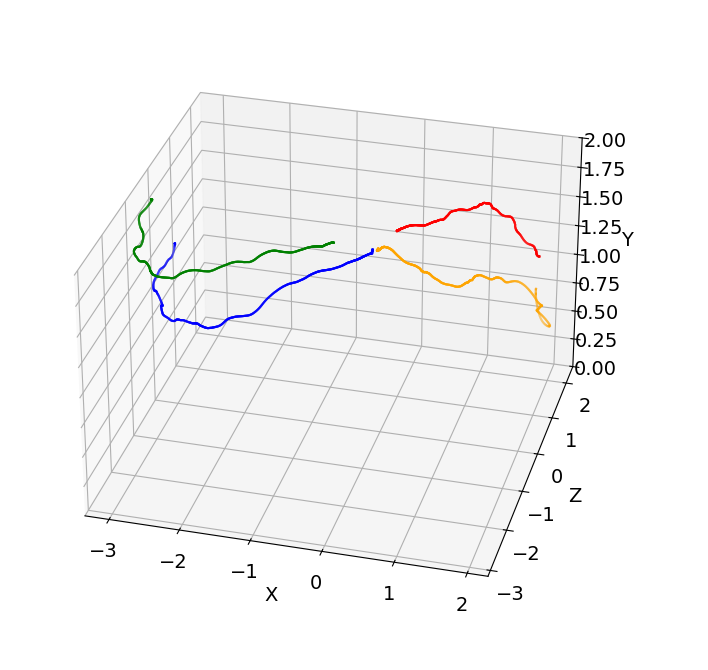

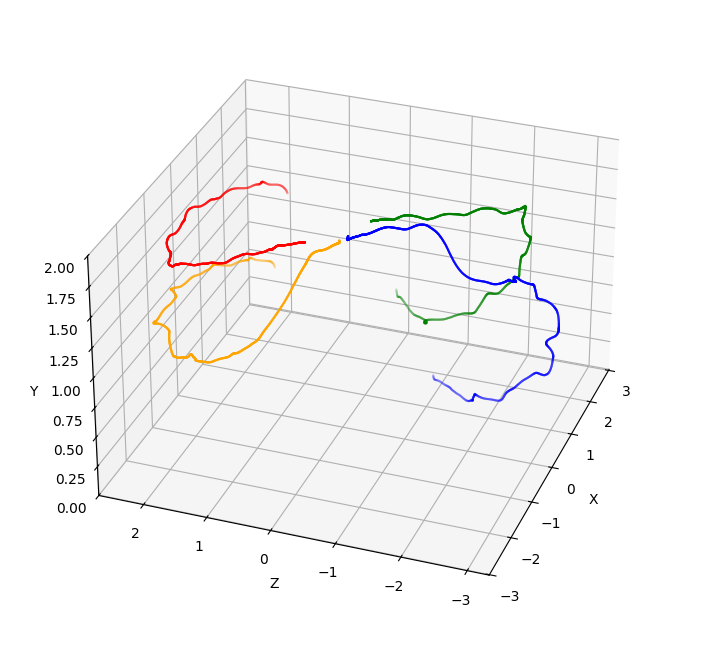

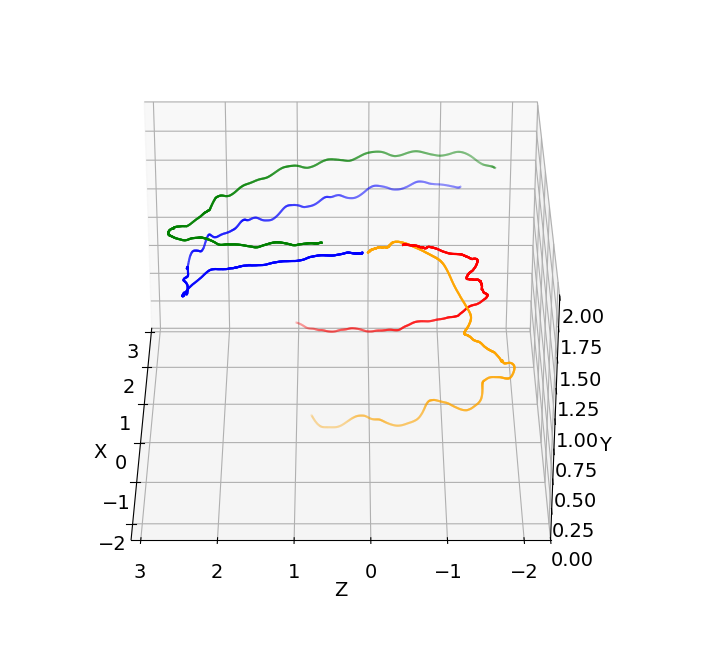

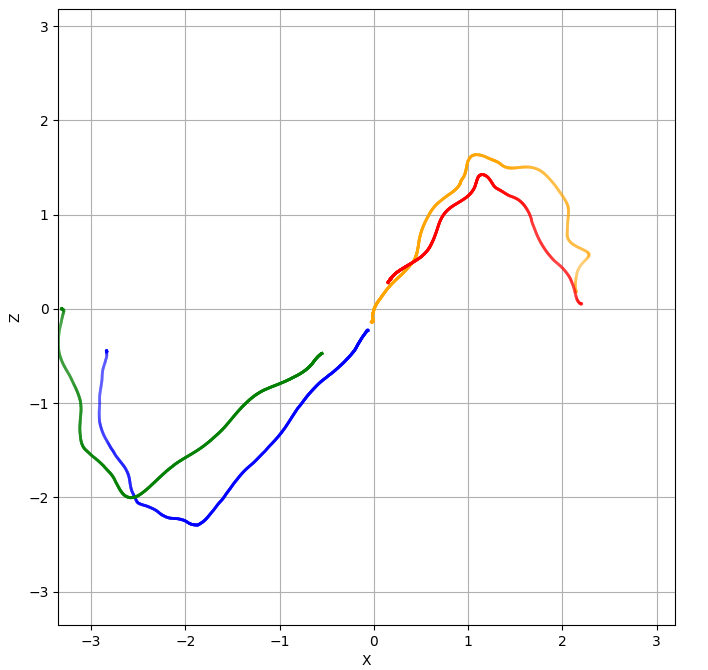

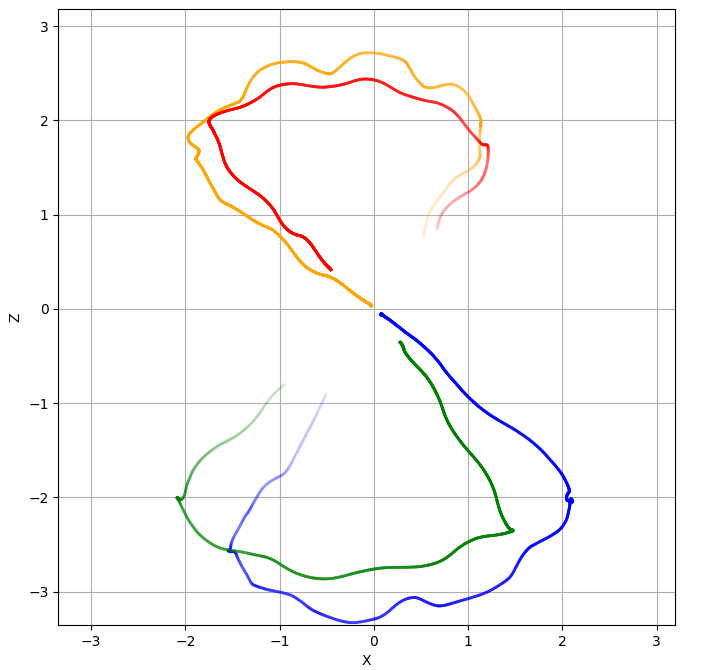

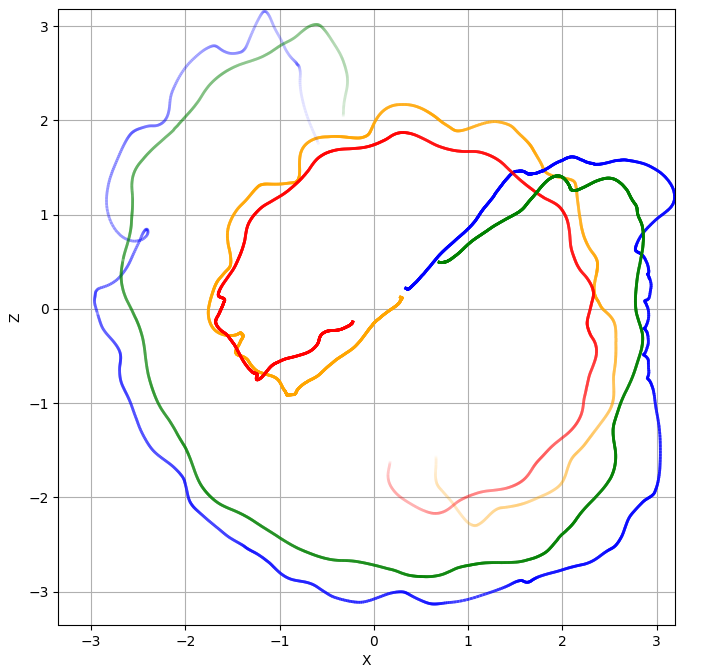

Two users followed independent motion paths and repeatedly performed fist bumps, testing spatial alignment under translation, rotation, and dynamic interaction.

Figure 8: Fist bump interaction, used for co-location validation.

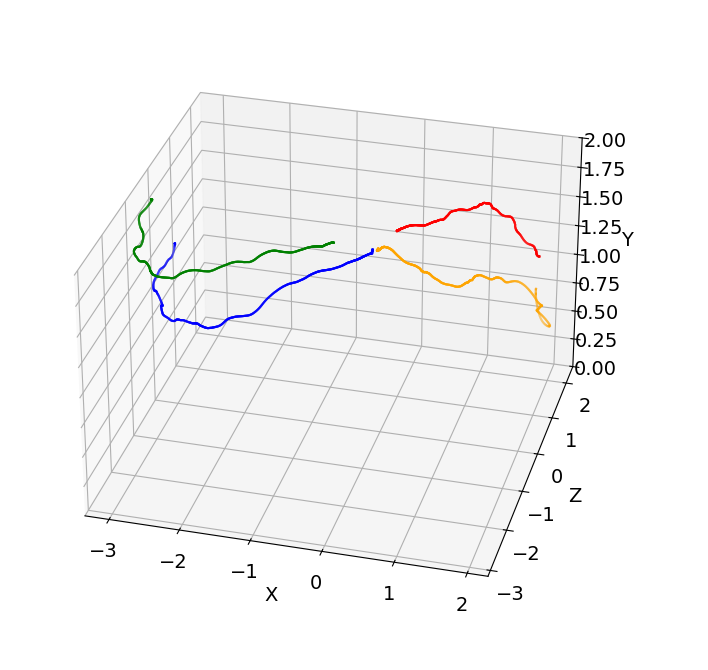

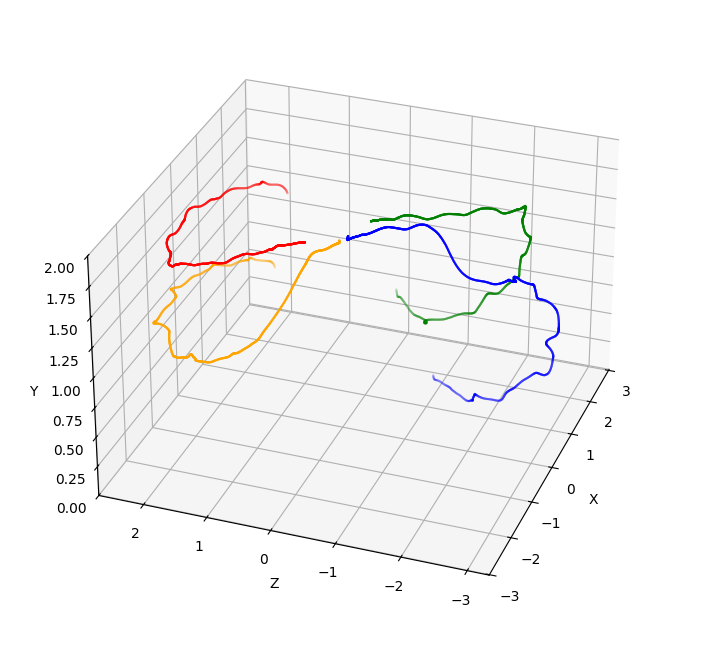

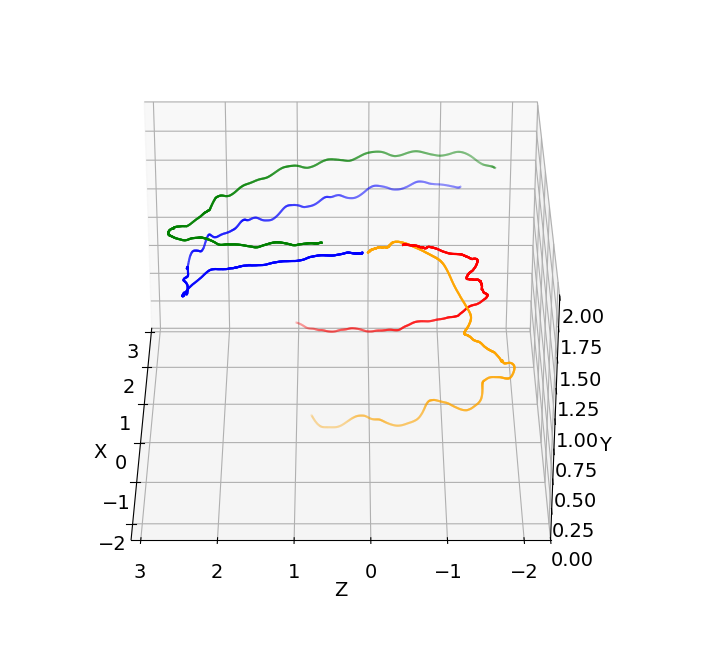

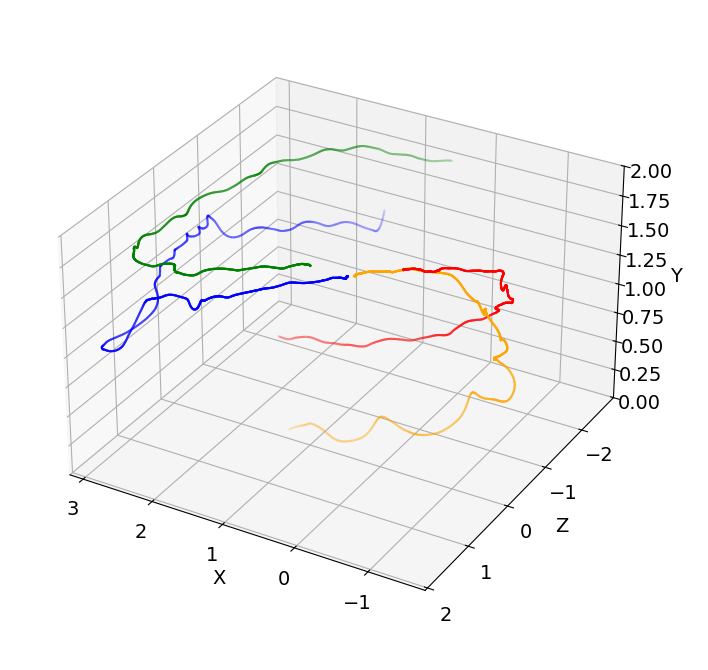

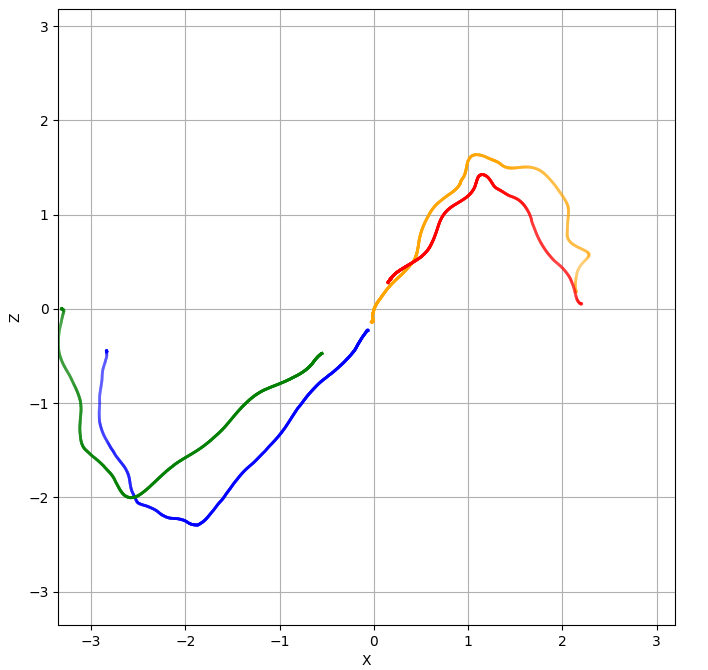

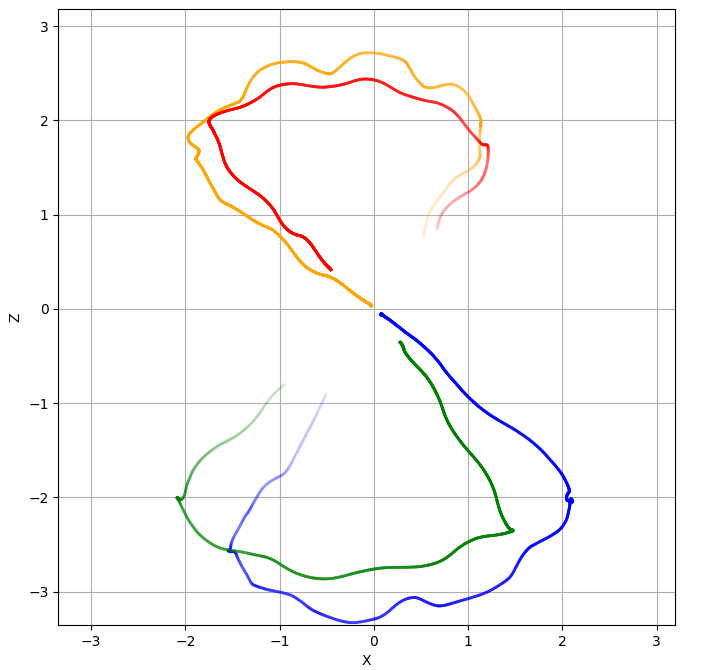

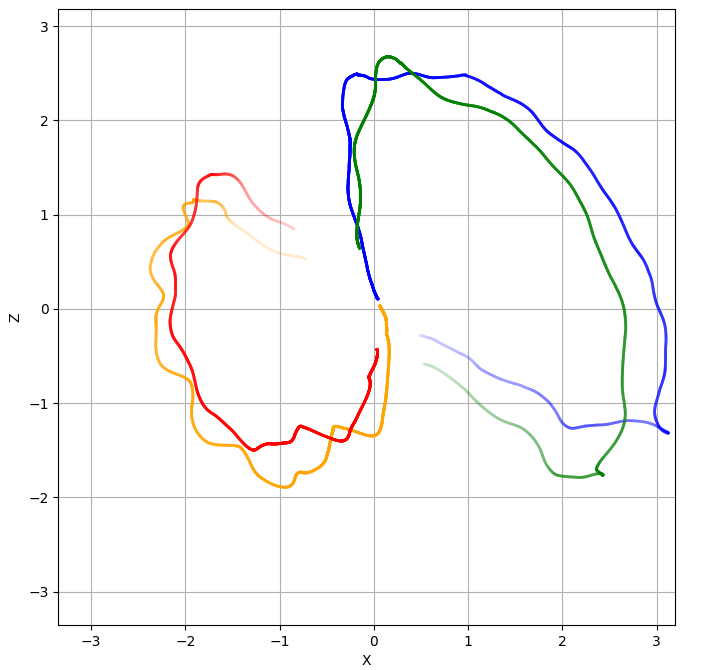

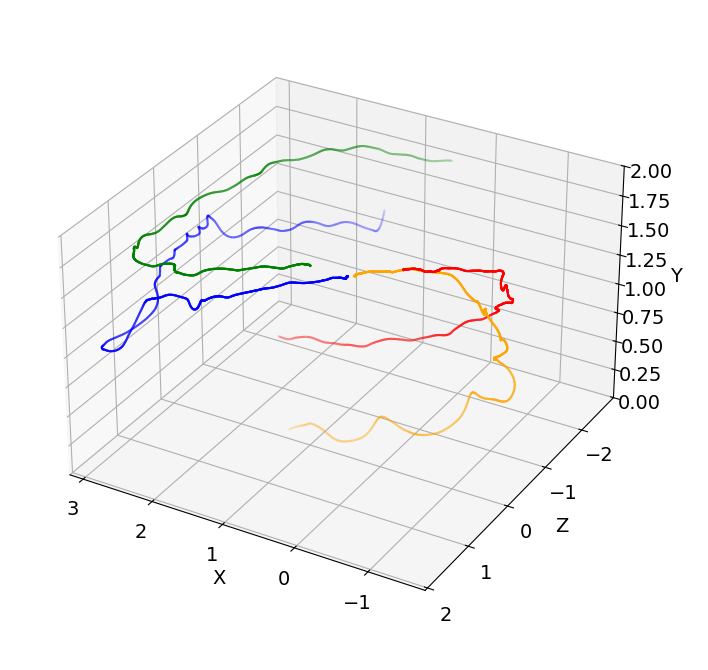

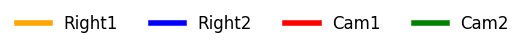

Figure 9: Fist bump events for four selected frames: 3D trajectories (top row), legend (center), and top-down views (bottom row). In the legend, cam1 and cam2 denote the virtual cameras of users 1 and 2, and right1 and right2 denote their right controllers.

ATE RMSE for multi-user motion was approximately 5.2 cm for both users, well below the 12 cm error reported in prior work for challenging scenarios. All fist bump trials were completed without perceptible misalignment, demonstrating robust co-location even during complex, repeated interactions.
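One way to pick out fist-bump frames like those in Figure 9 from logged data is to look for local minima in the distance between the two right controllers (right1, right2) in the shared frame. This is a hypothetical analysis helper, not the paper's procedure; the 15 cm threshold and the 30-frame minimum gap are placeholder values.

```python
import numpy as np


def contact_frames(right1, right2, max_dist=0.15, min_gap=30):
    """Candidate fist-bump frames: close approaches of the two right controllers.

    right1, right2: (N, 3) controller positions in the shared frame (metres).
    A frame is kept if the inter-controller distance is a local minimum no
    larger than max_dist and lies at least min_gap frames after the previous
    kept frame. Returns (frames, per-frame distances).
    """
    d = np.linalg.norm(np.asarray(right1, float) - np.asarray(right2, float), axis=1)
    frames = []
    for i in range(1, len(d) - 1):
        if d[i] <= max_dist and d[i] <= d[i - 1] and d[i] <= d[i + 1]:
            # The gap check suppresses duplicates from flat or noisy minima.
            if not frames or i - frames[-1] >= min_gap:
                frames.append(i)
    return frames, d
```

Inspecting the virtual distance at each detected frame then gives a simple per-bump check of whether the shared frame keeps both users' hands where they physically meet.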

Latency Analysis

The latency between MoCap and HMD tracking was measured at ~70 ms. This supports relying on local SLAM tracking for real-time responsiveness: driving the view from continuous MoCap streaming would add this delay, which can cause cybersickness and degrade the user experience.
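The source does not state how the ~70 ms figure was obtained. A common approach, sketched here as an assumption, is to cross-correlate the same motion signal (for example, head speed) from both streams after resampling them to a shared uniform rate, then take the lag at the correlation peak. The function name is hypothetical.

```python
import numpy as np


def estimate_lag_s(reference, delayed, dt: float) -> float:
    """Delay in seconds of `delayed` relative to `reference`.

    Both signals must be sampled on the same uniform grid with spacing dt.
    A positive result means `delayed` lags `reference`.
    """
    a = np.asarray(reference, float) - np.mean(reference)
    b = np.asarray(delayed, float) - np.mean(delayed)
    # Full cross-correlation: index i corresponds to lag i - (len(a) - 1).
    c = np.correlate(b, a, mode="full")
    lag = int(np.argmax(c)) - (len(a) - 1)
    return lag * dt
```

With the HMD signal as `reference` and the MoCap signal as `delayed`, a positive result quantifies how far the MoCap stream trails the onboard tracking; the resolution is limited to one sample period `dt` unless the peak is interpolated.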

Discussion and Implications

The hybrid framework achieves high spatial accuracy and low latency, supporting natural multi-user interactions in shared physical-virtual spaces. The system's ability to maintain alignment during dynamic motion and interaction, with minimal reliance on continuous external tracking, is a significant practical advancement. The modular design allows for extension to full-body tracking and integration with other tracking modalities.

The results suggest that hybrid approaches are preferable for scalable, robust co-located VR, especially as HMDs continue to improve onboard sensor access and SLAM capabilities. Future work should explore collaborative SLAM for map sharing across devices, reducing dependency on external infrastructure and enabling larger-scale deployments. The framework is well-positioned for expansion to environments with more users and physical objects, facilitating richer collaborative and interactive VR scenarios.

Conclusion

The presented framework offers a practical solution for co-located multi-user VR, combining the accuracy of motion capture with the responsiveness of SLAM-based HMD tracking. The system maintains spatial consistency through periodic realignment, avoiding the latency and jitter of continuous external tracking. Empirical results demonstrate robust alignment and low error rates during complex motion and interaction, validating the approach for real-world applications. The architecture is scalable and extensible, with future directions including collaborative SLAM integration and support for larger user groups and physical object co-location.