- The paper introduces TaoGS, a topology-aware dynamic Gaussian representation that achieves robust tracking and high-fidelity rendering in human-centric volumetric videos.

- It employs spatio-temporal tracking and photometric error filtering to disentangle motion and appearance, enabling adaptive densification and up to 40× compression.

- Experimental results show higher PSNR and SSIM than existing methods, along with real-time rendering, even under complex topological changes.

Topology-Aware Optimization of Gaussian Primitives for Human-Centric Volumetric Videos

Introduction and Motivation

This paper introduces TaoGS, a topology-aware dynamic Gaussian representation for robust tracking and high-fidelity rendering of human-centric volumetric videos under topological changes. The work addresses a critical gap in dynamic scene modeling: the ability to maintain long-term tracking and adapt to topological variations (e.g., clothing changes, object interactions) without relying on fixed-topology templates or incurring excessive computational overhead. TaoGS disentangles motion and appearance using a sparse set of motion Gaussians, which are adaptively updated via spatio-temporal tracking and photometric cues, and a dense set of activatable appearance Gaussians for fine-grained texture modeling. The representation is designed for efficient training and is inherently compatible with standard video codec-based volumetric formats, supporting up to 40× compression.

Figure 1: TaoGS pipeline: motion-to-appearance Gaussian representation enables robust tracking and high-fidelity rendering under topological changes, with efficient compression via 2D attribute maps.

Methodology

Topology-Aware Motion Registration

TaoGS initializes motion Gaussians using dense multi-view triangulation, avoiding the limitations of structure-from-motion (SfM) or mesh-based approaches in capturing fine structures. The initial set of ~20,000 Gaussians is optimized with Laplacian smoothness, isotropy, size, and color losses. For dynamic tracking, an as-rigid-as-possible (ARAP) regularizer is applied to a KNN-based deformation graph, encouraging physically plausible non-rigid motion.
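
To make the ARAP term on a KNN graph concrete, the following is a minimal sketch in plain Python. It assumes the common edge-based formulation, in which each node carries a rotation and the energy penalizes deformed edges that differ from the rotated rest edges. The function names (`knn_graph`, `arap_energy`), the brute-force neighbour search, and the unweighted sum are illustrative assumptions, not the paper's implementation.

```python
import math

def knn_graph(points, k):
    """Brute-force KNN graph: neighbours[i] lists the k closest indices to i."""
    neighbours = []
    for i, p in enumerate(points):
        by_distance = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbours.append([j for _, j in by_distance[:k]])
    return neighbours

def rotate(R, v):
    """Apply a 3x3 rotation matrix (nested lists) to a 3-vector."""
    return [sum(R[r][c] * v[c] for c in range(3)) for r in range(3)]

def arap_energy(rest, deformed, rotations, neighbours):
    """Sum over graph edges of || (p_j' - p_i') - R_i (p_j - p_i) ||^2 (assumed form)."""
    energy = 0.0
    for i, nbrs in enumerate(neighbours):
        for j in nbrs:
            rest_edge = [rest[j][a] - rest[i][a] for a in range(3)]
            new_edge = [deformed[j][a] - deformed[i][a] for a in range(3)]
            target = rotate(rotations[i], rest_edge)
            energy += sum((new_edge[a] - target[a]) ** 2 for a in range(3))
    return energy

# Toy check: a rigid motion costs nothing, a uniform stretch is penalized.
rest = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
graph = knn_graph(rest, k=2)
Rz90 = [[0, -1, 0], [1, 0, 0], [0, 0, 1]]      # 90 degree rotation about z
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
rigid = [tuple(c + 5 for c in rotate(Rz90, p)) for p in rest]   # rotate, then translate
stretched = [tuple(2 * c for c in p) for p in rest]
rigid_cost = arap_energy(rest, rigid, [Rz90] * 4, graph)
stretch_cost = arap_energy(rest, stretched, [identity] * 4, graph)
```

In this toy setup the rigidly moved point set has zero energy while the stretched one does not, which is the behaviour a local-rigidity regularizer is meant to have.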

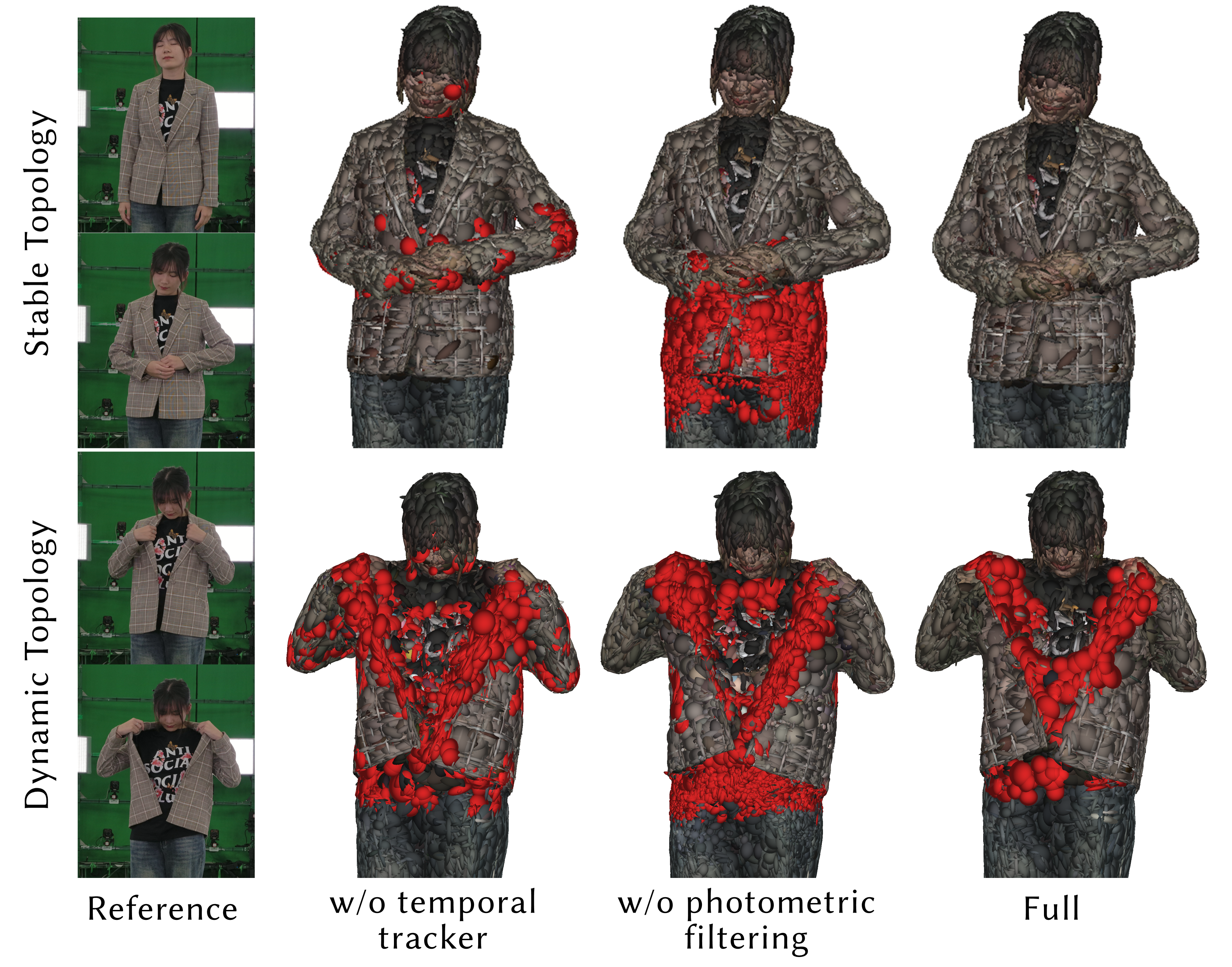

To handle topological changes, TaoGS employs a spatio-temporal tracker (CoTracker) and photometric error filtering to identify genuinely new observations and suppress redundant candidate Gaussians. The candidate filtering process leverages optical flow correspondences and photometric confidence thresholds, followed by graph updates that maintain local rigidity and prevent drift. Densification and pruning are performed every 300 iterations, with negligible computational overhead.
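
The filtering logic can be pictured with a small sketch. It assumes a simple two-test rule: a candidate survives only if its photometric error is above a threshold and no tracked point from an existing Gaussian lands nearby. The rule, the data layout, and both thresholds (`error_thresh`, `track_radius`) are hypothetical; the sketch does not call CoTracker, and the paper's actual criteria may differ.

```python
import math

def filter_candidates(candidates, tracked_pixels, error_thresh=0.1, track_radius=2.0):
    """Keep candidates that are poorly rendered AND not already covered by a track.

    candidates: dicts with 'pixel' (x, y) and 'photo_error' (assumed to lie in [0, 1]).
    tracked_pixels: 2D positions where a tracker propagated existing Gaussians.
    Both thresholds are hypothetical values chosen for illustration.
    """
    kept = []
    for cand in candidates:
        if cand["photo_error"] < error_thresh:
            continue  # the current model already explains this pixel
        if any(math.dist(cand["pixel"], t) <= track_radius for t in tracked_pixels):
            continue  # an existing Gaussian was tracked here, so this is not new content
        kept.append(cand)
    return kept

candidates = [
    {"pixel": (10.0, 10.0), "photo_error": 0.02},  # well rendered: dropped
    {"pixel": (50.0, 50.0), "photo_error": 0.40},  # high error but tracked: dropped
    {"pixel": (90.0, 20.0), "photo_error": 0.35},  # high error and untracked: kept
]
tracked = [(50.5, 49.5), (12.0, 11.0)]
new_observations = filter_candidates(candidates, tracked)
```

Only the third candidate passes both tests here, mirroring the idea that densification should be reserved for content that is both badly reconstructed and not explained by existing tracks.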

Figure 2: Candidate filtering and graph updating: spatio-temporal tracking and photometric error suppress redundancy and ensure only new topological changes are densified.

Activatable Appearance Gaussians

Sparse motion Gaussians anchor and activate a set of appearance Gaussians, which are non-rigidly warped to the current frame. Appearance Gaussians are initialized along the edges of the local KNN graph, inheriting attributes from their anchor motion Gaussian. This edge-based initialization ensures spatial coherence and fast convergence. The activation mechanism allows for efficient modeling of scene details and maintains temporal consistency, with each motion Gaussian activating nine appearance Gaussians per frame.
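
A rough sketch of edge-based initialization follows. The summary does not say how the nine appearance Gaussians per motion Gaussian are distributed, so the sketch assumes three neighbours with three samples per edge; the sample spacing, the `init_appearance` name, and the dictionary layout are likewise illustrative assumptions.

```python
import math

def knn_graph(points, k):
    """Brute-force KNN graph: neighbours[i] lists the k closest indices to i."""
    neighbours = []
    for i, p in enumerate(points):
        by_distance = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbours.append([j for _, j in by_distance[:k]])
    return neighbours

def init_appearance(motion, k=3, samples_per_edge=3):
    """Place appearance Gaussians along each anchor's KNN edges (k * samples_per_edge each)."""
    positions = [m["position"] for m in motion]
    neighbours = knn_graph(positions, k)
    appearance = []
    for i, nbrs in enumerate(neighbours):
        p_i = positions[i]
        for j in nbrs:
            p_j = positions[j]
            for s in range(1, samples_per_edge + 1):
                # Assumed spacing: stay in the half of the edge nearest the anchor.
                t = 0.5 * s / (samples_per_edge + 1)
                pos = tuple(p_i[a] + t * (p_j[a] - p_i[a]) for a in range(3))
                # Attributes are inherited from the anchor motion Gaussian.
                appearance.append({"anchor": i, "position": pos, "color": motion[i]["color"]})
    return appearance

motion = [
    {"position": (0.0, 0.0, 0.0), "color": (255, 0, 0)},
    {"position": (1.0, 0.0, 0.0), "color": (0, 255, 0)},
    {"position": (0.0, 1.0, 0.0), "color": (0, 0, 255)},
    {"position": (0.0, 0.0, 1.0), "color": (255, 255, 0)},
    {"position": (1.0, 1.0, 1.0), "color": (0, 255, 255)},
]
appearance = init_appearance(motion)
per_anchor = [sum(1 for a in appearance if a["anchor"] == i) for i in range(len(motion))]
```

With five motion Gaussians this yields 45 appearance Gaussians, nine per anchor, each starting close to its anchor and carrying the anchor's color.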

Lifespan-Aware Compression

A global Gaussian Lookup Table (GLUT) records the lifespan of each motion Gaussian and maps frame-specific Gaussians to a unified index space. Persistent Gaussians are spatially sorted using Morton encoding, while transient Gaussians are sorted by activation time. This layout aligns with intra-frame and inter-frame prediction mechanisms in standard video codecs (e.g., H.264), enabling efficient compression of 2D attribute maps. TaoGS achieves up to 40× compression even under complex topological changes.
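
The ordering described above can be sketched as follows. Morton (Z-order) encoding interleaves the bits of quantized x, y, z coordinates so that spatially close points tend to receive nearby indices. The 10-bit quantization, the rule that a Gaussian is "persistent" when its lifespan covers every frame, and the names `build_lookup`, `start`, and `end` are assumptions made for illustration, not details taken from the paper.

```python
def morton3d(ix, iy, iz, bits=10):
    """Interleave the low `bits` bits of three integer coordinates into one Morton code."""
    code = 0
    for b in range(bits):
        code |= ((ix >> b) & 1) << (3 * b)
        code |= ((iy >> b) & 1) << (3 * b + 1)
        code |= ((iz >> b) & 1) << (3 * b + 2)
    return code

def quantize(value, lo, hi, bits=10):
    """Map a coordinate in [lo, hi] to an integer grid cell in [0, 2**bits - 1]."""
    cells = (1 << bits) - 1
    cell = int((value - lo) / (hi - lo) * cells)
    return max(0, min(cells, cell))

def build_lookup(gaussians, num_frames, lo=0.0, hi=1.0):
    """Return {gaussian id: slot}: persistent entries first (Morton order), then transient
    entries sorted by the frame in which they first become active."""
    def is_persistent(g):
        return g["start"] == 0 and g["end"] == num_frames - 1

    def spatial_key(g):
        return morton3d(*(quantize(c, lo, hi) for c in g["position"]))

    persistent = sorted((g for g in gaussians if is_persistent(g)), key=spatial_key)
    transient = sorted((g for g in gaussians if not is_persistent(g)), key=lambda g: g["start"])
    return {g["id"]: slot for slot, g in enumerate(persistent + transient)}

gaussians = [
    {"id": 0, "position": (0.9, 0.9, 0.9), "start": 0, "end": 9},  # persistent
    {"id": 1, "position": (0.1, 0.1, 0.1), "start": 0, "end": 9},  # persistent
    {"id": 2, "position": (0.5, 0.1, 0.1), "start": 3, "end": 6},  # transient
    {"id": 3, "position": (0.2, 0.2, 0.2), "start": 1, "end": 4},  # transient
]
lookup = build_lookup(gaussians, num_frames=10)
# lookup == {1: 0, 0: 1, 3: 2, 2: 3}
```

Keeping persistent Gaussians in a fixed, spatially coherent block means their rows in the 2D attribute maps change little from frame to frame, while transient Gaussians are appended in order of appearance; both properties are what intra-frame and inter-frame prediction can exploit.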

Experimental Results

Dataset and Evaluation

The dataset comprises multi-view human performance capture at 30 FPS from 81 cameras at 4K resolution, featuring diverse topological changes (e.g., sword drawing, clothing removal, object manipulation).

Figure 3: Dataset overview: static samples and dynamic sequences with topological changes.

TaoGS is benchmarked against 4DGS, Spacetime Gaussian, DualGS, HiFi4G, and V3 on sequences with significant topological changes. Quantitative metrics include PSNR, SSIM, LPIPS, storage, training time, and rendering FPS.

Figure 4: Qualitative comparison: TaoGS achieves superior rendering quality compared to prior dynamic Gaussian methods.

TaoGS consistently achieves the highest rendering quality (PSNR: 37.264, SSIM: 0.9911, LPIPS: 0.0182 before compression; PSNR: 36.697, SSIM: 0.9881, LPIPS: 0.0214 after compression), efficient storage (1.335 MB/frame), and real-time rendering (125 FPS before compression, 65 FPS after compression). Competing methods either fail under topological changes, produce artifacts, or incur high storage and training costs.
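
For a sense of scale, the reported figures can be combined in a few lines of arithmetic. The sketch assumes that the 1.335 MB/frame storage figure applies to every frame and that playback runs at the 30 FPS capture rate; the variable names are illustrative.

```python
FPS_CAPTURE = 30          # capture rate stated for the dataset
MB_PER_FRAME = 1.335      # reported storage per frame

stream_rate_mb_s = MB_PER_FRAME * FPS_CAPTURE   # storage consumed per second of video
psnr_drop_db = 37.264 - 36.697                  # PSNR lost to compression
ssim_drop = 0.9911 - 0.9881                     # SSIM lost to compression
fps_retained = 65 / 125                         # share of rendering speed kept after compression

print(f"{stream_rate_mb_s:.2f} MB/s, PSNR drop {psnr_drop_db:.3f} dB, "
      f"SSIM drop {ssim_drop:.4f}, FPS retained {fps_retained:.0%}")
# prints: 40.05 MB/s, PSNR drop 0.567 dB, SSIM drop 0.0030, FPS retained 52%
```

Under these assumptions, compression costs roughly 0.57 dB of PSNR and about half of the rendering throughput, while the compressed 65 FPS remains above the 30 FPS capture rate.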

Figure 5: Gallery of results: TaoGS robustly tracks and renders dynamic scenes with topological changes at high compression ratios.

Figure 6: Rendering of topological change scenarios: TaoGS handles diverse human-object interactions and clothing changes.

Ablation Studies

Ablations demonstrate the effectiveness of candidate filtering (temporal tracker + photometric error), edge-based initialization for appearance Gaussians, and combined sorting strategies for compression. EDGS-based initialization yields the best quality for motion Gaussians. The 9× edge-based appearance initialization achieves a favorable trade-off between quality and Gaussian count.

Figure 7: Evaluation of topology-aware Gaussian densification: full model suppresses redundancy and densifies only new observations.

Limitations

TaoGS cannot reactivate deactivated motion Gaussians, so content that reappears must be represented by newly added Gaussians, which can introduce redundancy. Gaussian removal is opacity-based, which may leave residual artifacts. The method relies on accurate tracking and segmentation; errors in textureless regions or fine structures can introduce artifacts. TaoGS is tailored for indoor multi-view studio settings and is not robust to outdoor lighting variations or severe occlusions.

Practical and Theoretical Implications

TaoGS provides a scalable, adaptive solution for volumetric video under topological variation, supporting real-time, high-fidelity rendering and efficient compression. The motion-to-appearance disentanglement and lifespan-aware indexing enable practical deployment in mobile and VR applications. The methodology advances the state of the art in dynamic scene modeling, particularly for human-centric scenarios with frequent topological changes.

Theoretically, TaoGS demonstrates the efficacy of sparse, topology-aware Gaussian primitives for disentangling motion and appearance, and highlights the importance of spatio-temporal tracking and photometric cues for robust adaptation. The compression strategy leverages spatial and temporal locality, suggesting future directions in codec-aligned neural representations.

Figure 8: Seamless integration of 4D assets with static environments for immersive experiences.

Conclusion

TaoGS introduces a topology-aware dynamic Gaussian representation that robustly tracks and renders human-centric 4D scenes with topological changes. The motion-to-appearance framework, guided by spatio-temporal tracking and photometric cues, enables adaptive detection of new observations and efficient training. Lifespan-aware compression aligns with standard video codecs, supporting up to 40× compression. TaoGS achieves state-of-the-art visual quality, storage efficiency, and real-time performance, making it a practical solution for immersive volumetric video. Future work may address semantic-aware Gaussian management, improved tracking in challenging conditions, and generalization to outdoor environments.