- The paper introduces a novel distributed algorithm that uses predictive spike timing for shortest path computation in spiking neural networks.

- It leverages local spike-timing coincidences and adaptive processing delays, avoiding reliance on global coordination or synaptic weight changes.

- Simulations in various maze environments demonstrate convergence to optimal paths, highlighting the critical role of global inhibition for efficient pruning.

Predictive Spike Timing for Distributed Shortest Path Computation in Spiking Neural Networks

Introduction and Motivation

This work introduces a biologically plausible, distributed algorithm for shortest path computation in spiking neural networks (SNNs), leveraging local spike-timing-based message passing and predictive temporal coding. The approach addresses the incompatibility of classical algorithms such as Dijkstra's and A* with biological constraints, particularly their reliance on global state and explicit backtracing, which are not feasible in neural substrates. The proposed method instead utilizes local spike-timing coincidences and adaptive processing delays to enable neurons to self-organize and identify shortest paths through purely local interactions, without synaptic weight changes or global coordination.

Model Architecture and Algorithmic Mechanism

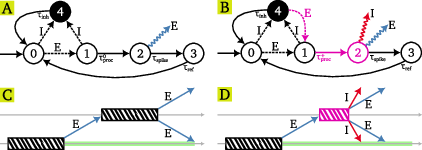

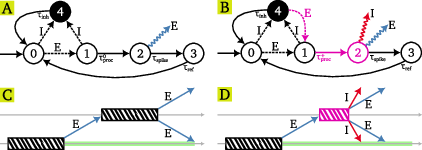

The core of the algorithm is a network of spiking neurons, each modeled as a timed state machine with five states: resting, processing, spiking, refractory, and inhibited. Neurons communicate via two types of messages: excitatory (E) and inhibitory (I). The network distinguishes between "tagged" and "untagged" neurons:

- Untagged neurons: Standard processing delays, emit only E messages when spiking.

- Tagged neurons: Reduced processing delays (modeling threshold adaptation), emit both E and global I messages, and can transition from inhibited to processing upon receiving E messages (disinhibition).

The state machine and message-passing protocol are illustrated in Figure 1.

Figure 1: State machine and message-passing protocol for untagged and tagged neurons, including timing diagrams for E/I message propagation and tagging events.
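The five-state machine described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the durations (`UNTAGGED_DELAY`, `TAGGED_DELAY`, `REFRACTORY_STEPS`, `INHIBITED_STEPS`), the class and method names, and the rule that an I message only affects resting or processing neurons are all assumptions made for the example. Only the state names, the E/I message types, the reduced delay of tagged neurons, and disinhibition of tagged neurons come from the description.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import List


class State(Enum):
    RESTING = auto()
    PROCESSING = auto()
    SPIKING = auto()
    REFRACTORY = auto()
    INHIBITED = auto()


# Hypothetical durations in time steps; the summary gives no numeric values.
UNTAGGED_DELAY = 4
TAGGED_DELAY = 2
REFRACTORY_STEPS = 3
INHIBITED_STEPS = 3


@dataclass
class Neuron:
    tagged: bool = False
    state: State = State.RESTING
    timer: int = 0

    def processing_delay(self) -> int:
        # Tagged neurons process faster (models threshold adaptation).
        return TAGGED_DELAY if self.tagged else UNTAGGED_DELAY

    def receive(self, msg: str) -> None:
        """Handle an incoming 'E' (excitatory) or 'I' (inhibitory) message."""
        if msg == "E":
            can_start = self.state is State.RESTING or (
                self.tagged and self.state is State.INHIBITED  # disinhibition
            )
            if can_start:
                self.state, self.timer = State.PROCESSING, self.processing_delay()
        elif msg == "I":
            # Assumption: inhibition only affects resting or processing neurons.
            if self.state in (State.RESTING, State.PROCESSING):
                self.state, self.timer = State.INHIBITED, INHIBITED_STEPS

    def step(self) -> List[str]:
        """Advance one time step and return the messages emitted during it."""
        if self.state is State.SPIKING:
            self.state, self.timer = State.REFRACTORY, REFRACTORY_STEPS
            return []
        if self.state is State.RESTING:
            return []
        self.timer -= 1
        if self.timer > 0:
            return []
        if self.state is State.PROCESSING:
            self.state = State.SPIKING
            # Tagged neurons emit both E and (global) I; untagged emit only E.
            return ["E", "I"] if self.tagged else ["E"]
        self.state = State.RESTING  # refractory or inhibited period is over
        return []
```

With these hypothetical durations, a tagged neuron spikes two steps after receiving an E message and an untagged neuron after four, which is the timing difference that downstream neurons would detect.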

The algorithm proceeds as follows:

- Initialization: Only the target neuron is tagged.

- Forward Propagation: E messages propagate from the source neuron through the network.

- Tagging via Predictive Coincidence: When a neuron receives an I-E message pair earlier than predicted (due to the reduced delay of tagged neurons), it becomes tagged. This mechanism implements a form of local temporal prediction.

- Backward Propagation of Tagging: The tagging state propagates backward from the target to the source, compressing the temporal gradient along the shortest path.

- Convergence: Upon convergence, only neurons on the shortest path(s) are tagged and remain active.

The formal convergence proof demonstrates that, under idealized timing and connectivity assumptions, all nodes on the shortest path(s) from source to target are tagged in a finite number of iterations, with the number of iterations scaling linearly with path length.
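The backward spread of tagging and the linear iteration count can be illustrated with a deliberately coarse abstraction. The sketch below replaces spike timing with hop counts and assumes that tagging advances exactly one hop per iteration, from tagged neurons to neighbours that lie one hop closer to the source. That one-hop-per-iteration rule, the function names, and the example graph are assumptions for illustration; the breadth-first search is a global shortcut standing in for the timing comparisons that neurons would perform locally, so this is not the spike-level protocol itself.

```python
from collections import deque


def bfs_hops(adj, start):
    """Hop distance from start to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def propagate_tags(adj, source, target):
    """Tag backward from target, one hop per iteration (illustrative assumption)."""
    hops = bfs_hops(adj, source)
    if target not in hops:
        raise ValueError("target is not reachable from source")
    tagged = {target}
    frontier = {target}
    iterations = 0
    while source not in tagged:
        # A neighbour one hop closer to the source stands in for a neuron that
        # would see its I-E pair arrive earlier than predicted.
        frontier = {
            u for v in frontier for u in adj[v] if hops.get(u) == hops[v] - 1
        }
        tagged |= frontier
        iterations += 1
    return tagged, iterations


# Small undirected example: s-a-t is the 2-hop shortest path, s-b-c-t is longer.
adj = {
    "s": ["a", "b"],
    "a": ["s", "t"],
    "b": ["s", "c"],
    "c": ["b", "t"],
    "t": ["a", "c"],
}
tagged, iterations = propagate_tags(adj, "s", "t")
```

In this example the tagged set ends up as the three neurons on the 2-hop path and the loop runs twice, so the iteration count equals the path length in hops under the stated assumption.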

Simulation Results and Empirical Analysis

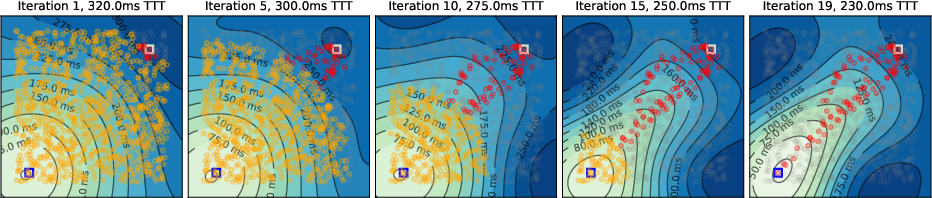

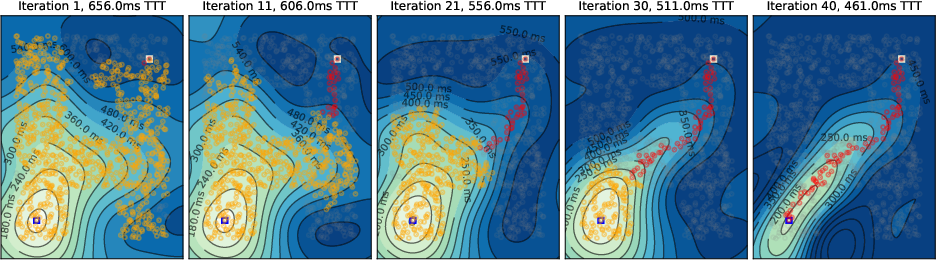

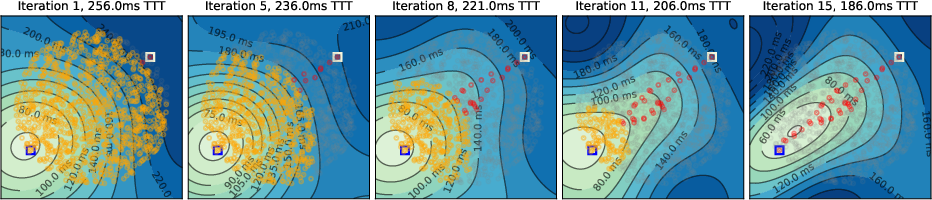

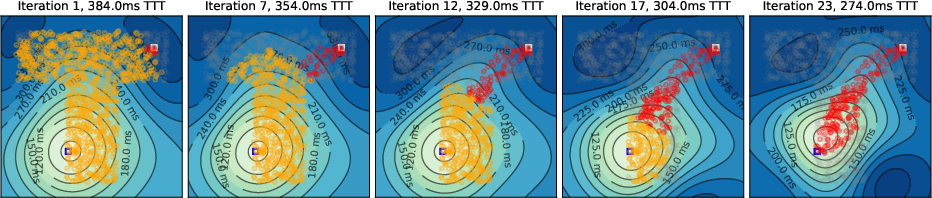

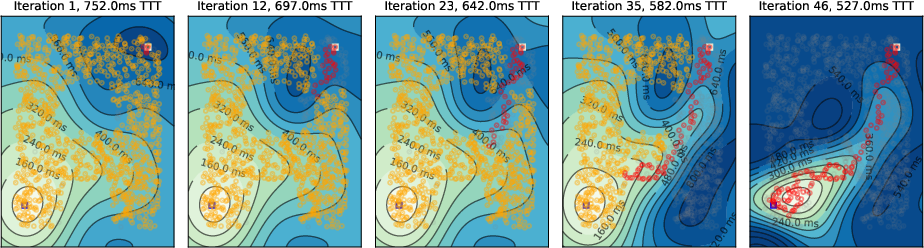

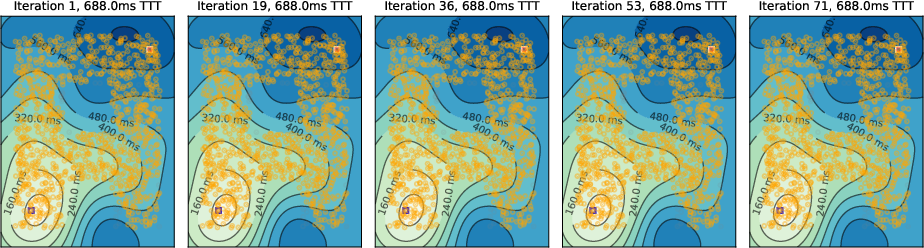

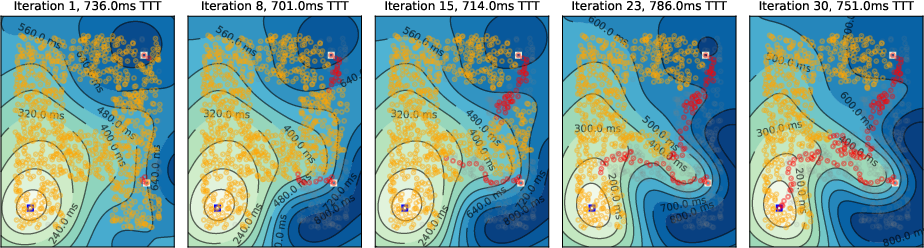

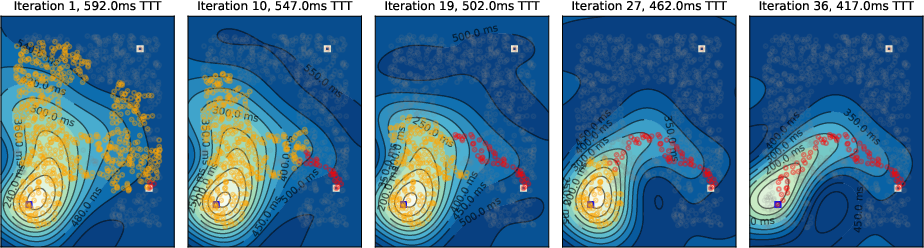

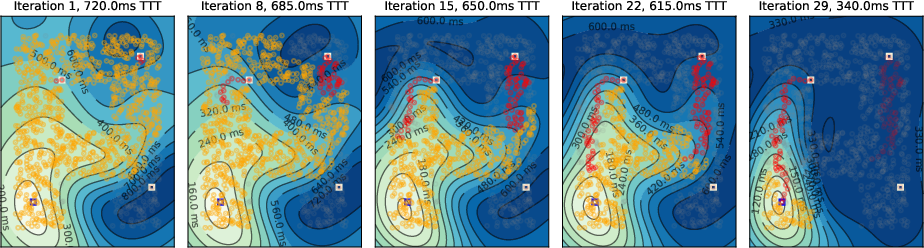

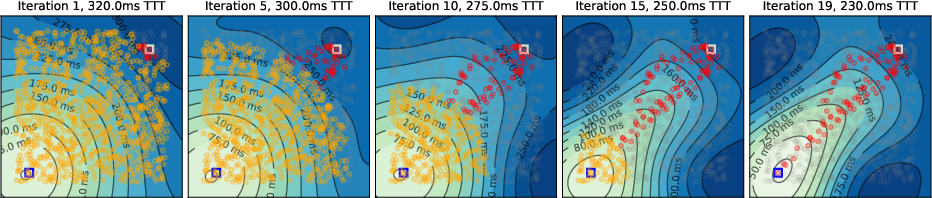

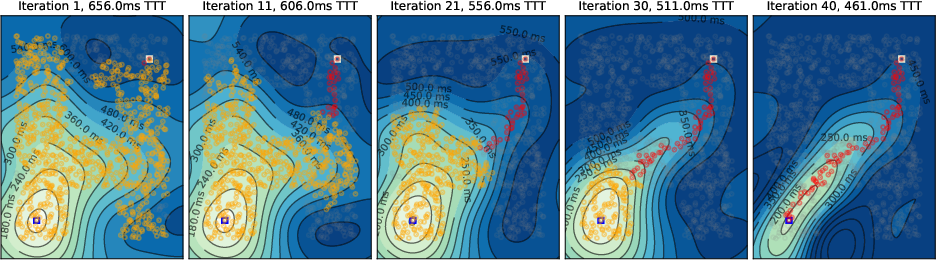

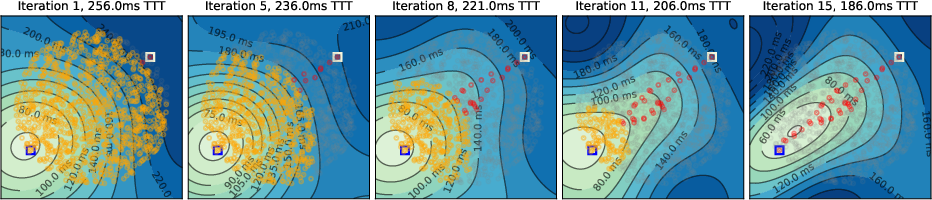

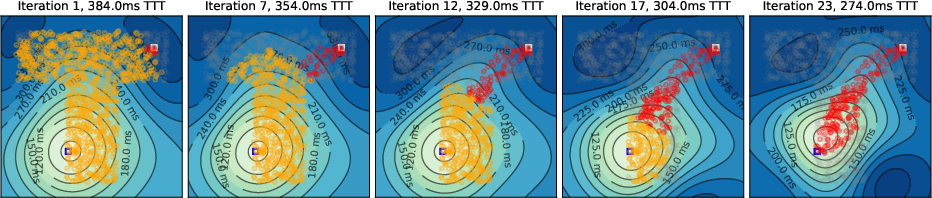

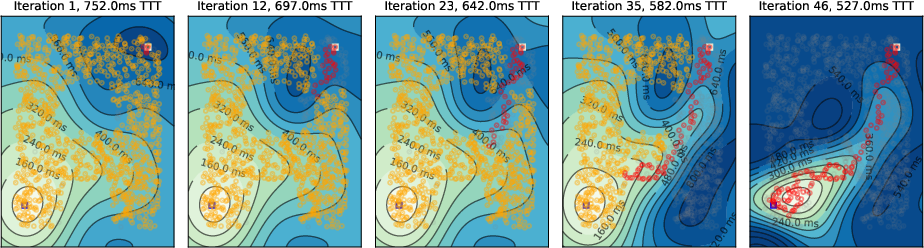

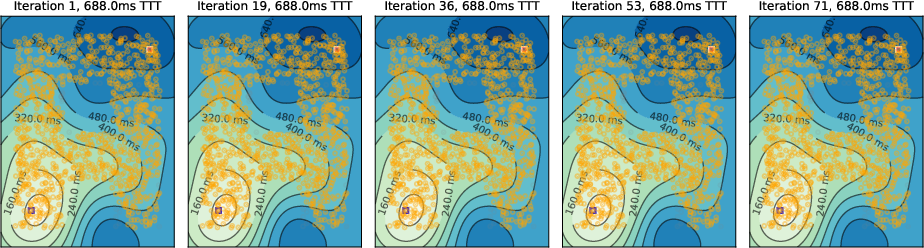

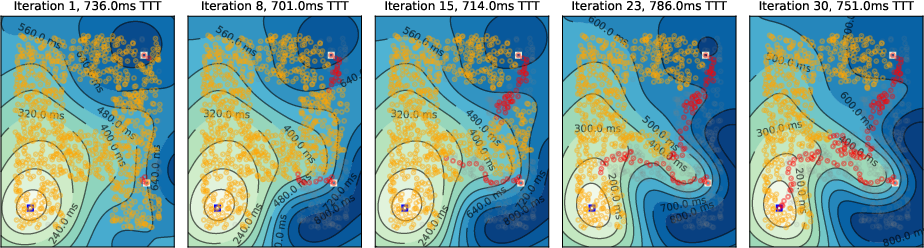

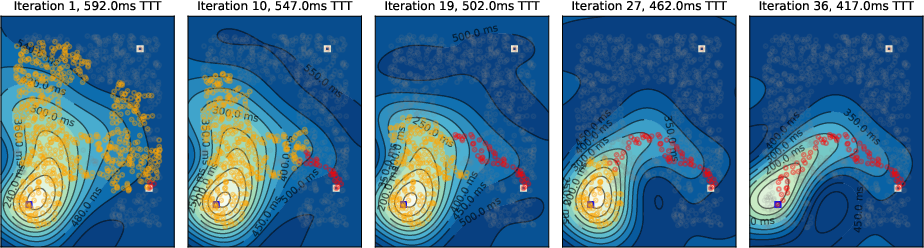

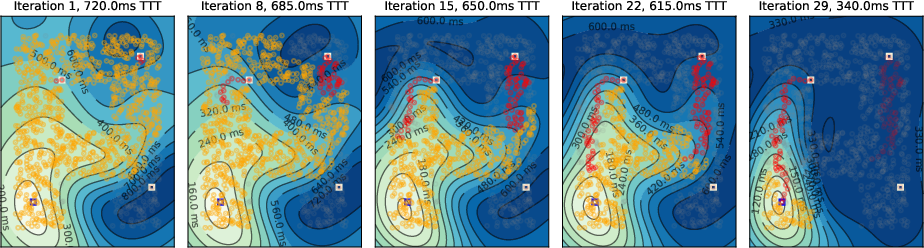

Simulations were conducted on spatially embedded random networks of 1000 neurons with local connectivity, using biologically plausible timing parameters. The evolution of the temporal gradient field and the tagging dynamics was visualized for several environments, including square, A-shaped, circular, and T-shaped mazes.
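A network of this kind could be generated roughly as follows. This is a sketch under stated assumptions: neurons are placed uniformly at random in the unit square and connected whenever they are closer than a fixed radius. The function name, the `radius` and `seed` values, and the distance-threshold rule are hypothetical; the summary only states that the networks are spatially embedded, random, locally connected, and contain 1000 neurons.

```python
import math
import random


def build_local_network(n=1000, radius=0.06, seed=0):
    """Place n neurons uniformly in the unit square; connect pairs closer than radius."""
    rng = random.Random(seed)
    pos = [(rng.random(), rng.random()) for _ in range(n)]
    adj = {i: [] for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            # Local, unweighted, undirected connectivity (assumed rule).
            if math.dist(pos[i], pos[j]) < radius:
                adj[i].append(j)
                adj[j].append(i)
    return pos, adj
```

Maze walls could be modeled by discarding positions that fall inside obstacle regions before connecting neurons; how the mazes were actually constructed is not specified here.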

Key observations include:

- Initial Activity Propagation: Neural activity spreads concentrically from the source, forming circular temporal gradients.

- Temporal Gradient Deformation: As tagged neurons accumulate, the temporal gradient field deforms, biasing activity toward the target and suppressing suboptimal paths via global inhibition.

- Convergence to Shortest Path: Upon convergence, only neurons on the shortest path(s) remain active, matching the solution of Dijkstra's algorithm.
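The deformation of the temporal gradient can be reproduced in a toy setting by computing first-spike times with per-neuron processing delays. The sketch below assumes instantaneous message transmission, so all latency comes from processing, and uses hypothetical delay values of 4 and 2 time units for untagged and tagged neurons. The function name and the example graph are illustrative only.

```python
import heapq


def first_spike_times(adj, source, tagged, untagged_delay=4.0, tagged_delay=2.0):
    """Earliest spike time per neuron when E messages spread from source.

    Assumes instantaneous transmission; all latency is per-neuron processing delay.
    """
    def delay(n):
        return tagged_delay if n in tagged else untagged_delay

    spike = {}
    heap = [(delay(source), source)]
    while heap:
        t, u = heapq.heappop(heap)
        if u in spike:
            continue  # already spiked earlier; later E messages are ignored
        spike[u] = t
        for v in adj[u]:
            if v not in spike:
                heapq.heappush(heap, (t + delay(v), v))
    return spike


# Small undirected example: s-a-t is the 2-hop shortest path, s-b-c-t is longer.
adj = {
    "s": ["a", "b"],
    "a": ["s", "t"],
    "b": ["s", "c"],
    "c": ["b", "t"],
    "t": ["a", "c"],
}
baseline = first_spike_times(adj, "s", tagged=set())
compressed = first_spike_times(adj, "s", tagged={"a", "t"})
```

With these assumed delays, tagging the neurons `a` and `t` moves the target's first spike from time 12 to time 8, while the off-path neuron `b` still spikes at time 8. Activity therefore reaches the target sooner along the tagged route, which is the kind of contour deformation described in the observations.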

Figure 2: Evolution of the temporal gradient field in square and A-maze environments, showing progressive tagging and pruning of suboptimal paths.

Figure 3: Temporal gradient evolution in a circular maze, illustrating the deformation of activity contours as tagged neurons accelerate propagation toward the target.

Figure 4: Temporal gradient evolution in a T-maze, highlighting the emergence of a "band" of shortest-path neurons due to high density and unweighted connectivity.

Additional experiments explored the effects of local versus global inhibition, the absence of inhibition (which leads to algorithmic failure), and multiple-target scenarios:

- Local Inhibition: Sufficient for single-target cases but results in more residual activity and less efficient pruning.

- No Inhibition: The algorithm fails to tag neurons, and shortest paths do not emerge.

- Multiple Targets: The algorithm can simultaneously identify multiple shortest paths, with global inhibition suppressing secondary paths more effectively than local inhibition.

Figure 5: Local inhibition leads to more persistent activity off the shortest path compared to global inhibition.

Figure 6: Absence of inhibition prevents the emergence of shortest paths.

Figure 7: Two-target scenario with local inhibition; both shortest paths remain active.

Figure 8: Two-target scenario with global inhibition; only one shortest path persists.

Figure 9: Three-target scenario with local inhibition; only one path remains after further iterations.

Theoretical and Practical Implications

The algorithm demonstrates that distributed, local spike-timing dynamics are sufficient for solving shortest path problems in SNNs, without requiring global state, explicit backtracing, or synaptic plasticity. The approach is grounded in established neurobiological mechanisms, including spike-timing prediction, threshold adaptation, and competitive inhibition. The tagging mechanism is conceptually related to neuromodulator-mediated disinhibition and transient excitability changes observed in cortical circuits.

Key claims and findings:

- Convergence is formally guaranteed for unweighted, static graphs with idealized timing.

- No synaptic weight changes are required; all computation is performed via transient state changes and message timing.

- Multiple shortest paths are discovered in parallel, matching the behavior of classical algorithms in unweighted graphs.

- Global inhibition is critical for efficient pruning and convergence; local inhibition is less effective, and absence of inhibition leads to failure.
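For checking a simulation against a classical reference in the unweighted case, the set of all neurons lying on at least one shortest path can be computed with two breadth-first searches: a node is on a shortest path exactly when its hop distance from the source plus its hop distance to the target equals the source-to-target distance. The sketch below assumes an undirected adjacency dictionary; the function names and the example graph are illustrative and not taken from the paper.

```python
from collections import deque


def bfs_hops(adj, start):
    """Hop distance from start to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def shortest_path_nodes(adj, source, target):
    """All nodes on at least one shortest source-target path (undirected graph)."""
    d_src = bfs_hops(adj, source)
    d_tgt = bfs_hops(adj, target)
    if target not in d_src:
        return set()
    total = d_src[target]
    return {v for v in d_src if v in d_tgt and d_src[v] + d_tgt[v] == total}


# Two equally short routes (s-a-t and s-b-t) plus a dead end d attached to a.
adj = {
    "s": ["a", "b"],
    "a": ["s", "t", "d"],
    "b": ["s", "t"],
    "d": ["a"],
    "t": ["a", "b"],
}
on_path = shortest_path_nodes(adj, "s", "t")
```

Here both routes are returned and the dead-end node is excluded. In a dense, unweighted network many neurons can satisfy the distance condition at once, which is consistent with a band of shortest-path neurons rather than a single thin line.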

Limitations:

- Linear scaling with path length: The number of iterations required is proportional to the shortest path length, which may be suboptimal for long-range planning.

- Sensitivity to timing precision: The algorithm relies on precise temporal windows; biological noise or heterogeneity could degrade performance.

- Cost of global inhibition: Global inhibition may be metabolically costly in large networks; alternative scalable inhibition mechanisms are needed.

- Static topology assumption: The algorithm does not handle dynamic changes in connectivity or obstacles during computation.

Implications for Neuroscience, AI, and Neuromorphic Systems

The results provide a plausible mechanistic account for rapid, flexible path planning and sequence retrieval in biological systems, consistent with observations of hippocampal replay and temporal compression during navigation and memory tasks. The model makes several testable predictions for neurophysiological and behavioral experiments, including:

- Progressive reduction in response latency for neurons closer to the goal during repeated navigation.

- Distinct temporal signatures for neurons on optimal versus suboptimal paths.

- Impaired path optimization, but preserved basic path finding, when inhibitory signaling is disrupted.

For artificial intelligence and neuromorphic engineering, the algorithm offers a template for implementing distributed search and planning in hardware-constrained environments, where global state and backtracing are infeasible. The approach could be extended to weighted graphs, hierarchical representations (e.g., Transition Scale-Space), and more complex temporal coding schemes.

Future Directions

Potential avenues for further research include:

- Integration with hierarchical, multi-scale representations to accelerate convergence and improve scalability.

- Robustness to noise and heterogeneity: Incorporating mechanisms for temporal averaging or probabilistic tagging.

- Extension to dynamic and weighted graphs: Adapting the timing protocol to handle variable edge costs and changing topologies.

- Implementation in neuromorphic hardware: Evaluating energy efficiency and real-time performance.

- Generalization to other graph algorithms: Exploring whether similar spike-timing-based protocols can implement broader classes of distributed computations.

Conclusion

This work establishes that predictive spike-timing and local temporal coding are sufficient for distributed shortest path computation in spiking neural networks, without requiring global state, explicit backtracing, or synaptic plasticity. The approach is both biologically plausible and formally principled, with clear implications for neuroscience, AI, and neuromorphic systems. The paradigm of local temporal prediction may serve as a foundation for a new class of distributed algorithms that bridge biological realism and computational efficiency.