- The paper demonstrates that adaptive fusion of camera and radar data in CaR1 achieves competitive BEV segmentation performance with an IoU of 57.6 on nuScenes.

- It introduces grid-wise radar encoding and multi-scale deformable attention to transform multi-view inputs into a robust BEV feature map.

- The study shows how combining camera and radar can offset the limitations of each sensor alone, improving vehicle segmentation for autonomous driving.

CaR1: A Multi-Modal Baseline for BEV Vehicle Segmentation via Camera-Radar Fusion

Introduction

The paper introduces CaR1, a multi-modal fusion architecture for Bird's-Eye View (BEV) vehicle segmentation that combines camera and radar data, offering an alternative to LiDAR-based systems in autonomous driving. Building on BEVFusion, CaR1 adds a grid-wise radar encoding and an adaptive fusion mechanism that dynamically balances the contribution of each sensor. Together, these address the view disparity between perspective camera images and radar points measured in 3D space, as well as the heterogeneous nature of the two modalities. On the nuScenes dataset, CaR1 reaches an Intersection over Union (IoU) of 57.6, competitive with state-of-the-art methods.

Figure 1: Our proposed method, CaR1, uses multi-view camera images and radar point clouds to predict a BEV vehicle segmentation map with color-coded predictions.

Camera-based Methods

Camera-only architectures struggle mainly with depth estimation, because a camera does not measure depth directly. To bridge the view disparity between perspective images and the BEV plane, these methods rely on geometric transformations. Forward-projection methods predict per-pixel depth and lift image features into 3D before placing them on the BEV grid, while backward-projection methods start from 3D or BEV locations, project them into the images, and sample features there. The two families differ in how they establish feature correspondences, which affects both spatial resolution and computational cost.
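The core step of backward projection can be sketched with a pinhole camera model: a 3D point is moved into the camera frame with an extrinsic transform and then mapped to pixels with the intrinsic matrix. This is a minimal sketch, assuming a 4x4 ego-to-camera matrix `T_cam_from_ego`, a 3x3 intrinsic matrix `K`, and a camera whose z axis points forward; the function name `project_to_image` and these conventions are illustrative, not taken from the paper.

```python
from typing import Optional, Sequence, Tuple

Matrix = Sequence[Sequence[float]]


def project_to_image(
    point_ego: Tuple[float, float, float],
    T_cam_from_ego: Matrix,
    K: Matrix,
    min_depth: float = 0.1,
) -> Optional[Tuple[float, float]]:
    """Project a 3D ego-frame point to pixel coordinates (u, v), or None if not visible."""
    # Homogeneous coordinates so the 4x4 extrinsic applies rotation and translation together.
    p = (point_ego[0], point_ego[1], point_ego[2], 1.0)
    # Ego frame -> camera frame (only the top three rows of the rigid transform are needed).
    cam = [sum(T_cam_from_ego[r][c] * p[c] for c in range(4)) for r in range(3)]
    if cam[2] < min_depth:
        # Point lies behind (or too close to) the camera, so this view cannot see it.
        return None
    # Camera frame -> image plane: apply the intrinsics, then divide by depth.
    pix = [sum(K[r][c] * cam[c] for c in range(3)) for r in range(3)]
    return pix[0] / pix[2], pix[1] / pix[2]


# Usage: a camera frame aligned with the ego frame (hypothetical calibration values).
identity = [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]
K = [[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]]
print(project_to_image((1.0, 0.5, 10.0), identity, K))  # (370.0, 265.0)
print(project_to_image((0.0, 0.0, -5.0), identity, K))  # None
```

A backward-projection method repeats this for every voxel or BEV cell and every camera, then reads image features at the returned (u, v); forward methods invert the direction and need a depth estimate per pixel instead.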

Radar-based Methods

Radar perception has historically lagged behind, limited by the sparse measurements of conventional radar sensors and by a scarcity of datasets. More recently, radar-specific frameworks such as RadarNext have emerged, adapting LiDAR-based models to the structure of radar data. These advances could benefit BEV segmentation, a task left largely unexplored by early benchmarks.

Fusion Methods

Fusion strategies have been pivotal because radar's range and velocity measurements help resolve the depth ambiguity of camera data. Methods such as SimpleBEV and CRN illustrate the variety of approaches, from two-stage mechanisms to attention-based integration in BEV space. Collectively, these works improve BEV segmentation by refining depth predictions, guiding the lifting of image features into BEV, and strengthening spatial awareness.

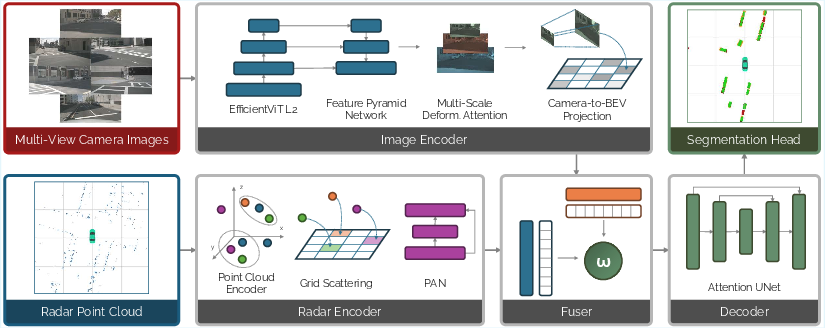

Figure 2: Main diagram of CaR1, illustrating the integration of camera and radar data into a unified BEV feature space.

Methodology

CaR1 uses an architecture that fuses multi-modal data into a unified BEV representation for vehicle segmentation. Its pipeline has five stages: a camera branch, a radar branch, adaptive feature fusion, a BEV decoder, and a segmentation head.

In the camera branch, CaR1 uses EfficientViT-L2 to extract features from the multi-camera images and an FPN to combine them across scales. Multi-Scale Deformable Attention then refines these feature maps into BEV-aligned representations. For the image-to-BEV transformation, discrete voxels are projected into the image feature maps, features are sampled at the projected locations with bilinear interpolation, and the results are aggregated into the BEV grid.
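Projected voxel centers usually land between pixels, which is why features are read with bilinear interpolation. The sketch below samples a single-channel feature map at a fractional location; the function name `bilinear_sample` and the zero padding at the borders are assumptions for illustration. Real implementations do this on GPU tensors, for example with PyTorch's `torch.nn.functional.grid_sample`.

```python
import math
from typing import Sequence


def bilinear_sample(feature: Sequence[Sequence[float]], u: float, v: float) -> float:
    """Sample a 2D feature map (rows indexed by v, columns by u) at a fractional pixel."""
    height, width = len(feature), len(feature[0])
    u0, v0 = math.floor(u), math.floor(v)
    du, dv = u - u0, v - v0  # fractional offsets inside the pixel cell

    def at(row: int, col: int) -> float:
        # Zero padding: neighbours outside the map contribute nothing.
        if 0 <= row < height and 0 <= col < width:
            return feature[row][col]
        return 0.0

    # Interpolate horizontally along the two neighbouring rows, then vertically.
    top = (1 - du) * at(v0, u0) + du * at(v0, u0 + 1)
    bottom = (1 - du) * at(v0 + 1, u0) + du * at(v0 + 1, u0 + 1)
    return (1 - dv) * top + dv * bottom


feature = [[0.0, 1.0], [2.0, 3.0]]
print(bilinear_sample(feature, 0.5, 0.5))  # 1.5, the mean of the four neighbours
```

For a multi-camera rig, the same voxel is typically sampled in every camera where it projects in front of the image plane, and the per-camera samples are then combined before entering BEV.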

In the radar branch, Point Transformer V3 serves as the encoder. It serializes the radar point cloud (ordering points along space-filling curves) and applies attention over the serialized sequence, producing per-point features that are then encoded grid-wise into a structured BEV representation. Aggregating radar features at the grid level adds spatial context to the BEV feature map.
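The grid-wise step, turning a variable number of per-point radar features into a dense BEV grid, can be sketched as a scatter with per-cell pooling. This is a hedged illustration: the grid extent, the cell size, the choice of max-pooling, and the name `scatter_to_bev` are assumptions rather than CaR1's published configuration, and in the real pipeline the per-point features would come from the Point Transformer V3 encoder.

```python
from typing import List, Sequence, Tuple


def scatter_to_bev(
    points_xy: Sequence[Tuple[float, float]],
    features: Sequence[Sequence[float]],
    channels: int,
    x_range: Tuple[float, float],
    y_range: Tuple[float, float],
    cell_size: float,
) -> List[List[List[float]]]:
    """Max-pool per-point features into a dense BEV grid indexed [row][col][channel]."""
    cols = int(round((x_range[1] - x_range[0]) / cell_size))
    rows = int(round((y_range[1] - y_range[0]) / cell_size))
    grid = [[[0.0] * channels for _ in range(cols)] for _ in range(rows)]
    occupied = [[False] * cols for _ in range(rows)]

    for (x, y), feat in zip(points_xy, features):
        # Floor division maps coordinates to cell indices; points left of/below the grid go negative.
        col = int((x - x_range[0]) // cell_size)
        row = int((y - y_range[0]) // cell_size)
        if not (0 <= row < rows and 0 <= col < cols):
            continue  # point falls outside the BEV extent
        cell = grid[row][col]
        if not occupied[row][col]:
            # First point in this cell: copy its features so negative values survive pooling.
            cell[:] = feat
            occupied[row][col] = True
        else:
            # Further points in the same cell: element-wise max.
            for c in range(channels):
                cell[c] = max(cell[c], feat[c])
    # Cells without any radar point keep all-zero features.
    return grid


points = [(0.5, 0.5), (1.5, 1.0), (3.0, 0.5), (10.0, 10.0)]
feats = [[1.0, -2.0], [3.0, -5.0], [7.0, 7.0], [9.0, 9.0]]
bev = scatter_to_bev(points, feats, 2, (0.0, 4.0), (0.0, 4.0), 2.0)
print(bev[0][0], bev[0][1])  # [3.0, -2.0] [7.0, 7.0]
```

The first two points share a cell and are pooled together, while the last point lies outside the 4 m x 4 m extent and is dropped. The result is a dense tensor that can be fused cell by cell with the camera BEV features.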

Adaptive Feature Fusion

Central to CaR1 is its adaptive fusion strategy, which dynamically adjusts each sensor's contribution by assigning modality-specific weights through attention-based calibration. Squeeze-and-Excitation operations merge the camera and radar features into a single, coherent BEV feature map, improving segmentation performance.
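Squeeze-and-Excitation reweights channels in three steps: global average pooling ("squeeze") gives one descriptor per channel, a two-layer bottleneck with ReLU and a sigmoid ("excitation") turns these into gates between 0 and 1, and each channel is scaled by its gate. The sketch applies this to a stack of camera and radar BEV channels; stacking the modalities as channels, omitting biases, the weight shapes, and the name `squeeze_excite` are assumptions for illustration, and the weights would be learned in practice.

```python
import math
from typing import List, Sequence

Grid = Sequence[Sequence[float]]


def _sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def squeeze_excite(
    bev: Sequence[Grid],             # [channels][rows][cols], e.g. camera + radar channels
    w1: Sequence[Sequence[float]],   # [hidden][channels], reduction layer
    w2: Sequence[Sequence[float]],   # [channels][hidden], expansion layer
) -> List[List[List[float]]]:
    """Squeeze-and-Excitation style channel reweighting (biases omitted)."""
    # Squeeze: global average pooling gives one descriptor per channel.
    squeezed = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in bev]
    # Excitation: FC -> ReLU -> FC -> sigmoid yields one gate in (0, 1) per channel.
    hidden = [max(0.0, sum(w * s for w, s in zip(row, squeezed))) for row in w1]
    gates = [_sigmoid(sum(w * h for w, h in zip(row, hidden))) for row in w2]
    # Scale: every cell of channel c is multiplied by gates[c].
    return [[[g * x for x in row] for row in ch] for g, ch in zip(gates, bev)]


camera = [[1.0, 1.0]]  # one camera channel on a 1x2 BEV grid (toy values)
radar = [[2.0, 2.0]]   # one radar channel on the same grid
out = squeeze_excite([camera, radar], w1=[[1.0, 0.0]], w2=[[100.0], [-100.0]])
print(out)  # camera channel kept (gate near 1), radar channel suppressed (gate near 0)
```

Because the gates are computed from the input itself, the relative weighting of camera and radar channels can change from scene to scene, which is one way to realize the "adaptive" behavior described above.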

BEV Decoder

An Attention U-Net refines the fused BEV features. It extends the conventional U-Net with attention-gated skip connections, which suppress irrelevant regions of the encoder features before they are merged into the decoder, improving feature resolution and segmentation precision. This selective flow of features helps localize vehicles more accurately.
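In the standard Attention U-Net formulation, each skip connection passes through an additive attention gate: at every location, the encoder feature x and the decoder gating feature g give a coefficient alpha = sigmoid(psi · ReLU(W_x x + W_g g)), and the decoder receives alpha * x. A minimal sketch, assuming g has already been resampled to the resolution of x and omitting the bias terms; the names `attention_gate`, `w_x`, `w_g`, and `psi` are hypothetical.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]


def _sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def _matvec(m: Sequence[Vec], v: Vec) -> List[float]:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def attention_gate(
    skip: Sequence[Sequence[Vec]],    # encoder skip features x, [rows][cols][Cx]
    gating: Sequence[Sequence[Vec]],  # decoder gating features g, [rows][cols][Cg]
    w_x: Sequence[Vec],               # [Cint][Cx]
    w_g: Sequence[Vec],               # [Cint][Cg]
    psi: Vec,                         # [Cint]
) -> List[List[List[float]]]:
    """Additive attention gate: returns alpha * x with one alpha in (0, 1) per location."""
    out = []
    for skip_row, gate_row in zip(skip, gating):
        out_row = []
        for x, g in zip(skip_row, gate_row):
            # Project x and g into a shared space, add them, and apply ReLU.
            inter = [max(0.0, a + b) for a, b in zip(_matvec(w_x, x), _matvec(w_g, g))]
            # Collapse to a scalar score; the sigmoid turns it into the coefficient alpha.
            alpha = _sigmoid(sum(p * h for p, h in zip(psi, inter)))
            out_row.append([alpha * v for v in x])
        out.append(out_row)
    return out


skip = [[[2.0], [2.0]]]       # 1x2 grid, one channel
gating = [[[50.0], [-50.0]]]  # decoder context favours the left cell
print(attention_gate(skip, gating, w_x=[[0.0]], w_g=[[1.0]], psi=[1.0]))
# left cell passes almost unchanged (alpha near 1), right cell is halved (alpha = 0.5)
```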

Segmentation Head and Loss Functions

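CaR1's segmentation head maps the decoded features to per-cell vehicle predictions in BEV coordinates using two convolutional blocks. Training combines binary cross-entropy (BCE) and Dice losses: BCE supervises each cell independently, while Dice rewards region-level overlap. The Dice term matters in vehicle segmentation because vehicle cells cover only a small fraction of the BEV grid.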
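The combined objective can be sketched on a flattened BEV map of logits and binary targets. This is a hedged example: equal weighting of the two terms (`dice_weight=1.0`), the smoothing constant `eps`, and the name `bce_dice_loss` are assumptions, not values reported for CaR1.

```python
import math
from typing import Sequence, Tuple


def _sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def bce_dice_loss(
    logits: Sequence[float],
    targets: Sequence[float],
    dice_weight: float = 1.0,
    eps: float = 1e-6,
) -> Tuple[float, float, float]:
    """Return (total, bce, dice) for a flattened BEV map of logits and 0/1 targets."""
    n = len(targets)
    # Per-cell BCE from logits in the stable form max(z, 0) - z*t + log(1 + exp(-|z|)), averaged.
    bce = sum(
        max(z, 0.0) - z * t + math.log1p(math.exp(-abs(z)))
        for z, t in zip(logits, targets)
    ) / n
    # Soft Dice over the whole map: 1 - (2|P ∩ T| + eps) / (|P| + |T| + eps).
    probs = [_sigmoid(z) for z in logits]
    intersection = sum(p * t for p, t in zip(probs, targets))
    dice = 1.0 - (2.0 * intersection + eps) / (sum(probs) + sum(targets) + eps)
    return bce + dice_weight * dice, bce, dice


total, bce, dice = bce_dice_loss([0.0, 0.0], [1.0, 0.0])
print(bce, dice)  # bce equals log(2); dice is close to 0.5 for these uninformative logits
```

BCE alone can be dominated by the many easy background cells; the Dice term is normalized by the predicted and true vehicle area, so it keeps the small vehicle regions in focus.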

Experimental Evaluation

Dataset and Metrics

The nuScenes dataset serves as the benchmark for evaluating CaR1; its 3D bounding-box annotations provide the ground truth from which BEV vehicle masks are derived, and performance is measured with IoU. Quantitative comparisons show that CaR1 outperforms camera-only methods in IoU, highlighting the benefit of integrating radar.
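For a binary vehicle mask, IoU is the number of cells predicted as vehicle and labeled as vehicle, divided by the number of cells that are predicted or labeled as vehicle. A hedged sketch; the 0.5 threshold, accumulating intersection and union over the whole evaluation set before dividing (rather than averaging per-sample scores), and the name `dataset_iou` are assumptions about the evaluation protocol, not details stated here.

```python
from typing import Iterable, Sequence, Tuple


def dataset_iou(
    samples: Iterable[Tuple[Sequence[float], Sequence[int]]],
    threshold: float = 0.5,
) -> float:
    """Accumulate intersection and union over all samples, then divide once.

    Each sample is (predicted vehicle probabilities, 0/1 ground-truth mask), both flattened.
    """
    intersection = 0
    union = 0
    for probs, mask in samples:
        for p, t in zip(probs, mask):
            pred = p >= threshold  # binarize the prediction
            if pred and t:
                intersection += 1
            if pred or t:
                union += 1
    # Convention (assumed): with no vehicles predicted or labeled anywhere, report 0.0.
    return intersection / union if union else 0.0


samples = [([0.9, 0.2, 0.7], [1, 0, 0]), ([0.1, 0.6], [1, 1])]
print(dataset_iou(samples))  # 0.5
```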

Implementation Details

CaR1 is trained on NVIDIA A100 GPUs with a learning-rate schedule and weight decay. The chosen batch sizes and data augmentation further improve CaR1's robustness across diverse driving scenarios.

Results

The comparison shows that CaR1 is competitive, surpassing models such as SimpleBEV in IoU, which supports the benefit of the adaptive fusion mechanism. Ablation studies quantify the contribution of each component, showing incremental IoU gains as targeted architectural changes are added.

Conclusion

CaR1 offers a multi-modal fusion approach to BEV vehicle segmentation that combines the rich visual information of cameras with the resilience of radar. Its grid-wise radar encoding and adaptive fusion strategy are key to handling complex driving scenes, and they point to promising directions for future camera-radar perception in autonomous driving.