- The paper introduces OS-SCL, a novel one-stage supervised contrastive learning framework that mitigates false alarms in anomalous sound detection.

- It employs a feature perturbation head in the embedding space, applied together with mixup, to distinguish similar samples that come from different machine IDs.

- Experimental results on the DCASE 2020 Challenge show high performance with an AUC of 95.71% and improved efficiency for industrial deployment.

Noise Supervised Contrastive Learning and Feature-Perturbed for Anomalous Sound Detection

The paper "Noise Supervised Contrastive Learning and Feature-Perturbed for Anomalous Sound Detection" (2509.13853) introduces a training technique called One-Stage Supervised Contrastive Learning (OS-SCL) to address false alarm issues in anomalous sound detection (ASD) systems. This novel approach improves on self-supervised classification models that struggle with frequent false alarms due to similar samples from different machine IDs.

Introduction

Anomalous sound detection has become a critical part of industrial machine monitoring. It is difficult primarily because anomalous audio samples are rarely available in real-world settings, so ASD systems must separate normal from anomalous sounds without seeing any anomalous examples during training. Historically, ASD systems have fallen into two groups: methods based on autoencoder reconstruction and machine ID self-supervised classification models. The paper highlights a significant limitation of the latter approach: clear decision boundaries are hard to establish when normal samples from different machine IDs are highly similar.

In response, OS-SCL integrates feature perturbations in the embedding space alongside a one-stage noisy supervised contrastive learning technique, which optimizes the model to discern subtle differences between samples of different machine IDs.

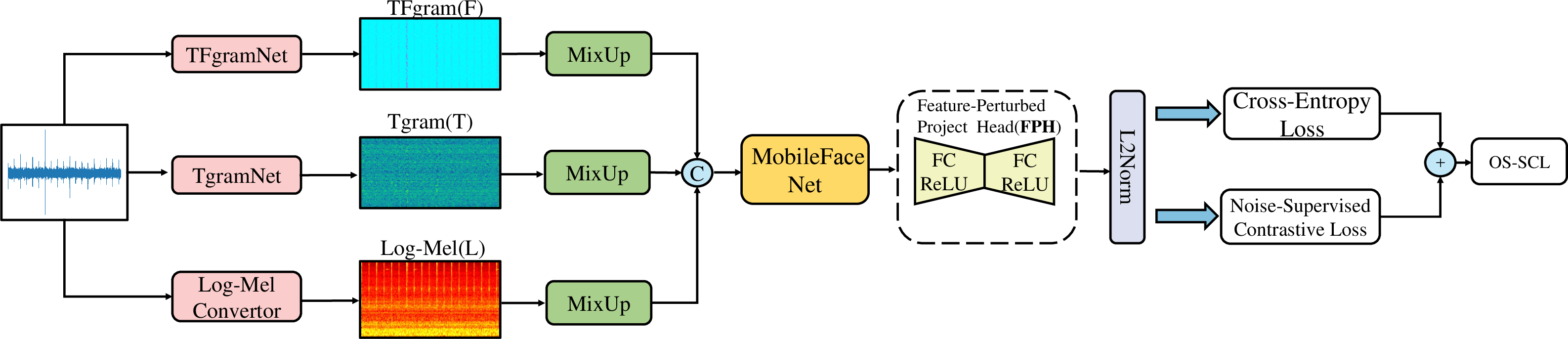

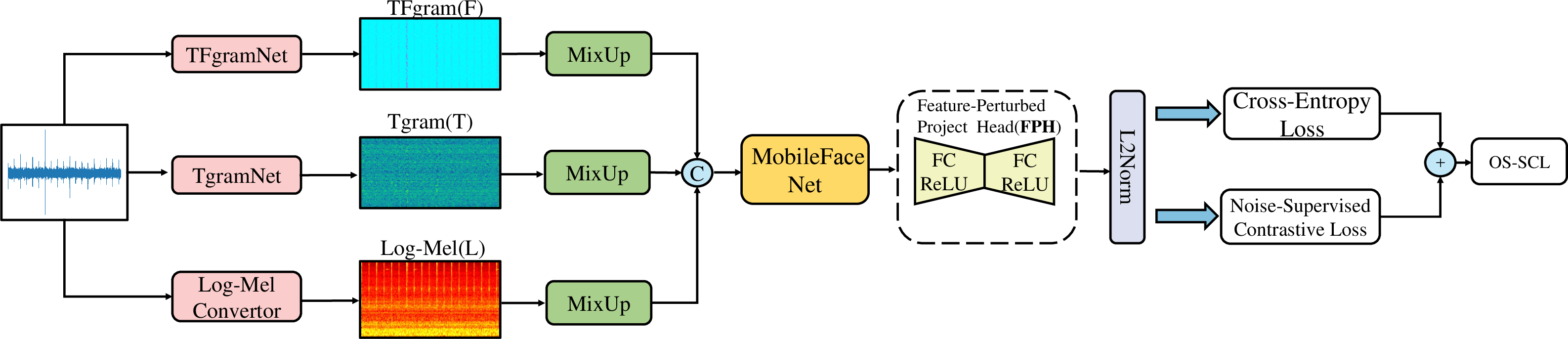

Figure 1: Framework of One-Stage Noise-Supervised Contrastive Learning with Embedding Space Feature Perturbation.

Proposed Method

Feature Mapping Perturbation

The method introduces a feature perturbation head (FPH) within the embedding space after applying mixup. Mixup blends pairs of samples within a batch using a varying mixing coefficient; depending on that coefficient, the resulting feature lies close to the original sample or to its permuted partner. The Feature Perturbation Head ensures that features are perturbed sufficiently, giving noise-supervised learning a mechanism to better distinguish similar samples from different machine IDs.
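The mixup step described above can be illustrated with a minimal, dependency-free sketch. It only shows the blending of embedding vectors within a batch; it is not the paper's Feature Perturbation Head. The function name `mixup_embeddings`, the Beta(0.5, 0.5) prior on the coefficient, and the toy batch are all assumptions made for illustration.

```python
import random

def mixup_embeddings(embeddings, labels, lam, perm):
    """Blend each embedding with a partner chosen from the same batch.

    embeddings: list of equal-length float lists (one vector per sample)
    labels:     machine-ID labels, same length as embeddings
    lam:        mixing coefficient in [0, 1]
    perm:       permutation of batch indices that picks each sample's partner
    """
    mixed = []
    for i, j in enumerate(perm):
        a, b = embeddings[i], embeddings[j]
        mixed.append([lam * x + (1.0 - lam) * y for x, y in zip(a, b)])
    # Return both label sets so a loss can weight them by lam and 1 - lam.
    partner_labels = [labels[j] for j in perm]
    return mixed, list(labels), partner_labels

# Toy batch (hypothetical values): three 2-D embeddings with machine IDs 0, 1, 2.
rng = random.Random(0)
batch = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
ids = [0, 1, 2]
perm = list(range(len(batch)))
rng.shuffle(perm)
lam = rng.betavariate(0.5, 0.5)  # assumed prior; the paper's choice may differ
mixed, labels_a, labels_b = mixup_embeddings(batch, ids, lam, perm)
```

When `lam` is near 0 or 1 the mixed vector is almost identical to one of its two sources, which is the situation the text describes as yielding features akin to the original or permuted samples.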

Decision Boundary Learning

OS-SCL employs a one-stage training strategy that performs feature optimization concurrently with classification through supervised contrastive learning. Unlike traditional two-stage methods, this approach maintains the effectiveness of contrastive learning throughout the process, addressing the issue of similar samples by introducing beneficial noise during training. The supervised contrastive loss is designed to enhance the cohesion of similar samples while pushing apart samples with different machine IDs.
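To make the pull-together and push-apart behaviour concrete, here is a minimal sketch of a generic supervised contrastive loss in plain Python. It follows the commonly used formulation (same-label samples are positives, all other batch members form the denominator) and is not claimed to match the paper's exact loss; the function names and the temperature of 0.1 are assumptions.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]

def supcon_loss(embeddings, labels, temperature=0.1):
    """Generic supervised contrastive loss over one batch.

    Samples sharing a machine-ID label are positives for each other;
    every other sample in the batch appears in the softmax denominator.
    Anchors without any positive are skipped.
    """
    z = [l2_normalize(v) for v in embeddings]
    n = len(z)
    total, anchors = 0.0, 0
    for i in range(n):
        others = [k for k in range(n) if k != i]
        positives = [k for k in others if labels[k] == labels[i]]
        if not positives:
            continue
        sims = {k: sum(a * b for a, b in zip(z[i], z[k])) / temperature
                for k in others}
        # Numerically stable log-sum-exp over all other samples.
        m = max(sims.values())
        log_denom = m + math.log(sum(math.exp(s - m) for s in sims.values()))
        total += -sum(sims[p] - log_denom for p in positives) / len(positives)
        anchors += 1
    return total / anchors if anchors else 0.0

# Toy embeddings (hypothetical): two tight clusters.
emb = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
loss_matching = supcon_loss(emb, [0, 0, 1, 1])    # labels agree with the clusters
loss_mismatched = supcon_loss(emb, [0, 1, 0, 1])  # labels cut across the clusters
```

The loss is small when labels agree with the geometric clusters and large when they cut across them, which is the gradient signal that pushes apart samples with different machine IDs.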

Feature Classification

The Noisy-ArcMix loss function dynamically adjusts the classification sensitivity by varying the weight of mixed labels, enabling better detection of anomalous samples. This dynamic adjustment counters the limitations of traditional ArcFace, which can struggle to maintain significant angular differences between normal and anomalous samples.
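As a rough illustration of the ingredients named above, the sketch below combines a standard ArcFace-style additive angular margin with mixup label weighting. It is a simplified ArcMix-style stand-in, not the paper's Noisy-ArcMix formulation, whose exact weighting is not given here. The function names, the margin of 0.5, and the scale of 30 are assumptions.

```python
import math

def arcface_loss(cosines, target, margin=0.5, scale=30.0):
    """Cross-entropy over scaled cosine logits, with an additive angular
    margin applied to the target class only.

    cosines: cosine similarity between the embedding and each class centre.
    Note: this sketch does not guard the case theta + margin > pi.
    """
    logits = []
    for k, c in enumerate(cosines):
        c = max(-1.0, min(1.0, c))  # keep acos in its domain
        if k == target:
            c = math.cos(math.acos(c) + margin)
        logits.append(scale * c)
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_z - logits[target]

def mixed_arcface_loss(cosines, target_a, target_b, lam, margin=0.5, scale=30.0):
    """Weight the margin loss of both mixup labels by lam and 1 - lam."""
    return (lam * arcface_loss(cosines, target_a, margin, scale)
            + (1.0 - lam) * arcface_loss(cosines, target_b, margin, scale))

# Hypothetical cosines of one mixed embedding against three machine-ID centres.
cos_to_centres = [0.9, 0.1, -0.2]
loss = mixed_arcface_loss(cos_to_centres, target_a=0, target_b=1, lam=0.7)
```

Varying `lam` changes how strongly each of the two machine IDs contributes to the loss, which is the kind of label-weight adjustment the text refers to.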

TFgramNet Architecture

TFgramNet, a modification of the PANN framework, includes a global max pooling layer designed to capture features comprehensively across time and frequency domains. The architecture extracts the TFgram features, facilitating effective identification of anomalies.
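For readers unfamiliar with the operation, global max pooling reduces each channel of a time-frequency feature map to its single largest activation. The sketch below shows only that generic operation on nested lists; the tensor layout `[channels][time][freq]` and the function name are assumptions, and TFgramNet's actual layer arrangement is not reproduced here.

```python
def global_max_pool(feature_map):
    """Reduce a [channels][time][freq] nested list to one maximum per channel."""
    return [max(max(frame) for frame in channel) for channel in feature_map]

# Hypothetical feature map: 2 channels, 2 time frames, 3 frequency bins.
fmap = [
    [[0.1, 0.4, 0.2], [0.3, 0.0, 0.9]],
    [[0.5, 0.5, 0.1], [0.2, 0.7, 0.6]],
]
pooled = global_max_pool(fmap)
```

Because the maximum is taken over both axes, the pooled value responds to a strong activation wherever it occurs in time or frequency.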

Experimental Results

On DCASE 2020 Challenge Task 2, the OS-SCL framework achieves an AUC of 95.71% and a pAUC of 90.23% with TFgram features. The study also reports that machine anomalous sound detection does not inherently rely on high-frequency components, which runs counter to the common belief that these components are essential for anomaly detection.
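The two reported metrics can be computed from anomaly scores by pairwise counting, as in the following minimal sketch. It assumes the pairwise definition of pAUC (restricting the comparison to the highest-scoring fraction `p` of normal samples, with `p = 0.1`); official evaluation scripts may handle ties or normalization differently. The function names and toy scores are hypothetical.

```python
import math

def auc(normal_scores, anomalous_scores):
    """Fraction of (anomalous, normal) pairs where the anomalous score is higher."""
    hits = sum(1 for a in anomalous_scores for n in normal_scores if a > n)
    return hits / (len(normal_scores) * len(anomalous_scores))

def pauc(normal_scores, anomalous_scores, p=0.1):
    """AUC restricted to the floor(p * N) highest-scoring normal samples,
    i.e. the low false-positive-rate region."""
    k = math.floor(p * len(normal_scores))
    if k == 0:
        raise ValueError("need at least 1/p normal samples")
    hardest_normals = sorted(normal_scores, reverse=True)[:k]
    return auc(hardest_normals, anomalous_scores)

# Toy anomaly scores (hypothetical): higher means more anomalous.
normal = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
anomalous = [8.5, 9.5, 5]
full_auc = auc(normal, anomalous)      # 24 of 30 pairs ranked correctly
partial_auc = pauc(normal, anomalous)  # only the normal score 9 is compared
```

pAUC is typically lower than AUC because it only counts comparisons against the normal samples most likely to trigger false alarms, which is why it is a useful companion metric for a method aimed at reducing them.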

Notably, OS-SCL with Log-Mel features alone outperforms other methods, including large pre-trained models, while keeping the parameter count small. This makes it practical for industrial deployment, where computational resources are limited.

Conclusion

The paper presents a training technique for anomalous sound detection that addresses a core issue: drawing classification boundaries between similar samples. Beyond improving detection accuracy, OS-SCL provides a computationally efficient solution suitable for real-world industrial applications. Its findings also challenge assumptions about the role of high-frequency components in machine sound anomaly detection, offering new insights into feature selection and optimization strategies in ASD systems.