Quantifying Self-Awareness of Knowledge in Large Language Models

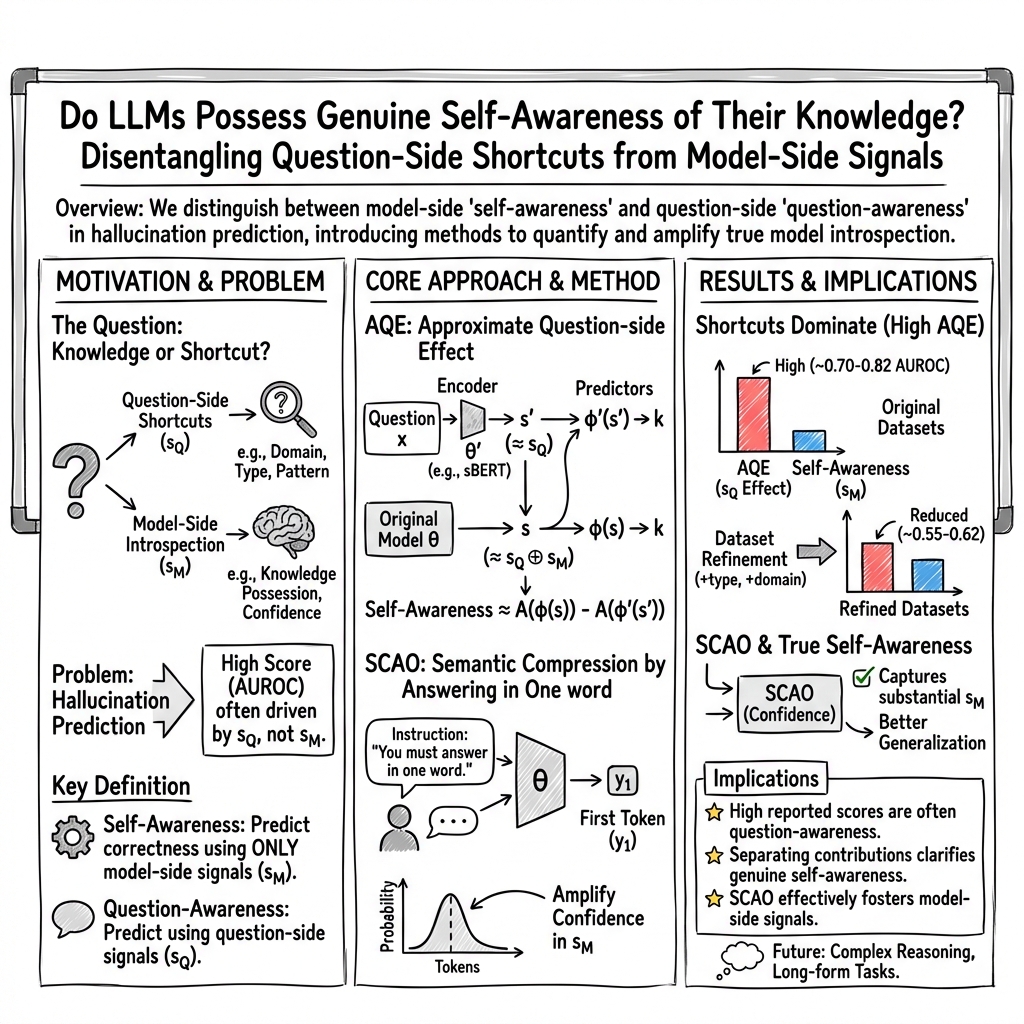

Abstract: Hallucination prediction in LLMs is often interpreted as a sign of self-awareness. However, we argue that such performance can arise from question-side shortcuts rather than true model-side introspection. To disentangle these factors, we propose the Approximate Question-side Effect (AQE), which quantifies the contribution of question-awareness. Our analysis across multiple datasets reveals that much of the reported success stems from exploiting superficial patterns in questions. We further introduce SCAO (Semantic Compression by Answering in One word), a method that enhances the use of model-side signals. Experiments show that SCAO achieves strong and consistent performance, particularly in settings with reduced question-side cues, highlighting its effectiveness in fostering genuine self-awareness in LLMs.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.