- The paper introduces robust conformalization techniques using quantile regression surrogates to enhance calibration in hyperparameter optimization.

- It develops novel surrogate architectures and acquisition functions, notably quantile ensembles, improving search performance on challenging datasets.

- Benchmarking shows that conformalized methods outperform traditional GP-based methods, especially in categorical, heteroskedastic, and asymmetric environments.

Introduction

This paper presents a comprehensive study of quantile-based hyperparameter optimization (HPO) frameworks, focusing on the integration of conformal prediction for improved calibration and search performance. The work systematically addresses the limitations of Gaussian Process (GP) surrogates in HPO, particularly in environments with categorical hyperparameters, heteroskedasticity, and non-symmetric loss surfaces. The study extends prior research by introducing new surrogate architectures, acquisition functions, and robust conformalization techniques, and benchmarks these against state-of-the-art HPO algorithms across diverse environments.

Quantile regression surrogates, trained via pinball loss, provide conditional quantile estimates for candidate configurations, enabling uncertainty-aware acquisition strategies. However, finite-sample calibration guarantees are not inherent to standard quantile regression. The paper leverages Conformalized Quantile Regression (CQR), which adjusts quantile intervals using non-conformity scores derived from a calibration set, yielding finite-sample marginal coverage guarantees when calibration and test points are exchangeable.
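The CQR adjustment described above can be sketched in a few lines. This is a minimal illustration rather than the paper's implementation: the function names `cqr_offset` and `conformalize`, the toy calibration data, and the fixed raw interval `[0, 1]` are all assumptions made for the example, and the raw quantile predictions are taken as given instead of coming from a fitted surrogate.

```python
import math
import numpy as np


def cqr_offset(lo_cal, hi_cal, y_cal, alpha):
    """Return the CQR interval adjustment for miscoverage level alpha."""
    lo_cal, hi_cal, y_cal = (np.asarray(a, dtype=float) for a in (lo_cal, hi_cal, y_cal))
    # Non-conformity score: how far y falls outside [lo, hi]
    # (negative when y lies strictly inside the interval).
    scores = np.maximum(lo_cal - y_cal, y_cal - hi_cal)
    n = scores.size
    # Finite-sample rank: the ceil((n + 1)(1 - alpha))-th smallest score.
    # The small epsilon guards against floating-point round-up.
    k = max(math.ceil((n + 1) * (1 - alpha) - 1e-12), 1)
    if k > n:
        return math.inf  # too few calibration points for this alpha
    return float(np.sort(scores)[k - 1])


def conformalize(lo_new, hi_new, offset):
    """Widen (or shrink, if offset < 0) raw quantile intervals by the offset."""
    return np.asarray(lo_new, dtype=float) - offset, np.asarray(hi_new, dtype=float) + offset


# Hypothetical calibration set: every raw interval is [0, 1].
y_cal = np.array([0.5, 1.2, -0.3, 0.9, 1.5, 0.1, 1.05, -0.1, 0.7])
lo_cal = np.zeros_like(y_cal)
hi_cal = np.ones_like(y_cal)

offset = cqr_offset(lo_cal, hi_cal, y_cal, alpha=0.2)
lo_adj, hi_adj = conformalize([0.0], [1.0], offset)
```

The same offset is applied to both interval ends, which is the symmetric form of CQR; asymmetric variants that calibrate each end separately are not shown here.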

The study further addresses feedback covariate shift in sequential HPO by employing Adaptive Conformal Intervals (ACI) and the more robust Dynamically-tuned Adaptive Conformal Intervals (DtACI), which dynamically adjust the miscoverage level α based on empirical feedback. This helps keep empirical coverage close to the target level as the sampling distribution changes over the course of optimization.
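As a rough illustration of the feedback mechanism, the standard ACI update moves the working miscoverage level toward wider intervals after a miss and toward narrower intervals after a hit. The sketch below shows only plain ACI with a fixed step size; the name `aci_update`, the step size `gamma=0.05`, and the coverage sequence are assumptions for the example. DtACI, which combines several step sizes, is not shown.

```python
def aci_update(alpha_t, target_alpha, covered, gamma=0.05):
    """One ACI step: alpha_{t+1} = alpha_t + gamma * (target_alpha - err_t)."""
    # err_t is 1 when the last interval missed the observation, else 0.
    err = 0.0 if covered else 1.0
    return alpha_t + gamma * (target_alpha - err)


# Hypothetical feedback sequence: two misses followed by three hits.
alpha_t = 0.1
trace = []
for covered in [False, False, True, True, True]:
    alpha_t = aci_update(alpha_t, target_alpha=0.1, covered=covered)
    # A value at or below 0 would correspond to an unbounded interval.
    trace.append(alpha_t)
```

Misses lower the working level (widening subsequent intervals) much faster than hits raise it, which is what pulls long-run coverage back toward the target.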

Acquisition Function Extensions

The paper evaluates several acquisition functions within the conformal quantile framework:

- Thompson Sampling (TS): Utilizes discrete quantile estimates for randomized acquisition, shown to outperform deterministic approaches in most settings.

- Expected Improvement (EI): Approximates the expected gain over the current best, but suffers from poor tail quantile estimation in quantile-based surrogates.

- Optimistic Bayesian Sampling (OBS): A variant of TS that floors sampled values by the conditional expectation, promoting exploration in high-variance regions.
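The difference between TS and OBS on discrete quantile estimates can be sketched as follows. This is an illustrative reading of the descriptions above, not the paper's code. It assumes a higher-is-better objective (negate losses when minimizing), draws one quantile level per candidate uniformly at random, and approximates the conditional expectation by the mean of the quantile estimates. The function names and the toy quantile matrix are hypothetical.

```python
import numpy as np


def thompson_sample(quantile_preds, rng):
    """Draw one value per candidate by picking a random quantile column."""
    q = np.asarray(quantile_preds, dtype=float)
    idx = rng.integers(q.shape[1], size=q.shape[0])
    return q[np.arange(q.shape[0]), idx]


def obs_sample(quantile_preds, rng):
    """Thompson sample floored by an estimate of the conditional expectation."""
    q = np.asarray(quantile_preds, dtype=float)
    # Assumption: the mean of the quantile estimates stands in for E[y | x].
    expectation = q.mean(axis=1)
    return np.maximum(thompson_sample(q, rng), expectation)


rng = np.random.default_rng(0)
# Rows are candidate configurations, columns are increasing quantile levels.
quantile_preds = np.array([
    [0.0, 1.0, 2.0],
    [10.0, 11.0, 12.0],
    [0.5, 5.0, 9.5],
])

ts_choice = int(np.argmax(thompson_sample(quantile_preds, rng)))
obs_values = obs_sample(quantile_preds, rng)
obs_choice = int(np.argmax(obs_values))
```

Because the floor never lets a draw fall below the expectation, OBS values for a wide-spread candidate (the third row here) are never pessimistic, which is the source of its extra exploration.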

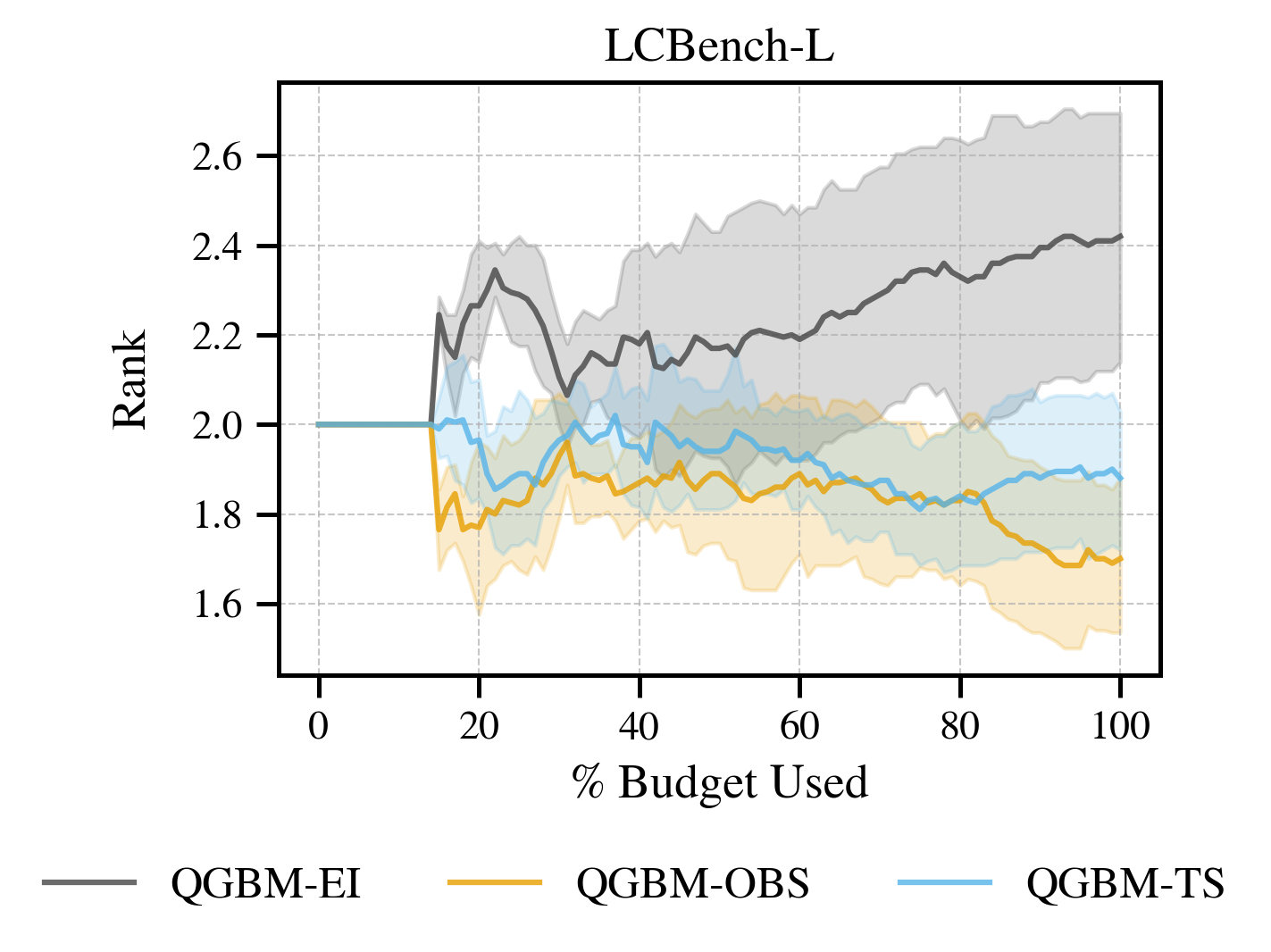

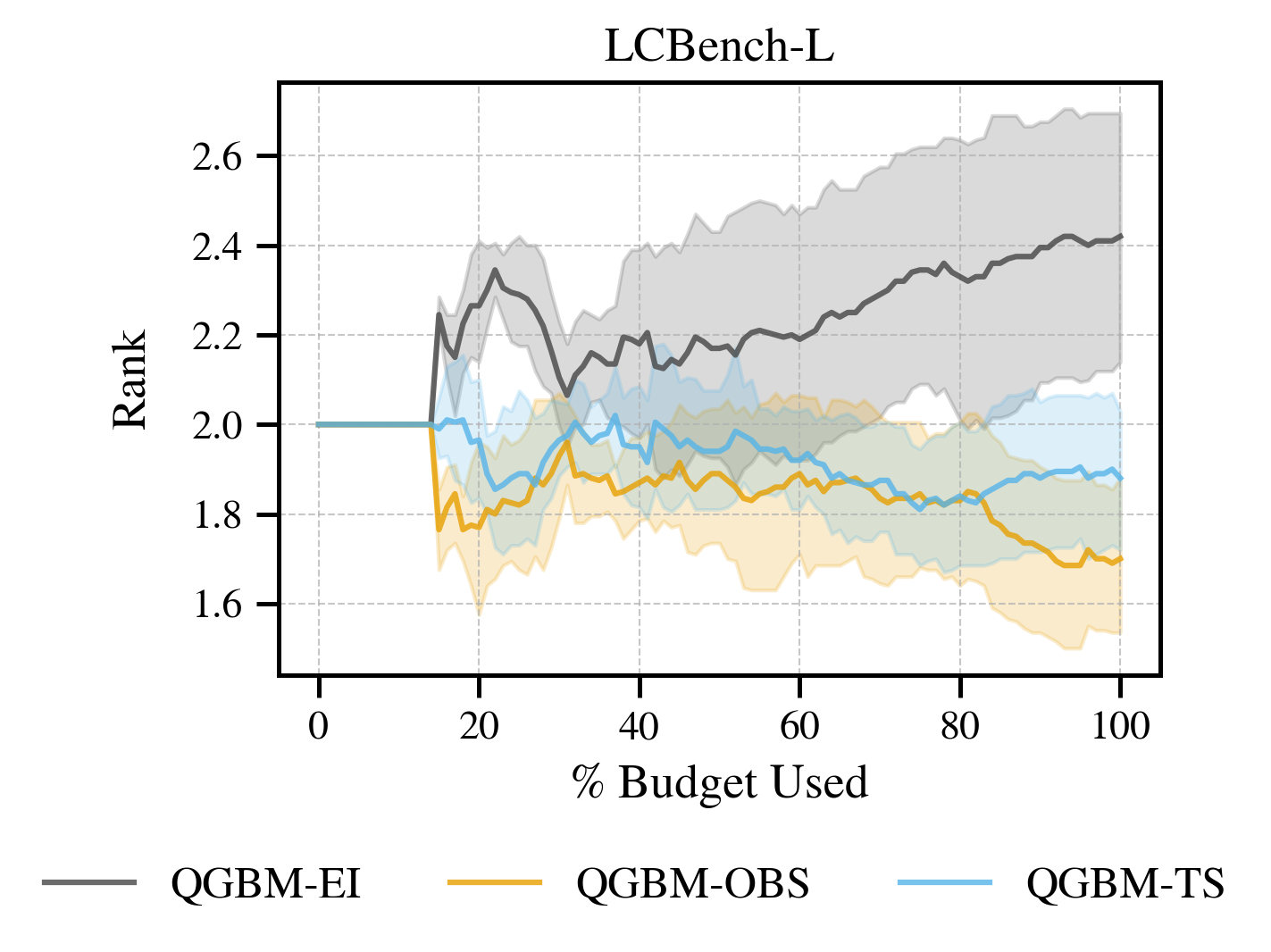

Empirical results demonstrate that TS and OBS consistently outperform EI in quantile HPO, with OBS providing additional exploratory benefits.

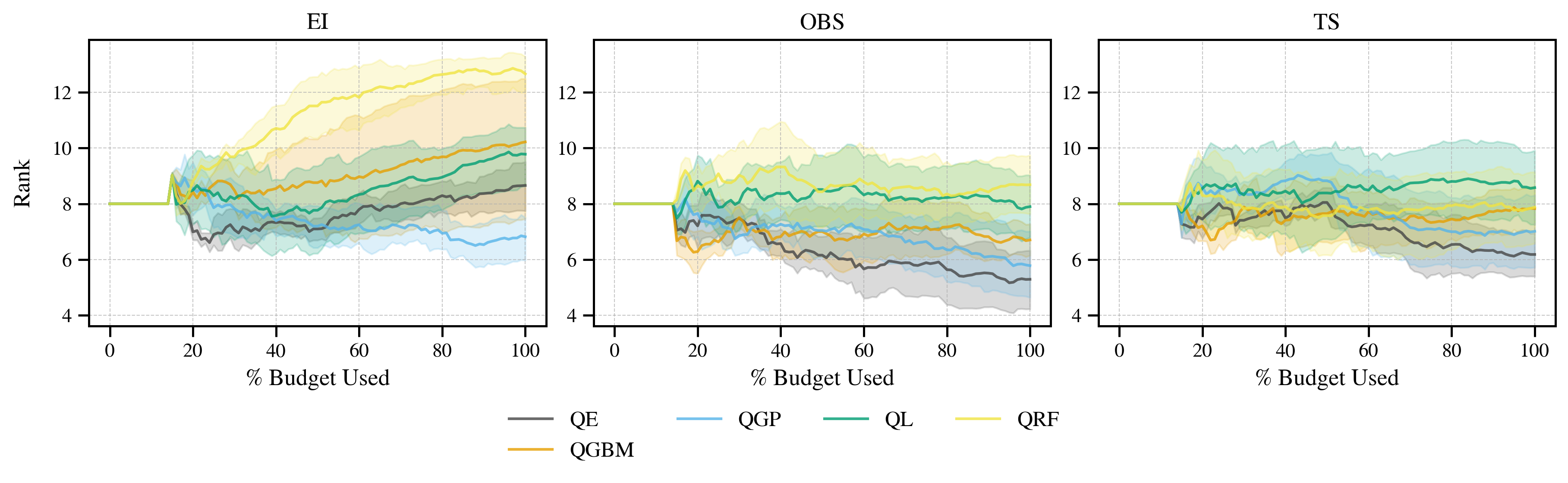

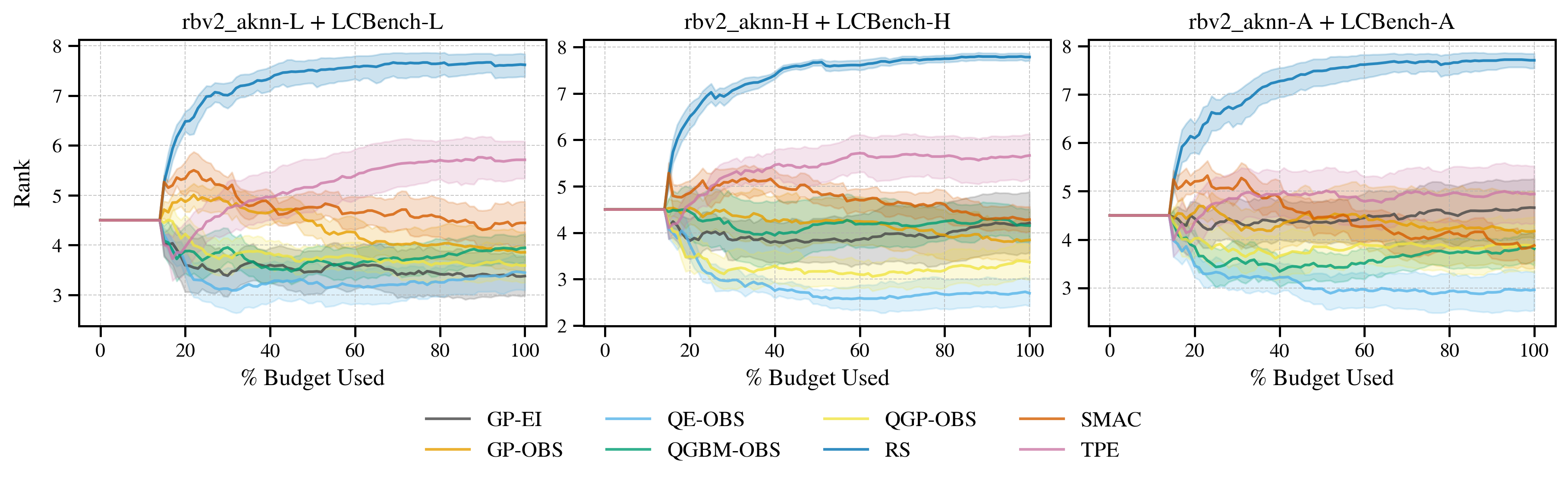

Figure 1: Search performance rank over iteration search budget per acquisition function on LCBench-L, across 20 random warm start initializations. Shaded region represents 95% dataset-bootstrapped interval.

Surrogate Architecture Innovations

Beyond the established Quantile Gradient Boosted Machine (QGBM) and Quantile Regression Forest (QRF) surrogates, the paper introduces:

- Quantile Lasso (QL): Pinball loss-trained Lasso for high-dimensional, linear loss surfaces.

- Quantile Gaussian Process (QGP): Empirical quantile extraction from GP posteriors, enabling direct comparison with tree-based surrogates under conformalization.

- Quantile Ensemble (QE): Linear stacking of QGBM, QL, and QGP, with a Quantile Lasso meta-learner, designed to maximize generalization and robustness.
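Two ingredients of the surrogates listed above lend themselves to a short sketch: the pinball loss used to train and score quantile models, and the extraction of empirical quantiles from a Gaussian posterior as in QGP. The code below is an assumption-laden illustration. It treats the GP posterior at a single configuration as a normal distribution with a given mean and standard deviation instead of fitting a GP, and the names `pinball_loss` and `empirical_quantiles` as well as the numeric values are hypothetical.

```python
import numpy as np


def pinball_loss(y_true, q_pred, tau):
    """Mean pinball (quantile) loss at quantile level tau."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(q_pred, dtype=float)
    # Under-prediction is weighted by tau, over-prediction by (1 - tau).
    return float(np.mean(np.maximum(tau * diff, (tau - 1.0) * diff)))


def empirical_quantiles(mean, std, taus, n_samples=4000, seed=0):
    """Empirical quantiles of samples drawn from a Gaussian posterior."""
    rng = np.random.default_rng(seed)
    samples = rng.normal(mean, std, size=n_samples)
    return np.quantile(samples, taus)


taus = [0.1, 0.5, 0.9]
# Hypothetical posterior at one candidate configuration.
q_hat = empirical_quantiles(mean=0.3, std=0.05, taus=taus)

# Score each extracted quantile against a hypothetical observed value.
losses = [pinball_loss([0.32], [q], tau) for q, tau in zip(q_hat, taus)]
```

Putting every surrogate's output in the same quantile form is what allows tree-based, linear, and GP models to be compared, conformalized, and stacked on equal terms.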

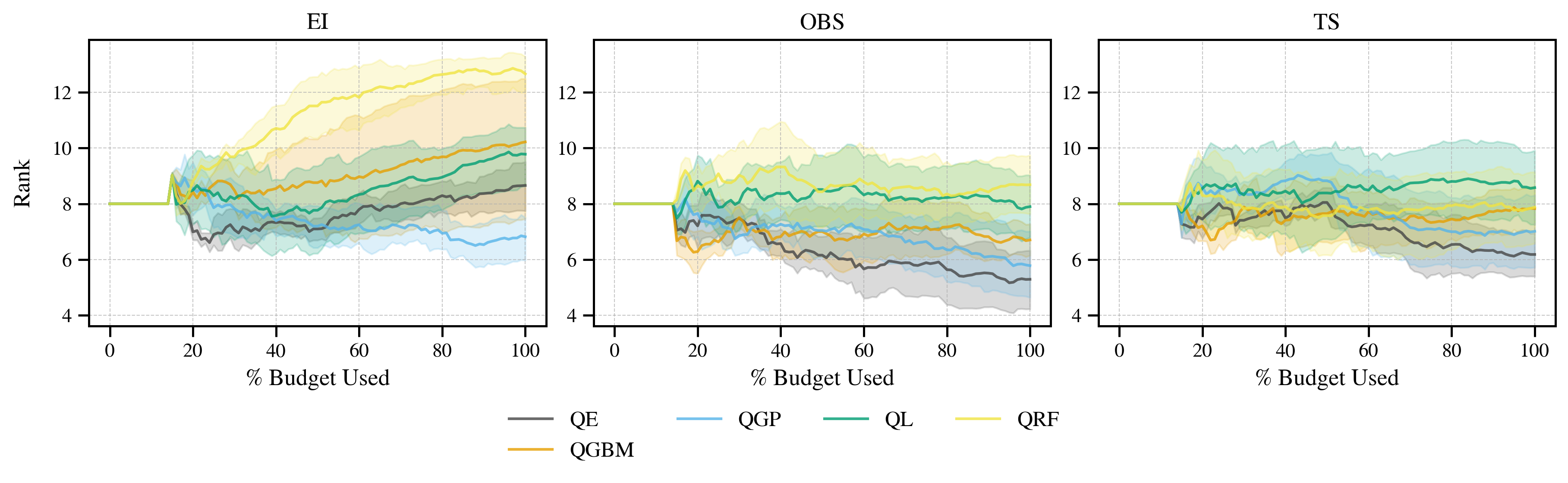

Ensembling (QE) yields the most consistent and superior performance across acquisition functions and benchmarks, particularly in environments violating GP assumptions.

Figure 2: LCBench-L search performance rank over iteration search budget for a range of surrogate architectures, across multiple acquisition functions (columns). Ranks are shared across plots (each surrogate and acquisition combination is treated as a ranking variant). Results cover 20 random warm start initializations. Shaded region represents 95% dataset-bootstrapped interval.

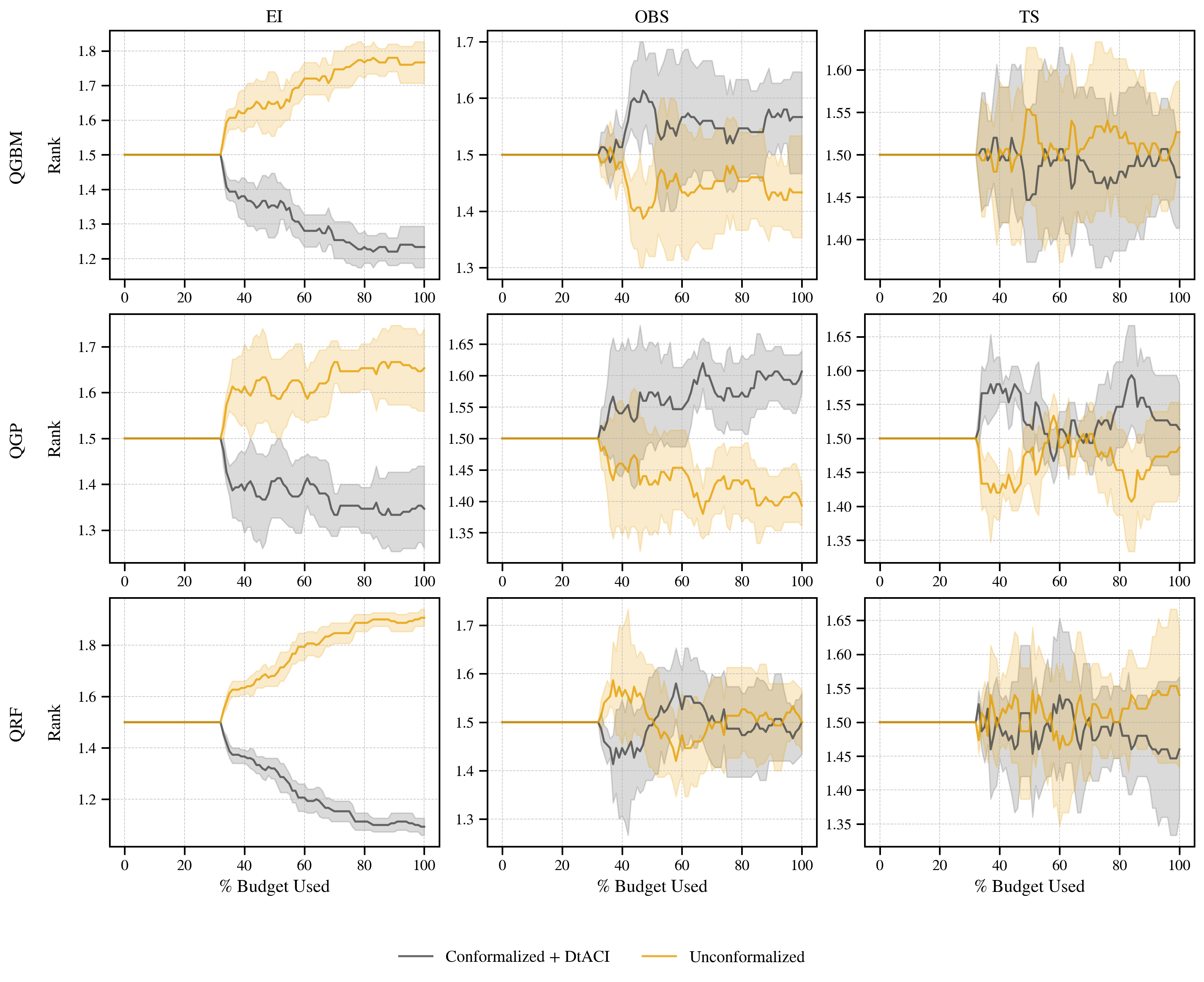

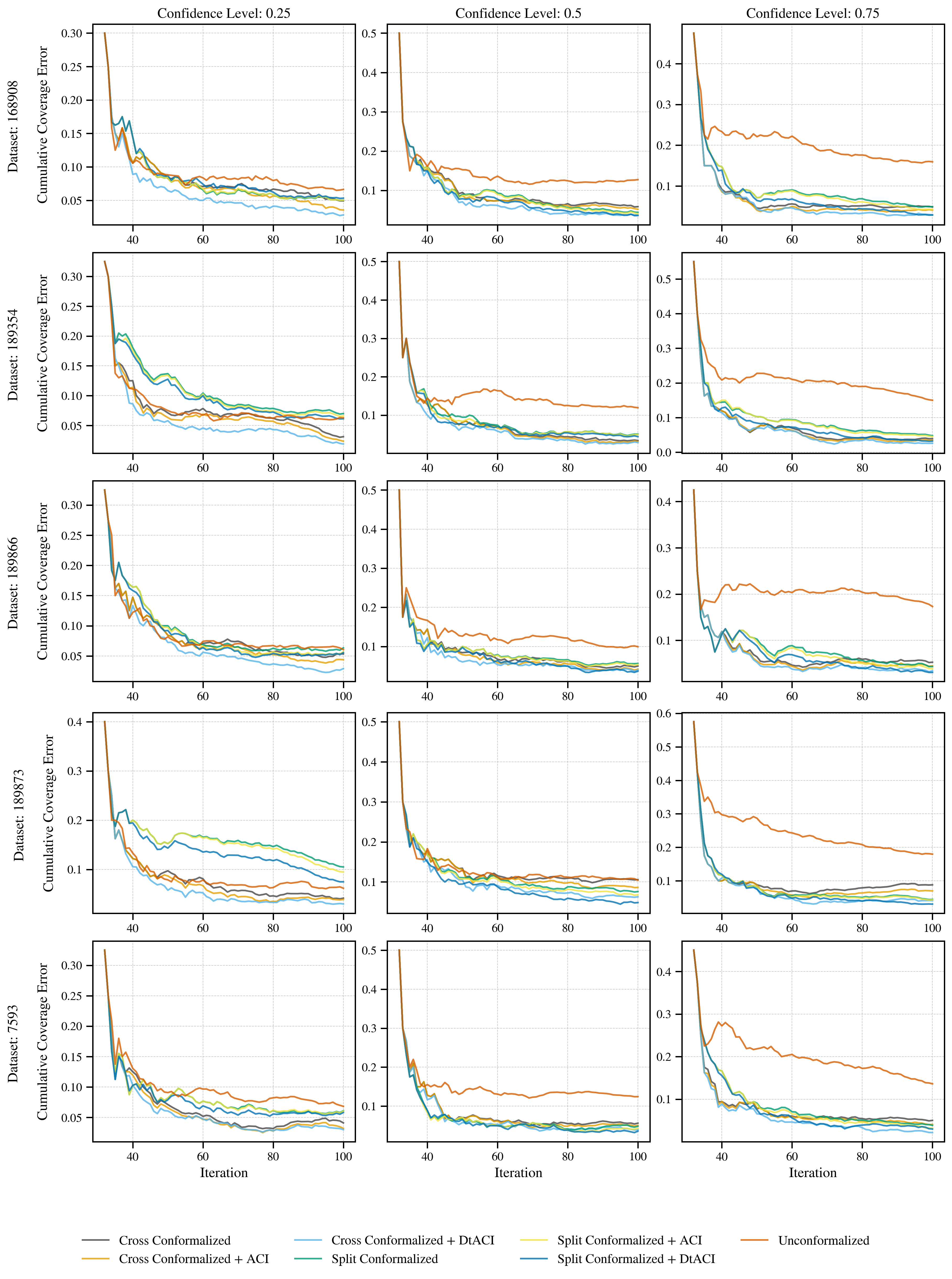

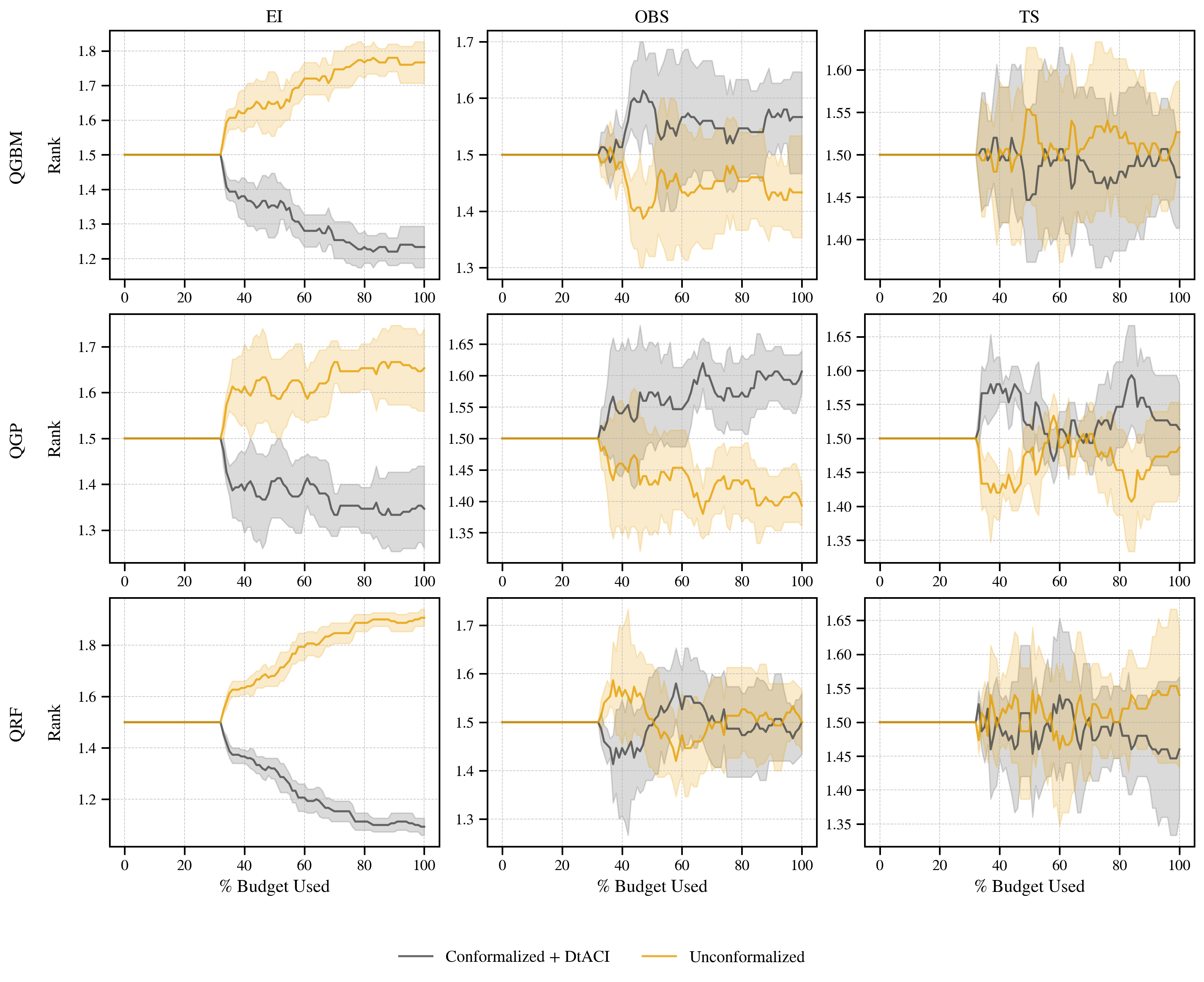

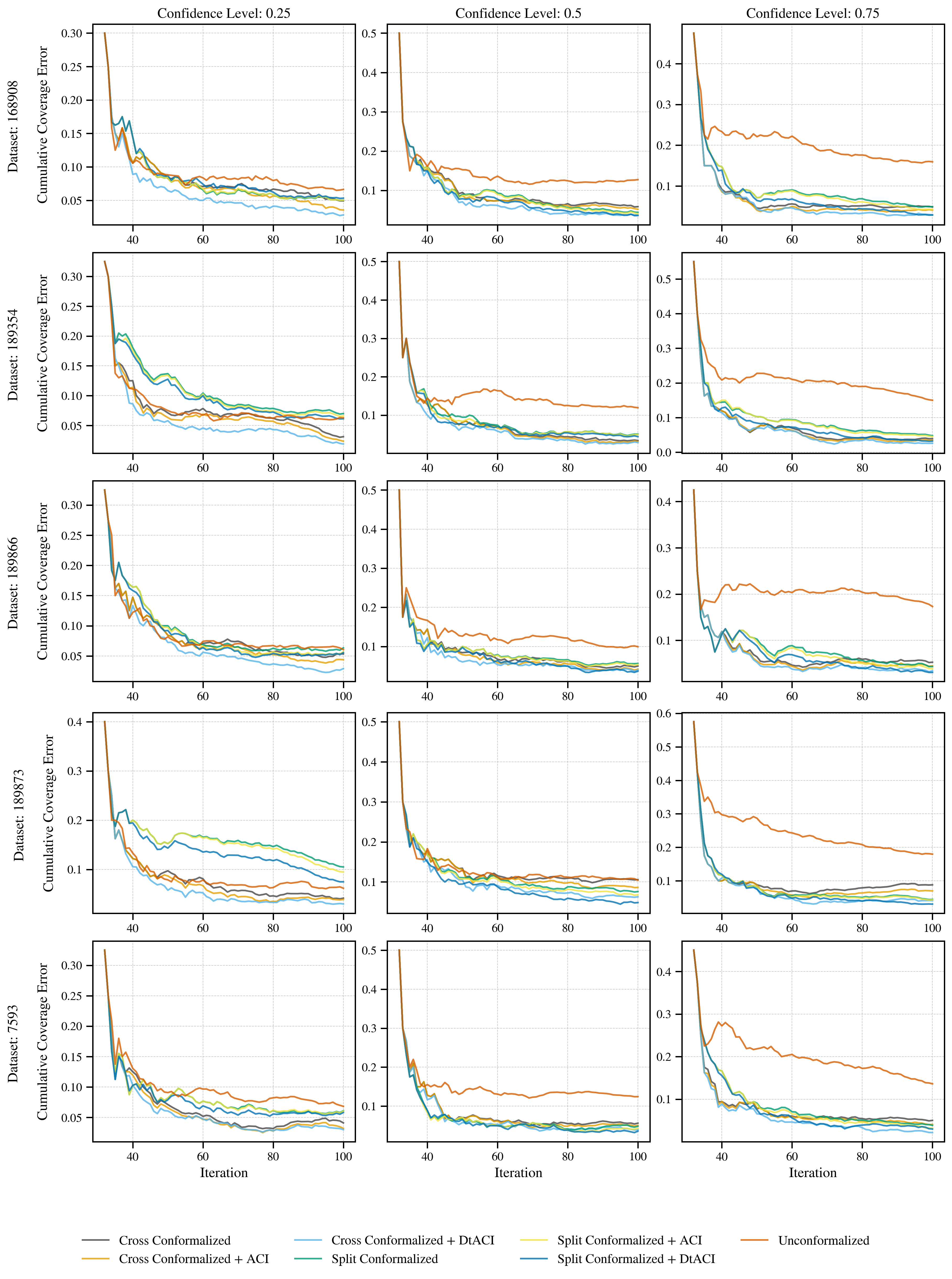

The study rigorously evaluates the impact of conformalization on both calibration metrics and search efficacy. Split Conformal Prediction (SCP) and CV+ are compared, with adaptation via DtACI providing further improvements. Conformalization significantly enhances local and marginal calibration, as evidenced by reduced coverage error and improved interval validity.

However, the transfer of calibration improvements to search performance is acquisition-dependent. Under EI, conformalization yields substantial gains, while under TS and OBS, the impact is neutral or occasionally negative. This is attributed to the interaction between surrogate misspecification and acquisition strategy, as well as the loss of training data in split conformalization.

Figure 3: LCBench-L search performance rank over iteration search budget. Performances are reported with and without conformalization, across several surrogate architectures (rows) and acquisition functions (columns). Results cover 20 random warm start initializations. Shaded region represents 95% dataset-bootstrapped interval. Conformalization is carried out via CV+ up to the 50th iteration, and SCP thereafter.

Benchmarking and Comparative Analysis

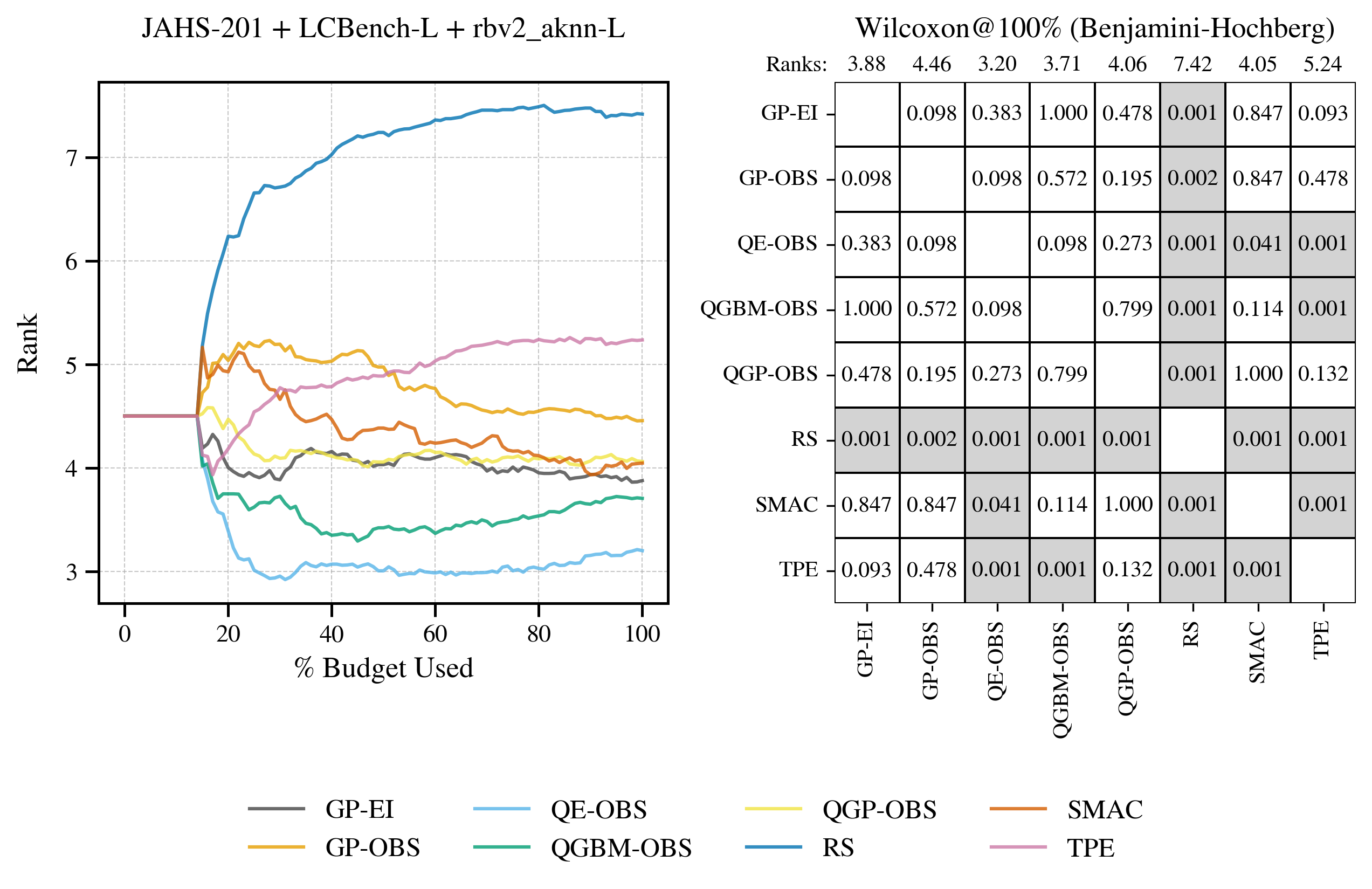

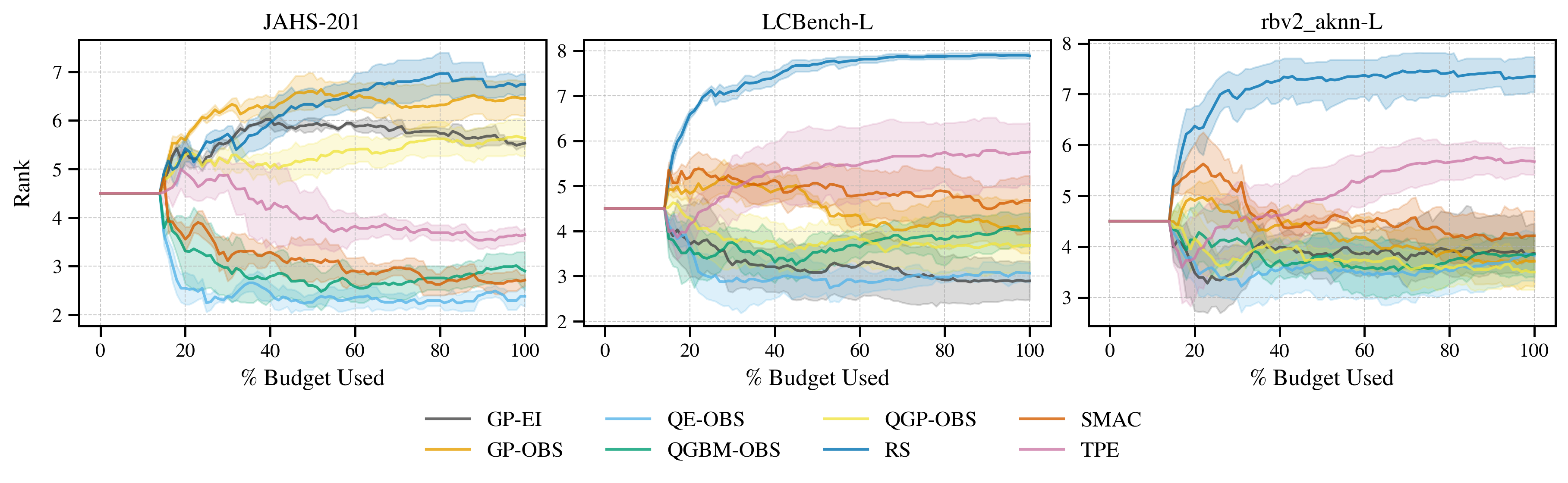

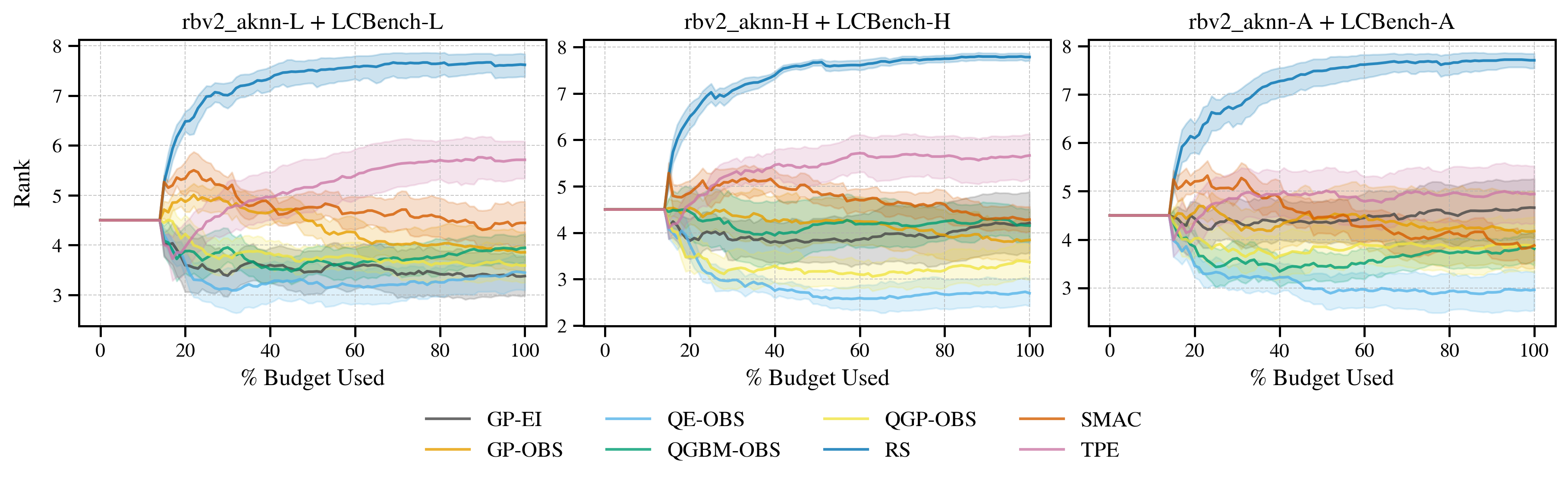

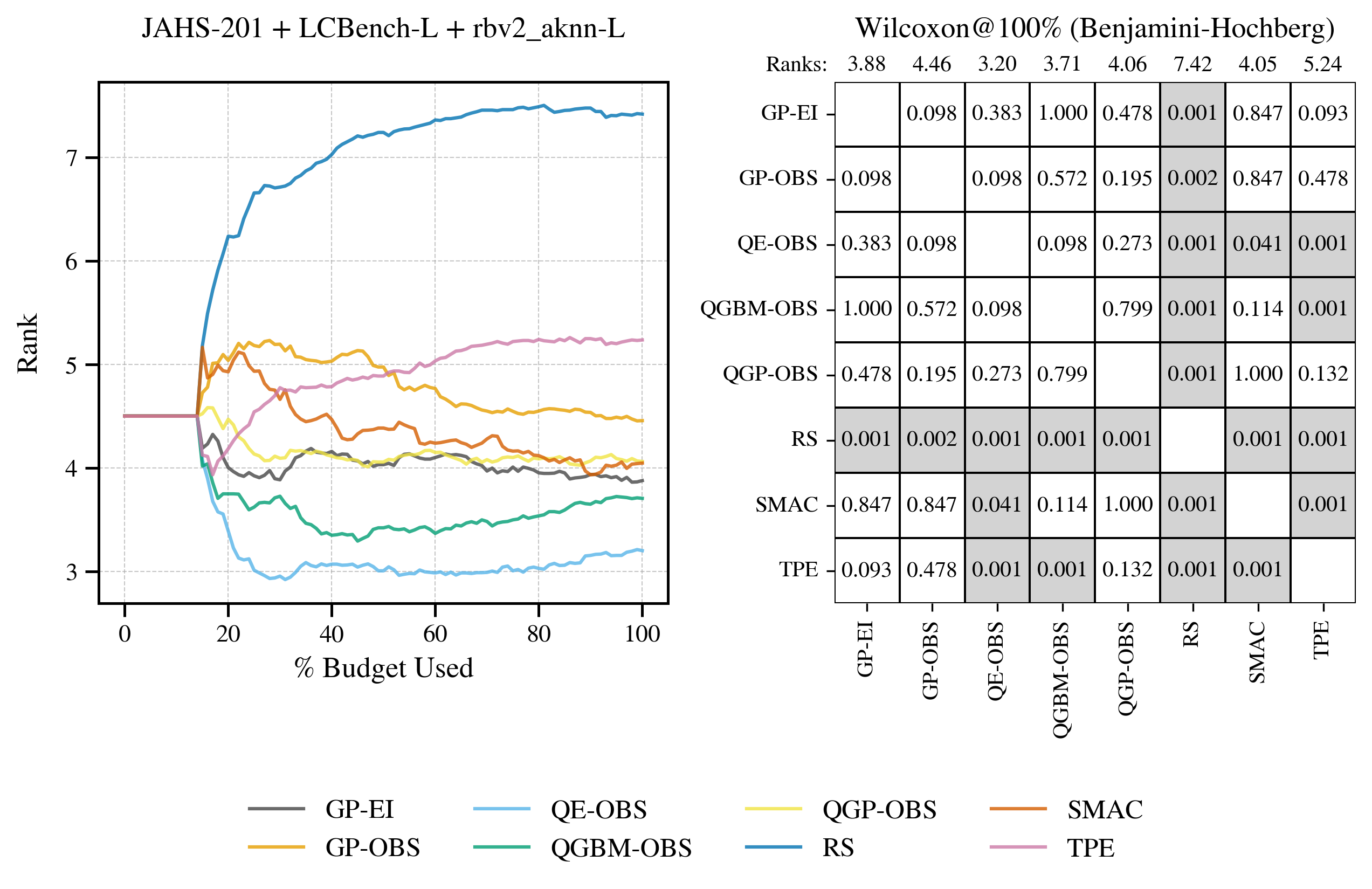

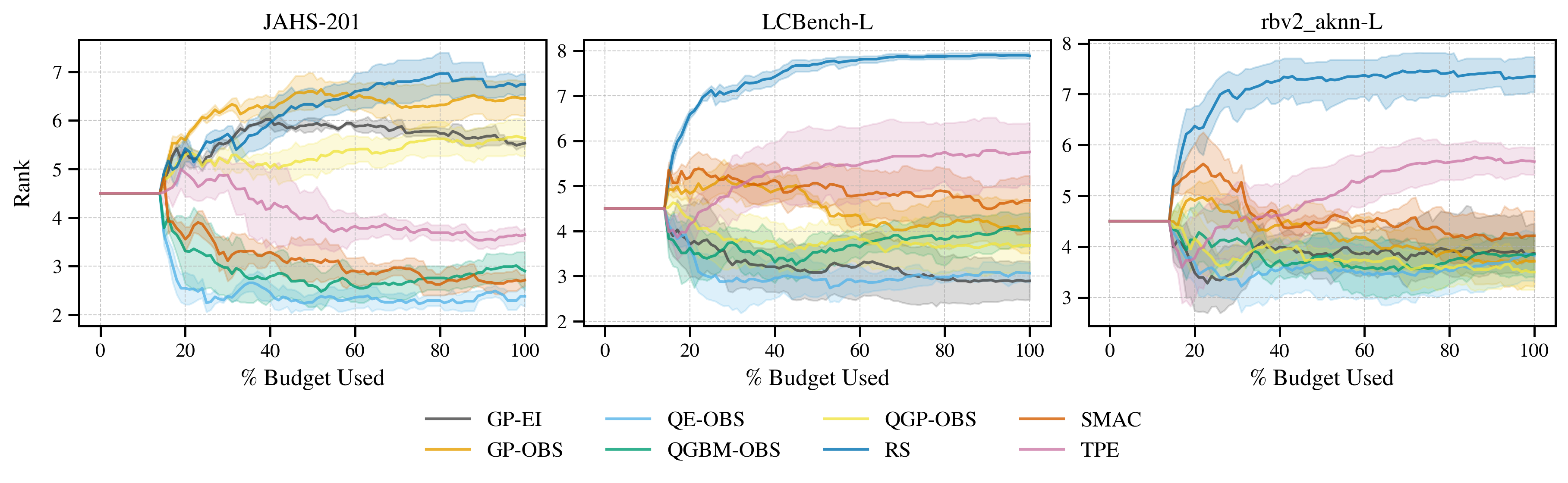

The paper benchmarks the proposed quantile conformal frameworks against GP-EI, GP-OBS, TPE, SMAC, and random search across JAHS-Bench-201, LCBench-L, and rbv2_aknn-L. QE and QGBM consistently achieve first and second place, with statistically significant outperformance over SMAC and TPE. GP-based methods are competitive in continuous environments but degrade in categorical, heteroskedastic, and asymmetric settings.

Stratified analysis on sub-populations of datasets with high heteroskedasticity and asymmetry further validates the robustness of quantile conformal approaches, especially QE, which maintains top performance across all challenging environments.

Figure 4: Left: search performance rank over iteration search budget for a range of quantile and established HPO algorithms. Results cover 15 random warm start initializations. Right: Matrix of Wilcoxon Signed-Rank p-values per pairwise algorithm comparison at 100% budget. P-values are adjusted for multiple comparisons via Benjamini-Hochberg correction. Shaded cells denote significant comparisons.

Figure 5: Search performance rank over iteration search budget for a range of quantile and established HPO algorithms, segmented by benchmarking environment (columns). Results cover 15 random warm start initializations. Shaded region represents 95% dataset-bootstrapped interval.

Figure 6: Search performance rank over iteration search budget for a range of quantile and established HPO algorithms, segmented by benchmarking group (columns). Results cover 15 random warm start initializations. Shaded region represents 95% dataset-bootstrapped interval.
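The multiple-comparison adjustment mentioned in the Figure 4 caption can be illustrated with a small Benjamini-Hochberg routine. This is a generic sketch of the standard procedure, not the paper's analysis code. The input p-values are made-up placeholders standing in for pairwise Wilcoxon signed-rank results, and the function name is hypothetical.

```python
import numpy as np


def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    p = np.asarray(p_values, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Scale the i-th smallest p-value by m / i.
    scaled = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value.
    adjusted_sorted = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.minimum(adjusted_sorted, 1.0)
    return adjusted


# Placeholder p-values for four hypothetical pairwise comparisons.
raw_p = [0.01, 0.04, 0.03, 0.005]
p_adj = benjamini_hochberg(raw_p)
significant = p_adj < 0.05
```

A comparison is then flagged as significant when its adjusted p-value falls below the chosen false discovery rate, here assumed to be 0.05.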

Calibration Quality: Coverage and Interval Widths

The study provides a detailed breakdown of cumulative coverage error across confidence levels, demonstrating that conformalization is most beneficial at moderate to high confidence levels. Interval widths are larger in conformalized variants, but CV+ and DtACI reduce width inflation compared to SCP and ACI, respectively.

Figure 7: Cumulative coverage per search iteration across 25%, 50% and 75% intervals from greedy expected value acquisition on LCBench-L datasets. Results are averaged across 20 random warm started runs. Uncertainty regions mark 95% dataset-bootstrapped intervals. Coverage reporting begins at iteration 32, post-conformalization.
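Cumulative coverage of the kind plotted in Figure 7 can be computed as the running fraction of observations that fall inside their predicted intervals. The following is a minimal sketch under that reading. The function name, the toy intervals, and the 75% target are assumptions for illustration and are not taken from the paper's data.

```python
import numpy as np


def cumulative_coverage(lower, upper, observed):
    """Running fraction of observations inside [lower, upper] per iteration."""
    lower, upper, observed = (
        np.asarray(a, dtype=float) for a in (lower, upper, observed)
    )
    covered = (observed >= lower) & (observed <= upper)
    return np.cumsum(covered) / np.arange(1, covered.size + 1)


# Hypothetical run: four iterations with the same predicted interval.
lower = [0.0, 0.0, 0.0, 0.0]
upper = [1.0, 1.0, 1.0, 1.0]
observed = [0.5, 1.5, 0.2, 0.9]

coverage = cumulative_coverage(lower, upper, observed)
# Absolute gap to an assumed 75% target coverage at each iteration.
coverage_error = np.abs(coverage - 0.75)
```

Tracking the gap to the target level alongside mean interval width makes the trade-off visible: wider intervals lower coverage error but say less about where the objective actually lies.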

Practical and Theoretical Implications

The findings have several implications:

- Surrogate Flexibility: Quantile conformal frameworks enable the use of diverse surrogate architectures, improving robustness in non-Gaussian, categorical, and heteroskedastic environments.

- Acquisition Strategy Selection: The choice of acquisition function is critical; TS and OBS are preferable in quantile HPO, while EI requires careful quantile density tuning.

- Conformalization Trade-offs: Calibration improvements do not universally translate to search gains; acquisition-surrogate interactions must be considered.

- Benchmarking Rigor: Stratified benchmarking reveals that quantile conformal methods are particularly advantageous in settings that violate GP assumptions, supporting their adoption in practical AutoML systems.

Future Directions

Potential avenues for further research include:

- Meta-learning for Surrogate Selection: Automated selection or adaptation of surrogate architectures based on dataset characteristics.

- Hybrid Acquisition Strategies: Dynamic switching between acquisition functions during search to exploit their respective strengths.

- Scalable Conformalization: Efficient conformalization techniques for large-scale HPO, minimizing training data loss and computational overhead.

- Multi-fidelity and Multi-objective Extensions: Integration of conformal quantile frameworks into multi-fidelity and multi-objective HPO settings.

Conclusion

This study advances the state of quantile-based hyperparameter optimization by integrating robust conformalization techniques, expanding surrogate and acquisition function choices, and providing rigorous benchmarking. Quantile ensembles with conformalization (QE) achieve consistently superior performance, especially in environments challenging for traditional GP surrogates. Conformalization significantly improves calibration, with search performance gains contingent on acquisition strategy. The work establishes quantile conformal HPO as a versatile and competitive approach for modern AutoML pipelines, with clear directions for future methodological and practical enhancements.