- The paper presents a unified autonomy framework (HUNT) that eliminates global pose estimation by using an instantaneous relative frame for all perception and control objectives.

- It integrates high-order Control Barrier Functions within a Nonlinear Model Predictive Controller to enforce real-time, collision-free trajectories in diverse, unstructured settings.

- Experimental results in SAR missions demonstrate robust mode switching, drift-free loitering, and safe traversal under dense canopy and urban conditions.

High-Speed UAV Navigation and Tracking in Unstructured Environments via Instantaneous Relative Frames

Introduction and Motivation

The paper presents HUNT, a unified autonomy framework for high-speed UAV navigation and target tracking in unstructured, GPS-denied environments. The central innovation is the formulation of all perception, estimation, and control objectives in an instantaneous relative frame, Rt, constructed solely from directly observable onboard quantities (attitude, altitude, velocity, and target-relative position when available). This approach eliminates reliance on global pose estimation, which is known to be fragile in environments with degraded GPS, sparse or dynamic visual features, and dense clutter—conditions typical in search-and-rescue (SAR) missions.

Figure 1: HUNT enables reactive high-speed autonomy for search-and-rescue operations, validated across diverse outdoor missions with seamless integration of fast traversal, target acquisition, and sustained tracking.

Framework Overview and Technical Contributions

HUNT unifies three operational regimes—traversal (loitering), acquisition, and tracking—within a single perception-control pipeline. The system operates in two primary modes:

A key technical contribution is the integration of high-order Control Barrier Functions (CBFs) into a Nonlinear Model Predictive Controller (NMPC), enforcing real-time, dynamically feasible, collision-free trajectories. Mode switching is governed by a confidence-based hysteresis policy on the UKF estimator uncertainty, preventing oscillations and ensuring stability as detections appear and disappear.

State Estimation and Reference Generation

State estimation in both modes is performed via an Unscented Kalman Filter (UKF) maintaining a state vector comprising relative position, velocity, attitude, and sensor biases. In loitering, the process model is driven by IMU readings, with updates from barometric altitude and stereo-inertial velocity fusion. In tracking, the process model uses only angular rates, and updates are provided by target-relative displacement from a lightweight onboard detector fused with stereo disparity.

Reference generation in loitering is based on altitude, forward speed, and heading, with the heading optionally biased by low-confidence detections or free-space analysis. In tracking, references are defined by horizontal standoff, vertical offset, and heading toward the target, all expressed in Rt to ensure robustness against drift.

Collision Avoidance and Planning

Collision avoidance leverages CBF constraints embedded in the NMPC, using stereo depth images to construct point clouds and select imminent collision points via time-to-collision heuristics. Each obstacle induces a quadratic separation constraint in Rt, and the NMPC optimizes trajectories subject to dynamics, actuation bounds, and safety constraints.

Experimental Validation

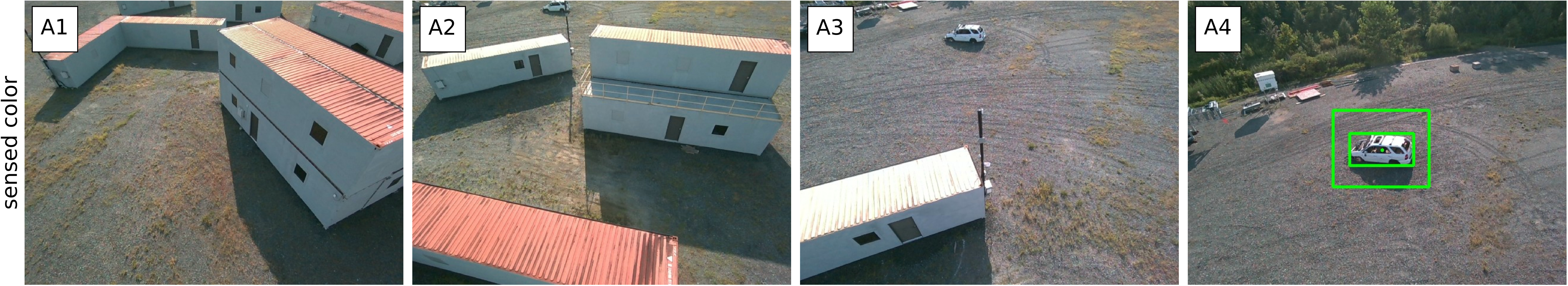

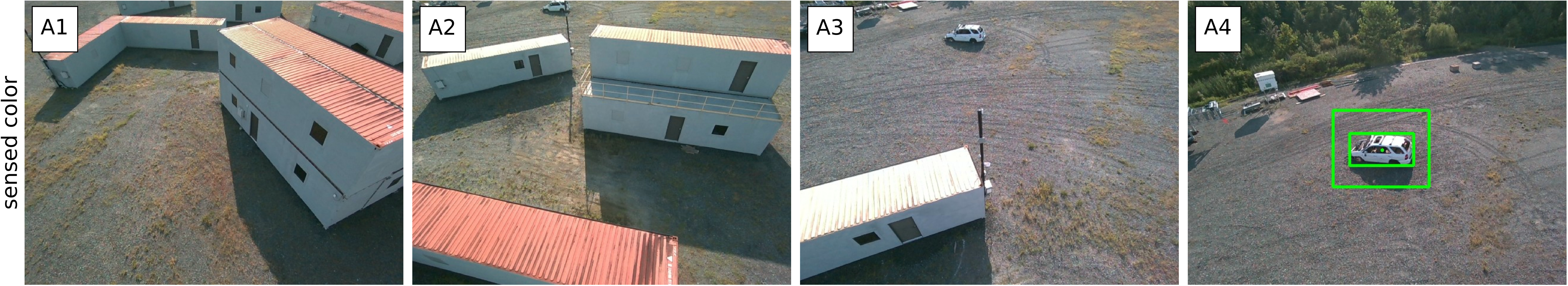

The framework is validated in extensive outdoor SAR-like missions, including urban compounds, semi-structured layouts, and dense forests. Experiments demonstrate:

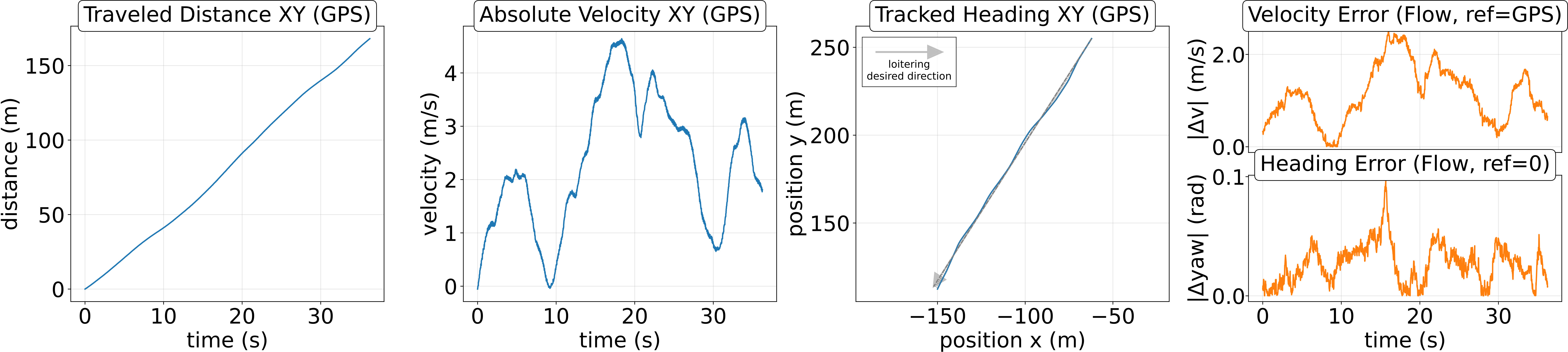

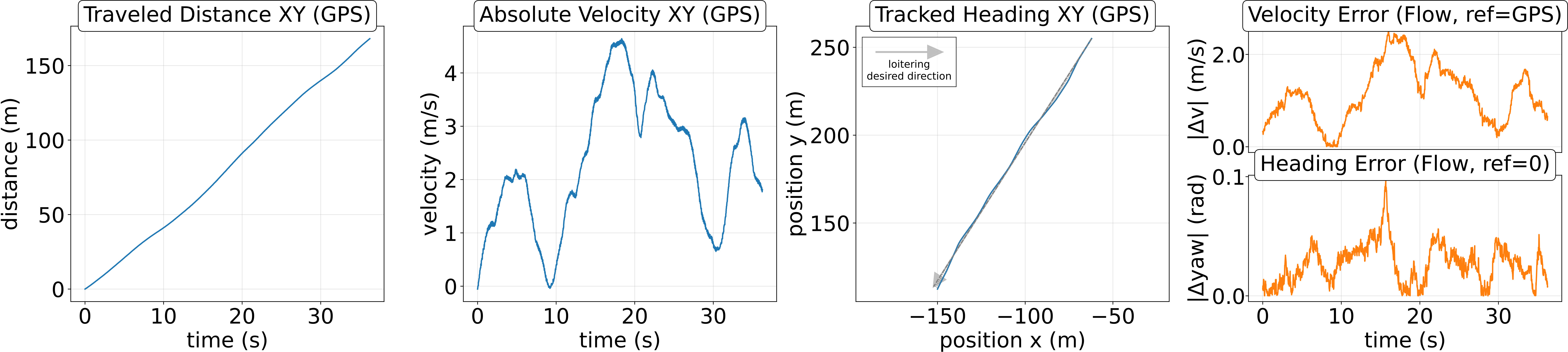

- Drift-free loitering above city blocks and forest canopy: The UAV maintains steady velocity and heading using only instantaneous observables, with velocity and heading errors remaining bounded relative to GPS ground truth.

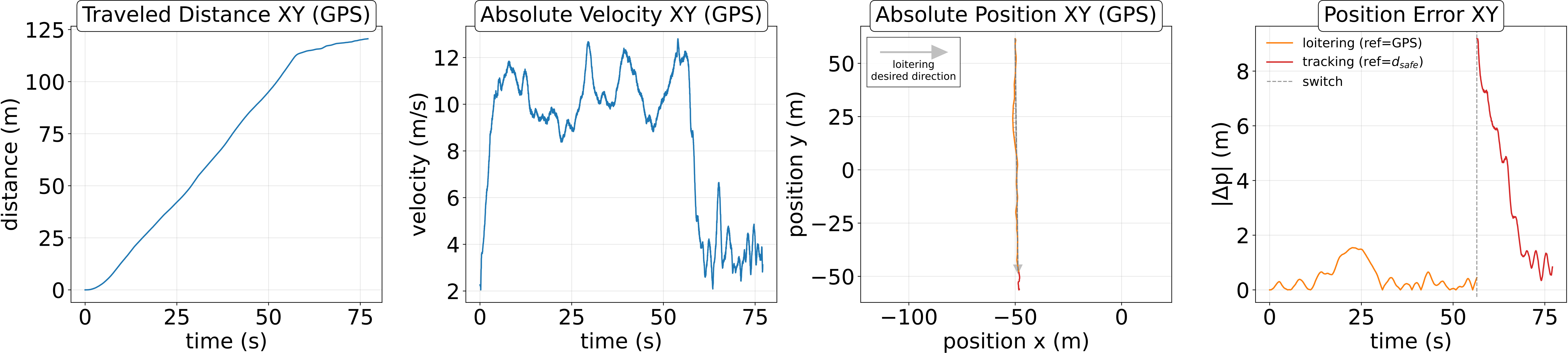

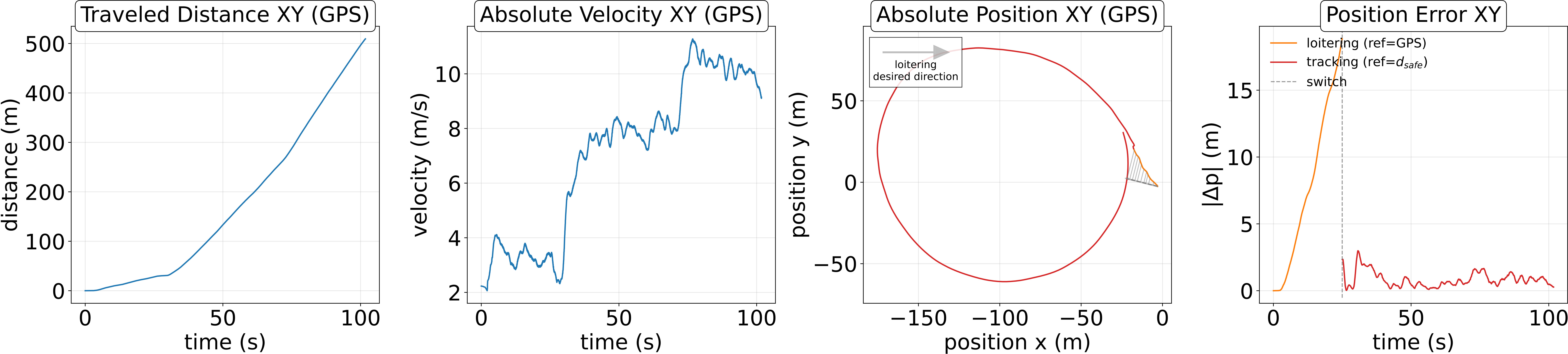

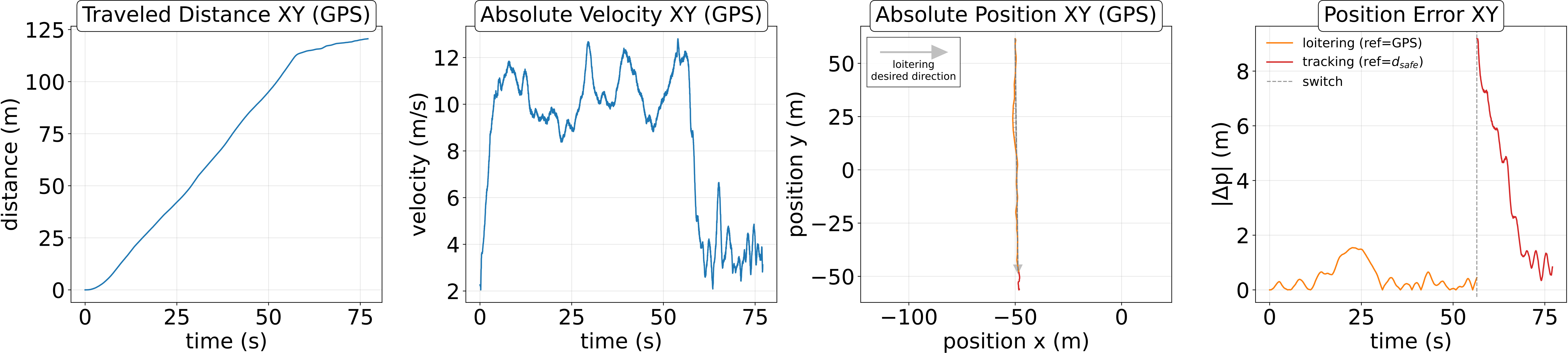

Figure 3: Loitering experiments without a target, showing drift-free traversal and bounded errors in both urban and mixed environments.

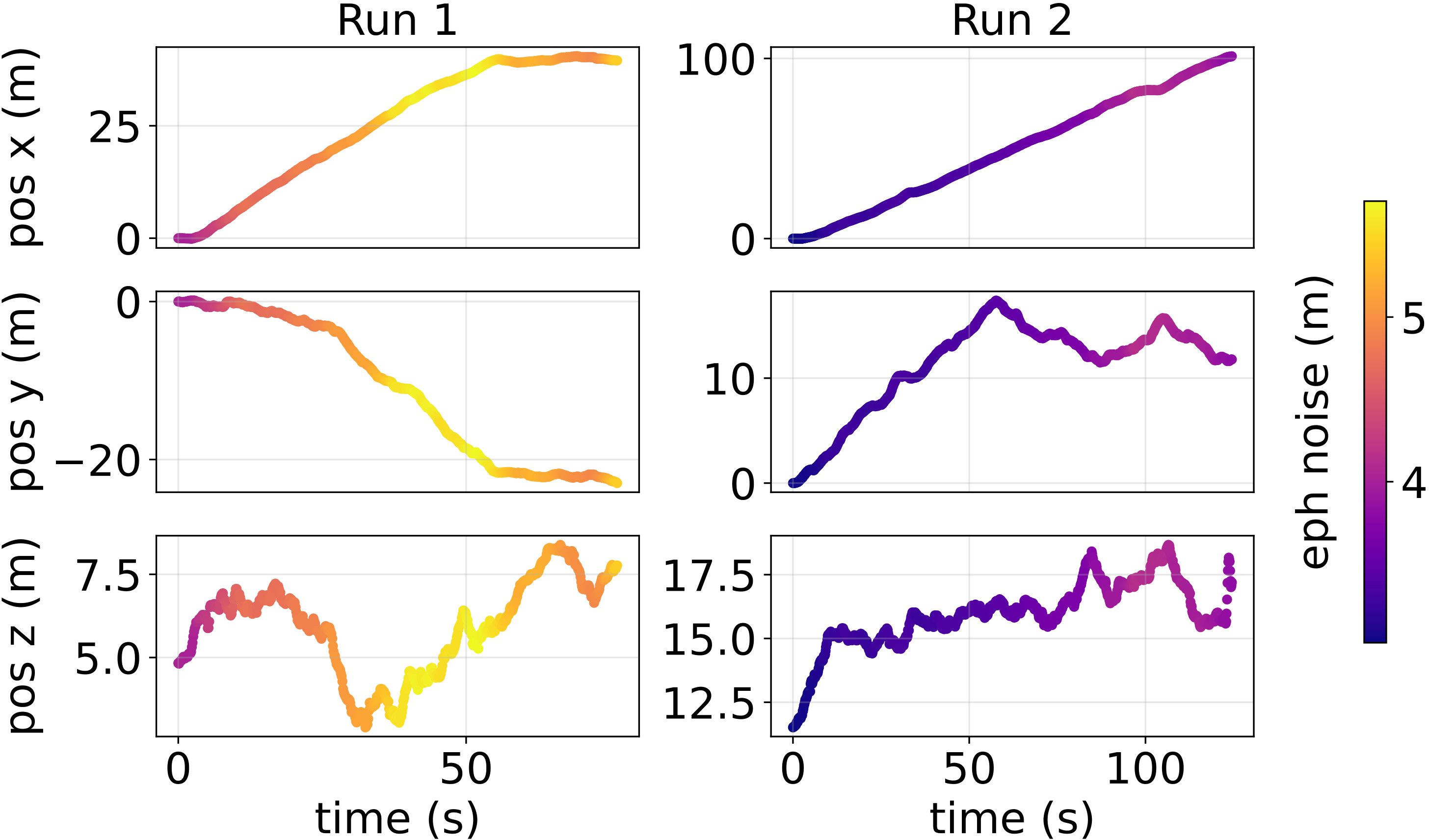

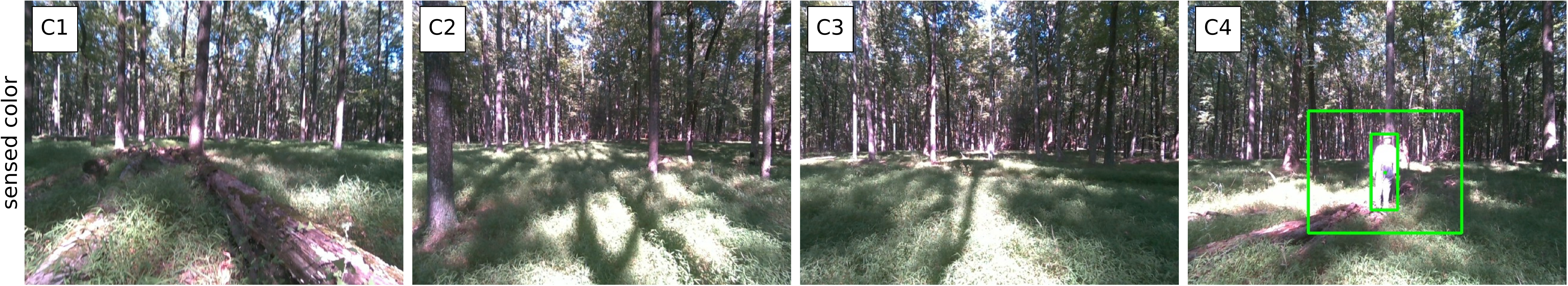

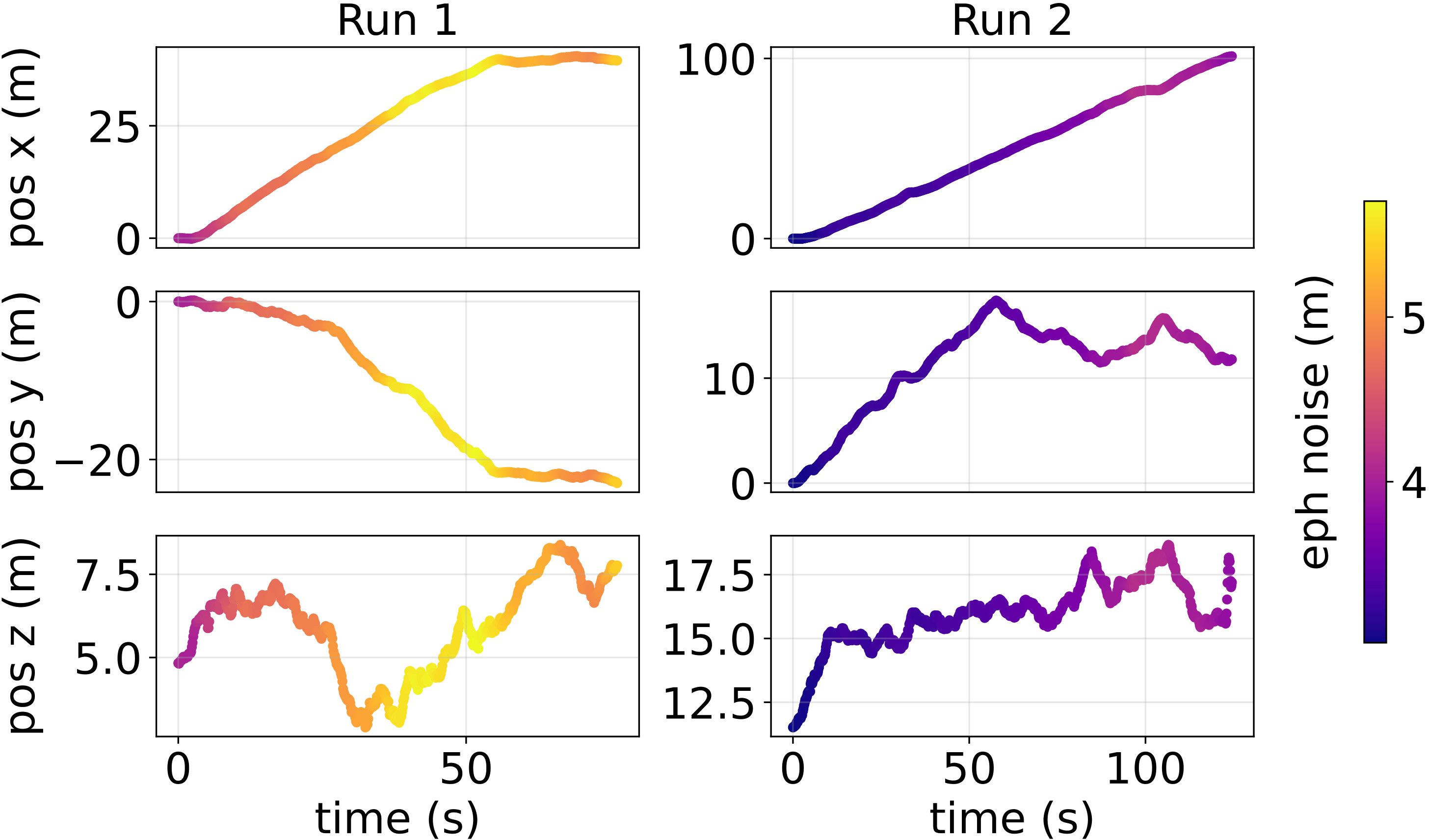

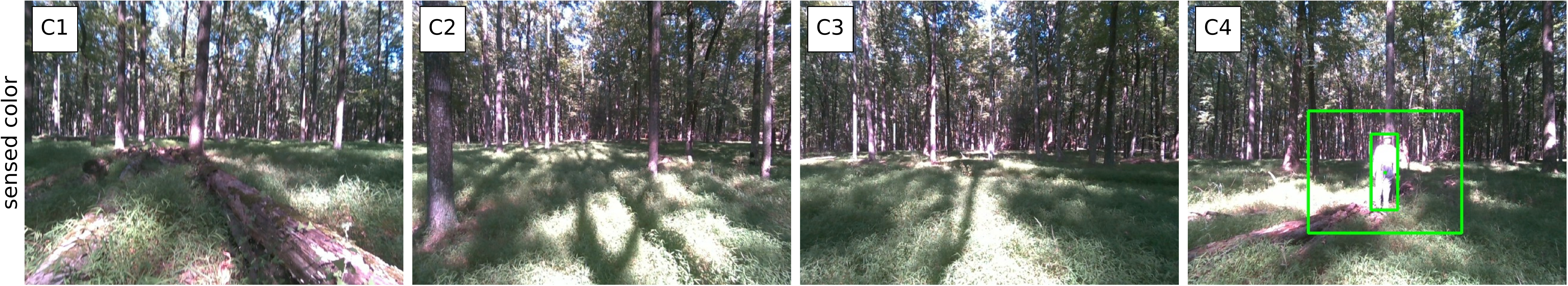

- Safe traversal below dense forest canopy: HUNT sustains stable forward motion and clearance margins above $1.0~\si{m}$, with GPS errors of $2$–$6~\si{m}$ making map-based navigation unreliable. The system relies exclusively on instantaneous observables for navigation.

Figure 4: Loitering under dense forest canopy, with UAV trajectories colored by GPS-reported horizontal error and satellite view of reconstructed flight paths.

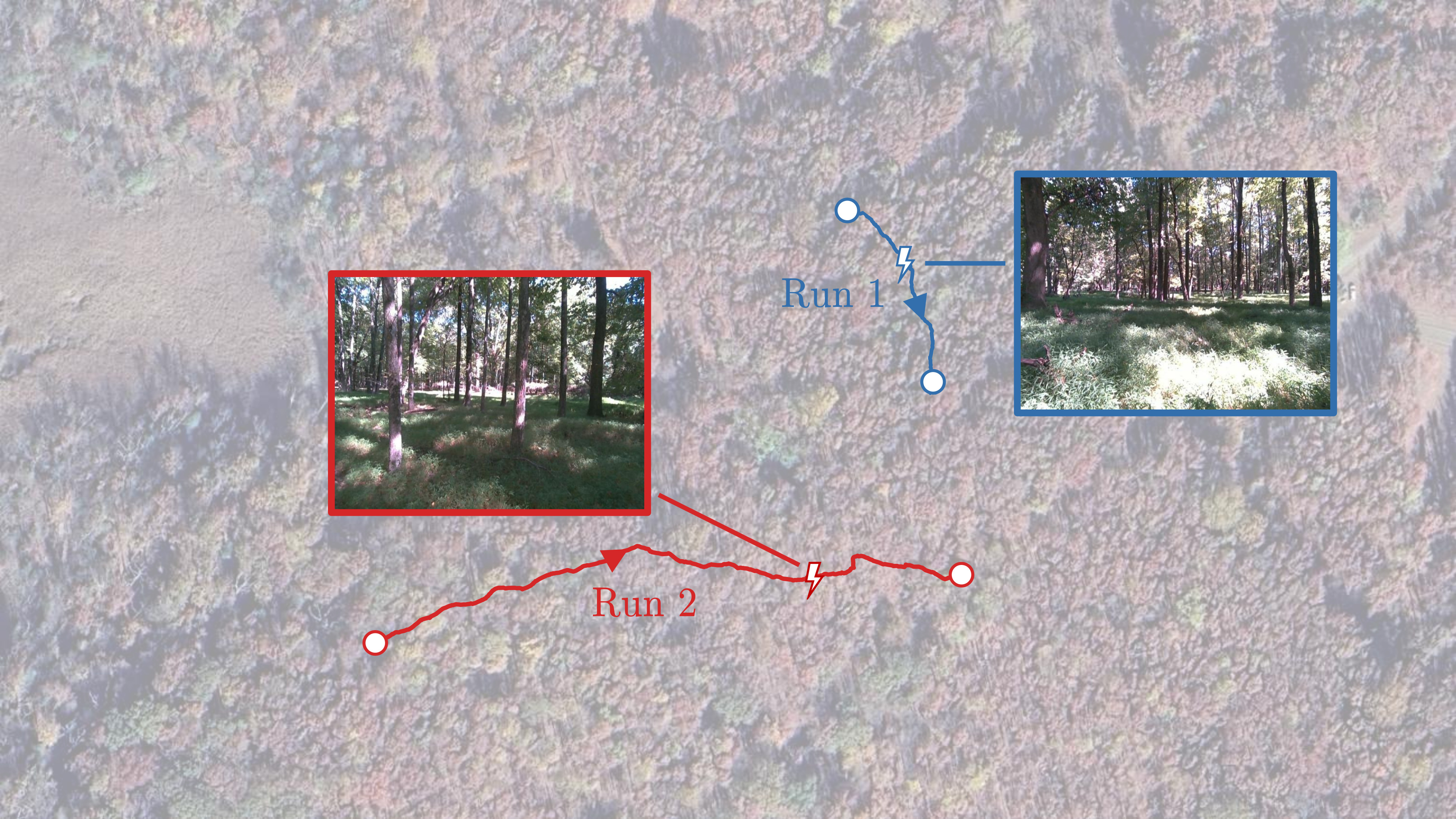

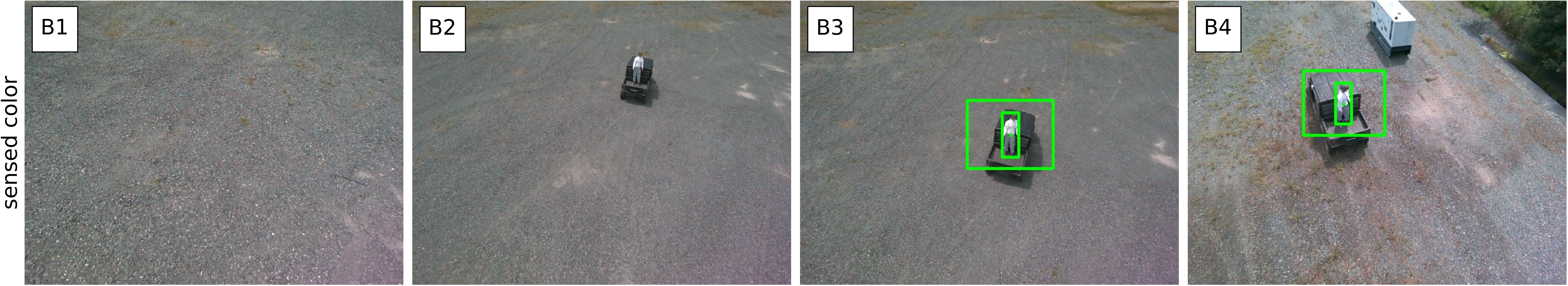

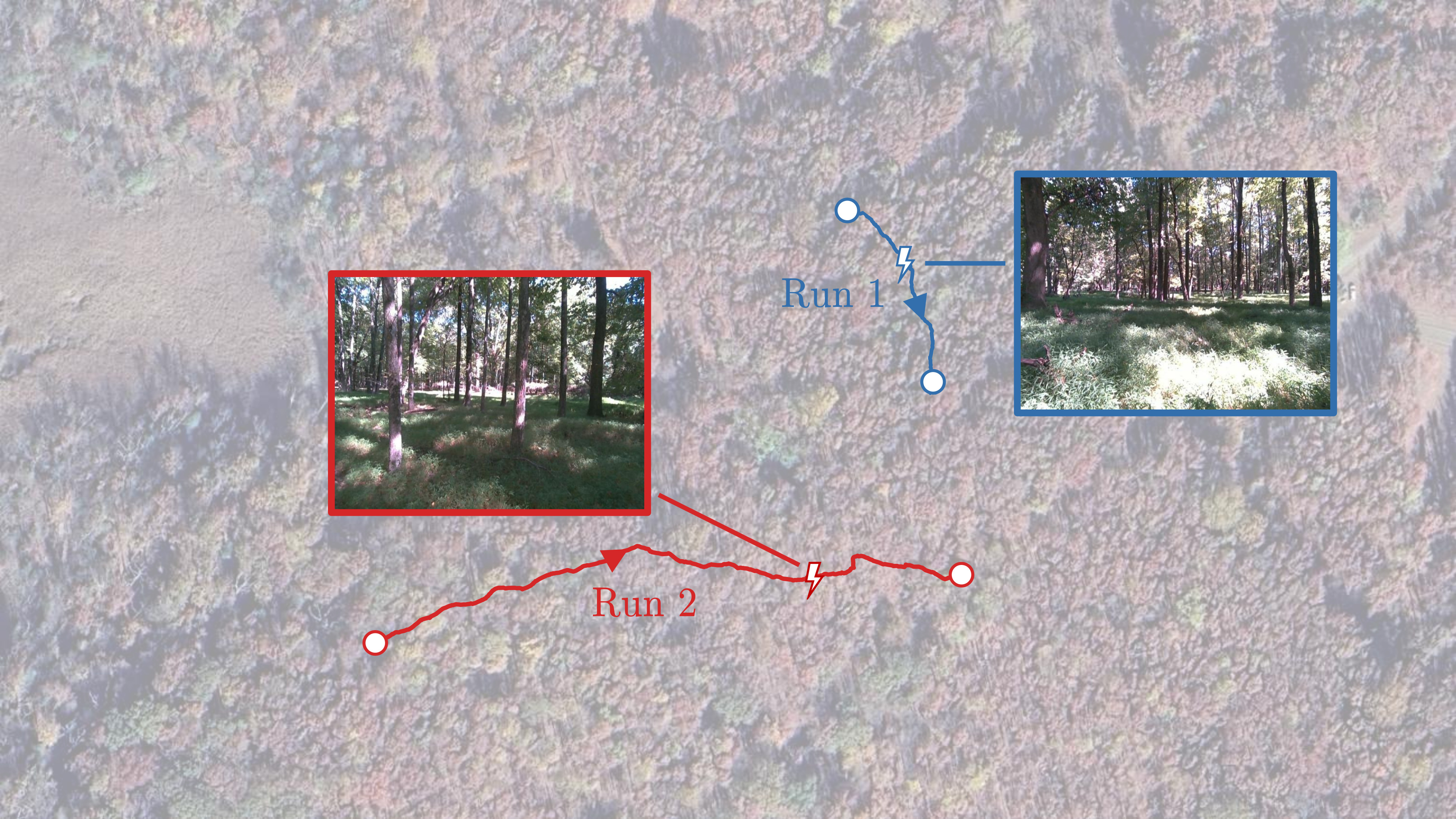

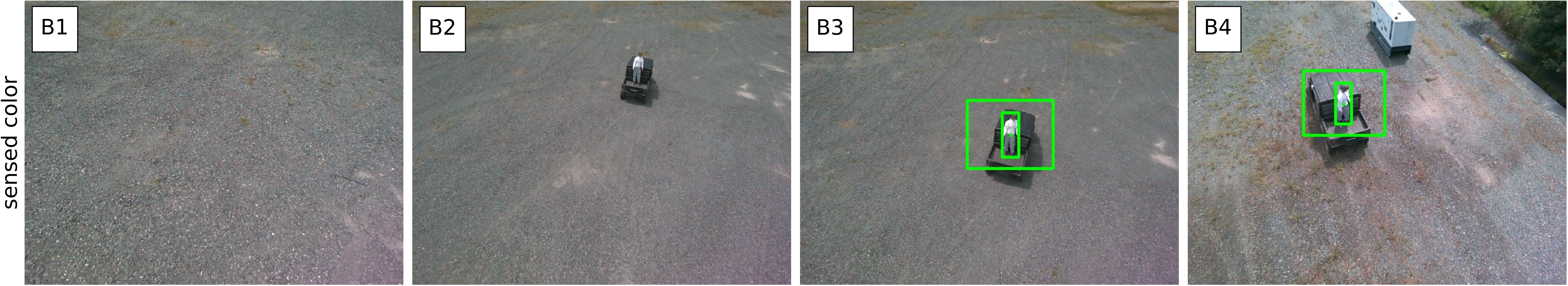

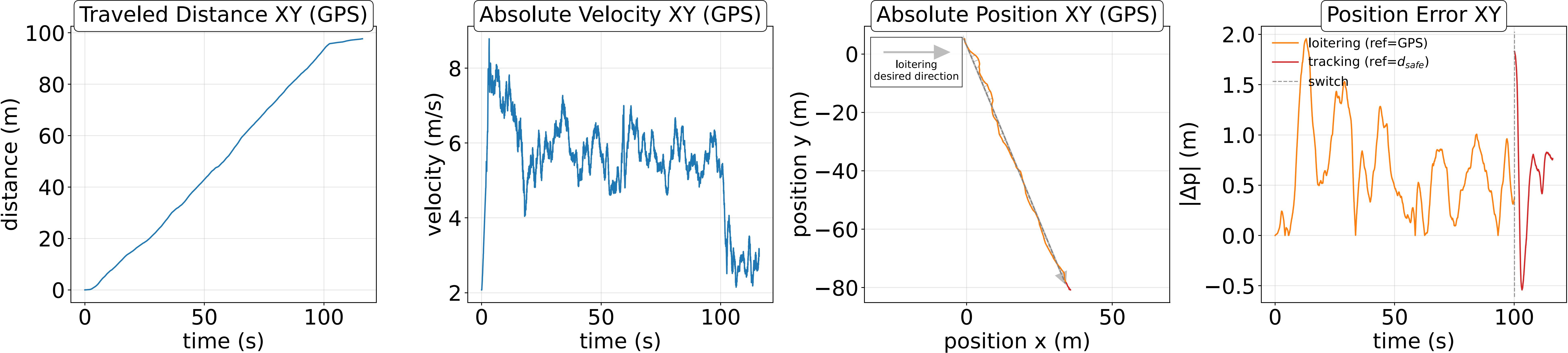

- Seamless mode switching in SAR missions: The UAV transitions between loitering and tracking upon target detection, maintaining bounded displacement errors and robust pursuit even in cluttered and occluded environments.

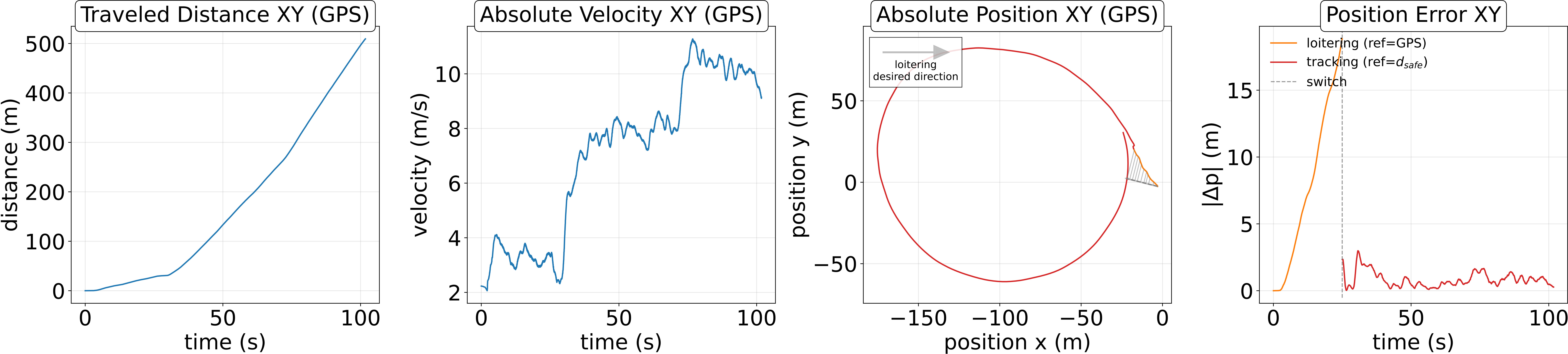

Figure 5: Mode switching between loitering and tracking across three SAR missions, demonstrating robust acquisition and pursuit in urban and forest scenarios.

Quantitative results indicate mean forward velocities of $8.85~\si{m/s}$ (urban search), $7.72~\si{m/s}$ (urban pursuit), and $6.52~\si{m/s}$ (forest search), with peak velocities up to $12.8~\si{m/s}$ and control effort and pitch angles remaining within safe bounds. The system operates without parameter retuning across all environments.

Implications and Future Directions

The instantaneous relative frame formulation in HUNT demonstrates that high-speed traversal and robust tracking can be achieved without global pose or mapping, challenging the necessity of world-centric navigation in degraded environments. The approach is particularly suited for SAR, disaster response, and exploration tasks where global localization is unreliable or unavailable.

Future work should address the integration of semantic reasoning from large vision-LLMs to guide loitering and improve coverage, as well as the introduction of lightweight short-term memory to avoid cyclic loitering and enhance exploration efficiency. The extension of the framework to multi-agent scenarios, cooperative search, and dynamic environments with moving obstacles is a promising direction.

Conclusion

HUNT provides a unified, real-time framework for high-speed UAV navigation and tracking in unstructured, GPS-denied environments by anchoring all objectives and constraints in an instantaneous relative frame. The system achieves robust autonomy, fast traversal, and reliable target acquisition and pursuit, validated in diverse real-world missions. The elimination of global pose estimation and reliance on instantaneous observables represent a significant step toward resilient autonomy in challenging environments.