Controls Abstraction Towards Accelerator Physics: A Middle Layer Python Package for Particle Accelerator Control

Abstract: Control system middle layers act as a co-ordination and communication bridge between end users, including operators, system experts, scientists, and experimental users, and the low-level control system interface. This article describes a Python package -- Controls Abstraction Towards Accelerator Physics (CATAP) -- which aims to build on previous experience and provide a modern Python-based middle layer with explicit abstraction, YAML-based configuration, and procedural code generation. CATAP provides a structured and coherent interface to a control system, allowing researchers and operators to centralize higher-level control logic and device information. This greatly reduces the amount of code that a user must write to perform a task, and codifies system knowledge that is usually anecdotal. The CATAP design has been deployed at two accelerator facilities, and has been developed to produce a procedurally generated facility-specific middle layer package from configuration files to enable its wider dissemination across other machines.

Explain it Like I'm 14

Overview

This paper introduces a Python software package called CATAP (Controls Abstraction Towards Accelerator Physics). Its job is to make it much easier for scientists and operators to control the very complicated machines used to accelerate particles (like electrons) for research. CATAP sits between the people and the low-level control system, acting like a “universal translator” that turns complicated, machine-specific details into simple, human-friendly commands.

Key Objectives

The paper focuses on solving a few practical problems:

- Make controlling accelerator hardware simpler and safer, so operators don’t need to remember lots of technical details.

- Reduce duplicated code by giving everyone a shared toolkit for common tasks (like turning magnets on or saving images from cameras).

- Handle differences between facilities and devices (which often use different names, settings, and control steps) in a clean, consistent way.

- Automatically generate parts of the control software from simple configuration files, so it’s easy to set up on new machines.

Methods and Approach

What is a “middle layer”?

Think of a particle accelerator like a car with a very tricky engine. The low-level control system is the engine: it has many knobs, switches, and hidden safety checks. The middle layer (CATAP) is like your dashboard and pedals. You don’t need to think about fuel injection or spark timing—you just press the pedal or turn the wheel, and the middle layer handles the complex engine details for you.

How CATAP works

CATAP organizes control in clear steps:

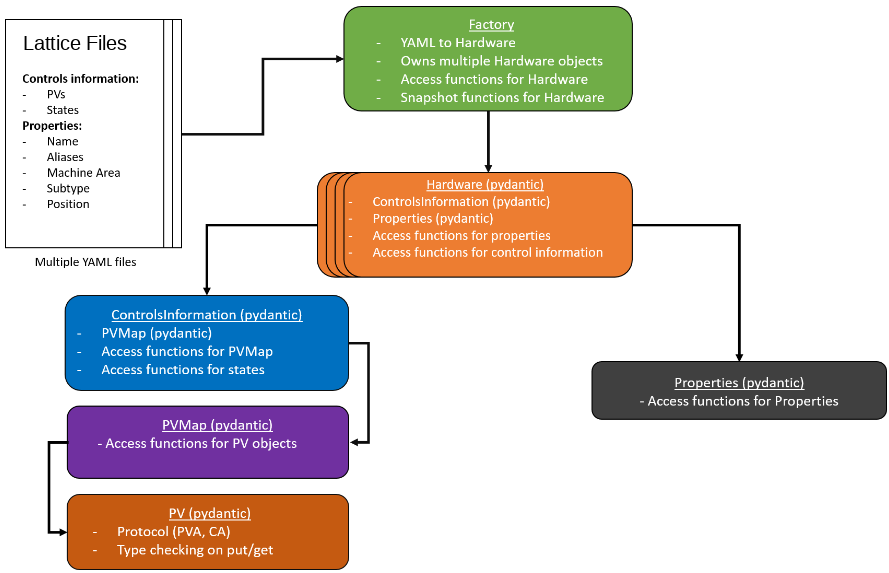

- YAML files: These are simple, human-readable configuration files (like instruction cards) that describe each device: what it’s called, what “buttons” it has, and any special rules. They also include friendly names and positions in the machine.

- PVs (Process Variables): These are the control points the machine exposes (like a knob setting or sensor reading). CATAP connects to them using EPICS, a standard system for accelerator control. EPICS provides two ways to talk to PVs: Channel Access and PVAccess, and CATAP supports both.

- Layers of classes:

  - PVMap: A safe interface to each device’s PVs. It understands types (like on/off, numbers, text) and prevents invalid actions.

  - ControlsInformation: Human-friendly functions that hide messy details (for example, clearing multiple interlocks before turning on a magnet).

  - Properties: Metadata like device name, area, position, limits, and calibration factors.

  - Hardware: The main object you use to control a device (like a Magnet or Camera). It combines all the above and provides simple “do this” functions.

  - Factory: Groups many devices together so you can operate them at once (like “turn off all magnets in Area A”).

  - HighLevelSystem: Combines different kinds of devices that work together as one unit (for example, a laser system that includes mirrors, shutters, a camera, and an energy meter).

- Snapshot: Saves and loads machine settings, like a “save game” for the accelerator. You can compare snapshots to see what changed.
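
The layering described in the list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not CATAP's actual API: the class names mirror the list, but every method, attribute, and PV name here is hypothetical, and a plain dictionary stands in for the EPICS connection.

```python
from dataclasses import dataclass


@dataclass
class PVMap:
    """Typed access to one device's PVs (hypothetical interface)."""
    backend: dict   # stand-in for EPICS: PV name -> value
    names: dict     # attribute -> PV name
    types: dict     # attribute -> expected Python type

    def get(self, attr):
        return self.backend[self.names[attr]]

    def put(self, attr, value):
        # Reject values of the wrong type before they reach the control system.
        if not isinstance(value, self.types[attr]):
            raise TypeError(f"{attr!r} expects {self.types[attr].__name__}")
        self.backend[self.names[attr]] = value


class ControlsInformation:
    """Human-friendly functions that hide multi-step procedures."""
    def __init__(self, pv_map):
        self.pv_map = pv_map

    def switch_on(self):
        # Clear the (hypothetical) interlock flag before powering up.
        self.pv_map.put("interlock", False)
        self.pv_map.put("power", True)

    def switch_off(self):
        self.pv_map.put("power", False)


@dataclass
class Properties:
    """Static metadata about a device."""
    name: str
    area: str


class Magnet:
    """Hardware object: combines controls logic with metadata."""
    def __init__(self, controls, properties):
        self.controls = controls
        self.properties = properties

    def switch_on(self):
        self.controls.switch_on()

    def switch_off(self):
        self.controls.switch_off()

    @property
    def is_on(self):
        return self.controls.pv_map.get("power")


class MagnetFactory:
    """Groups Magnet objects so they can be operated together."""
    def __init__(self, magnets):
        self.magnets = {m.properties.name: m for m in magnets}

    def switch_off_area(self, area):
        switched = []
        for name, magnet in self.magnets.items():
            if magnet.properties.area == area:
                magnet.switch_off()
                switched.append(name)
        return switched


def make_magnet(backend, name, area):
    """Build one Magnet whose PVs start as powered on with no interlock."""
    names = {"power": f"{name}:POWER", "interlock": f"{name}:ILK"}
    types = {"power": bool, "interlock": bool}
    backend.update({names["power"]: True, names["interlock"]: False})
    pv_map = PVMap(backend, names, types)
    return Magnet(ControlsInformation(pv_map), Properties(name, area))


backend = {}
factory = MagnetFactory([
    make_magnet(backend, "QUAD-01", "A"),
    make_magnet(backend, "QUAD-02", "A"),
    make_magnet(backend, "QUAD-03", "B"),
])
switched_off = factory.switch_off_area("A")
```

In this sketch the user only ever calls `factory.switch_off_area("A")`; the PV names, the type check, and the interlock step stay inside the lower layers.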

Procedural code generation

Not every lab uses the same names or control steps. CATAP can automatically generate much of its code from YAML files using templates. Think of it like building software with LEGO instructions: you provide the device details in YAML, and CATAP builds the basic blocks for you. Then each facility can add its special procedures on top (for example, how a particular camera must be prepared before saving images).
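
The template idea can be illustrated with a small, dependency-free sketch. The paper uses Jinja and YAML; here the standard library's `string.Template` stands in for Jinja and a dictionary stands in for a parsed YAML file, so the example runs anywhere. The configuration keys, the generated class layout, and the PV suffixes `SETI` and `READI` are assumptions made for illustration.

```python
from string import Template

# Stand-in for a parsed YAML device description (all keys are hypothetical).
device_config = {
    "class_name": "Magnet",
    "pvs": {"current_setpoint": "SETI", "current_readback": "READI"},
}

# Template for the generated class; ${...} placeholders are filled per device.
CLASS_TEMPLATE = Template('''\
class ${class_name}PVMap:
    """Generated from configuration; do not edit by hand."""

    def __init__(self, root):
        self.root = root
${properties}
''')

# Template for one generated property per configured PV.
PROPERTY_TEMPLATE = Template('''\
    @property
    def ${attr}(self):
        return f"{self.root}:${suffix}"
''')


def generate_source(config):
    """Render Python source code for one device class from its configuration."""
    properties = "\n".join(
        PROPERTY_TEMPLATE.substitute(attr=attr, suffix=suffix)
        for attr, suffix in config["pvs"].items()
    )
    return CLASS_TEMPLATE.substitute(
        class_name=config["class_name"], properties=properties
    )


source = generate_source(device_config)

# Executing the generated source is acceptable for a trusted sketch; a real
# generator would more likely write the source to a module file.
namespace = {}
exec(source, namespace)
pv_map = namespace["MagnetPVMap"]("QUAD:L1B:0385")
setpoint_pv = pv_map.current_setpoint
```

A facility-specific child class could then subclass the generated `MagnetPVMap` to add its own procedures without touching the generated base.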

Testing with a virtual accelerator

Before trying things on the real machine, CATAP is tested on a “virtual accelerator”: a simulated control system that behaves like the real one. This lets developers write and test scripts safely, without interrupting live operations.
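
One way to picture the switch between the virtual and the real machine is a name-resolution helper driven by an environment variable. The `VM-` prefix for virtual PVs is quoted from the paper in the glossary of this summary; the environment variable name, its values, and the PV name below are hypothetical.

```python
import os

VIRTUAL_PREFIX = "VM-"               # prefix quoted in the paper for virtual PVs
MODE_VARIABLE = "CATAP_SKETCH_MODE"  # hypothetical environment variable name


def resolve_pv_name(pv_name, environ=os.environ):
    """Return the PV name to connect to, prefixed when targeting the VA."""
    mode = environ.get(MODE_VARIABLE, "physical").lower()
    if mode == "virtual":
        return VIRTUAL_PREFIX + pv_name
    return pv_name


# Passing a dictionary instead of os.environ keeps the example deterministic.
live_name = resolve_pv_name("MAG-01:SETI", environ={})
virtual_name = resolve_pv_name("MAG-01:SETI", environ={MODE_VARIABLE: "virtual"})
```

Because the script itself never hard-codes the prefix, the same code can be pointed at either system by changing the environment only.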

Main Findings and Results

Here are the main outcomes of the work:

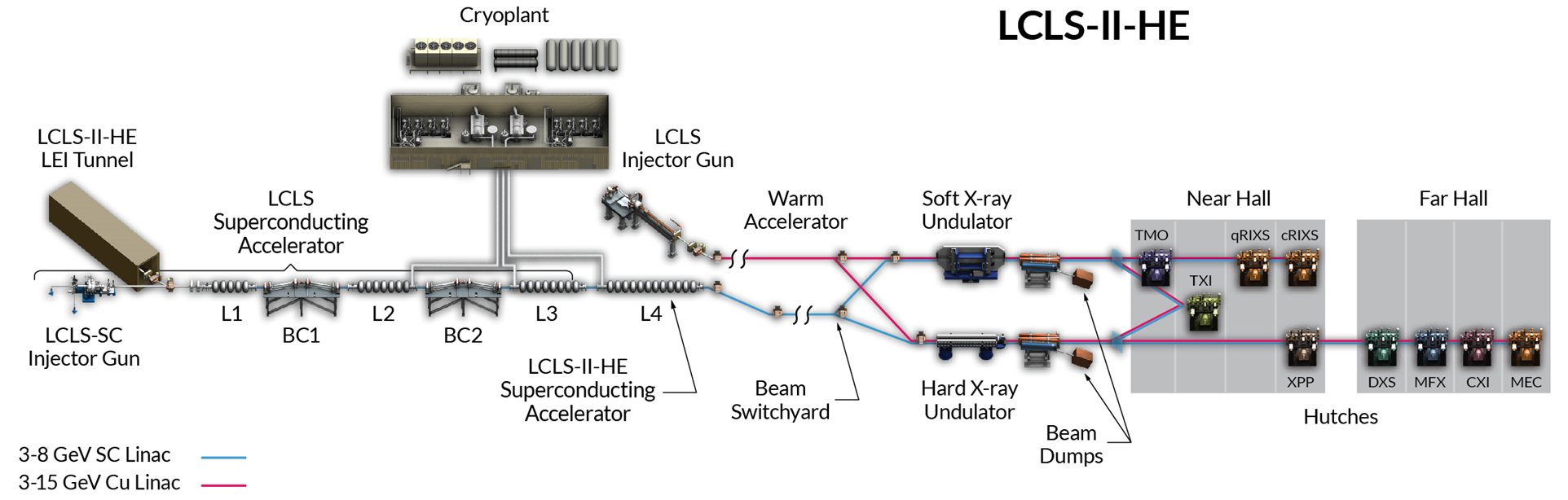

- CATAP has been deployed at two major facilities, LCLS (USA) and CLARA (UK), even though they have different scales and naming conventions.

- It simplifies everyday tasks, such as:

  - Turning on/off large groups of magnets with a few lines of code.

  - Inserting and checking diagnostic screens safely.

  - Coordinating multiple devices in a laser system to scan positions or energies.

  - Saving images from cameras with one command instead of many steps.

- It reduces duplicated code and makes applications more consistent across teams.

- Snapshots allow operators to save, load, and compare machine states, which is helpful for experiments and troubleshooting.

- Automatic code generation from YAML files speeds up setup for new devices and facilities.

- The design supports both EPICS control protocols (Channel Access and PVAccess), and is open to adding others in the future.
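
The save-and-compare idea behind snapshots, mentioned in the list above, can be sketched as follows. This is not CATAP's Snapshot interface: the function names, the numeric tolerance, and the PV names are assumptions, and a dictionary stands in for the machine.

```python
def take_snapshot(read_pv, pv_names):
    """Read every listed PV once and return {pv_name: value}."""
    return {name: read_pv(name) for name in pv_names}


def is_number(value):
    """True for ints and floats, but not for booleans."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def compare_snapshots(saved, current, tolerance=1e-6):
    """Return {pv_name: (saved_value, current_value)} for entries that differ."""
    differences = {}
    for name in sorted(saved.keys() | current.keys()):
        old, new = saved.get(name), current.get(name)
        if is_number(old) and is_number(new):
            changed = abs(old - new) > tolerance
        else:
            changed = old != new
        if changed:
            differences[name] = (old, new)
    return differences


# A dictionary plays the role of the machine (hypothetical PV names).
machine = {"MAG-01:SETI": 1.5, "MAG-02:SETI": -0.3, "SCR-01:STATE": "OUT"}
saved = take_snapshot(machine.get, list(machine))

machine["MAG-02:SETI"] = -0.25   # someone retunes a magnet
machine["SCR-01:STATE"] = "IN"   # and inserts a screen
current = take_snapshot(machine.get, list(machine))

diff = compare_snapshots(saved, current)
```

Here `diff` holds only the two entries that changed, each as a (saved, current) pair, which is the information an operator would want before deciding whether to restore a saved state.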

Why This Is Important

CATAP helps scientists and operators focus on physics rather than low-level control details. It makes accelerator operations:

- Safer, by enforcing correct procedures and checking device states.

- Faster, by letting you control many devices at once and reuse well-tested functions.

- More consistent, because shared code reduces mistakes and divergence.

- Easier to share, since facilities can adapt CATAP to their specific needs using simple YAML files and templates.

In the long run, this approach can speed up research, lower maintenance costs, and make it simpler for new facilities to adopt modern, user-friendly control tools.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of the paper’s unresolved issues and areas needing further investigation or validation.

- Scalability and performance at LCLS scale: No benchmarks on connection latency, throughput, update rates, memory footprint, and CPU overhead when operating across “millions of PVs,” nor guidance on sharding, batching, or rate-limiting to avoid IOC overload.

- Deterministic timing and real-time constraints: Unclear whether Python, pyepics/p4p, and CATAP can meet deterministic timing needs (e.g., LCLS-sc at 1 MHz), including event-synchronous acquisition, shot-by-shot alignment, and jitter bounds.

- Concurrency model for group operations: No specification of sequencing vs parallelization when performing operations like “turn on all magnets,” including dependency resolution, throttling, and avoiding race conditions or IOC saturation.

- Multi-user conflict resolution: Missing mechanisms for locking, leasing, or arbitration when multiple applications/scripts attempt to control the same device(s) concurrently.

- Transactional safety of bulk changes: No support described for atomic multi-device operations, rollback on failure, idempotency guarantees, or dry-run/simulation modes before applying snapshots.

- Safety interlock integration and assurance: Abstracting interlocks is appealing, but there’s no formal safety model, verification, or certification showing CATAP cannot bypass or mis-order safety-critical sequences.

- Security, authentication, and authorization: No treatment of EPICS Access Security integration, role-based controls, audit logging, or protections against misuse of dangerous actions (e.g., powering hardware) via CATAP.

- Error handling and resilience: Unclear strategy for handling PV disconnects, IOC restarts, stale data, partial failures during group operations, and standardized retry/backoff policies.

- Time synchronization and data provenance: Snapshots lack explicit per-PV timestamps, timing system integration (e.g., event codes), clock synchronization (NTP/PTP), and provenance trails for later analysis or compliance.

- Statistical PV buffering policy: No documented buffer size limits, memory management, sampling rates, retention/eviction policies, thread-safety, or backpressure handling under high data rates.

- Schema validation of YAML configurations: While pydantic is used for type checking, a complete, versioned schema (e.g., JSON Schema) for YAML files and a migration strategy for schema evolution are not described.

- Configuration drift and source-of-truth integration: No automated reconciliation between facility databases (e.g., LCLS Oracle) and YAML configs, drift detection, or update workflows to prevent desynchronization.

- Generated code maintainability: Lack of guidance on versioning templates, diffing and debugging generated classes, ensuring backward compatibility, and propagating template updates without breaking facility overrides.

- Security of procedural code generation: No discussion of sanitizing YAML inputs, preventing code injection or unsafe content in generated Python, and enforcing safe templating practices.

- Cross-protocol abstraction: PVAccess and Channel Access are supported, but a unified abstraction for additional protocols (TANGO, DOOCS, OPC-UA) is not specified; open questions include error semantics and feature parity across protocols.

- Device coverage breadth: Limited examples (magnets, BPMs, screens, cameras, laser subsystems); unclear readiness for RF cavities, timing systems, vacuum controls, environmental systems, and specialized diagnostics.

- Unit handling and conversions: Units are optional in YAML; there’s no robust enforcement of unit consistency, automatic conversions, or integration with a standardized units library across protocols.

- Formalization of HighLevelSystem logic: No standard DSL/state-machine specification for expressing multi-device coordination, dependencies, and safe sequences; logic remains ad-hoc in facility-specific code.

- Testing strategy beyond VA: The virtual accelerator supports development, but there’s no documented test coverage goals, HIL (hardware-in-the-loop) testing, CI/CD pipelines, regression tests for device logic, or stress/failure scenarios.

- Benchmarking and comparative evaluation: No quantitative comparison to existing middle layers (e.g., MATLAB Middle Layer, pyAML) in performance, ergonomics, maintainability, or migration complexity.

- Observability of CATAP itself: Missing built-in logging standards, metrics/telemetry, health checks, and tracing to monitor CATAP’s behavior and diagnose field issues.

- Snapshot safety and policy: No defined pre-checks, safety gates, approval workflows, or staged application for dangerous changes; unclear ordering guarantees and recovery if mid-apply failures occur.

- Integration with archival systems: No linkage to EPICS Archiver Appliance or data lakes; unclear how snapshots complement/replace long-term archival and how to query historical states programmatically.

- Governance and change management: Absent procedures for who owns YAML updates, review/approval processes, versioning policies, and how operational changes propagate safely to production.

- Effort to adopt at new facilities: No quantified estimate of configuration effort, onboarding steps, or best-practice templates to minimize custom code needed for facility-specific procedures.

- Asynchronous/event-driven programming model: Unclear support for async I/O, callbacks, and scheduling for coordinated scans (e.g., mirrors + cameras) under high rates, and how to avoid blocking operations.

- Integration with physics modeling and online optics: No interfaces to MAD-X/elegant or model-based control workflows (e.g., lattice synchronization, optics-based procedures, and model validation).

- License and compliance: The repositories are referenced but license terms, constraints for proprietary facilities, and compliance implications are not stated.

- Cross-platform deployment and environment management: No documentation on packaging, Python environment reproducibility, compatibility across OS/distributions, containerization standards, and dependency pinning.
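
To make one of the gaps above concrete, the buffering question for statistical PVs comes down to choosing a size limit and an eviction rule. The sketch below shows one simple policy, a fixed-size buffer that drops the oldest sample. It is an illustration of the open question, not a description of how CATAP buffers data; the class and method names are hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class StatisticalBuffer:
    """Fixed-size rolling buffer with summary statistics (hypothetical)."""

    def __init__(self, max_size):
        # A deque with maxlen evicts the oldest value once the buffer is full.
        self._values = deque(maxlen=max_size)

    def append(self, value):
        self._values.append(float(value))

    def mean(self):
        return mean(self._values)

    def std(self):
        # Population standard deviation of the buffered samples.
        return pstdev(self._values)

    def __len__(self):
        return len(self._values)


buffer = StatisticalBuffer(max_size=3)
for reading in [1, 2, 3, 4]:
    buffer.append(reading)

buffer_mean = buffer.mean()
buffer_std = buffer.std()
```

After four readings only the last three remain, so memory use is bounded by `max_size`; thread-safety and backpressure, also listed above, are not addressed by this sketch.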

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that leverage CATAP’s abstractions, YAML-driven configuration, and procedural code generation, with sector tags and key assumptions noted where relevant.

- Accelerator operations: batch device orchestration and safety-aware workflows (Sector: research infrastructure, software/automation)

- Switch on/off large sets of magnets by area; retract all upstream screens before inserting a diagnostic screen; degauss and apply magnet setpoints with interlock handling via unified Hardware/Factory APIs.

- Potential tools/products: “Device Factory CLI” for area-wide actions; “Screen Safety Checker” microservice; “Magnet Power Sequencer.”

- Assumptions/dependencies: EPICS (Channel Access or PVAccess) availability; accurate interlock mappings in YAML; operator permissions for power operations.

- Machine state capture, comparison, and restore (Snapshot) (Sector: research infrastructure, data/software)

- Save facility state across heterogeneous devices in YAML; compare current vs. saved states; apply vetted configurations with device-specific logic (e.g., magnet ramping).

- Potential tools/products: “Snapshot Manager GUI,” “State Diff Tool” integrated in control rooms; automated rollback scripts for commissioning and recovery.

- Assumptions/dependencies: device-specific apply_snapshot logic is correct; governance for who can restore settings; versioned snapshot files.

- High-Level Systems for complex, coordinated procedures (e.g., photoinjector laser, bunch compressor chicane) (Sector: research infrastructure, robotics/automation)

- Single API to coordinate shutters, mirrors, cameras, attenuators, stages, and diagnostics; enable laser-position scans and energy scans with synchronized data capture.

- Potential tools/products: “Photoinjector Laser Assistant,” “Chicane Coordinator,” with live feedback from BPMs and cameras.

- Assumptions/dependencies: complete component coverage in YAML; calibration factors present and current; camera file paths and acquisition modes configured.

- Virtual Accelerator (VA)–based development and test (Sector: software engineering, education/training)

- Safely prototype HLAs and middle-layer logic against a Dockerized or on-prem EPICS VA; simulate ramping and noise; swap between VA and live system via environment variables.

- Potential tools/products: “VA Sandbox” CI pipeline; student/operator training modules using the VA.

- Assumptions/dependencies: representative VA PV coverage; policy permitting simulated writes to read-only parameters in VA; environment variable switching discipline.

- Procedural middle-layer generation from YAML + Jinja (Sector: software tooling, operations)

- Generate facility-specific PVMap, ControlsInformation, Hardware, and Factory base classes; add facility child classes for special procedures; auto-document from YAML descriptions.

- Potential tools/products: “Middle-Layer SDK,” “YAML-to-Python Generator,” “Device Template Registry.”

- Assumptions/dependencies: consistent YAML schemas; accessible device metadata (e.g., Oracle DB at LCLS); Jinja/pydantic runtime dependencies.

- MATLAB-to-Python migration for HLAs (Sector: software, operations, finance)

- Replace GUIDE-based applications with modular Python HLAs using CATAP abstractions; reduce license overhead; improve maintainability and code reuse.

- Potential tools/products: “HLA Migration Toolkit” mapping common workflows to CATAP APIs; standardized Python device libraries.

- Assumptions/dependencies: availability of Python expertise; acceptance of operational change; parity in functionality and performance.

- Cross-facility device libraries and documentation (Sector: research, education)

- Version-controlled YAML “lattice”/device configs serve as a living registry of device properties, enums, units, calibration dates, soft limits, and aliases; reduce anecdotal knowledge.

- Potential tools/products: “Device Registry Portal,” automated docs from YAML, calibration status dashboards.

- Assumptions/dependencies: commitment to keep configs current; naming conventions agreed; review workflows for metadata changes.

- Operations utilities and health checks (Sector: operations, reliability)

- Convenience scripts to open/close valves, perform area shutdowns, acquire BPM statistics, run health checks across devices; unify heterogeneous interfaces.

- Potential tools/products: “Ops Toolkit” repository; shift-time checklists automated via Factory calls.

- Assumptions/dependencies: correct PV type assignments (binary/state/scalar/waveform/statistical); reliable network; proper access roles.

- Training and onboarding (Sector: education/training)

- Human-readable APIs and YAML reduce barrier for new operators; interactive exercises via VA; self-documenting code for device behavior and nomenclature mapping.

- Potential tools/products: “CATAP 101” curriculum; guided labs with Snapshot/Factory exercises.

- Assumptions/dependencies: training time allocated; curated examples; coaching on failure modes.

- Broader EPICS facilities and labs (Sector: neutron sources, synchrotrons, telescopes, industrial labs)

- Immediate adoption of CATAP-style middle layer at EPICS-based sites to streamline control-room applications, reduce duplicated code, and improve reproducibility.

- Assumptions/dependencies: EPICS stack present; willingness to adopt YAML schema; minimal local adaptations in child classes.
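
The screen workflow named in the first use case above (retract all upstream screens before inserting a diagnostic screen) can be sketched as a short procedure. The `Screen` class, its fields, and the device names are hypothetical, and a real implementation would write to PVs and wait for readbacks rather than flip a flag.

```python
from dataclasses import dataclass


@dataclass
class Screen:
    name: str
    position: float       # distance along the beamline (hypothetical field)
    inserted: bool = False


def insert_screen_safely(screens, target_name):
    """Retract every inserted screen upstream of the target, then insert it.

    Returns the names of the screens that had to be retracted.
    """
    by_name = {screen.name: screen for screen in screens}
    target = by_name[target_name]
    retracted = []
    for screen in sorted(screens, key=lambda s: s.position):
        if screen.position < target.position and screen.inserted:
            screen.inserted = False
            retracted.append(screen.name)
    target.inserted = True
    return retracted


screens = [
    Screen("SCR-01", position=1.0, inserted=True),
    Screen("SCR-02", position=3.5),
    Screen("SCR-03", position=7.2, inserted=True),
]
retracted = insert_screen_safely(screens, "SCR-02")
```

Only `SCR-01` is retracted here, because `SCR-03` sits downstream of the target and does not block the beam from reaching it.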

Long-Term Applications

These opportunities require further development, scaling, standardization, or cross-protocol integration before widespread deployment.

- Unified, protocol-agnostic middle layer (EPICS + TANGO + DOOCS and beyond) (Sector: research infrastructure, industrial ICS)

- Abstract device control across multiple ICS protocols; mix protocols inside a single Hardware definition; vendor-neutral orchestration.

- Potential tools/products: “CATAP-X” multi-protocol core; plug-ins for TANGO/DOOCS; cross-protocol device templates.

- Assumptions/dependencies: robust adapters; community consensus on schemas; security review for protocol bridging.

- Standardized device description schemas and template marketplace (Sector: software ecosystems, policy/standards)

- Community-maintained YAML/JSON schemas for magnets, BPMs, cameras, shutters, TDCs, etc.; shared template catalog with validation, linting, and CI.

- Potential tools/products: “Device Template Hub,” schema validators, asset catalogs; governance via standards bodies or consortia.

- Assumptions/dependencies: stakeholder buy-in; versioning and compatibility policy; quality assurance for templates.

- Digital twins and ML-in-the-loop optimization (Sector: software/AI, energy-efficient operations)

- Integrate Snapshot + VA with physics models to form digital twins; standard data buffers enable ML optimization of tuning (e.g., emittance, orbit correction).

- Potential tools/products: “Twin+CATAP” optimization services; reinforcement-learning agents using Factory APIs; anomaly detection from statistical PV buffers.

- Assumptions/dependencies: high-fidelity models; safe exploration policies; compute resources; rigorous rollback and guardrails.

- Autonomous or semi-autonomous operations (Sector: robotics/automation, safety)

- Policy-aware sequencers that monitor interlocks, limits, soft constraints, and statistical PVs to perform routine tuning and shutdown/startup autonomously.

- Potential tools/products: “Ops Autopilot,” policy engines with auditable decision logs; alarm response workflows.

- Assumptions/dependencies: formalized safety policies; certification; robust failure handling; human-in-the-loop controls.

- Cross-facility reproducibility and experiment portability (Sector: academia, collaboration)

- Share Snapshot + script bundles to reproduce setups across labs; standardized abstractions reduce porting cost; promote open science practices.

- Potential tools/products: “Experiment Capsule” format (YAML+code+data), import/export tools; repositories for sharable setups.

- Assumptions/dependencies: harmonized device semantics; legal/data-sharing policies; calibration and alignment differences handled via Properties.

- Cloud-native simulation and CI/CD for controls (Sector: software engineering)

- Containerized VA for automated testing of HLAs and middle-layer changes; pre-merge validation of device templates; continuous deployment to control-room workstations.

- Potential tools/products: “Controls CI,” GitOps pipelines; synthetic load and noise injection frameworks.

- Assumptions/dependencies: secure dev–prod boundaries; performance parity; team workflows.

- Procurement and governance policy impacts (Sector: policy, finance)

- Encourage open-source, Python-based HLAs to lower licensing costs and improve maintainability; mandate device-level documentation and snapshot logging for audits.

- Potential tools/products: policy templates for RFPs; audit-ready Snapshot archives; compliance dashboards.

- Assumptions/dependencies: institutional buy-in; change management; cybersecurity reviews.

- Extension to non-accelerator ICS domains (Sector: energy, manufacturing, healthcare, smart buildings)

- Apply CATAP’s patterns—YAML config, typed PVs, Factory orchestration, snapshots—to SCADA-like environments: power plants, process lines, hospital imaging suites, building automation.

- Potential tools/products: “ICS Middle-Layer Kit” adapted to sector-specific protocols; orchestrators for multi-device procedures (e.g., imaging sequences, line changeovers).

- Assumptions/dependencies: protocol adapters (OPC-UA, Modbus, DICOM); domain-specific safety/interlock logic; regulatory compliance.

- Workforce development and curriculum standardization (Sector: education/training)

- National lab curricula built around VA + CATAP; standardized practicals on device orchestration, safety logic, and reproducibility.

- Potential tools/products: modular training tracks, certification pathways; shared courseware repositories.

- Assumptions/dependencies: cross-institution coordination; sustained funding; instructor training.

- Auditability, provenance, and safety analytics (Sector: safety/compliance)

- Use Snapshot histories and YAML metadata for incident reconstruction, change tracking, and safety analytics; tie into alarm systems for trend analysis.

- Potential tools/products: “Provenance Explorer,” safety analytics dashboards; policy-enforced change logs.

- Assumptions/dependencies: disciplined snapshotting; retention policies; secure storage and access controls.

Glossary

- Accelerator lattice: The structured arrangement of accelerator components (magnets, cavities, diagnostics) along the beamline used to model and control beam transport. "An accelerator lattice consists of a variety of hardware types, including magnets, accelerating cavities, and numerous categories of beam diagnostics, to name only a few."

- Beam loss monitor (BLM): A detector system that measures radiation from lost beam particles to protect equipment and diagnose beam quality. "Currently, magnets, screens, wires, beam position monitors, long beam loss monitors, and transverse deflecting cavities are included in the YAML files."

- Beam position monitor (BPM): A diagnostic device that measures the transverse position of the particle beam. "Currently, magnets, screens, wires, beam position monitors, long beam loss monitors, and transverse deflecting cavities are included in the YAML files."

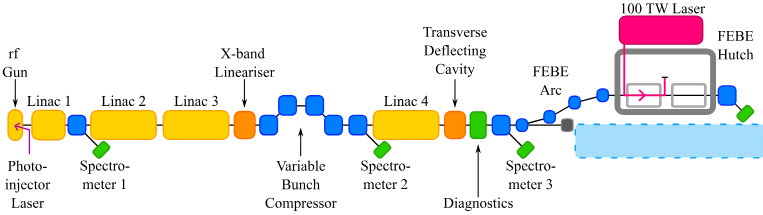

- Bunch compressor chicane: A magnetic chicane used to shorten (compress) the length of an electron bunch by introducing energy-dependent path length differences. "Three additional accelerating cavities and a variable magnetic bunch compressor chicane were installed in 2024"

- CATAP (Controls Abstraction Towards Accelerator Physics): A Python middle-layer framework that abstracts control-system details and provides higher-level, physics-oriented interfaces for accelerator devices. "The package described in this article, known as the Controls Abstraction Towards Accelerator Physics (CATAP), is a Python-based interface to the Experimental Physics and Industrial Control System (EPICS) software package"

- Channel Access: The legacy EPICS network protocol for reading and writing process variables. "built on the pyepics library for Channel Access process variables (PVs)"

- CLARA: A UK linear accelerator facility focused on high-brightness electron beams and user experiments. "CLARA is a high-brightness electron beam facility based on normal-conducting RF, operating at 100 Hz and with a maximum beam energy of 250 MeV."

- Collimator: A device with an aperture used to define or limit the transverse size or halo of the beam, often for protection or measurement. "A diagnostic screen with a camera can also be used for beam measurements, and a collimator is also available."

- Degauss: To demagnetize a magnetic element (e.g., a magnet core) to remove residual magnetization before setting operational fields. "possibly first to degauss the magnet,"

- EPICS (Experimental Physics and Industrial Control System): A distributed control system toolkit widely used in large-scale scientific facilities for device control and data acquisition. "Experimental Physics and Industrial Control System (EPICS) software package"

- FACET-II: An advanced accelerator research facility at SLAC using high-energy electron beams, not primarily for x-ray generation. "FACET-II is not used to generate x-rays, but does have a user community focused on advanced accelerator research, such as plasma wakefield acceleration."

- Factory (CATAP): A CATAP class that instantiates and manages collections of Hardware objects, enabling batch operations and discovery by area/type. "The Factory class consists of groupings of Hardware objects into a single class."

- Hardware (CATAP): The abstraction in CATAP representing a single physical device, combining controls interface and metadata. "The Hardware class incorporates both the ControlsInformation and the Properties that were instantiated based on the configuration file."

- High-Level Applications (HLA): Operator- or scientist-facing applications that orchestrate device operations and measurements using higher-level abstractions. "This enables CATAP and the High-Level Applications (HLA) that use it to exist outside of hardware and control system changes."

- HighLevelSystem (CATAP): A CATAP construct that groups multiple devices into a single logical system for coordinated control and monitoring. "CATAP groups together such elements into a HighLevelSystem."

- Interlock: A protection mechanism that inhibits device operation until safety or readiness conditions are met. "the scientist should not need to be aware of the various interlocks that allow the magnet power supply to be activated,"

- Jinja: A templating engine used to procedurally generate CATAP code from facility-specific YAML definitions. "injected into a template file using the Jinja Python library."

- LCLS: The Linac Coherent Light Source at SLAC, a multi-linac facility producing ultra-bright x-ray pulses. "The tunnel housing LCLS at SLAC contains three linear electron accelerators (linacs) that can operate simultaneously."

- Linac: A linear accelerator that accelerates charged particles along a straight line using RF structures. "The tunnel housing LCLS at SLAC contains three linear electron accelerators (linacs) that can operate simultaneously."

- MAD: A lattice description and optics design convention/tool used to name and model accelerator elements. "Hardware components are assigned a MAD lattice name"

- p4p: A Python client library implementing the EPICS PVAccess protocol. "and p4p for PVAccess PVs"

- Photoinjector: An electron source in which a laser illuminates a photocathode inside an RF gun to produce high-brightness electron bunches. "The CLARA photoinjector laser exists in the middle layer as a HighLevelSystem,"

- Process Variable (PV): A named control-system data point representing a device setting, readback, or status value. "Between the three linacs, there are millions of PVs associated with the RF, magnets, and diagnostics"

- PVAccess: The newer EPICS network protocol supporting structured data and advanced messaging. "It is also possible to define a protocol to use for a given PV entry, which enables communication via PVAccess or ChannelAccess."

- PVMap (CATAP): A CATAP layer that encapsulates and validates the PV connections/types for a device. "Once successfully instantiated, the properties of the PVMap consist of the PV objects defined in the configuration file."

- pyepics: A Python library for interacting with EPICS Channel Access PVs. "built on the pyepics library"

- Quadrupole magnet: A magnet with four poles used to focus the beam in one plane while defocusing in the orthogonal plane. "a quadrupole magnet in the L1B area will have the root PV name of QUAD:L1B:0385"

- Snapshot (machine state): A saved set of device settings and readbacks (including buffered statistics) that can be compared, archived, or reapplied. "all of the relevant controls system data can be captured using a Snapshot interface"

- Superconducting cavity: An RF accelerating structure made of superconducting material enabling high-duty-cycle operation with low losses. "LCLS-sc saw first light in 2023, and is ramping up to deliver 4 GeV beams at 1 MHz with superconducting cavities."

- Transverse deflecting cavity (TDC): An RF cavity that imparts a time-correlated transverse kick to the beam, used for time-resolved diagnostics. "Currently, magnets, screens, wires, beam position monitors, long beam loss monitors, and transverse deflecting cavities are included in the YAML files."

- Undulator: A periodic magnetic structure that forces electrons to oscillate and emit intense, coherent x-ray radiation. "Two undulator lines (tailored for soft and hard x-rays) deliver short (fs) x-ray pulses at photon energies from about 200 eV to 2.5 keV"

- Virtual accelerator (VA): A software-emulated control system mirroring the real machine’s PVs for development and testing. "all of which are prefixed with the string VM- for the virtual accelerator, or VA"

- Wall current monitor (WCM): A beam diagnostic that measures the total bunch charge or current via image currents on the beam pipe wall. "bunch charge measured by a wall current monitor,"

- YAML lattice file: A human-readable configuration file describing device metadata and PV mappings used to generate CATAP device classes. "The controls system information pertaining to a specific hardware object is provided to CATAP in the form of a YAML file."
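
The root PV name quoted in the quadrupole entry above, `QUAD:L1B:0385`, shows how much information a naming convention can carry. A minimal sketch of splitting such a name into fields follows; the field labels (`device_type`, `area`, `unit`) are assumptions made for illustration, and other facilities use different conventions.

```python
from typing import NamedTuple


class RootPV(NamedTuple):
    device_type: str   # e.g. "QUAD" (label is an assumption)
    area: str          # e.g. "L1B"
    unit: str          # kept as text so leading zeros survive


def parse_root_pv(root):
    """Split a colon-separated root PV name into its three fields."""
    parts = root.split(":")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected TYPE:AREA:UNIT, got {root!r}")
    return RootPV(*parts)


quad = parse_root_pv("QUAD:L1B:0385")
```

A middle layer can use fields like these to answer questions such as "which quadrupoles are in area L1B" without the user memorizing PV strings.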
