- The paper presents a novel manifold fitting approach that directly estimates a data manifold to robustly monitor deviations in high-dimensional settings.

- It develops a complementary manifold learning framework that embeds observations into a lower-dimensional space for effective online control.

- Empirical evaluations on synthetic and real-world datasets demonstrate superior fault detection power and practical applicability in industrial applications.

High-Dimensional Statistical Process Control via Manifold Fitting and Learning

Introduction and Motivation

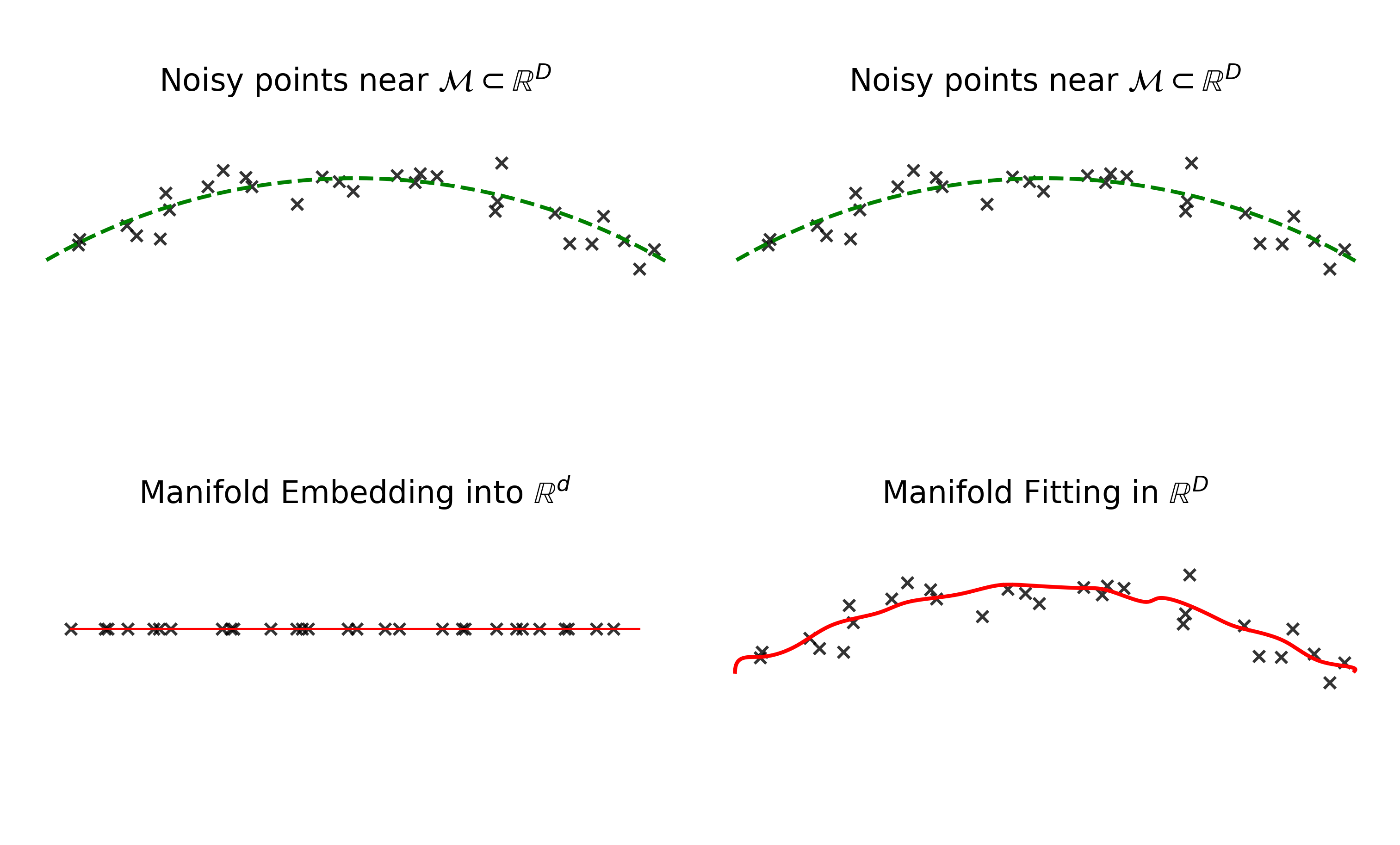

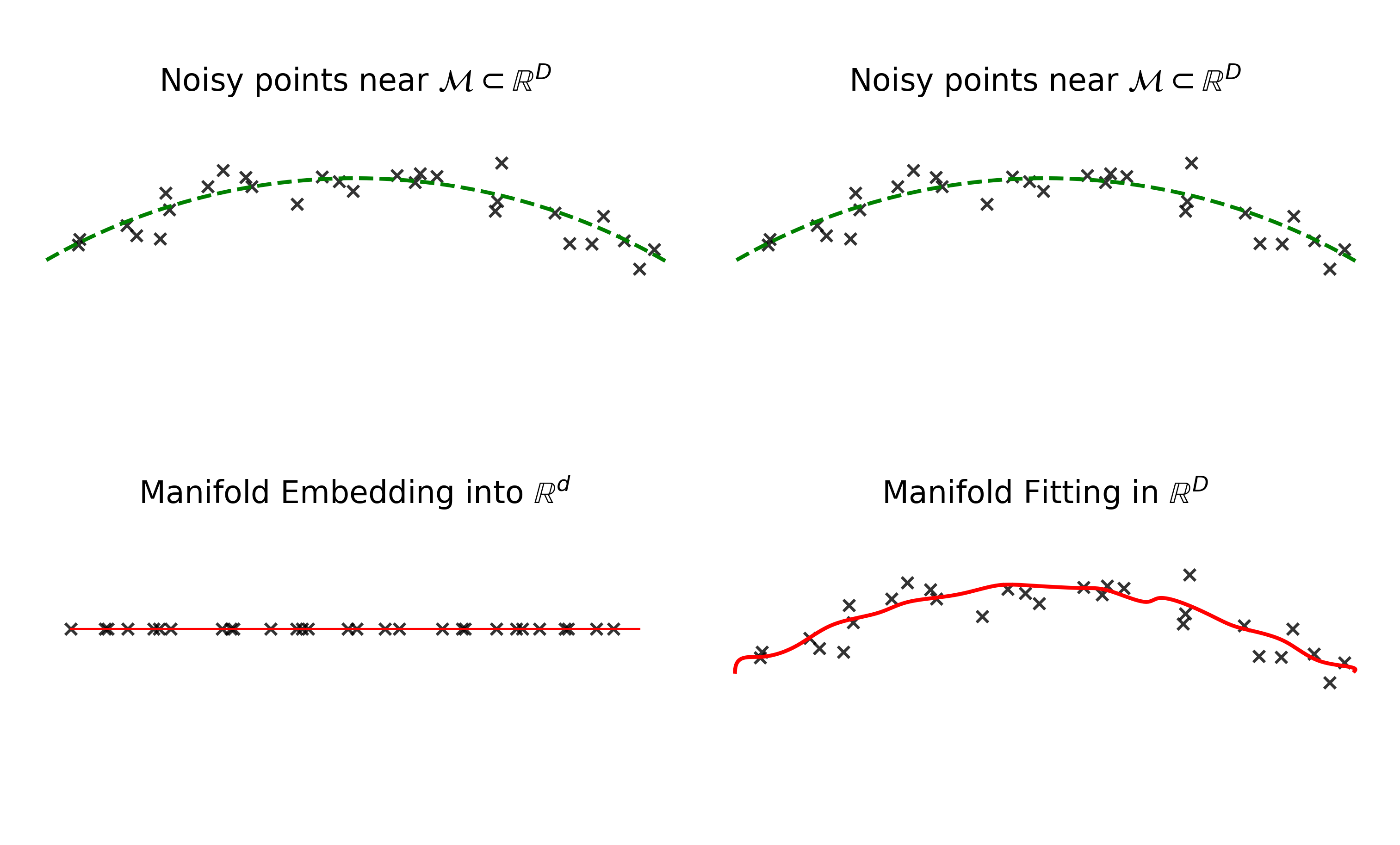

This paper addresses the challenge of Statistical Process Control (SPC) in high-dimensional, dynamic industrial processes, where the data are assumed to reside on a nonlinear, lower-dimensional manifold embedded in a high-dimensional ambient space. Traditional multivariate SPC methods, such as Hotelling’s $T^2$ chart, lose fault detection power as dimensionality increases and are often inapplicable when the number of Phase I observations is smaller than the data dimension. The authors propose two complementary frameworks for online (Phase II) SPC: (1) a manifold fitting approach that directly estimates the data manifold in the ambient space and monitors deviations from it, and (2) a manifold learning approach that embeds the data into a lower-dimensional space and monitors the embedded observations. Both frameworks are designed to provide controllable Type I error rates and are evaluated on synthetic and real-world datasets.

Figure 1: Manifold embedding and fitting, adapted from Yao et al. (2023), illustrating the distinction between direct manifold fitting in ambient space and embedding via manifold learning.

Manifold Fitting-Based SPC Framework

Theoretical Foundations

The manifold fitting approach is predicated on the assumption that, under statistical control and small noise, process observations $Y_t$ lie near a compact, twice-differentiable manifold $M \subset \mathbb{R}^D$ of intrinsic dimension $d < D$. The key monitoring statistic is the Euclidean distance from each observation to the manifold, $\operatorname{dist}(Y_t, M) = \inf_{x \in M} \|Y_t - x\|_2$. This scalar statistic enables the use of a univariate control chart, circumventing the curse of dimensionality and the need for explicit distributional assumptions.
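
As a minimal illustration of the monitoring statistic, consider the special case where the manifold is known exactly: the unit sphere centred at the origin. This is an assumption made only for the example; in the paper the manifold is estimated from data. The infimum then has a closed form, namely the absolute difference between the norm of the observation and the radius. The function name `distance_to_unit_sphere` is hypothetical.

```python
import math

def distance_to_unit_sphere(y):
    """Euclidean distance from point y to the unit sphere centred at the origin."""
    norm = math.sqrt(sum(c * c for c in y))
    # The nearest point on the sphere is y / ||y||, so the distance is | ||y|| - 1 |.
    return abs(norm - 1.0)

on_manifold = [0.0, 0.6, 0.8]    # lies on the sphere up to rounding
off_manifold = [0.0, 0.0, 1.5]   # shifted away from the sphere

print(distance_to_unit_sphere(on_manifold))
print(distance_to_unit_sphere(off_manifold))
```

Whatever the ambient dimension, each observation is reduced to a single non-negative number, which is what makes a univariate chart possible.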

Manifold Fitting Algorithm

The manifold fitting procedure is based on the method of Yao et al. (2023), which achieves a Hausdorff estimation error of $O(\sigma^2 \log(1/\sigma))$ with $m = O(\sigma^{-(d+3)})$ samples, without requiring prior knowledge of $d$. The algorithm consists of:

- Contraction Direction Estimation: For each point $z$, construct a Euclidean ball $B_D(z, r_0)$ and compute a weighted average of points within the ball to estimate the direction toward the manifold.

- Local Contraction: Construct a hyper-cylinder aligned with the estimated direction and compute a weighted average of points within the cylinder to estimate the projection $\hat{\pi}(z)$ onto the manifold.

- Noise Estimation: Iteratively estimate the noise level σ from the residuals of projections.

These steps yield a smooth submanifold $\hat{M}$ approximating $M$, with theoretical guarantees on the estimation error.
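
The idea of contracting a point toward the manifold by local weighted averaging can be sketched as follows. This is a deliberately simplified stand-in, not the algorithm of Yao et al. (2023): it uses a single ball, omits the hyper-cylinder step and the noise estimation, and the kernel, the radius, and the function name `local_average_projection` are all assumptions made for illustration.

```python
import numpy as np

def local_average_projection(z, samples, radius):
    """Move z toward the sample cloud via a kernel-weighted local mean.

    Simplified sketch: one Euclidean ball around z, no cylinder refinement.
    """
    dists = np.linalg.norm(samples - z, axis=1)
    inside = dists < radius
    if not np.any(inside):
        return z.copy()  # no neighbours in the ball: leave the point unchanged
    # Smooth weights that vanish at the ball boundary (hypothetical kernel choice)
    weights = (1.0 - (dists[inside] / radius) ** 2) ** 2
    return weights @ samples[inside] / weights.sum()

rng = np.random.default_rng(0)
# Phase I sample: noisy points near the unit circle in R^2
angles = rng.uniform(0.0, 2.0 * np.pi, size=2000)
circle = np.column_stack([np.cos(angles), np.sin(angles)])
samples = circle + 0.02 * rng.normal(size=circle.shape)

z = np.array([1.2, 0.0])                       # query point off the manifold
z_hat = local_average_projection(z, samples, radius=0.4)
deviation = np.linalg.norm(z - z_hat)          # estimated distance to the manifold
print(z_hat, deviation)
```

A plain local mean of points on a curved set falls slightly inside the curve, so this sketch is biased toward the interior of the circle. Restricting the average to a cylinder aligned with the contraction direction, as in the second step above, is one way to reduce that kind of bias.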

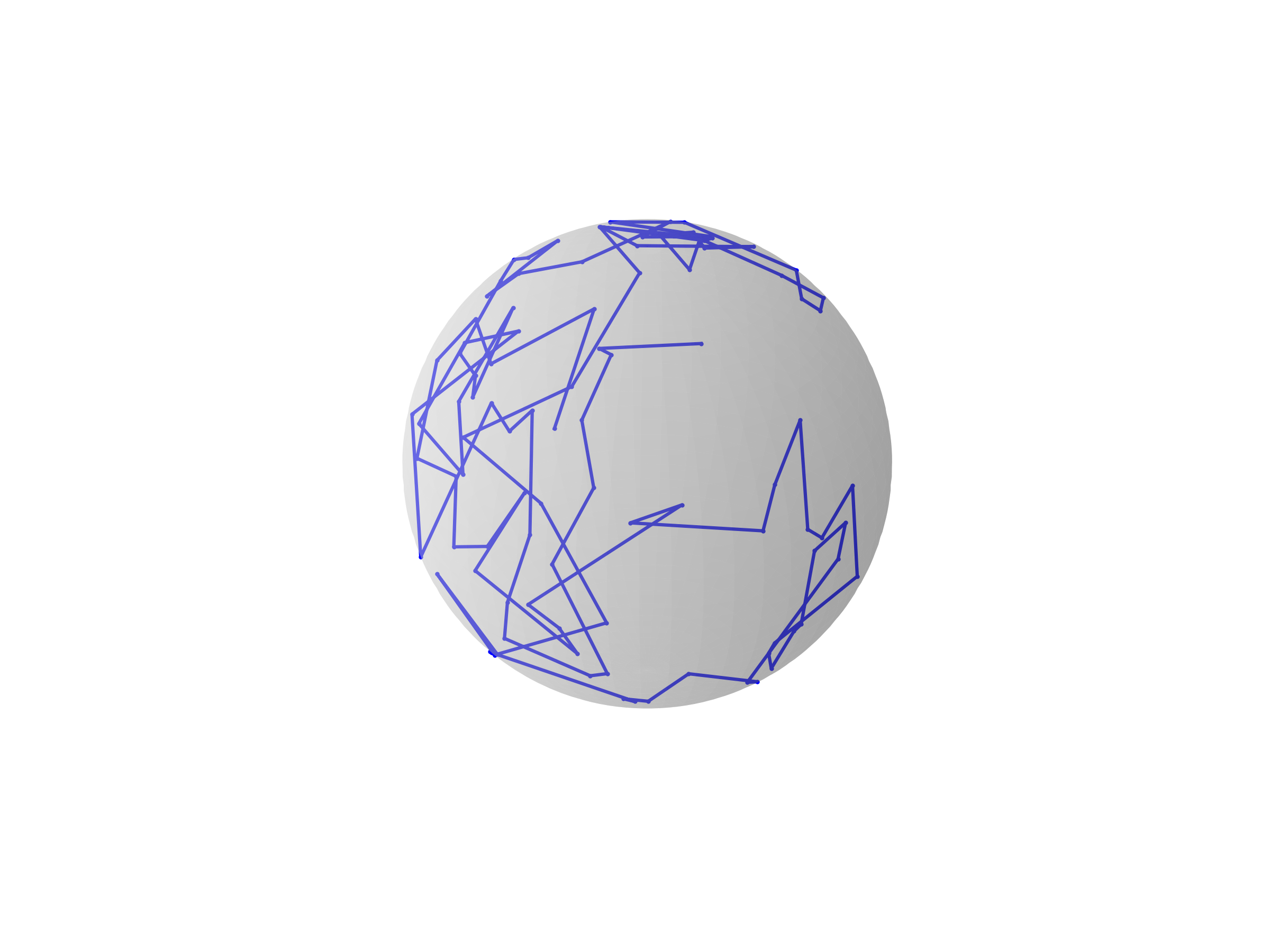

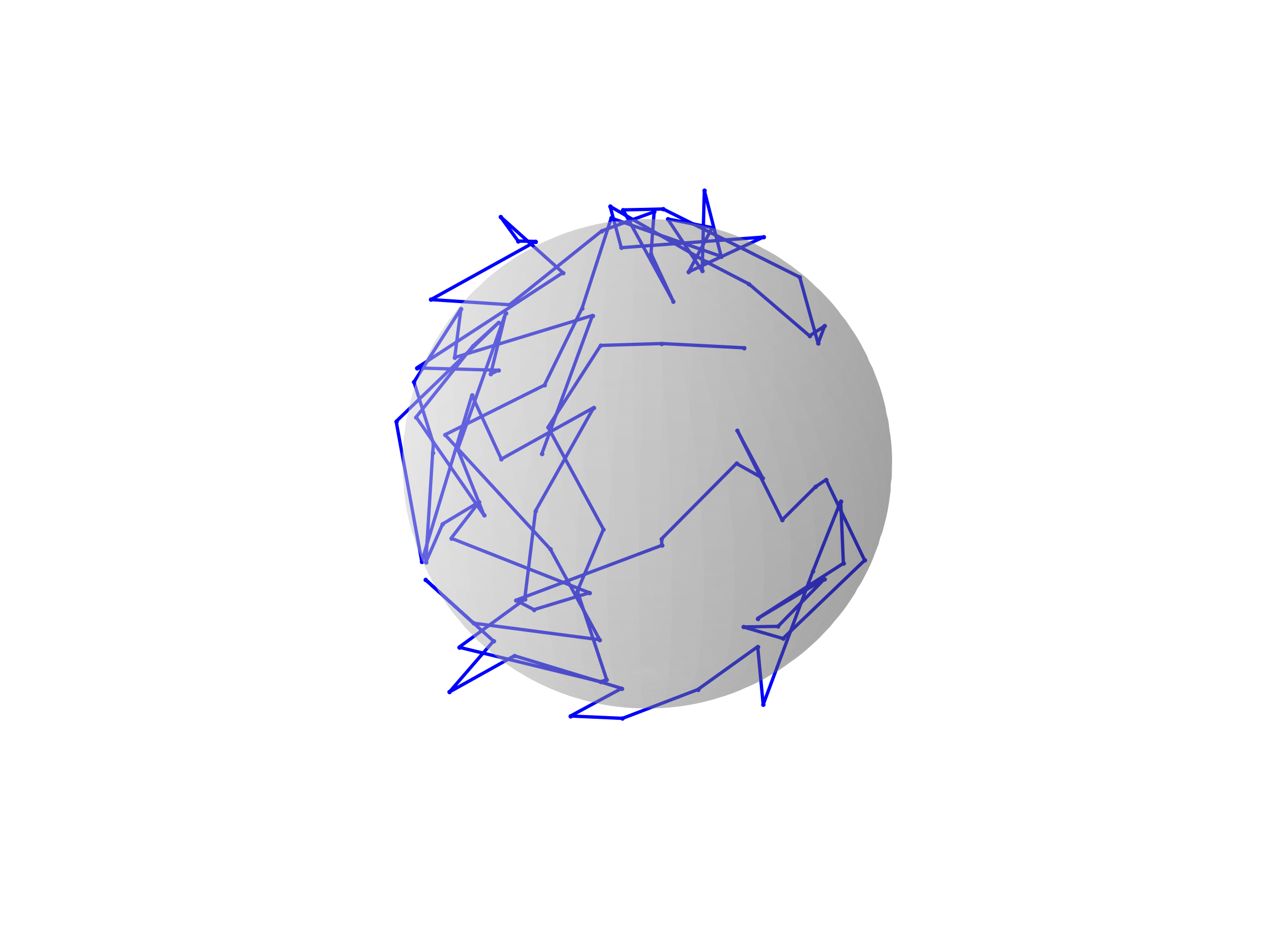

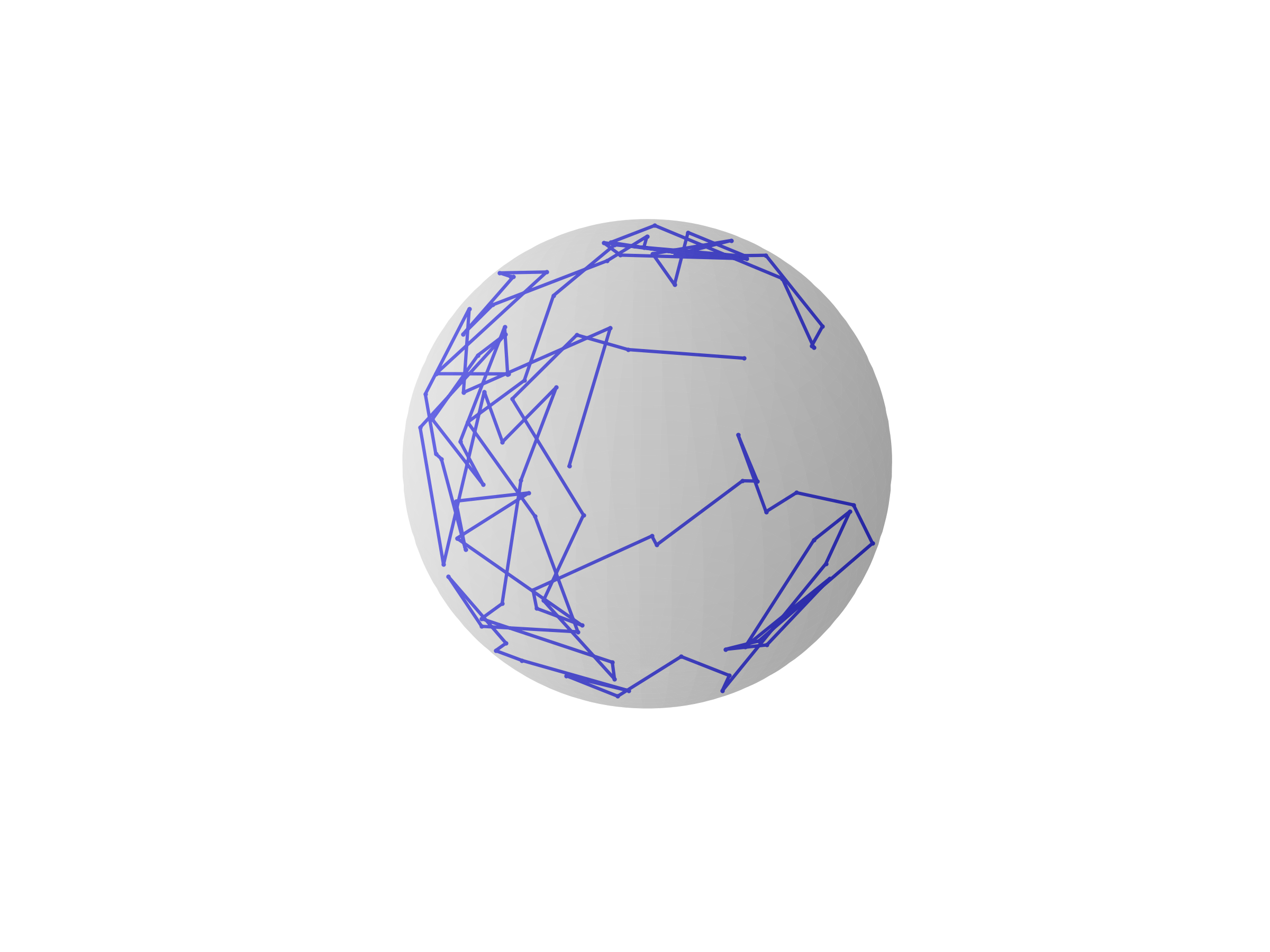

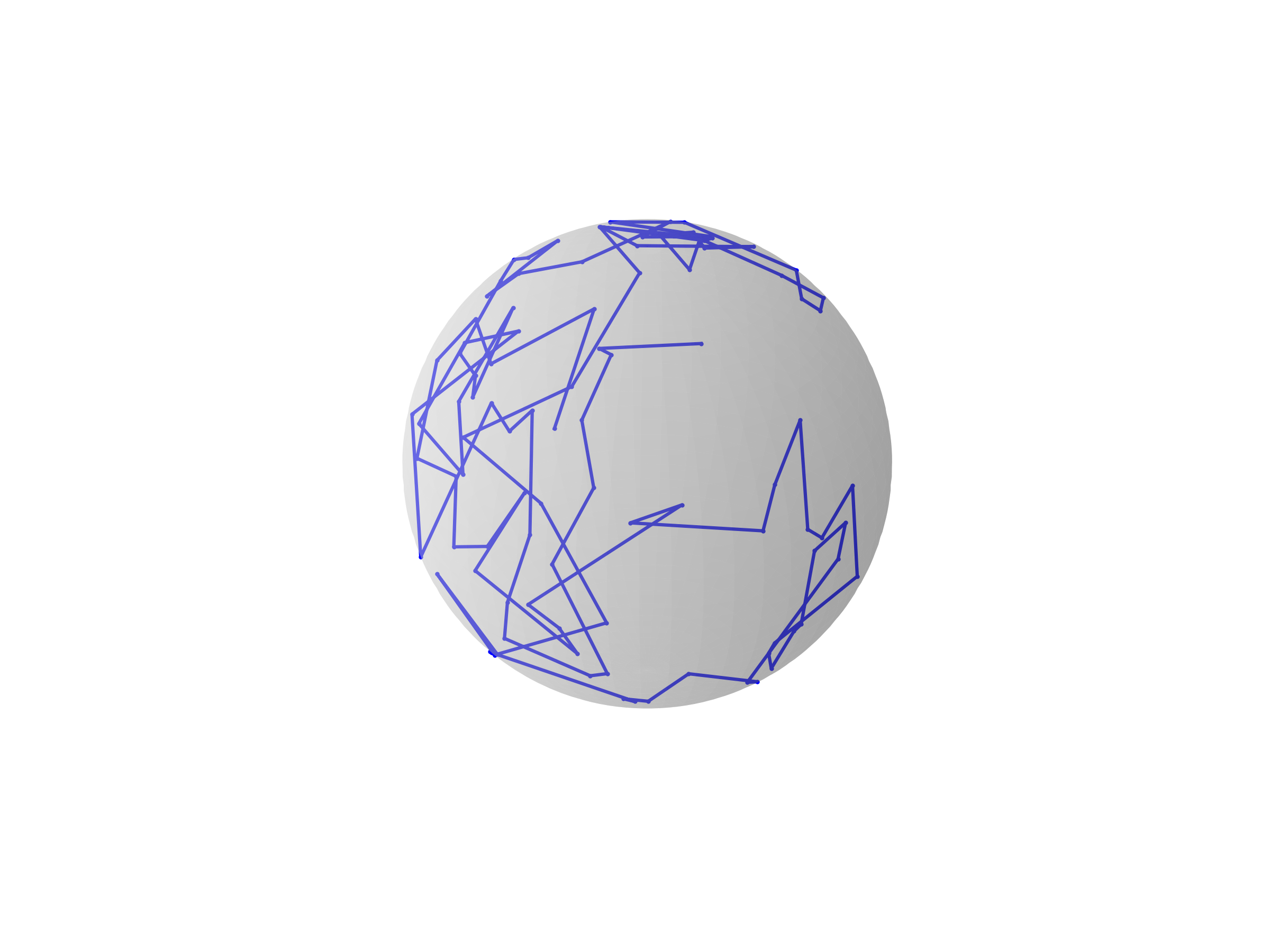

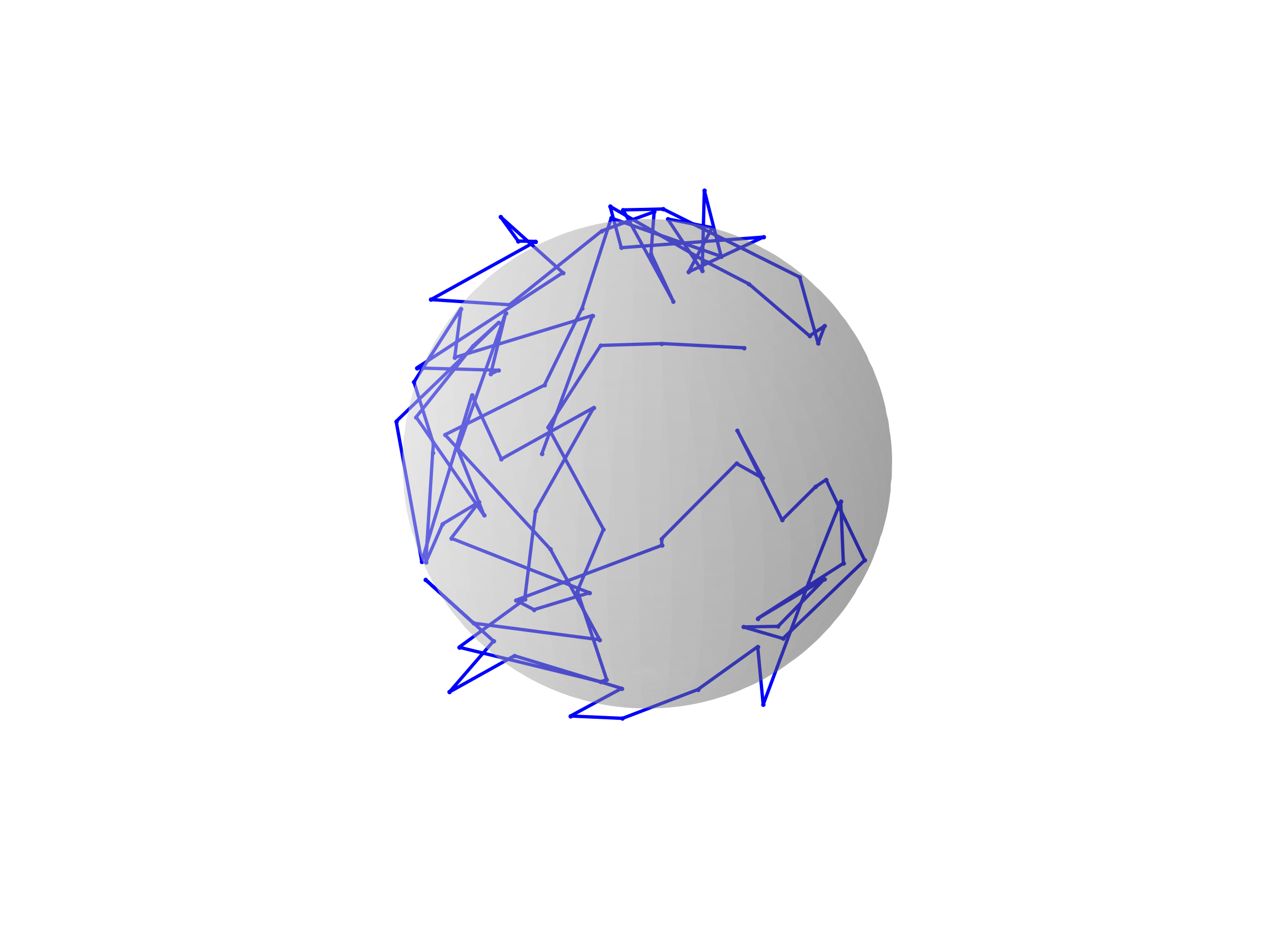

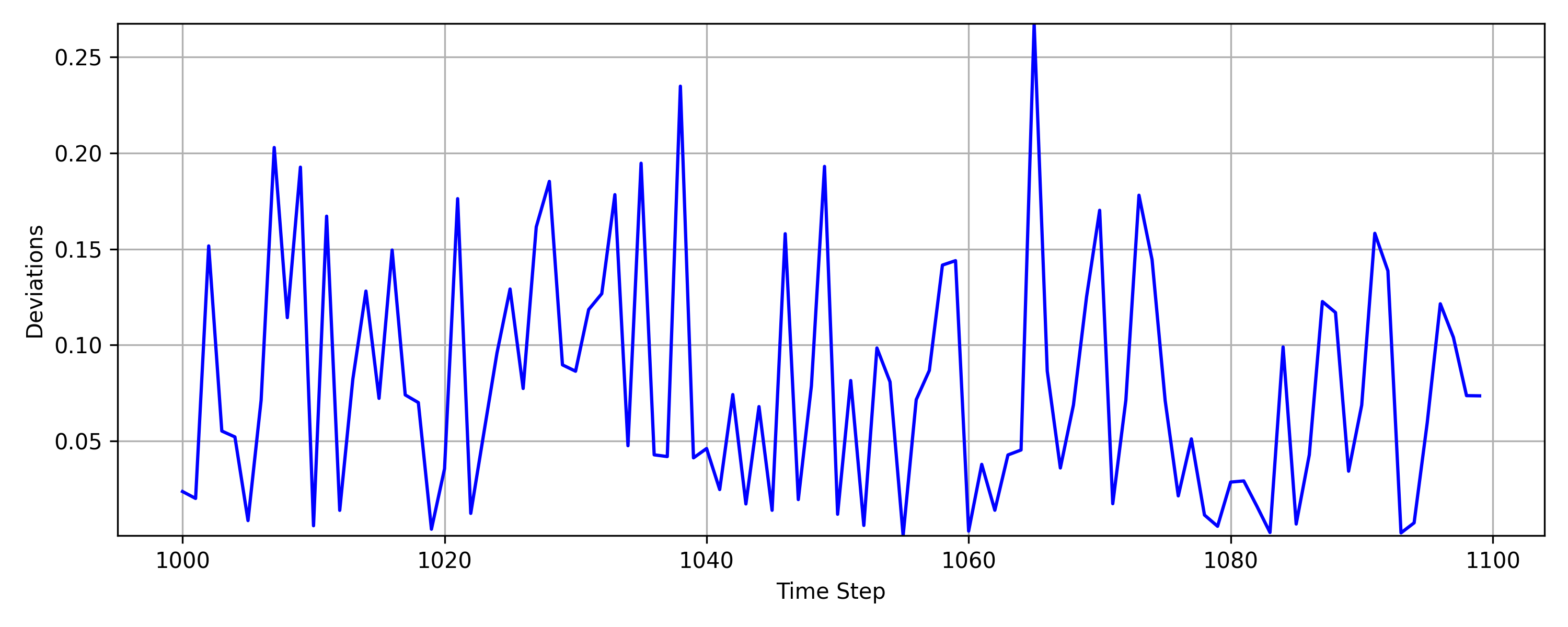

Figure 2: Trajectory of $X_t \in M$, $Y_t \in \mathbb{R}^3$, and projections $\hat{\pi}(Y_t)$ for a synthetic process on a 2-sphere.

Distribution-Free Control Chart

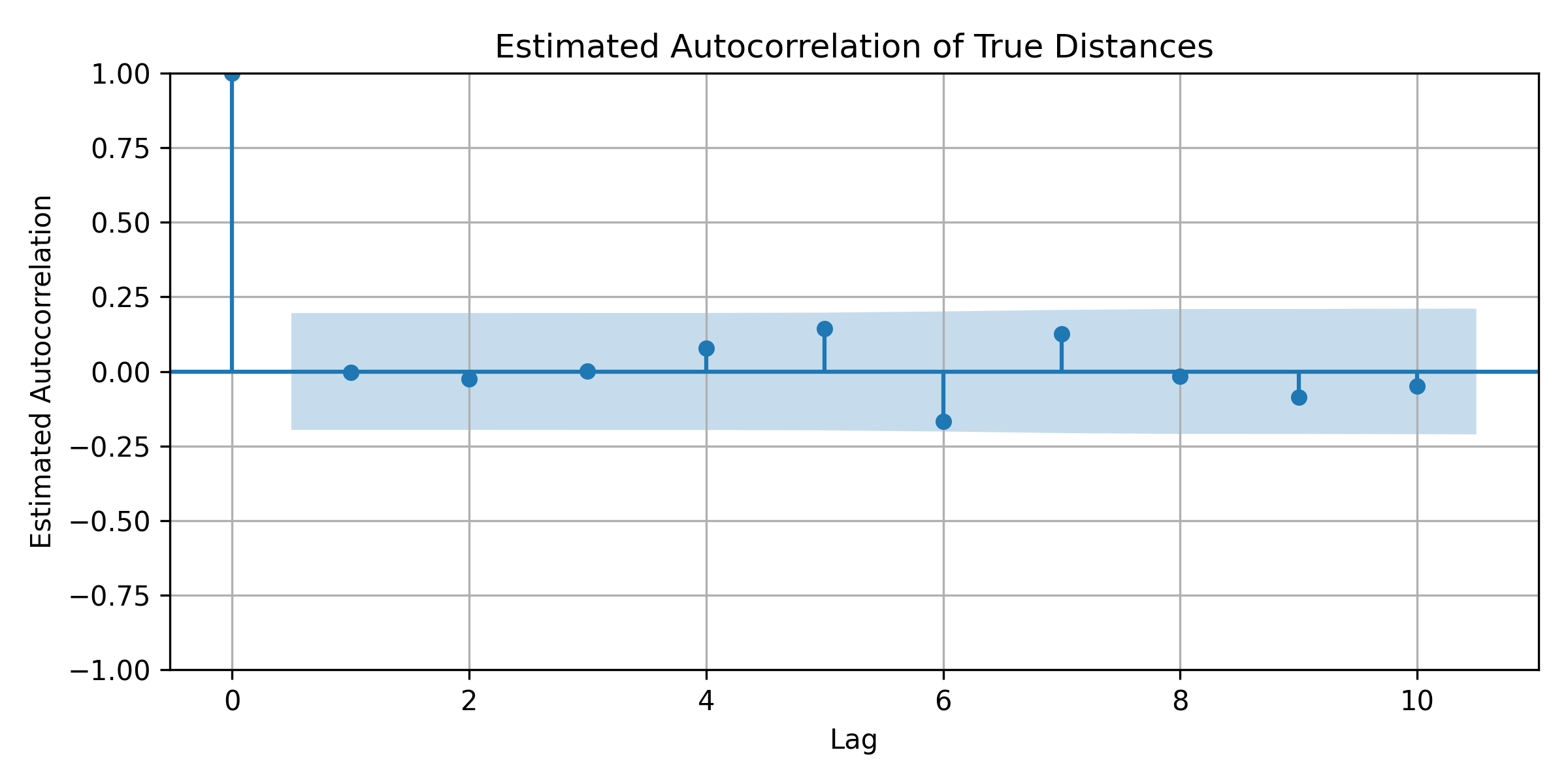

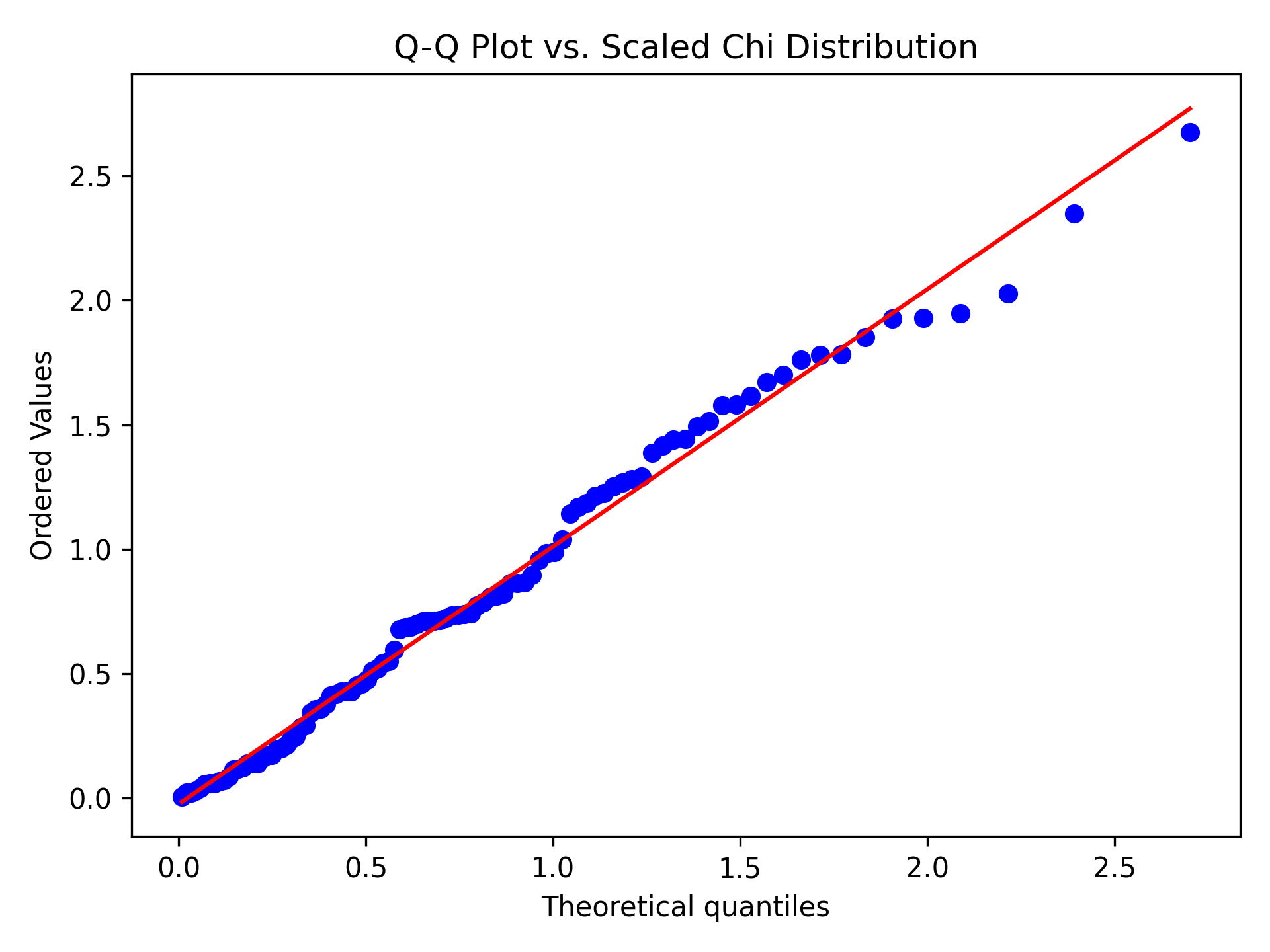

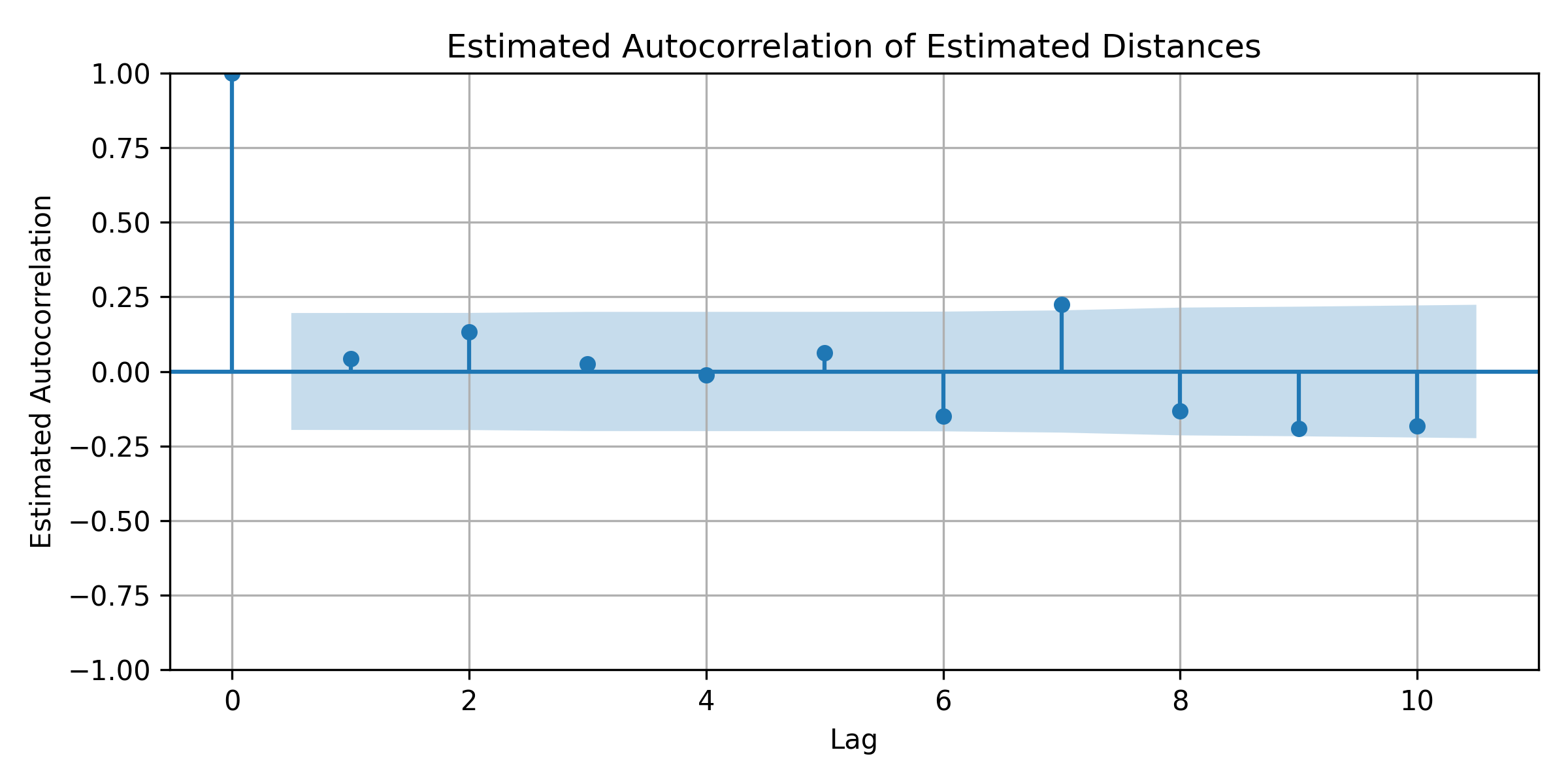

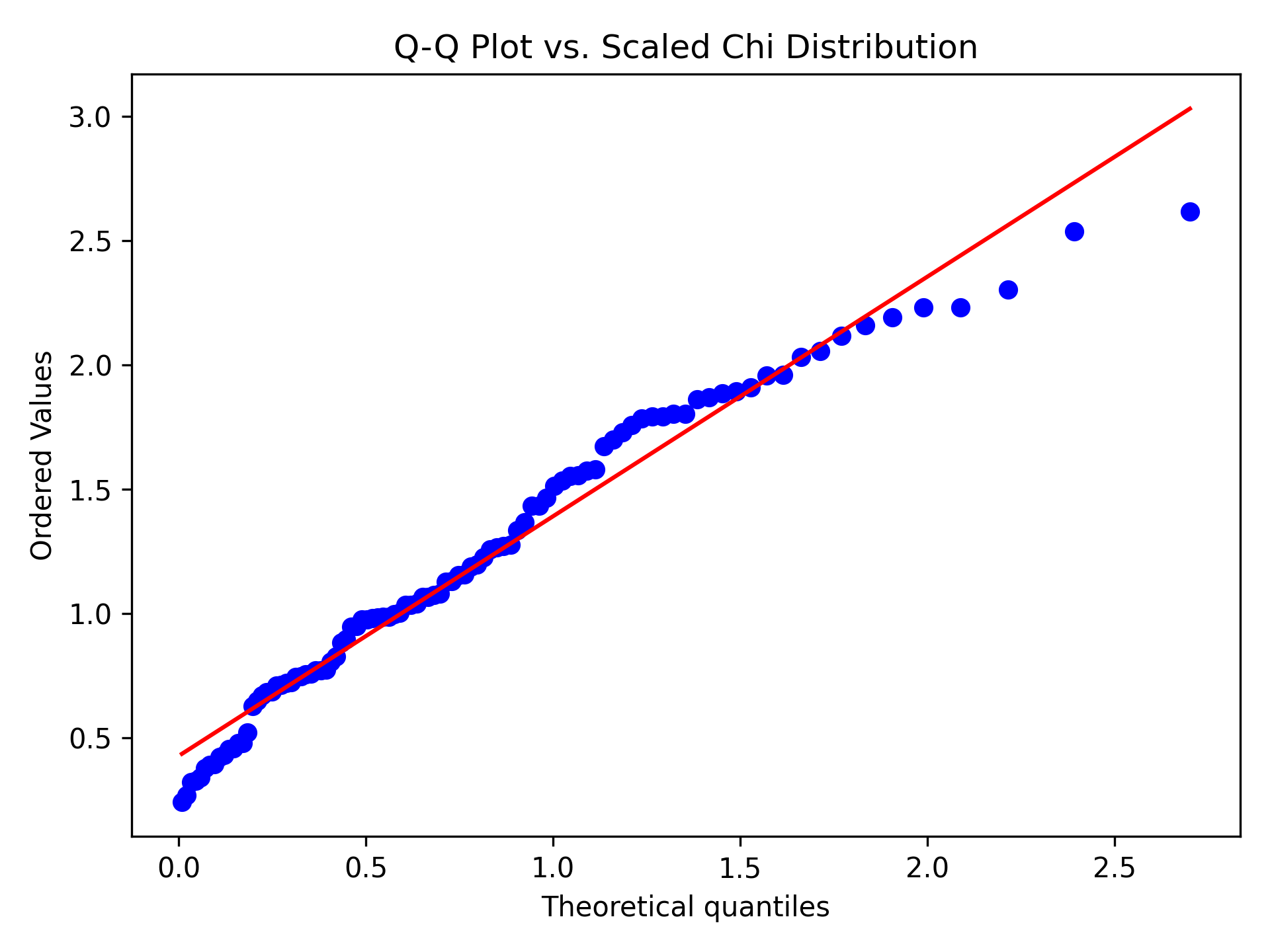

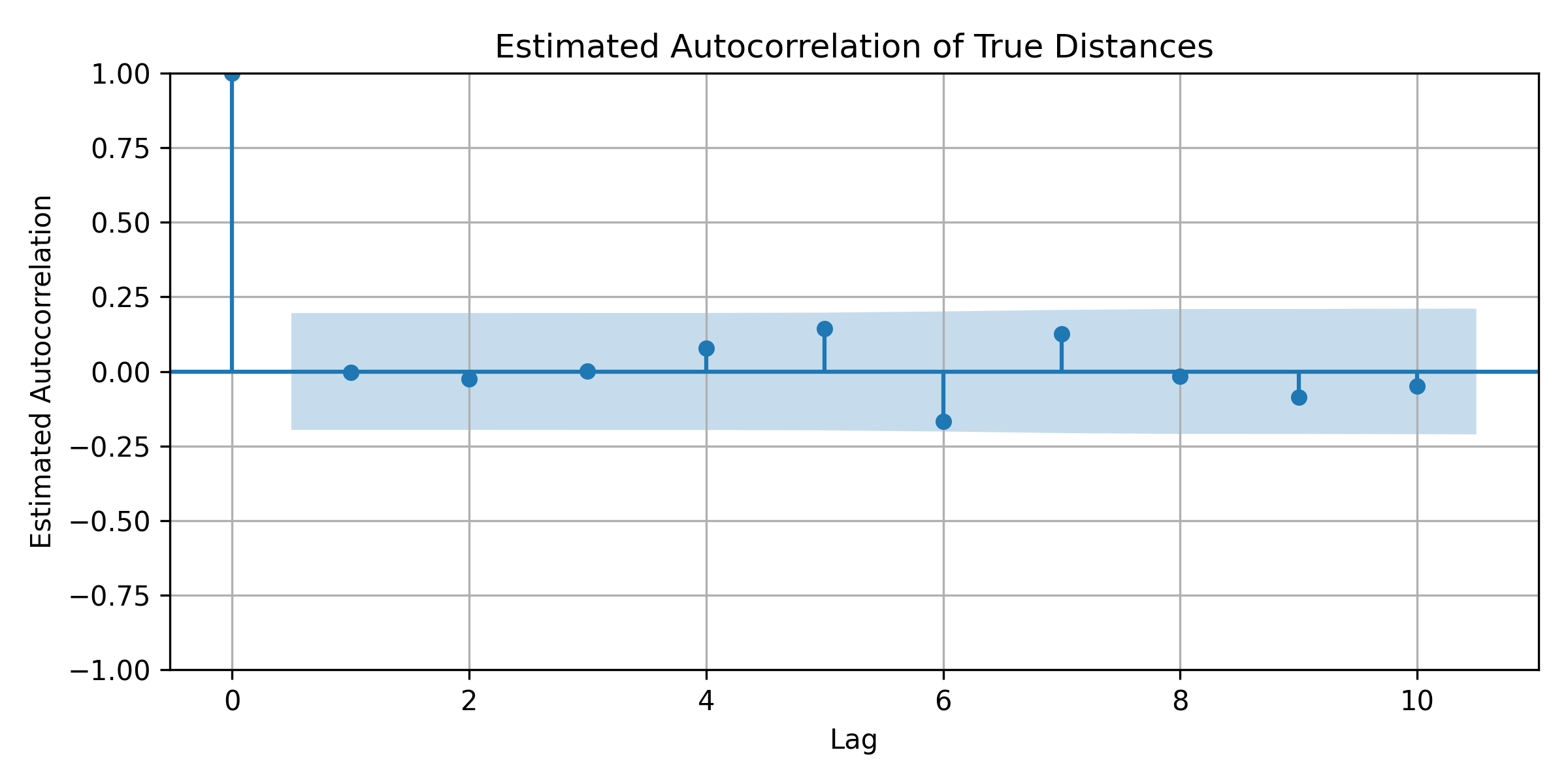

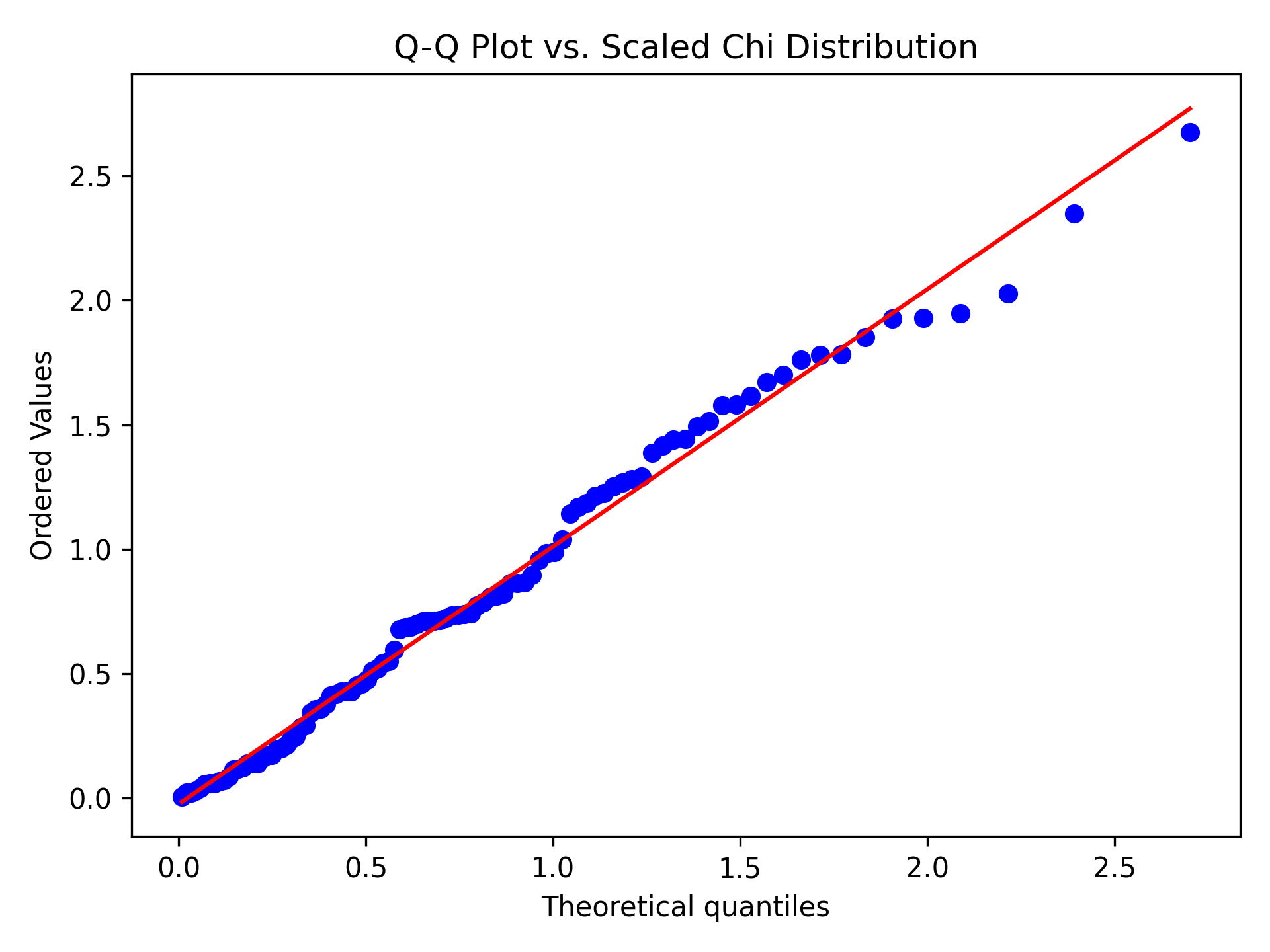

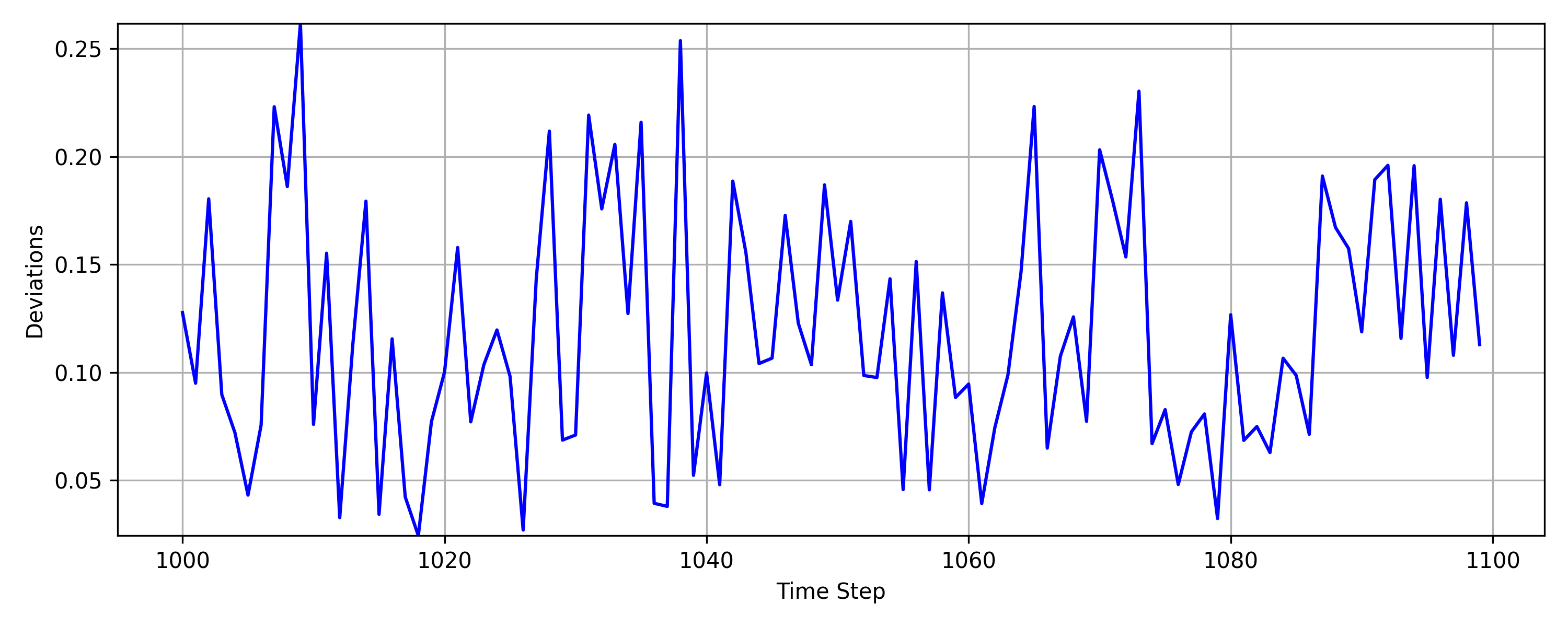

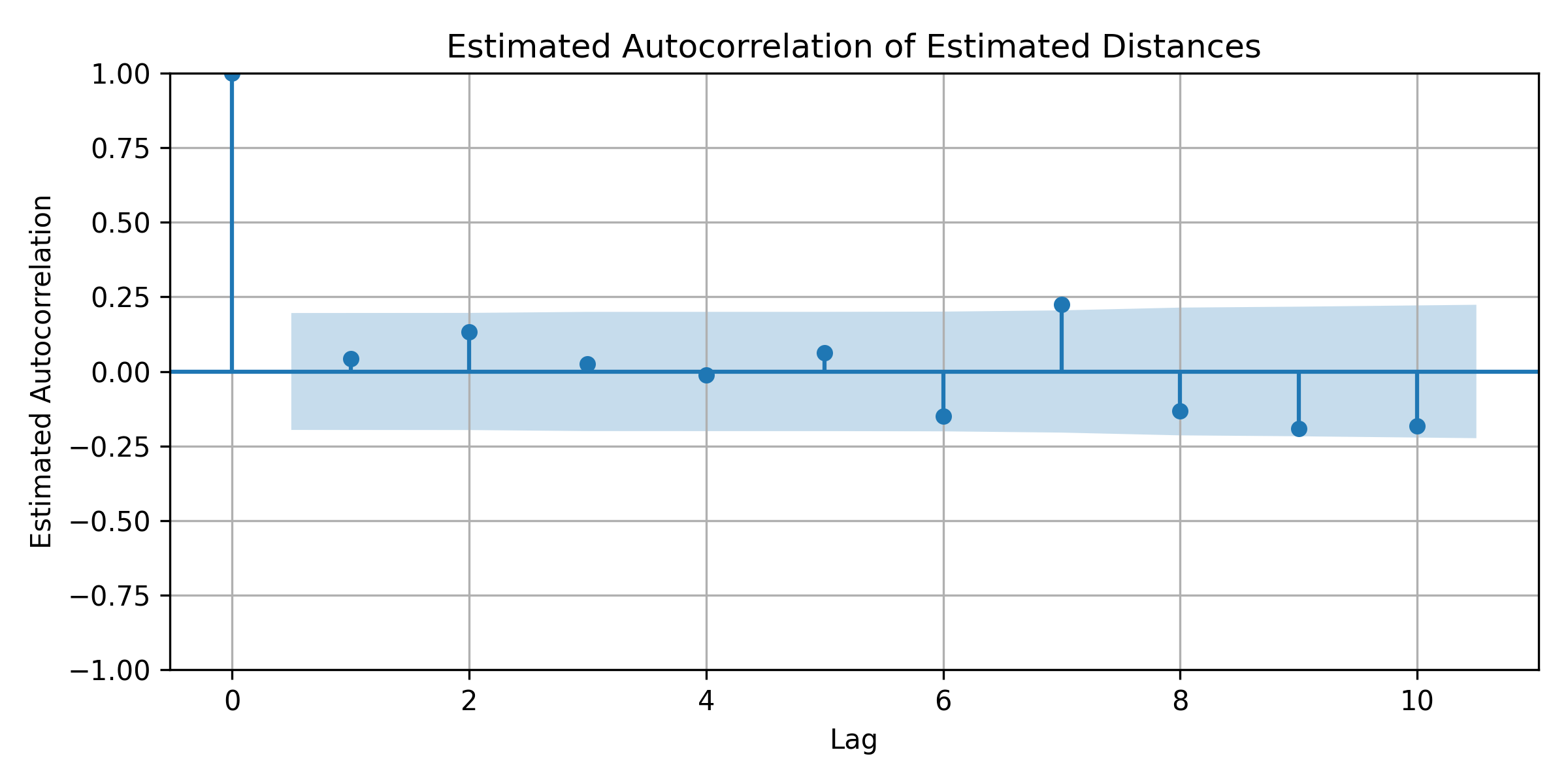

The deviations $||Y_t - \hat{\pi}(Y_t)||_2$ are monitored using a novel distribution-free EWMA control chart (UDFM), which employs a rolling window and a rank-based test statistic. The control limits are set via permutation tests to maintain a desired false alarm rate α, and the run length under the null hypothesis follows a geometric distribution. Temporal dependencies in the deviations are addressed by prewhitening via AR modeling.
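
To make the rank-based, distribution-free idea concrete, the sketch below applies a one-sided rank test to each new deviation against a rolling reference window. It is not the UDFM chart: there is no EWMA smoothing and no permutation-calibrated control limit, and the names `rank_p_value` and `monitor`, the window length, and the value of `alpha` are assumptions chosen for illustration. It only shows that a decision rule built from ranks does not depend on the distribution of the deviations.

```python
from collections import deque

def rank_p_value(reference, new_value):
    """One-sided rank p-value: share of values at least as large as new_value."""
    exceed = sum(1 for r in reference if r >= new_value)
    return (1 + exceed) / (len(reference) + 1)

def monitor(deviations, window=50, alpha=0.05):
    """Return the index of the first alarm, or None if no alarm is raised."""
    reference = deque(deviations[:window], maxlen=window)
    for t in range(window, len(deviations)):
        if rank_p_value(reference, deviations[t]) <= alpha:
            return t
        reference.append(deviations[t])  # only non-alarming points enter the window
    return None

# Deterministic toy series: 80 small in-control deviations, then a large shift
series = [0.10 + 0.001 * (i % 17) for i in range(80)] + [0.5] * 10
print(monitor(series))
```

Because the p-value depends only on the rank of the new deviation within the window, its distribution under exchangeability is the same whatever the distribution of the deviations.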

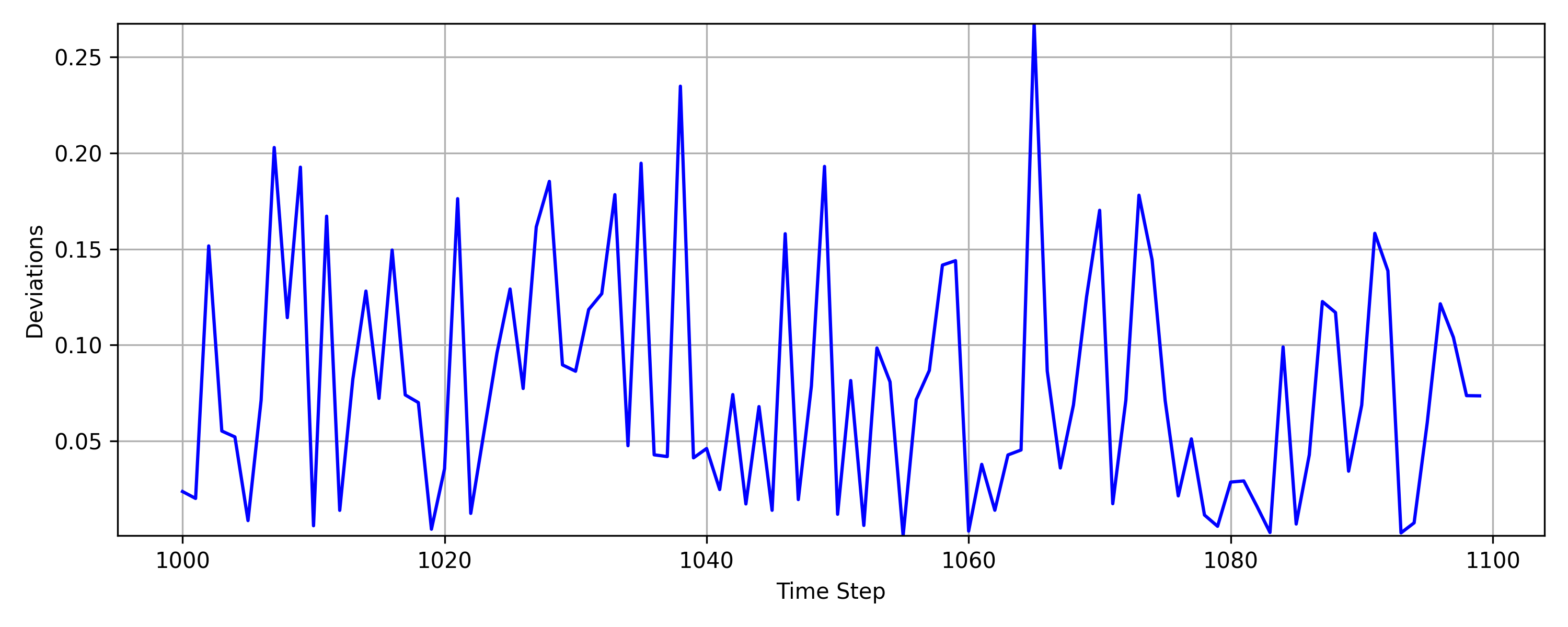

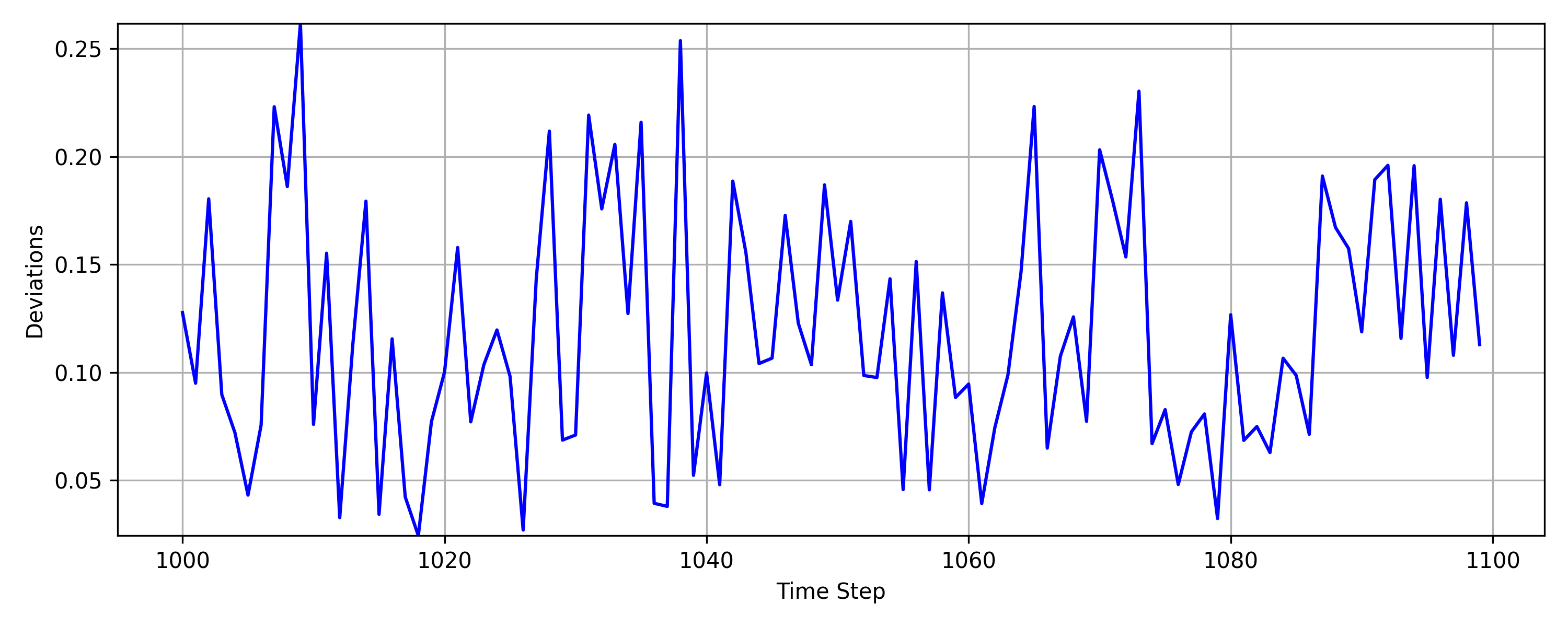

Figure 3: $\|Y_t - \pi(Y_t)\|_2$ and $\|Y_t - \hat{\pi}(Y_t)\|_2$ for synthetic data, illustrating the behavior of true and estimated deviations.

Manifold Learning-Based SPC Framework

Embedding and Monitoring

The manifold learning approach leverages Laplacian-based dimensionality reduction methods (LPP, NPE) to approximate an embedding function $\hat{f}: M \to \mathbb{R}^d$. The embedded observations are monitored in the lower-dimensional space, with temporal dependencies filtered via univariate AR models. The DFEWMA multivariate control chart is used for monitoring, with control limits set to achieve a specified in-control ARL.

Out-of-Sample Extension and Limitations

Many nonlinear manifold learning methods lack an explicit out-of-sample mapping. LPP and NPE, by contrast, provide linear approximations to the Laplace-Beltrami eigenfunctions, so a new observation can be embedded directly, which enables online monitoring. However, these methods require m>D for the generalized eigenvalue problem to be well-posed, limiting their applicability in extreme high-dimensional settings.
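
A minimal LPP-style sketch illustrates both points: the out-of-sample extension reduces to a matrix product, and the generalized eigenvalue problem involves a D×D matrix whose rank cannot exceed m. The fully connected heat-kernel graph, the `bandwidth` value, and the function name `fit_lpp` are assumptions for illustration, not the configuration used in the paper.

```python
import numpy as np

def fit_lpp(X, n_components, bandwidth=10.0):
    """Fit a linear LPP-style map from Phase I data X (rows are observations)."""
    # Heat-kernel affinities on a fully connected graph (simplifying assumption)
    sq_dists = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=2)
    W = np.exp(-sq_dists / bandwidth)
    np.fill_diagonal(W, 0.0)
    degree = np.diag(W.sum(axis=1))
    laplacian = degree - W
    A = X.T @ laplacian @ X   # D x D
    B = X.T @ degree @ X      # D x D, rank at most min(m, D)
    # Reduce A v = lambda B v to a standard symmetric problem via B = C C^T.
    # The Cholesky factorisation fails when B is singular, e.g. when m < D.
    C = np.linalg.cholesky(B)
    C_inv = np.linalg.inv(C)
    eigvals, eigvecs = np.linalg.eigh(C_inv @ A @ C_inv.T)  # ascending eigenvalues
    # Directions with the smallest eigenvalues best preserve local neighbourhoods
    return C_inv.T @ eigvecs[:, :n_components]

rng = np.random.default_rng(1)
X_phase1 = rng.normal(size=(100, 5))   # m = 100 > D = 5
P = fit_lpp(X_phase1, n_components=2)
y_new = rng.normal(size=5)
embedded = y_new @ P                   # out-of-sample extension: a matrix product

# With m = 3 < D = 5 the D x D matrix is rank deficient (unit degrees for simplicity)
X_small = rng.normal(size=(3, 5))
rank_small = np.linalg.matrix_rank(X_small.T @ X_small)
print(embedded, rank_small)
```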

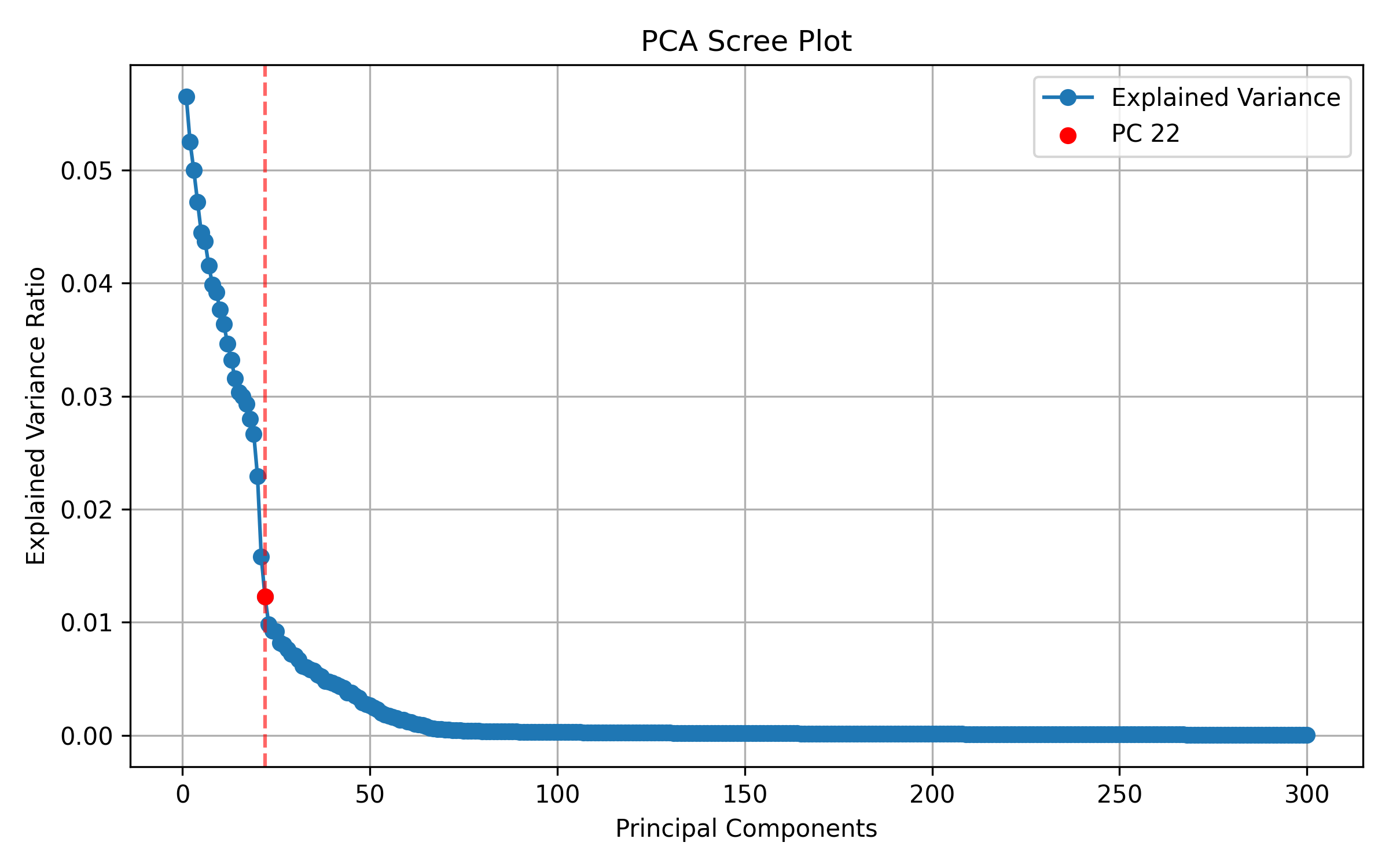

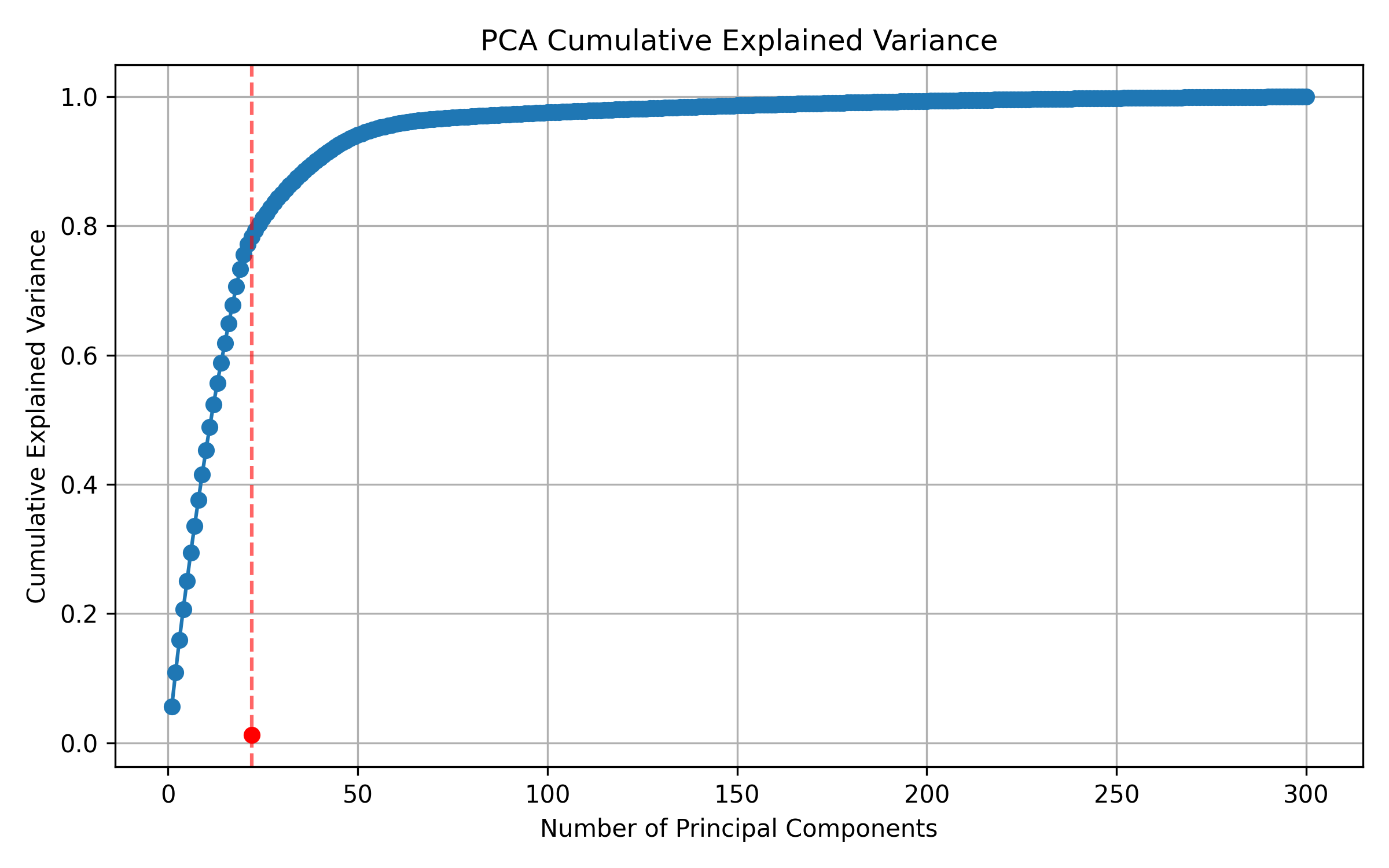

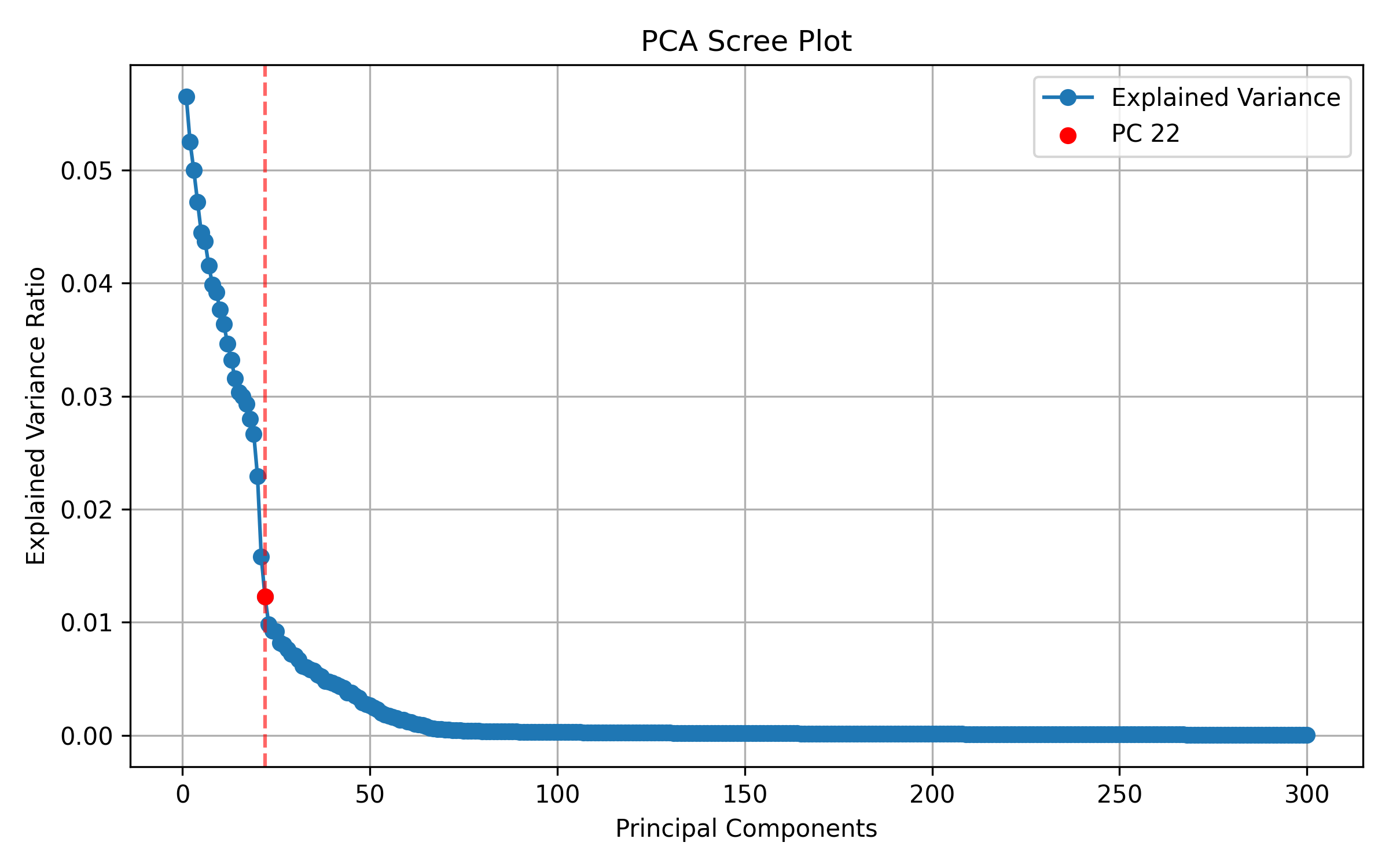

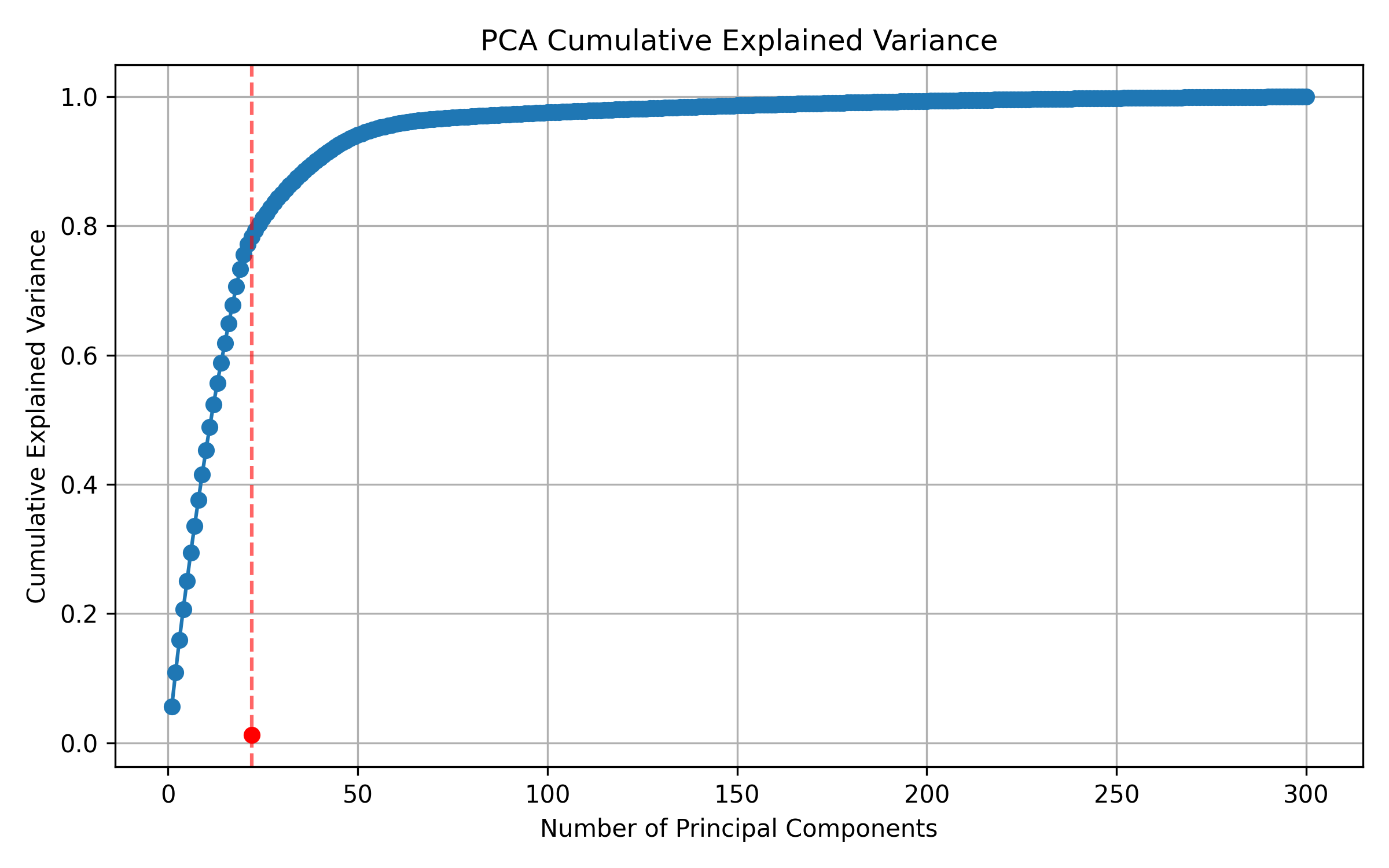

Figure 4: PCA elbow plot for the Tennessee Eastman process, indicating intrinsic dimensionality.

Synthetic Process on a 2-Sphere

Simulations on a synthetic process evolving on a 2-sphere in $\mathbb{R}^6$ demonstrate that the manifold fitting approach (MF) detects mean shifts in all ambient dimensions, whereas manifold learning methods (LPP, NPE, PCA) fail to detect shifts outside the embedded subspace. MF achieves lower out-of-control ARL, indicating superior detection power.
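
One way to see why a linear embedding can miss such shifts, offered here as an illustrative argument rather than the paper's own derivation: write the fitted linear map as $\hat{f}(y) = A^\top y$ for a hypothetical projection matrix $A \in \mathbb{R}^{D \times d}$. A mean shift $\delta$ with $A^\top \delta = 0$ leaves the embedded observation unchanged, while the distance to the manifold generally changes:

$$
\hat{f}(Y_t + \delta) = A^\top Y_t + A^\top \delta = \hat{f}(Y_t),
\qquad
\operatorname{dist}(Y_t + \delta, M) \neq \operatorname{dist}(Y_t, M) \ \text{in general.}
$$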

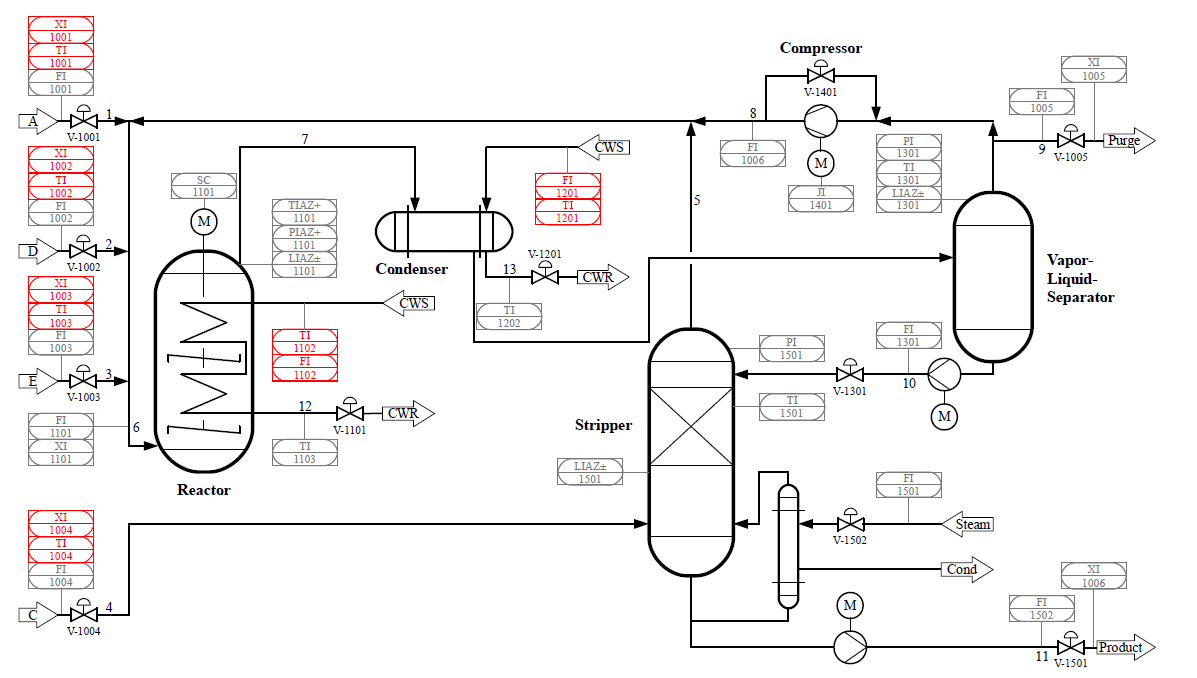

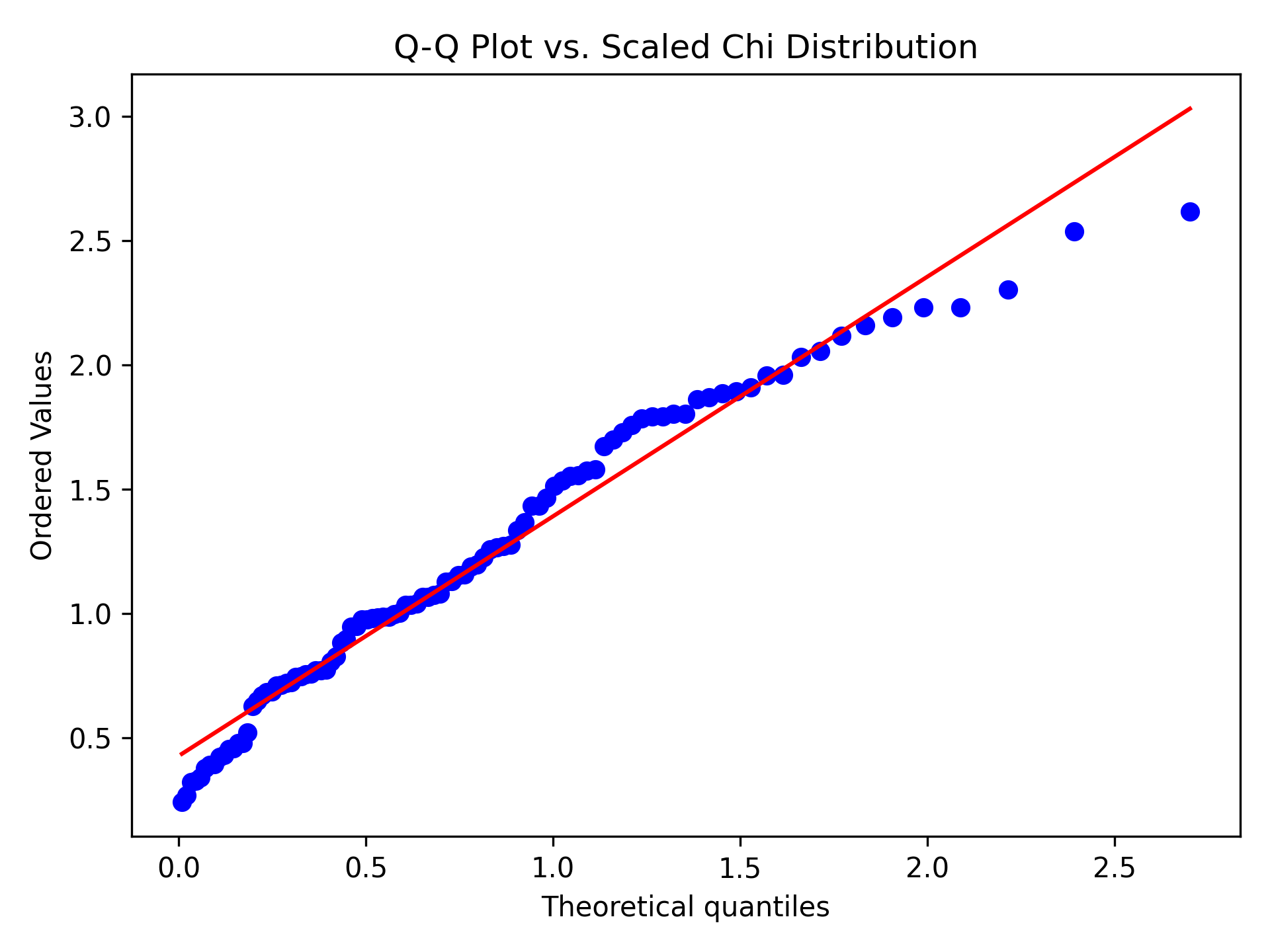

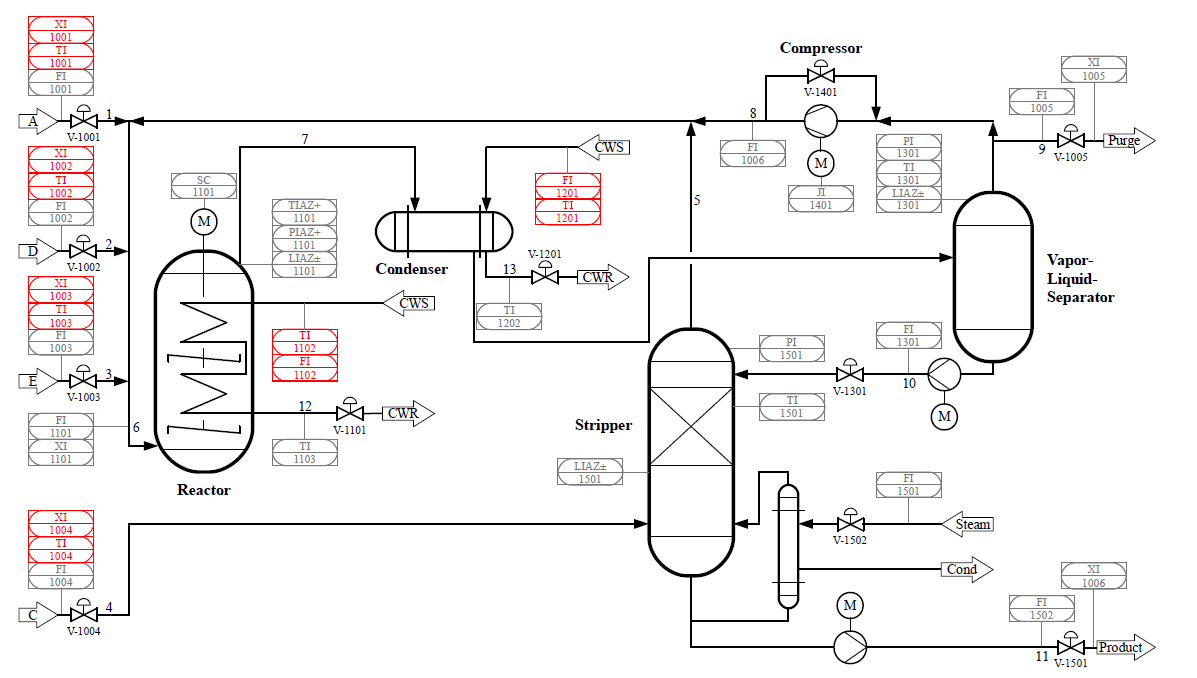

Tennessee Eastman Process

Experiments on a replicated Tennessee Eastman process (D=300) show that MF maintains controllable ARL even when m<D, where manifold learning methods are ill-posed. For moderate m>D, MF outperforms LPP and NPE in detecting large shifts, while NPE is more sensitive to small shifts. PCA fails to detect faults due to the nonlinear nature of the process manifold.

Figure 5: Tennessee Eastman Process schematic, illustrating the complexity and feedback loops.

Kolektor Surface-Defect Dataset

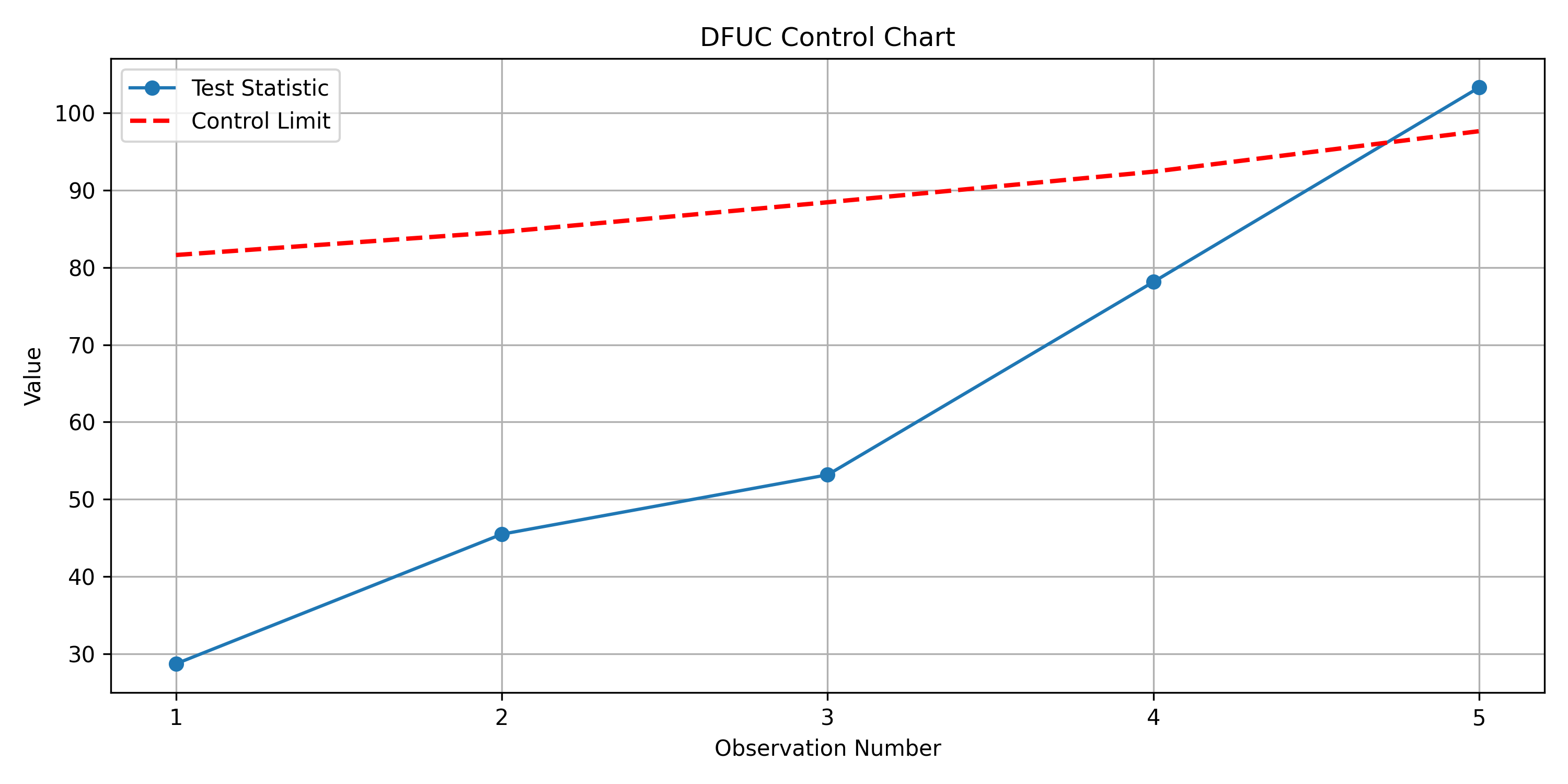

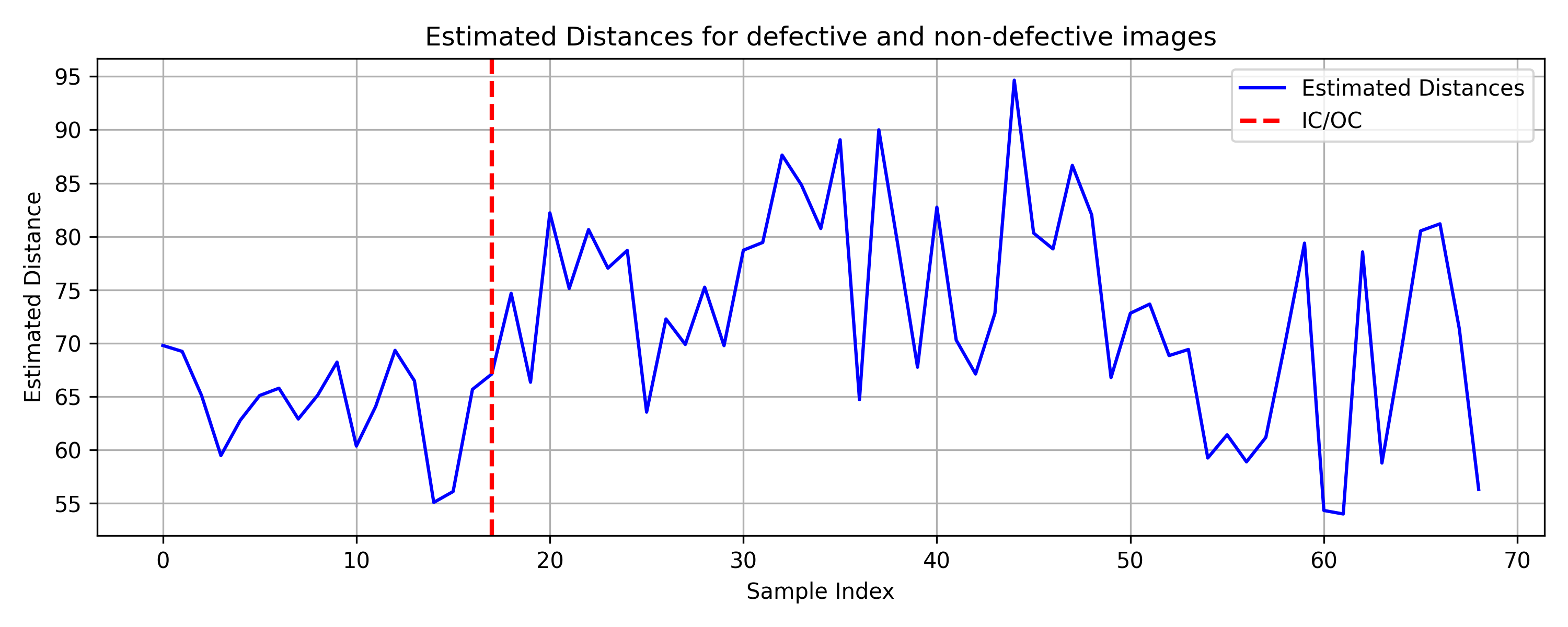

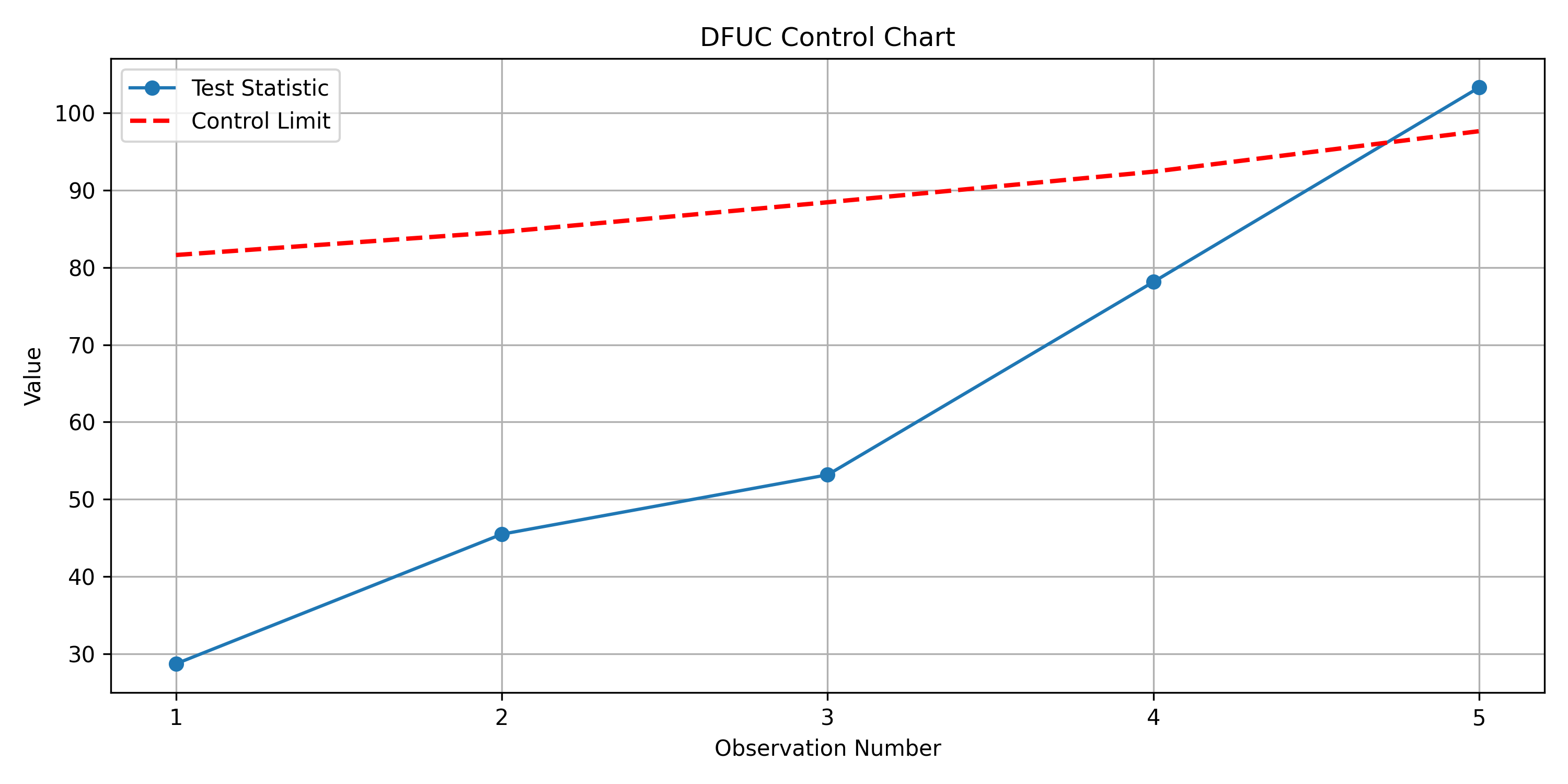

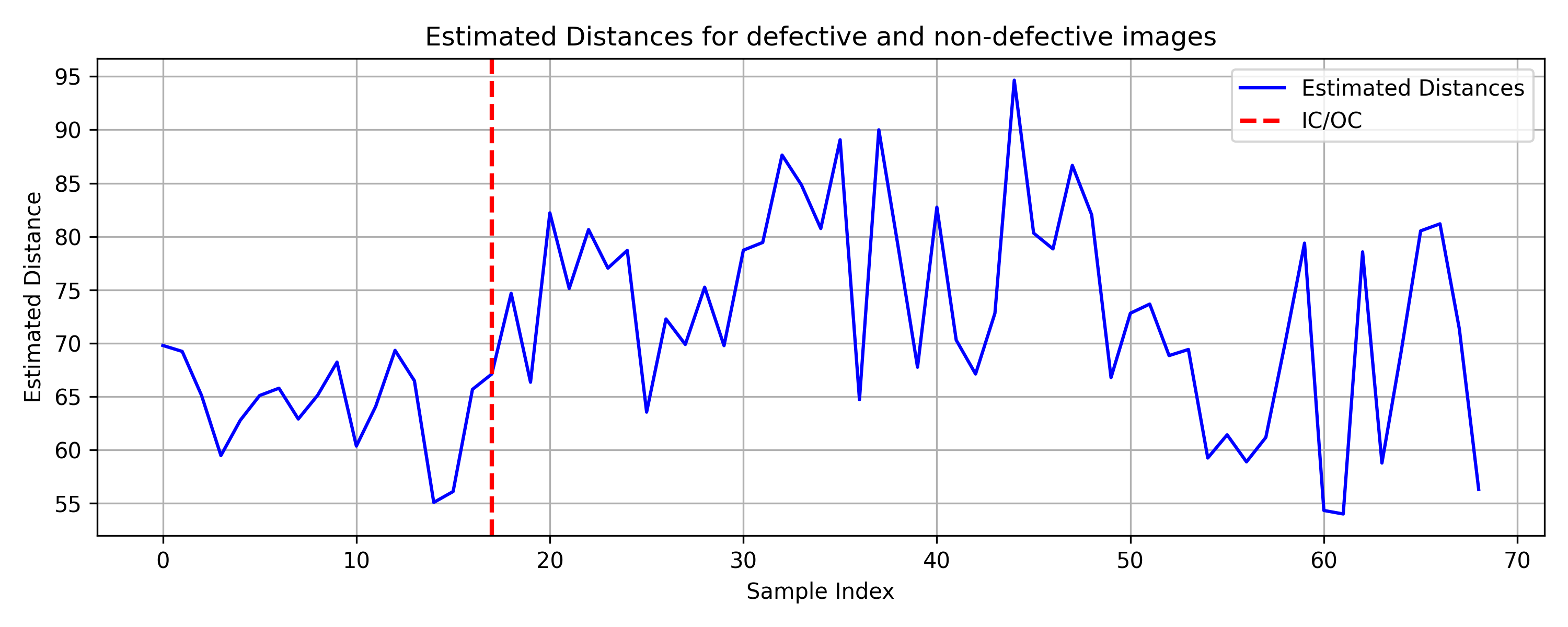

Application to the Kolektor surface-defect image dataset (D=720896) demonstrates the practical utility of MF in anomaly detection. The UDFM control chart signals an alarm after only five defective images, with estimated deviations for defective surfaces significantly higher than for non-defective ones.

Figure 6: Example images from the Kolektor surface-defect dataset, showing non-defective and defective surfaces.

Figure 7: UDFM control chart for Kolektor dataset, with alarm triggered at the fifth defective image.

Implementation Considerations

- Computational Requirements: Manifold fitting scales with the number of Phase I samples and ambient dimension, but is feasible for D≫m due to local averaging and efficient data structures.

- Parameter Selection: Theoretical guarantees require careful tuning of ball and cylinder radii, which depend on estimated noise level σ and manifold reach.

- Prewhitening: AR modeling of the deviations removes temporal dependence, so that the charted residuals approximately satisfy the i.i.d. assumption that the control chart relies on.

- Out-of-Sample Extension: MF is applicable for m<D, unlike manifold learning methods, which require m>D.

- Deployment: Both frameworks are suitable for real-time monitoring, with MF offering a simpler univariate chart and broader applicability.
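
The prewhitening item above can be sketched with a first-order autoregressive model fitted by least squares. The AR order, the simulated series, and the function names `fit_ar1`, `prewhiten`, and `lag1_autocorr` are assumptions for illustration; the source does not state which AR order is used.

```python
import numpy as np

def fit_ar1(series):
    """Least-squares AR(1) fit: x[t] - mean is modelled as phi * (x[t-1] - mean)."""
    mean = series.mean()
    centred = series - mean
    phi = centred[1:] @ centred[:-1] / (centred[:-1] @ centred[:-1])
    return mean, phi

def prewhiten(series, mean, phi):
    """One-step-ahead residuals of the fitted AR(1) model."""
    centred = series - mean
    return centred[1:] - phi * centred[:-1]

def lag1_autocorr(x):
    """Sample lag-1 autocorrelation."""
    x = x - x.mean()
    return (x[1:] @ x[:-1]) / (x @ x)

rng = np.random.default_rng(2)
# Synthetic in-control deviations with AR(1) dependence (0.7 is illustrative)
noise = rng.normal(size=2000)
deviations = np.empty(2000)
deviations[0] = noise[0]
for t in range(1, 2000):
    deviations[t] = 0.7 * deviations[t - 1] + noise[t]
deviations += 5.0

mean, phi = fit_ar1(deviations)                 # estimated on Phase I data
residuals = prewhiten(deviations, mean, phi)    # charted instead of raw deviations
print(lag1_autocorr(deviations), lag1_autocorr(residuals))
```

In use, `mean` and `phi` would be estimated once on Phase I data and then applied unchanged to Phase II deviations, so that a process change shows up in the residuals rather than being absorbed by a refitted model.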

Implications and Future Directions

The manifold fitting approach provides a robust, distribution-free method for SPC in high-dimensional, nonlinear settings, with strong theoretical guarantees and practical performance. Its ability to operate in extreme high-dimensional regimes and detect faults in directions orthogonal to the learned manifold is a significant advantage over traditional and manifold learning-based SPC methods. The framework is extensible to other types of process changes, such as covariance shifts or manifold shape changes, and can be adapted for variable attribution via analysis of projected points.

Future research may focus on:

- Extending MF to detect changes in manifold geometry (e.g., curvature, topology).

- Integrating deep learning-based manifold fitting for complex data types.

- Developing scalable algorithms for real-time, streaming data.

- Investigating theoretical properties under non-Gaussian noise and non-stationary processes.

Conclusion

This work presents two complementary frameworks for high-dimensional SPC under manifold assumptions: a direct manifold fitting approach with a univariate, distribution-free control chart, and a manifold learning approach with multivariate monitoring. Extensive experiments demonstrate that manifold fitting achieves competitive or superior fault detection, especially in extreme high-dimensional and nonlinear scenarios. The practical utility is further validated on real industrial and image datasets, establishing manifold fitting as a powerful tool for modern SPC applications.