- The paper introduces a framework that propagates quantization uncertainty in sensor calibration data, showing that these errors can match or exceed nominal accuracy.

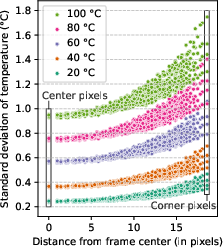

- It employs large-scale Monte Carlo simulation on the MLX90640 sensor to reveal non-uniform, temperature-dependent uncertainty that significantly affects edge detection performance.

- Efficient FPGA-based implementations achieve up to 94× speedup with low power consumption while closely matching Monte Carlo outputs, enabling real-time uncertainty tracking.

Efficient Digital Methods to Quantify Sensor Output Uncertainty

Introduction

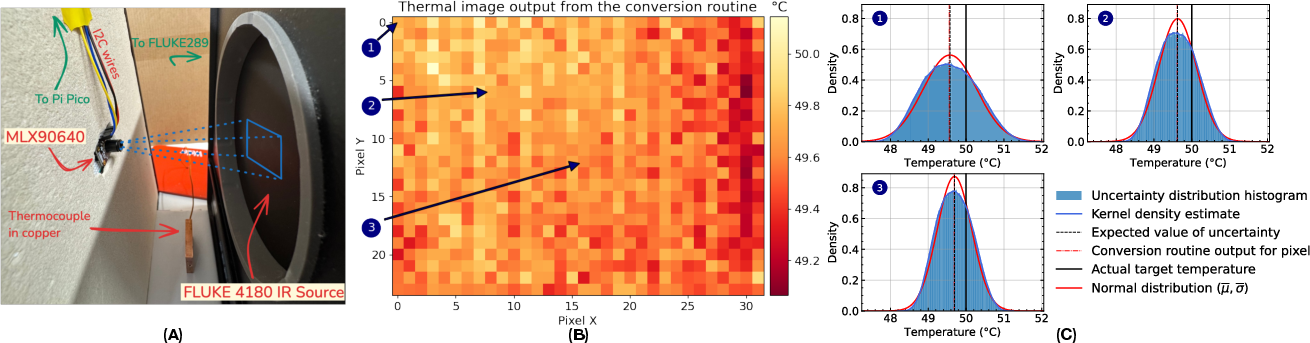

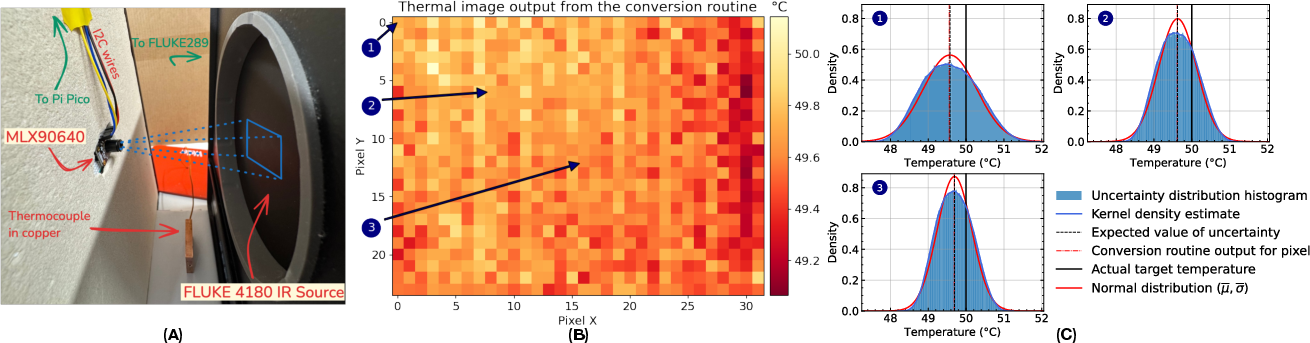

This work addresses the quantification of epistemic uncertainty in sensor outputs, focusing on the impact of limited-precision calibration data in modern digital sensors. The authors present a comprehensive framework for propagating and quantifying uncertainty arising from the quantization of calibration parameters, using thermopile-based infrared sensors (specifically, the Melexis MLX90640) as a case study. The analysis demonstrates that representation uncertainty in calibration data can induce output errors that are comparable to, or even exceed, the nominal sensor accuracy. The paper further introduces efficient hardware implementations for real-time uncertainty tracking, enabling practical deployment in embedded systems.

Figure 1: Simplified diagram of a thermopile-based infrared sensor, illustrating the signal chain from physical transduction to digital output and the role of quantized calibration data in introducing epistemic uncertainty.

Representation Uncertainty in Sensor Calibration

Theoretical Framework

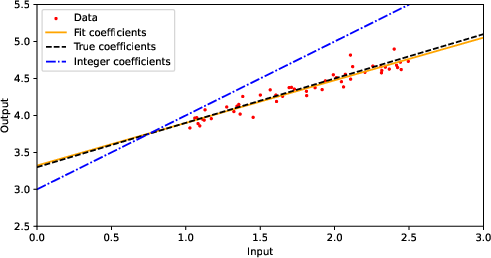

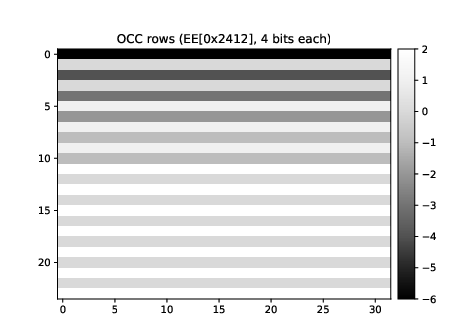

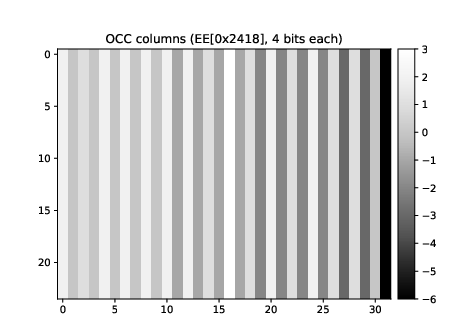

The core insight is that storing calibration parameters in finite-precision digital memory introduces representation uncertainty. When a real-valued calibration parameter is quantized (e.g., rounded to an integer), the original value is only known to lie within a quantization bin. This is typically modeled as a uniform distribution one quantization step wide, centered on the stored value. This epistemic uncertainty is distinct from aleatoric noise and persists even in the absence of measurement noise.
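As a minimal sketch of the uniform-bin model described above (the function name, the stored value 42, and the step size are illustrative assumptions, not taken from the paper), the unknown true value can be represented by samples drawn uniformly from the bin around the stored integer:

```python
import random
import statistics

def sample_true_value(stored: int, step: float = 1.0, n: int = 100_000, seed: int = 0) -> list[float]:
    """Draw candidate real values that would all round to `stored * step`."""
    rng = random.Random(seed)
    center = stored * step
    # Uniform over one quantization bin centered on the stored value.
    return [center + rng.uniform(-step / 2, step / 2) for _ in range(n)]

samples = sample_true_value(stored=42)   # hypothetical stored calibration word
spread = statistics.pstdev(samples)

# A uniform distribution of width `step` has standard deviation step / sqrt(12).
print(f"mean={statistics.fmean(samples):.3f}  std={spread:.4f}  expected std={1 / 12 ** 0.5:.4f}")
```

The sample standard deviation should land close to 1/sqrt(12), roughly 0.289 of a quantization step, which is the spread implied by rounding alone before any measurement noise is considered.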

The propagation of this uncertainty through the sensor's conversion routines—often nonlinear and involving multiple correlated parameters—results in nontrivial, input-dependent uncertainty in the final sensor output. The authors formalize this process and provide a methodology for forward-propagating representation uncertainty through the calibration extraction and measurement conversion pipeline.
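To illustrate why the propagated uncertainty is input-dependent, the following sketch pushes quantized coefficients of a linear calibration `y = a * x + b` through a small Monte Carlo loop. The coefficient values, the scale factors, and the assumption that `a` and `b` are stored independently are all hypothetical; the real MLX90640 conversion is nonlinear and involves many more parameters.

```python
import random
import statistics

A_SCALE, B_SCALE = 0.01, 0.1   # hypothetical storage resolution of each coefficient

def quantize(value: float, scale: float) -> int:
    """Store `value` as an integer count of `scale`-sized steps."""
    return round(value / scale)

def output_samples(x, a_code, b_code, n=20_000, seed=1):
    """Sample y = a*x + b with a and b drawn uniformly from their quantization bins."""
    rng = random.Random(seed)
    ys = []
    for _ in range(n):
        a = (a_code + rng.uniform(-0.5, 0.5)) * A_SCALE
        b = (b_code + rng.uniform(-0.5, 0.5)) * B_SCALE
        ys.append(a * x + b)
    return ys

a_code = quantize(0.7364, A_SCALE)   # hypothetical "true" slope
b_code = quantize(-3.127, B_SCALE)   # hypothetical "true" offset

# Output spread at several input values: it grows with |x| because the
# slope uncertainty is multiplied by the input.
spreads = {x: statistics.pstdev(output_samples(x, a_code, b_code)) for x in (10.0, 100.0, 1000.0)}
for x, s in spreads.items():
    print(f"x={x:7.1f}  std(y)={s:.4f}")
```

Even in this linear case the output spread is not a constant: the same stored coefficients yield a much wider output distribution at large inputs than at small ones.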

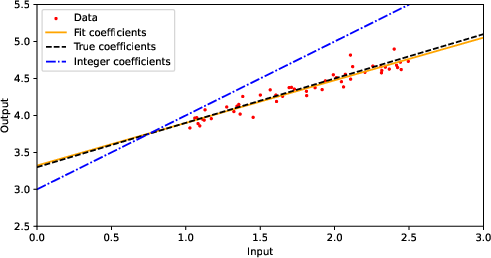

Figure 3: Example of calibration via linear fit, showing the deviation introduced by quantized coefficients compared to the true model.

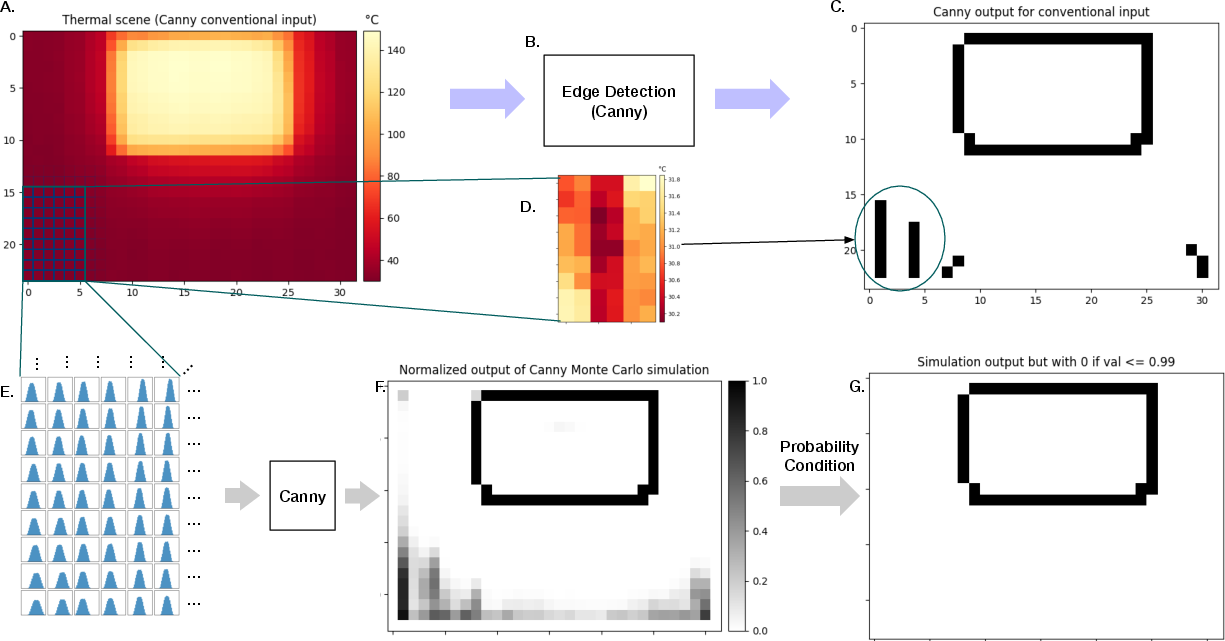

Empirical Analysis on MLX90640

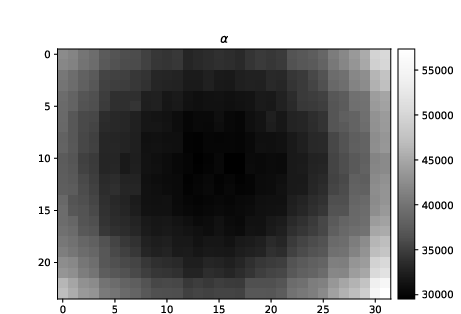

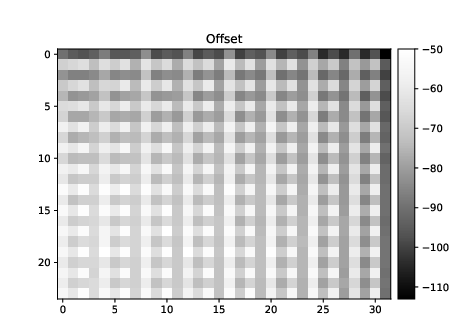

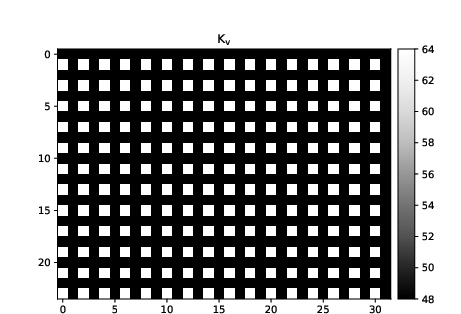

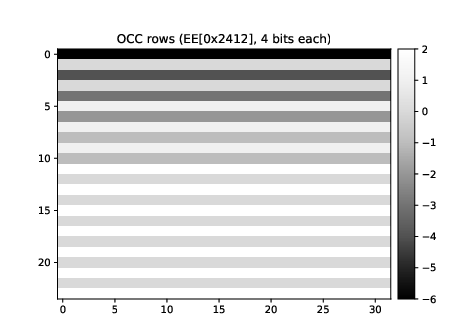

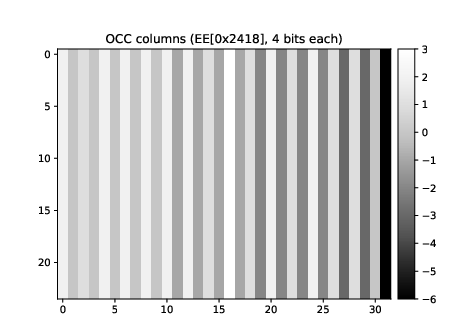

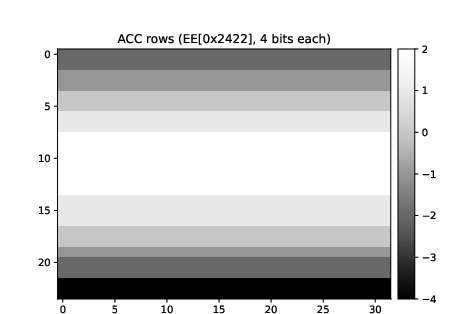

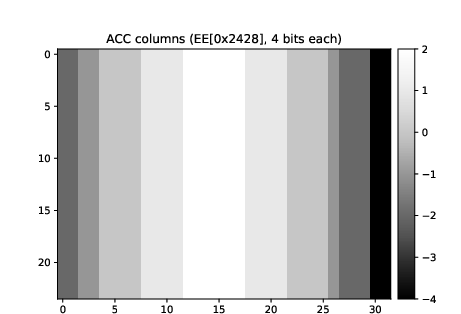

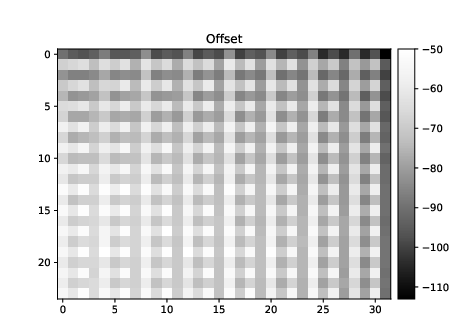

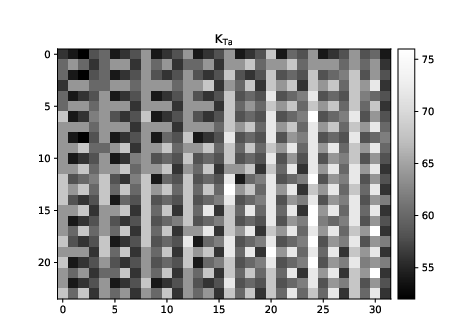

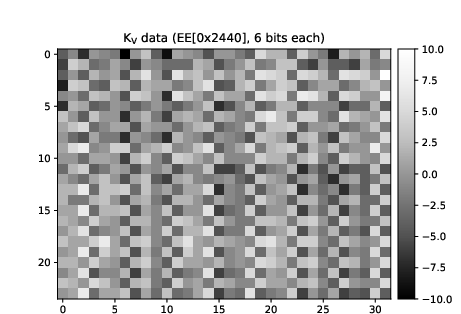

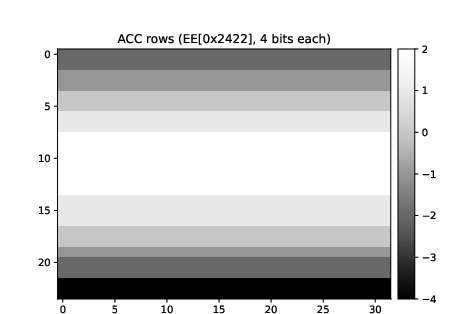

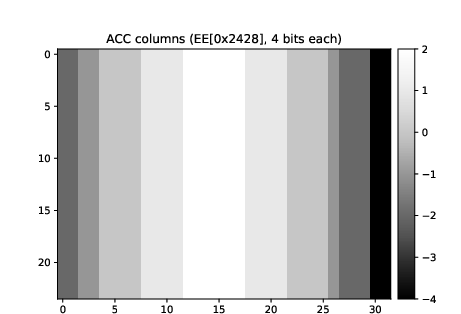

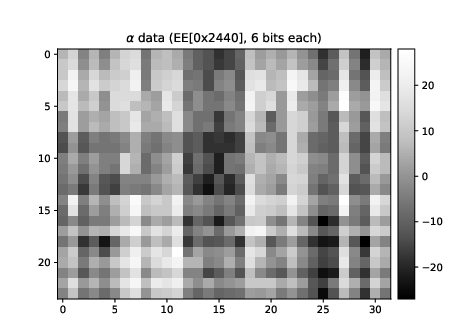

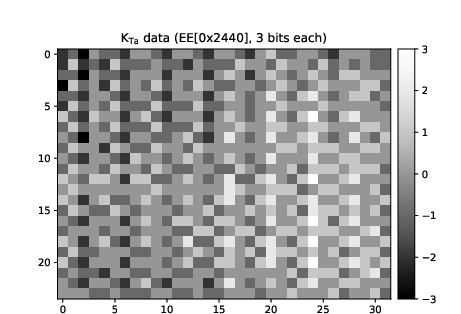

The MLX90640 sensor, with 768 pixels and 37 calibration parameters (including per-pixel vectors), serves as the experimental platform. The authors extract the calibration data from the sensor's EEPROM, model the representation uncertainty as uniform distributions, and propagate this uncertainty through the manufacturer's conversion routines using large-scale Monte Carlo simulation (500,000 samples per pixel per measurement).

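Key findings: the propagated uncertainty is non-uniform across pixels and temperature-dependent, and the resulting output errors can match or exceed the sensor's nominal accuracy.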

Figure 6: Per-pixel probability distributions for calibration parameters, illustrating the spatially varying uncertainty structure.

Application: Uncertainty-Aware Edge Detection

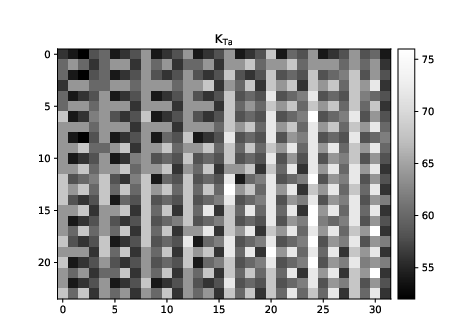

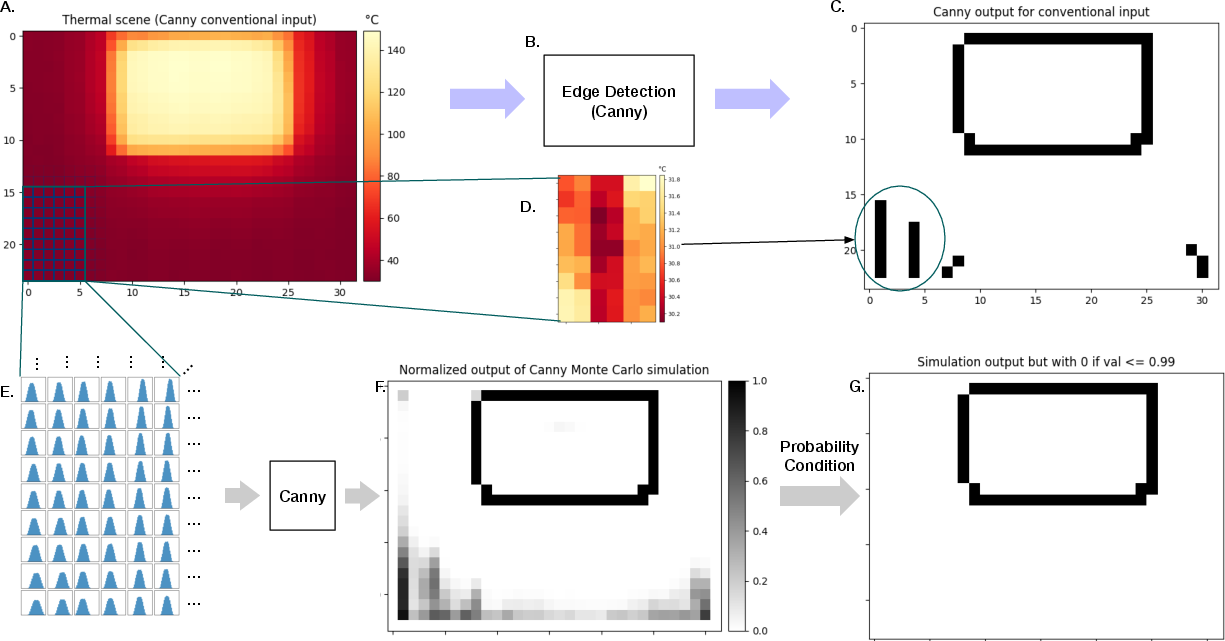

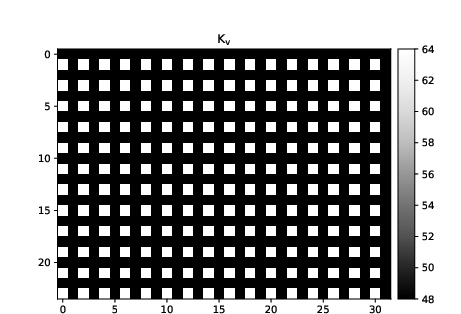

The practical implications of epistemic uncertainty are demonstrated in an edge detection scenario using the Canny operator. Conventional edge detection on the sensor output yields a significant number of false positives, particularly in regions with high uncertainty due to calibration quantization.

By propagating the full uncertainty distribution through the edge detection pipeline (via Monte Carlo sampling), the authors compute the probability of each pixel being classified as an edge. Filtering edges by high-probability thresholds (e.g., >0.99) eliminates false positives while preserving true edges, demonstrating the utility of uncertainty-aware processing in downstream tasks.
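The probability-thresholding idea can be sketched as follows. This is a simplified illustration under stated assumptions: a one-dimensional gradient-threshold detector stands in for the Canny operator, uncertainty is sampled directly on independent pixel values rather than on calibration parameters, and the temperature profile, half-widths, and threshold are made up for the example.

```python
import random

def detect_edges(signal, threshold):
    """Stand-in detector (not Canny): flag i when |signal[i+1] - signal[i]| > threshold."""
    return [abs(signal[i + 1] - signal[i]) > threshold for i in range(len(signal) - 1)]

def edge_probability(nominal, half_widths, threshold, n=5_000, seed=2):
    """Fraction of Monte Carlo draws in which each position is classified as an edge."""
    rng = random.Random(seed)
    counts = [0] * (len(nominal) - 1)
    for _ in range(n):
        draw = [v + rng.uniform(-h, h) for v, h in zip(nominal, half_widths)]
        for i, is_edge in enumerate(detect_edges(draw, threshold)):
            counts[i] += is_edge
    return [c / n for c in counts]

# Hypothetical temperature profile in °C: a real 10 °C step between pixels 2 and 3,
# and one pixel (index 5) whose reading is highly uncertain.
nominal     = [20.0, 20.0, 20.0, 30.0, 30.0, 31.8, 30.0]
half_widths = [0.1, 0.1, 0.1, 0.1, 0.1, 2.0, 0.1]
THRESHOLD = 1.5

naive = detect_edges(nominal, THRESHOLD)           # ignores uncertainty
probs = edge_probability(nominal, half_widths, THRESHOLD)
confident = [p > 0.99 for p in probs]              # keep only high-probability edges

print("naive:    ", naive)
print("confident:", confident)
```

In this toy case the naive detector reports edges on both sides of the uncertain pixel, while the thresholded version keeps only the step whose presence does not depend on where the true values fall within their uncertainty ranges.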

Figure 2: Impact of uncertainty-aware processing on edge detection—false positives are eliminated by thresholding on edge probability derived from the propagated uncertainty.

Real-Time Uncertainty Quantification Hardware

To address the computational cost of Monte Carlo-based uncertainty propagation, the authors implement the conversion and uncertainty tracking routines on two FPGA-based platforms (UxHw-FPGA-5k and UxHw-FPGA-17k), both supporting native uncertainty tracking via the Laplace microarchitecture.

Performance metrics:

- UxHw-FPGA-5k: 16.7 mW average power, 4.7 s per pixel, 42.9× speedup over equal-accuracy Monte Carlo.

- UxHw-FPGA-17k: 147.15 mW average power, 0.35 s per pixel, 94.4× speedup.

- Both platforms achieve a Wasserstein distance of 0.0189°C to the ground-truth Monte Carlo output.

These results establish the feasibility of real-time, low-power uncertainty quantification in embedded sensor systems.
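For readers unfamiliar with the comparison metric, the sketch below computes the one-dimensional Wasserstein-1 distance between two equal-size sample sets, which for empirical distributions reduces to the mean absolute difference between sorted samples. The sample data are synthetic and only illustrate that the metric carries the units of the output (here °C); this is not the authors' evaluation code.

```python
import random

def wasserstein_1d(xs, ys):
    """W1 distance between two empirical distributions with the same number of samples."""
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equal sample counts")
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)

rng = random.Random(3)
reference = [rng.gauss(25.0, 0.5) for _ in range(10_000)]   # synthetic "ground truth" samples
shifted = [v + 0.02 for v in reference]                     # same shape, offset by 0.02 °C

distance = wasserstein_1d(reference, shifted)
print(f"W1 = {distance:.4f} °C")
```

A pure shift of a distribution gives a distance equal to the size of the shift, so a value on the order of 0.02 °C can be read as the two distributions differing by about that much on average.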

Figure 5: Hardware platforms for real-time uncertainty tracking and comparison of output distributions to Monte Carlo ground truth.

Implementation Considerations

Software

- Calibration extraction and conversion routines must be instrumented to model calibration data as random variables with uniform support over the quantization bin.

- Correlations that arise when several parameters are derived from the same stored calibration data must be preserved during sampling.

- Monte Carlo simulation is straightforward but computationally intensive; hardware acceleration or analytic propagation (where feasible) is preferred for real-time applications.
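The point about preserving correlations can be illustrated with a small sketch. It assumes a hypothetical stored word `k` from which both a gain and an offset are derived; the scale factors and the conversion formula are invented for the example. Sampling `k` once per trial keeps the two derived parameters consistent, whereas sampling it separately for each parameter treats them as independent and changes the estimated output spread.

```python
import random
import statistics

GAIN_SCALE, OFFSET_SCALE = 0.001, 0.02   # hypothetical scale factors

def derive(k: float) -> tuple[float, float]:
    """Two parameters computed from the same stored calibration word k."""
    return k * GAIN_SCALE, 50.0 - k * OFFSET_SCALE

def output_spread(k_code, x, shared, n=20_000, seed=4):
    """Std of y = gain*x + offset, sampling k once (shared) or once per parameter."""
    rng = random.Random(seed)
    ys = []
    for _ in range(n):
        k1 = k_code + rng.uniform(-0.5, 0.5)
        # Shared: reuse the same draw. Not shared: an incorrect, independent draw.
        k2 = k1 if shared else k_code + rng.uniform(-0.5, 0.5)
        gain, _ = derive(k1)
        _, offset = derive(k2)
        ys.append(gain * x + offset)
    return statistics.pstdev(ys)

shared_spread = output_spread(k_code=1200, x=20.0, shared=True)
independent_spread = output_spread(k_code=1200, x=20.0, shared=False)
print(f"shared draw:       std(y) = {shared_spread:.6f}")
print(f"independent draws: std(y) = {independent_spread:.6f}")
```

At the chosen input the contributions of `k` to gain and offset cancel, so the correctly correlated sampling shows essentially no output spread, while independent sampling reports a spurious one. In other configurations ignoring the correlation can just as easily underestimate the spread.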

Hardware

- Native uncertainty tracking architectures (e.g., Laplace) can efficiently propagate uncertainty distributions through arithmetic operations, supporting real-time deployment.

- Power and latency metrics indicate suitability for edge devices and battery-powered systems.

Limitations

- The uncertainty quantification is only as accurate as the model of representation uncertainty; unmodeled sources (e.g., floating-point rounding, additional re-discretizations) may contribute further error.

- The approach is most critical when representation uncertainty is not dominated by other error sources (e.g., measurement noise, ADC quantization).

Implications and Future Directions

The findings have significant implications for the design and deployment of sensor systems in safety-critical and high-precision applications. Ignoring epistemic uncertainty from calibration quantization can result in overconfident and potentially erroneous downstream decisions. The presented framework enables explicit quantification and propagation of this uncertainty, supporting robust decision-making.

Future work may explore:

- Quantization-aware calibration procedures, leveraging techniques from neural network quantization to optimize the trade-off between memory footprint and uncertainty.

- Bayesian calibration and uncertainty propagation methods for more expressive modeling.

- Distribution of higher-precision calibration data via digital channels, enabled by unique device identifiers.

- Extension to other sensor modalities and more complex, nonlinear calibration models.

Conclusion

This work demonstrates that representation uncertainty in calibration data is a fundamental source of epistemic uncertainty in sensor outputs, one that can match or exceed the nominal sensor accuracy. The authors provide both a theoretical framework and practical tools, including efficient hardware implementations, for quantifying and propagating this uncertainty in real time. The results underscore the need for uncertainty-aware processing in modern sensor systems and provide a foundation for future research in robust, reliable sensing and inference.