- The paper presents a novel analogy between diffusion models and Kelly's criterion, framed in terms of the mutual information between data and the conditions they are generated from.

- It details how classifier-free guidance (CFG) amplifies the conditioning signal to compensate for the low mutual information between images and their labels.

- The study highlights trade-offs between condition fidelity and sample diversity, offering insights for tuning diffusion processes.

Diffusion Models as Functional Analogues to Kelly's Criterion

The paper "Diffusion Models are Kelly Gamblers" explores an intriguing intersection between diffusion models and the Kelly criterion, with an emphasis on mutual information in large-scale machine learning. This connection offers a new perspective for evaluating and improving diffusion models, especially in how they condition on image labels, which typically share little mutual information with the images they describe.

Conceptual Framework

Diffusion models have become vital for generating high-dimensional data such as images and audio. They learn to reverse a stochastic noising process, iteratively refining noisy inputs into structured outputs. The authors draw an analogy between these models and the Kelly criterion, a strategy for sizing bets that maximizes the expected logarithm of wealth and hence its long-run growth rate. In Kelly's classic horse-race setting, side information about the outcome raises the optimal growth rate by exactly the mutual information between that information and the outcome. In machine learning, this parallels how effectively a model exploits the mutual information between data and the conditions or representations it learns.
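The sketch below makes the Kelly side of the analogy concrete with a textbook horse race; it is not taken from the paper. A gambler bets fractions of wealth on outcome $X$ at odds $o(x)$. Proportional ("Kelly") betting $b(x) = p(x)$ maximizes the doubling rate $W = \sum_x p(x)\log_2(b(x)\,o(x))$. With a tip $Y$, betting $b(x \mid y) = p(x \mid y)$ raises that rate by exactly $I(X;Y)$. The joint distribution, odds and function names are hypothetical illustrations.

```python
import math

# Hypothetical joint distribution p(x, y): two horses x, two possible tips y.
P_XY = {
    (0, 0): 0.4, (0, 1): 0.1,
    (1, 0): 0.1, (1, 1): 0.4,
}
ODDS = {0: 2.0, 1: 2.0}  # illustrative payout o(x) per unit bet on horse x


def marginal(p_xy, axis):
    """Marginal of p(x, y) over x (axis=0) or y (axis=1)."""
    out = {}
    for key, p in p_xy.items():
        out[key[axis]] = out.get(key[axis], 0.0) + p
    return out


def doubling_rate_without_tip(p_xy, odds):
    """Optimal W = sum_x p(x) log2(p(x) o(x)), achieved by betting b(x) = p(x)."""
    p_x = marginal(p_xy, 0)
    return sum(p * math.log2(p * odds[x]) for x, p in p_x.items() if p > 0)


def doubling_rate_with_tip(p_xy, odds):
    """Optimal W = sum_{x,y} p(x,y) log2(p(x|y) o(x)), betting b(x|y) = p(x|y)."""
    p_y = marginal(p_xy, 1)
    return sum(p * math.log2((p / p_y[y]) * odds[x])
               for (x, y), p in p_xy.items() if p > 0)


def mutual_information(p_xy):
    """I(X;Y) in bits."""
    p_x, p_y = marginal(p_xy, 0), marginal(p_xy, 1)
    return sum(p * math.log2(p / (p_x[x] * p_y[y]))
               for (x, y), p in p_xy.items() if p > 0)


w0 = doubling_rate_without_tip(P_XY, ODDS)
w1 = doubling_rate_with_tip(P_XY, ODDS)
print(f"doubling rate without tip {w0:.4f} bits, with tip {w1:.4f} bits")
print(f"gain {w1 - w0:.4f} bits vs I(X;Y) {mutual_information(P_XY):.4f} bits")
```

The odds terms cancel in the difference. The gain from side information therefore equals $I(X;Y)$ whatever odds the track offers.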

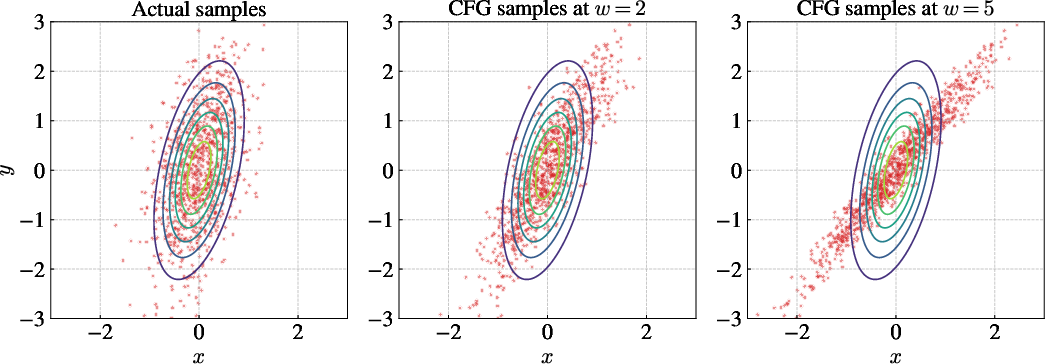

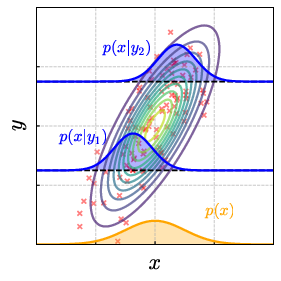

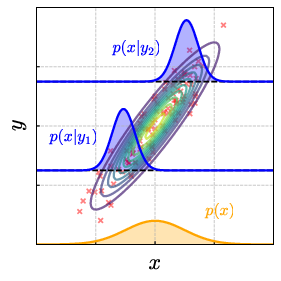

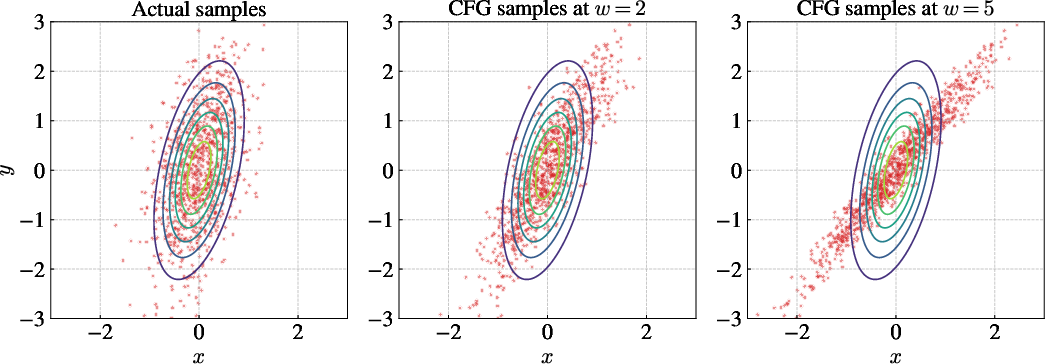

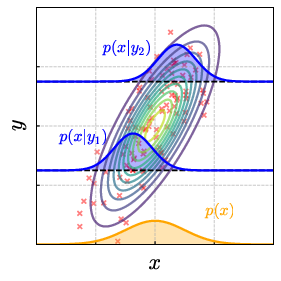

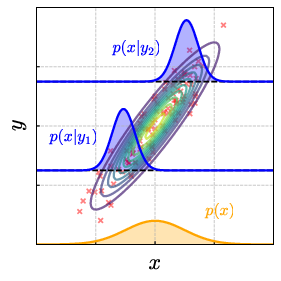

Figure 1: Samples generated by a CFG-style modification to the conditional score $\nabla \log P(x_t, t \mid y)$ of a joint Gaussian.

Classifier-Free Guidance (CFG)

A key technique explored is CFG. It modifies standard diffusion sampling by amplifying the contribution of the condition at sampling time, effectively boosting the mutual information between generated samples and the conditioning signal. This heuristic addresses the inherent difficulty of associating images with their labels, which naturally share little mutual information. By amplifying the signal from the condition during denoising, CFG provides a practical way to exploit the generative strengths of diffusion models more effectively.
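For a jointly Gaussian toy model, the CFG construction can be checked in closed form. The following is a minimal sketch under assumptions of our own: $x \sim \mathcal{N}(0,1)$, a label $y \sim \mathcal{N}(0,1)$ with correlation $\rho$, and variance-exploding noising $x_t = x + \sigma\,\varepsilon$. The function names are hypothetical. It combines the two scores as $(1+w)\,s_{\text{cond}} - w\,s_{\text{uncond}}$, one common way of writing CFG. It then confirms that the result is the score of the renormalized tilted density $p(x_t \mid y)^{1+w}\,p(x_t)^{-w}$, whose mean is pushed past the conditional mean $\rho y$ and whose variance shrinks.

```python
import math


def gaussian_scores(x_t, y, rho, sigma):
    """Exact scores for x ~ N(0,1), y ~ N(0,1), Corr(x, y) = rho,
    after the variance-exploding step x_t = x + sigma * noise."""
    v_u = 1.0 + sigma**2                # Var(x_t)
    v_c = 1.0 - rho**2 + sigma**2       # Var(x_t | y)
    s_cond = -(x_t - rho * y) / v_c     # d/dx_t log p(x_t | y)
    s_uncond = -x_t / v_u               # d/dx_t log p(x_t)
    return s_cond, s_uncond


def cfg_score(x_t, y, rho, sigma, w):
    """Guided score (1 + w) * s_cond - w * s_uncond; w = 0 is plain conditioning."""
    s_cond, s_uncond = gaussian_scores(x_t, y, rho, sigma)
    return (1.0 + w) * s_cond - w * s_uncond


def tilted_gaussian(y, rho, sigma, w):
    """Mean and variance of the renormalized p(x_t|y)^(1+w) * p(x_t)^(-w)."""
    v_u = 1.0 + sigma**2
    v_c = 1.0 - rho**2 + sigma**2
    precision = (1.0 + w) / v_c - w / v_u   # positive for w >= 0 since v_c < v_u
    mean = (1.0 + w) * rho * y / (v_c * precision)
    return mean, 1.0 / precision


rho, sigma, y, w = 0.3, 0.5, 1.0, 3.0
mean, var = tilted_gaussian(y, rho, sigma, w)
for x_t in (-2.0, 0.0, 1.5):
    # The guided score matches the score of the tilted Gaussian at every point.
    assert abs(cfg_score(x_t, y, rho, sigma, w) - (-(x_t - mean) / var)) < 1e-12
print(f"guided target at sigma={sigma}: mean {mean:.3f} (vs {rho * y}), variance {var:.3f}")
```

By Bayes' rule, $s_{\text{cond}} - s_{\text{uncond}} = \nabla \log p(y \mid x_t)$. The guided score is therefore $s_{\text{cond}} + w\,\nabla \log p(y \mid x_t)$: CFG amplifies an implicit classifier instead of training an explicit one.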

Computational Insights

Forward and Reverse Processes

The paper describes diffusion mathematically through forward and reverse stochastic differential equations (SDEs). The forward process gradually corrupts the data into approximately Gaussian noise. The key to diffusion lies in the reverse process. There, a neural network (parameterized as $\epsilon_\theta$ in the paper) acts as a learned control that steers samples from this noise back to meaningful outputs.
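Here is a heavily simplified picture of the two processes. The sketch assumes 1-D Gaussian data $x_0 \sim \mathcal{N}(M, S^2)$ and the variance-exploding forward SDE $dx = dW$ on $[0, T]$. These choices and all names are illustrative, not the paper's. Because the data are Gaussian, the exact score $\nabla \log p_t(x)$ is known and stands in for the learned network. In a trained model, a noise predictor would supply it via $\nabla \log p_t(x) \approx -\epsilon_\theta(x, t)/\sigma_t$. The reverse SDE $dx = -\nabla \log p_t(x)\,dt + d\bar W$ is integrated backward from $t = T$ to $0$ with the Euler-Maruyama method.

```python
import math
import random
import statistics

# Toy assumptions (not from the paper): x0 ~ N(M, S^2), forward SDE dx = dW on [0, T].
M, S, T = 2.0, 0.5, 4.0


def forward_sample(x0, t, rng):
    """Forward process in closed form: x_t = x0 + sqrt(t) * noise."""
    return x0 + math.sqrt(t) * rng.gauss(0.0, 1.0)


def score(x, t):
    """Exact score of p_t = N(M, S^2 + t). A trained model would approximate it,
    e.g. as -eps_theta(x, t) / sqrt(t) in this variance-exploding setup."""
    return -(x - M) / (S**2 + t)


def reverse_sample(rng, n_steps=200):
    """Euler-Maruyama on the reverse SDE, stepping backward from t = T to t = 0."""
    dt = T / n_steps
    sqrt_dt = math.sqrt(dt)
    x = rng.gauss(M, math.sqrt(S**2 + T))  # start from the exact terminal marginal p_T
    t = T
    for _ in range(n_steps):
        x = x + score(x, t) * dt + sqrt_dt * rng.gauss(0.0, 1.0)
        t -= dt
    return x


rng = random.Random(0)
noised = [forward_sample(rng.gauss(M, S), T, rng) for _ in range(4000)]
samples = [reverse_sample(rng) for _ in range(4000)]
print(f"forward x_T: mean {statistics.fmean(noised):.2f}, var {statistics.pvariance(noised):.2f}")
print(f"reverse x_0: mean {statistics.fmean(samples):.2f}, var {statistics.pvariance(samples):.2f}")
```

The forward samples spread out to roughly $\mathcal{N}(M, S^2 + T)$. The reverse samples should return to a mean near $M$ and a variance near $S^2$, up to sampling noise and a small discretization bias.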

Entropy and its Role

Entropy is central to the informational view of diffusion models. The authors highlight that conditional models inherently store more information than unconditional ones, because they must also associate data with conditions, and this appears as higher total entropy values. The reverse process therefore illustrates that entropy-matching models do more than recover data: they actively infer missing information, much as a Kelly gambler exploits side information.

Figure 2: The total and neural entropy rates for MNIST and CIFAR-10 images, demonstrating the prominent peak in entropy production in early forward diffusion steps.
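A jointly Gaussian toy model gives one simple way to see why conditioning raises the total entropy produced. It is an assumption for illustration, not the paper's MNIST/CIFAR-10 setting: a scalar $x_0 \sim \mathcal{N}(0,1)$ correlated with a label $y \sim \mathcal{N}(0,1)$ at coefficient $\rho$, noised as $x_t = x_0 + \sigma\,\varepsilon$. The entropy increase from data to noise is larger for the conditional model by $I(x_0; y) - I(x_T; y)$. That gap approaches the full mutual information once noise has washed out the label signal. With a weak correlation such as $\rho = 0.3$, the extra information is only about 0.047 nats. The function names are hypothetical.

```python
import math

TWO_PI_E = 2.0 * math.pi * math.e


def gaussian_entropy(var):
    """Differential entropy (nats) of a 1-D Gaussian with variance var."""
    return 0.5 * math.log(TWO_PI_E * var)


def entropy_produced(rho, sigma_max, conditional):
    """Entropy increase H(x_T) - H(x_0) under x_t = x_0 + sigma * noise,
    for the conditional (given y) or unconditional toy model."""
    base = (1.0 - rho**2) if conditional else 1.0  # Var(x_0 | y) or Var(x_0)
    return gaussian_entropy(base + sigma_max**2) - gaussian_entropy(base)


def mutual_info(rho, sigma):
    """I(x_sigma; y) in nats for jointly Gaussian variables:
    -1/2 log(1 - corr^2) with corr = rho / sqrt(1 + sigma^2)."""
    return -0.5 * math.log(1.0 - rho**2 / (1.0 + sigma**2))


rho, sigma_max = 0.3, 80.0
extra = entropy_produced(rho, sigma_max, True) - entropy_produced(rho, sigma_max, False)
print(f"I(x_0; y) = {mutual_info(rho, 0.0):.4f} nats, I(x_T; y) = {mutual_info(rho, sigma_max):.2e} nats")
print(f"extra entropy produced by the conditional model: {extra:.4f} nats")
```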

Operational and Theoretical Trade-offs

The theory also sheds light on practical trade-offs. For example, boosting mutual information via CFG increases fidelity to the condition but may reduce sample diversity. Understanding these trade-offs is crucial for practitioners tuning diffusion models for specific applications, whether high-fidelity image generation or other domains such as audio synthesis.
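The sketch below shows the trade-off numerically in a toy model. It assumes $x \sim \mathcal{N}(0,1)$, a label $y = 1$ with correlation $\rho = 0.3$, and the noise-free level. For each guidance weight $w$, it computes the distribution $\propto p(x \mid y)^{1+w}\,p(x)^{-w}$ whose score CFG uses. Increasing $w$ raises the expected label log-likelihood $\mathbb{E}[\log p(y \mid x)]$ (fidelity) while shrinking the standard deviation (diversity). Running CFG across all noise levels is known not to sample exactly from this tilted distribution in general, so treat the numbers as qualitative. The constants and function names are hypothetical.

```python
import math

# Toy assumptions: x ~ N(0,1), label y with Corr(x, y) = RHO, observed value Y, no noise.
RHO, Y = 0.3, 1.0


def guided_target(w):
    """Mean and variance of the renormalized p(x|y)^(1+w) * p(x)^(-w)."""
    v_c = 1.0 - RHO**2            # Var(x | y); Var(x) = 1
    precision = (1.0 + w) / v_c - w
    mean = (1.0 + w) * RHO * Y / (v_c * precision)
    return mean, 1.0 / precision


def expected_label_loglik(mean, var):
    """E[log p(y|x)] for p(y|x) = N(RHO * x, 1 - RHO^2) and x ~ N(mean, var)."""
    v = 1.0 - RHO**2
    return (-0.5 * math.log(2.0 * math.pi * v)
            - ((Y - RHO * mean) ** 2 + RHO**2 * var) / (2.0 * v))


rows = []
for w in (0.0, 1.0, 3.0, 7.0, 15.0):
    mean, var = guided_target(w)
    rows.append((w, mean, math.sqrt(var), expected_label_loglik(mean, var)))
    print(f"w={w:4.1f}  mean={mean:.3f}  std={math.sqrt(var):.3f}  "
          f"E[log p(y|x)]={rows[-1][3]:.3f}")
```

As $w$ grows, samples collapse toward $x = y/\rho$, where the implicit classifier $p(y \mid x)$ is most confident. This is the extreme of trading diversity for fidelity.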

Implementation and Practicality

Real-world Applications

In practice, applying these theoretical insights requires understanding when CFG offers substantial gains. This is typically when the condition carries little mutual information about the data, and when some loss of sample diversity is acceptable. The paper also encourages modular architectures that encode and handle latent variables flexibly, to compensate for information lost to noise.

Future Directions

Understanding how diffusion models encode mutual information opens new avenues. It can help improve current implementations and guide the design of architectures that exploit mutual information more effectively. Applying these ideas in other domains may reveal further settings where principles drawn from Kelly's criterion, interpreted through diffusion processes, could improve performance and utility.

Conclusion

As the paper shows, diffusion models behave analogously to a Kelly gambler, encoding information and optimizing its use. The paper offers a comprehensive theoretical grounding for using mutual information to extend the capabilities and application scope of diffusion models. As these models continue to push the boundaries of generative modeling, aligning them with information-theoretic principles promises improvements in efficiency and fidelity as well as a broader conceptual application landscape.