- The paper introduces a novel portrait editing pipeline that uses attribute-specific Concept Sliders and accelerated StreamDiffusion to enable fast, fine-grained modifications.

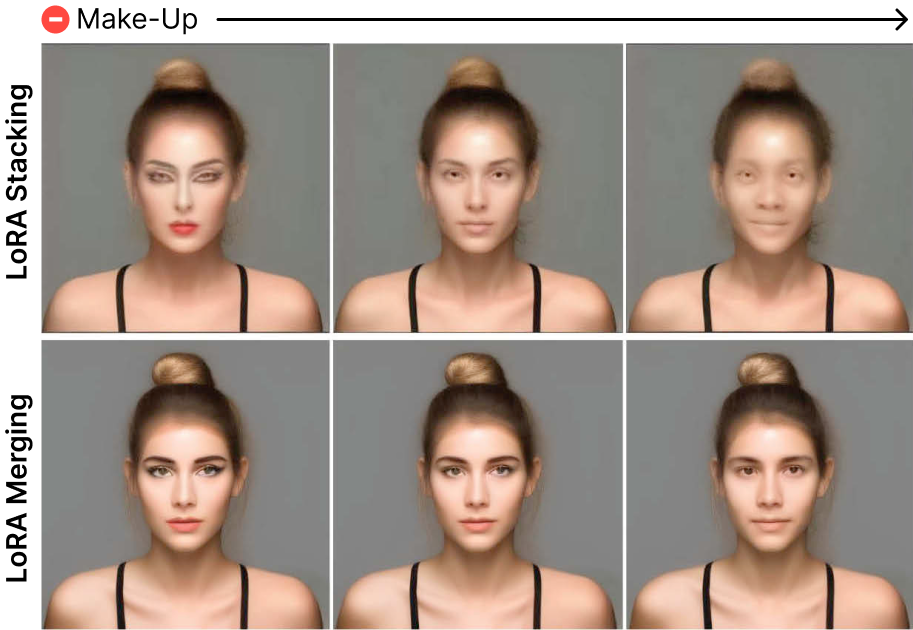

- It demonstrates that LoRA merging outperforms stacking in preserving detail during multi-attribute editing while maintaining visual coherence.

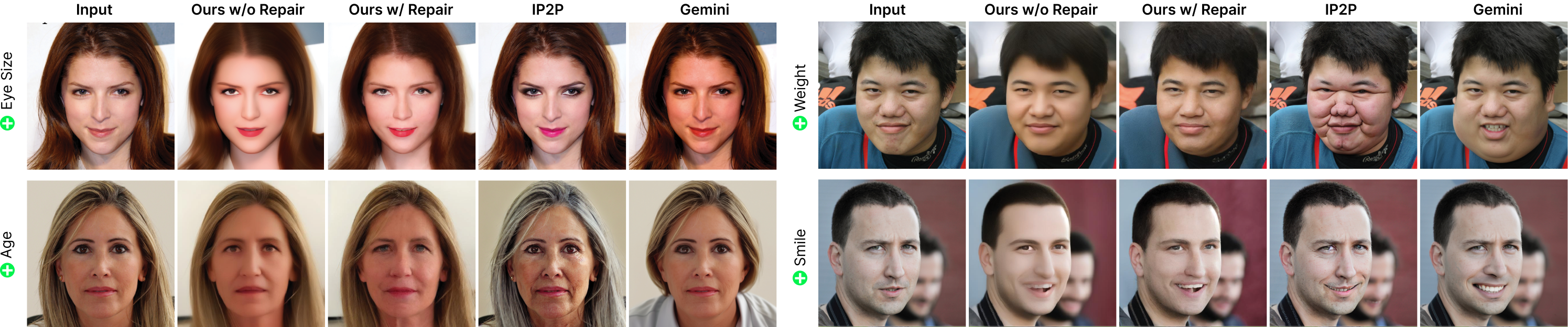

- A lightweight Repair Step restores the high-frequency detail lost to acceleration while preserving facial structure and identity.

CharGen: Fast and Fluent Portrait Modification

Introduction and Motivation

CharGen addresses a persistent challenge in diffusion-based image editing: achieving fine-grained, interactive control over facial attributes while maintaining high visual fidelity and low latency. Existing character editors, both 2D and 3D, rely on predefined, handcrafted attribute controls, which limits flexibility and requires significant manual setup. Diffusion models, while capable of high-fidelity synthesis, lack mechanisms for continuous, precise attribute manipulation and are often too slow for interactive workflows. CharGen responds by combining attribute-specific Concept Sliders, accelerated StreamDiffusion sampling, and a novel Repair Step for detail restoration, enabling rapid, fluent, and identity-preserving portrait editing.

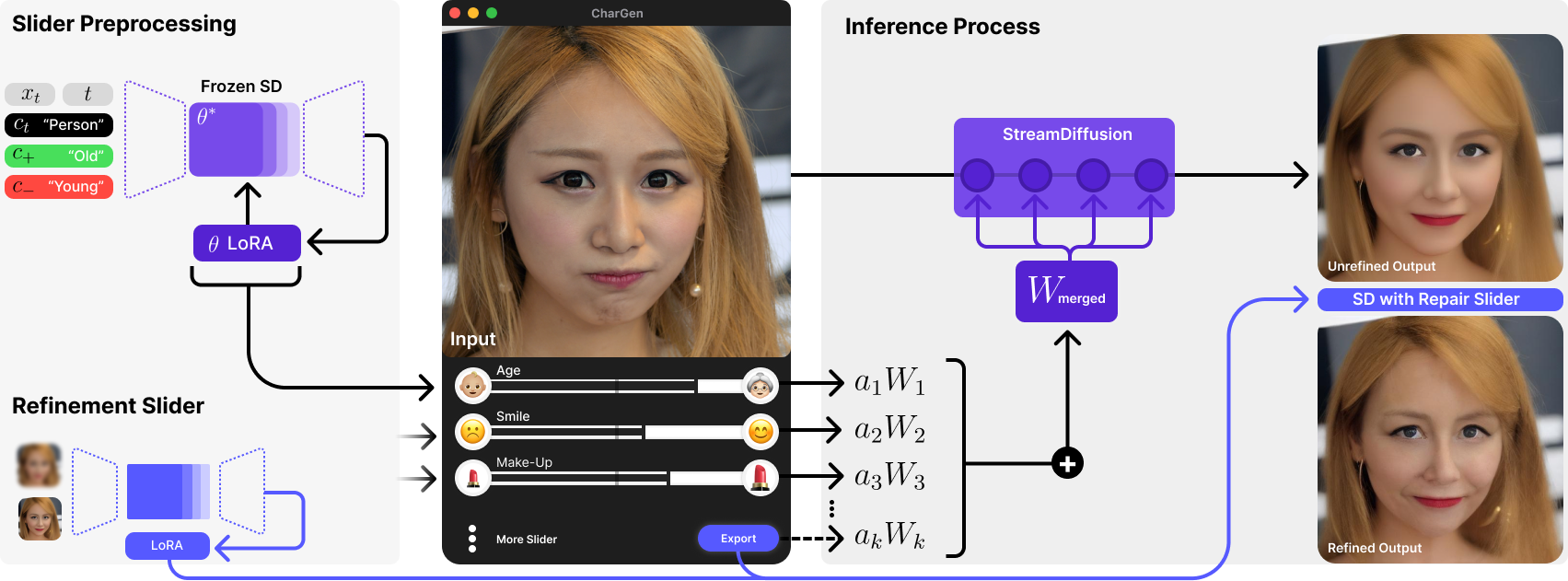

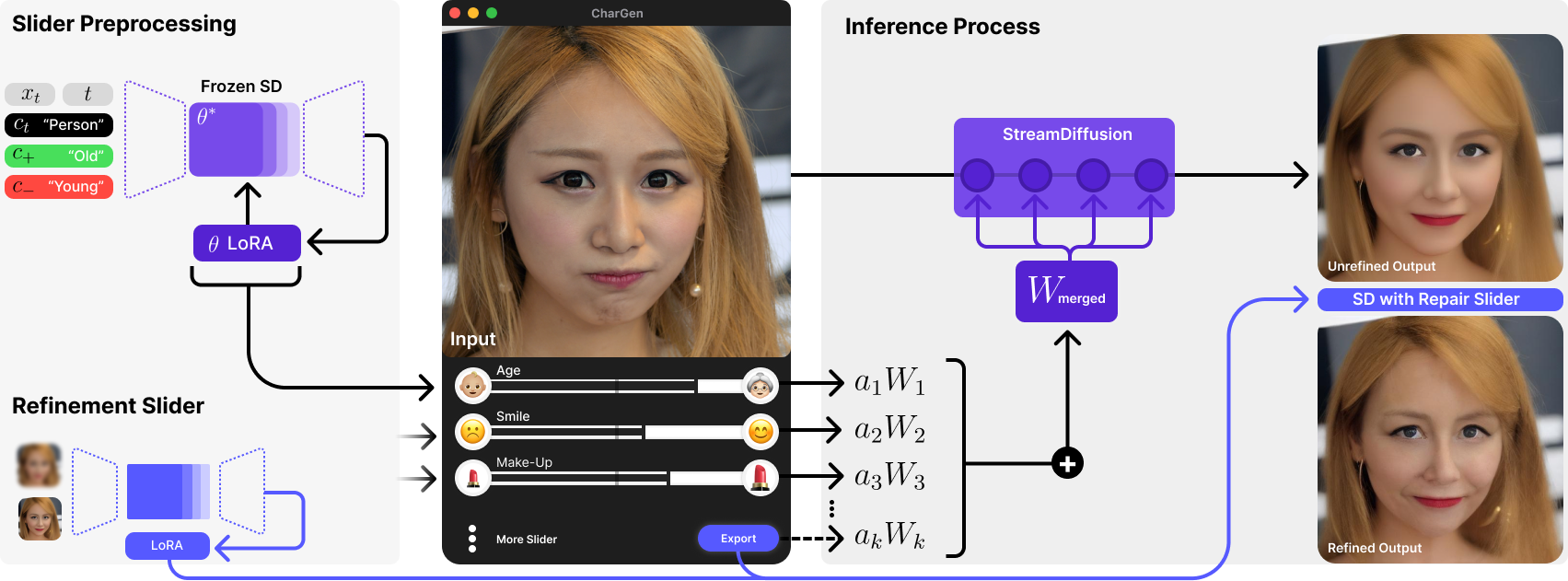

Figure 1: Overview of the CharGen pipeline, illustrating Concept Slider pretraining, interactive slider-based editing, LoRA merging, and the final Repair Step for detail enhancement.

Methodology

Attribute-Specific Concept Sliders

CharGen leverages Concept Sliders, which are LoRA adapters fine-tuned to control specific facial attributes (e.g., expression, structure, age, hair) in the latent space of Stable Diffusion. Each slider is trained using paired data (text or images) to learn a disentangled direction in latent space, allowing for continuous, independent adjustment of attributes. The independence of sliders enables simultaneous multi-attribute editing by merging their LoRA weight matrices, with user-controlled scaling factors for each attribute.
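The weight merge described above can be sketched for a single linear layer. This is a minimal NumPy illustration, not CharGen's code: it assumes each slider is a standard LoRA pair (B, A) whose weight update is B @ A, with any LoRA alpha/rank factor folded into the user's slider scale. The function name `merge_sliders`, the slider names, and the shapes are hypothetical.

```python
import numpy as np

def merge_sliders(W0, sliders, scales):
    """Return W0 + sum_i s_i * (B_i @ A_i) for one linear layer.

    W0:      base weight, shape (d_out, d_in), float dtype
    sliders: dict name -> (B, A), B of shape (d_out, r), A of shape (r, d_in)
    scales:  dict name -> user-chosen slider value (missing name = 0)
    """
    W = W0.copy()  # leave the base weights untouched
    for name, (B, A) in sliders.items():
        s = scales.get(name, 0.0)
        if s != 0.0:
            W += s * (B @ A)  # low-rank update, scaled by the slider value
    return W

rng = np.random.default_rng(0)
W0 = rng.standard_normal((8, 6))
sliders = {
    "age": (rng.standard_normal((8, 2)), rng.standard_normal((2, 6))),
    "smile": (rng.standard_normal((8, 2)), rng.standard_normal((2, 6))),
}
W = merge_sliders(W0, sliders, {"age": 0.5, "smile": -1.0})
```

Because the sliders combine linearly, setting a scale to 0 removes that slider's contribution exactly, opposite-signed scales push the attribute in opposite directions, and the merged matrix keeps the base layer's shape.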

StreamDiffusion Integration

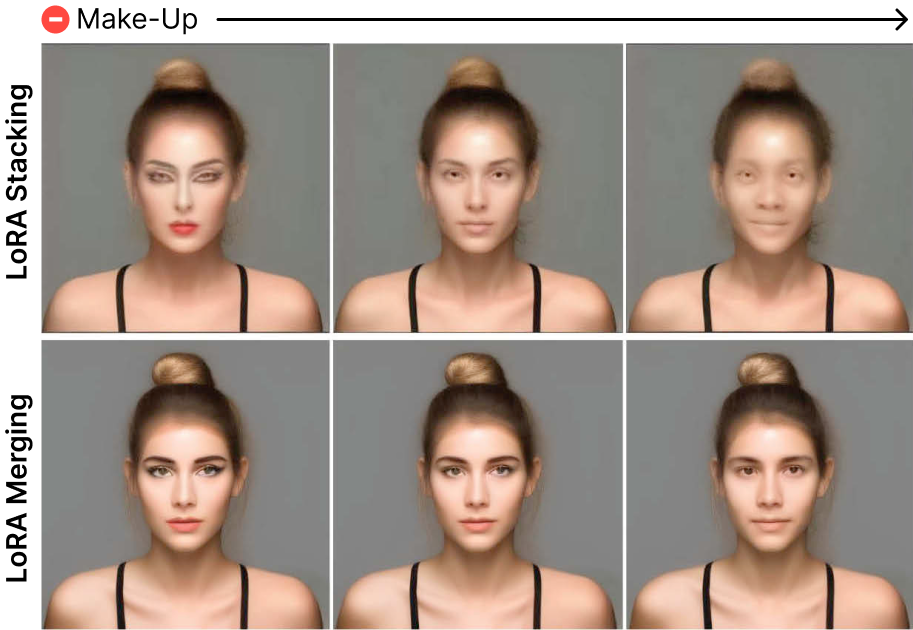

To achieve interactive performance, CharGen integrates Concept Sliders into the StreamDiffusion pipeline. StreamDiffusion accelerates inference via batch denoising, residual classifier-free guidance, and model optimizations (e.g., TensorRT, TinyAutoEncoder). Two strategies for LoRA integration were evaluated: stacking (sequential application) and merging (pre-combination of weights). In the reported experiments, merging is superior: stacking leads to cumulative distortions and loss of detail as more edits are applied.
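The batch denoising idea can be illustrated with a toy scheduler. This sketch assumes a fixed number of denoising steps per input and only counts batched forward passes; it does not model the U-Net, residual classifier-free guidance, or TensorRT. The name `stream_batch` is hypothetical.

```python
from collections import deque

def stream_batch(frames, num_steps):
    """Toy schedule for staggered batch denoising (StreamDiffusion-style).

    Every in-flight input sits at a different denoising step. One batched
    forward pass advances all of them by one step, so once the pipeline is
    full, each pass finishes one input. Returns (frame, pass_index) pairs.
    """
    pending = deque(frames)
    in_flight = deque()  # entries are [frame, steps_completed]
    finished = []
    passes = 0
    while pending or in_flight:
        if pending:
            in_flight.append([pending.popleft(), 0])  # new input joins the batch
        for item in in_flight:  # one batched forward pass over all slots
            item[1] += 1
        passes += 1
        if in_flight[0][1] == num_steps:  # the oldest input is fully denoised
            finished.append((in_flight.popleft()[0], passes))
    return finished

print(stream_batch(range(5), num_steps=3))
# → [(0, 3), (1, 4), (2, 5), (3, 6), (4, 7)]
```

Five inputs at three steps each finish after 7 batched passes instead of 15 single-input passes. Each batched pass processes up to `num_steps` latents at once, so the saving comes from GPU parallelism rather than from doing less total work.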

Figure 2: LoRA stacking (top) causes progressive degradation with multiple edits, while LoRA merging (bottom) maintains stable, consistent attribute changes.

Repair Step

StreamDiffusion's acceleration introduces a loss of high-frequency detail. CharGen addresses this with a lightweight Repair Step, evaluating three approaches: standard Stable Diffusion, a dedicated Repair Slider, and ControlNet-based repair. The Repair Slider, trained to map StreamDiffusion outputs back to high-detail ground truth, achieves the best balance between detail enhancement and structural preservation.

Figure 3: Comparison of refinement methods. The Repair Slider restores detail without compromising structure, outperforming both standard Stable Diffusion and ControlNet-based repair.

Experimental Evaluation

Qualitative Analysis

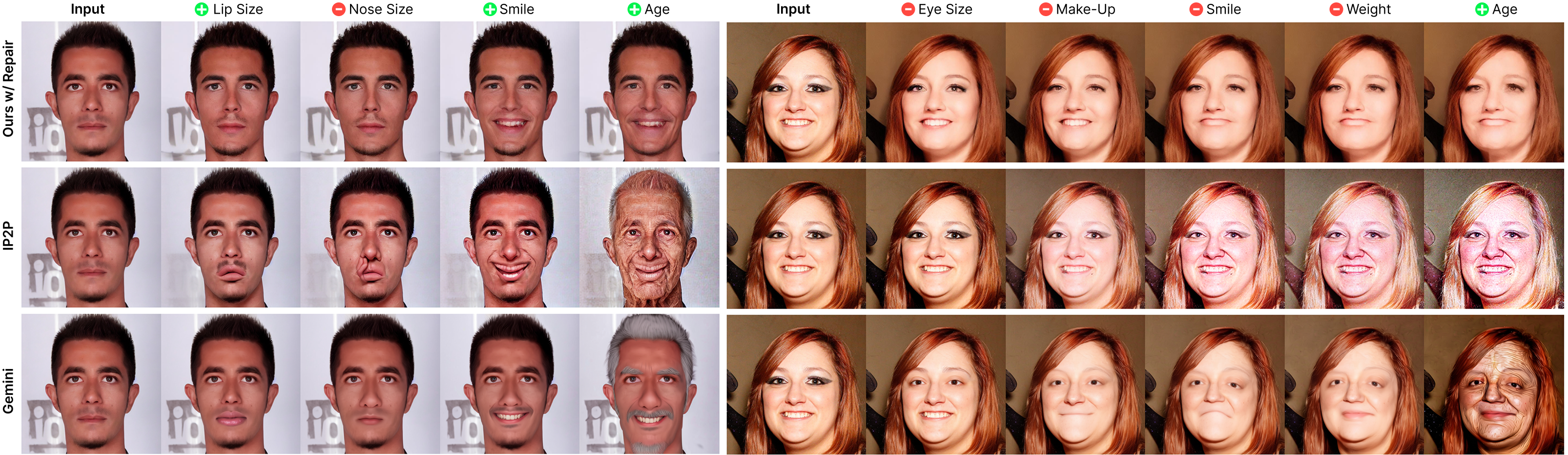

CharGen is compared against InstructPix2Pix (IP2P) and Google Gemini across single and multi-attribute editing tasks. For single-attribute modifications, CharGen provides precise, localized control, outperforming IP2P in subtlety and Gemini in edit consistency, though Gemini achieves stronger transformations in some cases.

Figure 4: Single attribute modifications. CharGen delivers more precise and visually consistent edits compared to IP2P and Gemini.

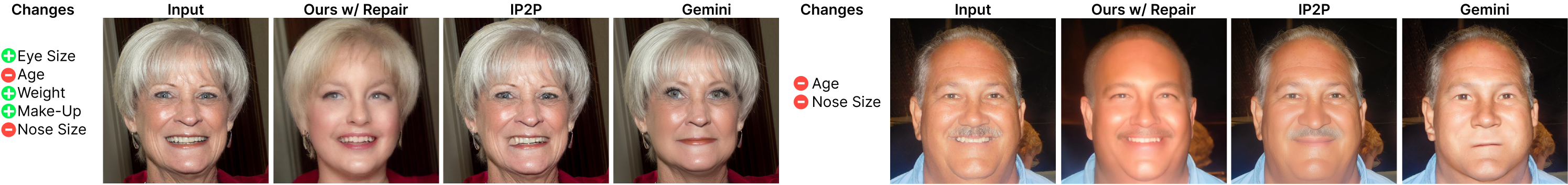

For multi-attribute editing, CharGen's LoRA merging enables simultaneous, independent control of several attributes, maintaining visual coherence and identity. Competing methods often fail to incorporate all requested changes or introduce unintended modifications.

Figure 5: Multi-attribute editing. CharGen achieves edits that are visually closer to the desired combination of changes, while other methods struggle with attribute entanglement.

In progressive editing scenarios, CharGen maintains fidelity to the input because each edit only adjusts slider parameters, whereas IP2P and Gemini accumulate artifacts because every edit re-processes the previous output.
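The difference between re-processing an image and re-rendering from slider parameters can be seen in a deliberately simple 1-D toy. It assumes, hypothetically, that every generation pass applies the requested change but also blurs fine detail slightly. The names `lossy_pass`, `chained_edits`, and `slider_edits` are illustrative, and neither the blur nor the scalar shift models IP2P, Gemini, or CharGen's actual networks.

```python
import numpy as np

def lossy_pass(img, shift):
    """Hypothetical stand-in for one generation pass: a 3-tap circular blur
    (losing some fine detail) followed by the requested uniform change."""
    blurred = (np.roll(img, 1) + img + np.roll(img, -1)) / 3.0
    return blurred + shift

def chained_edits(original, shifts):
    """Image-to-image loop: every edit re-processes the previous output."""
    img = original
    for s in shifts:
        img = lossy_pass(img, s)
    return img

def slider_edits(original, shifts):
    """Slider-style loop: edits only update a parameter; the image is
    regenerated once from the original input."""
    return lossy_pass(original, sum(shifts))

original = np.tile([1.0, -1.0], 8)  # pure high-frequency "detail"
shifts = [0.2, 0.3, -0.1]           # three successive edits
chained = chained_edits(original, shifts)
slider = slider_edits(original, shifts)
print(chained.mean(), slider.mean())  # both ≈ 0.4: same overall edit
print(chained.std(), slider.std())    # ≈ 0.037 vs ≈ 0.333: detail left
```

Both loops reach the same overall edit, but the chained loop pays the lossy step once per edit, so detail shrinks geometrically with the number of edits, while the slider loop pays it only once.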

Figure 6: Progressive edits. CharGen enables fluent, artifact-free modifications, unlike IP2P and Gemini, which degrade with sequential edits.

Quantitative Analysis

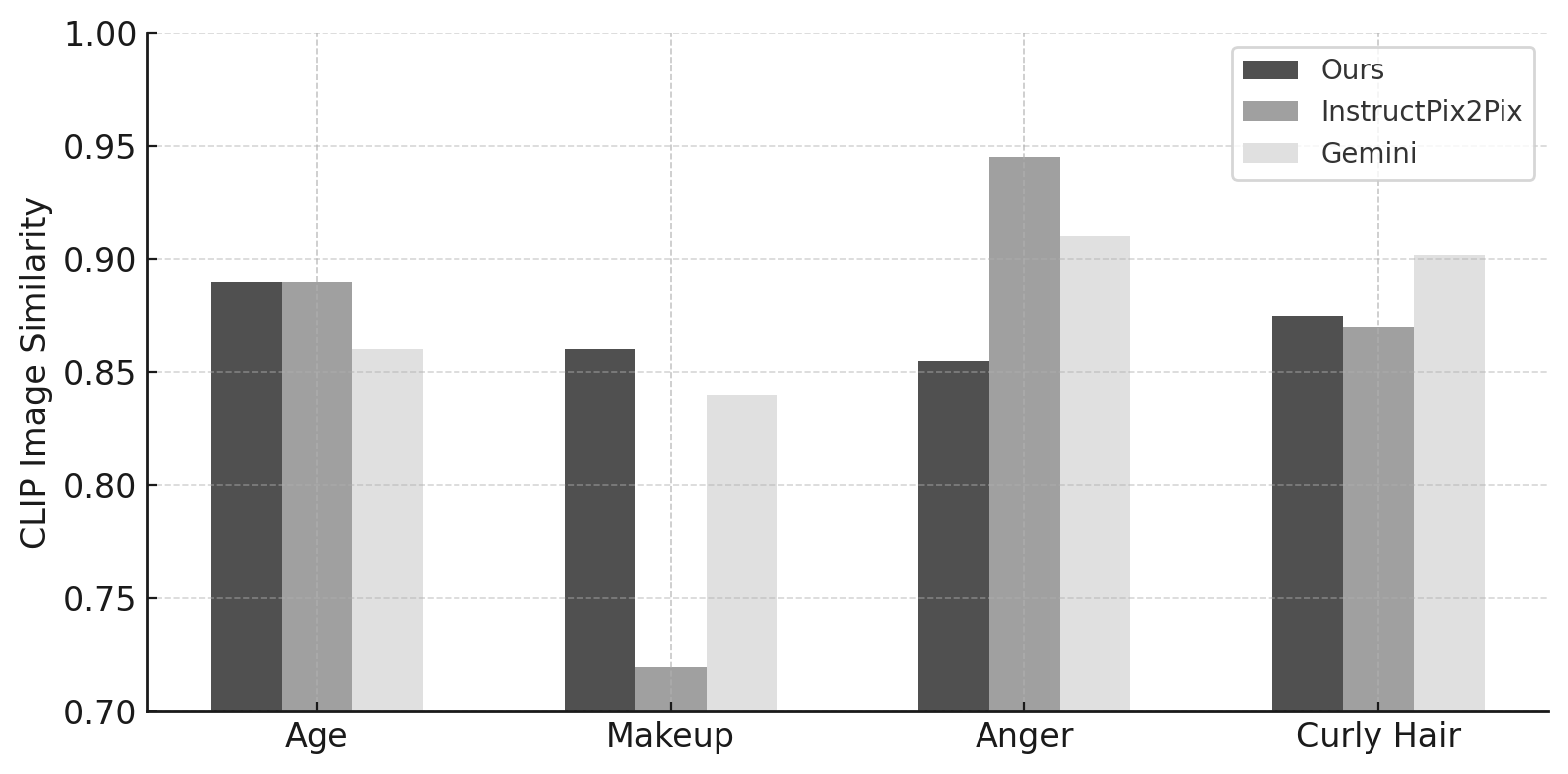

CharGen achieves a 2–4× speedup over Gemini and IP2P, with edit times of 0.53–2.55 seconds depending on the number of active sliders. CLIP Image Similarity scores indicate that CharGen and Gemini both preserve structural and identity fidelity (0.85–0.90), outperforming IP2P, which exhibits higher variance.
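CLIP Image Similarity is conventionally the cosine similarity between the CLIP image embeddings of the input and the edited portrait. The sketch below assumes the embeddings have already been produced by some CLIP image encoder (which model the evaluation used is not stated here) and shows only the scoring step. The name `clip_image_similarity` is hypothetical.

```python
import numpy as np

def clip_image_similarity(emb_input, emb_edited):
    """Cosine similarity between two image embeddings (1.0 = same direction)."""
    a = np.asarray(emb_input, dtype=np.float64)
    b = np.asarray(emb_edited, dtype=np.float64)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Toy 4-D vectors standing in for real CLIP embeddings, which are much longer.
print(clip_image_similarity([1.0, 0.0, 0.0, 0.0], [1.0, 1.0, 0.0, 0.0]))  # ≈ 0.7071
```

Because both vectors are normalized, the score depends only on the direction of the embeddings, not their magnitude.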

Figure 7: CLIP Image Similarity across four attributes. CharGen and Gemini maintain high structural fidelity to the input.

For the Repair Step, the Repair Slider achieves the best PSNR and SSIM, while standard Stable Diffusion yields the lowest (best) LPIPS. ControlNet, despite producing detailed outputs, introduces significant structural deviations.
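For reference, PSNR compares a repaired image with its ground truth through the mean squared error: PSNR = 10 · log10(MAX² / MSE). The sketch assumes images stored as float arrays scaled to [0, 1], so MAX = 1; the function name `psnr` and the toy arrays are illustrative. SSIM and LPIPS need windowed statistics and a learned network respectively, so in practice they are usually taken from libraries (for example, scikit-image's `skimage.metrics.structural_similarity` for SSIM) rather than reimplemented.

```python
import numpy as np

def psnr(reference, repaired, max_val=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    ref = np.asarray(reference, dtype=np.float64)
    out = np.asarray(repaired, dtype=np.float64)
    mse = np.mean((ref - out) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))

ground_truth = np.zeros((4, 4))
repaired = np.full((4, 4), 0.1)  # uniform error of 0.1, so MSE = 0.01
print(round(psnr(ground_truth, repaired), 2))  # 20.0
```

PSNR and SSIM reward pixel-level and structural agreement, while LPIPS measures perceptual distance (lower is better), so the metrics can rank methods differently, as they do here between the Repair Slider and standard Stable Diffusion.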

User Study

A user study with 35 participants favors CharGen for multi-attribute editing, with a 76% preference over Gemini and IP2P. For single-attribute edits, Gemini is preferred for strong transformations, but CharGen is favored for subtle, identity-preserving modifications. For refinement, users prefer the Repair Slider for balancing detail and fidelity, while ControlNet is penalized for structural inconsistency.

Limitations and Future Directions

CharGen's reliance on independently trained Concept Sliders can lead to attribute interference (e.g., age and lip size), especially for correlated anatomical features. The system is less effective for extreme transformations (e.g., dramatic aging) due to the limited range of training pairs. Additionally, the approach may inherit demographic biases from the underlying datasets, and its capabilities raise ethical concerns regarding potential misuse for deepfakes or deceptive content.

Future research should focus on joint optimization of Concept Sliders to mitigate attribute entanglement, improved training for discrete or rare attributes, and more advanced LoRA integration strategies for enhanced detail synthesis. Extending the methodology to broader image domains and developing bias mitigation techniques are also important directions.

Conclusion

CharGen demonstrates that interactive, fine-grained, and multi-attribute portrait editing is achievable by combining attribute-specific Concept Sliders with an accelerated diffusion pipeline and a dedicated Repair Step. The system delivers significant improvements in edit speed, control, and visual fidelity over existing methods, particularly for iterative and multi-attribute workflows. While limitations remain in handling extreme edits and attribute interactions, CharGen establishes a robust foundation for controllable, high-fidelity generative editing in professional and creative applications. Responsible deployment and further research into bias and misuse mitigation are essential as such systems become more widely adopted.