- The paper introduces XQC, a framework that combines batch normalization, weight normalization, and categorical cross-entropy loss to create a well-conditioned optimization landscape for DRL.

- The paper demonstrates that stabilizing the critic's Hessian eigenspectrum improves convergence, resulting in state-of-the-art performance on diverse continuous control tasks.

- The paper shows that XQC achieves comparable or better performance with about 4.5× fewer parameters and 5× less compute than its closest competitors, and that it stays robust as the network is scaled.

XQC: Well-conditioned Optimization Accelerates Deep Reinforcement Learning

Introduction

The paper "XQC: Well-conditioned Optimization Accelerates Deep Reinforcement Learning" introduces a framework that improves sample efficiency in deep reinforcement learning (DRL) by improving the optimization landscape of the critic network. Drawing on an analysis of the eigenspectrum and condition number of the critic's Hessian, the authors combine batch normalization (BN), weight normalization (WN), and a categorical cross-entropy (CE) loss. This combination yields significantly smaller condition numbers, which translates into better sample efficiency with fewer parameters and less compute.

Methodology

XQC rests on a well-conditioned optimization landscape, obtained through a small set of architectural choices. Two elements together improve the training dynamics:

- Normalization Techniques: BN and WN together keep the optimization stable and well bounded, which helps maintain a steady effective learning rate under non-stationary targets.

- Categorical Cross-Entropy Loss: Replacing the traditional mean squared error (MSE) with a distributional CE loss improves the conditioning of the landscape and leads to better convergence properties.
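One way to see why pairing BN with WN matters for the effective learning rate is scale invariance: a linear unit followed by batch normalization produces the same output when its weights are rescaled, so only the weight direction matters, while a growing weight norm shrinks the effective step size. The sketch below illustrates this in plain Python. It assumes WN is realized as a projection of the weights back to unit norm, which this summary does not spell out; the helper names (`batch_norm`, `bn_linear`, `project_to_unit_norm`) and the toy data are hypothetical.

```python
def batch_norm(values):
    """Normalize a batch of scalars to zero mean and unit variance (no epsilon, no affine terms)."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / var ** 0.5 for v in values]


def bn_linear(w, batch):
    """Single linear unit followed by batch normalization."""
    pre_activations = [sum(wi * xi for wi, xi in zip(w, x)) for x in batch]
    return batch_norm(pre_activations)


def project_to_unit_norm(w):
    """Assumed form of weight normalization: rescale w to unit Euclidean norm."""
    norm = sum(wi * wi for wi in w) ** 0.5
    return [wi / norm for wi in w]


# Toy batch of four 2-d inputs (illustrative values only).
batch = [[1.0, 2.0], [0.5, -1.0], [3.0, 0.0], [-2.0, 1.5]]
w = [0.3, -0.7]
w_scaled = [10.0 * wi for wi in w]

out = bn_linear(w, batch)
out_scaled = bn_linear(w_scaled, batch)
out_projected = bn_linear(project_to_unit_norm(w_scaled), batch)

# Largest difference between the original and the rescaled outputs.
max_gap = max(abs(a - b) for a, b in zip(out, out_scaled))
```

Because the output ignores the weight scale, projecting the weights to unit norm after each update leaves the function unchanged while pinning the norm, which is one plausible reading of how WN keeps the effective learning rate steady.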

These design principles underpin the XQC algorithm, which is implemented as an extension of the soft actor-critic (SAC) framework. The resulting architecture matches or outperforms existing baselines across a wide range of tasks without resorting to larger models or more compute.
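To make the categorical CE loss concrete, here is a minimal sketch of how a scalar value target can be turned into a distribution over fixed bins and compared against critic logits. It assumes a two-hot projection onto evenly spaced bin centers, which is one common choice and not necessarily the one used in the paper; the function names and the support `[v_min, v_max]` are hypothetical.

```python
import math


def two_hot(target, v_min, v_max, num_bins):
    """Spread a scalar target over its two neighboring bin centers."""
    target = min(max(target, v_min), v_max)      # clip into the support
    width = (v_max - v_min) / (num_bins - 1)     # spacing between bin centers
    pos = (target - v_min) / width               # fractional bin index
    lo = min(int(math.floor(pos)), num_bins - 2)
    frac = pos - lo
    probs = [0.0] * num_bins
    probs[lo] = 1.0 - frac
    probs[lo + 1] = frac
    return probs


def log_softmax(logits):
    """Numerically stable log-softmax."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def cross_entropy(logits, target_probs):
    """Categorical cross-entropy between a target distribution and critic logits."""
    return -sum(p * lp for p, lp in zip(target_probs, log_softmax(logits)))


def expected_value(logits, v_min, v_max):
    """Recover a scalar Q-value estimate as the mean of the predicted distribution."""
    width = (v_max - v_min) / (len(logits) - 1)
    probs = [math.exp(lp) for lp in log_softmax(logits)]
    return sum(p * (v_min + i * width) for i, p in enumerate(probs))


# Example: a target of 0.3 on five bins spanning [0, 1].
target_probs = two_hot(0.3, 0.0, 1.0, 5)
loss = cross_entropy([0.0] * 5, target_probs)    # loss of a uniform prediction
```

A property worth noting is that the gradient of this loss with respect to the logits is the softmax output minus the target distribution, so each component stays within [-1, 1] regardless of the magnitude of the value target, whereas the MSE gradient grows with the prediction error.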

Empirical Results

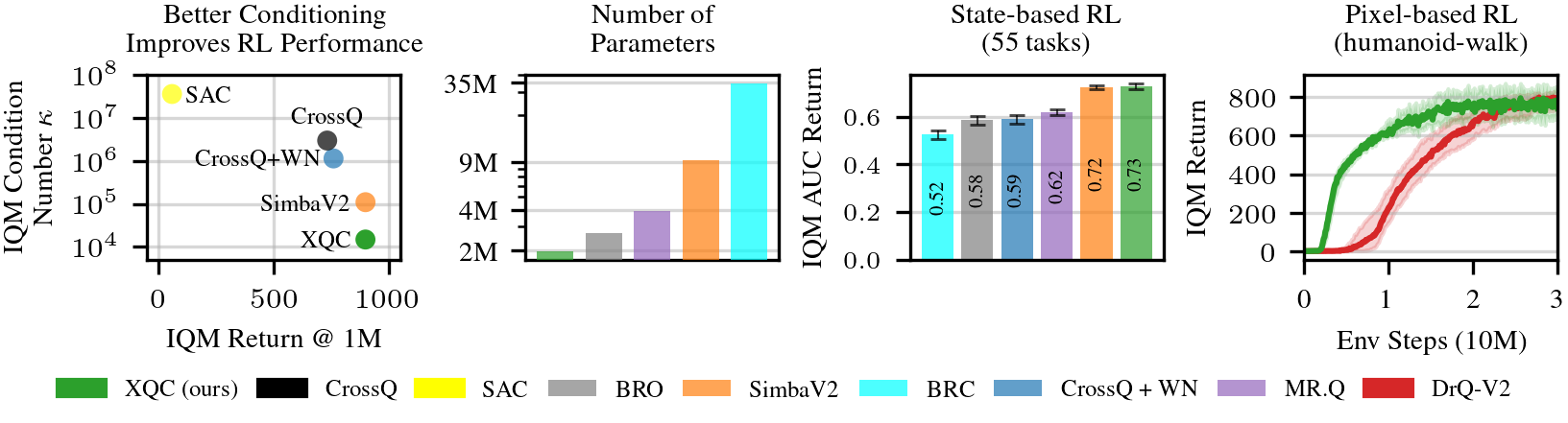

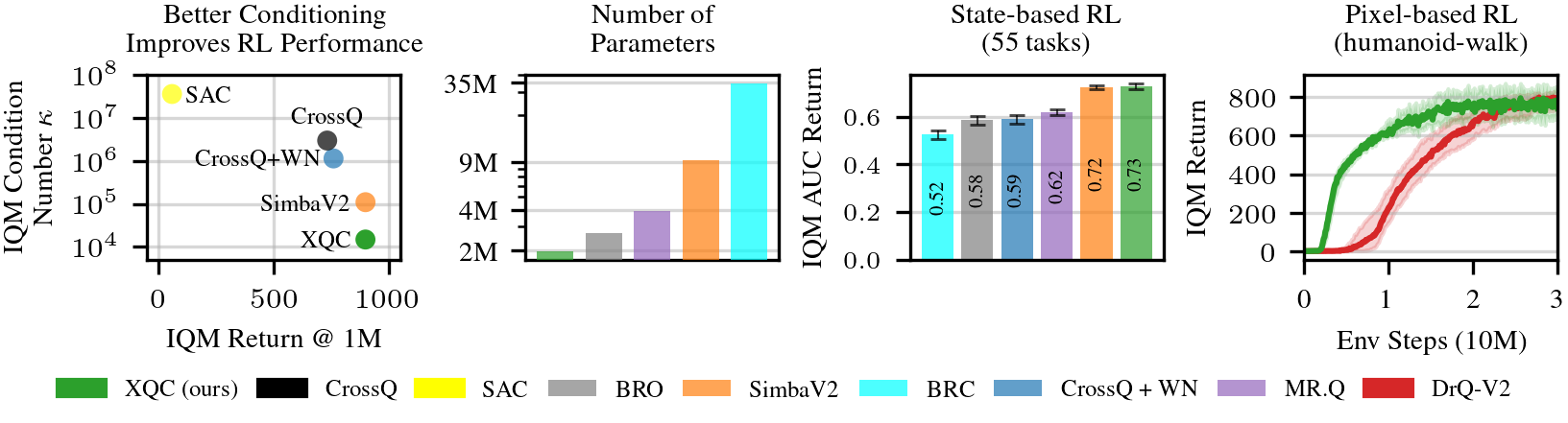

Figure 1: Well-conditioned network architectures yield state-of-the-art RL performance. Our algorithm, XQC with a BN and WN-based architecture and a CE loss, achieves competitive performance against state-of-the-art baselines across 55 proprioceptive continuous control tasks.

The empirical evaluation reports state-of-the-art performance on 55 proprioceptive and 15 vision-based continuous control tasks. XQC matches or surpasses more complex approaches in sample efficiency while using approximately 4.5× fewer parameters and 5× less compute than its closest competitors, such as SimbaV2.

Optimization Landscape Analysis

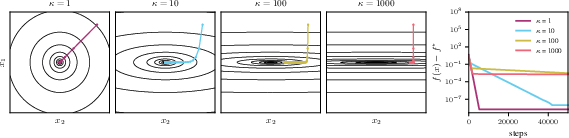

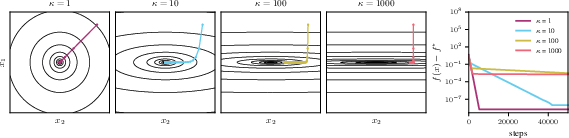

Figure 2: When performing gradient-based optimization, the condition number (κ) of the objective's Hessian significantly impacts convergence.
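The effect of the condition number on convergence can be reproduced on a toy problem. The sketch below runs plain gradient descent on a two-dimensional quadratic whose Hessian is `diag(lam_min, lam_max)`, so that κ = `lam_max / lam_min`. The function name, step-size rule, and tolerance are illustrative assumptions and are not taken from the paper.

```python
def gd_steps_to_converge(lam_min, lam_max, tol=1e-6, max_steps=100_000):
    """Gradient descent on f(x) = 0.5 * (lam_min * x0**2 + lam_max * x1**2).

    Returns the number of steps until the distance to the minimum (the origin)
    drops below tol, or max_steps if that never happens.
    """
    x0, x1 = 1.0, 1.0
    lr = 1.0 / lam_max  # standard stable step size, set by the largest curvature
    for step in range(1, max_steps + 1):
        x0 -= lr * lam_min * x0  # gradient along the flat direction
        x1 -= lr * lam_max * x1  # gradient along the steep direction
        if (x0 * x0 + x1 * x1) ** 0.5 < tol:
            return step
    return max_steps


steps_well = gd_steps_to_converge(lam_min=1.0, lam_max=1.0)    # kappa = 1
steps_ill = gd_steps_to_converge(lam_min=1.0, lam_max=100.0)   # kappa = 100
```

The step size is capped by the steepest direction, so the flat direction only contracts by a factor of `1 - 1/κ` per step. With κ = 1 the minimum is reached in a single step, while κ = 100 requires on the order of a thousand steps.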

Through comprehensive analysis, the authors demonstrate that the optimization landscape's conditioning strongly correlates with DRL performance. Architectures incorporating BN exhibit more compact and stable eigenspectra, avoiding outlier modes that can destabilize training.

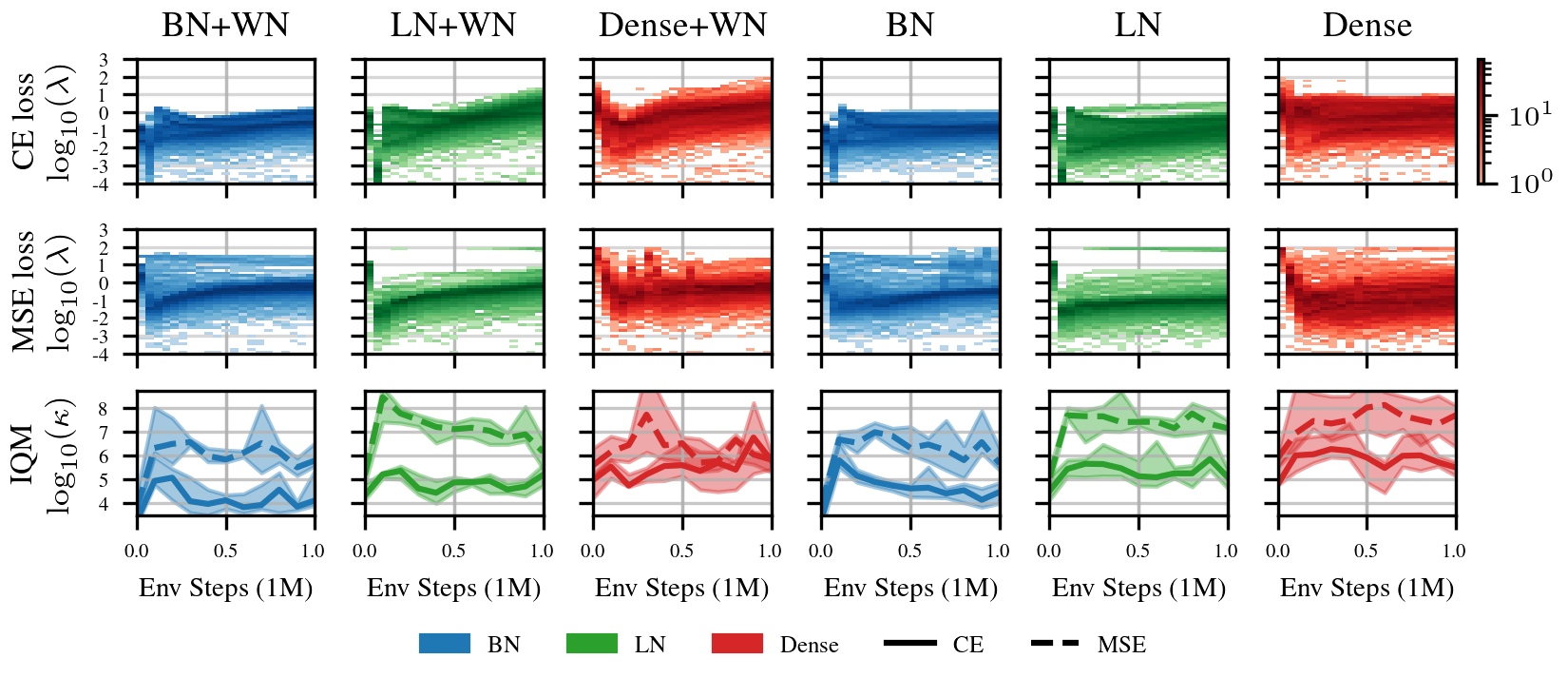

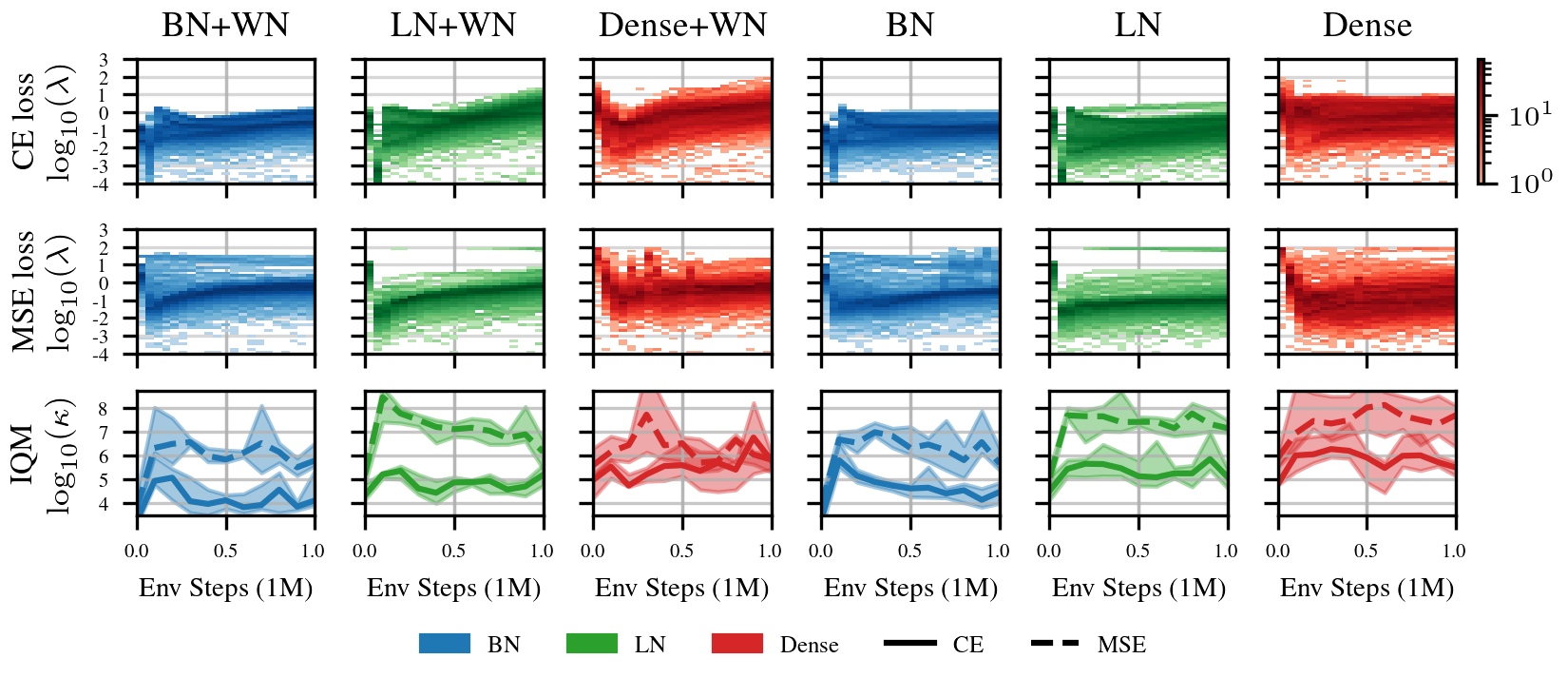

Figure 3: Eigenvalues and condition numbers on dog-trot over 5 seeds for different critic architectures during training.

The empirical eigenvalue analysis shows that combining BN, WN, and a CE loss yields a substantially better-conditioned landscape than a critic trained with the traditional MSE loss. Compact and stable eigenspectra are crucial for efficient convergence and, in turn, for sample efficiency.
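For intuition about what such an analysis measures, the following sketch estimates the largest and smallest eigenvalues of a small symmetric positive definite matrix with power iteration and returns their ratio κ. This is a toy stand-in: the summary does not describe how the authors computed the critic's Hessian spectrum, and all names here are hypothetical.

```python
def matvec(H, v):
    """Multiply a dense matrix (list of rows) by a vector."""
    return [sum(h * x for h, x in zip(row, v)) for row in H]


def power_iteration(H, iters=500):
    """Estimate the dominant eigenvalue of a symmetric positive semidefinite matrix."""
    v = [1.0 / (i + 1) for i in range(len(H))]  # fixed, non-degenerate start vector
    for _ in range(iters):
        w = matvec(H, v)
        norm = sum(x * x for x in w) ** 0.5
        if norm == 0.0:
            return 0.0  # H maps v to zero, so the dominant eigenvalue is 0
        v = [x / norm for x in w]
    # Rayleigh quotient of the (unit-norm) converged vector.
    return sum(a * b for a, b in zip(v, matvec(H, v)))


def condition_number(H):
    """kappa = lam_max / lam_min, assuming H is symmetric positive definite."""
    n = len(H)
    lam_max = power_iteration(H)
    # The dominant eigenvalue of (lam_max * I - H) equals lam_max - lam_min.
    shifted = [[(lam_max if i == j else 0.0) - H[i][j] for j in range(n)]
               for i in range(n)]
    lam_min = lam_max - power_iteration(shifted)
    return lam_max / lam_min


kappa_mild = condition_number([[2.0, 1.0], [1.0, 2.0]])     # eigenvalues 1 and 3
kappa_harsh = condition_number([[1.0, 0.0], [0.0, 100.0]])  # eigenvalues 1 and 100
```

For an actual critic network the Hessian is far too large to store explicitly, so the same idea would typically be applied through Hessian-vector products rather than a dense matrix.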

Parameter and Compute Efficiency

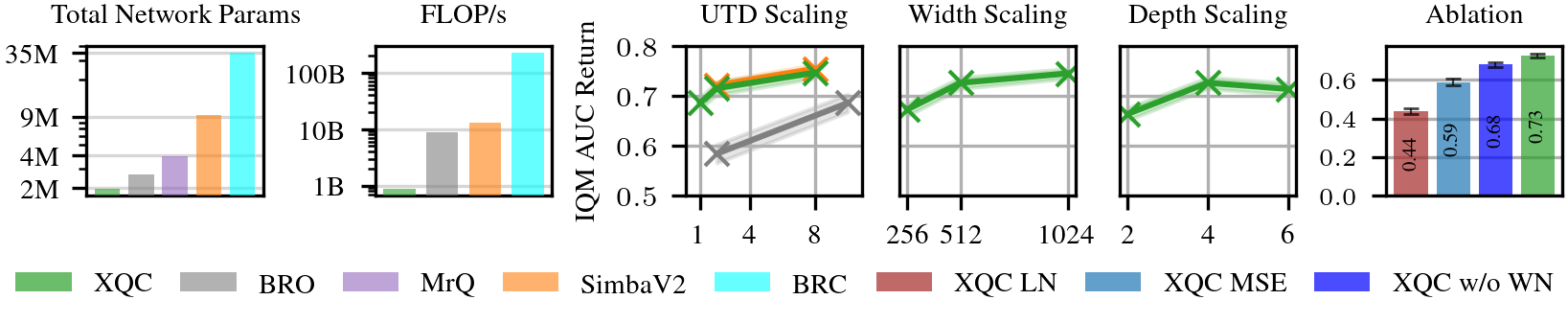

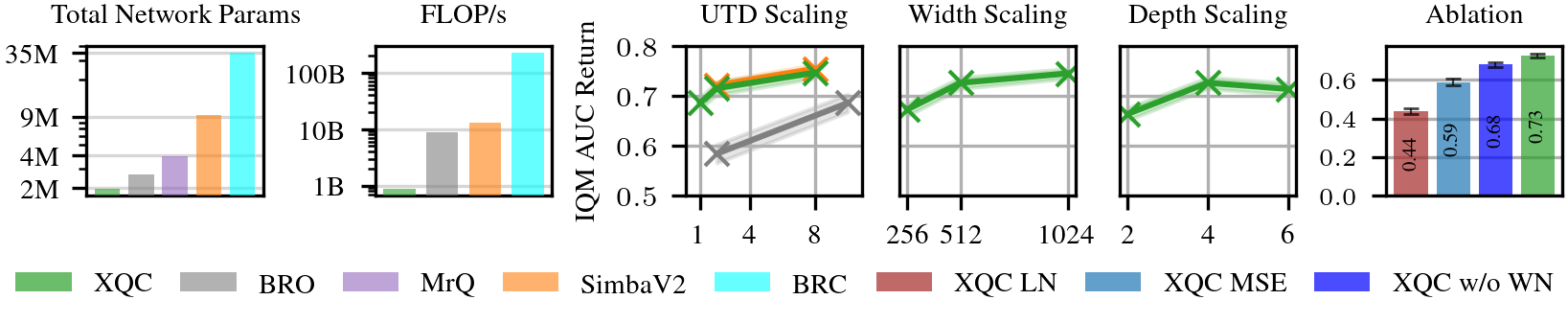

XQC achieves competitive sample efficiency with significant parameter and compute savings. This efficiency can be attributed to XQC's well-conditioned architectural design, which remains robust across varying update-to-data (UTD) ratios, layer widths, and depths.

Figure 4: XQC is significantly more parameter and compute efficient... Robust to scaling.

The detailed breakdown indicates that the architecture scales gracefully, maintaining or improving performance as compute and model capacity increase. Its small parameter and compute footprint is also relevant for real-world applications where computational resources are constrained.

Conclusion

The XQC framework highlights the importance of well-conditioned optimization landscapes in DRL, where stability and sample efficiency are paramount. By improving the critic's Hessian properties through BN, WN, and a CE loss, XQC achieves significant performance gains with reduced complexity and resource requirements. Shifting the focus from added architectural complexity to a better-conditioned landscape is a promising direction for future reinforcement learning research and may yield further gains in efficiency.