- The paper introduces an agentic system that employs strong prompting contracts and iterative verification to reliably generate and validate Gammapy-based analysis scripts.

- It demonstrates a modular design with tight stack integration, ensuring controlled execution, reproducibility, and comprehensive logging of code outputs.

- Benchmarking shows that both tested models (o3 and gpt-5) reach 100% pass rates on the less complex per-source tasks, with gpt-5 converging in slightly fewer attempts; the agent reduces boilerplate in gamma-ray analysis workflows.

Agent-Based Code Generation for the Gammapy Framework: Design, Implementation, and Evaluation

Introduction

The paper presents an agentic system for automated code generation, execution, and validation tailored to the Gammapy framework, a Python-based toolkit for high-energy gamma-ray astronomy. The motivation arises from the limitations of LLMs when applied to specialized scientific libraries: lack of comprehensive documentation, rapidly evolving APIs, and the necessity for domain-specific data handling. The proposed agent addresses these challenges by enforcing strict prompting contracts, integrating tightly with the Gammapy analysis stack, and employing iterative verification to ensure the correctness and reproducibility of generated scripts.

System Architecture and Design Principles

The agent is implemented as a modular Python package (gammapygpt) and is designed around three core principles:

- Strong Prompting Contracts: The system message encodes non-negotiable rules, such as returning a single, complete Python script, importing all dependencies, avoiding interactive plotting, and not selecting observations via TARGET_NAME. These constraints are enforced both at generation and validation stages.

- Tight Stack Integration: The agent exposes data locations via environment variables (notably PHOTON_STORAGE), executes code in a sandboxed workspace, and captures all outputs. Timeouts and resource limits are strictly enforced to prevent runaway jobs.

- Iterative Verification: Generated code is executed and, upon failure, a concise error summary is fed back to the model. This loop continues until the script passes validation or a predefined attempt budget is exhausted.
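The generate, execute, and feed-back loop described above can be sketched as follows. This is a minimal illustration, not the package's actual code: the names run_script, summarize_error, and generate_until_valid are hypothetical, the model call is abstracted as a plain callable, and a temporary directory with a timeout stands in for a real sandbox with resource limits.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, List, Optional, Tuple


def run_script(code: str, timeout_s: float = 60.0) -> Tuple[int, str, str]:
    """Run `code` in a fresh temporary directory; return (exit code, stdout, stderr)."""
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "analysis.py"
        script.write_text(code)
        try:
            # The parent environment is inherited, so variables such as
            # PHOTON_STORAGE remain visible to the generated script.
            proc = subprocess.run(
                [sys.executable, str(script)],
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return 124, "", f"TimeoutError: script exceeded {timeout_s} s"
        return proc.returncode, proc.stdout, proc.stderr


def summarize_error(returncode: int, stderr: str, max_lines: int = 5) -> str:
    """Keep only the tail of stderr, where the exception message usually sits."""
    lines = [ln for ln in stderr.strip().splitlines() if ln.strip()]
    if not lines:
        return f"script exited with code {returncode}"
    return "\n".join(lines[-max_lines:])


def generate_until_valid(
    generate: Callable[[str, str], str],  # (task, feedback) -> script text
    task: str,
    max_attempts: int = 3,
) -> Tuple[Optional[str], List[str]]:
    """Retry generation until a script exits cleanly or the budget runs out."""
    feedback = ""
    feedbacks: List[str] = []
    for _ in range(max_attempts):
        code = generate(task, feedback)
        returncode, _stdout, stderr = run_script(code)
        if returncode == 0:
            return code, feedbacks
        feedback = summarize_error(returncode, stderr)
        feedbacks.append(feedback)
    return None, feedbacks
```

In this sketch a passing script is simply one with exit code 0; domain-specific checks would be applied at the same point in the loop.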

The architecture is organized into small, testable modules:

- Configuration: Centralized management of model backends, timeouts, and data paths.

- Prompting and Messaging: System messages encode rules; user prompts are expanded with optional RAG context.

- Runner: Orchestrates the conversation, enforces output format, executes scripts, and manages the iterative loop.

- Code Execution: Scripts are run in a controlled environment with output capture and auditability.

- RAG Layer: Optionally injects top-k relevant tutorial snippets using OpenAI embeddings and Qdrant for context augmentation.

- Utilities: Preprocessing of tutorials to ensure headless execution and compliance with contracts.
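To illustrate the optional RAG step, the sketch below ranks tutorial snippets by cosine similarity and appends the top-k to the user prompt. It is an assumption-laden toy: the system described here uses OpenAI embeddings stored in Qdrant, whereas this example uses hand-written vectors and an in-memory list, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k_snippets(
    query_vec: Sequence[float],
    corpus: List[Tuple[str, Sequence[float]]],  # (snippet text, embedding)
    k: int = 2,
) -> List[str]:
    """Return the k snippet texts whose embeddings are closest to the query."""
    ranked = sorted(corpus, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def expand_prompt(user_prompt: str, snippets: List[str]) -> str:
    """Append retrieved snippets to the prompt; leave it unchanged if there are none."""
    if not snippets:
        return user_prompt
    context = "\n\n".join(snippets)
    return f"{user_prompt}\n\nRelevant tutorial excerpts:\n{context}"
```

Because the context is optional, the same prompt path works with and without an index, which matches the description of RAG as an add-on rather than a requirement.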

Interfaces and Usability

Two primary interfaces are provided:

- CLI: Supports code generation, dataset download, and RAG index building.

- Web UI (Streamlit): Allows backend selection, attempt budget configuration, and displays scripts and logs. All runs are stored for reproducibility and audit.

Validation is pluggable, with default criteria based on process exit codes and optional domain-specific checks. All executions are offline, with no network access, ensuring reproducibility and data privacy.
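One way to picture pluggable validation is as a list of small callables applied to the captured run result. The sketch below is an assumption about the shape of such an interface, not the package's API: RunResult, exit_code_ok, stdout_contains, and validate are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class RunResult:
    """Captured outcome of one script execution."""
    returncode: int
    stdout: str
    stderr: str


# A validator is any callable that inspects a run and returns pass/fail.
Validator = Callable[[RunResult], bool]


def exit_code_ok(result: RunResult) -> bool:
    """Default criterion: the process exited with status 0."""
    return result.returncode == 0


def stdout_contains(marker: str) -> Validator:
    """Build an optional domain check that requires `marker` in stdout."""
    def check(result: RunResult) -> bool:
        return marker in result.stdout
    return check


def validate(result: RunResult, validators: Iterable[Validator] = (exit_code_ok,)) -> bool:
    """A run passes only if every configured validator accepts it."""
    return all(v(result) for v in validators)
```

With this shape, adding a domain-specific check means appending one more callable to the list rather than changing the runner.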

Benchmarking and Evaluation

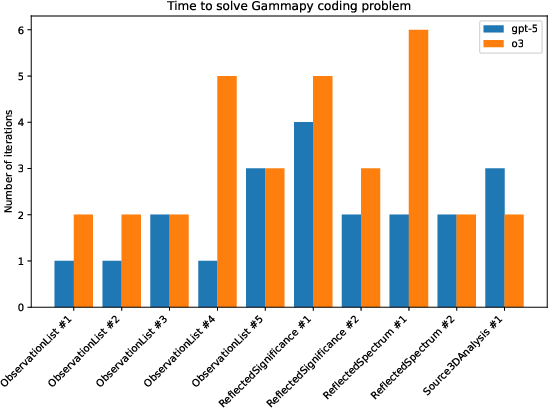

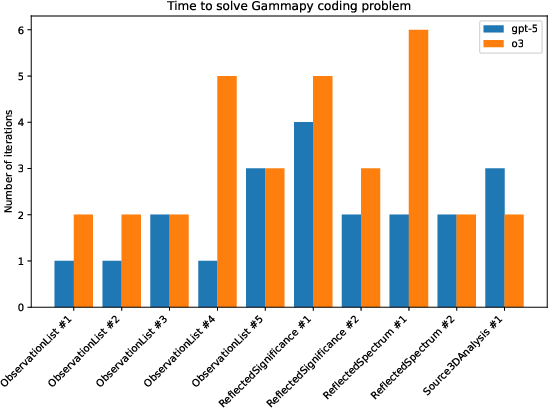

A comprehensive benchmarking suite is included, executing generated scripts in isolated environments and applying explicit numerical validators. The suite compares two OpenAI models (o3 and gpt-5) at maximum reasoning effort across a set of representative Gammapy tasks:

- ObservationList: Select and count observations for a source.

- ReflectedSignificance: Compute reflected-region significance.

- ReflectedSpectrum: Perform spectral extraction and report energy flux and spectral index.

- Source3DAnalysis: Full 3D binned analysis and model fitting.

Each task requires both successful code execution and passing a task-specific check (e.g., exact integer match, float within tolerance, or end-to-end run completion).
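The two numerical check types mentioned above (exact integer match and float within tolerance) could look roughly like this. The sketch assumes, purely for illustration, that scripts print results as "key: value" or "key = value" lines; the helper names and the 5% default tolerance are hypothetical and not taken from the benchmark.

```python
import math
import re
from typing import Optional


def parse_value(stdout: str, key: str) -> Optional[str]:
    """Return the token after 'key:' or 'key =' on the last matching line, if any."""
    pattern = re.compile(
        rf"^[ \t]*{re.escape(key)}[ \t]*[:=][ \t]*(\S+)[ \t]*$", re.MULTILINE
    )
    matches = pattern.findall(stdout)
    return matches[-1] if matches else None


def check_exact_int(stdout: str, key: str, expected: int) -> bool:
    """Pass only if the reported value parses as an int equal to `expected`."""
    raw = parse_value(stdout, key)
    try:
        return raw is not None and int(raw) == expected
    except ValueError:
        return False


def check_float(stdout: str, key: str, expected: float, rel_tol: float = 0.05) -> bool:
    """Pass if the reported value is within a relative tolerance of `expected`."""
    raw = parse_value(stdout, key)
    try:
        value = float(raw)
    except (TypeError, ValueError):
        return False
    return math.isclose(value, expected, rel_tol=rel_tol)
```

An integer count such as a number of observations has one right answer, so it is compared exactly, while fitted quantities vary slightly with binning and optimizer settings and are therefore compared within a tolerance.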

Figure 1: Coding benchmark results (attempts to pass and pass rates per task/model).

Both models achieved 100% pass rates on the less complex per-source tasks, with the newer model (gpt-5) converging slightly faster (fewer attempts to pass). Trace logs indicate that a typical successful run involved approximately 7.3k output tokens, of which ~6.5k (roughly 90%) were dedicated to reasoning.

Reproducibility and Safety

Each agent attempt produces a complete record: prompt, message log, generated script, stdout/stderr, and validation outcome. This enables both the reuse of successful generations and the diagnosis of failures. The system is designed for offline operation, with strict timeouts and no network access, ensuring both reproducibility and security.
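A per-attempt record with the fields listed above can be stored as one JSON file per attempt. The sketch below is illustrative only: the AttemptRecord class, the attempt_NNN.json naming scheme, and the helper functions are assumptions, not the package's actual storage format.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import Dict, List, Union


@dataclass
class AttemptRecord:
    """Everything needed to audit or replay one agent attempt."""
    prompt: str
    messages: List[Dict[str, str]]  # conversation log as role/content pairs
    script: str
    stdout: str
    stderr: str
    passed: bool


def save_record(record: AttemptRecord, directory: Union[str, Path], attempt: int) -> Path:
    """Write the record as JSON and return the path of the file created."""
    directory = Path(directory)
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / f"attempt_{attempt:03d}.json"
    path.write_text(json.dumps(asdict(record), indent=2))
    return path


def load_record(path: Union[str, Path]) -> AttemptRecord:
    """Read a record back, for reuse of a passing script or diagnosis of a failure."""
    return AttemptRecord(**json.loads(Path(path).read_text()))
```

Keeping the script and its outputs in the same file means a failed attempt can be inspected without rerunning the model.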

Implications and Future Directions

The results demonstrate that domain-aware code agents, when tightly integrated with scientific workflows and governed by strict validation, can reliably automate routine analysis tasks in gamma-ray astronomy. The agent reduces boilerplate and increases reproducibility, addressing key pain points in scientific software development.

The backend is being extended to support open-weight models (e.g., Qwen, GPT-OSS) for on-premise and privacy-preserving deployments, leveraging platforms such as Helmholtz Blablador. This backend-agnostic design enables systematic benchmarking across proprietary and open-weight models, facilitating transparent evaluation and adoption in institutional settings.

Future work includes:

- Expanding the RAG corpus to cover more complex workflows (e.g., CTAO simulations, end-to-end sensitivity studies).

- Exploring multi-agent collaboration (planner, critic, optimizer) to enhance robustness and convergence.

- Hardening open-weight deployments (quantization, schedulers, multi-GPU inference) for scalable, local inference.

- Continued release of the package and benchmarks to support reproducible research across both closed and open ecosystems.

Conclusion

The agentic approach to code generation for the Gammapy framework demonstrates that, with strong prompting contracts, tight stack integration, and iterative validation, LLM-based agents can deliver reliable, reproducible analysis scripts for specialized scientific domains. The system's modularity, auditability, and support for both proprietary and open-weight models position it as a practical tool for accelerating scientific discovery in gamma-ray astronomy and beyond. The ongoing development of open-weight support and multi-agent strategies will further enhance its applicability and robustness in real-world research environments.