- The paper introduces CWM, a 32-billion-parameter LLM that leverages world models to simulate dynamic code execution.

- It employs a multi-stage training approach combining pre-training, mid-training with Python traces, and reinforcement learning to refine its reasoning skills.

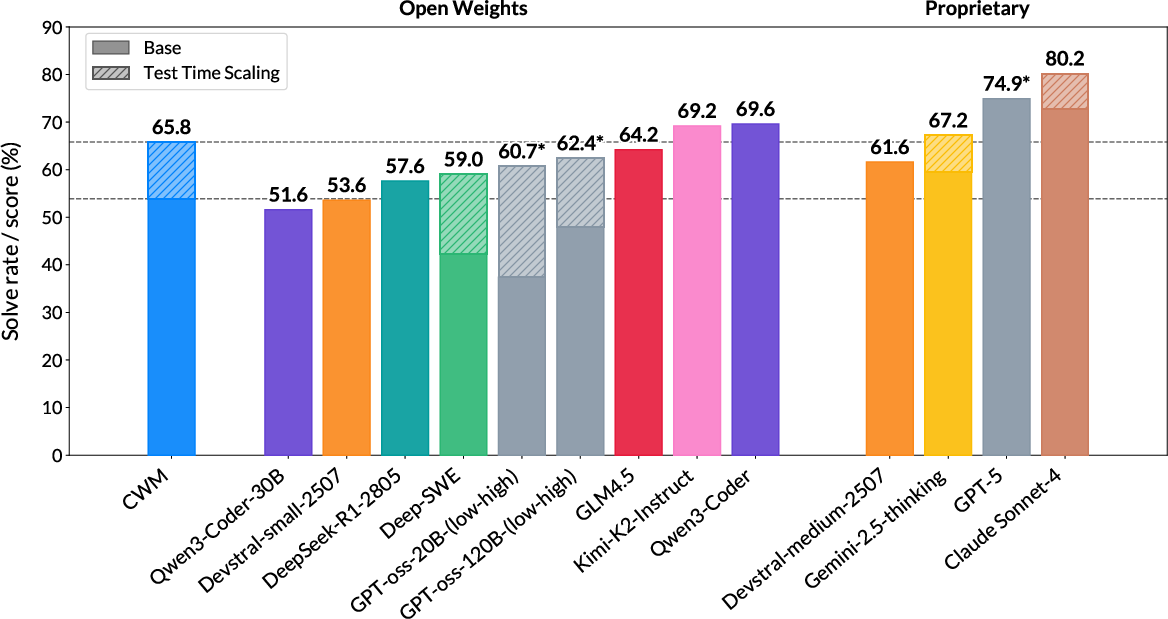

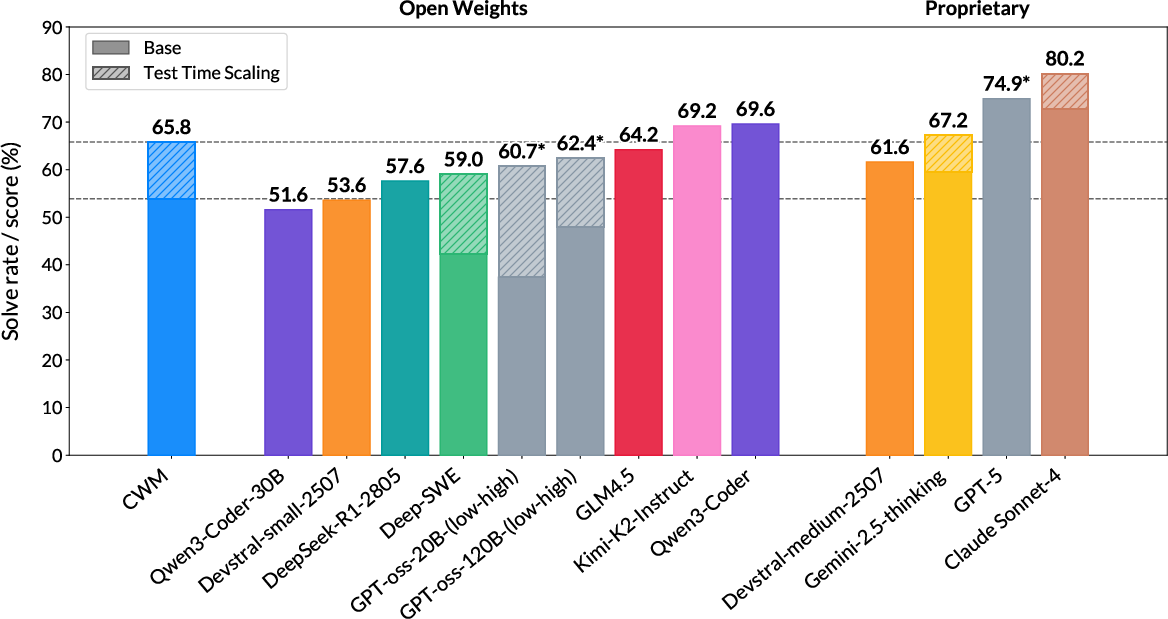

- CWM achieves a 65.8% pass@1 on the SWE-bench Verified benchmark with test-time scaling, outperforming several comparable open- and closed-weight models.

CWM: An Open-Weights LLM for Research on Code Generation with World Models

Introduction

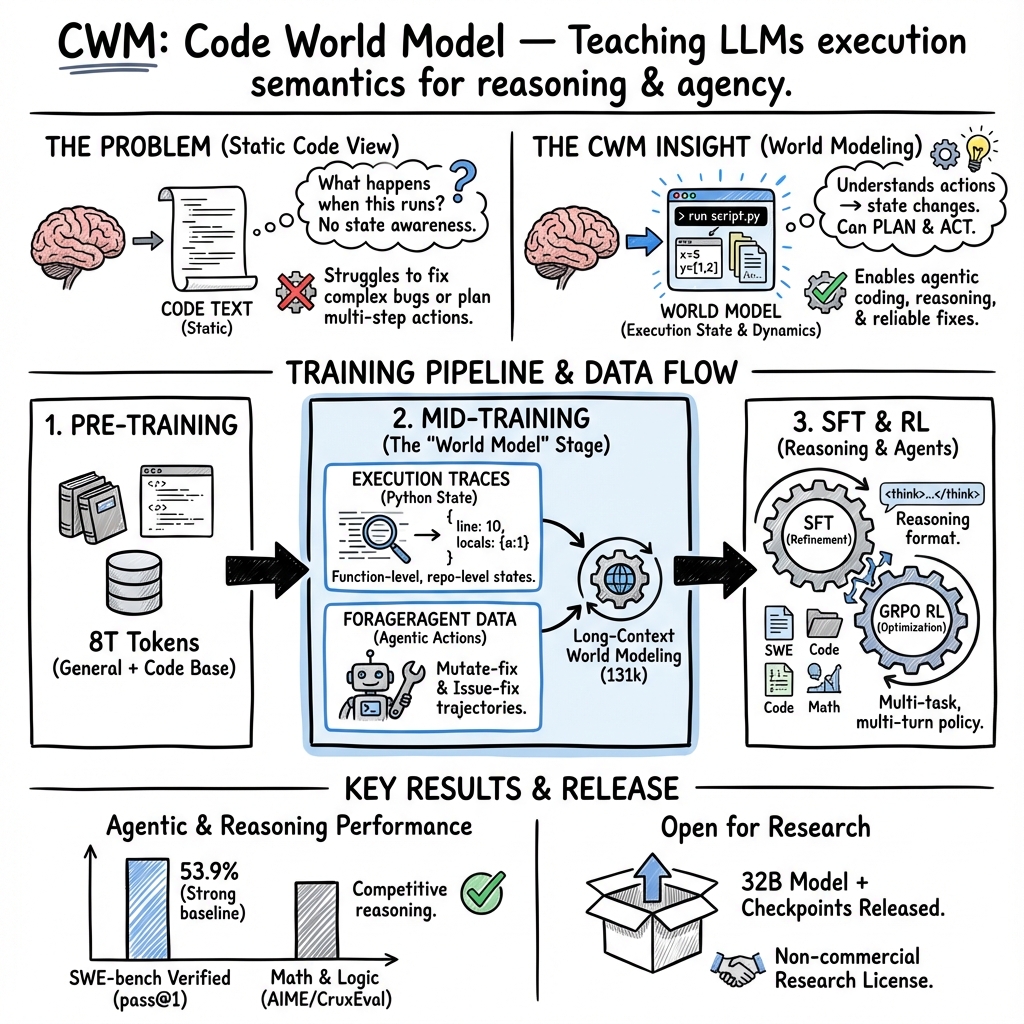

The paper introduces the Code World Model (CWM), a 32-billion-parameter open-weight LLM designed for research on code generation with world models. Conventional approaches treat code as static text and train models to predict it line by line, which gives the model little signal about what the code does when it runs. CWM addresses this limitation by training on a large volume of observation-action trajectories collected from Python interpreters and agentic Docker environments. The aim is to improve how well the model understands and reasons about code. Although CWM shows promise, it has yet to be fully evaluated on the complexities of real-time execution scenarios.

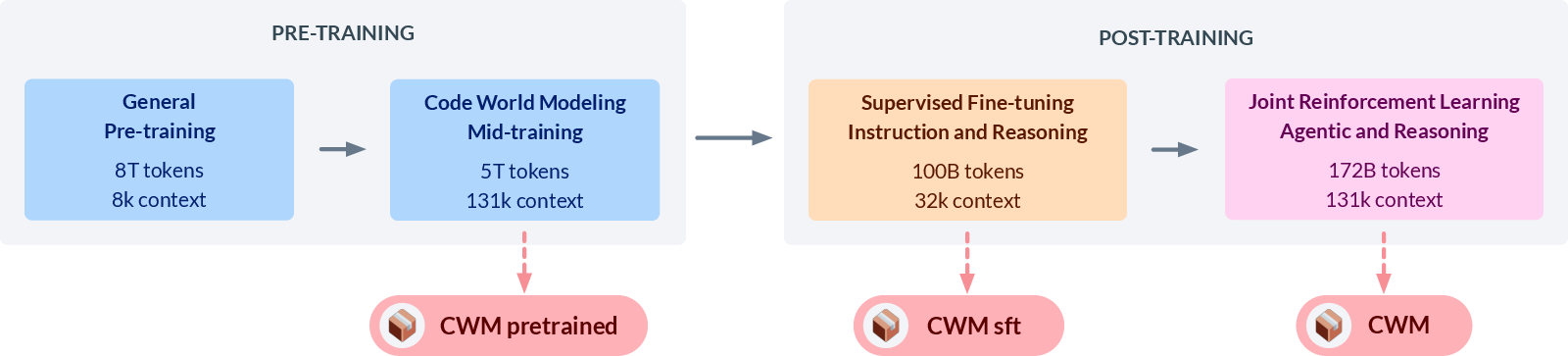

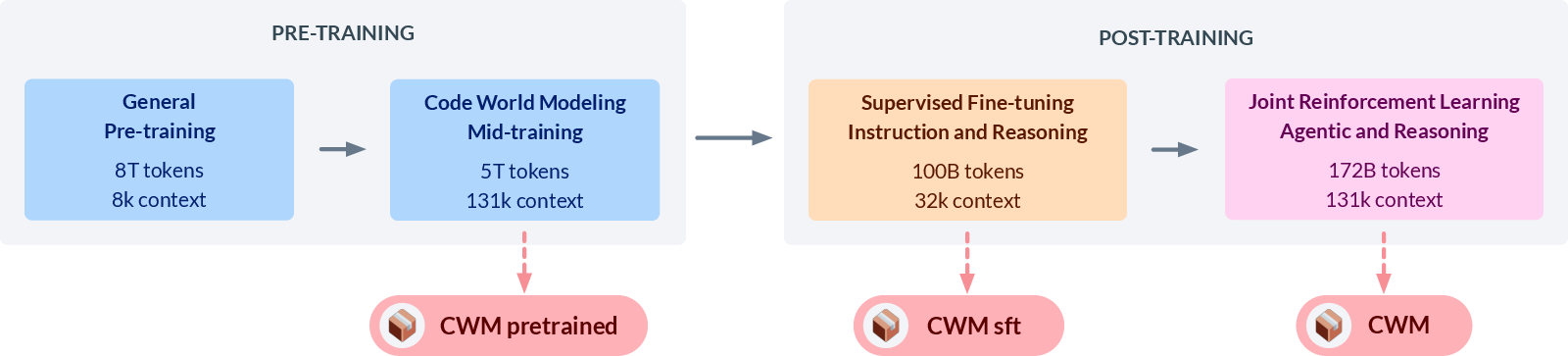

Figure 1: Overview of the CWM training stages and the model checkpoints that we release.

Training Methodology

CWM is trained in three stages. The model first undergoes conventional pre-training, then mid-training on Python execution traces and agentic data, and finally reinforcement learning (RL). Mid-training exposes the model to dynamic execution traces and agentic interactions at a scale not typically seen in similar models. This stage grounds the model's predictions in the dynamics of the systems it encounters during code execution.

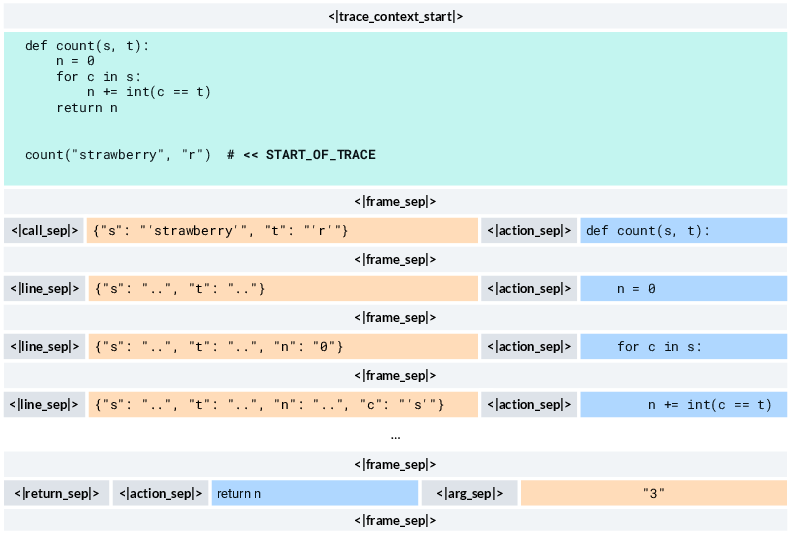

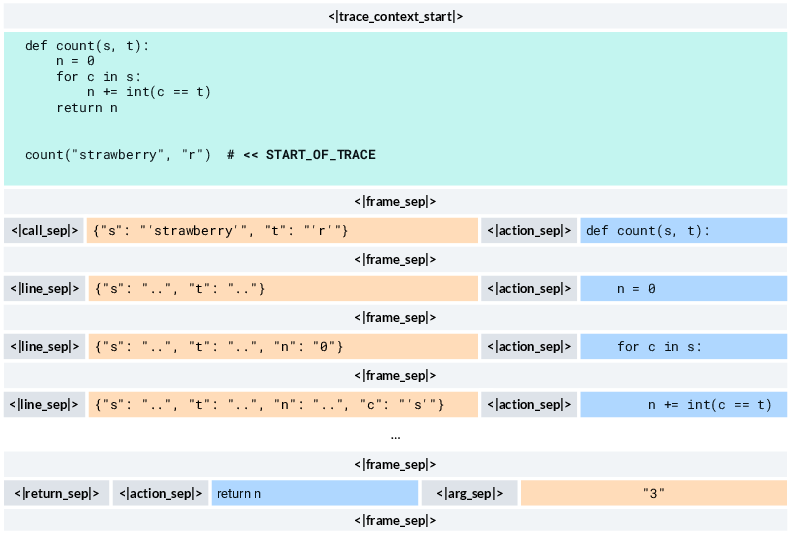

Figure 2: CWM format for Python traces. Given a source code context and a marker of the trace starting point, CWM predicts a series of stack frames representing the program states and the actions (executed code).
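
To make the idea of a Python trace concrete, the following minimal sketch records a sequence of program states (local variables) and actions (executed lines) with Python's built-in `sys.settrace` hook. The helper name `trace_source`, the dictionary layout, and the `<traced>` filename are assumptions made for illustration; this is not CWM's tracing pipeline or its serialized trace format.

```python
import sys
from typing import Any


def trace_source(source: str, entry: str, *args: Any) -> list[dict]:
    """Run `entry(*args)` from `source` and record state/action pairs.

    Hypothetical helper: each step stores the local variables observed
    just before a line runs (the state) and the line's text (the action).
    """
    lines = source.splitlines()
    namespace: dict[str, Any] = {}
    exec(compile(source, "<traced>", "exec"), namespace)
    steps: list[dict] = []

    def tracer(frame, event, arg):
        if frame.f_code.co_filename != "<traced>":
            return None  # ignore frames that do not belong to the traced source
        if event == "line":
            steps.append({
                "event": "line",
                "locals": dict(frame.f_locals),  # snapshot of the current state
                "action": lines[frame.f_lineno - 1].strip(),
            })
        elif event == "return":
            steps.append({
                "event": "return",
                "locals": dict(frame.f_locals),
                "action": f"return {arg!r}",
            })
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        namespace[entry](*args)
    finally:
        sys.settrace(previous)  # always restore the previous trace hook
    return steps


SOURCE = """\
def total(n):
    acc = 0
    for i in range(n):
        acc += i
    return acc
"""

steps = trace_source(SOURCE, "total", 3)
for step in steps:
    print(step["event"], step["locals"], "->", step["action"])
```

Each printed row pairs a snapshot of the local variables with the line about to run, which is the kind of state-and-action sequence the caption describes the model learning to predict.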

Reinforcement Learning Approach

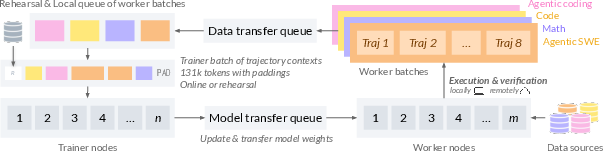

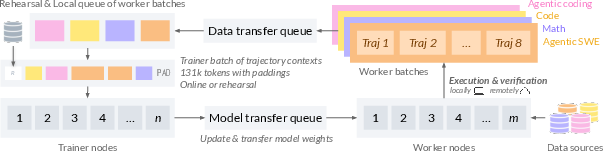

The RL phase further refines CWM's capabilities, strengthening the reasoning required for complex programming tasks. This phase consists of supervised fine-tuning and agentic multi-task RL, in which CWM learns to handle a variety of software engineering tasks through tool use and multi-turn interactions. The RL process uses a variant of Group Relative Policy Optimization (GRPO) that incorporates several recent advancements to stay efficient during asynchronous training.
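
The core idea behind GRPO is to score each sampled response relative to the other responses drawn for the same prompt, so no separate value network is needed. The sketch below shows that group-relative advantage step in its commonly described form (subtract the group mean, divide by the group standard deviation). The summary does not spell out how CWM's variant differs, so the normalization, the function name, and the `eps` constant are assumptions rather than the paper's exact recipe.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Normalize rewards within one group of responses to the same prompt.

    Assumed standard form: (reward - group mean) / (group std + eps).
    The CWM variant may normalize differently.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population standard deviation of the group
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled solutions for one task; reward 1.0 means the tests passed.
adv = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(adv)
```

Responses that beat the group average receive a positive advantage and the rest a negative one; when every response in a group gets the same reward, all advantages are zero and that group contributes no learning signal.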

Figure 3: Async RL systems overview. Worker nodes generate trajectory batches from multiple RL environments and send them to trainer nodes via a transfer queue.
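
The producer-consumer pattern in this caption can be illustrated with a toy, single-process sketch: worker threads generate trajectory batches from several environments and push them onto a bounded queue, while a trainer loop pulls batches off the queue. Everything here (the environment names, the batch fields, the thread-based setup, and the queue size) is hypothetical; the actual system runs across separate worker and trainer nodes.

```python
import queue
import random
import threading

ENV_NAMES = ["env_a", "env_b", "env_c"]  # hypothetical RL environments


def worker(worker_id: int, transfer_queue: queue.Queue, num_batches: int,
           batch_size: int = 4) -> None:
    """Generate trajectory batches and send them to the trainer."""
    rng = random.Random(worker_id)
    for _ in range(num_batches):
        env = rng.choice(ENV_NAMES)
        batch = [
            {"worker": worker_id, "env": env, "reward": rng.random()}
            for _ in range(batch_size)
        ]
        transfer_queue.put(batch)  # blocks if the trainer falls behind


def trainer(transfer_queue: queue.Queue, total_batches: int) -> list[list[dict]]:
    """Consume batches as they arrive, without waiting for all workers."""
    consumed = []
    for _ in range(total_batches):
        batch = transfer_queue.get()
        consumed.append(batch)  # a real trainer would run a policy update here
    return consumed


def run_async_rollouts(num_workers: int = 3, num_batches: int = 2) -> list[list[dict]]:
    transfer_queue: queue.Queue = queue.Queue(maxsize=8)
    threads = [
        threading.Thread(target=worker, args=(i, transfer_queue, num_batches))
        for i in range(num_workers)
    ]
    for t in threads:
        t.start()
    batches = trainer(transfer_queue, num_workers * num_batches)
    for t in threads:
        t.join()
    return batches


batches = run_async_rollouts()
print(len(batches), "batches consumed")
```

Because generation and training are decoupled by the queue, the trainer can start on the first batch while workers are still producing later ones, which is the motivation for an asynchronous design.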

Benchmark Results

CWM outperforms several large open- and closed-weight models on benchmark tests. On the SWE-bench Verified benchmark, CWM achieves a pass@1 score of 65.8% with test-time scaling. The model also shows significant gains on competitive programming and mathematical reasoning tasks, indicating that its capabilities extend across a range of code-related evaluations.
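
For readers unfamiliar with the metric, pass@k is the probability that at least one of k sampled solutions to a problem is correct, and pass@1 reduces to the fraction of correct samples. The sketch below uses the widely adopted unbiased estimator computed from n samples with c correct ones. It only illustrates the metric: the summary does not describe how the 65.8% figure was computed or what the test-time scaling procedure involves, and the function names and sample counts are made up for the example.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples, c of which are correct."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems; each entry is (n samples, c correct)."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Illustrative numbers only: three problems with 10 samples each.
score = benchmark_pass_at_k([(10, 3), (10, 10), (10, 0)], k=1)
print(f"pass@1 = {score:.3f}")
```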

Figure 4: On SWE-bench Verified, CWM outperforms open-weight models with similar parameter counts and is competitive with much larger or closed-weight LLMs.

Implications and Future Directions

CWM's development opens several avenues for research on AI-driven code generation in realistic settings. Because its training focuses on execution semantics, the model offers insight into how reasoning can benefit agentic coding and the step-by-step simulation of Python code execution. Further research is encouraged to deepen our understanding of world models and their effect on AI-driven reasoning and planning.

Conclusion

CWM is a step toward integrating world models into code generation, bridging the gap between static code representation and an understanding of dynamic execution. Through its multi-stage training approach, CWM shows how grounding learning in execution dynamics can enhance model capabilities, laying groundwork for future work on code generation and reasoning systems.