- The paper introduces a novel framework, MindCraft, that visualizes how abstract concept hierarchies form in deep models through spectral decomposition and counterfactual analysis.

- It employs targeted input interventions and cosine-similarity-based Conceptual Separation Scores to pinpoint the layers where concepts branch within neural networks.

- Experiments across diverse reasoning tasks show where concepts separate inside a model, pointing to practical applications in model debugging, fairness auditing, and interpretability.

MindCraft: How Concept Trees Take Shape In Deep Models

Introduction

"MindCraft: How Concept Trees Take Shape In Deep Models" introduces a framework for investigating how abstract concepts form and stabilize within deep neural networks. To address gaps in existing interpretability methods, the study uses Concept Trees to trace branching Concept Paths through the network. Combining causal inference with spectral decomposition, the researchers propose a systematic approach to depicting how neural networks create and refine concept hierarchies across diverse reasoning tasks.

Concept Tree Framework

The MindCraft framework offers a novel approach to visualizing the internal mechanisms of large-scale foundation models. The authors construct Concept Trees by performing spectral decomposition at each network layer and connecting the principal directions into branching paths. This reveals the layers at which concepts begin to diverge from shared representations into distinct subspaces. Using counterfactual interventions, MindCraft identifies branching layers where abstract concepts become linearly separable, offering insight into how concept hierarchies emerge.
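The summary does not spell out how per-layer directions are linked into a tree. As a hypothetical illustration only, assuming each concept is already summarized by one vector per layer (for instance, a Concept Path as described in the Methodology section), the sketch below groups concepts at each layer by cosine similarity and records the layers where the grouping changes. The names `clusters_at_layer` and `concept_tree` and the `threshold=0.9` default are illustrative assumptions, not the authors' construction.

```python
import numpy as np

def clusters_at_layer(vectors, threshold):
    """Label concepts so that any two linked by cosine similarity >= threshold
    share a label (connected components of the similarity graph)."""
    norms = np.linalg.norm(vectors, axis=1, keepdims=True)
    unit = vectors / np.maximum(norms, 1e-12)
    sim = unit @ unit.T
    labels = [-1] * len(vectors)
    current = 0
    for start in range(len(vectors)):
        if labels[start] != -1:
            continue
        labels[start] = current
        stack = [start]
        while stack:  # depth-first flood fill over the similarity graph
            i = stack.pop()
            for j in range(len(vectors)):
                if labels[j] == -1 and sim[i, j] >= threshold:
                    labels[j] = current
                    stack.append(j)
        current += 1
    return labels

def concept_tree(paths, names, threshold=0.9):
    """Map each layer where the grouping changes to its list of concept groups.

    paths: (num_concepts, num_layers, dim) array, one hypothetical path per concept.
    """
    tree, prev = {}, None
    for layer in range(paths.shape[1]):
        labels = clusters_at_layer(paths[:, layer, :], threshold)
        groups = sorted(
            sorted(names[i] for i, lab in enumerate(labels) if lab == g)
            for g in set(labels)
        )
        if groups != prev:  # the grouping split (or merged) at this layer
            tree[layer] = groups
            prev = groups
    return tree

# Toy data: all concepts shared at layer 0, C splits off at layer 1, A and B at layer 2.
paths = np.array([
    [[1, 0], [1, 0], [1, 0]],  # concept A
    [[1, 0], [1, 0], [1, 1]],  # concept B
    [[1, 0], [0, 1], [0, 1]],  # concept C
], dtype=float)
print(concept_tree(paths, ["A", "B", "C"]))
# {0: [['A', 'B', 'C']], 1: [['A', 'B'], ['C']], 2: [['A'], ['B'], ['C']]}
```

Because single-link grouping can also merge groups at later layers, this output is not guaranteed to be strictly nested; a faithful Concept Tree construction would need to enforce that.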

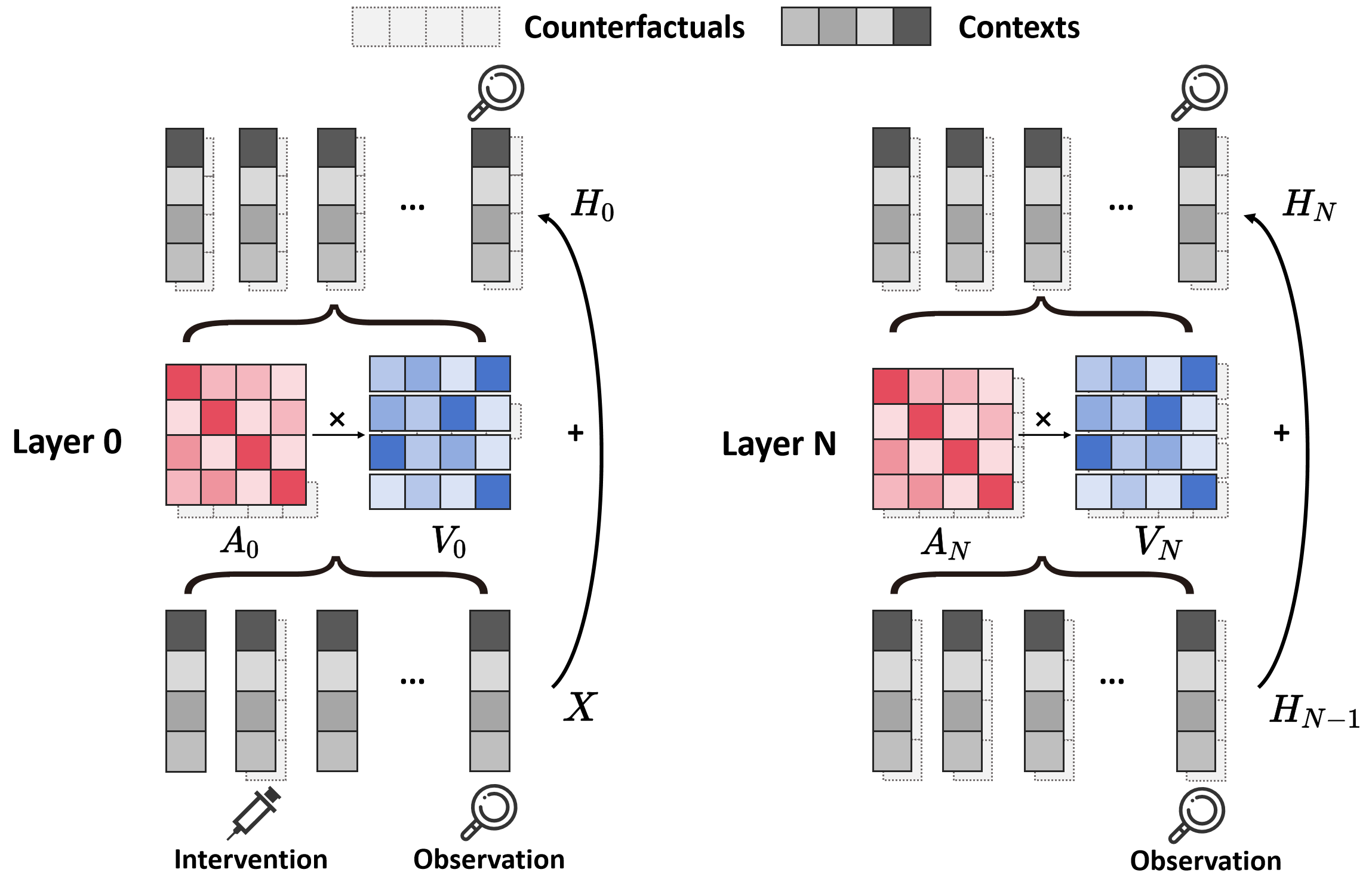

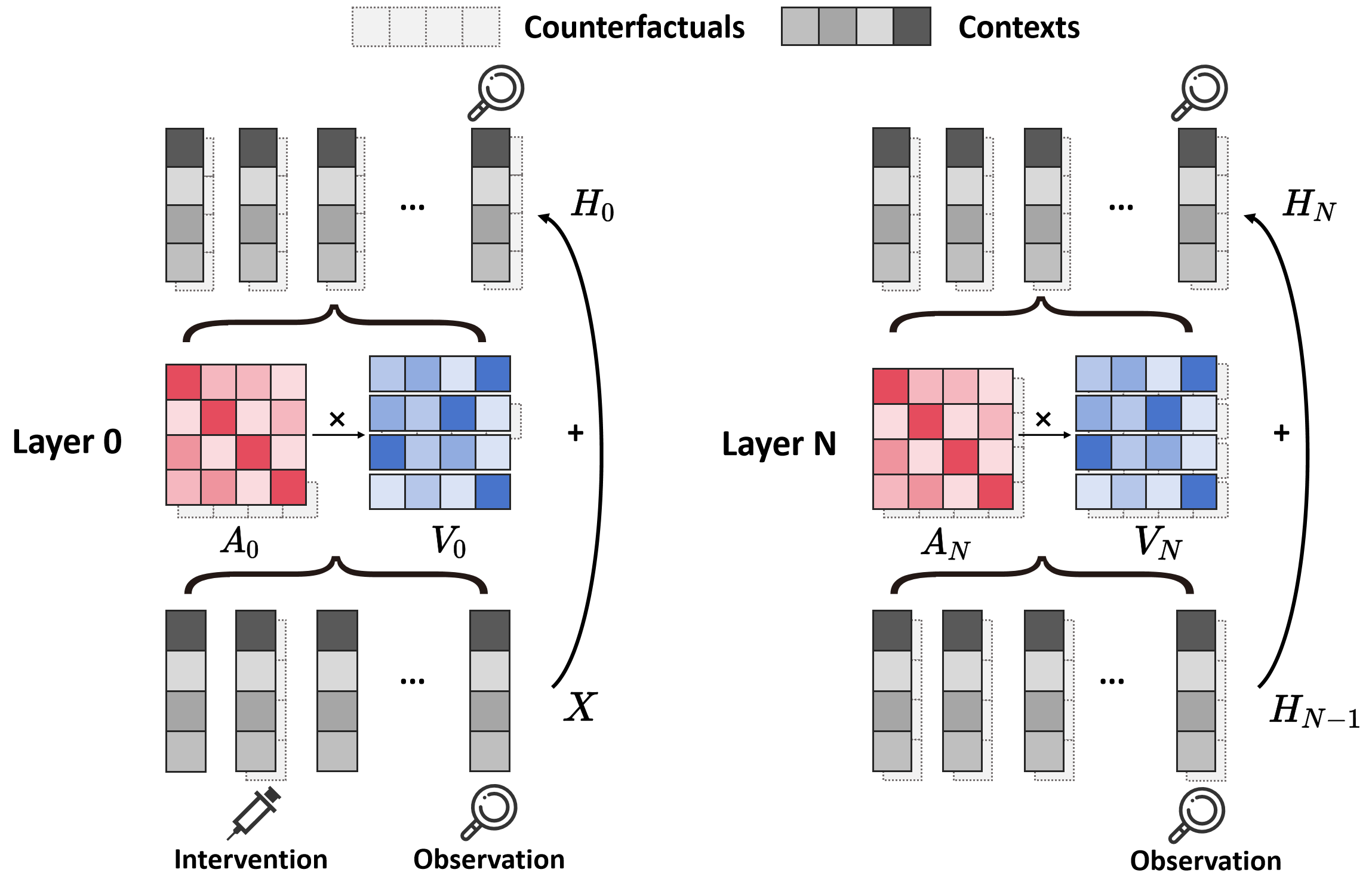

Figure 1: Overview of the MindCraft algorithm, highlighting the intervention on specific input tokens and the role of attention mechanisms in identifying hierarchical concept separation.

Methodology

The core methodology induces counterfactual differences through targeted input interventions and traces how they propagate across network layers. Specifically, the framework analyzes the value transformations of the last token after the attention mechanism to determine where conceptual differences become pronounced. The Concept Path, defined by the projections onto the singular vectors of the value transformation matrix, forms the basis for constructing Concept Trees.
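To make the Concept Path definition concrete, here is a minimal NumPy sketch under stated assumptions: the value projection is applied as `v = h @ w_v`, the counterfactual difference is taken between the last token's hidden states for the original and intervened prompts, and the path is the sequence of that difference's coordinates along the right singular vectors of each layer's value matrix. The function names, input shapes, and random toy inputs are hypothetical; the paper's exact choice of hidden state and singular vectors may differ.

```python
import numpy as np

def concept_path_coords(h_orig, h_cf, w_v):
    """Coordinates of one layer's counterfactual value difference along the
    right singular vectors of that layer's value matrix.

    h_orig, h_cf: (d_model,) last-token hidden states for the original and
        intervened prompts (assumed inputs to the value projection).
    w_v: (d_model, d_head) value projection matrix, used as v = h @ w_v.
    """
    delta_v = (h_orig - h_cf) @ w_v  # counterfactual difference in value space
    # Thin SVD: w_v = U @ diag(S) @ Vt; rows of Vt are the right singular
    # vectors, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(w_v, full_matrices=False)
    return vt @ delta_v  # one coordinate per singular direction

def concept_path(hiddens_orig, hiddens_cf, value_mats):
    """Stack per-layer coordinates into a (num_layers, r) array."""
    return np.stack([
        concept_path_coords(h_o, h_c, w)
        for h_o, h_c, w in zip(hiddens_orig, hiddens_cf, value_mats)
    ])

# Random stand-ins for a 6-layer model (illustration only, not real activations).
rng = np.random.default_rng(0)
n_layers, d_model, d_head = 6, 16, 8
value_mats = rng.normal(size=(n_layers, d_model, d_head))
hiddens_orig = rng.normal(size=(n_layers, d_model))
hiddens_cf = hiddens_orig + 0.1 * rng.normal(size=(n_layers, d_model))
path = concept_path(hiddens_orig, hiddens_cf, value_mats)
print(path.shape)  # (6, 8)
```

With `d_head <= d_model`, the right singular vectors form an orthonormal basis of value space, so each layer's coordinates preserve the norm of the value difference, and the ordering by singular value makes it easy to keep only the dominant directions.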

Identifying Branching Points

Central to the method is the Conceptual Separation Score, computed as the cosine similarity between filtered Concept Paths. A sharp drop in this score from one layer to the next marks a branching layer, the point at which the concepts separate. Applying this score systematically across reasoning scenarios, the study maps the hierarchical organization of concepts within the model.
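The score and the branching rule can be sketched as follows, assuming Concept Paths are per-layer coordinate arrays such as those produced by a singular-vector projection. The filtering step is not specified in this summary, so `top_k` truncation is a hypothetical stand-in, and the `min_drop` threshold for a "significant" drop is likewise an illustrative assumption.

```python
import numpy as np

def separation_scores(path_a, path_b, top_k=None, eps=1e-12):
    """Per-layer cosine similarity between two Concept Paths.

    path_a, path_b: (num_layers, r) arrays of singular-direction coordinates.
    top_k: hypothetical stand-in for the filtering step; keeps only the first
        k coordinates (largest singular values). None keeps all of them.
    """
    if top_k is not None:
        path_a, path_b = path_a[:, :top_k], path_b[:, :top_k]
    dots = np.sum(path_a * path_b, axis=1)
    norms = np.linalg.norm(path_a, axis=1) * np.linalg.norm(path_b, axis=1)
    return dots / np.maximum(norms, eps)

def branching_layer(scores, min_drop=0.3):
    """Index of the layer right after the largest score drop, or None if no
    drop reaches min_drop (a hypothetical threshold)."""
    if len(scores) < 2:
        return None
    drops = scores[:-1] - scores[1:]  # positive where similarity falls
    i = int(np.argmax(drops))
    return i + 1 if drops[i] >= min_drop else None

# Toy paths: nearly identical at layers 0-2, orthogonal from layer 3 on.
a = np.array([[1, 0], [1, 0], [1, 0], [1, 0], [1, 0]], dtype=float)
b = np.array([[1, 0], [1, 0.1], [1, 0], [0, 1], [0, 1]], dtype=float)
scores = separation_scores(a, b)
print(branching_layer(scores))  # 3
```

Taking the single largest layer-to-layer drop is the simplest reading of "a significant drop"; a more careful analysis might look for several drops or require the score to stay low afterwards.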

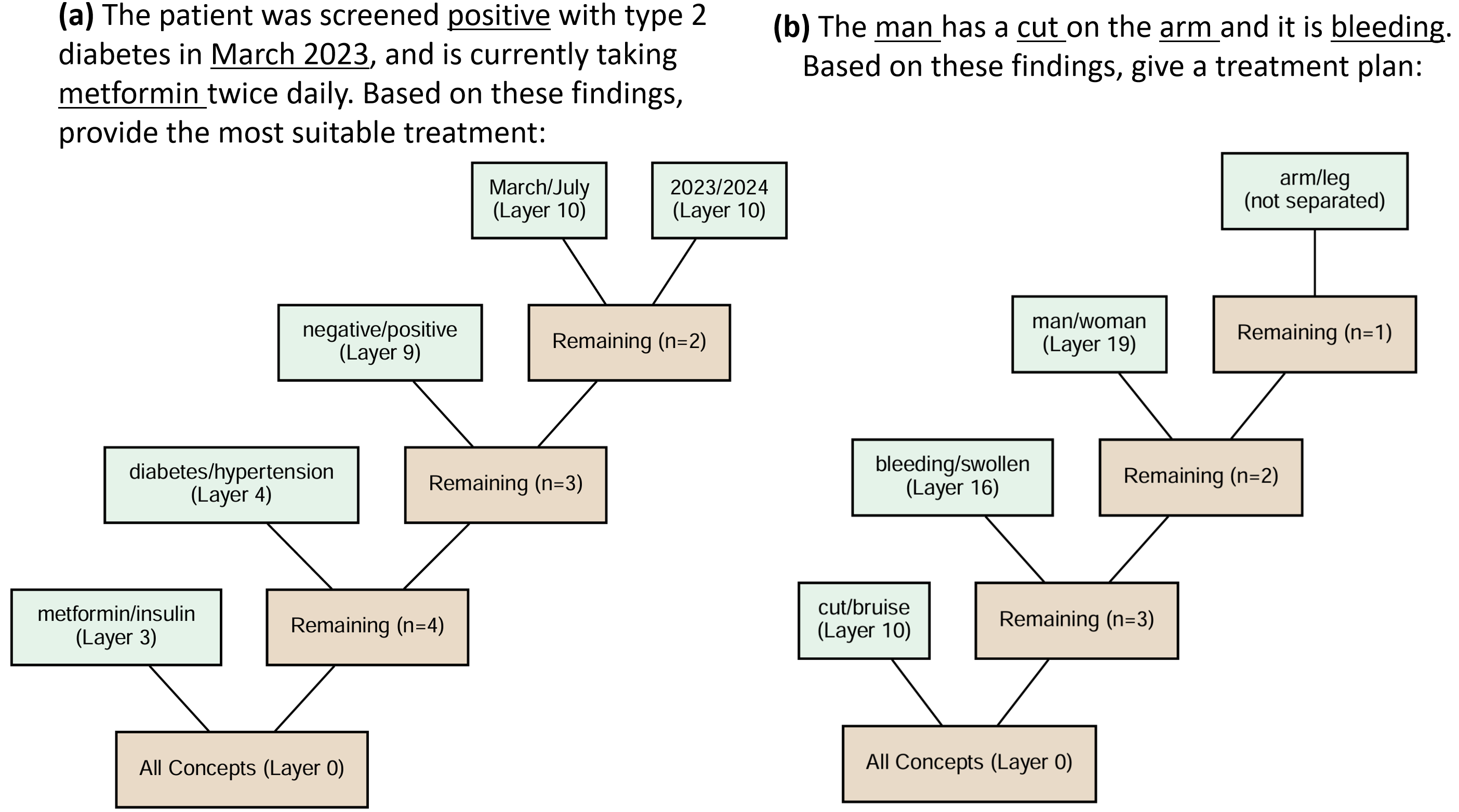

Figure 2: Layer-wise analysis of attention weights, highlighting the progressive divergence and stabilization of counterfactual differences across layers.

Applications and Experiments

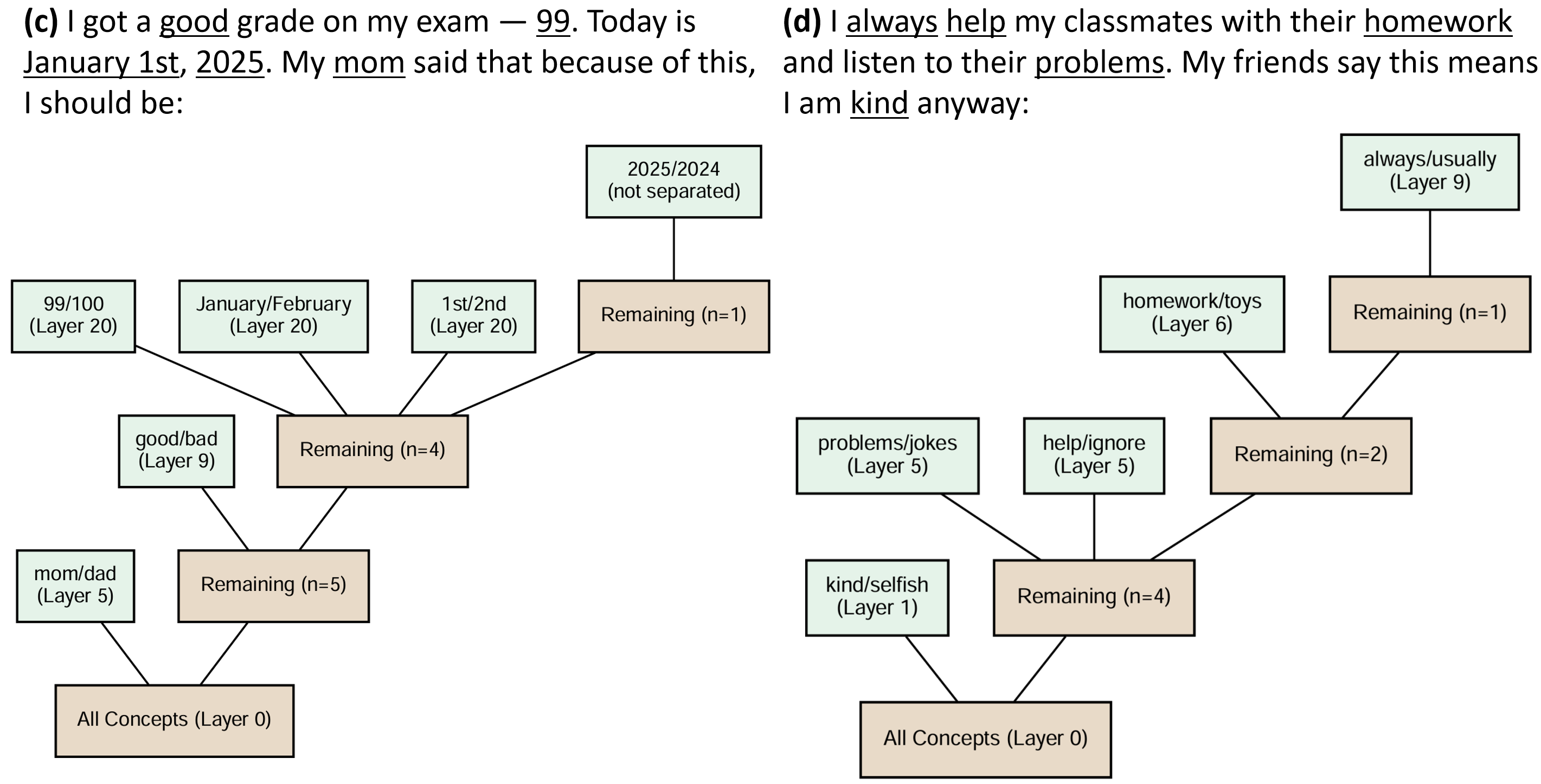

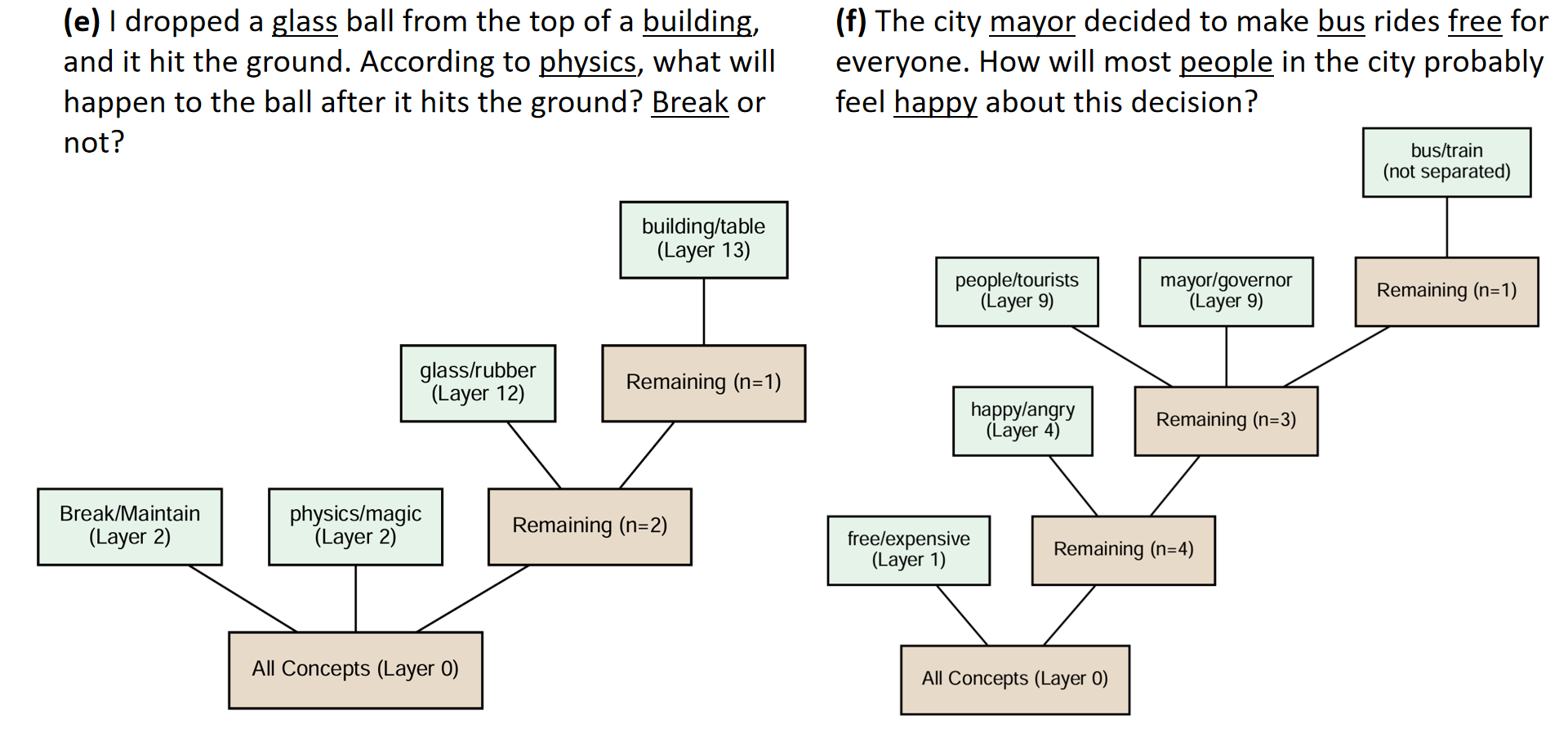

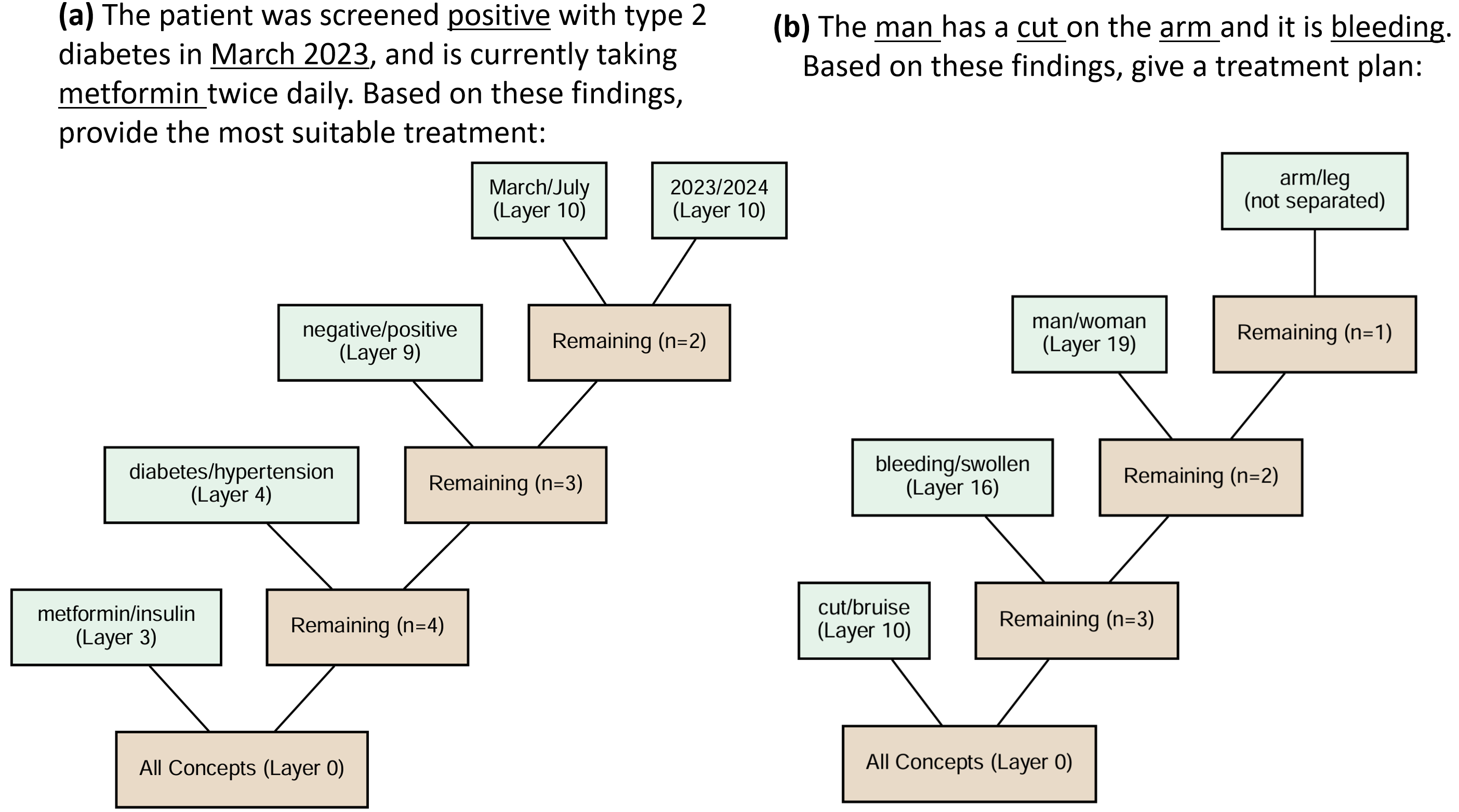

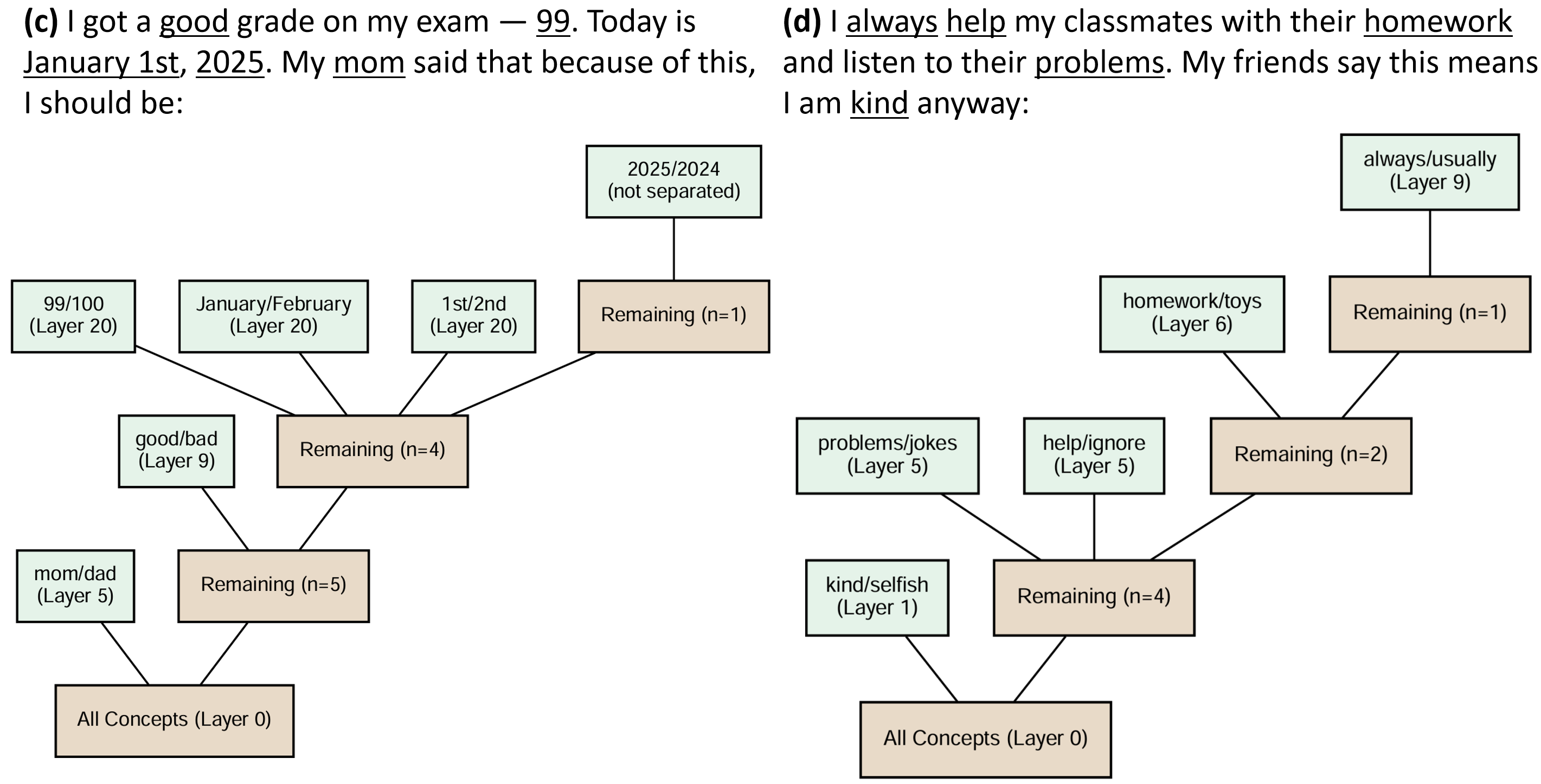

MindCraft was evaluated on tasks spanning medical diagnosis, physics reasoning, and political decision-making, among others. The results show how models prioritize different concepts: treatment-related and symptom-distinctive tokens often diverged at earlier layers than less critical tokens. This suggests that models process inputs at different conceptual levels, separating task-critical information earlier in the network.

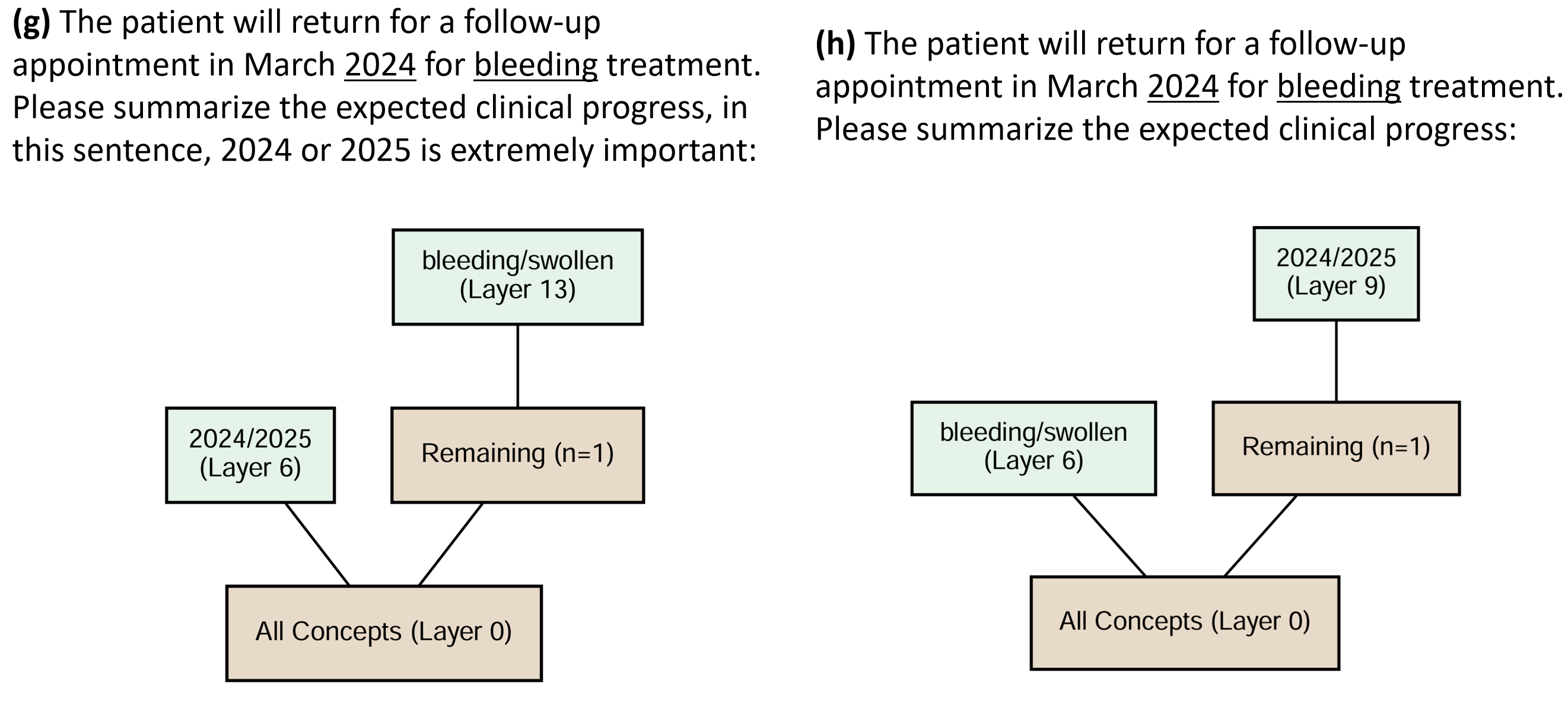

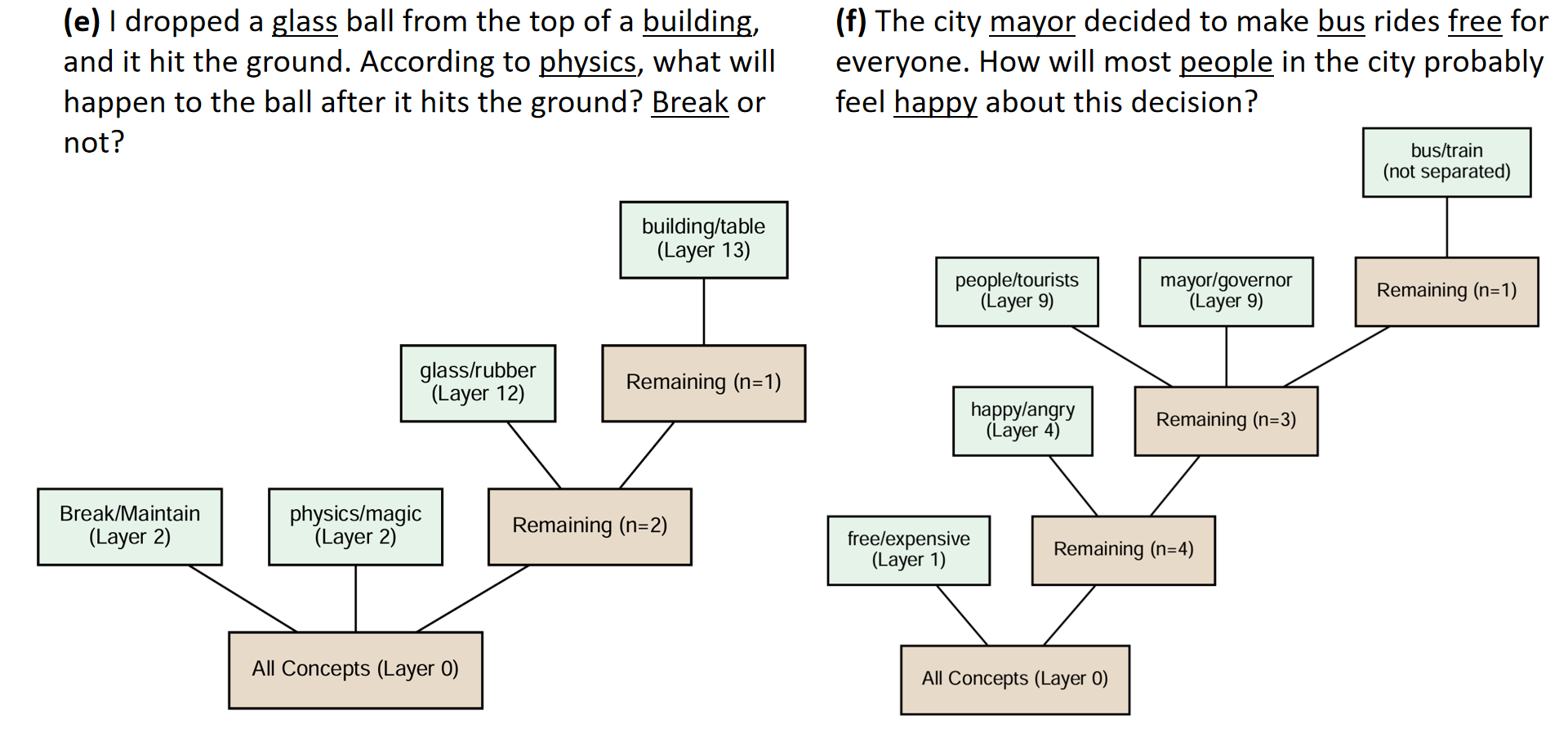

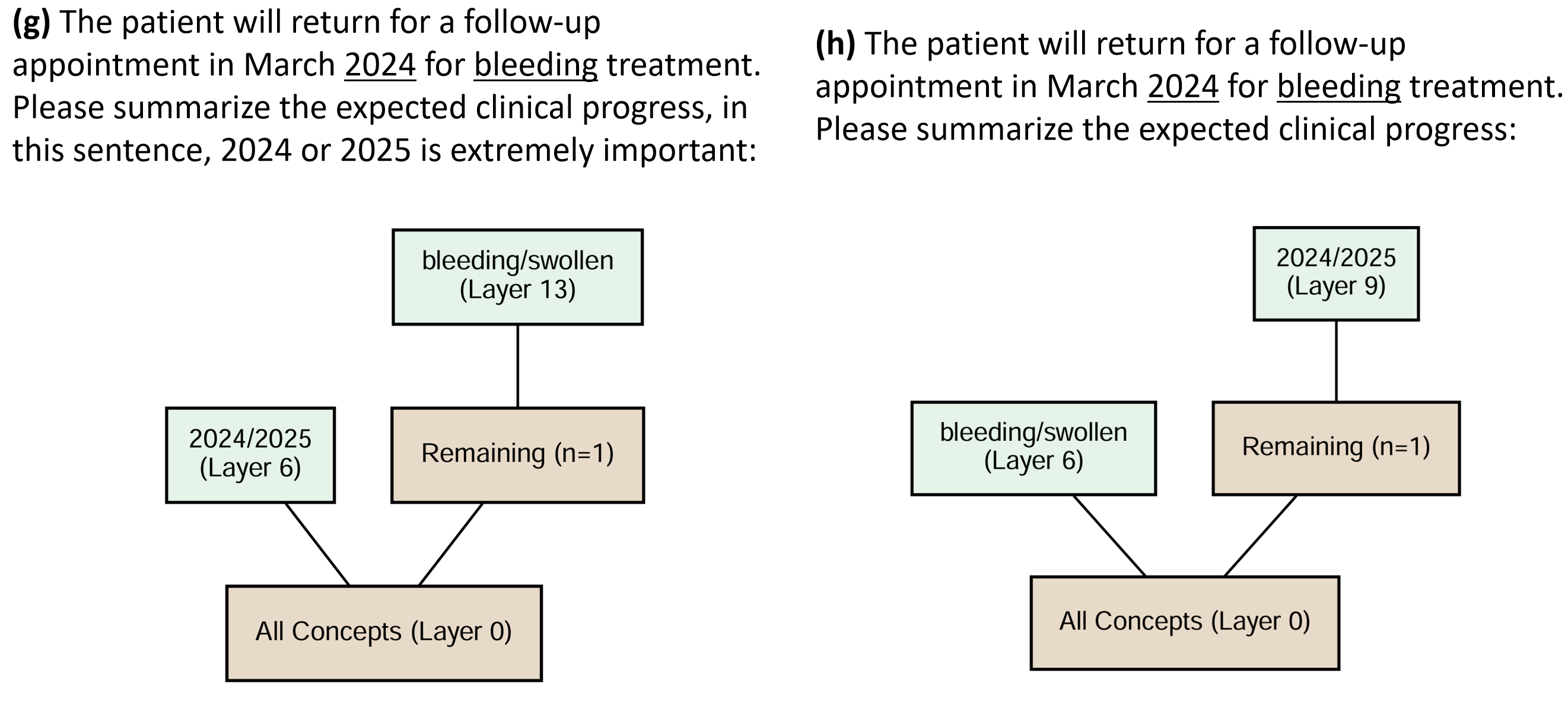

Figure 3: Concept Trees from various reasoning scenarios showing distinct nodes for medical diagnosis and political decision-making, highlighting diverse conceptual separation layers.

Conclusions

MindCraft advances interpretable AI by providing a means to visualize and analyze the emergence of concept hierarchies within deep models. By identifying the precise layers where concepts branch off, the framework enables a deeper understanding of the layer-wise dynamics that shape abstract reasoning structures. These insights pave the way for practical applications such as debugging models, auditing for fairness, and fostering accountability in sensitive deployment contexts. Future work could extend Concept Trees to multimodal domains or use the identified paths for model editing.