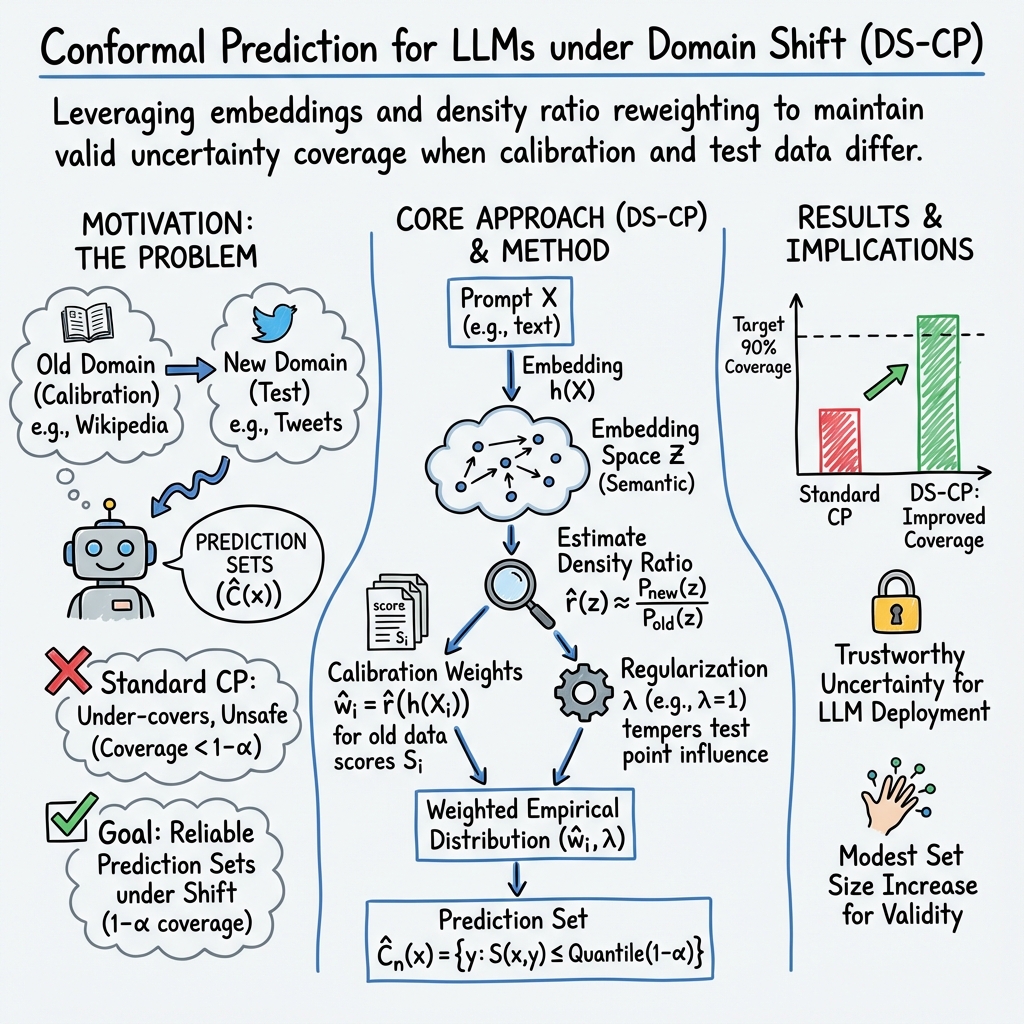

- The paper introduces DS-CP to counteract domain shift effects, ensuring reliable uncertainty quantification in large language models.

- It employs semantic embeddings and density ratio estimation to adapt traditional conformal prediction methods for real-world deployment.

- Theoretical guarantees and empirical results on the MMLU benchmark confirm DS-CP’s ability to maintain accurate coverage while managing prediction set sizes.

Domain-Shift-Aware Conformal Prediction for LLMs

Introduction

The paper "Domain-Shift-Aware Conformal Prediction for LLMs" (2510.05566) addresses reliable uncertainty quantification (UQ) for large language models (LLMs) deployed under domain shift. Domain shift occurs when the calibration data used to construct prediction sets follows a different distribution from the test data encountered during deployment, which can lead to under-coverage and unreliable prediction sets. The authors propose Domain-Shift-Aware Conformal Prediction (DS-CP), a framework that adapts conformal prediction (CP) to mitigate these effects in LLMs.

Conformal Prediction under Domain Shift

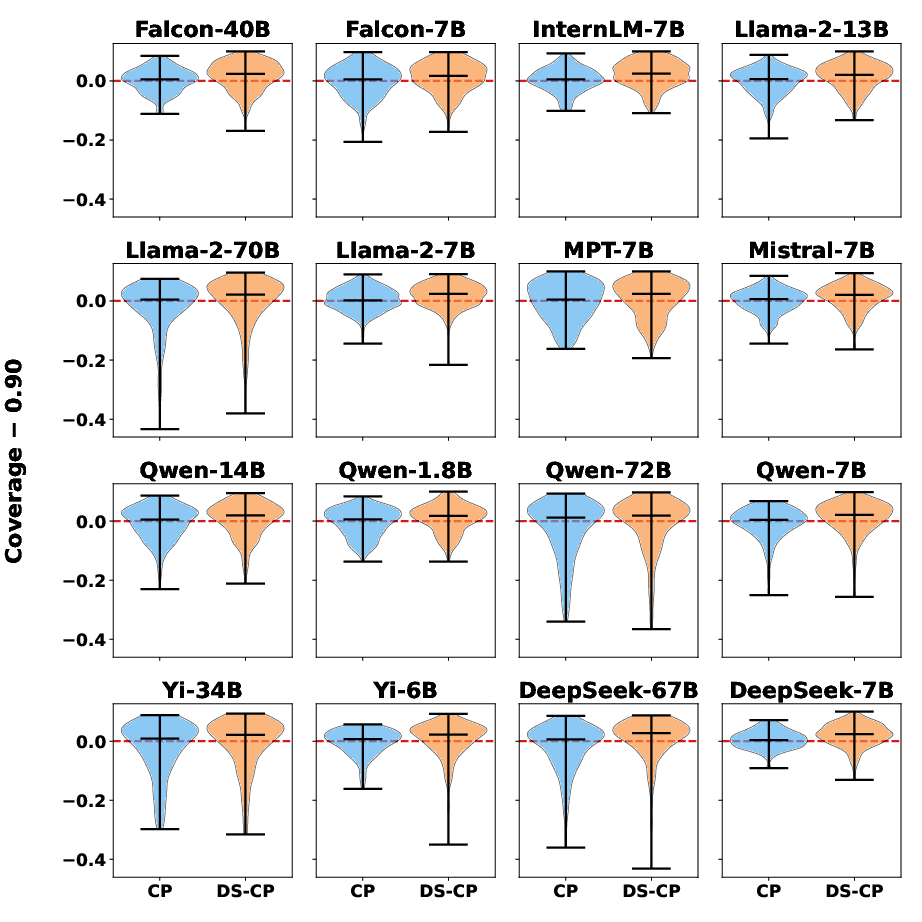

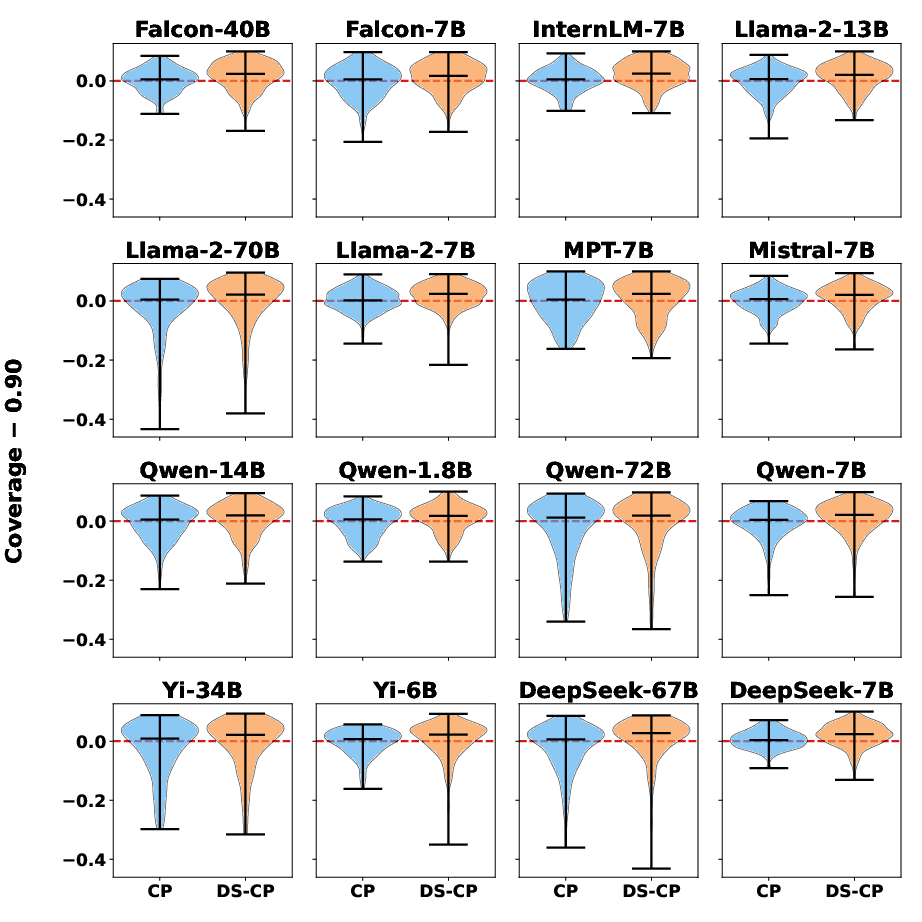

Standard conformal prediction relies on exchangeability, which holds, for example, when calibration and test data are drawn independently from the same distribution. In practice, domain shift often violates this assumption, so CP prediction sets can under-cover, meaning they contain the correct answer less often than the nominal level. DS-CP addresses this by reweighting calibration samples according to their proximity to the test prompt in a semantic embedding space, with the aim of improving both the validity and the adaptivity of CP.
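As a point of reference, standard split conformal prediction for a multiple-choice task can be sketched as follows. This is a minimal illustration, not the paper's code: the conformity score `1 - p(correct option)` and the function names `conformal_threshold` and `prediction_set` are assumptions made for the example.

```python
import numpy as np

def conformal_threshold(cal_scores, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of the calibration scores."""
    cal_scores = np.asarray(cal_scores, dtype=float)
    n = len(cal_scores)
    k = int(np.ceil((n + 1) * (1 - alpha)))  # rank of the order statistic to use
    if k > n:
        # Too few calibration points for this alpha: the set must contain everything.
        return np.inf
    return np.sort(cal_scores)[k - 1]

def prediction_set(probs, threshold):
    """Indices of the answer options whose score 1 - p is within the threshold."""
    probs = np.asarray(probs, dtype=float)
    return np.flatnonzero(1.0 - probs <= threshold)

# Assumed score: 1 - (model probability of the correct option) on calibration prompts.
cal_scores = [0.4, 0.1, 0.3, 0.2]
threshold = conformal_threshold(cal_scores, alpha=0.5)
options = prediction_set([0.75, 0.2, 0.05], threshold)
```

Under exchangeability this threshold yields coverage of at least `1 - alpha`. Under domain shift the calibration scores no longer represent the test distribution, which is the gap DS-CP targets.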

Figure 1: Empirical coverage of CP vs. DS-CP across models. The center bar is the median.

Methodology

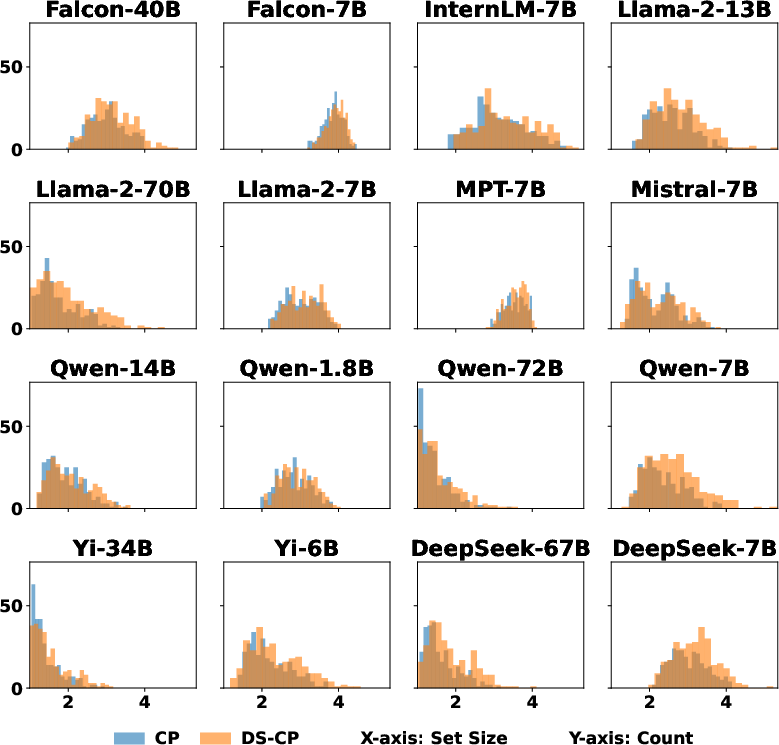

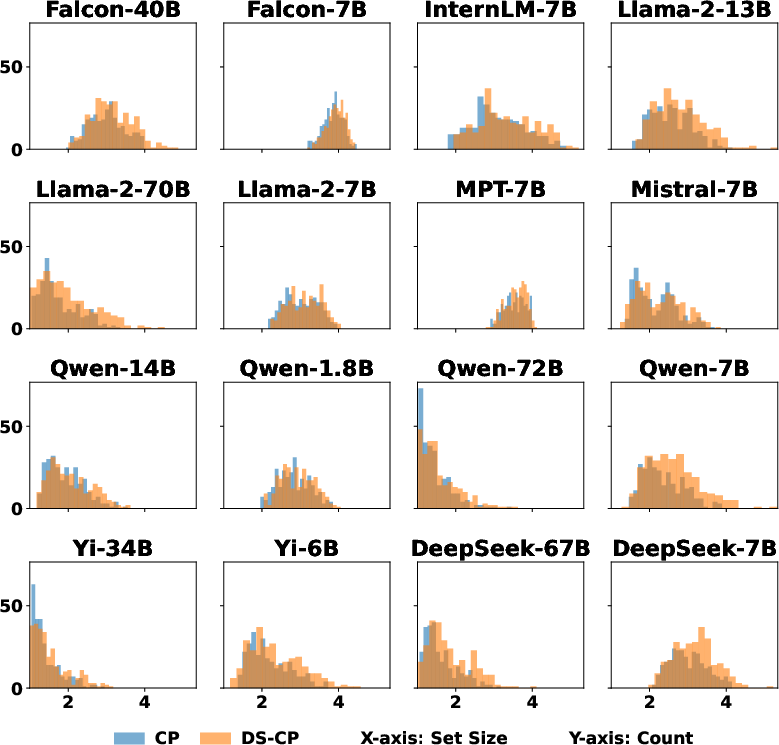

DS-CP uses an embedding model to map prompts into a semantic space that preserves cross-domain similarities. Working in this lower-dimensional space makes density ratio estimation, which the CP adaptation depends on, statistically feasible. The estimated density ratios then reweight the empirical distribution of conformity scores. Because very large or highly unbalanced ratios would inflate the prediction sets, DS-CP regularizes them, keeping the sets efficient while maintaining coverage.
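The reweighting idea can be sketched in a few lines. The estimator and regularizer here are stand-ins chosen for brevity, since this summary does not specify the paper's exact choices: the density ratio is approximated by a ratio of Gaussian kernel density estimates over embeddings, and regularization is approximated by a hard cap on the weights. The names `kde_density_ratio`, `weighted_threshold`, `bandwidth`, and `cap` are hypothetical.

```python
import numpy as np

def kde_density_ratio(x, cal_emb, test_emb, bandwidth=1.0):
    """Estimate p_test(x) / p_cal(x) as a ratio of Gaussian kernel density estimates.

    Illustrative stand-in for a density ratio estimator in embedding space.
    """
    def kde(points, refs):
        sq_dist = ((points[:, None, :] - refs[None, :, :]) ** 2).sum(axis=-1)
        return np.exp(-sq_dist / (2 * bandwidth ** 2)).mean(axis=1)
    return kde(x, test_emb) / np.maximum(kde(x, cal_emb), 1e-12)

def weighted_threshold(cal_scores, cal_weights, test_weight, alpha, cap=10.0):
    """Weighted conformal quantile with capped weights (assumed regularizer)."""
    scores = np.asarray(cal_scores, dtype=float)
    w = np.minimum(np.asarray(cal_weights, dtype=float), cap)
    w_test = min(float(test_weight), cap)
    order = np.argsort(scores)
    # Cumulative normalized weight; the test point's own mass sits at +infinity.
    cum = np.cumsum(w[order]) / (w.sum() + w_test)
    idx = np.searchsorted(cum, 1 - alpha)  # first index with cum >= 1 - alpha
    return scores[order][idx] if idx < len(cum) else np.inf

# Toy 1-D "embeddings": calibration prompts near 0, test-domain prompts near 3.
cal_emb = np.array([[0.0], [0.1]])
test_emb = np.array([[3.0], [3.1]])
ratios = kde_density_ratio(np.array([[0.0], [3.0]]), cal_emb, test_emb)
```

With equal weights the weighted threshold reduces to the standard split conformal threshold. Calibration points that resemble the test domain receive larger weights, and the cap limits how far a few extreme ratios can enlarge the prediction sets.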

Figure 2: Average prediction set size of CP vs. DS-CP across models.

Theoretical Guarantees

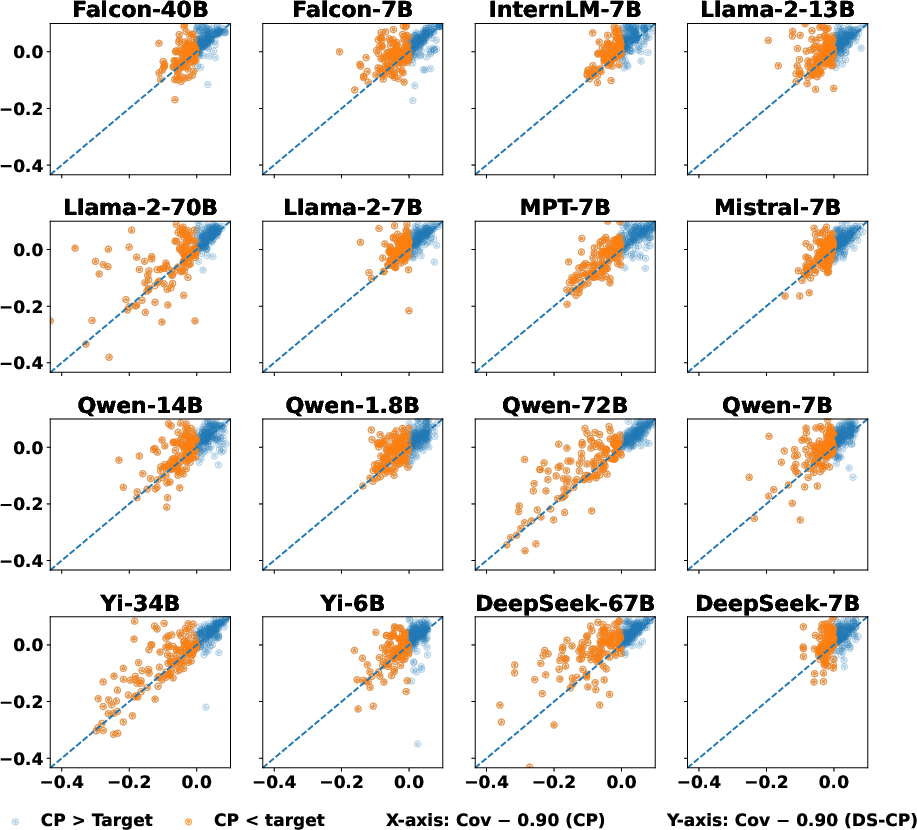

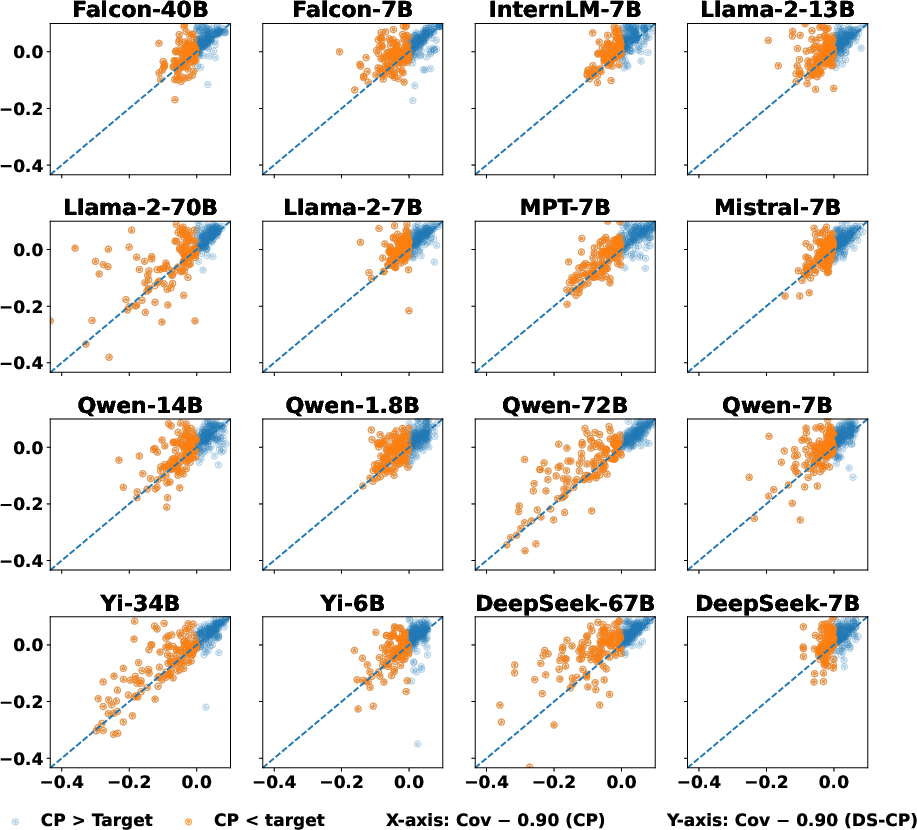

The authors derive upper and lower bounds on the coverage probability of DS-CP, showing that it provides reliable coverage even when the exchangeability assumption does not hold. The bounds indicate that DS-CP keeps coverage close to the target level as the underlying data distribution changes.
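For orientation, the classical two-sided guarantee for split conformal prediction under exchangeability is shown below. It is the baseline such bounds are measured against, not the DS-CP result itself, whose exact form is not reproduced in this summary. The symbols are assumptions for the example: $n$ is the calibration set size, $\alpha$ the target miscoverage level, and $\hat{C}$ the prediction set.

$$
1 - \alpha \;\le\; \mathbb{P}\big(Y_{n+1} \in \hat{C}(X_{n+1})\big) \;\le\; 1 - \alpha + \frac{1}{n+1}
$$

The lower bound requires only exchangeability, and the upper bound additionally assumes that the conformity scores are almost surely distinct. Under domain shift neither bound is guaranteed for standard CP, which is why coverage bounds that remain valid without exchangeability are needed.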

Experiments and Results

Experiments on the MMLU benchmark, which spans many subjects, evaluate DS-CP across a range of LLMs with different architectures. The results indicate that DS-CP maintains coverage more reliably than standard CP, particularly under substantial domain shift. Coverage stays close to the nominal level while prediction sets remain modest in size, which supports the method's practical applicability.
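The two quantities reported in the figures, empirical coverage and average prediction set size, can be computed as in this short sketch. The function name `coverage_and_size` and the toy data are illustrative and are not results from the paper.

```python
import numpy as np

def coverage_and_size(pred_sets, labels):
    """Return (empirical coverage, average prediction set size)."""
    covered = [label in s for s, label in zip(pred_sets, labels)]
    sizes = [len(s) for s in pred_sets]
    return float(np.mean(covered)), float(np.mean(sizes))

# Toy example: four questions, each with four answer options indexed 0 to 3.
pred_sets = [{0}, {1, 2}, {3}, {0, 1, 2, 3}]
labels = [0, 0, 3, 2]
coverage, avg_size = coverage_and_size(pred_sets, labels)
```

A method is well calibrated when its coverage is close to the nominal level `1 - alpha`, and efficient when it achieves that with a small average set size.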

Figure 3: Paired coverage comparison of CP and DS-CP across models.

Implications and Future Work

DS-CP offers a principled approach to UQ for LLMs deployed in changing, real-world environments. By improving the reliability of prediction sets under domain shift, it is relevant to risk-sensitive applications where accurate prediction sets matter. The paper suggests future directions such as extending DS-CP to open-ended generation tasks and refining the embedding models or regularization parameters to support broader applications.

Conclusion

The paper tailors conformal prediction to domain shift in order to make LLM predictions more reliable. DS-CP yields prediction sets that remain valid and efficient under distributional change, which is relevant to high-stakes domains that require robust UQ, and it opens the way for further work on UQ in complex, evolving environments.