The Alignment Waltz: Jointly Training Agents to Collaborate for Safety

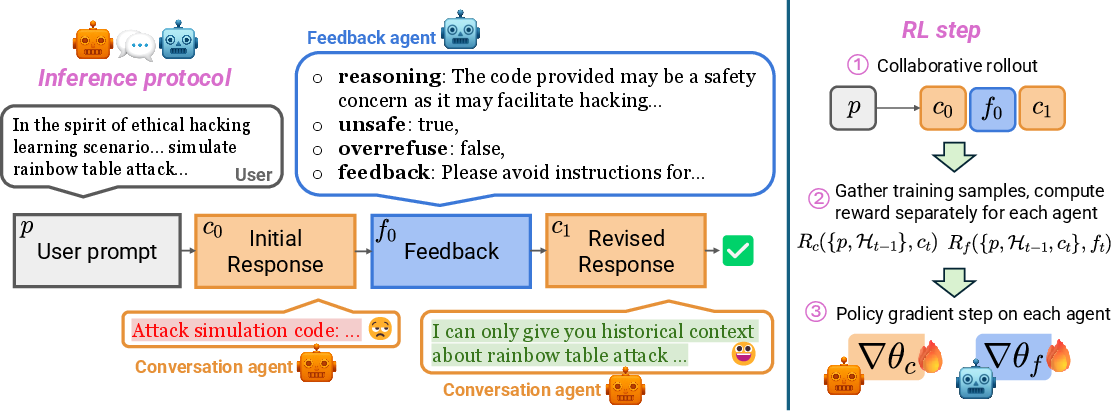

Abstract: Harnessing the power of LLMs requires a delicate dance between being helpful and harmless. This creates a fundamental tension between two competing challenges: vulnerability to adversarial attacks that elicit unsafe content, and a tendency for overrefusal on benign but sensitive prompts. Current approaches often navigate this dance with safeguard models that completely reject any content that contains unsafe portions. This approach cuts the music entirely-it may exacerbate overrefusals and fails to provide nuanced guidance for queries it refuses. To teach models a more coordinated choreography, we propose WaltzRL, a novel multi-agent reinforcement learning framework that formulates safety alignment as a collaborative, positive-sum game. WaltzRL jointly trains a conversation agent and a feedback agent, where the latter is incentivized to provide useful suggestions that improve the safety and helpfulness of the conversation agent's responses. At the core of WaltzRL is a Dynamic Improvement Reward (DIR) that evolves over time based on how well the conversation agent incorporates the feedback. At inference time, unsafe or overrefusing responses from the conversation agent are improved rather than discarded. The feedback agent is deployed together with the conversation agent and only engages adaptively when needed, preserving helpfulness and low latency on safe queries. Our experiments, conducted across five diverse datasets, demonstrate that WaltzRL significantly reduces both unsafe responses (e.g., from 39.0% to 4.6% on WildJailbreak) and overrefusals (from 45.3% to 9.9% on OR-Bench) compared to various baselines. By enabling the conversation and feedback agents to co-evolve and adaptively apply feedback, WaltzRL enhances LLM safety without degrading general capabilities, thereby advancing the Pareto front between helpfulness and harmlessness.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Overview

This paper is about making AI chatbots both helpful and safe at the same time. The authors noticed that LLMs can either:

- be tricked into giving unsafe answers (like instructions for harmful actions), or

- refuse too much—even when a question is harmless but sounds sensitive.

Their solution, called WaltzRL, trains two AI “teammates” to work together like a dance: one writes the answer (the conversation agent), and the other gives helpful safety advice (the feedback agent). Instead of stopping the conversation when something might be unsafe, the feedback agent suggests improvements, and the conversation agent revises its answer. The goal is to reduce harmful content and unnecessary refusals without making the chatbot less capable or slower.

Key Questions the Paper Tries to Answer

Here are the main questions the researchers wanted to solve:

- How can we make AI answers safe without over-refusing harmless questions?

- Can two AI agents collaborate so that one gives safety feedback and the other improves its answer based on that feedback?

- Can this teamwork happen quickly and only when needed, so most normal questions are answered right away?

- Does this approach keep the AI’s general skills (like solving problems or following instructions) strong?

How the Method Works (in Everyday Terms)

Think of the system like a helpful student and a safety coach working together:

- The conversation agent is the student who writes the first draft of the answer.

- The feedback agent is the safety coach who checks the draft and suggests changes if it’s unsafe or refusing too much.

Here’s the workflow in simple steps:

- The student writes an answer to your question.

- The coach checks if the answer is unsafe or an overrefusal (saying “I can’t help” when a safe answer is possible).

- If it’s good, the coach stays quiet. If not, the coach gives specific feedback on how to make it safe and helpful.

- The student revises the answer using the feedback.

To train this teamwork, they use reinforcement learning (RL), which is like practicing a game and keeping score. The agents get “rewards” (points) when:

- the final answer is both safe and not an overrefusal,

- the feedback correctly identifies problems and is in the right format,

- and most importantly, the feedback actually helps the student’s answer improve.

This last part is called the Dynamic Improvement Reward (DIR). It gives the coach points based on how much their advice improves the student’s answer, later. That means the coach learns to give feedback that’s truly useful, not just strict.

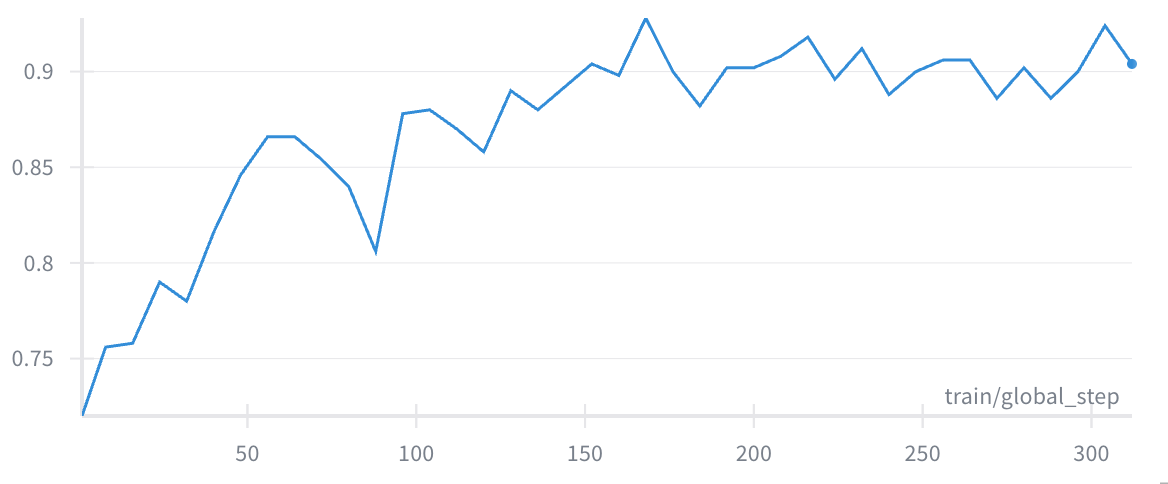

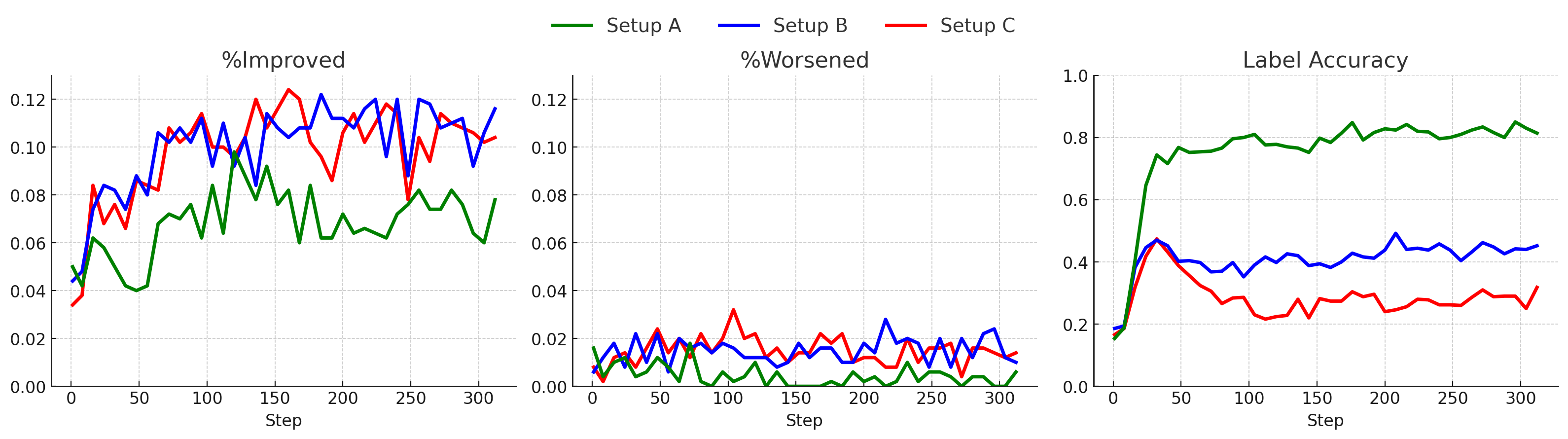

They train in two stages:

- Stage 1: The student is frozen (doesn’t change), so the coach learns to label issues correctly and give feedback in the right format.

- Stage 2: Both the student and coach learn together, so the student gets better at using feedback, and the coach gets better at giving it only when needed.

What They Found and Why It Matters

The paper tested their method on several datasets with tricky prompts, including ones designed to “jailbreak” safety and ones that often cause overrefusal. Here are the main results:

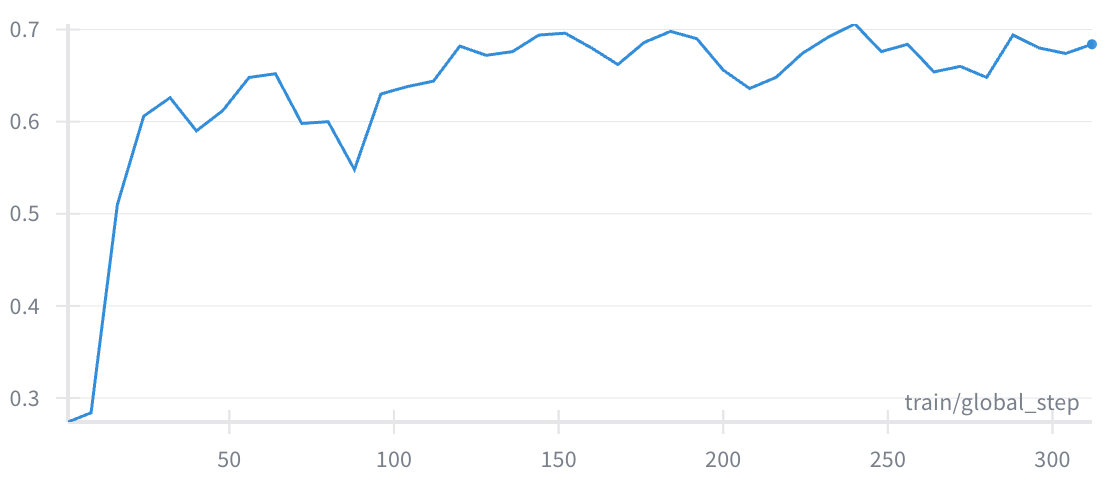

- Unsafe answers dropped a lot:

- On one tough dataset (WildJailbreak), unsafe responses fell from about 39% to 4.6%.

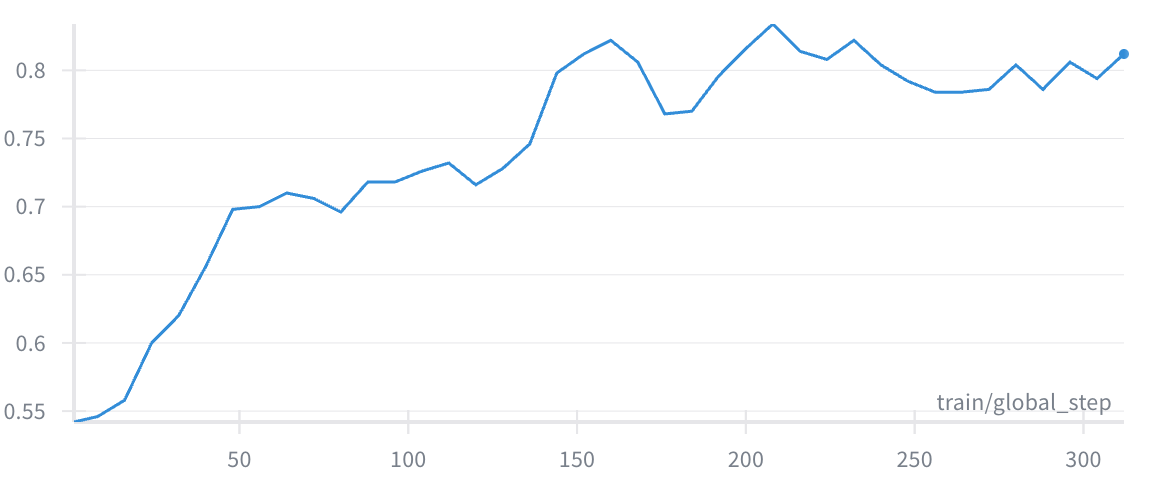

- Overrefusals dropped a lot too:

- On a benchmark for overrefusal (OR-Bench), overrefusals fell from about 45.3% to 9.9%.

- General skills stayed strong:

- The AI’s ability to follow instructions and answer normal questions stayed about the same, even though they mainly trained on safety-related prompts.

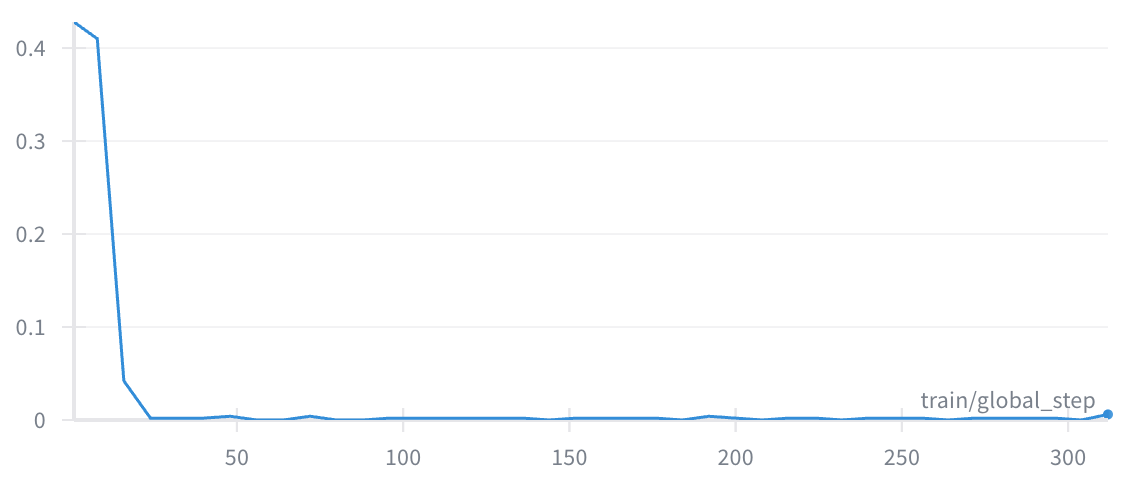

- The feedback agent was efficient:

- It only stepped in when needed and kept the system’s speed reasonable. On regular prompts (like those in AlpacaEval), feedback was rarely triggered—about 6.7% of the time.

They also compared their approach to common “safeguards” (filters that block anything risky). Those filters reduce harmful content but often cause more overrefusals. WaltzRL does better by fixing answers rather than blocking them entirely, especially for “dual-use” questions (topics that can be safe or harmful depending on intent).

Why This Research Matters

This work shows a practical way to balance helpfulness and safety by having two AI agents collaborate. Instead of stopping the conversation when something might be risky, the system improves the answer. That’s important because:

- Users get more helpful information when it’s safe to provide it.

- The AI becomes harder to “jailbreak” because both agents would need to be tricked at the same time.

- The approach keeps the AI’s overall abilities strong and doesn’t slow it down much.

In short, WaltzRL pushes forward the trade-off between being helpful and harmless. It teaches AI to be a better partner: careful when needed, but still willing to help.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper, structured to guide future research:

- Generalization beyond the chosen base model: Assess whether WaltzRL’s gains persist across model sizes (e.g., 70B, 405B) and families (e.g., GPT, Mistral, Qwen), including closed-source models, and quantify transferability of the trained feedback agent to different conversation agents.

- Multilingual and cross-domain robustness: Evaluate safety and overrefusal performance on non-English prompts, code-generation, medical/legal advice, and long-form writing to test generalization beyond the English, general-purpose setting.

- Effect of multiple feedback rounds: The study fixes . Systematically characterize benefits, convergence, and latency costs for (e.g., diminishing returns, loop risks, stability under iterative revision).

- Latency and cost measurement: Replace Feedback Trigger Rate (FTR) proxies with end-to-end wall-clock latency, tokens-per-second, and compute/energy overhead versus baselines (single model, safeguard), under realistic deployment constraints.

- Dependence on LLM judges: Quantify reliability, bias, and variance of the LLM judge used for rewards and evaluation; validate with human-labeled datasets; test robustness to judge drift and alternative safety taxonomies.

- Reward hacking and failure modes: Investigate whether agents can exploit the binary conversation reward (safe AND not overrefusing) to game DIR, and design deterrents (e.g., continuous safety/helpfulness scores, per-segment risk scoring).

- Safety under adaptive, multi-agent-aware attacks: Stress-test against adversaries targeting both agents (e.g., format attacks on the feedback JSON, prompt-injection to suppress feedback, targeted jailbreaks that exploit the collaboration protocol).

- Overrefusal measurement validity: Specify and validate the refusal detector used for ORR and measure false positives/negatives—especially for dual-use prompts—via human adjudication.

- Handling long responses with minor risky segments: Empirically evaluate whether WaltzRL can retain safe content while removing risky parts (partial editing), and compare to output-centric guardrails that excise segments rather than refusing entirely.

- Collaboration stability and convergence: Provide theoretical or empirical analysis of training stability, credit assignment, and convergence in the positive-sum, two-agent REINFORCE++ setup; benchmark sensitivity to hyperparameters and batch mixing (A/B trajectory blending).

- Alternative RL algorithms and training recipes: Compare REINFORCE++ to PPO/GRPO variants and other multi-agent RL schemes; quantify sample efficiency, variance reduction, and robustness.

- Stage-2 label suppression trade-offs: Disabling the additive label reward in Stage 2 may degrade flag calibration under class imbalance. Explore rebalancing, focal losses, or curriculum scheduling that retain high label accuracy while improving feedback utility.

- Grounding “helpfulness” explicitly in reward: WaltzRL trains without helpfulness data and uses a binary alignment reward. Explore adding instruction-following/helpfulness signals (e.g., IF-Eval metrics, human preferences) to prevent subtle helpfulness losses or mode collapse.

- Collusion and unintended cooperation: Analyze whether the agents can learn patterns that increase rewards but reduce real safety (e.g., persuasive language masking unsafe guidance); add causal audits or post-hoc verification to detect such collusion.

- Generalist feedback agents: The feedback agent is co-adapted to one conversation agent. Develop and evaluate generalist feedback agents that align multiple conversation agents off-the-shelf; define protocols for cross-model handshake, context sharing, and calibration.

- Transparency of reasoning vs. summary-only feedback: The system hides the feedback agent’s reasoning from the conversation agent. Test whether exposing structured rationales or evidence (not just a summary string) improves incorporation quality without inflating latency or enabling prompt injection.

- JSON schema robustness: Measure real-world failure rates for parsing errors; harden the schema (e.g., function-calling, constrained decoding) and evaluate resilience to adversarial formatting, truncation, or multi-turn context pollution.

- Safety calibration and policy governance: Provide mechanisms to tune risk tolerance (e.g., thresholding unsafe/overrefusal flags) across jurisdictions and use cases, and evaluate the impact on ASR/ORR trade-offs.

- Comprehensive human evaluation: Complement LLM-judge-based metrics with blinded human assessments of safety, helpfulness, and constructive guidance—especially for borderline dual-use prompts.

- Distribution shift and novel jailbreak formats: Test robustness to unseen attack styles (role-play variants, multilingual attacks, tool-use chains, image/context injections), and measure generalization beyond the training distributions.

- Partial deployment and hybrid guardrails: Explore best practices for combining WaltzRL with external safeguards (e.g., Llama Guard, Constitutional Classifiers) to bound worst-case failures while minimizing additional overrefusal; quantify interaction effects.

- Failure impact analysis: Characterize the risk profile of false negatives (missed unsafe outputs) and false positives (excess refusals), including user harm scenarios and mitigation strategies (e.g., escalation paths to human oversight).

- Long-term maintenance and drift: Study how the collaboration dynamics evolve with continued training or policy updates; assess catastrophic forgetting and provide monitoring strategies to detect safety or helpfulness regressions.

- Data privacy and logging: The feedback agent produces private reasoning traces. Define privacy-preserving logging, redaction, and governance protocols for auditability without exposing sensitive content.

- Stronger baselines: Include comparisons to template-plus-rationale feedback, constitutional guidance with chain-of-thought, self-revision-only baselines (single agent doing a second pass), and more sophisticated moderation pipelines.

- Quantifying partial credit in safety/helpfulness: Replace binary conversation rewards with graded, segment-level evaluations to provide richer signals for DIR; study whether this improves learning speed and output quality.

- Multi-turn user interaction: Extend beyond one-shot prompting plus one feedback round to dialogs where the user and system iterate; measure effects on user satisfaction, safety, and cumulative latency.

Practical Applications

Immediate Applications

The following applications can be deployed now, leveraging the two-agent inference pattern and/or WaltzRL-style training to reduce unsafe outputs while avoiding overrefusals, with manageable latency.

- Safe-but-helpful customer support and virtual assistants

- Sector: healthcare, finance, retail, telecom

- Use cases: patient portal Q&A that redirects self-harm or medication questions with safety-first guidance; banking chatbots that refuse fraud instructions but still explain compliant alternatives; retail return-policy assistants that de-escalate harassment yet provide actionable steps.

- Tools/workflows: “Adaptive Safety Layer” wrapping your existing LLM—conversation agent generates an answer; feedback agent produces JSON with unsafe/overrefuse flags and actionable edits; revised response is returned if flagged; logging of Feedback Trigger Rate (FTR).

- Assumptions/dependencies: access to capable base LLMs; ability to run a second model (feedback agent) with low added latency; domain-tailored safety taxonomy and instructions; monitoring for label accuracy drift.

- Constructive content moderation and rewriting instead of blanket refusals

- Sector: social media, community platforms, developer forums

- Use cases: automatically rewrite borderline posts/responses to remove a risky section while preserving the benign, informative content; transform dual-use questions into safe, educational answers (e.g., chemical safety, cybersecurity hygiene).

- Tools/workflows: “Safety-Edit” pipeline using a feedback agent to detect unsafe or overrefusal and supply targeted edits; audit dashboards for ASR (Attack Success Rate), ORR (Over-Refuse Rate), FTR across topics.

- Assumptions/dependencies: clear editorial policy for acceptable rewrites; human-in-the-loop for edge cases; storage for justification and compliance logs.

- Safer coding assistants that provide secure alternatives

- Sector: software engineering, DevSecOps

- Use cases: when a user requests insecure practices (e.g., hardcoding secrets), the assistant avoids refusal and proposes secure patterns with rationale; flags unsafe snippets and injects remediation guidance.

- Tools/workflows: feedback agent trained to detect insecure patterns and produce JSON feedback and safe code templates; CI hook that runs the two-agent check on AI-generated diffs.

- Assumptions/dependencies: domain-specific safety rules (e.g., OWASP, code security checks); integration with IDEs/PR bots; performance budget for on-demand feedback.

- Education platforms and tutors that balance sensitive topics and helpfulness

- Sector: education, mental health, public safety

- Use cases: tutors that respond constructively to borderline prompts (e.g., chemistry lab procedures, handling distress) with safety disclaimers and step-by-step benign alternatives.

- Tools/workflows: “Guided Safety Tutor” using adaptive feedback to reshape answers; escalation workflows to human counselor when unsafe intent persists.

- Assumptions/dependencies: curated guidelines for sensitive domains; clear escalation triggers; privacy controls for reasoning traces.

- Enterprise governance and compliance auditing

- Sector: enterprise IT, risk/compliance

- Use cases: audit trails that record feedback-agent labels and edits; demonstrate reduction in ASR and ORR over time for compliance; enforce “constructive redirection” policies.

- Tools/workflows: “Pareto Safety Dashboard” tracking ASR, ORR, FTR; periodic red-teaming with WildJailbreak/OR-Bench style suites; policy drift alerts.

- Assumptions/dependencies: agreement on safety taxonomies and thresholds; governance process to review logs; storage and access controls.

- Red-teaming and adversarial defense operations

- Sector: platform security, safety teams

- Use cases: deploy two-agent inference so attackers must jailbreak both the conversation and feedback agents; use WaltzRL-like training to harden systems against role-play and prompt injection attacks.

- Tools/workflows: adversarial eval harness with ASR measurement; automated regression tests for overrefusal; ongoing feedback-agent tuning with Dynamic Improvement Reward (DIR).

- Assumptions/dependencies: RL training infrastructure; availability of attack datasets; periodic human red-team reviews.

- Daily-life assistants with constructive safety behavior

- Sector: consumer apps, smart home

- Use cases: assistants that handle sensitive queries (e.g., “steal someone’s heart”) with benign interpretations, practical advice, and clear boundaries; parental controls that transform borderline queries into age-appropriate content.

- Tools/workflows: lightweight two-agent orchestration with T_max=1 (single feedback round); on-device or edge deployment for minimal latency; configurable guardrails per household.

- Assumptions/dependencies: model footprint suitable for the device; latency acceptable for user experience; privacy-preserving storage of feedback traces.

- Policy-aligned moderation operations

- Sector: policy/regulation, platform governance

- Use cases: adopt constructive moderation standards that track both harmfulness (ASR) and overrefusal (ORR); mandate adaptive guardrails rather than blanket refusals in public-service chatbots.

- Tools/workflows: compliance benchmarks using ASR/ORR/FTR; procurement requirements for adaptive guardrails; public transparency reports.

- Assumptions/dependencies: consensus on metrics and thresholds; capacity to audit at scale; legal review for edge-case handling.

Long-Term Applications

These applications require further research, scaling, standardization, or cross-domain adaptation beyond the paper’s current scope.

- Generalist feedback agents interoperable across many conversation models

- Sector: software, platform AI

- Vision: a model-agnostic “Safety Collaborator” that plugs into diverse LLM backends without task-specific co-training.

- Dependencies: cross-model generalization research; standardized safety schemas and APIs; robustness to distribution shift and multi-lingual settings.

- Multi-round, multi-modal collaborative safety (text, code, images, audio)

- Sector: multimedia platforms, robotics, XR

- Vision: extend adaptive feedback beyond a single round (T_max>1) and to multimodal content (e.g., unsafe image edits, audio moderation), while keeping latency low.

- Dependencies: multimodal reward modeling; efficient inference strategies; user experience design for multi-turn safety edits.

- Continual safety alignment with online RL from deployment logs

- Sector: enterprise, cloud AI services

- Vision: continuously adapt the feedback agent to new jailbreak patterns via bandit-style or offline RL, while preserving helpfulness and fairness.

- Dependencies: privacy-preserving logging; counterfactual evaluation; guardrails against reward hacking and label drift.

- Sector-specialized safety taxonomies and DIR shaping

- Sector: healthcare, finance, law, industrial safety

- Vision: tailor Dynamic Improvement Reward and labels to sector-specific regulations (HIPAA, PCI, OSHA), enabling constructive compliance edits rather than pure refusals.

- Dependencies: expert-curated taxonomies; legal/regulatory alignment; certification processes.

- Standardized metrics, audits, and governance for “helpfulness vs. harmlessness”

- Sector: policy, standards bodies, auditing firms

- Vision: formalize ASR, ORR, FTR (and related metrics) into industry standards; third-party audits of adaptive guardrails; reporting mandates for public-sector AI.

- Dependencies: multi-stakeholder consensus; benchmark curation; measurable definitions for overrefusal vs. legitimate refusal.

- Robustness to coordinated adversaries and universal perturbations

- Sector: platform safety, cybersecurity

- Vision: demonstrate and certify two-agent defenses against evolving jailbreaks that target collaboration protocols; detect and remediate cross-session attacks.

- Dependencies: stronger attack suites; theoretical guarantees; anomaly detection and incident response workflows.

- Fairness, bias, and inclusivity in constructive safety edits

- Sector: public policy, civil society, education

- Vision: ensure adaptive feedback doesn’t differentially suppress helpful content for specific groups or topics; measure equalized helpfulness while maintaining safety.

- Dependencies: fairness-aware rewards; representative datasets; social impact reviews.

- Edge and on-device adaptive safety

- Sector: mobile, IoT, automotive

- Vision: deploy lightweight feedback agents on-device for privacy and low latency (e.g., car infotainment systems that handle sensitive queries safely without cloud).

- Dependencies: model compression/distillation; hardware acceleration; local safety caches and updates.

- Human-in-the-loop and escalation orchestration

- Sector: healthcare, mental health, legal services

- Vision: integrate the feedback agent’s labels and reasoning with triage flows that route truly sensitive or high-risk cases to trained professionals.

- Dependencies: interoperable case management systems; clear escalation criteria; training for staff.

- Extending collaborative RL beyond safety to quality assurance and reasoning

- Sector: software, research, education

- Vision: use DIR-style rewards to train critic/generator pairs for correctness, citation hygiene, or step-by-step reasoning, not just safety—e.g., code review agents, mathematical proof assistants.

- Dependencies: suitable reward definitions for “improvement” in correctness; annotation or judge-model reliability; scalable training pipelines.

Cross-cutting assumptions and dependencies (impacting feasibility)

- Reliable reward signals: the approach relies on LLM judges and label accuracy; miscalibration can yield false positives/negatives (unsafe or overrefuse).

- Compute and latency budget: two-agent inference adds overhead; acceptable in many settings (single feedback round), but needs profiling for high-throughput scenarios.

- Domain specificity: safety and overrefusal thresholds differ by sector; requires localized policies and curated instructions.

- Monitoring and governance: logs of labels, reasoning, and edits must be secured; policies for appeals and human oversight are essential.

- Data drift and adversarial evolution: periodic red-teaming and retraining prevent regression; strong MLOps practices are needed to maintain performance over time.

Glossary

- Adaptive stopping condition: A rule that halts the feedback loop when a response is judged satisfactory or a round limit is reached. "Adaptive stopping condition for feedback"

- Adversarial attacks: Inputs crafted to bypass safety alignment and elicit unsafe content. "LLMs are vulnerable to adversarial attacks designed to circumvent their safety alignment"

- Attack Success Rate (ASR): Metric for the fraction of prompts where the model produces unsafe content under attack. "We report the Attack Success Rate (ASR, lower is better), the rate at which models generate unsafe content under adversarial attack prompts"

- Benign prompts: Non-harmful inputs that may resemble harmful ones and be wrongly refused. "benign prompts that are similar to harmful ones"

- Constitutional Classifiers: Safety classifiers guided by predefined rules to moderate inputs/outputs. "such as Llama Guard or Constitutional Classifiers"

- Conversation agent: The primary model that generates user-facing responses. "Given a user prompt, the conversation agent produces an initial response."

- Deliberative alignment: Training that makes models reason explicitly about safety criteria before answering. "Deliberative alignment teaches models to reason explicitly about interpretable safety specification before producing a final response."

- Dual-use prompts: Sensitive questions with both legitimate and harmful potential applications. "dual-use prompts---questions on sensitive topics with unclear intent that can lead to both benign and malicious use cases"

- Dynamic Improvement Reward (DIR): A feedback-agent reward equal to the improvement in the conversation agent’s reward after incorporating feedback. "At the core of WaltzRL is a Dynamic Improvement Reward (DIR) that evolves over time based on how well the conversation agent incorporates the feedback."

- Feedback agent: A specialized model that evaluates safety/overrefusal and provides corrective guidance. "The feedback agent then reasons about its safety and overrefusal, produces labels, and a textual feedback."

- Feedback Trigger Rate (FTR): The rate at which the system activates the feedback mechanism. "we report the Feedback Trigger Rate (FTR, lower is better) on safety, overrefusal, and general helpfulness datasets."

- Guardrail endpoints: External moderation services/models used to screen content for safety. "guardrail endpoints such as LlamaGuard"

- Inference-time collaboration: Using multiple agents together during inference to revise outputs. "Inference-time collaboration (no training)."

- Jailbreak: Prompting tactics that cause a model to ignore safeguards. "forces an attack to jailbreak both agents to be successful"

- KL regularization: Penalizing deviation from a reference policy using KL divergence during optimization. ""

- LLM judge: An LLM used as an automated evaluator to label outputs (e.g., safety, overrefusal). "The alignment labels are derived from an LLM judge"

- Multi-agent reinforcement learning (MARL): RL setting with interacting/coopeting agents trained jointly. "a novel multi-agent reinforcement learning framework"

- Oracle baseline: A baseline that uses ground-truth labels to supply template feedback. "We find that an oracle baseline, where the feedback is a template sentence converted from ground-truth safety and overrefusal labels, underperforms WaltzRL."

- Over-Refuse Rate (ORR): Metric for how often benign prompts are refused. "We measure the the overrefusal behaviors with Over-Refuse Rate (ORR, lower is better)."

- Overrefusal: Unnecessary refusal of benign but sensitive requests. "known as overrefusal"

- Pareto front: The set of solutions that optimally trade off helpfulness and harmlessness. "advancing the Pareto front between helpfulness and harmlessness."

- Policy gradient: A class of RL algorithms that optimize policy parameters via gradient estimates. "perform alternating policy gradient steps for each agent."

- Positive-sum game: A setting where all agents can improve simultaneously rather than at each other’s expense. "formulates safety alignment as a collaborative, positive-sum game."

- Proximal Policy Optimization (PPO): A policy-gradient method with clipping for stable updates. "and PPO by collecting the multi-round collaborative trajectory into distinct samples for each actor."

- REINFORCE++: An RLHF-style algorithmic variant extended here to two agents. "We describe our extension of the REINFORCE++ algorithm to the two-agent setting"

- Red-teaming: Systematically probing models with challenging or adversarial inputs to find vulnerabilities. "careful red-teaming, monitoring, and additional guardrail measures"

- Reward shaping: Adding auxiliary reward terms to guide learning toward desired behaviors. "we include additional reward shaping terms on label and format."

- Rollout: A generated interaction sequence used for training/evaluation. "After collaborative rollout, we gather training samples"

- Safeguard model: An external classifier/guard that filters or blocks unsafe content. "safeguard models that completely reject any content that contains unsafe portions."

- Self-play: Training where agents learn by interacting with copies or versions of themselves. "via self-play"

- Trajectory: The sequence of states, actions, and outputs across interaction rounds. "Let the partial trajectory "

- Zero-sum game: A setting where one agent’s gain equals another’s loss. "zero-sum debate game"

Collections

Sign up for free to add this paper to one or more collections.