- The paper introduces a unified framework using imagination-guided queries to selectively retrieve both visual and behavioral experiences for enhanced navigation.

- It employs a language-conditioned contrastive world model with multi-step overshooting to improve long-horizon prediction and retrieval quality.

- On IR2R unseen environments, Memoir improves SPL by 5.4 points over GR-DUET, with an 8.3× training speedup and 74% lower inference memory.

Dream to Recall: Imagination-Guided Experience Retrieval for Memory-Persistent Vision-and-Language Navigation

Introduction

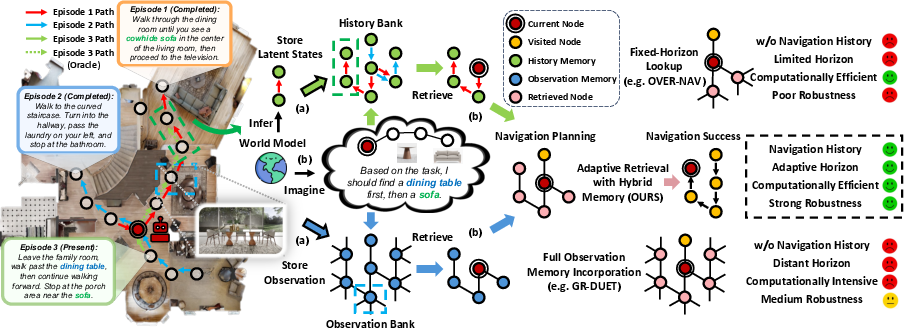

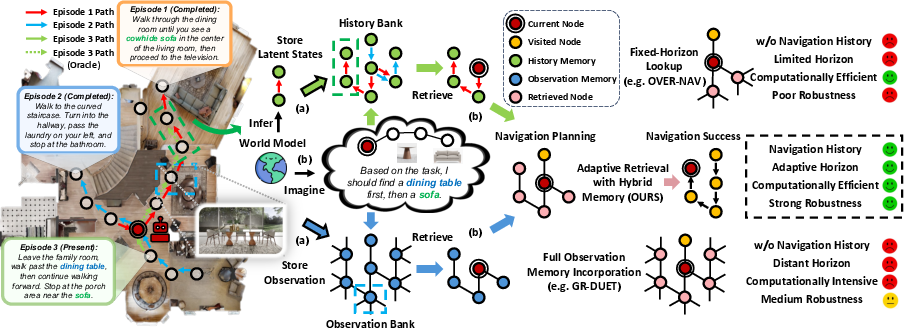

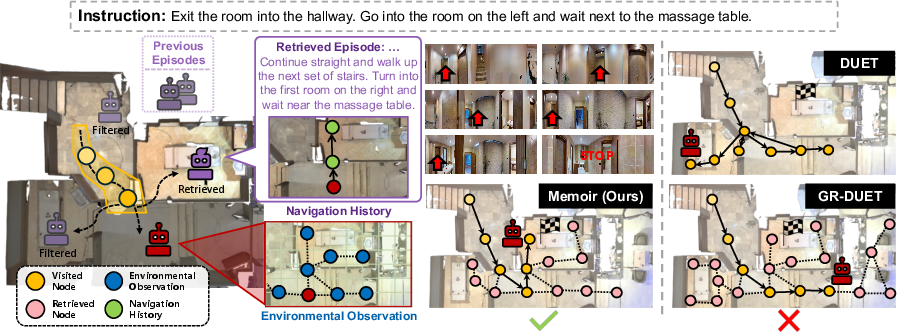

The paper "Dream to Recall: Imagination-Guided Experience Retrieval for Memory-Persistent Vision-and-Language Navigation" (Memoir) addresses how embodied agents can progressively improve navigation performance by drawing on experience accumulated across episodes. Traditional vision-and-language navigation (VLN) agents treat each episode in isolation: they have no mechanism for long-term memory and so cannot adapt in persistent, real-world deployments. Existing memory-persistent VLN methods either incorporate all available memory, which is computationally inefficient and noisy, or rely on fixed-horizon lookups, which can miss valuable experience. Most approaches also store only environmental observations and neglect behavioral histories, which encode strategic decision-making patterns.

Memoir introduces a unified framework where a language-conditioned world model imagines future navigation states to generate queries for selective retrieval of both environmental observations and behavioral histories. This paradigm enables adaptive, viewpoint-level memory access, facilitating robust navigation planning grounded in explicit long-term memory.

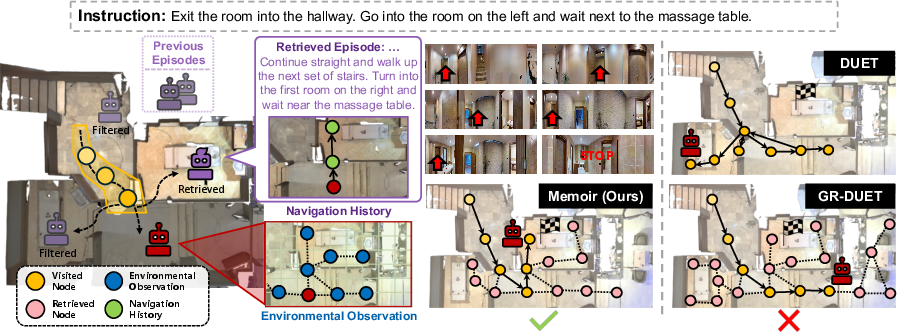

Figure 1: Overview of Memoir's workflow for experience retrieval via imagination, showing episodic memory bank population and imagination-guided retrieval for navigation planning.

Methodology

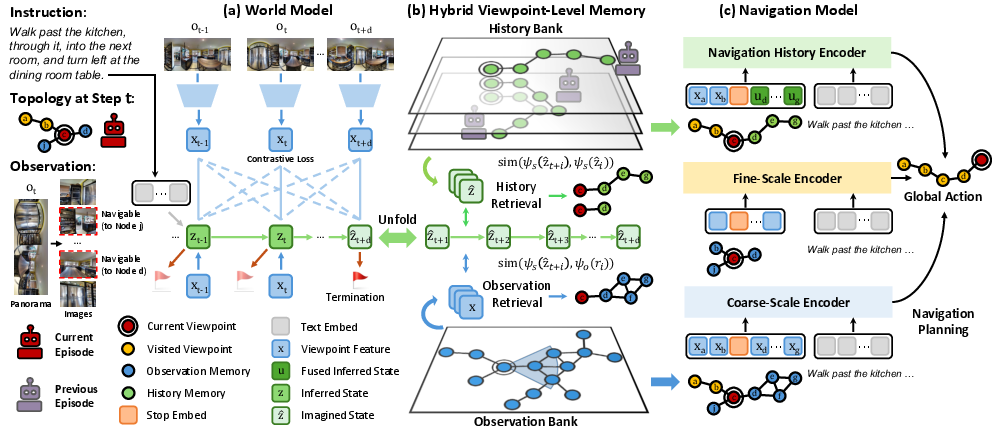

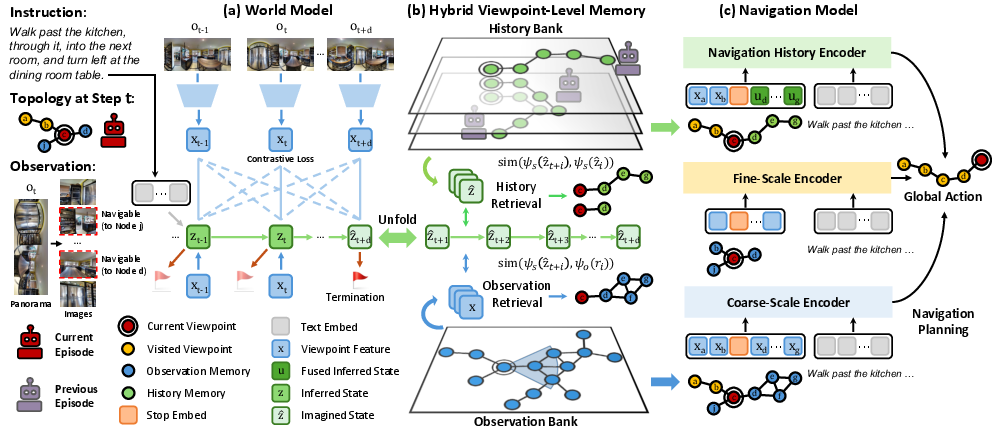

Language-Conditioned Contrastive World Model

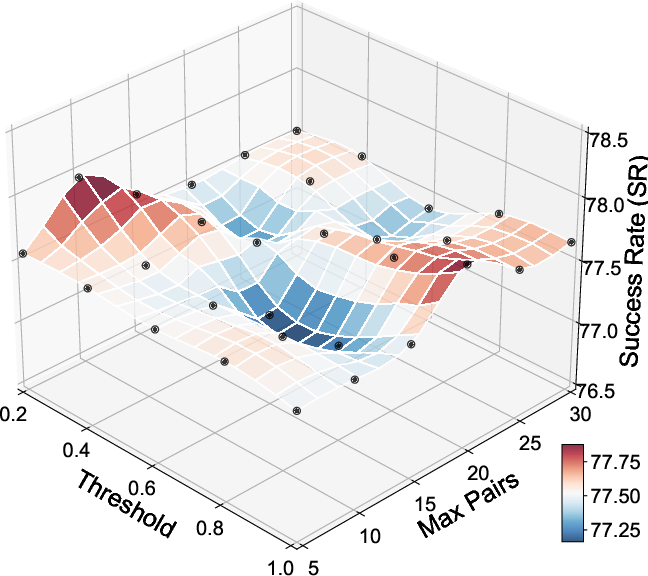

Memoir employs a contrastive variational world model conditioned on both visual observations and natural language instructions. The model encodes navigation histories into latent state representations and recursively imagines future states, which serve as queries for memory retrieval. The contrastive objective replaces pixel-level reconstruction with semantic compatibility estimation between latent states and observations, enabling efficient and discriminative representation learning. Multi-step overshooting is incorporated to enhance long-horizon predictive capability, improving retrieval quality for extended navigation trajectories.
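The two ideas in this paragraph, recursive imagination and a contrastive compatibility objective, can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: it assumes an InfoNCE-style loss with dot-product compatibility, and the names `imagine`, `info_nce_loss`, `transition`, and `temperature` are hypothetical. The `transition` argument stands in for the learned, language-conditioned dynamics.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def imagine(state, transition, horizon):
    """Roll a latent state forward `horizon` steps with no new observations."""
    rollout = []
    for _ in range(horizon):
        state = transition(state)  # hypothetical stand-in for learned dynamics
        rollout.append(state)
    return rollout


def info_nce_loss(states, observations, temperature=0.1):
    """Mean InfoNCE loss where observations[i] is the positive for states[i]."""
    total = 0.0
    for i, state in enumerate(states):
        # Compatibility of this state with every observation in the batch.
        logits = [dot(state, obs) / temperature for obs in observations]
        peak = max(logits)  # subtract the max for a stable log-sum-exp
        log_norm = peak + math.log(sum(math.exp(x - peak) for x in logits))
        total += log_norm - logits[i]
    return total / len(states)
```

Under these assumptions, multi-step overshooting would amount to applying the same loss to states produced by `imagine` at several horizons, rather than only to one-step predictions.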

Hybrid Viewpoint-Level Memory (HVM)

Memoir maintains two complementary memory banks anchored to viewpoints:

- Observation Bank: Stores panoramic visual features for each visited viewpoint.

- History Bank: Stores inferred agent states and imagined trajectory sequences for each viewpoint, capturing behavioral patterns across episodes.
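One way to picture the two banks is as a pair of dictionaries keyed by viewpoint. The sketch below is illustrative only: the class name `HybridViewpointMemory`, its field names, and the policy of overwriting an observation on revisit while appending histories are all assumptions, not details taken from the paper.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class HybridViewpointMemory:
    # viewpoint_id -> panoramic feature vector (assumed: latest visit wins)
    observation_bank: dict = field(default_factory=dict)
    # viewpoint_id -> list of (agent_state, imagined_trajectory) pairs,
    # one entry per visit, so patterns accumulate across episodes
    history_bank: dict = field(default_factory=lambda: defaultdict(list))

    def update(self, viewpoint_id, pano_feature, agent_state, imagined_trajectory):
        """Write the current step into both banks."""
        self.observation_bank[viewpoint_id] = pano_feature
        self.history_bank[viewpoint_id].append((agent_state, imagined_trajectory))


memory = HybridViewpointMemory()
memory.update("vp1", [0.1, 0.2], [0.3], [[0.4], [0.5]])
memory.update("vp1", [0.6, 0.7], [0.8], [[0.9]])
print(len(memory.history_bank["vp1"]))  # two visits recorded for vp1
```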

At each navigation step, the agent updates both banks with current observations and imagined trajectories. Retrieval is performed via:

- Observation Retrieval: Topology-guided search using state-observation compatibility scores, filtering and ranking candidate viewpoints based on imagined future states.

- History Retrieval: Sequential similarity matching between current imagined trajectories and stored historical patterns, selecting top matches for integration.
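The two retrieval modes can be sketched as follows. This is a simplified reading under stated assumptions: compatibility is approximated with cosine similarity rather than the learned score, the topology-guided search is written as a width-limited frontier expansion over a navigation graph, and all function and parameter names (`retrieve_observations`, `retrieve_histories`, `search_width`, `top_k`) are hypothetical.

```python
import math


def cosine(u, v):
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)


def retrieve_observations(start, graph, features, imagined_states, search_width=2):
    """Expand along graph edges, keeping the best-matching neighbors per imagined step."""
    frontier, visited, retrieved = [start], {start}, []
    for state in imagined_states:
        neighbors = {
            n for vp in frontier for n in graph.get(vp, [])
            if n not in visited and n in features
        }
        # Rank by compatibility with this imagined state; break ties by id.
        ranked = sorted(neighbors, key=lambda n: (-cosine(state, features[n]), n))
        frontier = ranked[:search_width]
        if not frontier:
            break
        visited.update(frontier)
        retrieved.extend(frontier)
    return retrieved


def trajectory_similarity(a, b):
    """Average step-wise similarity over the shared prefix of two trajectories."""
    steps = min(len(a), len(b))
    if steps == 0:
        return 0.0
    return sum(cosine(x, y) for x, y in zip(a, b)) / steps


def retrieve_histories(imagined_trajectory, stored, top_k=1):
    """stored: list of (history_id, trajectory); returns ids of the top matches."""
    ranked = sorted(
        stored,
        key=lambda item: trajectory_similarity(imagined_trajectory, item[1]),
        reverse=True,
    )
    return [history_id for history_id, _ in ranked[:top_k]]
```

In this sketch the imagined states drive both searches: each imagined step selects which neighbors to expand, and the whole imagined trajectory is compared against stored ones.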

Figure 2: Details of imagination-guided experience retrieval, including contrastive training, recursive imagination, dual retrieval, and specialized encoders for decision integration.

Experience-Augmented Navigation Model

Memoir extends the Dual-Scale Graph Transformer (DUET) architecture with three specialized encoders:

- Coarse-Scale Encoder: Processes global observations, including retrieved viewpoints.

- Fine-Scale Encoder: Processes immediate panoramic features for local action planning.

- Navigation-History Encoder: Fuses retrieved historical states with current viewpoint features, enabling history-informed decision-making.

A dynamic fusion mechanism balances contributions from all branches, producing final action scores for navigation.
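A simple way to realize such a fusion is a softmax gate over the branches. The sketch below assumes that form; the summary does not specify how the weights are computed, and `fuse_action_scores` and `gate_logits` are hypothetical names. In a real model the gate logits would be predicted from the current state rather than passed in by hand.

```python
import math


def softmax(xs):
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_action_scores(branch_scores, gate_logits):
    """Weighted sum of per-branch action scores, one gate logit per branch."""
    weights = softmax(gate_logits)
    n_actions = len(branch_scores[0])
    return [
        sum(w * scores[a] for w, scores in zip(weights, branch_scores))
        for a in range(n_actions)
    ]


# Coarse, fine, and history branches scoring two candidate actions.
scores = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
fused = fuse_action_scores(scores, gate_logits=[0.0, 0.0, 0.0])
```

With equal gate logits every branch contributes equally; raising one logit shifts the final scores toward that branch.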

Experimental Results

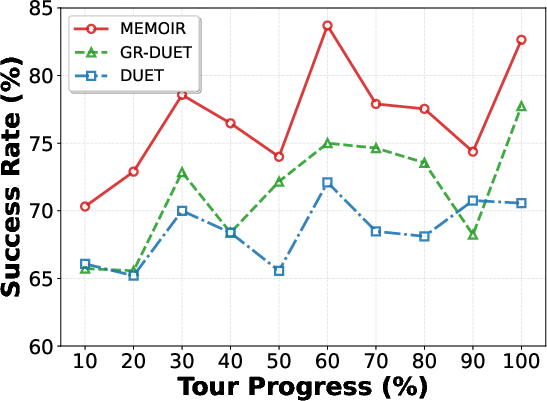

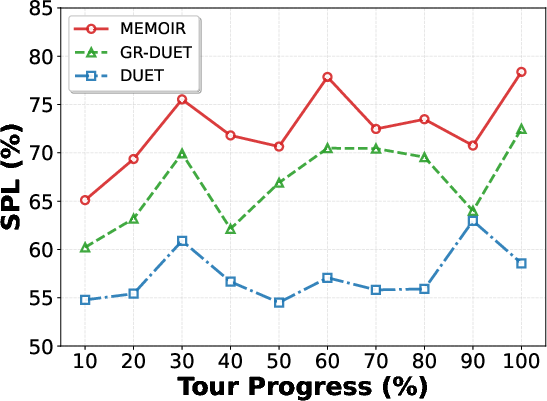

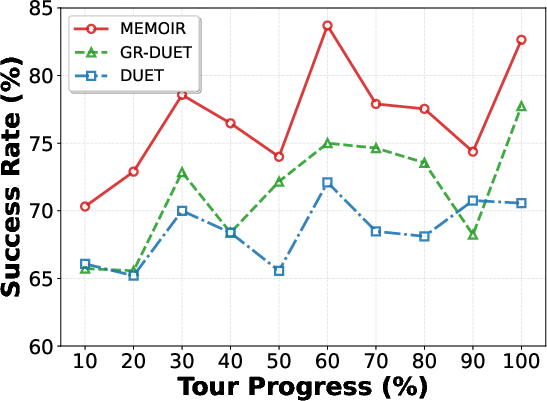

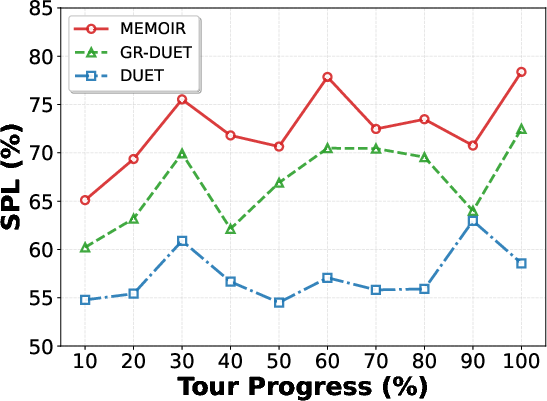

Memoir is evaluated on the IR2R and GSA-R2R benchmarks, where it improves consistently over both traditional and memory-persistent baselines. On IR2R unseen environments, Memoir reaches 73.3% SPL (Success weighted by Path Length) versus 67.9% for GR-DUET, a gain of 5.4 percentage points, with an 8.3× training speedup and a 74% reduction in inference memory. The oracle retrieval upper bound (93.4% SPL) indicates substantial headroom for further improvement.
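For readers unfamiliar with the metric, SPL follows the standard definition: each successful episode contributes the ratio of the shortest-path length to the longer of the agent's path and the shortest path, and failures contribute zero. A minimal sketch follows; the tuple layout and the function name `spl` are illustrative choices.

```python
def spl(episodes):
    """episodes: list of (success, shortest_path_length, agent_path_length)."""
    total = 0.0
    for success, shortest, taken in episodes:
        if success:
            # Efficiency is 1.0 for an optimal path and shrinks with detours.
            total += shortest / max(taken, shortest)
    return total / len(episodes)


# One optimal success, one success with a 2x detour, one failure.
example = spl([(True, 10.0, 10.0), (True, 10.0, 20.0), (False, 10.0, 5.0)])
```

Because SPL penalizes detours as well as failures, a gain in SPL reflects more direct paths, not only more successes.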

Figure 3: Performance scaling across tour progression on IR2R, showing Memoir's sustained improvement with accumulated experience.

Memoir also outperforms adaptation-based and memory-based methods on GSA-R2R across diverse user and scene instructions, with average gains of 2.38% in success rate (SR) and 1.59% in SPL over GR-DUET.

Qualitative Analysis

Case studies illustrate Memoir's ability to retrieve relevant observations and behavioral histories, enabling successful navigation in scenarios where baselines fail due to ambiguity or lack of strategic context.

Figure 4: Visualization of Memoir's memory retrieval from both banks and trajectory comparison with DUET and GR-DUET.

Computational Efficiency

Memoir's selective retrieval mechanism yields significant resource savings compared to complete memory incorporation strategies. Training memory usage is reduced by 55%, and training latency by 88%, making Memoir suitable for deployment in resource-constrained settings.
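The 88% latency figure is consistent with the 8.3× training speedup reported in the results, assuming both describe the same measurement: a job that runs k times faster takes 1/k of the time, so the fraction saved is 1 - 1/k. The helper name below is hypothetical.

```python
def latency_reduction(speedup):
    """Fraction of time saved when a job runs `speedup` times faster."""
    return 1.0 - 1.0 / speedup


print(f"{latency_reduction(8.3):.0%}")  # prints 88%
```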

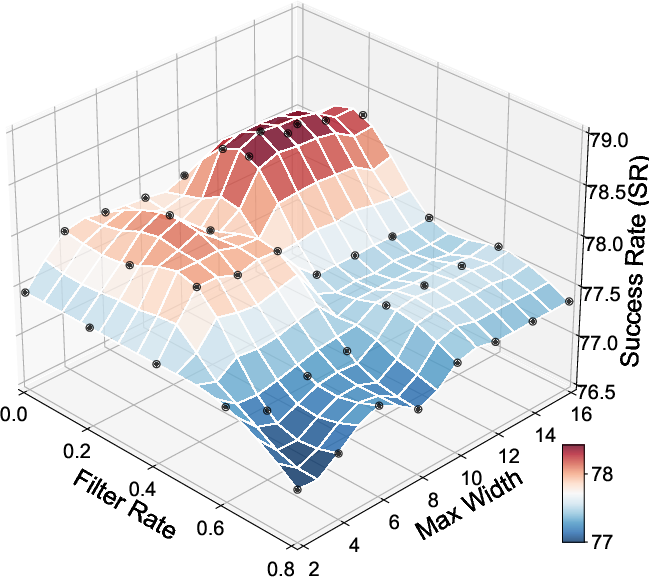

Ablation Studies

Ablations confirm the necessity of both observation and history retrieval, the superiority of imagination-guided queries over random or exhaustive memory access, and the benefits of advanced world model architectures (Transformer with overshooting). Incorporating neighbor viewpoints and completing partial observations further enhance navigation performance.

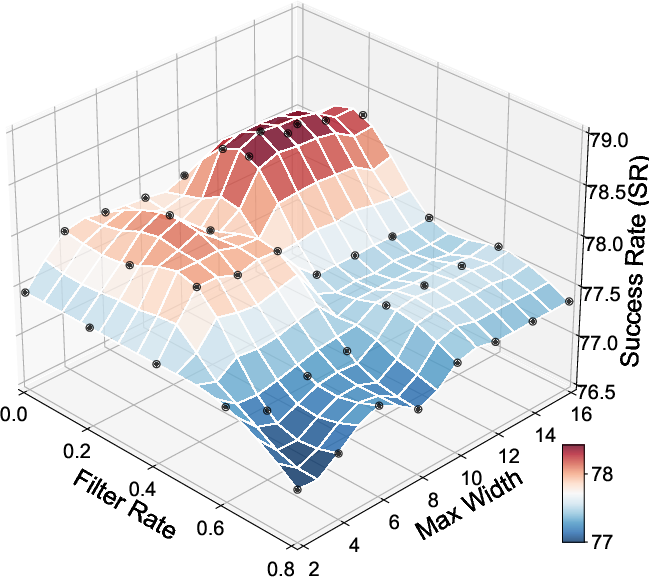

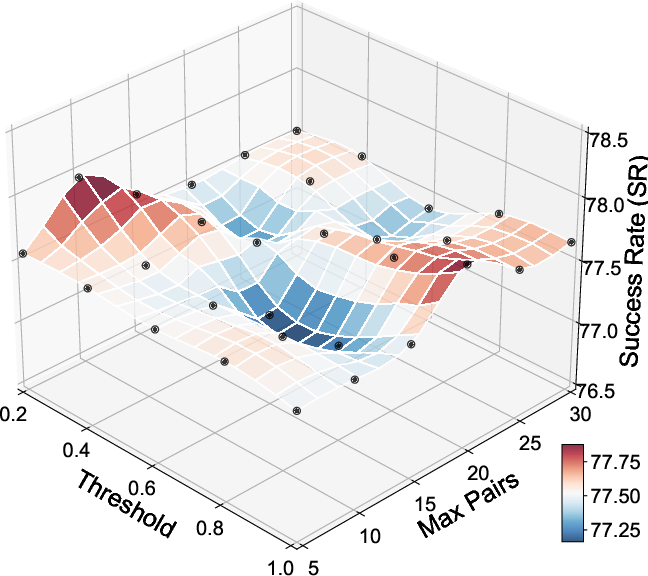

Figure 5: Hyper-parameter study for retrieval mechanisms, showing the impact of filter rates and search width on navigation performance.

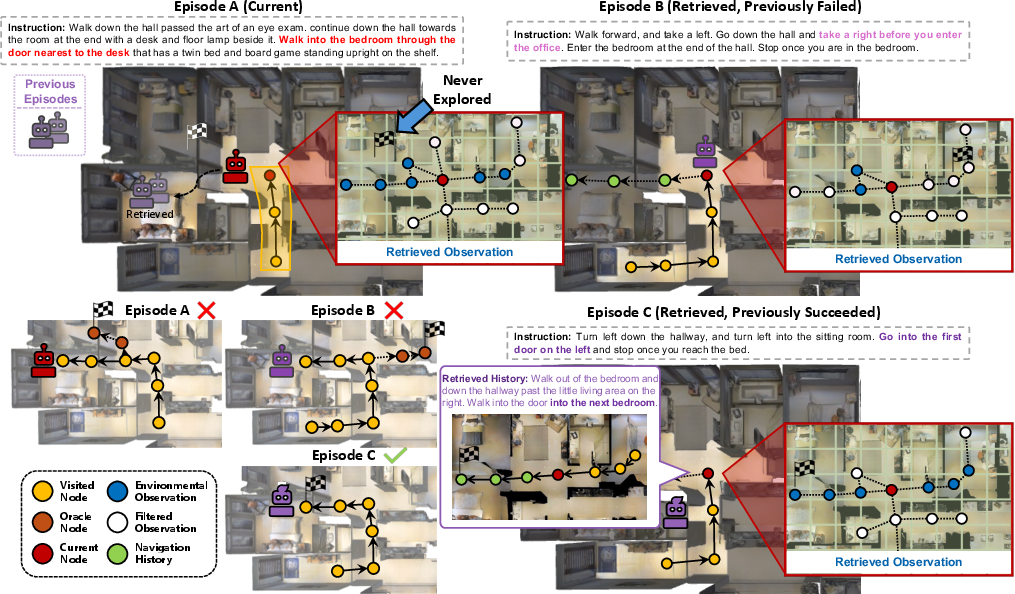

Failure Modes and Future Directions

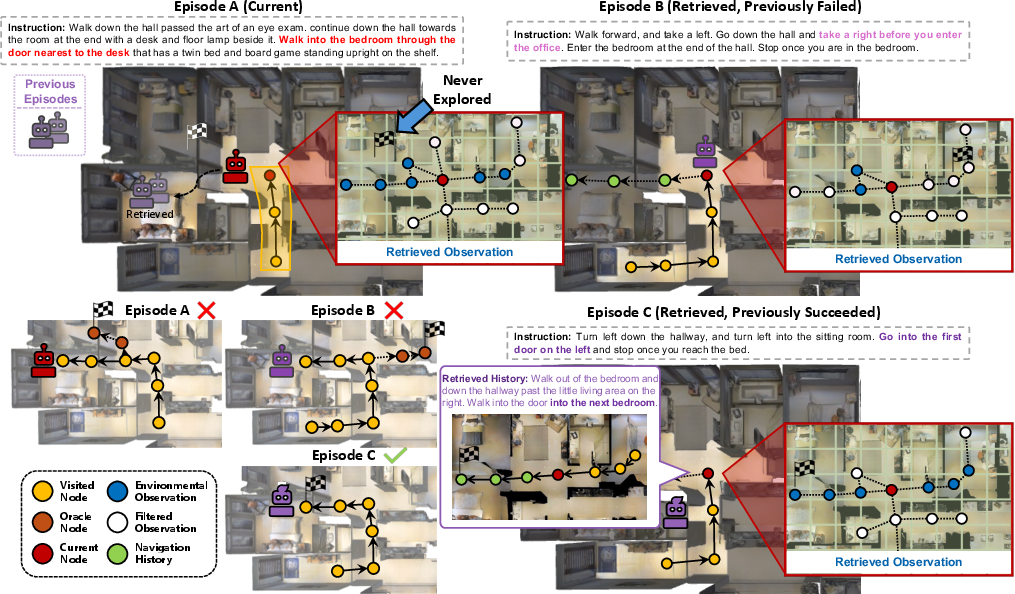

Failure analysis reveals limitations in world model predictive accuracy and retrieval confidence, particularly in distinguishing spatially similar but semantically distinct targets. The agent may over-exploit retrieved experience, failing to explore novel alternatives when memory is misleading.

Figure 6: Visualization of failure modes in imagination-guided memory retrieval, highlighting retrieval ambiguities and exploitation-exploration trade-offs.

Implications and Future Work

Memoir establishes a principled connection between predictive simulation and explicit memory retrieval for embodied navigation. The approach demonstrates that imagination-guided, adaptive memory access enables agents to leverage both environmental and behavioral experience for robust, scalable navigation. The substantial gap between current performance and the oracle upper bound motivates further research in world model scaling, explicit spatial relationship modeling, and confidence-aware retrieval mechanisms.

Potential future developments include:

- Large-scale pretraining of world models for improved predictive fidelity.

- Integration of spatial reasoning modules to enhance retrieval discrimination.

- Dynamic confidence estimation for balancing exploitation and exploration during navigation.

Conclusion

Memoir introduces an imagination-guided experience retrieval paradigm for memory-persistent VLN, leveraging a language-conditioned world model and hybrid viewpoint-level memory to enable adaptive, efficient, and effective navigation. Extensive empirical results validate the approach's superiority in both performance and resource efficiency, while ablation and failure analyses highlight avenues for further advancement in predictive retrieval and embodied intelligence.