- The paper introduces ParBaLS, a myopic Bayesian decision framework that efficiently selects batches in active learning using partial batch label sampling.

- It builds on Expected Error Reduction (EER) and Expected Predictive Information Gain (EPIG) to reduce test loss and improve predictive performance across varied data types.

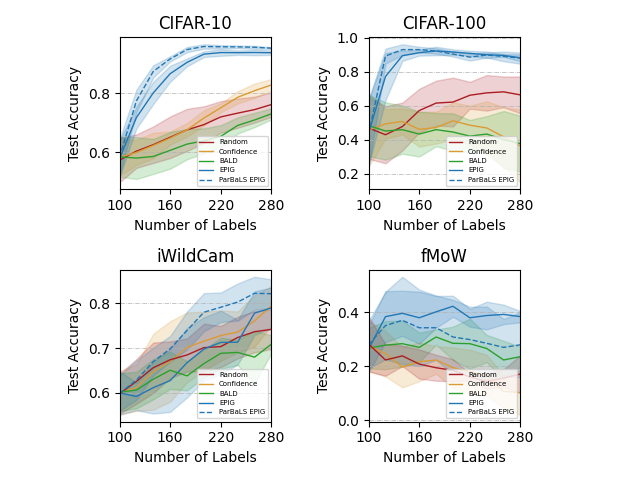

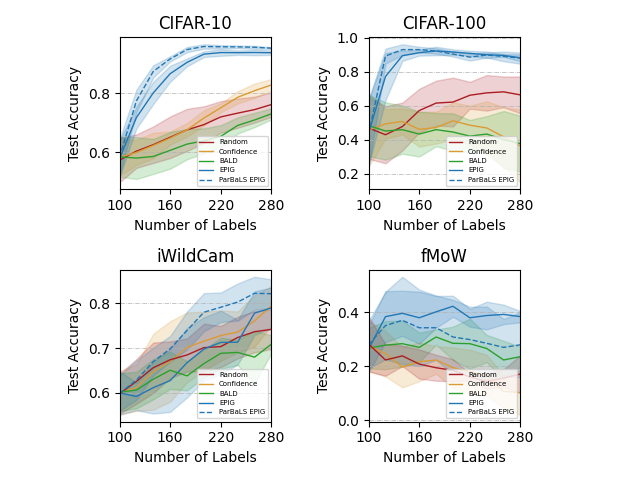

- Experimental results show that ParBaLS scales effectively under constrained labeling budgets, achieving balanced test accuracy on complex datasets.

Myopic Bayesian Decision Theory for Batch Active Learning with Partial Batch Label Sampling

Overview and Motivation

The paper "Myopic Bayesian Decision Theory for Batch Active Learning with Partial Batch Label Sampling" (2510.09877) addresses the challenges of batch selection in active learning on large datasets. Active Learning (AL) aims to train machine learning models efficiently by labeling only the most informative data under a constrained labeling budget. Many AL algorithms exist, but it is often unclear how well each one works in a given setting, which leaves practitioners uncertain about which to choose.

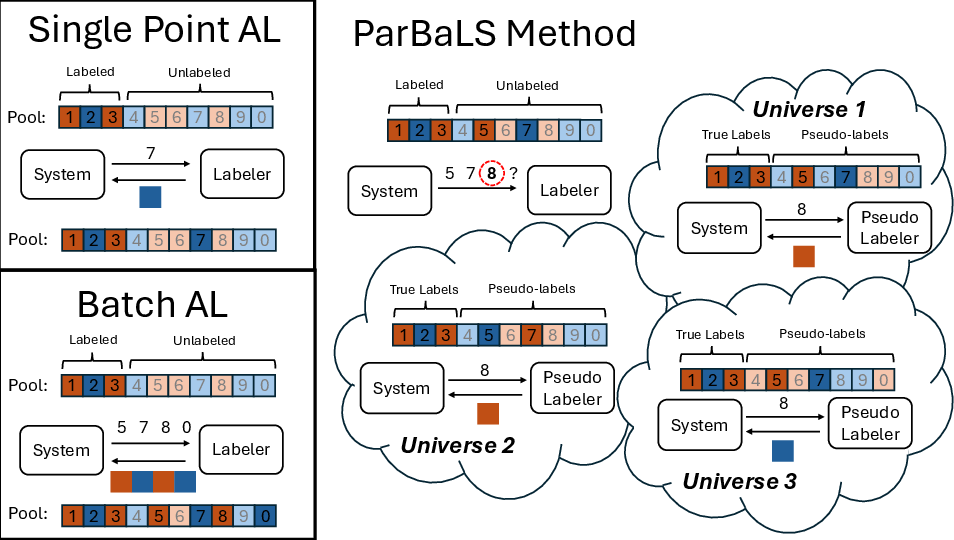

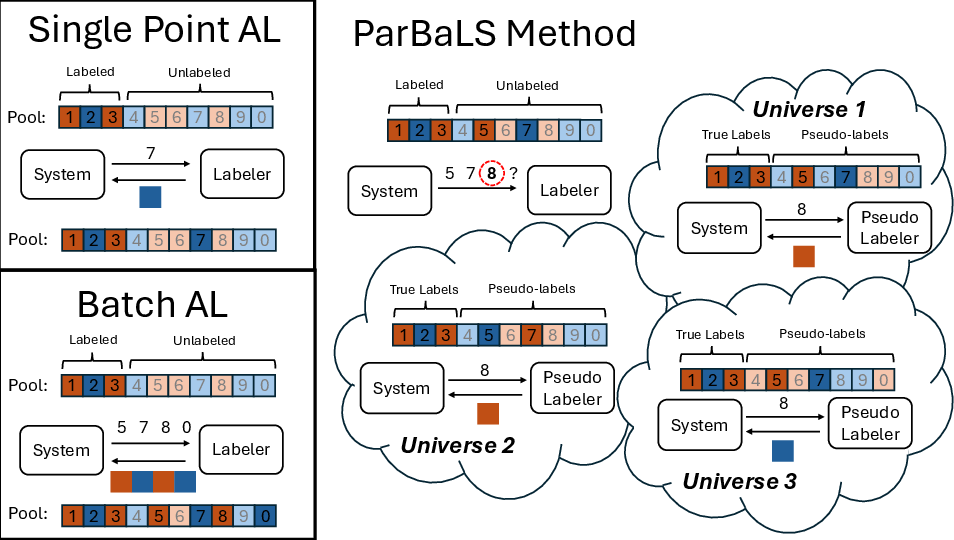

Bayesian Decision Theory (BDT) is a general principle for decision-making under uncertainty, grounded in probabilistic reasoning. The paper applies BDT to active learning in a myopic framework: each selection is chosen to minimize the expected test loss immediately after labeling one more data point, ignoring how the remaining budget will be spent. This myopic view yields algorithms such as Expected Error Reduction (EER) and Expected Predictive Information Gain (EPIG). To extend these methods to batch selection, the paper introduces Partial Batch Label Sampling (ParBaLS), which makes batch AL more tractable and efficient.
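The myopic criterion can be written compactly. The following is a sketch in notation assumed here rather than taken from the paper: $\mathcal{D}$ is the labeled set, $\mathcal{P}$ the unlabeled pool, $p_*$ the distribution of test inputs, and $\ell$ a loss evaluated on the updated predictive distribution.

$$
x^{\text{next}} = \arg\min_{x \in \mathcal{P}} \; \mathbb{E}_{y \sim p(y \mid x, \mathcal{D})} \, \mathbb{E}_{x_* \sim p_*} \Big[ \ell\big( p(\cdot \mid x_*, \mathcal{D} \cup \{(x, y)\}) \big) \Big]
$$

If $\ell$ is taken to be the entropy of the updated predictive distribution (the expected log loss under the model's own beliefs), this minimization is equivalent to maximizing

$$
\mathrm{EPIG}(x) = \mathbb{E}_{x_* \sim p_*} \big[ I(y; y_* \mid x, x_*, \mathcal{D}) \big],
$$

because $\mathbb{E}_{y}\, H[y_* \mid x_*, \mathcal{D} \cup \{(x, y)\}] = H[y_* \mid x_*, \mathcal{D}] - I(y; y_* \mid x, x_*, \mathcal{D})$ and the first term on the right does not depend on $x$.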

Figure 1: An illustration of the proposed method, ParBaLS.

Methodological Contributions

The paper derives Bayesian active learning algorithms from Myopic Bayesian Decision Theory (MBDT). MBDT selects the action that minimizes expected cost, which here is the expected test loss. The paper covers several algorithms that follow from this principle:

- Expected Error Reduction (EER) and Expected Predictive Information Gain (EPIG): Both score a candidate by how much labeling that one additional point is expected to reduce prediction error or predictive uncertainty, with the expectation taken over the model's current beliefs about the unknown label.

- ParBaLS: Handles batch selection by assembling the batch incrementally. Because the labels of points already placed in the partial batch are not yet known, pseudo-labels are sampled for them in several parallel simulated universes, and each further candidate is scored by its expected informativeness across these simulated states.
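The batch-building idea in the ParBaLS item can be sketched in code. This is an illustration under stated assumptions, not the paper's implementation: the posterior is represented by weighted parameter samples of a binary logistic model (a simple stand-in for Bayesian Logistic Regression), EPIG is used as the per-point score, and the function names and the `n_universes` parameter are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def class_probs(thetas, X):
    """Per-sample class probabilities, shape (S, N, 2), for S parameter samples."""
    p1 = sigmoid(thetas @ X.T)                      # (S, N)
    return np.stack([1.0 - p1, p1], axis=-1)

def epig_scores(w, P_pool, P_tgt):
    """EPIG estimate per pool point: mutual information I(y; y*) averaged over targets."""
    # joint[n, m, a, b] = sum_s w_s * p_s(y=a | x_n) * p_s(y*=b | x*_m)
    joint = np.einsum("s,sna,smb->nmab", w, P_pool, P_tgt)
    marg_y = joint.sum(axis=3, keepdims=True)       # p(y | x_n)
    marg_t = joint.sum(axis=2, keepdims=True)       # p(y* | x*_m)
    eps = 1e-12                                     # guards log(0)
    mi = (joint * (np.log(joint + eps) - np.log(marg_y * marg_t + eps))).sum(axis=(2, 3))
    return mi.mean(axis=1)

def parbals_epig(thetas, w, X_pool, X_tgt, batch_size, n_universes, rng):
    """Greedy batch selection with sampled pseudo-labels (assumed reading of ParBaLS)."""
    P_pool = class_probs(thetas, X_pool)
    P_tgt = class_probs(thetas, X_tgt)
    # Each universe keeps its own posterior weights, conditioned on its pseudo-labels.
    W = np.tile(w, (n_universes, 1))
    chosen = np.zeros(X_pool.shape[0], dtype=bool)
    batch = []
    for _ in range(batch_size):
        # Average the score over universes, then pick the best unchosen point.
        scores = np.mean([epig_scores(W[k], P_pool, P_tgt) for k in range(n_universes)], axis=0)
        scores[chosen] = -np.inf
        i = int(np.argmax(scores))
        batch.append(i)
        chosen[i] = True
        for k in range(n_universes):
            # Sample a pseudo-label from universe k's predictive, then reweight.
            p1 = W[k] @ P_pool[:, i, 1]
            y = int(rng.random() < p1)
            W[k] = W[k] * P_pool[:, i, y]
            W[k] /= W[k].sum()
    return batch

# Small demonstration on synthetic data (all values are illustrative).
rng = np.random.default_rng(0)
thetas = rng.normal(size=(200, 3))                  # samples from a N(0, I) prior
weights = np.full(200, 1.0 / 200)
X_pool = rng.normal(size=(30, 3))
X_tgt = rng.normal(size=(20, 3))
print(parbals_epig(thetas, weights, X_pool, X_tgt, batch_size=5, n_universes=4, rng=rng))
```

Conditioning each universe on its own sampled pseudo-labels is what discourages near-duplicate picks: once a point is assumed labeled, similar points carry less remaining information in that universe. Selecting the top-scoring points all at once would not account for this.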

The implementation uses Bayesian Logistic Regression on neural embeddings and is evaluated on diverse datasets, including both tabular data and images. In comparisons against established batch techniques, ParBaLS maintains high test accuracy under limited labeling budgets.

Experimental Validation

The experiments evaluate ParBaLS alongside traditional AL methods across 10 datasets. The results indicate that ParBaLS performs consistently across varied data distributions and labeling scenarios. On tabular datasets with Bayesian Logistic Regression, labeling starts from an initial budget of 20 and further samples are added over multiple iterations; in this setting ParBaLS outperforms the compared methods.

Figure 2: Test accuracy on tabular datasets with Bayesian Logistic Regression, illustrating effective performance under a constrained labeling budget.

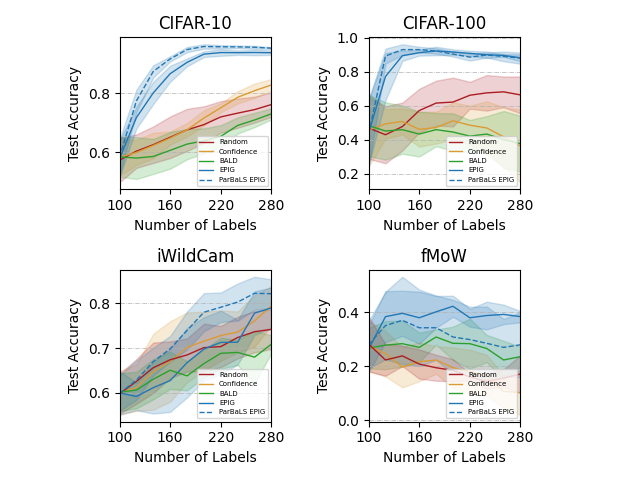

Figure 3: Test accuracy on one-vs-all image datasets with fixed encoders.

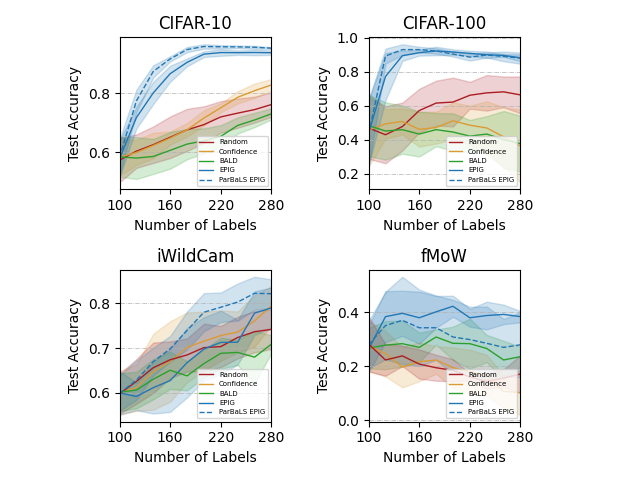

Figure 4: Test accuracy on subpopulation-shifted image datasets with fixed encoders.

Implications and Future Directions

The implications of ParBaLS extend beyond immediate accuracy gains. It offers a scalable approach to batch selection on large real-world datasets, balancing selection quality against the practical constraints of batch processing. The underlying theory also suggests that further active learning heuristics can be derived from the same probabilistic framework.

Future work could extend ParBaLS to deeper networks or more complex learning models, and could combine adaptive pseudo-labeling mechanisms more closely with real-time data augmentation. By clarifying where Bayesian approaches perform well and where they do not, the research points toward AL systems that are easier to reason about and grounded in probabilistic prediction.

Conclusion

"Myopic Bayesian Decision Theory for Batch Active Learning with Partial Batch Label Sampling" advances batch active learning by deriving acquisition strategies from Bayesian Decision Theory. By handling batch acquisition through pseudo-label sampling, the paper positions ParBaLS as a promising way to overcome the limitations of traditional batch methods while keeping computation manageable and the decision criterion explicit. These contributions provide a basis for further work on informed data selection and model training.