- The paper presents a continuous Level-of-Detail framework that leverages 3D Gaussian Splatting for smooth, artifact-free rendering and efficient model storage.

- It introduces a learnable, distance-adaptive decay parameter for each Gaussian primitive, so that each primitive's opacity is attenuated smoothly as the viewing distance grows.

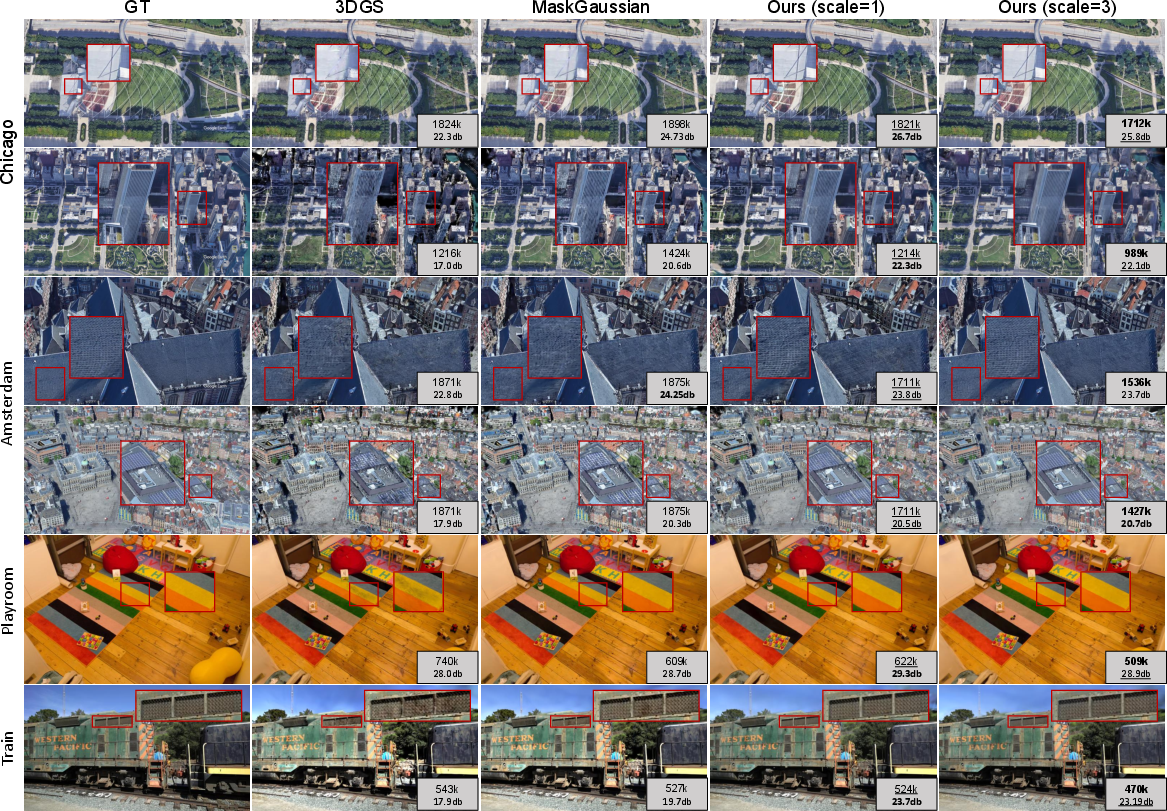

- Experimental results show improved PSNR, SSIM, and LPIPS metrics with fewer primitives compared to traditional discrete LoD methods.

Continuous Level-of-Detail via 3D Gaussian Splatting: A Technical Analysis

Introduction and Motivation

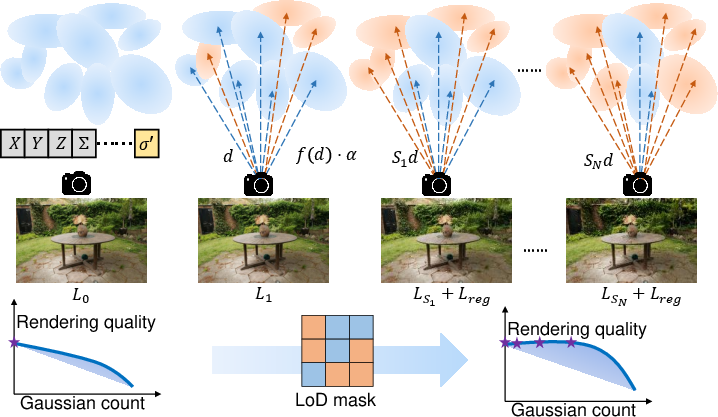

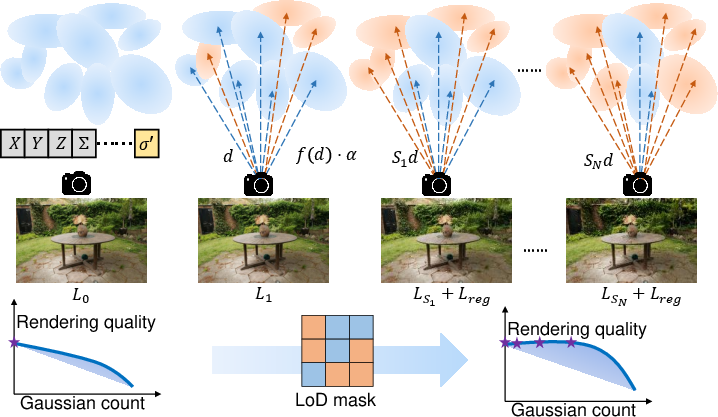

The CLoD-GS framework addresses the persistent limitations of discrete Level-of-Detail (DLoD) paradigms in real-time graphics, specifically the storage overhead and visual discontinuities ("popping" artifacts) that arise from swapping between multiple model versions. By leveraging the explicit, differentiable, and volumetric nature of 3D Gaussian Splatting (3DGS), CLoD-GS introduces a continuous Level-of-Detail (CLoD) mechanism that enables smooth, view-dependent simplification within a single unified model. This approach is particularly relevant for neural scene representations, where balancing rendering fidelity and computational efficiency is critical for scalable deployment.

Methodological Framework

CLoD-GS augments each Gaussian primitive in the 3DGS representation with a learnable, distance-dependent decay parameter, $\sigma_{d,i}$, which modulates the primitive's opacity as a function of its normalized distance to the viewpoint. The effective opacity is computed as:

$$\alpha_i'' = \alpha_i \cdot \exp\left(-\frac{(d_i' \cdot s_v)^2}{2 \cdot \mathrm{ReLU}(\sigma_{d,i})^2 + \epsilon}\right)$$

where $d_i'$ is the normalized Euclidean distance, $s_v$ is a user-controllable virtual distance scale, and $\epsilon$ ensures numerical stability. Primitives are filtered for rendering via a dynamic mask $M_i = (\alpha_i'' > \tau \cdot s_v)$, allowing for continuous culling based on perceptual relevance.
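The attenuation and masking rule can be sketched in a few lines of plain Python. This is a minimal illustration rather than the authors' implementation: the function names, the default `eps`, and the threshold value `tau=0.005` are assumptions chosen for the example, and a real pipeline would vectorize this on the GPU.

```python
import math

def effective_opacity(alpha, sigma_d, d_norm, s_v, eps=1e-8):
    """Distance-attenuated opacity for one primitive (eps value is an assumption)."""
    relu_sigma = max(sigma_d, 0.0)   # ReLU keeps the decay width non-negative
    scaled = d_norm * s_v            # normalized distance times virtual scale
    return alpha * math.exp(-(scaled ** 2) / (2.0 * relu_sigma ** 2 + eps))

def render_mask(alphas, sigmas, d_norms, s_v, tau=0.005):
    """Mask M_i = (alpha_i'' > tau * s_v); tau=0.005 is a placeholder threshold."""
    return [
        effective_opacity(a, s, d, s_v) > tau * s_v
        for a, s, d in zip(alphas, sigmas, d_norms)
    ]

# Three primitives at the same distance with different learned decay widths.
alphas = [0.9, 0.9, 0.9]
sigmas = [2.0, 0.5, -1.0]   # a negative value is clamped to zero by ReLU
d_norms = [1.0, 1.0, 1.0]

mask_near = render_mask(alphas, sigmas, d_norms, s_v=1.0)
mask_far = render_mask(alphas, sigmas, d_norms, s_v=3.0)
```

In this toy setup the primitive with the widest decay survives at both scales, the one with the narrower decay is culled once `s_v` grows, and the one whose decay parameter is clamped to zero is culled at any nonzero distance.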

Figure 1: The CLoD-GS framework integrates distance-adaptive opacity, dynamic masking, and coarse-to-fine training for continuous LoD in 3DGS.

The training regime employs a coarse-to-fine strategy, sampling $s_v$ from a uniform range to simulate varying viewing distances. A primitive count regularization loss penalizes excessive primitive usage at larger $s_v$, enforcing compactness:

$$L_{\mathrm{reg}} = (s_v - 1.0)^2 \cdot \left(\mathrm{ReLU}(\eta_{\mathrm{actual}} - \eta_{\mathrm{target}})\right)^2$$

with $\eta_{\mathrm{target}} = 1 / s_v^{1.5}$ and $\eta_{\mathrm{actual}}$ the fraction of rendered primitives. The total loss combines standard 3DGS rendering loss with this regularization, adaptively weighted to prevent over-pruning.
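As a worked illustration of the regularizer, the sketch below evaluates the loss on plain floats. The sampling bounds `s_min` and `s_max` are hypothetical, since the summary only states that the scale is drawn from a uniform range, and the sketch does not address how the rendered fraction is made differentiable during training.

```python
import random

def sample_scale(rng, s_min=1.0, s_max=8.0):
    """Draw a virtual distance scale uniformly; the bounds are assumptions."""
    return rng.uniform(s_min, s_max)

def target_fraction(s_v):
    """Target fraction of rendered primitives, 1 / s_v^1.5."""
    return 1.0 / s_v ** 1.5

def primitive_count_reg(s_v, eta_actual):
    """(s_v - 1)^2 * ReLU(eta_actual - eta_target)^2."""
    excess = max(eta_actual - target_fraction(s_v), 0.0)
    return (s_v - 1.0) ** 2 * excess ** 2

rng = random.Random(0)
s_v = sample_scale(rng)

no_penalty_at_unit_scale = primitive_count_reg(1.0, 1.0)   # (s_v - 1)^2 is zero
no_penalty_under_target = primitive_count_reg(4.0, 0.10)   # below the 0.125 target
penalty_over_target = primitive_count_reg(4.0, 0.625)      # 9 * 0.5^2
```

Two properties follow directly from the formula: the penalty vanishes at $s_v = 1$ regardless of how many primitives are rendered, and at larger scales it is only active when the rendered fraction exceeds the target.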

Experimental Results

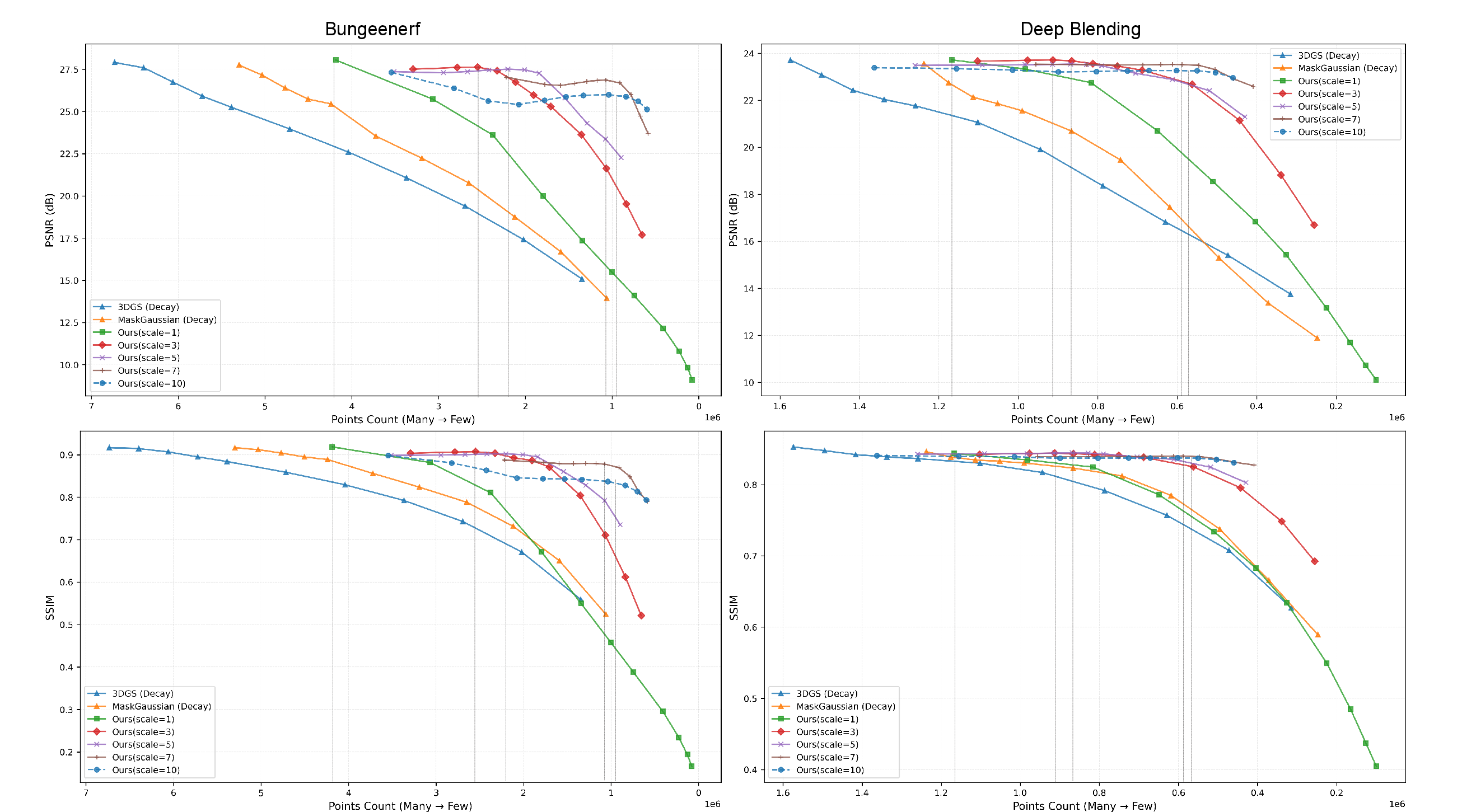

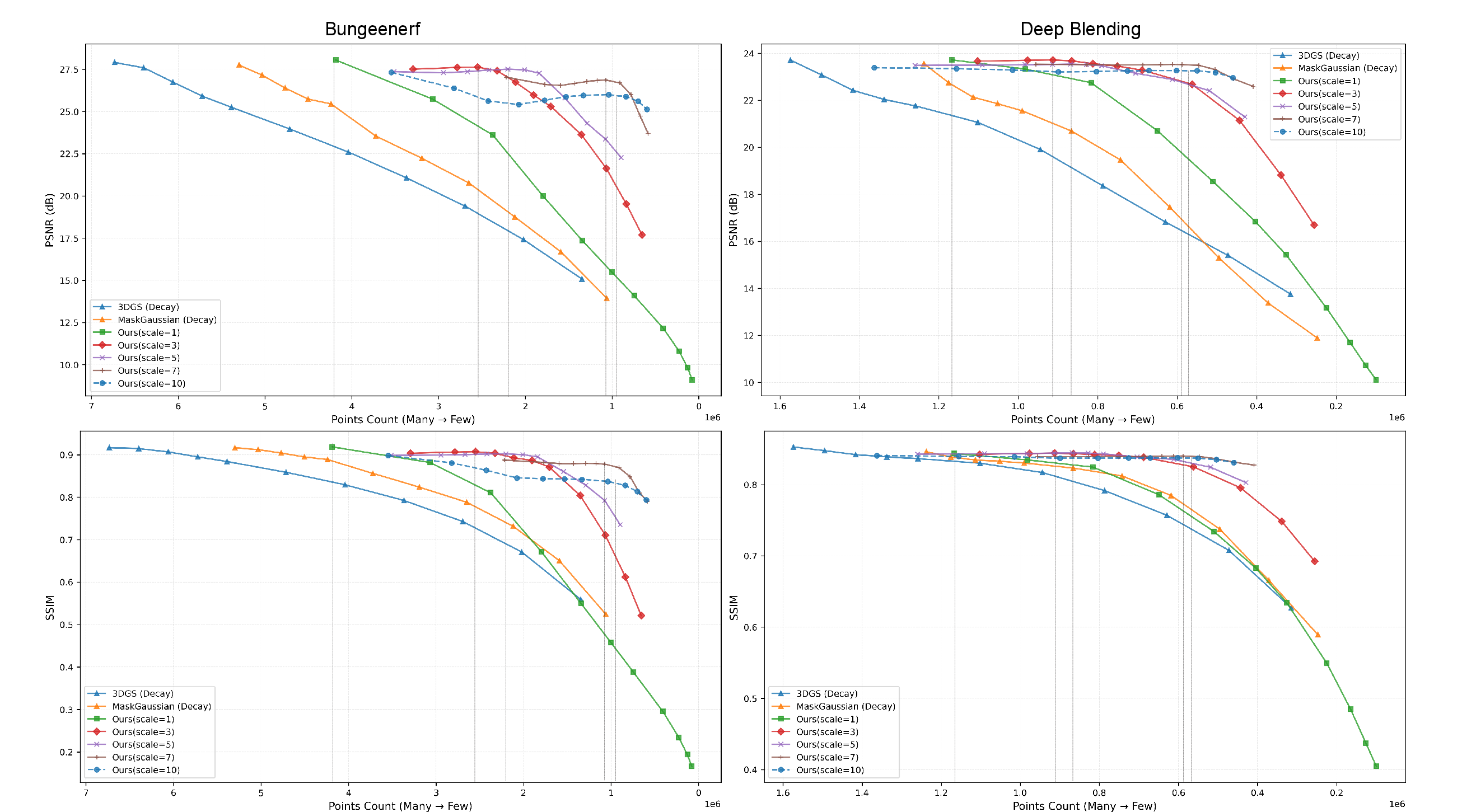

CLoD-GS was evaluated on the BungeeNeRF, Tanks and Temples, and Deep Blending datasets, using PSNR, SSIM, and LPIPS as metrics. The framework consistently matches or exceeds the rendering quality of baseline 3DGS and state-of-the-art LoD/compression methods, while reducing the primitive count and memory footprint.

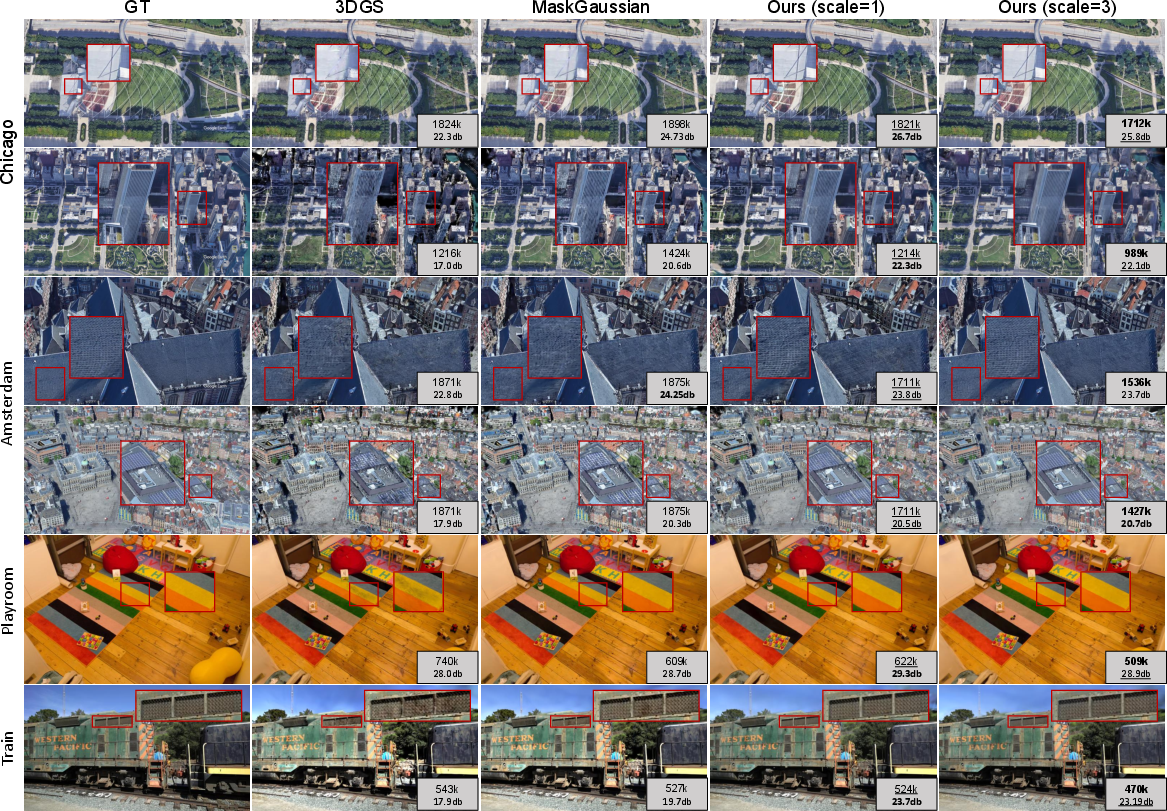

Figure 2: CLoD-GS preserves fine details and avoids artifacts at similar primitive counts, outperforming baselines under complex lighting and texture.

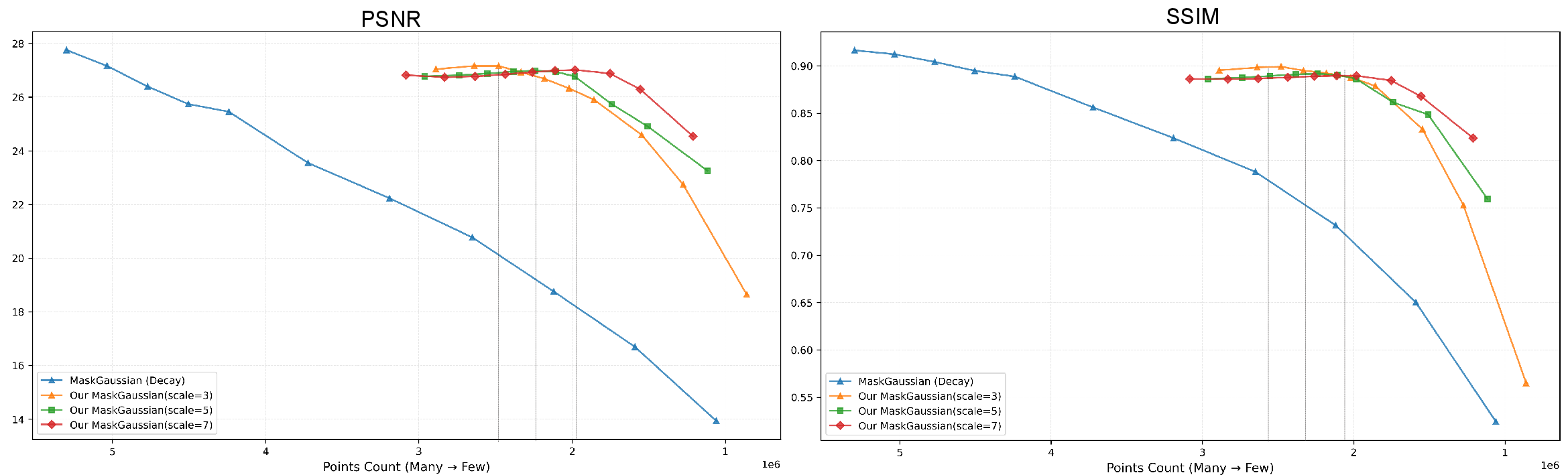

Figure 3: Quality vs. primitive count curves show CLoD-GS degrades gracefully with increasing simplification, outperforming discrete and static compression baselines.

Ablation studies confirm the necessity of the regularization loss, adaptive weighting, and multi-scale training. Removing any component results in measurable degradation in PSNR, SSIM, and LPIPS.

Comparison: Continuous vs. Discrete LoD

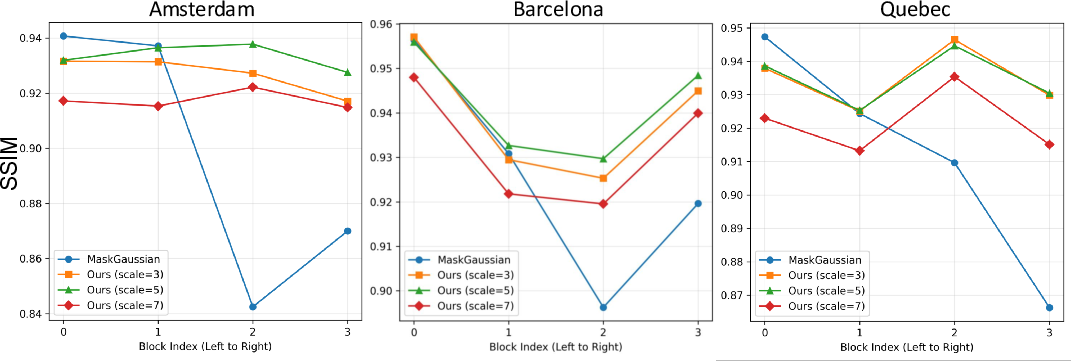

A direct comparison between CLoD and DLoD strategies demonstrates the superiority of the continuous approach. DLoD, implemented via hard model switches, produces abrupt quality transitions and visible artifacts at LoD boundaries.

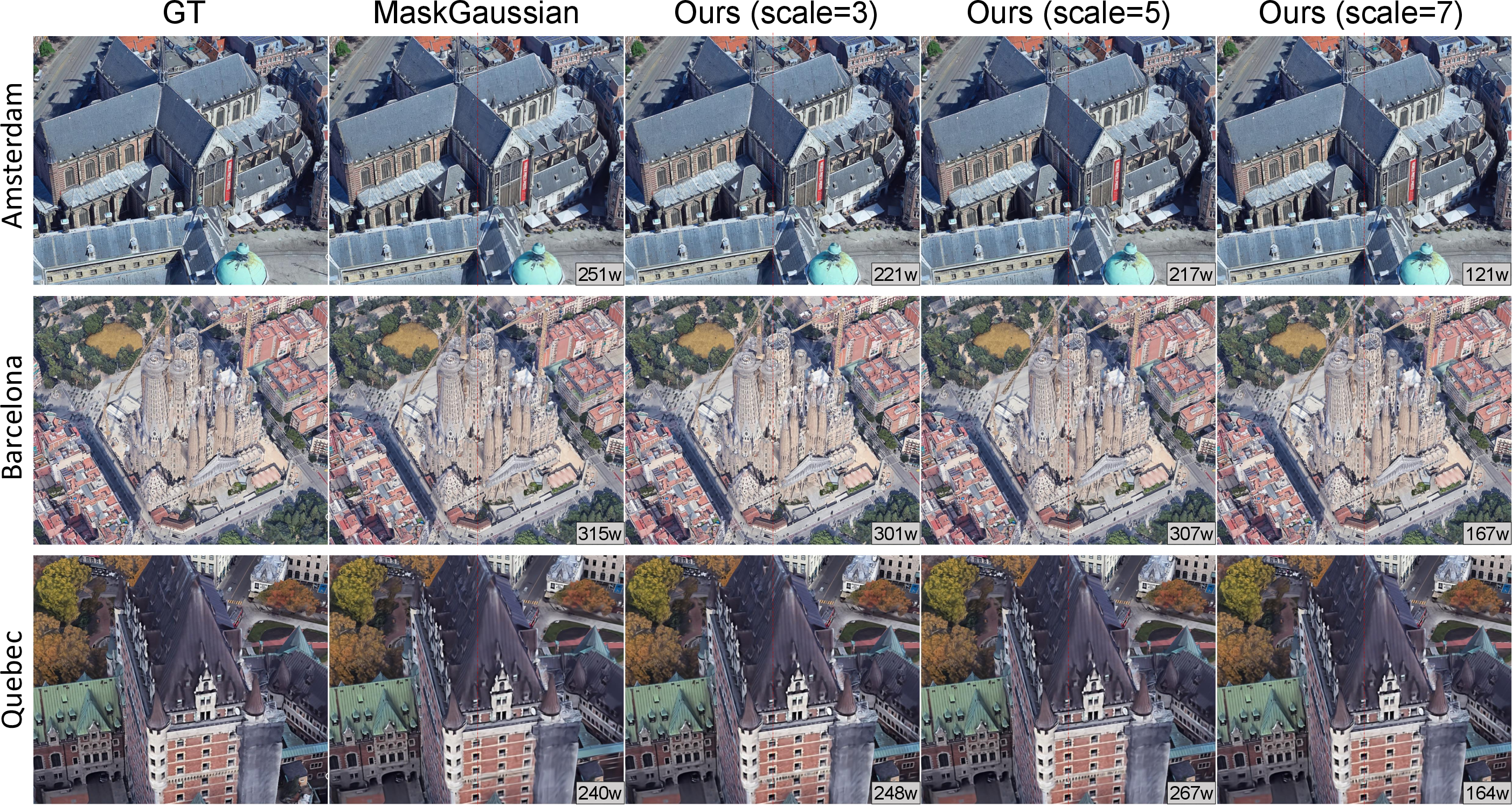

Figure 4: CLoD-GS enables smooth, artifact-free transitions across LoD regions, whereas DLoD exhibits visible popping at boundaries.

Figure 5: Metric curves for DLoD vs. CLoD show sharp discontinuities for DLoD and smooth progression for CLoD-GS.

CLoD-GS requires only a single model, reducing training time and storage requirements compared to DLoD, which necessitates multiple models.
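To make the contrast concrete, the toy comparison below measures the largest frame-to-frame jump in the fraction of rendered primitives as the virtual distance scale sweeps upward. Both curves are illustrative assumptions: the discrete thresholds and fractions are invented for the example, and the continuous curve simply reuses the training target $1/s_v^{1.5}$ as a stand-in for a trained model's behavior.

```python
def dlod_fraction(s_v, levels=((1.0, 1.0), (2.0, 0.35), (4.0, 0.125))):
    """Hard switching: use the fraction of the last level whose threshold is reached.

    The (threshold, fraction) pairs are hypothetical.
    """
    frac = levels[0][1]
    for threshold, level_frac in levels:
        if s_v >= threshold:
            frac = level_frac
    return frac

def clod_fraction(s_v):
    """Continuous stand-in: the target fraction 1 / s_v^1.5."""
    return 1.0 / s_v ** 1.5

def largest_step(fn, scales):
    """Largest change between consecutive samples of fn over the sweep."""
    values = [fn(s) for s in scales]
    return max(abs(b - a) for a, b in zip(values, values[1:]))

scales = [1.0 + 0.01 * k for k in range(301)]   # sweep s_v from 1.0 to 4.0
dlod_jump = largest_step(dlod_fraction, scales)
clod_jump = largest_step(clod_fraction, scales)
```

With these made-up levels the discrete scheme drops 65% of the primitives in a single step at the first boundary, while the continuous curve never changes by more than about 1.5% between adjacent samples, which is the kind of difference that shows up visually as popping versus a gradual fade.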

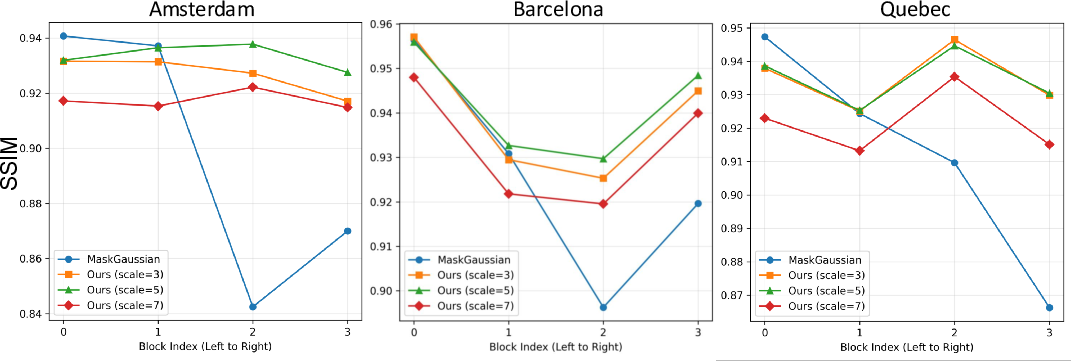

Robustness and Generality

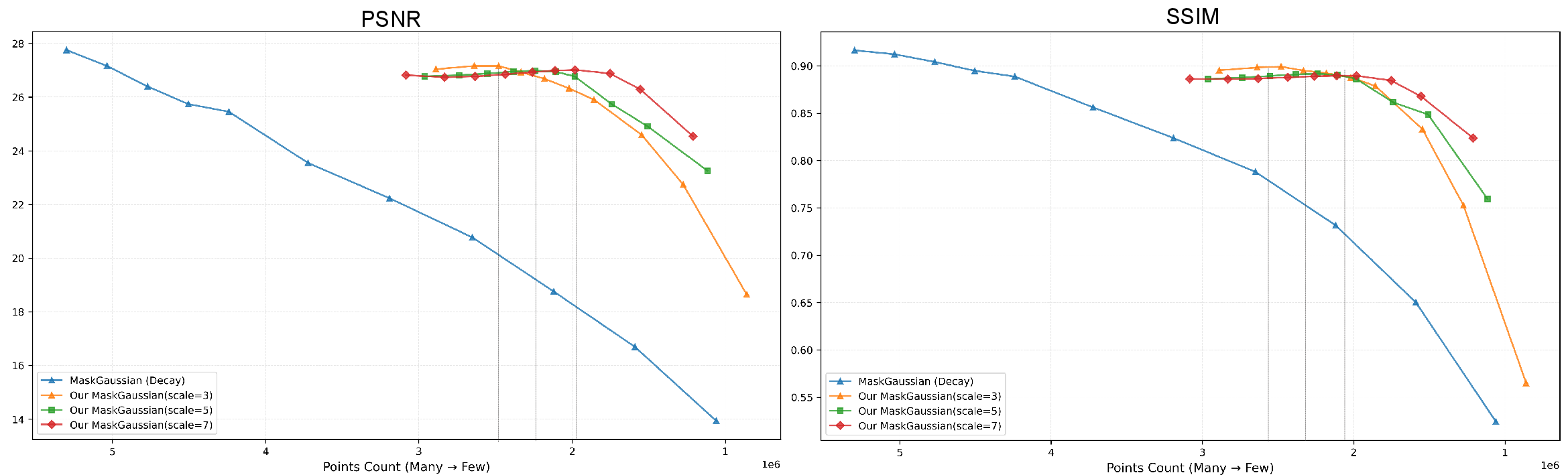

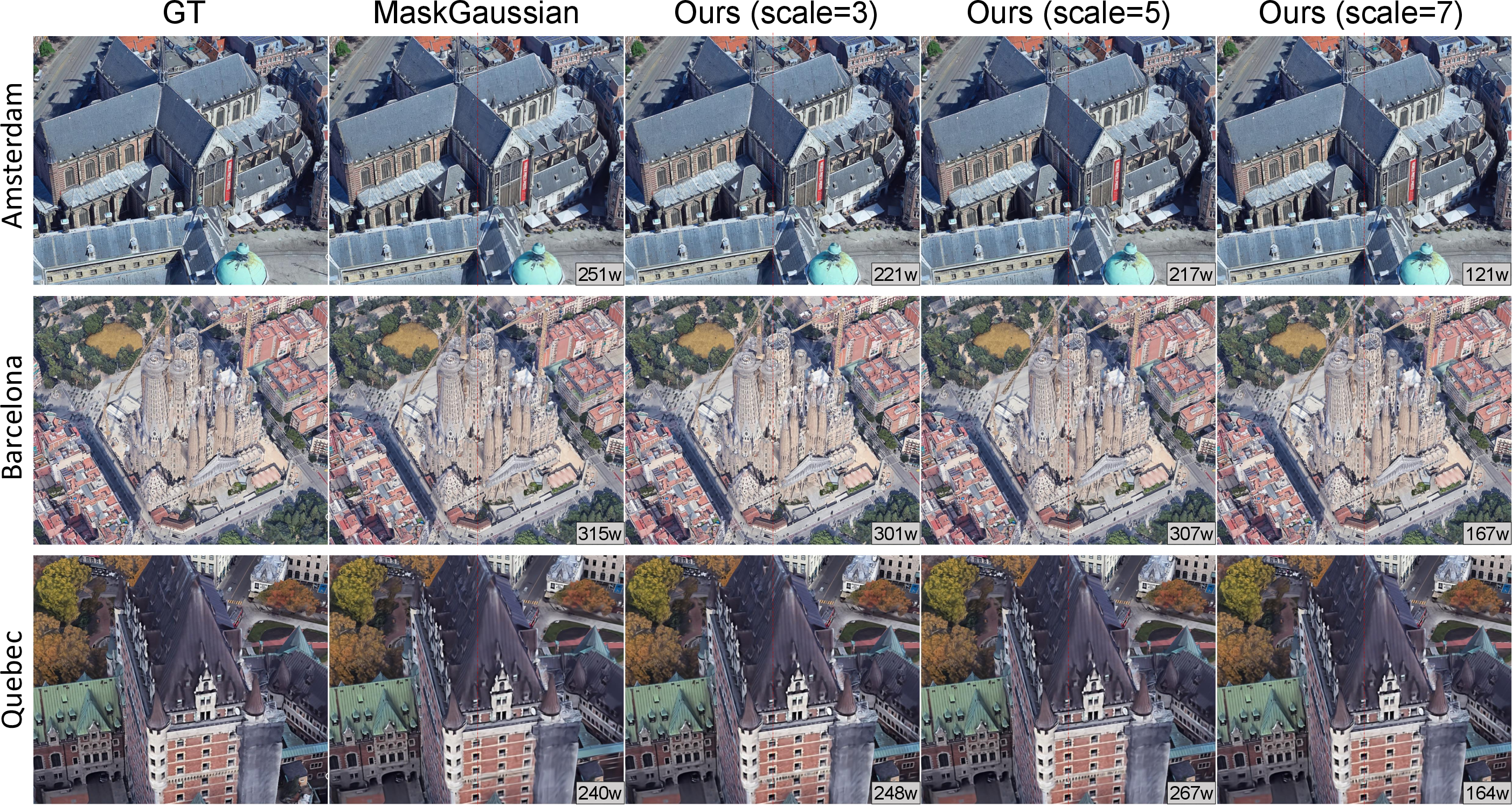

The CLoD-GS training strategy is compatible with compressed representations such as MaskGaussian, imparting continuous LoD capabilities without sacrificing compression benefits.

Figure 6: CLoD-GS enables continuous LoD on MaskGaussian-compressed models, maintaining graceful quality-compression trade-offs.

The additional storage overhead is minimal (one float per primitive, ~1.6% increase), making the approach practical for large-scale deployment.
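The quoted figure can be checked with a quick count of per-primitive floats. The breakdown below assumes the layout of the reference 3DGS PLY export with degree-3 spherical harmonics and stored normals; other storage formats would give a slightly different percentage.

```python
# Per-primitive floats in the assumed reference 3DGS PLY layout.
BASE_FLOATS = (
    3      # position
    + 3    # normals (stored but unused)
    + 3    # SH DC color coefficients
    + 45   # higher-order SH coefficients (degree 3)
    + 1    # opacity
    + 3    # scale
    + 4    # rotation quaternion
)

EXTRA_FLOATS = 1  # the per-primitive decay parameter

overhead_pct = 100.0 * EXTRA_FLOATS / BASE_FLOATS
```

Under this assumption one extra float on top of 62 works out to roughly 1.6%, in line with the stated overhead.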

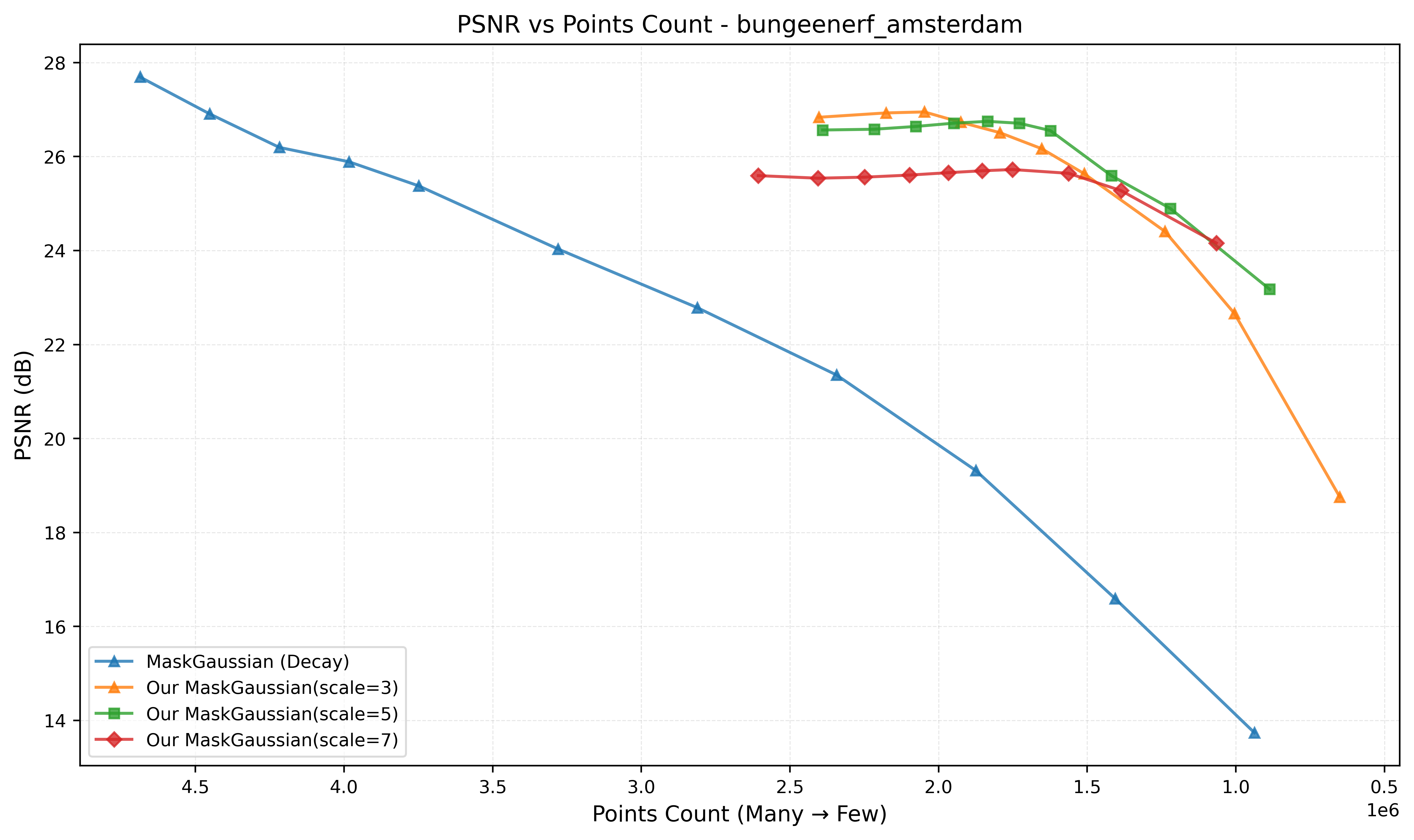

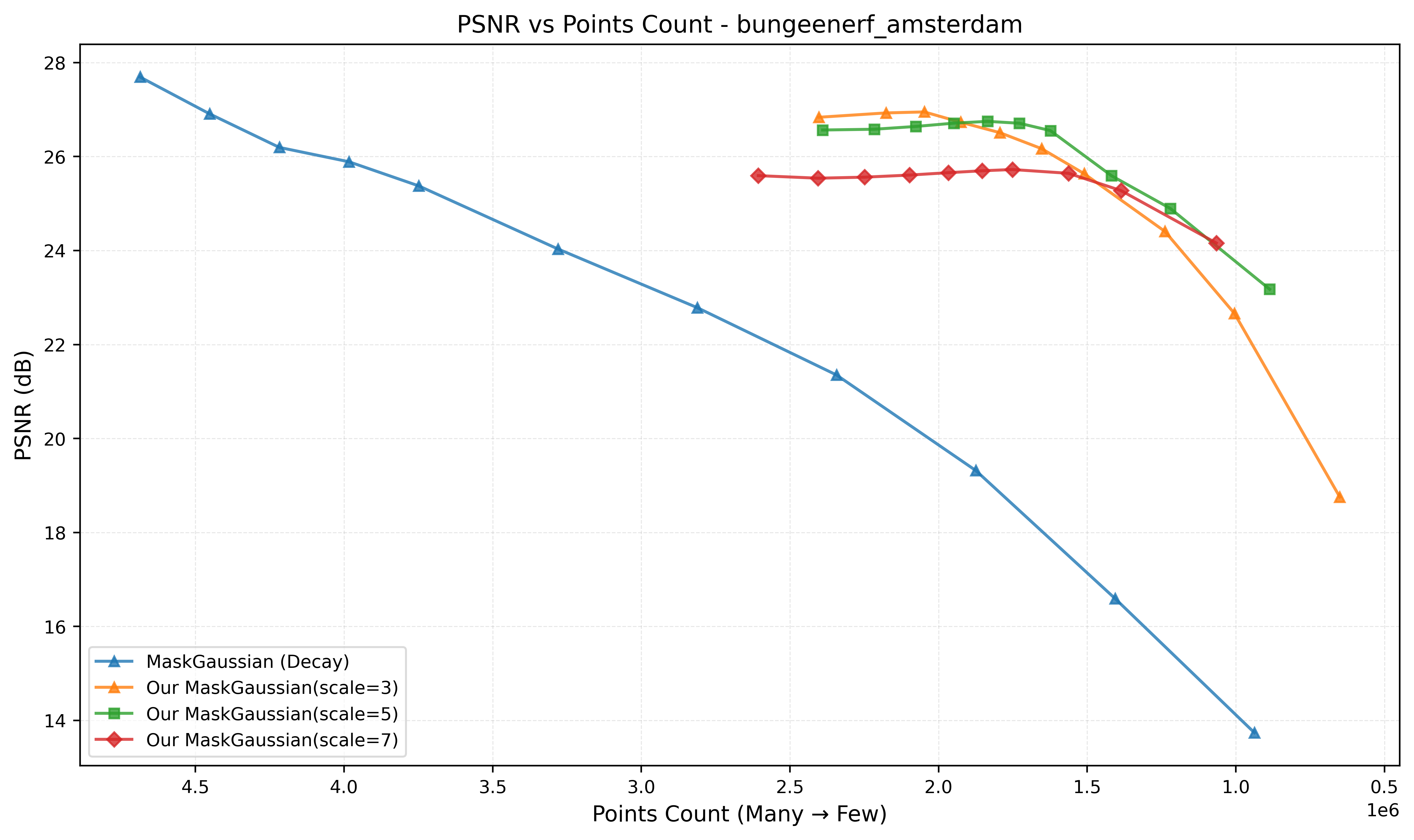

Detailed PSNR vs. primitive count curves across multiple scenes (Figures 8–23) demonstrate that CLoD-GS maintains high fidelity at reduced primitive counts, with more graceful degradation than both original 3DGS and MaskGaussian. This scalability is critical for real-time applications on resource-constrained hardware.

Figure 7: PSNR vs. primitive count for 3DGS on BungeeNeRF amsterdam; CLoD-GS achieves similar or better quality with fewer primitives.

[Figures 9–23 omitted for brevity, but each supports the above claim across diverse scenes.]

Implementation Considerations

- Computational Overhead: The per-primitive decay parameter introduces negligible runtime cost, as the opacity attenuation and masking are simple element-wise operations.

- Memory Footprint: The increase in model size is marginal, and the reduction in rendered primitives compensates for this in practice.

- Training Stability: The coarse-to-fine strategy and regularization are essential to avoid trivial solutions and ensure robustness across the LoD spectrum.

- Integration: CLoD-GS is compatible with existing 3DGS pipelines and can be combined with compression techniques for further efficiency.
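One way a renderer might use the virtual distance scale in practice is to search for the smallest scale that fits a primitive budget. The summary does not describe such a procedure, so the sketch below is purely an assumed usage pattern: `scale_for_budget`, its search bounds, and `tau=0.005` are hypothetical. It relies on the fact that, for non-negative opacities, the number of primitives passing the mask cannot increase as the scale grows, because the attenuated opacity falls while the threshold rises.

```python
import math

def count_rendered(alphas, sigmas, d_norms, s_v, tau=0.005, eps=1e-8):
    """Number of primitives passing the mask alpha'' > tau * s_v."""
    count = 0
    for a, s, d in zip(alphas, sigmas, d_norms):
        atten = math.exp(-((d * s_v) ** 2) / (2.0 * max(s, 0.0) ** 2 + eps))
        if a * atten > tau * s_v:
            count += 1
    return count

def scale_for_budget(alphas, sigmas, d_norms, budget,
                     s_lo=1.0, s_hi=16.0, iters=40):
    """Bisect for (approximately) the smallest s_v whose count fits the budget."""
    if count_rendered(alphas, sigmas, d_norms, s_lo) <= budget:
        return s_lo
    if count_rendered(alphas, sigmas, d_norms, s_hi) > budget:
        return s_hi  # budget unreachable within the search range
    for _ in range(iters):
        mid = 0.5 * (s_lo + s_hi)
        if count_rendered(alphas, sigmas, d_norms, mid) <= budget:
            s_hi = mid
        else:
            s_lo = mid
    return s_hi

alphas = [0.9, 0.9, 0.9, 0.9]
sigmas = [4.0, 2.0, 1.0, 0.5]
d_norms = [1.0, 1.0, 1.0, 1.0]

s_full = scale_for_budget(alphas, sigmas, d_norms, budget=4)
s_half = scale_for_budget(alphas, sigmas, d_norms, budget=2)
```

Because the search only evaluates the element-wise mask, its cost is a handful of passes over the primitive parameters, which is consistent with the negligible runtime overhead noted above.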

Theoretical and Practical Implications

CLoD-GS suggests that explicit, differentiable, volumetric representations are well suited to continuous LoD mechanisms. The learnable, per-primitive simplification behavior enables fine-grained control over rendering quality and performance, facilitating adaptive rendering in heterogeneous environments. The approach avoids the complex hierarchical data structures and runtime mesh traversal that traditional continuous LoD schemes rely on, reducing CPU overhead and simplifying deployment.

Future Directions

Potential extensions include:

- Incorporating perceptual metrics beyond distance (e.g., saliency, semantic importance) for more intelligent simplification.

- Hybrid systems combining continuous per-primitive scaling with chunk-based streaming for massive environments.

- Application to dynamic scenes and integration with 4D Gaussian Splatting frameworks.

Conclusion

CLoD-GS provides a principled, efficient, and scalable solution to the Level-of-Detail problem in neural scene representations. By embedding a continuous, learnable LoD mechanism within the 3DGS framework, it achieves state-of-the-art rendering quality, compactness, and smoothness, with minimal overhead. The methodology is robust, generalizable, and compatible with existing compression techniques, representing a significant advancement in real-time neural rendering. Future work should explore perceptually driven simplification and hybrid strategies for even greater scalability and fidelity.