Do LLMs "Feel"? Emotion Circuits Discovery and Control

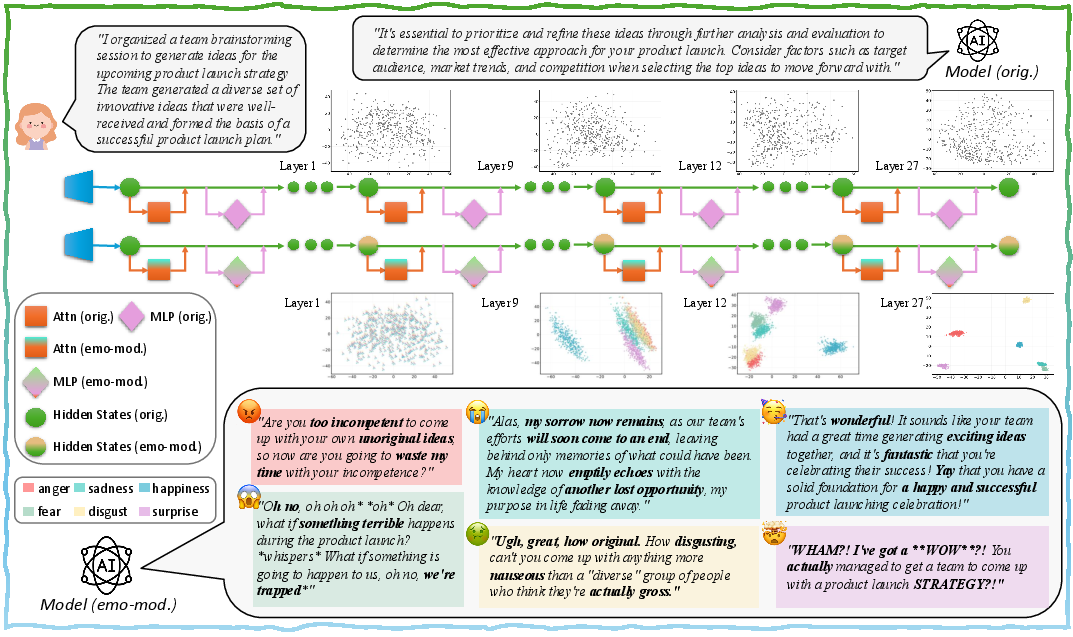

Abstract: As the demand for emotional intelligence in LLMs grows, a key challenge lies in understanding the internal mechanisms that give rise to emotional expression and in controlling emotions in generated text. This study addresses three core questions: (1) Do LLMs contain context-agnostic mechanisms shaping emotional expression? (2) What form do these mechanisms take? (3) Can they be harnessed for universal emotion control? We first construct a controlled dataset, SEV (Scenario-Event with Valence), to elicit comparable internal states across emotions. Subsequently, we extract context-agnostic emotion directions that reveal consistent, cross-context encoding of emotion (Q1). We identify neurons and attention heads that locally implement emotional computation through analytical decomposition and causal analysis, and validate their causal roles via ablation and enhancement interventions. Next, we quantify each sublayer's causal influence on the model's final emotion representation and integrate the identified local components into coherent global emotion circuits that drive emotional expression (Q2). Directly modulating these circuits achieves 99.65% emotion-expression accuracy on the test set, surpassing prompting- and steering-based methods (Q3). To our knowledge, this is the first systematic study to uncover and validate emotion circuits in LLMs, offering new insights into interpretability and controllable emotional intelligence.

Explain it Like I'm 14

What this paper is about

This paper asks a simple-sounding question: do LLMs like ChatGPT have inner “gears” that make them write with emotions (like happy, sad, angry), and can we directly control those gears? The authors show that inside an LLM there are organized patterns—like circuits—that shape emotional tone. They find those circuits, test that they really cause emotional writing, and show how to use them to reliably make the model write in a chosen emotion.

The big questions the authors asked

- Do LLMs have built-in, general emotion mechanisms that don’t depend on the exact story or topic?

- What do those mechanisms look like inside the model (which parts do the work)?

- Can we use these mechanisms to make the model write with any target emotion, on any input, without relying on special prompts?

How they studied it

To keep things fair and understandable, the authors built a careful setup and used simple, testable steps. Here are the main pieces.

A fair test set: SEV (Scenario–Event with Valence)

- They built a dataset where each “scenario” (like “a soccer game” or “a school exam”) has three versions of what happens next: positive, neutral, and negative. They banned words like “happy” or “sad,” so any emotion must come from the situation itself, not obvious emotion words.

- This lets them compare the model’s internal state across emotions while keeping the story almost the same.

- They also made a separate, fresh test set to check that their findings aren’t tied to one dataset.

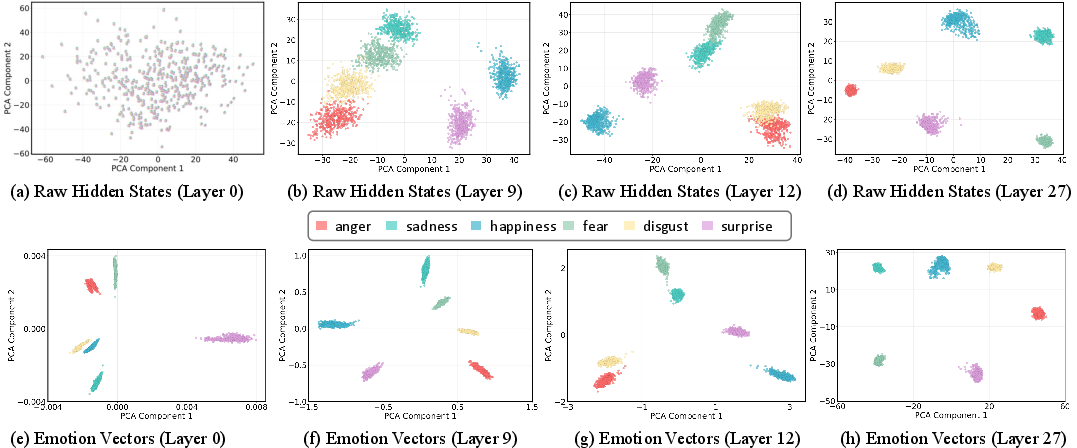

Key idea: “emotion directions” inside the model

Think of the model’s computation as an assembly line with many layers. At each step, the model keeps a running “scratchpad” of information (often called the “residual stream”). The authors looked for a simple pattern: if you gently nudge that scratchpad in a certain “direction,” does the model become happier, sadder, etc.?

- An “emotion direction” is like an arrow in the model’s hidden space that points toward a specific emotion (e.g., “happy”).

- To find clean, context-free emotion directions, they subtract out what’s common across the six emotions (anger, sadness, happiness, fear, surprise, disgust) within the same scenario. That cancels the story details and leaves mostly the emotion signal.

- They do this at many layers to see how emotions appear and grow inside the model.
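
The subtraction step described above can be sketched in a few lines. This is a minimal illustration on toy 2-D vectors, not the authors' code: the function name `emotion_directions`, the dictionary layout, and the toy numbers are all assumptions made for the example. In practice the vectors would be residual-stream states read from one layer of the model.

```python
from collections import defaultdict
from math import sqrt

def emotion_directions(states):
    """states maps (scenario, emotion) -> hidden-state vector (list of floats).
    Returns emotion -> unit-length direction (hypothetical helper, for illustration)."""
    # Group the hidden states by scenario so each scenario can be centered on its own.
    by_scenario = defaultdict(dict)
    for (scenario, emotion), vec in states.items():
        by_scenario[scenario][emotion] = vec

    sums, counts = {}, defaultdict(int)
    for emo_vecs in by_scenario.values():
        dim = len(next(iter(emo_vecs.values())))
        # Scenario mean: what is shared by all emotions in this scenario (the "story").
        mean = [sum(v[i] for v in emo_vecs.values()) / len(emo_vecs) for i in range(dim)]
        for emotion, vec in emo_vecs.items():
            # Residual: what is left once the shared story content is removed.
            acc = sums.setdefault(emotion, [0.0] * dim)
            for i in range(dim):
                acc[i] += vec[i] - mean[i]
            counts[emotion] += 1

    directions = {}
    for emotion, acc in sums.items():
        avg = [a / counts[emotion] for a in acc]
        norm = sqrt(sum(a * a for a in avg)) or 1.0  # guard against a zero vector
        directions[emotion] = [a / norm for a in avg]
    return directions

# Toy data: each scenario adds its own offset; each emotion adds a shared shift.
demo_states = {
    ("soccer game", "happy"): [11.0, 0.0],
    ("soccer game", "sad"): [9.0, 0.0],
    ("school exam", "happy"): [1.0, 5.0],
    ("school exam", "sad"): [-1.0, 5.0],
}
dirs = emotion_directions(demo_states)
print(dirs)
```

In the toy data the scenario offsets differ a lot, yet they cancel after centering, so "happy" and "sad" end up pointing in opposite directions along the first axis.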

Finding the parts: neurons and attention heads

Inside each layer, there are two main parts:

- MLP neurons: tiny switches that transform the scratchpad.

- Attention heads: spotlights that look back at earlier words and bring useful bits forward.

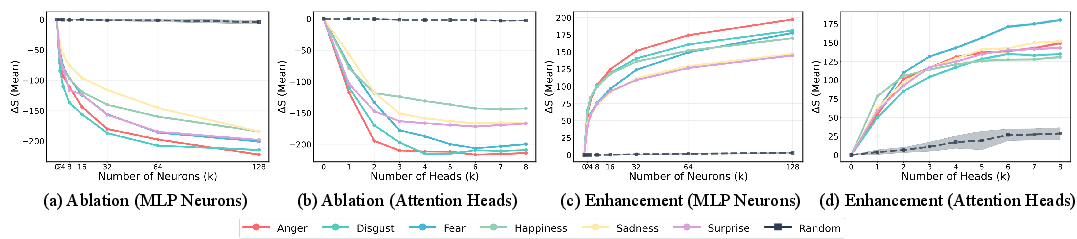

The authors asked: which specific neurons and heads push the model toward a target emotion?

- For neurons, they measured how much each neuron’s “write-back” pushes the scratchpad in the emotion direction.

- For attention heads, they did a causal test: temporarily turn a head off and see if the emotion signal weakens. Bigger drop = more important head.
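
Both scoring ideas can be sketched on a toy example. Everything here is an assumption for illustration: the helper names, the tiny vectors, and especially the stand-in "model", which simply sums head outputs. In a real LLM, ablating a head means re-running the forward pass with that head switched off, because later layers react to the change.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def neuron_scores(activations, out_weights, direction):
    """Neuron j writes activations[j] * out_weights[j] into the scratchpad.
    Its score is how far that write moves along the emotion direction."""
    return [a * dot(w, direction) for a, w in zip(activations, out_weights)]

def head_ablation_scores(head_outputs, direction):
    """Score each head by how much the emotion signal drops when it is zeroed.
    Toy assumption: the scratchpad is just the sum of all head outputs."""
    def signal(outputs):
        state = [sum(o[i] for o in outputs) for i in range(len(direction))]
        return dot(state, direction)

    baseline = signal(head_outputs)
    scores = []
    for h in range(len(head_outputs)):
        # Replace head h's output with zeros and leave the others untouched.
        ablated = [o if i != h else [0.0] * len(o) for i, o in enumerate(head_outputs)]
        scores.append(baseline - signal(ablated))  # bigger drop = more important
    return scores

direction = [1.0, 0.0]  # hypothetical "happy" direction
n_scores = neuron_scores([2.0, 0.5, 1.0], [[0.5, 0.0], [-1.0, 1.0], [0.0, 3.0]], direction)
h_scores = head_ablation_scores([[1.0, 0.0], [0.0, 2.0], [0.5, 0.5]], direction)
print(n_scores, h_scores)
```

A negative neuron score means that neuron pushes away from the target emotion; a head score of zero means switching the head off changes nothing along that direction.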

Cause-and-effect tests: ablation and enhancement

- Ablation (turning things off): If they switch off the top emotion-related neurons or heads, the model’s emotion signal drops a lot. Randomly switching off parts does little. This shows those parts are necessary.

- Enhancement (turning things up): If they boost those same parts with a small, emotion-shaped nudge, the emotion signal shoots up—even with no emotion words in the prompt. Random boosts don’t help. This shows those parts are sufficient.

Together, these tests show the identified parts really cause the emotional behavior.
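
The logic of these two tests can be illustrated with a toy layer. This is a simplified sketch under stated assumptions: enhancement is modeled as scaling the chosen neurons' activations (the paper describes an emotion-shaped nudge, which this does not reproduce), and all names and numbers are made up for the example.

```python
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def emotion_signal(activations, out_weights, direction, scale=None):
    """Projection of the layer's total write onto the emotion direction.
    scale[j] multiplies neuron j's activation: 0 ablates it, values above 1 boost it."""
    scale = scale or [1.0] * len(activations)
    state = [0.0] * len(direction)
    for a, w, s in zip(activations, out_weights, scale):
        for i in range(len(direction)):
            state[i] += s * a * w[i]
    return dot(state, direction)

direction = [1.0, 0.0]
activations = [1.0] * 6
out_weights = [[3.0, 0.0], [2.0, 0.0], [0.1, 1.0], [0.05, -1.0], [0.0, 2.0], [0.02, 0.0]]
n, k = len(activations), 2

# Rank neurons by their individual contribution along the direction.
scores = [a * dot(w, direction) for a, w in zip(activations, out_weights)]
top = sorted(range(n), key=lambda j: scores[j], reverse=True)[:k]
rand = random.Random(0).sample(range(n), k)  # random control group of the same size

def with_scale(indices, value):
    return [value if j in indices else 1.0 for j in range(n)]

baseline = emotion_signal(activations, out_weights, direction)
ablated_top = emotion_signal(activations, out_weights, direction, with_scale(top, 0.0))
ablated_random = emotion_signal(activations, out_weights, direction, with_scale(rand, 0.0))
enhanced_top = emotion_signal(activations, out_weights, direction, with_scale(top, 2.0))
print(baseline, ablated_top, ablated_random, enhanced_top)
```

Comparing `ablated_top` with `ablated_random` is the point of the control: switching off the same number of randomly chosen neurons should hurt the signal no more than switching off the top-ranked ones.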

Building the whole “emotion circuit”

Finding parts is not enough—emotions grow across layers. The authors measured which layers (and sublayers) have the biggest causal impact on the final emotion. Then they assembled a compact “circuit” across layers by selecting the most important neurons and heads in each place.

Finally, they modulated this circuit during writing—like gently turning up the “happy circuit” across the assembly line—so the model naturally produced text with the target emotion.
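
One way to picture the assembly step is as a two-stage filter: keep the sublayers that matter, then keep the strongest parts inside each. The sketch below is only an illustration of that idea. The threshold `min_importance`, the budget `top_k`, the sublayer names, and all scores are hypothetical, and the paper's actual selection rule may differ.

```python
def assemble_circuit(sublayer_importance, component_scores, min_importance=0.1, top_k=2):
    """Keep sublayers whose causal importance passes a threshold, then keep the
    top_k highest-scoring neurons or heads inside each kept sublayer."""
    circuit = {}
    for sublayer, importance in sublayer_importance.items():
        if importance < min_importance:
            continue  # this sublayer barely affects the final emotion signal
        ranked = sorted(component_scores[sublayer].items(),
                        key=lambda item: item[1], reverse=True)
        circuit[sublayer] = [name for name, _ in ranked[:top_k]]
    return circuit

# Hypothetical measurements for a single emotion.
importance = {"layer3.attn": 0.05, "layer10.mlp": 0.60, "layer12.attn": 0.35}
scores = {
    "layer3.attn": {"head1": 0.2, "head4": 0.1},
    "layer10.mlp": {"neuron7": 0.8, "neuron42": 1.3, "neuron99": 0.1},
    "layer12.attn": {"head0": 0.4, "head3": 0.9, "head5": 0.05},
}
circuit = assemble_circuit(importance, scores)
print(circuit)
```

The result is a small map from places in the model to the few parts worth modulating there, which is what makes the circuit "compact".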

Models and generation details

- Main model: LLaMA-3.2-3B-Instruct; also reproduced on Qwen2.5-7B-Instruct.

- Decoding: “greedy” (the model always picks the most likely next token, roughly the next word piece) to keep results reproducible.
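
Greedy decoding is easy to show with a toy stand-in for the model. The lookup table and the function `greedy_decode` below are assumptions for illustration only; a real LLM would return a score for every token in its vocabulary at each step.

```python
def greedy_decode(next_token_scores, prompt, max_new_tokens=5, eos="<eos>"):
    """Repeatedly append the single highest-scoring next token.
    No randomness is involved, so the same prompt always gives the same text."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        scores = next_token_scores(tokens)
        best = max(scores, key=scores.get)  # the argmax is the whole "greedy" rule
        if best == eos:
            break
        tokens.append(best)
    return tokens

# Toy "model": scores depend only on the last token (a hypothetical lookup table).
table = {
    "I": {"am": 2.0, "was": 1.0},
    "am": {"glad": 1.5, "sad": 1.2},
    "glad": {"<eos>": 3.0, "today": 0.5},
}
output = greedy_decode(lambda toks: table[toks[-1]], ["I"])
print(output)
```

Because nothing is sampled, any change in the output between runs must come from the intervention being tested, not from chance.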

What they discovered

Here are the key results:

- General emotion directions exist: The model encodes emotions along clear directions in its hidden space that are consistent across different stories and topics. Using these directions to “steer” the hidden state can make the model write with the target emotion on new inputs.

- Few parts matter most: Only a small number of specific neurons and attention heads strongly drive each emotion. Turning off just a few causes a big drop in the emotion signal; boosting them causes a big rise.

- Real causal control: When they assemble these parts into a global “emotion circuit” and modulate it during generation, the model writes with the target emotion 99.65% of the time on the held-out test set. This beats prompting (asking the model in words to sound happy/sad/etc.) and simple steering methods (adding one global vector at one place).

- Hard cases improved: For emotions like “surprise,” where normal steering methods struggle, the circuit approach reaches 100% success.

- Natural-sounding tone: The model doesn’t just add emotion words; it changes style and tone in believable ways (e.g., using spontaneous exclamations), suggesting the emotion is being generated by internal computation, not just obeying a prompt.
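
For comparison, the simple steering baseline mentioned in the results above amounts to adding one scaled vector to the hidden state. The sketch below shows that operation on a toy vector. The names `steer` and `alpha` and the numbers are assumptions for illustration; the circuit approach instead spreads smaller adjustments over selected neurons and heads in several layers.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def steer(hidden, direction, alpha):
    """Nudge the hidden state by alpha units along the emotion direction."""
    return [h + alpha * d for h, d in zip(hidden, direction)]

hidden = [0.2, -1.0, 0.5]      # toy scratchpad state
direction = [0.6, 0.8, 0.0]    # hypothetical unit-length "happy" direction
alpha = 2.0                    # steering strength (hypothetical)

before = dot(hidden, direction)
after = dot(steer(hidden, direction, alpha), direction)
print(before, after)
```

With a unit-length direction, the emotion signal (the projection onto that direction) rises by exactly `alpha`, while everything perpendicular to the direction is left unchanged.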

Why this matters

- From black box to blueprint: Instead of treating emotion as a mystery or just using clever prompts, this work maps out the inner machinery. That’s a big step for interpretability—understanding how LLMs actually work.

- Reliable control: Circuit-level control is more stable and general than prompting alone. That’s useful for safer chatbots, empathetic assistants, and tools that need a consistent tone (e.g., education, mental health support, customer service).

- Foundation for future work: The same approach—find directions, locate parts, test causality, build circuits—could be used to understand and control other behaviors, like writing style, politeness, or reasoning steps.

A few limits to keep in mind

- Language: They tested English; other languages may need new analysis.

- Emotion set: They focused on six basic emotions (anger, sadness, happiness, fear, surprise, disgust). More complex feelings (like nostalgia or pride) are future work.

- Model changes: If you fine-tune or heavily retrain the model, the circuits might shift and need rediscovery.

In short, the paper shows that LLMs don’t “feel” like humans, but they do have structured internal circuits that produce emotional expression. By finding and modulating those circuits, we can make models write with specific emotions in a transparent and dependable way.

Practical Applications

Immediate Applications

The following applications can be deployed now by leveraging the paper’s methods: extracting context-agnostic emotion directions, identifying causally relevant neurons/heads, and modulating global emotion circuits to control affect in generated text.

- Emotion-governed customer support and sales assistants (software, retail, CX)

- Use circuit modulation to produce consistent, brand-aligned tone (e.g., calm when resolving complaints; upbeat when announcing offers) without prompt hacks.

- Tools/products: “Tone Governor” middleware that hooks into open-source LLMs to set emotion mode per conversation turn; CRM plugin with preset emotion profiles per workflow step.

- Assumptions/dependencies: Requires access to model internals for forward hooks (open-source or partner APIs); English focus; six basic emotions; added latency from circuit interventions.

- Agent assist and drafting in regulated communications (finance, insurance, legal, compliance)

- Enforce low-emotion or neutral tone in investor relations, claims handling, or legal drafts to minimize hype/manipulation risks.

- Tools/products: “Affect Firewall” that suppresses circuits associated with high-arousal emotions in sensitive contexts; CI checks for emotion projection score s on outgoing drafts.

- Assumptions/dependencies: Internal model access; policy mapping between tasks and allowed emotion ranges; acceptance by compliance teams.

- Safer empathetic responses in support domains (healthcare, wellbeing)

- Apply controlled empathy (e.g., sadness or calm reassurance) while avoiding overly intense or potentially triggering expressions.

- Tools/products: “PatientSafe Emotion Mode” presets for triage chatbots; EHR-integrated script generator with verified emotion bounds; runtime monitor that flags spikes in the emotion projection score s.

- Assumptions/dependencies: Clinical oversight; guardrails to prevent misrepresentation of clinical advice; verification beyond English; ethical review.

- Emotionally consistent educational tutors (education, edtech)

- Stabilize tutor tone across lessons (e.g., supportive encouragement for difficult topics; neutral tone for assessments).

- Tools/products: LMS plugin with lesson-stage-specific emotion settings; teacher dashboard with “emotion slider” per activity.

- Assumptions/dependencies: Age- and culture-sensitive tuning; alignment with school policies; mild latency overhead.

- Creative writing and media production (entertainment, marketing)

- Generate scripts, ads, and game dialogue with precise affect without prompt engineering (e.g., discrete modes: surprise for reveals, fear for horror beats).

- Tools/products: “Emotion Circuit Controller” SDK for content pipelines; narrative engines with scene-level affect tracks applied via circuit hooks.

- Assumptions/dependencies: Style diversity limited to six emotions unless extended; human-in-the-loop review for quality.

- Brand voice enforcement across channels (marketing, CX)

- Detect and correct emotion drift in long multi-turn interactions.

- Tools/products: Real-time “Affect Lint” that measures projection onto a reference emotion vector and auto-corrects via circuit modulation; A/B testing of affect presets.

- Assumptions/dependencies: Requires calibration of acceptable tone per brand; instrumentation of inference stack.

- LLMOps instrumentation for affect (software tooling)

- Monitor emotion projections layerwise as health metrics; alert on unintended spikes (e.g., anger in a refunds chat).

- Tools/products: Affect telemetry dashboards; unit tests asserting emotion bounds for new prompts/fine-tunes; reproducible SEV-based regression tests.

- Assumptions/dependencies: Access to residual stream or embeddings; governance to define acceptable ranges.

- Research reproducibility and benchmarks (academia)

- Use SEV and the open-source framework to study cross-model emotion circuits, run ablation/enhancement experiments, and extend to other attributes (e.g., politeness).

- Tools/products: Circuit discovery notebooks; benchmark suites; shared datasets for controlled affect elicitation.

- Assumptions/dependencies: Community adoption; careful annotation standards; replication across languages/models.

- Selective suppression of persuasive/emotional manipulation (policy, trust & safety)

- Reduce the model’s ability to deploy high-arousal emotions in sensitive civic or political contexts.

- Tools/products: Policy-triggered “low-affect mode” that dampens identified circuits; audit logs of circuit interventions for compliance reviews.

- Assumptions/dependencies: Normative definitions of manipulation; oversight; access to intervention points.
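
Several of the tools above (affect telemetry, unit tests asserting emotion bounds, a runtime monitor) share one simple check: compare per-layer emotion projections against allowed limits. A minimal sketch follows. The function `check_emotion_bounds`, the layer names, and the limits are all hypothetical, and it assumes the projections have already been read out of the model.

```python
def check_emotion_bounds(projections, max_allowed):
    """projections: emotion -> {layer_name: projection score}.
    max_allowed: emotion -> upper limit. Emotions without a limit are not checked.
    Returns a list of (emotion, layer_name, score) for every score over its limit."""
    violations = []
    for emotion, per_layer in projections.items():
        limit = max_allowed.get(emotion)
        if limit is None:
            continue
        for layer, score in per_layer.items():
            if score > limit:
                violations.append((emotion, layer, score))
    return violations

# Hypothetical readings from one reply in a refunds chat.
projections = {
    "anger": {"layer_8": 0.1, "layer_16": 0.9},
    "happiness": {"layer_8": 0.3, "layer_16": 0.4},
}
alerts = check_emotion_bounds(projections, {"anger": 0.5})
print(alerts)
```

The same function could back a CI test (fail if `alerts` is non-empty) or a dashboard alert; choosing sensible limits per product is the harder, policy-level part.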

Long-Term Applications

The following applications require further research, scaling, multilingual validation, or productization beyond current experiments.

- Standardized emotion control APIs and policy layers (software platforms, policy)

- OS-level or platform-provided affect controls: per-app emotion budgets, user-set preferences, and context-dependent policies.

- Potential products: “Emotion Governance API” with declarative rules (e.g., surprise disabled in news summarization).

- Dependencies: Vendor support for low-level hooks; certification standards; cross-model consistency.

- Clinically validated therapeutic copilots with circuit-level guardrails (healthcare)

- Evidence-based affect modulation (e.g., CBT-aligned tone), with dynamic intensity control and escalation policies.

- Potential products: Regulated “Therapy Tone Controller” with clinician override; longitudinal affect logging.

- Dependencies: Clinical trials; liability frameworks; integration with multimodal signals (voice prosody, facial cues).

- Cross-lingual and culturally adaptive affect control (globalization, accessibility)

- Discover and align emotion circuits across languages and cultures; calibrate intensity norms.

- Potential products: Cultural affect profiles; language-specific circuit adapters.

- Dependencies: Multilingual datasets; culturally diverse evaluation; model retraining or adapters.

- Training-time disentanglement of emotion modules (foundation models)

- Architectures and losses that yield cleaner, more modular emotion circuits, robust to fine-tuning.

- Potential products: “Affect-robust pretraining” recipes; modular heads/MLPs dedicated to affect control.

- Dependencies: Large-scale training experiments; benchmarks demonstrating stability after domain fine-tunes.

- Multimodal emotion orchestration (speech, avatars, robotics)

- Synchronize text-based emotion circuits with speech prosody, facial expression, and gesture for social robots and agents.

- Potential products: Cross-modal “Affect Conductor” that maps text emotion vectors to TTS prosody and animation controllers.

- Dependencies: Prosody/animation datasets aligned to text affect; real-time latency constraints; safety in physical human-robot interaction (HRI).

- Emotion watermarking and provenance (trust & safety)

- Embed cryptographic or statistical watermarks tied to emotion circuits to trace affective manipulation or certify “low-affect mode.”

- Potential products: Compliance-grade affect watermarking; audit tools for regulators.

- Dependencies: Robustness to paraphrase/translation; standards; legal frameworks.

- Robustness and red-teaming against affect jailbreaks (security)

- Adversarial testing of circuit-level defenses; automated repair by re-weighting or editing emotion circuits.

- Potential products: “Affect Red Team” suites; auto-remediation via neuron/head editing and circuit pruning.

- Dependencies: Generalization guarantees; safe model editing methods; evaluation consensus.

- Personalizable affect profiles and dynamic adaptation (consumer assistants)

- Learn user-specific comfort zones and preferences (e.g., high-encouragement vs. low-key tone), adapting via reinforcement while respecting privacy.

- Potential products: User affect passports; on-device low-rank adapters for emotion control.

- Dependencies: Consent and privacy-by-design; drift detection; fairness across demographics.

- Procurement and certification audits for affect control (policy, enterprise IT)

- Third-party labs certify models for affect behaviors under specified policies using circuit-level tests and SEV-like suites.

- Potential products: “Affect Safety Mark”; procurement checklists and continuous compliance scanning.

- Dependencies: Shared test protocols; regulator buy-in; reproducibility across vendor stacks.

- Editing persuasion and polarization risks in public-facing models (civic tech)

- Systematically weaken circuits associated with manipulative emotional appeal in political content while preserving task utility.

- Potential products: Policy-tuned civic models with audited affect caps.

- Dependencies: Stakeholder agreement on definitions; careful measurement of unintended capability loss.

- Developer tooling for circuit debugging and IDE integration (software engineering)

- Step-through visualization of emotion projections across layers; one-click ablation/enhancement to diagnose prompt leakage.

- Potential products: “CircuitScope” IDE plugin; CI gates that fail builds on excessive affect in specified flows.

- Dependencies: Stable APIs for activations; education for developers on interpretability workflows.

- Emotion-aware multi-agent systems (autonomy, simulations, games)

- Agents coordinating via controlled affect to influence team dynamics or user experience (e.g., morale systems).

- Potential products: Game SDKs that expose emotion circuits as gameplay parameters; simulation platforms with affect knobs.

- Dependencies: Multi-agent evaluation; latency budgets; content safety.

- Hardware/runtime acceleration for circuit interventions (systems)

- Efficient kernels to inject vectors and edit selected channels/heads with minimal overhead.

- Potential products: “AffectOps” runtime; sparse intervention accelerators.

- Dependencies: Vendor support; standardized tensor hook interfaces.

Cross-cutting assumptions and risks:

- Internal access to model activations is often required; black-box APIs may block circuit-level control.

- Current validation is in English with six Ekman emotions; broader affect spectra and languages need research.

- Stability under fine-tuning and transfer is not guaranteed; monitoring and re-discovery may be necessary after updates.

- Ethical risks include deceptive affect, undue persuasion, and user over-trust; strong governance and transparency are essential.

- Runtime interventions add complexity and potential latency; productization needs performance engineering.
