- The paper demonstrates that LLMs match or exceed traditional preprocessing techniques, achieving up to 97% accuracy in tasks like stopword removal and lemmatization.

- It details evaluations on diverse datasets, revealing a 6% improvement in F1 scores for text classification tasks due to enhanced contextual understanding.

- The study underlines the higher computational demands of LLM-based methods, highlighting the need for further research in low-resource language settings.

Examining the Linguistic Capabilities of LLMs in Text Preprocessing

This essay explores the paper "Investigating LLMs' Linguistic Abilities for Text Preprocessing," which explores the application of LLMs in the domain of text preprocessing. Text preprocessing is a pivotal component of NLP that significantly influences downstream tasks. Traditional preprocessing methods like stopword removal, lemmatization, and stemming often lack language-specific contextualization. The authors propose leveraging LLMs for preprocessing tasks given their ability to incorporate context and adapt dynamically to various languages and domains.

The study evaluates LLMs against traditional methods using diverse datasets, assessing their efficacy in English and several European languages. The results indicate that LLMs match or exceed traditional methods in many instances, particularly for lemmatization and stopword removal. The accuracy of LLMs in replicating traditional methods reached up to 97% for stopword removal and lemmatization tasks.

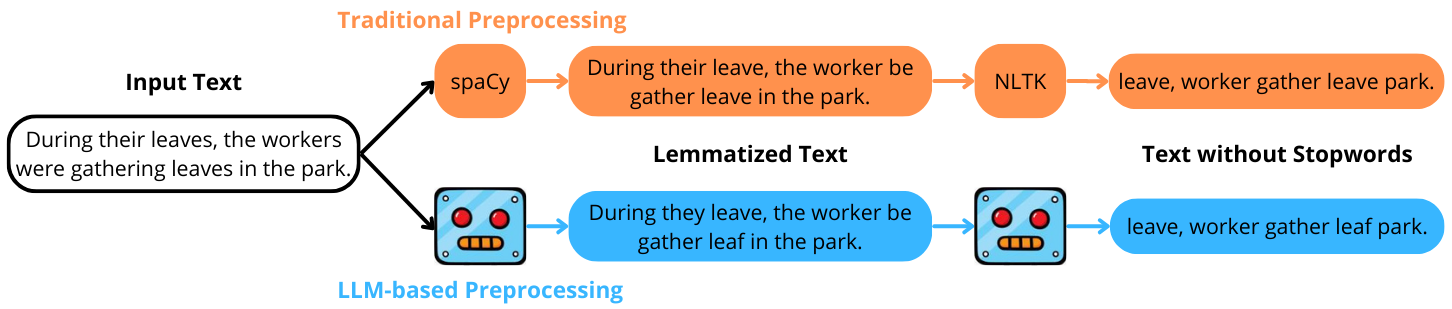

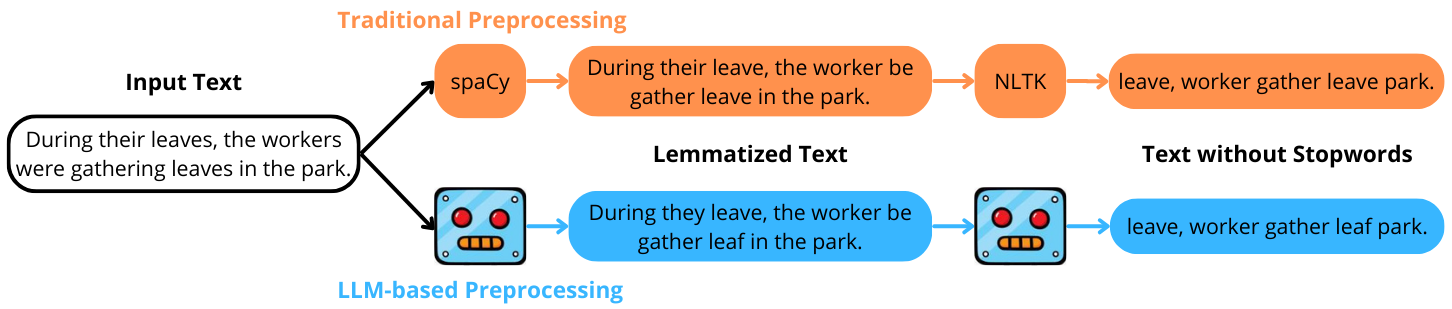

Figure 1: Example of traditional vs. LLM-based text preprocessing. In this case, the LLM correctly disambiguates the word "leaves", distinguishing between employee absences and foliage in its two occurrences, and applies lemmatization accordingly.

Impact on Downstream Tasks

Utilizing LLMs in preprocessing has shown to improve the performance of several text classification tasks. For instance, employing an LLM-based preprocessing pipeline resulted in up to 6% improvements in the F1 score for certain datasets. It was especially evident in tasks involving language-specific subtleties and context-sensitive sentence structures. However, the performance gains varied across datasets and languages.

Limitations and Challenges

One significant limitation of LLM-based preprocessing is its computational demand. While it provides noticeable improvements and language adaptability, the computational resources required are considerably higher than traditional methods. This approach is better suited for scenarios where traditional resources are inadequate, particularly in low-resource languages.

Future Research Directions

The paper suggests several avenues for future research. One promising direction is to explore LLMs' capabilities in text preprocessing for low-resource languages, where traditional linguistic resources are scarce. Additionally, refining prompts to enhance LLM performance and further understanding their contextual decisions in text preprocessing are potential areas of interest.

Conclusion

Overall, the investigation reveals that LLMs possess considerable potential to supplement or replace traditional preprocessing techniques. The paper provides evidence that LLMs can effectively deal with context-dependent linguistic challenges, thereby offering significant improvements in text preprocessing for NLP tasks across different languages and domains. These findings highlight the potential of LLMs to transform the preprocessing landscape, especially in multilingual and low-resource settings.