Learning Human-Humanoid Coordination for Collaborative Object Carrying

Abstract: Human-humanoid collaboration shows significant promise for applications in healthcare, domestic assistance, and manufacturing. While compliant robot-human collaboration has been extensively developed for robotic arms, enabling compliant human-humanoid collaboration remains largely unexplored due to humanoids' complex whole-body dynamics. In this paper, we propose a proprioception-only reinforcement learning approach, COLA, that combines leader and follower behaviors within a single policy. The model is trained in a closed-loop environment with dynamic object interactions to predict object motion patterns and human intentions implicitly, enabling compliant collaboration to maintain load balance through coordinated trajectory planning. We evaluate our approach through comprehensive simulator and real-world experiments on collaborative carrying tasks, demonstrating the effectiveness, generalization, and robustness of our model across various terrains and objects. Simulation experiments demonstrate that our model reduces human effort by 24.7% compared to baseline approaches while maintaining object stability. Real-world experiments validate robust collaborative carrying across different object types (boxes, desks, stretchers, etc.) and movement patterns (straight-line, turning, slope climbing). Human user studies with 23 participants confirm an average improvement of 27.4% compared to baseline models. Our method enables compliant human-humanoid collaborative carrying without requiring external sensors or complex interaction models, offering a practical solution for real-world deployment.

Explain it Like I'm 14

Explaining “COLA: Learning Human‑Humanoid Coordination for Collaborative Object Carrying”

Overview: What this paper is about

This paper is about teaching a humanoid robot (a robot with a human-like body) to carry objects together with a person in a smooth, safe, and helpful way. The method, called COLA, helps the robot decide when to gently follow the human’s lead and when to take the lead itself—without needing extra cameras or special force sensors. It relies only on the robot’s “body sense” (proprioception), which is like how you can feel where your arms and legs are without looking.

The main questions the researchers asked

The researchers focused on three easy-to-understand goals:

- Can a humanoid robot carry things with a human in a way that feels natural and safe, like a good teammate?

- Can the robot reduce how hard the human has to work while keeping the object steady (not wobbling or tilting)?

- Can the same robot handle many different objects (like boxes, stretchers, or carts) and different movements (turning, going straight, going up a slope)?

How they did it (in simple terms)

Think about two people carrying a table together. Sometimes one person leads (sets the direction and speed), and the other follows (matches the movement). Good partners also “give” a little when the other pushes or pulls—this is called compliance. COLA teaches a robot to do that.

Here’s the approach, with real-world analogies:

- Proprioception-only: The robot uses only its internal sensors—like joints and balance—to “feel” what’s happening, just as you can feel someone pushing your hands when you both carry a box.

- Learning by trial and error (reinforcement learning): The robot practices in a simulator, trying actions and getting “rewards” for doing well (moving with the human, keeping the object level, not wobbling).

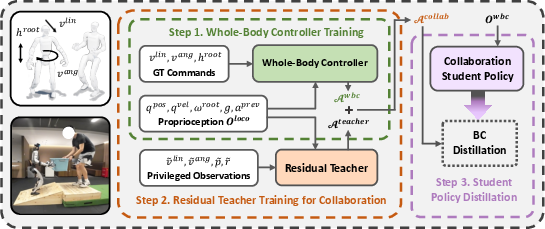

- A strong base controller: First, they train a reliable “whole-body” controller so the robot can walk and move its arms smoothly. Think of this as teaching basic balance and coordination.

- A “residual” teacher policy: On top of that, they add a small “correction system” that learns the special tricks needed for teamwork (like when to lift more, or how to turn with someone). “Residual” here means it adds just the right extra adjustments, like a coach giving small tips to refine your movement.

- Teacher–student training (knowledge distillation): The “teacher” model is allowed to see extra information in simulation (like the exact position and speed of the carried object). It becomes very good. Then a “student” model learns to copy the teacher but uses only the robot’s body sensors—so it can work in the real world without extra equipment.

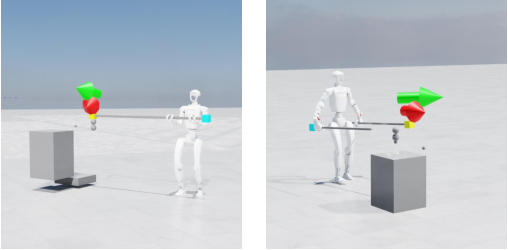

- Closed-loop training environment: In the simulator, the object, the robot, and a “virtual human” are all connected, so when one moves, it affects the others—just like in real life when carrying something together. This helps the robot learn how small pushes and pulls change the shared object and what to do in response.

- Leader vs. follower: The robot can act as a follower (match the human) when the “desired speed” is set to zero, or as a leader (set the pace) when given a nonzero speed. This lets it switch roles smoothly.
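
The “residual” and “leader vs. follower” ideas above can be sketched in a few lines. This is only an illustration under stated assumptions: the function names, the `scale` factor, and the zero-speed tolerance `eps` are hypothetical and do not come from the paper, which does not spell out its exact action composition here.

```python
from typing import List, Sequence


def compose_action(base_action: Sequence[float],
                   residual: Sequence[float],
                   scale: float = 0.5) -> List[float]:
    """Add a scaled correction on top of the base controller's action.

    `scale` is a hypothetical knob that keeps the correction small.
    """
    if len(base_action) != len(residual):
        raise ValueError("base action and residual must have the same length")
    return [b + scale * r for b, r in zip(base_action, residual)]


def role_from_command(velocity_command: Sequence[float],
                      eps: float = 1e-6) -> str:
    """Zero desired speed means 'follower'; any nonzero speed means 'leader'."""
    speed = sum(v * v for v in velocity_command) ** 0.5
    return "follower" if speed < eps else "leader"


if __name__ == "__main__":
    print(compose_action([0.1, -0.2], [0.2, 0.4]))
    print(role_from_command((0.0, 0.0, 0.0)))
    print(role_from_command((0.3, 0.0, 0.0)))
```

The point of the design is that the base controller keeps handling balance and walking, while the learned correction only nudges its output for teamwork.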

Key idea behind “feeling” forces without a force sensor:

- By comparing where its joints are versus where they’re supposed to be (the “offset”), the robot can guess if something is pushing or pulling on it—similar to how you can tell if a door is stuck because your arm feels different when you try to push it.
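
Here is a minimal sketch of that “offset” idea, assuming a standard PD position controller (the paper states that PD position control is used for actuation). The gains `kp` and `kd`, the function names, and the example numbers are hypothetical. The sketch also ignores gravity and friction, so treat the result as a rough hint about outside forces rather than a measurement.

```python
from typing import List, Sequence


def joint_offsets(q_target: Sequence[float], q: Sequence[float]) -> List[float]:
    """Difference between where each joint should be and where it is."""
    return [qt - qi for qt, qi in zip(q_target, q)]


def pd_torque(q_target: float, q: float, qd: float,
              kp: float, kd: float) -> float:
    """Torque a PD position controller commands for one joint.

    When the joint is held still (qd == 0) this torque balances whatever
    load is acting on the joint, so a larger offset suggests a larger push.
    """
    return kp * (q_target - q) - kd * qd


if __name__ == "__main__":
    # Hypothetical numbers: the elbow is 0.05 rad short of its target.
    print(joint_offsets([0.5, 1.0], [0.45, 1.0]))
    print(pd_torque(q_target=0.5, q=0.45, qd=0.0, kp=40.0, kd=1.0))
```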

What they found and why it matters

The main results, covering both performance and usefulness:

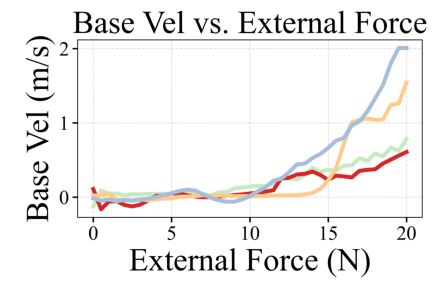

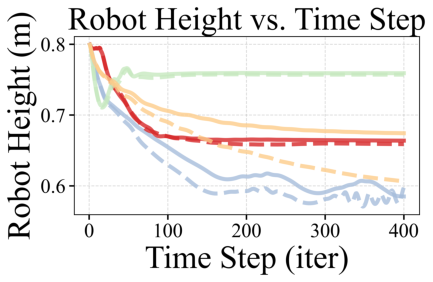

- Reduced human effort: In simulations, the robot cut the human’s needed effort by roughly 25–31% compared to other methods, while keeping the object steady. In real-world user studies with 23 people, participants reported about a 27% improvement over baselines.

- Better coordination: The robot closely matched the human’s movement with small errors in both straight-line speed and turning. This means it felt more like a good partner than a stiff machine.

- Works in many situations: It handled different objects (boxes, desks, stretchers, carts) and different movements (going straight, turning, climbing slopes), showing strong generalization.

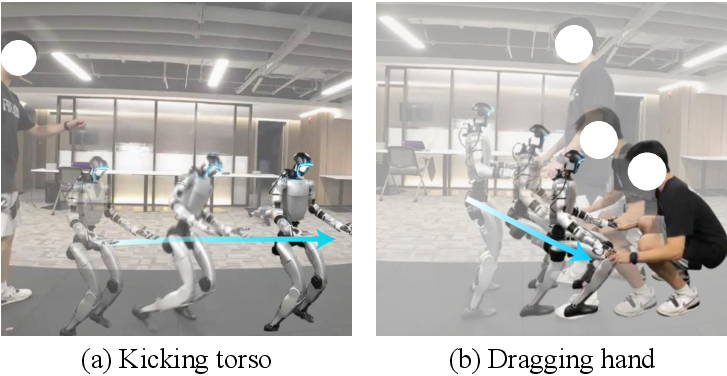

- Natural “give and take” (compliance): The robot would resist when it needed to stay stable (like holding posture), but it would also “go with” gentle pushes at the hands or arms to start moving together—just like a human partner would.

- No extra sensors needed: The final model only uses the robot’s built-in body sensors. That makes it simpler, cheaper, and easier to deploy in real places like homes, hospitals, or factories.

Why this research is important

If robots can safely and smoothly carry things with people, it can make a big difference in:

- Healthcare: Helping lift and move patients or medical equipment.

- Home assistance: Helping carry furniture or heavy boxes.

- Factories and warehouses: Teaming up with workers to move awkward or heavy items.

- Emergency response: Assisting in rescue tasks where quick, coordinated carrying is needed.

Because COLA doesn’t depend on extra cameras or complex force sensors, it’s more practical to use in the real world. Being able to switch between leading and following also makes it more flexible and intuitive for human partners.

Limitations and what’s next

The method already works well with only body sensors, but it could get even better by adding:

- Vision (cameras) or touch sensors to understand the scene and contact more precisely.

- Smarter planning so the robot can decide on its own how best to help (for example, choosing paths or lifting strategies).

- Broader training for even more object types and teamwork situations.

In short, COLA shows a promising way for humanoid robots to become helpful, polite teammates when carrying objects with people—making shared tasks easier, safer, and more natural.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Based on the paper’s methodology, experiments, and claims, the following unresolved gaps and open questions remain for future research to address:

- Missing direct force sensing: The policy infers interaction forces from joint-state offsets without validating accuracy against ground-truth force/torque sensors; quantify this proxy’s fidelity and explore integrating wrist/hand F/T sensing, tactile arrays, or impedance control for safer, more precise load sharing.

- No autonomous role negotiation: “Leader” vs “Follower” is imposed via velocity commands rather than inferred online; develop policies that detect human intent and dynamically switch or negotiate roles, including conflict resolution when human and robot goals diverge.

- Simplified human model in simulation: The “support body” with a 6-DoF joint and PD control does not capture realistic human gait, compliance, delays, grip changes, or stochastic behaviors; incorporate biomechanical human models, variable compliance, unpredictable stops/jerks, and multi-contact interactions (e.g., hip/torso coupling).

- Limited perception: The student operates on proprioception only; evaluate the benefits and risks of adding vision (human pose, object pose, obstacle detection) and tactile sensing for intent recognition, obstacle avoidance, and grasp adaptation, and quantify how multi-modal inputs improve safety and performance.

- Safety guarantees and failure handling: No analysis of passivity, maximum contact forces, emergency behaviors, or safe fail-states; design and verify safety-aware controllers with formal guarantees (e.g., passivity, CLF/CBF), plus reflexes for slips, drops, stumbles, or unexpected human motions.

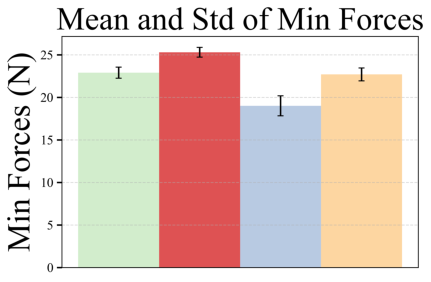

- Inconsistent and narrow effort metrics: Reported “effort reduction” percentages (24.7%, 31.47%, 27.4%) are inconsistent and rely on limited proxies (minimum force to initiate movement, simulated horizontal forces); establish standardized, reproducible protocols measuring vertical/horizontal load sharing, human biomechanics (EMG, ground-reaction forces), and metabolic cost.

- Reward design sensitivity: The composite reward (e.g., velocity tracking, height diff, force penalty) lacks ablation and sensitivity analysis; study how weights and terms (e.g., jerk, comfort, torque smoothness) affect learned behaviors and minimize unintended strategies.

- Object diversity and load ranges: Real-world tests covered 3–20 kg rigid objects; assess generalization to heavier loads (>40 kg), deformable/fragile items, articulated objects, long/awkward geometries, off-center mass distributions, and compliance variability.

- Terrain and environment scope: Beyond straight paths and slopes, evaluate stairs, curbs, uneven or loose surfaces (gravel, grass), narrow passages, and cluttered environments with obstacle avoidance while co-carrying.

- Grasp acquisition and regrasp: Hands were fixed in predefined grasps and fingers excluded; integrate perception-driven grasping, regrasping strategies, slip detection, and recovery (including finger control) for picking up/lowering and handling varied handles/edges.

- Real-world force instrumentation: “Minimum force to move robot” is reported without continuous interaction force/torque logs; instrument handles with 6-axis F/T sensors to capture time-series interaction profiles and validate compliance, load split, and stability.

- Personalization to the human: A fixed ~15 N threshold to trigger following is used; develop adaptive personalization to user strength, intent cues, and preferences (thresholds, responsiveness) learned online or via brief calibration.

- Long-horizon robustness: Duration, thermal limits, battery draw, and behavior drift over extended carrying tasks are not studied; evaluate long-horizon stability, comfort, energy consumption, and consistency across minutes-long sessions.

- Cross-platform transferability: Trained/deployed on a single robot (G1) with PD position control; test transfer to torque-controlled and variable-stiffness humanoids, different kinematics, and actuators, including hardware-aware policy adaptation.

- Distillation robustness: Student is trained via behavioral cloning, which is vulnerable to covariate shift; compare against interactive imitation (e.g., DAgger), privileged-to-proprioceptive RL fine-tuning, and quantify the teacher–student performance gap.

- Privileged object-state gap: Teacher uses ground-truth object pose/velocity while the student has none; characterize degradation under diverse objects and dynamics, and investigate onboard object-state estimators (vision/tactile) and uncertainty-aware policies.

- Failure modes and recovery strategies: No systematic analysis of errors (loss of grip, human stumble, sudden stops, collisions); design detection and recovery mechanisms and evaluate incident rates and recovery success.

- Comparative baselines: Baselines are limited (Vanilla MLP, goal estimation, Transformer) and omit strong human–robot co-carry systems (e.g., haptic-based model-based WBC, H2-COMPACT) under matched conditions; perform rigorous benchmarking against state-of-the-art.

- User study design: The 23-participant study reports mean ratings without demographics, statistical tests, or inter-rater reliability; conduct broader, controlled studies (power analysis, significance tests) across diverse populations and tasks.

- Real-time system characterization: Inference latency, control frequency, and compute load on the real robot are not reported; characterize real-time performance, jitter, and power/thermal constraints under dynamic contacts.

- Sim-to-real strategies: Domain randomization for object mass/inertia, surface friction, delays, and perturbations is not detailed; implement systematic randomization and quantify sim-to-real gaps with targeted robustness tests.

- High-level planning and communication: “Leader” mode relies on pre-specified goal commands; integrate shared path planning, natural-language/gesture interfaces, and negotiation protocols for destination, speed, and maneuver coordination.

- Explicit load-sharing control: The policy aims to reduce human effort but does not explicitly regulate vertical/horizontal load split; design controllers that actively target ergonomically safe load distributions subject to human comfort constraints.

- Multi-human scenarios: Only one human partner is considered; extend to multi-agent collaboration (two humans and one robot, or teams), including role allocation, coordination strategies, and communication among multiple partners.
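
The distillation-robustness item above asks for the teacher–student gap to be quantified. One simple starting point is the quantity that behavioral cloning minimizes: the mean squared error between teacher and student actions on the same states. The sketch below assumes actions are logged as lists of floats, one list per time step; the function name and data layout are hypothetical, and a low value here does not by itself rule out covariate shift at deployment.

```python
from typing import Sequence


def action_mse(teacher_actions: Sequence[Sequence[float]],
               student_actions: Sequence[Sequence[float]]) -> float:
    """Mean squared error over all action dimensions and time steps."""
    if len(teacher_actions) != len(student_actions):
        raise ValueError("need the same number of time steps")
    total = 0.0
    count = 0
    for t_act, s_act in zip(teacher_actions, student_actions):
        if len(t_act) != len(s_act):
            raise ValueError("action dimensions differ")
        for t, s in zip(t_act, s_act):
            total += (t - s) ** 2
            count += 1
    if count == 0:
        raise ValueError("no actions to compare")
    return total / count


if __name__ == "__main__":
    teacher = [[1.0, 2.0], [0.0, 0.0]]
    student = [[1.0, 4.0], [0.0, 0.0]]
    print(action_mse(teacher, student))
```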

Glossary

- 6-DoF (Six Degrees of Freedom): A kinematic property indicating independent motion along three translational and three rotational axes. Example: "which is connected to the support body via a $6$-DoF joint."

- Ablation study: An experimental analysis that removes or varies components of a system to assess their impact on performance. Example: "The lower part of \cref{tab:main_exp_results} presents an ablation study on different choices of our model architecture of the student policy."

- Behavioral cloning: A supervised learning method that trains a policy to mimic expert actions via recorded demonstrations. Example: "We use behavioral cloning to distill the teacher policy into a student policy, training the student to mimic the teacher by minimizing the mean squared error between their outputs during interactions with the environment."

- Closed-loop training environment: A simulation setup where the agent’s actions influence future observations through dynamic interactions, enabling feedback-driven learning. Example: "In the second training step, we introduce a closed-loop environment for policy training to explicitly model the dynamic interaction between the human, object, and humanoid."

- Compliance (robotics): The ability of a robot to yield or adapt to external forces, enabling safe, force-aware interaction. Example: "Position-only control lacks the compliance required for human-humanoid interaction~\cite{yu2021adaptive,hartmann2024deep}, as it operates without force awareness."

- Distillation (policy distillation): Transferring knowledge from a larger or privileged model (teacher) to a smaller or restricted model (student). Example: "In the distillation step, we transfer the expertise of the teacher policy ($\mathcal{F}^{\text{wbc}} + \mathcal{F}^{\text{teacher}}$) into a student policy, $\mathcal{F}^{\text{student}}$, designed for real-world deployment."

- End-effector: The terminal component of a robot arm (e.g., hand or gripper) that interacts with objects. Example: "The end-effector goal command, representing the $6$-DoF target pose of the robot's wrist, is generated using SLERP."

- Ground truth (GT): The accurate, authoritative data used as a reference for training or evaluation. Example: "represent the GT states of the carried object with a history of length ."

- Haptic cues: Signals derived from force or touch sensing that inform control or intent inference. Example: "While H$2$-COMPACT~\cite{bethala2025collaboration} proposes a learning-based model that uses haptic cues to predict horizontal velocity commands, it still operates with limited scope."

- Isaac Lab: A high-performance robotics simulation and learning framework by NVIDIA for large-scale training. Example: "We train our policy in Isaac Lab on a single RTX $4090$D GPU using PPO with $4096$ parallel environments."

- Loco-manipulation: Integrated control of locomotion and manipulation tasks in a single robotic policy. Example: "which have demonstrated the efficacy of reinforcement learning for training humanoid robot loco-manipulation and have achieved notable success in real-world deployments"

- Multi-Layer Perceptron (MLP): A feedforward neural network composed of multiple layers of fully connected neurons. Example: "Vanilla MLP: We implement the policy as an MLP, initialize it with the weights of the whole-body controller, and train it end-to-end with PPO."

- Open-loop (control): A control approach that operates without using feedback from the environment to adjust actions. Example: "or high-level intention prediction in open-loop object-finding or serving tasks~\cite{gao2021hybrid,sheng2025human,liu2025idagc,zhi2025closed}."

- PD control (Proportional–Derivative control): A control method using proportional and derivative terms to track target positions or velocities. Example: "We use PD position control for actuation."

- Privileged information: Data available during training (e.g., simulation ground-truth) but not at test time, used to guide learning. Example: "this teacher is granted access to privileged information ($\mathcal{O}^{\text{priv}}$), which includes the object's ground-truth pose and velocity history."

- Proprioception: Internal sensing of a robot’s own body states (e.g., joint positions, velocities, forces). Example: "provides a proprioception-only policy that enables compliant human-humanoid collaboration for carrying diverse objects across various movement patterns."

- Proximal Policy Optimization (PPO): A reinforcement learning algorithm that stabilizes policy updates via clipped objectives. Example: "we adopt the PPO algorithm to conduct reinforcement learning-based training for humanoid collaboration in our research."

- Residual policy: A learned correction term added on top of a base controller or policy to refine behavior. Example: "we train a residual teacher policy on top of the pre-trained WBC policy in the closed-loop training environment."

- SLERP (Spherical Linear Interpolation): An interpolation method on the unit sphere for smoothly blending orientations (quaternions). Example: "The end-effector goal command, representing the $6$-DoF target pose of the robot's wrist, is generated using SLERP."

- Teacher–student framework: A training paradigm where a teacher model guides a student model, often via distillation. Example: "To incorporate these, we adopt a teacher-student framework in which the teacher policy, trained with both proprioceptive and privileged object-state information, is learned with rewards on the humanoid motion (e.g, robust locomotion across varied terrains) and the object status (e.g, maintaining a stretcher)."

- Whole-Body Control (WBC): Coordinated control of all robot joints to achieve locomotion and manipulation goals simultaneously. Example: "To this end, we formulate the collaboration policy as a residual policy atop the base whole-body control policy, enabling the model to implicitly infer collaborative intent directly from physical interaction."

- Whole-body coordination: The integrated, simultaneous use of multiple body parts to perform complex tasks. Example: "In this work, we propose a residual learning framework that enables humanoids to collaborate with humans using whole-body coordination, significantly broadening the range of collaborative carrying scenarios that humanoid robots can handle."
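
To make the SLERP entry above concrete, here is a minimal sketch of spherical linear interpolation between two unit quaternions. It is the textbook formula, not the paper's code. The `(w, x, y, z)` ordering and the `0.9995` cutoff for nearly identical orientations are assumptions chosen for this example.

```python
import math
from typing import Tuple

Quat = Tuple[float, float, float, float]  # assumed order: (w, x, y, z)


def slerp(q0: Quat, q1: Quat, t: float) -> Quat:
    """Interpolate from q0 (t=0) to q1 (t=1) along the shortest arc."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q are the same rotation; flip to take the shorter path.
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:
        # Nearly identical orientations: fall back to linear interpolation.
        out = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        theta = math.acos(dot)
        sin_theta = math.sin(theta)
        w0 = math.sin((1.0 - t) * theta) / sin_theta
        w1 = math.sin(t * theta) / sin_theta
        out = tuple(w0 * a + w1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in out))
    return tuple(c / norm for c in out)


if __name__ == "__main__":
    identity = (1.0, 0.0, 0.0, 0.0)
    # 90-degree rotation about the z axis.
    quarter_turn = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))
    print(slerp(identity, quarter_turn, 0.5))
```

Halfway between no rotation and a 90-degree turn about z, the result corresponds to a 45-degree turn about z, which is the smooth blending the glossary entry describes.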

Practical Applications

Immediate Applications

Below are actionable, real-world uses that can be deployed now, along with relevant sectors, potential tools/products/workflows, and key assumptions/dependencies.

- Healthcare: stretcher, bed, and equipment transport assist — sectors: healthcare, public safety

- Description: A nurse or EMT holds one end of a stretcher or medical cart while the humanoid uses the follower mode to share load, stabilize height, and coordinate direction (straight, turning, slopes).

- Tools/products/workflows: “Carry Assist Mode” on existing humanoids; ROS node exposing a simple leader/follower toggle; standard operating procedures (SOPs) for human–humanoid team lifts; quick user training for push/pull intent.

- Assumptions/dependencies: Object has robust grasp points; moderate payloads (demonstrated with 11 kg stretcher, 20 kg cart); adequate floor friction; compliant actuation and safe stops; facility accepts collaborative robot deployment and has basic HRI training.

- Warehousing and logistics: two-person lift replacement for bulky boxes and carts — sectors: logistics, retail, e-commerce fulfillment

- Description: One worker plus humanoid move oversized cartons or roll cages; robot tracks human linear/turning velocities and maintains balanced object height to reduce musculoskeletal strain.

- Tools/products/workflows: “Team-Lift Automation” workflow; ergonomic program dashboards using the paper’s metrics (Avg. external force, Height error); integration with warehouse management systems (WMS) for task handoffs.

- Assumptions/dependencies: Clear path with minimal obstacles; task payloads within current robot capability; worker acceptance and safety guardrails; battery/runtime fit for shift needs.

- Manufacturing and assembly support: panel, jig, and fixture carrying — sectors: manufacturing, industrial robotics

- Description: Humanoid coordinates with technicians to move large flat panels or fixtures between stations, maintaining height alignment during load/unload or lowering to benches.

- Tools/products/workflows: Whole-Body Carry skill module bundled with WBC; line-side SOP for human-led movement; adjustable compliant thresholds for different fixtures.

- Assumptions/dependencies: Known grasp geometry; safe workspace boundaries; robot PD position control tuned for fixtures; limited need for obstacle avoidance (policy is proprioception-only).

- Event setup, hospitality, and facilities: desks, tables, stage components — sectors: hospitality, venue operations, corporate facilities

- Description: Staff plus humanoid carry furniture or staging elements; robot assists with turning, ramp navigation, and height synchronization during lowering/raising.

- Tools/products/workflows: “Venue Carry Assist” routine; rapid role switching (leader for cart pushing, follower for team lifts); simple visual cue cards indicating preferred grasp points.

- Assumptions/dependencies: Moderate loads; indoor environments with ramps rather than stairs; minimal crowds during movement; defined safety perimeter.

- Eldercare and home assistance: furniture repositioning and grocery/cart help on slopes — sectors: eldercare, smart home

- Description: In residential or assisted-living contexts, the humanoid helps lift one side of a small furniture item or assists with cart dragging up ramps, reducing caregiver effort.

- Tools/products/workflows: Home “Assist Carry” preset with conservative speed and compliance thresholds; caregiver training module to use push/pull cues; built-in emergency stop.

- Assumptions/dependencies: Object stability and grasp points; low-speed operation; caregiver supervision; existing policies permitting robots in eldercare facilities.

- Safety and ergonomics programs: reduce human effort with measurable gains — sectors: occupational safety, HR/ergonomics

- Description: Deploy the policy to cut average human effort (paper reports ~25–31% reduction) and track Height error and Avg. external force to quantify injury risk reductions.

- Tools/products/workflows: Ergonomic analytics dashboard; periodic compliance calibration (threshold near ~15 N for movement initiation in follower mode); incident reporting integrated with safety management systems.

- Assumptions/dependencies: Consistent tasks amenable to team lifts; ergonomic buy-in; governance for robot–worker collaboration and incident review.

- Education and research: reproducible RL collaboration experiments — sectors: academia, robotics R&D

- Description: Use the released policy and closed-loop training environment to study proprioception-only collaboration, teacher–student distillation, and sim-to-real for whole-body coordination.

- Tools/products/workflows: Isaac Lab-based training pipelines; baseline comparison using the paper’s metrics; curricula for RL courses emphasizing closed-loop, object-constrained interaction.

- Assumptions/dependencies: Access to simulation tools and a humanoid platform with similar WBC; domain randomization and tuning for hardware differences.

- Robotics OEM feature packaging: turnkey “Compliant Carry” capability — sectors: robotics platforms, software

- Description: Integrate COLA as a firmware/software feature in humanoid control stacks, exposing leader/follower modes and compliance tuning for customers.

- Tools/products/workflows: SDK module with ROS/REST APIs; safety interlocks; parameter profiles per sector (healthcare, warehouse, facilities).

- Assumptions/dependencies: Vendor support; certification for collaborative operation; field service and user training.

Long-Term Applications

Below are applications that will benefit from further research, scaling, sensor integration, certification, or workflow redesign before broad deployment.

- Autonomous route planning and obstacle avoidance during carries — sectors: logistics, healthcare, facilities

- Description: Move from human-led following to robot-autonomous leader mode with path planning, obstacle clearance, and dynamic rerouting.

- Tools/products/workflows: Vision/lidar modules; shared intent planner; HRI interface for route approval; safety-rated autonomy stack.

- Assumptions/dependencies: Multi-modal perception beyond proprioception; robust collision avoidance; certified safety under ISO/ANSI standards.

- Vision and tactile integration for unstructured environments — sectors: robotics, construction, public safety

- Description: Add cameras and force/torque sensors to recognize object geometry, detect slippage, and adapt grasps dynamically in cluttered or outdoor settings.

- Tools/products/workflows: Perception-to-control fusion; grasp detection; tactile slip monitoring; online compliance adaptation.

- Assumptions/dependencies: Sensor calibration; fusion latency; robustness to lighting/weather; hardware upgrades to end-effectors.

- Multi-human and multi-humanoid team carrying — sectors: manufacturing, construction, disaster relief

- Description: Coordinate larger teams to move oversized loads with dynamic role allocation, distributed compliance, and formation control.

- Tools/products/workflows: Multi-agent RL; formation planners; inter-robot communication; shared load balancing.

- Assumptions/dependencies: Reliable V2V/V2H communication; formal safety and liability frameworks; training for team coordination.

- Emergency response and patient transport in complex terrain — sectors: public safety, defense, healthcare

- Description: Robust carry in debris-filled, uneven terrains; stairs; evacuation scenarios with autonomous stability guarantees.

- Tools/products/workflows: Terrain assessment; active stabilization; mission planning under uncertainty; ruggedized hardware.

- Assumptions/dependencies: Enhanced locomotion capabilities; high compliance with unpredictable forces; regulatory approval for emergency use.

- Standardization and certification for humanoid collaboration — sectors: policy, standards bodies, insurance

- Description: Develop standards for safe human–humanoid co-carry (speed limits, force thresholds, HRI protocols), enabling widespread adoption and insurance coverage.

- Tools/products/workflows: Industry working groups (ISO/IEC/ANSI/RIA); certification labs; compliance documentation templates.

- Assumptions/dependencies: Consensus across regulators, manufacturers, and labor groups; incident data to inform thresholds.

- Workforce redesign and ROI modeling for 1-human + 1-robot tasks — sectors: operations, HR, finance

- Description: Transition from two-person lifts to human–robot teams; quantify ROI via productivity and injury reduction; reskilling programs.

- Tools/products/workflows: Task audits; cost–benefit calculators; reskilling curricula; change management plans.

- Assumptions/dependencies: Organizational buy-in; union/worker council agreements; clear job classification updates.

- Language and gesture-based intent interfaces — sectors: software, HRI, education

- Description: Integrate natural language and gestures to set leader/follower roles, target velocity, and carry actions without physical cues.

- Tools/products/workflows: LLM-based intent parsing; multimodal HRI; safety filters; user onboarding modules.

- Assumptions/dependencies: Reliable speech/gesture recognition in noisy environments; intent ambiguity resolution; safety interlocks.

- Generalized co-manipulation beyond carrying: assembly, insertion, door and drawer operations — sectors: manufacturing, home robotics

- Description: Extend proprioceptive compliance to joint tasks that require fine force adaptation, height/pose synchronization, and dynamic role switching.

- Tools/products/workflows: Task libraries; shared impedance control; hybrid force–position control with policy learning.

- Assumptions/dependencies: Tactile/force sensing for fine tasks; expanded training curricula; broader motion primitives.

- Energy-aware carry policies and hardware co-design — sectors: robotics hardware, energy

- Description: Optimize policy for battery life and thermal limits during prolonged assist tasks; co-design actuators and gearing for compliant carrying.

- Tools/products/workflows: Energy-aware RL rewards; runtime monitors; actuator selection guides.

- Assumptions/dependencies: Detailed power models; thermal safety margins; hardware redesign cycles.

- Academic benchmarks and shared datasets for human–humanoid collaboration — sectors: academia, standards

- Description: Establish cross-institution benchmarks covering velocity/height tracking, human effort reduction, and compliance under disturbances.

- Tools/products/workflows: Dataset collection protocols; standardized test objects and terrains; community challenges and leaderboards.

- Assumptions/dependencies: Shared access to humanoid platforms; common logging formats; reproducibility standards.
