- The paper introduces the Natural Language Tools (NLT) framework, which decouples tool selection from response generation to improve LLM tool-calling accuracy.

- NLT employs a three-stage modular architecture that reduces task interference and cuts token usage by an average of 31.4% compared with structured tool calling.

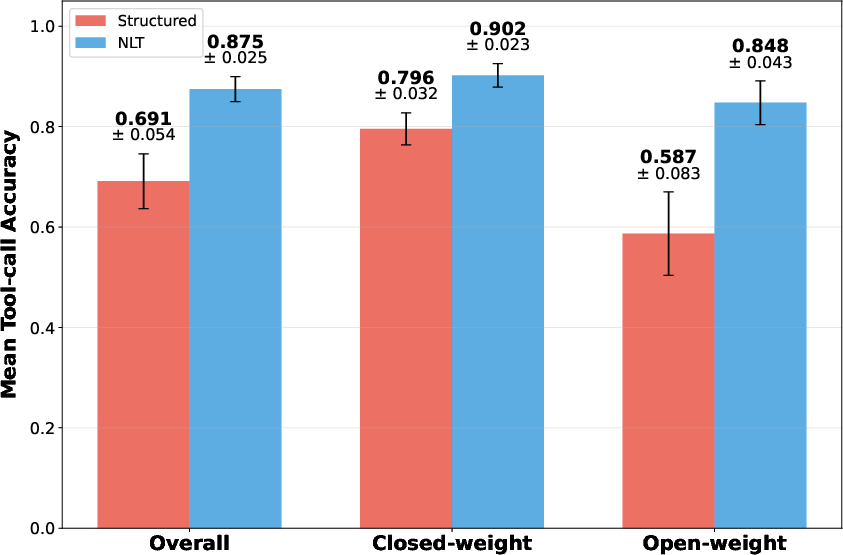

- Evaluation with 6,400 trials in customer service and mental health domains shows an 18.4 percentage point accuracy boost compared to traditional structured approaches.

Introduction

The paper introduces Natural Language Tools (NLT), a framework designed to improve the tool-calling capabilities of LLMs by replacing programmatic JSON tool calls with natural language. Structured tool calling requires models to conform to rigid schemas such as JSON, which can degrade performance through task interference and increased context length. NLT addresses these drawbacks by decoupling tool selection from response generation and removing programmatic constraints. This improves tool-call accuracy and reduces output variance.

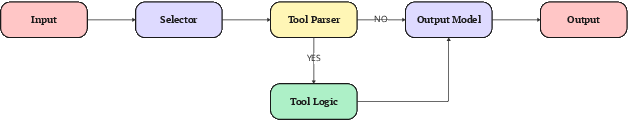

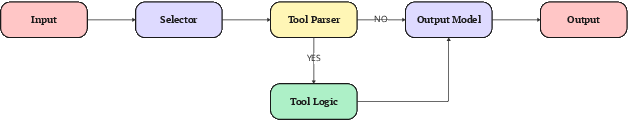

NLT System Architecture

NLT employs a modular architecture comprising three stages: tool selection, tool execution, and response generation. This architecture separates the tool selection process into a dedicated model step, thereby reducing task interference and token overhead.

Figure 1: A simplified NLT architecture with a single input LLM and a single output LLM.
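The three stages can be wired together as in the minimal sketch below. It is not the paper's implementation. The tool registry, the tool names, the prompt wording, and both "LLM" functions are hypothetical stand-ins: `selector_llm` returns a fixed answer and `output_llm` simply echoes its prompt, so the control flow can run without a model.

```python
from typing import Callable, Dict, Tuple

# Hypothetical tool registry: tool name -> (description, function taking the user message).
TOOLS: Dict[str, Tuple[str, Callable[[str], str]]] = {
    "check_order_status": ("Look up the status of a customer's order.",
                           lambda msg: "Order #123 shipped yesterday."),
    "escalate_to_human": ("Hand the conversation to a human agent.",
                          lambda msg: "Escalation ticket created."),
}


def selector_llm(prompt: str) -> str:
    """Hypothetical Stage 1 model stand-in: ignores the prompt, returns fixed YES/NO lines."""
    return "check_order_status: YES\nescalate_to_human: NO"


def output_llm(prompt: str) -> str:
    """Hypothetical Stage 3 model stand-in: echoes its prompt instead of generating text."""
    return prompt


def nlt_pipeline(user_message: str) -> str:
    # Stage 1: tool selection, answered in plain natural language (one YES/NO per tool).
    tool_list = "\n".join(f"- {name}: {desc}" for name, (desc, _) in TOOLS.items())
    decisions = selector_llm(
        f"User message: {user_message}\nTools:\n{tool_list}\n"
        "For each tool, answer '<tool>: YES' or '<tool>: NO'."
    )

    # Stage 2: tool execution, driven by a simple line parser over the selector output.
    results = []
    for line in decisions.splitlines():
        name, _, answer = line.partition(":")
        name = name.strip()
        if answer.strip().upper() == "YES" and name in TOOLS:
            results.append(f"{name} -> {TOOLS[name][1](user_message)}")

    # Stage 3: response generation from the user message plus the tool results.
    return output_llm(f"User message: {user_message}\nTool results:\n" + "\n".join(results))


print(nlt_pipeline("Where is my order?"))
```

The selector never emits JSON, and only the selector sees the tool list. The output model sees only the tool results, which is the separation of concerns the architecture relies on.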

Stage 1: Tool Selection

The selector model receives the input together with an NLT prompt that lists each available tool and asks the model to decide, with a simple YES or NO, whether to call each one. Because the selector only chooses tools and does not also have to write the user-facing response, several tools can be selected at once without task interference.

Stage 2: Tool Execution

A parser reads the selector model's YES/NO decisions and executes the function for every tool marked YES.
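A parser for this stage might look like the sketch below. It assumes the selector writes one `tool_name: YES` or `tool_name: NO` line per tool, optionally prefixed by a bullet. That format, the function names `parse_decisions` and `execute_selected`, and the example tools are all hypothetical. Matching is case-insensitive, and names the registry does not know are skipped rather than raising an error.

```python
import re
from typing import Callable, Dict, List

# Matches lines such as "get_weather: YES" or "- Get_Weather : no" (assumed selector format).
DECISION_LINE = re.compile(r"^\s*[-*]?\s*([A-Za-z_]\w*)\s*:\s*(YES|NO)\b", re.IGNORECASE)


def parse_decisions(selector_output: str) -> Dict[str, bool]:
    """Map each tool name (lowercased) in the selector output to True for YES, False for NO."""
    decisions: Dict[str, bool] = {}
    for line in selector_output.splitlines():
        match = DECISION_LINE.match(line)
        if match:
            decisions[match.group(1).lower()] = match.group(2).upper() == "YES"
    return decisions


def execute_selected(selector_output: str,
                     registry: Dict[str, Callable[[], str]]) -> List[str]:
    """Run every registered tool marked YES. Registry keys must be lowercase; unknown names are skipped."""
    results = []
    for name, selected in parse_decisions(selector_output).items():
        if selected and name in registry:
            results.append(f"{name}: {registry[name]()}")
    return results


registry = {
    "lookup_order": lambda: "order shipped",
    "crisis_resources": lambda: "hotline info",
}
output = "Lookup_Order: YES\n- crisis_resources: no\nrefund: YES"
print(execute_selected(output, registry))  # ['lookup_order: order shipped']
```

Tolerating small formatting deviations such as casing, bullets, and extra spaces is cheap with a line-oriented format. A strict JSON parser would instead reject the whole response.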

Stage 3: Response Generation

Finally, an output module incorporates the tool execution results into the model's response, closing the tool-calling loop.
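One way to hand tool results to the output model is sketched below. The function name `build_output_prompt`, the section wording, and the "no tools" placeholder are hypothetical; the paper's summary does not specify how results are formatted. The sketch only assembles the prompt string that would be sent to the output LLM.

```python
from typing import List


def build_output_prompt(system_prompt: str, user_message: str, tool_results: List[str]) -> str:
    """Assemble a hypothetical Stage 3 prompt: system prompt, tool results, then the user turn."""
    if tool_results:
        results_block = "\n".join(f"- {r}" for r in tool_results)
    else:
        # Say so explicitly, so the output model does not assume a tool ran.
        results_block = "- (no tools were called)"
    return (
        f"{system_prompt}\n\n"
        f"Results from tools called for this turn:\n{results_block}\n\n"
        f"User: {user_message}\n"
        "Assistant:"
    )


print(build_output_prompt("You are a support agent.", "Where is my order?",
                          ["lookup_order: order shipped"]))
```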

Implementation Details

The paper details the prompt structure for the selector model: a natural language template that includes the model's role, the purpose of the search, a list of tools, the output format, and an example output. This natural language approach plays to models' strengths and reduces the burden imposed by structured output formatting.
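The five template parts can be assembled in the order the paper lists them, as in the sketch below. The exact wording of each section, the function name `build_selector_prompt`, and the convention of marking the first tool YES in the example output are assumptions for illustration, not the paper's template.

```python
from typing import Dict


def build_selector_prompt(role: str, purpose: str, tools: Dict[str, str]) -> str:
    """Hypothetical selector prompt: role, purpose, tool list, output format, example output."""
    tool_lines = "\n".join(f"- {name}: {description}" for name, description in tools.items())
    output_format = "\n".join(f"{name}: YES or NO" for name in tools)
    # Illustrative example output: first tool selected, all others declined.
    example = "\n".join(f"{name}: {'YES' if i == 0 else 'NO'}" for i, name in enumerate(tools))
    return (
        f"{role}\n\n"
        f"{purpose}\n\n"
        f"Available tools:\n{tool_lines}\n\n"
        f"Answer with exactly one line per tool, in this format:\n{output_format}\n\n"
        f"Example output:\n{example}"
    )


tools = {
    "check_order_status": "Look up the status of a customer's order.",
    "escalate_to_human": "Hand the conversation to a human agent.",
}
print(build_selector_prompt("You are a tool selector for a customer service assistant.",
                            "Decide which tools would help answer the user's latest message.",
                            tools))
```

Every part is plain prose or a plain list, so no schema, quoting, or escaping rules are imposed on the model's answer.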

Evaluation and Results

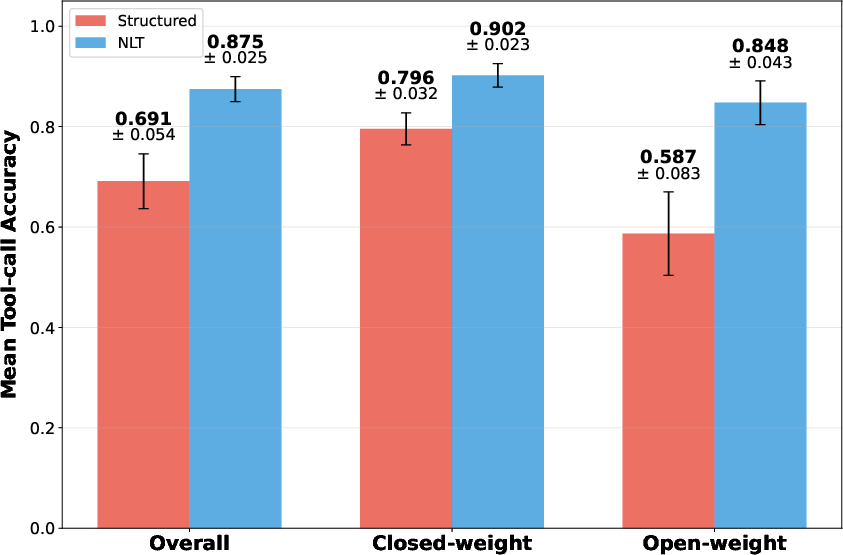

NLT was evaluated on 10 frontier models across 6,400 trials in customer service and mental health domains. NLT improved tool-calling accuracy by 18.4 percentage points and significantly reduced variance compared with structured tool calling.

Figure 2: Side-by-side comparison of NLT and structured approaches across three categories: overall results, closed-weight models, and open-weight models.

Open-weight models saw the largest accuracy gains under NLT, although closed-weight models also improved notably. These results held under perturbations to the prompt design, suggesting that NLT's benefits generalize across conditions.

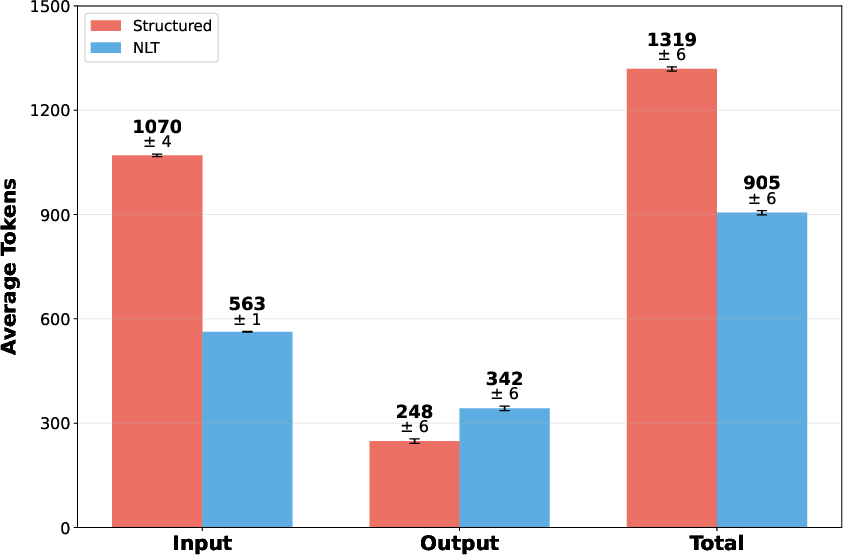

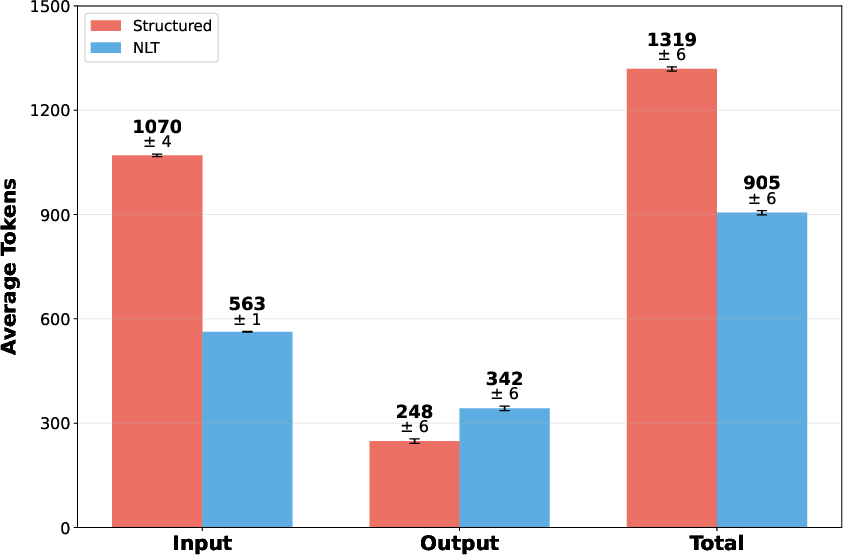

NLT reduces token usage by an average of 31.4%, primarily by cutting input tokens, since JSON schema definitions no longer need to be included in the prompt. Fewer tokens lower computational cost and shorten the context, and increased context length is one of the factors the paper identifies as degrading accuracy.

Figure 3: A comparison of token usage between NLT and structured tool calling approaches, charted across Input, Output, and Total tokens.
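The source of the input-token savings can be illustrated roughly by describing the same hypothetical tool in two ways and counting tokens. Real tokenizers differ, so `rough_token_count` is a crude proxy (an assumption) that counts word runs and individual punctuation marks. The JSON object follows the common function-calling layout with an empty parameter list, which isolates the structural overhead. The numbers it prints are illustrative and are not the paper's measurements.

```python
import json
import re


def rough_token_count(text: str) -> int:
    """Crude token proxy (assumption): each word run and each punctuation mark counts as one."""
    return len(re.findall(r"\w+|[^\w\s]", text))


# Hypothetical tool, described as a JSON function schema (parameters left empty)...
json_schema = {
    "type": "function",
    "function": {
        "name": "check_order_status",
        "description": "Look up the status of a customer's order.",
        "parameters": {"type": "object", "properties": {}},
    },
}
# ...and as a single natural-language line in an NLT-style tool list.
nlt_line = "- check_order_status: Look up the status of a customer's order."

structured = rough_token_count(json.dumps(json_schema))
natural = rough_token_count(nlt_line)
print(structured, natural, f"{1 - natural / structured:.0%} fewer")
```

The description text is identical in both versions. The difference comes entirely from keys, quotes, and braces, and that overhead repeats for every tool in every request.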

Discussion

The paper attributes NLT's improved performance to several mechanisms, including a reduced format burden and closer alignment with the predominantly natural language data LLMs are trained on. However, the approach introduces additional architectural considerations: system complexity, latency, and coupling between system prompts and tool definitions.

Conclusion

NLT represents a significant shift in LLM tool calling: it relies on natural language outputs rather than programmatic constraints. The approach improves tool-call accuracy and consistency, and it opens avenues for future work on LLM-based agent systems. The paper points to a promising research direction in which more flexible, natural language interactions extend the capabilities of LLMs.