- The paper presents a Mamba-based object detection framework integrated with reinforcement learning for energy-efficient navigation on edge devices.

- The methodology uses knowledge distillation and architectural optimization to reduce parameters by 31% and model size by 67% while maintaining accuracy.

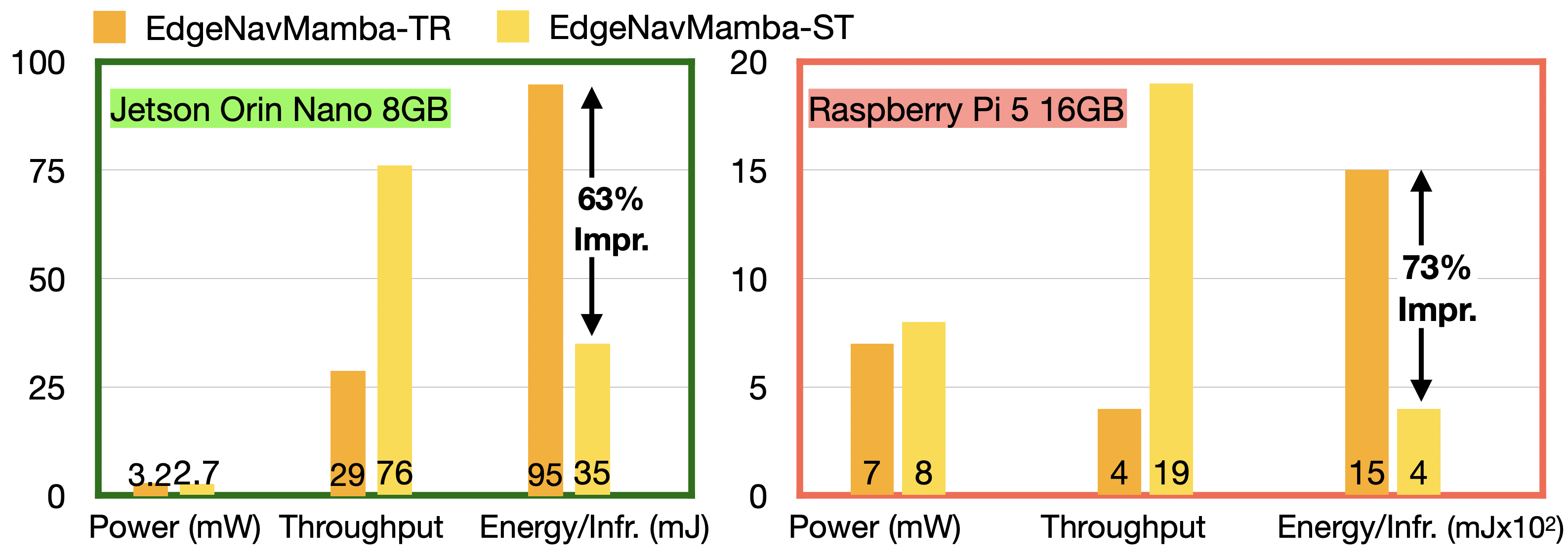

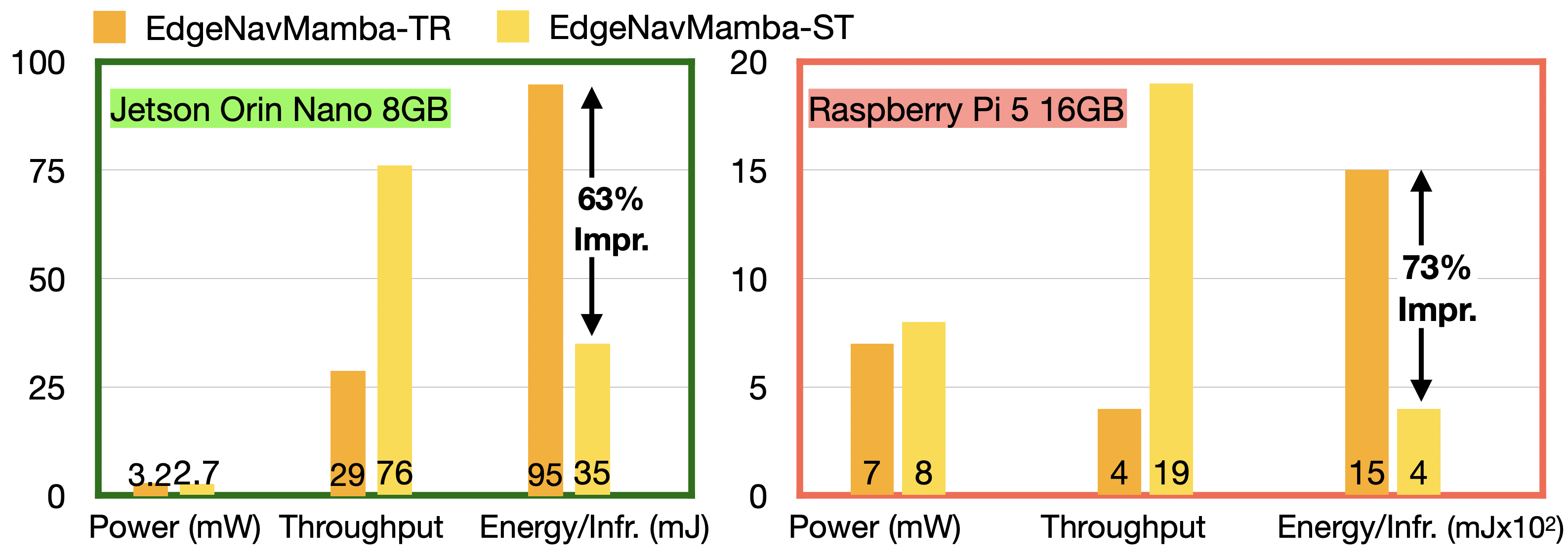

- Experimental results demonstrate up to 73% energy reduction on platforms like Jetson Orin Nano and Raspberry Pi 5, confirming real-time deployment viability.

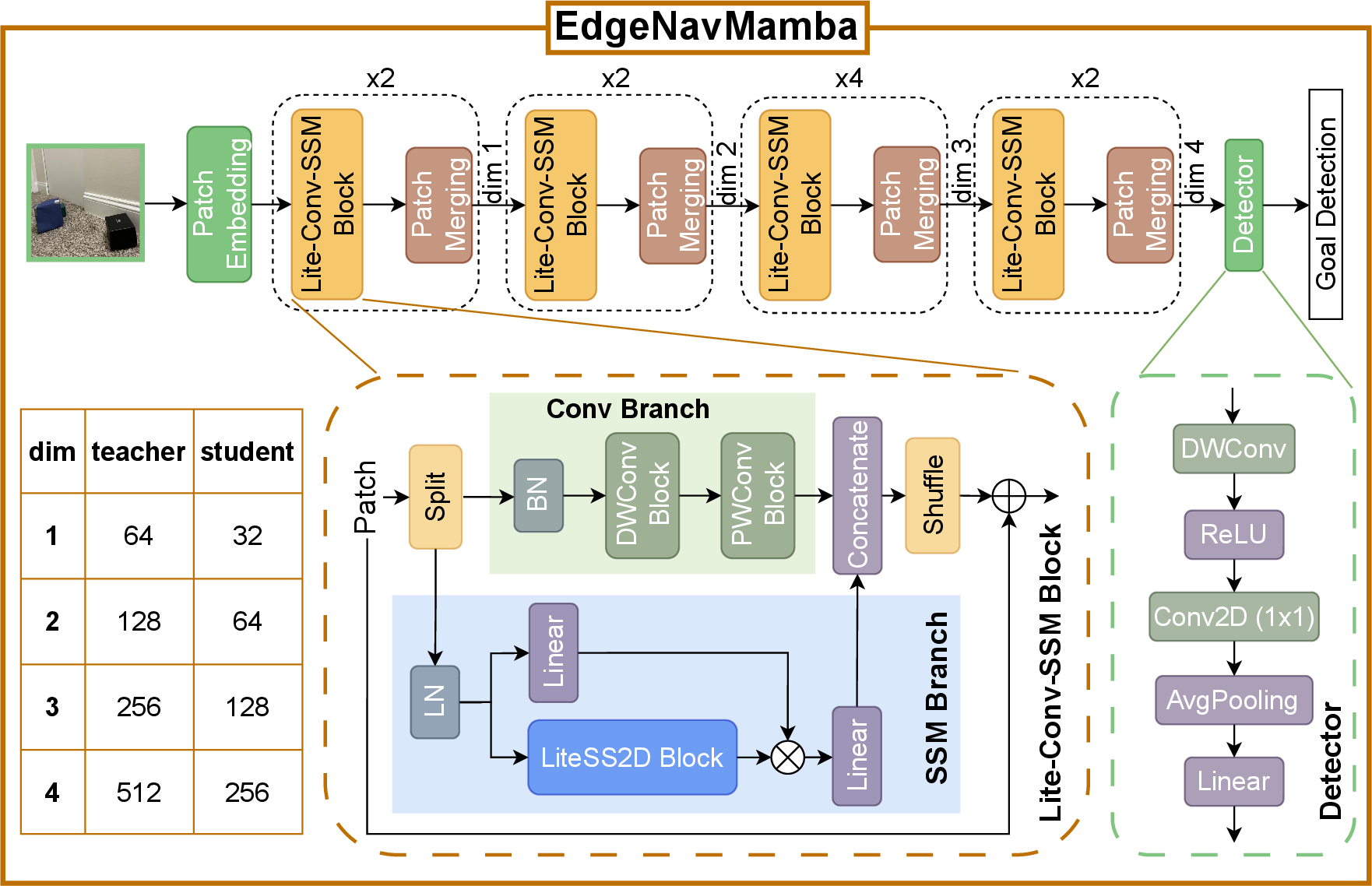

EdgeNavMamba: Mamba-Optimized Object Detection for Energy-Efficient Edge Devices

Introduction and Motivation

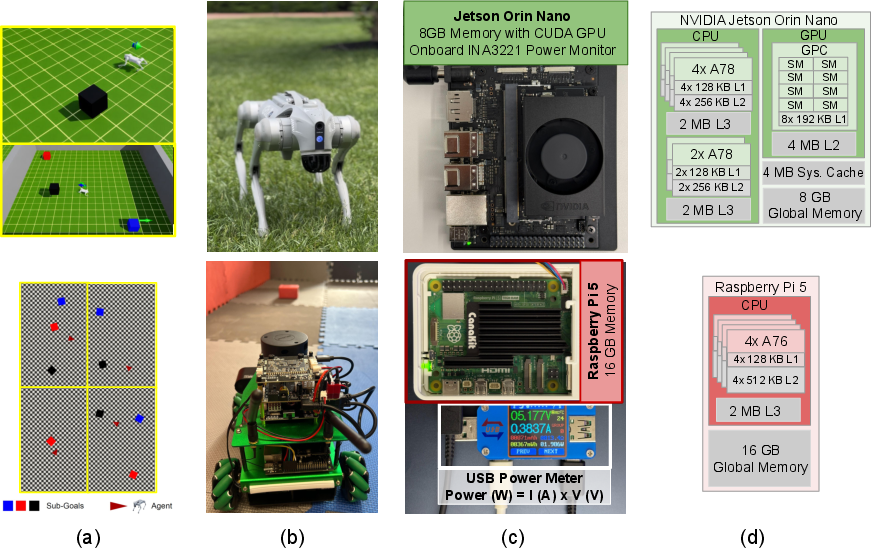

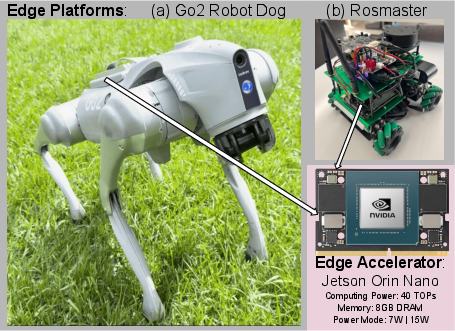

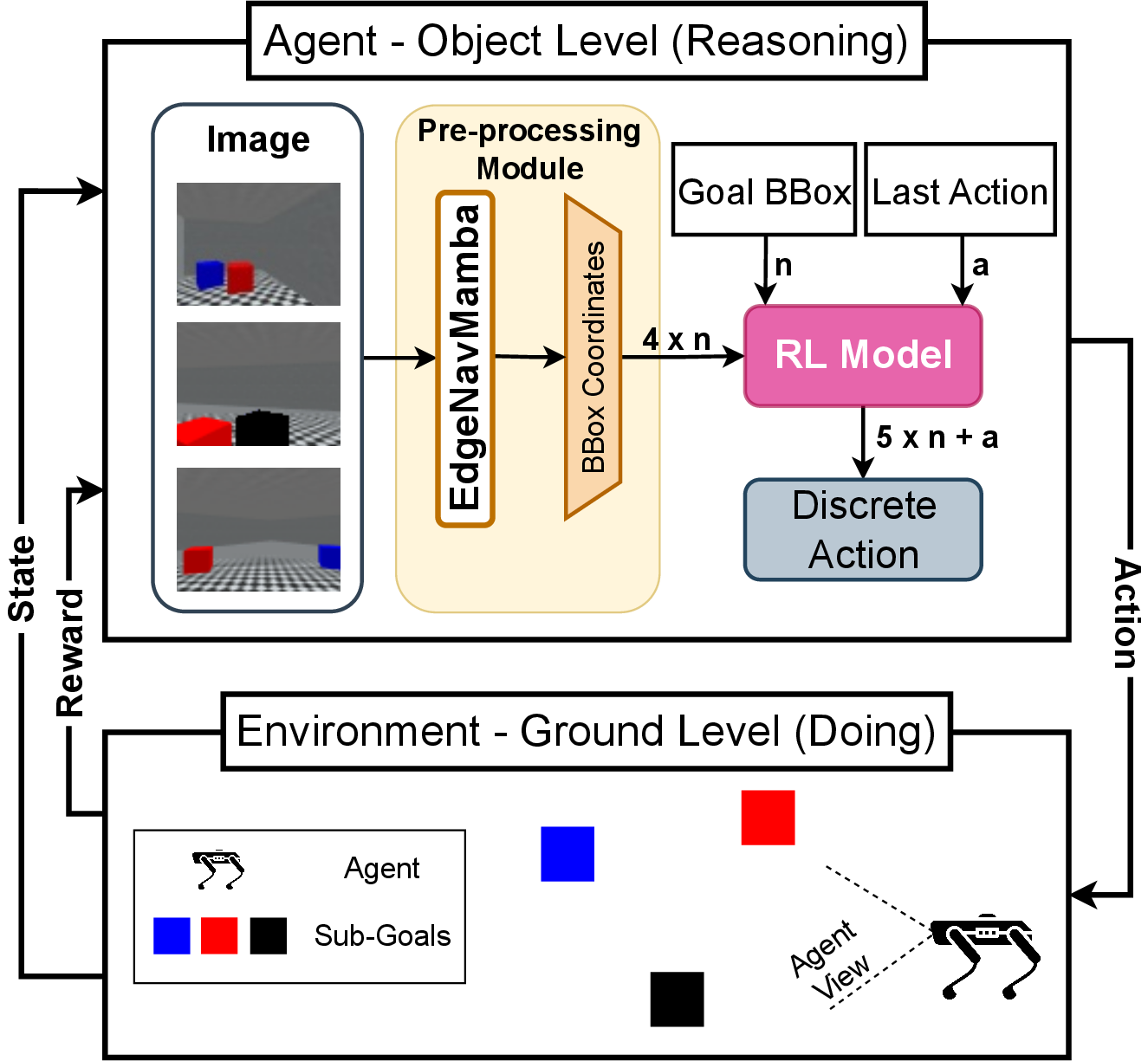

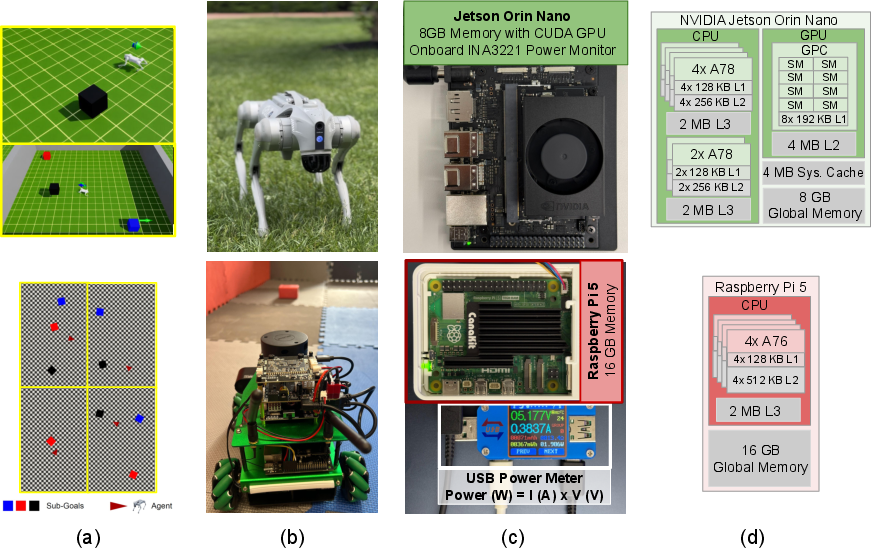

EdgeNavMamba addresses the challenge of deploying deep learning-based autonomous navigation on resource-constrained edge devices, where computational and energy efficiency are paramount. The framework integrates a Mamba-based object detector with reinforcement learning (RL) for goal-directed navigation, targeting platforms such as NVIDIA Jetson Orin Nano and Raspberry Pi 5. The approach leverages architectural optimization and knowledge distillation (KD) to produce compact models that fit within cache memory, thereby reducing inference latency and energy consumption without sacrificing detection accuracy.

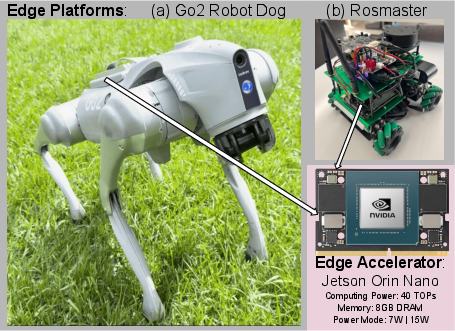

Figure 1: Edge platforms with onboard Jetson Orin Nano accelerators: (a) the Unitree Go2 robot dog and (b) the Yahboom Rosmaster wheeled robot.

Technical Foundations

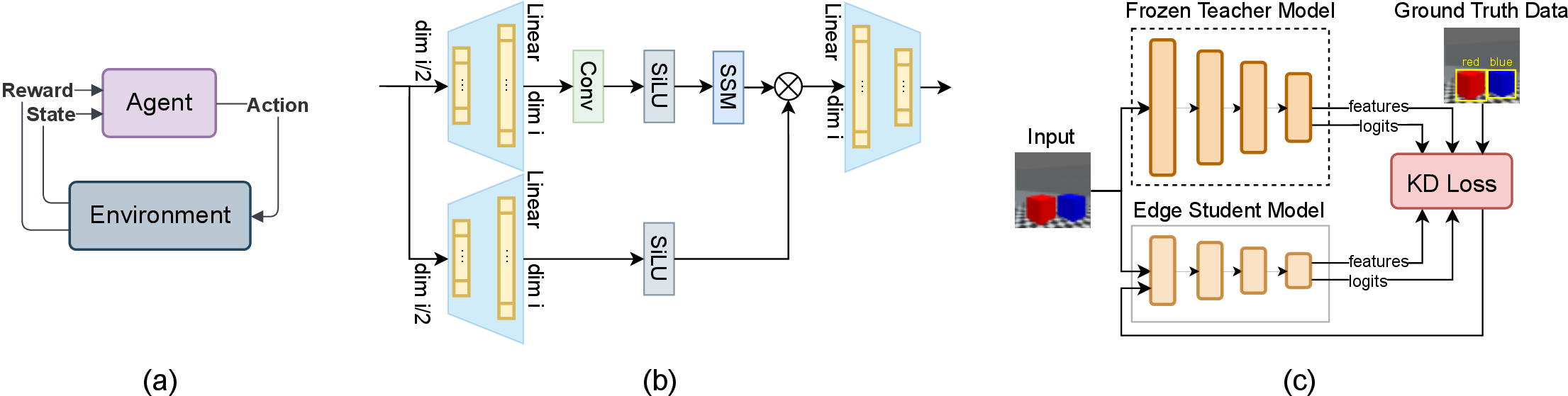

Reinforcement Learning for Navigation

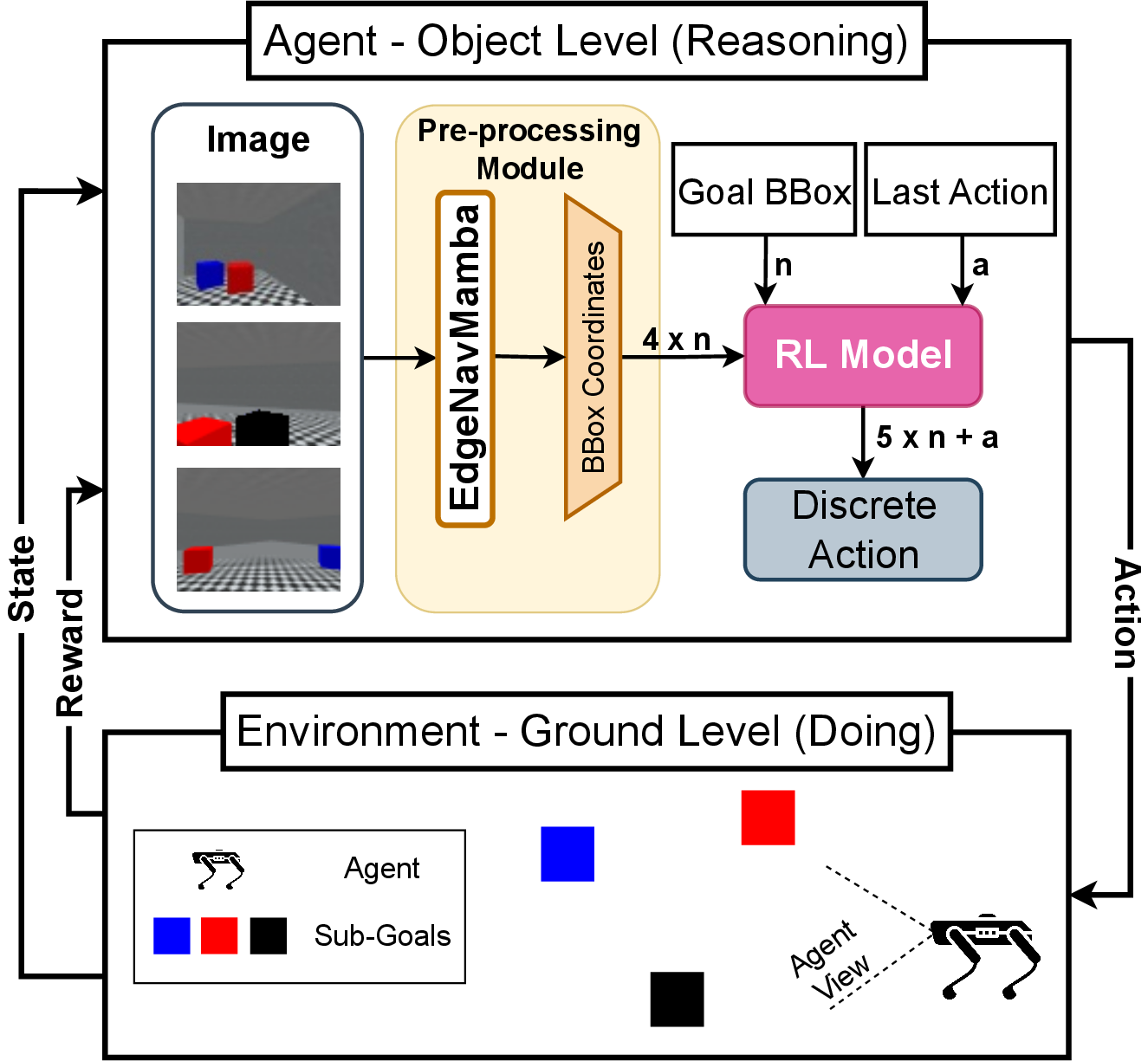

The navigation task is formulated as a Markov Decision Process (MDP), where the agent receives bounding box (BBOX) coordinates from the object detector and uses them as state input for a PPO-based RL policy. The action space is discrete, and the reward function combines sparse signals (goal reached, collision) with dense shaping (distance reduction, exploration incentives).
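The state and reward design can be sketched in Python. This sketch assumes the detector returns at most one box per class, that the simulator supplies the distance to the goal, and that exploration is rewarded when the agent enters a previously unvisited grid cell. The function names and every reward magnitude are hypothetical placeholders, not the values in the paper's Table 1.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

NUM_CLASSES = 3  # three object classes, as in the datasets described here
IMG_SIZE = 224   # detector input resolution (pixels)

BBox = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def encode_state(detections: Dict[int, BBox]) -> List[float]:
    """Flatten per-class boxes into a fixed-length observation vector.

    Each class gets 5 slots: a visibility flag followed by (x1, y1, x2, y2)
    normalized to [0, 1]. Undetected classes are encoded as zeros.
    """
    state: List[float] = []
    for cls in range(NUM_CLASSES):
        box = detections.get(cls)
        if box is None:
            state.extend([0.0] * 5)
        else:
            state.append(1.0)
            state.extend(coord / IMG_SIZE for coord in box)
    return state


@dataclass(frozen=True)
class RewardWeights:
    """Hypothetical reward magnitudes (placeholders, not the paper's values)."""
    goal: float = 10.0       # sparse: goal reached
    collision: float = -5.0  # sparse: collision
    progress: float = 1.0    # dense: per unit of distance reduction
    explore: float = 0.01    # dense: bonus for entering an unvisited cell


def shaped_reward(prev_dist: float, dist: float, reached_goal: bool,
                  collided: bool, new_cell: bool,
                  w: Optional[RewardWeights] = None) -> float:
    """Sum of sparse terminal signals and dense shaping terms for one step."""
    if w is None:
        w = RewardWeights()
    r = 0.0
    if reached_goal:
        r += w.goal
    if collided:
        r += w.collision
    r += w.progress * (prev_dist - dist)  # positive when the agent moves closer
    if new_cell:
        r += w.explore
    return r
```

In this layout, a PPO policy with a discrete action space would receive a 15-entry observation (3 classes × 5 slots). The fixed slot per class keeps the input size constant even when some objects are not detected.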

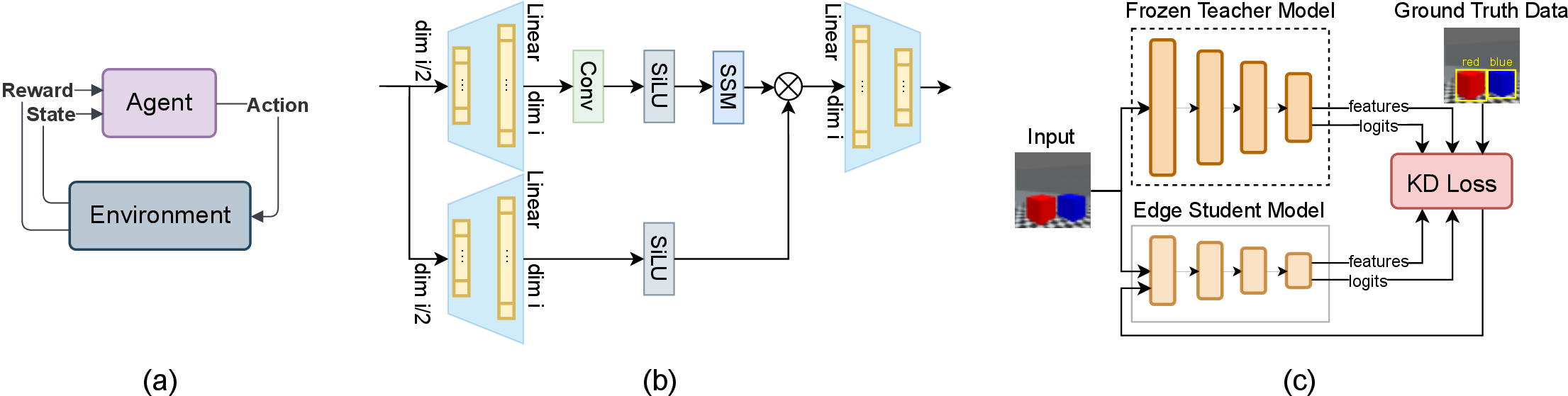

Figure 2: (a) RL agent-environment interaction; (b) Mamba architecture for feature extraction; (c) Knowledge distillation process from teacher to student.

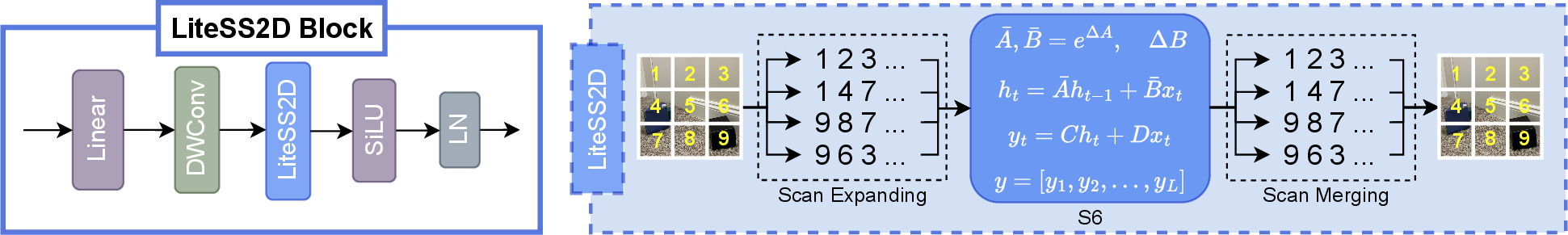

Mamba-Based Object Detection

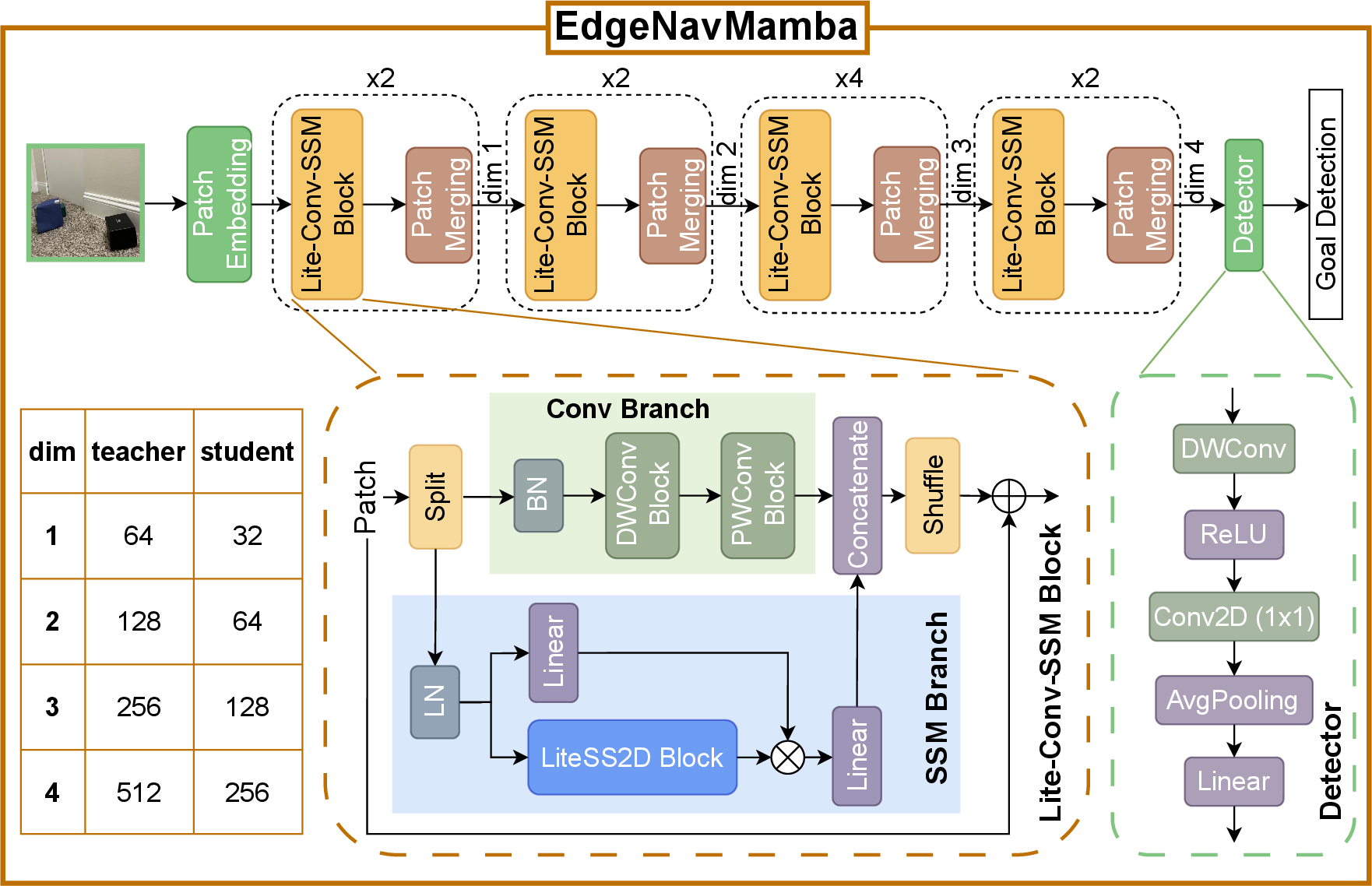

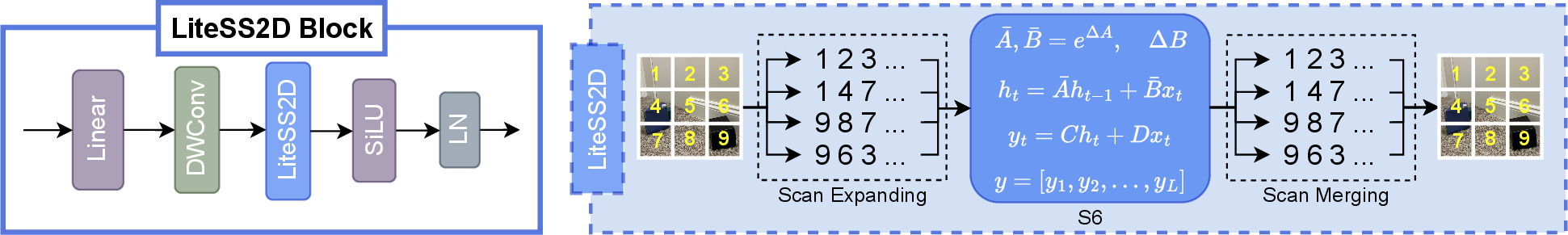

The object detector uses a hybrid architecture. Convolutional layers extract local features, and Mamba-style selective state space models (SSMs) model global context efficiently. The LiteSS2D block, adapted from VMamba's SS2D block, shares weights across scan directions to reduce computation and memory footprint.
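The weight-sharing idea can be illustrated with a minimal NumPy sketch, which is not the paper's LiteSS2D. A simplified Mamba-style selective scan runs over four traversals of a 2D feature map: row-major, column-major, and their reverses, as in VMamba's cross-scan. All four traversals reuse one parameter set. Several simplifications are assumptions: there is no convolution branch, gating, or input/output projection; the results are merged by summation; the parameter names and initialization are hypothetical; and whether LiteSS2D uses exactly these four directions is not stated here.

```python
import numpy as np


def softplus(x: np.ndarray) -> np.ndarray:
    """Numerically stable log(1 + exp(x))."""
    return np.logaddexp(0.0, x)


def init_params(d_model: int, d_state: int, seed: int = 0) -> dict:
    """One parameter set, shared by every scan direction (hypothetical init)."""
    rng = np.random.default_rng(seed)
    return {
        # Negative A keeps exp(delta * A) in (0, 1), so the recurrence decays.
        "A": -np.exp(0.5 * rng.standard_normal((d_model, d_state))),
        "w_delta": 0.1 * rng.standard_normal(d_model),
        "b_delta": np.zeros(d_model),
        "W_B": 0.1 * rng.standard_normal((d_model, d_state)),
        "W_C": 0.1 * rng.standard_normal((d_model, d_state)),
    }


def selective_scan(x: np.ndarray, p: dict) -> np.ndarray:
    """Mamba-style selective scan over a sequence x of shape (L, D)."""
    x = np.asarray(x, dtype=float)
    seq_len, d_model = x.shape
    h = np.zeros((d_model, p["A"].shape[1]))  # hidden state (D, N)
    y = np.empty_like(x)
    for t in range(seq_len):
        xt = x[t]
        # Step size, B and C all depend on the current input ("selective").
        delta = softplus(xt * p["w_delta"] + p["b_delta"])  # (D,)
        b_t = xt @ p["W_B"]                                  # (N,)
        c_t = xt @ p["W_C"]                                  # (N,)
        # Discretized update: h = exp(delta * A) * h + (delta * B) * x
        h = np.exp(delta[:, None] * p["A"]) * h + delta[:, None] * b_t[None, :] * xt[:, None]
        y[t] = h @ c_t
    return y


def lite_ss2d(feat: np.ndarray, p: dict) -> np.ndarray:
    """Scan an (H, W, D) map along four directions with ONE shared parameter set."""
    feat = np.asarray(feat, dtype=float)
    H, W, D = feat.shape
    rows = feat.reshape(H * W, D)                     # row-major traversal
    cols = feat.transpose(1, 0, 2).reshape(H * W, D)  # column-major traversal
    out = selective_scan(rows, p).reshape(H, W, D)
    out += selective_scan(rows[::-1], p)[::-1].reshape(H, W, D)
    out += selective_scan(cols, p).reshape(W, H, D).transpose(1, 0, 2)
    out += selective_scan(cols[::-1], p)[::-1].reshape(W, H, D).transpose(1, 0, 2)
    return out
```

Because all four directions share one parameter set, transposing the input only transposes the output, and the block stores a single set of scan weights instead of four. With per-direction weights, as in VMamba's original SS2D, that symmetry would generally not hold.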

Figure 3: Proposed EdgeNavMamba architecture with convolution and SSM branches for feature extraction. The same architecture is used for the teacher and student models, instantiated with different dimensions.

Figure 4: Detailed EdgeNavMamba architecture, highlighting the integration of LiteSS2D in the SSM branch.

Figure 5: LiteSS2D block architecture, demonstrating efficient directional scanning and weight sharing for memory and compute reduction.

Knowledge Distillation

KD transfers knowledge from a larger, high-performing teacher model to a compact student model. The distillation loss combines the YOLO detection loss, a temperature-scaled KL divergence on logits, and a feature-matching loss between intermediate representations. Hyperparameters λ_kd and λ_feat weight the distillation and feature-matching terms, respectively.
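The combined objective can be sketched as L = L_YOLO + λ_kd · T² · KL(p_teacher ‖ p_student) + λ_feat · L_feat, where each p is a softmax over logits divided by the temperature T. The NumPy sketch below rests on several assumptions. It uses the common T² scaling of the KL term and an MSE feature-matching term. It also uses a linear projection `proj` that maps the student's narrower features to the teacher's width, which is needed because the two models have different dimensions. Logits are treated as per-prediction class scores of shape (num_predictions, num_classes). The YOLO loss is passed in as a precomputed number, and the λ and T defaults are illustrative, not the paper's settings.

```python
import numpy as np


def log_softmax(z: np.ndarray) -> np.ndarray:
    """Row-wise log-softmax, shifted by the max for numerical stability."""
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def kd_kl(student_logits, teacher_logits, T: float = 4.0) -> float:
    """T^2-scaled KL(teacher || student) on temperature-softened class scores."""
    log_ps = log_softmax(np.asarray(student_logits, dtype=float) / T)
    log_pt = log_softmax(np.asarray(teacher_logits, dtype=float) / T)
    kl = np.sum(np.exp(log_pt) * (log_pt - log_ps), axis=-1).mean()
    return float(T ** 2 * kl)


def feature_loss(student_feat, teacher_feat, proj) -> float:
    """MSE between projected student features and teacher features."""
    return float(np.mean((np.asarray(student_feat) @ proj - teacher_feat) ** 2))


def distillation_loss(yolo_loss, s_logits, t_logits, s_feat, t_feat, proj,
                      lambda_kd=1.0, lambda_feat=1.0, T=4.0) -> float:
    """Total loss = YOLO loss + lambda_kd * KD term + lambda_feat * feature term."""
    return (yolo_loss
            + lambda_kd * kd_kl(s_logits, t_logits, T)
            + lambda_feat * feature_loss(s_feat, t_feat, proj))
```

During training, only the student (and the projection) would receive gradients; the teacher stays frozen, matching the training setup described below.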

Implementation Details

Training and Deployment

- Datasets: Real-world data and simulated data (MiniWorld, IsaacLab) covering three object classes, split 90/10 into training and validation sets.

- Model Training: Teacher trained and frozen; student distilled with depths [2,2,4,2] and channels (32,64,128,256). Adam optimizer, learning rate 10⁻⁴, batch size 32, input size 224×224.

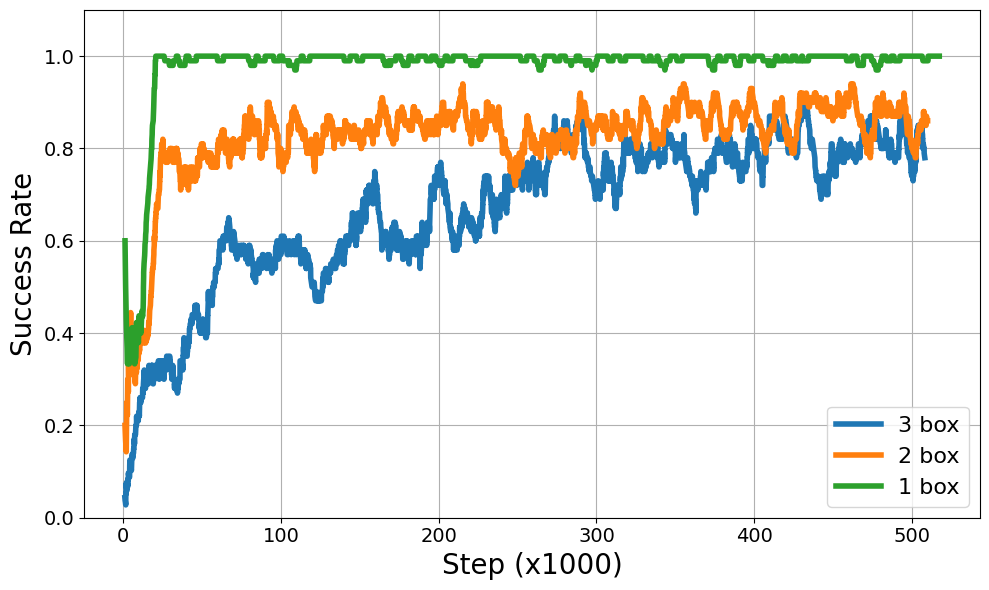

- RL Policy: PPO algorithm, learning rate 3×10⁻⁴, batch size 128, 500k timesteps, reward shaping as per Table 1.

- Deployment: Models exported to ONNX and deployed on Jetson Orin Nano and Raspberry Pi 5, integrated with Unitree Go2 and Yahboom Rosmaster robots.
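The training settings listed above can be collected into a small configuration sketch. The class and field names are hypothetical, and the numeric values come from the list. The exception is `stem_stride`, which assumes a VMamba-style stride-4 stem followed by 2× downsampling between stages; the source does not state the downsampling schedule. The helper `split_90_10` shows one reproducible way to realize the 90/10 train/validation split.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class StudentDetectorConfig:
    """Student detector settings; values from the list above, names hypothetical."""
    depths: Tuple[int, ...] = (2, 2, 4, 2)
    channels: Tuple[int, ...] = (32, 64, 128, 256)
    input_size: int = 224
    optimizer: str = "adam"
    lr: float = 1e-4
    batch_size: int = 32
    # ASSUMPTION: stride-4 stem + 2x downsampling per stage (not stated in the source).
    stem_stride: int = 4

    def stage_shapes(self) -> List[Tuple[int, int, int]]:
        """(H, W, C) of each stage's output under the downsampling assumption."""
        side = self.input_size // self.stem_stride
        shapes = []
        for i, c in enumerate(self.channels):
            if i > 0:
                side //= 2
            shapes.append((side, side, c))
        return shapes


@dataclass(frozen=True)
class PPOConfig:
    """RL policy settings from the list above (field names hypothetical)."""
    lr: float = 3e-4
    batch_size: int = 128
    total_timesteps: int = 500_000


def split_90_10(n_samples: int, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Shuffle sample indices reproducibly and split them 90/10 (train/val)."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    cut = (9 * n_samples) // 10
    return idx[:cut], idx[cut:]
```

Under the stated downsampling assumption, a 224×224 input yields stage outputs of 56×56, 28×28, 14×14 and 7×7 with 32, 64, 128 and 256 channels.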

Figure 6: Experimental setup for energy-efficient multi-goal navigation, including simulation environments, edge robotic platforms, and power measurement infrastructure.

Experimental Results

Model Efficiency and Accuracy

EdgeNavMamba-ST (student) achieves a 31% reduction in parameters and a 67% reduction in model size compared to the baseline, with no loss in mAP (0.93, matching the teacher's 0.93). Its computational cost drops to 0.15 GFLOPs, supporting real-time inference on edge hardware.
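The introduction's goal of fitting the model within cache memory comes down to simple arithmetic: raw weight storage equals parameter count times bytes per parameter. The sketch below uses hypothetical parameter counts and a hypothetical 3 MiB cache budget for illustration. These are not figures from the paper, and the estimate ignores activations, file-format overhead and runtime buffers.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def model_bytes(num_params: int, dtype: str = "fp32") -> int:
    """Raw weight storage only (no activations, headers or runtime buffers)."""
    return num_params * BYTES_PER_PARAM[dtype]


def pct_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return 100.0 * (before - after) / before


def fits_in_cache(num_params: int, cache_bytes: int, dtype: str = "fp32") -> bool:
    """True if the raw weights fit within the given cache budget."""
    return model_bytes(num_params, dtype) <= cache_bytes


if __name__ == "__main__":
    teacher, student = 1_000_000, 690_000  # hypothetical counts, 31% apart
    cache = 3 * 1024 * 1024                # hypothetical 3 MiB budget
    print(pct_reduction(teacher, student))  # 31.0
    print(fits_in_cache(student, cache))    # True
    print(fits_in_cache(teacher, cache))    # False
```

At a fixed precision, weight storage scales linearly with parameter count, so this estimate predicts equal percentage reductions in parameters and size. If both reported figures refer to the same reference model, the 67% size reduction therefore cannot come from the parameter reduction alone.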

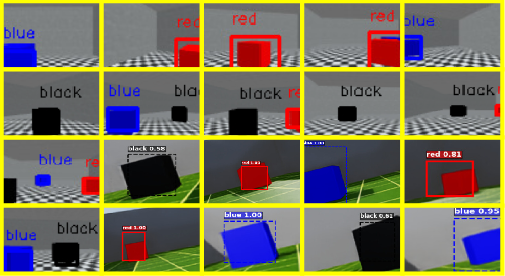

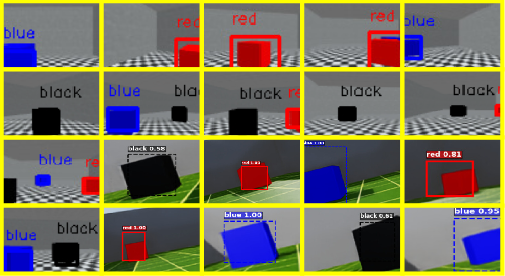

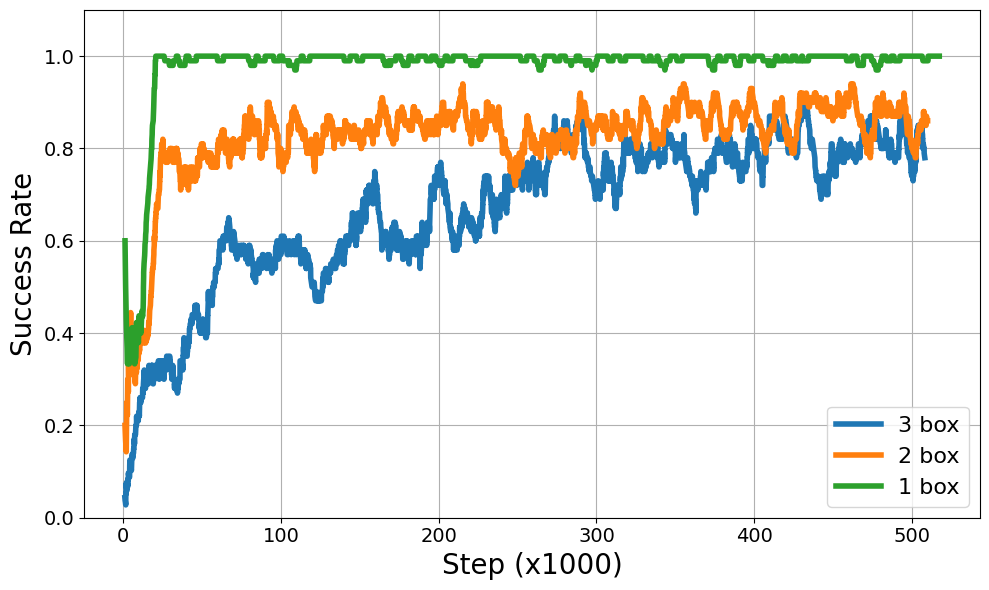

In MiniWorld, the RL agent achieves 100% success in single-goal scenarios, 94% in two-goal, and 90% in three-goal environments, demonstrating robust navigation even with distractors.

Figure 7: MiniWorld and IsaacLab environments with agent detections using EdgeNavMamba-ST.

Figure 8: Success rate of navigation toward defined goals during training across varying environment complexities.

Energy Profiling

On Jetson Orin Nano, EdgeNavMamba-ST reduces energy per inference by 63% relative to the teacher and achieves higher throughput. On Raspberry Pi 5, the energy reduction reaches 73% with negligible power overhead, confirming the efficacy of KD and architectural optimization for edge deployment.
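Energy per inference is the energy consumed over a measurement window divided by the number of inferences completed in that window. The percentages above compare this quantity between the two models. Below is a minimal sketch, assuming power is sampled at known timestamps (for example, from an external power meter or an onboard sensor) and integrated with the trapezoidal rule. The optional idle-power subtraction is an assumption, since the source does not say whether baseline power is removed. The function names are hypothetical.

```python
from typing import Sequence


def window_energy_j(times_s: Sequence[float], power_w: Sequence[float],
                    idle_w: float = 0.0) -> float:
    """Trapezoidal integral of (power - idle) over time, in joules."""
    if len(times_s) != len(power_w) or len(times_s) < 2:
        raise ValueError("need at least two aligned (time, power) samples")
    energy = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        avg_w = 0.5 * (power_w[i] + power_w[i - 1]) - idle_w
        energy += avg_w * dt
    return energy


def energy_per_inference_j(times_s: Sequence[float], power_w: Sequence[float],
                           num_inferences: int, idle_w: float = 0.0) -> float:
    """Window energy divided by the number of inferences run in the window."""
    return window_energy_j(times_s, power_w, idle_w) / num_inferences


def throughput_ips(num_inferences: int, times_s: Sequence[float]) -> float:
    """Inferences per second over the measurement window."""
    return num_inferences / (times_s[-1] - times_s[0])


def reduction_pct(baseline: float, optimized: float) -> float:
    """Percentage drop relative to the baseline (e.g. teacher vs. student)."""
    return 100.0 * (baseline - optimized) / baseline
```

Under this definition, the 63% figure on Jetson Orin Nano means the student uses about 0.37× the teacher's energy per inference.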

Figure 9: Energy and performance comparison of EdgeNavMamba-TR (teacher) and EdgeNavMamba-ST (student) on Jetson Orin Nano and Raspberry Pi 5.

Implications and Future Directions

EdgeNavMamba demonstrates that Mamba-based architectures, combined with knowledge distillation and hardware-aware design, enable efficient deployment of deep learning models for autonomous navigation on edge devices. The results (fewer parameters, lower energy per inference, and unchanged detection accuracy) underscore the viability of SSMs as alternatives to attention mechanisms in resource-constrained settings. The framework's modularity allows adaptation to other edge tasks, such as real-time medical imaging or security surveillance.

Future work may explore:

- Further quantization and pruning for sub-cache deployment.

- Extension to multi-modal sensor fusion (e.g., LiDAR, IMU).

- Real-world deployment in dynamic, unstructured environments.

- Integration with federated learning for distributed edge intelligence.

Conclusion

EdgeNavMamba provides a comprehensive solution for energy-efficient, real-time autonomous navigation on edge devices by combining Mamba-based object detection with reinforcement learning. Together, architectural optimization and knowledge distillation yield substantial reductions in model size and energy consumption with no loss in detection accuracy, while the RL agent maintains high navigation success rates in simulation. The results validate the approach for practical edge AI applications and suggest promising directions for future research in efficient deep learning deployment.