- The paper introduces a system that integrates 3D Gaussian Splatting and robotic leaf manipulation to generate detailed digital twins for advanced plant phenotyping.

- It reports 90.8% leaf segmentation accuracy and 86.2% leaf detection accuracy using a modular, cost-effective setup with stereo cameras, a digital turntable, and a 7-DOF robot arm.

- The system enables precise underleaf imaging and quantitative analysis, facilitating applications in disease detection, growth monitoring, and precision agriculture.

Botany-Bot: Digital Twin Monitoring of Occluded and Underleaf Plant Structures with Gaussian Splats

Introduction and Motivation

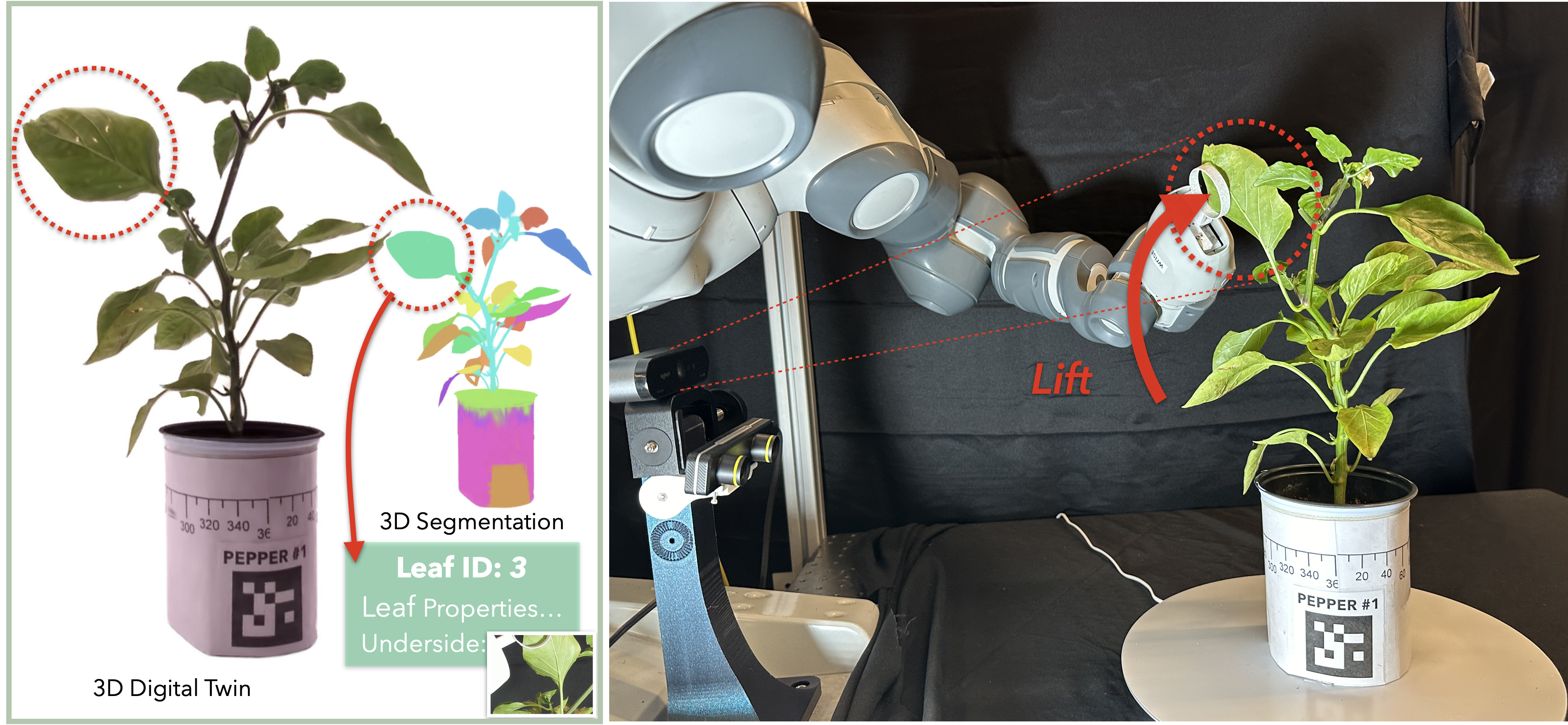

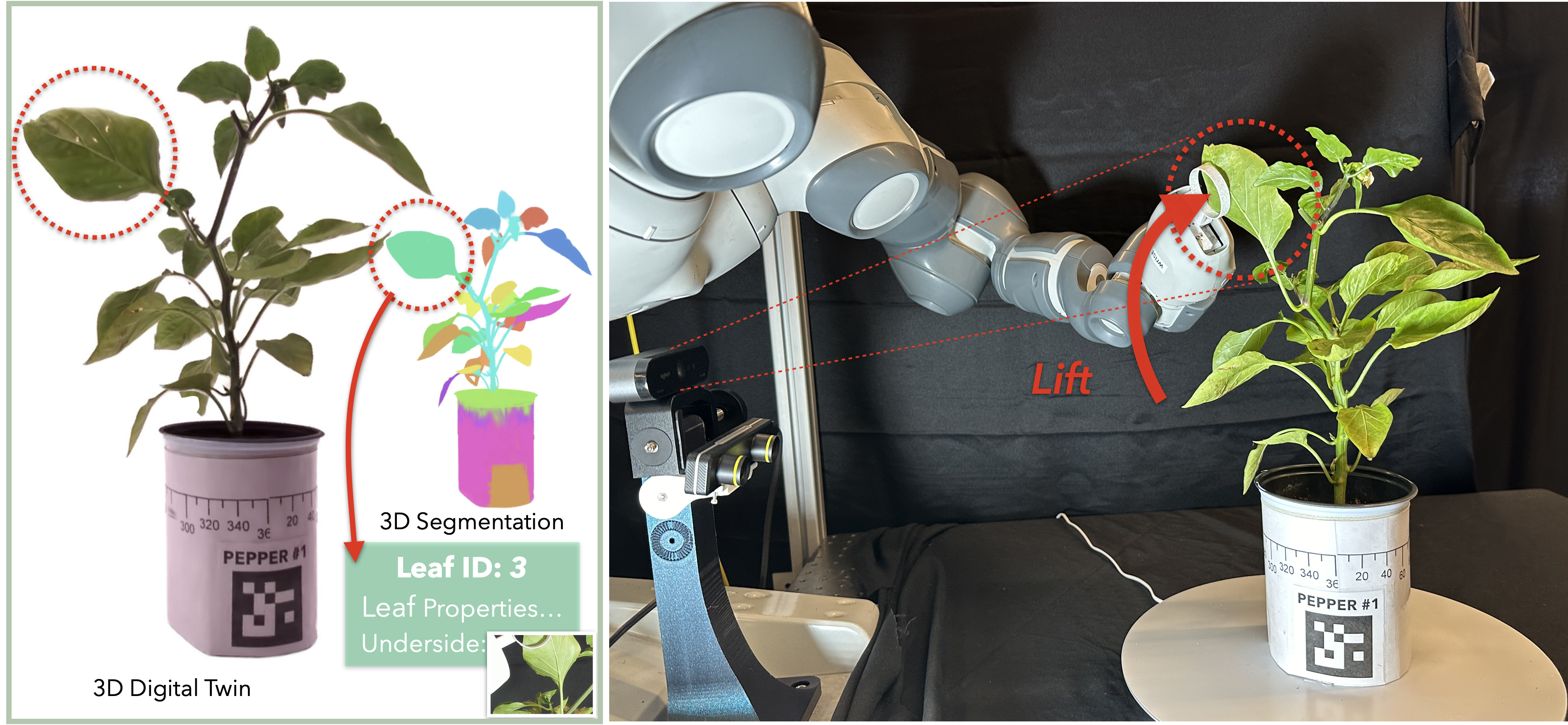

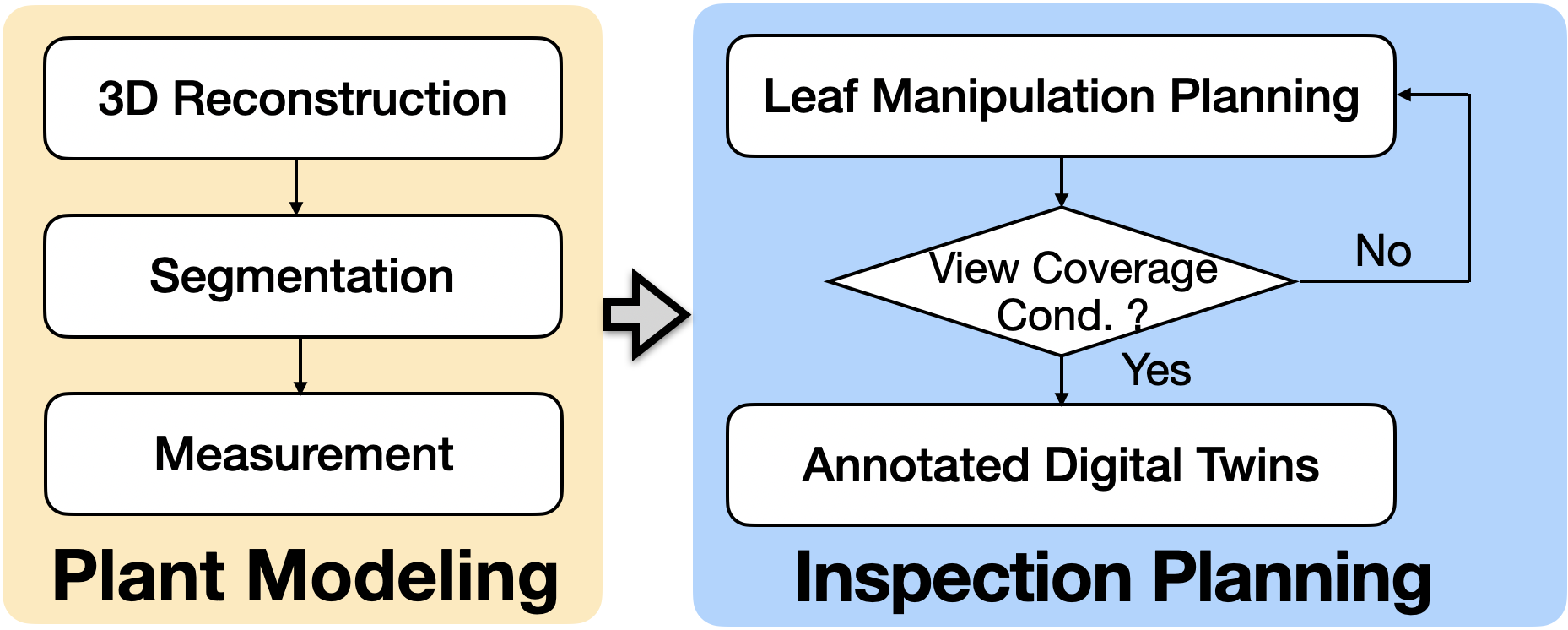

The paper presents Botany-Bot, an integrated hardware-software system for high-fidelity 3D digital twin generation and inspection of living plants, specifically targeting the challenge of occluded and underleaf structures. Traditional plant phenotyping systems relying on fixed cameras are fundamentally limited by occlusion, particularly for leaf undersides and stem buds, which are critical for disease detection, pest monitoring, and growth analysis. Botany-Bot leverages recent advances in 3D Gaussian Splatting (3DGS) for rapid, high-quality reconstruction, and introduces autonomous robotic manipulation to physically interact with plant leaves, enabling acquisition of high-resolution images of previously inaccessible surfaces.

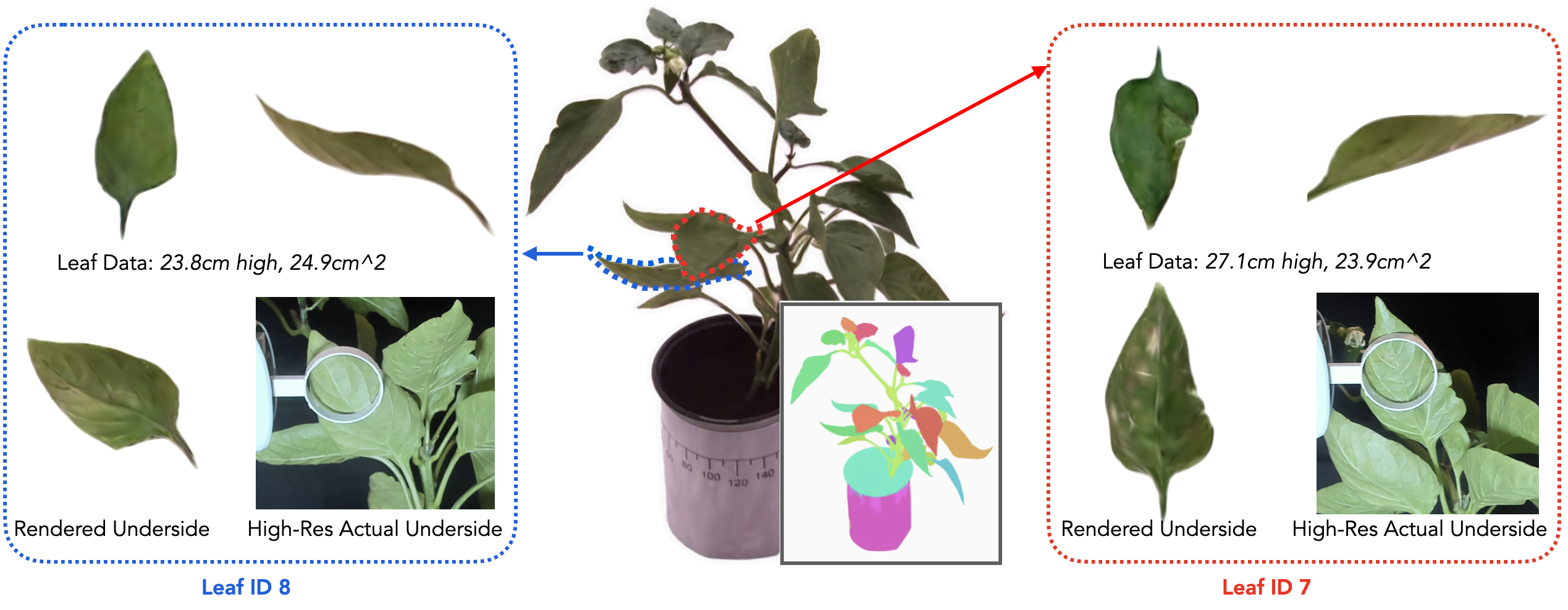

Figure 1: Botany-Bot creates detailed 3D digital twins of plants with a turntable and fixed cameras, segmenting them into individual components and augmenting with robotically acquired underleaf images.

System Architecture

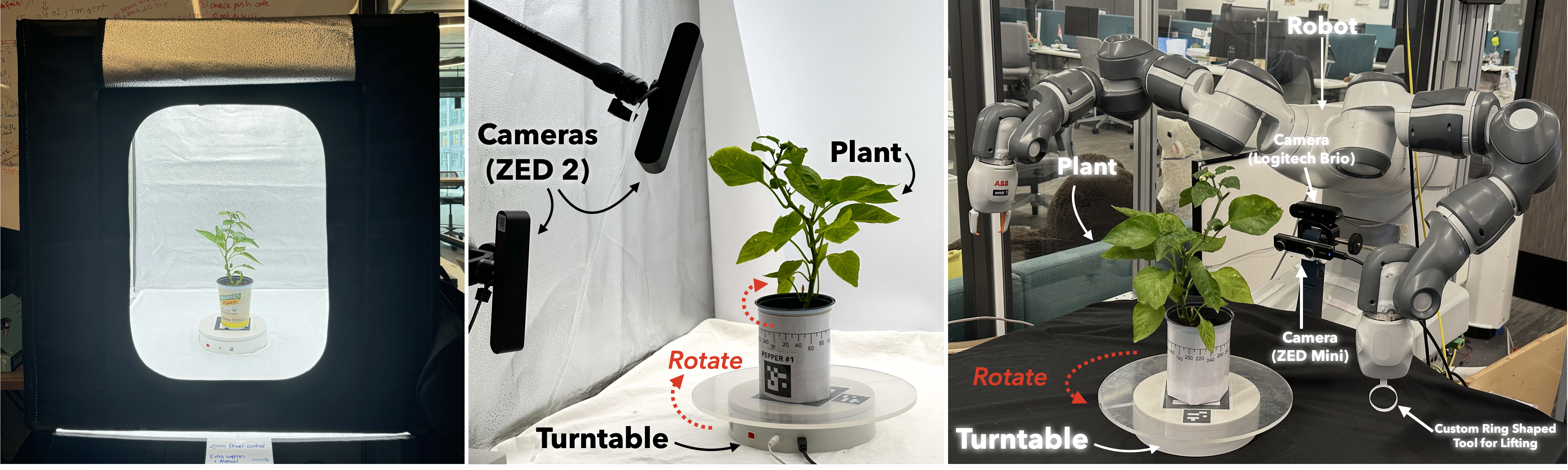

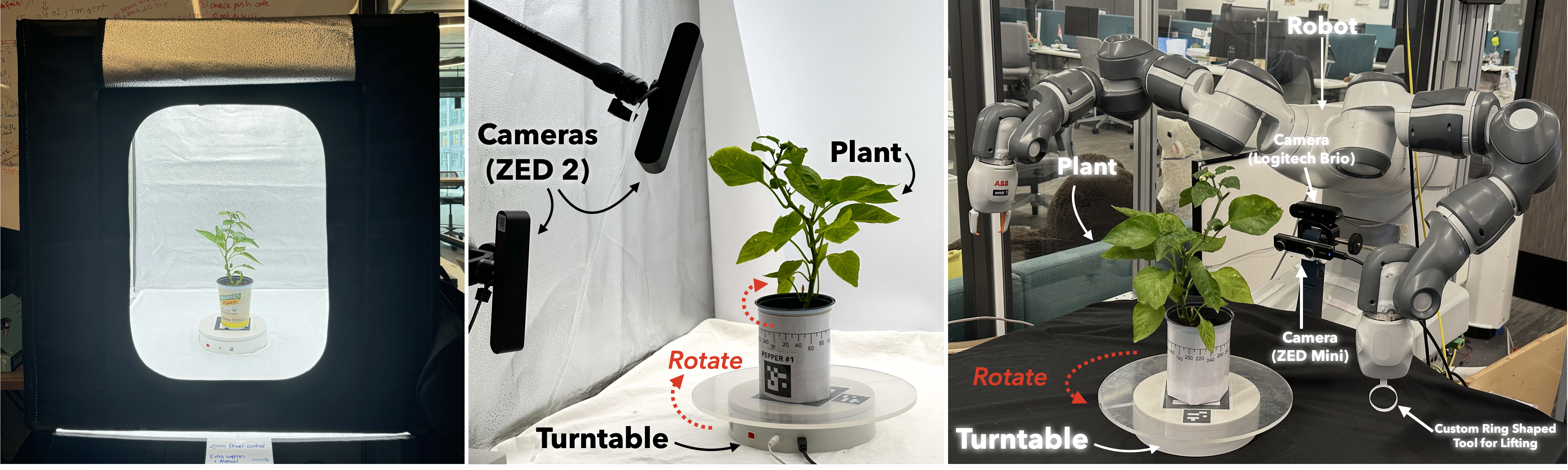

Hardware Design

Botany-Bot utilizes a commercially available lightbox for uniform illumination, two stereo cameras (yielding four viewpoints), a digital turntable for multi-view capture, and a 7-DOF industrial robot arm equipped with a custom ring-shaped end effector for non-destructive leaf manipulation. The system is designed for modularity and cost-effectiveness, with the core imaging and turntable setup costing under \$2,000, excluding the robot.

Figure 2: Multi-view data collection system with lightbox, turntable, stereo cameras, and robot arm for plant manipulation and imaging.

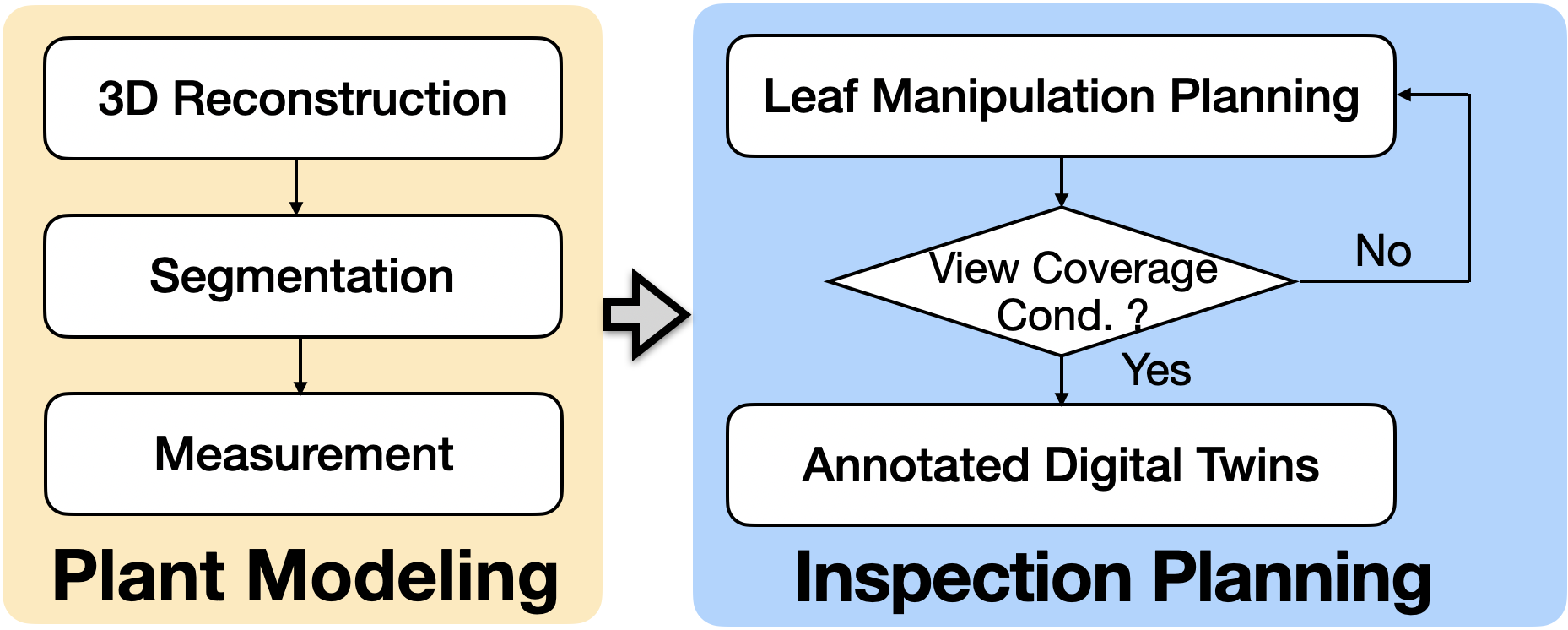

Software Pipeline

The software stack orchestrates multi-view image acquisition, camera pose calibration via ArUco markers, 3D reconstruction using Gaussian Splatting, semantic segmentation of plant components, and planning of robotic inspection trajectories. Segment Anything Model v2 (SAM2) is used for initial plant-background masking, and GARField is employed for multi-level 3D segmentation, enabling leaf-level identification and geometric analysis.
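A turntable with fixed cameras yields multi-view data because rotating the plant by an angle in front of a fixed camera is geometrically equivalent to orbiting the camera by the opposite angle around a static plant. The sketch below illustrates that conversion. It assumes the turntable axis coincides with the world z-axis through the origin and that a camera-to-world pose is already known from calibration (for example, from ArUco markers). The function names and the example pose are illustrative and are not taken from the paper.

```python
import numpy as np

def rotation_z(theta_rad):
    """4x4 homogeneous rotation about the z-axis (assumed turntable axis)."""
    c, s = np.cos(theta_rad), np.sin(theta_rad)
    T = np.eye(4)
    T[:2, :2] = [[c, -s], [s, c]]
    return T

def camera_pose_in_plant_frame(cam_to_world, turntable_angle_rad):
    """Express a fixed camera's pose in the frame that rotates with the plant.

    If the plant frame is rotated by +theta relative to the world, a world
    pose maps into the plant frame by applying the inverse rotation, R_z(-theta).
    """
    return rotation_z(-turntable_angle_rad) @ cam_to_world

# Hypothetical calibrated pose: camera 1 m from the axis, 0.5 m above the table.
cam_to_world = np.eye(4)
cam_to_world[:3, 3] = [1.0, 0.0, 0.5]

# One virtual camera pose per turntable stop (every 90 degrees here).
poses = [camera_pose_in_plant_frame(cam_to_world, np.deg2rad(a))
         for a in range(0, 360, 90)]
```

Each entry of `poses` can then be treated as a distinct viewpoint of a static plant, which is the form most reconstruction pipelines expect.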

Figure 3: Software pipeline for Botany-Bot, integrating multi-view modeling, segmentation, and inspection planning for annotated digital twins.

3D Reconstruction and Segmentation

Gaussian Splatting and Alpha Loss

Botany-Bot adopts 3DGS for rapid, high-fidelity scene reconstruction, achieving real-time rendering at 30 FPS. Turntable capture with fixed cameras is only quasi-multiview: the plant rotates while the background and lighting stay fixed, so in the plant's frame they are inconsistent across views. To handle this, the system masks each image with SAM2 and introduces an L1 alpha loss that penalizes opacity rendered at out-of-mask pixels, which is critical for suppressing spurious geometry ("floaters") and improving reconstruction quality.
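As a rough illustration of an L1 penalty on out-of-mask alpha, the sketch below computes the mean absolute rendered opacity over background pixels. It assumes `alpha` is the rendered per-pixel accumulated opacity in [0, 1] and `mask` is a binary plant mask (1 for plant, 0 for background). The normalization by total pixel count and the function name are assumptions; the paper's exact formulation and weighting may differ.

```python
import numpy as np

def out_of_mask_alpha_loss(alpha, mask):
    """L1 penalty on rendered opacity that falls outside the plant mask.

    alpha: HxW array of rendered opacity values.
    mask:  HxW array, 1 where the plant is, 0 elsewhere.
    Normalizing by the total pixel count is an assumption of this sketch.
    """
    alpha = np.asarray(alpha, dtype=np.float64)
    background = 1.0 - np.asarray(mask, dtype=np.float64)
    return float(np.sum(np.abs(alpha) * background) / alpha.size)

# Toy 2x2 render: the right column is background but still has some opacity.
alpha = [[1.0, 0.5],
         [0.0, 0.2]]
mask = [[1, 0],
        [1, 0]]
loss = out_of_mask_alpha_loss(alpha, mask)
```

In a real training loop this term would be computed on differentiable tensors and added to the photometric loss, so that opacity outside the mask is pushed toward zero.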

Figure 4: Alpha loss ablation demonstrates the necessity of penalizing out-of-mask pixels for robust reconstruction in quasi-multiview setups.

Semantic Segmentation

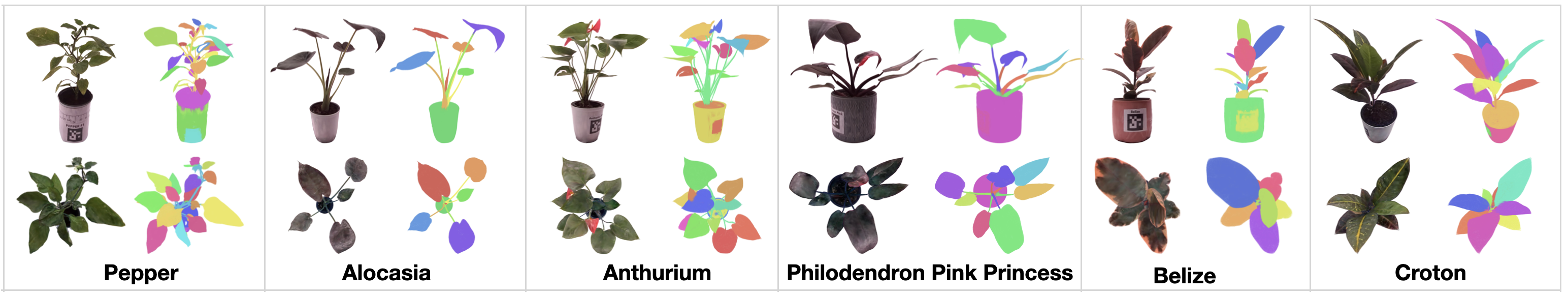

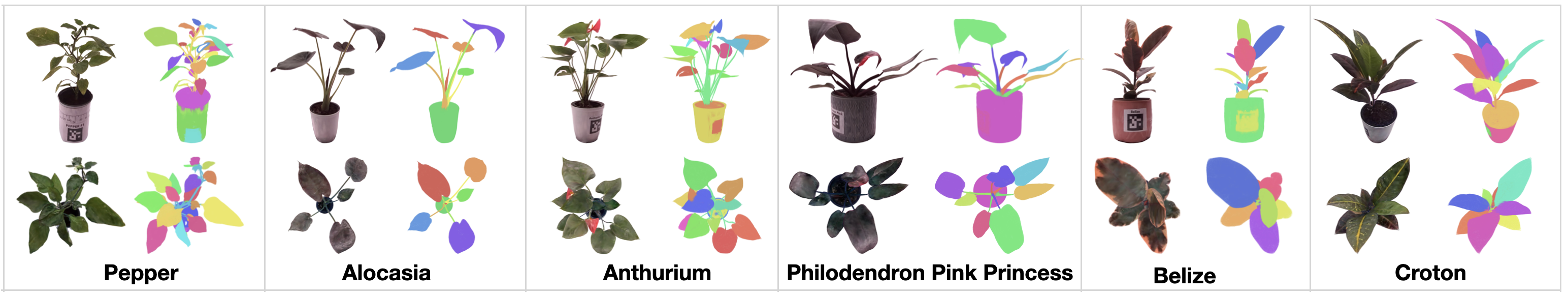

GARField leverages multi-view SAM masks to cluster 3D Gaussians into discrete plant components. Leaf detection is performed by filtering clusters based on spatial heuristics (e.g., excluding pot, stem, and noise clusters), and principal component analysis (PCA) is used to estimate leaf orientation. This approach enables zero-shot leaf segmentation and detection without task-specific models.
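A minimal sketch of PCA-based orientation estimation follows, assuming a leaf cluster is available as an N×3 array of Gaussian centers. Treating the smallest-variance direction as the leaf normal and the largest-variance direction as the long axis is a common convention and an assumption here, not a detail confirmed by the source. The function name and the synthetic leaf are illustrative.

```python
import numpy as np

def leaf_axes(points):
    """Estimate leaf centroid, long axis, normal, and extents via PCA."""
    points = np.asarray(points, dtype=np.float64)
    centroid = points.mean(axis=0)
    centered = points - centroid
    cov = centered.T @ centered / len(points)
    # eigh returns eigenvalues in ascending order for symmetric matrices.
    _, eigvecs = np.linalg.eigh(cov)
    normal, width_axis, long_axis = eigvecs[:, 0], eigvecs[:, 1], eigvecs[:, 2]
    # Extents are the spread of the points projected onto each in-plane axis.
    along = centered @ long_axis
    across = centered @ width_axis
    length = float(along.max() - along.min())
    width = float(across.max() - across.min())
    return centroid, long_axis, normal, length, width

# Synthetic flat "leaf": a 6 x 2 grid of points lying in the z = 0 plane.
xs, ys = np.meshgrid(np.linspace(-3, 3, 30), np.linspace(-1, 1, 10))
flat_leaf = np.column_stack([xs.ravel(), ys.ravel(), np.zeros(xs.size)])
centroid, long_axis, normal, length, width = leaf_axes(flat_leaf)
```

The sign of each eigenvector is arbitrary, so a downstream planner would need an extra rule (for example, pointing the normal away from the stem or toward the camera) to decide which side is the underside.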

Figure 5: Segmented digital twins for six plant species, showing RGB renderings and colorized leaf/stem segmentation from multiple viewpoints.

Robotic Interaction and Inspection

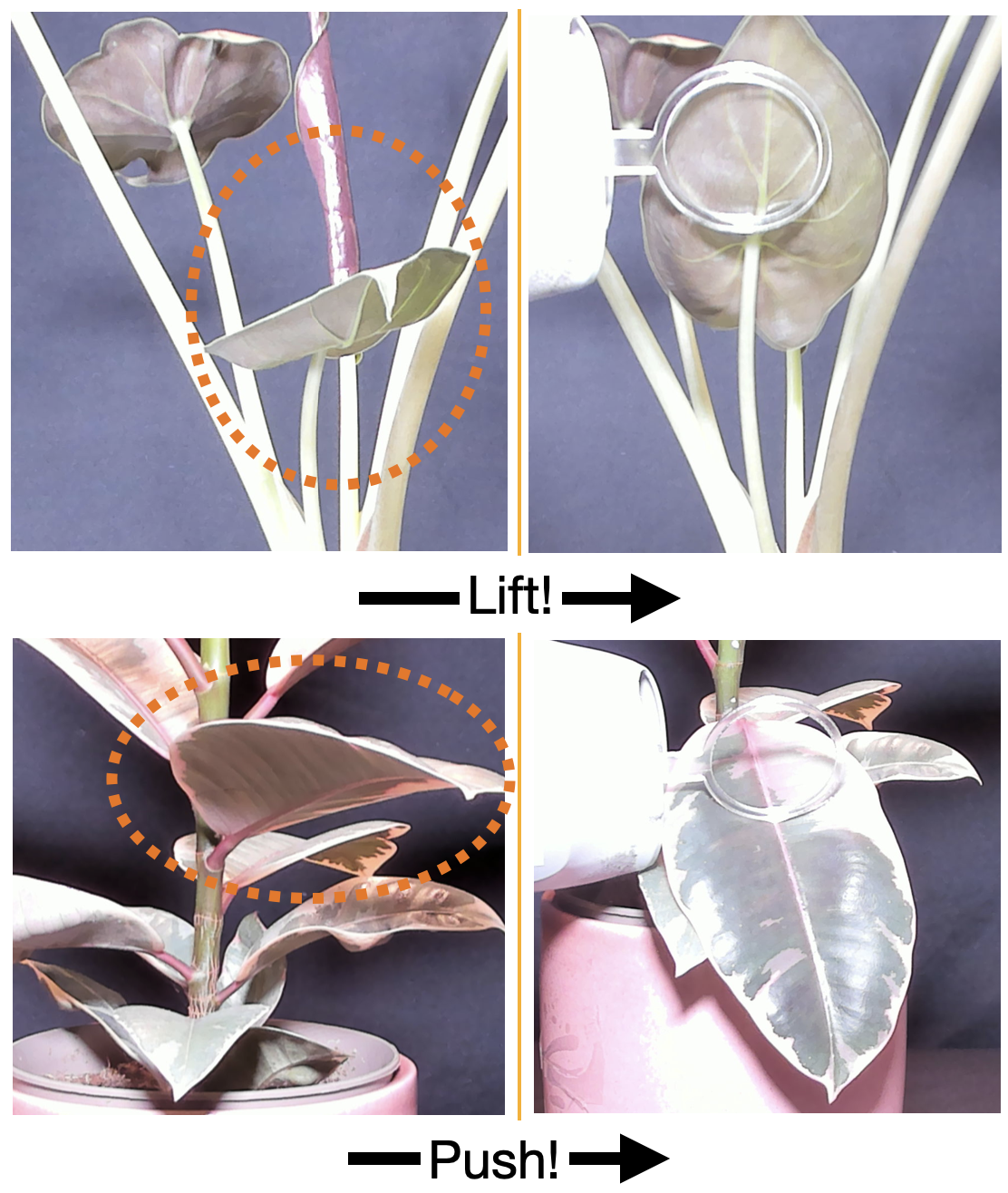

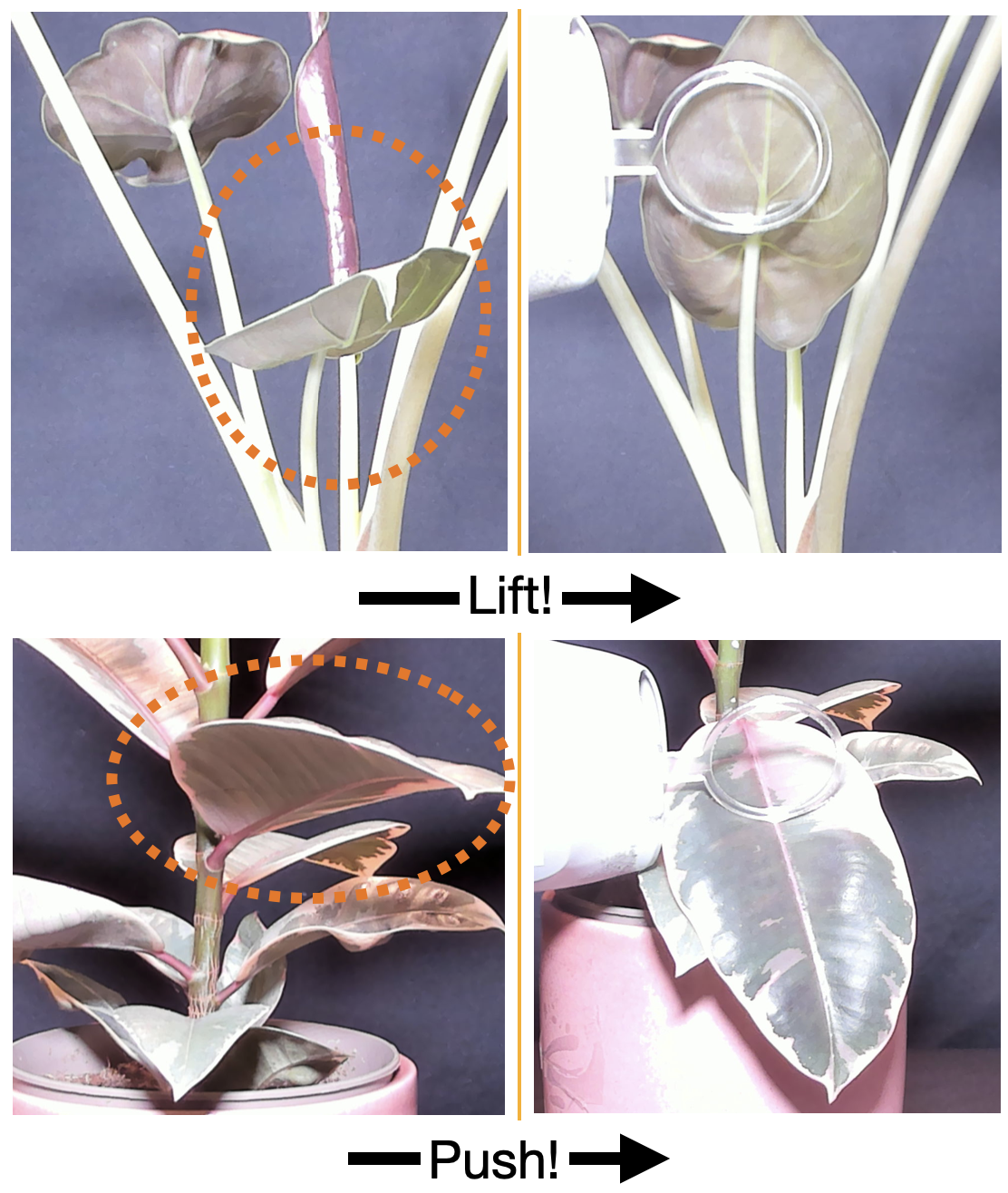

Manipulation Primitives

The robot executes a three-stage primitive: (a) the turntable rotates to align the target leaf with the camera, (b) the end effector is positioned above or below the leaf centroid, and (c) a controlled lifting or pushing motion, with concurrent rotation, exposes the leaf's underside or overside. The inspection trajectory is optimized to maximize view coverage, defined as the ratio of visible to total leaf surface area.
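The view coverage metric can be sketched as an area-weighted visibility ratio. The example below assumes the leaf surface is discretized into patches with known areas and that a visibility flag per patch is available for each candidate trajectory. The patch representation, the candidate names, and the exhaustive selection are illustrative assumptions, not the paper's optimizer.

```python
import numpy as np

def view_coverage(patch_areas, visible):
    """Ratio of visible leaf surface area to total leaf surface area."""
    areas = np.asarray(patch_areas, dtype=np.float64)
    seen = np.asarray(visible, dtype=bool)
    total = areas.sum()
    if total <= 0:
        raise ValueError("leaf must have positive surface area")
    return float(areas[seen].sum() / total)

def best_trajectory(patch_areas, candidates):
    """Pick the candidate whose visibility mask gives the highest coverage."""
    return max(candidates,
               key=lambda name: view_coverage(patch_areas, candidates[name]))

# Hypothetical leaf with three patches and two candidate lifting motions.
areas = [2.0, 1.0, 1.0]
candidates = {
    "lift_low": [True, False, False],   # exposes only the largest patch
    "lift_high": [True, False, True],   # exposes two of three patches
}
best = best_trajectory(areas, candidates)
```

Weighting by area rather than counting patches keeps the metric independent of how finely the leaf surface is discretized.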

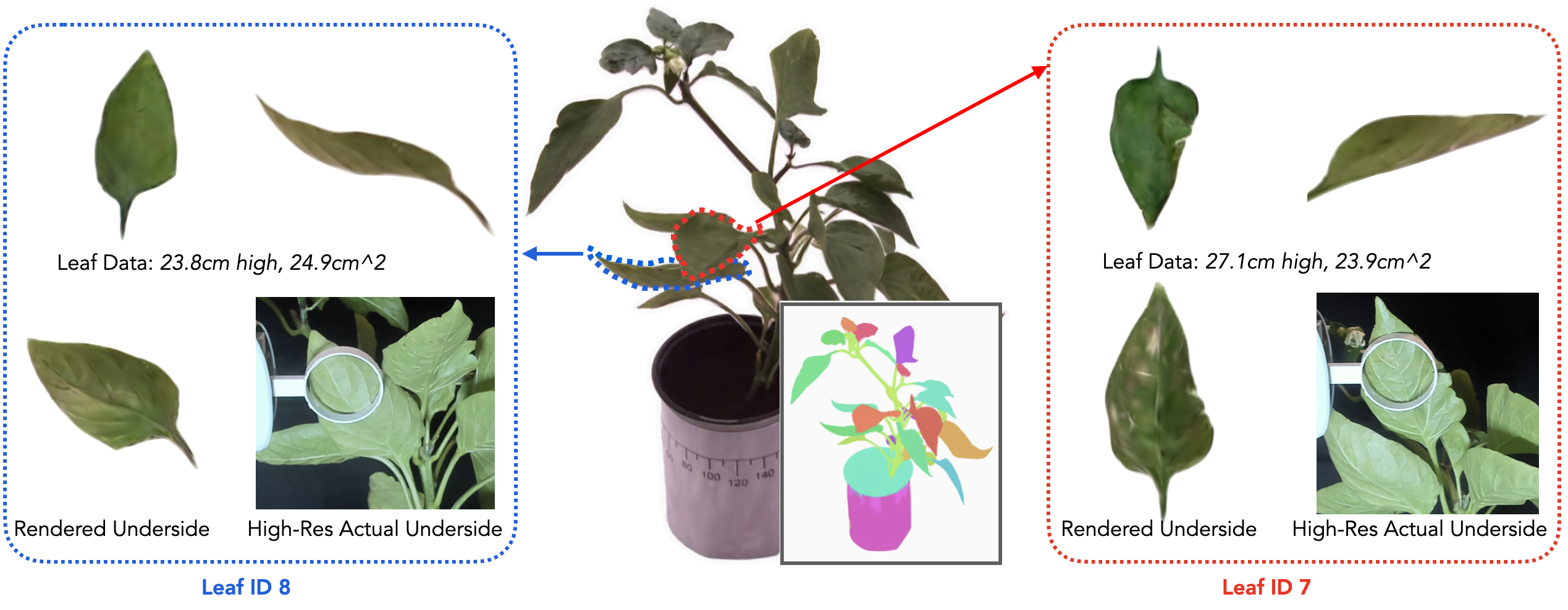

Annotated Digital Twins

The system aggregates geometric statistics, segmentation data, and high-resolution images from robotic inspection into an indexable digital twin data structure, enabling interactive exploration and analysis of individual leaves and their physical properties.
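One way such an indexable structure could be organized is sketched below, with one record per leaf keyed by a leaf ID. All class names, field names, units, and example values are hypothetical; the source does not describe the actual schema.

```python
from dataclasses import dataclass, field

@dataclass
class LeafRecord:
    """Per-leaf entry: geometry from segmentation plus inspection images."""
    leaf_id: int
    length_cm: float
    width_cm: float
    area_cm2: float
    normal: tuple[float, float, float]
    underleaf_images: list[str] = field(default_factory=list)

@dataclass
class DigitalTwin:
    """Plant-level container indexed by leaf ID."""
    plant_name: str
    leaves: dict[int, LeafRecord] = field(default_factory=dict)

    def add_leaf(self, record: LeafRecord) -> None:
        self.leaves[record.leaf_id] = record

    def attach_image(self, leaf_id: int, image_path: str) -> None:
        # Images annotate the leaf; they do not alter the 3D model.
        self.leaves[leaf_id].underleaf_images.append(image_path)

    def leaves_without_images(self) -> list[int]:
        """IDs of leaves that still lack an inspection image."""
        return sorted(i for i, leaf in self.leaves.items()
                      if not leaf.underleaf_images)

# Placeholder values for illustration only.
twin = DigitalTwin(plant_name="example_plant")
twin.add_leaf(LeafRecord(0, 9.5, 4.0, 28.0, (0.0, 0.0, 1.0)))
twin.add_leaf(LeafRecord(1, 7.0, 3.2, 17.5, (0.1, 0.0, 0.99)))
twin.attach_image(0, "leaf_0_underside.png")
```

Keying records by leaf ID makes it straightforward to look up a selected leaf in an interactive viewer and to list leaves that still need inspection.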

Figure 6: Annotated 3D digital twins with segmented leaves and robotically acquired underleaf images for detailed phenotyping.

Figure 7: Leaf surfaces before and after robotic manipulation, revealing previously occluded regions for high-resolution inspection.

Experimental Evaluation

Quantitative Results

Botany-Bot was evaluated on 109 leaves from 8 plant species. Key metrics include:

- Leaf segmentation accuracy: 90.8%

- Leaf detection accuracy: 86.2%

- Leaf manipulation success rate: 77.9%

- Detailed underleaf imaging success rate: 77.3%

- Mean absolute error (MAE) for leaf length/width estimation: 2.0 cm

Physical metrics such as leaf area and height are computed directly from 3D segmentations, with systematic underestimation attributed to bounding box fitting and exclusion of stem connections.
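For reference, mean absolute error and mean signed error can be computed as in the sketch below. The signed error is included because a consistently negative value is what systematic underestimation looks like numerically. The measurements are invented placeholders for illustration and are not data from the paper.

```python
def mean_absolute_error(estimated, measured):
    """Average magnitude of the estimation error."""
    if len(estimated) != len(measured) or not estimated:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(e - m) for e, m in zip(estimated, measured)) / len(estimated)

def mean_signed_error(estimated, measured):
    """Average signed error; negative means the estimates run low."""
    if len(estimated) != len(measured) or not estimated:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(e - m for e, m in zip(estimated, measured)) / len(estimated)

# Placeholder leaf lengths in cm (not from the paper).
estimated_cm = [8.0, 10.0, 6.0]
measured_cm = [9.0, 12.0, 6.0]

mae = mean_absolute_error(estimated_cm, measured_cm)
bias = mean_signed_error(estimated_cm, measured_cm)
```

With these placeholder values the MAE is 1.0 cm and the signed error is -1.0 cm, meaning every nonzero error is an underestimate.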

Robotic Inspection

Out of 68 accessible leaves, 53 were successfully manipulated, and 41 yielded high-quality underside or overside images. Failure modes include leaf obstructions, plant dynamics, and pose registration errors. The system is designed to avoid destructive interactions, although occasional breakage was observed in dense or fragile specimens.
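The leaf counts can be related to the reported percentages with simple arithmetic. The sketch below assumes the manipulation rate is taken over accessible leaves and the imaging rate over successfully manipulated leaves. The source does not state the denominators explicitly, so this reading is an inference. Under it, 41 of 53 is about 77.36%, which agrees with the reported 77.3% only if that figure was truncated rather than rounded.

```python
def rate_percent(successes, attempts):
    """Success rate as a percentage."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    return 100.0 * successes / attempts

# Counts as stated in the text.
accessible, manipulated, imaged = 68, 53, 41

manipulation_rate = rate_percent(manipulated, accessible)  # about 77.94
imaging_rate = rate_percent(imaged, manipulated)           # about 77.36
end_to_end_rate = rate_percent(imaged, accessible)         # about 60.29
```

The end-to-end figure is a derived quantity, not one reported in the source. It shows that under this reading roughly six in ten accessible leaves end up with a usable underside or overside image.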

Limitations and Future Directions

Botany-Bot is currently limited to plants with separable, non-dense leaves. Post-manipulation images are attached to the digital twin as annotations rather than used to update the 3D model. Segmentation accuracy could be improved via SAM2 fine-tuning or hierarchical clustering methods. Future work includes closed-loop visual servoing for manipulation, extension to bimanual robots, integration with low-cost robot platforms, and longitudinal digital twin tracking for growth analysis.

Implications and Prospects

Botany-Bot demonstrates a scalable, modular approach to high-fidelity plant phenotyping, combining rapid 3D reconstruction with autonomous robotic inspection. The system enables detailed analysis of occluded plant structures, facilitating applications in breeding, disease monitoring, and precision agriculture. The integration of annotated digital twins with interactive inspection data sets a precedent for future agricultural robotics and digital twin systems. Further research may focus on dynamic reconstruction, improved segmentation, and broader applicability to complex plant morphologies.

Conclusion

Botany-Bot advances the state of the art in plant phenotyping by integrating 3D Gaussian Splatting with autonomous robotic inspection, reporting 90.8% leaf segmentation accuracy and a 77.9% leaf manipulation success rate for leaf-level analysis. The system's modular design and annotated digital twin framework provide a foundation for future developments in agricultural monitoring, robotic interaction, and digital twin evolution.