- The paper introduces MusicRFM, a framework that uses Recursive Feature Machines to steer music generation by adjusting internal activations with music-theoretic concepts.

- It integrates lightweight RFM probes with MusicGen, applying time-varying injection schedules to enable both single and multi-directional control of musical attributes.

- Experimental results indicate improved accuracy in note and chord generation, though balancing control strengths is essential to prevent distributional drift.

Steering Autoregressive Music Generation with Recursive Feature Machines: A Technical Essay

Introduction

The paper "Steering Autoregressive Music Generation with Recursive Feature Machines" (2510.19127) introduces a novel framework, MusicRFM, for controlling music generation models. The framework uses Recursive Feature Machines (RFMs) to enable fine-grained, interpretable control over pre-trained music models by adjusting their internal activations. Unlike approaches that require intensive retraining or that risk introducing audible artifacts, MusicRFM supports real-time adjustment of musical attributes such as pitch, chords, and tempo.

Methodological Framework

Recursive Feature Machines and Concept Directions

RFMs provide a mechanism for identifying interpretable axes within a model's activation space. These axes, termed "concept directions," correlate strongly with music-theoretic attributes like notes and chords. RFMs find them through the Average Gradient Outer Product (AGOP): the gradient of a probe's prediction with respect to its input activations is computed at each sample, and the outer products of these gradients are averaged into a single matrix. The leading eigenvectors of this matrix are mutually orthogonal and mark the directions along which the prediction is most sensitive, which makes them natural axes for intervention in the activation space.
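As a rough illustration of the AGOP idea, the sketch below averages gradient outer products for a toy scalar function and recovers the dominant direction by power iteration. The function `toy_probe`, the finite-difference gradient, and all helper names are assumptions made for illustration; the paper's probes are trained on MusicGen activations, which this example does not attempt to reproduce.

```python
import math
import random


def numerical_gradient(f, x, eps=1e-5):
    """Central-difference gradient of a scalar function f at point x."""
    grad = []
    for i in range(len(x)):
        hi, lo = list(x), list(x)
        hi[i] += eps
        lo[i] -= eps
        grad.append((f(hi) - f(lo)) / (2 * eps))
    return grad


def agop(f, points):
    """Average Gradient Outer Product: mean of grad f(x) grad f(x)^T over points."""
    d = len(points[0])
    m = [[0.0] * d for _ in range(d)]
    for x in points:
        g = numerical_gradient(f, x)
        for i in range(d):
            for j in range(d):
                m[i][j] += g[i] * g[j] / len(points)
    return m


def top_eigenvector(m, iters=200):
    """Power iteration for the dominant eigenvector of a symmetric PSD matrix."""
    d = len(m)
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(iters):
        w = [sum(m[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(c * c for c in w))
        if norm == 0.0:
            break
        v = [c / norm for c in w]
    return v


def toy_probe(x):
    """Hypothetical stand-in for a trained probe: sensitive only to 3*x0 + 4*x1."""
    return math.tanh(3.0 * x[0] + 4.0 * x[1])


rng = random.Random(0)
points = [[rng.gauss(0.0, 0.3) for _ in range(3)] for _ in range(200)]
direction = top_eigenvector(agop(toy_probe, points))
print([round(c, 3) for c in direction])
```

Because `toy_probe` depends only on the combination 3·x0 + 4·x1, the recovered direction is approximately (0.6, 0.8, 0), the unit vector along which the function is sensitive.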

Integration with MusicGen

MusicGen serves as the backbone for MusicRFM. The integration involves training lightweight RFM probes across various layers of MusicGen using the SynTheory dataset, which provides detailed supervision on music-theoretic concepts. Once these concept-aligned directions are established, they are injected into MusicGen during inference to guide the generation process without per-step optimization.
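The injection step can be pictured as shifting a hidden-state vector along a concept direction. The sketch below assumes a simple additive form, h' = h + η·q with q normalized to unit length; this form, the function names, and the toy vectors are illustrative assumptions rather than the paper's exact update rule.

```python
import math


def normalize(v):
    """Scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def steer(hidden, direction, eta):
    """Return hidden + eta * unit(direction) without modifying the input."""
    unit = normalize(direction)
    return [h + eta * u for h, u in zip(hidden, unit)]


def projection(hidden, direction):
    """Component of hidden along the (normalized) concept direction."""
    unit = normalize(direction)
    return sum(h * u for h, u in zip(hidden, unit))


# Toy hidden state and hypothetical concept direction (not real MusicGen values).
hidden = [0.2, -0.1, 0.5, 0.0]
concept = [3.0, 0.0, 4.0, 0.0]

steered = steer(hidden, concept, eta=0.5)
shift = projection(steered, concept) - projection(hidden, concept)
print(round(shift, 6))
```

Under this additive assumption, the projection onto the concept direction grows by exactly η, while components orthogonal to the direction are left unchanged. No gradient step is needed at inference time, which matches the description of steering without per-step optimization.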

Dynamic Control Mechanisms

MusicRFM introduces time-varying schedules for controlling these injections. These include deterministic schedules (linear, exponential, logistic) and stochastic gating, allowing for nuanced control over the trajectory of musical attributes during generation. Furthermore, it supports multi-direction steering, permitting simultaneous or staggered enforcement of multiple musical attributes.
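To make the schedule families concrete, the sketch below gives one possible parameterization of a linear ramp, an exponential decay, a logistic rise, and a stochastic gate. The exact functional forms, the `rate` and `steepness` parameters, and the gate probability `p` are assumptions chosen for illustration; the paper may define its schedules differently.

```python
import math
import random


def linear(t, total, eta0):
    """Ramp linearly from 0 at the first step to eta0 at the last step."""
    return eta0 * t / (total - 1)


def exponential_decay(t, total, eta0, rate=5.0):
    """Start at eta0 and decay exponentially over the sequence."""
    return eta0 * math.exp(-rate * t / (total - 1))


def logistic(t, total, eta0, steepness=10.0):
    """S-shaped rise toward eta0, centered on the midpoint of the sequence."""
    x = t / (total - 1) - 0.5
    return eta0 / (1.0 + math.exp(-steepness * x))


def stochastic_gate(eta, p, rng):
    """Apply the injection with probability p, otherwise skip it for this step."""
    return eta if rng.random() < p else 0.0


total = 5
linear_trace = [linear(t, total, 1.0) for t in range(total)]
logistic_trace = [logistic(t, total, 1.0) for t in range(total)]

rng = random.Random(0)
gated = [stochastic_gate(0.8, 0.5, rng) for _ in range(10)]

print(linear_trace)  # [0.0, 0.25, 0.5, 0.75, 1.0]
print([round(v, 3) for v in logistic_trace])
```

Each function returns the control strength for a single generation step, so a schedule can be swapped in without changing how the direction itself is applied.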

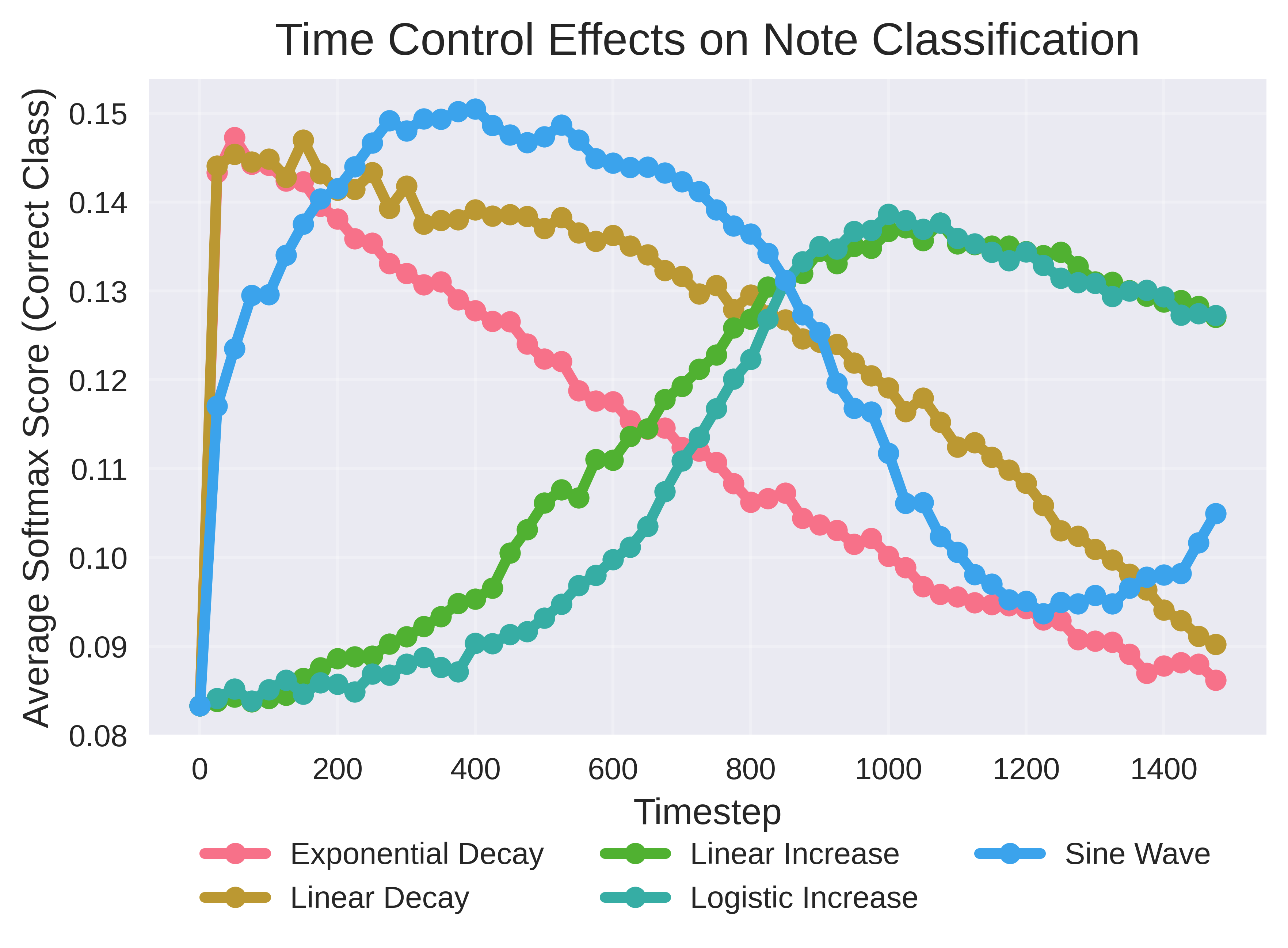

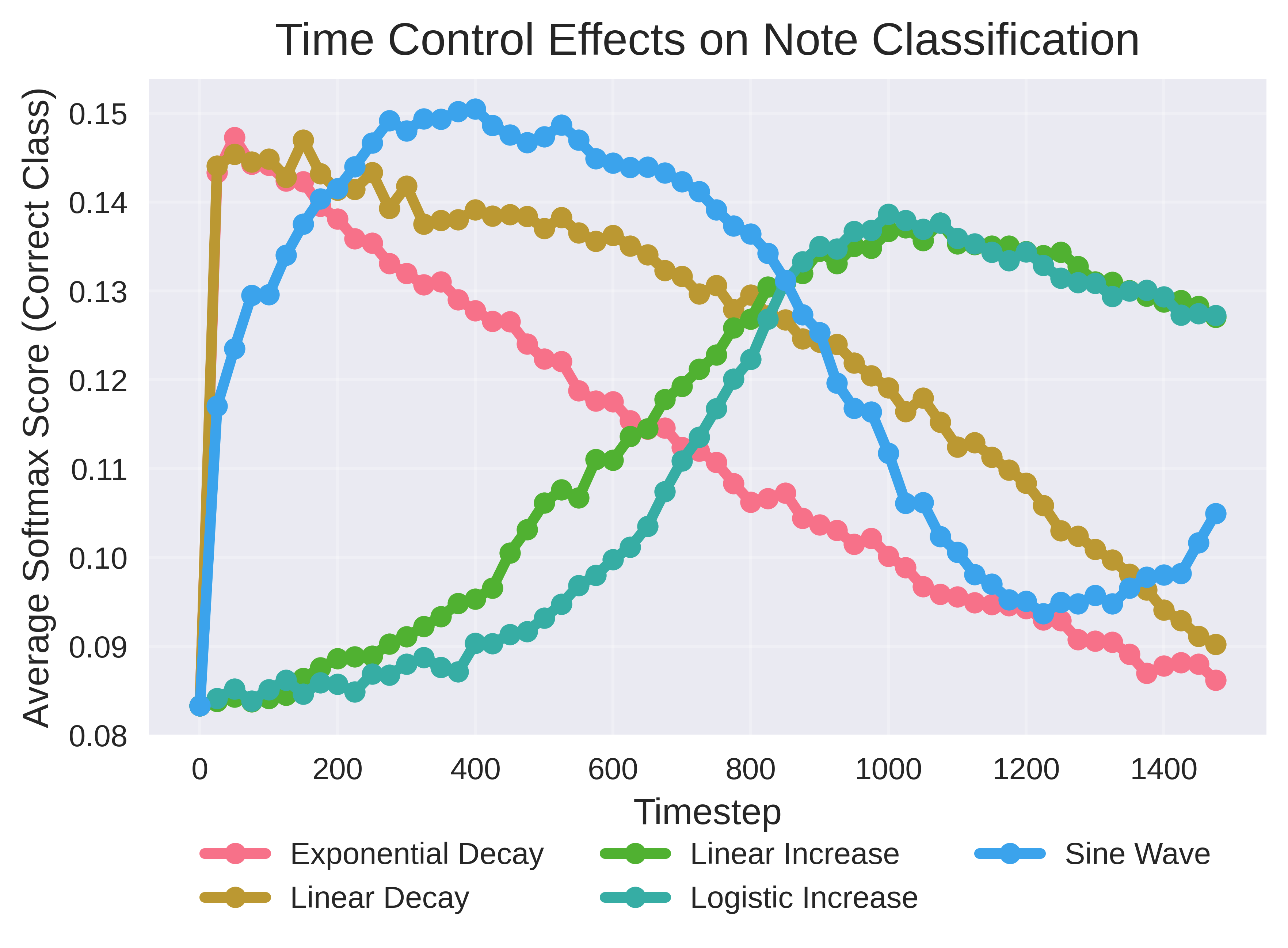

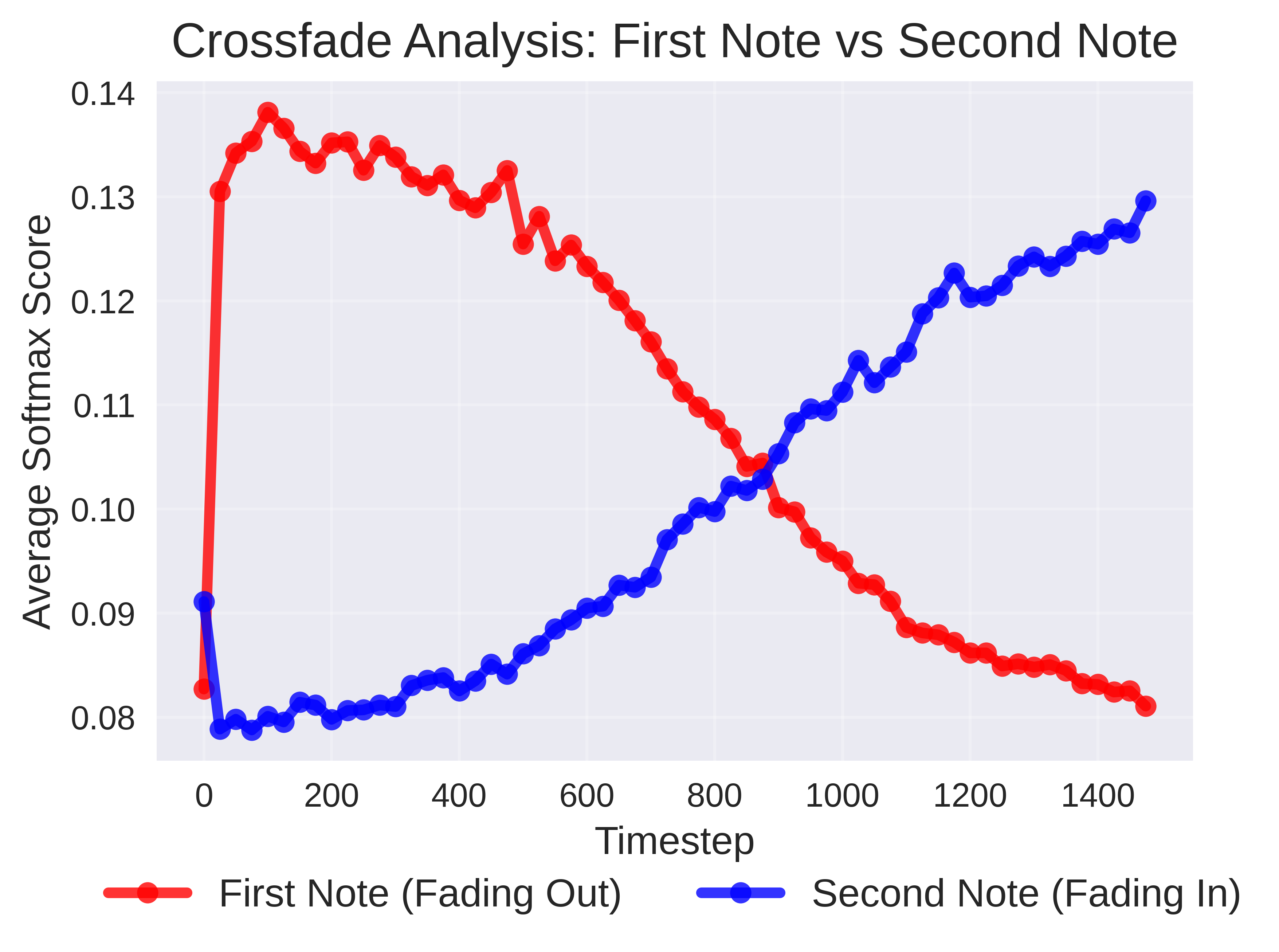

Figure 1: Temporal softmax traces (notes). Curves show the probe probability of the ground-truth note across timesteps for different schedules (linear/exp rise/decay, log. increase, sine).

Experimental Results

Single-Direction Steering

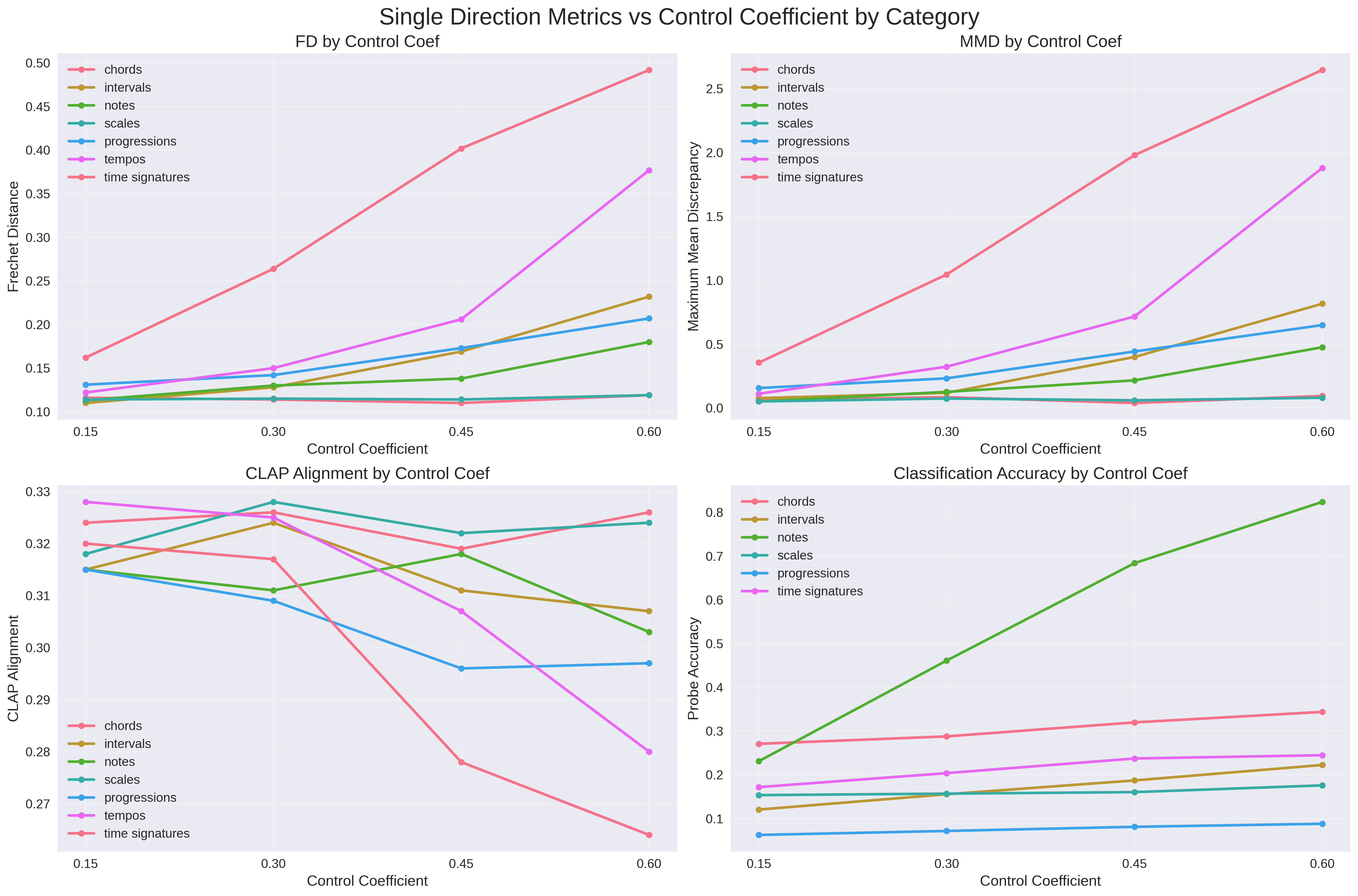

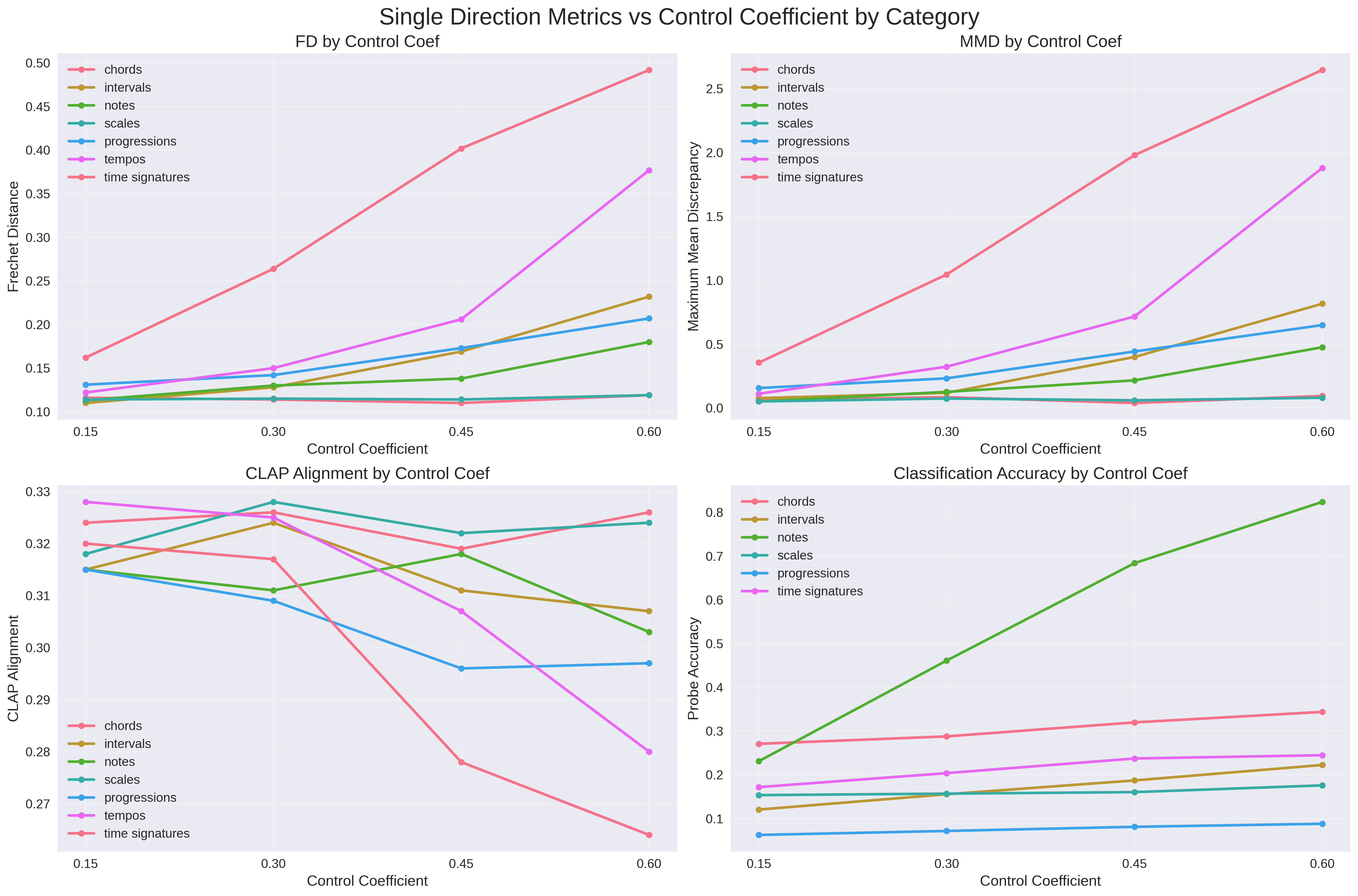

The framework improves the accuracy with which target notes and chords are generated. As the control coefficient η0 increases, Fréchet Distance (FD) and Maximum Mean Discrepancy (MMD) both rise, indicating growing deviation from the reference distribution. CLAP alignment with the text prompt remains fairly stable, however, suggesting that prompt fidelity is largely preserved (Figure 2).

Figure 2: Single-direction steering metrics as a function of control coefficient η0. FD increases, MMD follows suit, CLAP alignment remains stable, and probe accuracy improves with stronger control.

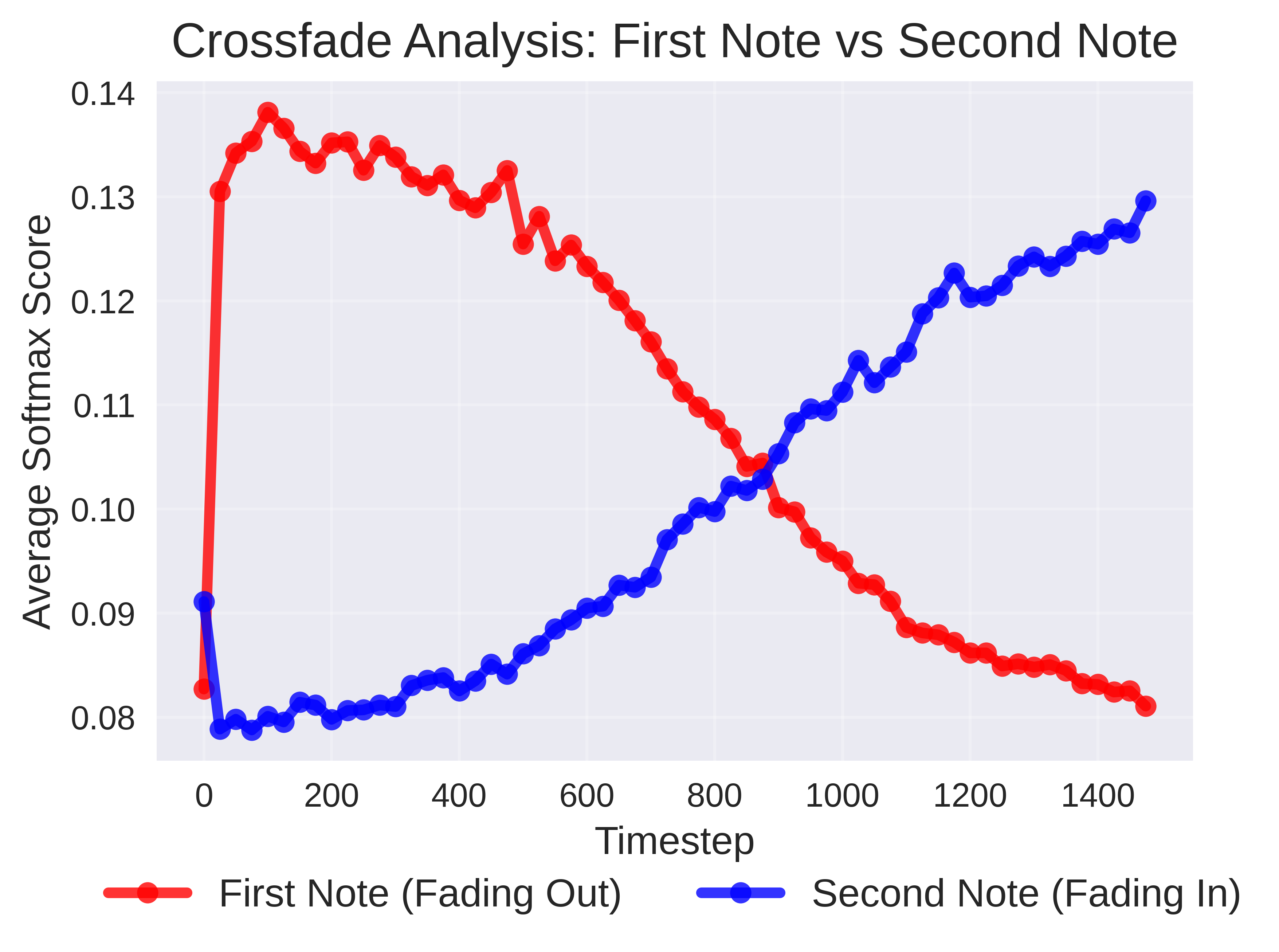

Multi-Direction Control

MusicRFM can steer multiple musical attributes simultaneously, though doing so often increases distributional drift and reduces prompt adherence. Control strengths therefore need to be balanced carefully to limit artifacts while still achieving the desired attributes.
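One way to picture simultaneous or staggered multi-direction steering is as a sum of per-concept offsets, each with its own strength over time. The sketch below assumes such an additive combination; the `window` schedule, the toy directions, and the strengths 0.4 and 0.3 are hypothetical and are not taken from the paper.

```python
def window(t, start, end, eta):
    """Constant strength eta while start <= t < end, zero otherwise."""
    return eta if start <= t < end else 0.0


def combined_offset(t, controls):
    """Sum eta_k(t) * q_k over all (direction, schedule) pairs at step t."""
    dim = len(controls[0][0])
    offset = [0.0] * dim
    for direction, schedule in controls:
        eta = schedule(t)
        for i in range(dim):
            offset[i] += eta * direction[i]
    return offset


# Hypothetical unit directions for a note concept and a chord concept.
note_direction = [1.0, 0.0, 0.0]
chord_direction = [0.0, 1.0, 0.0]

controls = [
    (note_direction, lambda t: window(t, 0, 5, 0.4)),   # active on steps 0-4
    (chord_direction, lambda t: window(t, 3, 9, 0.3)),  # active on steps 3-8
]

for t in (0, 3, 6):
    print(t, combined_offset(t, controls))
```

In this toy setup the two controls overlap on steps 3 and 4, which is where their combined offset is largest. Lowering the individual strengths in such overlap regions is one simple way to think about balancing control against drift.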

Temporal Dynamics

Time-based schedules modulate musical attributes effectively: the probe probabilities closely follow the prescribed schedules (Figure 1). This dynamic control makes it possible to realize transitions and gradual variations within the generated music.

Implications and Future Directions

MusicRFM is a notable step forward in controllable music generation. It has practical applications in music production and interactive generative systems, where it offers a means of modulating musical compositions in real time. Future directions include extending the framework to more complex real-world music attributes beyond symbolic datasets, incorporating temporally aware feature extraction, and exploring interactive real-time steering in performance contexts.

Moreover, the methodology could be extended to other autoregressive generative models, such as OpenAI’s Jukebox, which suggests potential applications across other audio domains.

Conclusion

MusicRFM provides a framework for controlled autoregressive music generation. By using Recursive Feature Machines to steer pre-trained models in the activation space, it balances fine-grained controllability against audio quality and prompt fidelity. The work points toward more interpretable generative models and broader use of them in creative fields.