Unveiling the Dimensionality of Networks of Networks

Abstract: "Every object that biology studies is a system of systems." (François Jacob, 1974). Most networks feature intricate architectures originating from tinkering, a repetitive use of existing components where structures are not invented but reshaped. Still, linking the properties of primitive components to the emergent behavior of composite networks remains a key open challenge. Here, by composing scale-invariant networks, we show how tinkering decouples Fiedler and spectral dimensions, hitherto considered identical, providing valuable insights into mesoscopic and macroscopic collective regimes.


Explain it Like I'm 14

Unveiling the Dimensionality of Networks of Networks — Explained Simply

1) What is this paper about?

This paper explores how big, complicated systems are built by connecting smaller parts together, like Lego blocks. These systems are called “networks of networks.” The authors show that when you build a large network by combining smaller ones, two important ways of measuring “how the network behaves” can come apart and give different answers. This discovery helps us better understand how things spread, balance, or sync in complex systems like the brain, the internet, or even living cells.

2) What questions are the researchers asking?

The paper asks simple but deep questions:

- If you build a big network by attaching many smaller networks together, how does that change how things move or spread through the whole system?

- Are two key measurements of “network size/shape” always the same, or can they be different in these composite (modular) networks?

- Can we predict these measurements from the properties of the smaller pieces?

To make this concrete, they compare two “dimensions” of a network:

- The spectral dimension ($d_s$): tells how a spreading process (like heat, traffic, or a rumor) behaves when the network is extremely large.

- The Fiedler dimension ($d_g$): tells how the slowest “relaxing” or “balancing” process scales as the network gets bigger (it’s related to how hard it is for the network to fully settle down or synchronize).

In simple terms: $d_s$ is about the “feel” of the space for spreading at huge size; $d_g$ is about the slowest bottleneck as size grows. In regular, uniform networks these are the same. The paper shows they can differ in modular, stitched-together networks.

3) How did they study this? (Methods in everyday language)

They use a tool called the Laplacian Renormalization Group (LRG). Think of it like this:

- Imagine you’re looking at a very detailed map of roads. Then you slowly blur the map so only the main roads remain. As you blur more, you lose details and keep only the big structure.

- They do the same for networks using a “diffusion” process (like watching a drop of dye spread in water on the network). The amount of blurring is controlled by a “time” parameter, $\tau$.

- At each blur level, they measure how much “information” is left. The rate of information loss is called the “heat capacity” $C(\tau)$. Plateaus (flat regions) in $C(\tau)$ mean the network looks similar across many scales, which is a sign of scale-invariance.

- The height of a plateau directly tells you half the spectral dimension: $C = d_s/2$.
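The blurring procedure above can be sketched numerically. This is a minimal illustration, not the authors' code: it assumes the heat capacity is $C(\tau) = -dS/d\ln\tau$, where $S$ is the entropy of the weights $p_i = e^{-\tau\lambda_i}/Z$ built from the Laplacian eigenvalues $\lambda_i$, which reduces to $C(\tau) = \tau^2\,\mathrm{Var}(\lambda)$. The helper names `ring_laplacian` and `heat_capacity` are hypothetical, and the test graph is a plain ring, for which the plateau should sit near $1/2$ (that is, $d_s \approx 1$).

```python
import numpy as np

def ring_laplacian(n):
    """Dense Laplacian L = D - A of an n-node ring (1D lattice, periodic)."""
    L = 2.0 * np.eye(n)
    idx = np.arange(n)
    L[idx, (idx + 1) % n] -= 1.0
    L[idx, (idx - 1) % n] -= 1.0
    return L

def heat_capacity(eigenvalues, taus):
    """C(tau) = tau^2 * Var(lambda) under weights p_i = exp(-tau*lambda_i)/Z."""
    lam = np.asarray(eigenvalues, dtype=float)
    out = []
    for tau in taus:
        w = np.exp(-tau * (lam - lam.min()))  # shift only for numerical stability
        p = w / w.sum()                       # normalized diffusion weights
        mean = np.sum(p * lam)
        var = np.sum(p * (lam - mean) ** 2)
        out.append(tau ** 2 * var)
    return np.array(out)

# Assumed example: ring with 800 nodes, diffusion times from 1 to 1000.
lam = np.linalg.eigvalsh(ring_laplacian(800))
taus = np.logspace(0, 3, 7)
C = heat_capacity(lam, taus)
for tau, c in zip(taus, C):
    print(f"tau = {tau:8.1f}   C = {c:.3f}   d_s estimate = {2 * c:.3f}")
```

Reading the dimension off a plateau only makes sense for diffusion times well above the lattice scale and well below $1/\lambda_1$; outside that window $C(\tau)$ bends away from $d_s/2$.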

They apply this method to:

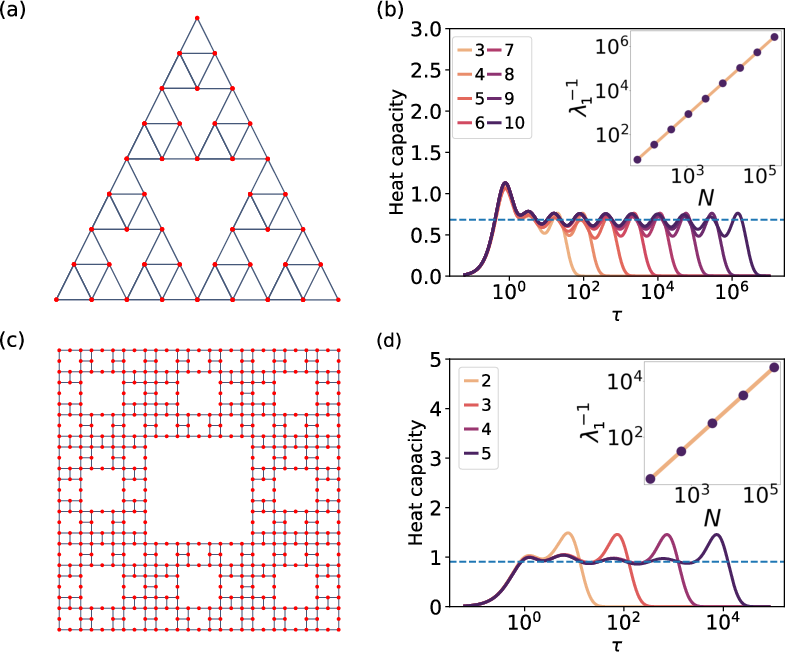

- Simple fractal networks (like Sierpinski gasket/carpet) to double-check known results.

- “Bundled” or “network-of-networks” structures, where each node of a “base” network has a whole “fiber” network attached to it (imagine each intersection in a city connected to its own small neighborhood).
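The bundled construction described above can be written compactly with Kronecker products. The sketch below is one possible reading of it, under the assumption that every base node is identified with a single anchor node (site 0) of its own fiber copy, so the composite Laplacian is $I_b \otimes L_f + L_b \otimes P_0$ with $P_0$ the projector on the anchor site. The function names are hypothetical.

```python
import numpy as np

def ring_laplacian(n):
    """Dense Laplacian L = D - A of an n-node ring."""
    L = 2.0 * np.eye(n)
    idx = np.arange(n)
    L[idx, (idx + 1) % n] -= 1.0
    L[idx, (idx - 1) % n] -= 1.0
    return L

def bundled_laplacian(L_base, L_fiber, anchor=0):
    """Attach one copy of the fiber to every base node.

    Node (i, j) = fiber site j of the copy sitting on base node i,
    stored at flat index i * n_f + j. Base edges connect anchor sites only.
    """
    n_b, n_f = L_base.shape[0], L_fiber.shape[0]
    P = np.zeros((n_f, n_f))
    P[anchor, anchor] = 1.0  # projector on the fiber's anchor site
    return np.kron(np.eye(n_b), L_fiber) + np.kron(L_base, P)

# Assumed toy example: a ring of 5 base nodes, each carrying a 4-node ring.
L = bundled_laplacian(ring_laplacian(5), ring_laplacian(4))
n_nodes = L.shape[0]
n_edges = int(round(np.trace(L) / 2))  # trace = sum of degrees = 2 * edges
print(n_nodes, n_edges)
```

With this ordering the total size is $N = N_b N_f$, and the edge count is the base edges plus $N_b$ copies of the fiber edges.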

They also analyze the smallest non-zero “tone” of the network’s Laplacian (a math object describing connections), called the Fiedler eigenvalue $\lambda_1$. It controls the slowest time to balance/spread. Its scaling with size defines the Fiedler dimension via:

- $\lambda_1 \sim N^{-2/d_g}$, where $N$ is the number of nodes.
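As a concrete illustration of this definition, the Fiedler dimension can be estimated by fitting the slope of $\log\lambda_1$ against $\log N$ over several sizes. The sketch below assumes the scaling $\lambda_1 \sim N^{-2/d_g}$ and uses plain rings, where the expected answer is $d_g = 1$; the sizes and helper names are illustrative choices, not taken from the paper.

```python
import numpy as np

def ring_laplacian(n):
    """Dense Laplacian L = D - A of an n-node ring."""
    L = 2.0 * np.eye(n)
    idx = np.arange(n)
    L[idx, (idx + 1) % n] -= 1.0
    L[idx, (idx - 1) % n] -= 1.0
    return L

def fiedler_eigenvalue(L):
    """Second-smallest eigenvalue of a connected graph's Laplacian."""
    return np.linalg.eigvalsh(L)[1]  # eigvalsh returns ascending order

sizes = np.array([50, 100, 200, 400])
lam1 = np.array([fiedler_eigenvalue(ring_laplacian(n)) for n in sizes])

# Fit log(lambda_1) = slope * log(N) + const, then invert slope = -2 / d_g.
slope, intercept = np.polyfit(np.log(sizes), np.log(lam1), 1)
d_g = -2.0 / slope
print(f"fitted slope = {slope:.4f}, estimated d_g = {d_g:.4f}")
```

The same fit applies to any family of graphs of growing size, although small sizes can bias the slope when finite-size corrections are large.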

4) What did they find, and why does it matter?

In short: when you build networks out of smaller parts, the large-scale spreading behavior and the slowest “settling-down” behavior don’t always match, even though in simpler networks they do. The authors identify multiple “scales” in these composite networks and show how each scale contributes a different effective dimension. The main discoveries are:

- In regular fractals (like the Sierpinski networks), the two dimensions match: $d_s = d_g$. That’s the “classic” case.

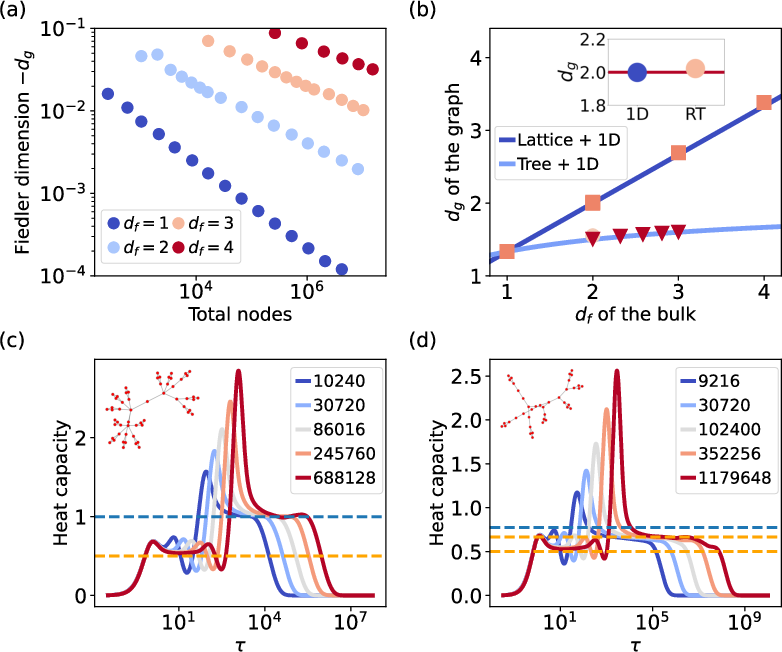

- In bundled networks (networks-of-networks), they decouple: $d_s \neq d_g$. This means:

- The spectral dimension is controlled by the fibers (the smaller units attached to each base node).

- The Fiedler dimension depends on a mix of the base and the fibers — especially on how the base’s slowest mode scales and how big the fibers are.

- When they looked at the “heat capacity” over time (as they blurred the network):

- The first plateau matched the fibers’ dimension (so $d_s$ comes from the fibers).

- A second plateau revealed the base’s dimension (showing a deeper, mesoscopic scale).

- A final peak linked to the Fiedler eigenvalue indicated how the slowest process shuts down, tying to $d_g$.

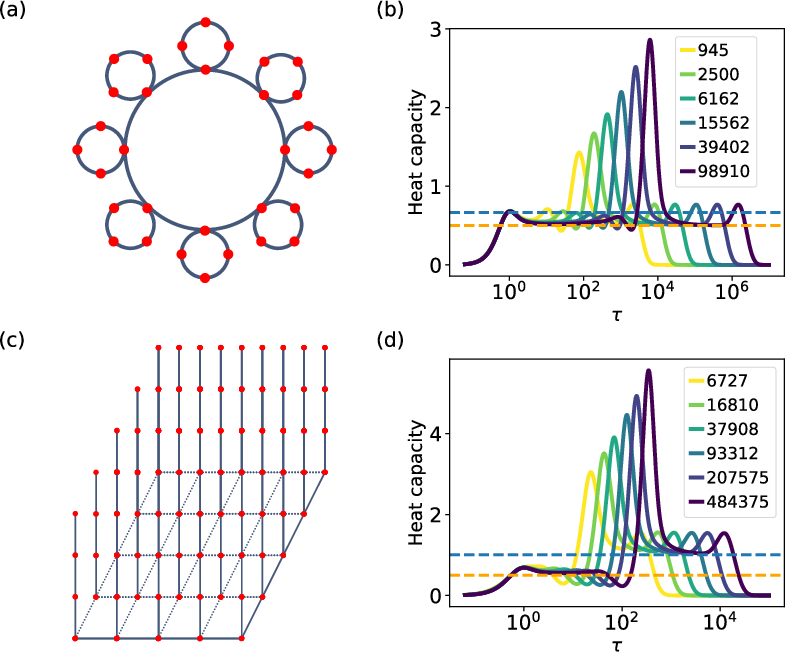

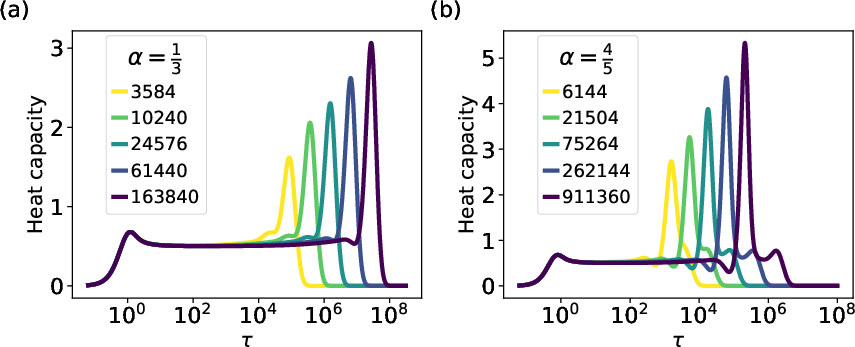

- They derived a general formula predicting $d_g$ for bundled networks, and confirmed it with computer experiments. A striking example is a “comb-like” network (a ring of rings): it produces an “anomalous” Fiedler dimension of $4/3$, a value you wouldn’t guess from either part alone.

- Special case: if the base’s Fiedler dimension equals 2, then the composite network’s Fiedler dimension equals the base’s, no matter what the fibers are.
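The $4/3$ value for the ring of rings can be checked numerically with a short sketch. It rests on two assumptions that are not spelled out in the summary above: the composite Laplacian has the form $I_b \otimes L_f + L_b \otimes P_0$ (each base node is the anchor site 0 of its fiber), and the Fiedler scaling is $\lambda_1 \sim N^{-2/d_g}$. Under the first assumption, diagonalizing the base splits the problem into independent fiber problems with an extra on-site term equal to a base eigenvalue at the anchor, so $\lambda_1$ is the lowest eigenvalue of the fiber Laplacian plus the base's Fiedler eigenvalue $\ell_1^b$ on site 0. The function name `comb_lambda1` is hypothetical.

```python
import numpy as np

def ring_laplacian(n):
    """Dense Laplacian L = D - A of an n-node ring."""
    L = 2.0 * np.eye(n)
    idx = np.arange(n)
    L[idx, (idx + 1) % n] -= 1.0
    L[idx, (idx - 1) % n] -= 1.0
    return L

def comb_lambda1(side):
    """Fiedler eigenvalue of a ring of `side` rings, each with `side` nodes.

    Uses the block reduction: fiber Laplacian plus an on-site term
    ell_1^b (the base ring's Fiedler eigenvalue) at the anchor site 0.
    """
    ell1 = 2.0 - 2.0 * np.cos(2.0 * np.pi / side)  # base ring Fiedler eigenvalue
    M = ring_laplacian(side)
    M[0, 0] += ell1
    return np.linalg.eigvalsh(M)[0]  # lowest eigenvalue of the reduced block

sides = np.array([64, 128, 256, 512])
N = sides ** 2                       # total number of nodes, N = N_b * N_f
lam1 = np.array([comb_lambda1(s) for s in sides])

slope, _ = np.polyfit(np.log(N), np.log(lam1), 1)
d_g = -2.0 / slope
print(f"fitted d_g = {d_g:.3f}   (4/3 = {4 / 3:.3f})")
```

To leading order the reduced problem gives $\lambda_1 \approx \ell_1^b / N_f$, so with $\ell_1^b \sim N_b^{-2}$ and $N_b = N_f = \sqrt{N}$ one gets $\lambda_1 \sim N^{-3/2}$ and hence $d_g = 4/3$. The fitted value drifts slightly above $4/3$ at these sizes because of finite-size corrections.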

Why it matters:

- Many real systems (brains, ecosystems, social platforms, software, and materials) are built by reusing modules. Knowing that $d_s$ and $d_g$ can differ helps us predict when spreading (like signals or diseases) will feel “low-dimensional” (easy to spread) but the system still takes a long time to fully balance due to hidden bottlenecks, or vice versa.

5) What’s the big picture? (Implications)

This work shows that one number (the spectral dimension) isn’t enough to describe complex, modular networks. The Fiedler dimension adds crucial information about large-scale bottlenecks and slow dynamics. Understanding both helps in:

- Designing safer, more robust infrastructures (power grids, transportation, the internet).

- Building better materials and circuits where transport or synchronization matters.

- Understanding how the brain or gene networks integrate modules to get complex behavior.

- Planning for or controlling spreading processes (like epidemics or information flow) in social and technological systems.

In short: when you build big things out of smaller parts, new behaviors can appear that you can’t see by looking at the parts alone. This paper gives us the tools and formulas to predict those behaviors — and to design networks that work the way we want.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of what remains uncertain or unexplored in the paper, framed to guide actionable future research:

- Provide a rigorous derivation (with error bounds) of the perturbative result λₖ ≈ ℓₖᵇ / N_f: specify assumptions on coupling locality, strength, fiber homogeneity, and quantify the regime of validity (including corrections beyond first order).

- Generalize Eq. (dg_gen) to heterogeneous composites: allow fibers with variable sizes, weights, multiple anchor nodes per fiber, node-dependent N_f(i), and distributions of fiber/base dimensions; derive the resulting Fiedler scaling and dimensional exponents.

- Extend the framework to weighted and directed networks: define appropriate Laplacians (e.g., random-walk or normalized) and test whether spectral–Fiedler dimension decoupling persists; establish transformation rules between Laplacian choices.

- Assess applicability beyond “bundled” architectures: analyze multiplex/interdependent networks (layer-layer couplings, nonlocal interlayer connectors) to determine whether ds and dg relationships derived here hold or fail; identify structural conditions for generalization.

- Develop a finite-size scaling theory for the LRG heat capacity C(τ): predict plateau widths, peak heights/locations, and their scaling with N; provide diagnostics and uncertainty quantification for estimating ds and dg from finite data.

- Validate on empirical systems: design a methodology to infer base/fiber decomposition and estimate d_{f,b}, d_{f,f}, d_{g,b} from real-world networks (e.g., brain connectomes, software dependency graphs, biological interaction networks); benchmark predictions against observed dynamical timescales.

- Clarify treatment of small-world and scale-free bases: formally define d_{f,b} and d_{g,b} when fractal dimension is ill-defined or diverges; justify and prove the claim d_{g,b} = 2 for BA networks across parameter ranges (degree exponent, size), including counterexamples if present.

- Analyze cases where the base fraction does not vanish in the thermodynamic limit (e.g., bounded fiber sizes or different scaling laws for base vs fiber growth): derive ds for such composites and specify when the fiber no longer dominates ds.

- Characterize the full low-λ spectral density ρ(λ) for composites: move beyond λ₁ to obtain analytical forms for ρ(λ) that combine base and fiber contributions; verify against numerical spectra and connect to the observed second plateau.

- Quantify dynamical consequences of anomalous d_g: derive explicit scaling laws for relaxation/mixing times, synchronization thresholds, diffusion return probabilities, and epidemic spreading rates as functions of dg (and ds), and test them in simulations.

- Formalize recursive composition: build and analyze an RG-like flow for repeated composition (base ↦ composite ↦ new base), including fixed points and stability of (ds, dg); map the “phase diagram” of achievable dimension pairs under hierarchical tinkering.

- Evaluate robustness of conclusions under Laplacian normalization: compare D − A vs normalized Laplacians; identify conditions under which ds and dg estimates are invariant or how they transform.

- Improve robustness of LRG-based dimension inference in noisy or incomplete data: develop criteria to distinguish genuine plateaus from artifacts, specify sampling requirements, and propose denoising procedures to stabilize C(τ) estimates.

- Quantify boundary-condition effects: provide correction schemes for non-periodic boundaries and finite geometries, and estimate how boundary artifacts bias ds and dg measured via C(τ).

- Incorporate multiple fibers per base node and inter-fiber coupling through the base: extend the model to graph-of-graphs with fiber–fiber interactions mediated by base nodes, and derive the impact on low-energy spectra and Fiedler scaling.

- Supply analytical characterization for random-tree bases (RT): compute/justify d_{f,b} and d_{g,b} for RTs under different generative models (e.g., Galton–Watson, preferential attachment trees), including parameter dependence and proofs (promised but not shown in the end matter).

- Identify structural predictors of d_g ≠ d_s: define measurable network features (e.g., modularity, attachment heterogeneity, degree distributions) that forecast the magnitude and direction of dg–ds decoupling; specify classes where equality holds beyond homogeneous networks.

- Standardize computational pipelines: detail scalable algorithms and complexity for estimating ds and dg (LRG, spectral methods) in large N, compare with alternative estimators, and provide guidelines for parameter selection (e.g., τ ranges).

- Analyze effects of nonuniform coupling strengths (weighted attachment impurities): generalize ℓᵇ to incorporate edge weights and derive modified λ₁ scaling and dg expressions under heterogeneous coupling.

- Explore fiber mesoscopic effects: assess how fibers with internal modularity or nontrivial Fiedler behavior (dg,f ≠ ds,f) influence global dg; determine when fiber mesoscopic scales contribute to or dominate the composite’s Fiedler dimension.
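Several items above refer to the perturbative estimate λₖ ≈ ℓₖᵇ / N_f and to Eq. (dg_gen). A short scaling argument shows how such an estimate leads to a closed expression for the composite Fiedler dimension. This is a sketch under stated assumptions, not a reproduction of the paper's derivation: it assumes base and fiber share one linear size $R$, with $N_b \sim R^{d_{f,b}}$ and $N_f \sim R^{d_{f,f}}$, that the base obeys $\ell_1^b \sim N_b^{-2/d_{g,b}}$, and that the composite obeys $\lambda_1 \sim N^{-2/d_g}$ with $N = N_b N_f$. Whether the result coincides term by term with Eq. (dg_gen) has not been checked against the paper.

```latex
\begin{align}
  \lambda_1 &\approx \frac{\ell_1^{b}}{N_f}
             \sim N_b^{-2/d_{g,b}}\, N_f^{-1}
             \sim R^{-\left(2 d_{f,b}/d_{g,b} + d_{f,f}\right)}, \\
  N &= N_b N_f \sim R^{\,d_{f,b} + d_{f,f}}
     \;\Longrightarrow\;
     \lambda_1 \sim N^{-\frac{2 d_{f,b}/d_{g,b} + d_{f,f}}{d_{f,b} + d_{f,f}}}
     \equiv N^{-2/d_g}, \\
  d_g &= \frac{2\, d_{g,b}\,\left(d_{f,b} + d_{f,f}\right)}
              {2\, d_{f,b} + d_{g,b}\, d_{f,f}} .
\end{align}
```

Two consistency checks against statements made elsewhere in this summary: a ring of rings ($d_{f,b} = d_{f,f} = d_{g,b} = 1$) gives $d_g = 4/3$, and a base with $d_{g,b} = 2$ gives $d_g = 2$ for any fiber.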

Glossary

- Barabasi-Albert (BA) network: A generative model producing scale-free networks by preferential attachment, often used as a base in composite structures. "The special case $d_{g,b} = 2$ is directly explored by using a bundled network made of a Barabasi-Albert (BA) base."

- bundled networks: Composite graphs formed by attaching a “fiber” network to each node of a “base” network. "In particular, bundled networks are built by attaching a copy of a generic network (referred to as 'fiber') to each site of the base network"

- canonical-ensemble description: A statistical mechanics framework treating network spectra analogously to energy levels at a given inverse temperature. "establishing a canonical-ensemble description of heterogeneous networks"

- coarse-graining: The process of suppressing high-frequency modes to reveal effective large-scale structure. "As $\tau$ increases, high-frequency modes are suppressed, allowing for a natural coarse-graining of the network."

- Comb lattice: A lattice with a “spine” and “teeth” (fibers), exhibiting anomalous diffusion and scaling properties. "For instance, for the Comb lattice with the base of the same length of the fibers, for […] we have […], from which we get the anomalous value $d_g = 4/3$."

- Dirac brush: A bundled network whose base is a two-dimensional lattice and whose fibers are one-dimensional rings. "We first consider two specific networks: the Dirac comb, formed as a ring of rings, and the Dirac brush"

- Dirac comb: A bundled network structured as a ring of rings, serving as a tractable composite model. "We first consider two specific networks: the Dirac comb, formed as a ring of rings, and the Dirac brush"

- entropic susceptibility (or heat capacity): A scale-dependent measure of information loss rate across diffusion times on a network. "The entropic susceptibility (or heat capacity) is defined as \cite{InfoCore}"

- fiber (in bundled networks): The component network attached to each base node in a bundled construction. "bundled networks are built by attaching a copy of a generic network (referred to as 'fiber') to each site of the base network"

- Fiedler eigenvalue: The smallest non-zero Laplacian eigenvalue governing diffusion times, equilibration, and synchronization robustness. "the smallest non-zero eigenvalue of the Laplacian, the Fiedler eigenvalue, governs several dynamical phenomena, including relaxation to equilibrium, diffusion times, and the robustness of synchronization"

- Fiedler exponent: The scaling exponent controlling how the Fiedler eigenvalue vanishes with system size. "The blue dashed line represents the Fiedler exponent as predicted in Eq. (dg_gen)."

- Fiedler dimension: The effective dimension inferred from the scaling of the Fiedler eigenvalue with network size. "An exponent, i.e., the Fiedler dimension, determines how this eigenvalue approaches zero as $N$ increases"

- fractal dimension: A measure of how the number of nodes scales with the linear size of self-similar networks. "where $d_{f,b}$, $d_{f,f}$ and $d_f$ are the fractal dimensions of the base, the fiber and the whole network, respectively."

- Hamiltonian: In the statistical mechanics analogy, the Laplacian acting as the energy operator. "Here, the diffusion time $\tau$ is a scale parameter playing the role of an inverse temperature, $\hat{L}$ acts as a Hamiltonian, and $Z$ is the partition function."

- heat equation: The diffusion equation governing flow on networks, expressed via the Laplacian’s time-evolution operator. "The method is based on the time-evolution operator $e^{-\tau \hat{L}}$ of the diffusion (or heat) equation"

- impurity: A localized perturbation in the fiber’s Laplacian, parameterized by the base’s eigenvalues. "Eq. (Lap3) describes the fiber network where an impurity of intensity $\ell_k^b$ is present in the site $0$."

- information core: The subset of nodes contributing most to informational structure across scales. "has enabled the identification of the information core \cite{InfoCore}"

- informationally-scale-invariant networks: Networks whose entropy-loss rate remains constant across a broad range of diffusion scales. "This formalism has recently allowed for the definition of informationally-scale-invariant networks, where the entropy-loss rate remains constant (or nearly so) across a broad range of scales"

- Laplacian density matrix: A normalized operator derived from the Laplacian’s diffusion kernel, analogous to a thermal state. "we can define the Laplacian density matrix"

- Laplacian operator: The matrix encoding network topology for diffusion and spectral analysis. "$\hat{L}$ is the Laplacian operator"

- Laplacian Renormalization Group (LRG): A method tracing spectral properties across scales to detect emergent dimensions. "Using the Laplacian Renormalization Group (LRG) \cite{LRG,InfoCore,Modularity}, we detect and characterize emergent structural scales"

- mesoscopic scales: Intermediate-size regimes in finite networks where additional structural indicators emerge. "At finite but large network size $N$, corresponding to mesoscopic scales, additional structural indicators emerge."

- network-of-networks: A composite network architecture formed by coupling distinct subnetworks. "They form a special class of network-of-networks or composite networks"

- partition function: The normalization factor for the Laplacian density matrix, summing over diffusive modes. "and $Z$ is the partition function."

- periodic boundary conditions: Boundary treatment that eliminates edge effects and improves convergence. "The periodic boundary conditions avoid border effects, thus enhancing numerical convergence."

- random trees (RT): Tree-like fiber structures used to probe base-dependent spectral behavior in composites. "We have checked this property by using either rings or random trees (RT) as fibers."

- Renormalization Group (RG) techniques: Methods from statistical physics adapted to analyze multi-scale organization in networks. "and the extension of RG techniques to complex networks \cite{LRG}"

- spectral density: The distribution of Laplacian eigenvalues characterizing low-energy behavior. "defined by the spectral density at small eigenvalues"

- spectral dimension: A dimension defined by the scaling of the Laplacian’s eigenvalue density, controlling thermodynamic and asymptotic processes. "one of the most physically relevant is the spectral dimension, defined through the scaling of the Laplacian eigenvalue density"

- spectral gap: The gap between the zero eigenvalue and the Fiedler eigenvalue that closes in the thermodynamic limit. "In the thermodynamic limit ($N \to \infty$), $\lambda_1 \to 0$, the so-called spectral gap closes"

- Sierpinski carpet: A regular fractal graph built by recursively patching square units, used to study scale invariance. "(d) Heat capacity, $C$, versus diffusion time, $\tau$, for the Sierpinski carpet of generation (see legend)."

- Sierpinski gasket: A regular fractal graph built by recursively attaching triangles, exhibiting oscillatory heat capacity across generations. "(b) Heat capacity, $C$, versus diffusion time, $\tau$, for the Sierpinski gasket of generation (see legend)."

- thermodynamic limit: The infinite-size limit where continuous spectra and macroscopic dimensions are defined. "By construction, however, the spectral dimension is defined in the thermodynamic limit of infinite systems."

- time-evolution operator: The operator governing diffusive dynamics on the network across scales. "The method is based on the time-evolution operator $e^{-\tau \hat{L}}$ of the diffusion (or heat) equation"

Practical Applications

Practical applications of “Unveiling the Dimensionality of Networks of Networks”

Below are actionable applications derived from the paper’s findings on the decoupling of spectral and Fiedler dimensions, the Laplacian Renormalization Group (LRG) method, and analytical scaling of mesoscopic dynamics in composite (bundled) networks. Each item notes its sector, a concrete use case, potential tools or workflows, and assumptions or dependencies that affect feasibility.

Immediate Applications

These applications can be deployed now with existing data and tooling (e.g., Laplacian spectral solvers, approximation methods, and the LRG workflow introduced in the paper).

- Network diagnostics for multi-scale systems

- Sector: software, telecom, infrastructure, finance, academia

- Use case: Integrate an LRG-based “heat capacity” analyzer to detect structural transitions and scale-invariant plateaus in large graphs. Use the plateau levels to estimate spectral dimension ($d_s$) and the large-τ behavior to estimate the Fiedler dimension ($d_g$). Flag regimes where $d_s \neq d_g$ to identify mesoscopic bottlenecks that macroscopic indicators miss.

- Tools/workflows: Python/R package to compute $C(\tau)$ from Laplacian spectra (Lanczos/Krylov approximations for large graphs), automatic identification of peaks/plateaus, report dashboards for SRE/network ops/risk teams.

- Assumptions/dependencies: Undirected, weighted graphs; stationary diffusion model ($e^{-\tau \hat{L}}$); sufficient network size for stable plateau detection; computational budget to estimate low eigenvalues (e.g., $\lambda_1$) and spectral density.

- Synchronization tuning and equilibration-time forecasting

- Sector: energy (power grids, microgrids), IoT, sensor networks, manufacturing control systems

- Use case: Use $\lambda_1$ and $d_g$ to forecast relaxation times and synchronization robustness as networks scale or modules are added. Adjust topology (e.g., fiber length, base connectivity) to target acceptable equilibration times without sacrificing local performance.

- Tools/workflows: Continuous monitoring of $\lambda_1$, “what-if” topology simulators based on bundled network scaling, control parameter tuning aligned to predicted $d_g$.

- Assumptions/dependencies: Linearized dynamics approximated by Laplacian; stable operating points; changes in topology remain within the regime where perturbative approximations (base-as-impurity) hold.

- Diffusion and routing optimization in overlay networks

- Sector: content delivery networks (CDNs), P2P overlays, blockchain, enterprise networks

- Use case: For composite overlays (rings-on-lattices, hub-and-spoke), use LRG to identify the fiber-dominant asymptotic regime (governed by $d_s$) and the base-dominant mesoscopic regime (governed by $d_g$ and $\lambda_1$). Tune module sizes (fiber length) and attachment density to improve mixing times and reduce congestion.

- Tools/workflows: Topology A/B testing with predicted regime transitions; fiber/base dimension estimator; traffic engineering dashboards reporting expected equilibration lags.

- Assumptions/dependencies: Traffic can be approximated as diffusion; the network-of-networks decomposition is known; periodic boundary effects differ from real-world edge cases.

- Epidemic and information spread management on multilayer networks

- Sector: public health, social platforms, urban mobility

- Use case: In interdependent networks (mobility base + contact fibers), use the first LRG plateau (fiber) to estimate asymptotic spread potential ($d_s$) and the second plateau/base-controlled regime to plan time-sensitive interventions (e.g., targeted mobility restrictions, local community buffering).

- Tools/workflows: Joint analysis of contact and mobility layers; intervention scheduling aligned with predicted $d_g$-driven timescales; scenario planning dashboards.

- Assumptions/dependencies: Layers are mappable as base vs fiber; near-linear diffusion approximation for early/mesoscopic phases; adequate data resolution for layer separation.

- Supply-chain resilience assessment

- Sector: manufacturing, logistics, retail

- Use case: Model multi-tier supply chains as bundled networks (local supplier chains as fibers attached to global distribution bases). Use $\lambda_1$ scaling to forecast recovery times after shocks, and tune module sizes (e.g., supplier pool breadth) to avoid slow mesoscopic modes.

- Tools/workflows: LRG diagnostic embedded in digital-twin platforms; “slow-mode” identification via $\lambda_1$; targeted redundancy planning.

- Assumptions/dependencies: Structural reducibility to base/fiber; stable mapping of flow constraints to Laplacian dynamics; data quality in inter-firm links.

- Graph coarse-graining and summarization

- Sector: data engineering, cybersecurity, academia

- Use case: Use LRG as a principled coarse-graining to produce hierarchical graph summaries that preserve multi-scale spectral properties. Identify the “information core” and emergent modules for auditing and intrusion detection.

- Tools/workflows: LRG-based graph compression; entropy/susceptibility reports; module-aware access controls.

- Assumptions/dependencies: Suitable for undirected, weighted graphs; conservation of relevant dynamics under coarse-graining.

- Practical home/enterprise network placement

- Sector: daily life, small business IT

- Use case: Treat access points/extenders as fibers attached to a backbone base. Use the decoupling between $d_s$ and $d_g$ to balance local coverage (fiber) vs global latency/synchronization (base). Place extenders to avoid creating slow mesoscopic modes.

- Tools/workflows: Simple planner that estimates $C(\tau)$ plateaus from topology sketches; proxies via path redundancy and base connectivity.

- Assumptions/dependencies: Simplified undirected modeling; small networks may require heuristic proxies rather than full spectra.
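Several of the workflows above rely on estimating low Laplacian eigenvalues of large graphs with Lanczos/Krylov methods. A minimal sketch of that step is given below, using SciPy's `eigsh` (an ARPACK Lanczos solver) in shift-invert mode. The small negative shift `sigma` is an assumption made here to keep the shifted matrix non-singular, since the Laplacian itself has a zero eigenvalue; the graph is unweighted and connected, each undirected edge is listed once, and the helper names are hypothetical.

```python
import numpy as np
from scipy.sparse import coo_matrix, diags
from scipy.sparse.linalg import eigsh

def sparse_laplacian(n, edges):
    """Sparse Laplacian D - A from a list of undirected edges (each listed once)."""
    rows, cols = np.array(edges).T
    A = coo_matrix((np.ones(len(edges)), (rows, cols)), shape=(n, n)).tocsr()
    A = A + A.T                                  # symmetrize the adjacency
    deg = np.asarray(A.sum(axis=1)).ravel()      # node degrees
    return (diags(deg) - A).tocsc()

def fiedler_eigenvalue(L, sigma=-1e-5):
    """Second-smallest eigenvalue via shift-invert Lanczos.

    sigma < 0 makes (L - sigma*I) positive definite; with which="LM" in
    shift-invert mode, eigsh returns the eigenvalues closest to sigma.
    """
    vals = eigsh(L, k=2, sigma=sigma, which="LM", return_eigenvectors=False)
    return float(np.sort(vals)[1])

# Assumed test graph: a ring of 500 nodes, where lambda_1 is known exactly.
n = 500
edges = [(i, (i + 1) % n) for i in range(n)]
lam1 = fiedler_eigenvalue(sparse_laplacian(n, edges))
exact = 2.0 - 2.0 * np.cos(2.0 * np.pi / n)
print(f"lambda_1 (Lanczos) = {lam1:.6e}, exact = {exact:.6e}")
```

The value of `sigma` should be small compared with the expected $\lambda_1$, which in practice may require a rough prior estimate of the spectral gap.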

Long-Term Applications

These require further research, scaling, or development—often involving directed/weighted temporal networks, co-optimization across layers, and generative design.

- Topology synthesis with targeted mesoscopic dynamics

- Sector: telecom, cloud architectures, robotics, distributed AI/ML

- Use case: Use the derived scaling for $\lambda_1$ and the composite Fiedler dimension $d_g$ to design network-of-networks with a target pair $(d_s, d_g)$, controlling asymptotic vs mesoscopic behavior. The formula introduced in the paper, Eq. (dg_gen), can serve as a constraint in generative topology search (e.g., to avoid slow global modes while keeping local performance).

- Tools/workflows: Generative design pipelines; spectral-aware reinforcement learning for topology search; constraint solvers integrating fractal and Fiedler dimensions.

- Assumptions/dependencies: Reliable estimation of fractal dimensions ($d_{f,b}$, $d_{f,f}$) and base Fiedler dimension ($d_{g,b}$); extension to directed/time-varying networks.

- Co-design of interdependent critical infrastructures

- Sector: energy, communications, transportation

- Use case: Design the coupling between power grids (base) and control/ICT layers (fibers) to ensure favorable $\lambda_1$ scaling. Use LRG plateaus to validate regime separation and minimize risk of cascading failures driven by mesoscopic slow modes.

- Tools/workflows: Cross-layer spectral co-optimization; resilience KPIs based on $\lambda_1$ scaling and plateau structure; regulatory reporting integrating $d_s$ and $d_g$.

- Assumptions/dependencies: Multilayer mapping fidelity; coordinated data sharing across operators; robust models beyond linear diffusion.

- Neuromorphic and modular AI systems with controlled timescales

- Sector: hardware (neuromorphic circuits), machine learning

- Use case: Compose cores and subnetworks (fibers) onto global scaffolds (bases) to engineer desired relaxation times and learning dynamics (e.g., gating slow global modes while keeping rich local dynamics).

- Tools/workflows: Spectral-aware architecture search for spiking networks and GNNs; $C(\tau)$-based profiling of candidate designs; hardware-friendly approximations to $\lambda_1$.

- Assumptions/dependencies: Applicability of Laplacian dynamics to neural substrates; hardware constraints; extension to nonlinear/heterogeneous couplings.

- Metamaterials and transport in composite media

- Sector: materials science, photonics/phononics

- Use case: Design lattice-like composites whose “network-of-networks” geometry yields tuned diffusion, wave transport, or localization properties, leveraging decoupled $d_s$ and $d_g$ to separate bulk vs mesoscopic behaviors.

- Tools/workflows: Discrete-to-continuum mapping; spectral homogenization; experimental validation with 3D-printed scaffolds.

- Assumptions/dependencies: Accurate network abstractions of materials; boundary conditions and fabrication tolerances; linear transport approximations.

- Financial systemic-risk stress testing

- Sector: finance, policy

- Use case: Model interbank exposures (base) with sectoral or instrument-specific subnetworks (fibers). Use decoupling ($d_s \neq d_g$) to highlight finite-size vulnerabilities that asymptotic metrics miss. Incorporate $\lambda_1$ scaling into stress scenarios to estimate recovery/contagion times.

- Tools/workflows: Spectral risk dashboards; regulator-facing LRG reports; supervisory tools focused on mesoscopic slow modes.

- Assumptions/dependencies: Data transparency; appropriateness of Laplacian modeling for financial flows; regulatory acceptance.

- Swarm robotics and multi-agent coordination

- Sector: robotics, defense, autonomous systems

- Use case: Architect communication/control as a composite topology to achieve fast local coordination (fibers) with controlled global convergence (base). Use $d_g$ to prevent emergent slow modes as swarm size grows.

- Tools/workflows: Spectral design for swarm topologies; adaptive reconfiguration rules driven by $C(\tau)$ features.

- Assumptions/dependencies: Mapping to Laplacian-mediated consensus/control; dynamic and directed interactions; robustness to link failures.

- Urban and mobility network planning

- Sector: public policy, transportation

- Use case: Treat multimodal transport (rail/bus/road) as fibers attached to urban backbones. Use LRG plateaus and $\lambda_1$ scaling to plan expansions that minimize system-wide equilibration times (e.g., congestion dissipation) while preserving local accessibility.

- Tools/workflows: Planning simulators integrating $C(\tau)$; spectral KPIs for infrastructure investments; policy benchmarks for mesoscopic performance.

- Assumptions/dependencies: High-quality multilayer data; validation beyond linear diffusion; socio-economic constraints.

- Composite software architecture (microservices, data meshes)

- Sector: software engineering

- Use case: Compose microservices (fibers) onto platform backbones (bases) with target $d_g$ to avoid latent slow-downs at scale. Use LRG to identify modules and to guide refactoring before performance cliffs.

- Tools/workflows: CI/CD-integrated spectral checks; topology linting; ops dashboards showing $\lambda_1$ evolution as services are added.

- Assumptions/dependencies: Meaningful mapping of call graphs/dependency networks to Laplacians; evolving/directed edges require adapted methods.

In both immediate and long-term settings, key feasibility factors recur: the suitability of Laplacian (diffusion-based) models for the domain, the ability to separate networks into base vs fiber components, the availability of scalable spectral estimators (especially for $\lambda_1$), and sufficient network size or structure to reveal clear LRG plateaus. Where these conditions hold, the paper’s framework offers a powerful, actionable lens for designing and governing complex, modular systems.
