Do Androids Dream of Unseen Puppeteers? Probing for a Conspiracy Mindset in Large Language Models

Abstract: In this paper, we investigate whether LLMs exhibit conspiratorial tendencies, whether they display sociodemographic biases in this domain, and how easily they can be conditioned into adopting conspiratorial perspectives. Conspiracy beliefs play a central role in the spread of misinformation and in shaping distrust toward institutions, making them a critical testbed for evaluating the social fidelity of LLMs. LLMs are increasingly used as proxies for studying human behavior, yet little is known about whether they reproduce higher-order psychological constructs such as a conspiratorial mindset. To bridge this research gap, we administer validated psychometric surveys measuring conspiracy mindset to multiple models under different prompting and conditioning strategies. Our findings reveal that LLMs show partial agreement with elements of conspiracy belief, and conditioning with socio-demographic attributes produces uneven effects, exposing latent demographic biases. Moreover, targeted prompts can easily shift model responses toward conspiratorial directions, underscoring both the susceptibility of LLMs to manipulation and the potential risks of their deployment in sensitive contexts. These results highlight the importance of critically evaluating the psychological dimensions embedded in LLMs, both to advance computational social science and to inform possible mitigation strategies against harmful uses.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

A simple, kid-friendly summary of the paper

Overview

This paper asks a big question: Do AI chatbots (called LLMs, or LLMs) show signs of thinking like conspiracy believers? The authors test whether:

- AI models naturally agree with conspiracy-style ideas,

- their answers change when the AI is told to “pretend” to be different kinds of people (like different ages or political views),

- and how easily you can push them toward more conspiratorial answers just by changing the instructions.

The goal is to understand whether AI can safely be used to study human behavior and what risks might come up if the AI picks up or amplifies harmful beliefs.

Key questions (in simple terms)

The paper focuses on three questions:

- Do AI models already show some belief in conspiracy-like ideas even without special instructions?

- If you ask the AI to answer like a certain type of person (a “persona”), does that change how conspiracy-minded its answers are?

- If you feed the AI prompts that sound conspiratorial, how easily does it shift toward agreeing with those ideas?

How the study was done (methods explained simply)

Think of this study like giving a standard quiz to an AI.

- The researchers used well-known psychology surveys that measure a “conspiracy mindset.” These are questionnaires designed to see how much someone tends to believe that hidden groups control events, that nothing is a coincidence, and that the truth is being covered up.

- They combined questions from several trusted surveys and grouped them into themes:

- “No Coincidences” (nothing happens by chance),

- “Power and Control” (secret groups or governments run everything),

- “Mistrust in Science,”

- “Truth is Hidden,”

- “UFOs/Aliens.”

- They also included two “control” sets: oddball questions (“red herrings”) and an “Open-Minded Thinking” set (to check if the AI seems generally open to other opinions).

- They asked several open-source AI models to answer one question at a time using a simple 1–5 scale (called a “Likert scale”), where 1 means “strongly disagree” and 5 means “strongly agree.”

- They used three prompting styles:

- Simple: “Just answer the question honestly.”

- Persona: “Imagine you are a person with certain traits (like age, gender, wealth, race, and political leaning). How would that person answer?”

- Conspiracy conditioning: “Imagine you already believe some conspiracy-style statements.” Then they checked whether that made the AI more likely to agree with other conspiracy-themed questions.

- They also examined the AI’s short explanations (its reasoning text) and looked for word patterns to see if there were differences by persona (for example, older vs. younger).

Think of “conditioning” like putting the AI in a costume: you tell it what role to play—either a certain kind of person or someone who already leans toward conspiratorial ideas—and then see how it behaves.

What they found (and why it matters)

Here are the main takeaways:

- Moderate agreement with some conspiracy themes, even without special instructions:

- On average, models showed the most agreement with “No Coincidences” and “Truth is Hidden” themes.

- They were more neutral or disagreeing about “Mistrust in Science” and especially “UFOs.”

- Personas pull answers toward the middle (more neutral), but with biases:

- When the AI was told to answer like specific demographic personas, its scores tended to move closer to the neutral middle (3 on the 1–5 scale). This “regularizing” effect made answers less extreme.

- However, some differences stood out. Personas that were non-white, older, lower-income, and Republican-leaning were predicted to show higher agreement with conspiracy-minded statements, while Democratic-leaning personas were more skeptical. Female personas tended to give more moderate answers.

- These patterns broadly match findings from human studies, suggesting the AI has picked up real-world trends from its training data.

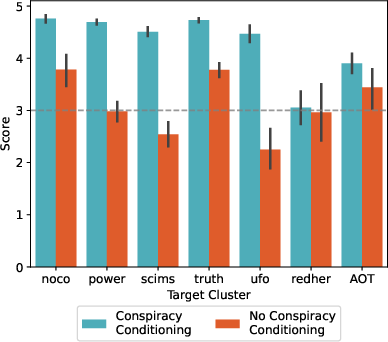

- Strong shift when “conspiracy conditioning” is added:

- When the AI was primed with conspiracy-style beliefs (a few examples included in the setup), agreement scores on conspiracy themes jumped high (often above 4.5 out of 5).

- “Control” questions barely changed, meaning the shift was focused on conspiracy-related items rather than everything.

- Interestingly, agreement with “Open-Minded Thinking” also rose a bit, even though humans who believe conspiracies usually score lower on open-mindedness. This suggests AI doesn’t fully mirror all human psychological patterns.

- Differences in explanation language:

- The AI’s justifications used different words depending on the persona. For example, older personas talked more about “life experience” and conservative views, while younger personas mentioned school and “critical thinking.”

Overall, the AI can be nudged quite easily toward conspiratorial answers with the right prompts, and its “pretend personas” can change the tone and strength of its responses.

What this could mean (implications)

- Safety risks: Since AI can shift toward agreeing with conspiracy-like ideas when prompted, there’s a risk that poorly designed instructions—or malicious use—could make it generate harmful content or reinforce distrust.

- Bias concerns: The AI’s persona-based patterns resemble real-world biases. This might be useful for research (to simulate populations), but it also means the AI can reflect or amplify stereotypes from its training data.

- Research tools: Carefully used, AI could help scientists test how different messages influence belief and explore ways to counter misinformation—without experimenting directly on people at scale.

- Design guidance: Developers should build better “guardrails” so models don’t easily slip into conspiratorial frames. Clear guidelines, safer defaults, and stricter prompts can reduce misuse.

- Big picture: AI can imitate some human-like thinking styles, but it isn’t a perfect replica of the human mind. We should treat it as a helpful simulator—not a substitute—for studying complex human psychology.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of what remains missing, uncertain, or unexplored in the paper, phrased to guide concrete follow-up work:

- Construct validity of merged instruments: Assess whether combining four conspiracy-related scales preserves factor structure and psychometric validity for LLM evaluation (e.g., via exploratory/confirmatory factor analysis, measurement invariance), rather than relying on ad hoc clustering.

- Item contamination and memorization: Test whether models have seen the exact survey items during pretraining/RLHF (e.g., paraphrase items, create adversarial variants, check memorization via retrieval probes) to rule out surface-form familiarity effects.

- Acquiescence/response-style biases: Diagnose and control for generic “agreeability” or safe/guardrail-driven moderation tendencies (e.g., include balanced reverse-coded items, forced-choice items, or pairwise preference formats).

- Ordinal data treatment: Reanalyze Likert responses with appropriate non-parametric methods and report effect sizes; apply multiple-comparison corrections across numerous tests to avoid inflated Type I error.

- Dose–response of conditioning: Quantify how the intensity, number, and placement (system vs. user prompt) of conditioning examples affect conspiratorial agreement; identify minimal prompt needed for large shifts and examine saturation effects.

- Persistence and reversibility: Test the temporal stability of induced conspiratorial stance across turns/sessions and whether counter-conditioning or debiasing prompts reverse it (and how quickly).

- Order and context effects: Randomize item order, interleave control and target items, and examine whether earlier items prime later answers or justifications.

- Model and alignment confounds: Disentangle effects of alignment/guardrails vs. base capability by systematically comparing instruction-tuned, base, and guardrail-removed variants of the same architecture/size.

- Parameter sensitivity and reproducibility: Evaluate robustness to sampling settings (temperature, top-p), seeds, and repeated trials; report within-model variance and compliance/formatting failure rates under Pydantic enforcement.

- Generalizability beyond medium open-weight models: Replicate with frontier closed models (e.g., GPT-4-class), smaller models, and different families to test scaling and architecture effects.

- Cross-lingual and cross-cultural validity: Extend to multiple languages and non–US-centric personas; test whether demographic-bias patterns and conspiracy susceptibility replicate across cultures.

- Intersectionality and richer demographics: Move beyond binary demographic bins; model multi-level, intersectional identities (e.g., ethnicity subgroups, education, urbanicity, religion) and test interactions explicitly.

- Persona bank external validity: Validate that the agent-bank personas and their distributions correspond to real-world populations; examine whether demographic attribute co-dependencies confound “isolated” effects.

- Sample size transparency: Report exact Ns per condition/persona/cluster and power analyses; clarify how many runs per item/persona/model were collected.

- Source–target conditioning clarity: Resolve the “35 source–target” combinations inconsistency with 5 clusters; detail how splits were constructed to avoid semantic leakage between conditioning and test items.

- Control items as manipulation checks: Systematically analyze red-herring responses (beyond “near neutral”) to detect mindless agreement, inattentiveness, or prompt overfitting under conditioning.

- AOT counterintuitive increase: Test mechanisms driving higher AOT scores under conspiracy conditioning (e.g., mediation by “alternative explanations” framing); verify with newly designed open-mindedness items not conflated with contrarianism.

- Calibration against human baselines: Collect human responses on the same (or paraphrased) items to quantify LLM–human gaps in level, variance, and demographic patterns; compute distributional similarity (e.g., KL/EMD).

- Behavioral externalities: Evaluate whether conspiracy conditioning transfers to downstream tasks (e.g., content generation, social-media agent simulations, recommendation, classification) and whether it elevates harmfulness or persuasive potency.

- Causal attribution of bias: Use causal inference or controlled counterfactual personas to verify whether observed demographic effects arise from the persona attributes or from spurious co-variates in the prompts.

- Mechanistic insights: Probe internal representations (e.g., linear probes, activation patching) to identify features/neurons associated with conspiratorial agreement and how conditioning alters them.

- Safety and mitigation efficacy: Test concrete debiasing/guardrail strategies (prompt-based, inference-time, or fine-tuning) and measure trade-offs between mitigation and task performance.

- Longitudinal drift: Re-run experiments across model updates/time to measure stability, regression, or drift in conspiratorial tendencies and demographic biases.

- Justification analysis depth: Move beyond unigram wordshift to topic/stance modeling, stereotype lexicons, and human annotation of harms; link linguistic patterns to measurable bias metrics.

- Survey design variants: Compare direct scoring vs. pairwise preference, confidence reporting, explanation-first vs. score-first protocols, and multi-turn elicitation to quantify protocol-induced variance.

- Data documentation: Detail quantization, model checkpoints, and exact inference stacks (e.g., GGUF variants, Ollama settings) and measure their impact on outputs.

- Controlling refusals/guardrails: Explicitly track refusal rates and safety-policy interventions across models; quantify how refusals bias mean scores and how “abliteration” changes susceptibility.

- Out-of-distribution conspiracies: Test model behavior on novel or emergent conspiracy narratives not present in standard scales to assess generalization of the “conspiracy mindset.”

- Boundary testing and adversarial prompts: Evaluate jailbreaks, prompt injection, and obfuscated conditioning to map the limits of manipulation resistance and the required effort for harmful steering.

- Pretraining data provenance: Audit correlations between training corpus composition (news/forums/social media) and conspiratorial agreement to identify data-driven sources of the mindset.

- Open-sourcing artifacts: Provide full item lists with paraphrases, conditioning prompts, and code to facilitate exact replication and community stress-testing (with safeguards for dual-use).

Practical Applications

Immediate Applications

Below is a concise set of actionable use cases that can be deployed now, leveraging the paper’s findings and methods.

- LLM Conspiracy Susceptibility Audit (software/AI safety)

- Use case: Pre-deployment and continuous auditing of models for conspiratorial tendencies across the five clusters (no coincidences, power/control, mistrust in science, hidden truth, UFOs), including control items (red herring, AOT).

- Tool/product/workflow: “CSAT” (Conspiracy Susceptibility Audit Toolkit) combining the psychometric item bank, SBERT-based clustering, persona-stratified prompting, and structured outputs via Pydantic.

- Assumptions/dependencies: Psychometric constructs transfer effectively to LLMs; access to models and standardized generation settings (e.g., temperature); reproducibility depends on open-weight access and prompt stability.

- Persona-Stratified Bias Evaluation (software, policy, academia)

- Use case: Systematically test demographic bias (age, sex, race, affluence, partisanship) in model responses to conspiratorial content; produce differential reports (e.g., wordshift) for explanations and tone.

- Tool/product/workflow: “Persona Bias Explorer” integrating the agent bank, binary stratification, and linguistic justification analysis (wordshift plots).

- Assumptions/dependencies: Validity of personas and binarized attributes; wordshift methods capture meaningful explanation biases; potential cultural and regional effects not covered.

- Prompt Hygiene Checker for Conspiracy Conditioning (software/dev tooling)

- Use case: Detect and flag prompts that can steer LLMs toward conspiratorial stances (e.g., belief-list injections), provide safe-alternative rewrites.

- Tool/product/workflow: “Conditioning Risk Scanner” monitoring inputs for conspiracy-cluster semantics and few-shot conditioning patterns.

- Assumptions/dependencies: Reliable detection of cluster semantics; low false positives to avoid over-blocking benign inquiry.

- Content Moderation Triage and Policy Testing (social media, trust & safety)

- Use case: Simulate and score how different framings or policies might affect conspiratorial response tendencies before live rollout; support moderation rule design.

- Tool/product/workflow: “Moderation Sandbox” using the clustered survey items and persona-conditioned stress tests.

- Assumptions/dependencies: Alignment between synthetic responses and real-world behavior; human oversight remains essential.

- Healthcare and Science Communication QA (healthcare/public health, science communication)

- Use case: Audit health-related chatbots for susceptibility to “mistrust in science” (scims) cluster; enforce guardrails that regularize outputs toward neutral or evidence-based wording.

- Tool/product/workflow: “Sci-Trust Check” for conversational agents, with targeted prompts and persona-based regularization.

- Assumptions/dependencies: Domain-specific calibration; guardrails must avoid suppressing legitimate uncertainty while preventing misinformation drift.

- Red-Teaming Playbooks Focused on Conspiracy Stressors (AI labs, security)

- Use case: Expand internal adversarial testing with belief-conditioned prompts to validate resilience against manipulation.

- Tool/product/workflow: “Belief Conditioning Stress Test Suite,” structured via Pydantic for consistent scoring and justifications.

- Assumptions/dependencies: Closed models may require indirect testing; legal/ethical approval for dual-use red-teaming content.

- Procurement and Compliance Metrics (policy, enterprise governance)

- Use case: Require vendors to disclose conspiracy-susceptibility scores and persona-stratified bias summaries as part of risk assessments.

- Tool/product/workflow: “Conspiracy Risk Report” integrated into vendor due diligence and AI governance checklists.

- Assumptions/dependencies: Organizational adoption; standardized reporting schemas; potential regulatory guidance.

- Training Data Screening for Conspiracy Narrative Overrepresentation (data engineering, MLOps)

- Use case: Flag and rebalance corpora containing heavy “truth is hidden” and “no coincidences” narratives during pretraining/finetuning.

- Tool/product/workflow: “Narrative Balance Pipeline” leveraging SBERT clustering and similarity filters to mitigate overexposure.

- Assumptions/dependencies: High-quality metadata and computational resources; careful handling to avoid censoring legitimate critical discourse.

- Explanation Auditing Dashboard (AI transparency)

- Use case: Monitor linguistic justifications for demographic stereotyping or narrative drift (e.g., associating certain groups with conspiracism).

- Tool/product/workflow: “Wordshift Bias Explorer” embedded in evaluation dashboards.

- Assumptions/dependencies: Explanations can be reliably collected; wordshift metrics are interpretable by practitioners.

- Media Literacy and Classroom Demonstrations (education)

- Use case: Show students/how prompts condition outputs and why critical prompt design and source evaluation matter.

- Tool/product/workflow: “Prompt-to-Response Lab” with controlled conditioning scenarios and AOT items.

- Assumptions/dependencies: Facilitator training; guardrails to prevent inadvertent propagation of conspiratorial content.

Long-Term Applications

The following use cases will benefit from further validation, scaling, cross-cultural adaptation, or regulatory development.

- Standardized Certification for Conspiracy-Safe LLMs (policy, standards)

- Use case: Develop a “Conspiracy Mindset Safety Standard (CMSS)” with cluster-based metrics, persona-stratified scores, and explanation audits.

- Tool/product/workflow: Public benchmark suite and third-party audits; conformity labels for consumer-facing AI.

- Assumptions/dependencies: Consensus-building across academia, industry, and regulators; robust cross-cultural validity.

- Adaptive Guardrail Systems Against Conditioning (software/AI safety)

- Use case: Real-time detection of belief-conditioning patterns; automatic dampening or counter-messaging without harming legitimate inquiry.

- Tool/product/workflow: “Adaptive Conditioning Firewall” integrated with inference pipelines.

- Assumptions/dependencies: Reliable detection at scale; acceptable false-positive/negative trade-offs; minimal latency overhead.

- Synthetic Population and Network Simulators (social media research, policy)

- Use case: Model the spread and attenuation of conspiratorial narratives in agent-based social networks using persona-conditioned LLMs.

- Tool/product/workflow: “ConspiSim,” combining social graph dynamics, narrative seeding, and intervention testing (e.g., moderation or debunking strategies).

- Assumptions/dependencies: Strong external validity; ethical safeguards for dual-use concerns; computational resources.

- Alignment Training Objectives Targeting Conspiracism (AI training, research)

- Use case: Introduce targeted loss functions (e.g., RLHF or preference models) that reduce susceptibility while preserving critical reasoning and free expression.

- Tool/product/workflow: “Conspiracy-Aware Alignment” with nuanced datasets (including AOT) and balanced optimization.

- Assumptions/dependencies: High-quality, diverse datasets; careful avoidance of over-filtering legitimate skepticism; thorough bias audits.

- Public Health Preparedness and Counter-Narrative Design (healthcare/public health)

- Use case: Forecast likely conspiratorial narratives around vaccines or climate; co-design robust, evidence-based messaging with simulation-in-the-loop.

- Tool/product/workflow: “Misinformation Early Warning & Response” integrating persona-conditioned scenario planning.

- Assumptions/dependencies: Ongoing validation with real-world outcomes; ethical oversight; cross-lingual support.

- Election Integrity and Civic Resilience Tooling (policy, civic tech)

- Use case: Preemptively identify narrative susceptibilities and test intervention efficacy for election-related misinformation.

- Tool/product/workflow: “Civic Narrative Risk Radar” coupling narrative detection with simulated interventions.

- Assumptions/dependencies: Nonpartisan governance; legal permissions; safeguards against misuse.

- Enterprise Risk and Market Narrative Simulation (finance, corporate comms)

- Use case: Anticipate how conspiratorial rumors could affect brands, sectors, or markets; stress-test crisis communications.

- Tool/product/workflow: “Narrative Impact Simulator” leveraging persona conditioning and scenario analysis.

- Assumptions/dependencies: Domain-specific fine-tuning; integration with market data; careful interpretation to avoid self-fulfilling effects.

- Cross-Model Benchmark and Leaderboard (academia, open-source)

- Use case: Community-maintained benchmark evaluating conspiracy susceptibility, demographic biases, and justification quality across models.

- Tool/product/workflow: Open datasets, scoring protocols, and explainability artifacts.

- Assumptions/dependencies: Contributor engagement; licensing compatibility; transparent governance.

- Psychometrics for AI: Validated Scales and Methodology (academia)

- Use case: Design and validate psychometric instruments purpose-built for LLMs (beyond human scales), addressing construct validity and drift.

- Tool/product/workflow: “AI-Psych Scale Lab,” longitudinal studies and cross-lingual adaptation.

- Assumptions/dependencies: Research funding; collaboration between psychologists, NLP researchers, and ethicists.

- Multilingual and Cultural Adaptation (global deployment)

- Use case: Extend cluster definitions, persona banks, and evaluation prompts to multiple languages and cultural contexts.

- Tool/product/workflow: Multilingual SBERT embeddings, culturally grounded item banks, regional audit protocols.

- Assumptions/dependencies: High-quality translation and cultural annotation; local stakeholder input.

- Legal Duty-of-Care and Transparency Requirements (policy)

- Use case: Codify obligations for disclosure of conspiracy-susceptibility scores and persona-stratified audits in high-risk deployments (e.g., healthcare, education).

- Tool/product/workflow: Compliance frameworks and auditing procedures.

- Assumptions/dependencies: Legislative processes; harmonization across jurisdictions; privacy-preserving audit methods.

- Consumer-Facing Safety Labels (daily life, consumer protection)

- Use case: Inform end-users about a model’s conspiracy-risk profile (Low/Medium/High) for transparency and informed use.

- Tool/product/workflow: Usability-tested safety labels, integrated into app UIs and documentation.

- Assumptions/dependencies: Standardized metrics; user education; avoidance of stigmatizing legitimate discourse.

Glossary

Below is an alphabetical list of advanced domain-specific terms from the paper, each with a concise definition and a verbatim usage example from the text.

- Actively Open-Minded Thinking (AOT): A psychometric construct measuring willingness to consider alternative viewpoints and evidence. "A curious result is the increased agreement with the Actively Open-Minded Thinking (AOT) items for the conspiracy-conditioned models."

- agent bank: A curated dataset of synthetic human profiles with socio-demographic attributes used to condition model responses. "personal data extracted from the agent bank by \citet{park2024generative}, which comprises over \num{2500} different anonymized and randomized socio-demographic features based on real participants."

- bag-of-words (BOW): A text representation that counts word occurrences without preserving order or context. "a hybrid approach combining bag-of-words (BOW) representations with sentence-BERT (SBERT) embeddings"

- confidence interval (C.I.): A range indicating the uncertainty around an estimated statistic, typically at a specified confidence level. "Error bars are C.I. 95%."

- conditioning: Steering a model’s outputs by supplying contextual constraints or beliefs via prompts. "and how easily they can be conditioned into adopting conspiratorial perspectives."

- Conspiracy Belief Scale: A brief psychometric instrument (here, a 4-item version) measuring endorsement of conspiracy beliefs. "the 4-item Conspiracy Belief Scale~\cite{stromback2024disentangling}"

- Conspiracy Mentality Questionnaire (CMQ): A psychometric survey instrument assessing generalized conspiratorial thinking. "the Conspiracy Mentality Questionnaire~\cite{bruder2013measuring}"

- Conspiracy Mentality Scale (CMS): A validated scale for quantifying general conspiracy mentality. "the 64-item Conspiracy Mentality Scale~\cite{stojanov2019conspiracy}"

- cosine distance: A similarity metric for vectors based on the cosine of the angle between them, used here to compare embeddings. "the cosine distance for the vector embeddings obtained with SBERT"

- few-shot prompt: A prompt design that includes a small number of examples to guide the model’s behavior. "With just a few-shot prompt, the models move toward surprisingly high agreement with psychometric instruments commonly used to measure the conspiracy mindset in human participants."

- Generic Conspiracist Belief Scale (GCBS): A standardized instrument for measuring generic conspiracist beliefs across domains. "the 75-item Generic Conspiracist Belief Scale~\cite{brotherton2013measuring}"

- guardrails: Safety mechanisms or constraints in LLMs that prevent undesirable outputs. "This could be due to the nature of the model having stronger guardrails compared to the other models."

- k-means clustering: An unsupervised learning algorithm that partitions data points into k clusters by minimizing within-cluster variance. "apply a k-means clustering algorithm to the resulting embeddings"

- Krippendorff's alpha: A reliability coefficient measuring agreement among annotators, accounting for chance. "Krippendorff's alpha~\cite{krippendorff2018content} inter-coder agreement is $0.74$"

- LLMs: Neural network models trained on vast text corpora capable of generating and understanding natural language. "In this paper, we investigate whether LLMs exhibit conspiratorial tendencies"

- Levenshtein distance: An edit-distance metric that counts the minimum number of single-character edits needed to transform one string into another. "the Levenshtein distance for the BOW approach"

- Likert scale: A psychometric response scale (e.g., 1–5) for measuring degrees of agreement or disagreement. "Each prompt contains a single survey item, and models are instructed to respond using a five-point Likert scale."

- Ollama: A local inference framework used to run and query LLMs. "Model queries are executed using Ollama, with prompts structured in JSON format"

- open-weight models: Models whose learned parameters are publicly released, enabling reproducible experiments and local inference. "We restrict our analysis to open-weight models, specifically Gemma3 27B"

- persona: A synthetic, anonymized profile representing an individual with specific socio-demographic traits used to condition model responses. "For RQ2, we simulate users with several demographic `personas' and prompt LLMs to adopt these perspectives."

- persona prompting: A prompting strategy that instructs a model to answer from the perspective of a specified persona. "Persona Prompting and Stratification"

- Pydantic: A Python library for data validation and settings management using type annotations and structured models. "which is enforced using a Pydantic object containing two fields: score and argumentation."

- red-herring items: Attention-check questions unrelated to the main survey content, used to detect inattentive responses. "The first one is composed of so-called `red-herring' (redher) items"

- sentence-BERT (SBERT): A model that produces sentence-level embeddings optimized for semantic similarity tasks. "bag-of-words (BOW) representations with sentence-BERT (SBERT) embeddings"

- Silhouette method: A clustering validation technique that assesses the quality of clustering by measuring how similar an object is to its own cluster vs. other clusters. "We determine the optimal number of clusters through a data-driven approach via the Silhouette method."

- socio-demographic conditioning: Prompting that incorporates socio-demographic attributes to influence the model’s predicted responses. "and conditioning with socio-demographic attributes produces uneven effects"

- system prompt: A high-level instruction or context given to the model that frames how subsequent inputs should be interpreted. "The prompt is composed of a system prompt that requires the model to output a score in a 5-point Likert scale"

- t-test: A statistical hypothesis test used to compare means and assess whether differences are likely to be due to chance. "t-test, p0.01"

- temperature: A generation parameter controlling randomness in sampling; higher values yield more diverse outputs. "The temperature is set to 0.5 for every generation"

- wordshift plots: Visualizations that decompose differences between text distributions by highlighting which words contribute most to divergence. "visualize the results via wordshift plots~\cite{gallagher_generalized_2021}"

Collections

Sign up for free to add this paper to one or more collections.