- The paper proposes a resilience testing framework that quantifies the risk of transferred adversarial attacks using surrogate models and CKA similarity metrics.

- It employs regression-based estimators and dual-similarity strategies to maximize adversarial subspace coverage across varying network architectures.

- The approach addresses the computational limits of exhaustive risk mapping and aligns with regulatory standards for AI security.

Quantifying the Risk of Transferred Black Box Attacks

Introduction

The paper "Quantifying the Risk of Transferred Black Box Attacks" (2511.05102) examines the vulnerability of neural networks to adversarial attacks, particularly in security-critical applications. As neural networks become integral to more technological domains, their susceptibility to manipulation through adversarial examples has become a significant concern. Regulatory frameworks such as the EU AI Act require organizations to ensure the security and robustness of their AI systems. The paper therefore emphasizes the need to quantify the risk of transferred adversarial attacks: attacks crafted against a surrogate model that remain effective against other models in a black-box setting.

Challenges in Resilience Testing

The primary challenge addressed is the computational infeasibility of exploring the vast input space needed for comprehensive adversarial risk mapping. Black-box evasion attacks exploit transferability: adversarial examples generated for one model often succeed against other models, even those with different architectures and training datasets. Combined with the high dimensionality of input spaces, transferability makes full test coverage impractical.

To navigate this limitation, the paper proposes a resilience testing framework employing surrogate models selected based on Centered Kernel Alignment (CKA) similarity. This approach aims to optimize adversarial subspace coverage and provide actionable risk estimation through regression-based estimators.
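The source does not describe the form of the regression-based estimator, so the following is only a minimal sketch under stated assumptions: an ordinary least-squares line relating each surrogate's CKA similarity to the target with the observed transfer success rate of attacks crafted on that surrogate. The function names `fit_transfer_risk` and `predict_transfer_risk`, the linear form, and the clipping to [0, 1] are hypothetical choices for illustration, not the paper's method.

```python
import numpy as np

def fit_transfer_risk(similarity, success_rate):
    """Fit success_rate ~ slope * similarity + intercept by least squares.

    similarity:   1-D array of CKA scores (surrogate vs. target), assumed in [0, 1]
    success_rate: 1-D array of measured transfer success rates, assumed in [0, 1]
    Returns (slope, intercept) as plain floats.
    """
    slope, intercept = np.polyfit(similarity, success_rate, deg=1)
    return float(slope), float(intercept)

def predict_transfer_risk(model, similarity):
    """Predict a transfer success rate for a given similarity score.

    The linear prediction is clipped to [0, 1] so it can be read as a rate.
    """
    slope, intercept = model
    return float(np.clip(slope * similarity + intercept, 0.0, 1.0))
```

In practice, the inputs would be measurements collected during resilience testing; a straight line is the simplest possible choice, and a different regression family may fit real measurements better.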

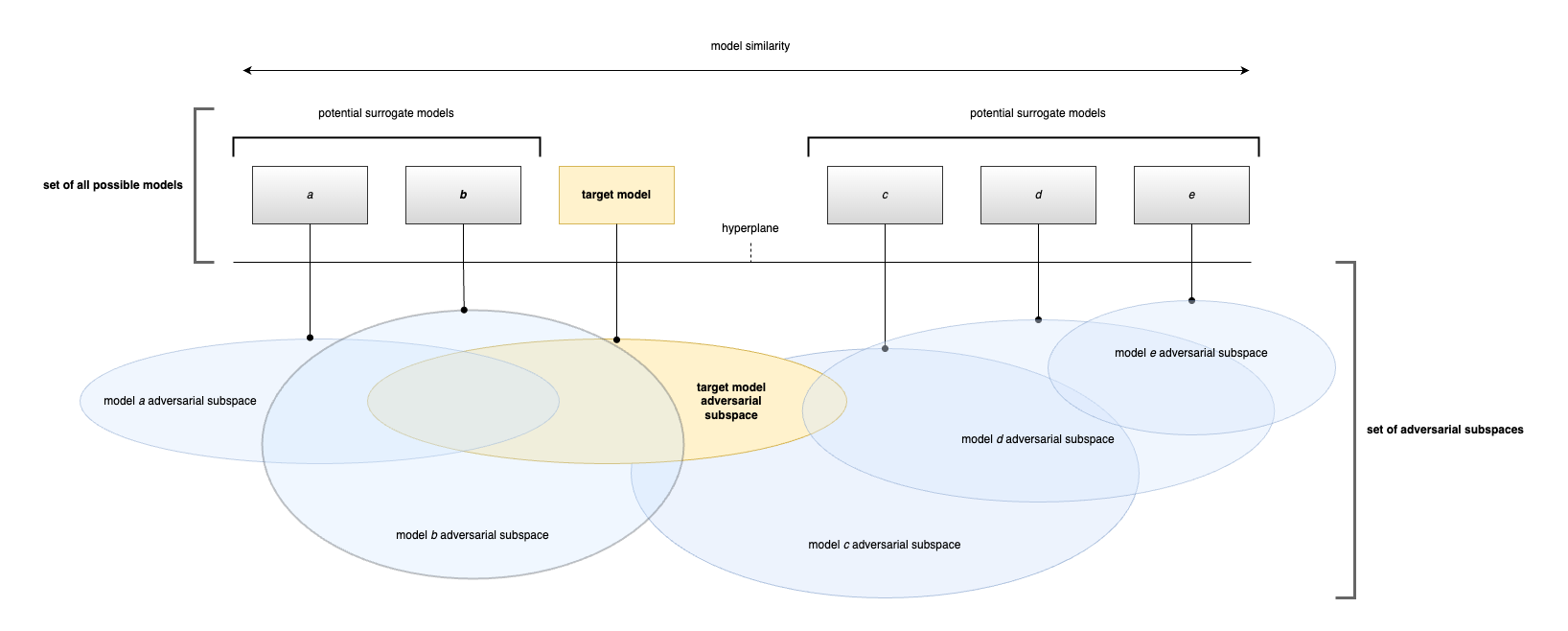

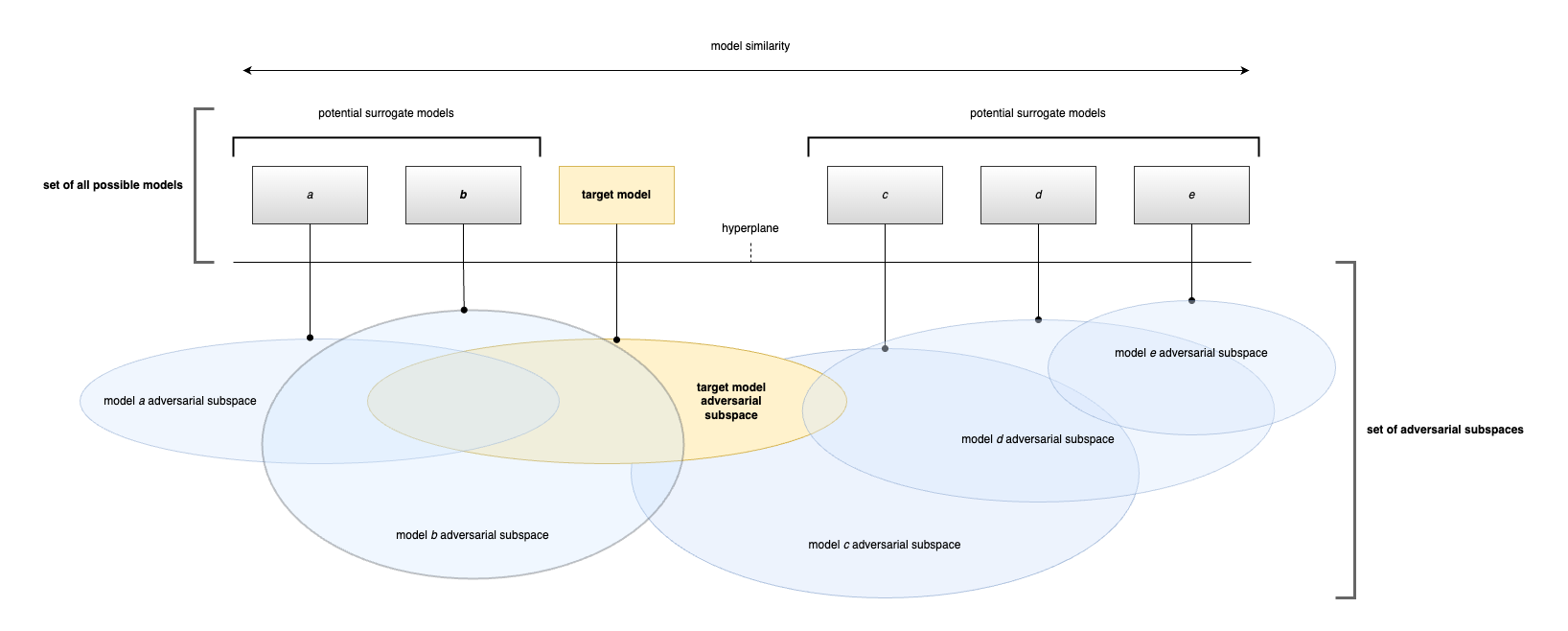

Figure 1: Conceptual illustration of adversarial subspace overlap across models with varying similarity. Target model (yellow) and potential surrogate models (a–e), each with its own adversarial subspace.

Neural Network Similarity Via CKA

CKA is introduced as a method for assessing the similarity of neural networks. It is used predominantly where representations differ by complex, non-linear transformations, and it captures representational similarity between networks robustly. CKA compares the similarity (Gram) matrices of two sets of activations using the Hilbert-Schmidt Independence Criterion (HSIC) and normalizes the result to a score between zero and one, where higher values indicate more similar representations. This score lays the groundwork for evaluating how likely the adversarial subspaces of two models are to intersect.
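As a rough illustration of this computation, here is a minimal NumPy sketch of CKA with a linear kernel. The source does not say which kernel or HSIC estimator the paper uses, so the linear kernel, the biased HSIC estimator, and the function names `center_gram`, `hsic`, and `linear_cka` are assumptions made for this example.

```python
import numpy as np

def center_gram(K):
    """Double-center a Gram matrix: H K H with H = I - (1/n) 11^T."""
    n = K.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n
    return H @ K @ H

def hsic(K, L):
    """Biased empirical HSIC between two Gram matrices of equal size."""
    n = K.shape[0]
    return np.trace(center_gram(K) @ center_gram(L)) / (n - 1) ** 2

def linear_cka(X, Y):
    """Linear-kernel CKA between two activation matrices.

    X: (n_examples, features_x) activations of one layer or model
    Y: (n_examples, features_y) activations of another, on the same inputs
    Returns a similarity score in [0, 1].
    """
    K = X @ X.T  # linear-kernel Gram matrix of X
    L = Y @ Y.T  # linear-kernel Gram matrix of Y
    return hsic(K, L) / np.sqrt(hsic(K, K) * hsic(L, L))
```

Both activation matrices must come from the same inputs in the same order. The HSIC normalization constant cancels in the ratio, and the score is unchanged by orthogonal transformations and isotropic scaling of either representation.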

Coverage Testing Feasibility

The paper examines whether full-coverage testing is feasible in black-box attack settings, where the attacker is assumed to have only partial knowledge of the target. Given the breadth of the threat posed by transferable attacks, a testing framework that spans all relevant adversarial subspaces is shown to be infeasible. The high dimensionality of adversarial examples and of the contiguous subspaces they form is a formidable obstacle to exhaustive resilience testing.
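To give a sense of scale, the following sketch counts the distinct inputs in a discretized input space. The 32x32 RGB image with 8-bit channels is a hypothetical example chosen for illustration and is not taken from the paper; `log10_grid_size` is likewise an illustrative helper.

```python
import math

def log10_grid_size(num_dimensions, levels_per_dimension):
    """Return log10 of levels_per_dimension ** num_dimensions.

    Working in log space avoids building an integer with thousands of digits.
    """
    return num_dimensions * math.log10(levels_per_dimension)

# Hypothetical input: 32 x 32 pixels, 3 colour channels, 256 levels per channel.
exponent = log10_grid_size(32 * 32 * 3, 256)
print(f"about 10^{exponent:.0f} distinct inputs")
```

Even this small input has on the order of 10^7398 possible values, so enumerating the space, or the adversarial regions within it, is out of reach for any test budget.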

Practical Resilience Framework

To mitigate these constraints, the paper proposes a framework that uses surrogate models with both high and low CKA similarity to the target model. This dual-similarity strategy maximizes adversarial subspace coverage, thereby decreasing the likelihood of overlapping subspaces and increasing the complexity and cost for potential attackers.

The framework describes how to select surrogate models using empirically derived similarity thresholds. Combining high-similarity and low-similarity surrogates makes resilience testing more effective, gives better coverage of adversarial subspaces, and aligns risk quantification with regulatory expectations.
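The selection step can be sketched as a simple threshold filter. The threshold values 0.3 and 0.7, the function name `select_surrogates`, and the rule of discarding mid-similarity candidates are placeholders assumed for this example; the source only states that the thresholds are derived empirically.

```python
def select_surrogates(similarities, low_threshold=0.3, high_threshold=0.7):
    """Split candidate surrogates into high- and low-similarity groups.

    similarities: dict mapping a candidate model name to its CKA similarity
                  with the target model (a score in [0, 1]).
    Candidates strictly between the two thresholds are left out.
    Thresholds are illustrative placeholders, not values from the paper.
    """
    high = [name for name, s in similarities.items() if s >= high_threshold]
    low = [name for name, s in similarities.items() if s <= low_threshold]
    # Most similar first in the high group, least similar first in the low group.
    high.sort(key=lambda name: similarities[name], reverse=True)
    low.sort(key=lambda name: similarities[name])
    return {"high": high, "low": low}
```

Keeping the two groups separate lets a test plan draw surrogates from both ends of the similarity range, which is the point of the dual-similarity strategy.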

Implications and Future Work

This research is most relevant to organizations that must comply with stringent AI security standards. The proposed framework offers a pragmatic way to integrate risk quantification into resilience testing, supporting both regulatory compliance and system security.

Future work will focus on refining similarity metrics, improving computational efficiency, and deriving metrics from model architectural features rather than activations. The objective is to develop scalable, inexpensive methods that remain reliable in assessing model similarity and quantifying adversarial risk.

Conclusion

The paper "Quantifying the Risk of Transferred Black Box Attacks" (2511.05102) addresses significant gaps in resilience testing for transferred adversarial attacks, offering a framework that works around the computational limits of exhaustive adversarial risk mapping. By leveraging CKA similarity to select surrogate models, the framework moves towards efficient, realistic risk estimation, which matters both for meeting evolving regulatory requirements and for securing neural networks in production environments.