Lightning Grasp: High Performance Procedural Grasp Synthesis with Contact Fields

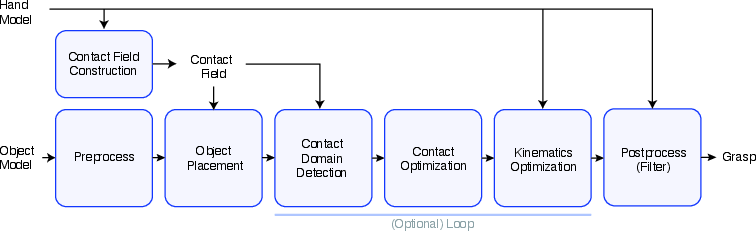

Abstract: Despite years of research, real-time diverse grasp synthesis for dexterous hands remains an unsolved core challenge in robotics and computer graphics. We present Lightning Grasp, a novel high-performance procedural grasp synthesis algorithm that achieves orders-of-magnitude speedups over state-of-the-art approaches, while enabling unsupervised grasp generation for irregular, tool-like objects. The method avoids many limitations of prior approaches, such as the need for carefully tuned energy functions and sensitive initialization. This breakthrough is driven by a key insight: decoupling complex geometric computation from the search process via a simple, efficient data structure - the Contact Field. This abstraction collapses the problem complexity, enabling a procedural search at unprecedented speeds. We open-source our system to propel further innovation in robotic manipulation.

Explain it Like I'm 14

What is this paper about?

This paper introduces Lightning Grasp, a super-fast way for robot hands to figure out how to pick up all kinds of objects. Instead of slowly trying lots of guesses or needing carefully tuned formulas, Lightning Grasp uses a clever data structure called a “Contact Field” to quickly find where each finger could touch an object and then build a stable grasp in seconds.

What questions did the researchers ask?

The paper focuses on simple, practical questions:

- How can a robot hand quickly find many different, good ways to grasp weird or tool-like objects?

- Can we avoid slow, sensitive methods that need lots of manual tuning?

- Can we make grasping fast enough to run in real time, even on older GPUs?

- Can one system work across different robot hands and many object shapes?

How does Lightning Grasp work?

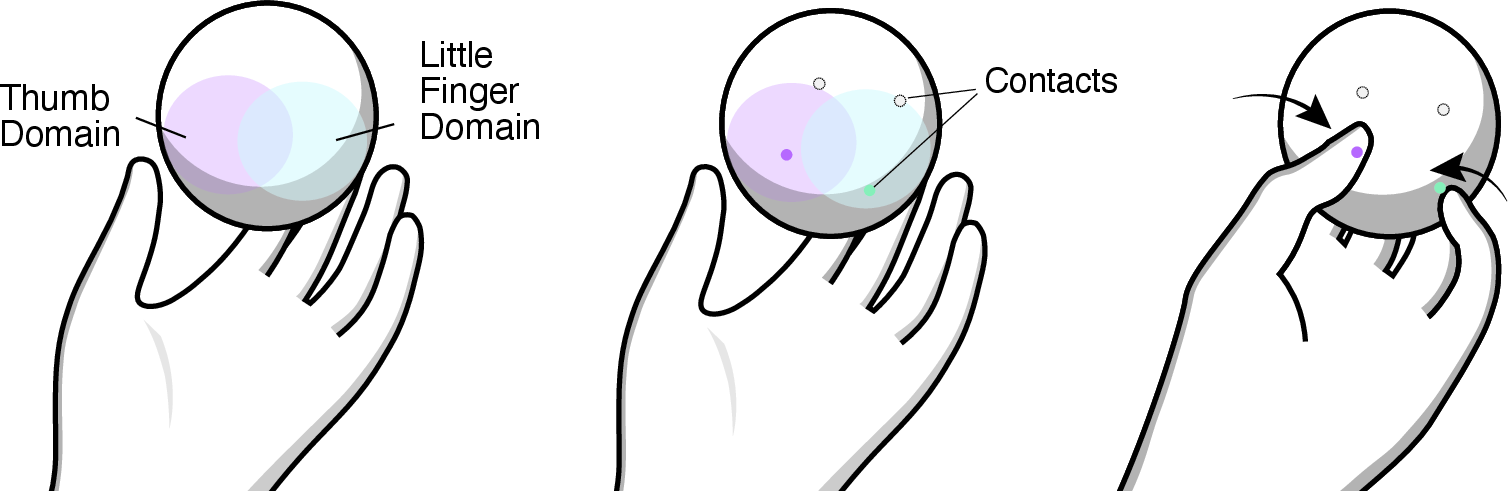

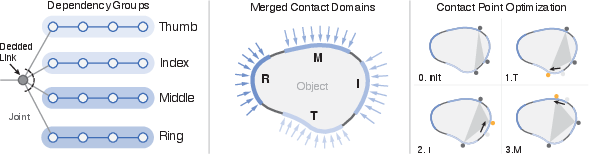

Think of grasping as three steps: find where fingers can touch, choose the best touch points, and move the fingers to match. Lightning Grasp does exactly that.

Step 1: Build a “Contact Field” (a map of possible touches)

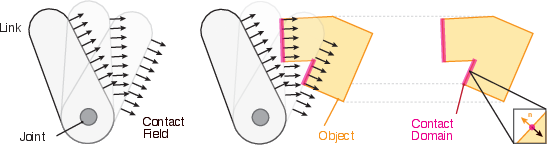

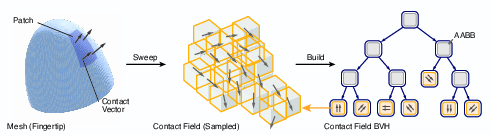

- Imagine every point on every fingertip, across all the ways the hand can bend, as an arrow in space: each arrow’s tip says “I could touch here,” and the arrow’s direction says “I’d press in this direction.”

- Collect all these arrows into a big 6D “map” called the Contact Field. It captures where the hand could make contact and how the finger would push.

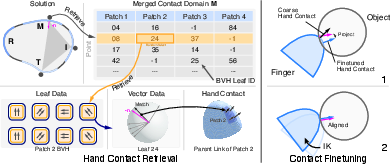

- To search this map fast, they store it in a BVH (Bounding Volume Hierarchy), which is like organizing arrows into boxes in a tree structure so lookups are super quick.
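The idea in Step 1 can be sketched in a toy 2D setting. This is only an illustration under stated assumptions, not the paper's implementation: the planar two-link finger, its link lengths, the cell width `CELL`, and the function names are all hypothetical, the fingertip's pressing direction is approximated by the direction of the last link, and a uniform grid hash stands in for the BVH described above.

```python
import math
import random
from collections import defaultdict

LINK_LENGTHS = (1.0, 0.8)  # hypothetical planar two-link finger
CELL = 0.1                 # grid cell width; a simple stand-in for BVH leaf boxes


def fingertip_arrow(q1, q2):
    """Forward kinematics: fingertip position and (toy) pressing direction."""
    a = q1 + q2
    x = LINK_LENGTHS[0] * math.cos(q1) + LINK_LENGTHS[1] * math.cos(a)
    y = LINK_LENGTHS[0] * math.sin(q1) + LINK_LENGTHS[1] * math.sin(a)
    return (x, y), (math.cos(a), math.sin(a))


def cell_of(pos):
    """Grid cell index containing a 2D position."""
    return (math.floor(pos[0] / CELL), math.floor(pos[1] / CELL))


def build_contact_field(n_samples, rng):
    """Sample random joint angles and bucket the resulting arrows by cell."""
    field = defaultdict(list)
    for _ in range(n_samples):
        q1 = rng.uniform(0.0, math.pi / 2)
        q2 = rng.uniform(0.0, math.pi / 2)
        pos, direction = fingertip_arrow(q1, q2)
        field[cell_of(pos)].append((pos, direction, (q1, q2)))
    return field


def query(field, point, press_dir, max_angle_deg=15.0):
    """Arrows stored in the point's cell that press roughly along press_dir."""
    cos_min = math.cos(math.radians(max_angle_deg))
    return [a for a in field.get(cell_of(point), [])
            if a[1][0] * press_dir[0] + a[1][1] * press_dir[1] >= cos_min]


rng = random.Random(0)
field = build_contact_field(20000, rng)
# Pretend this point and direction came from an object's surface.
target, press = fingertip_arrow(0.6, 0.9)
hits = query(field, target, press)
print(len(field), "occupied cells;", len(hits), "candidate contacts")
```

Each hit also carries the joint angles that produced it, which is what makes the lookup useful later: a matching arrow already comes with a finger pose that reaches the queried point.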

Step 2: Find good touch points on the object

- They place the object near the palm (a region that’s usually graspable) and use the Contact Field to find “contact domains”—areas on the object surface that certain fingers can reach without colliding.

- Then they pick one point per finger and improve those choices using a quick, trial-and-error method (a “zeroth-order” optimization), looking for points that make the grasp stable.

- Stability here means the pushes from the fingers can balance out so the object won’t slip or twist away. You can think of it like several kids pushing gently on a ball from different sides so the ball stays still.
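The "kids pushing on a ball" picture above can be turned into a small trial-and-error search. The sketch below is a simplified illustration, not the paper's stability metric: it assumes a unit disc, frictionless unit-strength pushes along the inward normal (so no torque about the centre), and made-up angular contact domains in `DOMAINS`. It mutates one finger at a time and keeps a change only if the pushes cancel better.

```python
import math
import random

# Hypothetical contact domains: one angular interval (radians) per finger.
DOMAINS = [(-0.5, 0.5), (1.6, 2.6), (3.7, 4.7)]


def residual(angles):
    """Norm of the summed unit inward pushes; 0 means the pushes cancel."""
    fx = -sum(math.cos(a) for a in angles)
    fy = -sum(math.sin(a) for a in angles)
    return math.hypot(fx, fy)


def zeroth_order_search(start, iters, sigma, rng):
    """Random local search: no gradients, only evaluations of residual()."""
    best = list(start)
    best_cost = residual(best)
    for _ in range(iters):
        i = rng.randrange(len(best))          # mutate one finger (block) at a time
        lo, hi = DOMAINS[i]
        trial = list(best)
        # Gaussian mutation, clamped so the contact stays inside its domain.
        trial[i] = min(hi, max(lo, best[i] + rng.gauss(0.0, sigma)))
        cost = residual(trial)
        if cost < best_cost:                  # keep only improvements
            best, best_cost = trial, cost
    return best, best_cost


rng = random.Random(0)
start = [-0.5, 2.6, 3.7]
angles, cost = zeroth_order_search(start, iters=2000, sigma=0.1, rng=rng)
print("start residual:", round(residual(start), 3))
print("final residual:", round(cost, 4))
```

Because the search only needs function evaluations, the same loop would work with a more realistic score (for example one that also solves for contact force magnitudes), at the price of a more expensive `residual`.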

Step 3: Move the fingers to match the chosen points

- Once the target touch points are chosen on the object, the system uses inverse kinematics (IK) to bend each finger so its contact point lines up with the object’s touch point and direction.

- If the contact map is coarse (low detail), it does a fast “fine-tuning” loop: project the finger’s touch point onto its link precisely and re-run IK for a tighter fit.
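A common way to do this kind of IK is damped least squares, which the glossary below also mentions. Here is a minimal sketch for a hypothetical planar two-link finger that only matches the contact position (the paper's solver also aligns the contact direction and handles multiple fingers; that part is omitted). The link lengths, damping value, and function names are assumptions for illustration.

```python
import math

L1, L2 = 1.0, 0.8  # hypothetical link lengths


def fk(q):
    """Fingertip position for joint angles q = (q1, q2)."""
    a = q[0] + q[1]
    return (L1 * math.cos(q[0]) + L2 * math.cos(a),
            L1 * math.sin(q[0]) + L2 * math.sin(a))


def jacobian(q):
    """2x2 Jacobian of fk with respect to the joint angles."""
    a = q[0] + q[1]
    return ((-L1 * math.sin(q[0]) - L2 * math.sin(a), -L2 * math.sin(a)),
            (L1 * math.cos(q[0]) + L2 * math.cos(a), L2 * math.cos(a)))


def dls_step(q, target, damping):
    """One update dq = J^T (J J^T + damping^2 I)^-1 e, written out for 2x2."""
    x, y = fk(q)
    ex, ey = target[0] - x, target[1] - y
    (j11, j12), (j21, j22) = jacobian(q)
    a11 = j11 * j11 + j12 * j12 + damping ** 2
    a12 = j11 * j21 + j12 * j22
    a22 = j21 * j21 + j22 * j22 + damping ** 2
    det = a11 * a22 - a12 * a12
    vx = (a22 * ex - a12 * ey) / det      # v = A^-1 e
    vy = (-a12 * ex + a11 * ey) / det
    return (q[0] + j11 * vx + j21 * vy,   # q + J^T v
            q[1] + j12 * vx + j22 * vy)


def solve_ik(q0, target, damping=0.1, iters=100, tol=1e-6):
    """Iterate DLS steps until the fingertip is within tol of the target."""
    q = q0
    for _ in range(iters):
        x, y = fk(q)
        if math.hypot(target[0] - x, target[1] - y) < tol:
            break
        q = dls_step(q, target, damping)
    return q


target = fk((0.7, 1.1))            # a reachable contact point
q = solve_ik((0.5, 0.9), target)   # start from a nearby guess
x, y = fk(q)
print("position error:", math.hypot(target[0] - x, target[1] - y))
```

The damping term keeps the update bounded when the finger is close to fully stretched, where the undamped inverse would blow up. A good starting guess matters too, which is one reason a lookup structure that returns nearby joint angles is helpful.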

Along the way, they:

- Preprocess the object to avoid traps (like deep holes or sharp concave areas that are hard to reach without collisions).

- Check collisions quickly and filter bad grasps.

- Cache intermediate results so future runs get even faster.
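Quick collision checks usually start with a cheap box test before any exact geometry is examined. The sketch below shows that first "broad phase" with axis-aligned bounding boxes; the function names and the example boxes are hypothetical, and the exact follow-up test (the "narrow phase") is left out.

```python
from typing import Iterable, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
AABB = Tuple[Vec3, Vec3]  # (min corner, max corner)


def aabb_of(points: Iterable[Sequence[float]]) -> AABB:
    """Smallest axis-aligned box containing all points."""
    pts = list(points)
    lo = tuple(min(p[i] for p in pts) for i in range(3))
    hi = tuple(max(p[i] for p in pts) for i in range(3))
    return lo, hi


def aabb_overlap(a: AABB, b: AABB, margin: float = 0.0) -> bool:
    """True if the boxes overlap on every axis (optionally inflated by margin)."""
    return all(a[0][i] - margin <= b[1][i] and b[0][i] - margin <= a[1][i]
               for i in range(3))


def broad_phase(boxes_a: List[AABB], boxes_b: List[AABB]) -> List[Tuple[int, int]]:
    """Index pairs whose boxes overlap; only these need an exact check."""
    return [(i, j)
            for i, a in enumerate(boxes_a)
            for j, b in enumerate(boxes_b)
            if aabb_overlap(a, b)]


finger_link = aabb_of([(0, 0, 0), (1, 1, 1)])
object_parts = [aabb_of([(0.5, 0.5, 0.5), (2, 2, 2)]),
                aabb_of([(3, 3, 3), (4, 4, 4)])]
print(broad_phase([finger_link], object_parts))  # → [(0, 0)]
```

Pairs that fail the box test can be discarded immediately, so the expensive exact check only runs on the few pairs that survive.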

What did they find and why is it important?

Here are the most important results and why they matter:

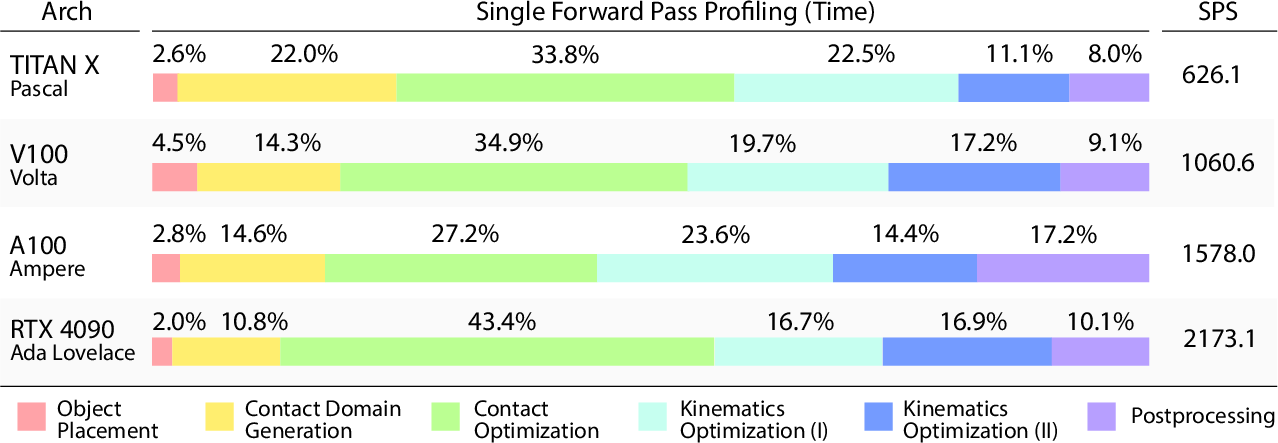

- Speed: Lightning Grasp can generate hundreds to thousands of valid, diverse grasps in about 2–5 seconds on a single GPU. That’s orders of magnitude faster than many previous methods.

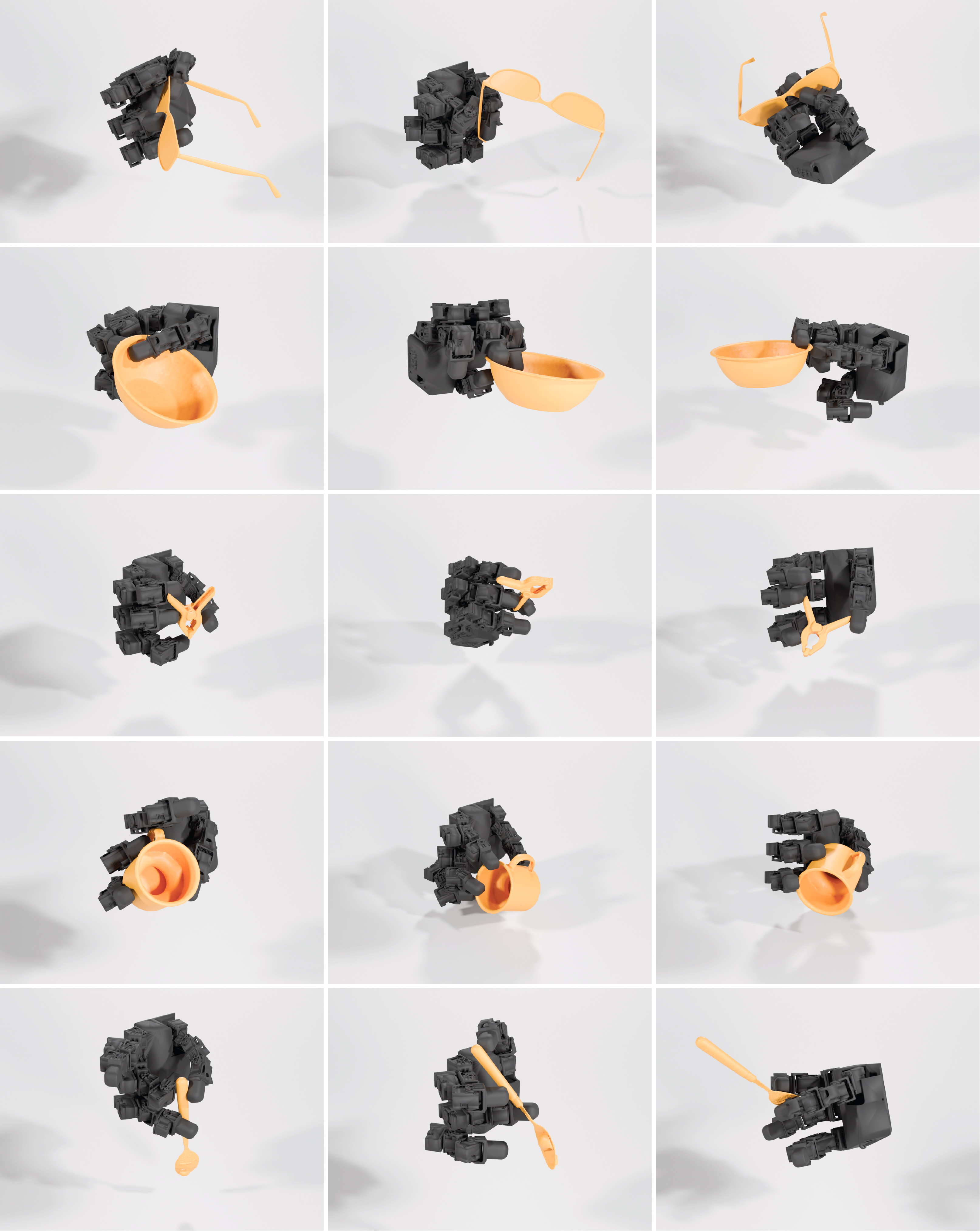

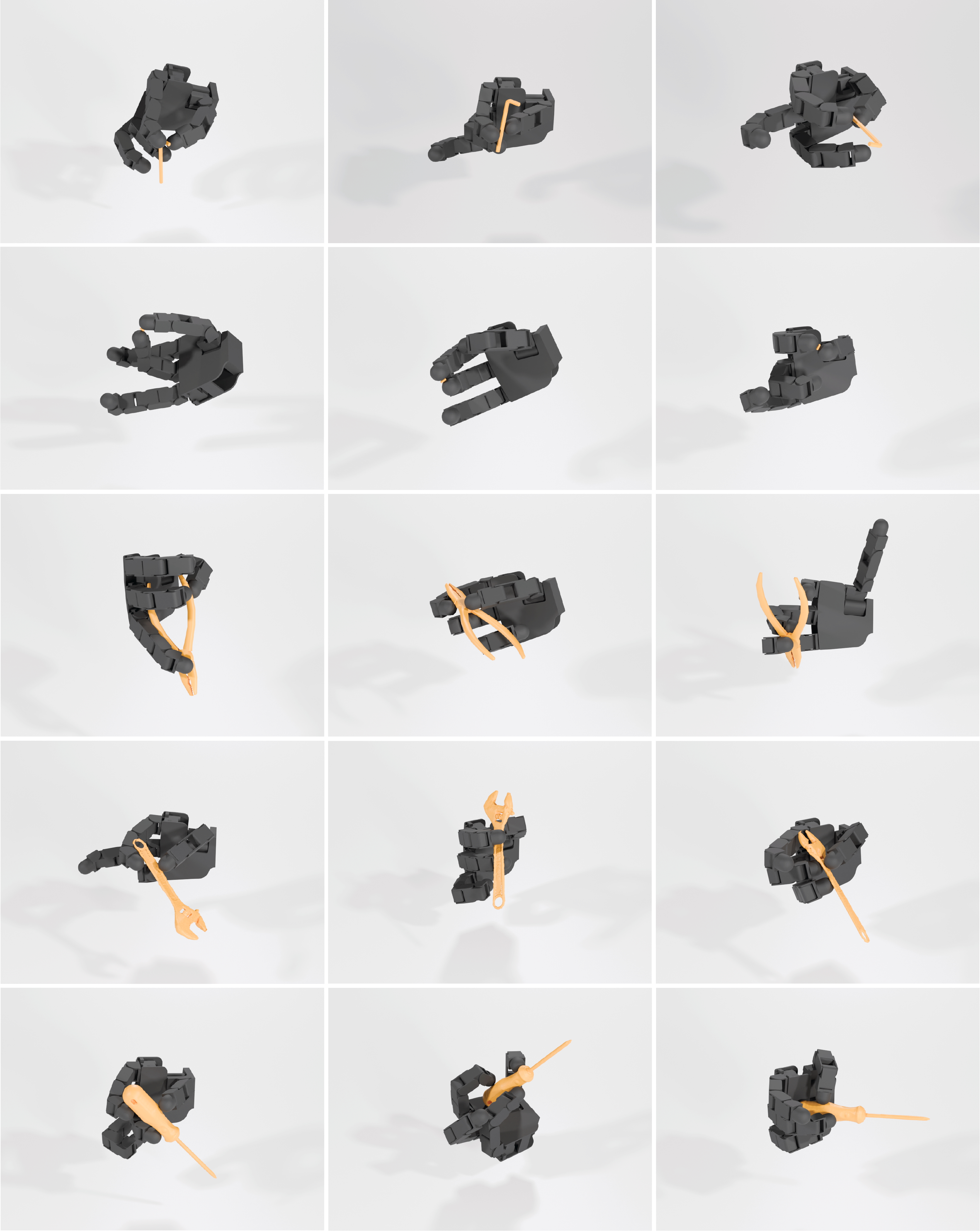

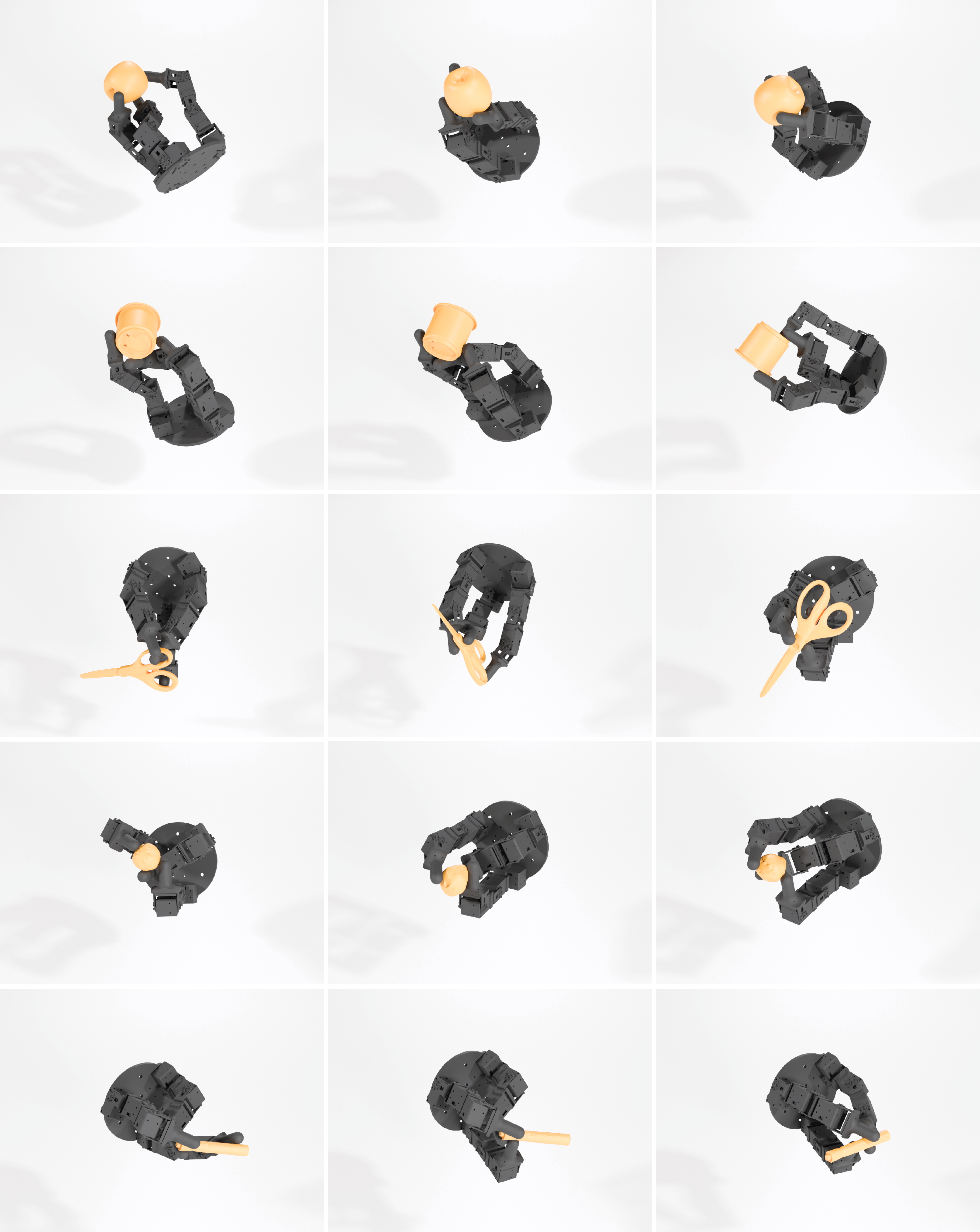

- Diversity: It doesn’t just find one way to pick up an object—it produces many different grasps, including those useful for tools (like mugs, scissors, pliers, wrenches).

- Generality: It works across several robot hands (Shadow, LEAP, Allegro, DClaw) and many object types, from smooth fruits to non-convex items like cups and bowls.

- Less manual tuning: No need for delicate “energy function” balancing or hand-crafted starting poses. That removes a big human bottleneck.

- Real-time potential: Even on older GPUs, performance stays strong, making it practical for real-time use.

- Open-source: The code is released, so others can build on it and improve robot manipulation faster.

They also report some common failure cases:

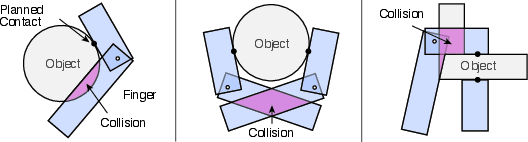

- Highly non-convex objects (like cups with handles) can still cause unexpected collisions at a global scale. Future improvements could make early search more collision-aware to avoid these.

What’s the potential impact?

Lightning Grasp could:

- Supercharge training data for learning-based robot manipulation, since it can quickly generate lots of varied grasps.

- Make dexterous robot hands (not just simple grippers) more practical, pushing the field toward human-like manipulation.

- Help engineers evaluate and design better robot hands, because it reveals which designs lead to more valid grasps and fewer collisions.

- Enable faster, more reliable grasping in robotics and even help with realistic hand-object interactions in computer graphics and games.

- Inspire “data-driven search,” where the system learns from its own successes to pick even better object poses and grasp strategies next time.

In short, by separating “geometry” (where touches can happen) from “search” (which touches we should pick), Lightning Grasp makes dexterous grasping both fast and robust—opening the door to more capable, nimble robots.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper, intended to guide future research.

- Formal guarantees for completeness and optimality: no proofs or bounds on whether the contact-field-based search will find all feasible grasps or the best grasps under given metrics.

- Coverage of the contact field: the joint-space sampling strategy is random with no metrics for coverage, approximation error, or convergence; how many samples per patch are sufficient remains unknown.

- Collision-aware contact field: early-stage pruning of contacts that inevitably cause global hand-object penetrations is proposed but not implemented; specific representations (e.g., finger geometry metadata per box) and algorithms are open.

- Multi-contact per finger in one forward pass: current pipeline cannot realize multiple independent contacts on the same finger within a single pass; scheduling and optimization strategies for coupled contacts remain to be designed.

- Adaptive resolution and memory-time trade-offs: box width, per-leaf normal discretization (e.g., 256 vectors ≈ 7°), and patch granularity are fixed or heuristic; automated, task-aware refinement and compression schemes are missing.

- Optimal patch decomposition: the surface cover for fine-grained patches is random and suboptimal; a practical algorithm to minimize patch count while balancing coverage and query load is left unspecified.

- Object preprocessing heuristic: removing concave points via a small-box penetration test may discard valid grasps; a principled reachability/penetration prediction method (e.g., conservative reachability or accessibility fields) could reduce false negatives.

- Hyperparameter sensitivity: thresholds and scalars (e.g., θ_hit, σ for mutations, λ damping, β normal scaling, ε stability criterion, μ friction) lack tuning guidance, sensitivity analysis, or automatic calibration.

- Actuation constraints: the stability and IK formulations ignore torque/force limits, joint velocity limits, and actuator capabilities; mapping contact wrenches to feasible joint torques remains unaddressed.

- Gravity and object mass: FSWO/GSWO do not explicitly incorporate gravity, mass distribution, or required net wrench to counter external loads; correlation of these metrics with real-world success is unvalidated.

- Orientation/contact modeling: normal alignment via two-point matching ignores rolling contact, curvature, pad compliance, and tangential stiffness; an extended model for soft/compliant contacts is needed.

- Finetuning via projection: alternating projection can move contacts off selected patches; guarantees on convergence, contact feasibility, and absence of new collisions during finetuning are not provided.

- Cluttered environments and support surfaces: search assumes objects placed above the palm; grasping in clutter, from tables, shelves, or with environmental contacts is unaddressed.

- Perception robustness: the pipeline’s reliance on accurate meshes lacks evaluation on sensor-derived point clouds with noise, holes, and misalignments; strategies for uncertainty handling are missing.

- Real-world validation: results are visual and focused on samples per second (SPS); standardized physical robot experiments, success rates, and repeatability across hands/objects are absent.

- Quantitative benchmarking: comparisons to baselines omit success rate, stability score distributions, collision rates, diversity metrics (e.g., coverage of contact regions), and time-to-first-valid-grasp.

- Handling highly non-convex objects: the observed failure on cups indicates the need for early global collision pruning; concrete data structures and checks to avoid such cases are not designed.

- Contact-domain independence assumption: enforcing one domain per finger ignores coupled kinematics and practical multi-contact strategies; when and how to relax this constraint is unclear.

- IK solver robustness: no convergence guarantees, singularity handling, or failure-mode analysis; initial guess strategies and recovery mechanisms for difficult kinematic configurations are unexplored.

- From wrench solutions to actuation feasibility: converting optimized contact forces to joint torques via Jacobians and verifying actuator capacity and safety margins remains an open step.

- Compliance and dynamics: the method targets rigid hands and quasi-static contacts; modeling soft hands, time-varying stick–slip, and dynamic stabilization is left open.

- Contact field build cost: preprocessing time, amortization across tasks, and incremental updates when hands change (wear, calibration drift) are neither measured nor handled systematically.

- Learned object placement policies: the suggested data-driven proposal distributions (self-play) are not implemented; training signals, architectures, and evaluation protocols remain to be defined.

- Normal discretization effects: impact of quantized normal directions on contact accuracy, stability metrics, and IK feasibility is unquantified; adaptive refinement near high-curvature regions is missing.

- Geometry consistency: the narrow-phase uses point-based half-plane checks for objects, while the rest uses meshes; accuracy and consistency across geometry types are not evaluated.

- Penetration tolerance: allowing ~2 mm penetration is stated but not integrated into collision detection thresholds, mesh scale normalization, or stability assessment; its practical effects need study.

- Multi-pass generation and cache reuse: mechanisms to ensure diversity (e.g., deduplication, coverage metrics) and to persist/reuse intermediate tree states are not specified.

- Task-conditioned functional grasps: evaluation of tool-use functionality (e.g., pouring, cutting) beyond “holding” is missing; integrating task constraints into contact optimization is an open avenue.

- Scalability across morphologies: memory and performance bounds for very high-DoF hands or many fingers, and for hands with bulky layouts causing frequent self-collisions, need systematic analysis.

- Deployment constraints: reliance on high-end GPUs raises questions about embedded/edge feasibility, energy/thermal limits, and CPU-only variants; portability benchmarking is absent.

- Safety beyond geometry: checks for pinch hazards, excessive contact forces on neighboring fingers, and safe motion plans post-grasp are not covered.

- Mathematical characterization of CF(H) ∩ S(O): properties (manifold structure, smoothness, connectivity) and conditions guaranteeing efficient sampling and optimization remain theoretically unexplored.

- Parameter selection for ε in stability: the practical threshold for “small enough” wrench error is not defined; calibration against physical success criteria is needed.

- Diversity quantification: claims of diverse grasps are not backed by metrics (e.g., dispersion of contact locations, distinct grasp families); measuring and optimizing diversity remains to be done.

- Build-time vs run-time trade-offs: profiling focuses on forward pass; contact field construction, BVH build times, and memory use across hands/objects are not reported.
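One figure in the list above, "256 vectors ≈ 7°", can be sanity-checked with a short calculation. The sketch assumes one particular reading of that number, which the source does not spell out: the 256 directions split the sphere into equal-area caps, and the angle quoted is the half-angle of one cap. The function name is hypothetical.

```python
import math


def cap_half_angle_deg(n_directions: int) -> float:
    """Half-angle of a spherical cap whose solid angle is 4*pi / n_directions."""
    # Cap solid angle: 2*pi*(1 - cos(theta)) = 4*pi / n  =>  cos(theta) = 1 - 2/n
    return math.degrees(math.acos(1.0 - 2.0 / n_directions))


for n in (64, 256, 1024):
    print(n, "directions ->", round(cap_half_angle_deg(n), 2), "degrees")
```

Under this reading, 256 directions give a half-angle of roughly 7.2°, consistent with the ≈ 7° quoted. Quadrupling the number of directions only halves the angle, which is the memory-versus-accuracy trade-off the item refers to.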

Practical Applications

Immediate Applications

Below is a concise set of actionable, sector-linked use cases that can be deployed now, along with indicative tools/workflows and key dependencies.

- High-throughput grasp planning for dexterous manipulators in manufacturing and logistics (robotics)

  - Tools/products/workflows: Lightning Grasp SDK as a ROS2 node; GPU runtime that ingests URDF/meshes; precompute contact fields; runtime grasp selection for irregular SKUs.

  - Assumptions/dependencies: Availability of multi-finger hands (e.g., Shadow, Allegro); reliable 3D perception and pose estimation; safety interlocks; GPU (A100/TITAN X class or better).

- Offline dataset generation for training manipulation policies (academia, software, robotics)

  - Tools/products/workflows: Multi-pass grasp generation pipeline to produce 1,000–10,000 grasps per forward pass; training RL/diffusion policies (e.g., for DexterityGen, DexGraspNet).

  - Assumptions/dependencies: Access to object meshes; GPU clusters for scale; consistent grasp stability metric selection (FSWO/GSWO).

- Robotic hand hardware benchmarking and design iteration (robotics hardware)

  - Tools/products/workflows: “Hand Evaluator” reporting effective samples/sec, collision rates, stability yields across object sets; comparative studies of fingertip shapes/motor layouts.

  - Assumptions/dependencies: Accurate URDF/mesh models; representative test objects; standardized evaluation protocols.

- Rapid, contact-accurate grasp authoring in animation/VFX and game engines (computer graphics, software)

  - Tools/products/workflows: Unity/Unreal/Maya plugins; BVH-based contact fields to synthesize realistic hand-object interactions at scale.

  - Assumptions/dependencies: Access to object/hand meshes; integration with engine physics; workstation GPUs.

- VR/AR training simulators for tool handling and maintenance (education, enterprise training)

  - Tools/products/workflows: Lightning Grasp integrated for realistic grasps and haptic cues; scenario authoring for diverse tools.

  - Assumptions/dependencies: Low-latency integration with VR engines; accurate controller kinematics; GPU availability.

- Teleoperation assistance via grasp suggestion overlays (robotics, healthcare/assistive tech)

  - Tools/products/workflows: Real-time inference to propose stable contact sets; UI overlays guiding operators.

  - Assumptions/dependencies: Calibrated hand kinematics; synchronized perception; human override; latency bounds.

- Warehouse item induction for irregular products (robotics, logistics)

  - Tools/products/workflows: Catalog-level precomputation of contact domains; runtime retrieval and validation to accelerate onboarding of new SKUs.

  - Assumptions/dependencies: High-quality product meshes; dexterous gripper availability; collision-aware filtering.

- CAD “robot-graspability” checks for product/tool design (manufacturing, product design)

  - Tools/products/workflows: CAD plugin that evaluates CF(H)∩S(O) intersections; identifies problematic concavities; suggests design edits for robot-friendliness.

  - Assumptions/dependencies: CAD-to-mesh conversion fidelity; access to target hand models.

- Simulation stack integrations (software)

  - Tools/products/workflows: Isaac Sim/MuJoCo/ROS2 adapters; PyTorch-based kinematics; CUDA kernels for BVH and collision.

  - Assumptions/dependencies: Stable APIs; maintenance and documentation; GPU support.

- Coursework and labs for dexterous manipulation (education)

  - Tools/products/workflows: Assignments on contact field BVH, zeroth-order optimization, IK; reproducible benchmarks; open-source codebase.

  - Assumptions/dependencies: Lab PCs with GPUs; curated object sets; instructor expertise.

- Assistive device customization for personal object sets (healthcare)

  - Tools/products/workflows: Generate personalized grasp libraries for prosthetics; tune controllers to patient-specific daily objects.

  - Assumptions/dependencies: Multi-DoF prosthetics; object scanning; clinical workflow alignment.

- Cloud “Grasp-as-a-Service” for e-commerce item onboarding (software, retail)

  - Tools/products/workflows: API that returns grasp candidates and stability scores for new products; batch precomputation.

  - Assumptions/dependencies: Data sharing agreements; secure mesh handling; SLAs; GPU backends.

- Adaptive fingertip prototyping guided by collision/failure patterns (robotics hardware)

  - Tools/products/workflows: Analyze failure cases to redesign fingertip geometry; iterate with 3D printing and re-evaluation.

  - Assumptions/dependencies: Rapid manufacturing; validation testbeds; consistent metrics.

- Collision-aware object preprocessing for concave geometry (academia, robotics)

  - Tools/products/workflows: Use provided concavity filtering to reduce unreachable holes; improve SPS in pipelines.

  - Assumptions/dependencies: Good mesh resolution; parameter tuning (thresholds).

Long-Term Applications

The following use cases require further research, scaling, or development (e.g., closed-loop control, compliance, certification, broader hardware availability).

- General-purpose household robots with dexterous manipulation (robotics, daily life)

  - Tools/products/workflows: Closed-loop Lightning Grasp integrated with perception and tactile feedback; robust grasping of diverse household objects.

  - Assumptions/dependencies: Affordable reliable dexterous hardware; safe human-robot interaction; robust sensing.

- Autonomous tool use in industrial maintenance and utilities (energy, construction, field robotics)

  - Tools/products/workflows: Task-level planners that chain grasp synthesis with tool operation sequences; contact-aware planning for valves, clamps, fasteners.

  - Assumptions/dependencies: Harsh environment robustness; compliance control; certification and safety standards.

- Prosthetics with contact-aware, personalized controllers (healthcare)

  - Tools/products/workflows: Per-user contact fields; adaptive policies trained via self-play; on-device inference for real-time grasps.

  - Assumptions/dependencies: Embedded compute; tactile/force sensing; clinical trials and regulatory approval.

- Surgical robotics instrument manipulation and handoffs (healthcare)

  - Tools/products/workflows: Precision contact planning in constrained environments; instrument exchange and tool adjustments.

  - Assumptions/dependencies: Formal safety guarantees; sterility and robustness; integration with surgical platforms.

- Space robotics for on-orbit servicing and assembly (space, robotics)

  - Tools/products/workflows: Precomputed contact fields for space tools/connectors; robust grasp synthesis under microgravity.

  - Assumptions/dependencies: Radiation-hardened compute; limited communication; extreme reliability requirements.

- Standards and policy for dexterous grasp benchmarking (policy, industry consortia)

  - Tools/products/workflows: Benchmark suites for grasp diversity/speed/stability; shared datasets; certification protocols.

  - Assumptions/dependencies: Multi-stakeholder consensus; reproducible metrics; governance frameworks.

- Hardware–software co-design via inverse morphology optimization (robotics research, manufacturing)

  - Tools/products/workflows: Optimize hand geometry and compliance to maximize CF(H)∩S(O) over target object sets; generative fingertip design.

  - Assumptions/dependencies: Differentiable or surrogate modeling; fabrication constraints; validation loops.

- Learning-enhanced search with self-play and richer physics (academia, software)

  - Tools/products/workflows: Train object placement policies; incorporate friction (GSWO), multi-contact per finger, and collision-aware contact fields.

  - Assumptions/dependencies: High-quality friction data; tactile sensing; integration with learned policies.

- Human-in-the-loop AR guidance for everyday grasping (daily life, education)

  - Tools/products/workflows: Mobile app that suggests stable human grasps derived from simplified contact fields; assistive training.

  - Assumptions/dependencies: Real-time 3D perception on mobile; ergonomic models; user acceptance and privacy.

- Digital twins for factories to pre-validate graspability of new SKUs (manufacturing, software)

  - Tools/products/workflows: Fleet-level precomputation of contact domains; scenario testing in virtual environments.

  - Assumptions/dependencies: High-fidelity twins; continuous synchronization with real operations; IT integration.

- Soft-hand integration and multi-contact per finger (robotics)

  - Tools/products/workflows: Extend contact fields to deformable, compliant hands; richer contact modeling with tactile arrays.

  - Assumptions/dependencies: Soft-body simulation; tactile sensing; increased compute.

- Safety-certified real-time grasp planning stack (policy, industry)

  - Tools/products/workflows: Formal verification of no-penetration and stability; certified runtime with bounded latencies.

  - Assumptions/dependencies: Verified models; conservative margins; regulatory processes.

- Environmental service robots (waste sorting, agriculture harvest) (energy, agriculture, robotics)

  - Tools/products/workflows: Handling irregular organic items and debris with dexterous grasps; contamination-aware planning.

  - Assumptions/dependencies: Robust perception under variable lighting/occlusion; sanitation requirements; ruggedized hardware.

Glossary

- AABB (Axis-Aligned Bounding Box): A box aligned with coordinate axes used to accelerate broad-phase collision detection. "We implement two-phase collision detection with a AABB-based broad phase followed by different narrow phase detection algorithms based on geometry type."

- BLAS (Bottom-Level Acceleration Structure): A per-leaf acceleration structure (e.g., a BVH over vectors) to speed up queries within a node. "The normal vector set on the leaf node can be organized with another BVH (i.e. BLAS)."

- Bounding Volume Hierarchy (BVH): A tree of bounding volumes that accelerates spatial queries such as collision and intersection. "We organize the sampled contact field with a Bounding Volume Hierarchy (BVH)."

- Contact domain: The subset of object-surface positions and normals that the hand can feasibly contact. "With the definitions above, the potential contact interaction between an object mesh and the hand's contact field can be easily defined as $CF(H)\cap S(O)$, which we call contact domain."

- Contact field: A 6D representation (position and normal) of all potential hand contacts across joint configurations. "Our central innovation is the contact field, a simple but powerful data structure that can detect and represent all feasible contact regions on an object efficiently."

- Contact patch: A small hand-surface region used to segment and index contact-field samples. "We first decompose the hand link meshes into many small contact patches."

- Contact surface representation: A mapping of surface points and outward normals of a mesh into position-normal space for contact queries. "Given a mesh $M$, its contact surface representation is defined as $S(M)=\{(p, -n) \mid p\in \partial M,\ n \in \mathrm{normal}(p, M)\}\subset \mathbb{R}^3\times\mathbb{S}^2$."

- Convex decomposition: The process of approximating a complex mesh with a set of convex parts to enable efficient collision checks. "Specifically, we run convex decomposition over hand meshes and implement parallelized GJK \cite{gilbert2002fast} algorithm for hand self-collision check."

- Damped least squares (DLS): An IK technique that regularizes joint updates to improve numerical stability. "we compute the joint update by optimizing the following damped least square (DLS) problem:"

- Force closure: A grasp condition where frictional contacts can resist arbitrary external wrenches. "There are many kinds of grasp stability metrics such as form and force closures."

- Form closure: A grasp condition where geometric constraints immobilize the object even without friction. "There are many kinds of grasp stability metrics such as form and force closures."

- Forward kinematics (FK): The computation of link poses from joint angles along the kinematic chain. "let be the hand mesh according to configuration (computed with forward kinematics)"

- Geometric Jacobian: The matrix relating joint velocities to linear and angular velocities of a link or point. "which has a geometric Jacobian $\mathbf{J}(l_j; q) = \begin{bmatrix} \mathbf{J}_p \\ \mathbf{J}_r \end{bmatrix} \in \mathbb{R}^{6 \times \dim \mathcal{C}}$."

- GJK algorithm: The Gilbert–Johnson–Keerthi algorithm for collision detection and distance between convex shapes. "Specifically, we run convex decomposition over hand meshes and implement parallelized GJK \cite{gilbert2002fast} algorithm for hand self-collision check."

- Inverse kinematics (IK): Solving for joint configurations that realize desired contact positions/orientations. "We implement an inverse kinematics solver for multi-chain systems using PyTorch."

- Kinematics tree: The graph structure of joints and links defining the robot’s articulated mechanism. "Dependency groups are connected components in the kinematics tree when we exclude the fixed/static links."

- LBVH (Linear Bounding Volume Hierarchy): A fast, GPU-friendly BVH construction method using linear ordering (e.g., Morton codes). "// Use LBVH \cite{karras2012maximizing} construction."

- Manifold (submanifold): A space locally resembling Euclidean space; a submanifold is embedded within a higher-dimensional manifold. "In this paper, a mesh $M\mathbb{R}^3\partial M\mathbb{R}^3$."

- Power grasp: A grasp where the object is secured against the palm with large-area contacts for stability. "to enable grasps like the power grasp."

- Projected gradient descent: An optimization method that performs gradient steps followed by projection onto the feasible set. "Both problems can be decomposed into $n$ convex subproblems, which are efficiently solvable with projected gradient descent."

- URDF (Unified Robot Description Format): A standardized XML format describing a robot’s links, joints, and geometry. "Our method takes in hand model (mesh and URDF) and object model (mesh) as input and outputs valid grasps."

- Wrench (in robotics): A combined force and torque acting on an object. "we use the following self-balancing $\epsilon$-wrench setup"

- Zeroth-order optimization: Optimization using only function evaluations (no gradients), often via random local search. "Next, a block-wise zeroth-order optimization identifies stable contact points within these domains,"
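The LBVH entry above mentions Morton codes. As a rough illustration of that one ingredient (not of the full tree construction, and not taken from the paper's code), the sketch below interleaves the bits of quantized x, y, z coordinates into a single 30-bit key. Sorting by this key places spatially nearby points next to each other, which is what a linear BVH build relies on. The function names and the assumption that points lie in the unit cube are hypothetical.

```python
def expand_bits(v: int) -> int:
    """Spread the low 10 bits of v so two zero bits separate each original bit."""
    v &= 0x3FF
    v = (v | (v << 16)) & 0x030000FF
    v = (v | (v << 8)) & 0x0300F00F
    v = (v | (v << 4)) & 0x030C30C3
    v = (v | (v << 2)) & 0x09249249
    return v


def quantize(c: float) -> int:
    """Map a coordinate in [0, 1] to an integer in [0, 1023]."""
    return min(1023, max(0, int(c * 1024)))


def morton3d(x: float, y: float, z: float) -> int:
    """30-bit Morton code for a point assumed to lie in the unit cube."""
    return ((expand_bits(quantize(x)) << 2)
            | (expand_bits(quantize(y)) << 1)
            | expand_bits(quantize(z)))


points = [(0.9, 0.9, 0.9), (0.1, 0.1, 0.1), (0.12, 0.1, 0.11), (0.88, 0.9, 0.91)]
ordered = sorted(points, key=lambda p: morton3d(*p))
print(ordered)
```

In this small example the two points near the origin end up adjacent after sorting, as do the two points near the opposite corner.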
