- The paper introduces a unified framework that integrates ensemble, Bayesian, and deterministic UQ techniques across classification, regression, and segmentation tasks.

- It builds on PyTorch and Lightning for streamlined training, automated checkpointing, and extensive metric tracking, with an emphasis on reproducibility and code quality.

- The framework supports broad dataset benchmarks and advanced evaluation protocols for calibration, OOD detection, and robust performance under distribution shifts.

Torch-Uncertainty: A Unified Framework for Uncertainty Quantification in Deep Learning

Motivation and Context

Deep neural networks (DNNs) often achieve strong predictive performance, but their lack of reliable uncertainty estimation has hindered their adoption in high-stakes applications such as healthcare, autonomous driving, and finance. Well-calibrated uncertainty quantification (UQ) is crucial under distribution shift and for detecting out-of-distribution (OOD) samples, and it supports operations such as selective prediction and uncertainty-based decision referral. While many UQ methods exist, prior efforts to consolidate them into user-friendly, extensible toolkits have covered only fragments of the relevant methods and evaluation protocols. "Torch-Uncertainty: A Deep Learning Framework for Uncertainty Quantification" (arXiv:2511.10282) introduces a unified, modular, and evaluation-centric PyTorch/Lightning-based framework to streamline DNN training and evaluation with principled UQ techniques across multiple modalities and tasks.

Problem Scope and Coverage

Torch-Uncertainty addresses three core needs that previous libraries met inadequately: (1) broad support across learning tasks and data modalities (classification, regression, segmentation, pixel-wise regression); (2) a modular architecture that lets diverse UQ techniques (Bayesian, ensemble, deterministic, conformal, post-hoc) be composed, enabling hybrid approaches and easy prototyping; and (3) advanced, automated, and extensible evaluation routines that tightly integrate UQ metrics and robust model checkpointing. The framework incorporates a substantial zoo of benchmark datasets, an extensive set of UQ metrics, and output visualizations to lower the overhead for practitioners and support reproducibility in experimental comparisons.

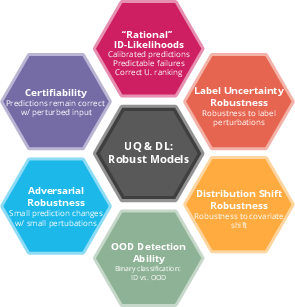

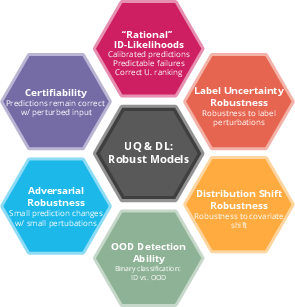

Figure 1: An overview of the dimensions of robustness and uncertainty quantification addressed by Torch-Uncertainty, focusing on rational in-distribution predictions, distribution-shift robustness, and OOD detection.

Within the uncertainty taxonomy, Torch-Uncertainty emphasizes both aleatoric (data-driven) and epistemic (model-driven) uncertainty, offering tools for calibration, selective classification, OOD detection, and performance under distribution shift. The library implements methods grouped into six major UQ families: deterministic UQ, ensemble-based methods, Bayesian neural networks, post-hoc methods, interval/conformal prediction, and, with limited support, GP-based surrogates (full Gaussian processes are not covered because of scalability constraints). This coverage goes beyond many existing UQ frameworks, most of which limit themselves to a subset of these families.
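To make the aleatoric/epistemic distinction concrete, the following is a minimal sketch of the common entropy-based decomposition for classification, written in dependency-free Python. It is an illustration under stated assumptions, not Torch-Uncertainty's API: the function names and the toy probabilities are hypothetical. Total uncertainty is taken as the entropy of the averaged prediction, aleatoric uncertainty as the average entropy of the individual members, and epistemic uncertainty as their difference (the mutual information between the prediction and the model).

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def decompose_uncertainty(member_probs):
    """Split predictive uncertainty for a single input.

    member_probs: list of class-probability lists, one per ensemble
    member or stochastic forward pass (hypothetical input format).
    Returns (total, aleatoric, epistemic) in nats.
    """
    n_members = len(member_probs)
    # Average the members class by class to get the predictive distribution.
    mean_probs = [sum(col) / n_members for col in zip(*member_probs)]
    total = entropy(mean_probs)  # entropy of the mean prediction
    aleatoric = sum(entropy(m) for m in member_probs) / n_members  # mean entropy
    epistemic = total - aleatoric  # mutual information
    return total, aleatoric, epistemic


# Members agree that the input is ambiguous: uncertainty is aleatoric.
agree = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]
# Members are individually confident but disagree: mostly epistemic.
disagree = [[0.99, 0.01], [0.01, 0.99]]

total_a, aleatoric_a, epistemic_a = decompose_uncertainty(agree)
total_d, aleatoric_d, epistemic_d = decompose_uncertainty(disagree)
```

Both toy inputs have the same total entropy, yet the split differs: agreement on an ambiguous input yields no epistemic term, while confident disagreement yields a large one.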

Architecture and Implementation

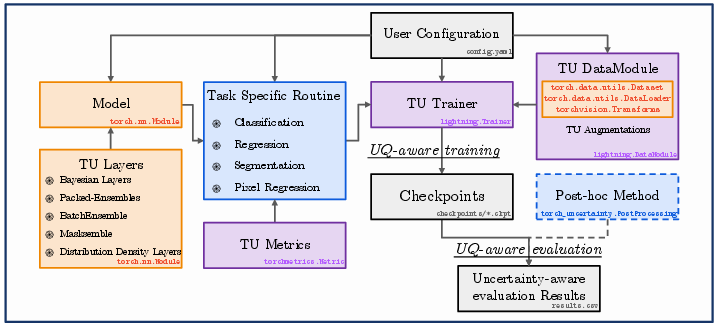

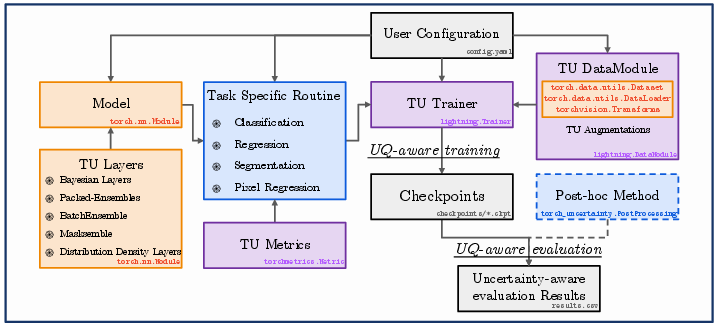

Torch-Uncertainty leverages PyTorch and Lightning for modularity and automation, providing "routines" designed around specific prediction tasks. These routines (e.g., ClassificationRoutine, SegmentationRoutine, RegressionRoutine) encapsulate:

- Task-adapted training and evaluation processes

- Integrated tracking of UQ and conventional performance metrics

- Support for advanced checkpointing based on multiple criteria

- Seamless application and composition of UQ methods and post-processing (e.g., temperature scaling, test-time augmentations)
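Checkpointing on multiple criteria, as listed above, can be pictured with a small sketch: the best epoch is tracked separately for each monitored metric, so that a model selected for accuracy and one selected for calibration can both be kept. The class below is hypothetical and dependency-free; it does not reproduce the library's routines. In Lightning, a comparable effect is usually obtained by registering one `ModelCheckpoint` callback per metric through its `monitor` and `mode` arguments.

```python
class BestCheckpointTracker:
    """Remember the best epoch separately for each monitored metric."""

    def __init__(self, criteria):
        # criteria maps a metric name to "max" (higher is better) or "min".
        self.criteria = dict(criteria)
        self.best = {}  # metric name -> (best value, epoch)

    def update(self, epoch, metrics):
        """Record one validation epoch and return the metrics that improved."""
        improved = []
        for name, mode in self.criteria.items():
            value = metrics[name]
            previous = self.best.get(name)
            is_better = previous is None or (
                value > previous[0] if mode == "max" else value < previous[0]
            )
            if is_better:
                self.best[name] = (value, epoch)
                improved.append(name)  # a real routine would save weights here
        return improved


# Hypothetical validation history: accuracy keeps improving while
# calibration (ECE) and NLL are best at epoch 1.
tracker = BestCheckpointTracker({"acc": "max", "ece": "min", "nll": "min"})
history = [
    {"acc": 0.71, "ece": 0.080, "nll": 0.95},
    {"acc": 0.78, "ece": 0.050, "nll": 0.70},
    {"acc": 0.80, "ece": 0.065, "nll": 0.72},
]
for epoch, metrics in enumerate(history):
    tracker.update(epoch, metrics)
```

In this toy history the accuracy-optimal and calibration-optimal epochs differ, which is the situation multi-criteria checkpointing is meant to handle.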

A central feature is the unification of training and inference workflows, where users can combine multiple UQ methods (e.g., ensembles of Laplace-approximated subnetworks) by simply constructing models with the desired wrappers or layers, without additional boilerplate.

Figure 2: Schematic of Torch-Uncertainty workflows, from training to deployment, with optional application of post-hoc UQ methods.

Unit testing, code quality enforcement (e.g., via ruff), and high test coverage (>98%) are prioritized, and contributions are supported by clear guidelines and CI protocols. Pretrained models, standardized benchmarks, and tutorials further facilitate adoption.

Supported Methods and Composability

The library's modular wrappers and layers enable straightforward instantiation of a broad spectrum of UQ methods, including:

- Ensemble-based: Deep Ensembles, Packed Ensembles, Masksembles, BatchEnsemble, MIMO, Snapshot Ensembles

- Bayesian NNs: Variational inference (Bayes by Backprop, VI-ELBO), SWA, SWAG, SGLD, SGHMC, LP-BNN, MC Dropout, MC BatchNorm

- Deterministic UQ: Evidential networks, mean-variance outputs, beta-Gaussian regression

- Post-hoc: Temperature scaling, test-time augmentations, Laplace approximation

- Interval/Conformal: Conformal prediction techniques with valid coverage guarantees

- OOD Detection: Multiple built-in criteria (e.g., max probability, entropy, logit-based)

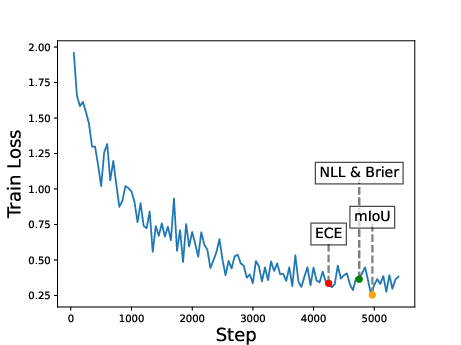

- Metrics: 26+ native metrics (e.g., ECE, aECE, Brier, NLL, AURC, AUROC, FPR-95, coverage, set size, segmentation mIoU, pixel accuracy)
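As an illustration of the calibration metrics in this list, here is a minimal, dependency-free sketch of top-label expected calibration error (ECE) with equal-width bins. The function name, the bin convention (left-closed bins, with confidence 1.0 placed in the last bin), and the toy data are assumptions made for this example; library implementations may differ in binning details.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Top-label ECE: weighted mean gap between confidence and accuracy."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        # Equal-width bins over [0, 1]; confidence 1.0 goes in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, hit))
    ece = 0.0
    for items in bins:
        if not items:
            continue  # empty bins contribute nothing
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(h for _, h in items) / len(items)
        ece += (len(items) / n) * abs(avg_conf - accuracy)
    return ece


# Toy predictions: four at 95% confidence (three correct) and
# two at 55% confidence (one correct).
confidences = [0.95, 0.95, 0.95, 0.95, 0.55, 0.55]
correct = [1, 1, 1, 0, 1, 0]
ece = expected_calibration_error(confidences, correct)
```

Here the high-confidence bin is overconfident by 0.20 and the low-confidence bin by 0.05, and ECE weights these gaps by the share of samples in each bin.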

The ability to compose methods is facilitated by standardized model interfaces, allowing, for example, the stacking of post-hoc calibration atop deep ensembles or hybrid Bayesian-ensemble constructions for uncertainty estimation.
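The idea of stacking post-hoc calibration on an ensemble can be sketched with two small wrappers that share a callable interface. This is a hedged, dependency-free illustration: `Ensemble`, `TemperatureScaled`, the toy members, and the validation data are all hypothetical and are not Torch-Uncertainty classes. The sketch also assumes that the temperature is applied to the log of the averaged probabilities and chosen by a grid search on validation negative log-likelihood; fitting by gradient descent on logits is the more common choice in practice.

```python
import math


def softmax(logits):
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class Ensemble:
    """Average the softmax outputs of several logit-producing members."""

    def __init__(self, members):
        self.members = members

    def __call__(self, x):
        probs = [softmax(member(x)) for member in self.members]
        return [sum(col) / len(probs) for col in zip(*probs)]


class TemperatureScaled:
    """Divide a probabilistic model's log-probabilities by a temperature."""

    def __init__(self, model, temperature=1.0):
        self.model = model
        self.temperature = temperature

    def __call__(self, x):
        log_probs = [math.log(max(p, 1e-12)) for p in self.model(x)]
        return softmax([lp / self.temperature for lp in log_probs])

    def fit(self, inputs, labels, grid=None):
        """Pick the temperature that minimizes validation NLL on a grid."""
        grid = grid or [0.25 * k for k in range(1, 41)]  # 0.25 to 10.0

        def nll(temperature):
            self.temperature = temperature
            return -sum(math.log(self(x)[y]) for x, y in zip(inputs, labels))

        self.temperature = min(grid, key=nll)
        return self


# Two overconfident toy members on a scalar input (hypothetical).
ensemble = Ensemble([lambda x: [4.0 * x, -4.0 * x], lambda x: [6.0 * x, -6.0 * x]])
val_inputs = [1.0, 0.5, -1.0, -0.5, 0.5, -0.5]
val_labels = [0, 0, 1, 1, 1, 0]  # the last two labels contradict the members
calibrated = TemperatureScaled(ensemble).fit(val_inputs, val_labels)
```

Because both wrappers take and return the same kind of object, they can be nested in either order; on this toy data the fitted temperature exceeds 1, which softens the ensemble's confidence without changing its predicted class.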

Evaluation Protocols and Dataset Coverage

Torch-Uncertainty provides the most comprehensive built-in dataset support among surveyed UQ libraries, supporting 27+ datasets.

Benchmarks and Experimental Results

Image Classification (ViT-B/16 on ImageNet)

- Deep Ensemble provides the highest accuracy (82.19%), best calibration (ECE=0.01% after temperature scaling), and strongest OOD detection (FarOOD AUROC=92.05%, FPR95=33.05%) compared to single models or compact ensemble variants.

- Temperature scaling provides slight further calibration improvement without accuracy loss.

- Compact ensemble approaches (Packed Ensembles, MIMO) are competitive in accuracy but yield higher residual risk in selective classification and OOD metrics, illustrating the tradeoff between efficiency and robustness.
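The OOD metrics quoted above can be read through a minimal sketch of how such numbers are typically computed from per-sample scores. The code below is dependency-free and illustrative only: the function names and toy probabilities are hypothetical, and it assumes the convention that in-distribution (ID) samples are the positive class and that higher scores mean "more in-distribution". Libraries differ on which class is treated as positive.

```python
import math


def max_prob_score(probs):
    """Maximum softmax probability: higher means more in-distribution."""
    return max(probs)


def neg_entropy_score(probs):
    """Negative entropy: higher means more in-distribution."""
    return sum(p * math.log(p) for p in probs if p > 0.0)


def auroc(id_scores, ood_scores):
    """Probability that a random ID sample outscores a random OOD sample."""
    wins = 0.0
    for s_id in id_scores:
        for s_ood in ood_scores:
            wins += 1.0 if s_id > s_ood else 0.5 if s_id == s_ood else 0.0
    return wins / (len(id_scores) * len(ood_scores))


def fpr_at_95_tpr(id_scores, ood_scores):
    """Share of OOD samples accepted when at least 95% of ID samples are."""
    ranked = sorted(id_scores, reverse=True)
    # Smallest number of top-ranked ID samples that reaches 95% recall.
    k = math.ceil(0.95 * len(ranked))
    threshold = ranked[k - 1]
    return sum(s >= threshold for s in ood_scores) / len(ood_scores)


# Toy class probabilities (hypothetical): ID inputs are mostly confident,
# OOD inputs mostly are not, with one confident OOD outlier.
id_probs = [[0.97, 0.02, 0.01], [0.90, 0.05, 0.05],
            [0.85, 0.10, 0.05], [0.60, 0.30, 0.10]]
ood_probs = [[0.40, 0.35, 0.25], [0.34, 0.33, 0.33], [0.70, 0.20, 0.10]]

id_msp = [max_prob_score(p) for p in id_probs]
ood_msp = [max_prob_score(p) for p in ood_probs]
msp_auroc = auroc(id_msp, ood_msp)
msp_fpr95 = fpr_at_95_tpr(id_msp, ood_msp)
```

Swapping `max_prob_score` for `neg_entropy_score` changes only the scoring criterion; the evaluation functions stay the same, which is why OOD criteria and metrics can be mixed freely.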

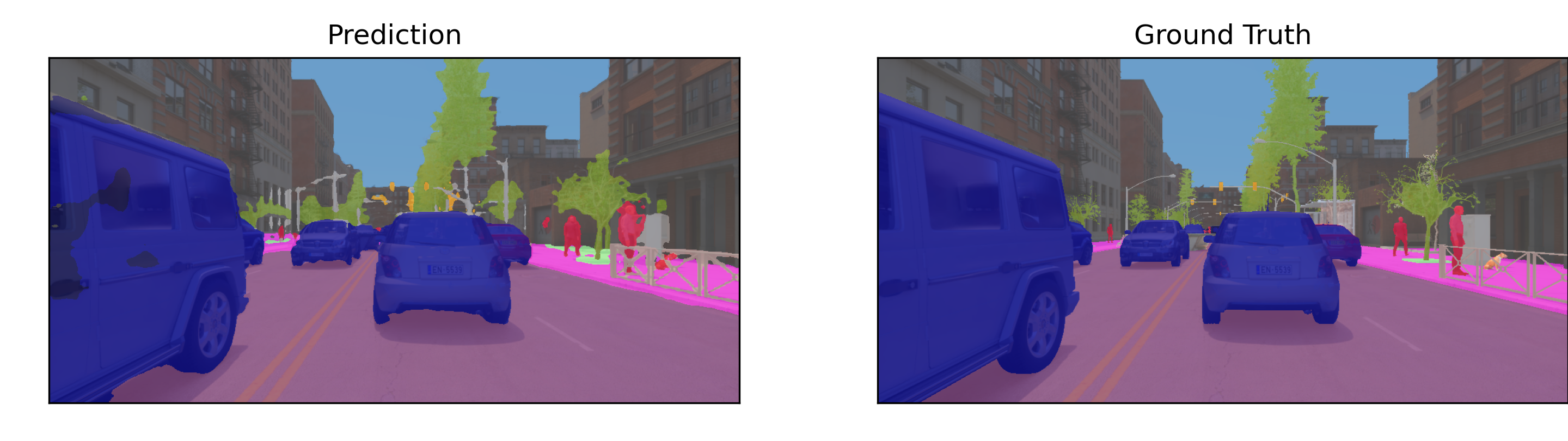

Semantic Segmentation (UNet on MUAD)

Regression and Time-Series

- Ensembles (both full and parameter-efficient variants) outperform baseline point-estimate regressors and show improvement over single Bayesian networks in mean absolute error and calibration.

- Modular routines generalize to non-vision tasks, e.g., InceptionTime for time-series classification, with metrics including ECE and OOD FPR95.
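For regression, a common way to combine ensemble members that each predict a mean and a variance is to moment-match the resulting uniform Gaussian mixture, as done in the original Deep Ensembles work. The sketch below assumes that setting; the function names and toy values are hypothetical and it is not taken from the library. The combined variance is the average predicted noise (aleatoric part) plus the spread of the member means (epistemic part).

```python
import math


def combine_gaussian_members(means, variances):
    """Moment-match a uniform mixture of Gaussians for a single input."""
    n = len(means)
    mean = sum(means) / n
    aleatoric = sum(variances) / n  # average predicted noise
    epistemic = sum((m - mean) ** 2 for m in means) / n  # member disagreement
    return mean, aleatoric + epistemic


def gaussian_nll(target, mean, variance):
    """Negative log-likelihood of target under N(mean, variance)."""
    return 0.5 * (math.log(2 * math.pi * variance) + (target - mean) ** 2 / variance)


# Three hypothetical members with equal predicted noise but different means.
member_means = [1.0, 2.0, 3.0]
member_variances = [0.5, 0.5, 0.5]
ens_mean, ens_variance = combine_gaussian_members(member_means, member_variances)

# Score the combined prediction against a hypothetical target.
nll = gaussian_nll(2.5, ens_mean, ens_variance)
```

When all members predict the same mean, the epistemic term vanishes and the combined variance reduces to the average predicted noise.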

Practical Implications and Recommendations

Torch-Uncertainty provides a robust, reproducible foundation for UQ method development and evaluation, lowering the hurdles for rigorous experimentation. Key aspects include:

- Integrated, reproducible experiments with plug-and-play UQ baselines and datasets

- Highly granular metric tracking for nuanced model selection under multi-objective criteria

- Support for composable UQ strategies, enabling empirical analysis of hybrid techniques

- Visualization and automated reporting to diagnose calibration, coverage, and OOD phenomena

Resource requirements are typical of standard PyTorch/Lightning workloads, with added memory cost when ensembling. When OOD detection and robustness are critical, independent full ensembles (Deep Ensembles) remain the top choice; parameter-efficient alternatives (Packed Ensembles, MIMO) are effective under tighter resource constraints but exhibit mild degradation in uncertainty metrics.

Limitations and Future Directions

- The current focus is heavily on computer vision and related tasks; expanding to more NLP and multimodal tasks remains future work.

- GP-based UQ scales poorly because of tensor size and kernel computation constraints.

- Modular task design creates some risk for compatibility bugs; this is mitigated with stringent unit testing and code coverage.

- Maintenance overhead increases as new UQ methods and data domains are integrated.

Planned extensions include broader modality coverage, more sophisticated robustness metrics, expanded tutorials, and increased community engagement for sustainable development.

Conclusion

Torch-Uncertainty establishes a unified and extensible platform for uncertainty quantification in deep learning, with broad task/method/dataset coverage, modular combinability of UQ techniques, and evaluation protocols that support reproducibility and robust model selection. The framework directly enables both academic research and industrial applications demanding reliable, uncertainty-aware predictions. By democratizing access to advanced UQ methods, Torch-Uncertainty may drive methodological advances and support safer deployment of AI systems in practical domains.