The Riemann Hypothesis Emerges in Dynamical Quantum Phase Transitions

Abstract: The Riemann Hypothesis (RH), one of the most profound unsolved problems in mathematics, concerns the nontrivial zeros of the Riemann zeta function. Establishing connections between the RH and physical phenomena could offer new perspectives on its physical origin and verification. Here, we establish a direct correspondence between the nontrivial zeros of the zeta function and dynamical quantum phase transitions (DQPTs) in two realizable quantum systems, characterized by the averaged accumulated phase factor and the Loschmidt amplitude, respectively. This precise correspondence reveals that the RH can be viewed as the emergence of DQPTs at a specific temperature. We experimentally demonstrate this correspondence on a five-qubit spin-based system and further propose a universal quantum simulation framework for efficiently realizing both systems with polynomial resources, offering a quantum advantage for numerical verification of the RH. These findings uncover an intrinsic link between nonequilibrium critical dynamics and the RH, positioning quantum computing as a powerful platform for exploring one of mathematics' most enduring conjectures and beyond.

Explain it Like I'm 14

Overview

This paper connects a famous math mystery—the Riemann Hypothesis—to the behavior of real quantum systems. The Riemann Hypothesis (RH) is about where certain special points (called “zeros”) of a function named the Riemann zeta function appear in the complex plane. The authors show that these zeros line up with sharp changes in quantum systems over time, called dynamical quantum phase transitions (DQPTs). They also build and test a small quantum system in the lab to see these effects and propose ways to simulate bigger cases on quantum computers.

What questions does the paper ask?

- Can the nontrivial zeros of the Riemann zeta function be seen as real, physical events in a quantum system—specifically, as DQPTs?

- Is there a simple, direct way to measure them using a “probe” qubit?

- Can we design quantum simulations that make checking or exploring the RH faster than with classical computers?

In simple terms: the paper asks whether the mysterious “silent points” of the zeta function show up as “critical moments” in a quantum system, and whether quantum devices can help find them efficiently.

How did the researchers study this?

The authors build two kinds of quantum setups, explained here with everyday analogies:

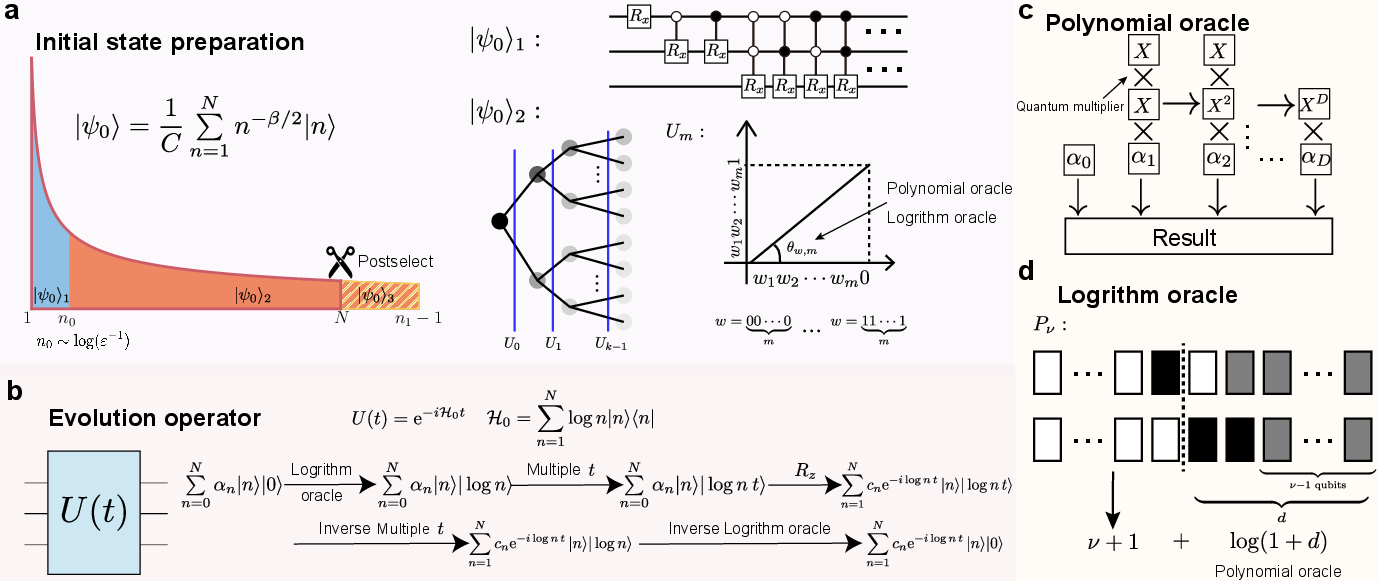

1) Probe spin and accumulated phase

- Imagine a large orchestra (the many-body quantum system) tuned so that the pitch of the n-th instrument is log n (a logarithmic spectrum, E_n = log n).

- The orchestra is “warmed up” to a certain temperature (a thermal state with inverse temperature β).

- A small microphone (the probe qubit) listens as the orchestra plays forward and backward in time (time-reversal symmetry).

- The microphone records a number called the average accumulated phase factor. Because of the special tuning, when β = 1/2 and the orchestra reaches certain times t, all sounds cancel out exactly and the microphone reads zero. Those “zero moments” correspond to the nontrivial zeros of the zeta function.

This cancellation is like waves lining up perfectly out of phase so the surface looks flat. In physics, that “flat moment” is a DQPT.
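The probe readout described above can be sketched numerically. This is a minimal density-matrix toy that assumes a generic Ramsey-type scheme rather than the authors' NMR pulse sequence. In it, the probe starts in |+⟩, and e^{-iHt} acts on the system only when the probe is |1⟩. The function name `probe_coherence`, the 16-level diagonal Hamiltonian H = diag(log n), and the weights p_n ∝ n^{-β} are illustrative assumptions. The point of the sketch is that the probe's ⟨σx⟩ + i⟨σy⟩ equals the thermal average Tr(ρ e^{-iHt}) = Σ_n p_n n^{-it}.

```python
import numpy as np


def probe_coherence(beta: float, t: float, num_qubits: int = 4) -> complex:
    """Return <sigma_x> + i<sigma_y> of a probe qubit after a controlled evolution.

    Toy model (assumption): system levels n = 1..2**num_qubits with energies
    E_n = log n, prepared in the thermal state p_n proportional to n**(-beta).
    """
    dim = 2 ** num_qubits
    n = np.arange(1, dim + 1, dtype=float)
    weights = n ** (-beta)
    rho_sys = np.diag(weights / weights.sum())          # thermal (Gibbs) state
    u_sys = np.diag(np.exp(-1j * t * np.log(n)))        # e^{-iHt}, H = diag(log n)

    plus = np.full((2, 2), 0.5)                         # probe state |+><+|
    rho = np.kron(plus, rho_sys).astype(complex)

    # Controlled evolution: identity if the probe is |0>, e^{-iHt} if it is |1>.
    proj0 = np.diag([1.0, 0.0])
    proj1 = np.diag([0.0, 1.0])
    cu = np.kron(proj0, np.eye(dim)) + np.kron(proj1, u_sys)
    rho = cu @ rho @ cu.conj().T

    # Partial trace over the system leaves the probe's 2x2 density matrix.
    rho_probe = np.trace(rho.reshape(2, dim, 2, dim), axis1=1, axis2=3)
    sigma_x = np.array([[0, 1], [1, 0]], dtype=complex)
    sigma_y = np.array([[0, -1j], [1j, 0]])
    sx = np.trace(rho_probe @ sigma_x).real
    sy = np.trace(rho_probe @ sigma_y).real
    return complex(sx, sy)
```

With only 16 levels, the result is not expected to vanish exactly at a Riemann zero. The document's exact correspondence relies on the thermodynamic limit.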

2) Loschmidt amplitude (a “return-to-start” check)

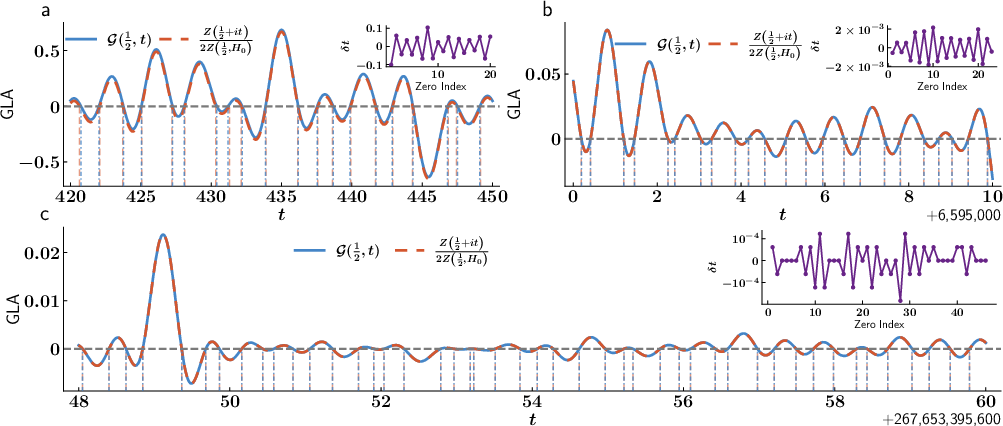

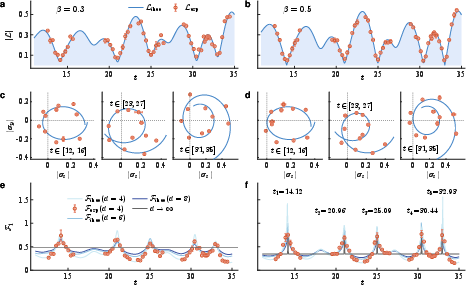

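- Here, the team measures how well the system returns to its starting state as time goes on. The overlap between the starting state and the time-evolved state is called the Loschmidt amplitude, and its squared modulus, the Loschmidt echo, gives the return probability.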

- They carefully shape the system so that the Loschmidt amplitude matches a well-known math object (the Hardy Z-function, which is tied to the zeta function).

- When the Loschmidt amplitude hits zero at certain times t, those times again match the zeta function’s zeros, and each one marks a DQPT.
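The Loschmidt amplitude in this setup is engineered to reproduce the Hardy Z-function, so a classical reference is useful for checking it. The sketch below evaluates Z(t) with the standard Riemann–Siegel main sum plus the first correction term C0. It uses the asymptotic series for θ(t) rather than an exact digamma-based evaluation.

This is a classical cross-check, not the authors' quantum circuit. The names `rs_theta`, `hardy_z`, and `bisect_zero` are hypothetical. The sketch is only meant for t above about 2π, so that N ≥ 1.

```python
import math


def rs_theta(t: float) -> float:
    """Riemann-Siegel theta function from its large-t asymptotic expansion."""
    return ((t / 2) * math.log(t / (2 * math.pi)) - t / 2 - math.pi / 8
            + 1 / (48 * t) + 7 / (5760 * t ** 3))


def hardy_z(t: float) -> float:
    """Approximate Z(t): Riemann-Siegel main sum plus the leading correction C0."""
    a = math.sqrt(t / (2 * math.pi))
    n_terms = int(a)                    # N = floor(sqrt(t / (2*pi)))
    p = a - n_terms                     # fractional part entering C0
    theta = rs_theta(t)
    main = 2 * sum(math.cos(theta - t * math.log(n)) / math.sqrt(n)
                   for n in range(1, n_terms + 1))
    # C0(p) has removable 0/0 singularities at p = 1/4 and 3/4; not special-cased here.
    c0 = math.cos(2 * math.pi * (p * p - p - 1 / 16)) / math.cos(2 * math.pi * p)
    return main + (-1) ** (n_terms - 1) * a ** -0.5 * c0


def bisect_zero(f, lo: float, hi: float, tol: float = 1e-10) -> float:
    """Find a sign change of f inside [lo, hi] by bisection."""
    f_lo = f(lo)
    if f_lo * f(hi) > 0:
        raise ValueError("f does not change sign on [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if f_lo * f_mid <= 0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
    return 0.5 * (lo + hi)
```

For example, `bisect_zero(hardy_z, 13.5, 14.8)` brackets the first zero near t ≈ 14.13, and `bisect_zero(hardy_z, 20.5, 21.5)` brackets the second near t ≈ 21.02. The truncated correction series limits the accuracy at such small t.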

Making it real in the lab

- They test the first setup using nuclear magnetic resonance (NMR), which treats tiny atomic nuclei like little magnets (qubits) you can control.

- Their device uses five qubits (three fluorine and two hydrogen spins). One qubit is the “probe,” and the other four make the small “orchestra.”

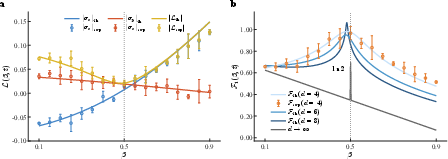

- They prepare a “thermal-like” state (which sets the value of β), run the specially designed time evolution, and measure the probe’s coherence (its ⟨σx⟩ and ⟨σy⟩ expectation values).

- They see the measured signal drop to zero and bounce back (“vanishing-and-revival”) at the right times when β = 1/2, matching known Riemann zeros.
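The vanishing-and-revival signal and its dependence on β can be mimicked classically. This sketch assumes that, up to normalization, the probe signal reduces to a truncated alternating Dirichlet series Σ (-1)^{n-1} n^{-β} e^{-it log n}. That series is the Dirichlet eta function η(s) = (1 − 2^{1−s}) ζ(s), with s = β + it. The alternating sign (used here so the sum converges at β = 1/2) and the name `alternating_phase_sum` are assumptions, not a description of the NMR experiment. Because the prefactor 1 − 2^{1−s} never vanishes on Re(s) = 1/2, the zeros of this sum on β = 1/2 are exactly the nontrivial zeros of ζ.

```python
import cmath
import math


def alternating_phase_sum(beta: float, t: float, n_max: int) -> complex:
    """Partial sum of sum_n (-1)**(n-1) * n**(-beta) * exp(-1j * t * log n)."""
    total = 0j
    for n in range(1, n_max + 1):
        sign = 1 if n % 2 == 1 else -1      # alternating sign keeps beta = 1/2 convergent
        total += sign * n ** (-beta) * cmath.exp(-1j * t * math.log(n))
    return total


T1 = 14.134725141734693                     # first nontrivial zero: s = 1/2 + i*T1
N_MAX = 20_000

on_line = abs(alternating_phase_sum(0.5, T1, N_MAX))       # near zero (truncation residual)
off_line = abs(alternating_phase_sum(0.7, T1, N_MAX))      # no cancellation at beta = 0.7
nearby = abs(alternating_phase_sum(0.5, T1 + 1.0, N_MAX))  # revival away from the zero
print(on_line, off_line, nearby)
```

Only the β = 1/2 evaluation collapses toward zero at T1. The small residual that remains comes from truncating the series at `N_MAX` terms.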

Simulating bigger cases on quantum computers

- The authors also design a universal quantum computing method to prepare the special states and run the needed time evolutions using only polynomial resources (which scales well).

- They show that, for finding and checking zeros, their approach can give at least a quadratic speedup compared to standard classical methods based on the Riemann–Siegel formula.
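To put the speedup claim in perspective, a direct Riemann–Siegel evaluation at height t sums N = ⌊√(t/2π)⌋ terms, so its cost grows like t^{1/2}. A quadratic improvement would mean scaling like √N, roughly t^{1/4}.

The arithmetic below only illustrates that scaling. It assumes unit cost per term and ignores constants, precision, and sampling overheads. `rs_terms` and `quadratic_scaling` are hypothetical helpers.

```python
import math


def rs_terms(t: float) -> int:
    """Terms in the Riemann-Siegel main sum: N = floor(sqrt(t / (2*pi)))."""
    return int(math.sqrt(t / (2 * math.pi)))


def quadratic_scaling(t: float) -> float:
    """Work units for a hypothetical method that is quadratically faster: sqrt(N)."""
    return math.sqrt(rs_terms(t))


for t in (1e6, 1e10, 1e12):
    print(f"t = {t:.0e}: N = {rs_terms(t)}, sqrt(N) = {quadratic_scaling(t):.1f}")
```

At t = 10^12, this gives N = 398942 terms for the direct sum versus roughly 632 units for the hypothetical quadratically faster scaling.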

What did they find, and why does it matter?

- At β = 1/2 (the “critical line” in the RH), the probe coherence and the Loschmidt amplitude become zero at times that match the zeta function’s nontrivial zeros. This maps the math zeros directly onto physical DQPTs.

- Away from β = 1/2, this “zero-and-revival” behavior disappears, which mirrors the RH’s claim that all nontrivial zeros lie on the line Re(s) = 1/2, where s = β + it.

- Their NMR experiment with five qubits reproduced the first several zeros quite well, showing the idea works in a real device.

- Their simulations extended the second system’s match to very large zeros (up to about the 10^12-th zero), with accuracy improving for larger zeros.

- They also show time-reversal symmetry in the quantum setups lines up with the zeta function’s reflection symmetry (a known property in number theory).

This matters because it builds a bridge between a deep math problem and physical, testable quantum behavior. It also suggests quantum computers could become powerful tools for exploring hard number theory questions.

What is the impact and what’s next?

- Physical viewpoint: The RH can be seen as saying that DQPTs “emerge” only at a special temperature line (β = 1/2) in these engineered quantum systems. That gives a new, intuitive way to think about the RH.

- Quantum advantage: Their gate-based framework offers at least a quadratic speedup for numerically checking zeros compared to classical methods. As quantum hardware improves, this could scale to much bigger ranges.

- Future directions: The same techniques could be adapted to study other math functions and series, or to explore quantum chaos and information spread using tools like out-of-time-ordered correlators. It might even help with problems related to primes, supersymmetry, or the Witten index in physics.

Important note: This paper does not prove the Riemann Hypothesis. Instead, it shows a strong and testable connection between the RH’s zeros and observable quantum dynamics, and provides practical tools—both experimental and computational—for exploring them.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of unresolved issues that future work should address to solidify, generalize, and practically leverage the paper’s proposed correspondence between Riemann zeros and dynamical quantum phase transitions.

- No proof of the Riemann Hypothesis: the work establishes correspondences and enables numerical probing, but does not provide a formal proof or a pathway to a proof (or refutation) beyond empirical verification along discrete parameters.

- Exclusivity of DQPTs at β=1/2: the claim that DQPTs occur exclusively on the critical line is shown in the thermodynamic limit; a rigorous finite-size analysis (including bounds on spurious zeros and their scaling) is missing.

- Finite-size effects and rounding of nonanalyticities: the free-energy density nonanalyticities are defined via a thermodynamic limit; how these signatures sharpen with system size and how to distinguish true zeros from finite-size artifacts are not quantified.

- Robustness to Hamiltonian imperfections: the mapping critically relies on an exact logarithmic spectrum E_n=log n; tolerance to spectral deviations, level ordering errors, and Hamiltonian control inaccuracies is not analyzed.

- Experimental realization limited to the first system: the Loschmidt-amplitude-based second system (used for large zeros) is not experimentally demonstrated; feasibility, control requirements, and error budgets for implementing the time-dependent θ(t) term on hardware remain uncharacterized.

- Precision requirements for θ(t) and ψ(·) (digamma) evaluation: the second system demands accurate, time-dependent implementation of the Riemann–Siegel theta function and its derivative; the computational and control complexity to realize these functions within target error δ is not provided.

- Truncation and convergence domains: the treatment of alternating Dirichlet series and Riemann–Siegel truncations lacks explicit, uniform error bounds across β and t; precise convergence guarantees and residual error estimates for finite N are not quantified.

- Detection criteria for zeros: the experimental identification of zeros via simultaneous vanishing of Re(L) and Im(L) uses polynomial fitting; statistical decision rules, confidence intervals, false-positive/false-negative rates, and robustness to noise/drift are not specified.

- Complexity claims versus state-of-the-art classical algorithms: the asserted “at least quadratic speedup” is benchmarked against direct Riemann–Siegel evaluation; comparison with faster classical methods (e.g., Hiary’s O(t^{1/3+o(1)}), Odlyzko–Schönhage) and end-to-end speedups (including pre/post-processing) is not established.

- Gate-based resource accounting: the universal framework gives asymptotic “polynomial” guarantees and sample complexities, but explicit gate counts, depth, qubit requirements, classical precomputation costs, and constant factors needed for practical regimes (e.g., t≈10^12) are not detailed.

- Success probability and amplitude amplification: initial state preparation yields success probability ≥(1/2−ε/3); the need for, and cost of, amplitude amplification (or alternative boosting schemes) to reach practical error δ is not discussed.

- Noise tolerance and fault tolerance: the sensitivity of the DQPT signatures to decoherence, control errors, readout noise, and calibration drifts—and the minimum error-correction requirements to preserve the mapping at scale—are not evaluated.

- Scalability of multi-qubit controlled rotations: the implementation of controlled-Rz(θ_n) with θ_n=t log n for large n and t is nontrivial; decomposition strategies, ancilla requirements, and error accumulation in deep, highly conditional circuits are not analyzed.

- Mapping rigor for time-reversal and functional equation: the claimed correspondence between time-reversal symmetry and the reflection (functional) symmetry of ζ(s) is stated but not derived; a formal mapping that reproduces the full functional equation (including all prefactors) is missing.

- Treatment of trivial zeros and other special points: the approach focuses on nontrivial zeros on the critical line; behavior near trivial zeros (negative even integers), poles (s=1), and branch cuts in the analytic continuation is not explored.

- Generality beyond the two constructed models: whether other classes of quenches, initial states, or observables produce the same correspondence (or a broader family of number-theoretic mappings) is not addressed.

- Multiplicity and simplicity of zeros: the framework assumes simple zeros; the capacity to detect multiplicity (if it exists) and to differentiate close/near-degenerate zeros is not analyzed.

- Continuous verification off the critical line: the suggestion to “exclude” DQPTs at β≠1/2 would require checking a continuum of t; strategies for covering continuous domains efficiently (with guarantees) are not provided.

- Choice of free-energy normalization d=log N: the physical justification for using d=log N as the “degrees of freedom” and its universality across many-body realizations is not argued; alternative scalings and their impact on nonanalyticity detection are unexplored.

- Extension to L-functions and broader arithmetic objects: while the discussion hints at generalizations, concrete constructions for Dirichlet L-functions, automorphic L-functions, or prime-counting functions (and their control Hamiltonians) are not developed.

- Link to quantum chaos diagnostics: the proposed connection to OTOCs and chaos is speculative; an explicit protocol and measurable predictions (e.g., scaling laws, spectral statistics) tying zeros to chaos indicators are lacking.

- Validation at very large zeros: numerical demonstrations near the 10^12-th zero with modest spin numbers raise questions about how N, gate depth, and precision scale to maintain accuracy; reconciliation of resource counts with the N∼√(t/2π) heuristic is needed.

- Practical end-to-end workflow: a complete, reproducible pipeline—from parameter selection to circuit synthesis, error mitigation, zero identification, and certification—suitable for near-term or fault-tolerant hardware is not provided.
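Two of the gaps above, finite-size rounding and missing truncation error bounds, can be seen in the simplest classical model. This sketch assumes, hypothetically, that a finite system corresponds to truncating the alternating series Σ (-1)^{n-1} n^{-s} at N terms. It evaluates the residual at the first zero, s = 1/2 + i·14.1347.

For large N, the residual should behave roughly like 1/(2√N). The zero is therefore approached but never reached at finite N. The names `truncated_eta` and `residual_at_first_zero` are illustrative.

```python
import cmath
import math

T1 = 14.134725141734693  # imaginary part of the first nontrivial zero of zeta


def truncated_eta(beta: float, t: float, n_max: int) -> complex:
    """Partial sum of the alternating Dirichlet series at s = beta + i*t."""
    return sum((-1) ** (n - 1) * n ** (-beta) * cmath.exp(-1j * t * math.log(n))
               for n in range(1, n_max + 1))


def residual_at_first_zero(n_max: int) -> float:
    """|partial sum| at the first zero; it vanishes only as n_max -> infinity."""
    return abs(truncated_eta(0.5, T1, n_max))


for n_max in (1_000, 10_000, 100_000):
    r = residual_at_first_zero(n_max)
    print(f"N = {n_max:>7}: residual = {r:.2e}, residual * sqrt(N) = {r * math.sqrt(n_max):.3f}")
```

The product of the residual and √N staying near 1/2 is the kind of explicit finite-N error estimate that the list notes is missing for the physical systems.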

Glossary

- Accumulated phase factor: A complex-valued observable that encodes phase accumulation from time evolution, used to map features of the zeta function. "The dynamics of the accumulated phase factor and the Loschmidt amplitude reproduce key features of the zeta function"

- Ancillary Hilbert space: An additional Hilbert space used to purify mixed states or embed them in a larger framework. "where denotes an orthonormal basis in an ancillary Hilbert space isomorphic to the physical one."

- Analytic continuation: Extending a function to complex arguments to reveal additional structure or equivalences. "By analytically continuing to complex time plane , we obtain"

- Boundary partition function: A partition function defined with boundary states, linking equilibrium statistical mechanics to dynamical observables. "the boundary partition function in equilibrium statistical mechanics~\cite{heyl2018dynamical, leclair1995boundary} is given by"

- Chemical shift: In NMR, the frequency offset of a nucleus’s resonance relative to a reference. "The chemical shifts are shown on the diagonal"

- Deviation density matrix: The traceless part of a density matrix used in NMR to describe deviations from the identity component. "Here, we employ the traceless deviation density matrix to describe the state evolution."

- Digamma function: The logarithmic derivative of the gamma function, appearing in phase terms like the Riemann–Siegel theta function. "with ψ denoting the digamma function."

- Dirichlet series: A series of the form Σ a_n n^{-s} used to represent analytic functions like the zeta function. "It admits a Dirichlet series representation valid for "

- Dynamical free energy: A rate function derived from the Loschmidt echo that signals nonanalyticities at critical times. "from which the Loschmidt rate (or dynamical free energy) is obtained as"

- Dynamical quantum phase transitions (DQPTs): Non-equilibrium temporal transitions marked by nonanalyticities in dynamical observables. "Here, we establish a direct correspondence between the nontrivial zeros of the zeta function and dynamical quantum phase transitions (DQPTs)"

- Generalized Loschmidt amplitude (GLA): The overlap of an initial density matrix with its time-evolved version, generalizing the Loschmidt amplitude to mixed states. "The generalized Loschmidt amplitude (GLA) is defined as the overlap of the initial density matrices with the time-evolution operator"

- Gradient ascent pulse technique: An optimization method for designing high-fidelity shaped control pulses in NMR/quantum control. "shaped pulses optimized via a gradient ascent pulse technique~\cite{exp4}, yielding a numerical simulation fidelity exceeding 99.5%."

- Hardy Z-function: A real-valued function on the critical line related to the zeta function, used in zero-finding and asymptotics. "For $N=\sqrt{t/(2\pi)}$, [the Loschmidt amplitude] matches the Hardy Z-function"

- Hilbert–Pólya conjecture: The conjecture that nontrivial zeros of the zeta function correspond to eigenvalues of a (yet unknown) self-adjoint operator. "The most influential idea in this direction is the Hilbert–Pólya conjecture"

- Lee–Yang zeros: Zeros of the partition function in the complex plane that indicate phase transitions in equilibrium systems. "analogous to Lee–Yang zeros in thermal systems~\cite{wei2012lee, peng2015experimental,francis2021many}."

- Line-selective shaped pulses: Tailored radio-frequency pulses that selectively address specific transitions or spectral lines. "The preparation sequence, shown in the main text, consists of two analytically designed line-selective shaped pulses, four pulsed field gradients, and three SWAP gates."

- Loschmidt amplitude: The overlap between an initial state and its time-evolved state, whose zeros signal DQPTs. "Loschmidt amplitude "

- Loschmidt echo: The modulus squared of the Loschmidt amplitude, measuring return probability and used to define the dynamical free energy. "The modulus of the Loschmidt amplitude, , defines the Loschmidt echo (LE)"

- Nematic liquid crystal solvent: An ordered solvent used to partially align molecules; here, MBBA is used to induce residual dipolar couplings. "partially aligned in the nematic liquid crystal solvent N-(4-methoxybenzaldehyde)-4-butylaniline (MBBA)"

- Nuclear magnetic resonance (NMR): A spectroscopy/quantum-control platform leveraging nuclear spins for quantum simulations and measurements. "we realized a five-qubit system on a nuclear magnetic resonance (NMR) platform."

- Out-of-time-ordered correlators (OTOCs): Correlators that probe information scrambling and quantum chaos. "motivating future studies of their relationship to out-of-time-ordered correlators (OTOCs)"

- Partition function: A central quantity in statistical mechanics that sums Boltzmann weights over energy levels. "with partition function ."

- Probe spin: An ancillary qubit coupled to a system to extract information via its coherence or expectation values. "We introduce a probe spin initialized in the superposition state ."

- Pulsed field gradient: Spatially varying magnetic-field pulses used to dephase coherences in NMR experiments. "consists of two analytically designed line-selective shaped pulses, four pulsed field gradients, and three SWAP gates."

- Quenching: Driving a system out of equilibrium via a sudden change in the Hamiltonian or parameters. "We then drive them out of equilibrium by quenching with specific interaction Hamiltonians"

- Residual dipolar couplings: Couplings arising from partial molecular alignment in a liquid crystal, not fully averaged out as in isotropic liquids. "Here, and correspond to the scalar and residual dipolar couplings, respectively."

- Riemann Hypothesis (RH): The conjecture that all nontrivial zeros of the Riemann zeta function lie on the critical line Re(s) = 1/2. "The Riemann Hypothesis (RH), one of the most profound unsolved problems in mathematics, concerns the nontrivial zeros of the Riemann zeta function."

- Riemann–Siegel approximation: An asymptotic expansion for the Hardy Z-function enabling efficient evaluation at large t. "which admits a Riemann-Siegel approximation~\cite{de2011high},"

- Riemann–Siegel theta function: A phase function θ(t) chosen so that the Hardy Z-function, Z(t) = e^{iθ(t)} ζ(1/2 + it), is real for real t. "Here θ(t) is the Riemann-Siegel theta function"

- Scalar couplings: Isotropic J-couplings between nuclear spins observed in NMR spectra. "Here, and correspond to the scalar and residual dipolar couplings, respectively."

- Thermodynamic limit: The limit as system size goes to infinity, often used to reveal nonanalytic behavior. "In the thermodynamic limit ,"

- Thermal equilibrium state: A Gibbs state of a Hamiltonian at inverse temperature β. "The system is initialized in the thermal equilibrium state of the Hamiltonian "

- Time-reversal symmetry: A symmetry under reversal of the time coordinate; here tied to reflection symmetry of the zeta function. "Moreover, the quantum systems exhibit time-reversal symmetry, corresponding to the reflection symmetry of the zeta function."

Practical Applications

Immediate Applications

The following items describe concrete, deployable use cases that leverage the paper’s findings and methods, along with sector links, potential tools/workflows, and feasibility notes.

- Quantum hardware and software benchmarking using number-theoretic workloads (industry; software)

- What: Use the constructed logarithmic-spectrum Hamiltonians, coherence-based DQPT detection, and Loschmidt amplitude circuits as stress-tests and benchmarks for noisy intermediate-scale quantum (NISQ) devices.

- Tools/workflows: “Number-Theoretic Benchmark Suite” including circuits for preparing thermal states with weights n^{-β}, implementing e^{-i log(n) t}, and measuring probe spin coherence and Loschmidt echoes.

- Assumptions/dependencies: Gate error rates and calibration fidelity must be sufficient to resolve coherence zeros; availability of controlled rotations and oracle-like subroutines to approximate log and θ(t) (Riemann–Siegel theta) functions; finite-size effects may blur expected non-analyticities.

- Experimental DQPT detection tied to Riemann zeros in small quantum systems (academia; quantum physics)

- What: Replicate the NMR demonstration or port to superconducting/ion-trap platforms to observe vanishing-and-revival dynamics at β=1/2 and map zeros to critical times.

- Tools/workflows: Probe spin initialization and measurement of ⟨σx⟩, ⟨σy⟩ (accumulated phase factor) or ⟨σz⟩ (Loschmidt amplitude), controlled-Z and controlled-Rz(θ_n = t log n) gates; pulse shaping via GRAPE or equivalent.

- Assumptions/dependencies: Precise time-dependent control to implement θ̇(t) terms; stable temperature-like state preparation; manageable decoherence over the experiment window.

- Hybrid classical–quantum acceleration of zeta evaluation in finite windows (academia; HPC/software)

- What: Use the proposed Riemann–Siegel-based circuits to accelerate partial sums around candidate zero regions, improving the throughput of numerical verification campaigns.

- Tools/workflows: “Quantum Zeta Explorer” that orchestrates classical pre/post-processing with quantum execution of partial sums; parameter scanning over β, t with error bars to locate zeros.

- Assumptions/dependencies: Overall speedup hinges on accuracy/precision targets and sample complexity; availability of efficient classical routines for θ(t), digamma ψ and error control; polynomial resource scaling verified for target problem sizes.

- Calibration and metrology via known zero times (industry; instrumentation)

- What: Use the well-tabulated locations of early nontrivial zeros (e.g., t≈14.13, 21.02, …) as timing landmarks to calibrate coupling strengths, phase accumulation rates, and pulse timing.

- Tools/workflows: “Zero-Time Calibration” procedures aligning measured coherence minima to theoretical zero positions; device drift tracking via repeated DQPT signatures.

- Assumptions/dependencies: Devices must reproducibly reach β≈1/2 thermal distributions and execute log-spectrum dynamics; calibration errors can be disentangled from finite-size deviations.

- Education and public engagement modules linking number theory and quantum dynamics (education)

- What: Develop lab courses and outreach demos that show RH-related dynamics via probe coherence and Loschmidt echoes on tabletop NMR or simulated quantum platforms.

- Tools/workflows: Curriculum packages with interactive simulators, small-scale circuits, and datasets of zeros; visualization dashboards of DQPT critical points vs. zeta zeros.

- Assumptions/dependencies: Access to educational quantum hardware/simulation environments; simplified protocols that tolerate higher noise.

- Diagnostics of time-reversal symmetry via coherence/reflection mapping (academia; quantum dynamics)

- What: Use the paper’s time-reversal invariance–reflection symmetry correspondence as an experimental diagnostic of symmetry violations in driven many-body systems.

- Tools/workflows: Symmetry checks based on the reflection property of ζ(s) mapped to reversibility of dynamics; compare ⟨σx⟩, ⟨σy⟩ trajectories under forward/backward evolutions.

- Assumptions/dependencies: Reliable implementation of forward/backward dynamics with minimal control errors; ability to disentangle symmetry-breaking from finite-size effects.
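The “Zero-Time Calibration” idea can be sketched as a fit. The setup assumes a hypothetical device whose clock runs 2% fast. It uses the truncated alternating series at β = 1/2 as a stand-in for the measured probe signal; these are synthetic data, not experimental results.

The code fits |signal|² near the dip with a quadratic via `numpy.polyfit`. It then compares the fitted minimum with the tabulated zero at t ≈ 14.1347 to recover the clock scale. A real calibration would also need the statistical decision rules and noise handling listed among the knowledge gaps. The names here are illustrative.

```python
import cmath
import math

import numpy as np

T1_REF = 14.134725141734693   # tabulated first zero, used as a timing landmark
TRUE_SCALE = 1.02             # hypothetical clock error: device time runs 2% fast


def model_signal(t: float, n_max: int = 5_000) -> complex:
    """Stand-in probe signal: truncated alternating series at beta = 1/2."""
    return sum((-1) ** (n - 1) * n ** -0.5 * cmath.exp(-1j * t * math.log(n))
               for n in range(1, n_max + 1))


def fit_dip_time(times: np.ndarray, signal: list) -> float:
    """Fit |signal|^2 with a parabola and return the time of its minimum."""
    power = np.abs(np.asarray(signal)) ** 2
    a, b, _ = np.polyfit(times, power, 2)   # coefficients, highest power first
    return -b / (2 * a)


# Scan on the device clock: true evolution time = TRUE_SCALE * device time.
device_times = np.linspace(13.6, 14.1, 11)
signal = [model_signal(TRUE_SCALE * float(t)) for t in device_times]
t_dip = fit_dip_time(device_times, signal)
estimated_scale = T1_REF / t_dip
print(f"dip at device time {t_dip:.4f}, estimated clock scale {estimated_scale:.4f}")
```

A quadratic fit is used because |signal|² near a simple zero is approximately parabolic in t. Fitting the real and imaginary parts separately would be an equally reasonable choice.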

Long-Term Applications

The following items anticipate use cases that require further research, scaling, and engineering maturity.

- Quantum-assisted, large-scale verification of RH zeros (academia; policy)

- What: Build a “Quantum RH Observatory” to scan large t-regions with hybrid pipelines, potentially offering at least quadratic speedups in the |t| dependence compared to direct Riemann–Siegel evaluations.

- Tools/workflows: Scalable gate-based libraries for logarithmic spectra and thermal state prep; automated windowed searches with rigorous error certification and cross-validation.

- Assumptions/dependencies: High-qubit, low-noise devices; efficient oracles for θ(t), ψ and log(n); robust error models and confidence bounds; recognition that physical verification cannot by itself prove RH due to finite precision—mathematical rigor must accompany empirical evidence.

- Cryptography and standards planning contingent on RH-related progress (policy; finance)

- What: If RH (or strengthened zero-free regions) were confirmed, certain analytic bounds on prime gaps and on the distribution of primes would tighten; standards bodies could revisit parameter choices and proofs in number-theoretic protocols.

- Tools/workflows: “Crypto Agility Plans” acknowledging potential shifts in analytic assumptions; tooling that audits dependencies on RH-sensitive bounds in protocols and proofs.

- Assumptions/dependencies: RH verification is not equivalent to breaking RSA; impacts are largely on error terms and analytic guarantees; policy actions depend on consensus within math/crypto communities.

- Generalized quantum algorithms for L-functions and special functions (academia; software/HPC)

- What: Extend the framework to Dirichlet L-functions and related series, contributing to computational analytic number theory and cross-checks of generalized RH variants.

- Tools/workflows: Circuit libraries for other spectra/state weights; hybrid pipelines integrating fastest known classical methods (Odlyzko, Hiary) with quantum subroutines.

- Assumptions/dependencies: Efficient state preparation for series-specific weights; precise control over long-time dynamics; scalable sampling strategies with certified error bounds.

- Discovery pathways toward Hilbert–Pólya-like operators via engineered Hamiltonians (academia; fundamental physics)

- What: Leverage the DQPT–zero correspondence to search for Hamiltonians whose spectra match zero distributions, exploring potential physical origins of RH-like phenomena.

- Tools/workflows: Design frameworks for operator synthesis, spectral engineering, and inverse problems mapping target spectra to implementable interactions.

- Assumptions/dependencies: Mature Hamiltonian engineering, error-corrected devices, and rigorous mathematical frameworks linking spectra to zeros beyond phenomenological correspondences.

- Quantum sensors leveraging critical nonequilibrium dynamics (industry; metrology)

- What: Employ DQPT criticality (sharp non-analyticities) as sensitive probes of environmental parameters or weak couplings, using zero-crossing timing as a high-contrast signal.

- Tools/workflows: Sensor protocols that translate shifts in critical times into parameter estimates; calibration via known zero positions.

- Assumptions/dependencies: Stability of engineered dynamics; mapping robustness from mathematical structure to practical sensing contexts; error-corrected or highly coherent platforms.

- Cross-platform benchmark standards built on special-function evaluation (industry; standards)

- What: Institutionalize “special-function quantum benchmarks” that compare devices on reproducible tasks (e.g., locating zeros within fixed windows with certified precision).

- Tools/workflows: Standardized datasets, test harnesses, and reporting formats; integration with broader quantum performance metrics (fidelity, depth, sampling cost).

- Assumptions/dependencies: Community adoption; harmonization of error certification procedures; accessibility across different hardware modalities.

- Quantum chaos and information propagation studies with number-theoretic fingerprints (academia; physics)

- What: Explore links between Riemann zeros, DQPTs, and OTOCs or other chaos diagnostics, potentially revealing new structures in nonequilibrium quantum systems.

- Tools/workflows: Experimental and numerical programs correlating zero distributions with scrambling indicators; theoretical models connecting arithmetic structure to dynamical signatures.

- Assumptions/dependencies: Advanced measurement toolkits for OTOCs; robust theoretical bridges between number theory and chaotic dynamics.

- Comprehensive software ecosystem for “Quantum Special Functions” (software; education)

- What: Create open-source packages offering quantum circuits, simulators, and APIs for ζ(s), L-functions, and allied tasks, with educational extensions and visualization.

- Tools/workflows: Libraries implementing thermal state prep with n^{-β} weights, log-spectrum Hamiltonians, and θ(t)–ψ(t) controls; dashboards to explore zeros and DQPTs across β, t.

- Assumptions/dependencies: Sustainable community development and maintenance; hardware access or high-fidelity simulators; documented error controls and reproducibility.

These applications rely on core assumptions articulated in the paper: constructing logarithmic energy spectra and corresponding thermal states with polynomial resources; measurable DQPT signatures tied to nontrivial zeros; hybrid classical–quantum workflows for Riemann–Siegel evaluations; and scalability of gate-based implementations with certified precision. As devices and algorithms mature, both scientific discovery and practical tooling can expand from immediate demonstrations to impactful, long-term deployments.
