- The paper introduces a rotation-only formulation that analytically expresses translation as a function of rotation, reducing optimization to the rotation manifold.

- It combines degeneracy detection with Levenberg-Marquardt optimization on the rotation manifold, achieving an average improvement of 27.70% over the next-best multi-view rotation estimation method.

- Experimental results on real and simulated data highlight superior robustness and efficiency compared to conventional full bundle adjustment approaches.

Rotation-only Imaging Geometry for Robust Rotation Estimation

Introduction and Motivation

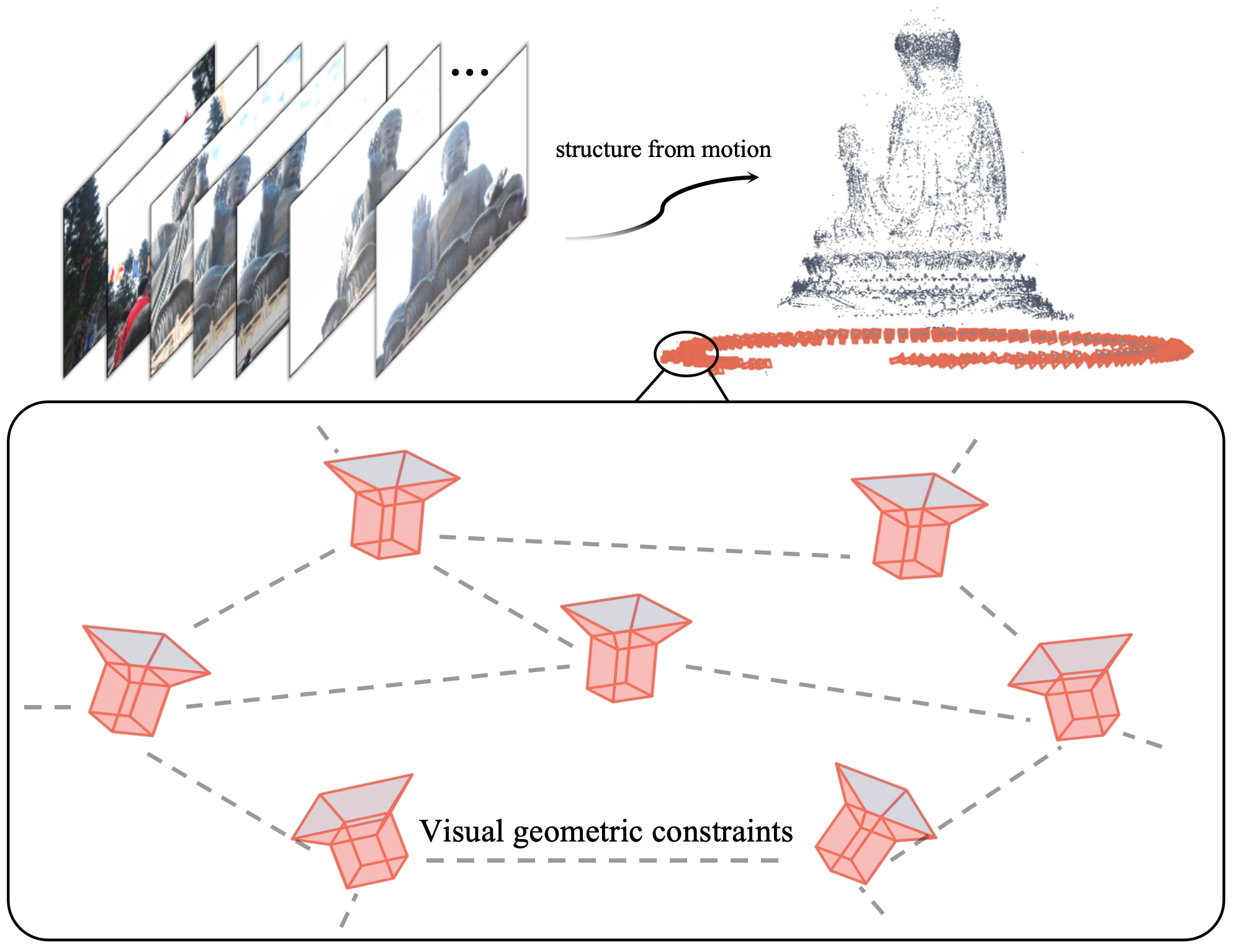

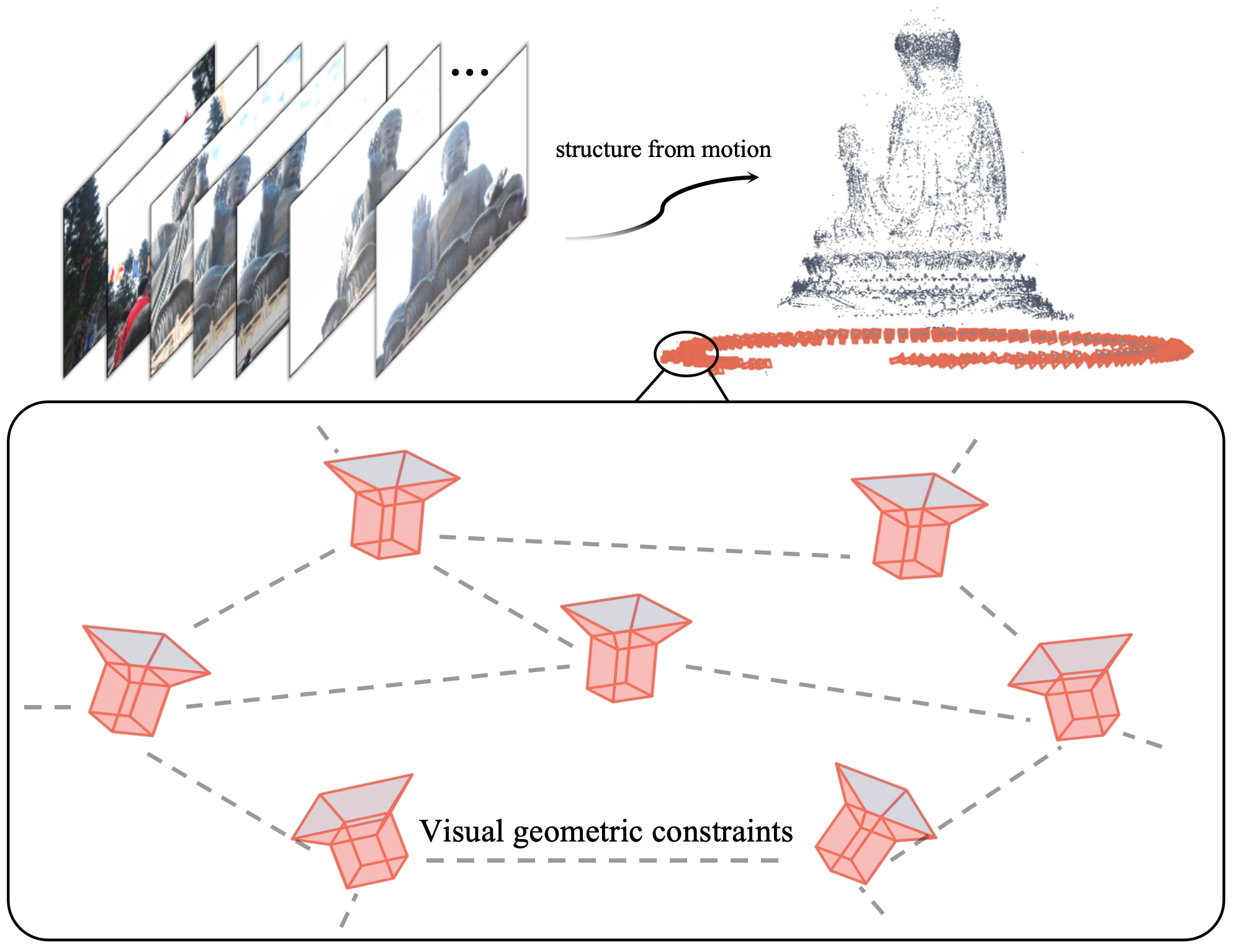

This work addresses a foundational challenge in computer vision and geometric scene understanding: robust estimation of camera rotation from image sequences, particularly within Structure from Motion (SfM). Mainstream SfM approaches, including bundle adjustment (BA), tightly couple the estimation of rotation, translation, and 3D scene structure, which makes them sensitive to initialization and leads to high-dimensional optimization problems. Recent work on pose-only imaging geometry has shown that decoupling scene structure from pose can make SfM pipelines both more efficient and more robust. This paper carries the decoupling to its logical extent with a rotation-only imaging geometry: imaging is parametrized solely on the rotation manifold, and translation is expressed analytically as a function of rotation and observations. Because translation no longer has to be estimated explicitly, rotation can be estimated robustly, efficiently, and accurately in both two-view and multi-view scenarios.

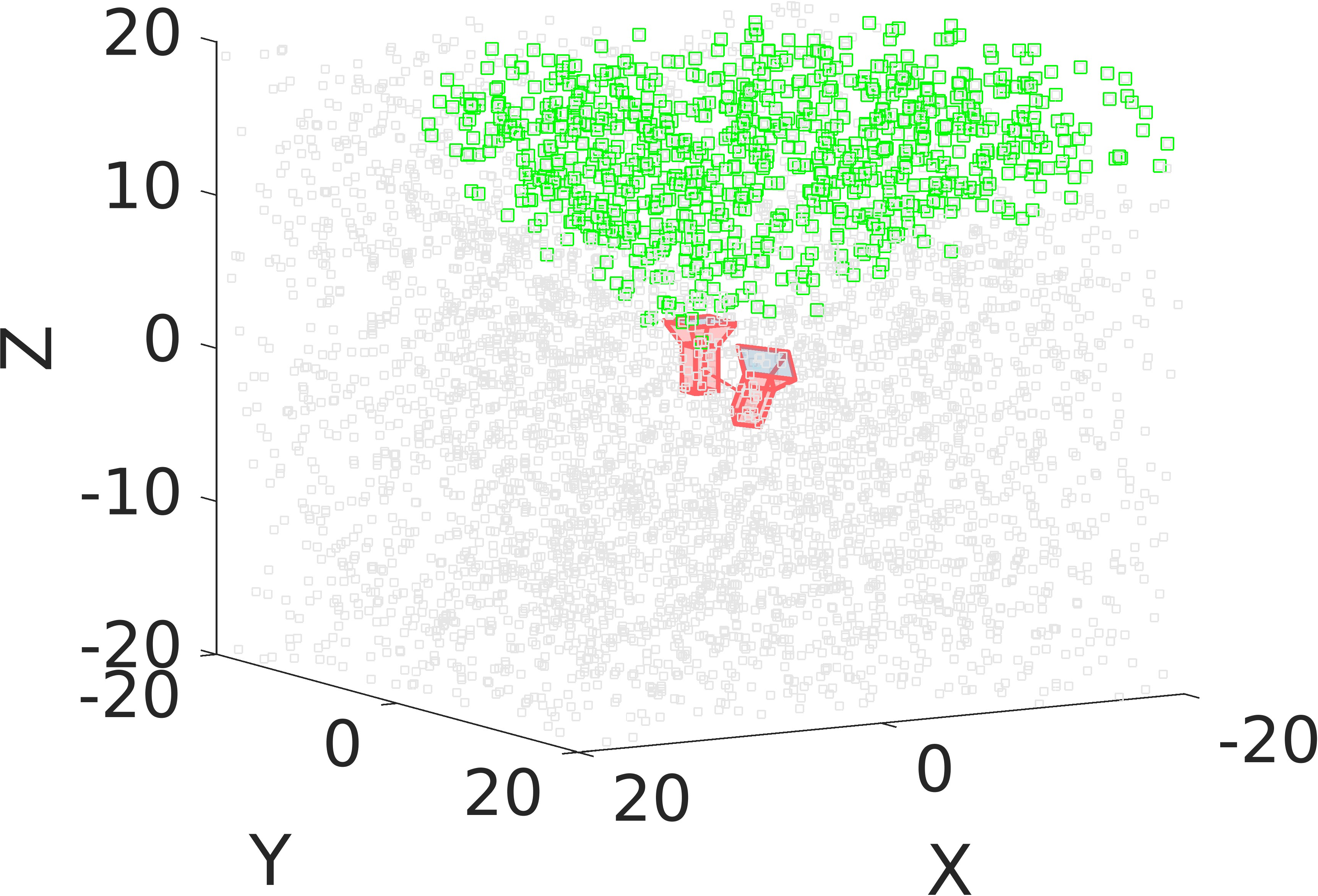

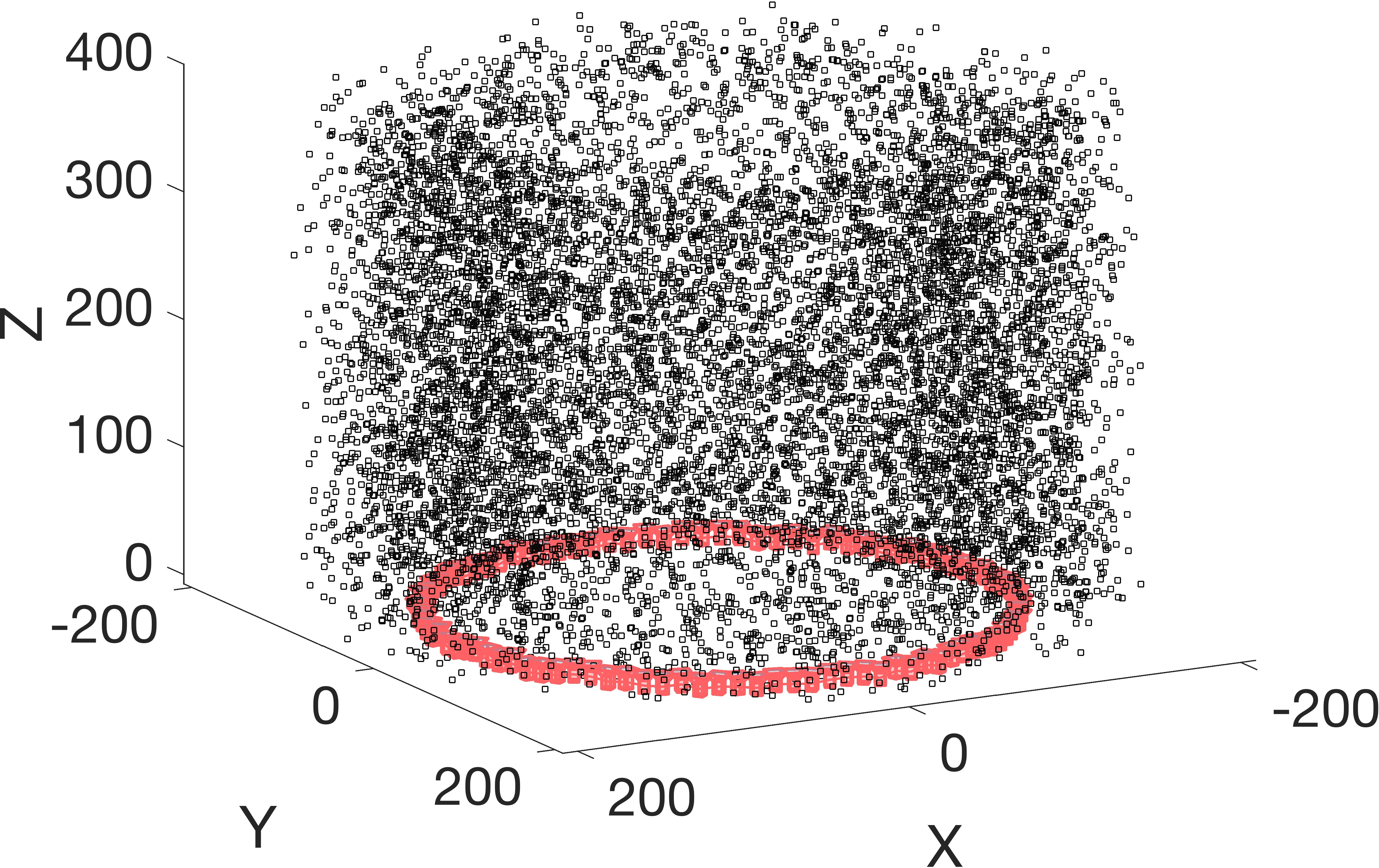

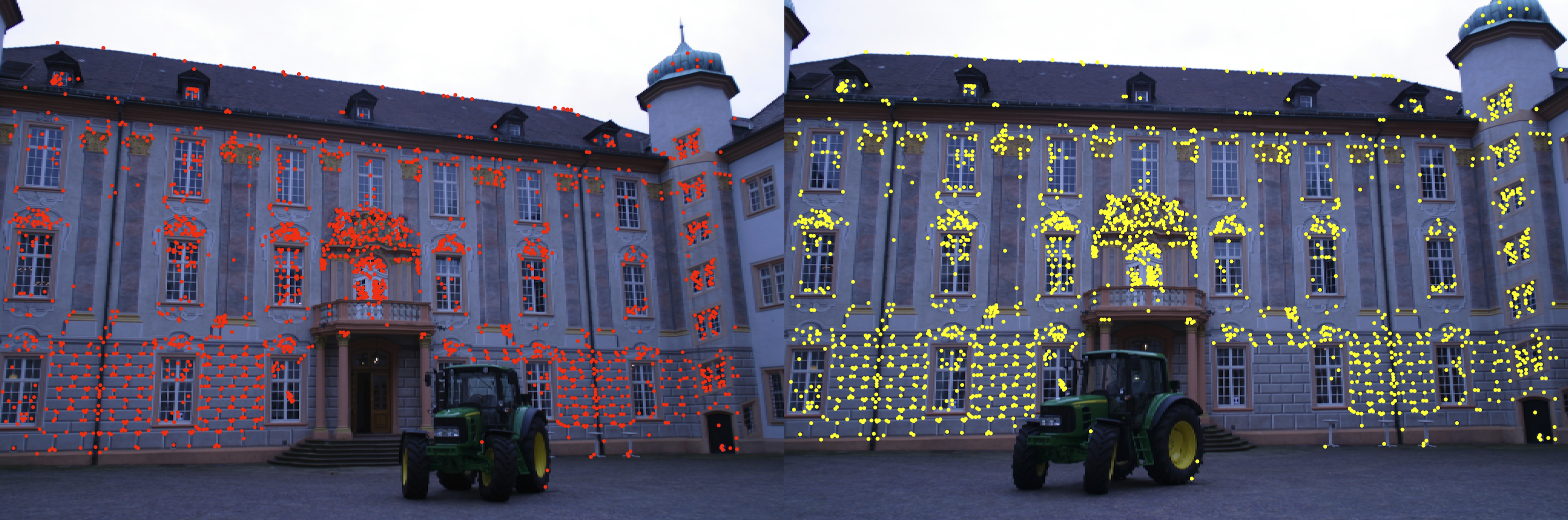

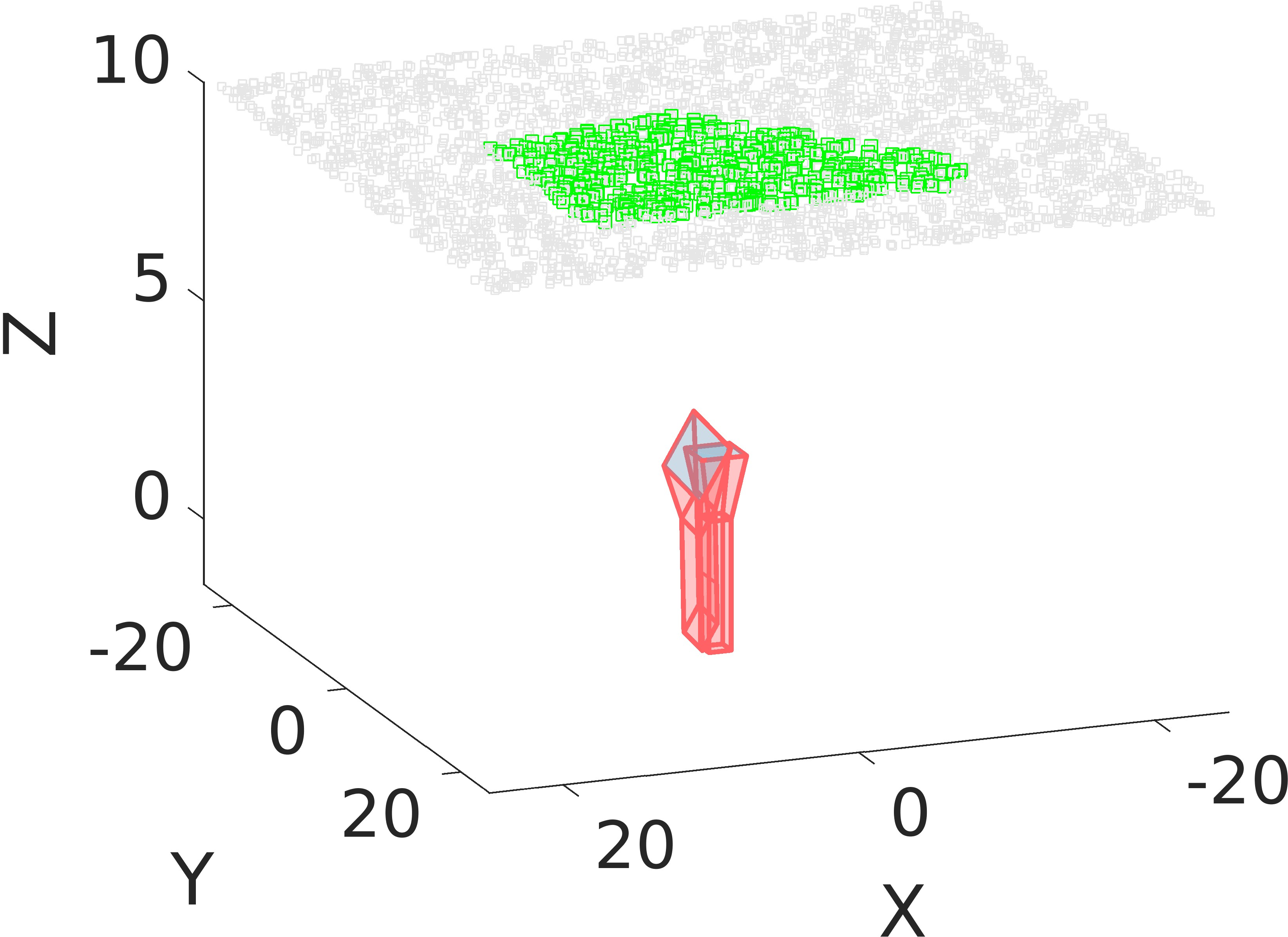

Figure 1: An illustration of reconstruction using the Lund dataset; views form a connectivity graph where nodes include pose and observation information and edges encode inter-view constraints.

Theoretical Framework

Rotation-only Parametrization

The central contribution is an analytical representation in which translation is expressed in terms of observed points and rotations, so that rotations can be refined independently. Building on pairwise pose-only (PPO) constraints, the geometry is condensed to a lower-dimensional space: the rotation manifold. Position information is marginalized and optimization takes place on SO(3) alone, which greatly reduces the number of parameters compared with conventional joint pose-and-structure optimization.
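To make the reduction concrete, consider n calibrated views observing m scene points. The counts below are standard degree-of-freedom bookkeeping, not figures from the paper, with gauge freedoms subtracted (a 7-parameter similarity for full BA, a global rotation for rotation-only estimation):

```latex
\begin{aligned}
\text{full BA:}\quad & \underbrace{3n}_{\text{rotations}} + \underbrace{3n}_{\text{translations}} + \underbrace{3m}_{\text{points}} - 7 \\
\text{rotation-only:}\quad & 3n - 3
\end{aligned}
```

For example, n = 100 views and m = 50,000 points give 150,593 unknowns for full BA versus 297 for rotation-only estimation.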

Observability and Scene Structure Analysis

A rigorous theoretical analysis is provided to characterize the conditions under which translation is observable from the given configuration (rank analysis of the joint observation matrix). Three cases are identified:

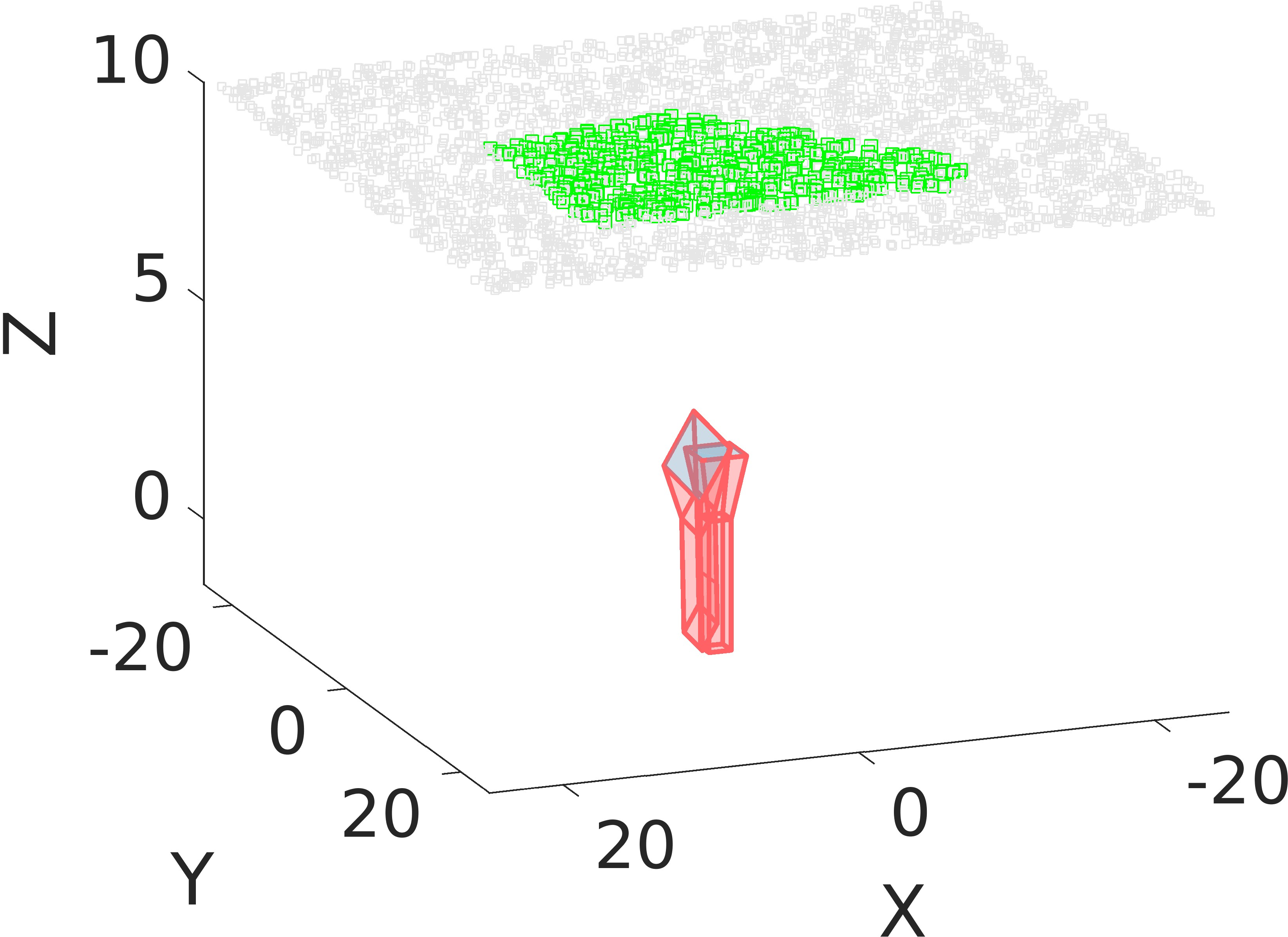

- PR/B/I (Pure Rotation/Baseline/Infinity): Cameras have pure rotational motion or all scene points are at infinity or collinear with the baseline; translation is unobservable.

- Holoplane: All points and both cameras are coplanar; translation indeterminacy exists within the plane.

- RankRegular: Generic, fully spatial scenes; translation is uniquely determined up to scale.
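The rank-based classification can be illustrated with a small numpy sketch. It assumes that the joint observation matrix stacks one epipolar row per correspondence, a_i = (R x1_i) × x2_i, so that the relative translation t satisfies A t = 0; the paper's exact matrix and its vrs statistic are not reproduced here. Under this assumption, rank 0 corresponds to PR/B/I (every row vanishes), rank 1 to Holoplane (all rows are normal to the common plane, leaving a two-dimensional null space), and rank 2 or higher to RankRegular. The names `joint_observation_matrix` and `classify_scene` and the threshold `tol` are hypothetical.

```python
import numpy as np


def joint_observation_matrix(R, x1, x2):
    """Stack one row (R x1_i) x x2_i per correspondence (assumed construction).

    x1, x2: (n, 3) bearing vectors; R maps view-1 directions into view 2.
    Since x2^T [t]_x R x1 = t . ((R x1) x x2), the true t lies in the null space.
    """
    x1 = x1 / np.linalg.norm(x1, axis=1, keepdims=True)
    x2 = x2 / np.linalg.norm(x2, axis=1, keepdims=True)
    return np.cross(x1 @ R.T, x2)


def classify_scene(R, x1, x2, tol=1e-8):
    """Hypothetical noise-free classifier based on the numerical rank of A."""
    A = joint_observation_matrix(R, x1, x2)
    s = np.linalg.svd(A, compute_uv=False)           # singular values, descending
    rank = int(np.sum(s > tol * np.sqrt(A.shape[0])))
    if rank == 0:
        return "PR/B/I"       # all rows vanish: translation unobservable
    if rank == 1:
        return "Holoplane"    # rows share one normal: t free within the plane
    return "RankRegular"      # one-dimensional null space: t unique up to scale
```

With noisy observations a fixed threshold is fragile; a statistically calibrated test, which is the role the paper assigns to the vrs value, would take the place of `tol`.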

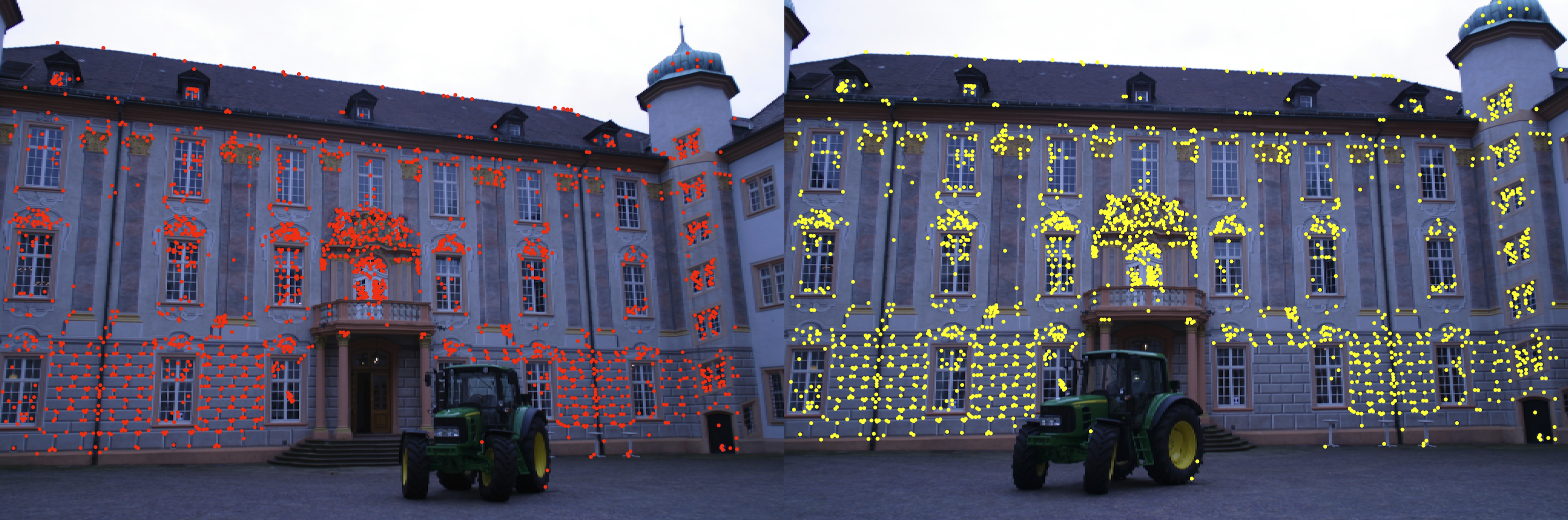

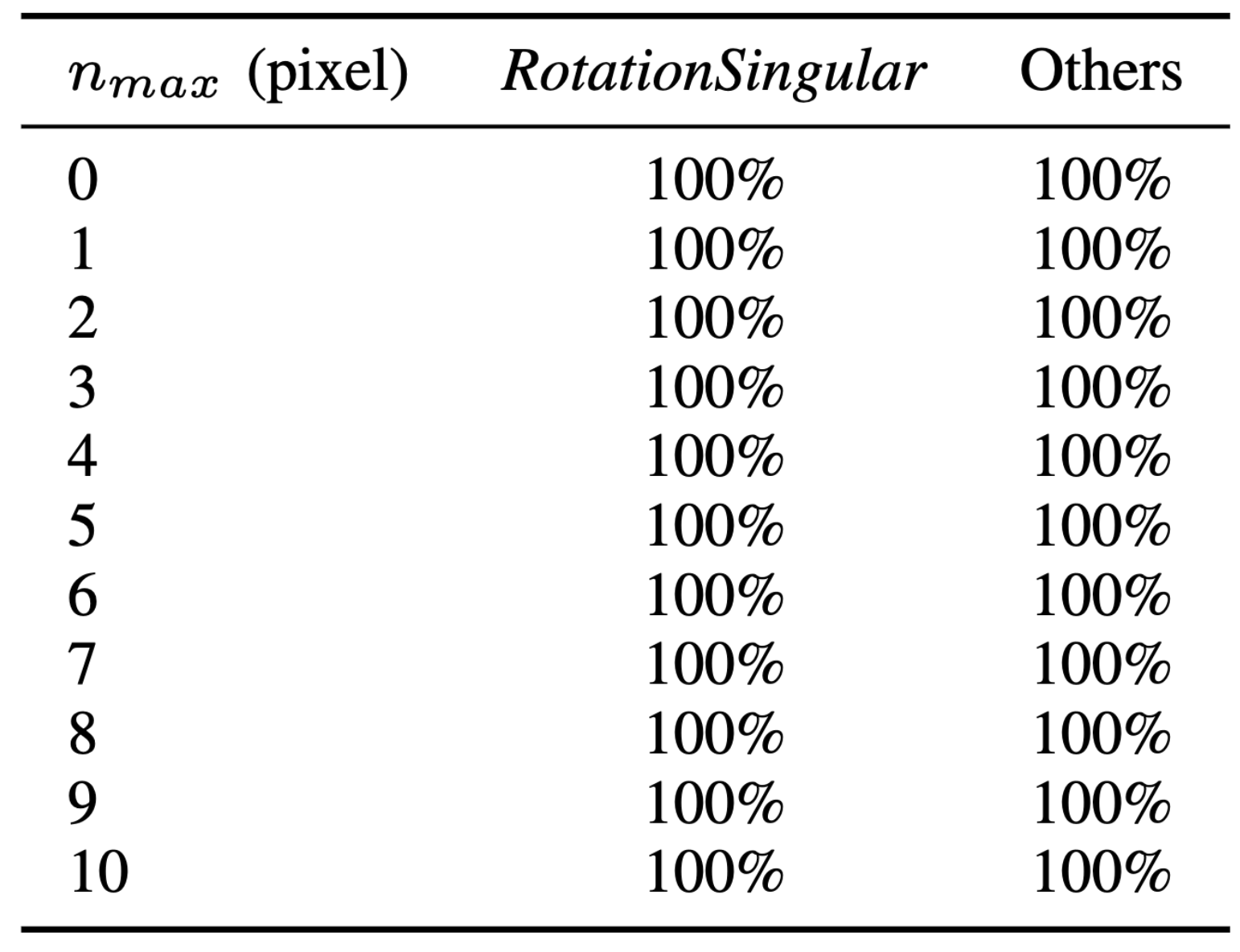

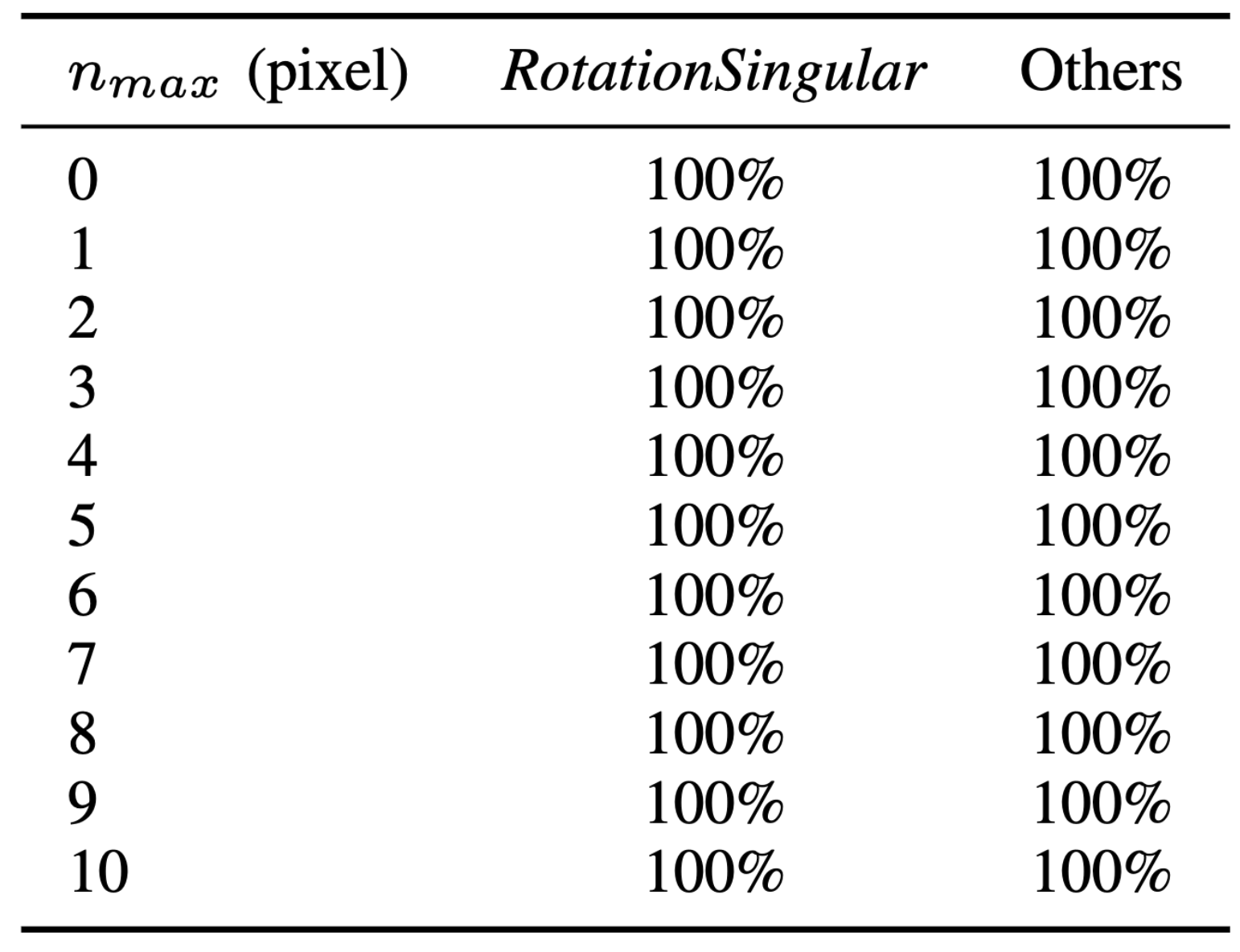

This classification is key to robust processing: it allows configurations that can cause traditional methods to fail, such as the RotationSingular cases, to be detected and excluded early (see Table 1 in the paper).

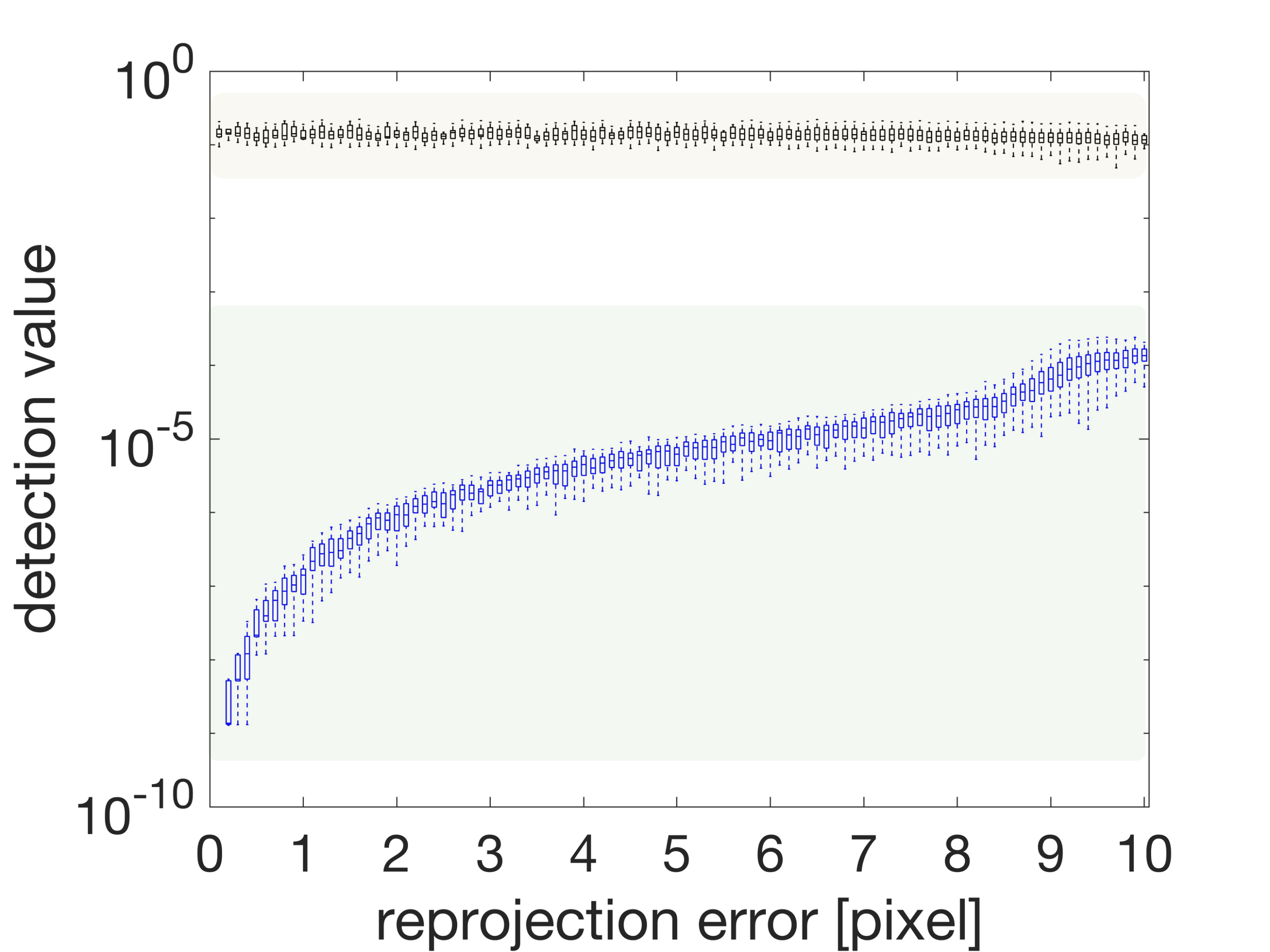

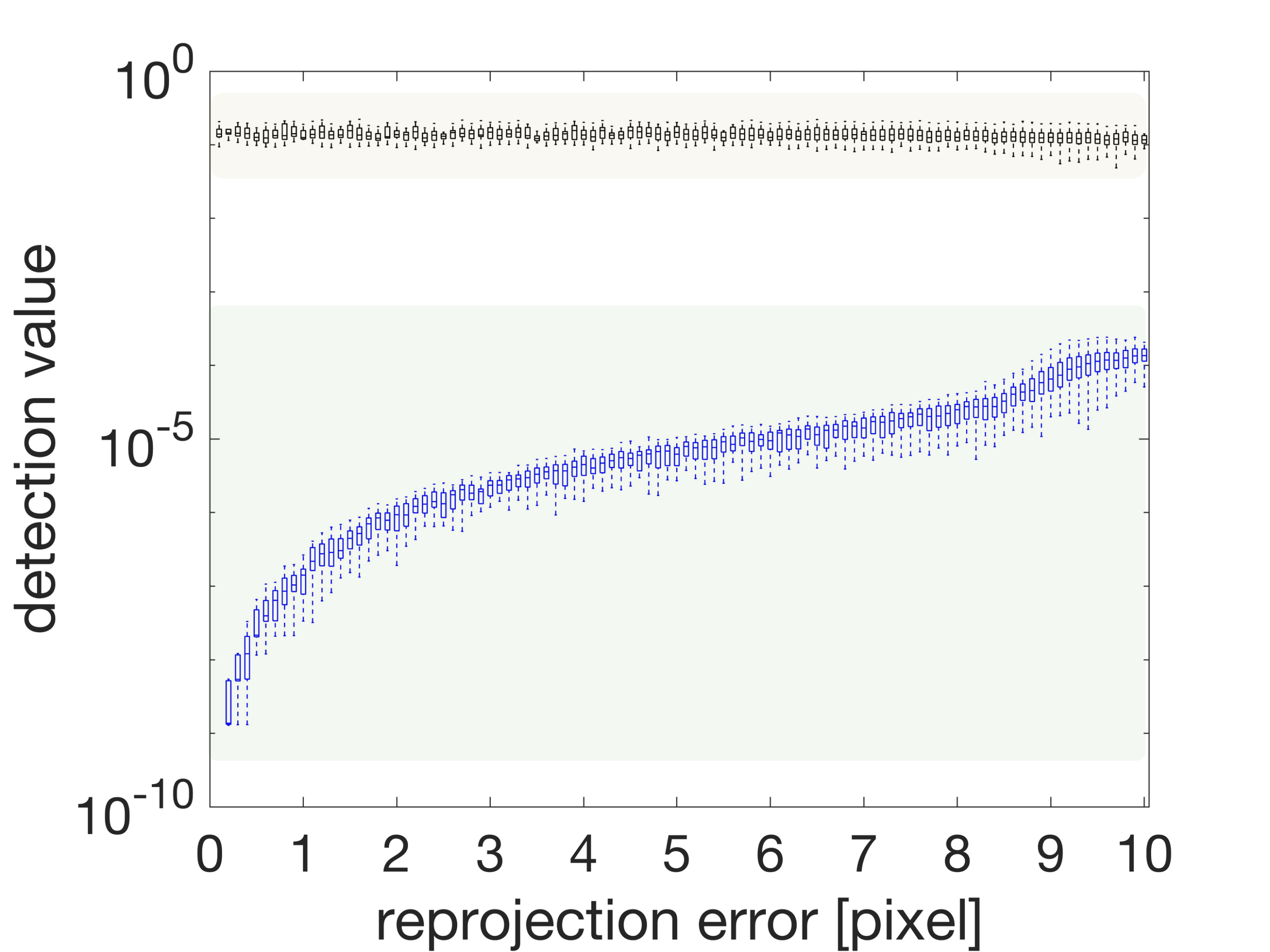

Figure 2: vrs detection value for identifying degenerate or singular scene configurations based on translation observability.

Figure 3: Detection values of vrs highlight separation between RotationSingular and regular scenes, enabling robust exclusion.

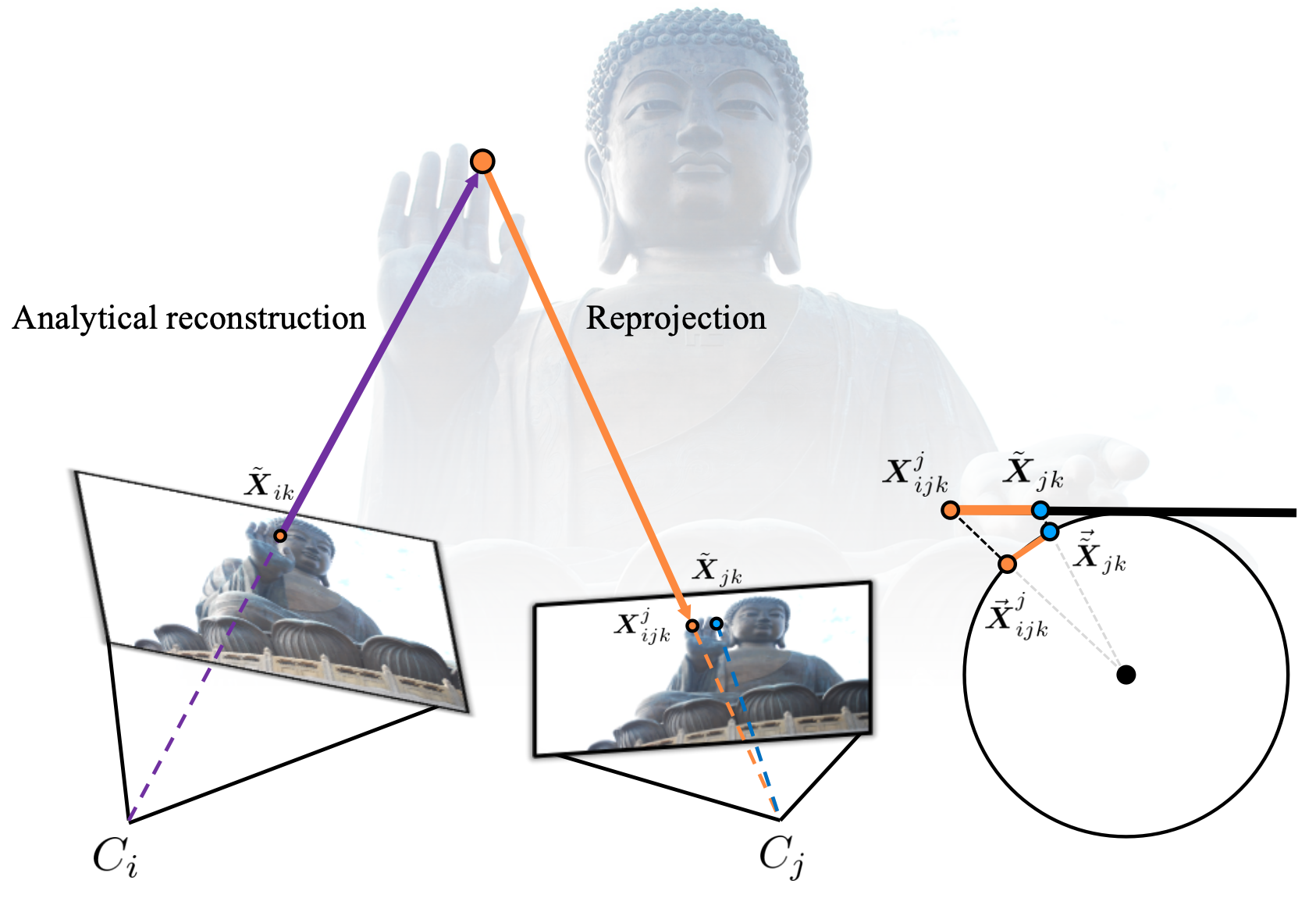

Rotation Manifold Reprojection Error

Building on this analysis, the reprojection error is recast purely on the rotation manifold. The authors derive analytically that, under generic (RankRegular) conditions, the residual is invariant to the scale and sign of the translation, while in degenerate scenes (PR/B/I, Holoplane) the constraints collapse automatically to the rotation manifold. This property allows the rotation-induced reprojection error to be minimized directly and precisely, without any translation variables.
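A minimal sketch of the reprojection mechanism, assuming unit bearing vectors and a rotation R that maps view 1 into view 2: each point is reconstructed along its view-1 ray at the depth that best satisfies x2 × (d R x1 + t) = 0 in the least-squares sense, then projected into view 2. Because the fitted depth scales linearly with t, replacing t by s·t for any nonzero s (including negative s) leaves every residual unchanged, which mirrors the scale and sign invariance described above. The depth formula is chosen for illustration and may differ from the paper's; `reprojection_residuals` is a hypothetical name.

```python
import numpy as np


def reprojection_residuals(R, t, x1, x2):
    """Reproject view-1 rays into view 2 for a given rotation R and translation t.

    x1, x2: (n, 3) bearing vectors of matched points; R, t map view-1
    coordinates into view 2 (X2 = R X1 + t).
    """
    Rx1 = x1 @ R.T                   # view-1 rays expressed in view 2
    u = np.cross(x2, Rx1)            # x2 x (R x1)
    w = np.cross(x2, t)              # x2 x t (t broadcast over rows)
    # Least-squares depth d along each view-1 ray so that x2 x (d R x1 + t) ~ 0.
    # The denominator vanishes for pure rotation or points at infinity.
    depth = -np.sum(u * w, axis=1) / np.sum(u * u, axis=1)
    X2 = depth[:, None] * Rx1 + t    # reconstructed points in view-2 coordinates
    projected = X2[:, :2] / X2[:, 2:3]
    observed = x2[:, :2] / x2[:, 2:3]
    return (projected - observed).ravel()
```

The vanishing denominator in degenerate configurations is one practical reason to screen them out before optimizing.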

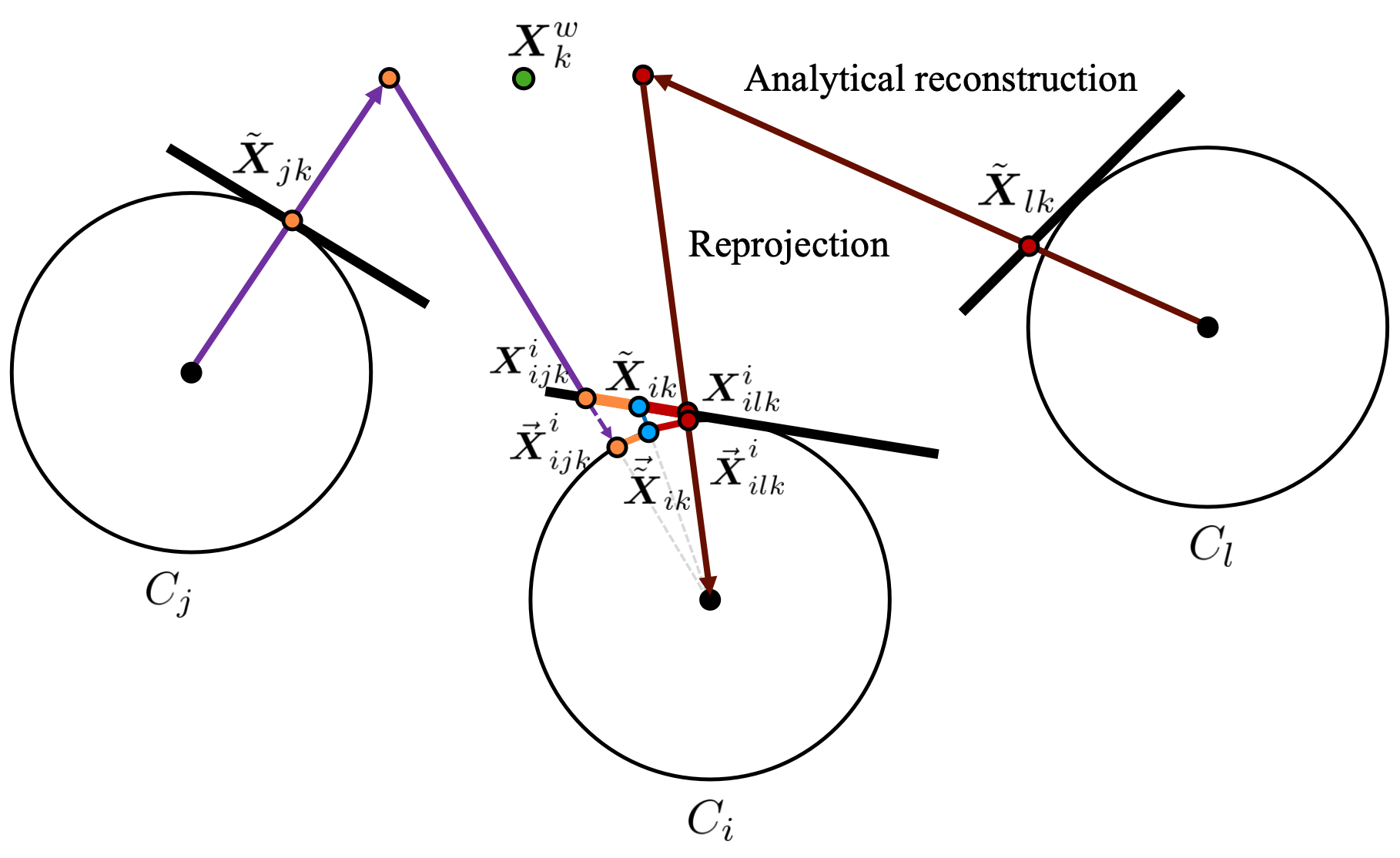

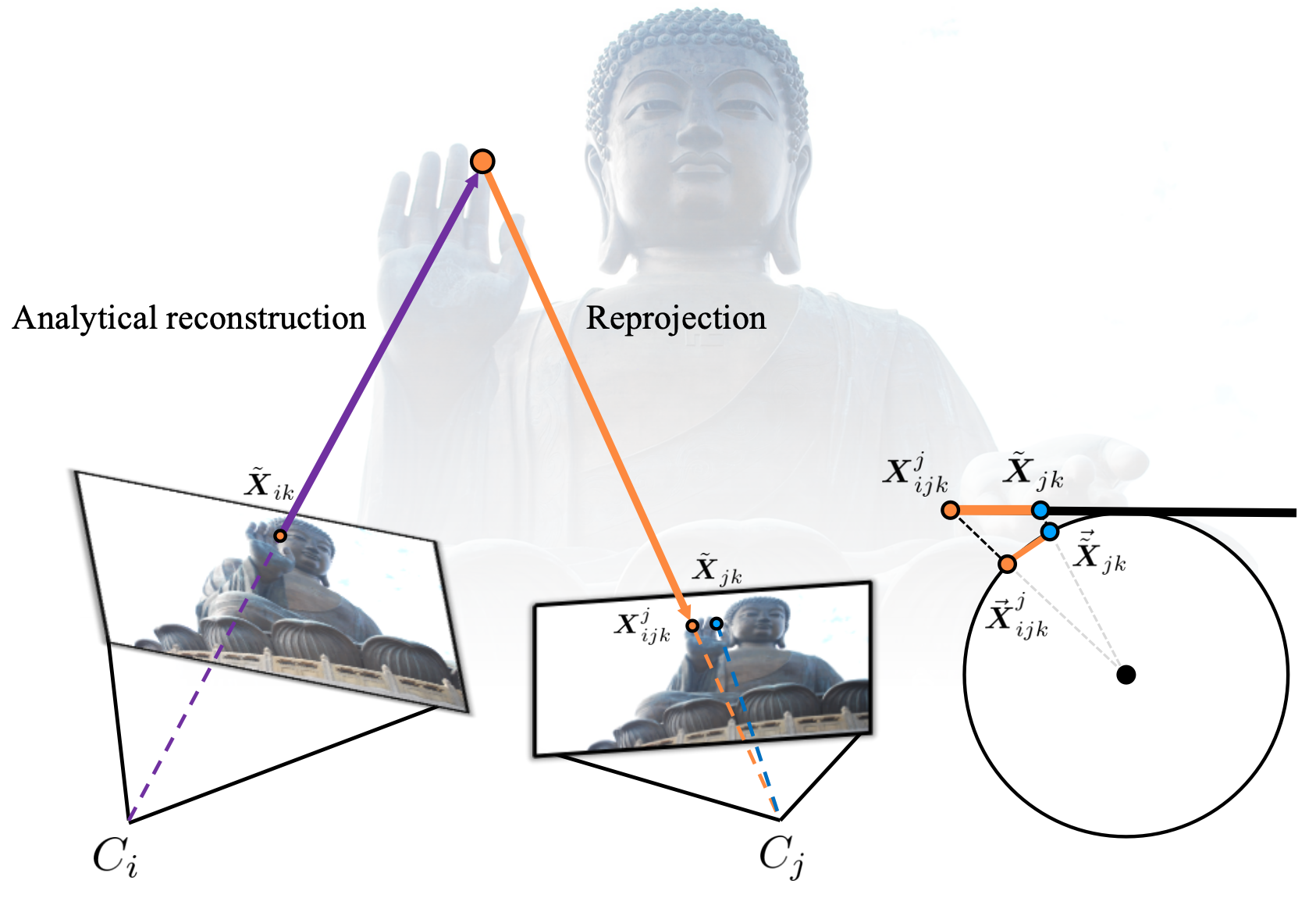

Figure 4: Generation of projected point X by reconstructing along the projection ray and projecting into the target view; this is central to the reprojection computation on the rotation manifold.

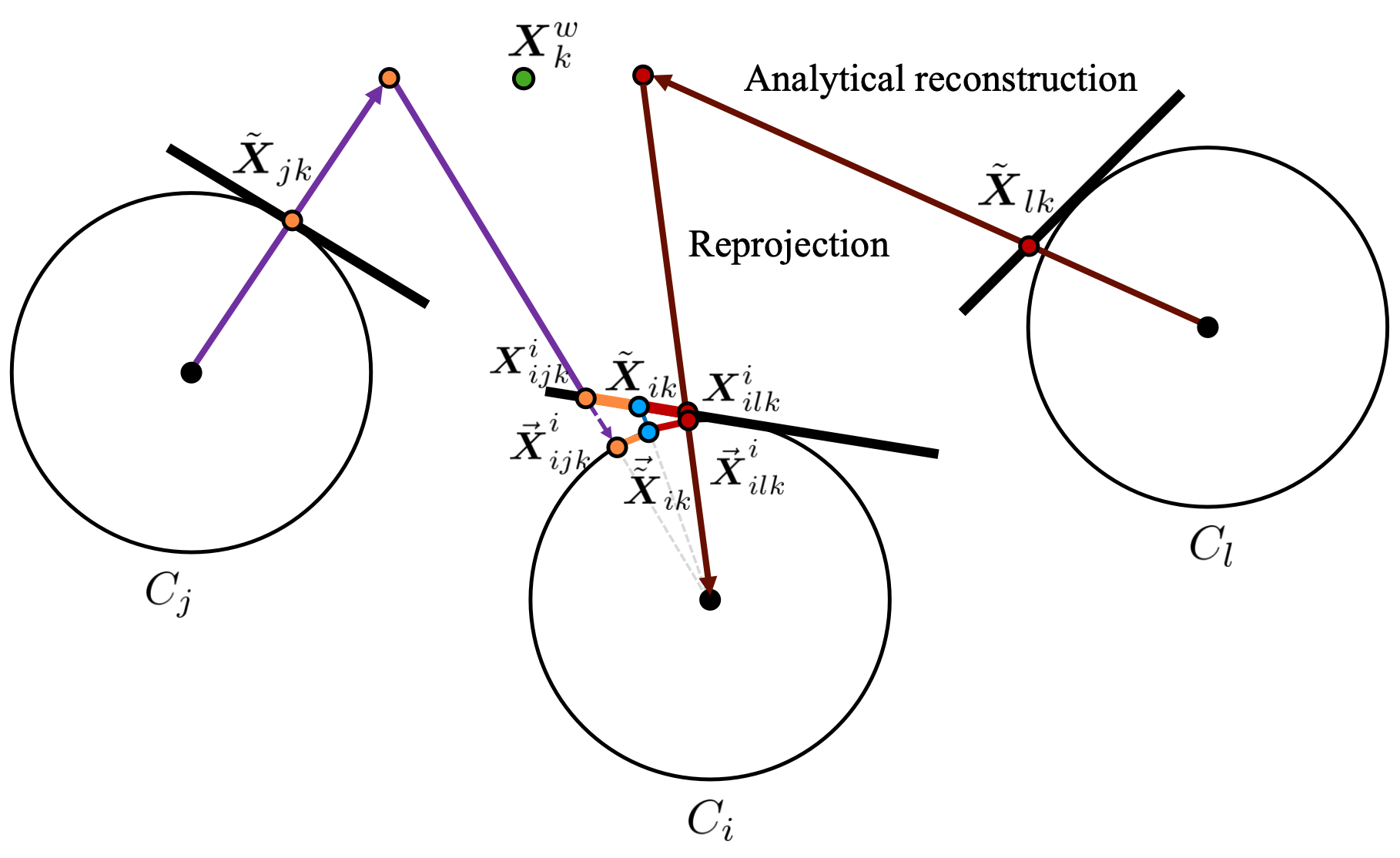

Figure 5: Generation mechanism of reprojection residuals V in the rotation-only framework, illustrating multi-view constraints aggregation.

Algorithmic Innovations

The proposed framework is realized concretely through a series of algorithms:

- Degeneracy Detection: Fast algorithms to evaluate scene type and selectively exclude degenerate RotationSingular configurations.

- Analytic Translation Calculation: Translation direction is extracted analytically (via matrix nullspace/eigenanalysis) from the joint observation matrix, parameterized by rotations and image points.

- Levenberg-Marquardt Optimization: Both two-view and multi-view rotation estimation are realized via LM optimization solely over rotations on SO(3).
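The two-view steps above can be sketched end to end: translation is recovered as the null vector of the stacked epipolar rows (the smallest right singular vector), plugged into the reprojection residual, and a plain Levenberg-Marquardt loop updates only the rotation through R ← exp(δ)R. This is an illustrative sketch, not the paper's TRRM implementation: the joint-matrix construction, the least-squares depth, the finite-difference Jacobian, and the damping schedule are all assumptions, and `refine_rotation_lm` is a hypothetical name.

```python
import numpy as np


def hat(v):
    """Skew-symmetric matrix such that hat(v) @ w == np.cross(v, w)."""
    return np.array([[0.0, -v[2], v[1]], [v[2], 0.0, -v[0]], [-v[1], v[0], 0.0]])


def exp_so3(v):
    """Rodrigues' formula: rotation vector -> rotation matrix."""
    theta = np.linalg.norm(v)
    if theta < 1e-12:
        return np.eye(3) + hat(v)
    K = hat(v / theta)
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)


def rotation_only_residuals(R, x1, x2):
    """Residuals in view 2 with translation eliminated analytically as t(R)."""
    Rx1 = x1 @ R.T
    # Assumed t(R): null vector of the rows (R x1_i) x x2_i, defined up to scale and sign.
    t = np.linalg.svd(np.cross(Rx1, x2), full_matrices=False)[2][-1]
    u = np.cross(x2, Rx1)
    w = np.cross(x2, t)
    depth = -np.sum(u * w, axis=1) / np.sum(u * u, axis=1)  # least-squares depth
    X2 = depth[:, None] * Rx1 + t
    return (X2[:, :2] / X2[:, 2:3] - x2[:, :2] / x2[:, 2:3]).ravel()


def refine_rotation_lm(R0, x1, x2, iters=50, lam=1e-3, eps=1e-7):
    """Levenberg-Marquardt over SO(3) using left perturbations R <- exp(delta) R."""
    R = R0.copy()
    r = rotation_only_residuals(R, x1, x2)
    cost = r @ r
    for _ in range(iters):
        # Forward-difference Jacobian with respect to the 3 rotation parameters.
        J = np.empty((r.size, 3))
        for k in range(3):
            delta = np.zeros(3)
            delta[k] = eps
            J[:, k] = (rotation_only_residuals(exp_so3(delta) @ R, x1, x2) - r) / eps
        H = J.T @ J
        step = np.linalg.solve(H + lam * np.diag(np.diag(H)), -J.T @ r)
        R_new = exp_so3(step) @ R
        r_new = rotation_only_residuals(R_new, x1, x2)
        if r_new @ r_new < cost:   # accept: lean toward Gauss-Newton
            R, r, cost = R_new, r_new, r_new @ r_new
            lam *= 0.3
        else:                      # reject: lean toward gradient descent
            lam *= 10.0
        if np.linalg.norm(step) < 1e-12:
            break
    return R
```

Only three parameters are updated per iteration regardless of how many points are observed, which is the practical payoff of eliminating translation.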

The multi-view optimization leverages weighted reprojection consistency aggregated across the view graph, ensuring maximal utilization of geometric constraints with minimal parameter overhead.
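For the multi-view case, one way to aggregate pairwise terms over the view graph is to stack weighted residuals per edge, using the relative rotation R_j R_i^T implied by absolute world-to-camera rotations R_i. The per-edge weights and this weighting scheme are assumptions, since the paper's exact weighting is not described here; `pair_residuals`, `stacked_residuals`, and `total_cost` are hypothetical names. The stacked vector could be handed to an LM solver like the two-view one, with 3 parameters per view.

```python
import numpy as np


def pair_residuals(R_ij, xi, xj):
    """Rotation-only reprojection residuals for one edge (view i -> view j).

    Translation is eliminated as the null vector of the rows (R_ij xi) x xj;
    depth along each view-i ray is a least-squares fit (both are assumptions).
    """
    Rxi = xi @ R_ij.T
    t = np.linalg.svd(np.cross(Rxi, xj), full_matrices=False)[2][-1]
    u = np.cross(xj, Rxi)
    w = np.cross(xj, t)
    depth = -np.sum(u * w, axis=1) / np.sum(u * u, axis=1)
    Xj = depth[:, None] * Rxi + t
    return (Xj[:, :2] / Xj[:, 2:3] - xj[:, :2] / xj[:, 2:3]).ravel()


def stacked_residuals(rotations, edges):
    """Stack sqrt(weight)-scaled residuals over all edges of the view graph.

    rotations: list of world-to-camera rotation matrices R_i.
    edges: {(i, j): (bearings_i, bearings_j, weight)} for matched point sets.
    """
    blocks = []
    for (i, j), (xi, xj, weight) in edges.items():
        R_ij = rotations[j] @ rotations[i].T   # relative rotation, view i -> view j
        blocks.append(np.sqrt(weight) * pair_residuals(R_ij, xi, xj))
    return np.concatenate(blocks)


def total_cost(rotations, edges):
    """Weighted sum of squared residuals across the view graph."""
    r = stacked_residuals(rotations, edges)
    return float(r @ r)
```

Because the cost depends only on relative rotations, right-multiplying every R_i by a common rotation leaves it unchanged; in practice one view's rotation is held fixed to remove this gauge freedom.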

Experimental Evaluation

Scene Identification

In extensive Monte Carlo simulations and on real-world data (Strecha, Lund), the degeneracy identification method achieves a 100% recognition rate, even under substantial noise (up to 10 pixels). This confirms that the low-rank and vrs metrics reliably exclude the problematic configurations that cause classical estimators to fail or diverge.

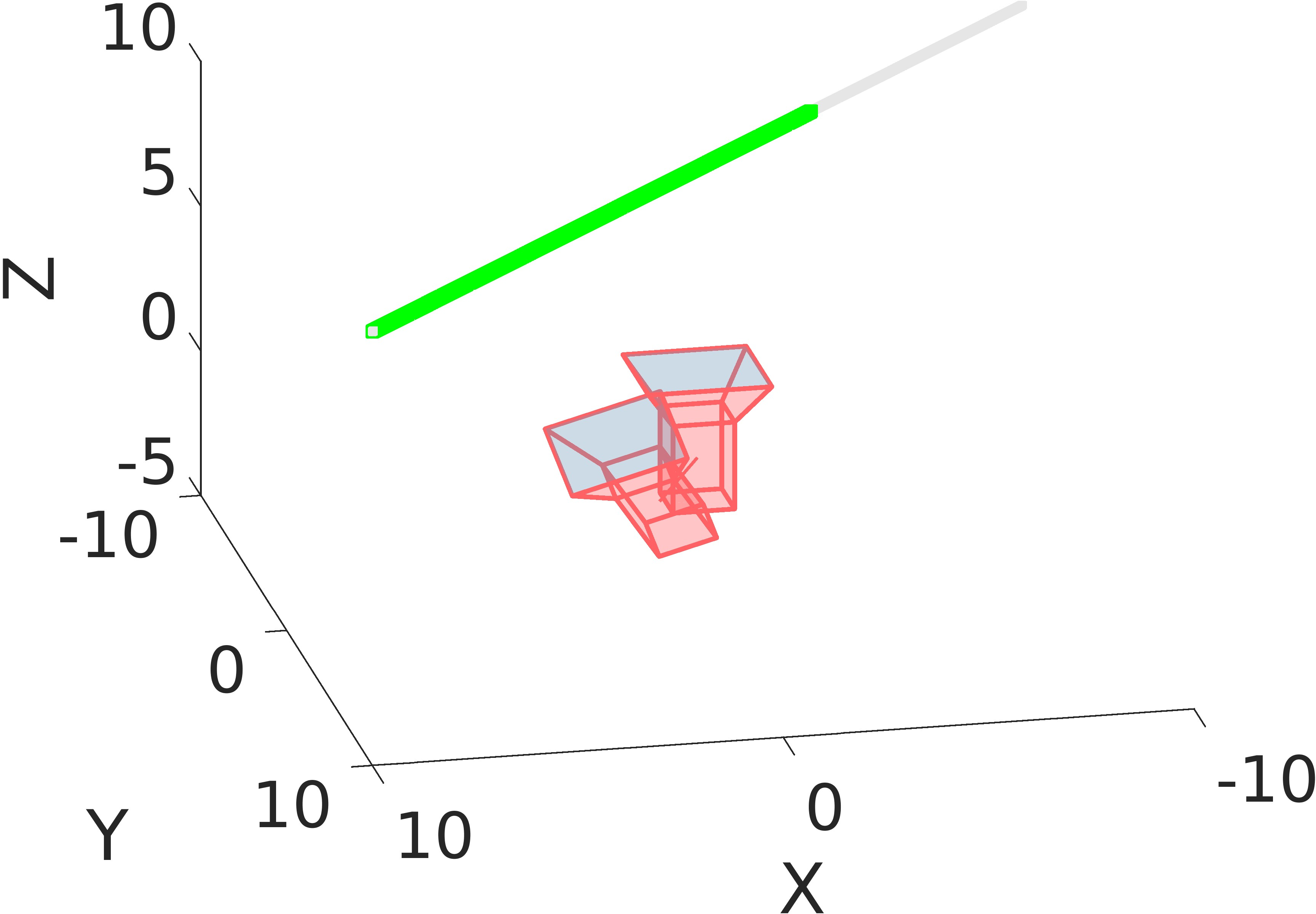

Figure 6: Illustration of a pure rotation scene; all camera centers coincide, exemplifying the PR/B/I degeneracy.

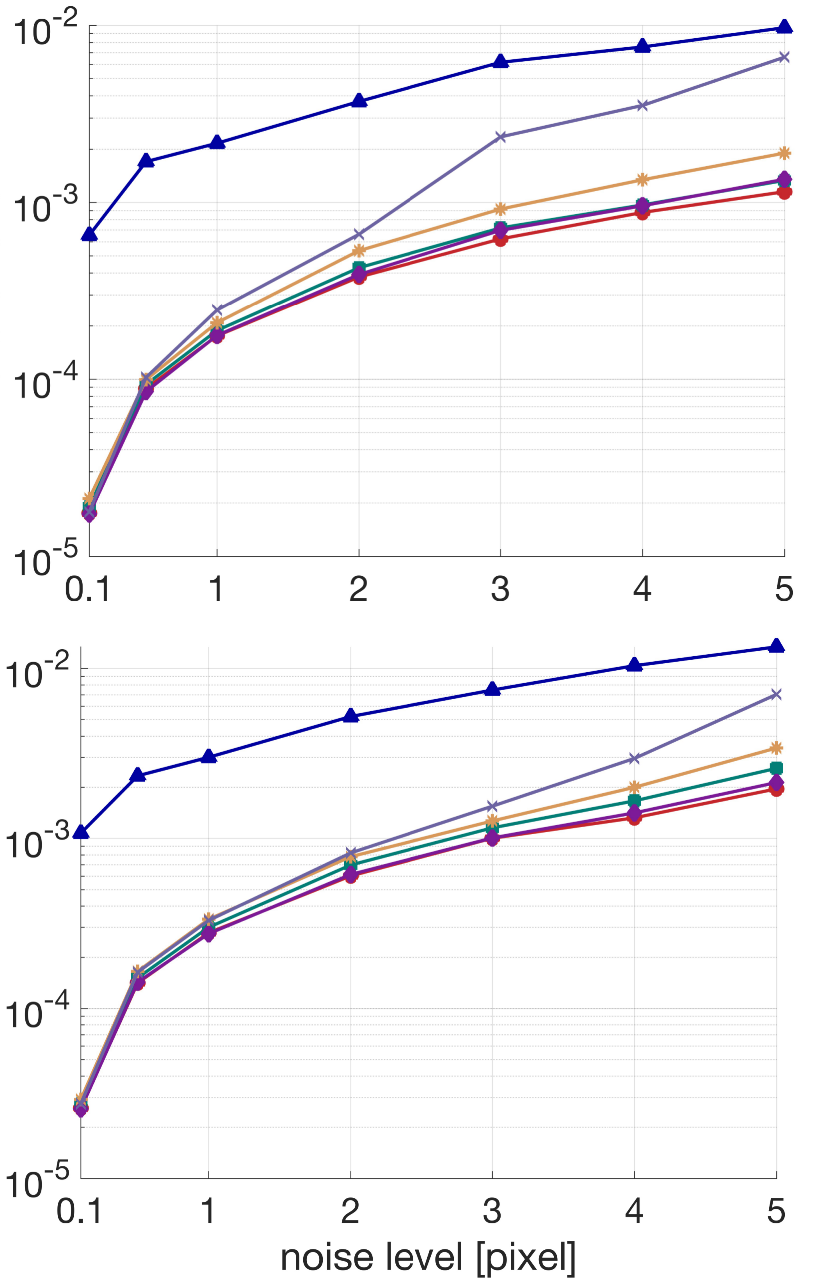

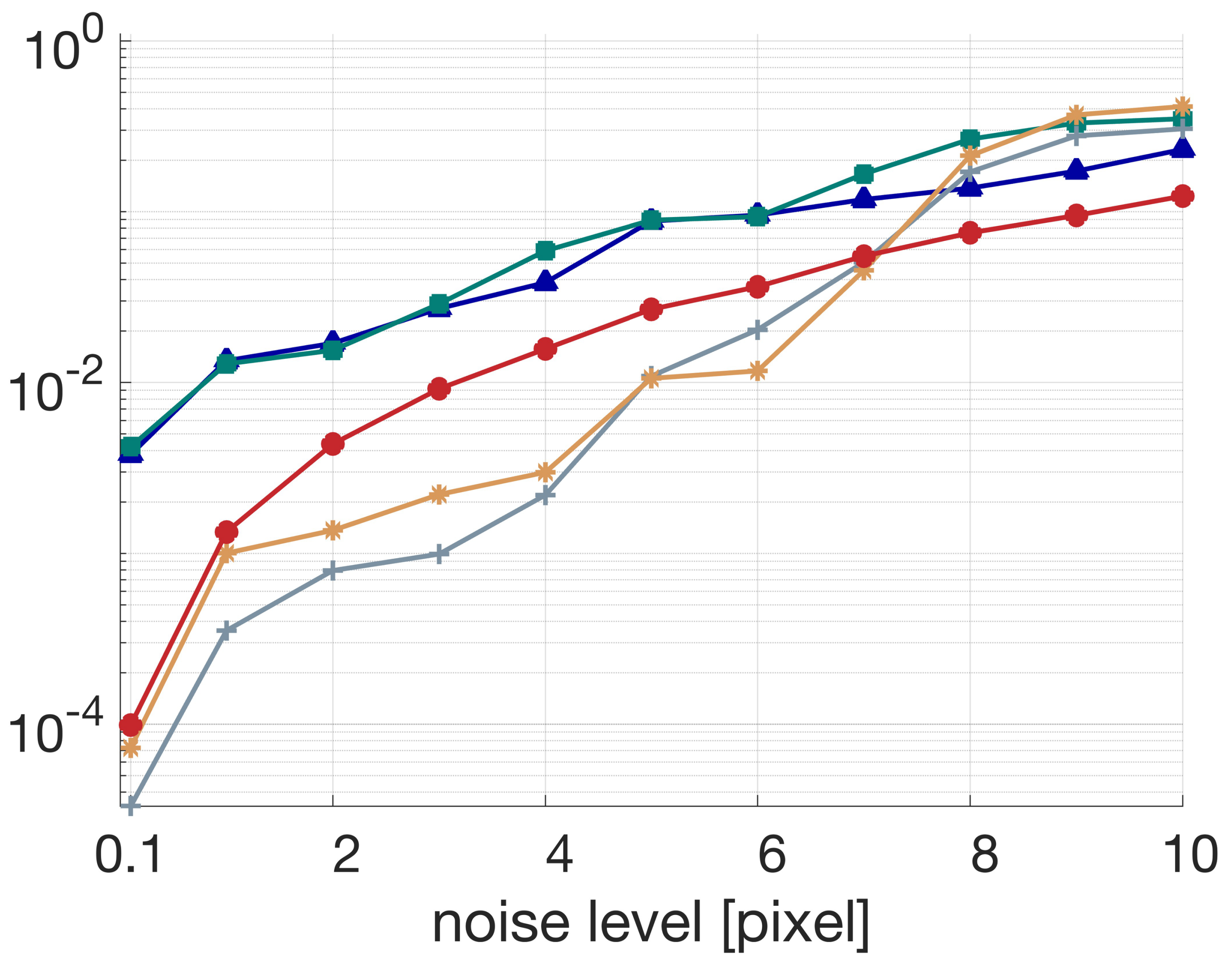

Two-view Rotation Estimation

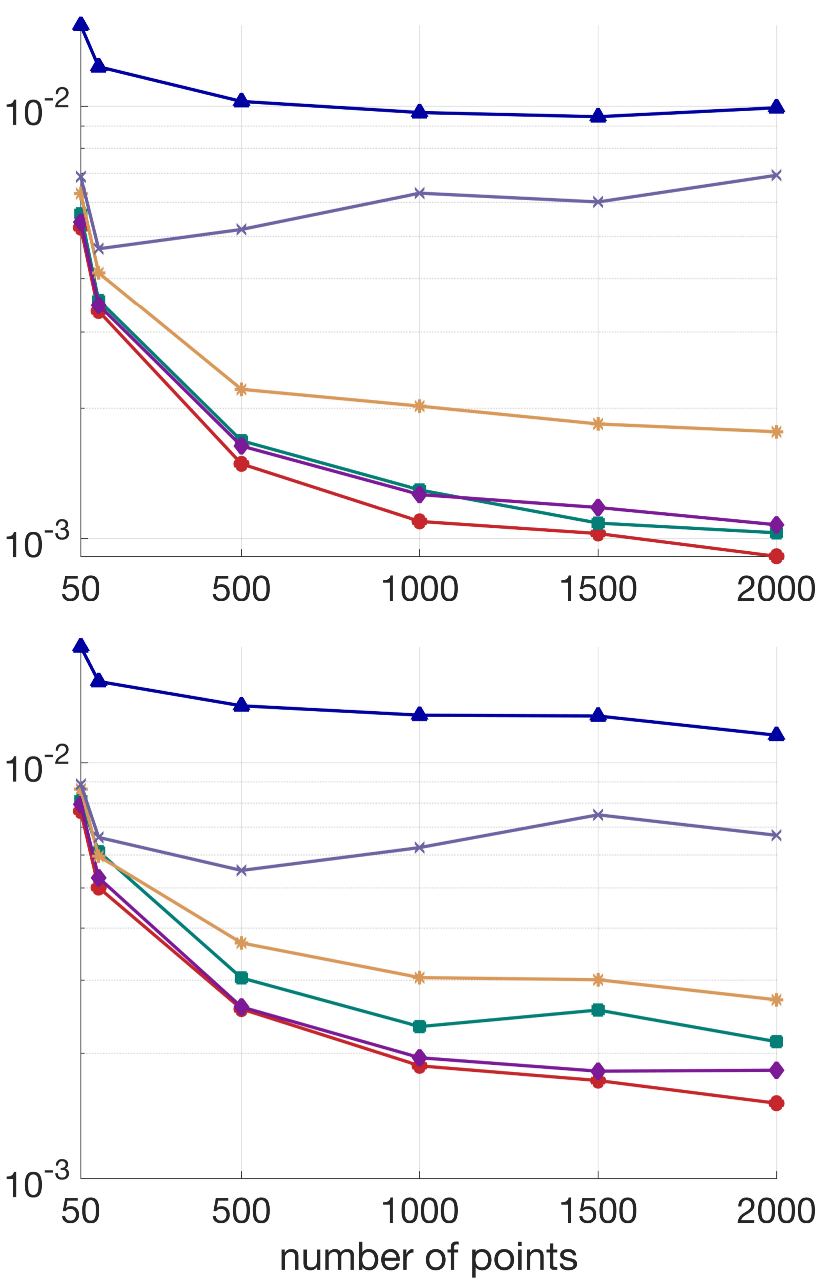

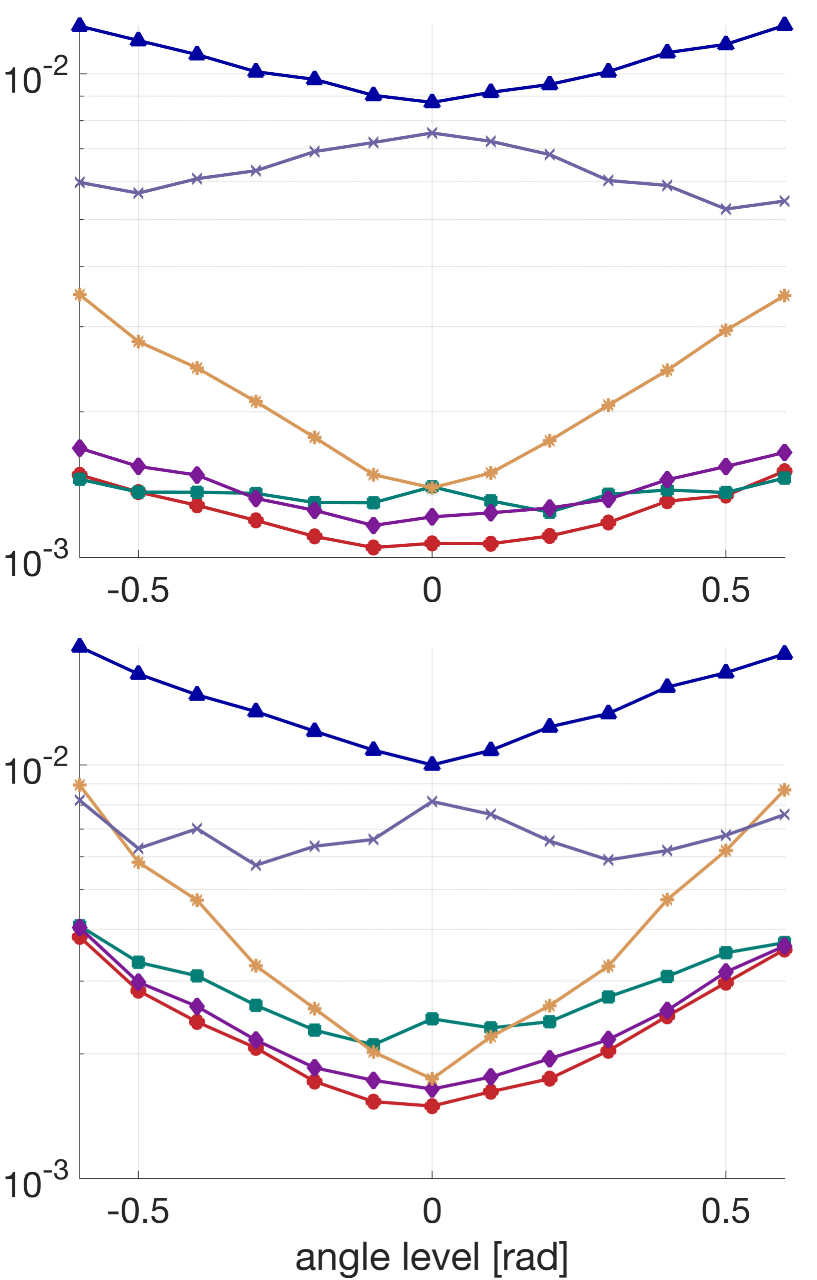

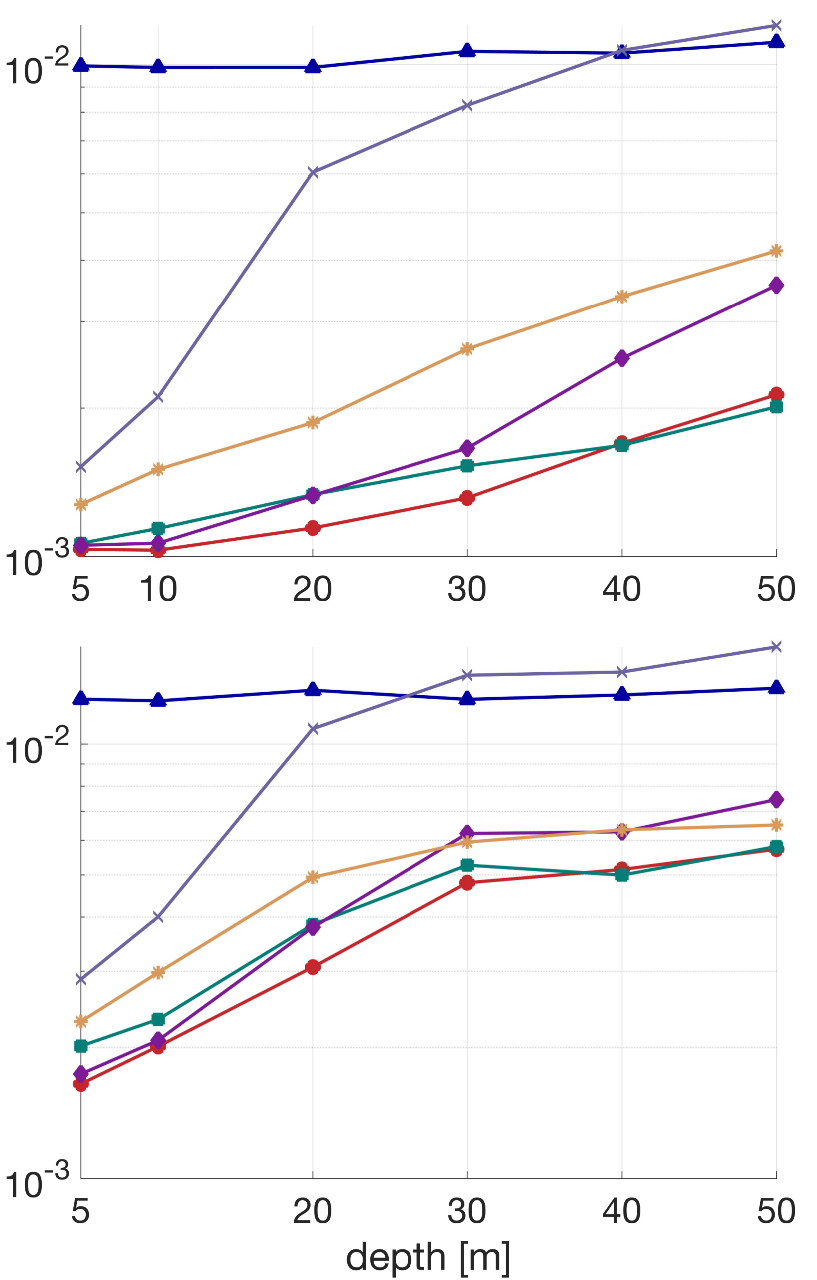

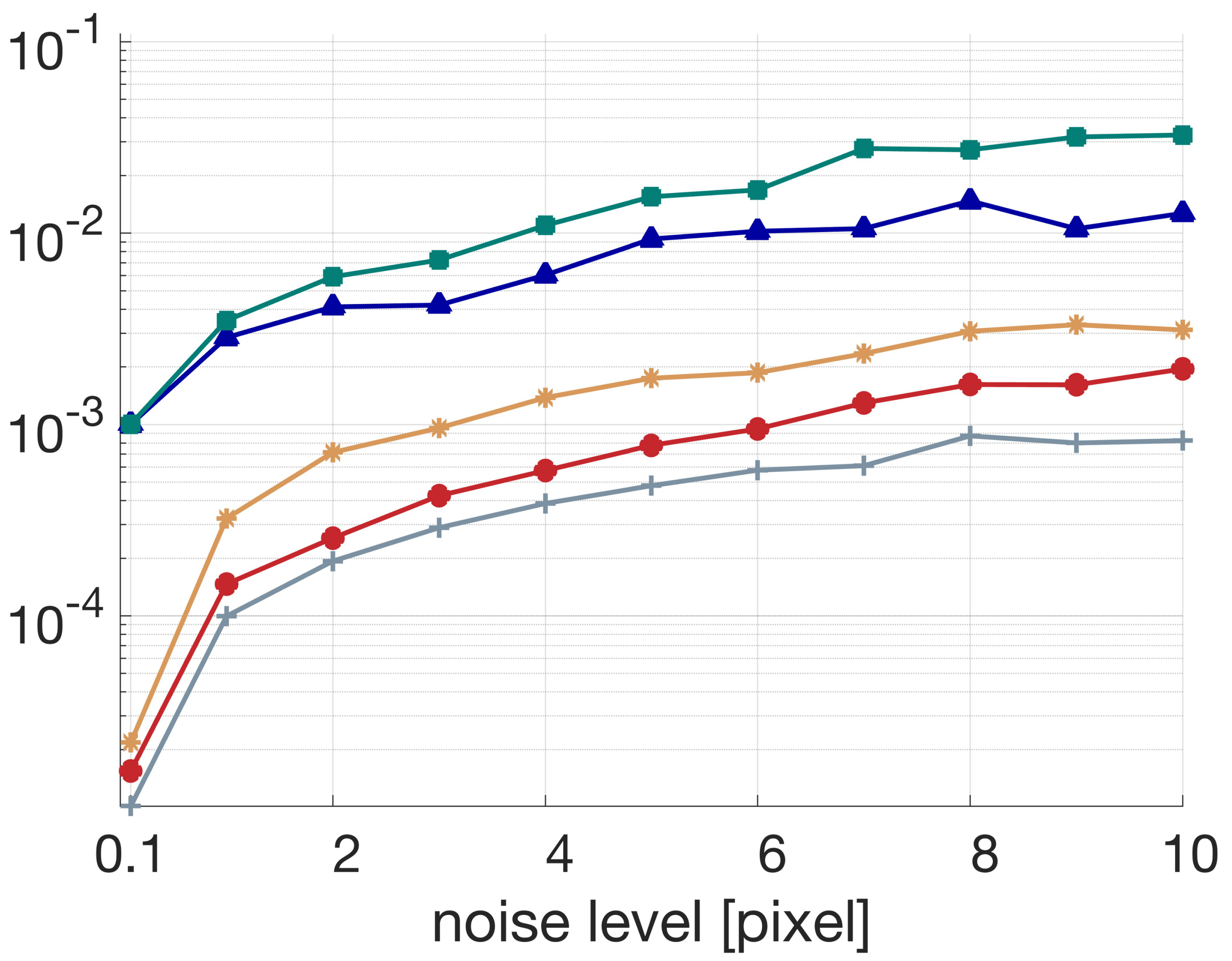

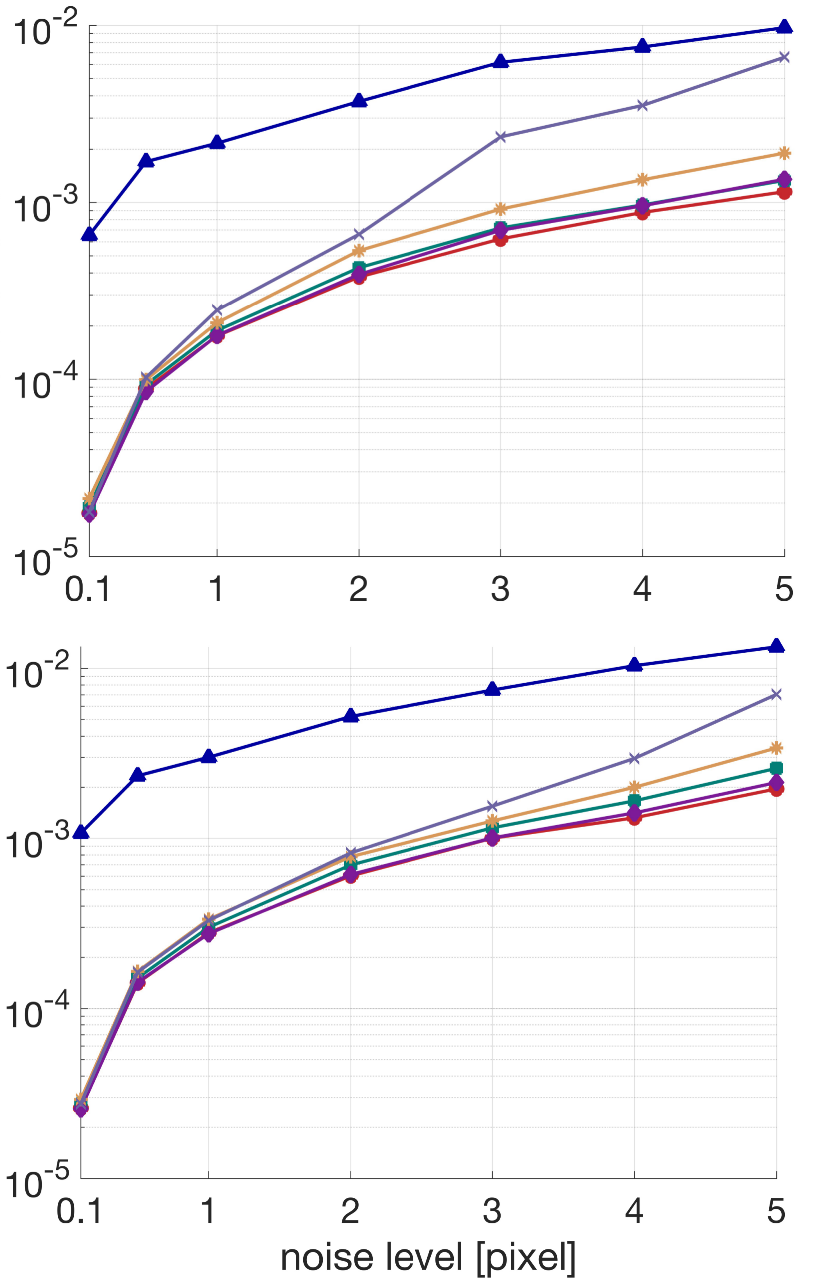

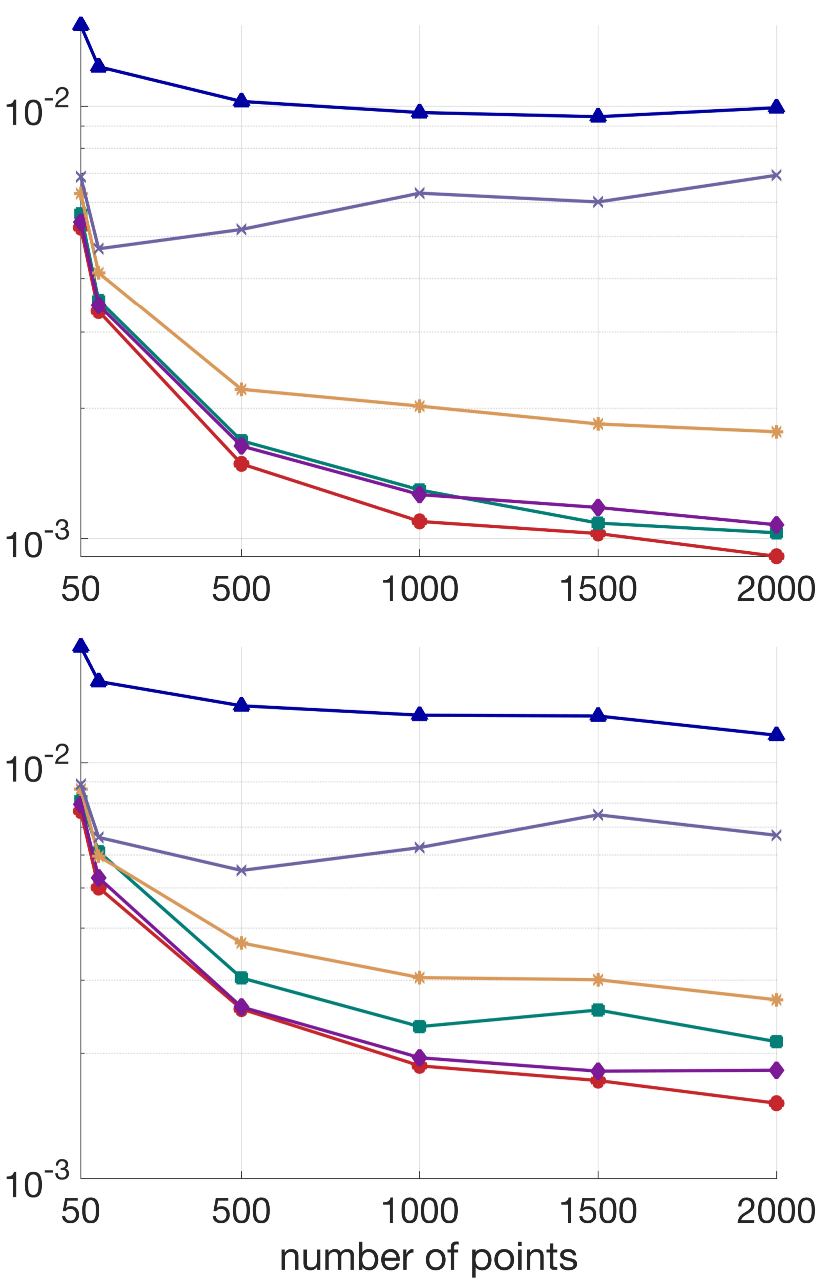

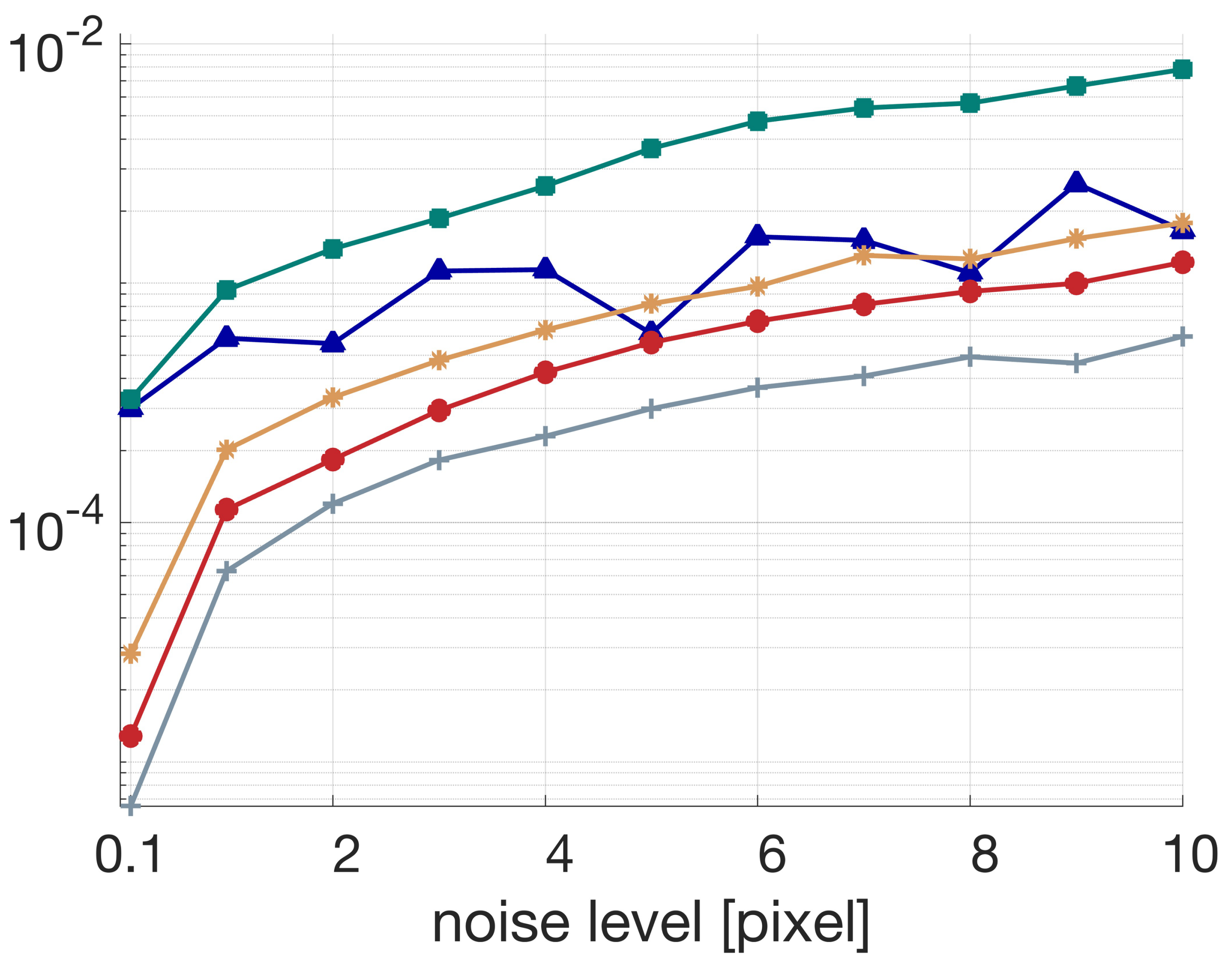

Across controlled settings (noise level, point count, scene geometry) and challenging simulated and real datasets, the rotation-only approach (termed TRRM in the paper) consistently outperforms both classical and state-of-the-art competitors, including BA, PA, and the methods of Kneip et al. The accuracy gains are substantial:

- Average improvement over next-best method: 17.01%

- Maintains highest accuracy as observation noise, scene depth, or rotation amplitude increases.

Figure 7: Noise robustness: TRRM and PA maintain low error, outperforming alternatives as observation noise increases.

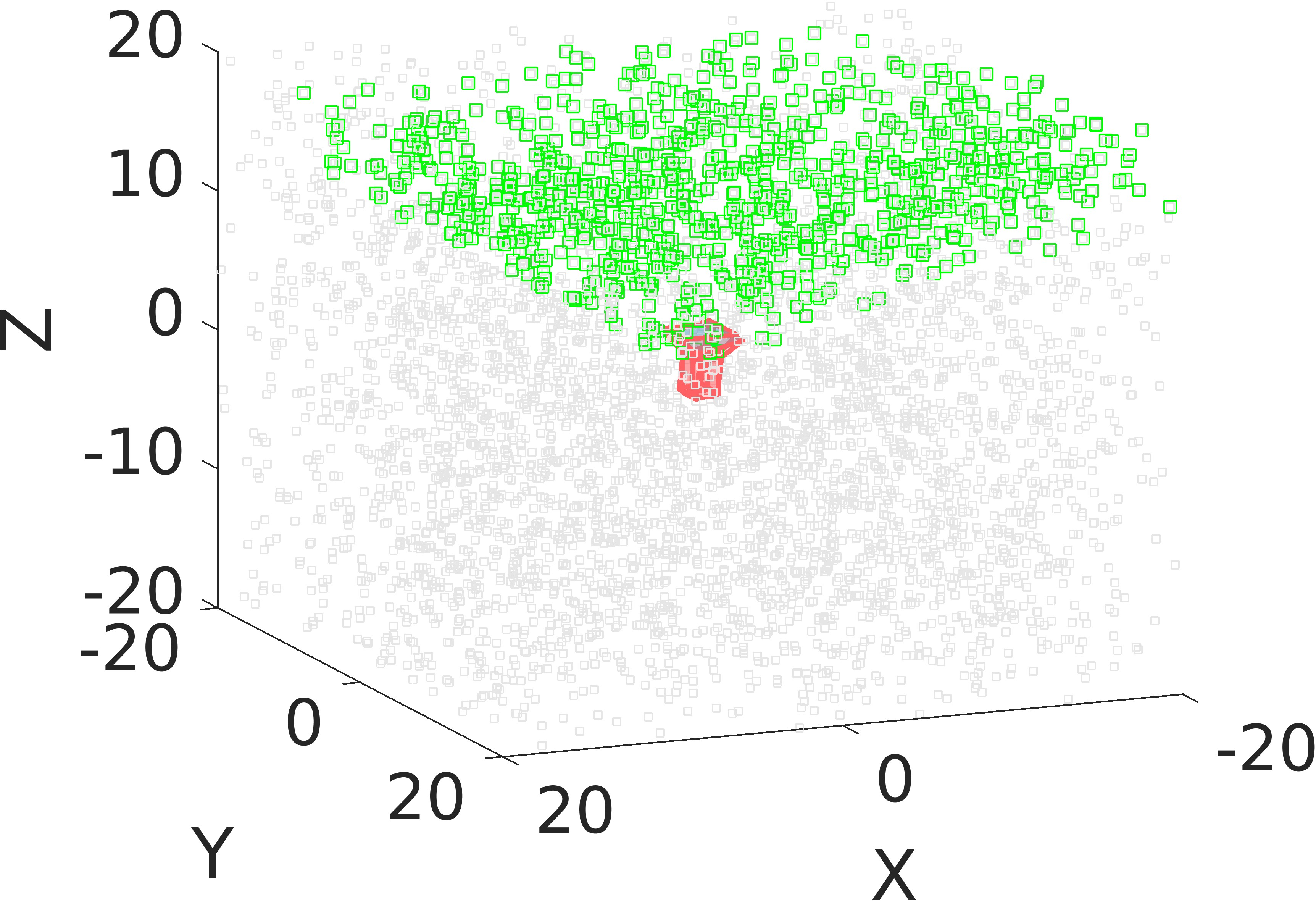

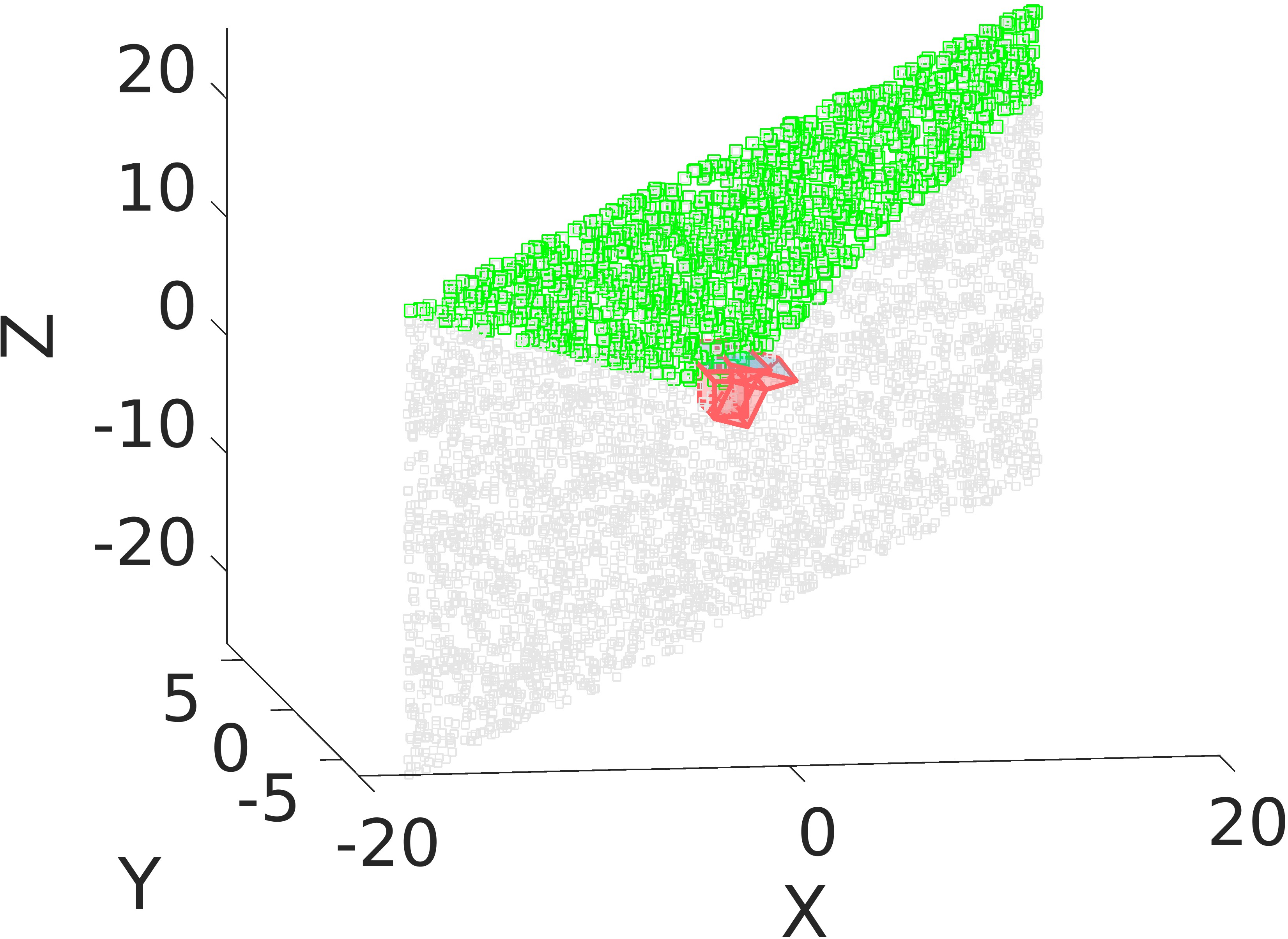

Figure 8: Circular motion multi-view scenario; cameras arranged on a circle with points above, used for multi-view experiments.

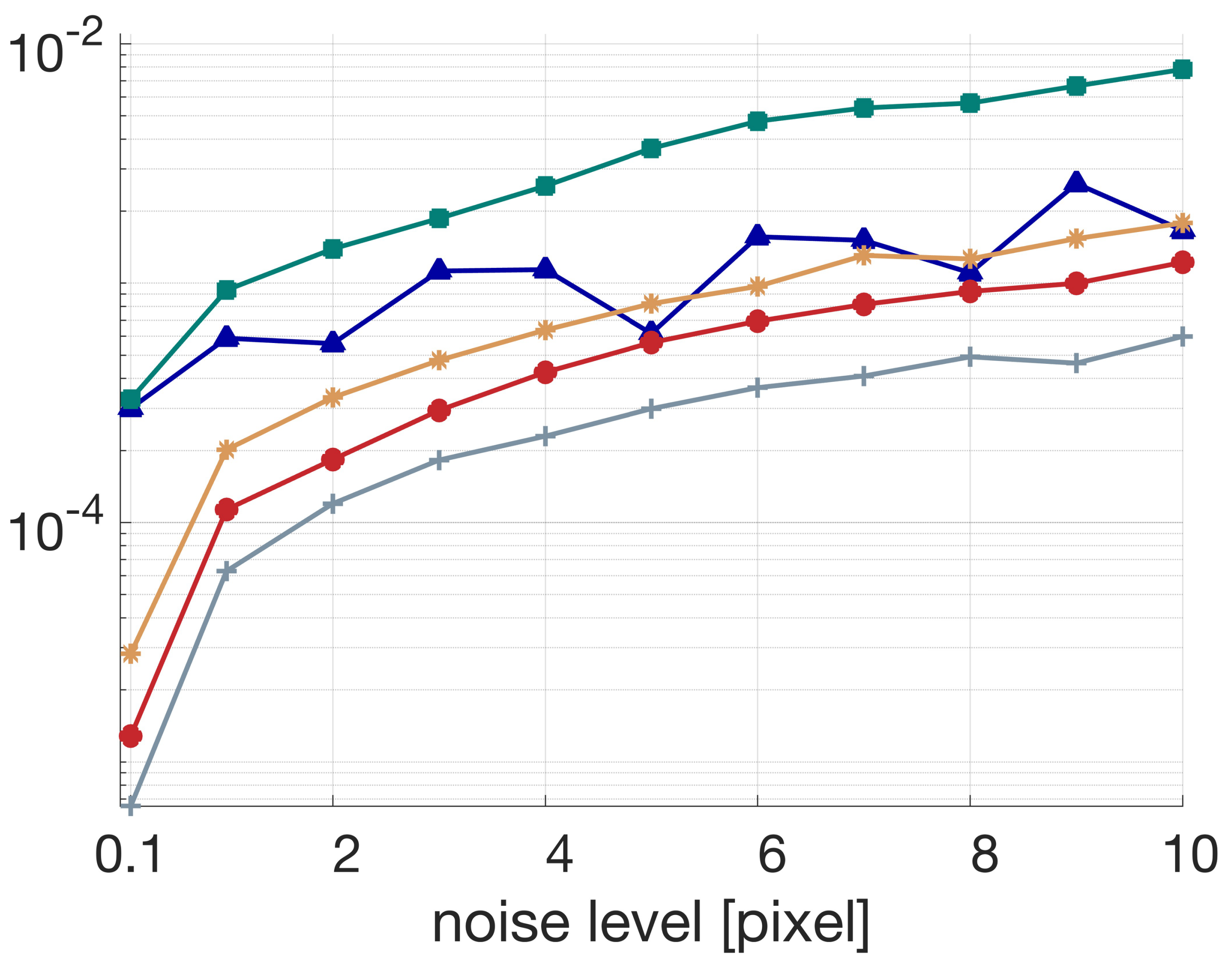

Multi-view Rotation Estimation

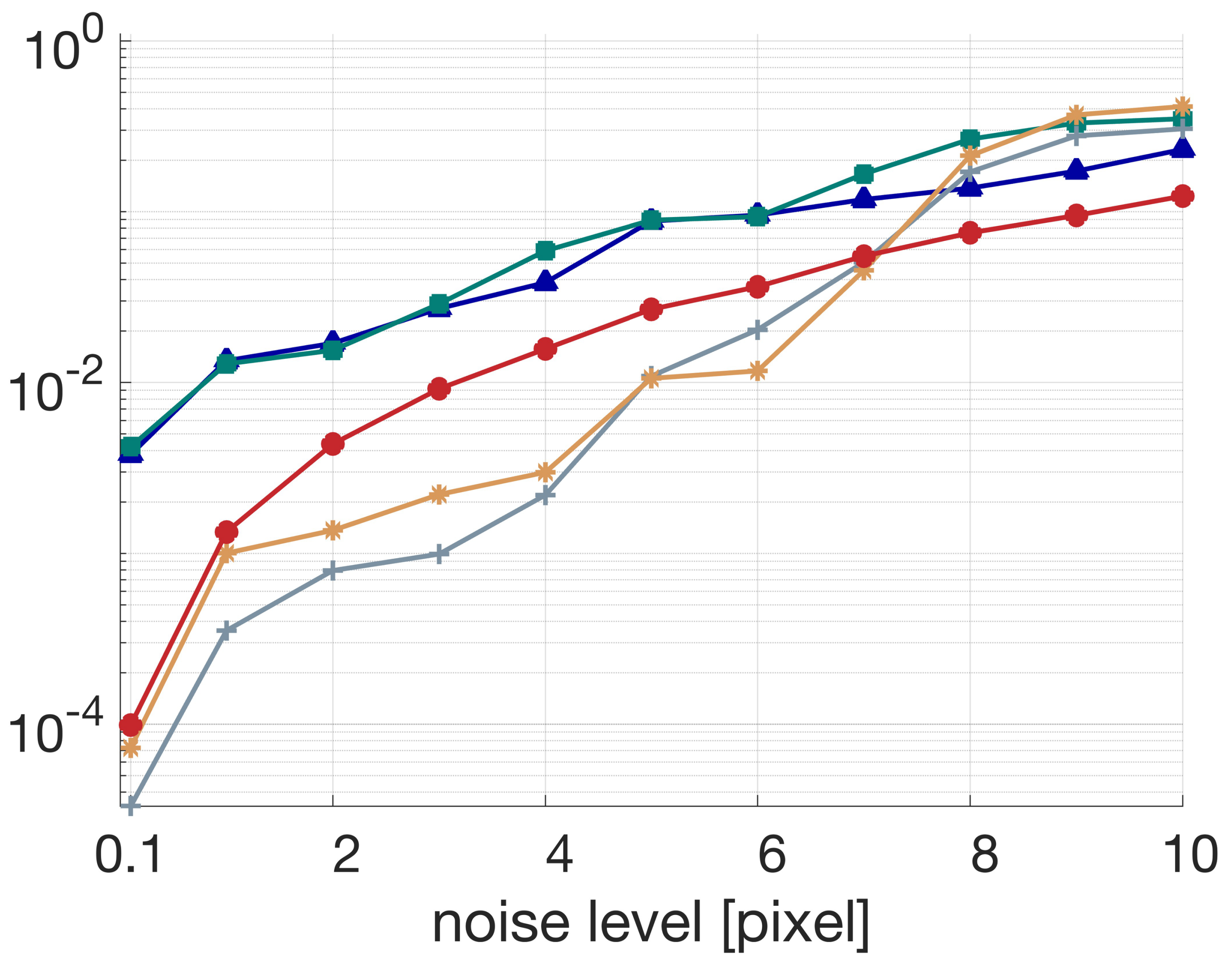

The proposed global rotation-only manifold method (GRRM) is compared with leading rotation averaging and BA variants. The algorithm achieves:

- 27.70% average improvement over the next-best multi-view rotation estimation competitor.

- Accuracy comparable to four full rounds of BA as implemented in the OpenMVG pipeline, despite only optimizing over rotations, and requiring substantially lower computational resources.

Figure 9: Circular motion multi-view experiment; illustrates the challenging spatial configuration and the effectiveness of the GRRM approach.

Further experiments show that the method remains robust under sparse matching and dense point distributions and is unaffected by a decreasing number of matched views, outperforming BA and PA in these scenarios.

Implications and Future Directions

Theoretical Implications

The analytical decoupling of translation from rotation in imaging geometry provides deeper insight into the intrinsic structure of multi-view geometry, challenging the traditional epipolar-centric paradigm. The work strengthens the theoretical foundation for understanding when and how translation contributes to image formation constraints, and when it can be marginalized without loss of accuracy.

Practical Impact

The rotation-only formulation leads directly to:

- Lower-dimensional optimization for robust initialization and refinement.

- Superior accuracy and robustness in difficult (singular/near-singular) or noisy scenes.

- Greatly reduced computational cost compared to full BA, enabling scalability to very large-scale reconstruction and rapid updates in real-time applications (e.g., SLAM, robotics, AR/VR).

Future Developments

This work lays the foundation for several directions:

- Extension to dynamic or partially calibrated scenarios (varying intrinsics or rolling shutter).

- Joint integration with semantic and temporal priors to further stabilize estimation in challenging environments.

- Applicability to distributed or federated SLAM/SfM frameworks exploiting the reduced parameterization.

Conclusion

By formalizing and exploiting the rotation-only structure of imaging geometry, this research provides a powerful, theoretically sound, and empirically validated framework for robust, efficient rotation estimation in geometric vision. The framework simultaneously detects and handles scene degeneracies, analytically eliminates translation, and delivers superior accuracy, even rivaling computationally intensive full bundle adjustment. This unlocks practical and theoretical advances in 3D vision, with immediate benefits for large-scale and real-time vision-based applications.

Reference: "Towards Rotation-only Imaging Geometry: Rotation Estimation" (2511.12415)