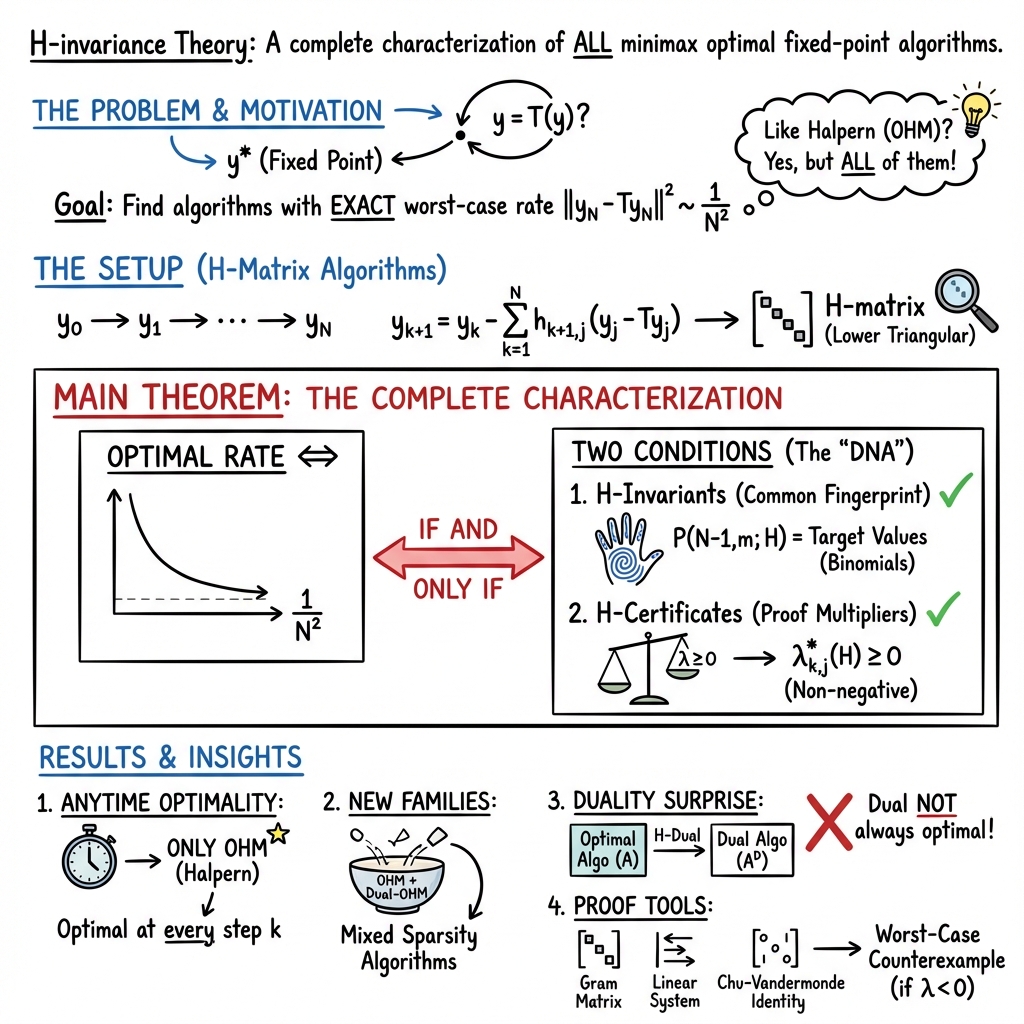

H-invariance theory: A complete characterization of minimax optimal fixed-point algorithms

Abstract: For nonexpansive fixed-point problems, Halpern's method with optimal parameters, its so-called H-dual algorithm, and in fact, an infinite family of algorithms containing them, all exhibit the exactly minimax optimal convergence rates. In this work, we provide a characterization of the complete, exhaustive family of distinct algorithms using predetermined step-sizes, represented as lower triangular H-matrices, which attain the same optimal convergence rate. The characterization is based on polynomials in the entries of the H-matrix that we call H-invariants, whose values stay constant over all optimal H-matrices, together with H-certificates, of which nonnegativity precisely specifies the region of optimality within the common level set of H-invariants. The H-invariance theory we present offers a novel view of optimal acceleration in first-order optimization as a mathematical study of carefully selected invariants, certificates, and structures induced by them.

Explain it Like I'm 14

Overview

This paper is about finding the fastest possible ways to reach a “fixed point” of a function. A fixed point is a special input $x$ where the function $T$ gives back the same value (so $T(x) = x$). The authors focus on a big, important class of functions called “nonexpansive” functions, which never increase distances between points. They show there isn’t just one best algorithm to find fixed points: there are many. Then they give a complete, exact description of all these best algorithms using two ideas they call H-invariants and H-certificates.

Key Questions

The paper asks and answers:

- What is the best-possible speed any algorithm can have (the “minimax optimal” rate) when finding a fixed point of a nonexpansive function?

- Do we have only one algorithm that achieves this best speed, or are there many?

- If there are many, how can we list and recognize all of them exactly?

- Can we prove a simple, checkable set of rules to tell whether a given algorithm is truly optimal?

Methods (Explained Simply)

Think of solving a fixed-point problem like trying to land a drone exactly on a target spot. Each move you make depends on where you are and how far you are from the target. The paper studies algorithms that use a fixed recipe of “mixing” old information to make the next move.

Here’s the everyday-language version of how they approach it:

- H-matrix algorithms: Imagine a spreadsheet (a lower triangular matrix called $H$) that tells you how much to use each past “error” to make the next move. An “error” is the gap between where you are and where the function wants you to be, $y_j - T(y_j)$. Using $H$, the next step looks like:

- “New position” = “Old position” minus a weighted mix of past gaps.

- Fingerprints (H-invariants): The authors define special polynomials built from the numbers inside $H$. These are like fingerprints: for all best algorithms, these polynomials have the same values. If your algorithm’s fingerprints match, it’s a strong sign you’re optimal.

- Safety checks (H-certificates): The authors also define certificate numbers (called $\lambda^\star_{k,j}(H)$) that arise from the inequalities used in the convergence proof. If all these certificates are nonnegative (think: no red flags), then your algorithm is in the exact “optimal zone.”

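- Lower bound and matching upper bound: They first show a speed limit no algorithm can beat. For the iterate $y_{N-1}$ reached after $N-1$ steps, the worst-case squared error $\|y_{N-1} - T(y_{N-1})\|^2$ can’t be smaller than $4\|y_0 - y_\star\|^2 / N^2$, where $y_0$ is the starting point and $y_\star$ is a fixed point. Then they show some algorithms, like the classic Halpern method, actually hit this limit (so they are minimax optimal).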

- Algebra + geometry: Behind the scenes, they use algebra (polynomials, linear systems) and geometric properties of nonexpansive functions (and related monotone operators) to prove their results. They also use some combinatorics (binomial identities) to get clean formulas.
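The “weighted mix of past gaps” recipe can be sketched in a few lines. This is a minimal illustration, not the paper’s code: the function name `run_h_matrix`, the 90-degree rotation used as the nonexpansive map, and the diagonal matrix with entries 1/2 (which reduces to plain averaging of the current point and its image) are all assumptions chosen for the example. The convention `H[k][j]` for the entry $h_{k+1,j+1}$ is also an assumption.

```python
from fractions import Fraction


def run_h_matrix(T, y0, H):
    """Iterate y_{k+1} = y_k - sum_{j<=k} H[k][j] * (y_j - T(y_j)).

    H is a lower triangular list of rows; H[k][j] plays the role of h_{k+1,j+1}.
    Returns the list of all iterates y_0, ..., y_n.
    """
    ys = [list(y0)]
    gaps = []  # gaps[j] = y_j - T(y_j), the past "errors"
    for k, row in enumerate(H):
        y = ys[-1]
        gaps.append([a - b for a, b in zip(y, T(y))])
        # Weighted mix of all gaps seen so far, one coordinate at a time.
        step = [sum(row[j] * gaps[j][i] for j in range(k + 1))
                for i in range(len(y))]
        ys.append([a - s for a, s in zip(y, step)])
    return ys


def rotate90(y):
    # Rotation by 90 degrees in the plane: nonexpansive, fixed point at the origin.
    return [-y[1], y[0]]


half = Fraction(1, 2)
H_avg = [[half, 0], [0, half]]  # hypothetical example matrix (simple averaging)
ys = run_h_matrix(rotate90, [Fraction(1), Fraction(0)], H_avg)
print(ys[-1])
```

Exact fractions are used so the iterates can be compared by eye with a hand calculation; floats would work equally well.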

Main Findings

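- Exact speed limit: No deterministic first-order algorithm can beat the best-possible worst-case squared error of $4\|y_0 - y_\star\|^2 / N^2$ at the iterate $y_{N-1}$, where $y_\star$ is a fixed point. The Halpern method with optimal parameters achieves this exact rate:

- Halpern’s update: $y_{k+1} = \frac{1}{k+2}\, y_0 + \frac{k+1}{k+2}\, T(y_k)$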

- Many optimal algorithms: It’s not just one method—there are infinitely many distinct algorithms that also achieve the exact rate. One example is the “Dual-OHM,” which mirrors Halpern’s method across the matrix’s anti-diagonal.

- Complete characterization: The paper gives an “if and only if” rule for when an H-matrix algorithm is exactly optimal:

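- The H-invariants (polynomials in $H$’s entries) must equal specific numbers: $P(N-1, m; H) = \frac{1}{N}\binom{N}{m+1}$ for $m = 1, \dots, N-1$.

- All H-certificates must be nonnegative: $\lambda^\star_{k,j}(H) \ge 0$ for certain index pairs $(k, j)$.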

- Together, these conditions fully describe the family of all minimax optimal fixed-step algorithms.

- Only one “anytime” optimal algorithm: If you want an algorithm that is optimal at every single step (not just at the final step), the Halpern method is the only one.

- New designs: Using their framework, the authors construct new optimal algorithms, including ones that are “self-H-dual” (they mirror themselves) or have no optimal mirror partner.
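As a small sanity check of the anytime claim, the Halpern update with weights 1/(k+2) and (k+1)/(k+2) can be run on a toy nonexpansive map and compared against the bound 4‖y_0 − y⋆‖²/(k+1)² at every step. The rotation map, the starting point, and the helper names `ohm` and `sq_residual` are assumptions made for this sketch.

```python
def ohm(T, y0, num_steps):
    """Halpern iteration with the optimal weights:
    y_{k+1} = y_0 / (k+2) + (k+1)/(k+2) * T(y_k)."""
    ys = [list(y0)]
    for k in range(num_steps):
        Ty = T(ys[-1])
        ys.append([a / (k + 2) + (k + 1) / (k + 2) * b
                   for a, b in zip(y0, Ty)])
    return ys


def rotate90(y):
    # Nonexpansive toy map (rotation by 90 degrees); its only fixed point is the origin.
    return [-y[1], y[0]]


def sq_residual(T, y):
    # Squared fixed-point residual ||y - T(y)||^2.
    return sum((a - b) ** 2 for a, b in zip(y, T(y)))


y0 = [1.0, 0.0]
dist_sq = 1.0  # ||y_0 - y_star||^2, with y_star the origin
ys = ohm(rotate90, y0, 20)
for k, y in enumerate(ys):
    bound = 4 * dist_sq / (k + 1) ** 2
    print(k, sq_residual(rotate90, y), bound)
```

On this example the residual meets the bound exactly at k = 1 and stays below it afterwards, which is consistent with the bound being a worst-case guarantee rather than a prediction for every operator.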

Why It Matters

- Practical impact: Fixed-point problems are everywhere—optimization, machine learning, signal processing, game theory, and control systems. Faster, provably optimal methods mean you can solve large problems more efficiently and reliably.

- Blueprint for algorithm design: Instead of guessing and testing, you can now design optimal algorithms by checking two clear ingredients:

- Do your H-invariants match the target values?

- Are your H-certificates nonnegative?

- New perspective on acceleration: The work reframes optimal acceleration (speeding up convergence) as finding and enforcing the right “fingerprints” and “safety checks.” This could inspire similar complete characterizations in other areas (like smooth or nonsmooth optimization).

In short, the paper doesn’t just find fast algorithms—it provides a full map of all the fastest ones and simple rules to recognize them.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of gaps and open problems that remain unresolved by the paper. Each item is concrete to help guide future research.

- Scope limited to H-matrix representable algorithms with predetermined step-sizes: the characterization does not extend to adaptive (data-dependent) step-size schemes, line-search, or algorithms whose coefficients depend on past iterates/residuals.

- Characterization confined to the class A_H (H-matrix representable) within deterministic first-order methods: a complete characterization for the broader span class A_span and the full deterministic class A_det remains open.

- Nonexpansive fixed-point setting only: it is unclear whether an analogous invariance/certificate framework exists for other problem classes (e.g., smooth/strongly convex minimization, composite optimization, monotone inclusions beyond resolvent form, convex-concave saddle-point problems).

- Terminal-iterate optimality versus anytime behavior: the paper proves OHM is the only anytime-optimal algorithm within A_H, but it does not characterize near-anytime optimal algorithms (e.g., optimal up to constants at all iterates) or the trade-offs between intermediate-iterate performance and terminal optimality for the full optimal set.

- Horizon knowledge: most optimal constructions depend on the horizon N; outside of OHM, the paper does not establish whether horizon-free (N-agnostic) optimal algorithms exist beyond A_H or under broader algorithmic models.

- Practical verification complexity: the H-certificates comprise O(N²) polynomial inequalities; the paper does not analyze the computational complexity, numerical stability, or conditioning of verifying nonnegativity for large N, nor provide scalable algorithms (e.g., convex relaxations, SDP surrogates) to certify optimality.

- Explicit parametrization and geometry of the optimal set: while the set is characterized implicitly by polynomial equalities (H-invariants) and inequalities (H-certificates), the paper does not provide an explicit global parametrization, dimension count, connectedness/convexity, boundary structure, or measure of the full optimal set.

- Constructive completeness: the proposed procedure (enforcing sparsity by setting most certificates to zero) yields new optimal algorithms, but the paper does not prove that this constructive approach can enumerate all optimal H-matrices (including non-sparse interior solutions); coverage guarantees are missing.

- Conditions for H-duality: the paper reports examples that are self-H-dual or lack optimal H-duals, but does not provide a general structural characterization of when an optimal algorithm admits an H-dual, nor the necessary/sufficient conditions for self-duality.

- Robustness to oracle noise and inexactness: the theory assumes exact nonexpansive or resolvent evaluations; the impact of stochastic noise, inexact proximal/resolvent computations, or approximate nonexpansiveness on invariants, certificates, and optimality is not analyzed.

- Alternative performance metrics: results are stated for squared fixed-point residual at the terminal iterate; the paper does not address whether invariance/certificate methods extend to other metrics (e.g., residual norm, distance to fixed point, average residuals across iterates, ergodic measures).

- Dimension dependence: the lower bound uses d ≥ 2(N−1); the paper does not clarify whether the characterization or optimal set changes in low-dimensional regimes (d < 2(N−1)) or provide minimal dimension requirements for optimality.

- Computational synthesis of optimal H: given the invariant equalities and certificate inequalities, a practical algorithm for synthesizing H (e.g., solving a polynomial system with inequality constraints) is not provided, nor are complexity bounds or guarantees of finding solutions numerically.

- Structural uniqueness of invariants: H-invariants are defined via linear-operator analysis; the paper does not explore whether different invariant families could yield equivalent characterizations, nor whether invariants can be generalized to adaptive or non-polynomial update structures.

- Extension to randomized or multi-oracle settings: the framework is deterministic and single-oracle; it is unknown whether an invariance theory exists for randomized algorithms, mini-batching, multi-operator composites (e.g., alternating resolvents), or preconditioned schemes.

- Sensitivity to perturbations: optimality requires exact satisfaction of invariant equalities and certificate inequalities; the paper leaves open whether small perturbations (due to rounding or implementation errors) preserve near-optimality and how to quantify sensitivity or condition numbers of the constraints.

- Inter-iterate trade-offs and multi-objective design: beyond proving OHM’s anytime uniqueness, the paper does not quantify the Pareto frontier of intermediate versus terminal performance across the optimal set or propose design principles for balancing these objectives.

- Asymptotic and continuous-time limits: the paper does not analyze the behavior of the optimal set as N → ∞, nor connect the invariance framework to continuous-time limits or ODE interpretations of acceleration (which may reveal deeper structural invariants).

- Structural exploitation beyond worst-case: the framework treats black-box nonexpansive operators; for structured classes (e.g., averaged operators arising from proximal mappings of convex functions), it remains open whether stronger invariants or improved rates (constants) are achievable.

- Extension of the certificate-based proof technique: while certificates provide concise convergence proofs, the paper does not examine whether similar certificate constructions can be derived for other accelerated methods or whether a unified “certificate calculus” can be formulated across problem classes.

Practical Applications

Immediate Applications

The following applications can be deployed now by practitioners who work with nonexpansive fixed-point problems (e.g., proximal mappings, projection methods, resolvents of monotone operators) and who can control step-size schedules or iteration budgets.

- Minimax-optimal fixed-budget solvers for proximal and projection-based workflows (software, data science, imaging)

- What: Use the characterized family of H-matrix representable algorithms to design “fixed-budget optimal” solvers that minimize the worst-case squared residual at a pre-specified number of operator calls N.

- How: Choose an H-matrix satisfying the H-invariants P(N−1,m;H) = (1/N)·C(N,m+1) and verify that the H-certificates λ⋆_{k,j}(H) are nonnegative. Implement the iteration y_{k+1} = y_k − Σ_{j=0}^{k} h_{k+1,j+1}·(y_j − T(y_j)).

- Where: Proximal point and projection methods in sparse recovery, denoising, deblurring, compressed sensing, portfolio projection onto convex sets, feasibility problems.

- Tools/products: Add “Fixed-Budget Prox” mode to optimization libraries (e.g., PyTorch, TensorFlow, JAX, Julia Optim/ProximalOperators), exposing N and precomputed H matrices; scripts to compute/validate H-invariants and H-certificates.

- Assumptions/dependencies: T must be nonexpansive; performance guarantee targets the squared fixed-point residual at the terminal iteration; dimension-independent, but lower-bound construction uses d ≥ 2(N−1); oracle access cost dominates.
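A script for checking the invariant condition might look like the sketch below. It rests on an assumption that should be checked against the paper: for a linear operator with G = I − T, the final iterate is a polynomial in G applied to y_0, and P(N−1,m;H) is read here as (−1)^m times the coefficient of G^m. The H-matrix of OHM is not hard-coded but derived by unrolling the Halpern update; the function names are hypothetical, and the H-certificates are not checked.

```python
from fractions import Fraction
from math import comb


def ohm_h_matrix(N):
    """H-matrix (N-1 rows) of OHM, obtained by unrolling the Halpern update.

    Writing y_k = y_0 - sum_j c[j] * g_j with g_j = y_j - T(y_j), the update
    gives c_{k+1} = (k+1)/(k+2) * (c_k + e_k); row k of H is c_{k+1} - c_k.
    """
    n = N - 1
    c = [Fraction(0)] * n
    H = []
    for k in range(n):
        w = Fraction(k + 1, k + 2)
        new_c = [w * (c[j] + (1 if j == k else 0)) for j in range(n)]
        H.append([new_c[j] - c[j] for j in range(n)])
        c = new_c
    return H


def final_polynomial(H):
    """Coefficients a[m] with y_n = sum_m a[m] * G^m y_0 for linear G = I - T."""
    n = len(H)
    polys = [[Fraction(1)] + [Fraction(0)] * n]  # y_0 is the constant polynomial 1
    for k in range(n):
        new = list(polys[k])
        for j in range(k + 1):
            for m in range(n):
                # Subtracting h * G * y_j shifts y_j's coefficients up one degree.
                new[m + 1] -= H[k][j] * polys[j][m]
        polys.append(new)
    return polys[-1]


N = 5
H = ohm_h_matrix(N)
coeffs = final_polynomial(H)
# Assumed reading of the invariants: signed polynomial coefficients.
invariants = [(-1) ** m * coeffs[m] for m in range(1, N)]
targets = [Fraction(comb(N, m + 1), N) for m in range(1, N)]
print(invariants == targets)
```

Exact rational arithmetic avoids tolerance choices when comparing against the target values (1/N)·C(N,m+1).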

- Anytime-safe acceleration via unique optimal Halpern method parameters (software, robotics/control, real-time systems)

- What: Deploy the Optimal Halpern Method (OHM) when iteration budgets are uncertain or when decisions must be taken at intermediate steps; within the class of H-matrix representable algorithms, OHM is shown to be the only one that achieves the minimax optimal rate at all iterations.

- How: Use y_{k+1} = (1/(k+2))·y_0 + ((k+1)/(k+2))·T(y_k) with standard anchoring y_0; this provides anytime minimax optimal convergence of the residual.

- Where: Real-time feasibility restoration in model predictive control, online signal processing, streaming inference pipelines, safety-critical systems requiring interruptible optimization.

- Tools/products: “Anytime Prox” solver flag selecting OHM; integration into embedded control stacks; standardized fallback algorithm when iteration budgets shrink.

- Assumptions/dependencies: Nonexpansive operator; anchoring at y_0; users accept residual-based convergence as the KPI.

- Designing terminal-optimal variants for fixed compute budgets (software, energy, edge AI)

- What: For workloads with hard ceilings on operator calls (e.g., edge devices), select from the characterized family (including Dual-OHM and self-H-dual variants) to optimize the terminal residual at exactly N iterations.

- How: Use the anti-diagonal transpose (H-dual) when linear operators or memory patterns benefit from reversed schedules; generate sparse-certificate algorithms for simpler proofs and potentially simpler implementations.

- Where: On-device convex feasibility routines, federated learning proximal corrections, projection-based calibration with fixed time slots.

- Tools/products: H-matrix “designer” that takes N and returns candidate schedules with verified certificates; code templates for Dual-OHM-like schemes.

- Assumptions/dependencies: Known N; operators are nonexpansive; optimality is terminal, not necessarily monotonic in k; certificate checks can be automated.
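The anti-diagonal transpose used to form an H-dual is a simple index flip. The sketch below applies it to the 2×2 H-matrix obtained by unrolling OHM by hand for N = 3 and runs both schedules on a toy rotation map; the helper names and the test operator are assumptions for illustration, and the resulting matrix is only claimed to be the anti-diagonal reflection, not a verified reproduction of the paper’s Dual-OHM coefficients.

```python
from fractions import Fraction as F


def anti_diagonal_transpose(H):
    """Reflect a square matrix across its anti-diagonal:
    the entry at (i, j) comes from (n-1-j, n-1-i)."""
    n = len(H)
    return [[H[n - 1 - j][n - 1 - i] for j in range(n)] for i in range(n)]


def run_h_matrix(T, y0, H):
    """y_{k+1} = y_k - sum_{j<=k} H[k][j] * (y_j - T(y_j)); returns all iterates."""
    ys, gaps = [list(y0)], []
    for k, row in enumerate(H):
        y = ys[-1]
        gaps.append([a - b for a, b in zip(y, T(y))])
        ys.append([y[i] - sum(row[j] * gaps[j][i] for j in range(k + 1))
                   for i in range(len(y))])
    return ys


def rotate90(y):
    # Linear nonexpansive toy map; fixed point at the origin.
    return [-y[1], y[0]]


# OHM for N = 3, unrolled by hand from the Halpern update (illustrative).
H_ohm = [[F(1, 2), F(0)],
         [F(-1, 6), F(2, 3)]]
H_dual = anti_diagonal_transpose(H_ohm)

y0 = [F(1), F(0)]
results = {}
for name, H in [("OHM", H_ohm), ("H-dual", H_dual)]:
    y = run_h_matrix(rotate90, y0, H)[-1]
    res = sum((a - b) ** 2 for a, b in zip(y, rotate90(y)))
    results[name] = (y, res)
    print(name, y, res)
```

In this linear example the two schedules reach the same terminal iterate even though their intermediate iterates differ; for nonlinear operators the terminal iterates need not coincide.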

- Faster consensus and averaging protocols cast as nonexpansive fixed points (networks, distributed systems)

- What: Accelerate convergence in distributed averaging/consensus when the update operator is 1-Lipschitz by choosing OHM or an optimal H-matrix schedule.

- How: Recast updates as y ← T(y) with nonexpansive T; apply OHM for anytime safety or a terminal-optimal schedule when rounds are fixed.

- Where: Sensor networks, distributed estimation, decentralized optimization with averaging-type operators.

- Tools/products: Consensus libraries offering “Halpern-accelerated” rounds; schedulers that map round budgets to optimal H-coefficients.

- Assumptions/dependencies: Operator nonexpansiveness under network topology; communication delays and noise may require robustness tuning.
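To make the consensus recasting concrete, the sketch below treats one averaging round as the operator T(y) = W·y and applies the Halpern update to it. The 3-node weight matrix W is a hypothetical example (symmetric and doubly stochastic, with eigenvalues 1, 1/2, and −1/2, hence nonexpansive in the Euclidean norm); the initial values and function names are likewise assumptions, and no communication delay or noise is modeled.

```python
# Hypothetical weights for a 3-node path graph with self-loops at the ends.
W = [[0.5, 0.5, 0.0],
     [0.5, 0.0, 0.5],
     [0.0, 0.5, 0.5]]


def average_step(y):
    # One consensus round: every node replaces its value by a weighted average.
    return [sum(w * v for w, v in zip(row, y)) for row in W]


def ohm(T, y0, num_steps):
    """Halpern iteration y_{k+1} = y_0/(k+2) + (k+1)/(k+2) * T(y_k)."""
    ys = [list(y0)]
    for k in range(num_steps):
        Ty = T(ys[-1])
        ys.append([a / (k + 2) + (k + 1) / (k + 2) * b
                   for a, b in zip(y0, Ty)])
    return ys


def sq_residual(y):
    # Squared disagreement between the current values and one more round.
    return sum((a - b) ** 2 for a, b in zip(y, average_step(y)))


y0 = [3.0, 0.0, -6.0]
mean = sum(y0) / len(y0)
# The consensus vector (all entries equal to the mean) is a fixed point of T.
dist_sq = sum((v - mean) ** 2 for v in y0)
ys = ohm(average_step, y0, 50)
for k in (0, 1, 10, 50):
    print(k, sq_residual(ys[k]), 4 * dist_sq / (k + 1) ** 2)
```

Because W is doubly stochastic and each update is a convex combination with y_0, the network-wide sum of values is preserved at every round, which is a useful invariant to monitor in a real deployment.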

- Simple convergence proofs via sparse H-certificates for documentation and verification (academia, regulated industries)

- What: Use the paper’s procedure to discover optimal algorithms with sparse certificates (only one nonzero λ per column index), leading to simple, auditable convergence proofs.

- How: Generate H-matrices that set most λ⋆_{k,j}(H) to zero; attach the reduced inequality set to formal proofs or model documentation.

- Where: Safety cases in healthcare ML calibration, finance risk controls, mission-critical engineering optimizers needing formal attestations.

- Tools/products: Automated proof generators emitting the small set of inequalities used in the certificate; CI pipelines that validate invariants/certificates.

- Assumptions/dependencies: Nonexpansive operator; acceptance of residual as the performance metric; organizational processes for proof integration.

- Educational and benchmarking use (education, policy/standards)

- What: Teach and benchmark worst-case optimality with exact characterizations (not just up-to-constants), demonstrating the difference between anytime and terminal-optimal algorithms.

- How: Course modules/labs implementing OHM, Dual-OHM, and new H-matrix schedules; standardized benchmarks that fix N and measure residuals.

- Where: Graduate courses in optimization; internal standards for algorithm selection in public-sector analytics projects.

- Tools/products: Open-source notebooks that compute P-invariants and λ-certificates; benchmark suites for fixed-point residual convergence.

- Assumptions/dependencies: Access to operator evaluation; reproducible nonexpansive test cases; acceptance of worst-case metrics in pedagogy.

Long-Term Applications

The following applications require further research, scaling, engineering development, or cross-domain adaptation before widespread deployment.

- Auto-synthesis and verification of optimal algorithms across operator classes (software, academia)

- What: Extend the invariants–certificates paradigm beyond nonexpansive fixed points (e.g., to smooth/composite convex optimization, saddle-point problems) to auto-synthesize exact optimal first-order schemes.

- How: Generalize H-invariants and certificates to other problem classes; build an “algorithm compiler” that produces schedules with proofs.

- Potential tools/products: A meta-optimizer that takes oracle type and iteration budget N, emits optimal update rules and machine-checkable proofs.

- Assumptions/dependencies: New invariants/certificates must exist and be tractable; integration with formal methods tooling (e.g., SMT solvers, proof assistants).

- Hardware accelerators for fixed-point proximal operators with precomputed schedules (semiconductors, edge computing)

- What: Design hardware blocks that implement H-matrix update patterns efficiently, leveraging sparse certificates and H-duality for memory-efficient time-reversal or checkpointing.

- How: Map H-coefficients to microcode; exploit self-H-dual schedules to reduce buffering; incorporate anytime OHM as safe default.

- Potential tools/products: DSP cores or ASIC IP for proximal/POCS routines in cameras, AR/VR devices, medical imaging scanners.

- Assumptions/dependencies: Stable operator oracles on hardware; robust handling of quantization/noise; certification pipelines embedded in toolchains.

- Policy frameworks for compute-budgeted optimization with verifiable guarantees (policy, governance, sustainability)

- What: Establish standards for fixed-budget algorithm selection in public projects (e.g., energy-constrained analytics), where worst-case optimality and auditable proofs are mandated.

- How: Codify residual-based criteria; require invariant/certificate reporting; define “anytime-safe” defaults (OHM) for interruptible operations.

- Potential tools/products: Compliance checklists; audits that verify P-invariants and λ-certificates; procurement guidelines referencing algorithmic guarantees.

- Assumptions/dependencies: Acceptance of residual guarantees as policy-relevant; tooling for automated verification; training of reviewers.

- Robust and stochastic extensions (research, applied ML)

- What: Adapt invariants/certificates to noisy, stochastic, or inexact oracle settings, common in large-scale ML and distributed systems.

- How: Develop “approximate nonexpansiveness” frameworks; derive modified certificates guaranteeing high-probability or robust bounds.

- Potential tools/products: Stochastic proximal solvers that retain fixed-budget optimality guarantees up to statistical tolerance.

- Assumptions/dependencies: New theory for stochastic certificates; empirical validation on realistic noise models.

- Formal verification and reversible computing for optimization (software engineering, safety)

- What: Use self-H-dual designs and dual relationships to build reversible or checkpoint-friendly optimization routines with formal proofs of correctness and optimality.

- How: Encode schedules and certificates in verification-friendly DSLs; exploit duality to recover states and reduce memory footprints.

- Potential tools/products: Verified optimization kernels for avionics/automotive control systems; reversible proximal routines for low-power computing.

- Assumptions/dependencies: Maturity of formal languages/provers for numerical algorithms; predictable operator behavior.

- Cross-domain transfer to accelerated consensus/control (networks, robotics)

- What: Systematically apply OHM and optimal H-schedules to accelerate consensus and fixed-point control laws beyond linear settings.

- How: Ensure nonexpansiveness in closed-loop mappings; adapt certificates to multi-agent interactions and delays.

- Potential tools/products: Accelerated consensus protocols with guarantees; controllers that maintain anytime safety under network constraints.

- Assumptions/dependencies: Nonexpansiveness in networked dynamics; robustness to asynchronous updates and packet loss.

- Learning-to-optimize with invariant constraints (ML, AutoML)

- What: Train meta-optimizers that search over H-matrix families while enforcing invariant equalities and certificate nonnegativity, ensuring learned algorithms are worst-case optimal.

- How: Add polynomial constraints to the search space; use differentiable or constraint-based optimizers to satisfy P-invariants and λ ≥ 0.

- Potential tools/products: AutoML modules producing task-specific, budget-aware, provably optimal proximal schedules.

- Assumptions/dependencies: Efficient constraint handling in learning loops; generalization across operators; interpretability and proof extraction.

In both immediate and long-term scenarios, practitioners should check the key assumptions: the operator T must be nonexpansive; optimality is measured via the squared fixed-point residual; terminal optimality requires known iteration budgets (only OHM is anytime-optimal); and H-certificates must be nonnegative to ensure the guarantees.

Glossary

Below is an alphabetical list of advanced domain-specific terms from the paper, each with a short definition and a verbatim usage example.

- Accelerated gradient method (AGM): A first-order optimization algorithm that achieves optimal convergence rates for smooth convex minimization by using momentum-like updates. "the celebrated accelerated gradient method (AGM) of \citet{Nesterov1983_method} achieves "

- Anti-diagonal transpose: A matrix transformation that reflects entries across the anti-diagonal (top-right to bottom-left), used to define the H-dual of an algorithm. "which is the anti-diagonal transpose of $H_{\text{OHM}}$, i.e., they are the reflections of each other along the anti-diagonal:"

- Anytime algorithm: An algorithm whose convergence guarantees hold at every iteration, not just at a terminal iteration. "We show that \ref{eqn:OHM} is the only anytime algorithm, converging at the minimax optimal rate for all iterations ."

- Chu-Vandermonde identity: A combinatorial identity relating binomial coefficients, used to solve systems involving polynomial invariants. "the standard Chu-Vandermonde identity derived from the binomial series expansion, provided in Appendix~\ref{section:combinatorial-lemmas}"

- Computation oracle: An abstract interface that provides algorithm-specific information (e.g., gradients or operator evaluations) and is counted to measure algorithmic complexity. "the computation oracle associated with the algorithm class"

- Deterministic first-order algorithm: An algorithm that uses only first-order information (like gradients or operator evaluations) without randomness. "among all deterministic first-order algorithms using the gradient oracle "

- Dual-OHM: The H-dual counterpart of the Optimal Halpern Method that achieves the same optimal rate via time-reversed or anti-diagonal-transposed structure. "they introduced the Dual-OHM algorithm which exhibits the same rate~\eqref{eqn:optimal-rate-N-iterate} as OHM"

- Fixed-point residual: The discrepancy vector between a point and its image under a fixed-point operator, often measured by its norm to assess convergence. "accelerated convergence in squared fixed-point residual"

- Halpern iteration: A classical scheme for nonexpansive fixed-point problems that combines the current iterate and a fixed anchor point via convex interpolation. "The lower bound of \cref{theorem:lower-bound} is matched by the classical Halpern iteration \citep{Halpern1967_fixed} with optimized interpolation parameters"

- H-certificates: Polynomially-defined multipliers coupled with monotonicity inequalities; their nonnegativity characterizes the optimality region within the level set of H-invariants. "We additionally define what we call H-certificates "

- H-dual: The algorithm obtained by taking the anti-diagonal transpose of an algorithm’s H-matrix; it preserves certain invariant properties, including optimal rates. "In general, the H-dual of an algorithm in $A_H$ is an algorithm represented by the anti-diagonal transpose of its H-matrix"

- H-invariants: Homogeneous polynomial quantities in H-matrix entries that remain constant across all minimax-optimal algorithms and characterize them. "we call H-invariants, whose values stay constant over all optimal H-matrices"

- H-matrix: A lower triangular matrix encoding predetermined step-sizes for H-matrix representable algorithms. "define its H-matrix as the lower triangular matrix "

- H-matrix representable: A class of algorithms whose updates can be written as linear combinations of past residuals with predetermined coefficients forming a lower triangular H-matrix. "we say it is H-matrix representable"

- Homogeneous polynomial: A polynomial where all terms have the same total degree; used to define H-invariants in the entries of H-matrices. "each is a homogeneous polynomial with degree "

- Lower triangular matrix: A matrix with nonzero entries only on or below the main diagonal; used to structure H-matrices for algorithm representation. "lower triangular H-matrices"

- Maximally monotone operator: A monotone operator that cannot be extended while preserving monotonicity; arises via resolvent transformations of nonexpansive maps. " is a maximally monotone operator"

- Minimax optimality: A performance guarantee showing that an algorithm attains the best possible worst-case rate over a problem class. "representing minimax optimality:"

- Monotone inclusion problems: Problems involving finding a point included in a monotone operator’s graph; equivalent to certain proximal fixed-point formulations. "(equivalently, proximal setting for monotone inclusion problems)"

- Nonexpansive operator: A 1-Lipschitz mapping that does not expand distances; central to fixed-point convergence analysis. "nonexpansive operator "

- Optimal Halpern Method (OHM): The Halpern iteration with optimized parameters that exactly matches the minimax lower bound for nonexpansive fixed-point problems. "which we call the Optimal Halpern Method (OHM):"

- Proximal setting: A formulation where problems are solved via resolvent or proximal mappings, often equivalent to fixed-point problems for monotone operators. "(equivalently, proximal setting for monotone inclusion problems)"

- Resolvent: The operator associated with a monotone operator , used to transform nonexpansive maps into monotone inclusions. "where is the resolvent"

- Resisting oracle technique: A method to construct worst-case instances against a given algorithm class to prove lower bounds. "using the resisting oracle technique of \citet{NemirovskiYudin1983_problem}"

- Span condition: A structural constraint where each update lies in the span of previous residual directions, used in lower-bound analyses. "satisfying the span condition"

- Worst-case rate: The convergence rate guaranteed for the worst possible instance in a problem class; often expressed as an inequality bound. "exact optimal worst-case rate"

- Young's inequality: An inequality used to bound products of norms and derive rate guarantees in convergence proofs. "the last inequality is Young's inequality"
